## What changes were proposed in this pull request?

This PR adds a `transform` function, which transforms the elements of an array using a given function.

Optionally, the function can take the index of each element as its second argument.

```sql
> SELECT transform(array(1, 2, 3), x -> x + 1);
array(2, 3, 4)

> SELECT transform(array(1, 2, 3), (x, i) -> x + i);
array(1, 3, 5)
```
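
The two lambda forms can be modeled outside Spark. The following is a minimal plain-Python sketch of the semantics, not the Spark implementation; the helper name `transform` and the use of `inspect` to detect the lambda's arity are assumptions made for illustration.

```python
import inspect


def transform(array, func):
    """Apply func to each element; also pass the 0-based index if func takes two arguments."""
    arity = len(inspect.signature(func).parameters)
    if arity == 1:
        return [func(x) for x in array]
    if arity == 2:
        return [func(x, i) for i, x in enumerate(array)]
    raise TypeError("func must take one or two arguments")


print(transform([1, 2, 3], lambda x: x + 1))        # [2, 3, 4]
print(transform([1, 2, 3], lambda x, i: x + i))     # [1, 3, 5]
```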

## How was this patch tested?

Added tests.

Author: Takuya UESHIN <ueshin@databricks.com>

Closes #21954 from ueshin/issues/SPARK-23908/transform.

## What changes were proposed in this pull request?

This pull request provides a fix for SPARK-24742: SQL field Metadata threw an exception in the `hashCode` method when a null metadata value had been added via `putNull`.

## How was this patch tested?

A new unit test is provided in org/apache/spark/sql/types/MetadataSuite.scala.

Author: Kaya Kupferschmidt <k.kupferschmidt@dimajix.de>

Closes #21722 from kupferk/SPARK-24742.

## What changes were proposed in this pull request?

Remove the AnalysisBarrier LogicalPlan node, which is useless now.

## How was this patch tested?

N/A

Author: Xiao Li <gatorsmile@gmail.com>

Closes #21962 from gatorsmile/refactor2.

## What changes were proposed in this pull request?

This PR refactors the code in AVERAGE using the DSL.

## How was this patch tested?

N/A

Author: Xiao Li <gatorsmile@gmail.com>

Closes #21951 from gatorsmile/refactor1.

## What changes were proposed in this pull request?

The PR adds the SQL function `array_except`. The behavior of the function is based on Presto's.

This function returns an array of the elements in array1 but not in array2.

Note: The order of elements in the result is not defined.
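
As a rough illustration of the semantics described above, here is a plain-Python sketch (not the Spark implementation). Removing duplicates from the result follows the documented behavior of Presto's `array_except` and is an assumption here, as is the requirement that elements are hashable. The sketch happens to keep first-occurrence order, but, per the note above, callers should not rely on any order.

```python
def array_except(array1, array2):
    """Elements of array1 that do not occur in array2, without duplicates."""
    excluded = set(array2)
    seen = set()
    result = []
    for x in array1:
        if x not in excluded and x not in seen:
            seen.add(x)
            result.append(x)
    return result


# Compare as sets, since the order of the result is not defined.
print(set(array_except([1, 2, 2, 3, 4], [2, 4])))
```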

## How was this patch tested?

Added UTs.

Author: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Closes #21103 from kiszk/SPARK-23915.

## What changes were proposed in this pull request?

When a user calls a UDAF with the wrong number of arguments, Spark previously threw an AssertionError, which is not supposed to be a user-facing exception. This patch updates it to throw AnalysisException instead, so it is consistent with a regular UDF.

## How was this patch tested?

Updated test case udaf.sql.

Author: Reynold Xin <rxin@databricks.com>

Closes #21938 from rxin/SPARK-24982.

## What changes were proposed in this pull request?

Previously, TVF resolution could throw IllegalArgumentException if the data type is null type. This patch replaces that exception with AnalysisException, enriched with positional information, to improve error message reporting and to be more consistent with the rest of Spark SQL.

## How was this patch tested?

Updated the test case in table-valued-functions.sql.out, which is how I identified this problem in the first place.

Author: Reynold Xin <rxin@databricks.com>

Closes #21934 from rxin/SPARK-24951.

## What changes were proposed in this pull request?

Similar to SPARK-24890, if all the outputs of `CaseWhen` are semantically equivalent, `CaseWhen` can be removed.
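
The idea can be sketched with a toy expression model in plain Python. This is not the Catalyst rule: string equality stands in for semantic equivalence, the class and function names are hypothetical, and requiring an explicit `ELSE` branch is an assumption of the sketch (without one, the expression can still yield NULL when no condition matches).

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class CaseWhen:
    branches: Tuple[Tuple[str, str], ...]  # (condition, output) pairs as expression strings
    else_value: Optional[str] = None       # None models a missing ELSE branch


def simplify_case_when(expr):
    """Replace CaseWhen by its output when every branch and the ELSE produce the same output."""
    outputs = {value for _, value in expr.branches}
    if expr.else_value is not None and outputs == {expr.else_value}:
        return expr.else_value
    return expr


same = CaseWhen((("a > 1", "x"), ("a > 2", "x")), "x")
mixed = CaseWhen((("a > 1", "x"), ("a > 2", "y")), "x")
print(simplify_case_when(same))    # x
print(simplify_case_when(mixed) is mixed)  # True
```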

## How was this patch tested?

Tests added.

Author: DB Tsai <d_tsai@apple.com>

Closes #21852 from dbtsai/short-circuit-when.

## What changes were proposed in this pull request?

This PR proposes a version in which nullable expressions are not valid in the `LIMIT` clause.

## How was this patch tested?

It was tested with unit and e2e tests.

Author: Mauro Palsgraaf <mauropalsgraaf@hotmail.com>

Closes #21807 from mauropalsgraaf/SPARK-24536.

## What changes were proposed in this pull request?

When the pivot column is of a complex type, the eval() result will be an UnsafeRow, while the keys of the HashMap used for column value matching are GenericInternalRows. As a result, there will be no match and the result will always be empty.

So for a pivot column of complex-types, we should:

1) If the complex-type is not comparable (orderable), throw an Exception. It cannot be a pivot column.

2) Otherwise, if it goes through the `PivotFirst` code path, `PivotFirst` should use a TreeMap instead of HashMap for such columns.

This PR also reverts the workaround in Analyzer that had been introduced to avoid this `PivotFirst` issue.

## How was this patch tested?

Added UT.

Author: maryannxue <maryannxue@apache.org>

Closes #21926 from maryannxue/pivot_followup.

## What changes were proposed in this pull request?

In this PR, I propose to support `LZMA2` (`XZ`) and `BZIP2` compression in the `AVRO` datasource on write, since these codecs may have better characteristics, such as compression ratio and speed, compared to the already supported `snappy` and `deflate` codecs.

## How was this patch tested?

It was tested manually and by an existing test which was extended to check the `xz` and `bzip2` compressions.

Author: Maxim Gekk <maxim.gekk@databricks.com>

Closes #21902 from MaxGekk/avro-xz-bzip2.

## What changes were proposed in this pull request?

I didn't want to pollute the diff in the previous PR and left some TODOs. This is a follow-up to address those TODOs.

## How was this patch tested?

Should be covered by existing tests.

Author: Reynold Xin <rxin@databricks.com>

Closes #21896 from rxin/SPARK-24865-addendum.

## What changes were proposed in this pull request?

This PR adds support for Date/Timestamp types in a JDBC partition column (master currently supports only numeric columns). It also modifies the code to verify the partition column type:

```
val jdbcTable = spark.read
  .option("partitionColumn", "text")
  .option("lowerBound", "aaa")
  .option("upperBound", "zzz")
  .option("numPartitions", 2)
  .jdbc("jdbc:postgresql:postgres", "t", options)

// with this pr
org.apache.spark.sql.AnalysisException: Partition column type should be numeric, date, or timestamp, but string found.;
  at org.apache.spark.sql.execution.datasources.jdbc.JDBCRelation$.verifyAndGetNormalizedPartitionColumn(JDBCRelation.scala:165)
  at org.apache.spark.sql.execution.datasources.jdbc.JDBCRelation$.columnPartition(JDBCRelation.scala:85)
  at org.apache.spark.sql.execution.datasources.jdbc.JdbcRelationProvider.createRelation(JdbcRelationProvider.scala:36)
  at org.apache.spark.sql.execution.datasources.DataSource.resolveRelation(DataSource.scala:317)

// without this pr
java.lang.NumberFormatException: For input string: "aaa"
  at java.lang.NumberFormatException.forInputString(NumberFormatException.java:65)
  at java.lang.Long.parseLong(Long.java:589)
  at java.lang.Long.parseLong(Long.java:631)
  at scala.collection.immutable.StringLike$class.toLong(StringLike.scala:277)
```
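
The up-front type check can be pictured with the following plain-Python sketch. It is not the code in `JDBCRelation`: the schema representation (a dict from column name to a coarse type category), the `AnalysisError` class, and the function name are hypothetical stand-ins; only the error message wording is taken from the trace above.

```python
SUPPORTED_PARTITION_TYPES = {"numeric", "date", "timestamp"}


class AnalysisError(Exception):
    """Stand-in for Spark's AnalysisException."""


def verify_partition_column(column_name, schema):
    """Fail early, before any bound is parsed, if the column type cannot be partitioned on."""
    if column_name not in schema:
        raise AnalysisError(f"Partition column {column_name} not found in schema.")
    column_type = schema[column_name]
    if column_type not in SUPPORTED_PARTITION_TYPES:
        raise AnalysisError(
            "Partition column type should be numeric, date, or timestamp, "
            f"but {column_type} found."
        )
    return column_name, column_type


schema = {"id": "numeric", "created": "date", "text": "string"}
print(verify_partition_column("created", schema))
```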

Closes #19999

## How was this patch tested?

Added tests in `JDBCSuite`.

Author: Takeshi Yamamuro <yamamuro@apache.org>

Closes #21834 from maropu/SPARK-22814.

## What changes were proposed in this pull request?

When we compute an average, the result is computed by dividing the sum of the values by their count. When the result is a DecimalType, the way we cast and manage the precision and scale is not really optimized and is not coherent with what we normally do.

In particular, a problem can happen when the `Divide` operator returns a result whose precision and scale differ from the ones expected as the output of the `Divide` operator. In the case reported in the JIRA, for instance, the result of the `Divide` operator is a `Decimal(38, 36)`, while the output data type for `Divide` is `Decimal(38, 22)`. This is not an issue when the `Divide` is followed by a `CheckOverflow` or a `Cast` to the right data type, as these operations return a decimal with the defined precision and scale. Although the `Average` operator does have a `Cast`, it may be bypassed if the result of `Divide` is of the same type it is cast to, hence the issue reported in the JIRA may arise.

The PR proposes to use the normal rules/handling of the arithmetic operators with the Decimal data type, so that we both reuse the existing code (having a single logic for operations between decimals) and fix this problem, as the result is always guarded by `CheckOverflow`.
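
To make the role of the guard concrete, here is a small sketch using Python's `decimal` module. It only models the idea of forcing a value to a declared precision and scale; it is not Spark's `CheckOverflow`. The function name, the half-up rounding mode, and returning `None` on overflow are assumptions of the sketch.

```python
from decimal import Decimal, ROUND_HALF_UP, localcontext


def check_overflow(value, precision, scale):
    """Round value to `scale` fractional digits; return None if it does not fit `precision`."""
    with localcontext() as ctx:
        ctx.prec = 100  # plenty of working digits so quantize itself does not fail here
        rounded = value.quantize(Decimal(1).scaleb(-scale), rounding=ROUND_HALF_UP)
    _, digits, exponent = rounded.as_tuple()
    integer_digits = max(len(digits) + exponent, 0)
    if integer_digits > precision - scale:
        return None  # the value needs more integer digits than the declared type allows
    return rounded


# A value carrying scale 36 is brought back to the declared scale 22.
raw = Decimal("0." + "3" * 36)
print(check_overflow(raw, 38, 22))
```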

## How was this patch tested?

added UT

Author: Marco Gaido <marcogaido91@gmail.com>

Closes #21910 from mgaido91/SPARK-24957.

## What changes were proposed in this pull request?

Implements INTERSECT ALL clause through query rewrites using existing operators in Spark. Please refer to [Link](https://drive.google.com/open?id=1nyW0T0b_ajUduQoPgZLAsyHK8s3_dko3ulQuxaLpUXE) for the design.

Input Query

```SQL
SELECT c1 FROM ut1 INTERSECT ALL SELECT c1 FROM ut2
```

Rewritten Query

```SQL
SELECT c1
FROM (
  SELECT replicate_rows(min_count, c1)
  FROM (
    SELECT c1,
           IF (vcol1_cnt > vcol2_cnt, vcol2_cnt, vcol1_cnt) AS min_count
    FROM (
      SELECT c1, count(vcol1) as vcol1_cnt, count(vcol2) as vcol2_cnt
      FROM (
        SELECT c1, true as vcol1, null as vcol2 FROM ut1
        UNION ALL
        SELECT c1, null as vcol1, true as vcol2 FROM ut2
      ) AS union_all
      GROUP BY c1
      HAVING vcol1_cnt >= 1 AND vcol2_cnt >= 1
    )
  )
)
```
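
The effect of the rewrite (count each value per side, keep the smaller count, then replicate) can be checked against a plain-Python model. This is only a sketch of the multiset semantics on single-column rows, not of how Spark executes the plan; the function name is made up for illustration.

```python
from collections import Counter


def intersect_all(left, right):
    """Multiset intersection: each value appears min(count in left, count in right) times."""
    left_counts, right_counts = Counter(left), Counter(right)
    result = []
    for value, left_count in left_counts.items():
        min_count = min(left_count, right_counts[value])  # 0 when absent on the right
        result.extend([value] * min_count)                # plays the role of row replication
    return result


print(sorted(intersect_all([1, 1, 2, 3], [1, 1, 1, 3, 4])))  # [1, 1, 3]
```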

## How was this patch tested?

Added test cases in SQLQueryTestSuite, DataFrameSuite and SetOperationSuite.

Author: Dilip Biswal <dbiswal@us.ibm.com>

Closes #21886 from dilipbiswal/dkb_intersect_all_final.

## What changes were proposed in this pull request?

Implements EXCEPT ALL clause through query rewrites using existing operators in Spark. In this PR, an internal UDTF (replicate_rows) is added to aid in preserving duplicate rows. Please refer to [Link](https://drive.google.com/open?id=1nyW0T0b_ajUduQoPgZLAsyHK8s3_dko3ulQuxaLpUXE) for the design.

**Note:** The proposed UDTF is kept as an internal function that is used purely to aid this particular rewrite, which gives us the flexibility to change to a more generalized UDTF in the future.

Input Query

```SQL
SELECT c1 FROM ut1 EXCEPT ALL SELECT c1 FROM ut2
```

Rewritten Query

```SQL
SELECT c1
FROM (
  SELECT replicate_rows(sum_val, c1)
  FROM (
    SELECT c1, sum_val
    FROM (
      SELECT c1, sum(vcol) AS sum_val
      FROM (
        SELECT 1L as vcol, c1 FROM ut1
        UNION ALL
        SELECT -1L as vcol, c1 FROM ut2
      ) AS union_all
      GROUP BY union_all.c1
    )
    WHERE sum_val > 0
  )
)
```
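
The same steps (tag left rows with +1 and right rows with -1, sum per value, keep positive sums, replicate) can be modeled in a few lines of plain Python. This is a sketch of the semantics on single-column rows, not of Spark's execution; the function name is hypothetical.

```python
from collections import Counter


def except_all(left, right):
    """Multiset difference: each value appears (count in left - count in right) times, if positive."""
    sums = Counter()
    for value in left:
        sums[value] += 1   # vcol = 1 for rows of the left side
    for value in right:
        sums[value] -= 1   # vcol = -1 for rows of the right side
    result = []
    for value, sum_val in sums.items():
        if sum_val > 0:                           # WHERE sum_val > 0
            result.extend([value] * sum_val)      # replicate the row sum_val times
    return result


print(sorted(except_all([1, 1, 1, 2, 3], [1, 2, 2, 4])))  # [1, 1, 3]
```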

## How was this patch tested?

Added test cases in SQLQueryTestSuite, DataFrameSuite and SetOperationSuite

Author: Dilip Biswal <dbiswal@us.ibm.com>

Closes #21857 from dilipbiswal/dkb_except_all_final.

## What changes were proposed in this pull request?

In this PR, I added a new option for the Avro datasource, `compression`. The option allows specifying the compression codec for saved Avro files. This option is similar to the `compression` option in other datasources like `JSON` and `CSV`.

I also added the SQL configs `spark.sql.avro.compression.codec` and `spark.sql.avro.deflate.level`. I put the configs into `SQLConf`. If the `compression` option is not specified by a user, the first SQL config is taken into account.

## How was this patch tested?

I added a new test which reads meta info from the written Avro files and checks the `avro.codec` property.

Author: Maxim Gekk <maxim.gekk@databricks.com>

Closes #21837 from MaxGekk/avro-compression.

## What changes were proposed in this pull request?

This PR adds a new collection function: shuffle. It generates a random permutation of the given array. This implementation uses the "inside-out" version of the Fisher-Yates algorithm.
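
For readers unfamiliar with the "inside-out" variant, here is a minimal plain-Python sketch of the algorithm. It builds the shuffled copy while reading the source once and never mutates the input. It is an illustration of the technique, not the Spark expression; the function signature and the optional `rng` parameter are assumptions.

```python
import random


def shuffle(source, rng=None):
    """Return a random permutation of source using the inside-out Fisher-Yates algorithm."""
    if rng is None:
        rng = random.Random()
    result = []
    for i, item in enumerate(source):
        j = rng.randint(0, i)  # uniform over 0..i, both ends inclusive
        if j == i:
            result.append(item)
        else:
            result.append(result[j])  # move the element at j to the new last slot
            result[j] = item          # and put the incoming element at position j
    return result


data = [1, 2, 3, 4, 5]
print(sorted(shuffle(data)) == data)  # True
```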

## How was this patch tested?

New tests are added to CollectionExpressionsSuite.scala and DataFrameFunctionsSuite.scala.

Author: Takuya UESHIN <ueshin@databricks.com>

Author: pkuwm <ihuizhi.lu@gmail.com>

Closes #21802 from ueshin/issues/SPARK-23928/shuffle.

## What changes were proposed in this pull request?

AnalysisBarrier was introduced in SPARK-20392 to improve analysis speed (don't re-analyze nodes that have already been analyzed).

Before AnalysisBarrier, we already had some infrastructure in place, with analysis-specific functions (resolveOperators and resolveExpressions). These functions do not recursively traverse down subplans that are already analyzed (with a mutable boolean flag _analyzed). The issue with the old system was that developers started using transformDown, which does a top-down traversal of the plan tree, because there was no top-down resolution function, and as a result analyzer performance became pretty bad.

In order to fix the issue in SPARK-20392, AnalysisBarrier was introduced as a special node, and for this special node, transform/transformUp/transformDown don't traverse down. However, the introduction of this special node caused a lot more trouble than it solved. This implicit node breaks assumptions and code in a few places, and it's hard to know when an analysis barrier would exist and when it wouldn't. Just a simple search for AnalysisBarrier in PR discussions demonstrates that it is a source of bugs and additional complexity.

Instead, this pull request removes AnalysisBarrier and reverts to the old approach. We added test infrastructure that fails explicitly if transform methods are used in the analyzer.

## How was this patch tested?

Added a test suite AnalysisHelperSuite for testing the resolve* methods and transform* methods.

Author: Reynold Xin <rxin@databricks.com>

Author: Xiao Li <gatorsmile@gmail.com>

Closes #21822 from rxin/SPARK-24865.

## What changes were proposed in this pull request?

This is an extension to the original PR, in which rule exclusion did not work for classes derived from Optimizer, e.g., SparkOptimizer.

To solve this issue, Optimizer and its derived classes will define/override `defaultBatches` and `nonExcludableRules` in order to define its default rule set as well as rules that cannot be excluded by the SQL config. In the meantime, Optimizer's `batches` method is dedicated to the rule exclusion logic and is defined "final".
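
The division of responsibilities described above can be sketched as follows in plain Python. This is not Spark's `Optimizer`: the rule and batch names are illustrative only, dropping batches that become empty is an assumption, and Python has no `final`, so `batches` is merely documented as not meant to be overridden.

```python
class Optimizer:
    def default_batches(self):
        """Subclasses override this to extend the default rule set."""
        return [
            ("Finish Analysis", ["EliminateSubqueryAliases"]),
            ("Operator Optimization", ["PushDownPredicate", "ColumnPruning", "ConstantFolding"]),
        ]

    def non_excludable_rules(self):
        """Rules that must run regardless of the exclusion config."""
        return {"EliminateSubqueryAliases"}

    def batches(self, excluded_rules_conf=""):
        """Holds the exclusion logic only; treated as final (do not override)."""
        requested = {name.strip() for name in excluded_rules_conf.split(",") if name.strip()}
        excluded = requested - self.non_excludable_rules()
        result = []
        for batch_name, rules in self.default_batches():
            kept = [rule for rule in rules if rule not in excluded]
            if kept:  # assumption: a batch left without rules is dropped
                result.append((batch_name, kept))
        return result


class SparkOptimizer(Optimizer):
    def default_batches(self):
        return super().default_batches() + [("User Provided Optimizers", ["MyExtraRule"])]


print(SparkOptimizer().batches("ColumnPruning, MyExtraRule, EliminateSubqueryAliases"))
```

Because the derived class only contributes to `default_batches`, the exclusion logic in `batches` applies to its rules as well.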

## How was this patch tested?

Added UT.

Author: maryannxue <maryannxue@apache.org>

Closes #21876 from maryannxue/rule-exclusion.

## What changes were proposed in this pull request?

If we use the `reverse` function on an array of a primitive type that contains `null`, and the child array is `UnsafeArrayData`, the function returns a wrong result. This is because `UnsafeArrayData` doesn't define the behavior of re-assignment; in particular, we can't set a valid value after we set `null`.

## How was this patch tested?

Added some tests.

Author: Takuya UESHIN <ueshin@databricks.com>

Closes #21830 from ueshin/issues/SPARK-24878/fix_reverse.

## What changes were proposed in this pull request?

In addition to the Spark setting spark.sql.sources.partitionOverwriteMode, also allow setting partitionOverWriteMode per write.

## How was this patch tested?

Added a unit test in InsertSuite.

Author: Koert Kuipers <koert@tresata.com>

Closes #21818 from koertkuipers/feat-partition-overwrite-mode-per-write.

## What changes were proposed in this pull request?

In this PR, I propose to extend the `StructType`/`StructField` classes with a new method, `toDDL`, which converts a value of the `StructType`/`StructField` type to a string formatted in DDL style. The resulting string can be used in table creation.

The `toDDL` method of `StructField` is reused in `SHOW CREATE TABLE`. In this way the PR fixes the bug of unquoted names of nested fields.
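
Why quoting nested names matters can be seen in a small plain-Python sketch. It is only an illustration: the dict-based schema representation and all function names are hypothetical, and the exact output format of Spark's `toDDL` may differ from what is printed here.

```python
def quote(name):
    """Backtick-quote an identifier, doubling any embedded backticks."""
    return "`" + name.replace("`", "``") + "`"


def type_to_sql(data_type):
    """Render a type; a dict models a struct whose field names are quoted recursively."""
    if isinstance(data_type, dict):
        inner = ", ".join(f"{quote(n)}: {type_to_sql(t)}" for n, t in data_type.items())
        return f"STRUCT<{inner}>"
    return data_type.upper()


def field_to_ddl(name, data_type):
    return f"{quote(name)} {type_to_sql(data_type)}"


def struct_to_ddl(fields):
    return ",".join(field_to_ddl(n, t) for n, t in fields.items())


# Names containing dots or spaces stay unambiguous at every nesting level.
print(struct_to_ddl({"a": "int", "b.c": {"d e": "string"}}))
```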

## How was this patch tested?

I added a test for checking the new method and 2 round-trip tests: `fromDDL` -> `toDDL` and `toDDL` -> `fromDDL`.

Author: Maxim Gekk <maxim.gekk@databricks.com>

Closes #21803 from MaxGekk/to-ddl.

## What changes were proposed in this pull request?

Improve the type-mismatch message of the `IN` predicate. Before this patch:

```sql
Mismatched columns:
[(, t, 4, ., `, t, 4, a, `, :, d, o, u, b, l, e, ,, , t, 5, ., `, t, 5, a, `, :, d, e, c, i, m, a, l, (, 1, 8, ,, 0, ), ), (, t, 4, ., `, t, 4, c, `, :, s, t, r, i, n, g, ,, , t, 5, ., `, t, 5, c, `, :, b, i, g, i, n, t, )]
```

After this patch:

```sql
Mismatched columns:
[(t4.`t4a`:double, t5.`t5a`:decimal(18,0)), (t4.`t4c`:string, t5.`t5c`:bigint)]
```
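
Output of this shape typically appears when a string is joined character by character instead of a sequence of strings being joined. The plain-Python sketch below reproduces both messages under that assumption; it does not claim to show the actual cause in Spark's code, and the function name is made up.

```python
pairs = [
    "(t4.`t4a`:double, t5.`t5a`:decimal(18,0))",
    "(t4.`t4c`:string, t5.`t5c`:bigint)",
]


def format_mismatched(columns):
    """Join the given items with ', ' and wrap them in brackets."""
    return "[" + ", ".join(columns) + "]"


# Passing one concatenated string: join iterates over its characters.
broken = format_mismatched("".join(pairs))
# Passing the list of pair descriptions: join iterates over whole items.
fixed = format_mismatched(pairs)

print(broken[:32])
print(fixed)
```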

## How was this patch tested?

unit tests

Author: Yuming Wang <yumwang@ebay.com>

Closes #21863 from wangyum/SPARK-18874.

## What changes were proposed in this pull request?

Thanks to henryr for the original idea at https://github.com/apache/spark/pull/21049

Description from the original PR:

Subqueries (at least in SQL) have 'bag of tuples' semantics. Ordering them is therefore redundant (unless combined with a limit).

This patch removes the top sort operators from the subquery plans.

This closes https://github.com/apache/spark/pull/21049.

## How was this patch tested?

Added test cases in SubquerySuite to cover in, exists and scalar subqueries.

Author: Dilip Biswal <dbiswal@us.ibm.com>

Closes #21853 from dilipbiswal/SPARK-23957.

## What changes were proposed in this pull request?

When `trueValue` and `falseValue` are semantically equivalent, the condition expression in `if` can be removed to avoid extra computation at runtime.

## How was this patch tested?

Test added.

Author: DB Tsai <d_tsai@apple.com>

Closes #21848 from dbtsai/short-circuit-if.

## What changes were proposed in this pull request?

The HandleNullInputsForUDF would always add a new `If` node every time it is applied. That would cause a difference between the same plan being analyzed once and being analyzed twice (or more), thus raising issues like plan not matched in the cache manager. The solution is to mark the arguments as null-checked, which is to add a "KnownNotNull" node above those arguments, when adding the UDF under an `If` node, because clearly the UDF will not be called when any of those arguments is null.
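
The idempotence argument can be illustrated with a toy expression model in plain Python. The classes below only borrow names from the description (`If`, `KnownNotNull`); the data model, the `nullable` flag, and the rule function are hypothetical simplifications, not the Catalyst rule.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Arg:
    name: str
    nullable: bool


@dataclass(frozen=True)
class KnownNotNull:
    child: Arg


@dataclass(frozen=True)
class Udf:
    args: Tuple[object, ...]


@dataclass(frozen=True)
class If:
    any_null: Tuple[str, ...]  # names of the arguments that are checked for null
    then_value: object         # result when any of them is null
    else_value: object         # the guarded UDF call


def handle_null_inputs(expr):
    """Guard a UDF with a null check once; arguments already marked are left alone."""
    if isinstance(expr, If):
        return If(expr.any_null, expr.then_value, handle_null_inputs(expr.else_value))
    if isinstance(expr, Udf):
        unchecked = [a for a in expr.args if isinstance(a, Arg) and a.nullable]
        if not unchecked:
            return expr  # nothing left to guard, so no new If is added
        marked = tuple(KnownNotNull(a) if a in unchecked else a for a in expr.args)
        return If(tuple(a.name for a in unchecked), None, Udf(marked))
    return expr


udf = Udf((Arg("a", True), Arg("b", False)))
once = handle_null_inputs(udf)
twice = handle_null_inputs(once)
print(once == twice)  # True
```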

## How was this patch tested?

Add new tests under sql/UDFSuite and AnalysisSuite.

Author: maryannxue <maryannxue@apache.org>

Closes #21851 from maryannxue/spark-24891.

## What changes were proposed in this pull request?

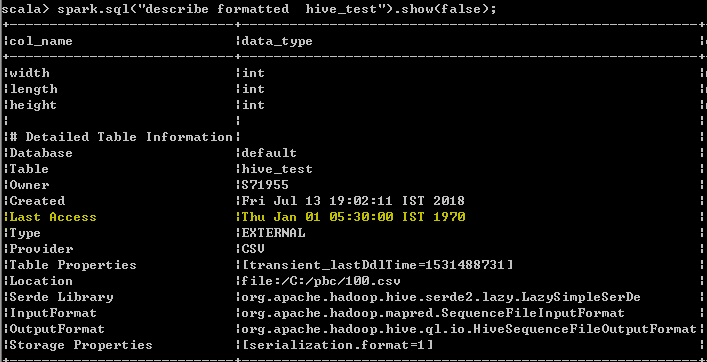

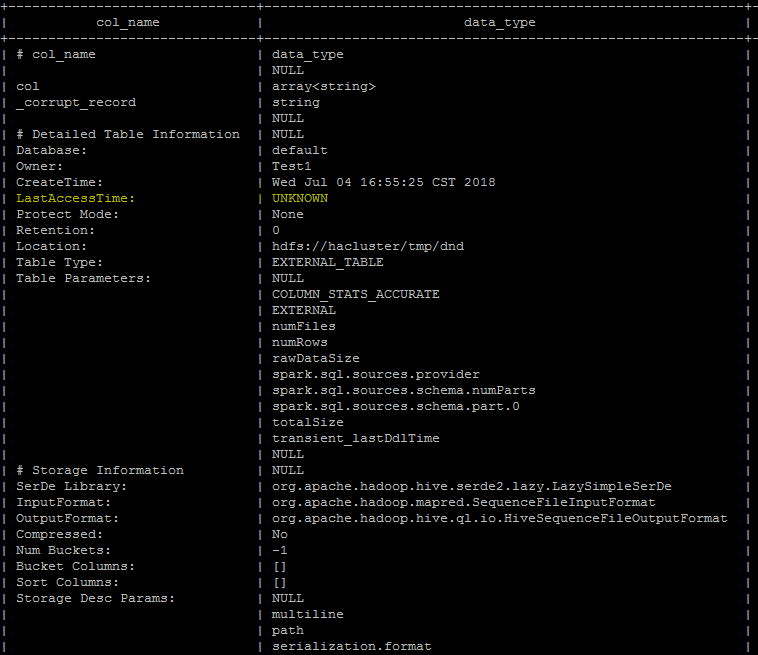

Last Access Time will always displayed wrong date Thu Jan 01 05:30:00 IST 1970 when user run DESC FORMATTED table command

In hive its displayed as "UNKNOWN" which makes more sense than displaying wrong date. seems to be a limitation as of now even from hive, better we can follow the hive behavior unless the limitation has been resolved from hive.

spark client output

Hive client output

## How was this patch tested?

A UT has been added to make sure that the wrong date "Thu Jan 01 05:30:00 IST 1970" is not added as the value of the Last Access property.

Author: s71955 <sujithchacko.2010@gmail.com>

Closes #21775 from sujith71955/master_hive.

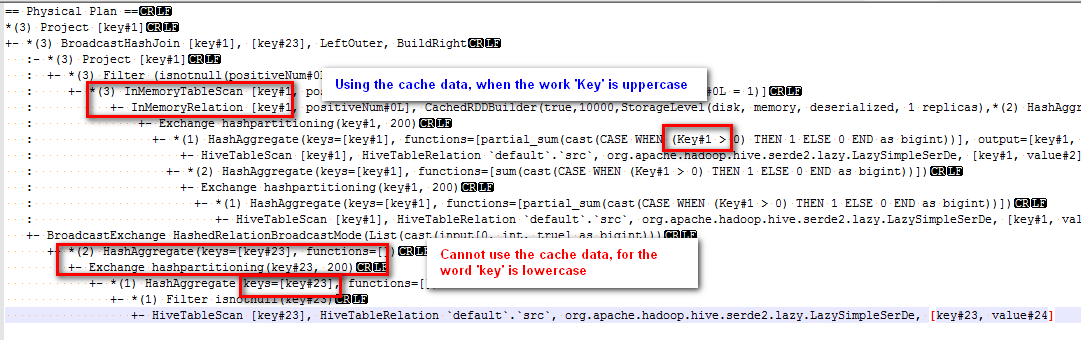

## What changes were proposed in this pull request?

Modified canonicalization so that it is not case-sensitive.

Before this PR, the cache did not work properly when the SQL contained letters in different cases, for example:

sql("CREATE TABLE IF NOT EXISTS src (key INT, value STRING) USING hive")

sql("select key, sum(case when Key > 0 then 1 else 0 end) as positiveNum " +

"from src group by key").cache().createOrReplaceTempView("src_cache")

sql(

s"""select a.key

from

(select key from src_cache where positiveNum = 1)a

left join

(select key from src_cache )b

on a.key=b.key

""").explain

The physical plan of the sql is:

The subquery "select key from src_cache where positiveNum = 1" on the left of join can use the cache data, but the subquery "select key from src_cache" on the right of join cannot use the cache data.

## How was this patch tested?

Added a new test.

Author: 10129659 <chen.yanshan@zte.com.cn>

Closes #21823 from eatoncys/canonicalized.

## What changes were proposed in this pull request?

Modify the strategy in ColumnPruning to add a Project between ScriptTransformation and its child. This can reduce the scan time, especially when the table has many columns.

## How was this patch tested?

Add UT in ColumnPruningSuite and ScriptTransformationSuite.

Author: Yuanjian Li <xyliyuanjian@gmail.com>

Closes #21839 from xuanyuanking/SPARK-24339.

## What changes were proposed in this pull request?

Since Spark has provided fairly clear interfaces for adding user-defined optimization rules, it would be nice to have an easy-to-use interface for excluding an optimization rule from the Spark query optimizer as well.

This would make customizing the Spark optimizer easier and could sometimes help with debugging issues too.

- Add a new config spark.sql.optimizer.excludedRules, with the value being a comma-separated list of rule names.

- Modify the current batches method to remove the excluded rules from the default batches. Log the rules that have been excluded.

- Split the existing default batches into "post-analysis batches" and "optimization batches" so that only rules in the "optimization batches" can be excluded.

## How was this patch tested?

Add a new test suite: OptimizerRuleExclusionSuite

Author: maryannxue <maryannxue@apache.org>

Closes #21764 from maryannxue/rule-exclusion.

## What changes were proposed in this pull request?

Currently, the Analyzer throws an exception if you try to nest a generator. However, it special-cases generators "nested" in an alias, and allows that. If you try to alias a generator twice, it is not caught by the special case, so an exception is thrown.

This PR trims the unnecessary, non-top-level aliases, so that the generator is allowed.

## How was this patch tested?

new tests in AnalysisSuite.

Author: Brandon Krieger <bkrieger@palantir.com>

Closes #21508 from bkrieger/bk/SPARK-24488.

## What changes were proposed in this pull request?

Refactor `Concat` and `MapConcat` to:

- avoid creating concatenator object for each row.

- make `Concat` handle `containsNull` properly.

- make `Concat` shortcut if `null` child is found.

## How was this patch tested?

Added some tests and existing tests.

Author: Takuya UESHIN <ueshin@databricks.com>

Closes #21824 from ueshin/issues/SPARK-24871/refactor_concat_mapconcat.

## What changes were proposed in this pull request?

Enhances the parser and analyzer to support ANSI-compliant syntax for GROUPING SETS. As part of this change, we derive the grouping expressions from the user-supplied groupings in the grouping sets clause.

```SQL
SELECT c1, c2, max(c3)
FROM t1
GROUP BY GROUPING SETS ((c1), (c1, c2))
```
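
For the query above, the derived grouping expressions would be `c1` and `c2`. One plausible way to derive them, sketched in plain Python, is to take the distinct expressions of all grouping sets in order of first appearance. This ordering rule and the function name are assumptions of the sketch, not a statement about the analyzer's code.

```python
def derive_grouping_expressions(grouping_sets):
    """Collect the distinct expressions of all grouping sets, in order of first appearance."""
    seen = set()
    result = []
    for grouping_set in grouping_sets:
        for expr in grouping_set:
            if expr not in seen:
                seen.add(expr)
                result.append(expr)
    return result


print(derive_grouping_expressions([("c1",), ("c1", "c2")]))  # ['c1', 'c2']
```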

## How was this patch tested?

Added tests in SQLQueryTestSuite and ResolveGroupingAnalyticsSuite.

Author: Dilip Biswal <dbiswal@us.ibm.com>

Closes #21813 from dilipbiswal/spark-24424.

## What changes were proposed in this pull request?

As stated in https://github.com/apache/spark/pull/21321, in the error messages we should use `catalogString`. This is not the case, as SPARK-22893 used `simpleString` in order to have the same representation everywhere and it missed some places.

The PR unifies the messages by always using the `catalogString` representation of the data types.

## How was this patch tested?

existing/modified UTs

Author: Marco Gaido <marcogaido91@gmail.com>

Closes #21804 from mgaido91/SPARK-24268_catalog.

## What changes were proposed in this pull request?

Made the ExprId hashCode independent of jvmId, so that canonicalization is independent of the JVM, by overriding hashCode (and necessarily also equality) to depend on id only.

## How was this patch tested?

Created a unit test ExprIdSuite

Ran all unit tests of sql/catalyst

Author: Ger van Rossum <gvr@users.noreply.github.com>

Closes #21806 from gvr/spark24846-canonicalization.

## What changes were proposed in this pull request?

Currently, the group state of a user-defined type is encoded as top-level columns in the UnsafeRows stored in the state store. The timeout timestamp is also saved (when needed) as the last top-level column. Since the group state is serialized to top-level columns, you cannot save "null" as a value of state (setting null in all the top-level columns is not equivalent). So we don't let the user set the timeout without initializing the state for a key. Based on user experience, this leads to confusion.

This PR changes the row format so that the state is saved as nested columns. This allows the state to be set to null and avoids these confusing corner cases. However, queries recovering from an existing checkpoint will use the previous format to maintain compatibility with existing production queries.

## How was this patch tested?

Refactored existing end-to-end tests and added new tests for explicitly testing obj-to-row conversion for both state formats.

Author: Tathagata Das <tathagata.das1565@gmail.com>

Closes #21739 from tdas/SPARK-22187-1.

## What changes were proposed in this pull request?

This patch proposes breaking down the configuration of how many batches of state to retain into two pieces: files and in memory (cache). While this patch reuses the existing configuration for files, it introduces a new configuration, "spark.sql.streaming.maxBatchesToRetainInMemory", to configure the maximum number of batches to retain in memory.

## How was this patch tested?

Applied this patch on top of SPARK-24441 (https://github.com/apache/spark/pull/21469) and manually tested it with various workloads to ensure that the overall size of the states in memory is around 2x or less of the size of the latest version of the state, while it was 10x ~ 80x before applying the patch.

Author: Jungtaek Lim <kabhwan@gmail.com>

Closes #21700 from HeartSaVioR/SPARK-24717.

## What changes were proposed in this pull request?

Fix regexes in spark-sql command examples.

This takes over https://github.com/apache/spark/pull/18477

## How was this patch tested?

Existing tests. I verified that the existing example doesn't work in spark-sql, but the new ones do.

Author: Sean Owen <srowen@gmail.com>

Closes #21808 from srowen/SPARK-21261.

## What changes were proposed in this pull request?

1. Extend the Parser to enable parsing a column list as the pivot column.

2. Extend the Parser and the Pivot node to enable parsing complex expressions with aliases as the pivot value.

3. Add type check and constant check in Analyzer for Pivot node.

## How was this patch tested?

Add tests in pivot.sql

Author: maryannxue <maryannxue@apache.org>

Closes #21720 from maryannxue/spark-24164.

## What changes were proposed in this pull request?

Two new rules in the logical plan optimizers are added.

1. When there is only one element in the **`Collection`**, the physical plan will be optimized to **`EqualTo`**, so predicate pushdown can be used.

```scala
profileDF.filter( $"profileID".isInCollection(Set(6))).explain(true)
"""
  |== Physical Plan ==
  |*(1) Project [profileID#0]
  |+- *(1) Filter (isnotnull(profileID#0) && (profileID#0 = 6))
  |   +- *(1) FileScan parquet [profileID#0] Batched: true, Format: Parquet,
  |      PartitionFilters: [],
  |      PushedFilters: [IsNotNull(profileID), EqualTo(profileID,6)],
  |      ReadSchema: struct<profileID:int>
""".stripMargin
```

2. When the **`Collection`** is empty, and the input is nullable, the logical plan will be simplified to

```scala
profileDF.filter( $"profileID".isInCollection(Set())).explain(true)
"""
  |== Optimized Logical Plan ==
  |Filter if (isnull(profileID#0)) null else false
  |+- Relation[profileID#0] parquet
""".stripMargin
```
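
The two rewrites can be summarized with a small plain-Python sketch over tuple-based expressions. The representation and the function name are hypothetical; cases the description does not cover (for example, an empty collection with a non-nullable input) are simply left unchanged here.

```python
def optimize_in(column, values, nullable):
    """Rewrite an IN predicate following the two rules described above."""
    values = list(values)
    if len(values) == 1:
        # A single element: plain equality, which data sources can push down.
        return ("EqualTo", column, values[0])
    if not values and nullable:
        # Empty collection on a nullable input: null stays null, everything else is false.
        return ("If", ("IsNull", column), None, False)
    return ("In", column, tuple(values))


print(optimize_in("profileID", [6], nullable=True))
print(optimize_in("profileID", [], nullable=True))
```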

TODO:

1. For multiple conditions with numbers less than certain thresholds, we should still allow predicate pushdown.

2. Optimize the **`In`** using **`tableswitch`** or **`lookupswitch`** when the number of categories is low and they are **`Int`** or **`Long`**.

3. The default immutable hash tree set is slow to query, and we should benchmark different set implementations for faster queries.

4. **`filter(if (condition) null else false)`** can be optimized to false.

## How was this patch tested?

A couple of new tests are added.

Author: DB Tsai <d_tsai@apple.com>

Closes #21797 from dbtsai/optimize-in.

## What changes were proposed in this pull request?

Remove the non-negative check on the window start time so that windows support a negative start time, and add a check to guarantee that the absolute value of the start time is less than the slide duration.
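
The new constraint amounts to a single comparison. A minimal plain-Python sketch, assuming all three values are expressed in the same time unit; the function name, the argument names, and the error message are made up for illustration.

```python
def validate_window(window_duration, slide_duration, start_time):
    """Accept negative start times as long as |start_time| < slide_duration."""
    if abs(start_time) >= slide_duration:
        raise ValueError(
            f"The absolute value of the start time ({start_time}) must be "
            f"less than the slide duration ({slide_duration})."
        )
    return start_time


print(validate_window(10, 5, -3))  # -3
```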

## How was this patch tested?

New unit tests.

Author: HanShuliang <kevinzwx1992@gmail.com>

Closes #18903 from KevinZwx/dev.

## What changes were proposed in this pull request?

The PR tries to avoid serialization of private fields of already added collection functions and follows up on comments in [SPARK-23922](https://github.com/apache/spark/pull/21028) and [SPARK-23935](https://github.com/apache/spark/pull/21236)

## How was this patch tested?

Run tests from:

- CollectionExpressionSuite.scala

- DataFrameFunctionsSuite.scala

Author: Marek Novotny <mn.mikke@gmail.com>

Closes #21352 from mn-mikke/SPARK-24305.

## What changes were proposed in this pull request?

Two new rules in the logical plan optimizers are added.

1. When there is only one element in the **`Collection`**, the physical plan will be optimized to **`EqualTo`**, so predicate pushdown can be used.

```scala
profileDF.filter( $"profileID".isInCollection(Set(6))).explain(true)
"""
  |== Physical Plan ==
  |*(1) Project [profileID#0]
  |+- *(1) Filter (isnotnull(profileID#0) && (profileID#0 = 6))
  |   +- *(1) FileScan parquet [profileID#0] Batched: true, Format: Parquet,
  |      PartitionFilters: [],
  |      PushedFilters: [IsNotNull(profileID), EqualTo(profileID,6)],
  |      ReadSchema: struct<profileID:int>
""".stripMargin
```

2. When the **`Collection`** is empty, and the input is nullable, the logical plan will be simplified to

```scala
profileDF.filter( $"profileID".isInCollection(Set())).explain(true)
"""
  |== Optimized Logical Plan ==
  |Filter if (isnull(profileID#0)) null else false
  |+- Relation[profileID#0] parquet
""".stripMargin
```

TODO:

1. For multiple conditions with numbers less than certain thresholds, we should still allow predicate pushdown.

2. Optimize the **`In`** using **`tableswitch`** or **`lookupswitch`** when the number of categories is low and they are **`Int`** or **`Long`**.

3. The default immutable hash tree set is slow to query, and we should benchmark different set implementations for faster queries.

4. **`filter(if (condition) null else false)`** can be optimized to false.

## How was this patch tested?

A couple of new tests are added.

Author: DB Tsai <d_tsai@apple.com>

Closes #21442 from dbtsai/optimize-in.

## What changes were proposed in this pull request?

We have some functions which need to be aware of the nullabilities of all their children, such as `CreateArray`, `CreateMap`, `Concat`, and so on. Currently we add casts to fix the nullabilities, but the casts might be removed during the optimization phase.

After the discussion, we decided to not add extra casts for just fixing the nullabilities of the nested types, but handle them by functions themselves.

## How was this patch tested?

Modified and added some tests.

Author: Takuya UESHIN <ueshin@databricks.com>

Closes #21704 from ueshin/issues/SPARK-24734/concat_containsnull.

## What changes were proposed in this pull request?

Support Decimal type push down to the parquet data sources.

The Decimal comparator used is: [`BINARY_AS_SIGNED_INTEGER_COMPARATOR`](c6764c4a08/parquet-column/src/main/java/org/apache/parquet/schema/PrimitiveComparator.java (L224-L292)).

## How was this patch tested?

unit tests and manual tests.

**manual tests**:

```scala
spark.range(10000000).selectExpr(
  "id",
  "cast(id as decimal(9)) as d1",
  "cast(id as decimal(9, 2)) as d2",
  "cast(id as decimal(18)) as d3",
  "cast(id as decimal(18, 4)) as d4",
  "cast(id as decimal(38)) as d5",
  "cast(id as decimal(38, 18)) as d6"
).coalesce(1).write.option("parquet.block.size", 1048576).parquet("/tmp/spark/parquet/decimal")

val df = spark.read.parquet("/tmp/spark/parquet/decimal/")

spark.sql("set spark.sql.parquet.filterPushdown.decimal=true")
// Only read about 1 MB data
df.filter("d2 = 10000").show
// Only read about 1 MB data
df.filter("d4 = 10000").show

spark.sql("set spark.sql.parquet.filterPushdown.decimal=false")
// Read 174.3 MB data
df.filter("d2 = 10000").show
// Read 174.3 MB data
df.filter("d4 = 10000").show
```

Author: Yuming Wang <yumwang@ebay.com>

Closes #21556 from wangyum/SPARK-24549.

## What changes were proposed in this pull request?

Support `Timestamp` push down to the Parquet data source.

Only `TIMESTAMP_MICROS` and `TIMESTAMP_MILLIS` support push down.

## How was this patch tested?

unit tests and benchmark tests

Author: Yuming Wang <yumwang@ebay.com>

Closes #21741 from wangyum/SPARK-24718.