### What changes were proposed in this pull request?

The earlier attempts in https://github.com/apache/spark/pull/28692, https://github.com/apache/spark/pull/28719, and https://github.com/apache/spark/pull/28727 all have limitations, as mentioned in their discussions.

The only remaining option may be to forbid these week-based fields altogether.

### Why are the changes needed?

These week-based fields need a Locale to express their semantics, because the first day of the week varies from country to country.

From the Javadoc of `WeekFields`:

```java
/**
 * Gets the first day-of-week.
 * <p>
 * The first day-of-week varies by culture.
 * For example, the US uses Sunday, while France and the ISO-8601 standard use Monday.
 * This method returns the first day using the standard {@code DayOfWeek} enum.
 *
 * @return the first day-of-week, not null
 */
public DayOfWeek getFirstDayOfWeek() {
    return firstDayOfWeek;
}
```

But in `SimpleDateFormat`, the day-of-week is not localized:

```
u Day number of week (1 = Monday, ..., 7 = Sunday) Number 1
```
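
To make the locale dependence described in the `WeekFields` Javadoc concrete, here is a minimal sketch that asks `java.time` for the first day of the week under a few locales. The class and method names (`FirstDayOfWeekDemo`, `firstDay`) are made up for illustration and are not part of Spark.

```java
import java.time.DayOfWeek;
import java.time.temporal.WeekFields;
import java.util.Locale;

// Hypothetical demo class; not part of Spark.
final class FirstDayOfWeekDemo {
    // Returns the first day of the week that java.time derives from the locale.
    static DayOfWeek firstDay(Locale locale) {
        return WeekFields.of(locale).getFirstDayOfWeek();
    }

    public static void main(String[] args) {
        System.out.println("US:     " + firstDay(Locale.US));                 // SUNDAY
        System.out.println("France: " + firstDay(Locale.FRANCE));             // MONDAY
        System.out.println("ISO:    " + WeekFields.ISO.getFirstDayOfWeek());  // MONDAY
    }
}
```

The same date can therefore land on a different day number, week, or week-based year depending only on the locale passed in.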

Currently, the default locale we use is US, so the result moves a day, a week, or a year backward.

For example, the date `2019-12-29` (a Sunday) belongs to week-based-year 2020 in a Sunday-start system (e.g. en-US) but to 2019 in a Monday-start system (e.g. en-GB). The week-of-week-based-year (`w`) is affected too:

```sql
spark-sql> SELECT to_csv(named_struct('time', to_timestamp('2019-12-29', 'yyyy-MM-dd')), map('timestampFormat', 'YYYY', 'locale', 'en-US'));
2020
spark-sql> SELECT to_csv(named_struct('time', to_timestamp('2019-12-29', 'yyyy-MM-dd')), map('timestampFormat', 'YYYY', 'locale', 'en-GB'));
2019
spark-sql> SELECT to_csv(named_struct('time', to_timestamp('2019-12-29', 'yyyy-MM-dd')), map('timestampFormat', 'YYYY-ww-uu', 'locale', 'en-US'));
2020-01-01
spark-sql> SELECT to_csv(named_struct('time', to_timestamp('2019-12-29', 'yyyy-MM-dd')), map('timestampFormat', 'YYYY-ww-uu', 'locale', 'en-GB'));
2019-52-07
spark-sql> SELECT to_csv(named_struct('time', to_timestamp('2020-01-05', 'yyyy-MM-dd')), map('timestampFormat', 'YYYY-ww-uu', 'locale', 'en-US'));
2020-02-01
spark-sql> SELECT to_csv(named_struct('time', to_timestamp('2020-01-05', 'yyyy-MM-dd')), map('timestampFormat', 'YYYY-ww-uu', 'locale', 'en-GB'));
2020-01-07
```

For other countries, please refer to [First Day of the Week in Different Countries](http://chartsbin.com/view/41671)
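
The week-based results above can be reproduced outside Spark with plain `java.time`. The sketch below is only an illustration: it uses the locale-independent constants `WeekFields.SUNDAY_START` and `WeekFields.ISO` as stand-ins for what en-US and en-GB typically resolve to (an assumption made so the output does not depend on the JDK's locale data), and the class and method names are hypothetical.

```java
import java.time.LocalDate;
import java.time.temporal.WeekFields;
import java.util.Locale;

// Hypothetical demo class; not part of Spark.
final class WeekBasedFieldsDemo {
    // Renders week-based-year, week-of-week-based-year and localized day-of-week,
    // roughly what the 'YYYY-ww-uu' pattern produces in the examples above.
    static String render(LocalDate date, WeekFields rules) {
        return String.format(Locale.ROOT, "%04d-%02d-%02d",
            date.get(rules.weekBasedYear()),
            date.get(rules.weekOfWeekBasedYear()),
            date.get(rules.dayOfWeek()));
    }

    public static void main(String[] args) {
        LocalDate d1 = LocalDate.of(2019, 12, 29);
        LocalDate d2 = LocalDate.of(2020, 1, 5);
        System.out.println(render(d1, WeekFields.SUNDAY_START)); // 2020-01-01
        System.out.println(render(d1, WeekFields.ISO));          // 2019-52-07
        System.out.println(render(d2, WeekFields.SUNDAY_START)); // 2020-02-01
        System.out.println(render(d2, WeekFields.ISO));          // 2020-01-07
    }
}
```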

### Does this PR introduce _any_ user-facing change?

With this change, users can no longer use 'Y', 'w', 'u', or 'W'; 'e' can be used in place of 'u'. This at least keeps the change from being a silent data change.

### How was this patch tested?

add unit tests

Closes #28728 from yaooqinn/SPARK-31879-NEW2.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR disables week-based date fields for parsing.

Closes #28674

### Why are the changes needed?

1. Filling the gap between SimpleDateFormat and DateTimeFormatter, while staying backward-compatible across different JDKs, is an unfixable behavior change. A lot of effort went into proving this at https://github.com/apache/spark/pull/28674

2. The existing behavior itself in 2.4 is confusing, e.g.

```sql
spark-sql> select to_timestamp('1', 'w');
1969-12-28 00:00:00
spark-sql> select to_timestamp('1', 'u');
1970-01-05 00:00:00
```

The 'u' here does not seem to go to the Monday of the first week in week-based form, or to the first day of the year in non-week-based form; instead it goes to the Monday of the second week in week-based form.

And, e.g.

```sql
spark-sql> select to_timestamp('2020 2020', 'YYYY yyyy');
2020-01-01 00:00:00
spark-sql> select to_timestamp('2020 2020', 'yyyy YYYY');
2019-12-29 00:00:00
spark-sql> select to_timestamp('2020 2020 1', 'YYYY yyyy w');
NULL
spark-sql> select to_timestamp('2020 2020 1', 'yyyy YYYY w');
2019-12-29 00:00:00
```

I think we don't need to introduce all the weird behavior from Java

3. The current test coverage for week-based date fields is almost 0%, which indicates that we've never imagined using it.

4. Avoiding JDK bugs

https://issues.apache.org/jira/browse/SPARK-31880

### Does this PR introduce _any_ user-facing change?

Yes, the 'Y/W/w/u/F/E' patterns can no longer be used in datetime parsing functions.

### How was this patch tested?

more tests added

Closes #28706 from yaooqinn/SPARK-31892.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This follows up on https://github.com/apache/spark/pull/28673, as suggested by cloud-fan at https://github.com/apache/spark/pull/28673#discussion_r432817075

In this PR, we disable datetime patterns of the form `y..y` and `Y..Y` whose lengths are greater than 10, to avoid the JDK bug described below.

The new datetime formatter introduces a silent data change like this:

```sql
spark-sql> select from_unixtime(1, 'yyyyyyyyyyy-MM-dd');
NULL
spark-sql> set spark.sql.legacy.timeParserPolicy=legacy;
spark.sql.legacy.timeParserPolicy legacy
spark-sql> select from_unixtime(1, 'yyyyyyyyyyy-MM-dd');
00000001970-01-01
spark-sql>
```

Patterns that support `SignStyle.EXCEEDS_PAD`, e.g. `y..y` (len >= 4), are formatted by the `NumberPrinterParser` as follows:

```java
switch (signStyle) {
  case EXCEEDS_PAD:
    if (minWidth < 19 && value >= EXCEED_POINTS[minWidth]) {
      buf.append(decimalStyle.getPositiveSign());
    }
    break;
  ....
```

Here `minWidth` equals `len(y..y)`, and `EXCEED_POINTS` is:

```java
/**
 * Array of 10 to the power of n.
 */
static final long[] EXCEED_POINTS = new long[] {
    0L,
    10L,
    100L,
    1000L,
    10000L,
    100000L,
    1000000L,
    10000000L,
    100000000L,
    1000000000L,
    10000000000L,
};
```

So when `len(y..y)` is greater than 10, an `ArrayIndexOutOfBoundsException` is raised.

On the caller side, `from_unixtime` suppresses the exception and a silent data change occurs, while `date_format` lets the `ArrayIndexOutOfBoundsException` propagate.
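
The failure mode can be illustrated in isolation. The sketch below copies the `EXCEED_POINTS` table quoted above and mirrors the quoted `EXCEEDS_PAD` check; it is a simplified stand-in rather than the JDK source, and the class and method names are hypothetical.

```java
// Hypothetical, simplified reproduction of the quoted check; not the JDK source.
final class ExceedPadSketch {
    // Same 11 entries as the table quoted above: valid indices are 0..10.
    static final long[] EXCEED_POINTS = new long[] {
        0L, 10L, 100L, 1000L, 10000L, 100000L, 1000000L,
        10000000L, 100000000L, 1000000000L, 10000000000L,
    };

    // Mirrors the EXCEEDS_PAD branch: indexes the table with the pattern width.
    static boolean needsPositiveSign(int minWidth, long value) {
        return minWidth < 19 && value >= EXCEED_POINTS[minWidth];
    }

    // True when the check throws for the given pattern width.
    static boolean failsForWidth(int minWidth) {
        try {
            needsPositiveSign(minWidth, 1970L);
            return false;
        } catch (ArrayIndexOutOfBoundsException e) {
            return true;
        }
    }

    public static void main(String[] args) {
        System.out.println(failsForWidth(10)); // false: index 10 is the last valid entry
        System.out.println(failsForWidth(11)); // true: index 11 is out of bounds
    }
}
```

Because the guard is `minWidth < 19` while the table only has 11 entries, any width from 11 to 18 indexes past the end of the array in this sketch.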

### Why are the changes needed?

fix silent data change

### Does this PR introduce _any_ user-facing change?

Yes, a SparkUpgradeException will take the place of the `null` result when the pattern contains more than 10 consecutive 'y' or 'Y'.

### How was this patch tested?

new tests

Closes #28684 from yaooqinn/SPARK-31867-2.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

If `LLL`/`qqq` is used in the datetime pattern string, and the current JDK in use has a bug for the stand-alone form (see https://bugs.openjdk.java.net/browse/JDK-8114833), throw an exception with a clear error message.
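
One plausible way to detect an affected JDK at runtime is to format a known date with `LLL` and check whether a number comes back instead of text. The sketch below illustrates that idea only; it is not necessarily how this PR implements the check, and the class and method names are hypothetical.

```java
import java.time.LocalDate;
import java.time.format.DateTimeFormatter;
import java.util.Locale;

// Hypothetical probe; not necessarily the check used in this PR.
final class StandAloneFormCheck {
    // Formats January with the stand-alone short-month pattern.
    static String formatStandAloneMonth() {
        return DateTimeFormatter.ofPattern("LLL", Locale.US)
            .format(LocalDate.of(1990, 1, 1));
    }

    // An affected JDK prints the month number "1" rather than month text.
    static boolean hasStandAloneBug() {
        return "1".equals(formatStandAloneMonth());
    }

    public static void main(String[] args) {
        System.out.println("LLL -> " + formatStandAloneMonth());
        System.out.println("affected JDK: " + hasStandAloneBug());
    }
}
```

With a probe like this, the caller can raise a clear error when `LLL` or `qqq` appears in a pattern instead of silently returning a number.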

### Why are the changes needed?

to keep backward compatibility with Spark 2.4

### Does this PR introduce _any_ user-facing change?

Yes

Spark 2.4

```
scala> sql("select date_format('1990-1-1', 'LLL')").show
+---------------------------------------------+
|date_format(CAST(1990-1-1 AS TIMESTAMP), LLL)|
+---------------------------------------------+
|                                          Jan|
+---------------------------------------------+
```

Spark 3.0 with Java 11

```
scala> sql("select date_format('1990-1-1', 'LLL')").show
+---------------------------------------------+
|date_format(CAST(1990-1-1 AS TIMESTAMP), LLL)|
+---------------------------------------------+
|                                          Jan|
+---------------------------------------------+
```

Spark 3.0 with Java 8

```
// before this PR
+---------------------------------------------+
|date_format(CAST(1990-1-1 AS TIMESTAMP), LLL)|
+---------------------------------------------+
|                                            1|
+---------------------------------------------+
// after this PR
scala> sql("select date_format('1990-1-1', 'LLL')").show
org.apache.spark.SparkUpgradeException
```

### How was this patch tested?

manual test with java 8 and 11

Closes #28646 from cloud-fan/format.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Five consecutive pattern characters of 'G/M/L/E/u/Q/q' mean Narrow-Text Style in `java.time.DateTimeFormatterBuilder`, which we have used since 3.0.0. It outputs only the leading single letter of the value, e.g. `December` becomes `D`. In Spark 2.4 they mean Full-Text Style.

In this PR, we explicitly disable Narrow-Text Style for these pattern characters.
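
The difference between the two styles can be seen with plain JDK classes, independent of Spark. This is a minimal sketch comparing `SimpleDateFormat` with `DateTimeFormatter` for the same five-letter month pattern; the class and method names are hypothetical.

```java
import java.text.SimpleDateFormat;
import java.time.LocalDate;
import java.time.format.DateTimeFormatter;
import java.util.Calendar;
import java.util.GregorianCalendar;
import java.util.Locale;

// Hypothetical demo class; not part of Spark.
final class NarrowTextStyleDemo {
    // Formats 2019-12-15 with the java.time formatter.
    static String javaTime(String pattern) {
        return DateTimeFormatter.ofPattern(pattern, Locale.US)
            .format(LocalDate.of(2019, 12, 15));
    }

    // Formats 2019-12-15 with the legacy formatter.
    static String legacy(String pattern) {
        return new SimpleDateFormat(pattern, Locale.US)
            .format(new GregorianCalendar(2019, Calendar.DECEMBER, 15).getTime());
    }

    public static void main(String[] args) {
        System.out.println(legacy("MMMMM"));   // December (full text)
        System.out.println(javaTime("MMMMM")); // D (narrow text)
        System.out.println(javaTime("MMMM"));  // December (full text)
    }
}
```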

### Why are the changes needed?

Without this change, there will be a silent data change.

### Does this PR introduce _any_ user-facing change?

Yes, queries with datetime operations that use patterns such as `G/M/L/E/u` will fail if the pattern length is 5. Other pattern letters, e.g. 'k' and 'm', likewise accept only a certain number of letters.

1. Datetime patterns that are supported by the legacy parser but not by the new one, e.g. "GGGGG", "MMMMM", "LLLLL", "EEEEE", "uuuuu", "aa", "aaa", get a SparkUpgradeException. End users are given two options: use legacy mode, or follow the new online doc for correct datetime patterns.

2. Datetime patterns that are supported by neither the new parser nor the legacy one, e.g. "QQQQQ", "qqqqq", get an IllegalArgumentException, which Spark captures internally and turns into a NULL result for end users.

### How was this patch tested?

add unit tests

Closes #28592 from yaooqinn/SPARK-31771.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

1. Describe standard 'M' and stand-alone 'L' text forms

2. Add examples for all supported number of month letters

<img width="1047" alt="Screenshot 2020-05-18 at 08 57 31" src="https://user-images.githubusercontent.com/1580697/82178856-b16f1000-98e5-11ea-87c0-456ef94dcd43.png">

### Why are the changes needed?

To improve docs and show how to use month patterns.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

By building the docs and checking them visually.

Closes #28558 from MaxGekk/describe-L-M-date-pattern.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

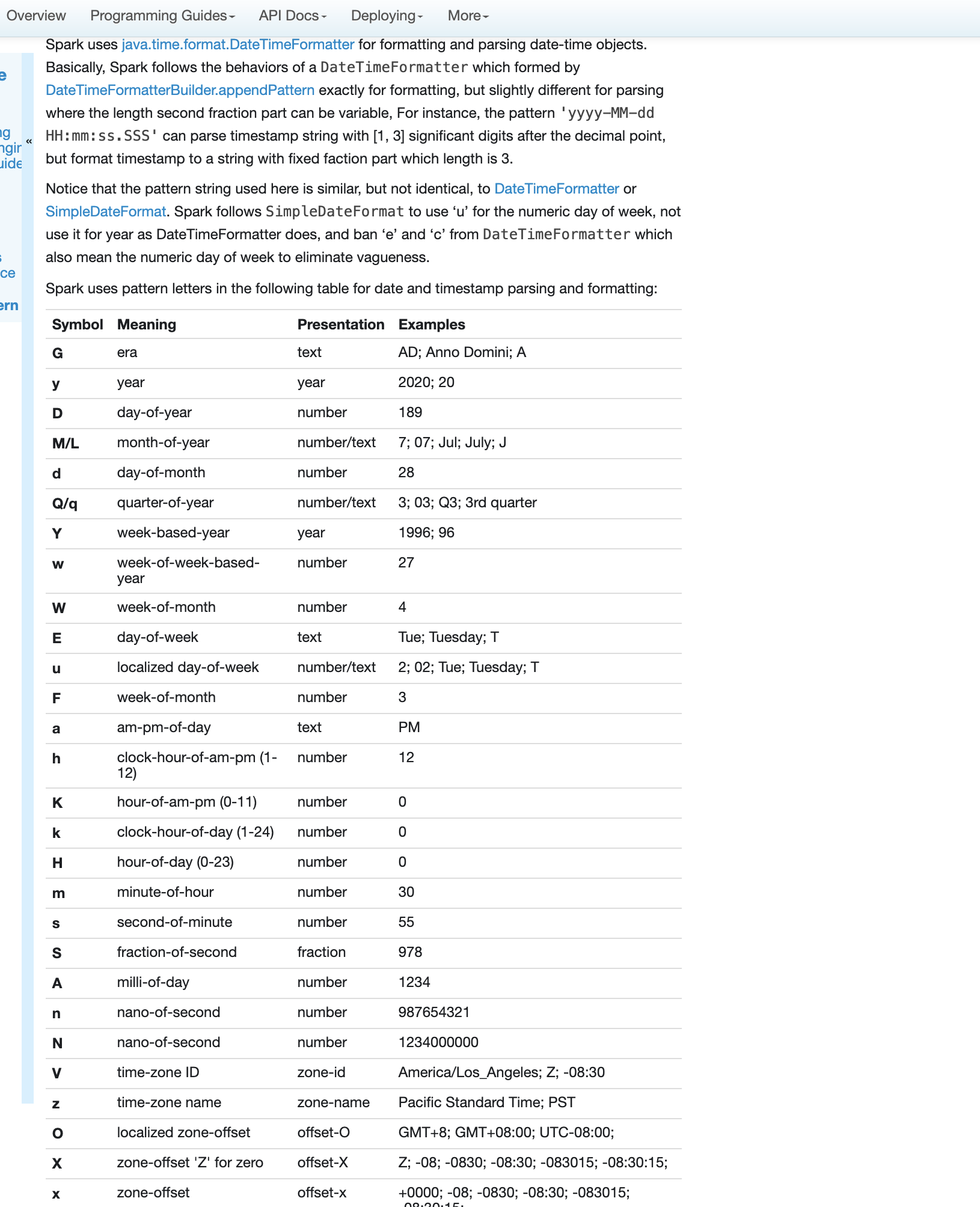

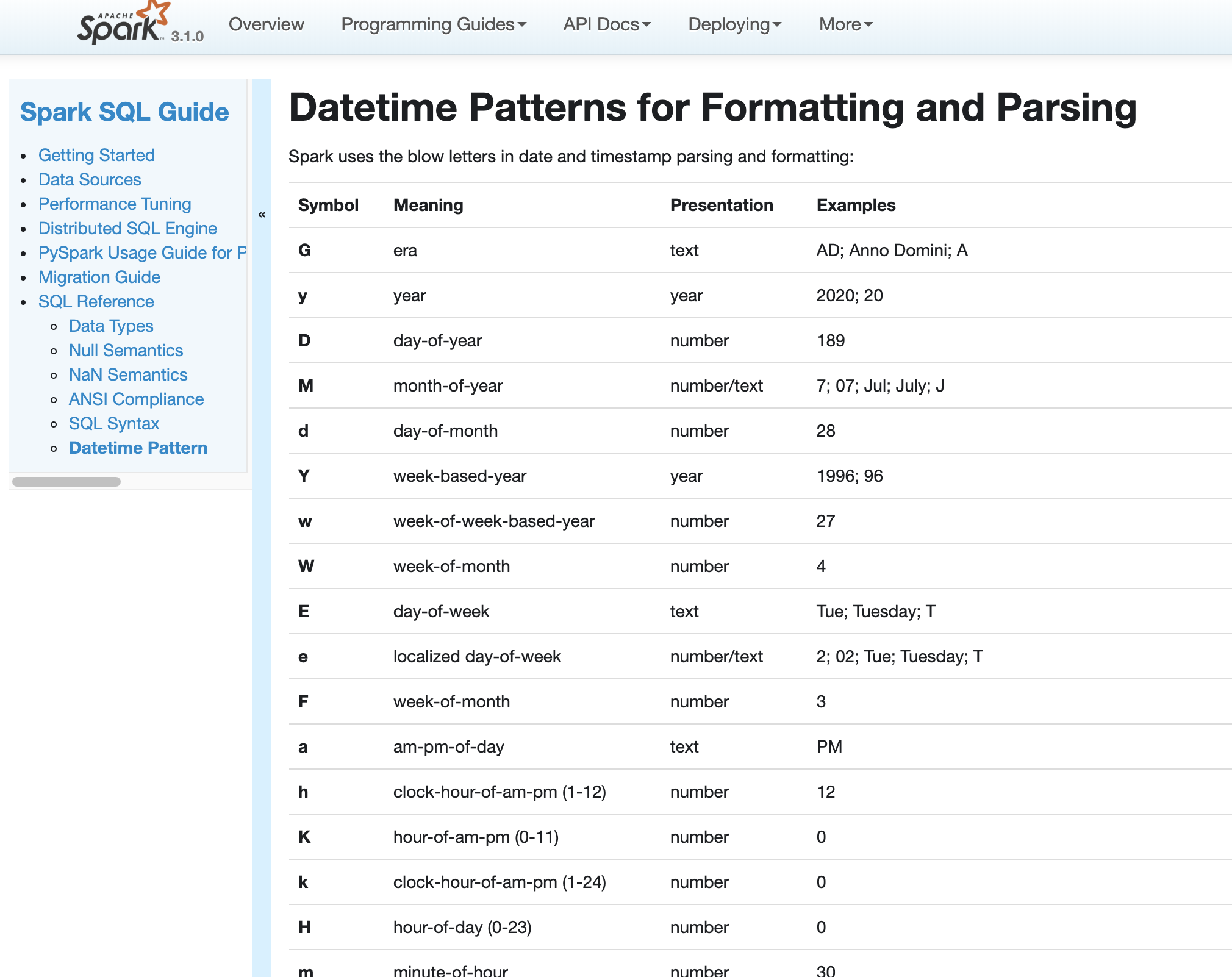

### What changes were proposed in this pull request?

Fix errors and missing parts for datetime pattern document

1. The pattern we use is similar to DateTimeFormatter and SimpleDateFormat but not identical. So we shouldn't use any of them in the API docs but use a link to the doc of our own.

2. Some pattern letters are missing

3. Some pattern letters are explicitly banned - Set('A', 'c', 'e', 'n', 'N')

4. The second-fraction pattern uses different logic for parsing and formatting

### Why are the changes needed?

fix and improve doc

### Does this PR introduce any user-facing change?

yes, new and updated doc

### How was this patch tested?

pass Jenkins

viewed locally with `jekyll serve`

Closes #27956 from yaooqinn/SPARK-31189.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In SimpleDateFormat, 'u' meant the day number of the week; in DateTimeFormatter it means year. We keep the old meaning of 'u' by substituting 'e' for 'u' internally and then using DateTimeFormatter to parse the pattern string. In DateTimeFormatter, 'e' and 'c' also represent day-of-week, e.g.

```sql
select date_format(timestamp '2019-10-06', 'yyyy-MM-dd uuuu');
select date_format(timestamp '2019-10-06', 'yyyy-MM-dd uuee');
select date_format(timestamp '2019-10-06', 'yyyy-MM-dd eeee');
```

Because of the substitution, they all silently become `.... eeee`. Users may be confused about what these letters mean, so we should mark 'e' and 'c' as illegal pattern characters to stay the same as before.
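
The change in the meaning of 'u' between the two JDK formatters can be checked directly. The following is a small sketch using only JDK classes, with hypothetical class and method names, that formats the same date with the single letter 'u' in both APIs.

```java
import java.text.SimpleDateFormat;
import java.time.LocalDate;
import java.time.format.DateTimeFormatter;
import java.util.Calendar;
import java.util.GregorianCalendar;
import java.util.Locale;

// Hypothetical demo class; not part of Spark.
final class PatternLetterUDemo {
    // Formats 2019-10-06 (a Sunday) with the legacy formatter.
    static String legacy(String pattern) {
        return new SimpleDateFormat(pattern, Locale.US)
            .format(new GregorianCalendar(2019, Calendar.OCTOBER, 6).getTime());
    }

    // Formats 2019-10-06 with the java.time formatter.
    static String javaTime(String pattern) {
        return DateTimeFormatter.ofPattern(pattern, Locale.US)
            .format(LocalDate.of(2019, 10, 6));
    }

    public static void main(String[] args) {
        System.out.println(legacy("u"));   // 7: day number of week, Sunday = 7
        System.out.println(javaTime("u")); // 2019: 'u' means year in java.time
    }
}
```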

This PR moves the method `convertIncompatiblePattern` from `DateTimeUtils` to the `DateTimeFormatterHelper` object, since it is quite specific to the `DateTimeFormatterHelper` class.

It also adds checks for the 'e' and 'c' characters in this method.

Besides, `convertIncompatiblePattern` has a bug that loses the last `'` if the pattern ends with it; this PR fixes that too. e.g.

```sql
spark-sql> select date_format(timestamp "2019-10-06", "yyyy-MM-dd'S'");
20/03/18 11:19:45 ERROR SparkSQLDriver: Failed in [select date_format(timestamp "2019-10-06", "yyyy-MM-dd'S'")]
java.lang.IllegalArgumentException: Pattern ends with an incomplete string literal: uuuu-MM-dd'S
spark-sql> select to_timestamp("2019-10-06S", "yyyy-MM-dd'S'");
NULL
```
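
A trailing quote can be lost this way whenever a pattern is split on `'` and rejoined, because Java's `String.split` drops trailing empty strings by default. The sketch below only illustrates that mechanism and is an assumption about how such a bug can arise, not a copy of `convertIncompatiblePattern`; the class name, the `limit` parameter, and the simplified 'y' to 'u' rewrite are all hypothetical.

```java
// Hypothetical illustration; not the actual Spark implementation.
final class QuoteAwareConversionSketch {
    // Rewrites 'y' to 'u' outside quoted literals. `limit` is forwarded to
    // String.split: 0 drops trailing empty strings, -1 keeps them.
    static String convert(String pattern, int limit) {
        String[] parts = pattern.split("'", limit);
        StringBuilder out = new StringBuilder();
        for (int i = 0; i < parts.length; i++) {
            if (i > 0) {
                out.append('\'');
            }
            // Even-indexed parts are outside quotes; odd-indexed parts are literals.
            out.append(i % 2 == 0 ? parts[i].replace('y', 'u') : parts[i]);
        }
        return out.toString();
    }

    public static void main(String[] args) {
        System.out.println(convert("yyyy-MM-dd'S'", 0));  // uuuu-MM-dd'S  (closing quote lost)
        System.out.println(convert("yyyy-MM-dd'S'", -1)); // uuuu-MM-dd'S' (closing quote kept)
    }
}
```

The first output matches the truncated pattern shown in the error message above.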

### Why are the changes needed?

avoid vagueness

bug fix

### Does this PR introduce any user-facing change?

no, these are not exposed yet

### How was this patch tested?

add ut

Closes #27939 from yaooqinn/SPARK-31176.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In Spark version 2.4 and earlier, datetime parsing, formatting and conversion are performed using the hybrid calendar (Julian + Gregorian).

Since the Proleptic Gregorian calendar is the de facto calendar worldwide, as well as the one chosen by the ANSI SQL standard, Spark 3.0 switches to it by using the Java 8 API classes (the java.time packages, which are based on ISO chronology). The switch was completed in SPARK-26651.

After the switch, however, some patterns are not compatible between Java 8 and Java 7, so Spark needs its own definition of the patterns rather than depending on the Java API.

In this PR, we achieve this by writing the documentation and shadowing the incompatible letters. See more details in [SPARK-31030](https://issues.apache.org/jira/browse/SPARK-31030)
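
For readers unfamiliar with the two calendars, the sketch below shows where they diverge, using only JDK classes: `java.util.GregorianCalendar` (hybrid, with its default cutover in October 1582) and `java.time.LocalDate` (proleptic Gregorian). The class and method names are hypothetical and the example is independent of Spark.

```java
import java.time.LocalDate;
import java.util.Calendar;
import java.util.GregorianCalendar;
import java.util.Locale;
import java.util.TimeZone;

// Hypothetical demo class; not part of Spark.
final class CalendarSwitchDemo {
    // The day after 1582-10-04 in the hybrid Julian + Gregorian calendar.
    static String nextDayHybrid() {
        GregorianCalendar cal = new GregorianCalendar(TimeZone.getTimeZone("UTC"));
        cal.clear();
        cal.set(1582, Calendar.OCTOBER, 4);
        cal.add(Calendar.DAY_OF_MONTH, 1);
        return String.format(Locale.ROOT, "%04d-%02d-%02d",
            cal.get(Calendar.YEAR),
            cal.get(Calendar.MONTH) + 1,
            cal.get(Calendar.DAY_OF_MONTH));
    }

    // The day after 1582-10-04 in the proleptic Gregorian calendar.
    static String nextDayProleptic() {
        return LocalDate.of(1582, 10, 4).plusDays(1).toString();
    }

    public static void main(String[] args) {
        System.out.println(nextDayHybrid());    // 1582-10-15 (ten days are skipped)
        System.out.println(nextDayProleptic()); // 1582-10-05
    }
}
```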

### Why are the changes needed?

For backward compatibility.

### Does this PR introduce any user-facing change?

No.

After we define our own datetime parsing and formatting patterns, the behavior is the same as in older Spark versions.

### How was this patch tested?

Existing and newly added UT.

Locally document test:

Closes #27830 from xuanyuanking/SPARK-31030.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>