## What changes were proposed in this pull request?

Fix a minor error in the "sketch of Pregel implementation" code in the GraphX guide.

The error is related to `[SPARK-12995][GraphX] Remove deprecate APIs from Pregel`.

## How was this patch tested?

N/A

Closes #22780 from WeichenXu123/minor_doc_update1.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Since the community has not yet tested Java 9 through 11, update the documentation to describe Java 8 only.

## How was this patch tested?

N/A (This is a document only change.)

Closes #22781 from dongjoon-hyun/SPARK-JDK-DOC.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Fix some broken links in the new document. I have clicked through all the links. Hopefully I haven't missed any :-)

## How was this patch tested?

Built using Jekyll and verified the links.

Closes #22772 from dilipbiswal/doc_check.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This PR adds `prettyNames` for `from_json`, `to_json`, `from_csv`, and `schema_of_json` so that appropriate names are used.

## How was this patch tested?

Unit tests

Closes #22773 from HyukjinKwon/minor-prettyNames.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Adds error checking and handling to `docker` invocations, ensuring that the script terminates early if any error occurs. This avoids subtle problems: for example, if the base image fails to build, the Python/R images could otherwise end up being built from an outdated base image. It also makes it more explicit to the user that something went wrong.

Additionally, the provided `Dockerfiles` assume that Spark was first built locally or is a runnable distribution, but the script did not previously enforce this. The script now checks the JARs folder to ensure that Spark JARs actually exist; if they do not, it aborts early and reminds the user to build Spark locally first.

## How was this patch tested?

- Tested with a `mvn clean` working copy and verified that the script now terminates early

- Tested with bad `Dockerfiles` that fail to build to see that early termination occurred

Closes #22748 from rvesse/SPARK-25745.

Authored-by: Rob Vesse <rvesse@dotnetrdf.org>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

JVMs don't let you allocate arrays of length exactly `Int.MaxValue`, so leave a little extra room. This is necessary when reading blocks larger than 2 GB off the network (for remote reads or for cache replication).
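The headroom idea can be sketched in plain Scala: a block larger than one array can hold is split into chunks, each no longer than a safe maximum. The limit `Int.MaxValue - 15` and the names `MaxSafeArrayLength` and `chunkSizes` are assumptions for illustration, not the values or API used in the patch.

```scala
// Assumed safe upper bound for one JVM array: many JVMs refuse to allocate
// arrays of exactly Int.MaxValue elements, so leave some headroom.
val MaxSafeArrayLength: Int = Int.MaxValue - 15

// Split a block of `totalBytes` (which may exceed 2 GB) into chunk sizes,
// each small enough to back with a single Array[Byte].
def chunkSizes(totalBytes: Long, maxChunk: Int = MaxSafeArrayLength): Seq[Int] = {
  require(totalBytes >= 0, "size must be non-negative")
  require(maxChunk > 0, "chunk size must be positive")
  val builder = Vector.newBuilder[Int]
  var remaining = totalBytes
  while (remaining > 0) {
    val next = math.min(remaining, maxChunk.toLong).toInt
    builder += next
    remaining -= next
  }
  builder.result()
}
```

For a 3 GiB block this yields two chunks, the first of exactly `MaxSafeArrayLength` bytes.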

Unit tests via Jenkins; also ran a test with blocks over 2 GB on a cluster.

Closes #22705 from squito/SPARK-25704.

Authored-by: Imran Rashid <irashid@cloudera.com>

Signed-off-by: Imran Rashid <irashid@cloudera.com>

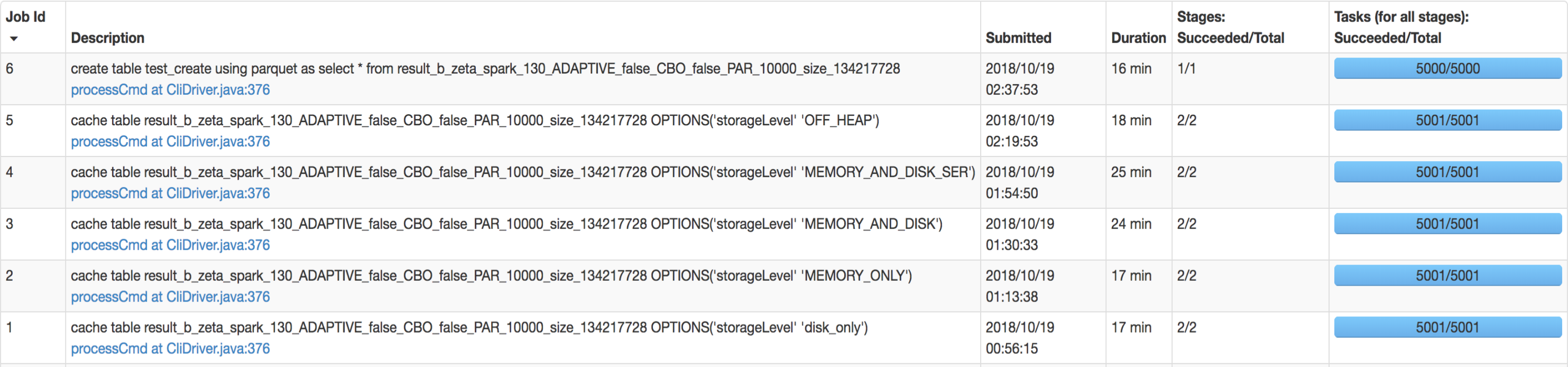

## What changes were proposed in this pull request?

The SQL interface now supports specifying a `StorageLevel` when caching a table. The syntax is:

```sql
CACHE TABLE tableName OPTIONS('storageLevel' 'DISK_ONLY');
```

All supported `StorageLevel` values are listed here:

eefdf9f9dd/core/src/main/scala/org/apache/spark/storage/StorageLevel.scala (L172-L183)

## How was this patch tested?

Unit tests and manual tests.

Manual test configuration:

```
--executor-memory 15G --executor-cores 5 --num-executors 50
```

Data:

Input Size / Records: 1037.7 GB / 11732805788

Result:

Closes #22263 from wangyum/SPARK-25269.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Without this PR, some UDAFs such as `GenericUDAFPercentileApprox` can throw an exception because they expect a constant parameter (object inspector) for a particular argument.

The exception is thrown because the `toPrettySQL` call in the `ResolveAliases` analyzer rule transforms a `Literal` parameter into a `PrettyAttribute`, which is then transformed into an `ObjectInspector` instead of a `ConstantObjectInspector`.

The exception comes from the `getEvaluator` method of `GenericUDAFPercentileApprox`, which actually shouldn't be called during the `toPrettySQL` transformation. It is called because of the non-lazy fields in `HiveUDAFFunction`.

This PR makes all fields of `HiveUDAFFunction` lazy.
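A simplified, Spark-free illustration of why lazy fields help: a strict `val` runs its initializer as soon as the object is constructed (as happened with `getEvaluator` during `toPrettySQL`), while a `lazy val` defers it until first access. `EagerFunction`, `LazyFunction` and `makeEvaluator` are hypothetical stand-ins, not the actual `HiveUDAFFunction` code.

```scala
// Counts how many times the (hypothetical) evaluator factory was invoked.
var evaluatorCalls = 0

// Stand-in for an initializer such as getEvaluator that fails unless its
// argument is a constant.
def makeEvaluator(argIsConstant: Boolean): String = {
  evaluatorCalls += 1
  if (!argIsConstant) throw new IllegalArgumentException("expected a constant argument")
  "evaluator"
}

// Strict field: the initializer runs during construction.
class EagerFunction(argIsConstant: Boolean) {
  val evaluator: String = makeEvaluator(argIsConstant)
}

// Lazy field: the initializer runs only when `evaluator` is first accessed.
class LazyFunction(argIsConstant: Boolean) {
  lazy val evaluator: String = makeEvaluator(argIsConstant)
}
```

Constructing `LazyFunction` with a non-constant argument succeeds; only an actual use of `evaluator` would fail.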

## How was this patch tested?

Added a new UT.

Closes #22766 from peter-toth/SPARK-25768.

Authored-by: Peter Toth <peter.toth@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This is a follow-up PR for #22259. The extra field added to `ScalaUDF` in the original PR was declared optional, but it should in fact be required; otherwise, callers of `ScalaUDF`'s constructor could ignore this new field and cause the result to be incorrect. This PR makes the new field required and renames it to `handleNullForInputs`.

#22259 breaks the previous behavior for null-handling of primitive-type input parameters. For example, for `val f = udf({(x: Int, y: Any) => x})`, `f(null, "str")` should return `null` but would return `0` after #22259. In this PR, all UDF methods except `def udf(f: AnyRef, dataType: DataType): UserDefinedFunction` have had their original behavior restored. The only exception is documented in the Spark SQL migration guide.

In addition, now that we have this extra field indicating whether a null test should be applied to the corresponding input value, we can also use this flag to prevent the rule `HandleNullInputsForUDF` from being applied infinitely.
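A minimal, Spark-free sketch of the null-handling semantics: when the flag for an input says it needs a null test (as for a primitive `Int` parameter), a null input makes the whole call return null instead of being unboxed to a default such as `0`. The helper `applyWithNullHandling` and its signature are hypothetical and only model the behavior; they are not `ScalaUDF`'s implementation.

```scala
// Apply `f` to boxed inputs. For each position where handleNullForInputs(i)
// is true (e.g. primitive-typed parameters), a null input makes the result
// null instead of letting it be unboxed to a default value such as 0.
def applyWithNullHandling(
    f: Seq[Any] => Any,
    handleNullForInputs: Seq[Boolean],
    inputs: Seq[Any]): Any = {
  require(handleNullForInputs.length == inputs.length, "one flag per input")
  val mustReturnNull = inputs.zip(handleNullForInputs).exists {
    case (value, needsNullTest) => needsNullTest && value == null
  }
  if (mustReturnNull) null else f(inputs)
}

// Models `udf((x: Int, y: Any) => x)`: x is primitive, y is not.
val f: Seq[Any] => Any = args => args.head
val flags = Seq(true, false)
```

With these flags, `f(null, "str")` yields `null`, while a null `y` is passed through untouched.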

## How was this patch tested?

Added a UT in `UDFSuite`.

Passed the affected existing UTs:

- AnalysisSuite
- UDFSuite

Closes #22732 from maryannxue/spark-25044-followup.

Lead-authored-by: maryannxue <maryannxue@apache.org>

Co-authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

This allows an implementer of Spark Session Extensions to use a method, "injectFunction", which adds a new function to the default Spark Session catalog.

## What changes were proposed in this pull request?

Adds a new function to `SparkSessionExtensions`:

`def injectFunction(functionDescription: FunctionDescription)`

where `FunctionDescription` is a new type:

`type FunctionDescription = (FunctionIdentifier, FunctionBuilder)`

The functions are loaded in `BaseSessionBuilder` when the function registry does not have a parent function registry to get loaded from.
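To make the mechanism concrete, here is a self-contained toy model (not Spark's API): extensions collect `(name, builder)` pairs, mirroring the `(FunctionIdentifier, FunctionBuilder)` shape of `FunctionDescription`, and are then registered into a fresh registry. `ToyExtensions`, `ToyRegistry` and the `String`-based identifier are simplifying assumptions.

```scala
import scala.collection.mutable

// Simplified stand-ins: an identifier is just a name, and a builder turns
// argument values into a result.
type FunctionIdentifier = String
type FunctionBuilder = Seq[Any] => Any
type FunctionDescription = (FunctionIdentifier, FunctionBuilder)

// Collects injected functions, analogous to SparkSessionExtensions.injectFunction.
class ToyExtensions {
  private val injected = mutable.Buffer.empty[FunctionDescription]
  def injectFunction(functionDescription: FunctionDescription): Unit =
    injected += functionDescription
  def registerFunctions(registry: ToyRegistry): Unit =
    injected.foreach { case (name, builder) => registry.register(name, builder) }
}

// A minimal function registry keyed by case-insensitive name.
class ToyRegistry {
  private val functions = mutable.Map.empty[String, FunctionBuilder]
  def register(name: FunctionIdentifier, builder: FunctionBuilder): Unit =
    functions(name.toLowerCase) = builder
  def lookup(name: String, args: Seq[Any]): Any = {
    val builder = functions.getOrElse(name.toLowerCase,
      throw new NoSuchElementException(s"Undefined function: $name"))
    builder(args)
  }
}

val extensions = new ToyExtensions
extensions.injectFunction(("my_upper", (args: Seq[Any]) => args.head.toString.toUpperCase))

// Load the injected functions into a fresh registry (in the PR, this happens
// when the registry has no parent registry).
val registry = new ToyRegistry
extensions.registerFunctions(registry)
```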

## How was this patch tested?

New unit tests for the extension are added in `SparkSessionExtensionSuite`.

Closes #22576 from RussellSpitzer/SPARK-25560.

Authored-by: Russell Spitzer <Russell.Spitzer@gmail.com>

Signed-off-by: Herman van Hovell <hvanhovell@databricks.com>

## What changes were proposed in this pull request?

CSV files with Windows-style CRLF (`\r\n`) line endings don't work in multiline mode. They work fine in single-line mode because line splitting is done by Hadoop, which can handle all the different types of line separators. This PR fixes the problem by enabling Univocity's line separator detection in multiline mode, which automatically detects `\r\n`, `\r`, or `\n`, just as Hadoop does in single-line mode.
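The detection step can be illustrated with a simplified sketch: look at the first line break, classify it as `\r\n`, `\r` or `\n`, then split records on that separator. It assumes no quoted field spans lines, so it is not Univocity's algorithm; `detectLineSeparator` and `splitRecords` are hypothetical helpers.

```scala
// Detect the line separator used in `text` from its first line break.
// Returns None if the text contains no line break at all.
def detectLineSeparator(text: String): Option[String] = {
  val i = text.indexWhere(c => c == '\r' || c == '\n')
  if (i < 0) None
  else if (text.charAt(i) == '\n') Some("\n")
  else if (i + 1 < text.length && text.charAt(i + 1) == '\n') Some("\r\n")
  else Some("\r")
}

// Split records on the detected separator so that CRLF input yields the same
// records as LF input (no stray '\r' at the end of the last field).
// Empty records, such as the one after a trailing separator, are dropped.
def splitRecords(text: String): Seq[String] =
  detectLineSeparator(text) match {
    case Some(sep) =>
      text.split(java.util.regex.Pattern.quote(sep), -1).toSeq.filter(_.nonEmpty)
    case None =>
      if (text.isEmpty) Seq.empty else Seq(text)
  }
```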

## How was this patch tested?

Unit test with a file that has CRLF line endings.

Closes #22503 from justinuang/fix-clrf-multiline.

Authored-by: Justin Uang <juang@palantir.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

The PR updates the examples for `BisectingKMeans` so that they don't use the deprecated method `computeCost` (see SPARK-25758).

## How was this patch tested?

Ran the examples.

Closes #22763 from mgaido91/SPARK-25764.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

When the first event was dropped, `lastReportTimestamp` was printed in the log as `Wed Dec 31 16:00:00 PST 1969`:

(Dropped 1 events from eventLog since Wed Dec 31 16:00:00 PST 1969.)

The reason is that `lastReportTimestamp` is initialized to 0, which is the Unix epoch (displayed here in the PST time zone). The log message is now updated to print "... since the application starts" when `lastReportTimestamp` == 0, which is the case when the first event is dropped.
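A small sketch of the fixed message logic, treating 0 as "never reported". The helper name `droppedEventsMessage` is hypothetical, and the wording only mirrors the log line quoted above; it is not Spark's exact source.

```scala
import java.util.Date

// Build the "dropped events" warning. A lastReportTimestamp of 0 means no drop
// has been reported yet; formatting new Date(0) would print the epoch
// (e.g. "Wed Dec 31 16:00:00 PST 1969" in a US Pacific time zone).
def droppedEventsMessage(droppedCount: Long, queueName: String, lastReportTimestamp: Long): String = {
  val since =
    if (lastReportTimestamp == 0) "the application starts"
    else new Date(lastReportTimestamp).toString
  s"Dropped $droppedCount events from $queueName since $since."
}
```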

## How was this patch tested?

Manually verified.

Closes #22677 from shivusondur/AsyncEvent1.

Authored-by: shivusondur <shivusondur@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

1. Split the main page of sql-programming-guide into 7 parts:

- Getting Started

- Data Sources

- Performance Tuning

- Distributed SQL Engine

- PySpark Usage Guide for Pandas with Apache Arrow

- Migration Guide

- Reference

2. Add a left menu for sql-programming-guide, keeping the first-level index of each part in the menu.

## How was this patch tested?

Local test with Jekyll build/serve.

Closes #22746 from xuanyuanking/SPARK-24499.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The PR proposes to deprecate the `computeCost` method on `BisectingKMeans` in favor of using `ClusteringEvaluator` to evaluate the clustering.

## How was this patch tested?

NA

Closes #22756 from mgaido91/SPARK-25758.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

This way, the image generated from both environments has the same layout, differing only in contents that should not affect functionality.

Also added some minor error checking to the image script.

Closes #22681 from vanzin/SPARK-25682.

Authored-by: Marcelo Vanzin <vanzin@cloudera.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Currently, the tests in `SQLTest` in PySpark are not cleaned up properly.

We should introduce and use more `contextmanager`s to make it convenient to clean up the context properly.

## How was this patch tested?

Modified tests.

Closes #22762 from ueshin/issues/SPARK-25763/cleanup_sqltests.

Authored-by: Takuya UESHIN <ueshin@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

`AddJarCommand` is the only command that returns `0`, which can confuse users about what it means. This PR makes it return an empty result instead.

```sql
spark-sql> add jar /Users/yumwang/spark/sql/hive/src/test/resources/TestUDTF.jar;
ADD JAR /Users/yumwang/spark/sql/hive/src/test/resources/TestUDTF.jar
0
spark-sql>
```

## How was this patch tested?

Manual tests:

```sql
spark-sql> add jar /Users/yumwang/spark/sql/hive/src/test/resources/TestUDTF.jar;
ADD JAR /Users/yumwang/spark/sql/hive/src/test/resources/TestUDTF.jar
spark-sql>
```

Closes #22747 from wangyum/AddJarCommand.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Also update the Kinesis SDK's Jackson version to match Spark's.

## How was this patch tested?

Existing tests, including Kinesis ones, which ought to be hereby triggered.

This was uncovered, I believe, in https://github.com/apache/spark/pull/22729#issuecomment-430666080

Closes #22757 from srowen/SPARK-24601.2.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>


## What changes were proposed in this pull request?

Previously, PySpark used the private constructor for SparkSession when building that object. This resulted in a SparkSession that did not check the `sql.extensions` parameter for additional session extensions. To fix this, we instead use the `Session.builder()` path, as SparkR does; this loads the extensions and allows their use in PySpark.

## How was this patch tested?

An integration test was added which mimics the Scala test for the same feature.


Closes #21990 from RussellSpitzer/SPARK-25003-master.

Authored-by: Russell Spitzer <Russell.Spitzer@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

The test fix is to allocate a `Resource` object only after the resource types have been initialized. Otherwise, the YARN classes get into a weird state and throw a different exception than expected, because the resource has a different view of the registered resources.

I also removed a test for a null resource, since it seems unnecessary and made the fix more complicated.

All the other changes are just cleanup: they simplify the tests by defining what is being tested and deriving the resource type registration and the SparkConf from that data, instead of having redundant definitions in the tests.

Ran tests with Hadoop 3 (and also without it).

Closes #22751 from vanzin/SPARK-20327.fix.

Authored-by: Marcelo Vanzin <vanzin@cloudera.com>

Signed-off-by: Imran Rashid <irashid@cloudera.com>

## What changes were proposed in this pull request?

Currently, if we run

```
sh start-thriftserver.sh -h
```

we get

```
...
Thrift server options:
2018-10-15 21:45:39 INFO HiveThriftServer2:54 - Starting SparkContext
2018-10-15 21:45:40 INFO SparkContext:54 - Running Spark version 2.3.2
2018-10-15 21:45:40 WARN NativeCodeLoader:62 - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
2018-10-15 21:45:40 ERROR SparkContext:91 - Error initializing SparkContext.
org.apache.spark.SparkException: A master URL must be set in your configuration
    at org.apache.spark.SparkContext.<init>(SparkContext.scala:367)
    at org.apache.spark.SparkContext$.getOrCreate(SparkContext.scala:2493)
    at org.apache.spark.sql.SparkSession$Builder$$anonfun$7.apply(SparkSession.scala:934)
    at org.apache.spark.sql.SparkSession$Builder$$anonfun$7.apply(SparkSession.scala:925)
    at scala.Option.getOrElse(Option.scala:121)
    at org.apache.spark.sql.SparkSession$Builder.getOrCreate(SparkSession.scala:925)
    at org.apache.spark.sql.hive.thriftserver.SparkSQLEnv$.init(SparkSQLEnv.scala:48)
    at org.apache.spark.sql.hive.thriftserver.HiveThriftServer2$.main(HiveThriftServer2.scala:79)
    at org.apache.spark.sql.hive.thriftserver.HiveThriftServer2.main(HiveThriftServer2.scala)
2018-10-15 21:45:40 ERROR Utils:91 - Uncaught exception in thread main
```

After the fix, the usage output is clean:

```
...
Thrift server options:
--hiveconf <property=value> Use value for given property
```

The script also exits with code 1, following other scripts (this is also the behavior of other Linux commands when parsing the `-h` option).

## How was this patch tested?

Manual test.

Closes #22727 from gengliangwang/stsUsage.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

When deserializing values of `ArrayType` with struct elements in Java beans, the fields of the structs get mixed up.

I suggest using struct data types retrieved from the resolved input data instead of inferring them from Java beans.

## What changes were proposed in this pull request?

The `MapObjects` expression is used to map array elements to Java beans. The struct type of the elements is inferred from the Java bean structure, which ends up with a mixed-up field order.

I used `UnresolvedMapObjects` instead of `MapObjects`, which allows the element type for `MapObjects` to be provided during analysis based on the resolved input data rather than on the Java bean.
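A Spark-free sketch of the underlying problem: bean introspection may report fields in a different order (for example, alphabetically) than the struct in the input data, so reading fields by position swaps values, while locating each field by name in the resolved input keeps them aligned. The field names and both helper functions are hypothetical.

```scala
// Field order of the struct elements in the resolved input data.
val inputFields: Seq[String] = Seq("name", "age")
// Field order inferred from the bean (e.g. sorted property names): differs.
val beanFields: Seq[String] = Seq("age", "name")

// One struct value, laid out in the input's field order.
val row: Seq[Any] = Seq("Alice", 30)

// Buggy: assume the row follows the bean's field order.
def readByPosition(row: Seq[Any]): Map[String, Any] =
  beanFields.zip(row).toMap

// Fixed: use the input's field order to locate each bean field by name.
def readByName(row: Seq[Any]): Map[String, Any] =
  beanFields.map(field => field -> row(inputFields.indexOf(field))).toMap
```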

## How was this patch tested?

Added a test case.

Built the complete project on Travis.

michalsenkyr cloud-fan marmbrus liancheng

Closes #22708 from vofque/SPARK-21402.

Lead-authored-by: Vladimir Kuriatkov <vofque@gmail.com>

Co-authored-by: Vladimir Kuriatkov <Vladimir_Kuriatkov@epam.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The SQL execution listener framework was created from scratch (see https://github.com/apache/spark/pull/9078). It didn't leverage what we already have in the Spark listener framework, and one major problem is that the listener runs on the Spark execution thread, which means a bad listener can block Spark's query processing.

This PR re-implements the SQL execution listener framework. Now `ExecutionListenerManager` is just a normal Spark listener, which watches the `SparkListenerSQLExecutionEnd` events and posts events to the user-provided SQL execution listeners.
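The benefit of going through an ordinary listener bus is that the posting thread only enqueues events. The sketch below models that with `java.util.concurrent` primitives; `AsyncBus` is a hypothetical name, and this is not Spark's `LiveListenerBus` implementation.

```scala
import java.util.concurrent.{CountDownLatch, LinkedBlockingQueue, TimeUnit}
import scala.util.control.NonFatal

// A minimal asynchronous event bus: post() only enqueues, and a dedicated
// daemon thread delivers events to listeners, so a slow listener delays other
// listeners but never the thread that posted the event.
class AsyncBus[E](listeners: Seq[E => Unit]) {
  private val queue = new LinkedBlockingQueue[E]()
  private val dispatcher = new Thread(() => {
    try {
      while (true) {
        val event = queue.take() // blocks until an event is available
        listeners.foreach { l =>
          try l(event) catch { case NonFatal(_) => () } // isolate listener failures
        }
      }
    } catch {
      case _: InterruptedException => () // stop() was called
    }
  })
  dispatcher.setDaemon(true)
  dispatcher.start()

  def post(event: E): Unit = queue.put(event)
  def stop(): Unit = dispatcher.interrupt()
}
```

Posting returns immediately even if a listener sleeps; events are still delivered in order.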

## How was this patch tested?

Existing tests.

Closes #22674 from cloud-fan/listener.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR corrects some errors in comments:

1. Change "as low a possible" to "as low as possible" in RewriteDistinctAggregates.scala.

2. Delete the redundant word “with” in HiveTableScanExec’s doExecute() method.

## How was this patch tested?

Existing unit tests.

Closes #22694 from CarolinePeng/update_comment.

Authored-by: 彭灿00244106 <00244106@zte.intra>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

`Literal.value` should hold a value corresponding to `dataType`. This PR adds code to verify this and fixes the existing tests accordingly.
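A simplified model of such a check, assuming a handful of made-up data types and plain Scala value types (Spark's real internal representations differ, e.g. strings are not `java.lang.String` internally). `Literal`, `isValid` and the type objects below are hypothetical stand-ins, not Catalyst classes.

```scala
// Hypothetical, simplified data types.
sealed trait DataType
case object IntegerType extends DataType
case object LongType extends DataType
case object StringType extends DataType
case object BooleanType extends DataType

// Construction fails fast if the value does not match the declared type.
final case class Literal(value: Any, dataType: DataType) {
  require(Literal.isValid(value, dataType),
    s"Literal must have a value corresponding to $dataType, but got $value")
}

object Literal {
  // null is allowed for any type; otherwise the runtime class must match.
  def isValid(value: Any, dataType: DataType): Boolean = (value, dataType) match {
    case (null, _)                 => true
    case (_: Int, IntegerType)     => true
    case (_: Long, LongType)       => true
    case (_: String, StringType)   => true
    case (_: Boolean, BooleanType) => true
    case _                         => false
  }
}
```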

## How was this patch tested?

Modified the existing tests.

Closes #22724 from maropu/SPARK-25734.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR adds a new function, `from_csv()`, similar to `from_json()`, to parse columns containing CSV strings. I added the following method:

```Scala
def from_csv(e: Column, schema: StructType, options: Map[String, String]): Column
```

and this signature to call it from Python, R and Java:

```Scala
def from_csv(e: Column, schema: String, options: java.util.Map[String, String]): Column
```
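Conceptually, `from_csv` turns one CSV string into a struct according to a schema. The toy sketch below shows that idea in plain Scala; the two-type schema, the `parseCsvLine` helper and its naive splitting (no quoting, escaping, parse modes or malformed-record handling) are assumptions and do not reflect Spark's implementation.

```scala
// A toy schema: (field name, field type) pairs; only two types are modelled.
sealed trait FieldType
case object IntField extends FieldType
case object StrField extends FieldType

// Parse one CSV string against the schema. Naive: splits on `sep` and does
// not handle quoting or escaping, unlike a real CSV parser.
def parseCsvLine(line: String, schema: Seq[(String, FieldType)], sep: Char = ','): Map[String, Any] = {
  val tokens = line.split(java.util.regex.Pattern.quote(sep.toString), -1).toSeq
  require(tokens.length == schema.length, s"expected ${schema.length} fields, got ${tokens.length}")
  schema.zip(tokens).map {
    case ((name, IntField), token) => name -> (if (token.isEmpty) null else token.trim.toInt)
    case ((name, StrField), token) => name -> token
  }.toMap
}
```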

## How was this patch tested?

Added new test suites `CsvExpressionsSuite`, `CsvFunctionsSuite` and sql tests.

Closes #22379 from MaxGekk/from_csv.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Hyukjin Kwon <gurwls223@gmail.com>

Co-authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Set a reasonable poll timeout that is used while consuming topics/partitions from Kafka. In its absence, a default of 2 minutes is used as the timeout value, and all the negative tests take at least 2 minutes to execute.

After this change, we save about 4 minutes in this suite.

## How was this patch tested?

Test fix.

Closes #22670 from dilipbiswal/SPARK-25631.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

- Exposes several metrics regarding application status as a source, which is useful for scraping them via JMX instead of mining the metrics REST API. Example use case: Prometheus + JMX exporter.

- Metrics are gathered on the `AppStatusListener` side when a job ends. This could be more fine-grained, but most metrics, such as completed tasks, are also counted by executors. More metrics could be exposed in the future to avoid scraping executors in some scenarios.

- A config option, `spark.app.status.metrics.enabled`, is added to enable/disable these metrics; they are disabled by default.

This was manually tested with the JMX source enabled and a Prometheus server on K8s.

In the next picture, the job delay is shown for a repeated Pi calculation (Spark action).

Closes #22381 from skonto/add_app_status_metrics.

Authored-by: Stavros Kontopoulos <stavros.kontopoulos@lightbend.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Remove Kafka 0.8 integration

## How was this patch tested?

Existing tests, build scripts

Closes #22703 from srowen/SPARK-25705.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

AFAIK, multi-column count is not widely supported by mainstream databases (Postgres doesn't support it), and the SQL standard doesn't define it clearly, as far as I can tell.

Since Spark supports it, we should clearly document the current behavior and add tests to verify it.
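For reference, Spark's SQL function documentation describes `count(expr[, expr...])` as returning the number of rows for which the supplied expressions are all non-null. The sketch below models that rule with `Option` standing in for SQL NULL; `multiColumnCount` and the sample rows are hypothetical.

```scala
// COUNT(a, b): count the rows where every listed column is non-null.
// Rows are modelled as Seq[Option[Any]], with None standing for SQL NULL.
def multiColumnCount(rows: Seq[Seq[Option[Any]]], columns: Seq[Int]): Long =
  rows.count(row => columns.forall(i => row(i).isDefined)).toLong

val rows: Seq[Seq[Option[Any]]] = Seq(
  Seq(Some(1), Some("x")),
  Seq(None, Some("y")),
  Seq(Some(3), None),
  Seq(Some(4), Some("z"))
)
```

Here `COUNT(a, b)` over these rows is 2, because a row is skipped as soon as any listed column is NULL.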

## How was this patch tested?

N/A

Closes #22728 from cloud-fan/doc.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Testing only these 4 cases is enough:

be2238fb50/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/parquet/ParquetWriteSupport.scala (L269-L279)

## How was this patch tested?

Manual tests on my local machine.

Before:

```
- filter pushdown - decimal (13 seconds, 683 milliseconds)
```

After:

```
- filter pushdown - decimal (9 seconds, 713 milliseconds)
```

Closes #22636 from wangyum/SPARK-25629.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

`LOAD DATA INPATH` didn't work if the defaultFS included a port for HDFS. Handling this just requires a small change to use the correct URI constructor.
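The pitfall can be reproduced with `java.net.URI` alone: the 4-argument constructor takes a host, so there is no slot for the port, while the 5-argument constructor takes an authority (`host:port`). The host name `namenode`, port 8020 and the path are made up, and this is an illustration rather than the patched Spark code.

```scala
import java.net.URI

// A defaultFS that includes a port.
val defaultFS = new URI("hdfs://namenode:8020")
val path = "/user/spark/data.txt"

// URI(scheme, host, path, fragment): the port is silently lost.
val withHostOnly = new URI(defaultFS.getScheme, defaultFS.getHost, path, null)

// URI(scheme, authority, path, query, fragment): "namenode:8020" is preserved.
val withAuthority = new URI(defaultFS.getScheme, defaultFS.getAuthority, path, null, null)
```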

## How was this patch tested?

Added a unit test; ran all tests via Jenkins.

Closes #22733 from squito/SPARK-25738.

Authored-by: Imran Rashid <irashid@cloudera.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

This PR is a follow-up of https://github.com/apache/spark/pull/22594. This alternative avoids the unneeded computation in the hot code path.

- For row-based scan, we keep the original way.

- For the columnar scan, we just need to update the stats after each batch.

## How was this patch tested?

N/A

Closes #22731 from gatorsmile/udpateStatsFileScanRDD.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This is the work on setting up Secure HDFS interaction with Spark-on-K8S.

The architecture is discussed in this community-wide google [doc](https://docs.google.com/document/d/1RBnXD9jMDjGonOdKJ2bA1lN4AAV_1RwpU_ewFuCNWKg)

This initiative can be broken down into 4 stages:

**STAGE 1**

- [x] Detecting the `HADOOP_CONF_DIR` environment variable and using ConfigMaps to store all Hadoop config files locally, while also setting `HADOOP_CONF_DIR` locally in the driver / executors

**STAGE 2**

- [x] Grabbing the `TGT` from the `LTC`, or using keytabs+principal, and creating a `DT` that will be mounted as a secret, or using a pre-populated secret

**STAGE 3**

- [x] Driver

**STAGE 4**

- [x] Executor

## How was this patch tested?

Locally tested on a single-node, pseudo-distributed Kerberized Hadoop cluster

- [x] E2E Integration tests https://github.com/apache/spark/pull/22608

- [ ] Unit tests

## Docs and Error Handling?

- [x] Docs

- [x] Error Handling

## Contribution Credit

kimoonkim skonto

Closes #21669 from ifilonenko/secure-hdfs.

Lead-authored-by: Ilan Filonenko <if56@cornell.edu>

Co-authored-by: Ilan Filonenko <ifilondz@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

The `Project` logical operator generates valid constraints using two opposite operations: it subtracts the child constraints from all constraints, then unions the child constraints back in. I think this may not be necessary.

The `Aggregate` operator has the same problem as `Project`.

This PR tries to remove these two opposite collection operations.
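Assuming, as the description implies, that "all constraints" is built by starting from the child constraints and only adding aliased ones, it is a superset of them, so subtracting the child constraints and adding them back returns the original set. A plain-Scala check of that set identity, with constraints modelled as strings (a simplification; this is not Catalyst's `ExpressionSet`):

```scala
// Model constraints as strings. `allConstraints` starts from the child's
// constraints and only adds new (aliased) ones, so it contains them.
val childConstraints: Set[String] = Set("a > 1", "isnotnull(a)")
val allConstraints: Set[String] = childConstraints ++ Set("b > 1", "isnotnull(b)")

// Before: subtract the child constraints, then union them back in.
val before = (allConstraints -- childConstraints) ++ childConstraints
// After: use the full set directly.
val after = allConstraints
```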

## How was this patch tested?

Related unit tests:

- ProjectEstimationSuite
- CollapseProjectSuite
- PushProjectThroughUnionSuite
- UnsafeProjectionBenchmark
- GeneratedProjectionSuite
- CodeGeneratorWithInterpretedFallbackSuite
- TakeOrderedAndProjectSuite
- GenerateUnsafeProjectionSuite
- BucketedRandomProjectionLSHSuite
- RemoveRedundantAliasAndProjectSuite
- AggregateBenchmark
- AggregateOptimizeSuite
- AggregateEstimationSuite
- DecimalAggregatesSuite
- DataFrameAggregateSuite
- ObjectHashAggregateSuite
- TwoLevelAggregateHashMapSuite
- ObjectHashAggregateExecBenchmark
- SingleLevelAggregateHashMapSuite
- TypedImperativeAggregateSuite
- RewriteDistinctAggregatesSuite
- HashAggregationQuerySuite
- HashAggregationQueryWithControlledFallbackSuite
- TwoLevelAggregateHashMapWithVectorizedMapSuite

Closes #22706 from SongYadong/generate_constraints.

Authored-by: SongYadong <song.yadong1@zte.com.cn>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The PR addresses [the comment](https://github.com/apache/spark/pull/22715#discussion_r225024084) in the previous one. `outputOrdering` becomes a field of `InMemoryRelation`.

## How was this patch tested?

Existing UTs.

Closes #22726 from mgaido91/SPARK-25727_followup.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Update the next version of Spark from 2.5 to 3.0.

## How was this patch tested?

N/A

Closes #22717 from gatorsmile/followupSPARK-25372.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Add `outputOrdering` to `otherCopyArgs` in `InMemoryRelation` so that this field is copied during tree transformations.

```
val data = Seq(100).toDF("count").cache()
data.queryExecution.optimizedPlan.toJSON
```

The above code can generate the following error:

```
assertion failed: InMemoryRelation fields: output, cacheBuilder, statsOfPlanToCache, outputOrdering, values: List(count#178), CachedRDDBuilder(true,10000,StorageLevel(disk, memory, deserialized, 1 replicas),*(1) Project [value#176 AS count#178]
+- LocalTableScan [value#176]
,None), Statistics(sizeInBytes=12.0 B, hints=none)
java.lang.AssertionError: assertion failed: InMemoryRelation fields: output, cacheBuilder, statsOfPlanToCache, outputOrdering, values: List(count#178), CachedRDDBuilder(true,10000,StorageLevel(disk, memory, deserialized, 1 replicas),*(1) Project [value#176 AS count#178]
+- LocalTableScan [value#176]
,None), Statistics(sizeInBytes=12.0 B, hints=none)
    at scala.Predef$.assert(Predef.scala:170)
    at org.apache.spark.sql.catalyst.trees.TreeNode.jsonFields(TreeNode.scala:611)
    at org.apache.spark.sql.catalyst.trees.TreeNode.org$apache$spark$sql$catalyst$trees$TreeNode$$collectJsonValue$1(TreeNode.scala:599)
    at org.apache.spark.sql.catalyst.trees.TreeNode.jsonValue(TreeNode.scala:604)
    at org.apache.spark.sql.catalyst.trees.TreeNode.toJSON(TreeNode.scala:590)
```

## How was this patch tested?

Added a test.

Closes #22715 from gatorsmile/copyArgs1.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

[SPARK-22479](https://github.com/apache/spark/pull/19708/files#diff-5c22ac5160d3c9d81225c5dd86265d27R31) adds a test case that sometimes fails because the password string it uses, `123`, also appears inside `41230802`. This PR aims to fix the flakiness.

- https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/97343/consoleFull

```
SaveIntoDataSourceCommandSuite:
- simpleString is redacted *** FAILED ***
"SaveIntoDataSourceCommand .org.apache.spark.sql.execution.datasources.jdbc.JdbcRelationProvider41230802, Map(password -> *********(redacted), url -> *********(redacted), driver -> mydriver), ErrorIfExists
+- Range (0, 1, step=1, splits=Some(2))
" contained "123" (SaveIntoDataSourceCommandSuite.scala:42)
```

## How was this patch tested?

Passed Jenkins with the updated test case.

Closes #22716 from dongjoon-hyun/SPARK-25726.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Improve the code comment added in https://github.com/apache/spark/pull/22702/files

## How was this patch tested?

N/A

Closes #22711 from cloud-fan/minor.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Currently, if we try to run

```
./start-history-server.sh -h
```

we get the following error:

```
java.io.FileNotFoundException: File -h does not exist
```

1. This is not user-friendly. The `-h` or `--help` option should be parsed correctly and show the usage of the class/script.

2. We can remove the deprecated command-line options for setting the event log directory.
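A minimal sketch of the intended parsing behavior: recognize `-h`/`--help` explicitly instead of treating the first argument as a log directory (the source of "File -h does not exist"), and reject unknown arguments. The result types and `parseArgs` are hypothetical; only the `--properties-file` option is taken from the usage text, and this is not Spark's `HistoryServerArguments`.

```scala
// Outcome of parsing the history server's command-line arguments.
sealed trait ParseResult
case object ShowUsage extends ParseResult
final case class Parsed(propertiesFile: Option[String]) extends ParseResult
final case class ParseError(message: String) extends ParseResult

// Walk the argument list; a missing value for --properties-file also ends up
// in the last case and is reported as an unrecognized option.
@annotation.tailrec
def parseArgs(args: List[String], propertiesFile: Option[String] = None): ParseResult =
  args match {
    case Nil                                 => Parsed(propertiesFile)
    case ("-h" | "--help") :: _              => ShowUsage
    case "--properties-file" :: file :: rest => parseArgs(rest, Some(file))
    case other :: _                          => ParseError(s"Unrecognized option: $other")
  }
```

A launcher would print the usage text on `ShowUsage` and exit with a non-zero code on `ParseError`.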

After the fix, we get the following output:

```
Usage: ./sbin/start-history-server.sh [options]

Options:
  --properties-file FILE      Path to a custom Spark properties file.
                              Default is conf/spark-defaults.conf.

Configuration options can be set by setting the corresponding JVM system property.
History Server options are always available; additional options depend on the provider.

History Server options:
  spark.history.ui.port              Port where server will listen for connections
                                     (default 18080)
  spark.history.acls.enable          Whether to enable view acls for all applications
                                     (default false)
  spark.history.provider             Name of history provider class (defaults to
                                     file system-based provider)
  spark.history.retainedApplications Max number of application UIs to keep loaded in memory
                                     (default 50)
FsHistoryProvider options:
  spark.history.fs.logDirectory      Directory where app logs are stored
                                     (default: file:/tmp/spark-events)
  spark.history.fs.updateInterval    How often to reload log data from storage
                                     (in seconds, default: 10)
```

## How was this patch tested?

Manual test

Closes #22699 from gengliangwang/refactorSHSUsage.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>