## What changes were proposed in this pull request?

`hsync` was added as part of SPARK-19531 so that the history server UI shows the latest data, but it causes performance overhead and leads to many history log events being dropped. `hsync` uses `FileChannel.force` to sync the data to disk, and it does so for the whole data pipeline; this is a costly operation that adds overhead to the application and makes it drop events.

I think getting the latest data in the history server can be done in a different way, with no impact on the application while it writes events. The `DFSInputStream.getFileLength()` API returns the file length including the `lastBlockBeingWrittenLength` (unlike `FileStatus.getLen()`). This API can be used when the file status length and the previously cached length are equal, to verify whether any new data has been written; if the data length has changed, the history server can update the in-progress history log. I also made this change configurable, with a default value of false; it can be enabled for the history server if users want to see the updated data in the UI.
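The length check described above can be sketched roughly as follows. This is a minimal illustration in Python, not the code in the patch: `has_new_data`, its parameters, and the `absolute_len` callback (standing in for a call such as `DFSInputStream.getFileLength()`) are hypothetical names.

```python
from typing import Callable


def has_new_data(cached_len: int,
                 status_len: int,
                 absolute_len: Callable[[], int],
                 absolute_check_enabled: bool = False) -> bool:
    """Decide whether an in-progress event log should be re-read.

    cached_len    -- length recorded the last time the log was parsed
    status_len    -- length reported by the file status
    absolute_len  -- hypothetical callback returning the length that
                     includes the block still being written
    """
    # The cheap check: the file status already shows a different length.
    if status_len != cached_len:
        return True
    # The extra check is opt-in, mirroring the default of false.
    if not absolute_check_enabled:
        return False
    # Only consult the more expensive absolute length when the cheap
    # check found no change.
    return absolute_len() != cached_len


print(has_new_data(100, 100, lambda: 180, absolute_check_enabled=True))
```

The callback is only invoked when the two cheap lengths agree, which matches the description that the extra API is consulted only in that case.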

## How was this patch tested?

Added a new test and verified manually: with the added conf `spark.history.fs.inProgressAbsoluteLengthCheck.enabled=true`, the history server reads the logs including the last block data which is being written, and updates the Web UI with the latest data.

Closes #22752 from devaraj-kavali/SPARK-24787.

Authored-by: Devaraj K <devaraj@apache.org>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

docker-image-tool.sh uses getopts in which a colon signifies that an option takes an argument. Since -n does not take an argument it should not have a colon.
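The effect of the stray colon can be reproduced outside the script. Python's standard `getopt` module uses the same colon convention as Unix getopt, so the sketch below parses the same arguments with a buggy and a fixed option string. The option strings `"n:R:"` and `"nR:"` are reduced, hypothetical stand-ins and not the script's full option list.

```python
import getopt

# Hypothetical invocation: a no-argument flag followed by -R <path>.
args = ["-n", "-R", "custom/Dockerfile"]

# Buggy spec: the colon after "n" says -n takes an argument,
# so the parser consumes "-R" as the value of -n.
buggy_opts, buggy_rest = getopt.getopt(args, "n:R:")

# Fixed spec: -n is a plain flag, -R still takes an argument.
fixed_opts, fixed_rest = getopt.getopt(args, "nR:")

print(buggy_opts, buggy_rest)  # [('-n', '-R')] ['custom/Dockerfile']
print(fixed_opts, fixed_rest)  # [('-n', ''), ('-R', 'custom/Dockerfile')] []
```

With the buggy spec the `-R` option never reaches the parser as an option, which is consistent with the custom Dockerfile being ignored whenever `-n` is passed.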

## How was this patch tested?

Following the reproduction in [JIRA](https://issues.apache.org/jira/browse/SPARK-25803):-

0. Created a custom Dockerfile to use for the spark-r container image.

In each of the steps below the path to this Dockerfile is passed with the '-R' option.

(spark-r is used here simply as an example, the bug applies to all options)

1. Built container images without '-n'.

The [result](https://gist.github.com/sel/59f0911bb1a6a485c2487cf7ca770f9d) is that the '-R' option is honoured and the hello-world image is built for spark-r, as expected.

2. Built container images with '-n' to reproduce the issue

The [result](https://gist.github.com/sel/e5cabb9f3bdad5d087349e7fbed75141) is that the '-R' option is ignored and the default container image for spark-r is built

3. Applied the patch and re-built container images with '-n' and did not reproduce the issue

The [result](https://gist.github.com/sel/6af14b95012ba8ff267a4fce6e3bd3bf) is that the '-R' option is honoured and the hello-world image is built for spark-r, as expected.

Closes #22798 from sel/fix-docker-image-tool-nocache.

Authored-by: Steve <sel@users.noreply.github.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Refactor ObjectHashAggregateExecBenchmark to use main method

## How was this patch tested?

Manually tested:

```

bin/spark-submit --class org.apache.spark.sql.execution.benchmark.ObjectHashAggregateExecBenchmark --jars sql/catalyst/target/spark-catalyst_2.11-3.0.0-SNAPSHOT-tests.jar,core/target/spark-core_2.11-3.0.0-SNAPSHOT-tests.jar,sql/hive/target/spark-hive_2.11-3.0.0-SNAPSHOT.jar --packages org.spark-project.hive:hive-exec:1.2.1.spark2 sql/hive/target/spark-hive_2.11-3.0.0-SNAPSHOT-tests.jar

```

Generated results with:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "hive/test:runMain org.apache.spark.sql.execution.benchmark.ObjectHashAggregateExecBenchmark"

```

Closes #22804 from peter-toth/SPARK-25665.

Lead-authored-by: Peter Toth <peter.toth@gmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

In standalone cluster mode, one could launch driver with supervise mode enabled. StandaloneRestServer class uses the host and port of current master as the spark.master property while launching the driver (even if you are running in HA mode). This class also ignores the spark.master property passed as part of the request.

Due to the above problem, if the Spark masters switch due to some reason and your driver is killed unexpectedly and relaunched, it will try to connect to the master which is in the driver command specified as -Dspark.master. But this master will be in STANDBY mode and after trying multiple times, the SparkContext will kill itself (even though secondary master was alive and healthy).

This change picks the spark.master property from request and uses it to launch the driver process. Due to this, the driver process has both masters in -Dspark.master property. Even if the masters switch, SparkContext can still connect to the ALIVE master and work correctly.
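To illustrate why carrying both masters in `spark.master` matters, here is a small Python sketch. It is not Spark code: `pick_alive_master` and the `states` lookup table are hypothetical, and only the comma-separated `spark://host1:port1,host2:port2` URL form is taken from standalone HA mode.

```python
from typing import Dict, Optional

PREFIX = "spark://"


def pick_alive_master(spark_master: str,
                      states: Dict[str, str]) -> Optional[str]:
    """Return the first master in the URL whose state is ALIVE.

    `states` is a hypothetical map from "host:port" to "ALIVE" or
    "STANDBY", standing in for what a driver would learn by connecting.
    """
    if not spark_master.startswith(PREFIX):
        raise ValueError(f"not a standalone master URL: {spark_master}")
    for host_port in spark_master[len(PREFIX):].split(","):
        if states.get(host_port) == "ALIVE":
            return PREFIX + host_port
    return None


# State after a failover: the former active master is now in STANDBY.
states = {"m1:7077": "STANDBY", "m2:7077": "ALIVE"}

# Old behaviour: only the master that handled the submission is known.
print(pick_alive_master("spark://m1:7077", states))          # None
# New behaviour: both masters from the request are passed along.
print(pick_alive_master("spark://m1:7077,m2:7077", states))  # spark://m2:7077
```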

## How was this patch tested?

This patch was manually tested on a standalone cluster running 2.2.1. It was rebased on current master and all tests were executed. I have added a unit test for this change (but since I am new I hope I have covered all).

Closes #21816 from bsikander/rest_driver_fix.

Authored-by: Behroz Sikander <behroz.sikander@sap.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

We see the warnings below when building the Spark project:

```

[warn] * com.google.code.findbugs:jsr305:3.0.0 is selected over 1.3.9

[warn] +- org.apache.hadoop:hadoop-common:2.7.3 (depends on 3.0.0)

[warn] +- org.apache.spark:spark-core_2.11:3.0.0-SNAPSHOT (depends on 1.3.9)

[warn] +- org.apache.spark:spark-network-common_2.11:3.0.0-SNAPSHOT (depends on 1.3.9)

[warn] +- org.apache.spark:spark-unsafe_2.11:3.0.0-SNAPSHOT (depends on 1.3.9)

```

So ideally we need to upgrade jsr305 from 1.3.9 to 3.0.0 to fix this warning.

This PR upgrades the jsr305 dependency from 1.3.9 to 3.0.0.

## How was this patch tested?

sbt "core/testOnly"

sbt "sql/testOnly"

Closes #22803 from daviddingly/master.

Authored-by: xiaoding <xiaoding@ebay.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This was inspired while implementing #21732. For now `ScalaReflection` needs to consider how `ExpressionEncoder` uses generated serializers and deserializers, and `ExpressionEncoder` has a weird `flat` flag. After discussion with cloud-fan, it seems better to refactor `ExpressionEncoder`. It should make SPARK-24762 easier to do.

To summarize the proposed changes:

1. `serializerFor` and `deserializerFor` return expressions for serializing/deserializing an input expression for a given type. They are private and should not be called directly.

2. `serializerForType` and `deserializerForType` return an expression for serializing/deserializing an object of type T to/from its Spark SQL representation. They assume the input object/Spark SQL representation is located at ordinal 0 of a row.

So in other words, `serializerForType` and `deserializerForType` return expressions for atomically serializing/deserializing JVM object to/from Spark SQL value.

A serializer returned by `serializerForType` will serialize an object at `row(0)` to a corresponding Spark SQL representation, e.g. primitive type, array, map, struct.

A deserializer returned by `deserializerForType` will deserialize an input field at `row(0)` to an object with given type.

3. The construction of `ExpressionEncoder` takes a pair of serializer and deserializer for type `T`. It uses them to create the serializer and deserializer for T <-> row serialization. Now `ExpressionEncoder` doesn't need to remember whether the serializer is flat or not. When we need to construct a new `ExpressionEncoder` based on existing ones, we only need to change the input location in the atomic serializer and deserializer.

## How was this patch tested?

Existing tests.

Closes #22749 from viirya/SPARK-24762-refactor.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Check the `spark.sql.repl.eagerEval.enabled` configuration property in SparkDataFrame `show()` method. If the `SparkSession` has eager execution enabled, the data will be returned to the R client when the data frame is created. So instead of seeing this

```

> df <- createDataFrame(faithful)

> df

SparkDataFrame[eruptions:double, waiting:double]

```

you will see

```

> df <- createDataFrame(faithful)
> df
+---------+-------+
|eruptions|waiting|
+---------+-------+
|      3.6|   79.0|
|      1.8|   54.0|
|    3.333|   74.0|
|    2.283|   62.0|
|    4.533|   85.0|
|    2.883|   55.0|
|      4.7|   88.0|
|      3.6|   85.0|
|     1.95|   51.0|
|     4.35|   85.0|
|    1.833|   54.0|
|    3.917|   84.0|
|      4.2|   78.0|
|     1.75|   47.0|
|      4.7|   83.0|
|    2.167|   52.0|
|     1.75|   62.0|
|      4.8|   84.0|
|      1.6|   52.0|
|     4.25|   79.0|
+---------+-------+
only showing top 20 rows

```

## How was this patch tested?

Manual tests as well as unit tests (one new test case is added).

Author: adrian555 <v2ave10p>

Closes #22455 from adrian555/eager_execution.

## What changes were proposed in this pull request?

As this is targeted for 3.0.0 and Python2 will be deprecated by Jan 1st, 2020, I feel it is appropriate to change the default to Python3. Especially as these projects [found here](https://python3statement.org/) are deprecating their support.

## How was this patch tested?

Unit and Integration tests

Author: Ilan Filonenko <ifilondz@gmail.com>

Closes #22810 from ifilonenko/SPARK-24516.

## What changes were proposed in this pull request?

Before the code changes, I tried to run it with 8G memory:

```

build/sbt -mem 8000 "core/testOnly org.apache.spark.serializer.KryoBenchmark"

```

Still, I got an OOM.

This is because the lengths of the arrays are random

669ade3a8e/core/src/test/scala/org/apache/spark/serializer/KryoBenchmark.scala (L90-L91)

And the 2D array is usually large: `10000 * Random.nextInt(0, 10000)`

This PR is to fix it and refactor it to use main method.

The benchmark result is also reasonable compared to the original one.

## How was this patch tested?

Run with

```

bin/spark-submit --class org.apache.spark.serializer.KryoBenchmark core/target/scala-2.11/spark-core_2.11-3.0.0-SNAPSHOT-tests.jar

```

and

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "core/test:runMain org.apache.spark.serializer.KryoBenchmark"

```

Closes #22663 from gengliangwang/kyroBenchmark.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Remove JavaSparkContextVarargsWorkaround

## How was this patch tested?

Existing tests.

Closes #22729 from srowen/SPARK-25737.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Remove deprecated accumulator v1

## How was this patch tested?

Existing tests.

Closes #22730 from srowen/SPARK-16775.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

In this PR, I propose to switch `from_json` to `FailureSafeParser`, to make the function compatible with `PERMISSIVE` mode by default, and to support the `FAILFAST` mode as well. The `DROPMALFORMED` mode is not supported by `from_json`.
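The difference between the two supported modes can be sketched with a toy parser. This is a Python illustration only and not how `FailureSafeParser` is implemented: `parse_record` is a hypothetical helper, and returning `None` for a malformed record in `PERMISSIVE` mode is a placeholder, since the exact result is not described here.

```python
import json
from typing import Any, Optional

SUPPORTED_MODES = ("PERMISSIVE", "FAILFAST")


def parse_record(text: str, mode: str = "PERMISSIVE") -> Optional[Any]:
    """Parse one JSON record under a failure-handling mode."""
    if mode not in SUPPORTED_MODES:
        # DROPMALFORMED is rejected, as stated in the description.
        raise ValueError(f"unsupported mode: {mode}")
    try:
        return json.loads(text)
    except json.JSONDecodeError as err:
        if mode == "FAILFAST":
            # Surface the malformed input immediately.
            raise ValueError(f"malformed record: {text!r}") from err
        # PERMISSIVE: keep going and return a placeholder result.
        return None


print(parse_record('{"a": 1}'))  # {'a': 1}
print(parse_record('{"a": 1'))   # None
```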

## How was this patch tested?

It was tested by the existing `JsonSuite`/`CSVSuite`, `JsonFunctionsSuite` and `JsonExpressionsSuite`, as well as new tests for `from_json` which check the different modes.

Closes #22237 from MaxGekk/from_json-failuresafe.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

This is a follow-up PR for #22708. It considers another case of java beans deserialization: java maps with struct keys/values.

When deserializing values of MapType with struct keys/values in java beans, fields of structs get mixed up. I suggest using struct data types retrieved from resolved input data instead of inferring them from java beans.

## What changes were proposed in this pull request?

Invocations of "keyArray" and "valueArray" functions are used to extract arrays of keys and values. Struct type of keys or values is also inferred from java bean structure and ends up with mixed up field order.

I created a new UnresolvedInvoke expression as a temporary substitute for the Invoke expression while no actual data is available. It allows the resulting data type to be provided during analysis based on the resolved input data, not on the java bean (similar to UnresolvedMapObjects).

Key and value arrays are then fed to MapObjects expression which I replaced with UnresolvedMapObjects, just like in case of ArrayType.

Finally I added resolution of UnresolvedInvoke expressions in Analyzer.resolveExpression method as an additional pattern matching case.

## How was this patch tested?

Added a test case.

Built complete project on travis.

viirya kiszk cloud-fan michalsenkyr marmbrus liancheng

Closes #22745 from vofque/SPARK-21402-FOLLOWUP.

Lead-authored-by: Vladimir Kuriatkov <vofque@gmail.com>

Co-authored-by: Vladimir Kuriatkov <Vladimir_Kuriatkov@epam.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Our current doc does not explain how we are passing the data source specific options to the underlying data source. According to [the review comment](https://github.com/apache/spark/pull/22622#discussion_r222911529), this PR aims to add more detailed information and examples

## How was this patch tested?

Manual.

Closes #22801 from dongjoon-hyun/SPARK-25656.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

The serializers of `RowEncoder` use a few `If` Catalyst expressions, which inherit `ComplexTypeMergingExpression`, which checks input data types.

It is possible to generate serializers that fail this check, in which case the data type of the serializers can't be accessed. When producing an `If` expression, we should use the same data type for its input expressions.

## How was this patch tested?

Added test.

Closes #22785 from viirya/SPARK-25791.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

The original test would sometimes fail if the listener bus did not keep up, so just wait till the listener bus is empty. Tested by adding a sleep in the listener, which made the test consistently fail without the fix, but pass consistently after the fix.

Closes #22799 from squito/SPARK-25805.

Authored-by: Imran Rashid <irashid@cloudera.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This takes over original PR at #22019. The original proposal is to have null for float and double types. Later a more reasonable proposal is to disallow empty strings. This patch adds logic to throw exception when finding empty strings for non string types.
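The rule can be illustrated with a toy converter. This is a Python sketch under assumptions: `convert_field` is a hypothetical helper and `ValueError` is a stand-in for whatever exception the parser actually throws.

```python
def convert_field(raw: str, target_type: type):
    """Convert a raw string to target_type, rejecting empty strings
    for every type except str."""
    if target_type is str:
        # An empty string is a legitimate string value.
        return raw
    if raw == "":
        # Fail loudly instead of silently producing a default such as
        # bool("") == False or a null.
        raise ValueError(
            f"empty string cannot be converted to {target_type.__name__}")
    return target_type(raw)


print(convert_field("", str) == "")  # True
print(convert_field("1.5", float))   # 1.5
```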

## How was this patch tested?

Added test.

Closes #22787 from viirya/SPARK-25040.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This reduces the test time for ContinuousStressSuite from 8 mins 13 sec to 43 seconds.

The approach is to reduce the triggers and epochs to wait for, and to reduce the expected rows accordingly.

## How was this patch tested?

Existing tests.

Closes #22662 from viirya/SPARK-25627.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Fix the following issues in PythonWorkerFactory

1. MonitorThread.run uses a wrong lock.

2. `createSimpleWorker` misses `synchronized` when updating `simpleWorkers`.

Other changes are just to improve the code style to make the thread-safe contract clear.
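Both issues come down to one rule: every access to shared state must go through the same lock. The Python sketch below shows that contract with a hypothetical `WorkerRegistry` class; it is not a port of `PythonWorkerFactory`.

```python
import threading


class WorkerRegistry:
    """Toy registry whose shared map is only touched under one lock."""

    def __init__(self):
        self._lock = threading.Lock()
        self._workers = {}

    def add(self, worker_id, state):
        # Writers take the same lock that the reaper uses.
        with self._lock:
            self._workers[worker_id] = state

    def reap(self, is_idle):
        # The monitoring side must use this very lock; guarding with a
        # different lock object would not exclude concurrent add() calls.
        with self._lock:
            idle = [w for w, s in self._workers.items() if is_idle(s)]
            for w in idle:
                del self._workers[w]
        return idle

    def size(self):
        with self._lock:
            return len(self._workers)


registry = WorkerRegistry()
threads = [
    threading.Thread(target=registry.add,
                     args=(i, "idle" if i % 2 == 0 else "busy"))
    for i in range(50)
]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(registry.size())  # 50
```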

## How was this patch tested?

Jenkins

Closes #22770 from zsxwing/pwf.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: Shixiong Zhu <zsxwing@gmail.com>

## What changes were proposed in this pull request?

This is a follow up of #21601, `StreamFileInputFormat` and `WholeTextFileInputFormat` have the same problem.

`Minimum split size pernode 5123456 cannot be larger than maximum split size 4194304

java.io.IOException: Minimum split size pernode 5123456 cannot be larger than maximum split size 4194304

at org.apache.hadoop.mapreduce.lib.input.CombineFileInputFormat.getSplits(CombineFileInputFormat.java: 201)

at org.apache.spark.rdd.BinaryFileRDD.getPartitions(BinaryFileRDD.scala:52)

at org.apache.spark.rdd.RDD$$anonfun$partitions$2.apply(RDD.scala:254)

at org.apache.spark.rdd.RDD$$anonfun$partitions$2.apply(RDD.scala:252)

at scala.Option.getOrElse(Option.scala:121)

at org.apache.spark.rdd.RDD.partitions(RDD.scala:252)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:2138)`

## How was this patch tested?

Added a unit test

Closes #22725 from 10110346/maxSplitSize_node_rack.

Authored-by: liuxian <liu.xian3@zte.com.cn>

Signed-off-by: Thomas Graves <tgraves@apache.org>

## What changes were proposed in this pull request?

add R API for PrefixSpan

## How was this patch tested?

add test in test_mllib_fpm.R

Author: Huaxin Gao <huaxing@us.ibm.com>

Closes #21710 from huaxingao/spark-24207.

## What changes were proposed in this pull request?

Currently, the `pageNavigation()` method in PagedTable.scala does not render the pagination when there is only one page.

With this change, the pagination is rendered even when there is only one page.

## How was this patch tested?

This was tested with the Spark web UI and History page in a local Spark setup.

Author: shivusondur <shivusondur@gmail.com>

Closes #22668 from shivusondur/pagination.

## What changes were proposed in this pull request?

`removeExecutorFromSpark` tries to fetch the reason the executor exited from Kubernetes, which may be useful if the pod was OOMKilled. However, the code previously deleted the pod from Kubernetes first which made retrieving this status impossible. This fixes the ordering.

On a separate but related note, it would be nice to wait some time before removing the pod - to let the operator examine logs and such.

## How was this patch tested?

Running on my local cluster.

Author: Mike Kaplinskiy <mike.kaplinskiy@gmail.com>

Closes #22720 from mikekap/patch-1.

## What changes were proposed in this pull request?

Upgrade netty dependency from 4.1.17 to 4.1.30.

Explanation:

Currently, sending a ChunkedByteBuffer with more than 16 chunks over the network triggers a "merge" of all the blocks into one big transient array that is then sent over the network. This is problematic because the total memory for all chunks can be high (2GB), and the merge would then trigger an allocation of 2GB, which will create OOM errors.

We can avoid this issue by upgrading netty. https://github.com/netty/netty/pull/8038

## How was this patch tested?

Manual tests in some spark jobs.

Closes #22765 from lipzhu/SPARK-25757.

Authored-by: Zhu, Lipeng <lipzhu@ebay.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

Currently, there are some tests testing function descriptions:

```bash

$ grep -ir "describe function" sql/core/src/test/resources/sql-tests/inputs

sql/core/src/test/resources/sql-tests/inputs/json-functions.sql:describe function to_json;

sql/core/src/test/resources/sql-tests/inputs/json-functions.sql:describe function extended to_json;

sql/core/src/test/resources/sql-tests/inputs/json-functions.sql:describe function from_json;

sql/core/src/test/resources/sql-tests/inputs/json-functions.sql:describe function extended from_json;

```

There does not seem to be much point in testing them, since we're not going to test the documentation itself.

The `DESCRIBE FUNCTION` functionality itself is already being tested here and there.

See the test failures in https://github.com/apache/spark/pull/18749 (where I added examples to function descriptions)

We had better remove those tests so that people don't add such tests in the SQL tests.

## How was this patch tested?

Manual.

Closes #22776 from HyukjinKwon/SPARK-25779.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

`needsUnsafeRowConversion` is used in 2 places:

1. `ColumnarBatchScan.produceRows`

2. `FileSourceScanExec.doExecute`

When we hit `ColumnarBatchScan.produceRows`, it means whole stage codegen is on but the vectorized reader is off. The vectorized reader can be off for several reasons:

1. the file format doesn't have a vectorized reader (json, csv, etc.)

2. the vectorized reader config is off

3. the schema is not supported

Anyway when the vectorized reader is off, file format reader will always return unsafe rows, and other `ColumnarBatchScan` implementations also always return unsafe rows, so `ColumnarBatchScan.needsUnsafeRowConversion` is not needed.

When we hit `FileSourceScanExec.doExecute`, it means whole stage codegen is off. For this case, we need the `needsUnsafeRowConversion` to convert `ColumnarRow` to `UnsafeRow`, if the file format reader returns batch.

This PR removes `ColumnarBatchScan.needsUnsafeRowConversion`, and keeps this flag only in `FileSourceScanExec`.

## How was this patch tested?

existing tests

Closes #22750 from cloud-fan/minor.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Refactor `WideSchemaBenchmark` to use main method.

1. use `spark-submit`:

```console

bin/spark-submit --class org.apache.spark.sql.execution.benchmark.WideSchemaBenchmark --jars ./core/target/spark-core_2.11-3.0.0-SNAPSHOT-tests.jar ./sql/core/target/spark-sql_2.11-3.0.0-SNAPSHOT-tests.jar

```

2. Generate benchmark result:

```console

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.WideSchemaBenchmark"

```

## How was this patch tested?

manual tests

Closes #22501 from wangyum/SPARK-25492.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

PIP installation requires packaging the bin scripts together.

https://github.com/apache/spark/blob/master/python/setup.py#L71

The recent fix at ec96d34e74 introduced a non-ASCII character (a non-breaking space, I guess).

This is usually not a problem, but Jenkins's default encoding appears to be `ascii`, and while copying the script there appears to be an implicit conversion between bytes and strings, where the default encoding is used.

https://github.com/pypa/setuptools/blob/v40.4.3/setuptools/command/develop.py#L185-L189

## How was this patch tested?

Jenkins

Closes #22782 from HyukjinKwon/pip-failure-fix.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Fix minor error in the code "sketch of pregel implementation" of GraphX guide.

This fixed error relates to `[SPARK-12995][GraphX] Remove deprecate APIs from Pregel`

## How was this patch tested?

N/A

Closes #22780 from WeichenXu123/minor_doc_update1.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Since we didn't test Java 9 ~ 11 up to now in the community, fix the document to describe Java 8 only.

## How was this patch tested?

N/A (This is a document only change.)

Closes #22781 from dongjoon-hyun/SPARK-JDK-DOC.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Fix some broken links in the new document. I have clicked through all the links. Hopefully I haven't missed any :-)

## How was this patch tested?

Built using jekyll and verified the links.

Closes #22772 from dilipbiswal/doc_check.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This PR adds `prettyNames` for `from_json`, `to_json`, `from_csv`, and `schema_of_json` so that appropriate names are used.

## How was this patch tested?

Unit tests

Closes #22773 from HyukjinKwon/minor-prettyNames.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Adds error checking and handling to `docker` invocations ensuring the script terminates early in the event of any errors. This avoids subtle errors that can occur e.g. if the base image fails to build the Python/R images can end up being built from outdated base images and makes it more explicit to the user that something went wrong.

Additionally the provided `Dockerfiles` assume that Spark was first built locally or is a runnable distribution however it didn't previously enforce this. The script will now check the JARs folder to ensure that Spark JARs actually exist and if not aborts early reminding the user they need to build locally first.

## How was this patch tested?

- Tested with a `mvn clean` working copy and verified that the script now terminates early

- Tested with bad `Dockerfiles` that fail to build to see that early termination occurred

Closes #22748 from rvesse/SPARK-25745.

Authored-by: Rob Vesse <rvesse@dotnetrdf.org>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

JVMs don't let you allocate arrays of length exactly Int.MaxValue, so leave a little extra room. This is necessary when reading blocks >2GB off the network (for remote reads or for cache replication).
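The arithmetic behind "a little extra room" can be sketched as follows. This is Python for illustration only: `chunk_sizes` is a hypothetical helper, and the margin of 15 bytes is an assumed value chosen for the example, not one stated in this description.

```python
INT_MAX = 2**31 - 1       # value of Int.MaxValue on the JVM
MAX_CHUNK = INT_MAX - 15  # assumed safety margin, for illustration


def chunk_sizes(total_bytes: int, max_chunk: int = MAX_CHUNK) -> list:
    """Split total_bytes into chunk lengths, none above max_chunk."""
    if total_bytes < 0:
        raise ValueError("total_bytes must be non-negative")
    sizes = []
    remaining = total_bytes
    while remaining > 0:
        size = min(remaining, max_chunk)
        sizes.append(size)
        remaining -= size
    return sizes


# A 3 GiB block needs two arrays once each stays below the limit.
print(chunk_sizes(3 * 2**30))  # [2147483632, 1073741840]
```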

Unit tests via jenkins, ran a test with blocks over 2gb on a cluster

Closes #22705 from squito/SPARK-25704.

Authored-by: Imran Rashid <irashid@cloudera.com>

Signed-off-by: Imran Rashid <irashid@cloudera.com>

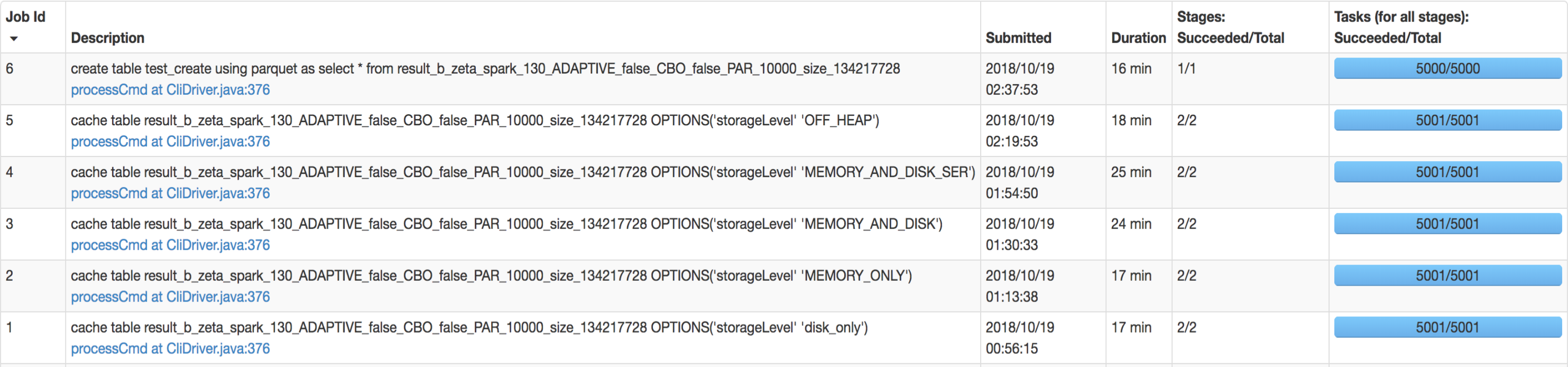

## What changes were proposed in this pull request?

The SQL interface supports specifying a `StorageLevel` when caching a table. The syntax is:

```sql

CACHE TABLE tableName OPTIONS('storageLevel' 'DISK_ONLY');

```

All supported `StorageLevel` are:

eefdf9f9dd/core/src/main/scala/org/apache/spark/storage/StorageLevel.scala (L172-L183)

## How was this patch tested?

unit tests and manual tests.

manual tests configuration:

```

--executor-memory 15G --executor-cores 5 --num-executors 50

```

Data:

Input Size / Records: 1037.7 GB / 11732805788

Result:

Closes #22263 from wangyum/SPARK-25269.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Without this PR, some UDAFs like `GenericUDAFPercentileApprox` can throw an exception because they expect a constant parameter (object inspector) as a particular argument.

The exception is thrown because `toPrettySQL` call in `ResolveAliases` analyzer rule transforms a `Literal` parameter to a `PrettyAttribute` which is then transformed to an `ObjectInspector` instead of a `ConstantObjectInspector`.

The exception comes from the `getEvaluator` method of `GenericUDAFPercentileApprox`, which actually shouldn't be called during the `toPrettySQL` transformation. The reason it is called is the non-lazy fields in `HiveUDAFFunction`.

This PR makes all fields of `HiveUDAFFunction` lazy.

## How was this patch tested?

added new UT

Closes #22766 from peter-toth/SPARK-25768.

Authored-by: Peter Toth <peter.toth@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This is a follow-up PR for #22259. The extra field added to `ScalaUDF` by the original PR was declared optional, but should in fact be required; otherwise callers of `ScalaUDF`'s constructor could ignore this new field and cause the result to be incorrect. This PR makes the new field required and changes its name to `handleNullForInputs`.

#22259 breaks the previous behavior for null-handling of primitive-type input parameters. For example, for `val f = udf({(x: Int, y: Any) => x})`, `f(null, "str")` should return `null` but would return `0` after #22259. In this PR, all UDF methods except `def udf(f: AnyRef, dataType: DataType): UserDefinedFunction` have been restored with the original behavior. The only exception is documented in the Spark SQL migration guide.

In addition, now that we have this extra field indicating if a null-test should be applied on the corresponding input value, we can also make use of this flag to avoid the rule `HandleNullInputsForUDF` being applied infinitely.
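The null-handling behaviour described above can be mimicked in a few lines. This is a Python sketch of the idea, not the `ScalaUDF` implementation: `null_safe` and `primitive_positions` are hypothetical names, with the positions playing the role of per-input null-test flags.

```python
def null_safe(func, primitive_positions):
    """Wrap func so that a None in any primitive-typed position
    short-circuits to None instead of calling func."""
    def wrapped(*args):
        if any(args[i] is None for i in primitive_positions):
            return None
        return func(*args)
    return wrapped


# Mirrors udf((x: Int, y: Any) => x): only x is primitive-typed.
f = null_safe(lambda x, y: x, primitive_positions=[0])

print(f(None, "str"))  # None
print(f(1, None))      # 1
```

Because the flags travel with the function, a rewrite rule can tell that the null test has already been applied and skip it on later passes.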

## How was this patch tested?

Added UT in UDFSuite

Passed affected existing UTs:

AnalysisSuite

UDFSuite

Closes #22732 from maryannxue/spark-25044-followup.

Lead-authored-by: maryannxue <maryannxue@apache.org>

Co-authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

This allows an implementer of Spark Session Extensions to utilize a method "injectFunction" which will add a new function to the default Spark Session Catalogue.

## What changes were proposed in this pull request?

Adds a new function to SparkSessionExtensions:

`def injectFunction(functionDescription: FunctionDescription)`

where `FunctionDescription` is a new type:

`type FunctionDescription = (FunctionIdentifier, FunctionBuilder)`

The functions are loaded in BaseSessionBuilder when the function registry does not have a parent function registry to get loaded from.

## How was this patch tested?

New unit tests are added for the extension in SparkSessionExtensionSuite

Closes #22576 from RussellSpitzer/SPARK-25560.

Authored-by: Russell Spitzer <Russell.Spitzer@gmail.com>

Signed-off-by: Herman van Hovell <hvanhovell@databricks.com>