### What changes were proposed in this pull request?

Improve the error message in test GroupedMapInPandasTests.test_grouped_over_window_with_key to show the incorrect values.

### Why are the changes needed?

This test failure has come up often in Arrow testing because it tests a struct with timestamp values through a Pandas UDF. The current error message is not helpful as it doesn't show the incorrect values, only that it failed. This change will instead raise an assertion error with the incorrect values on a failure.

Before:

```
======================================================================
FAIL: test_grouped_over_window_with_key (pyspark.sql.tests.test_pandas_grouped_map.GroupedMapInPandasTests)
----------------------------------------------------------------------
Traceback (most recent call last):
  File "/spark/python/pyspark/sql/tests/test_pandas_grouped_map.py", line 588, in test_grouped_over_window_with_key
    self.assertTrue(all([r[0] for r in result]))
AssertionError: False is not true
```

After:

```
======================================================================
ERROR: test_grouped_over_window_with_key (pyspark.sql.tests.test_pandas_grouped_map.GroupedMapInPandasTests)
----------------------------------------------------------------------
...
AssertionError: {'start': datetime.datetime(2018, 3, 20, 0, 0), 'end': datetime.datetime(2018, 3, 25, 0, 0)}, != {'start': datetime.datetime(2020, 3, 20, 0, 0), 'end': datetime.datetime(2020, 3, 25, 0, 0)}
```
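The difference between the two failure modes can be sketched with plain `unittest`, without Spark. The row values, the `WindowCheck` class, and the `failure_message` helper below are hypothetical and are not the code of the actual test; the sketch only shows that comparing each value directly lets the assertion message carry the mismatching data, whereas `assertTrue(all(...))` reduces it to `False is not true`.

```python
import datetime
import unittest

# Hypothetical (actual, expected) window pairs standing in for the UDF results.
ROWS = [
    (
        {"start": datetime.datetime(2018, 3, 20), "end": datetime.datetime(2018, 3, 25)},
        {"start": datetime.datetime(2020, 3, 20), "end": datetime.datetime(2020, 3, 25)},
    ),
]


class WindowCheck(unittest.TestCase):
    def check_opaque(self, rows):
        # Collapses every comparison into one boolean, so the values are lost.
        self.assertTrue(all(actual == expected for actual, expected in rows))

    def check_verbose(self, rows):
        # Compares value by value, so the failure message shows both sides.
        for actual, expected in rows:
            self.assertEqual(actual, expected)


def failure_message(check, rows):
    """Run a check and return the text of the AssertionError it raises."""
    try:
        check(rows)
    except AssertionError as exc:
        return str(exc)
    return ""
```

Calling `failure_message(WindowCheck().check_opaque, ROWS)` yields only the generic message, while the `check_verbose` variant includes the 2018 and 2020 timestamps in its output.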

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Improved existing test

Closes #28987 from BryanCutler/pandas-grouped-map-test-output-SPARK-32162.

Authored-by: Bryan Cutler <cutlerb@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Since https://issues.apache.org/jira/browse/SPARK-29283, we show only the error message of the root cause to end users through the JDBC client. In some cases, this hides the straightforward messages that we intentionally write to help them understand the problem.

The root cause is often obscure to JDBC end users who only write SQL queries.

For example,

```

Error running query: org.apache.spark.sql.AnalysisException: The second argument of 'date_sub' function needs to be an integer.;

```

is better than just

```

Caused by: java.lang.NumberFormatException: invalid input syntax for type numeric: 1.2

```

We should do as Hive does in https://issues.apache.org/jira/browse/HIVE-14368

In general, this PR partially reverts SPARK-29283, ports HIVE-14368, and improves test coverage
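The trade-off can be illustrated outside Spark with ordinary exception chaining. The `AnalysisError` class and the helper functions below are hypothetical stand-ins, not Spark or Hive APIs; the sketch only contrasts reporting the innermost cause with reporting the whole chain.

```python
class AnalysisError(Exception):
    """Hypothetical stand-in for a user-oriented analysis error."""


def run_query():
    try:
        # Low-level failure that a SQL user would find hard to interpret.
        raise ValueError("invalid input syntax for type numeric: 1.2")
    except ValueError as cause:
        raise AnalysisError(
            "The second argument of 'date_sub' function needs to be an integer."
        ) from cause


def root_cause_message(exc):
    """Follow __cause__ links to the innermost exception and report only that."""
    while exc.__cause__ is not None:
        exc = exc.__cause__
    return f"{type(exc).__name__}: {exc}"


def full_chain_message(exc):
    """Report every exception in the chain, outermost first."""
    parts = []
    while exc is not None:
        parts.append(f"{type(exc).__name__}: {exc}")
        exc = exc.__cause__
    return "\nCaused by: ".join(parts)


def demo():
    """Return (root-cause-only message, full-chain message) for one failure."""
    try:
        run_query()
    except AnalysisError as exc:
        return root_cause_message(exc), full_chain_message(exc)
```

In this sketch the root-cause-only message drops the `date_sub` hint entirely, while the full chain keeps the helpful outer message and still shows the underlying cause.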

### Why are the changes needed?

1. Do the same as Hive 2.3 and later for getting an error message in ThriftCLIService.GetOperationStatus

2. The root cause is often obscure to JDBC end users who only write SQL queries.

3. Consistency with `spark-sql` script

### Does this PR introduce _any_ user-facing change?

Yes. When a query run through the Thrift server fails, you get the full stack trace instead of only the message of the root cause.

### How was this patch tested?

add unit test

Closes #28963 from yaooqinn/SPARK-32145.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR aims to reduce the required test resources in WorkerDecommissionExtendedSuite.

### Why are the changes needed?

When the Jenkins farm is crowded, the following failure currently happens ([example](https://amplab.cs.berkeley.edu/jenkins/view/Spark%20QA%20Test%20(Dashboard)/job/spark-master-test-sbt-hadoop-3.2-hive-2.3/890/testReport/junit/org.apache.spark.scheduler/WorkerDecommissionExtendedSuite/Worker_decommission_and_executor_idle_timeout/)):

```
java.util.concurrent.TimeoutException: Can't find 20 executors before 60000 milliseconds elapsed
    at org.apache.spark.TestUtils$.waitUntilExecutorsUp(TestUtils.scala:326)
    at org.apache.spark.scheduler.WorkerDecommissionExtendedSuite.$anonfun$new$2(WorkerDecommissionExtendedSuite.scala:45)
```

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Pass the Jenkins.

Closes #29001 from dongjoon-hyun/SPARK-32100-2.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This PR aims to disable the dependency tests (`test-dependencies.sh`) on Jenkins.

### Why are the changes needed?

- First of all, GitHub Actions already provides the same test capability and is more stable.

- Second, `test-dependencies.sh` currently fails very frequently in the AmpLab Jenkins environment. For example, in the following unrelated PR, it failed 5 times within 6 hours.

  - https://github.com/apache/spark/pull/29001

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Pass the Jenkins without `test-dependencies.sh` invocation.

Closes #29004 from dongjoon-hyun/SPARK-32178.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR changes `webui.css` to fix a style issue that appears when the mouse cursor moves over the Spark logo.

### Why are the changes needed?

In the webui, the Spark logo is on the top right side.

When the mouse cursor moves over the logo, a stray underline appears next to it.

<img width="209" alt="logo_with_line" src="https://user-images.githubusercontent.com/4736016/86542828-3c6a9f00-bf54-11ea-9b9d-cc50c12c2c9b.png">

### Does this PR introduce _any_ user-facing change?

Yes. After this change, the stray line no longer appears when the mouse cursor moves over the logo.

<img width="207" alt="removed-line-from-logo" src="https://user-images.githubusercontent.com/4736016/86542877-98cdbe80-bf54-11ea-8695-ee39689673ab.png">

### How was this patch tested?

By moving the mouse cursor over the Spark logo and confirming that the stray line no longer appears.

Closes #29003 from sarutak/fix-logo-underline.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Rename two documentation files:

- `docs/sql-ref-syntax-qry-select-usedb.md` -> `docs/sql-ref-syntax-ddl-usedb.md`

- `docs/sql-ref-syntax-aux-refresh-table.md` -> `docs/sql-ref-syntax-aux-cache-refresh-table.md`

### Why are the changes needed?

`USE DATABASE` is a DDL command, so its file location should be consistent with the locations of the other DDL command files.

The same reasoning applies to `REFRESH TABLE`, which belongs with the cache statements.

### Does this PR introduce _any_ user-facing change?

Before the change, when clicking USE DATABASE, the sidebar menu shows the SELECT commands:

<img width="1200" alt="Screen Shot 2020-07-04 at 9 05 35 AM" src="https://user-images.githubusercontent.com/13592258/86516696-b45f8a80-bdd7-11ea-8dba-3a5cca22aad3.png">

After the change, when clicking USE DATABASE, the sidebar menu shows the DDL commands:

<img width="1120" alt="Screen Shot 2020-07-04 at 9 06 06 AM" src="https://user-images.githubusercontent.com/13592258/86516703-bf1a1f80-bdd7-11ea-8a90-ae7eaaafd44c.png">

Before the change, when clicking REFRESH TABLE, the sidebar menu shows the Auxiliary statements:

<img width="1200" alt="Screen Shot 2020-07-04 at 9 30 40 AM" src="https://user-images.githubusercontent.com/13592258/86516877-3d2af600-bdd9-11ea-9568-0a6f156f57da.png">

After the change, when clicking REFRESH TABLE, the sidebar menu shows the Cache statements:

<img width="1199" alt="Screen Shot 2020-07-04 at 9 35 21 AM" src="https://user-images.githubusercontent.com/13592258/86516937-b4f92080-bdd9-11ea-8ad1-5f5a7f58d76b.png">

### How was this patch tested?

Manually build and check

Closes #28995 from huaxingao/docs_fix.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Huaxin Gao <huaxing@us.ibm.com>

### What changes were proposed in this pull request?

Set the JSON option `inferTimestamp` to `true` for the cases that measure perf of timestamp inference.

### Why are the changes needed?

The PR https://github.com/apache/spark/pull/28966 disabled timestamp inference by default. As a consequence, some benchmarks no longer measure the perf of timestamp inference from JSON fields. This PR explicitly enables such inference.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

By re-generating results of `JsonBenchmark`.

Closes #28981 from MaxGekk/json-inferTimestamps-disable-by-default-followup.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

1. Merge two similar tests for SPARK-31061 and clean up the code.

2. Fix the table alter issue caused by a lost path.

### Why are the changes needed?

The two tests for SPARK-31061 are very similar and can be merged.

In addition, the first test case should alter `rawTable` instead of `parquetTable`.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Unit test.

Closes #28980 from TJX2014/master-follow-merge-spark-31061-test-case.

Authored-by: TJX2014 <xiaoxingstack@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Check that the namespace exists when "use namespace" is called, and throw `NoSuchNamespaceException` if it does not exist.

### Why are the changes needed?

Users need to know that the namespace does not exist when they try to set a wrong namespace.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Run all suites and add a test for this

Closes #27900 from stczwd/SPARK-31100.

Authored-by: stczwd <qcsd2011@163.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

For queries like `t1d in (SELECT t2d FROM t2 ORDER BY t2c LIMIT 2)`, the result can be non-deterministic, because the subquery may return different rows from run to run (it is not sorted by `t2d` and it involves a shuffle).

This PR makes the test more robust by sorting the output column.
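The source of the flakiness can be sketched in plain Python. The rows below are hypothetical, and the sketch assumes that the non-determinism comes from ties on the sort column `t2c`; the tie-breaking variant is one possible way to make the output stable and is not necessarily the exact change made in the test.

```python
# Hypothetical rows of t2 as (t2c, t2d); two rows tie on t2c = 1.
T2 = [(1, "b"), (0, "a"), (1, "c")]


def top_t2d(rows, limit=2):
    """Emulate SELECT t2d FROM t2 ORDER BY t2c LIMIT n for one arrival order."""
    # Python's sort is stable, so tied rows keep their arrival order.
    ordered = sorted(rows, key=lambda r: r[0])
    return [t2d for _, t2d in ordered[:limit]]


def top_t2d_stable(rows, limit=2):
    """Same query, with the output column added as a tie-breaker."""
    ordered = sorted(rows, key=lambda r: (r[0], r[1]))
    return [t2d for _, t2d in ordered[:limit]]


# The same data arriving in two different orders, as it might after a shuffle.
run_a = top_t2d(T2)
run_b = top_t2d(list(reversed(T2)))
```

Here `run_a` and `run_b` differ even though the input data is identical, while `top_t2d_stable` returns the same rows for both arrival orders.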

### Why are the changes needed?

avoid flaky test

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

N/A

Closes #28976 from cloud-fan/small.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

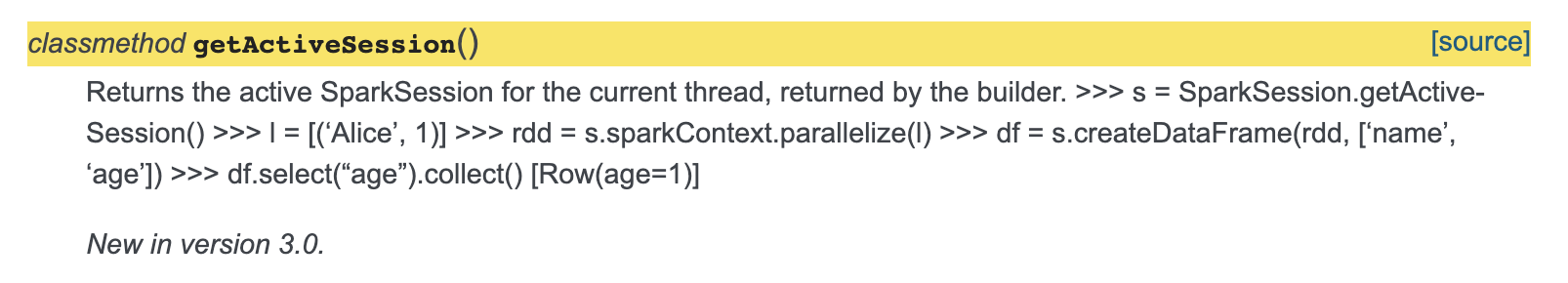

### What changes were proposed in this pull request?

Minor fix to the documentation of `getActiveSession`.

The sample code snippet is not rendered correctly, so this PR adds the spacing needed to fix it.

It also documents the return value.

### Why are the changes needed?

The sample code is rendered as part of the description in the docs.

[Current Doc Link](http://spark.apache.org/docs/latest/api/python/pyspark.sql.html?highlight=getactivesession#pyspark.sql.SparkSession.getActiveSession)

### Does this PR introduce _any_ user-facing change?

Yes, the documentation of `getActiveSession` is fixed.

It also now describes the return value.

### How was this patch tested?

Adding a spacing between description and code seems to fix the issue.

Closes #28978 from animenon/docs_minor.

Authored-by: animenon <animenon@mail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Correct the file separator used in `ExecutorDiskUtils.createNormalizedInternedPathname` on Windows.

### Why are the changes needed?

`ExternalShuffleBlockResolverSuite` failed on Windows, see detail at:

https://issues.apache.org/jira/browse/SPARK-32121

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

The existing test suite.

Closes #28940 from pan3793/SPARK-32121.

Lead-authored-by: pancheng <379377944@qq.com>

Co-authored-by: chengpan <cheng.pan@idiaoyan.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This patch fixes an incorrect groupBy result when the grouping key is a null struct.

### Why are the changes needed?

`NormalizeFloatingNumbers` reconstructs a struct if the input expression is of `StructType`. If the input struct is null, it reconstructs a struct with null-valued fields instead of returning null.
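The effect on grouping can be sketched in plain Python, with dictionaries standing in for structs and `None` for a null struct. The field names `a` and `b` and all function names are hypothetical; this is not the optimizer rule itself, only an illustration of why rebuilding a null struct changes the grouping key.

```python
from collections import Counter


def normalize_field(value):
    """Fold -0.0 into 0.0; leave other values (including None) unchanged."""
    return 0.0 if value == 0.0 else value


def normalize_struct_buggy(struct):
    """Rebuild the struct field by field, even when the struct itself is null."""
    fields = struct if struct is not None else {"a": None, "b": None}
    return {name: normalize_field(v) for name, v in fields.items()}


def normalize_struct_fixed(struct):
    """Keep a null struct null; only rebuild non-null structs."""
    if struct is None:
        return None
    return {name: normalize_field(v) for name, v in struct.items()}


def group_counts(keys, normalize):
    """Count rows per normalized grouping key (dicts made hashable via tuples)."""
    def freeze(key):
        return None if key is None else tuple(sorted(key.items()))
    return Counter(freeze(normalize(k)) for k in keys)


# A null struct, a struct of null fields, and two structs equal up to -0.0.
KEYS = [None, {"a": None, "b": None}, {"a": -0.0, "b": 1.0}, {"a": 0.0, "b": 1.0}]
```

With the buggy variant, the null struct and the struct of null fields collapse into one group (two groups in total); with the fixed variant they remain distinct (three groups), while the `-0.0` and `0.0` keys are still merged as intended.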

### Does this PR introduce _any_ user-facing change?

Yes, fixing incorrect groupBy result.

### How was this patch tested?

Unit test.

Closes #28962 from viirya/SPARK-32136.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

When a Spark job is launched in cluster mode on YARN, the Application Master sets `spark.ui.port` to 0, which means the driver's web UI gets a random port even if we want to explicitly set the port range for the driver's web UI.

## Why are the changes needed?

We access the Spark web UI via a Knox proxy, and firewall restrictions prevent us from reaching it because the web UI gets a random port even if we set the port range explicitly.

This change checks whether a port range has been specified explicitly and, if so, does not assign a random port.

## Does this PR introduce any user-facing change?

No

## How was this patch tested?

Tested locally.

Closes #28880 from rajatahujaatinmobi/ahujarajat261/SPARK-32039-change-yarn-webui-port-range-with-property-latest-spark.

Authored-by: Rajat Ahuja <rahuja@twitter.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

As the followup of #28900, this patch extends coalescing partitions to repartitioning using hints and SQL syntax without specifying number of partitions, when AQE is enabled.

### Why are the changes needed?

When repartitioning using hints and SQL syntax, we should follow the shuffling behavior of repartition by expression/range and coalesce partitions when AQE is enabled.

### Does this PR introduce _any_ user-facing change?

Yes. After this change, if users don't specify the number of partitions when repartitioning using `REPARTITION`/`REPARTITION_BY_RANGE` hint or `DISTRIBUTE BY`/`CLUSTER BY`, AQE will coalesce partitions.

### How was this patch tested?

Unit tests.

Closes #28952 from viirya/SPARK-32056-sql.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Set the JSON option `inferTimestamp` to `false` if the user doesn't pass it as a datasource option.

### Why are the changes needed?

To prevent a perf regression while inferring schemas from JSON with potential timestamp fields.
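A minimal sketch of the idea, in plain Python rather than Spark code: when the flag is off, string values skip the extra timestamp parse attempt during schema inference. The function names, the single ISO-like pattern, and the way options are read are assumptions made for this illustration only.

```python
from datetime import datetime


def infer_field_type(value, infer_timestamp=False):
    """Infer a type name for one JSON string value.

    `infer_timestamp` mirrors the idea of the `inferTimestamp` option; the
    single pattern tried here is an assumption of this sketch.
    """
    if infer_timestamp:
        try:
            # The extra parse attempt on every string is the cost being avoided.
            datetime.strptime(value, "%Y-%m-%dT%H:%M:%S")
            return "timestamp"
        except ValueError:
            pass
    return "string"


def infer_schema(records, options=None):
    """Infer a {field: type} mapping, defaulting the flag to false."""
    options = options or {}
    flag = str(options.get("inferTimestamp", "false")).lower() == "true"
    return {
        name: infer_field_type(value, flag)
        for record in records
        for name, value in record.items()
    }


RECORDS = [{"ts": "2020-07-01T10:00:00", "name": "spark"}]
```

With no options, `infer_schema(RECORDS)` treats `ts` as a string; passing `{"inferTimestamp": "true"}` opts back in to timestamp inference.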

### Does this PR introduce _any_ user-facing change?

Yes

### How was this patch tested?

- Modified existing tests in `JsonSuite` and `JsonInferSchemaSuite`.

- Regenerated results of `JsonBenchmark` in the environment:

| Item | Description |
| ---- | ----|
| Region | us-west-2 (Oregon) |
| Instance | r3.xlarge |
| AMI | ubuntu/images/hvm-ssd/ubuntu-bionic-18.04-amd64-server-20190722.1 (ami-06f2f779464715dc5) |
| Java | OpenJDK 64-Bit Server VM 1.8.0_252 and OpenJDK 64-Bit Server VM 11.0.7+10 |

Closes #28966 from MaxGekk/json-inferTimestamps-disable-by-default.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This is a followup of https://github.com/apache/spark/pull/28760 to fix the remaining issues:

1. should consider data source options when refreshing cache by path at the end of `InsertIntoHadoopFsRelationCommand`

2. should consider data source options when inferring schema for file source

3. should consider data source options when getting the qualified path in file source v2.

### Why are the changes needed?

We didn't catch these issues in https://github.com/apache/spark/pull/28760 because the test case checks for an error when initializing the file system. If the file system is initialized multiple times during a simple read/write action, the test case only exercises the first initialization.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

rewrite the test to make sure the entire data source read/write action can succeed.

Closes #28948 from cloud-fan/fix.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Gengliang Wang <gengliang.wang@databricks.com>

### What changes were proposed in this pull request?

This PR aims to add `PrometheusServletSuite`.

### Why are the changes needed?

This improves the test coverage.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Pass the newly added test suite.

Closes #28865 from erenavsarogullari/spark_driver_prometheus_metrics_improvement.

Authored-by: Eren Avsarogullari <erenavsarogullari@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This is a followup of https://github.com/apache/spark/pull/28534 , to make `TIMESTAMP_SECONDS` function support fractional input as well.

### Why are the changes needed?

Previously, the cast function could cast fractional values to timestamp. Now that we suggest users use these new functions, we need to cover all the cast use cases.

### Does this PR introduce _any_ user-facing change?

Yes, now `TIMESTAMP_SECONDS` function accepts fractional input.

### How was this patch tested?

new tests

Closes #28956 from cloud-fan/follow.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Add summary to RandomForestClassificationModel...

### Why are the changes needed?

so user can get a summary of this classification model, and retrieve common metrics such as accuracy, weightedTruePositiveRate, roc (for binary), pr curves (for binary), etc.

### Does this PR introduce _any_ user-facing change?

Yes

```

RandomForestClassificationModel.summary

RandomForestClassificationModel.evaluate

```

### How was this patch tested?

Add new tests

Closes #28913 from huaxingao/rf_summary.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Spark can't push down a scan predicate that contains an **Or** condition.

For example, suppose we have a table `default.test` whose partition column is `dt`,

and we run the query:

```

select * from default.test

where dt=20190625 or (dt = 20190626 and id in (1,2,3) )

```

In this case, Spark resolves the **Or** condition as a single expression, and because this expression references "id", it can't be pushed down.

Based on https://github.com/apache/spark/pull/28733, this PR handles SQL like

`select * from default.test`

`where dt = 20190626 or (dt = 20190627 and xxx="a") `

The condition `dt = 20190626 or (dt = 20190627 and xxx="a" )` is converted to CNF:

```

(dt = 20190626 or dt = 20190627) and (dt = 20190626 or xxx = "a" )

```

Then the condition `dt = 20190626 or dt = 20190627` is pushed down during partition pruning.
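The conversion described above can be sketched in a few lines of Python. The tuple encoding of predicates and the helper names `leaf`, `to_cnf`, `pushable`, and `render` are assumptions made for illustration; this is not Spark's implementation, only a small instance of distributing OR over AND and then keeping the clauses that reference partition columns alone.

```python
from itertools import product

# A predicate is a leaf ("leaf", column, text) or a node ("and" | "or", left, right).


def leaf(column, text):
    return ("leaf", column, text)


def to_cnf(pred):
    """Return CNF as a list of clauses; each clause is a list of OR-ed leaves."""
    kind = pred[0]
    if kind == "leaf":
        return [[pred]]
    left, right = to_cnf(pred[1]), to_cnf(pred[2])
    if kind == "and":
        return left + right
    # OR distributes over AND: (a AND b) OR c == (a OR c) AND (b OR c).
    return [l + r for l, r in product(left, right)]


def pushable(clauses, partition_columns):
    """Keep only clauses whose leaves all reference partition columns."""
    return [
        clause
        for clause in clauses
        if all(column in partition_columns for _, column, _ in clause)
    ]


def render(clauses):
    return " and ".join(
        "(" + " or ".join(text for _, _, text in clause) + ")" for clause in clauses
    )


PREDICATE = (
    "or",
    leaf("dt", "dt = 20190626"),
    ("and", leaf("dt", "dt = 20190627"), leaf("xxx", 'xxx = "a"')),
)
CNF = to_cnf(PREDICATE)
```

Rendering `CNF` reproduces the two-clause form shown above, and `pushable(CNF, {"dt"})` keeps only the clause that can be used for partition pruning.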

### Why are the changes needed?

Optimize partition pruning

### Does this PR introduce _any_ user-facing change?

NO

### How was this patch tested?

Added UT

Closes #28805 from AngersZhuuuu/cnf-for-partition-pruning.

Lead-authored-by: angerszhu <angers.zhu@gmail.com>

Co-authored-by: AngersZhuuuu <angers.zhu@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Update documentation to reflect changes in faf220aad9

I've changed the documentation to reflect that updated statistics may be used to improve the query plan.

### Why are the changes needed?

I believe the documentation is stale and misleading.

### Does this PR introduce _any_ user-facing change?

Yes, this is a javadoc documentation fix.

### How was this patch tested?

Doc fix.

Closes #28925 from emkornfield/spark-32095.

Authored-by: Micah Kornfield <micahk@google.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Fix the exception messages raised for incompatible column types in Union and set operations.

### Why are the changes needed?

Union and set operations can only be performed on tables with compatible column types. When there are more than two columns, however, the exception message reports the wrong column index.

Steps to reproduce:

```
drop table if exists test1;
drop table if exists test2;
drop table if exists test3;
create table if not exists test1(id int, age int, name timestamp);
create table if not exists test2(id int, age timestamp, name timestamp);
create table if not exists test3(id int, age int, name int);
insert into test1 select 1,2,'2020-01-01 01:01:01';
insert into test2 select 1,'2020-01-01 01:01:01','2020-01-01 01:01:01';
insert into test3 select 1,3,4;
```

Query1:

```sql

select * from test1 except select * from test2;

```

Result1:

```

Error: org.apache.spark.sql.AnalysisException: Except can only be performed on tables with the compatible column types. timestamp <> int at the second column of the second table;; 'Except false :- Project [id#620, age#621, name#622] : +- SubqueryAlias `default`.`test1` : +- HiveTableRelation `default`.`test1`, org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe, [id#620, age#621, name#622] +- Project [id#623, age#624, name#625] +- SubqueryAlias `default`.`test2` +- HiveTableRelation `default`.`test2`, org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe, [id#623, age#624, name#625] (state=,code=0)

```

Query2:

```sql

select * from test1 except select * from test3;

```

Result2:

```

Error: org.apache.spark.sql.AnalysisException: Except can only be performed on tables with the compatible column types

int <> timestamp at the 2th column of the second table;

```

Query1 above produces the right exception message.

Query2 above produces the wrong error information; it should be changed to the following:

```

Error: org.apache.spark.sql.AnalysisException: Except can only be performed on tables with the compatible column types.

int <> timestamp at the third column of the second table

```
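One way to produce such ordinals from a zero-based column index is sketched below. The word list, the numeric fallback, and the function names are assumptions of this sketch and are not taken from Spark's implementation; the point is only that index 2 should render as "third", not "2th".

```python
# English ordinals for the first few positions (hypothetical word list).
ORDINAL_WORDS = ["first", "second", "third", "fourth", "fifth"]


def ordinal(index):
    """Return a readable ordinal for a zero-based index."""
    if 0 <= index < len(ORDINAL_WORDS):
        return ORDINAL_WORDS[index]
    position = index + 1
    if 10 <= position % 100 <= 20:
        suffix = "th"  # covers 11th, 12th, 13th
    else:
        suffix = {1: "st", 2: "nd", 3: "rd"}.get(position % 10, "th")
    return f"{position}{suffix}"


def mismatch_message(left_type, right_type, column_index, table_index):
    """Build the type-mismatch fragment from zero-based column and table indexes."""
    return (
        f"{left_type} <> {right_type} at the {ordinal(column_index)} "
        f"column of the {ordinal(table_index)} table"
    )
```

For Query2, the mismatching column has zero-based index 2 in the table with zero-based index 1, so `mismatch_message("int", "timestamp", 2, 1)` yields the corrected wording.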

### Does this PR introduce _any_ user-facing change?

NO

### How was this patch tested?

unit test

Closes #28951 from GuoPhilipse/32131-correct-error-messages.

Lead-authored-by: GuoPhilipse <46367746+GuoPhilipse@users.noreply.github.com>

Co-authored-by: GuoPhilipse <guofei_ok@126.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This PR proposes to partially revert the tests and some code from https://github.com/apache/spark/pull/27728 without touching any behaviours.

Most of the test changes are restored to their state before #27728 by combining `withNestedDataFrame` and `withParquetDataFrame`.

Basically, it addresses the comments https://github.com/apache/spark/pull/27728#discussion_r397655390, and my own comment in another PR at https://github.com/apache/spark/pull/28761#discussion_r446761037

### Why are the changes needed?

For maintainability and to avoid potential conflicts during backports, and also in case other code is matched against this.

### Does this PR introduce _any_ user-facing change?

No, dev-only.

### How was this patch tested?

Manually tested.

Closes #28955 from HyukjinKwon/SPARK-25556-followup.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Modify the example for `timestamp_seconds` and replace `collect()` by `show()`.

### Why are the changes needed?

The SQL config `spark.sql.session.timeZone` doesn't influence the result of `collect` in the example. The code below demonstrates that:

```

$ export TZ="UTC"

```

```python
>>> from pyspark.sql.functions import timestamp_seconds
>>> spark.conf.set("spark.sql.session.timeZone", "America/Los_Angeles")
>>> time_df = spark.createDataFrame([(1230219000,)], ['unix_time'])
>>> time_df.select(timestamp_seconds(time_df.unix_time).alias('ts')).collect()
[Row(ts=datetime.datetime(2008, 12, 25, 15, 30))]
```

The expected time is **07:30 but we get 15:30**.
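The two wall-clock values can be reproduced with the standard library alone, which may help when checking the example by hand. The fixed UTC-8 offset below stands in for `America/Los_Angeles` on that date and is an assumption of this sketch (it avoids depending on a time zone database).

```python
from datetime import datetime, timedelta, timezone

UNIX_TIME = 1230219000

# Fixed offset standing in for America/Los_Angeles in late December (PST, UTC-8).
PST = timezone(timedelta(hours=-8), "PST")

# The same instant rendered in two time zones.
as_utc = datetime.fromtimestamp(UNIX_TIME, tz=timezone.utc)
as_pst = as_utc.astimezone(PST)

utc_text = as_utc.strftime("%Y-%m-%d %H:%M")
pst_text = as_pst.strftime("%Y-%m-%d %H:%M")
```

The UTC rendering matches the 15:30 seen in the `collect()` output under `TZ="UTC"`, and the UTC-8 rendering gives the 07:30 expected for the session time zone.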

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

By running the modified example via:

```

$ ./python/run-tests --modules=pyspark-sql

```

Closes #28959 from MaxGekk/SPARK-32088-fix-timezone-issue-followup.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

The JDBC connection provider implementations are formatted incorrectly. In this PR I've fixed the formatting.

### Why are the changes needed?

Wrong spacing in JDBC connection providers.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Existing unit tests.

Closes #28945 from gaborgsomogyi/provider_spacing.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

When loading DataFrames from JDBC datasource with Kerberos authentication, remote executors (yarn-client/cluster etc. modes) fail to establish a connection due to lack of Kerberos ticket or ability to generate it.

This is a real issue when trying to ingest data from kerberized data sources (SQL Server, Oracle) in enterprise environment where exposing simple authentication access is not an option due to IT policy issues.

In this PR I've added Oracle support.

What this PR contains:

* Added `OracleConnectionProvider`

* Added `OracleConnectionProviderSuite`

### Why are the changes needed?

Missing JDBC kerberos support.

### Does this PR introduce _any_ user-facing change?

Yes, users are now able to connect to Oracle using Kerberos.

### How was this patch tested?

* Additional + existing unit tests

* Test on cluster manually

Closes #28863 from gaborgsomogyi/SPARK-31336.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This PR fixes a typo for a configuration property in the `spark-standalone.md`.

`spark.driver.resourcesfile` should be `spark.driver.resourcesFile`.

I looked for similar typos, but this is the only one.

### Why are the changes needed?

The property name is wrong.

### Does this PR introduce _any_ user-facing change?

Yes. The property name is corrected.

### How was this patch tested?

I confirmed that the spelling of the property name matches the property name defined in o.a.s.internal.config.package.scala.

Closes #28958 from sarutak/fix-resource-typo.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

`formatDate` in `org/apache/spark/ui/static/utils.js` is partly refactored.

### Why are the changes needed?

In branch-2.4, the task launch time is returned as an HTML string from the driver,

while in branch-3.x it is returned in a JSON object as a `Date` type from `org.apache.spark.status.api.v1.TaskData`.

The cause:

The launch time from the Jersey server in the Spark driver is correct and is converted to a date string like `2020-06-28T02:57:42.605GMT` in the JSON object. `formatDate` in utils.js then treats it as `date.split(".")[0].replace("T", " ")`.

So `2020-06-28T02:57:42.605GMT` is converted to `2020-06-28 02:57:42`, but the correct value is `2020-06-28 10:57:42` in the GMT+8 time zone.
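The discrepancy can be reproduced in Python as a sketch. The first function mirrors the string manipulation quoted above; the second shows one possible time-zone-aware alternative and is not the refactored JavaScript from this PR. Both function names and the fixed GMT+8 offset are assumptions of the sketch.

```python
from datetime import datetime, timedelta, timezone


def format_date_naive(date):
    """Mirror the string manipulation described above: drop millis, swap 'T'."""
    return date.split(".")[0].replace("T", " ")


def format_date_local(date, local_tz):
    """Parse the value as GMT and render it in the viewer's time zone."""
    parsed = datetime.strptime(date, "%Y-%m-%dT%H:%M:%S.%fGMT")
    return (
        parsed.replace(tzinfo=timezone.utc)
        .astimezone(local_tz)
        .strftime("%Y-%m-%d %H:%M:%S")
    )


# Fixed offset standing in for a GMT+8 viewer.
GMT_PLUS_8 = timezone(timedelta(hours=8))
LAUNCH_TIME = "2020-06-28T02:57:42.605GMT"
```

`format_date_naive(LAUNCH_TIME)` keeps the GMT wall-clock time, while `format_date_local(LAUNCH_TIME, GMT_PLUS_8)` shifts it by eight hours, matching the values quoted in the description.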

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Manual test.

Closes #28918 from TJX2014/master-SPARK-32068-ui-task-lauch-time-tz.

Authored-by: TJX2014 <xiaoxingstack@gmail.com>

Signed-off-by: Thomas Graves <tgraves@apache.org>

### What changes were proposed in this pull request?

This patch fixes the missed spot - the test initializes FileStreamSinkLog with its "output" directory instead of "metadata" directory, hence the verification against sink log was no-op.

### Why are the changes needed?

Without the fix, the verification against sink log was no-op.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Checked with a debugger in the test, and verified that `allFiles()` returns non-zero entries. (Before the fix it returned zero entries, as there was no metadata.)

Closes #28930 from HeartSaVioR/SPARK-29999-FOLLOWUP-fix-test.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR adds support for an unlimited number of MATCHED and NOT MATCHED clauses in the MERGE INTO statement.

### Why are the changes needed?

Currently, the MERGE INTO syntax is:

```

MERGE INTO [db_name.]target_table [AS target_alias]

USING [db_name.]source_table [<time_travel_version>] [AS source_alias]

ON <merge_condition>

[ WHEN MATCHED [ AND <condition> ] THEN <matched_action> ]

[ WHEN MATCHED [ AND <condition> ] THEN <matched_action> ]

[ WHEN NOT MATCHED [ AND <condition> ] THEN <not_matched_action> ]

```

It would be nice to support an unlimited number of MATCHED and NOT MATCHED clauses in the MERGE INTO statement, because users may want to handle several different "AND <condition>"s, with a result that behaves like a series of "CASE WHEN"s. The expected syntax looks like:

```

MERGE INTO [db_name.]target_table [AS target_alias]

USING [db_name.]source_table [<time_travel_version>] [AS source_alias]

ON <merge_condition>

[when_matched_clause [, ...]]

[when_not_matched_clause [, ...]]

```

where when_matched_clause is

```

WHEN MATCHED [ AND <condition> ] THEN <matched_action>

```

and when_not_matched_clause is

```

WHEN NOT MATCHED [ AND <condition> ] THEN <not_matched_action>

```

matched_action can be one of

```

DELETE

UPDATE SET * or

UPDATE SET col1 = value1 [, col2 = value2, ...]

```

and not_matched_action can be one of

```

INSERT *

INSERT (col1 [, col2, ...]) VALUES (value1 [, value2, ...])

```
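The "series of CASE WHENs" reading can be sketched in Python for the matched branch. The row layout, the clause list, and the first-match-wins evaluation order are assumptions of this sketch; it illustrates the intended semantics informally and is not how Spark executes MERGE INTO.

```python
def apply_matched_clauses(target_row, source_row, clauses):
    """Apply the first WHEN MATCHED clause whose condition holds.

    Each clause is (condition, action); `condition` may be None for an
    unconditional clause. Returns the new row, or None if the row is deleted.
    """
    for condition, action in clauses:
        if condition is None or condition(target_row, source_row):
            return action(target_row, source_row)
    return target_row  # no clause fired: the row is left unchanged


def delete(target_row, source_row):
    return None


def update_all(target_row, source_row):
    return dict(source_row)


def update_price(target_row, source_row):
    return {**target_row, "price": source_row["price"]}


# Hypothetical clause list with more than two WHEN MATCHED branches.
CLAUSES = [
    (lambda t, s: s["deleted"], delete),
    (lambda t, s: s["price"] > t["price"], update_price),
    (None, update_all),
]

TARGET = {"id": 1, "price": 10, "deleted": False}
```

Each source row falls through the clauses in order, so a deleted source row removes the target row, a higher price updates only `price`, and anything else takes the unconditional update.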

### Does this PR introduce _any_ user-facing change?

Yes. The SQL command changes, but it is backward compatible.

### How was this patch tested?

New tests added.

Closes #28875 from xianyinxin/SPARK-32030.

Authored-by: xy_xin <xianyin.xxy@alibaba-inc.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR tries to unify the methods `getReader` and `getReaderForRange` in `ShuffleManager`.

### Why are the changes needed?

To reduce duplicated code, simplify the implementation, and ease maintenance.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Covered by existing tests.

Closes #28895 from Ngone51/unify-getreader.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This patch proposes to coalesce partitions for repartition by expressions without specifying number of partitions, when AQE is enabled.

### Why are the changes needed?

When repartitioning by partition expressions, users may or may not specify the number of partitions. If the number of partitions is specified, we should not coalesce partitions because that breaks user expectations. If it is not specified, AQE should be able to coalesce partitions as it does for other shuffles.
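The rule in this paragraph reduces to a small decision, sketched here in Python. All function and parameter names are hypothetical, and the `min` used to model coalescing is a simplification; the sketch only encodes "an explicit partition count is honored, otherwise AQE may coalesce".

```python
from typing import Optional


def can_coalesce_shuffle(aqe_enabled: bool, num_partitions: Optional[int]) -> bool:
    """AQE may coalesce only when the user left the partition count unspecified."""
    return aqe_enabled and num_partitions is None


def final_partition_count(
    aqe_enabled: bool,
    num_partitions: Optional[int],
    default_partitions: int,
    coalesced_partitions: int,
) -> int:
    """Pick the resulting partition count under the rule described above."""
    if num_partitions is not None:
        return num_partitions  # honor the explicit request
    if can_coalesce_shuffle(aqe_enabled, num_partitions):
        return min(default_partitions, coalesced_partitions)
    return default_partitions
```

For example, an explicit request for 10 partitions stays at 10 regardless of AQE, while an unspecified count can shrink from the default to the coalesced value when AQE is enabled.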

### Does this PR introduce _any_ user-facing change?

Yes. After this change, if users don't specify the number of partitions when repartitioning data by expressions, AQE will coalesce partitions.

### How was this patch tested?

Added unit test.

Closes #28900 from viirya/SPARK-32056.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This is a followup of https://github.com/apache/spark/pull/28876/files

This PR proposes to use the name of the original expression, as the alias name of the normalization expression.

### Why are the changes needed?

To make the query plan easier to read in EXPLAIN output.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Manually ran EXPLAIN on the query.

Closes #28919 from cloud-fan/follow.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This PR fixes `UserDefinedType.equals()` by comparing the UDT class instead of checking `acceptsType()`.

### Why are the changes needed?

Equality comparison between two UDT types should be symmetric, but currently the result changes when the operands are swapped:

```scala

// ExampleSubTypeUDT.userClass is a subclass of ExampleBaseTypeUDT.userClass

val udt1 = new ExampleBaseTypeUDT

val udt2 = new ExampleSubTypeUDT

println(udt1 == udt2) // true

println(udt2 == udt1) // false

```

### Does this PR introduce _any_ user-facing change?

Yes.

Before:

```scala

// ExampleSubTypeUDT.userClass is a subclass of ExampleBaseTypeUDT.userClass

val udt1 = new ExampleBaseTypeUDT

val udt2 = new ExampleSubTypeUDT

println(udt1 == udt2) // true

println(udt2 == udt1) // false

```

After:

```scala

// ExampleSubTypeUDT.userClass is a subclass of ExampleBaseTypeUDT.userClass

val udt1 = new ExampleBaseTypeUDT

val udt2 = new ExampleSubTypeUDT

println(udt1 == udt2) // false

println(udt2 == udt1) // false

```
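
The asymmetry and the fix can be modeled outside Spark. The following is a minimal Python sketch, not Spark's implementation: every class and function name in it is hypothetical, and `accepts_type` is assumed to succeed when the other UDT's user class is a subclass of this one's, which is what the example above implies.

```python
class BaseUser:
    """Hypothetical user class wrapped by the base UDT."""


class SubUser(BaseUser):
    """Hypothetical subclass of BaseUser."""


class UDT:
    """Toy stand-in for a user-defined type; not Spark's class."""
    user_class = object

    def accepts_type(self, other):
        # Assumed rule: accept any UDT whose user class is a subclass of ours.
        return issubclass(other.user_class, self.user_class)


class ExampleBaseTypeUDT(UDT):
    user_class = BaseUser


class ExampleSubTypeUDT(UDT):
    user_class = SubUser


def equals_by_accepts(a, b):
    # Models the old behavior: equality delegates to accepts_type.
    return a.accepts_type(b)


def equals_by_class(a, b):
    # Models the new behavior: equality compares the UDT classes themselves.
    return type(a) is type(b)


udt1, udt2 = ExampleBaseTypeUDT(), ExampleSubTypeUDT()
print(equals_by_accepts(udt1, udt2), equals_by_accepts(udt2, udt1))
print(equals_by_class(udt1, udt2), equals_by_class(udt2, udt1))
```

Comparing classes is symmetric by construction, whereas a subclass check depends on which operand is on the left.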

### How was this patch tested?

Added a unit test.

Closes #28923 from Ngone51/fix-udt-equal.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

The `optimizedPlan` in IncrementalExecution should also be scoped in `withActive`.

### Why are the changes needed?

Follow-up of SPARK-30798 for the Streaming side.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Existing UT.

Closes #28936 from xuanyuanking/SPARK-30798-follow.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Fix the exception thrown when parsing an event log whose fetch-failed task end reason has no `Map Index` field.

### Why are the changes needed?

When the Spark history server reads an event log produced by an older version such as Spark 2.4 (which does not have the `Map Index` field), parsing of TaskEndReason fails. This causes the TaskEnd event to be ignored.
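
The general technique for this kind of backward compatibility is to treat the newer field as optional and substitute a placeholder when it is absent. The sketch below illustrates that idea in plain Python and is not the `JsonProtocol` code: the sentinel value, the function name, and the JSON fields other than `Map Index` are assumptions made for illustration.

```python
import json

# Hypothetical placeholder used when an old log has no "Map Index" field.
MISSING_MAP_INDEX = -1


def parse_fetch_failed(event_json):
    """Parse a fetch-failed reason, tolerating logs without "Map Index"."""
    reason = json.loads(event_json)
    return {
        "shuffle_id": reason["Shuffle ID"],  # illustrative field name
        # dict.get avoids a KeyError for logs written before the field existed.
        "map_index": reason.get("Map Index", MISSING_MAP_INDEX),
    }


old_log = '{"Reason": "FetchFailed", "Shuffle ID": 1}'
new_log = '{"Reason": "FetchFailed", "Shuffle ID": 1, "Map Index": 7}'

print(parse_fetch_failed(old_log))
print(parse_fetch_failed(new_log))
```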

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

JsonProtocolSuite.test("FetchFailed Map Index backwards compatibility")

Closes #28941 from warrenzhu25/shs-task.

Authored-by: Warren Zhu <zhonzh@microsoft.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Bug fix for overflow case in `UTF8String.substringSQL`.

### Why are the changes needed?

The SQL query `SELECT SUBSTRING("abc", -1207959552, -1207959552)` incorrectly returns `"abc"` instead of the expected `""`. For the query `SUBSTRING("abc", -100, -100)`, we get the correct output of `""`.
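
To see why only the very large negative arguments misbehave, it helps to simulate 32-bit integer arithmetic. The sketch below is a simplified Python model, not the `UTF8String` source: it assumes the end position is computed as `start + length` in 32-bit arithmetic, and it models the fix as clamping that sum to the 32-bit range. The function names and the `saturate` flag are hypothetical.

```python
INT_MIN, INT_MAX = -2**31, 2**31 - 1


def wrap_int32(x):
    """Wrap an arbitrary integer the way 32-bit two's-complement addition would."""
    return (x - INT_MIN) % 2**32 + INT_MIN


def substring_sql(s, pos, length, saturate):
    """Toy model of SQL SUBSTRING with 1-based and negative start positions."""
    n = len(s)
    start = pos - 1 if pos > 0 else (n + pos if pos < 0 else 0)
    raw_end = start + length
    if saturate:
        # Assumed fix: clamp to the int range instead of wrapping around.
        end = max(INT_MIN, min(INT_MAX, raw_end))
    else:
        # Assumed bug: the sum silently overflows and becomes a large positive.
        end = wrap_int32(raw_end)
    if end <= start or start >= n:
        return ""
    return s[max(start, 0):min(end, n)]


print(repr(substring_sql("abc", -1207959552, -1207959552, saturate=False)))
print(repr(substring_sql("abc", -1207959552, -1207959552, saturate=True)))
print(repr(substring_sql("abc", -100, -100, saturate=False)))
```

With `-100` the sum stays inside the 32-bit range, so both variants agree; with `-1207959552` the wrapped sum becomes positive and the whole string is returned.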

### Does this PR introduce _any_ user-facing change?

Yes, bug fix for the overflow case.

### How was this patch tested?

New UT.

Closes #28937 from xuanyuanking/SPARK-32115.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Add benchmarks for interval constructor `make_interval` and measure perf of 4 cases:

1. Constant (year, month)

2. Constant (week, day)

3. Constant (hour, minute, second, second fraction)

4. All fields are NOT constant.

The benchmark results are generated in the environment:

| Item | Description |
| ---- | ----|
| Region | us-west-2 (Oregon) |
| Instance | r3.xlarge |
| AMI | ubuntu/images/hvm-ssd/ubuntu-bionic-18.04-amd64-server-20190722.1 (ami-06f2f779464715dc5) |
| Java | OpenJDK 64-Bit Server VM 1.8.0_252 and OpenJDK 64-Bit Server VM 11.0.7+10 |

### Why are the changes needed?

To have a baseline for future perf improvements of `make_interval`, and to prevent perf regressions in the future.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

By running `IntervalBenchmark` via:

```

$ SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.IntervalBenchmark"

```

Closes #28905 from MaxGekk/benchmark-make_interval.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

According to the dev mailing list discussion, this PR aims to switch the default Apache Hadoop dependency from 2.7.4 to 3.2.0 for Apache Spark 3.1.0, scheduled for December 2020.

| Item | Default Hadoop Dependency |
|------|-----------------------------|
| Apache Spark Website | 3.2.0 |
| Apache Download Site | 3.2.0 |
| Apache Snapshot | 3.2.0 |
| Maven Central | 3.2.0 |
| PyPI | 2.7.4 (We will switch later) |
| CRAN | 2.7.4 (We will switch later) |
| Homebrew | 3.2.0 (already) |

In the Apache Spark 3.0.0 release, we focused on other features. This PR targets [Apache Spark 3.1.0, scheduled for December 2020](https://spark.apache.org/versioning-policy.html).

### Why are the changes needed?

Apache Hadoop 3.2 has many fixes and new cloud-friendly features.

**Reference**

- 2017-08-04: https://hadoop.apache.org/release/2.7.4.html

- 2019-01-16: https://hadoop.apache.org/release/3.2.0.html

### Does this PR introduce _any_ user-facing change?

Yes. Since the default Hadoop dependency changes, users will get better support in cloud environments.

### How was this patch tested?

Pass the Jenkins.

Closes #28897 from dongjoon-hyun/SPARK-32058.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

**First**

`DAGSchedulerSuite` provides `completeNextStageWithFetchFailure` to make every task in a stage other than the first fail with `FetchFailed`.

However, many test cases call `complete` directly, as follows:

```scala

complete(taskSets(1), Seq(

(FetchFailed(makeBlockManagerId("hostA"),

shuffleDep1.shuffleId, 0L, 0, 0, "ignored"), null)))

```

We need to reuse `completeNextStageWithFetchFailure`.

**Second**

`DAGSchedulerSuite` also checks the results as shown below:

```scala

complete(taskSets(0), Seq((Success, 42)))

assert(results === Map(0 -> 42))

```

We can extract this into a generic method, `checkAnswer`.

### Why are the changes needed?

To reuse `completeNextStageWithFetchFailure` and reduce duplicated test code.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Jenkins test

Closes #28866 from beliefer/reuse-completeNextStageWithFetchFailure.

Authored-by: gengjiaan <gengjiaan@360.cn>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

The 3rd link in `IBM Cloud Object Storage connector for Apache Spark` is broken. The PR removes this link.

### Why are the changes needed?

The link is broken.

### Does this PR introduce _any_ user-facing change?

Yes, the broken link is removed from the doc.

### How was this patch tested?

Doc generation passes successfully, as before.

Closes #28927 from guykhazma/spark32099.

Authored-by: Guy Khazma <guykhag@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Set an American timezone for the `timestamp_seconds` doctest.

### Why are the changes needed?

The `timestamp_seconds` doctest in `functions.py` relies on the default timezone to produce the expected result.

For example:

```python

>>> time_df = spark.createDataFrame([(1230219000,)], ['unix_time'])

>>> time_df.select(timestamp_seconds(time_df.unix_time).alias('ts')).collect()

[Row(ts=datetime.datetime(2008, 12, 25, 7, 30))]

```

But in a non-American timezone, the test case produces a different result.

For example, when the current timezone is set to `Asia/Shanghai`, the result is:

```

[Row(ts=datetime.datetime(2008, 12, 25, 23, 30))]

```

If we pin the timezone to one specific area, the test case always produces the same expected result no matter where it runs.
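
The two results above can be reproduced without Spark. The sketch below uses only the Python standard library and is an illustration, not the doctest itself: fixed UTC offsets of -8 and +8 hours stand in for an American Pacific zone and `Asia/Shanghai` on that date, and the helper name `local_wall_clock` is hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets standing in for real zone rules (an assumption of this sketch).
PACIFIC = timezone(timedelta(hours=-8))
SHANGHAI = timezone(timedelta(hours=8))


def local_wall_clock(unix_seconds, tz):
    """Render a Unix timestamp as the naive wall-clock time seen in tz."""
    return datetime.fromtimestamp(unix_seconds, tz).replace(tzinfo=None)


print(local_wall_clock(1230219000, PACIFIC))   # 2008-12-25 07:30:00
print(local_wall_clock(1230219000, SHANGHAI))  # 2008-12-25 23:30:00
```

The same instant maps to different wall-clock values, so a doctest that prints a naive `datetime` is only stable when the session timezone is fixed.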

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Unit test

Closes #28932 from GuoPhilipse/SPARK-32088-fix-timezone-issue.

Lead-authored-by: GuoPhilipse <46367746+GuoPhilipse@users.noreply.github.com>

Co-authored-by: GuoPhilipse <guofei_ok@126.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Add a training summary for LinearSVCModel.

### Why are the changes needed?

So that users can get the status of the training process, such as the loss value of each iteration and the total number of iterations.

### Does this PR introduce _any_ user-facing change?

Yes

```LinearSVCModel.summary```

```LinearSVCModel.evaluate```

### How was this patch tested?

New tests.

Closes #28884 from huaxingao/svc_summary.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Add the support measure to association rules in Spark ml.fpm.

### Why are the changes needed?

Support indicates how frequently the itemset of an association rule appears in the database and suggests whether the rule is generally applicable to the dataset. Refer to [wiki](https://en.wikipedia.org/wiki/Association_rule_learning#Support) for more details.

### Does this PR introduce _any_ user-facing change?

Yes. Association rules now have a support measure.

### How was this patch tested?

Existing and new unit tests.

Closes #28903 from huaxingao/fpm.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This pull request fixes a bug in CSV type inference.

Inference goes wrong when the same column contains values of different types.

**Previously:**

```

$ cat /example/f1.csv

col1

43200000

true

spark.read.csv(path="file:///example/*.csv", header=True, inferSchema=True).show()

+----+

|col1|

+----+

|null|

|true|

+----+

root

|-- col1: boolean (nullable = true)

```

**Now**

```

spark.read.csv(path="file:///example/*.csv", header=True, inferSchema=True).show()

+-------------+

|col1 |

+-------------+

|43200000 |

|true |

+-------------+

root

|-- col1: string (nullable = true)

```

Previously, the hierarchy of type inference was the following:

> IntegerType

> > LongType

> > > DecimalType

> > > > DoubleType

> > > > > TimestampType

> > > > > > BooleanType

> > > > > > > StringType

So when, for example, a column contains integers and its last element is a boolean, the whole column is incorrectly inferred as a boolean column and all the numbers are shown as null when you view the data.

We need the following hierarchy. When a column contains different numeric types, it is resolved to the widest numeric type. When it contains any other mix of types, it is resolved as a String type column.

> IntegerType

> > LongType

> > > DecimalType

> > > > DoubleType

> > > > > StringType

> TimestampType

> > StringType

> BooleanType

> > StringType

> StringType
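
The proposed hierarchy can be read as a merge rule applied across the values of a column. The following Python sketch is one way to express it and is not the Spark inference code: type names are plain strings, the function names are hypothetical, and details the description does not cover (nulls, decimal precision) are left out.

```python
from functools import reduce

# Numeric types ordered from narrowest to widest, as in the hierarchy above.
NUMERIC_ORDER = ["IntegerType", "LongType", "DecimalType", "DoubleType"]


def merge_types(a, b):
    """Return the common type for two inferred types under the new hierarchy."""
    if a == b:
        return a
    if a in NUMERIC_ORDER and b in NUMERIC_ORDER:
        # Two different numeric types widen to the later one in the chain.
        return max(a, b, key=NUMERIC_ORDER.index)
    # Any other mix (numeric/boolean, timestamp/boolean, ...) falls back to string.
    return "StringType"


def infer_column(types):
    """Fold merge_types over the per-value types of one column."""
    return reduce(merge_types, types)


print(infer_column(["IntegerType", "BooleanType"]))
print(infer_column(["IntegerType", "DoubleType", "LongType"]))
```

Under this rule the `43200000` / `true` column from the example resolves to a string column instead of a boolean one.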

### Why are the changes needed?

To fix the bug explained above.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Unit test and manual tests

Closes #28896 from planga82/feature/SPARK-32025_csv_inference.

Authored-by: Pablo Langa <soypab@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR aims to add `WorkerDecomissionExtendedSuite` for various worker decommission combinations.

### Why are the changes needed?

This will improve the test coverage.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Pass the Jenkins.

Closes #28929 from dongjoon-hyun/SPARK-WD-TEST.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

When you use floats as the index of a pandas DataFrame, creating a Spark DataFrame from it produces wrong results when Arrow is enabled, as shown below:

```bash

./bin/pyspark --conf spark.sql.execution.arrow.pyspark.enabled=true

```

```python

>>> import pandas as pd

>>> spark.createDataFrame(pd.DataFrame({'a': [1,2,3]}, index=[2., 3., 4.])).show()

+---+

| a|

+---+

| 1|

| 1|

| 2|

+---+

```

This is because direct slicing uses the value as index when the index contains floats:

```python

>>> pd.DataFrame({'a': [1,2,3]}, index=[2., 3., 4.])[2:]

a

2.0 1

3.0 2

4.0 3

>>> pd.DataFrame({'a': [1,2,3]}, index=[2., 3., 4.]).iloc[2:]

a

4.0 3

>>> pd.DataFrame({'a': [1,2,3]}, index=[2, 3, 4])[2:]

a

4 3

```

This PR proposes to explicitly use `iloc` to slice positionally when we create a DataFrame from a pandas DataFrame with Arrow enabled.

FWIW, I was trying to investigate why direct slicing sometimes refers to the index value and sometimes to the positional index, but I stopped investigating further after reading this: https://pandas.pydata.org/pandas-docs/stable/getting_started/10min.html#selection
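
As a rough illustration of positional slicing, the sketch below splits a float-indexed pandas DataFrame into chunks with `iloc`. It is not the code in this PR: the helper name `slice_positionally` and the chunk size are assumptions chosen for the example.

```python
import pandas as pd


def slice_positionally(pdf, step):
    """Split pdf into consecutive chunks of at most `step` rows by position."""
    # iloc always counts rows from 0, whatever labels the index holds.
    return [pdf.iloc[start:start + step] for start in range(0, len(pdf), step)]


pdf = pd.DataFrame({'a': [1, 2, 3]}, index=[2., 3., 4.])
chunks = slice_positionally(pdf, 2)

# Every row appears exactly once, in order, across the chunks.
rows = [value for chunk in chunks for value in chunk['a'].tolist()]
print(rows)
```

Because `iloc` never consults the index labels, the chunks cover each row once even when the labels happen to look like positions.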

> While standard Python / Numpy expressions for selecting and setting are intuitive and come in handy for interactive work, for production code, we recommend the optimized pandas data access methods, `.at`, `.iat`, `.loc` and `.iloc`.

### Why are the changes needed?

To create the correct Spark DataFrame from a pandas DataFrame without data loss.

### Does this PR introduce _any_ user-facing change?

Yes, it is a bug fix.

```bash

./bin/pyspark --conf spark.sql.execution.arrow.pyspark.enabled=true

```

```python

import pandas as pd

spark.createDataFrame(pd.DataFrame({'a': [1,2,3]}, index=[2., 3., 4.])).show()

```

Before:

```

+---+

| a|

+---+

| 1|

| 1|

| 2|

+---+

```

After:

```

+---+

| a|

+---+

| 1|

| 2|

| 3|

+---+

```

### How was this patch tested?

Manually tested, and a unit test was added.

Closes #28928 from HyukjinKwon/SPARK-32098.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

### What changes were proposed in this pull request?

This PR adds a redirect to sql-ref.html.

### Why are the changes needed?

Before the Spark 3.0 release, we used sql-reference.md, which has since been replaced by sql-ref.md. A number of Google searches I’ve done today have turned up https://spark.apache.org/docs/latest/sql-reference.html, which no longer exists. Thus, we should add a redirect to sql-ref.html.

### Does this PR introduce _any_ user-facing change?

Yes. https://spark.apache.org/docs/latest/sql-reference.html will be redirected to https://spark.apache.org/docs/latest/sql-ref.html

### How was this patch tested?

Built the docs in my local environment and confirmed that the redirect works. The sql-reference.html file was generated with the following contents:

```

<!DOCTYPE html>

<html lang="en-US">

<meta charset="utf-8">

<title>Redirecting…</title>

<link rel="canonical" href="http://localhost:4000/sql-ref.html">

<script>location="http://localhost:4000/sql-ref.html"</script>

<meta http-equiv="refresh" content="0; url=http://localhost:4000/sql-ref.html">

<meta name="robots" content="noindex">

<h1>Redirecting…</h1>

<a href="http://localhost:4000/sql-ref.html">Click here if you are not redirected.</a>

</html>

```

Closes #28914 from gatorsmile/addRedirectSQLRef.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>