## What changes were proposed in this pull request?

In the planner, we collect the placeholders that need to be substituted in the query execution plan and, once we have planned them, we replace each placeholder with its actual physical plan.

In this second phase, we rely on the `==` comparison, i.e. the `equals` method. This means that if two placeholder plans, which are different instances, have the same attributes (so that they are equal according to `equals`), both are substituted at once. In such a situation, the first substitution replaces both of them with the first of the two newly generated plans, and the second substitution replaces nothing.

This usually does no harm to the execution of the query itself, as the two plans are identical. But since both positions now hold the same instance, its local variables are shared, which is unexpected. This causes issues for the collected metrics: the same node is executed twice, so its metrics are accumulated twice, wrongly.

This PR proposes to use the `eq` method (reference equality) when checking which placeholder needs to be substituted. In the situation above, both of the distinct physical nodes that are created (one for each time the logical plan appears in the query plan) are then used, and the metrics are collected properly for each of them.
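The difference between the two comparisons can be sketched outside Spark. The following is a minimal Python illustration, not the planner's code: `Placeholder`, `PhysicalNode` and `substitute` are hypothetical names, Python's `==` stands in for Scala's `equals`, and Python's `is` stands in for Scala's `eq`.

```python
import operator


class Placeholder:
    """Hypothetical stand-in for a placeholder plan; equal when attributes match."""

    def __init__(self, attrs):
        self.attrs = attrs

    def __eq__(self, other):
        return isinstance(other, Placeholder) and self.attrs == other.attrs

    def __hash__(self):
        return hash(self.attrs)


class PhysicalNode:
    """Hypothetical stand-in for a planned physical node with its own metric."""

    def __init__(self, name):
        self.name = name
        self.rows = 0


def substitute(plan, replacements, same):
    """Replace every placeholder that `same` considers a match, one pair at a time."""
    result = list(plan)
    for placeholder, physical in replacements:
        result = [
            physical if isinstance(node, Placeholder) and same(node, placeholder) else node
            for node in result
        ]
    return result


# Two distinct placeholder instances that compare equal.
first, second = Placeholder(("a", "b")), Placeholder(("a", "b"))
plan = [first, second]
replacements = [(first, PhysicalNode("scan-1")), (second, PhysicalNode("scan-2"))]

by_equals = substitute(plan, replacements, operator.eq)     # equality-based matching
by_identity = substitute(plan, replacements, operator.is_)  # identity-based matching

print(by_equals[0] is by_equals[1])      # whether both positions share one node
print(by_identity[0] is by_identity[1])  # whether both positions share one node
```

With equality-based matching, both positions end up holding the same node object, so any per-node counter is shared. With identity-based matching, each position gets its own node.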

## How was this patch tested?

Added a unit test.

Closes #22284 from mgaido91/SPARK-25278.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Add the version number for the new APIs.

## How was this patch tested?

N/A

Closes #22377 from gatorsmile/followup24849.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR improves the code generated by CodeGen for the fast hash map: there is no need to allocate a new block of memory for every new entry, because the `UnsafeRow`'s memory can be reused.

## How was this patch tested?

The existing test cases.

Closes #21968 from heary-cao/updateNewMemory.

Authored-by: caoxuewen <cao.xuewen@zte.com.cn>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

How to reproduce:

```scala
spark.sql("CREATE TABLE tbl(id long)")
spark.sql("INSERT OVERWRITE TABLE tbl VALUES 4")
spark.sql("CREATE VIEW view1 AS SELECT id FROM tbl")
spark.sql(s"INSERT OVERWRITE LOCAL DIRECTORY '/tmp/spark/parquet' " +
  "STORED AS PARQUET SELECT ID FROM view1")

scala> spark.read.parquet("/tmp/spark/parquet").schema
res10: org.apache.spark.sql.types.StructType = StructType(StructField(id,LongType,true))
```

The schema should be `StructType(StructField(ID,LongType,true))` as we `SELECT ID FROM view1`.

This PR fixes the issue.

## How was this patch tested?

unit tests

Closes #22359 from wangyum/SPARK-25313-FOLLOW-UP.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Apache Spark doesn't create a Hive table with duplicated fields in either case-sensitive or case-insensitive mode. However, if Spark first creates ORC files in case-sensitive mode and then creates a Hive table on that location, the table is created. In this situation, field resolution should fail in case-insensitive mode. Otherwise, we don't know which columns will be returned or filtered. Previously, SPARK-25132 fixed the same issue in Parquet.

Here is a simple example:

```
val data = spark.range(5).selectExpr("id as a", "id * 2 as A")
spark.conf.set("spark.sql.caseSensitive", true)
data.write.format("orc").mode("overwrite").save("/user/hive/warehouse/orc_data")

sql("CREATE TABLE orc_data_source (A LONG) USING orc LOCATION '/user/hive/warehouse/orc_data'")
spark.conf.set("spark.sql.caseSensitive", false)
sql("select A from orc_data_source").show
+---+
|  A|
+---+
|  3|
|  2|
|  4|
|  1|
|  0|
+---+
```

See #22148 for more details about parquet data source reader.
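The intended behaviour can be sketched as follows. This is a simplified Python illustration, not Spark's ORC reader code; `resolve_field` is a hypothetical helper that resolves a requested column against the physical field names stored in a file.

```python
def resolve_field(requested, physical_fields, case_sensitive):
    """Return the physical field matching `requested`, or None if there is none.

    In case-insensitive mode, more than one match is ambiguous and is rejected
    instead of silently picking one of the candidates.
    """
    if case_sensitive:
        matches = [f for f in physical_fields if f == requested]
    else:
        matches = [f for f in physical_fields if f.lower() == requested.lower()]
    if len(matches) > 1:
        raise ValueError(
            "Found duplicate field(s) %r: %r in case-insensitive mode" % (requested, matches)
        )
    return matches[0] if matches else None


fields_in_file = ["a", "A"]  # written while case sensitivity was enabled

exact = resolve_field("A", fields_in_file, case_sensitive=True)
try:
    resolve_field("A", fields_in_file, case_sensitive=False)
    ambiguous = False
except ValueError:
    ambiguous = True
```

Failing loudly on the ambiguous lookup avoids returning whichever of `a` and `A` happens to be found first.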

## How was this patch tested?

Unit tests added.

Closes #22262 from seancxmao/SPARK-25175.

Authored-by: seancxmao <seancxmao@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

How to reproduce:

```scala
val df1 = spark.createDataFrame(Seq(
  (1, 1)
)).toDF("a", "b").withColumn("c", lit(null).cast("int"))
val df2 = df1.union(df1).withColumn("d", spark_partition_id).filter($"c".isNotNull)
df2.show

+---+---+----+---+
|  a|  b|   c|  d|
+---+---+----+---+
|  1|  1|null|  0|
|  1|  1|null|  1|
+---+---+----+---+
```

`filter($"c".isNotNull)` was transformed to `(null <=> c#10)` before https://github.com/apache/spark/pull/19201, but it has been transformed to `(c#10 = null)` since https://github.com/apache/spark/pull/20155. This PR reverts it to `(null <=> c#10)` to fix this issue.
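For context, the two operators differ only in how they treat NULL. Below is a minimal Python sketch of their semantics, with `None` standing in for SQL NULL. The function names are illustrative and are not Spark APIs.

```python
def sql_equals(left, right):
    """`=` under three-valued logic: any NULL operand makes the result NULL."""
    if left is None or right is None:
        return None
    return left == right


def null_safe_equals(left, right):
    """`<=>`: never returns NULL; two NULLs compare as equal."""
    if left is None and right is None:
        return True
    if left is None or right is None:
        return False
    return left == right


for value in (None, 1):
    print(value, sql_equals(value, None), null_safe_equals(value, None))
```

Because `=` yields NULL whenever one side is NULL, `c = null` cannot tell a NULL `c` apart from a non-NULL one, whereas `null <=> c` can.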

## How was this patch tested?

unit tests

Closes #22368 from wangyum/SPARK-25368.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Remove `BisectingKMeansModel.setDistanceMeasure` method.

Setting this param on a `BisectingKMeansModel` is meaningless.

## How was this patch tested?

N/A

Closes #22360 from WeichenXu123/bkmeans_update.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

When running TPC-DS benchmarks on the 2.4 release, npoggi and winglungngai saw a performance regression of more than 10% on the following queries: q67, q24a and q24b. After applying the PR https://github.com/apache/spark/pull/22338, the performance regression still exists. After reverting the changes in https://github.com/apache/spark/pull/19222, npoggi and winglungngai found that the performance regression was resolved. Thus, this PR reverts the related changes to unblock the 2.4 release.

In a future release, we can continue the investigation and find the root cause of the regression.

## How was this patch tested?

The existing test cases

Closes #22361 from gatorsmile/revertMemoryBlock.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Add spark.executor.pyspark.memory limit for K8S

## How was this patch tested?

Unit and Integration tests

Closes #22298 from ifilonenko/SPARK-25021.

Authored-by: Ilan Filonenko <if56@cornell.edu>

Signed-off-by: Holden Karau <holden@pigscanfly.ca>

## What changes were proposed in this pull request?

Add a new optimization rule to eliminate unnecessary shuffling by flipping adjacent Window expressions.

## How was this patch tested?

Tested with unit tests, integration tests, and manual tests.

Closes #17899 from ptkool/adjacent_window_optimization.

Authored-by: ptkool <michael.styles@shopify.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

In Spark 2.0.0, SPARK-14335 added some [commented-out test coverage](https://github.com/apache/spark/pull/12117/files#diff-dd4b39a56fac28b1ced6184453a47358R177). This PR enables it because the feature has been supported since 2.0.0.

## How was this patch tested?

Pass the Jenkins with re-enabled test coverage.

Closes #22363 from dongjoon-hyun/SPARK-25375.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Deprecate public APIs from ImageSchema.

## How was this patch tested?

N/A

Closes #22349 from WeichenXu123/image_api_deprecate.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: Xiangrui Meng <meng@databricks.com>

## What changes were proposed in this pull request?

This took me a while to debug and find out. It looks like we should at least leave a debug log stating which SQL text will be used for a view.

Here's how I got there:

**Hive:**

```

CREATE TABLE emp AS SELECT 'user' AS name, 'address' as address;

CREATE DATABASE d100;

CREATE FUNCTION d100.udf100 AS 'org.apache.hadoop.hive.ql.udf.generic.GenericUDFUpper';

CREATE VIEW testview AS SELECT d100.udf100(name) FROM default.emp;

```

**Spark:**

```

sql("SELECT * FROM testview").show()

```

```

scala> sql("SELECT * FROM testview").show()

org.apache.spark.sql.AnalysisException: Undefined function: 'd100.udf100'. This function is neither a registered temporary function nor a permanent function registered in the database 'default'.; line 1 pos 7

```

Under the hood, it actually makes sense since the view is defined as `SELECT d100.udf100(name) FROM default.emp;` and Hive API:

```

org.apache.hadoop.hive.ql.metadata.Table.getViewExpandedText()

```

This returns a wrongly qualified SQL string for the view as below:

```

SELECT `d100.udf100`(`emp`.`name`) FROM `default`.`emp`

```

which works fine in Hive but not in Spark.

## How was this patch tested?

Manually:

```

18/09/06 19:32:48 DEBUG HiveSessionCatalog: 'SELECT `d100.udf100`(`emp`.`name`) FROM `default`.`emp`' will be used for the view(testview).

```

Closes #22351 from HyukjinKwon/minor-debug.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

Add new executor level memory metrics (JVM used memory, on/off heap execution memory, on/off heap storage memory, on/off heap unified memory, direct memory, and mapped memory), and expose via the executors REST API. This information will help provide insight into how executor and driver JVM memory is used, and for the different memory regions. It can be used to help determine good values for spark.executor.memory, spark.driver.memory, spark.memory.fraction, and spark.memory.storageFraction.

## What changes were proposed in this pull request?

An ExecutorMetrics class is added, with jvmUsedHeapMemory, jvmUsedNonHeapMemory, onHeapExecutionMemory, offHeapExecutionMemory, onHeapStorageMemory, offHeapStorageMemory, onHeapUnifiedMemory, offHeapUnifiedMemory, directMemory and mappedMemory. The new ExecutorMetrics is sent by executors to the driver as part of the Heartbeat. A heartbeat is added for the driver as well, to collect these metrics for the driver.

The EventLoggingListener stores information about the peak values for each metric, per active stage and executor. When a StageCompleted event is seen, a StageExecutorsMetrics event will be logged for each executor, with peak values for the stage.

The AppStatusListener records the peak values for each memory metric.

The new memory metrics are added to the executors REST API.
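The per-stage, per-executor peak bookkeeping described above can be sketched in a few lines. This is a simplified Python illustration under assumed names (`PeakTracker`, `on_heartbeat`, `on_stage_completed`), not the listener code added by this PR.

```python
from collections import defaultdict


class PeakTracker:
    """Hypothetical tracker of peak metric values per (stage, executor)."""

    def __init__(self):
        self._peaks = defaultdict(dict)  # (stage_id, executor_id) -> {metric: peak}

    def on_heartbeat(self, active_stage_ids, executor_id, metrics):
        # A heartbeat updates the peaks of every stage that is currently active.
        for stage_id in active_stage_ids:
            peaks = self._peaks[(stage_id, executor_id)]
            for name, value in metrics.items():
                peaks[name] = max(value, peaks.get(name, value))

    def on_stage_completed(self, stage_id):
        # Emit and forget the peaks of the completed stage, one entry per executor.
        keys = [key for key in self._peaks if key[0] == stage_id]
        return {key[1]: self._peaks.pop(key) for key in keys}


tracker = PeakTracker()
tracker.on_heartbeat([1], "exec-1", {"jvmUsedHeapMemory": 100})
tracker.on_heartbeat([1], "exec-1", {"jvmUsedHeapMemory": 80})
tracker.on_heartbeat([1, 2], "exec-1", {"jvmUsedHeapMemory": 120})
stage_one_peaks = tracker.on_stage_completed(1)
stage_two_peaks = tracker.on_stage_completed(2)
```

Keeping only the running maximum means a completed stage can be summarized with one record per executor, regardless of how many heartbeats arrived while it was active.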

## How was this patch tested?

New unit tests have been added. This was also tested on our cluster.

Author: Edwina Lu <edlu@linkedin.com>

Author: Imran Rashid <irashid@cloudera.com>

Author: edwinalu <edwina.lu@gmail.com>

Closes #21221 from edwinalu/SPARK-23429.2.

## What changes were proposed in this pull request?

Fix unused imports & outdated comments on `kafka-0-10-sql` module. (Found while I was working on [SPARK-23539](https://github.com/apache/spark/pull/22282))

## How was this patch tested?

Existing unit tests.

Closes #22342 from dongjinleekr/feature/fix-kafka-sql-trivials.

Authored-by: Lee Dongjin <dongjin@apache.org>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Add [flake8](http://flake8.pycqa.org) tests to find Python syntax errors and undefined names.

__E901,E999,F821,F822,F823__ are the "_showstopper_" flake8 issues that can halt the runtime with a SyntaxError, NameError, etc. Most other flake8 issues are merely "style violations": useful for readability, but they do not affect runtime safety.

* F821: undefined name `name`

* F822: undefined name `name` in `__all__`

* F823: local variable name referenced before assignment

* E901: SyntaxError or IndentationError

* E999: SyntaxError -- failed to compile a file into an Abstract Syntax Tree
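To see why an undefined name is a runtime hazard rather than a style issue, consider the small sketch below. The snippet and the misspelled `mesage` are made up for illustration: the source compiles and the function definition runs, and the failure only appears when the function is called.

```python
source = '''
def greet(message):
    return mesage.upper()  # typo: `mesage` is never defined (flake8 reports F821)
'''

# Compiling succeeds: an undefined name is not a syntax error.
code = compile(source, "<example>", "exec")

namespace = {}
exec(code, namespace)  # defining the function also succeeds

try:
    namespace["greet"]("hello")
    error = None
except NameError as exc:  # the problem only surfaces when the line executes
    error = exc

print(type(error).__name__)
```

A static check catches this before the code path is ever exercised, which matters for branches that tests rarely reach.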

## How was this patch tested?

$ __flake8 . --count --select=E901,E999,F821,F822,F823 --show-source --statistics__

$ __flake8 . --count --exit-zero --max-complexity=10 --max-line-length=127 --statistics__


Closes #22266 from cclauss/patch-3.

Authored-by: cclauss <cclauss@bluewin.ch>

Signed-off-by: Holden Karau <holden@pigscanfly.ca>

## What changes were proposed in this pull request?

Before Apache Spark 2.3, table properties were ignored when writing data to a Hive table (created with the STORED AS PARQUET/ORC syntax), because the compression configurations were not passed to the FileFormatWriter in hadoopConf. This was fixed in #20087. However, for CTAS with the USING PARQUET/ORC syntax, table properties were also ignored when `convertMetastore` applied, so the test case for CTAS was not supported.

Now that this has been fixed in #20522, the test case should be enabled too.

## How was this patch tested?

This only re-enables the test cases of previous PR.

Closes #22302 from fjh100456/compressionCodec.

Authored-by: fjh100456 <fu.jinhua6@zte.com.cn>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Add test cases for fromString

## How was this patch tested?

N/A

Closes #22345 from gatorsmile/addTest.

Authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

In SharedSparkSession and TestHive, we need to disable the rule ConvertToLocalRelation for better test case coverage.

## How was this patch tested?

Identify the failures after excluding "ConvertToLocalRelation" rule.

Closes #22270 from dilipbiswal/SPARK-25267-final.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This PR removes the method `updateBytesReadWithFileSize` in `FileScanRDD`, because it computes input metrics from the file size, which is only meant for Hadoop 2.5 and earlier. Spark no longer supports those versions, so the method causes wrong input metric numbers.

This is a rework of #22232.

Closes #22232

## How was this patch tested?

Added tests in `FileBasedDataSourceSuite`.

Closes #22324 from maropu/pr22232-2.

Lead-authored-by: dujunling <dujunling@huawei.com>

Co-authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This adds a test following https://github.com/apache/spark/pull/21638

## How was this patch tested?

Existing tests and new test.

Closes #22356 from srowen/SPARK-22357.2.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

How to reproduce permission issue:

```sh
# build spark
./dev/make-distribution.sh --name SPARK-25330 --tgz -Phadoop-2.7 -Phive -Phive-thriftserver -Pyarn

# make-distribution.sh with --tgz writes a .tgz archive
tar -zxf spark-2.4.0-SNAPSHOT-bin-SPARK-25330.tgz && cd spark-2.4.0-SNAPSHOT-bin-SPARK-25330

export HADOOP_PROXY_USER=user_a
bin/spark-sql

export HADOOP_PROXY_USER=user_b
bin/spark-sql
```

```java

Exception in thread "main" java.lang.RuntimeException: org.apache.hadoop.security.AccessControlException: Permission denied: user=user_b, access=EXECUTE, inode="/tmp/hive-$%7Buser.name%7D/user_b/668748f2-f6c5-4325-a797-fd0a7ee7f4d4":user_b:hadoop:drwx------

at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.check(FSPermissionChecker.java:319)

at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkTraverse(FSPermissionChecker.java:259)

at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:205)

at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:190)

```

The issue was introduced in this commit: feb886f209. This PR reverts Hadoop 2.7 to 2.7.3 to avoid this issue.

## How was this patch tested?

unit tests and manual tests.

Closes #22327 from wangyum/SPARK-25330.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This is a follow-up pr of #22200.

When casting to decimal type, if `Cast.canNullSafeCastToDecimal()`, overflow won't happen, so we don't need to check the result of `Decimal.changePrecision()`.

## How was this patch tested?

Existing tests.

Closes #22352 from ueshin/issues/SPARK-25208/reduce_code_size.

Authored-by: Takuya UESHIN <ueshin@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The default behaviour of Spark on K8S is currently to create `emptyDir` volumes to back `SPARK_LOCAL_DIRS`. In some environments, e.g. diskless compute nodes, this may actually hurt performance, because these volumes are backed by the Kubelet's node storage, which on a diskless node will typically be some remote network storage.

Even if this is enterprise-grade storage connected via a high-speed interconnect, the way Spark uses these directories as scratch space (lots of relatively small, short-lived files) has been observed to cause serious performance degradation. We would therefore like to provide the option to use K8S's ability to back these `emptyDir` volumes with `tmpfs` instead. This PR adds a configuration option that enables `SPARK_LOCAL_DIRS` to be backed by memory-backed `emptyDir` volumes rather than the default.

Documentation is added to describe both the default behaviour and this new option, along with its implications. One of these is that scratch space then counts towards the pod's memory limits, so users will need to adjust their memory requests accordingly.

*NB* - This is an alternative version of PR #22256 reduced to just the `tmpfs` piece

## How was this patch tested?

Ran with this option in our diskless compute environments to verify functionality

Author: Rob Vesse <rvesse@dotnetrdf.org>

Closes #22323 from rvesse/SPARK-25262-tmpfs.

## What changes were proposed in this pull request?

Add value length check in `_create_row`, forbid extra value for custom Row in PySpark.
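The kind of check described here can be sketched as follows. This is a standalone illustration, not PySpark's implementation: `create_row` is a hypothetical helper that builds a plain dictionary and rejects more values than there are declared fields.

```python
def create_row(fields, values):
    """Pair field names with values, refusing any extra values."""
    if len(values) > len(fields):
        raise ValueError(
            "Can not create row with fields %r, expected %d values but got %r"
            % (fields, len(fields), values)
        )
    return dict(zip(fields, values))


row = create_row(("name", "age"), ("Alice", 11))

try:
    create_row(("name", "age"), ("Alice", 11, "extra"))
    rejected = False
except ValueError:
    rejected = True
```

Without the length check, `zip` would silently drop the extra value, which hides the caller's mistake.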

## How was this patch tested?

New UT in pyspark-sql

Closes #22140 from xuanyuanking/SPARK-25072.

Lead-authored-by: liyuanjian <liyuanjian@baidu.com>

Co-authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

## What changes were proposed in this pull request?

The result of `mapValues` in Scala is currently not serializable. To avoid the serialization issue while running pageRank, we need to use `map` instead of `mapValues`.


Closes #22271 from shahidki31/master_latest.

Authored-by: Shahid <shahidki31@gmail.com>

Signed-off-by: Joseph K. Bradley <joseph@databricks.com>

## What changes were proposed in this pull request?

This PR proposes to add another example for multiple grouping keys in group aggregate pandas UDFs, since this feature could still confuse users.

## How was this patch tested?

Manually tested and documentation built.

Closes #22329 from HyukjinKwon/SPARK-25328.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

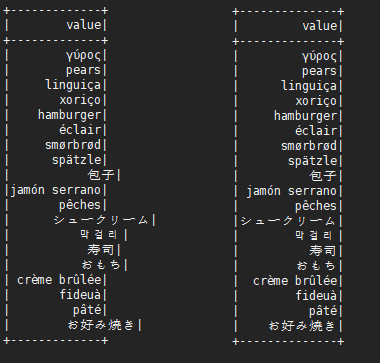

This is not a perfect solution. It is designed to minimize complexity on the basis of solving problems.

It is effective for English, Chinese characters, Japanese, Korean and so on.

```scala

before:

+---+---------------------------+-------------+

|id |中国 |s2 |

+---+---------------------------+-------------+

|1 |ab |[a] |

|2 |null |[中国, abc] |

|3 |ab1 |[hello world]|

|4 |か行 きゃ(kya) きゅ(kyu) きょ(kyo) |[“中国] |

|5 |中国(你好)a |[“中(国), 312] |

|6 |中国山(东)服务区 |[“中(国)] |

|7 |中国山东服务区 |[中(国)] |

|8 | |[中国] |

+---+---------------------------+-------------+

after:

+---+-----------------------------------+----------------+

|id |中国 |s2 |

+---+-----------------------------------+----------------+

|1 |ab |[a] |

|2 |null |[中国, abc] |

|3 |ab1 |[hello world] |

|4 |か行 きゃ(kya) きゅ(kyu) きょ(kyo) |[“中国] |

|5 |中国(你好)a |[“中(国), 312]|

|6 |中国山(东)服务区 |[“中(国)] |

|7 |中国山东服务区 |[中(国)] |

|8 | |[中国] |

+---+-----------------------------------+----------------+

```

## What changes were proposed in this pull request?

When there are wide characters such as Chinese or Japanese characters in the data, the `show` method has an alignment problem.

This PR tries to fix this problem.
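One common way to account for wide characters is to count display columns rather than characters. The sketch below uses Python's `unicodedata.east_asian_width`. It illustrates the idea only and is not necessarily how this PR measures width in Spark's own code. `display_width` and `pad_cell` are hypothetical helpers.

```python
import unicodedata


def display_width(text):
    """Count terminal columns, treating wide and full-width characters as 2."""
    return sum(
        2 if unicodedata.east_asian_width(ch) in ("W", "F") else 1
        for ch in text
    )


def pad_cell(text, width):
    """Right-pad `text` with spaces until it occupies `width` display columns."""
    return text + " " * max(0, width - display_width(text))


cells = ["ab", "中国", "ab1"]
column_width = max(display_width(cell) for cell in cells)
for cell in cells:
    print("|" + pad_cell(cell, column_width) + "|")
```

Padding by `len(text)` instead would give `中国` two columns too few, which is the misalignment shown in the "before" table.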

## How was this patch tested?

(Please explain how this patch was tested. E.g. unit tests, integration tests, manual tests)


Closes #22048 from xuejianbest/master.

Authored-by: xuejianbest <384329882@qq.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

SPARK-10399 introduced a performance regression on the hash computation for UTF8String.

The regression can be evaluated with the code attached in the JIRA. That code runs in about 120 us per method on my laptop (MacBook Pro 2.5 GHz Intel Core i7, RAM 16 GB 1600 MHz DDR3) while the code from branch 2.3 takes on the same machine about 45 us for me. After the PR, the code takes about 45 us on the master branch too.

## How was this patch tested?

running the perf test from the JIRA

Closes #22338 from mgaido91/SPARK-25317.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In this PR, I propose to extend `to_json` to support any type as the element type of input arrays. It should allow converting arrays of primitive types and arrays of arrays. For example:

```

select to_json(array('1','2','3'))

> ["1","2","3"]

select to_json(array(array(1,2,3),array(4)))

> [[1,2,3],[4]]

```

## How was this patch tested?

Added a couple of SQL tests for arrays of primitive types and arrays of arrays. I also added a round-trip test `from_json` -> `to_json`.

Closes #22226 from MaxGekk/to_json-array.

Authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

`HiveExternalCatalogVersionsSuite` Scala-2.12 test has been failing due to class path issue. It is marked as `ABORTED` because it fails at `beforeAll` during data population stage.

- https://amplab.cs.berkeley.edu/jenkins/view/Spark%20QA%20Test%20(Dashboard)/job/spark-master-test-maven-hadoop-2.7-ubuntu-scala-2.12/

```

org.apache.spark.sql.hive.HiveExternalCatalogVersionsSuite *** ABORTED ***

Exception encountered when invoking run on a nested suite - spark-submit returned with exit code 1.

```

The root cause of the failure is that `runSparkSubmit` mixes 2.4.0-SNAPSHOT classes and old Spark (2.1.3/2.2.2/2.3.1) classes together during `spark-submit`. This PR aims to provide a `non-test` execution mode for `runSparkSubmit` by removing the following environment variables:

- SPARK_TESTING

- SPARK_SQL_TESTING

- SPARK_PREPEND_CLASSES

- SPARK_DIST_CLASSPATH

Previously, new Spark classes were behind the old Spark classes in the class path, so the new ones were never seen. However, Spark 2.4.0 reveals this bug due to the recent data source class changes.

## How was this patch tested?

Manual test. After merging, it will be tested via Jenkins.

```sh
$ dev/change-scala-version.sh 2.12
$ build/mvn -DskipTests -Phive -Pscala-2.12 clean package
$ build/mvn -Phive -Pscala-2.12 -Dtest=none -DwildcardSuites=org.apache.spark.sql.hive.HiveExternalCatalogVersionsSuite test
...
HiveExternalCatalogVersionsSuite:
- backward compatibility
...
Tests: succeeded 1, failed 0, canceled 0, ignored 0, pending 0
All tests passed.
```

Closes #22340 from dongjoon-hyun/SPARK-25337.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

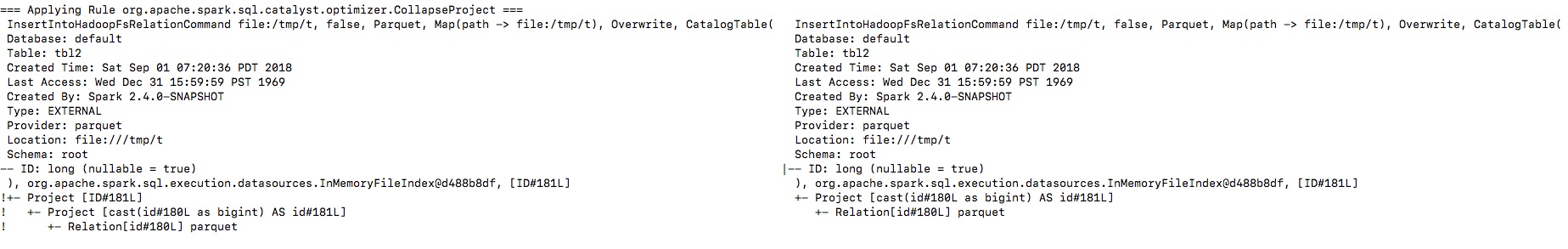

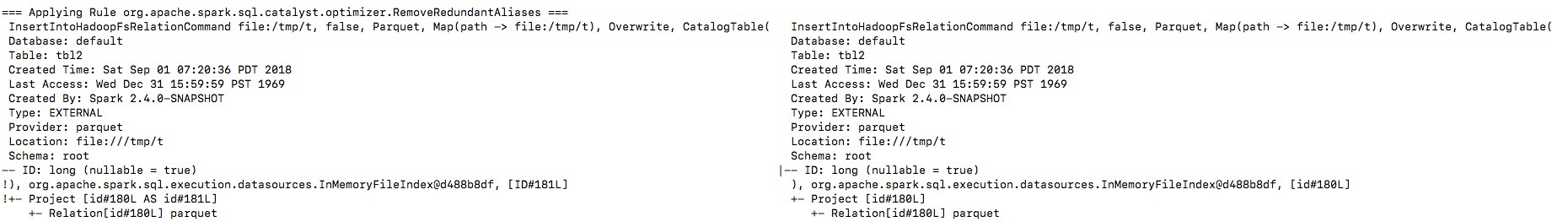

## What changes were proposed in this pull request?

Consider the following example:

```
val location = "/tmp/t"
val df = spark.range(10).toDF("id")
df.write.format("parquet").saveAsTable("tbl")
spark.sql("CREATE VIEW view1 AS SELECT id FROM tbl")
// the location must be a quoted string literal in the SQL text
spark.sql(s"CREATE TABLE tbl2(ID long) USING parquet location '$location'")
spark.sql("INSERT OVERWRITE TABLE tbl2 SELECT ID FROM view1")
println(spark.read.parquet(location).schema)
spark.table("tbl2").show()
```

The output column name in the schema will be `id` instead of `ID`, so the last query shows nothing from `tbl2`.

By enabling the debug messages, we can see that the output name is changed from `ID` to `id`, and that the `outputColumns` in `InsertIntoHadoopFsRelationCommand` is changed by `RemoveRedundantAliases`.

**To guarantee correctness**, we should change the output columns from `Seq[Attribute]` to `Seq[String]`, so that their names cannot be replaced by the optimizer.

I will fix project elimination related rules in https://github.com/apache/spark/pull/22311 after this one.

## How was this patch tested?

Unit test.

Closes #22320 from gengliangwang/fixOutputSchema.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Upgrade chill to 0.9.3, Kryo to 4.0.2, to get bug fixes and improvements.

The resolved tickets include:

- SPARK-25258 Upgrade kryo package to version 4.0.2

- SPARK-23131 Kryo raises StackOverflow during serializing GLR model

- SPARK-25176 Kryo fails to serialize a parametrised type hierarchy

More details:

https://github.com/twitter/chill/releases/tag/v0.9.3

cc3910d501

## How was this patch tested?

Existing tests.

Closes #22179 from wangyum/SPARK-23131.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Zinc is 23.5MB (tgz).

```

$ curl -LO https://downloads.lightbend.com/zinc/0.3.15/zinc-0.3.15.tgz

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 23.5M 100 23.5M 0 0 35.4M 0 --:--:-- --:--:-- --:--:-- 35.3M

```

Currently, Spark downloads Zinc once. However, this happens too many times in build systems. This PR aims to skip the Zinc download when the system already has it.

```

$ build/mvn clean

exec: curl --progress-bar -L https://downloads.lightbend.com/zinc/0.3.15/zinc-0.3.15.tgz

######################################################################## 100.0%

```

This will save a lot of resources (CPU, network, disk), at least on Mac and Docker-based build systems.

## How was this patch tested?

Pass the Jenkins.

Closes #22333 from dongjoon-hyun/SPARK-25335.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

An alternative fix for https://github.com/apache/spark/pull/21698

When Spark reruns tasks for an RDD, there are 3 different behaviors:

1. determinate. Always returns the same result, in the same order, when rerun.

2. unordered. Returns the same data set, in random order, when rerun.

3. indeterminate. Returns a different result when rerun.

Normally Spark doesn't need to care about this. Spark runs stages one by one and, when a task fails, simply reruns it. Although the rerun task may return a different result, users will not be surprised.

However, Spark may rerun a finished stage when seeing fetch failures. When this happens, Spark needs to rerun all the tasks of all the succeeding stages if the RDD output is indeterminate, because the input of the succeeding stages has changed.

If the RDD output is determinate, we only need to rerun the failed tasks of the succeeding stages, because the input doesn't change.

If the RDD output is unordered, it is treated the same as determinate, because a shuffle partitioner is always deterministic (the round-robin partitioner is not a shuffle partitioner that extends `org.apache.spark.Partitioner`), so the reducers will still get the same input data set.

This PR fixes the failure handling for `repartition`, to avoid correctness issues.

For `repartition`, Spark applies a stateful map function to generate a round-robin id, which is order sensitive and makes the RDD's output indeterminate. When a stage that contains `repartition` reruns, we must also rerun all the tasks of all the succeeding stages.
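The order sensitivity of round-robin assignment can be illustrated with a small sketch. This is plain Python over lists, not Spark's partitioning code, and both function names are made up for illustration: a key-based partitioner sends each record to the same partition regardless of arrival order, while a position-based one does not.

```python
def round_robin_partition(records, num_partitions):
    """Assign records by arrival position, so the result depends on input order."""
    parts = [[] for _ in range(num_partitions)]
    for position, record in enumerate(records):
        parts[position % num_partitions].append(record)
    return parts


def key_partition(records, num_partitions):
    """Assign records by their own value, so input order does not matter."""
    parts = [[] for _ in range(num_partitions)]
    for record in records:
        parts[record % num_partitions].append(record)
    return parts


first_run = [1, 2, 3, 4]
rerun = [4, 3, 2, 1]  # same data set, different order (an "unordered" input)

rr_first = [sorted(p) for p in round_robin_partition(first_run, 2)]
rr_rerun = [sorted(p) for p in round_robin_partition(rerun, 2)]
key_first = [sorted(p) for p in key_partition(first_run, 2)]
key_rerun = [sorted(p) for p in key_partition(rerun, 2)]

print(rr_first == rr_rerun)    # whether round-robin gave each partition the same records
print(key_first == key_rerun)  # whether key-based assignment did
```

If only some of the downstream tasks were rerun against the reshuffled round-robin output, records could be lost or duplicated, which is why all succeeding tasks must be rerun.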

**future improvement:**

1. Currently we can't roll back and rerun a shuffle map stage, so we just fail. We should fix it later. https://issues.apache.org/jira/browse/SPARK-25341

2. Currently we can't roll back and rerun a result stage, so we just fail. We should fix it later. https://issues.apache.org/jira/browse/SPARK-25342

3. We should provide a public API to allow users to tag the random level of the RDD's computing function.

## How is this pull request tested?

a new test case

Closes #22112 from cloud-fan/repartition.

Lead-authored-by: Wenchen Fan <wenchen@databricks.com>

Co-authored-by: Xingbo Jiang <xingbo.jiang@databricks.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

Running a large Spark job with speculation turned on was causing executor heartbeats to time out on the driver end after some time. Eventually, after hitting the max number of executor failures, the job would fail.

## What changes were proposed in this pull request?

The main reason for the heartbeat timeouts was that the heartbeat-receiver-event-loop-thread was blocked waiting on the TaskSchedulerImpl object, which was being held by one of the dispatcher-event-loop threads executing the method dequeueSpeculativeTasks() in TaskSetManager.scala. On further analysis of the heartbeat receiver method executorHeartbeatReceived() in the TaskSchedulerImpl class, we found that, instead of waiting to acquire the lock on the TaskSchedulerImpl object, we can remove that lock and make the operations on the global variables inside the code block atomic. The block of code in that method only uses one global HashMap, taskIdToTaskSetManager. By making that map a ConcurrentHashMap, we ensure atomicity of the operations and speed up the heartbeat receiver thread.

## How was this patch tested?

Screenshots of the thread dump have been attached below:

**heartbeat-receiver-event-loop-thread:**

<img width="1409" alt="screen shot 2018-08-24 at 9 19 57 am" src="https://user-images.githubusercontent.com/22228190/44593413-e25df780-a788-11e8-9520-176a18401a59.png">

**dispatcher-event-loop-thread:**

<img width="1409" alt="screen shot 2018-08-24 at 9 21 56 am" src="https://user-images.githubusercontent.com/22228190/44593484-13d6c300-a789-11e8-8d88-34b1d51d4541.png">

Closes #22221 from pgandhi999/SPARK-25231.

Authored-by: pgandhi <pgandhi@oath.com>

Signed-off-by: Thomas Graves <tgraves@apache.org>

## What changes were proposed in this pull request?

Implement an image schema datasource.

This image datasource supports:

- partition discovery (loading partitioned images)

- dropImageFailures (the same behavior as `ImageSchema.readImage`)

- path wildcard matching (the same behavior as `ImageSchema.readImage`)

- loading recursively from a directory (different from `ImageSchema.readImage`; use a path such as `/path/to/dir/**`)

This datasource does **NOT** support:

- specifying `numPartitions` (it will be determined by the datasource automatically)

- sampling (you can use `df.sample` later, but the sampling operator won't be pushed down to the datasource)

## How was this patch tested?

Unit tests.

## Benchmark

I benchmarked and compared the time cost of the old `ImageSchema.read` API and my image datasource.

**cluster**: 4 nodes, each with 64GB memory, 8 cores CPU

**test dataset**: Flickr8k_Dataset (about 8091 images)

**time cost**:

- My image datasource time (automatically generate 258 partitions): 38.04s

- `ImageSchema.read` time (set 16 partitions): 68.4s

- `ImageSchema.read` time (set 258 partitions): 90.6s

**time cost when doubling the number of images (clone Flickr8k_Dataset and load twice as many images)**:

- My image datasource time (automatically generate 515 partitions): 95.4s

- `ImageSchema.read` (set 32 partitions): 109s

- `ImageSchema.read` (set 515 partitions): 105s

So we can see that my image datasource implementation (this PR) brings some performance improvement compared with the old `ImageSchema.read` API.

Closes #22328 from WeichenXu123/image_datasource.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: Xiangrui Meng <meng@databricks.com>

## What changes were proposed in this pull request?

This is a follow-up of #22313 and aims to ignore the micro benchmark test, which takes over 2 minutes in Jenkins.

- https://amplab.cs.berkeley.edu/jenkins/view/Spark%20QA%20Test%20(Dashboard)/job/spark-master-test-sbt-hadoop-2.6/4939/consoleFull

## How was this patch tested?

The test case should be ignored in Jenkins.

```

[info] FilterPushdownBenchmark:

...

[info] - Pushdown benchmark with many filters !!! IGNORED !!!

```

Closes #22336 from dongjoon-hyun/SPARK-25306-2.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

The problem occurs because the stage object is removed from liveStages in AppStatusListener onStageCompletion. Because of this, any onTaskEnd event received after the onStageCompletion event does not update the stage metrics.

The fix is to retain stage objects in liveStages until all tasks are complete.

1. Fixed the reproducible example posted in the JIRA

2. Added unit test
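
The retention rule described above can be sketched as follows. This is a simplified model, not the actual `AppStatusListener` code: `LiveStage`, its fields, and the handler signatures are assumptions made for illustration.

```scala
import scala.collection.mutable

object LiveStagesSketch {
  // Hypothetical, simplified per-stage state kept by the listener.
  final class LiveStage(val stageId: Int) {
    var activeTasks: Int = 0
    var completedTasks: Int = 0
    var stageCompleted: Boolean = false
  }

  val liveStages = mutable.HashMap.empty[Int, LiveStage]

  def onTaskStart(stageId: Int): Unit = {
    val stage = liveStages.getOrElseUpdate(stageId, new LiveStage(stageId))
    stage.activeTasks += 1
  }

  def onStageCompleted(stageId: Int): Unit =
    liveStages.get(stageId).foreach { stage =>
      stage.stageCompleted = true
      // Drop the stage only if no task is still running.
      if (stage.activeTasks == 0) liveStages.remove(stageId)
    }

  def onTaskEnd(stageId: Int): Unit =
    liveStages.get(stageId).foreach { stage =>
      stage.activeTasks -= 1
      stage.completedTasks += 1
      // A late task end performs the cleanup that onStageCompleted deferred.
      if (stage.stageCompleted && stage.activeTasks == 0) liveStages.remove(stageId)
    }
}
```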

Closes #22209 from ankuriitg/ankurgupta/SPARK-24415.

Authored-by: ankurgupta <ankur.gupta@cloudera.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Add a new metric to measure the executor's process (JVM) CPU time.
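
One way to read the JVM process CPU time is through the platform MBean server, as in the minimal sketch below. It assumes a JVM that exposes the `ProcessCpuTime` attribute on the `java.lang:type=OperatingSystem` bean (HotSpot-based JVMs do); `ProcessCpuTimeSketch` and `processCpuTimeNanos` are hypothetical names, and this is not necessarily how the metric in this PR is wired up.

```scala
import java.lang.management.ManagementFactory
import javax.management.ObjectName
import scala.util.Try

object ProcessCpuTimeSketch {
  // Name of the operating system MBean: java.lang:type=OperatingSystem
  private val osBean = new ObjectName("java.lang", "type", "OperatingSystem")

  // Returns the CPU time used by this JVM process in nanoseconds,
  // or -1 if the attribute cannot be read on this JVM.
  def processCpuTimeNanos(): Long =
    Try {
      ManagementFactory.getPlatformMBeanServer
        .getAttribute(osBean, "ProcessCpuTime")
        .asInstanceOf[Long]
    }.getOrElse(-1L)
}
```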

## How was this patch tested?

Manually tested on a Spark cluster (see SPARK-25228 for an example screenshot).

Closes #22218 from LucaCanali/AddExecutrCPUTimeMetric.

Authored-by: LucaCanali <luca.canali@cern.ch>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This is a followup of https://github.com/apache/spark/pull/22259 .

Scala case class has a wide surface: apply, unapply, accessors, copy, etc.

In https://github.com/apache/spark/pull/22259 , we changed the type of `UserDefinedFunction.inputTypes` from `Option[Seq[DataType]]` to `Option[Seq[Schema]]`. This breaks backward compatibility.

This PR changes the type back, and uses a `var` to keep the new nullable info.
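
The pattern can be sketched as below: the case class constructor keeps its original shape, so `apply`, `unapply`, and `copy` stay source compatible, while the extra information lives in a `var` outside the constructor. All names here (`Udf`, `nullableTypes`, `withNullableTypes`, and the stub `DataType`) are hypothetical and only illustrate the approach.

```scala
object UdfCompatSketch {
  // Hypothetical stand-in for Spark's DataType.
  sealed trait DataType
  case object IntType extends DataType
  case object StringType extends DataType

  // The constructor parameters are unchanged, so the generated
  // apply/unapply/copy/accessors keep their original signatures.
  case class Udf(name: String, inputTypes: Option[Seq[DataType]]) {
    // New information is stored in a var that is not a constructor parameter.
    private var _nullableTypes: Option[Seq[Boolean]] = None

    def nullableTypes: Option[Seq[Boolean]] = _nullableTypes

    // copy() does not carry the var over, so it is set explicitly on the copy.
    def withNullableTypes(flags: Seq[Boolean]): Udf = {
      val udf = copy()
      udf._nullableTypes = Some(flags)
      udf
    }
  }
}
```

A trade-off of this design is that the `var` is not part of the generated `equals`, `hashCode`, or `copy`, so code that copies the instance has to propagate it by hand.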

## How was this patch tested?

N/A

Closes #22319 from cloud-fan/revert.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Revert SPARK-24863 (#21819) and SPARK-24748 (#21721) as per discussion in #21721. We will revisit them when the data source v2 APIs are out.

## How was this patch tested?

Jenkins

Closes #22334 from zsxwing/revert-SPARK-24863-SPARK-24748.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The configuration parameter "spark.shuffle.service.enabled" is already defined in `package.scala` and is used in many places, so we can replace the string literal with `SHUFFLE_SERVICE_ENABLED`.

This PR also unifies the configuration parameter "spark.shuffle.service.port" in the same way.
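
The benefit of a shared constant over repeated string literals can be sketched with a simplified typed entry. `ConfigEntry`, `get`, and the name `SHUFFLE_SERVICE_PORT` below are assumptions for illustration and do not reproduce Spark's internal config classes; the keys and defaults are the documented ones.

```scala
object ConfigConstantSketch {
  // Hypothetical, simplified typed config entry: key, default, and parser in one place.
  final case class ConfigEntry[T](key: String, default: T, parse: String => T)

  val SHUFFLE_SERVICE_ENABLED: ConfigEntry[Boolean] =
    ConfigEntry("spark.shuffle.service.enabled", false, _.toBoolean)

  // The constant name is an assumption; only the key appears in the description.
  val SHUFFLE_SERVICE_PORT: ConfigEntry[Int] =
    ConfigEntry("spark.shuffle.service.port", 7337, _.toInt)

  // Callers pass the entry, so the key and default are never repeated at call sites.
  def get[T](settings: Map[String, String], entry: ConfigEntry[T]): T =
    settings.get(entry.key).map(entry.parse).getOrElse(entry.default)
}
```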

## How was this patch tested?

N/A

Closes #22306 from 10110346/unifiedserviceenable.

Authored-by: liuxian <liu.xian3@zte.com.cn>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In both ORC data sources, the `createFilter` function has exponential time complexity due to its skewed filter tree generation. This PR aims to improve it by using a new `buildTree` function.
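
To illustrate the difference in tree shape, the sketch below builds both a skewed and a balanced conjunction from the same list of filters. The `Filter` ADT and the function names are hypothetical and much simpler than the real filter classes; the sketch only shows the shapes, not the ORC conversion itself.

```scala
object BalancedFilterTreeSketch {
  // Hypothetical minimal filter ADT.
  sealed trait Filter
  final case class IsNotNull(column: String) extends Filter
  final case class And(left: Filter, right: Filter) extends Filter

  // Skewed tree: folding left to right makes depth grow linearly with the filter count.
  def skewed(filters: Seq[Filter]): Option[Filter] =
    filters.reduceOption[Filter]((l, r) => And(l, r))

  // Balanced tree: splitting in halves makes depth grow logarithmically.
  def balanced(filters: Seq[Filter]): Option[Filter] = filters match {
    case Seq() => None
    case Seq(single) => Some(single)
    case _ =>
      val (left, right) = filters.splitAt(filters.length / 2)
      for (l <- balanced(left); r <- balanced(right)) yield And(l, r)
  }

  // Number of nodes on the longest path from the root to a leaf.
  def depth(filter: Filter): Int = filter match {
    case And(l, r) => 1 + math.max(depth(l), depth(r))
    case _ => 1
  }
}
```

With 8 filters, the skewed tree has depth 8 while the balanced one has depth 4, so any conversion that recurses on both children does far less repeated work on the balanced shape.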

**REPRODUCE**

```scala

// Create and read 1 row table with 1000 columns
sql("set spark.sql.orc.filterPushdown=true")
val selectExpr = (1 to 1000).map(i => s"id c$i")
spark.range(1).selectExpr(selectExpr: _*).write.mode("overwrite").orc("/tmp/orc")
print(s"With 0 filters, ")
spark.time(spark.read.orc("/tmp/orc").count)

// Increase the number of filters
(20 to 30).foreach { width =>
  val whereExpr = (1 to width).map(i => s"c$i is not null").mkString(" and ")
  print(s"With $width filters, ")
  spark.time(spark.read.orc("/tmp/orc").where(whereExpr).count)
}

```

**RESULT**

```scala

With 0 filters, Time taken: 653 ms

With 20 filters, Time taken: 962 ms

With 21 filters, Time taken: 1282 ms

With 22 filters, Time taken: 1982 ms

With 23 filters, Time taken: 3855 ms

With 24 filters, Time taken: 6719 ms

With 25 filters, Time taken: 12669 ms

With 26 filters, Time taken: 25032 ms

With 27 filters, Time taken: 49585 ms

With 28 filters, Time taken: 98980 ms // over 1 min 38 seconds

With 29 filters, Time taken: 198368 ms // over 3 mins

With 30 filters, Time taken: 393744 ms // over 6 mins

```

**AFTER THIS PR**

```scala

With 0 filters, Time taken: 774 ms

With 20 filters, Time taken: 601 ms

With 21 filters, Time taken: 399 ms

With 22 filters, Time taken: 679 ms

With 23 filters, Time taken: 363 ms

With 24 filters, Time taken: 342 ms

With 25 filters, Time taken: 336 ms

With 26 filters, Time taken: 352 ms

With 27 filters, Time taken: 322 ms

With 28 filters, Time taken: 302 ms

With 29 filters, Time taken: 307 ms

With 30 filters, Time taken: 301 ms

```

## How was this patch tested?

Pass the Jenkins with newly added test cases.

Closes #22313 from dongjoon-hyun/SPARK-25306.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

I made one pass over the barrier APIs added in Spark 2.4 and updated some scopes and docs. I will update the Python docs once the Scala docs are reviewed.

One major issue is that `BarrierTaskContext` extends `TaskContextImpl`, which exposes some public methods. Internally, there were also several direct references to `TaskContextImpl` methods instead of `TaskContext`. This PR moves some methods from `TaskContextImpl` to `TaskContext`, keeping them package private, and uses delegate methods to avoid inheriting `TaskContextImpl` and exposing unnecessary APIs.
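
The delegation approach can be sketched as follows. The traits and classes are trimmed-down, hypothetical versions that reuse the names from the description; `markInterrupted` and the empty `barrier` body are placeholders, not the real implementations.

```scala
object DelegationSketch {
  // Hypothetical, trimmed-down public API.
  trait TaskContext {
    def partitionId(): Int
    def attemptNumber(): Int
  }

  // Hypothetical implementation class with an extra method not meant for users.
  class TaskContextImpl(partition: Int, attempt: Int) extends TaskContext {
    def partitionId(): Int = partition
    def attemptNumber(): Int = attempt
    def markInterrupted(reason: String): Unit = ()
  }

  // Wraps a TaskContext instead of extending TaskContextImpl, so only the
  // TaskContext methods (plus barrier) are visible to callers.
  class BarrierTaskContext(taskContext: TaskContext) extends TaskContext {
    def partitionId(): Int = taskContext.partitionId()
    def attemptNumber(): Int = taskContext.attemptNumber()
    def barrier(): Unit = () // placeholder; the real method synchronizes tasks
  }
}
```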

TODOs:

- [x] scala doc

- [x] python doc (#22261).

Closes #22240 from mengxr/SPARK-25248.

Authored-by: Xiangrui Meng <meng@databricks.com>

Signed-off-by: Xiangrui Meng <meng@databricks.com>

## What changes were proposed in this pull request?

Previously, the SparkSession in `TakeOrderedAndProjectSuite` was not recycled when the test suite finished.

## How was this patch tested?

N/A

Closes #22330 from jiangxb1987/SPARK-19355.

Authored-by: Xingbo Jiang <xingbo.jiang@databricks.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This PR integrates the handling of `UnsafeArrayData` and `GenericArrayData` into one. The current `CodeGenerator.createUnsafeArray` handles only the allocation of `UnsafeArrayData`.

This PR introduces a new method `createArrayData` that returns code to allocate `UnsafeArrayData` or `GenericArrayData` and to assign a value into the allocated array.

This PR also reduces the size of the generated code by calling a runtime helper.

This PR replaces `createUnsafeArray` with `createArrayData`. It also refactors `ArraySetLike` so that it can be used for `ArrayDistinct`, too.

This PR also refactors `ArrayDistinct` to use `ArrayBuilder`.

## How was this patch tested?

Existing tests

Closes #21912 from kiszk/SPARK-24962.

Lead-authored-by: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Co-authored-by: Takuya UESHIN <ueshin@happy-camper.st>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR fixes a problem where the `ArraysOverlap` function throws a `CompileException` with a non-nullable array type.

The following is the stack trace of the original problem:

```

Code generation of arrays_overlap([1,2,3], [4,5,3]) failed:

java.util.concurrent.ExecutionException: org.codehaus.commons.compiler.CompileException: File 'generated.java', Line 56, Column 11: failed to compile: org.codehaus.commons.compiler.CompileException: File 'generated.java', Line 56, Column 11: Expression "isNull_0" is not an rvalue

java.util.concurrent.ExecutionException: org.codehaus.commons.compiler.CompileException: File 'generated.java', Line 56, Column 11: failed to compile: org.codehaus.commons.compiler.CompileException: File 'generated.java', Line 56, Column 11: Expression "isNull_0" is not an rvalue

at com.google.common.util.concurrent.AbstractFuture$Sync.getValue(AbstractFuture.java:306)

at com.google.common.util.concurrent.AbstractFuture$Sync.get(AbstractFuture.java:293)

at com.google.common.util.concurrent.AbstractFuture.get(AbstractFuture.java:116)

at com.google.common.util.concurrent.Uninterruptibles.getUninterruptibly(Uninterruptibles.java:135)

at com.google.common.cache.LocalCache$Segment.getAndRecordStats(LocalCache.java:2410)

at com.google.common.cache.LocalCache$Segment.loadSync(LocalCache.java:2380)

at com.google.common.cache.LocalCache$Segment.lockedGetOrLoad(LocalCache.java:2342)

at com.google.common.cache.LocalCache$Segment.get(LocalCache.java:2257)

at com.google.common.cache.LocalCache.get(LocalCache.java:4000)

at com.google.common.cache.LocalCache.getOrLoad(LocalCache.java:4004)

at com.google.common.cache.LocalCache$LocalLoadingCache.get(LocalCache.java:4874)

at org.apache.spark.sql.catalyst.expressions.codegen.CodeGenerator$.compile(CodeGenerator.scala:1305)

at org.apache.spark.sql.catalyst.expressions.codegen.GenerateMutableProjection$.create(GenerateMutableProjection.scala:143)

at org.apache.spark.sql.catalyst.expressions.codegen.GenerateMutableProjection$.create(GenerateMutableProjection.scala:48)

at org.apache.spark.sql.catalyst.expressions.codegen.GenerateMutableProjection$.create(GenerateMutableProjection.scala:32)

at org.apache.spark.sql.catalyst.expressions.codegen.CodeGenerator.generate(CodeGenerator.scala:1260)

```

## How was this patch tested?

Added test in `CollectionExpressionSuite`.

Closes #22317 from kiszk/SPARK-25310.

Authored-by: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Signed-off-by: Takuya UESHIN <ueshin@databricks.com>