## What changes were proposed in this pull request?

This pull request fixes the memory leak reported in [SPARK-26114](https://issues.apache.org/jira/browse/SPARK-26114), which occurs when reducing the number of partitions by means of coalesce without shuffling after shuffle-based transformations.

The leak occurs because `ExternalSorter`'s `readingIterator` field is not cleaned up the way its `map` and `buffer` fields are.

Additionally, `CompletionIterator` is changed so that it no longer captures its `sub`-iterator and holds on to it after the completion iterator completes. This is necessary because in some cases, e.g. with the standard Scala `flatMap` iterator (which is used in `CoalescedRDD`'s `compute` method), the next value of the main iterator is assigned to `flatMap`'s `cur` field only after it is available.

For DAGs where ShuffledRDD is a parent of CoalescedRDD it means that the data should be fetched from the map-side of the shuffle, but the process of fetching this data consumes quite a lot of memory in addition to the memory already consumed by the iterator held by `flatMap`'s `cur` field (until it is reassigned).
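To illustrate the `CompletionIterator` part of the change, here is a minimal sketch of a completion-style iterator that lets go of its wrapped iterator once it is exhausted. `ReleasingIterator` and `onComplete` are hypothetical names chosen for illustration; this is not Spark's actual `CompletionIterator` code.

```scala
// Hypothetical, simplified stand-in for a completion iterator (not Spark's class).
// `sub` is only read while the object is constructed, so the single reference
// this wrapper keeps to the wrapped iterator is the mutable `underlying` field.
final class ReleasingIterator[A](sub: Iterator[A], onComplete: () => Unit)
    extends Iterator[A] {

  private[this] var underlying: Iterator[A] = sub
  private[this] var completed = false

  override def hasNext: Boolean = {
    val more = underlying.hasNext
    if (!more && !completed) {
      completed = true
      onComplete()
      // Drop the reference so the exhausted iterator (and anything it holds,
      // such as a sorter) becomes eligible for garbage collection.
      underlying = Iterator.empty
    }
    more
  }

  override def next(): A = underlying.next()
}
```

With this shape, a caller that still holds the wrapper (as `flatMap` does through its `cur` field) no longer keeps the wrapped iterator reachable after completion.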

For the following data

```scala
import org.apache.hadoop.io._
import org.apache.hadoop.io.compress._
import org.apache.commons.lang._
import org.apache.spark._

// generate 100M records of sample data
sc.makeRDD(1 to 1000, 1000)
  .flatMap(item => (1 to 100000)
    .map(i => new Text(RandomStringUtils.randomAlphanumeric(3).toLowerCase) -> new Text(RandomStringUtils.randomAlphanumeric(1024))))
  .saveAsSequenceFile("/tmp/random-strings", Some(classOf[GzipCodec]))
```

and the following job

```scala
import org.apache.hadoop.io._
import org.apache.spark._
import org.apache.spark.storage._

val rdd = sc.sequenceFile("/tmp/random-strings", classOf[Text], classOf[Text])
rdd
  .map(item => item._1.toString -> item._2.toString)
  .repartitionAndSortWithinPartitions(new HashPartitioner(1000))
  .coalesce(10, false)
  .count
```

... executed like the following

```bash
spark-shell \
  --num-executors=5 \
  --executor-cores=2 \
  --master=yarn \
  --deploy-mode=client \
  --conf spark.executor.memoryOverhead=512 \
  --conf spark.executor.memory=1g \
  --conf spark.dynamicAllocation.enabled=false \
  --conf spark.executor.extraJavaOptions='-XX:+HeapDumpOnOutOfMemoryError -XX:HeapDumpPath=/tmp -Dio.netty.noUnsafe=true'
```

... executors are always failing with OutOfMemoryErrors.

The main issue is multiple leaks of ExternalSorter references.

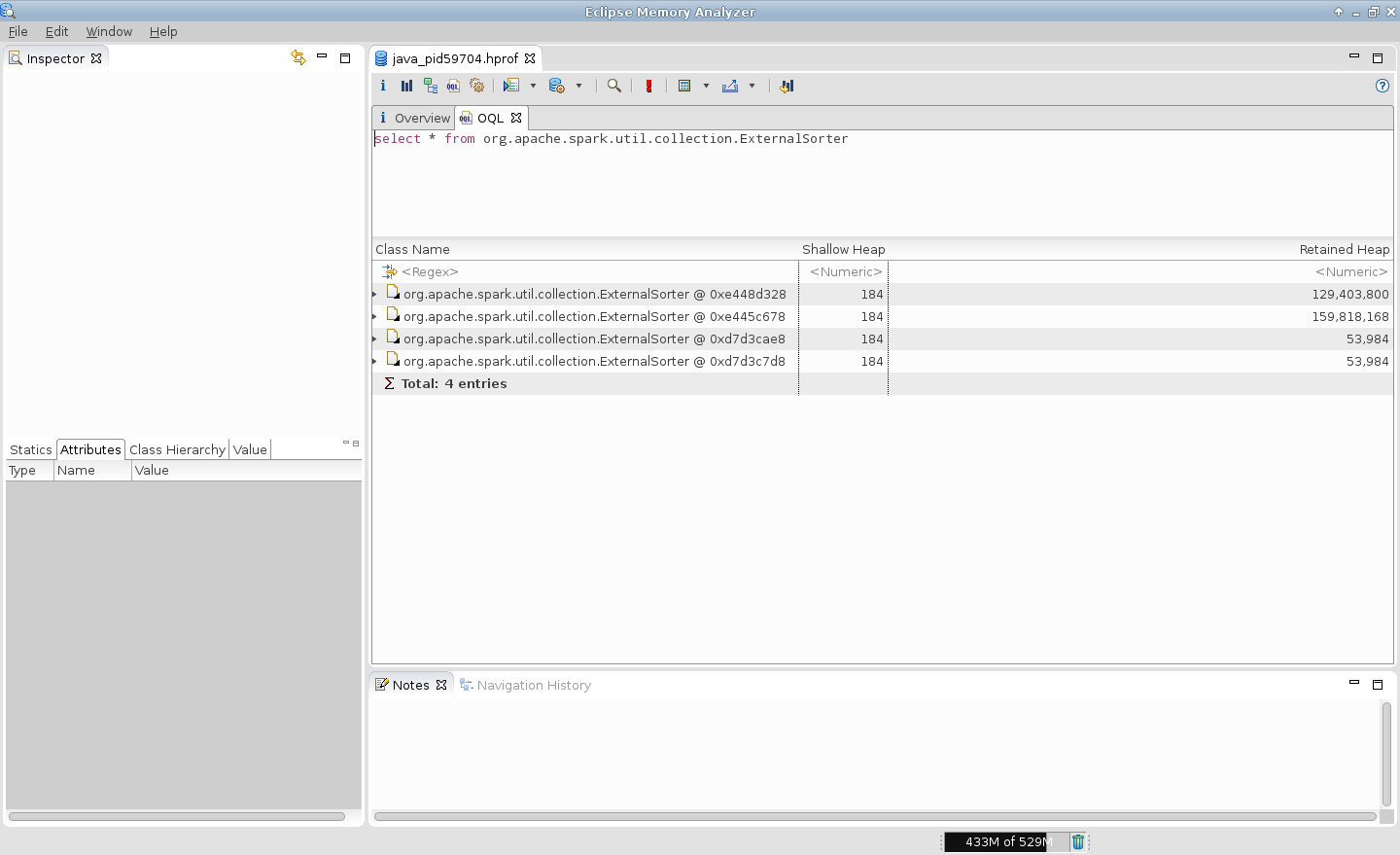

For example, with 2 tasks per executor, at most 2 simultaneous instances of ExternalSorter are expected per executor, but the heap dump generated on OutOfMemoryError shows that there are more.

P.S. This PR does not cover cases with CoGroupedRDDs which use ExternalAppendOnlyMap internally, which itself can lead to OutOfMemoryErrors in many places.

## How was this patch tested?

- Existing unit tests

- New unit tests

- Job executions on the live environment

Here is the screenshot before applying this patch

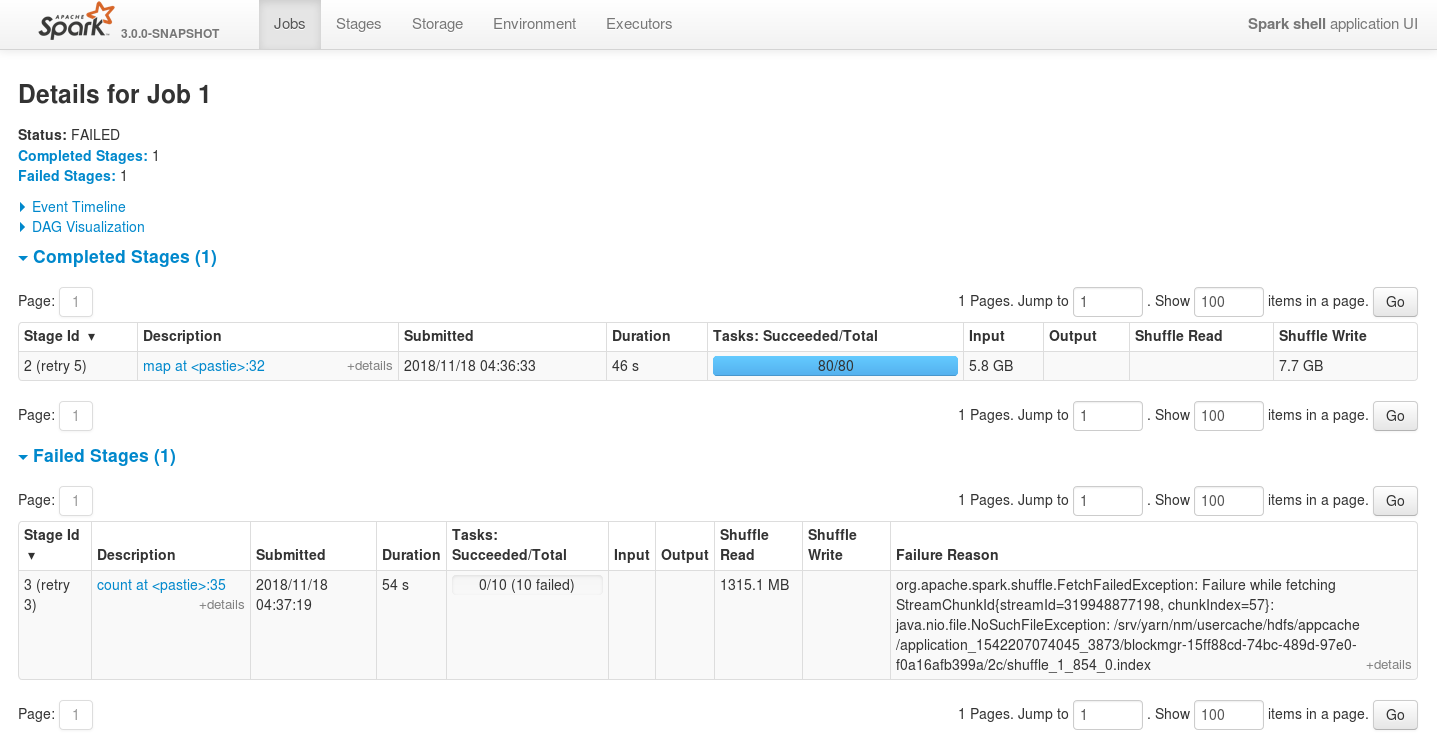

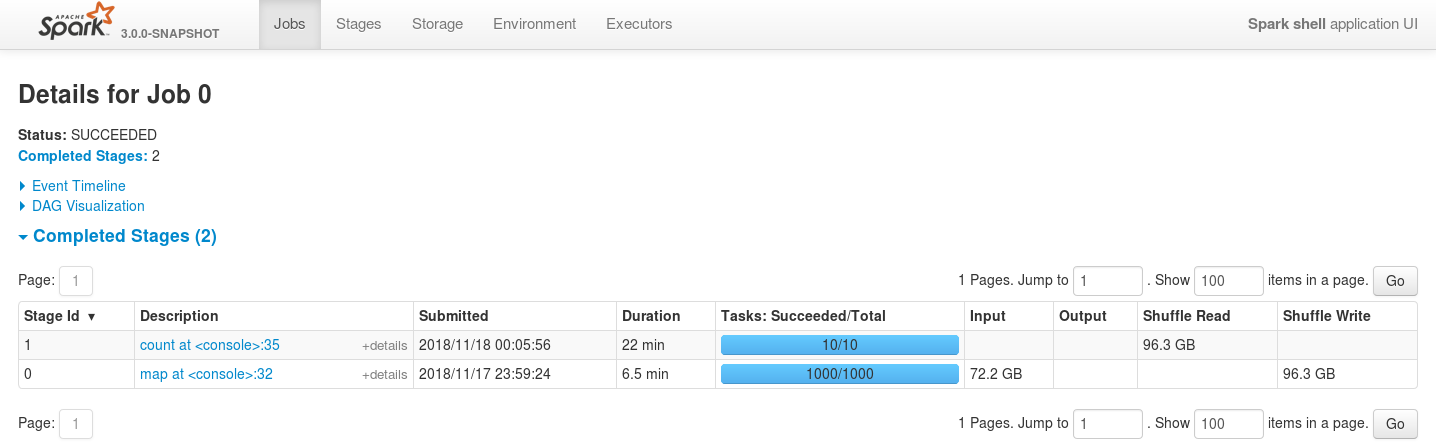

Here is the screenshot after applying this patch

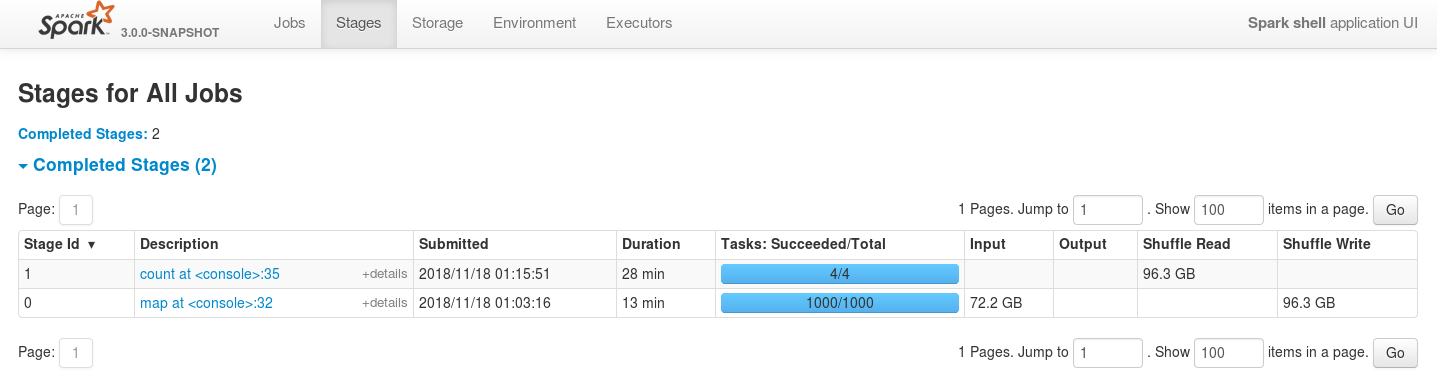

When the number of executors is reduced even further, the job is still stable.

Closes #23083 from szhem/SPARK-26114-externalsorter-leak.

Authored-by: Sergey Zhemzhitsky <szhemzhitski@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This is the write side counterpart to https://github.com/apache/spark/pull/23105

## How was this patch tested?

No behavior change expected, as it is a straightforward refactoring. Updated all existing test cases.

Closes #23106 from rxin/SPARK-26141.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: Reynold Xin <rxin@databricks.com>

## What changes were proposed in this pull request?

In https://github.com/apache/spark/pull/23105, due to working on two parallel PRs at once, I made the mistake of committing the copy of the PR that used the name ShuffleMetricsReporter for the interface, rather than the appropriate one ShuffleReadMetricsReporter. This patch fixes that.

## How was this patch tested?

This should be fine as long as compilation passes.

Closes #23147 from rxin/ShuffleReadMetricsReporter.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

The total task counts in the aggregated table and the tasks table sometimes do not match in the web UI.

We need to force an update of the executor summary for a particular executorId whenever the last task of that executor finishes. Currently, the update is forced only for the last task at stage end, so tasks of some executorIds may be missed at stage end.

Tests to reproduce:

```
bin/spark-shell --master yarn --conf spark.executor.instances=3
sc.parallelize(1 to 10000, 10).map{ x => throw new RuntimeException("Bad executor")}.collect()
```

Before patch:

After patch:

Closes #23038 from shahidki31/SPARK-25451.

Authored-by: Shahid <shahidki31@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

Support column sort, pagination and search for Stage Page using jQuery DataTable and REST API. Before this commit, the Stage page generated a hard-coded HTML table that could not support search. Supporting search and sort (over all applications rather than the 20 entries in the current page) in any case will greatly improve the user experience.

Created stagespage-template.html for displaying application information in DataTables. Added a REST API endpoint and JavaScript code to fetch data from the endpoint and display it in the data table.

Because of the above change, certain functionalities in the page had to be modified to support the addition of datatables. For example, the toggle checkbox 'Select All' previously would add the checked fields as columns in the Task table and as rows in the Summary Metrics table, but after the change, only columns are added in the Task Table as it got tricky to add rows dynamically in the datatables.

## How was this patch tested?

I have attached the screenshots of the Stage Page UI before and after the fix.

**Before:**

<img width="1419" alt="30564304-35991e1c-9c8a-11e7-850f-2ac7a347f600" src="https://user-images.githubusercontent.com/22228190/42137915-52054558-7d3a-11e8-8c85-433b2c94161d.png">

<img width="1435" alt="31360592-cbaa2bae-ad14-11e7-941d-95b4c7d14970" src="https://user-images.githubusercontent.com/22228190/42137928-79df500a-7d3a-11e8-9068-5630afe46ff3.png">

**After:**

<img width="1432" alt="31360591-c5650ee4-ad14-11e7-9665-5a08d8f21830" src="https://user-images.githubusercontent.com/22228190/42137936-a3fb9f42-7d3a-11e8-8502-22b3897cbf64.png">

<img width="1388" alt="31360604-d266b6b0-ad14-11e7-94b5-dcc4bb5443f4" src="https://user-images.githubusercontent.com/22228190/42137970-0fabc58c-7d3b-11e8-95ad-383b1bd1f106.png">

Closes #21688 from pgandhi999/SPARK-21809-2.3.

Authored-by: pgandhi <pgandhi@oath.com>

Signed-off-by: Thomas Graves <tgraves@apache.org>

## What changes were proposed in this pull request?

The DOI foundation recommends [this new resolver](https://www.doi.org/doi_handbook/3_Resolution.html#3.8). Accordingly, this PR re`sed`s all static DOI links ;-)

## How was this patch tested?

It wasn't, since it seems as safe as a "[typo fix](https://spark.apache.org/contributing.html)".

In case any of the files is included from other projects, and should be updated there, please let me know.

Closes #23129 from katrinleinweber/resolve-DOIs-securely.

Authored-by: Katrin Leinweber <9948149+katrinleinweber@users.noreply.github.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

In the summary section of stage page:

1. the following metrics names can be revised:

Output => Output Size / Records

Shuffle Read: => Shuffle Read Size / Records

Shuffle Write => Shuffle Write Size / Records

After the changes, the names are clearer and consistent with the other names on the same page.

2. The associated job id URL should not contain the 3 trailing spaces. Reduce the number of spaces to one, and exclude the space from the link. This is consistent with the SQL execution page.

## How was this patch tested?

Manual check:

Closes #23125 from gengliangwang/reviseStagePage.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

The input parameter of Kryo's `deserialize` is a `ByteBuffer`. If it is not backed by an accessible byte array, `deserialize` throws `UnsupportedOperationException`.

Exception Info:

```
java.lang.UnsupportedOperationException was thrown.
java.lang.UnsupportedOperationException
    at java.nio.ByteBuffer.array(ByteBuffer.java:994)
    at org.apache.spark.serializer.KryoSerializerInstance.deserialize(KryoSerializer.scala:362)
```
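The failure mode can be reproduced with any direct or read-only `ByteBuffer`, since `array()` is only valid when `hasArray()` returns true. The sketch below shows one way to read the remaining bytes in either case; `ByteBufferBytes` and `remainingBytes` are hypothetical names, and this is not necessarily how the patch itself handles the buffer.

```scala
import java.nio.ByteBuffer
import java.util.Arrays

// Hypothetical helper for illustration only.
object ByteBufferBytes {
  /** Copies the remaining bytes of `buf` without moving its position. */
  def remainingBytes(buf: ByteBuffer): Array[Byte] = {
    if (buf.hasArray()) {
      // Heap buffer with an accessible backing array: copy the live slice.
      val start = buf.arrayOffset() + buf.position()
      Arrays.copyOfRange(buf.array(), start, start + buf.remaining())
    } else {
      // Direct or read-only buffer: array() would throw
      // UnsupportedOperationException, so read through a duplicate instead.
      val out = new Array[Byte](buf.remaining())
      buf.duplicate().get(out)
      out
    }
  }
}
```

Reading through `duplicate()` leaves the caller's position and limit untouched, which matters if the same buffer is consumed again later.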

## How was this patch tested?

Added a unit test

Closes #22779 from 10110346/InputStreamKryo.

Authored-by: liuxian <liu.xian3@zte.com.cn>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This patch defines an internal Spark interface for reporting shuffle metrics and uses it in the shuffle reader. Before this patch, shuffle metrics were tied to a specific implementation (using a thread-local temporary data structure and accumulators). After this patch, callers that define their own shuffle RDDs can create a custom metrics implementation.

With this patch, we would be able to create better metrics for the SQL layer, e.g. reporting shuffle metrics in the SQL UI for each exchange operator.

Note that I'm separating read side and write side implementations, as they are very different, to simplify code review. Write side change is at https://github.com/apache/spark/pull/23106

## How was this patch tested?

No behavior change expected, as it is a straightforward refactoring. Updated all existing test cases.

Closes #23105 from rxin/SPARK-26140.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

PR #20014 introduced `SparkOutOfMemoryError` to avoid killing the entire executor when an `OutOfMemoryError` is thrown.

Accordingly, when memory is requested through `MemoryConsumer.allocatePage` and an exception is caught, use `SparkOutOfMemoryError` instead of `OutOfMemoryError`.

## How was this patch tested?

N / A

Closes #23084 from heary-cao/SparkOutOfMemoryError.

Authored-by: caoxuewen <cao.xuewen@zte.com.cn>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR is a follow-up PR of #21600 to fix the unnecessary UI redirect.

## How was this patch tested?

Local verification

Closes #23116 from jerryshao/SPARK-24553.

Authored-by: jerryshao <jerryshao@tencent.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

In the PR, I propose:

- A new SQL config `spark.sql.debug.maxToStringFields` to control the maximum number of fields up to which `truncatedString` cuts its input sequences.

- Moving `truncatedString` out of `core` to `sql/catalyst` because it is used only in the `sql/catalyst` packages for restricting the number of fields converted to strings from `TreeNode` and expressions of `StructType`.
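As a rough illustration of what such truncation does, here is a simplified sketch. `TruncatedStringSketch`, `truncated`, and the exact placeholder text are assumptions made for this example and may differ from Spark's real `truncatedString`; `maxFields` plays the role of the value read from `spark.sql.debug.maxToStringFields`.

```scala
// Hypothetical, simplified sketch; not the actual Spark implementation.
object TruncatedStringSketch {
  /** Joins `seq` with `sep`, replacing the tail with a placeholder when
    * the sequence has more than `maxFields` elements. */
  def truncated[T](seq: Seq[T], sep: String, maxFields: Int): String = {
    if (seq.length > maxFields) {
      // Reserve one slot for the placeholder itself.
      val shown = math.max(0, maxFields - 1)
      val dropped = seq.length - shown
      seq.take(shown).mkString("", sep, sep + "... " + dropped + " more fields")
    } else {
      seq.mkString(sep)
    }
  }
}
```

For example, `TruncatedStringSketch.truncated(Seq(1, 2, 3, 4, 5), ", ", 3)` keeps two elements and summarizes the remaining three.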

## How was this patch tested?

Added a test to `QueryExecutionSuite` to check that `spark.sql.debug.maxToStringFields` affects the behavior of `truncatedString`.

Closes #23039 from MaxGekk/truncated-string-catalyst.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

The task summary table displays a summary of the task table on the stage page. However, the 'Duration' metrics of the 'task summary' table and the 'task table' do not match. The reason is that the 'task summary' displays 'executorRunTime' as the duration, whereas the 'task table' displays the actual duration of the task. Apart from the duration, all other metrics are displayed correctly in the task summary.

Spark 2.2 showed 'executorRunTime' as the duration in the 'task table', which is why the summary metrics also show 'executorRunTime' as the duration. So we need to show 'executorRunTime' as the duration in the task table to match the behavior of previous versions of Spark.

## How was this patch tested?

Before patch:

After patch:

Closes #23081 from shahidki31/duratinSummary.

Authored-by: Shahid <shahidki31@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Hotfix a change to SparkHadoopUtil that doesn't work in 2.11

## How was this patch tested?

Existing tests.

Closes #23097 from srowen/SPARK-26043.2.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Introducing spark.ui.requestHeaderSize for configuring Jetty's HTTP requestHeaderSize.

This way a long authorization field does not lead to HTTP 413.

## How was this patch tested?

Manually with curl (version 7.55 or later is required).

With the original default value (8k limit):

```bash
# Starting history server with default requestHeaderSize
$ ./sbin/start-history-server.sh
starting org.apache.spark.deploy.history.HistoryServer, logging to /Users/attilapiros/github/spark/logs/spark-attilapiros-org.apache.spark.deploy.history.HistoryServer-1-apiros-MBP.lan.out

# Creating huge header
$ echo -n "X-Custom-Header: " > cookie
$ printf 'A%.0s' {1..9500} >> cookie

# HTTP GET with huge header fails with 431
# (-H @file reads the header from a file; this is why curl 7.55+ is needed)
$ curl -H @cookie http://458apiros-MBP.lan:18080/
<h1>Bad Message 431</h1><pre>reason: Request Header Fields Too Large</pre>

# The log contains the error
$ tail -1 /Users/attilapiros/github/spark/logs/spark-attilapiros-org.apache.spark.deploy.history.HistoryServer-1-apiros-MBP.lan.out
18/11/19 21:24:28 WARN HttpParser: Header is too large 8193>8192
```

After:

```bash
# Creating the history properties file with the increased requestHeaderSize
$ echo spark.ui.requestHeaderSize=10000 > history.properties

# Starting Spark History Server with the settings
$ ./sbin/start-history-server.sh --properties-file history.properties
starting org.apache.spark.deploy.history.HistoryServer, logging to /Users/attilapiros/github/spark/logs/spark-attilapiros-org.apache.spark.deploy.history.HistoryServer-1-apiros-MBP.lan.out

# HTTP GET with huge header gives back HTML5 (only a part of the response is shown here)
$ curl -H @cookie http://458apiros-MBP.lan:18080/
<!DOCTYPE html><html>
<head>...
<link rel="shortcut icon" href="/static/spark-logo-77x50px-hd.png"></link>
<title>History Server</title>
</head>
<body>
...
```

Closes #23090 from attilapiros/JettyHeaderSize.

Authored-by: “attilapiros” <piros.attila.zsolt@gmail.com>

Signed-off-by: Imran Rashid <irashid@cloudera.com>

## What changes were proposed in this pull request?

The build has a lot of deprecation warnings. Some are new in Scala 2.12 and Java 11. We've fixed some, but I wanted to take a pass at fixing lots of easy miscellaneous ones here.

They're too numerous and small to list here; see the pull request. Some highlights:

- `BeanInfo` is deprecated in 2.12, and BeanInfo classes are pretty ancient in Java. Instead, case classes can explicitly declare getters

- Eta expansion of zero-arg methods; foo() becomes () => foo() in many cases

- Floating-point Range is inexact and deprecated, like 0.0 to 100.0 by 1.0

- finalize() is finally deprecated (just needs to be suppressed)

- StageInfo.attemptId was deprecated and easiest to remove here
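Two of the highlights above can be shown in a few lines. This is a small sketch with made-up names (`DeprecationSketch`, `currentLabel`), not code taken from the pull request.

```scala
// Hypothetical names, for illustration only.
object DeprecationSketch {
  def currentLabel(): String = "ready"

  // Spell out the function literal instead of relying on automatic
  // eta-expansion of a zero-arg method: foo() becomes () => foo().
  val labelSupplier: () => String = () => currentLabel()

  // Derive fractional steps from an exact integer range instead of a
  // floating-point Range such as `0.0 to 1.0 by 0.25`.
  val steps: Seq[Double] = (0 to 4).map(_ * 0.25)
}
```

Building the steps from integers keeps the element count exact, which is the property the deprecated floating-point ranges could not guarantee.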

I'm not going to touch some chunks of deprecation warnings for now:

- Parquet deprecations

- Hive deprecations (particularly serde2 classes)

- Deprecations in generated code (mostly Thriftserver CLI)

- ProcessingTime deprecations (we may need to revive this class as internal)

- many MLlib deprecations because they concern methods that may be removed anyway

- a few Kinesis deprecations I couldn't figure out

- Mesos get/setRole, which I don't know well

- Kafka/ZK deprecations (e.g. poll())

- Kinesis

- a few other ones that will probably resolve by deleting a deprecated method

## How was this patch tested?

Existing tests, including manual testing with the 2.11 build and Java 11.

Closes #23065 from srowen/SPARK-26090.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

An empty chunk in ChunkedByteBuffer will truncate the ChunkedByteBufferInputStream.

The detailed reason is described in: https://issues.apache.org/jira/browse/SPARK-26068

## How was this patch tested?

Modified the existing unit test to cover this case.

Closes #23040 from LinhongLiu/fix-empty-chunked-byte-buffer.

Lead-authored-by: Liu,Linhong <liulinhong@baidu.com>

Co-authored-by: Xianjin YE <yexianjin@baidu.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Make SparkHadoopUtil private to Spark

## How was this patch tested?

Existing tests.

Closes #23066 from srowen/SPARK-26043.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Don't propagate SPARK_CONF_DIR to the driver in mesos cluster mode.

## How was this patch tested?

I built the 2.3.2 tag with this patch added and deployed a test job to a mesos cluster to confirm that the incorrect SPARK_CONF_DIR was no longer passed from the submit command.

Closes #22937 from mpmolek/fix-conf-dir.

Authored-by: Matt Molek <mpmolek@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

SparkSubmit determines pyspark app by the suffix of primary resource but Livy uses "spark-internal" as the primary resource when calling spark-submit, therefore args.isPython is set to false in SparkSubmit.scala.

In Yarn mode, SparkSubmit module is responsible for resolving maven coordinates and adding them to "spark.submit.pyFiles" so that python's system path can be set correctly.

The fix is to resolve maven coordinates not only when args.isPython is true, but also when primary resource is spark-internal.

Tested the patch with Livy submitting pyspark app, spark-submit, pyspark with or without packages config.

Signed-off-by: Shanyu Zhao <shzhao@microsoft.com>

Closes #23009 from shanyu/shanyu-26011.

Authored-by: Shanyu Zhao <shzhao@microsoft.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Register the following classes in Kryo:

"org.apache.spark.ml.stat.distribution.MultivariateGaussian",

"org.apache.spark.mllib.stat.distribution.MultivariateGaussian"

## How was this patch tested?

Added tests.

Due to the existing module dependencies, I cannot import spark-core in mllib-local's test suites, so I did not add a test suite in `org.apache.spark.ml.stat.distribution.MultivariateGaussianSuite`.

I also noticed that the class `ClusterStats` in `ClusteringEvaluator` is registered in a different way. Should it be modified to be in line with the others in ML? srowen

Closes #22974 from zhengruifeng/kryo_MultivariateGaussian.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Add Scala and Java lint rules to ban the usage of `throw new xxxErrors` and fix all existing instances, following https://github.com/apache/spark/pull/22989#issuecomment-437939830. See more details in https://github.com/apache/spark/pull/22969.

## How was this patch tested?

Local test with lint-scala and lint-java.

Closes #22989 from xuanyuanking/SPARK-25986.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

…. Other related changes to get JDK 11 working, to test

## What changes were proposed in this pull request?

- Access `sun.misc.Cleaner` (Java 8) and `jdk.internal.ref.Cleaner` (JDK 9+) by reflection (note: the latter only works if illegal reflective access is allowed)

- Access `sun.misc.Unsafe.invokeCleaner` in Java 9+ instead of `sun.misc.Cleaner` (Java 8)

In order to test anything on JDK 11, I also fixed a few small things, which I include here:

- Fix minor JDK 11 compile issues

- Update scala plugin, Jetty for JDK 11, to facilitate tests too

This doesn't mean JDK 11 tests all pass now, but lots do. Note also that the JDK 9+ solution for the Cleaner has a big caveat.

## How was this patch tested?

Existing tests. Manually tested JDK 11 build and tests, and tests covering this change appear to pass. All Java 8 tests should still pass, but this change alone does not achieve full JDK 11 compatibility.

Closes #22993 from srowen/SPARK-24421.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

Currently, we do not have a mechanism to collect driver logs if a user chooses to run their application in client mode. This is a big issue as admin teams need to create their own mechanisms to capture driver logs.

This commit adds a logger which, if enabled, adds a local log appender to the root logger and asynchronously syncs it to an application-specific log file on HDFS (Spark Driver Log Dir).

Additionally, this collects spark-shell driver logs at INFO level by default. The change is that instead of setting the root logger level to WARN, we set the consoleAppender threshold to WARN in the case of spark-shell. This ensures that only WARN logs are printed on the console while other log appenders still capture INFO (or the default log level) logs.

1. Verified that logs are written to local and remote dir

2. Added a unit test case

3. Verified this for spark-shell, client mode and pyspark.

4. Verified in both non-kerberos and kerberos environment

5. Verified with following unexpected termination conditions: Ctrl + C, Driver OOM, Large Log Files

6. Ran an application in spark-shell and ensured that driver logs were captured at INFO level

7. Started the application at WARN level, programmatically changed the level to INFO and ensured that logs on console were printed at INFO level

Closes #22504 from ankuriitg/ankurgupta/SPARK-25118.

Authored-by: ankurgupta <ankur.gupta@cloudera.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

* Implement (optional) use of KryoPool in KryoSerializer, an alternative to the existing implementation of caching a Kryo instance inside KryoSerializerInstance

* Add config key & documentation of spark.kryo.pool in order to turn this on

* Add benchmark KryoSerializerBenchmark to compare new and old implementation

* Add results of benchmark

## How was this patch tested?

Added new tests inside KryoSerializerSuite to test the pool implementation as well as added the pool option to the existing regression testing for SPARK-7766

This is my original work and I license the work to the project under the project’s open source license.

Closes #22855 from patrickbrownsync/kryo-pool.

Authored-by: Patrick Brown <patrick.brown@blyncsy.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Both are deprecated in Java 11: replace `Class.newInstance` with `Class.getConstructor().newInstance()`, and primitive wrapper class constructors with `valueOf` or equivalent.
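A minimal sketch of both replacements, using the standard `java.lang.Class` and `java.lang.Integer` APIs. The object and method names (`Java11Replacements`, `instantiate`, `boxed`) are hypothetical and not taken from the patch.

```scala
// Hypothetical names, for illustration only.
object Java11Replacements {
  // Instead of the deprecated clazz.newInstance(): look up the no-arg
  // public constructor explicitly and invoke it.
  def instantiate[T](clazz: Class[T]): T =
    clazz.getConstructor().newInstance()

  // Instead of the deprecated `new java.lang.Integer(42)` constructor.
  val boxed: java.lang.Integer = java.lang.Integer.valueOf(42)
}
```

Going through the `Constructor` object also changes how failures surface: exceptions thrown by the constructor arrive wrapped in `InvocationTargetException` rather than being rethrown directly.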

## How was this patch tested?

Existing tests.

Closes #22988 from srowen/SPARK-25984.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Currently, Spark writes Spark version number into Hive Table properties with `spark.sql.create.version`.

```
parameters:{
  spark.sql.sources.schema.part.0={
    "type":"struct",
    "fields":[{"name":"a","type":"integer","nullable":true,"metadata":{}}]
  },
  transient_lastDdlTime=1541142761,
  spark.sql.sources.schema.numParts=1,
  spark.sql.create.version=2.4.0
}
```

This PR aims to write Spark versions to ORC/Parquet file metadata with `org.apache.spark.sql.create.version` because we already use the `org.apache.` prefix in Parquet metadata. It differs from the Hive Table property key `spark.sql.create.version`, but it seems that we cannot change the Hive Table property, for backward compatibility.

After this PR, ORC and Parquet files generated by Spark will have the following metadata.

**ORC (`native` and `hive` implementation)**

```
$ orc-tools meta /tmp/o
File Version: 0.12 with ...
...
User Metadata:
  org.apache.spark.sql.create.version=3.0.0
```

**PARQUET**

```
$ parquet-tools meta /tmp/p
...
creator: parquet-mr version 1.10.0 (build 031a6654009e3b82020012a18434c582bd74c73a)
extra: org.apache.spark.sql.create.version = 3.0.0
extra: org.apache.spark.sql.parquet.row.metadata = {"type":"struct","fields":[{"name":"id","type":"long","nullable":false,"metadata":{}}]}
```

## How was this patch tested?

Pass the Jenkins with newly added test cases.

This closes #22255.

Closes #22932 from dongjoon-hyun/SPARK-25102.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

HistoryPage.scala counts applications (with a predicate depending on whether it is displaying incomplete or complete applications) to check if it must display the dataTable.

Since it only checks whether allAppsSize > 0, we could use the `exists` method on the iterator. This way we stop iterating at the first occurrence found.

Such a change has been relevant (roughly 12s improvement on page loading) on our cluster that runs tens of thousands of jobs per day.
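The difference between counting and `exists` can be sketched as follows. `ExistsSketch`, `AppInfo`, and `shouldDisplayTable` are hypothetical stand-ins for illustration, not the types used in `HistoryPage.scala`.

```scala
// Hypothetical, simplified model of the check; not the HistoryPage code.
object ExistsSketch {
  final case class AppInfo(id: Int, completed: Boolean)

  /** True as soon as one application matches the requested view.
    * `exists` stops consuming the iterator at the first match, whereas a
    * `count(...) > 0` check would traverse every application. */
  def shouldDisplayTable(apps: Iterator[AppInfo], showIncomplete: Boolean): Boolean =
    apps.exists(app => app.completed != showIncomplete)
}
```

When the first application already matches, only that one element is pulled from the iterator, which is where the page-load saving on large histories comes from.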

Closes #22982 from Willymontaz/SPARK-25973.

Authored-by: William Montaz <w.montaz@criteo.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

- Remove some AccumulableInfo .apply() methods

- Remove non-label-specific multiclass precision/recall/fScore in favor of accuracy

- Remove toDegrees/toRadians in favor of degrees/radians (SparkR: only deprecated)

- Remove approxCountDistinct in favor of approx_count_distinct (SparkR: only deprecated)

- Remove unused Python StorageLevel constants

- Remove Dataset unionAll in favor of union

- Remove unused multiclass option in libsvm parsing

- Remove references to deprecated spark configs like spark.yarn.am.port

- Remove TaskContext.isRunningLocally

- Remove ShuffleMetrics.shuffle* methods

- Remove BaseReadWrite.context in favor of session

- Remove Column.!== in favor of =!=

- Remove Dataset.explode

- Remove Dataset.registerTempTable

- Remove SQLContext.getOrCreate, setActive, clearActive, constructors

Not touched yet

- everything else in MLLib

- HiveContext

- Anything deprecated more recently than 2.0.0, generally

## How was this patch tested?

Existing tests

Closes #22921 from srowen/SPARK-25908.

Lead-authored-by: Sean Owen <sean.owen@databricks.com>

Co-authored-by: hyukjinkwon <gurwls223@apache.org>

Co-authored-by: Sean Owen <srowen@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Removal of intermediate structures in HighlyCompressedMapStatus will speed up its creation and deserialization time.

https://issues.apache.org/jira/browse/SPARK-25885

## How was this patch tested?

Additional tests are not necessary for the patch.

Closes #22894 from Koraseg/mapStatusesOptimization.

Authored-by: koraseg <artem.kupchinsky@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

JVMs can't allocate arrays of length exactly Int.MaxValue, so ensure we never try to allocate an array that big. This commit changes some defaults and configs to gracefully fall back to something that doesn't require one large array in some cases; in other cases it simply improves the error message for cases that will still fail.

Closes #22818 from squito/SPARK-25827.
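A guard of the following shape illustrates the idea of failing early with a clear message instead of attempting an impossible allocation. `ArraySizeGuard`, `MaxSafeArrayLength`, and the 15-element safety margin are assumptions made for this sketch; the limits the commit actually uses may differ.

```scala
// Hypothetical guard for illustration; names and the margin are assumptions.
object ArraySizeGuard {
  // Assumed conservative cap, a little below Int.MaxValue.
  val MaxSafeArrayLength: Int = Int.MaxValue - 15

  /** Returns `requested` as an Int, or fails with a descriptive message
    * when a single array of that length cannot be allocated safely. */
  def checkedLength(requested: Long): Int = {
    require(
      requested >= 0 && requested <= MaxSafeArrayLength,
      s"Cannot allocate a single array of $requested elements; " +
        s"the limit is $MaxSafeArrayLength")
    requested.toInt
  }
}
```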

Authored-by: Imran Rashid <irashid@cloudera.com>

Signed-off-by: Imran Rashid <irashid@cloudera.com>

## What changes were proposed in this pull request?

Every time a task is unschedulable because of the condition where the number of task failures < the number of executors available, we currently abort the taskSet, failing the job. This change tries to acquire new executors so that we can complete the job successfully. We try to acquire a new executor only when we can kill an existing idle executor. We fall back to the older implementation, where we abort the job, if we cannot find an idle executor.

## How was this patch tested?

I performed some manual tests to check and validate the behavior.

```scala
import org.apache.spark.TaskContext

val rdd = sc.parallelize(Seq(1 to 10), 3)

val mapped = rdd.mapPartitionsWithIndex { (index, iterator) =>
  if (index == 2) {
    // Delay the last partition, then fail its first three attempts.
    Thread.sleep(30 * 1000)
    val attemptNum = TaskContext.get.attemptNumber
    if (attemptNum < 3) throw new Exception("Fail for blacklisting")
  }
  iterator.toList.map(x => x + " -> " + index).iterator
}

mapped.collect
```

Closes #22288 from dhruve/bug/SPARK-22148.

Lead-authored-by: Dhruve Ashar <dhruveashar@gmail.com>

Co-authored-by: Dhruve Ashar <dhruve@users.noreply.github.com>

Co-authored-by: Tom Graves <tgraves@apache.org>

Signed-off-by: Thomas Graves <tgraves@apache.org>

## What changes were proposed in this pull request?

Upgrade ASM to 7.x to support JDK11

## How was this patch tested?

Existing tests.

Closes #22953 from dbtsai/asm7.

Authored-by: DB Tsai <d_tsai@apple.com>

Signed-off-by: DB Tsai <d_tsai@apple.com>

## What changes were proposed in this pull request?

Currently, the definitions of config entries in the `core` module are spread across several files. We should move them into `internal/config` so that they are easier to manage.

## How was this patch tested?

Existing tests.

Closes #22928 from ueshin/issues/SPARK-25926/single_config_file.

Authored-by: Takuya UESHIN <ueshin@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Fix typos and misspellings, per https://github.com/apache/spark-website/pull/158#issuecomment-435790366

## How was this patch tested?

Existing tests.

Closes #22950 from srowen/Typos.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

In `UnsafeSorterSpillWriter.java`, when we write a record to a spill file with `void write(Object baseObject, long baseOffset, int recordLength, long keyPrefix)`, `recordLength` and `keyPrefix` are written to the disk write buffer first. These take 12 bytes, so the disk write buffer size must be greater than 12.

If `diskWriteBufferSize` is 10, it will print this exception info:

_java.lang.ArrayIndexOutOfBoundsException: 10

at org.apache.spark.util.collection.unsafe.sort.UnsafeSorterSpillWriter.writeLongToBuffer (UnsafeSorterSpillWriter.java:91)

at org.apache.spark.util.collection.unsafe.sort.UnsafeSorterSpillWriter.write(UnsafeSorterSpillWriter.java:123)

at org.apache.spark.util.collection.unsafe.sort.UnsafeExternalSorter.spillIterator(UnsafeExternalSorter.java:498)

at org.apache.spark.util.collection.unsafe.sort.UnsafeExternalSorter.spill(UnsafeExternalSorter.java:222)

at org.apache.spark.memory.MemoryConsumer.spill(MemoryConsumer.java:65)_
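The 12 bytes come from a 4-byte `int` (`recordLength`) followed by an 8-byte `long` (`keyPrefix`). The sketch below makes that arithmetic and the resulting size check explicit; `SpillHeaderSketch` and `writeHeader` are hypothetical names, and the real writer fills its buffer differently.

```scala
import java.nio.ByteBuffer

// Hypothetical sketch of the header layout; not UnsafeSorterSpillWriter itself.
object SpillHeaderSketch {
  // recordLength is an Int (4 bytes) and keyPrefix is a Long (8 bytes).
  val HeaderBytes: Int = java.lang.Integer.BYTES + java.lang.Long.BYTES

  /** Writes the record header at the start of `buffer`, rejecting buffers
    * that are not larger than the header. */
  def writeHeader(buffer: Array[Byte], recordLength: Int, keyPrefix: Long): Unit = {
    require(
      buffer.length > HeaderBytes,
      s"disk write buffer must be larger than $HeaderBytes bytes, got ${buffer.length}")
    ByteBuffer.wrap(buffer).putInt(recordLength).putLong(keyPrefix)
    ()
  }
}
```

With a 10-byte buffer the check fails up front with a readable message, instead of an `ArrayIndexOutOfBoundsException` surfacing in the middle of a spill.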

## How was this patch tested?

Existing UT in `UnsafeExternalSorterSuite`

Closes #22754 from 10110346/diskWriteBufferSize.

Authored-by: liuxian <liu.xian3@zte.com.cn>

Signed-off-by: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

## What changes were proposed in this pull request?

Now we use only one `timer` (and thus a backing thread) in `BarrierTaskContext` companion object, and the objects can add `timerTasks` to that `timer`.
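The pattern of one shared timer in a singleton, with callers contributing only tasks, can be sketched like this. `SharedTimerSketch` and `scheduleAtFixedPeriod` are hypothetical names; this is not the `BarrierTaskContext` code.

```scala
import java.util.{Timer, TimerTask}

// Hypothetical sketch of a shared timer; not the BarrierTaskContext code.
object SharedTimerSketch {
  // One daemon timer, and therefore one backing thread, for all callers.
  private val timer = new Timer("shared-timer-sketch", true)

  /** Schedules `body` to run every `periodMs` milliseconds on the shared
    * timer and returns the task so the caller can cancel it. */
  def scheduleAtFixedPeriod(periodMs: Long)(body: => Unit): TimerTask = {
    val task = new TimerTask {
      override def run(): Unit = body
    }
    timer.schedule(task, periodMs, periodMs)
    task
  }
}
```

Each caller cancels only its own `TimerTask` when it is done, so the timer thread itself is never torn down per instance.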

## How was this patch tested?

This was tested manually by generating logs and checking that they look the same as before: a partition waiting on another partition for 5 seconds generates 4-5 log messages when the logging interval is set to 1 second.

Closes #22912 from yogeshg/thread.

Authored-by: Yogesh Garg <1059168+yogeshg@users.noreply.github.com>

Signed-off-by: Xingbo Jiang <xingbo.jiang@databricks.com>

## What changes were proposed in this pull request?

'refreshInterval' is not used anywhere in the 'headerSparkPage' method, so we don't need to pass the parameter when calling 'headerSparkPage'.

## How was this patch tested?

Existing tests

Closes #22864 from shahidki31/unusedCode.

Authored-by: Shahid <shahidki31@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Avoid converting encrypted blocks to regular ByteBuffers, to ensure they can be sent over the network for replication & remote reads even when > 2GB.

Also updates some TODOs with links to SPARK-25905 for improving the handling here.

## How was this patch tested?

Tested on a cluster with encrypted data > 2GB (after SPARK-25904 was applied as well).

Closes #22917 from squito/real_SPARK-25827.

Authored-by: Imran Rashid <irashid@cloudera.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

* Update `AppStatusListener` `cleanupStages` method to remove tasks for those stages in a single pass instead of one pass per stage.

* This fixes an issue where the cleanupStages method would get backed up, causing a backup in the executor in ElementTrackingStore, resulting in stages and jobs not getting cleaned up properly.

Tasks seem most susceptible to this because there are so many of them; however, a similar issue could arise wherever the `KVStore` `view` method is used. A broader fix might involve updates to `KVStoreView` and `InMemoryView`, as this interface and implementation appear to allow multiple, inefficient traversals of the stored data.
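The difference between one traversal per stage and a single traversal can be sketched as below. This is not the `AppStatusListener` or `KVStore` code; the class name, the map layout (task id to stage id), and the sample data are assumptions made for illustration only.

```java
import java.util.Collection;
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

// Hypothetical sketch, not Spark's AppStatusListener.
final class SinglePassCleanupSketch {
    // Removes every task that belongs to one of the stale stages in a single traversal.
    // A per-stage loop would instead traverse the task collection once for each stage.
    static int removeTasksForStages(Map<Long, Integer> taskToStage, Collection<Integer> staleStages) {
        Set<Integer> stale = new HashSet<>(staleStages); // constant-time membership checks
        int before = taskToStage.size();
        taskToStage.values().removeIf(stale::contains);  // one pass over all tasks
        return before - taskToStage.size();
    }

    // Sample data: task id -> stage id.
    static Map<Long, Integer> sampleTasks() {
        Map<Long, Integer> tasks = new HashMap<>();
        tasks.put(1L, 10);
        tasks.put(2L, 10);
        tasks.put(3L, 11);
        tasks.put(4L, 12);
        return tasks;
    }
}
```

With the sample data, removing stages 10 and 12 drops three tasks in one pass and leaves only task 3. The cost is proportional to the number of tasks rather than to the number of tasks times the number of stages being cleaned up.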

## How was this patch tested?

Using existing tests in AppStatusListenerSuite

This is my original work and I license the work to the project under the project’s open source license.

Closes #22883 from patrickbrownsync/cleanup-stages-fix.

Authored-by: Patrick Brown <patrick.brown@blyncsy.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

I saw CoarseGrainedSchedulerBackendSuite fail in my PR and finally reproduced the following error on a very busy machine:

```

sbt.ForkMain$ForkError: org.scalatest.exceptions.TestFailedDueToTimeoutException: The code passed to eventually never returned normally. Attempted 400 times over 10.009828643999999 seconds. Last failure message: ArrayBuffer("2", "0", "3") had length 3 instead of expected length 4.

```

The logs in this test show that executor 1 was not up when the test failed.

```

18/10/30 11:34:03.563 dispatcher-event-loop-12 INFO CoarseGrainedSchedulerBackend$DriverEndpoint: Registered executor NettyRpcEndpointRef(spark-client://Executor) (172.17.0.2:43656) with ID 2

18/10/30 11:34:03.593 dispatcher-event-loop-3 INFO CoarseGrainedSchedulerBackend$DriverEndpoint: Registered executor NettyRpcEndpointRef(spark-client://Executor) (172.17.0.2:43658) with ID 3

18/10/30 11:34:03.629 dispatcher-event-loop-6 INFO CoarseGrainedSchedulerBackend$DriverEndpoint: Registered executor NettyRpcEndpointRef(spark-client://Executor) (172.17.0.2:43654) with ID 0

18/10/30 11:34:03.885 pool-1-thread-1-ScalaTest-running-CoarseGrainedSchedulerBackendSuite INFO CoarseGrainedSchedulerBackendSuite:

===== FINISHED o.a.s.scheduler.CoarseGrainedSchedulerBackendSuite: 'compute max number of concurrent tasks can be launched' =====

```

And the following logs in executor 1 show that it was still initializing when the timeout happened (at 18/10/30 11:34:03.885).

```

18/10/30 11:34:03.463 netty-rpc-connection-0 INFO TransportClientFactory: Successfully created connection to 54b6b6217301/172.17.0.2:33741 after 37 ms (0 ms spent in bootstraps)

18/10/30 11:34:03.959 main INFO DiskBlockManager: Created local directory at /home/jenkins/workspace/core/target/tmp/spark-383518bc-53bd-4d9c-885b-d881f03875bf/executor-61c406e4-178f-40a6-ac2c-7314ee6fb142/blockmgr-03fb84a1-eedc-4055-8743-682eb3ac5c67

18/10/30 11:34:03.993 main INFO MemoryStore: MemoryStore started with capacity 546.3 MB

```

Hence, I think our current 10 seconds is not enough on a slow Jenkins machine. This PR just increases the timeout from 10 seconds to 60 seconds to make the test more stable.

## How was this patch tested?

Jenkins

Closes #22910 from zsxwing/fix-flaky-test.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

This avoids having two classes to deal with tokens; now the above class is a one-stop shop for dealing with delegation tokens. The YARN backend extends that class instead of doing composition like before, resulting in a bit less code there too.

The renewer functionality is basically the same code that used to be in YARN's AMCredentialRenewer. That is also the reason why the public API of HadoopDelegationTokenManager is a little bit odd; the YARN AM has some odd requirements for how this all should be initialized, and the weirdness is needed currently to support that.

Tested:

- YARN with stress app for DT renewal

- Mesos and K8S with basic kerberos tests (both tgt and keytab)

Closes #22624 from vanzin/SPARK-23781.

Authored-by: Marcelo Vanzin <vanzin@cloudera.com>

Signed-off-by: Imran Rashid <irashid@cloudera.com>

## What changes were proposed in this pull request?

This turns off HDFS erasure coding (EC) by default for event logs, regardless of filesystem defaults. Because this requires APIs only available in Hadoop 3, this uses reflection. EC isn't a very good choice for event logs, as hflush() is a no-op, and so updates to the file are not visible for a long time. EC can still be enabled by setting "spark.eventLog.allowErasureCoding=true", which will use filesystem defaults.
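The general pattern of calling an API through reflection only when the runtime provides it can be sketched as follows. The sketch deliberately avoids Hadoop classes: `OptionalApiSketch` and `invokeIfPresent` are hypothetical names, and `String` methods stand in for an API that may or may not exist.

```java
import java.lang.reflect.Method;
import java.util.Optional;

// Hypothetical sketch of the reflection pattern; it does not touch any Hadoop API.
final class OptionalApiSketch {
    // Invokes a public no-argument method by name if the target's class has it.
    // Returns Optional.empty() when the method is missing (for example, an older library version).
    static Optional<Object> invokeIfPresent(Object target, String methodName) {
        try {
            Method method = target.getClass().getMethod(methodName);
            return Optional.ofNullable(method.invoke(target));
        } catch (NoSuchMethodException e) {
            // The API is not available in this runtime: fall back to default behavior.
            return Optional.empty();
        } catch (ReflectiveOperationException e) {
            // The method exists but could not be invoked or threw; surface that as an error.
            throw new IllegalStateException("reflective call to " + methodName + " failed", e);
        }
    }
}
```

For example, `invokeIfPresent("abc", "length")` yields the value 3, while a method name that does not exist yields an empty result, so the caller can compile against the older API and still use the newer one when it is present.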

## How was this patch tested?

Deployed a cluster with the changes with HDFS EC on. By default, event logs didn't use EC, but the configuration still allowed EC to be enabled. Also tried writing to the local filesystem (which doesn't support EC at all) and things worked fine.

Closes #22881 from squito/SPARK-25855.

Authored-by: Imran Rashid <irashid@cloudera.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Py4J 0.10.8.1 was released on October 21st and is the first release of Py4J to officially support Python 3.7. We should upgrade to get that official support. It also includes some patches related to garbage collection.

https://www.py4j.org/changelog.html#py4j-0-10-8-and-py4j-0-10-8-1

## How was this patch tested?

Pass the Jenkins.

Closes #22901 from dongjoon-hyun/SPARK-25891.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

When a job finishes, there may be some zombie tasks still running due to stage retry. Since a result stage will never be used by other jobs, running these tasks just wastes cluster resources. This PR asks TaskScheduler to cancel the running tasks of a result stage once the stage has finished. Credits go to srinathshankar who suggested this idea to me.

This PR also fixes two minor issues while I'm touching DAGScheduler:

- Invalid spark.job.interruptOnCancel should not crash DAGScheduler.

- Non-fatal errors should not crash DAGScheduler.
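The first of these minor fixes amounts to treating a malformed configuration value as a recoverable condition. A minimal sketch of that idea, assuming a hypothetical helper that falls back to a default, is shown below; it is not the code in `DAGScheduler`, which may handle the invalid value differently.

```java
import java.util.Locale;

// Hypothetical sketch, not Spark's DAGScheduler.
final class SafeConfigSketch {
    // Parses a boolean setting strictly, but returns the default instead of throwing
    // when the value is missing or malformed.
    static boolean parseBooleanOrDefault(String raw, boolean defaultValue) {
        if (raw == null) {
            return defaultValue;
        }
        String value = raw.trim().toLowerCase(Locale.ROOT);
        if (value.equals("true")) {
            return true;
        }
        if (value.equals("false")) {
            return false;
        }
        // Malformed input: a real implementation would likely log a warning here.
        return defaultValue;
    }
}
```

A caller reading a job property such as `spark.job.interruptOnCancel` could use a helper like this so that a typo in a user-supplied value does not stop the scheduler's event loop.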

## How was this patch tested?

The new unit tests.

Closes #22771 from zsxwing/SPARK-25773.

Lead-authored-by: Shixiong Zhu <zsxwing@gmail.com>

Co-authored-by: Shixiong Zhu <shixiong@databricks.com>

Signed-off-by: Shixiong Zhu <zsxwing@gmail.com>

## What changes were proposed in this pull request?

MetricGetter should be renamed to ExecutorMetricType in comments.

## How was this patch tested?

Just comments, no need to test.

Closes #22884 from LantaoJin/SPARK-23429_FOLLOWUP.

Authored-by: LantaoJin <jinlantao@gmail.com>

Signed-off-by: Imran Rashid <irashid@cloudera.com>

## What changes were proposed in this pull request?

Set main args correctly in BenchmarkBase so that they are accessible to its subclasses.

It will benefit:

- BuiltInDataSourceWriteBenchmark

- AvroWriteBenchmark

## How was this patch tested?

manual tests

Closes #22872 from yucai/main_args.

Authored-by: yucai <yyu1@ebay.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>