## What changes were proposed in this pull request?

Adds a Spark image reader, an implementation of a schema for representing images in Spark DataFrames.

The code is taken from the spark package located here:

(https://github.com/Microsoft/spark-images)

Please see the JIRA for more information (https://issues.apache.org/jira/browse/SPARK-21866)

Please see mailing list for SPIP vote and approval information:

(http://apache-spark-developers-list.1001551.n3.nabble.com/VOTE-SPIP-SPARK-21866-Image-support-in-Apache-Spark-td22510.html)

## Background and motivation

As Apache Spark is being used more and more in the industry, some new use cases are emerging for different data formats beyond the traditional SQL types or the numerical types (vectors and matrices). Deep Learning applications commonly deal with image processing. A number of projects add some Deep Learning capabilities to Spark (see list below), but they struggle to communicate with each other or with MLlib pipelines because there is no standard way to represent an image in Spark DataFrames. We propose to federate efforts for representing images in Spark by defining a representation that caters to the most common needs of users and library developers.

This SPIP proposes a specification to represent images in Spark DataFrames and Datasets (based on existing industrial standards), and an interface for loading sources of images. It is not meant to be a full-fledged image processing library, but rather the core description that other libraries and users can rely on. Several packages already offer various processing facilities for transforming images or doing more complex operations, and each has various design tradeoffs that make them better as standalone solutions.

This project is a joint collaboration between Microsoft and Databricks, which have been testing this design in two open source packages: MMLSpark and Deep Learning Pipelines.

The proposed image format is an in-memory, decompressed representation that targets low-level applications. It is significantly more liberal in memory usage than compressed image representations such as JPEG, PNG, etc., but it allows easy communication with popular image processing libraries and has no decoding overhead.
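To show why the decompressed representation costs more memory than JPEG or PNG, the sketch below models one image row as a plain Python dict. The field names (`origin`, `height`, `width`, `nChannels`, `mode`, `data`) follow our reading of the schema in this PR and should be treated as an assumption. The helper `make_image_row` is hypothetical. The `mode` value 16 is assumed to mean OpenCV's `CV_8UC3` (8-bit, 3 channels).

```python
def make_image_row(origin, height, width, n_channels, mode, pixels):
    """Hypothetical helper: wrap raw, already-decoded pixel bytes in a dict
    that mirrors the assumed image struct fields."""
    data = bytes(bytearray(pixels))
    expected = height * width * n_channels  # one byte per channel per pixel
    if len(data) != expected:
        raise ValueError("expected %d bytes, got %d" % (expected, len(data)))
    return {
        "origin": origin,
        "height": height,
        "width": width,
        "nChannels": n_channels,
        "mode": mode,  # assumed: 16 == OpenCV CV_8UC3
        "data": data,
    }


# A 2x3 image with 3 channels always holds 2 * 3 * 3 = 18 uncompressed bytes,
# no matter how small the original compressed file was.
row = make_image_row("file:/tmp/example.png", 2, 3, 3, 16, range(18))
print(len(row["data"]))  # 18
```

By the same arithmetic, a 1920x1080 three-channel image takes 1920 * 1080 * 3 = 6,220,800 bytes in this form. In exchange, libraries can read it without a decoding step.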

## How was this patch tested?

Unit tests in Scala (`ImageSchemaSuite`) and unit tests in Python.

Author: Ilya Matiach <ilmat@microsoft.com>

Author: hyukjinkwon <gurwls223@gmail.com>

Closes #19439 from imatiach-msft/ilmat/spark-images.

## What changes were proposed in this pull request?

Add Python API support for `VectorIndexerModel` to handle unseen categories via `handleInvalid`.
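The three `handleInvalid` options can be sketched for a single categorical feature in plain Python. We assume the usual Spark semantics: `error` raises, `skip` drops the row, and `keep` sends unseen values to one extra index placed after the known categories. The function `index_categorical` is hypothetical and is not the PySpark API.

```python
def index_categorical(value, category_map, handle_invalid="error"):
    """Hypothetical sketch of handleInvalid for one categorical feature.

    category_map maps raw values seen during fit to category indices.
    Returns the index, or None when the row should be skipped.
    """
    if value in category_map:
        return category_map[value]
    if handle_invalid == "error":
        raise ValueError("Unseen categorical value: %r" % (value,))
    if handle_invalid == "skip":
        return None  # the caller drops this row
    if handle_invalid == "keep":
        # All unseen values share one extra bucket after the known categories.
        return len(category_map)
    raise ValueError("Unknown handleInvalid option: %r" % (handle_invalid,))


fitted = {0.0: 0, 1.0: 1, 5.0: 2}
print(index_categorical(5.0, fitted))          # 2
print(index_categorical(7.0, fitted, "keep"))  # 3
print(index_categorical(7.0, fitted, "skip"))  # None
```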

## How was this patch tested?

doctest added.

Author: WeichenXu <weichen.xu@databricks.com>

Closes #19753 from WeichenXu123/vector_indexer_invalid_py.

## What changes were proposed in this pull request?

Add parallelism support for ML tuning in pyspark.
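One way to add a `parallelism` setting to tuning is to fit and evaluate each param map in a thread pool. With Spark, each thread mostly waits on cluster jobs, so threads are enough. We believe PySpark uses `multiprocessing.pool.ThreadPool` for this, but the sketch below is standalone. `evaluate` is a hypothetical toy scorer that stands in for "fit a model, then compute a metric".

```python
from multiprocessing.pool import ThreadPool


def evaluate(param_map):
    """Hypothetical stand-in for fit + evaluate: a toy score that peaks at regParam == 0.1."""
    return -abs(param_map["regParam"] - 0.1)


def tune(param_maps, parallelism=1):
    # Use at most `parallelism` threads, and never more threads than models.
    pool = ThreadPool(processes=min(parallelism, len(param_maps)))
    try:
        metrics = pool.map(evaluate, param_maps)  # results keep input order
    finally:
        pool.close()
        pool.join()
    best = max(range(len(metrics)), key=lambda i: metrics[i])
    return param_maps[best], metrics


grid = [{"regParam": r} for r in (0.0, 0.1, 0.5, 1.0)]
best, metrics = tune(grid, parallelism=2)
print(best)  # {'regParam': 0.1}
```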

## How was this patch tested?

Test updated.

Author: WeichenXu <weichen.xu@databricks.com>

Closes #19122 from WeichenXu123/par-ml-tuning-py.

## What changes were proposed in this pull request?

This PR proposes to mark the existing warnings as `DeprecationWarning` and print out warnings for deprecated functions.

This could actually be useful for Spark app developers. I use (old) PyCharm, and this IDE can detect this specific `DeprecationWarning` in some cases:

**Before**

<img src="https://user-images.githubusercontent.com/6477701/31762664-df68d9f8-b4f6-11e7-8773-f0468f70a2cc.png" height="45" />

**After**

<img src="https://user-images.githubusercontent.com/6477701/31762662-de4d6868-b4f6-11e7-98dc-3c8446a0c28a.png" height="70" />

For console usage, `DeprecationWarning` is usually disabled (see https://docs.python.org/2/library/warnings.html#warning-categories and https://docs.python.org/3/library/warnings.html#warning-categories):

```
>>> import warnings
>>> filter(lambda f: f[2] == DeprecationWarning, warnings.filters)
[('ignore', <_sre.SRE_Pattern object at 0x10ba58c00>, <type 'exceptions.DeprecationWarning'>, <_sre.SRE_Pattern object at 0x10bb04138>, 0), ('ignore', None, <type 'exceptions.DeprecationWarning'>, None, 0)]
```

So it won't actually clutter the terminal unless that is intended.

If it is intentionally enabled, the output looks as below:

```
>>> import warnings
>>> warnings.simplefilter('always', DeprecationWarning)
>>>
>>> from pyspark.sql import functions
>>> functions.approxCountDistinct("a")
.../spark/python/pyspark/sql/functions.py:232: DeprecationWarning: Deprecated in 2.1, use approx_count_distinct instead.
  "Deprecated in 2.1, use approx_count_distinct instead.", DeprecationWarning)
...
```
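The pattern behind this change is `warnings.warn(message, DeprecationWarning)` in each deprecated function. The sketch below wraps it in a hypothetical `deprecated` decorator and checks the category with `warnings.catch_warnings(record=True)`. The `approxCountDistinct` here is a toy stand-in, not the real `pyspark.sql.functions` implementation.

```python
import functools
import warnings


def deprecated(message):
    """Hypothetical decorator: emit a DeprecationWarning attributed to the caller."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            # stacklevel=2 points the warning at the caller's line, not this wrapper
            warnings.warn(message, DeprecationWarning, stacklevel=2)
            return func(*args, **kwargs)
        return wrapper
    return decorator


@deprecated("Deprecated in 2.1, use approx_count_distinct instead.")
def approxCountDistinct(col):
    """Toy stand-in for the deprecated alias."""
    return "approx_count_distinct(%s)" % col


with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always", DeprecationWarning)  # same opt-in as above
    approxCountDistinct("a")

print(caught[0].category.__name__)  # DeprecationWarning
```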

These instances were found by:

```
cd python/pyspark
grep -r "Deprecated" .
grep -r "deprecated" .
grep -r "deprecate" .
```

## How was this patch tested?

Manually tested.

Author: hyukjinkwon <gurwls223@gmail.com>

Closes #19535 from HyukjinKwon/deprecated-warning.

This PR adds methods `recommendForUserSubset` and `recommendForItemSubset` to `ALSModel`. These allow recommending for a specified set of user / item ids rather than for every user / item (as in the `recommendForAllX` methods).

The subset methods take a `DataFrame` as input, containing ids in the column specified by the param `userCol` or `itemCol`. The model will generate recommendations for each _unique_ id in this input dataframe.
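The core of a subset recommendation can be sketched without Spark. De-duplicate the requested ids, score every item by the dot product of latent factors (the ALS prediction), and keep the top `k`. This is a hypothetical local sketch over Python dicts, not the distributed `ALSModel` implementation. In this sketch, ids without learned factors are simply skipped.

```python
import heapq


def recommend_for_subset(user_ids, user_factors, item_factors, k):
    """Hypothetical sketch: top-k (item, score) pairs per *unique* requested user id."""
    result = {}
    for uid in dict.fromkeys(user_ids):  # de-duplicate, keep first-seen order
        if uid not in user_factors:
            continue  # no learned factors for this id in this sketch
        u = user_factors[uid]
        scores = ((sum(a * b for a, b in zip(u, v)), iid)
                  for iid, v in item_factors.items())
        result[uid] = [(iid, score) for score, iid in heapq.nlargest(k, scores)]
    return result


users = {1: [1.0, 0.0], 2: [0.0, 1.0], 3: [0.5, 0.5]}
items = {10: [0.9, 0.1], 11: [0.1, 0.9], 12: [0.5, 0.5]}
recs = recommend_for_subset([2, 1, 2], users, items, k=1)
print(recs)  # {2: [(11, 0.9)], 1: [(10, 0.9)]}
```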

## How was this patch tested?

New unit tests in `ALSSuite` and Python doctests in `ALS`. Ran updated examples locally.

Author: Nick Pentreath <nickp@za.ibm.com>

Closes #18748 from MLnick/als-recommend-df.

## What changes were proposed in this pull request?

Added Python interface for ClusteringEvaluator

## How was this patch tested?

Manual test, e.g. the example Python code in the comments.

cc yanboliang

Author: Marco Gaido <mgaido@hortonworks.com>

Author: Marco Gaido <marcogaido91@gmail.com>

Closes #19204 from mgaido91/SPARK-21981.

## What changes were proposed in this pull request?

Remove unnecessary default value settings from all evaluators, since the defaults are already set in the corresponding _HasXXX_ base classes.

## How was this patch tested?

Existing tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #19262 from yanboliang/evaluation.

## What changes were proposed in this pull request?

#19197 fixed double caching for MLlib algorithms but missed PySpark ```OneVsRest```; this PR fixes it.

## How was this patch tested?

Existing tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #19220 from yanboliang/SPARK-18608.

## What changes were proposed in this pull request?

Added LogisticRegressionTrainingSummary for MultinomialLogisticRegression in Python API

## How was this patch tested?

Added unit test

Author: Ming Jiang <mjiang@fanatics.com>

Author: Ming Jiang <jmwdpk@gmail.com>

Author: jmwdpk <jmwdpk@gmail.com>

Closes #19185 from jmwdpk/SPARK-21854.

## What changes were proposed in this pull request?

Added tunable parallelism to the pyspark implementation of one vs. rest classification. Added a parallelism parameter to the Scala implementation of one vs. rest along with functionality for using the parameter to tune the level of parallelism.
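One-vs-rest splits a k-class problem into k independent binary problems, one per class ("this class" = 1.0, "any other class" = 0.0). Because the problems are independent, they can be trained concurrently. The sketch below shows that structure with a thread pool. `fit_binary` is a hypothetical toy "classifier" that only returns the positive-class fraction. It stands in for a real binary estimator and is not the PySpark `OneVsRest` code.

```python
from multiprocessing.pool import ThreadPool


def fit_binary(data, positive_class):
    """Toy stand-in for a binary classifier: relabel, then 'fit' by returning
    the fraction of positive labels."""
    labels = [1.0 if y == positive_class else 0.0 for _, y in data]
    return sum(labels) / len(labels)


def one_vs_rest_fit(data, parallelism=1):
    classes = sorted({y for _, y in data})
    pool = ThreadPool(processes=min(parallelism, len(classes)))
    try:
        # One independent binary problem per class, trained concurrently.
        models = pool.map(lambda c: fit_binary(data, c), classes)
    finally:
        pool.close()
        pool.join()
    return dict(zip(classes, models))


data = [([0.0], 0), ([1.0], 1), ([2.0], 2), ([3.0], 2)]
print(one_vs_rest_fit(data, parallelism=2))  # {0: 0.25, 1: 0.25, 2: 0.5}
```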

I took over PR #18281 because the original author is busy, but we need to merge this PR soon.

After this is merged, we can close #18281.

## How was this patch tested?

Test suite added.

Author: Ajay Saini <ajays725@gmail.com>

Author: WeichenXu <weichen.xu@databricks.com>

Closes #19110 from WeichenXu123/spark-21027.

Probability and rawPrediction have been added to MultilayerPerceptronClassifier for Python.
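Assume `rawPrediction` holds the output-layer scores before the softmax. This is our reading and is not stated above. Under that assumption, `probability` is their softmax, and the prediction is the index of the largest probability. The sketch subtracts the maximum score before exponentiating, which avoids overflow without changing the result.

```python
import math


def raw_to_probability(raw):
    """Numerically stable softmax over a list of raw scores."""
    m = max(raw)
    exps = [math.exp(r - m) for r in raw]  # shifting by max(raw) avoids overflow
    total = sum(exps)
    return [e / total for e in exps]


raw_prediction = [2.0, 1.0, 0.1]
probability = raw_to_probability(raw_prediction)
prediction = max(range(len(probability)), key=probability.__getitem__)
print(prediction)  # 0
```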

Add unit test.

Author: Chunsheng Ji <chunsheng.ji@gmail.com>

Closes #19172 from chunshengji/SPARK-21856.

https://issues.apache.org/jira/browse/SPARK-19866

## What changes were proposed in this pull request?

Add Python API for findSynonymsArray matching Scala API.
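As we understand it, `findSynonymsArray` returns `(word, similarity)` pairs instead of a DataFrame, ranked by cosine similarity to the query word's vector. The local sketch below reproduces that ranking over a plain dict of vectors. `find_synonyms_array` is a hypothetical helper, not the `Word2VecModel` method.

```python
import math


def find_synonyms_array(word, vectors, num):
    """Hypothetical sketch: up to `num` (word, cosine similarity) pairs closest
    to `word`, excluding the word itself."""
    def cosine(a, b):
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm if norm else 0.0

    query = vectors[word]
    scored = [(w, cosine(query, v)) for w, v in vectors.items() if w != word]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:num]


vectors = {"a": [1.0, 0.0], "b": [0.9, 0.1], "c": [0.0, 1.0]}
print(find_synonyms_array("a", vectors, 2))  # "b" ranks first, "c" has similarity 0.0
```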

## How was this patch tested?

Manual test

`./python/run-tests --python-executables=python2.7 --modules=pyspark-ml`

Author: Xin Ren <iamshrek@126.com>

Author: Xin Ren <renxin.ubc@gmail.com>

Author: Xin Ren <keypointt@users.noreply.github.com>

Closes #17451 from keypointt/SPARK-19866.

## What changes were proposed in this pull request?

This PR proposes to support unicode strings in ML Param methods, as well as in other DataFrame functions that missed this support.

For example, this causes a `TypeError` in Python 2.x when the param name is a unicode string:

```python
>>> from pyspark.ml.classification import LogisticRegression
>>> lr = LogisticRegression()
>>> lr.hasParam("threshold")
True
>>> lr.hasParam(u"threshold")
Traceback (most recent call last):
...
    raise TypeError("hasParam(): paramName must be a string")
TypeError: hasParam(): paramName must be a string
```

This PR is based on https://github.com/apache/spark/pull/13036

## How was this patch tested?

Unit tests in `python/pyspark/ml/tests.py` and `python/pyspark/sql/tests.py`.

Author: hyukjinkwon <gurwls223@gmail.com>

Author: sethah <seth.hendrickson16@gmail.com>

Closes #17096 from HyukjinKwon/SPARK-15243.

## What changes were proposed in this pull request?

Modify the MLP model to inherit from `ProbabilisticClassificationModel` so that it can expose the probability column when transforming data.

## How was this patch tested?

Test added.

Author: WeichenXu <WeichenXu123@outlook.com>

Closes #17373 from WeichenXu123/expose_probability_in_mlp_model.

## What changes were proposed in this pull request?

Added a call to copy the values of Params from the Estimator to the Model after fit in PySpark ML. This copies values for any params that are also defined in the Model. Since most Models currently do not define the same params as their Estimator, also added a method that creates new Params by looking at the Java object if they do not exist in the Python object. This is a temporary fix that can be removed once the PySpark models properly define the params themselves.
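The copy step can be sketched with plain dicts: every value set on the estimator is copied to the model, but only when the model defines a param with the same name. `ToyParams` and `copy_values` are hypothetical stand-ins for the PySpark `Params` machinery.

```python
class ToyParams(object):
    """Hypothetical minimal stand-in for a Params holder."""

    def __init__(self, param_names, values=None):
        self.param_names = set(param_names)  # params this object defines
        self.values = dict(values or {})     # explicitly set values


def copy_values(estimator, model):
    """Copy each set estimator value whose param name the model also defines."""
    for name, value in estimator.values.items():
        if name in model.param_names:
            model.values[name] = value
    return model


est = ToyParams({"maxIter", "regParam", "featuresCol"},
                {"maxIter": 5, "regParam": 0.1, "featuresCol": "f"})
model = copy_values(est, ToyParams({"featuresCol", "predictionCol"}))
print(model.values)  # {'featuresCol': 'f'}
```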

## How was this patch tested?

Refactored the `check_params` test to optionally check if the model params for Python and Java match and added this check to an existing fitted model that shares params between Estimator and Model.

Author: Bryan Cutler <cutlerb@gmail.com>

Closes #17849 from BryanCutler/pyspark-models-own-params-SPARK-10931.

Add Python API for `FeatureHasher` transformer.

## How was this patch tested?

New doc test.

Author: Nick Pentreath <nickp@za.ibm.com>

Closes #18970 from MLnick/SPARK-21468-pyspark-hasher.

## What changes were proposed in this pull request?

Implemented a Python-only persistence framework for pipelines containing stages that cannot be saved using Java.

## How was this patch tested?

Created a custom Python-only UnaryTransformer, included it in a Pipeline, and saved/loaded the pipeline. The loaded pipeline was compared against the original using _compare_pipelines() in tests.py.

Author: Ajay Saini <ajays725@gmail.com>

Closes #18888 from ajaysaini725/PythonPipelines.

## What changes were proposed in this pull request?

Update breeze to 0.13.2 for an emergency bugfix in the strong Wolfe line search.

https://github.com/scalanlp/breeze/pull/651

## How was this patch tested?

N/A

Author: WeichenXu <WeichenXu123@outlook.com>

Closes #18797 from WeichenXu123/update-breeze.

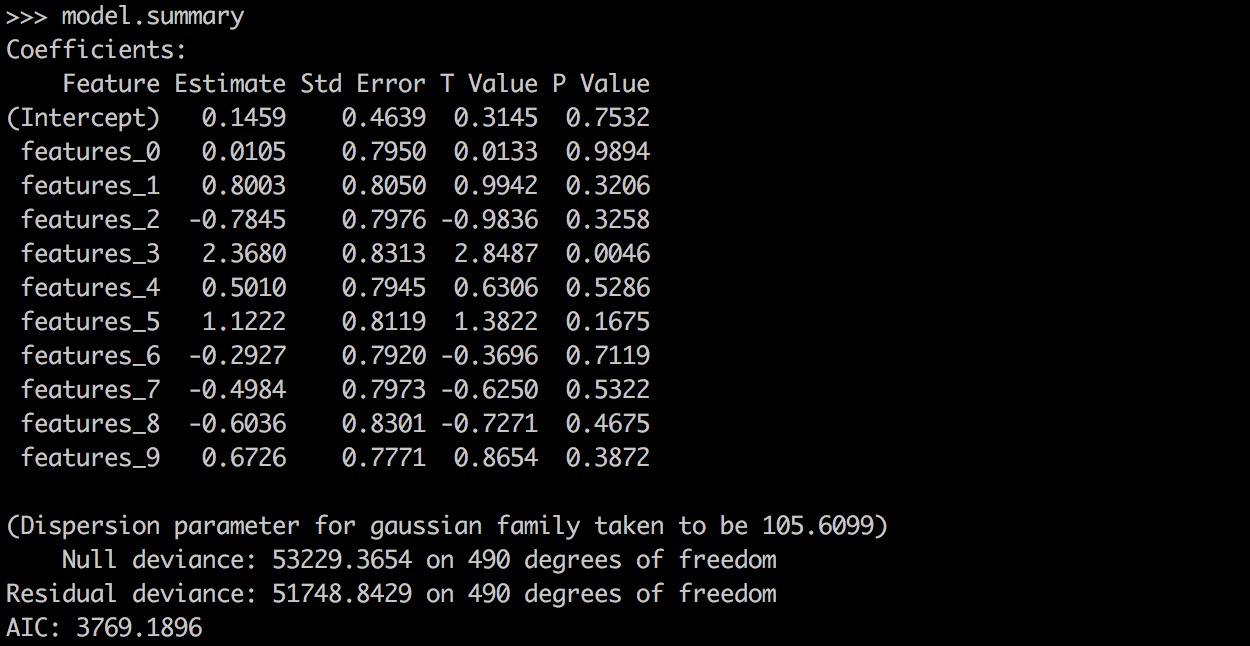

## What changes were proposed in this pull request?

PySpark GLR ```model.summary``` should return a printable representation by calling Scala ```toString```.

## How was this patch tested?

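```
from pyspark.sql import SparkSession
from pyspark.ml.regression import GeneralizedLinearRegression

# In the PySpark shell `spark` already exists; getOrCreate() returns it.
spark = SparkSession.builder.getOrCreate()
dataset = spark.read.format("libsvm").load("data/mllib/sample_linear_regression_data.txt")
glr = GeneralizedLinearRegression(family="gaussian", link="identity", maxIter=10, regParam=0.3)
model = glr.fit(dataset)
# Evaluated in the REPL, this displays the summary's printable representation.
model.summary
```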

Before this PR:

After this PR:

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #18870 from yanboliang/spark-19270.

## What changes were proposed in this pull request?

Added DefaultParamsWritable, DefaultParamsReadable, DefaultParamsWriter, and DefaultParamsReader to Python to support Python-only persistence of JSON-serializable parameters.
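The idea behind default params persistence is to write a small JSON metadata record (class name, uid, param values) and, on load, check the class and restore the values. In the minimal sketch below, `save_params` and `load_params` are hypothetical helpers, and the file layout (a single local `metadata.json`) is an assumption chosen to keep the example self-contained. Spark writes metadata through its own I/O layer.

```python
import json
import os
import tempfile
import time


def save_params(path, class_name, uid, param_map):
    """Hypothetical writer: persist JSON-serializable params as a metadata record."""
    metadata = {
        "class": class_name,
        "timestamp": int(time.time() * 1000),
        "uid": uid,
        "paramMap": param_map,  # json.dump raises TypeError for non-JSON values
    }
    if not os.path.isdir(path):
        os.makedirs(path)
    with open(os.path.join(path, "metadata.json"), "w") as f:
        json.dump(metadata, f)


def load_params(path, expected_class):
    """Hypothetical reader: load the record and verify the stored class name."""
    with open(os.path.join(path, "metadata.json")) as f:
        metadata = json.load(f)
    if metadata["class"] != expected_class:
        raise ValueError("Expected %s but found %s" % (expected_class, metadata["class"]))
    return metadata["uid"], metadata["paramMap"]


target = os.path.join(tempfile.mkdtemp(), "lr")
save_params(target, "pyspark.ml.classification.LogisticRegression",
            "LogisticRegression_abc123", {"maxIter": 10, "regParam": 0.01})
uid, params = load_params(target, "pyspark.ml.classification.LogisticRegression")
print(uid, params)
```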

## How was this patch tested?

Instantiated an estimator with JSON-serializable parameters (e.g. LogisticRegression), saved it using the added helper functions, loaded it back, and compared it to the original instance to make sure they are the same. This test was done both in the Python REPL and in the unit tests.

Note to reviewers: there are a few excess comments that I left in the code for clarity but will remove before the code is merged to master.

Author: Ajay Saini <ajays725@gmail.com>

Closes #18742 from ajaysaini725/PythonPersistenceHelperFunctions.

## What changes were proposed in this pull request?

Implemented UnaryTransformer in Python.

## How was this patch tested?

This patch was tested by creating a MockUnaryTransformer class in the unit tests that extends UnaryTransformer and testing that the transform function produced correct output.

Author: Ajay Saini <ajays725@gmail.com>

Closes #18746 from ajaysaini725/AddPythonUnaryTransformer.

## What changes were proposed in this pull request?

Python API for Constrained Logistic Regression based on #17922; thanks to zero323 for the original contribution.

## How was this patch tested?

Unit tests.

Author: zero323 <zero323@users.noreply.github.com>

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #18759 from yanboliang/SPARK-20601.

## What changes were proposed in this pull request?

GBTs inherit from HasStepSize, and LinearSVC/Binarizer inherit from HasThreshold.

## How was this patch tested?

existing tests

Author: Zheng RuiFeng <ruifengz@foxmail.com>

Author: Ruifeng Zheng <ruifengz@foxmail.com>

Closes #18612 from zhengruifeng/override_HasXXX.

## What changes were proposed in this pull request?

add `setWeightCol` method for OneVsRest.

`weightCol` is ignored if the classifier doesn't inherit the `HasWeightCol` trait.

## How was this patch tested?

+ [x] add a unit test.

Author: Yan Facai (颜发才) <facai.yan@gmail.com>

Closes #18554 from facaiy/BUG/oneVsRest_missing_weightCol.

## What changes were proposed in this pull request?

Added functionality for CrossValidator and TrainValidationSplit to persist nested estimators such as OneVsRest. Also added CrossValidator and TrainValidationSplit persistence to PySpark.

## How was this patch tested?

Performed both cross validation and train validation split with a one vs. rest estimator and tested read/write functionality of the estimator parameter maps required by these meta-algorithms.

Author: Ajay Saini <ajays725@gmail.com>

Closes #18428 from ajaysaini725/MetaAlgorithmPersistNestedEstimators.

## What changes were proposed in this pull request?

```RFormula``` should handle invalid values in both the features and label columns.

#18496 only handled invalid values in the features column. This PR adds handling of invalid values in the label column, along with test cases.

## How was this patch tested?

Add test cases.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #18613 from yanboliang/spark-20307.

## What changes were proposed in this pull request?

1. `HasHandleInvalid` supports override

2. Make QuantileDiscretizer/Bucketizer/StringIndexer/RFormula inherit from `HasHandleInvalid`

## How was this patch tested?

existing tests

[JIRA](https://issues.apache.org/jira/browse/SPARK-18619)

Author: Zheng RuiFeng <ruifengz@foxmail.com>

Closes #18582 from zhengruifeng/heritate_HasHandleInvalid.

## What changes were proposed in this pull request?

Add offset to PySpark in GLM as in #16699.

## How was this patch tested?

Python test

Author: actuaryzhang <actuaryzhang10@gmail.com>

Closes #18534 from actuaryzhang/pythonOffset.

## What changes were proposed in this pull request?

This PR maintains API parity in PySpark with the changes made in SPARK-17498, which added a new option, 'keep', to StringIndexer for handling unseen labels or NULL values.

Note: This is an updated version of #17237; the primary author of this PR is VinceShieh.
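The behaviour of `keep` can be sketched locally. Labels seen during fit get indices 0..n-1, and with `keep`, any unseen label or NULL (here `None`) maps to the extra index n. In this sketch, fitted labels are ordered by descending frequency with ties broken alphabetically; that tie-break is our choice for determinism. `string_index` is a hypothetical helper, not the `StringIndexer` API.

```python
def string_index(fit_labels, values, handle_invalid="error"):
    """Hypothetical StringIndexer sketch with 'error', 'skip' and 'keep' handling."""
    counts = {}
    for label in fit_labels:
        counts[label] = counts.get(label, 0) + 1
    # Most frequent label first; alphabetical tie-break chosen for this sketch.
    ordered = sorted(counts, key=lambda l: (-counts[l], l))
    index = {label: float(i) for i, label in enumerate(ordered)}
    out = []
    for v in values:
        if v in index:
            out.append(index[v])
        elif handle_invalid == "keep":
            out.append(float(len(index)))  # one extra index for unseen labels / NULL
        elif handle_invalid == "skip":
            continue  # drop the row
        else:
            raise ValueError("Unseen label: %r" % (v,))
    return out


print(string_index(["a", "b", "a", "c"], ["a", "c", "z", None], "keep"))
# [0.0, 2.0, 3.0, 3.0]
```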

## How was this patch tested?

Unit tests.

Author: VinceShieh <vincent.xie@intel.com>

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #18453 from yanboliang/spark-19852.

## What changes were proposed in this pull request?

1. Make params support being non-final via the `finalFields` option

2. Generate `HasSolver` with `finalFields = false`

3. Override `solver` in LiR and GLR, and make MLPC inherit `HasSolver`

## How was this patch tested?

existing tests

Author: Ruifeng Zheng <ruifengz@foxmail.com>

Author: Zheng RuiFeng <ruifengz@foxmail.com>

Closes #16028 from zhengruifeng/param_non_final.

## What changes were proposed in this pull request?

LinearSVC should use its own threshold param, rather than the shared one, since it applies to rawPrediction instead of probability. This PR changes the param in the Scala, Python and R APIs.

## How was this patch tested?

New unit test to make sure the threshold can be set to any Double value.

Author: Joseph K. Bradley <joseph@databricks.com>

Closes #18151 from jkbradley/ml-2.2-linearsvc-cleanup.

## What changes were proposed in this pull request?

PySpark supports stringIndexerOrderType in RFormula as in #17967.

## How was this patch tested?

docstring test

Author: actuaryzhang <actuaryzhang10@gmail.com>

Closes #18122 from actuaryzhang/PythonRFormula.

## What changes were proposed in this pull request?

Expose numPartitions (expert) param of PySpark FPGrowth.

## How was this patch tested?

+ [x] Pass all unit tests.

Author: Yan Facai (颜发才) <facai.yan@gmail.com>

Closes #18058 from facaiy/ENH/pyspark_fpg_add_num_partition.

## What changes were proposed in this pull request?

Follow-up for #17218, some minor fix for PySpark ```FPGrowth```.

## How was this patch tested?

Existing UT.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #18089 from yanboliang/spark-19281.

## What changes were proposed in this pull request?

- Fix incorrect tests for `_check_thresholds`.

- Move test to `ParamTests`.

## How was this patch tested?

Unit tests.

Author: zero323 <zero323@users.noreply.github.com>

Closes #18085 from zero323/SPARK-20631-FOLLOW-UP.

## What changes were proposed in this pull request?

Add test cases for PR-18062

## How was this patch tested?

The existing UT

Author: Peng <peng.meng@intel.com>

Closes #18068 from mpjlu/moreTest.

Changes:

pyspark.ml Estimators can take either a list of param maps or a dict of params. This change allows the CrossValidator and TrainValidationSplit Estimators to pass lists of param maps through to the underlying estimators, so that those estimators can handle parallelization when appropriate (e.g. distributed hyperparameter tuning).

Testing:

Existing unit tests.

Author: Bago Amirbekian <bago@databricks.com>

Closes #18077 from MrBago/delegate_params.

## What changes were proposed in this pull request?

SPARK-20097 exposed degreesOfFreedom in LinearRegressionSummary and numInstances in GeneralizedLinearRegressionSummary. Python API should be updated to reflect these changes.

## How was this patch tested?

The existing UT

Author: Peng <peng.meng@intel.com>

Closes #18062 from mpjlu/spark-20764.

## What changes were proposed in this pull request?

PySpark `StringIndexer` supports the `stringOrderType` param added in #17879.
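To our knowledge, `stringOrderType` accepts `frequencyDesc` (the default), `frequencyAsc`, `alphabetDesc` and `alphabetAsc`. The sketch below shows the label order each option produces, which determines the index each label gets. Breaking frequency ties alphabetically is our assumption for determinism. `order_labels` is a hypothetical helper.

```python
from collections import Counter


def order_labels(labels, string_order_type="frequencyDesc"):
    """Hypothetical sketch: label order for each stringOrderType option
    (position in the returned list == assigned index)."""
    counts = Counter(labels)
    if string_order_type == "frequencyDesc":
        return sorted(counts, key=lambda l: (-counts[l], l))
    if string_order_type == "frequencyAsc":
        return sorted(counts, key=lambda l: (counts[l], l))
    if string_order_type == "alphabetDesc":
        return sorted(counts, reverse=True)
    if string_order_type == "alphabetAsc":
        return sorted(counts)
    raise ValueError("Unsupported stringOrderType: %r" % (string_order_type,))


labels = ["a", "c", "c", "b", "b", "b"]  # frequencies: b=3, c=2, a=1
for option in ("frequencyDesc", "frequencyAsc", "alphabetDesc", "alphabetAsc"):
    print(option, order_labels(labels, option))
```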

Author: Wayne Zhang <actuaryzhang@uber.com>

Closes #17978 from actuaryzhang/PythonStringIndexer.

## What changes were proposed in this pull request?

Review new Scala APIs introduced in 2.2.

## How was this patch tested?

Existing tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #17934 from yanboliang/spark-20501.

## What changes were proposed in this pull request?

Before 2.2, MLlib removed APIs that had been deprecated in the previous feature/minor release. Starting from Spark 2.2, we decided to remove deprecated APIs only in a major release, so we need to change the corresponding annotations to tell users that those APIs will be removed in 3.0.

Meanwhile, this PR fixes bugs in the ML docs: the original ML docs could not show deprecation annotations in ```MLWriter``` and ```MLReader``` related classes, and we correct that here.

Before:

After:

## How was this patch tested?

Existing tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #17946 from yanboliang/spark-20707.

## What changes were proposed in this pull request?

- Replace `getParam` calls with `getOrDefault` calls.

- Fix exception message to avoid unintended `TypeError`.

- Add unit tests

## How was this patch tested?

New unit tests.

Author: zero323 <zero323@users.noreply.github.com>

Closes #17891 from zero323/SPARK-20631.

## What changes were proposed in this pull request?

Remove ML methods we deprecated in 2.1.

## How was this patch tested?

Existing tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #17867 from yanboliang/spark-20606.

Add Python API for `ALSModel` methods `recommendForAllUsers`, `recommendForAllItems`

## How was this patch tested?

New doc tests.

Author: Nick Pentreath <nickp@za.ibm.com>

Closes #17622 from MLnick/SPARK-20300-pyspark-recall.

## What changes were proposed in this pull request?

Some PySpark and SparkR tests run with a tiny dataset and a tiny ```maxIter```, which means they have not converged. I don't think checking intermediate results during iteration makes sense: those intermediate results can be fragile and unstable, so we should switch to checking the converged result. We hit this issue in #17746 when upgrading breeze to 0.13.1.

## How was this patch tested?

Existing tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #17757 from yanboliang/flaky-test.

## What changes were proposed in this pull request?

Upgrade breeze to 0.13.1, which fixes some critical bugs in L-BFGS-B.

## How was this patch tested?

Existing unit tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #17746 from yanboliang/spark-20449.

## What changes were proposed in this pull request?

The DataFrame-based support for correlation statistics was added in #17108. This patch adds the Python interface for it.
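As a reference for what the Pearson method computes (we believe the Python entry point is `Correlation.corr(dataset, column, method="pearson")` in `pyspark.ml.stat`), here is a local sketch that builds the column-by-column correlation matrix from centered columns. It assumes every column has non-zero variance. `pearson_matrix` is a hypothetical helper.

```python
import math


def pearson_matrix(rows):
    """Pearson correlation matrix of the columns of `rows` (equal-length lists).
    Assumes no column is constant (that would divide by zero)."""
    n = float(len(rows))
    cols = list(zip(*rows))
    centered = []
    for col in cols:
        mean = sum(col) / n
        centered.append([x - mean for x in col])
    norms = [math.sqrt(sum(x * x for x in c)) for c in centered]
    k = len(cols)
    return [[sum(a * b for a, b in zip(centered[i], centered[j])) / (norms[i] * norms[j])
             for j in range(k)]
            for i in range(k)]


rows = [[1.0, 2.0, 3.0],
        [2.0, 4.0, 1.0],
        [3.0, 6.0, 2.0]]
corr = pearson_matrix(rows)  # column 1 is 2 * column 0, so corr[0][1] is 1.0
```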

## How was this patch tested?

Python unit test.

Author: Liang-Chi Hsieh <viirya@gmail.com>

Closes #17494 from viirya/correlation-python-api.

## What changes were proposed in this pull request?

`_convert_to_vector` converts a scipy sparse matrix to a CSC matrix to initialize a `SparseVector`. However, the converted CSC matrix is not guaranteed to have sorted indices, so code like the following fails:

```python
from scipy.sparse import lil_matrix
lil = lil_matrix((4, 1))
lil[1, 0] = 1
lil[3, 0] = 2
_convert_to_vector(lil.todok())
```

```
  File "/home/jenkins/workspace/python/pyspark/mllib/linalg/__init__.py", line 78, in _convert_to_vector
    return SparseVector(l.shape[0], csc.indices, csc.data)
  File "/home/jenkins/workspace/python/pyspark/mllib/linalg/__init__.py", line 556, in __init__
    % (self.indices[i], self.indices[i + 1]))
TypeError: Indices 3 and 1 are not strictly increasing
```

A simple test can confirm that `dok_matrix.tocsc()` won't guarantee sorted indices:

```
>>> from scipy.sparse import lil_matrix
>>> lil = lil_matrix((4, 1))
>>> lil[1, 0] = 1
>>> lil[3, 0] = 2
>>> dok = lil.todok()
>>> csc = dok.tocsc()
>>> csc.has_sorted_indices
0
>>> csc.indices
array([3, 1], dtype=int32)
```

I checked the scipy source code. The only conversions that guarantee sorted indices are `csc_matrix.tocsr()` and `csr_matrix.tocsc()`.
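The invariant that `SparseVector` checks is that indices are strictly increasing, with each value moved together with its index. In scipy, a CSC matrix can also be fixed in place with `sort_indices()` when `has_sorted_indices` is false. The pure-Python sketch below shows the same repair on plain index/value lists and does not depend on scipy. `sorted_sparse` is a hypothetical helper, not the patch itself.

```python
def sorted_sparse(size, indices, values):
    """Sort (index, value) pairs together so indices are strictly increasing,
    rejecting duplicates and out-of-range indices."""
    pairs = sorted(zip(indices, values))
    for (i, _), (j, _) in zip(pairs, pairs[1:]):
        if i == j:
            raise ValueError("Duplicate index %d" % i)
    if pairs and not (0 <= pairs[0][0] and pairs[-1][0] < size):
        raise ValueError("Index out of range for size %d" % size)
    return size, [i for i, _ in pairs], [v for _, v in pairs]


# The unsorted CSC output from the example above: indices [3, 1], data [2.0, 1.0].
print(sorted_sparse(4, [3, 1], [2.0, 1.0]))  # (4, [1, 3], [1.0, 2.0])
```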

## How was this patch tested?

Existing tests.

Author: Liang-Chi Hsieh <viirya@gmail.com>

Closes #17532 from viirya/make-sure-sorted-indices.

## What changes were proposed in this pull request?

A pyspark wrapper for spark.ml.stat.ChiSquareTest

## How was this patch tested?

unit tests

doctests

Author: Bago Amirbekian <bago@databricks.com>

Closes #17421 from MrBago/chiSquareTestWrapper.

## What changes were proposed in this pull request?

- Add `HasSupport` and `HasConfidence` `Params`.

- Add new module `pyspark.ml.fpm`.

- Add `FPGrowth` / `FPGrowthModel` wrappers.

- Provide tests for new features.
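`HasSupport` and `HasConfidence` correspond to the two thresholds of association-rule mining. Support is the fraction of transactions that contain an itemset. The confidence of a rule `A => B` is support(A ∪ B) / support(A). The sketch below is a brute-force enumeration over item pairs only. It is explicitly NOT the FP-growth algorithm; it only shows what the two thresholds filter on.

```python
from itertools import combinations


def support(itemset, transactions):
    """Fraction of transactions containing every item of `itemset`."""
    wanted = set(itemset)
    return sum(1 for t in transactions if wanted <= set(t)) / float(len(transactions))


def pair_rules(transactions, min_support, min_confidence):
    """Brute-force sketch over item pairs (not FP-growth): rules X => Y that
    pass both thresholds, as (antecedent, consequent, confidence)."""
    items = sorted({item for t in transactions for item in t})
    rules = []
    for a, b in combinations(items, 2):
        s_ab = support([a, b], transactions)
        if s_ab < min_support:
            continue  # the pair is not frequent enough
        for antecedent, consequent in ((a, b), (b, a)):
            confidence = s_ab / support([antecedent], transactions)
            if confidence >= min_confidence:
                rules.append((antecedent, consequent, confidence))
    return rules


transactions = [["1", "2", "5"], ["1", "2", "3", "5"], ["1", "2"]]
print(pair_rules(transactions, min_support=0.5, min_confidence=0.8))
```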

## How was this patch tested?

Unit tests.

Author: zero323 <zero323@users.noreply.github.com>

Closes #17218 from zero323/SPARK-19281.

Add Python wrapper for `Imputer` feature transformer.

## How was this patch tested?

New doc tests and tweak to PySpark ML `tests.py`

Author: Nick Pentreath <nickp@za.ibm.com>

Closes #17316 from MLnick/SPARK-15040-pyspark-imputer.

## What changes were proposed in this pull request?

PySpark ```GeneralizedLinearRegression``` supports tweedie distribution.

## How was this patch tested?

Add unit tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #17146 from yanboliang/spark-19806.

## What changes were proposed in this pull request?

The `keyword_only` decorator in PySpark is not thread-safe. It writes kwargs to a static class variable in the decorator, which is then retrieved later in the class method as `_input_kwargs`. If multiple threads are constructing the same class with different kwargs, it becomes a race condition to read from the static class variable before it's overwritten. See [SPARK-19348](https://issues.apache.org/jira/browse/SPARK-19348) for reproduction code.

This change will write the kwargs to a member variable so that multiple threads can operate on separate instances without the race condition. It does not protect against multiple threads operating on a single instance, but that is better left to the user to synchronize.
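Here is a minimal sketch of the per-instance approach described above. The decorator rejects positional arguments and stores the keyword arguments on `self` rather than on a shared attribute, so two instances built concurrently never read each other's kwargs. This closely follows our understanding of the patched `keyword_only`, but treat it as an illustration. `Example` is a hypothetical class.

```python
from functools import wraps


def keyword_only(func):
    """Force keyword arguments and stash them on the instance, not the class."""
    @wraps(func)
    def wrapper(self, *args, **kwargs):
        if len(args) > 0:
            raise TypeError("Method %s forces keyword arguments." % func.__name__)
        self._input_kwargs = kwargs  # per-instance: no shared mutable state
        return func(self, **kwargs)
    return wrapper


class Example(object):
    """Hypothetical class using the decorator the way pyspark.ml constructors do."""

    @keyword_only
    def __init__(self, alpha=None, beta=None):
        kwargs = self._input_kwargs
        self.params = dict(kwargs)


a = Example(alpha=1)
b = Example(beta=2)
print(a.params, b.params)  # {'alpha': 1} {'beta': 2}
```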

## How was this patch tested?

Added new unit tests for using the keyword_only decorator and a regression test that verifies `_input_kwargs` can be overwritten from different class instances.

Author: Bryan Cutler <cutlerb@gmail.com>

Closes #16782 from BryanCutler/pyspark-keyword_only-threadsafe-SPARK-19348.

## What changes were proposed in this pull request?

Updates the docstring to match the code, i.e. say `dropLast` instead of `includeFirst`.

## How was this patch tested?

Not much, since it's a doc-like change. Will run unit tests via Jenkins job.

Author: Mark Grover <mark@apache.org>

Closes #17127 from markgrover/spark_19734.

## What changes were proposed in this pull request?

Remove `org.apache.spark.examples.` in

Add a slash in one of the Python docs.

## How was this patch tested?

Run examples using the commands in the comments.

Author: Yun Ni <yunn@uber.com>

Closes #17104 from Yunni/yunn_minor.

This PR adds a param to `ALS`/`ALSModel` to set the strategy used when encountering unknown users or items at prediction time in `transform`. This can occur in 2 scenarios: (a) production scoring, and (b) cross-validation & evaluation.

The current behavior returns `NaN` if a user/item is unknown. In scenario (b), this can easily occur when using `CrossValidator` or `TrainValidationSplit` since some users/items may only occur in the test set and not in the training set. In this case, the evaluator returns `NaN` for all metrics, making model selection impossible.

The new param, `coldStartStrategy`, defaults to `nan` (the current behavior). The other option supported initially is `drop`, which drops all rows with `NaN` predictions. This flag allows users to use `ALS` in cross-validation settings. It is made an `expertParam`. The param is made a string so that the set of strategies can be extended in future (some options are discussed in [SPARK-14489](https://issues.apache.org/jira/browse/SPARK-14489)).
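The effect of the two strategies on evaluation can be shown with a few local rows. A single `NaN` prediction makes the RMSE `NaN`, while `drop` removes those rows and leaves a usable metric. `apply_cold_start` and `rmse` are hypothetical helpers, not the `ALSModel` or `RegressionEvaluator` code.

```python
import math


def apply_cold_start(predictions, strategy="nan"):
    """predictions: (user, item, prediction) rows; unknown ids yield NaN."""
    if strategy == "nan":
        return list(predictions)  # current behavior: keep NaN rows
    if strategy == "drop":
        return [row for row in predictions if not math.isnan(row[2])]
    raise ValueError("Unsupported coldStartStrategy: %r" % (strategy,))


def rmse(rows, ratings):
    errors = [(p - ratings[(u, i)]) ** 2 for u, i, p in rows]
    return math.sqrt(sum(errors) / len(errors))


ratings = {(1, 10): 5.0, (2, 11): 3.0, (1, 11): 3.0}
predictions = [(1, 10, 4.0), (2, 11, float("nan")), (1, 11, 3.0)]  # user 2 unseen in training
print(rmse(apply_cold_start(predictions, "nan"), ratings))   # nan
print(rmse(apply_cold_start(predictions, "drop"), ratings))  # sqrt(0.5)
```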

## How was this patch tested?

New unit tests, and manual "before and after" tests for Scala & Python using MovieLens `ml-latest-small` as example data. Here, using `CrossValidator` or `TrainValidationSplit` with the default param setting results in metrics that are all `NaN`, while setting `coldStartStrategy` to `drop` results in valid metrics.

Author: Nick Pentreath <nickp@za.ibm.com>

Closes #12896 from MLnick/SPARK-14489-als-nan.

## What changes were proposed in this pull request?

Fixed the PySpark Params.copy method to behave like the Scala implementation. The main issue was that it did not account for the `_defaultParamMap`, and instead merged it into the explicitly set param map.
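The fixed behaviour can be sketched with a toy class that keeps explicit values and defaults in separate maps. The copy keeps the same uid, copies both maps independently, and applies `extra` only to the explicit map. Defaults therefore stay defaults. `ToyParamsWithDefaults` is a hypothetical stand-in, although the two map names follow the attribute mentioned above.

```python
import copy


class ToyParamsWithDefaults(object):
    """Hypothetical stand-in that keeps explicit values and defaults apart."""

    def __init__(self, uid):
        self.uid = uid
        self._paramMap = {}         # values set explicitly
        self._defaultParamMap = {}  # default values

    def get_or_default(self, name):
        if name in self._paramMap:
            return self._paramMap[name]
        return self._defaultParamMap[name]

    def copy(self, extra=None):
        # Shallow-copy the instance (same uid), then give the copy its own maps.
        # `extra` overrides explicit values only; defaults are never merged
        # into the explicit map.
        that = copy.copy(self)
        that._paramMap = dict(self._paramMap)
        that._paramMap.update(extra or {})
        that._defaultParamMap = dict(self._defaultParamMap)
        return that


lr = ToyParamsWithDefaults("LogisticRegression_1")
lr._defaultParamMap["maxIter"] = 100
lr._paramMap["regParam"] = 0.1
lr2 = lr.copy({"maxIter": 5})
print(lr2.uid, lr2.get_or_default("maxIter"), lr.get_or_default("maxIter"))
```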

## How was this patch tested?

Added new unit test to verify the copy method behaves correctly for copying uid, explicitly created params, and default params.

Author: Bryan Cutler <cutlerb@gmail.com>

Closes #16772 from BryanCutler/pyspark-ml-param_copy-Scala_sync-SPARK-14772.

## What changes were proposed in this pull request?

This pull request includes the Python API and examples for LSH. The API changes were based on yanboliang's PR #15768, with conflicts resolved against the latest Scala API changes. The examples are consistent with the Scala examples of MinHashLSH and BucketedRandomProjectionLSH.
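As background for the MinHashLSH example, MinHash estimates Jaccard similarity (Spark's LSH reports Jaccard *distance*, i.e. 1 minus similarity). It applies many random hash functions to a set's elements, keeps the minimum per function, and measures how often two signatures agree. The hash form `((1 + x) * a + b) % p` and the prime modulus below are assumptions chosen for this sketch. The helper names are hypothetical.

```python
import random

PRIME = 2147483647  # 2**31 - 1, a Mersenne prime used as the hash modulus (assumed)


def make_hash_functions(num_hashes, seed=42):
    """Random (a, b) pairs for hashes of the form ((1 + x) * a + b) % PRIME."""
    rng = random.Random(seed)
    return [(rng.randint(1, PRIME - 1), rng.randint(0, PRIME - 1))
            for _ in range(num_hashes)]


def minhash_signature(elements, hash_functions):
    """For each hash function, keep the minimum hash over the set's elements."""
    return [min(((1 + x) * a + b) % PRIME for x in elements)
            for a, b in hash_functions]


def estimated_jaccard(sig1, sig2):
    """Fraction of positions where the two signatures agree."""
    return sum(1 for x, y in zip(sig1, sig2) if x == y) / float(len(sig1))


hashes = make_hash_functions(200)
a, b = {0, 1, 2, 3}, {0, 1, 2, 4}  # true Jaccard similarity = 3 / 5 = 0.6
print(estimated_jaccard(minhash_signature(a, hashes), minhash_signature(b, hashes)))
```

More hash functions reduce the variance of the estimate at the cost of longer signatures.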

## How was this patch tested?

API and examples are tested using spark-submit:

`bin/spark-submit examples/src/main/python/ml/min_hash_lsh.py`

`bin/spark-submit examples/src/main/python/ml/bucketed_random_projection_lsh.py`

User guide changes are generated and manually inspected:

`SKIP_API=1 jekyll build`

Author: Yun Ni <yunn@uber.com>

Author: Yanbo Liang <ybliang8@gmail.com>

Author: Yunni <Euler57721@gmail.com>

Closes #16715 from Yunni/spark-18080.

## What changes were proposed in this pull request?

This PR is to document the changes on QuantileDiscretizer in pyspark for PR:

https://github.com/apache/spark/pull/15428

## How was this patch tested?

No test needed

Signed-off-by: VinceShieh <vincent.xie@intel.com>

Author: VinceShieh <vincent.xie@intel.com>

Closes #16922 from VinceShieh/spark-19590.

## What changes were proposed in this pull request?

Add missing `warnings` import.

## How was this patch tested?

Manual tests.

Author: zero323 <zero323@users.noreply.github.com>

Closes #16846 from zero323/SPARK-19506.

## What changes were proposed in this pull request?

Remove cyclic imports between `pyspark.ml.pipeline` and `pyspark.ml`.

## How was this patch tested?

Existing unit tests.

Author: zero323 <zero323@users.noreply.github.com>

Closes #16814 from zero323/SPARK-19467.

## What changes were proposed in this pull request?

Methods `numClasses` and `numFeatures` in LinearSVCModel are already usable by inheriting from `JavaClassificationModel`, so we should not add them explicitly.

## How was this patch tested?

existing tests

Author: Zheng RuiFeng <ruifengz@foxmail.com>

Closes #16727 from zhengruifeng/nits_in_linearSVC.

## What changes were proposed in this pull request?

* Removed Since tags in Python Params since they are inherited by other classes

* Fixed doc links for LinearSVC

## How was this patch tested?

* doc tests

* generating docs locally and checking manually

Author: Joseph K. Bradley <joseph@databricks.com>

Closes #16723 from jkbradley/pyparam-fix-doc.

## What changes were proposed in this pull request?

Adding convenience function to Python `JavaWrapper` so that it is easy to create a Py4J JavaArray that is compatible with current class constructors that have a Scala `Array` as input so that it is not necessary to have a Java/Python friendly constructor. The function takes a Java class as input that is used by Py4J to create the Java array of the given class. As an example, `OneVsRest` has been updated to use this and the alternate constructor is removed.

## How was this patch tested?

Added unit tests for the new convenience function and updated `OneVsRest` doctests which use this to persist the model.

Author: Bryan Cutler <cutlerb@gmail.com>

Closes #14725 from BryanCutler/pyspark-new_java_array-CountVectorizer-SPARK-17161.

## What changes were proposed in this pull request?

Add Python API for the newly added LinearSVC algorithm.

## How was this patch tested?

Add new doc string test.

Author: wm624@hotmail.com <wm624@hotmail.com>

Closes #16694 from wangmiao1981/ser.

## What changes were proposed in this pull request?

add loglikelihood in GMM.summary

## How was this patch tested?

added tests

Author: Zheng RuiFeng <ruifengz@foxmail.com>

Author: Ruifeng Zheng <ruifengz@foxmail.com>

Closes #12064 from zhengruifeng/gmm_metric.

## What changes were proposed in this pull request?

Add FDR test case in ml/feature/ChiSqSelectorSuite.

Improve some comments in the code.

This is a follow-up PR to #15212.

## How was this patch tested?

Unit tests.

Author: Peng, Meng <peng.meng@intel.com>

Closes #16434 from mpjlu/fdr_fwe_update.

## What changes were proposed in this pull request?

Copy the `GaussianMixture` implementation from mllib to ml, so that we can add new features to it.

I left mllib `GaussianMixture` untouched, rather than making it wrap the ml implementation as some other algorithms do, for the following reasons:

- mllib `GaussianMixture` allows k == 1, but ml does not.

- mllib `GaussianMixture` supports setting an initial model, but ml currently does not. (We will definitely add this feature to ml in the future.)

We could work around these issues and make mllib a wrapper that calls into ml, but I'd prefer to leave mllib untouched, which keeps ml clean.

Meanwhile, this PR brings a big performance improvement for `GaussianMixture`. Since the covariance matrix of a multivariate Gaussian distribution is symmetric, we only need to store its upper triangular part, which greatly reduces the shuffled data size. In my test, this change reduced the shuffled data size by about 50% and accelerated job execution.
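To make the storage saving concrete, here is a minimal Python sketch (not Spark's Scala implementation) of packing the upper triangle of a symmetric matrix column by column, similar to BLAS packed symmetric storage, and unpacking it again. The helper names `pack_upper` and `unpack_upper` are hypothetical. An n × n covariance matrix shrinks from n² to n(n + 1)/2 values, which approaches a 50% reduction as n grows.

```python
from typing import List


def pack_upper(matrix: List[List[float]]) -> List[float]:
    """Pack the upper triangle (diagonal included) of a symmetric n x n
    matrix column by column into n * (n + 1) / 2 values."""
    n = len(matrix)
    packed = []
    for j in range(n):
        for i in range(j + 1):
            packed.append(matrix[i][j])
    return packed


def unpack_upper(packed: List[float], n: int) -> List[List[float]]:
    """Rebuild the full symmetric matrix from its packed upper triangle."""
    if len(packed) != n * (n + 1) // 2:
        raise ValueError("packed length does not match n")
    full = [[0.0] * n for _ in range(n)]
    k = 0
    for j in range(n):
        for i in range(j + 1):
            full[i][j] = packed[k]
            full[j][i] = packed[k]  # mirror into the lower triangle
            k += 1
    return full
```

For a 3 × 3 covariance matrix, `pack_upper` returns 6 values instead of 9; for 10 features it returns 55 instead of 100.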

## How was this patch tested?

Existing tests and added new tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #15413 from yanboliang/spark-17847.

## What changes were proposed in this pull request?

There are many locations in the Spark repo where the same word occurs consecutively. Sometimes they are appropriately placed, but many times they are not. This PR removes the inappropriately duplicated words.

## How was this patch tested?

N/A since only docs or comments were updated.

Author: Niranjan Padmanabhan <niranjan.padmanabhan@gmail.com>

Closes #16455 from neurons/np.structure_streaming_doc.

## What changes were proposed in this pull request?

Univariate feature selection works by selecting the best features based on univariate statistical tests.

FDR and FWE are popular univariate statistical tests for feature selection.

In 2005, the Benjamini and Hochberg paper on FDR was identified as one of the 25 most-cited statistical papers. In this PR, FDR uses the Benjamini-Hochberg procedure: https://en.wikipedia.org/wiki/False_discovery_rate.

In statistics, FWE is the probability of making one or more false discoveries, or type I errors, among all the hypotheses when performing multiple hypotheses tests.

https://en.wikipedia.org/wiki/Family-wise_error_rate

This PR adds FDR and FWE methods to ChiSqSelector, similar to how they are implemented in scikit-learn.

http://scikit-learn.org/stable/modules/feature_selection.html#univariate-feature-selection
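As an illustration only, the following Python sketch shows the Benjamini-Hochberg procedure for FDR control, and a Bonferroni-style threshold (alpha divided by the number of tests) as one common way to control FWE. The function names are hypothetical and this is not the ChiSqSelector code; the choice of `<=` versus `<` at the boundary is an assumption of the sketch.

```python
from typing import List


def benjamini_hochberg(p_values: List[float], alpha: float) -> List[int]:
    """Indices of hypotheses rejected by Benjamini-Hochberg at FDR alpha."""
    m = len(p_values)
    # Rank p-values in ascending order, remembering their original indices.
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest 1-based rank k with p_(k) <= k / m * alpha.
    max_k = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_k = rank
    # Reject every hypothesis ranked at or below max_k (step-up procedure).
    return sorted(order[:max_k])


def bonferroni(p_values: List[float], alpha: float) -> List[int]:
    """Indices with p-value below alpha / m, bounding FWE by alpha."""
    m = len(p_values)
    return [i for i, p in enumerate(p_values) if p < alpha / m]
```

With p-values `[0.01, 0.02, 0.03, 0.5]` and alpha 0.05, Benjamini-Hochberg keeps the first three hypotheses while the Bonferroni threshold (0.0125) keeps only the first, which illustrates why FDR control is the less conservative of the two.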

## How was this patch tested?

Unit tests will be added soon.

Author: Peng <peng.meng@intel.com>

Author: Peng, Meng <peng.meng@intel.com>

Closes #15212 from mpjlu/fdr_fwe.

## What changes were proposed in this pull request?

Updated Scala param and Python param to have quotes around the options making it easier for users to read.

## How was this patch tested?

Manually checked the docstrings

Author: krishnakalyan3 <krishnakalyan3@gmail.com>

Closes #16242 from krishnakalyan3/doc-string.

## What changes were proposed in this pull request?

In `JavaWrapper`'s destructor, make the Java gateway dereference the object, using `SparkContext._active_spark_context._gateway.detach`.

Fix the parameter-copying bug by moving the `copy` method from `JavaModel` to `JavaParams`.

## How was this patch tested?

```python
import random, string

from pyspark.ml.feature import StringIndexer

# Assumes an active SparkSession named `spark` (e.g. in the pyspark shell).
# 700000 random strings of 10 characters
l = [(''.join(random.choice(string.ascii_uppercase) for _ in range(10)), ) for _ in range(int(7e5))]

df = spark.createDataFrame(l, ['string'])

for i in range(50):
    indexer = StringIndexer(inputCol='string', outputCol='index')
    indexer.fit(df)
```

* Before: strong references to the StringIndexer objects were kept, causing GC issues, and the computation halted midway.

* After: garbage collection works because the objects are dereferenced, and the computation completes.

* Mem footprint tested using profiler

* Added a parameter copy related test which was failing before.

Author: Sandeep Singh <sandeep@techaddict.me>

Author: jkbradley <joseph.kurata.bradley@gmail.com>

Closes #15843 from techaddict/SPARK-18274.

## What changes were proposed in this pull request?

added the new handleInvalid param for these transformers to Python to maintain API parity.

## How was this patch tested?

existing tests

testing is done with new doctests

Author: Sandeep Singh <sandeep@techaddict.me>

Closes #15817 from techaddict/SPARK-18366.

## What changes were proposed in this pull request?

Add python api for KMeansSummary

## How was this patch tested?

unit test added

Author: Jeff Zhang <zjffdu@apache.org>

Closes #13557 from zjffdu/SPARK-15819.

## What changes were proposed in this pull request?

make a pass through the items marked as Experimental or DeveloperApi and see if any are stable enough to be unmarked. Also check for items marked final or sealed to see if they are stable enough to be opened up as APIs.

Some discussions in the jira: https://issues.apache.org/jira/browse/SPARK-18319

## How was this patch tested?

Existing unit tests.

Author: Yuhao <yuhao.yang@intel.com>

Author: Yuhao Yang <hhbyyh@gmail.com>

Closes #15972 from hhbyyh/experimental21.

## What changes were proposed in this pull request?

Remove deprecated methods for ML.

## How was this patch tested?

Existing tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #15913 from yanboliang/spark-18481.

## What changes were proposed in this pull request?

Add model summary APIs for `GaussianMixtureModel` and `BisectingKMeansModel` in pyspark.

## How was this patch tested?

Unit tests.

Author: sethah <seth.hendrickson16@gmail.com>

Closes #15777 from sethah/pyspark_cluster_summaries.

## What changes were proposed in this pull request?

Gradient Boosted Tree in R.

With a few minor improvements to RandomForest in R.

Since this is relatively isolated I'd like to target this for branch-2.1

## How was this patch tested?

manual tests, unit tests

Author: Felix Cheung <felixcheung_m@hotmail.com>

Closes #15746 from felixcheung/rgbt.

## What changes were proposed in this pull request?

Add missing 'subsamplingRate' of pyspark GBTClassifier

## How was this patch tested?

existing tests

Author: Zheng RuiFeng <ruifengz@foxmail.com>

Closes #15692 from zhengruifeng/gbt_subsamplingRate.

## What changes were proposed in this pull request?

- Renamed kbest to numTopFeatures

- Renamed alpha to fpr

- Added missing Since annotations

- Doc cleanups

## How was this patch tested?

Added new standardized unit tests for spark.ml.

Improved existing unit test coverage a bit.

Author: Joseph K. Bradley <joseph@databricks.com>

Closes #15647 from jkbradley/chisqselector-follow-ups.

## What changes were proposed in this pull request?

Add subsamplingRate to randomForestClassifier

Add varianceCol to randomForestRegressor

In Python

## How was this patch tested?

manual tests

Author: Felix Cheung <felixcheung_m@hotmail.com>

Closes #15638 from felixcheung/pyrandomforest.

## What changes were proposed in this pull request?

This PR is an enhancement of the PR with commit ID 57dc326bd00cf0a49da971e9c573c48ae28acaa2.

NaN is a special type of value that is commonly treated as invalid. However, we found that in certain cases NaN values are also valuable and thus need special handling. This PR gives users 3 options for dealing with NaN values: reserve an extra bucket for NaN values, remove the NaN values, or report an error, by setting handleNaN to "keep", "skip", or "error" (default), respectively.

Before:

```scala
val bucketizer: Bucketizer = new Bucketizer()
  .setInputCol("feature")
  .setOutputCol("result")
  .setSplits(splits)
```

After:

```scala
val bucketizer: Bucketizer = new Bucketizer()
  .setInputCol("feature")
  .setOutputCol("result")
  .setSplits(splits)
  .setHandleNaN("keep")
```
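The three NaN-handling modes can be modeled in plain Python as below. This is a hypothetical helper, not the Bucketizer source; it assumes strictly increasing splits, half-open buckets `[splits[i], splits[i+1])` whose last bucket also includes its upper bound, and that the extra NaN bucket gets an index equal to the number of regular buckets.

```python
import bisect
import math
from typing import List


def bucketize(values: List[float], splits: List[float],
              handle_nan: str = "error") -> List[int]:
    """Map each value to a bucket index, handling NaN per handle_nan."""
    if handle_nan not in ("keep", "skip", "error"):
        raise ValueError(f"unsupported handle_nan: {handle_nan!r}")
    num_buckets = len(splits) - 1
    result = []
    for v in values:
        if math.isnan(v):
            if handle_nan == "error":
                raise ValueError("NaN found; use handle_nan='keep' or 'skip'")
            if handle_nan == "keep":
                result.append(num_buckets)  # extra bucket reserved for NaN
            continue  # "skip" simply drops the value
        if v == splits[-1]:
            result.append(num_buckets - 1)  # upper bound joins the last bucket
        elif splits[0] <= v < splits[-1]:
            result.append(bisect.bisect_right(splits, v) - 1)
        else:
            raise ValueError(f"value {v} is outside the splits range")
    return result
```

With splits `[0.0, 10.0, 20.0, 30.0]`, the values `[5.0, nan, 30.0, 10.0]` map to `[0, 3, 2, 1]` in "keep" mode and `[0, 2, 1]` in "skip" mode, while "error" mode raises.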

## How was this patch tested?

Tests added in QuantileDiscretizerSuite, BucketizerSuite and DataFrameStatSuite

Signed-off-by: VinceShieh <vincent.xie@intel.com>

Author: VinceShieh <vincent.xie@intel.com>

Author: Vincent Xie <vincent.xie@intel.com>

Author: Joseph K. Bradley <joseph@databricks.com>

Closes #15428 from VinceShieh/spark-17219_followup.

## What changes were proposed in this pull request?

The ChiSqSelector feature selection method selects features based on ChiSqTestResult.statistic (the chi-squared value), choosing the features with the largest chi-squared values. However, the degrees of freedom (df) of the chi-squared values returned by Statistics.chiSqTest(RDD) differ across features, and chi-squared values with different df cannot be compared directly to select features.

So this PR changes SelectKBest and SelectPercentile to select features by pValue instead of by statistic.
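A small worked example shows why raw statistics are not comparable across degrees of freedom. For a Pearson independence test between a categorical feature and the label, df = (number of distinct feature values - 1) × (number of label values - 1), so df differs between features. The sketch below uses the closed-form chi-squared survival function, which only holds for even df; `chi2_sf_even_df` is a hypothetical helper, not a Spark API.

```python
import math


def chi2_sf_even_df(x: float, df: int) -> float:
    """P(X >= x) for a chi-squared variable with an even number of degrees
    of freedom: exp(-x/2) * sum_{i < df/2} (x/2)^i / i!."""
    if df <= 0 or df % 2 != 0:
        raise ValueError("this sketch only handles positive even df")
    half = x / 2.0
    term = 1.0
    total = 0.0
    for i in range(df // 2):
        if i > 0:
            term *= half / i  # builds (x/2)^i / i! incrementally
        total += term
    return math.exp(-half) * total


# Feature A: statistic 5.0 with df 2; feature B: statistic 6.0 with df 6.
p_a = chi2_sf_even_df(5.0, 2)
p_b = chi2_sf_even_df(6.0, 6)
```

Feature A has a p-value of about 0.082 and feature B about 0.42: ranking by statistic prefers B, whereas ranking by p-value correctly prefers A.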

## How was this patch tested?

change existing test

Author: Peng <peng.meng@intel.com>

Closes #15444 from mpjlu/chisqure-bug.

## What changes were proposed in this pull request?

Since ```ml.evaluation``` already supports save/load on the Scala side, supporting it on the Python side is straightforward.

## How was this patch tested?

Add python doctest.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #13194 from yanboliang/spark-15402.

## What changes were proposed in this pull request?

Follow-up work of #13675, add Python API for ```RFormula forceIndexLabel```.

## How was this patch tested?

Unit test.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #15430 from yanboliang/spark-15957-python.

## What changes were proposed in this pull request?

update python api for NaiveBayes: add weight col parameter.

## How was this patch tested?

doctests added.

Author: WeichenXu <WeichenXu123@outlook.com>

Closes #15406 from WeichenXu123/nb_python_update.

## What changes were proposed in this pull request?

1. Parity check and add missing test suites for ml's NB

2. Remove some unused imports

## How was this patch tested?

manual tests in spark-shell

Author: Zheng RuiFeng <ruifengz@foxmail.com>

Closes #15312 from zhengruifeng/nb_test_parity.

## What changes were proposed in this pull request?

Replaces `ValueError` with `IndexError` when the index passed to `ml` / `mllib` `SparseVector.__getitem__` is out of range. This ensures correct iteration behavior.

Replaces `ValueError` with `IndexError` for `DenseMatrix` and `SparseMatrix` in `ml` / `mllib`.
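The reason `IndexError` matters is Python's legacy sequence iteration protocol: when a class defines `__getitem__` but not `__iter__`, `iter()` calls `__getitem__(0)`, `__getitem__(1)`, ... and stops only on `IndexError`; any other exception, such as `ValueError`, propagates out of the loop. The toy class below is hypothetical and only mimics the relevant part of a sparse vector.

```python
class SparseVectorSketch:
    """Toy sparse vector (not pyspark's class) that defines only
    __len__ and __getitem__, so iteration uses the legacy protocol."""

    def __init__(self, size, indices, values):
        self.size = size
        self._entries = dict(zip(indices, values))

    def __len__(self):
        return self.size

    def __getitem__(self, index):
        if index < 0:
            index += self.size  # support negative indexing
        if not 0 <= index < self.size:
            # IndexError (not ValueError) tells iter() the sequence is done.
            raise IndexError("index out of range")
        return self._entries.get(index, 0.0)


v = SparseVectorSketch(4, [1, 3], [1.0, 5.5])
dense = list(v)  # iterates via __getitem__ until IndexError
```

Here `list(v)` yields `[0.0, 1.0, 0.0, 5.5]`; had `__getitem__` raised `ValueError`, the same call would fail instead of terminating.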

## How was this patch tested?

PySpark `ml` / `mllib` unit tests. Additional unit tests to prove that the problem has been resolved.

Author: zero323 <zero323@users.noreply.github.com>

Closes #15144 from zero323/SPARK-17587.

## What changes were proposed in this pull request?

Partial revert of #15277 to instead sort and store input to model rather than require sorted input

## How was this patch tested?

Existing tests.

Author: Sean Owen <sowen@cloudera.com>

Closes #15299 from srowen/SPARK-17704.2.

## What changes were proposed in this pull request?

Add Python API for multinomial logistic regression.

- add `family` param in python api.

- expose `coefficientMatrix` and `interceptVector` for `LogisticRegressionModel`

- add python-side testcase for multinomial logistic regression

- update python doc.

## How was this patch tested?

existing and added doc tests.

Author: WeichenXu <WeichenXu123@outlook.com>

Closes #14852 from WeichenXu123/add_MLOR_python.

## What changes were proposed in this pull request?

#14597 modified ```ChiSqSelector``` to support the ```fpr``` type selector; however, it left some issues that need to be addressed:

* We should allow users to set the selector type explicitly rather than switching between types through different setter functions, since the setting order can cause unexpected behavior. For example, if users set both ```numTopFeatures``` and ```percentile```, whether a ```kbest``` or a ```percentile``` model is trained depends on the order of the calls (the one set last wins). This confuses users, so we should allow them to set the selector type explicitly. We handle similar issues elsewhere in the ML code base, for example in ```GeneralizedLinearRegression``` and ```LogisticRegression```.

* Meanwhile, if the ```fpr``` model needs parameters other than ```alpha```, the existing framework cannot handle them elegantly, and the same applies to the ```kbest``` and ```percentile``` models. Setting the selector type explicitly solves this issue as well.

* If users are allowed to set the selector type explicitly, we should handle parameter interactions: for example, if users set ```selectorType = percentile``` and ```alpha = 0.1```, we should notify them that ```alpha``` has no effect. Complex parameter interaction checks should be handled in ```transformSchema```. (FYI #11620)

* We should use lowercase selector type names to follow the MLlib convention.

* Add ML Python API.
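With an explicit selector type, only the parameter belonging to that type is consulted, so the order of setter calls no longer matters. The following Python sketch is hypothetical: its function name and defaults are assumptions, the lowercase type names `kbest`, `percentile`, and `fpr` follow the list above, and it works on precomputed p-values rather than on a DataFrame.

```python
from typing import List


def select_features(p_values: List[float], selector_type: str,
                    num_top_features: int = 50, percentile: float = 0.1,
                    fpr: float = 0.05) -> List[int]:
    """Pick feature indices using only the parameter that matches the
    explicitly chosen selector type."""
    ranked = sorted(range(len(p_values)), key=lambda i: p_values[i])
    if selector_type == "kbest":
        chosen = ranked[:num_top_features]
    elif selector_type == "percentile":
        chosen = ranked[:int(len(p_values) * percentile)]
    elif selector_type == "fpr":
        chosen = [i for i in ranked if p_values[i] < fpr]
    else:
        raise ValueError(f"unknown selector type: {selector_type!r}")
    return sorted(chosen)
```

Because the dispatch is on `selector_type`, setting `percentile` after `num_top_features` (or vice versa) cannot silently change which model is built; unused parameters are simply ignored, which is where a warning such as the one described above would naturally go.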

## How was this patch tested?

Unit test.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #15214 from yanboliang/spark-17017.

## What changes were proposed in this pull request?

Match ProbabilisticClassifier.thresholds requirements to R randomForest cutoff, requiring all thresholds to be > 0
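Assuming the R-style cutoff rule, where the predicted class is the one with the largest ratio p/t of class probability p to class threshold t, a zero threshold would make that ratio undefined, which is why every threshold must be strictly positive. A minimal Python sketch with a hypothetical helper name:

```python
from typing import Sequence


def predict_with_thresholds(probabilities: Sequence[float],
                            thresholds: Sequence[float]) -> int:
    """Return the class index with the largest probability / threshold."""
    if len(probabilities) != len(thresholds):
        raise ValueError("need exactly one threshold per class")
    if any(t <= 0 for t in thresholds):
        # p / t is undefined for t == 0, so require all thresholds > 0.
        raise ValueError("all thresholds must be > 0")
    scaled = [p / t for p, t in zip(probabilities, thresholds)]
    return max(range(len(scaled)), key=scaled.__getitem__)
```

With probabilities `[0.6, 0.4]`, equal thresholds pick class 0, while thresholds `[0.8, 0.2]` favor class 1 (ratios 0.75 versus 2.0).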

## How was this patch tested?

Jenkins tests plus new test cases

Author: Sean Owen <sowen@cloudera.com>

Closes #15149 from srowen/SPARK-17057.

## What changes were proposed in this pull request?

Add treeAggregateDepth parameter for AFTSurvivalRegression to keep consistent with LiR/LoR.

## How was this patch tested?

Existing tests.

Author: WeichenXu <WeichenXu123@outlook.com>

Closes #14851 from WeichenXu123/add_treeAggregate_param_for_survival_regression.

## What changes were proposed in this pull request?

This PR fixes an issue when Bucketizer is called to handle a dataset containing NaN values.

Sometimes NaN values might also be useful to users, so in these cases Bucketizer should reserve one extra bucket for NaN values instead of throwing an illegal exception.

Before:

```

Bucketizer.transform on NaN value threw an illegal exception.

```

After:

```

NaN values will be grouped in an extra bucket.

```

## How was this patch tested?

New test cases added in `BucketizerSuite`.

Signed-off-by: VinceShieh <vincent.xie@intel.com>

Author: VinceShieh <vincent.xie@intel.com>

Closes #14858 from VinceShieh/spark-17219.

## What changes were proposed in this pull request?

#14956 reduced the default number of k-means|| init steps from 5 to 2 only for the spark.mllib package; we should make the same change for spark.ml and PySpark.

## How was this patch tested?

Existing tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #15050 from yanboliang/spark-17389.

## What changes were proposed in this pull request?

```weights``` of ```MultilayerPerceptronClassificationModel``` should be the output weights of the layers rather than the initial weights; this PR corrects it.

## How was this patch tested?

Doc change.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #14967 from yanboliang/mlp-weights.

## What changes were proposed in this pull request?

Add missing `numFeatures` and `numClasses` to the wrapped Java models in PySpark ML pipelines. Also tag `DecisionTreeClassificationModel` as Experimental to match the Scala doc.

## How was this patch tested?

Extended doctests

Author: Holden Karau <holden@us.ibm.com>

Closes #12889 from holdenk/SPARK-15113-add-missing-numFeatures-numClasses.

## What changes were proposed in this pull request?

When fitting a PySpark Pipeline without the `stages` param set, a confusing NoneType error is raised when it attempts to iterate over the pipeline stages. A pipeline with no stages should act as an identity transform; however, the `stages` param still needs to be set to an empty list. This change improves the error output when the `stages` param is not set and adds a better description of what the API expects as input. It also includes minor cleanup of related code.
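Conceptually, applying a pipeline folds the input through its stages in order, so an empty stage list returns the input unchanged, while an unset (`None`) stage list is an error that deserves a clear message. The Python sketch below models stages as plain functions; `run_pipeline` is hypothetical and is not the PySpark `Pipeline` API.

```python
from functools import reduce
from typing import Any, Callable, List, Optional


def run_pipeline(stages: Optional[List[Callable[[Any], Any]]], data: Any) -> Any:
    """Apply each stage to the output of the previous one."""
    if stages is None:
        # Fail with an explicit message instead of a NoneType iteration error.
        raise ValueError("stages must be set; use [] for an identity pipeline")
    # With an empty list, reduce returns the initial value: the identity.
    return reduce(lambda acc, stage: stage(acc), stages, data)
```

For example, `run_pipeline([], df)` returns `df` as-is, and `run_pipeline([lambda x: x + 1, lambda x: x * 2], 3)` returns 8.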

## How was this patch tested?

Added new unit tests to verify an empty Pipeline acts as an identity transformer

Author: Bryan Cutler <cutlerb@gmail.com>

Closes #12790 from BryanCutler/pipeline-identity-SPARK-15018.

## What changes were proposed in this pull request?

1. In Scala, add negative lower-bound checking and put all the lower/upper bound checks in one place

2. In Python, add lower/upper bound checking of indices.

## How was this patch tested?

unit test added

Author: Jeff Zhang <zjffdu@apache.org>

Closes #14555 from zjffdu/SPARK-16965.

JIRA issue link:

https://issues.apache.org/jira/browse/SPARK-16961

Changed one line of Utils.randomizeInPlace to allow elements to stay in place.

Created a unit test that runs Pearson's chi-squared test to determine whether the output diverges significantly from a uniform distribution.
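A Python sketch of the idea, not Spark's Scala `Utils.randomizeInPlace`: a Fisher-Yates shuffle must pick the swap index j from [0, i] inclusive, so an element can stay in place. If j is restricted to [0, i - 1], the algorithm becomes Sattolo's, which only produces cyclic permutations, and a Pearson chi-squared test over permutation counts exposes the bias. The function names and the `allow_stay` flag are hypothetical.

```python
import itertools
import math
import random
from collections import Counter
from typing import MutableSequence


def shuffle_in_place(items: MutableSequence, rng: random.Random,
                     allow_stay: bool = True) -> None:
    """Fisher-Yates shuffle; allow_stay=False excludes j == i (Sattolo)."""
    for i in range(len(items) - 1, 0, -1):
        # random.randint is inclusive on both ends.
        j = rng.randint(0, i) if allow_stay else rng.randint(0, i - 1)
        items[i], items[j] = items[j], items[i]


def chi_squared_uniformity(n: int, trials: int, allow_stay: bool = True,
                           seed: int = 0) -> float:
    """Shuffle list(range(n)) `trials` times and return Pearson's
    chi-squared statistic of permutation counts versus uniform."""
    rng = random.Random(seed)
    counts = Counter()
    for _ in range(trials):
        items = list(range(n))
        shuffle_in_place(items, rng, allow_stay)
        counts[tuple(items)] += 1
    expected = trials / math.factorial(n)
    # Every permutation is a category, including ones never observed.
    return sum((counts[p] - expected) ** 2 / expected
               for p in itertools.permutations(range(n)))
```

For n = 3 there are 6 permutations, so the statistic has 5 degrees of freedom (5% critical value about 11.07); the restricted variant only ever yields 2 of the 6 permutations and produces a statistic in the thousands.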

Author: Nick Lavers <nick.lavers@videoamp.com>

Closes #14551 from nicklavers/SPARK-16961-randomizeInPlace.

## What changes were proposed in this pull request?

Fix the typo in ```TreeEnsembleModels``` for PySpark: it should be ```TreeEnsembleModel```, which is consistent with Scala. Moreover, the class represents a single tree ensemble model, so ```TreeEnsembleModel``` is the more reasonable name. This class is not meant for public use, so the rename is not a breaking change.

## How was this patch tested?

No new tests, should pass existing ones.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #14454 from yanboliang/TreeEnsembleModel.

## What changes were proposed in this pull request?

avgMetrics was summed, not averaged, across folds
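A minimal Python sketch of the intended computation, assuming the per-fold metrics are available as a fold-by-param-map table (`average_metrics` is a hypothetical helper). Because summing scales every param map's score by the same factor (the number of folds), it would not change which param map scores best; only the reported `avgMetrics` values were off.

```python
from typing import List


def average_metrics(fold_metrics: List[List[float]]) -> List[float]:
    """fold_metrics[f][m] is the metric of param map m on fold f.
    Return the mean metric of each param map across folds."""
    num_folds = len(fold_metrics)
    num_maps = len(fold_metrics[0])
    sums = [0.0] * num_maps
    for fold in fold_metrics:
        for m, value in enumerate(fold):
            sums[m] += value
    # Dividing by the number of folds is the step a summed-only version misses.
    return [s / num_folds for s in sums]
```

For three folds with metrics `[[1.0, 0.5], [0.5, 0.25], [0.75, 0.0]]`, the averages are `[0.75, 0.25]`, whereas the summed values would have been `[2.25, 0.75]`.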

Author: =^_^= <maxmoroz@gmail.com>

Closes #14456 from pkch/pkch-patch-1.

## What changes were proposed in this pull request?

Updated ML pipeline Cross Validation Scaladoc & PyDoc.

## How was this patch tested?

Documentation update

Author: krishnakalyan3 <krishnakalyan3@gmail.com>

Closes #13894 from krishnakalyan3/kfold-cv.

## What changes were proposed in this pull request?

Change the default of the ANN convergence tolerance param from 1e-4 to 1e-6 so that it matches the other algorithms in MLlib that use LBFGS as the optimizer.

## How was this patch tested?

Existing Test.

Author: WeichenXu <WeichenXu123@outlook.com>

Closes #14286 from WeichenXu123/update_ann_tol.

## What changes were proposed in this pull request?

Add a `SparseMatrix` class that supports pickling.

## How was this patch tested?

Existing test.

Author: WeichenXu <WeichenXu123@outlook.com>

Closes #14265 from WeichenXu123/picklable_py.

## What changes were proposed in this pull request?

Breeze 0.12 was released more than half a year ago, and it brings many new features, performance improvements, and bug fixes.

One of the biggest features is ```LBFGS-B```, an implementation of ```LBFGS``` with box constraints that is much faster in some special cases.

We would like to implement Huber loss function for ```LinearRegression``` ([SPARK-3181](https://issues.apache.org/jira/browse/SPARK-3181)) and it requires ```LBFGS-B``` as the optimization solver. So we should bump up the dependent breeze version to 0.12.

For more features, improvements, and bug fixes in breeze 0.12, refer to the following link:

https://groups.google.com/forum/#!topic/scala-breeze/nEeRi_DcY5c

## How was this patch tested?

No new tests, should pass the existing ones.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #14150 from yanboliang/spark-16494.

## What changes were proposed in this pull request?

Made DataFrame-based API primary

* Spark doc menu bar and other places now link to ml-guide.html, not mllib-guide.html

* mllib-guide.html keeps RDD-specific list of features, with a link at the top redirecting people to ml-guide.html

* ml-guide.html includes a "maintenance mode" announcement about the RDD-based API

* **Reviewers: please check this carefully**

* (minor) Titles for DF API no longer include "- spark.ml" suffix. Titles for RDD API have "- RDD-based API" suffix

* Moved migration guide to ml-guide from mllib-guide

* Also moved past guides from mllib-migration-guides to ml-migration-guides, with a redirect link on mllib-migration-guides

* **Reviewers**: I did not change any of the content of the migration guides.

Reorganized DataFrame-based guide:

* ml-guide.html mimics the old mllib-guide.html page in terms of content: overview, migration guide, etc.

* Moved Pipeline description into ml-pipeline.html and moved tuning into ml-tuning.html

* **Reviewers**: I did not change the content of these guides, except some intro text.

* Sidebar remains the same, but with pipeline and tuning sections added

Other:

* ml-classification-regression.html: Moved text about linear methods to new section in page

## How was this patch tested?

Generated docs locally

Author: Joseph K. Bradley <joseph@databricks.com>

Closes #14213 from jkbradley/ml-guide-2.0.

## What changes were proposed in this pull request?

General decisions to follow, except where noted:

* spark.mllib, pyspark.mllib: Remove all Experimental annotations. Leave DeveloperApi annotations alone.

* spark.ml, pyspark.ml

  * Annotate Estimator-Model pairs of classes and companion objects the same way.

  * For all algorithms marked Experimental with Since tag <= 1.6, remove the Experimental annotation.

  * For all algorithms marked Experimental with Since tag = 2.0, leave the Experimental annotation.

* DeveloperApi annotations are left alone, except where noted.

* No changes to which types are sealed.

Exceptions where I am leaving items Experimental in spark.ml, pyspark.ml, mainly because the items are new:

* Model Summary classes

* MLWriter, MLReader, MLWritable, MLReadable

* Evaluator and subclasses: There is discussion of changes around evaluating multiple metrics at once for efficiency.

* RFormula: Its behavior may need to change slightly to match R in edge cases.

* AFTSurvivalRegression

* MultilayerPerceptronClassifier

DeveloperApi changes:

* ml.tree.Node, ml.tree.Split, and subclasses should no longer be DeveloperApi

## How was this patch tested?

N/A

Note to reviewers:

* spark.ml.clustering.LDA underwent significant changes (additional methods), so let me know if you want me to leave it Experimental.

* Be careful to check for cases where a class should no longer be Experimental but has an Experimental method, val, or other feature. I did not find such cases, but please verify.

Author: Joseph K. Bradley <joseph@databricks.com>

Closes #14147 from jkbradley/experimental-audit.

## What changes were proposed in this pull request?

Issue: Omitting the full classpath can cause problems when calling JVM methods or classes from pyspark.

This PR: Changed all uses of jvm.X in pyspark.ml and pyspark.mllib to use full classpath for X

## How was this patch tested?

Existing unit tests. Manual testing in an environment where this was an issue.

Author: Joseph K. Bradley <joseph@databricks.com>

Closes #14023 from jkbradley/SPARK-16348.

[SPARK-14615](https://issues.apache.org/jira/browse/SPARK-14615) and #12627 changed `spark.ml` pipelines to use the new `ml.linalg` classes for `Vector`/`Matrix`. Some `Since` annotations for public methods/vals have not been updated accordingly to be `2.0.0`. This PR updates them.

## How was this patch tested?

Existing unit tests.

Author: Nick Pentreath <nickp@za.ibm.com>

Closes #13840 from MLnick/SPARK-16127-ml-linalg-since.

## What changes were proposed in this pull request?

Mark ml.classification algorithms as experimental to match the Scala algorithms, update the PyDoc for thresholds on `LogisticRegression` to have the same level of info as Scala, and enable MathJax for PyDoc.

## How was this patch tested?

Built docs locally & PySpark SQL tests

Author: Holden Karau <holden@us.ibm.com>

Closes #12938 from holdenk/SPARK-15162-SPARK-15164-update-some-pydocs.

## What changes were proposed in this pull request?

Several places set the seed Param default value to None which will translate to a zero value on the Scala side. This is unnecessary because a default fixed value already exists and if a test depends on a zero valued seed, then it should explicitly set it to zero instead of relying on this translation. These cases can be safely removed except for the ALS doc test, which has been changed to set the seed value to zero.

## How was this patch tested?

Ran PySpark tests locally

Author: Bryan Cutler <cutlerb@gmail.com>

Closes #13672 from BryanCutler/pyspark-cleanup-setDefault-seed-SPARK-15741.

This PR adds missing `Since` annotations to `ml.feature` package.

Closes #8505.

## How was this patch tested?

Existing tests.

Author: Nick Pentreath <nickp@za.ibm.com>

Closes #13641 from MLnick/add-since-annotations.

## What changes were proposed in this pull request?

Fixed missing import for DecisionTreeRegressionModel used in GBTClassificationModel trees method.

## How was this patch tested?

Local tests

Author: Bryan Cutler <cutlerb@gmail.com>

Closes #13787 from BryanCutler/pyspark-GBTClassificationModel-import-SPARK-16079.

## What changes were proposed in this pull request?

Now we have PySpark picklers for new and old vector/matrix, individually. However, they are all implemented under `PythonMLlibAPI`. To separate spark.mllib from spark.ml, we should implement the picklers of new vector/matrix under `spark.ml.python` instead.

## How was this patch tested?

Existing tests.

Author: Liang-Chi Hsieh <simonh@tw.ibm.com>

Closes #13219 from viirya/pyspark-pickler-ml.

## What changes were proposed in this pull request?

Add `__str__` to RFormula and its model to show the set formula param and the resolved formula. This is already present in the Scala API and was found missing in PySpark during the Spark 2.0 coverage review.

## How was this patch tested?

run pyspark-ml tests locally

Author: Bryan Cutler <cutlerb@gmail.com>

Closes #13481 from BryanCutler/pyspark-ml-rformula_str-SPARK-15738.

## What changes were proposed in this pull request?

Add the maxsentence parameter to Word2Vec in Python.

## How was this patch tested?

Existing test.

Author: WeichenXu <WeichenXu123@outlook.com>

Closes #13578 from WeichenXu123/word2vec_python_add_maxsentence.

## What changes were proposed in this pull request?

add method idf to IDF in pyspark

## How was this patch tested?

add unit test

Author: Jeff Zhang <zjffdu@apache.org>

Closes #13540 from zjffdu/SPARK-15788.

## What changes were proposed in this pull request?

Since [SPARK-15617](https://issues.apache.org/jira/browse/SPARK-15617) deprecated ```precision``` in ```MulticlassClassificationEvaluator```, many ML examples are broken.

```python

pyspark.sql.utils.IllegalArgumentException: u'MulticlassClassificationEvaluator_4c3bb1d73d8cc0cedae6 parameter metricName given invalid value precision.'

```

We should use ```accuracy``` to replace ```precision``` in these examples.

## How was this patch tested?

Offline tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #13519 from yanboliang/spark-15771.

## What changes were proposed in this pull request?

`an -> a`

Use cmds like `find . -name '*.R' | xargs -i sh -c "grep -in ' an [^aeiou]' {} && echo {}"` to generate candidates, and review them one by one.

## How was this patch tested?

manual tests

Author: Zheng RuiFeng <ruifengz@foxmail.com>

Closes #13515 from zhengruifeng/an_a.

## What changes were proposed in this pull request?

1. Delete precision and recall from `ml.MulticlassClassificationEvaluator`

2. Update the user guide for `mllib.weightedFMeasure`

## How was this patch tested?

local build

Author: Ruifeng Zheng <ruifengz@foxmail.com>

Closes #13390 from zhengruifeng/clarify_f1.

## What changes were proposed in this pull request?

MultilayerPerceptronClassifier is missing step size, solver, and weights. Add these params. Also clarify the scaladoc a bit while we are updating these params.

Eventually we should follow up and unify the HasSolver params (filed https://issues.apache.org/jira/browse/SPARK-15169 )

## How was this patch tested?

Doc tests

Author: Holden Karau <holden@us.ibm.com>

Closes #12943 from holdenk/SPARK-15168-add-missing-params-to-MultilayerPerceptronClassifier.

## What changes were proposed in this pull request?

Add `toDebugString` and `totalNumNodes` to `TreeEnsembleModels` and add `toDebugString` to `DecisionTreeModel`

## How was this patch tested?

Extended doc tests.

Author: Holden Karau <holden@us.ibm.com>

Closes #12919 from holdenk/SPARK-15139-pyspark-treeEnsemble-missing-methods.

## What changes were proposed in this pull request?

ML 2.0 QA: Scala API audit for ml.feature. It mainly includes:

* Remove seed for ```QuantileDiscretizer```, since we use ```approxQuantile``` to produce bins and ```seed``` is useless.

* Scala API docs update.

* Sync Scala and Python API docs for these changes.

## How was this patch tested?

Existing tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #13410 from yanboliang/spark-15587.

## What changes were proposed in this pull request?

Since we did the Scala API audit for ml.clustering in #13148, we should also fix and update the corresponding Python API docs to keep them in sync.

## How was this patch tested?

Docs change, no tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #13291 from yanboliang/spark-15361-followup.

## What changes were proposed in this pull request?

1. Add `_transfer_param_map_to/from_java` for OneVsRest;

2. Add `_compare_params` in ml/tests.py to help compare params.

3. Add `test_onevsrest` as the integration test for OneVsRest.

## How was this patch tested?

Python unit test.

Author: yinxusen <yinxusen@gmail.com>

Closes #12875 from yinxusen/SPARK-15008.

Remove "Default: MEMORY_AND_DISK" from `Param` doc field in ALS storage level params. This fixes up the output of `explainParam(s)` so that default values are not displayed twice.

We can revisit in the case that [SPARK-15130](https://issues.apache.org/jira/browse/SPARK-15130) moves ahead with adding defaults in some way to PySpark param doc fields.

Tests N/A.

Author: Nick Pentreath <nickp@za.ibm.com>

Closes #13277 from MLnick/SPARK-15500-als-remove-default-storage-param.

## What changes were proposed in this pull request?

PySpark: Add links to the predictors from the models in regression.py, improve linear and isotonic pydoc in minor ways.

User guide / R: Switch the installed package list to be enough to build the R docs on a "fresh" install on ubuntu and add sudo to match the rest of the commands.

User Guide: Add a note about using gem2.0 for systems with both 1.9 and 2.0 (e.g. some ubuntu but maybe more).

## How was this patch tested?

built pydocs locally, tested new user build instructions

Author: Holden Karau <holden@us.ibm.com>

Closes #13199 from holdenk/SPARK-15412-improve-linear-isotonic-regression-pydoc.

This PR adds the `relativeError` param to PySpark's `QuantileDiscretizer` to match Scala.

Also cleaned up a duplication of `numBuckets` where the param is both a class and instance attribute (I removed the instance attr to match the style of params throughout `ml`).

Finally, cleaned up the docs for `QuantileDiscretizer` to reflect that it now uses `approxQuantile`.

## How was this patch tested?

A little doctest and built API docs locally to check HTML doc generation.

Author: Nick Pentreath <nickp@za.ibm.com>

Closes #13228 from MLnick/SPARK-15442-py-relerror-param.

## What changes were proposed in this pull request?

Replace SQLContext and SparkContext with SparkSession using builder pattern in python test code.

## How was this patch tested?

Existing test.

Author: WeichenXu <WeichenXu123@outlook.com>

Closes #13242 from WeichenXu123/python_doctest_update_sparksession.

## What changes were proposed in this pull request?

Fix a default value mismatch for the `linkPredictionCol` param of GeneralizedLinearRegression between PySpark and Scala. The mismatch comes from conflicting defaults introduced by #13106 and #13129, and it causes `ml.tests` to fail.

## How was this patch tested?

Existing tests.

Author: Liang-Chi Hsieh <simonh@tw.ibm.com>

Closes #13220 from viirya/hotfix-regresstion.

## What changes were proposed in this pull request?

Sync the `ml.evaluation` Scala and Python APIs.

## How was this patch tested?

Only API docs changed; no new tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #13195 from yanboliang/evaluation-doc.

## What changes were proposed in this pull request?

Add `linkPredictionCol` to GeneralizedLinearRegression and fix the PyDoc so that the bullet list is generated.
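To make the new column concrete: in a GLM the link prediction is the linear predictor eta = intercept + x . beta, while the usual prediction is the mean mu obtained by applying the inverse link to eta. The plain-Python sketch below assumes a log link (so the inverse link is `exp`); `glm_predict` is a hypothetical helper, not PySpark's implementation.

```python
import math
from typing import Callable, Sequence, Tuple


def glm_predict(
    features: Sequence[float],
    coefficients: Sequence[float],
    intercept: float,
    inverse_link: Callable[[float], float] = math.exp,  # assumes a log link
) -> Tuple[float, float]:
    """Return (link prediction, mean prediction) for one row (hypothetical helper)."""
    # Linear predictor eta = intercept + x . beta: the value a linkPredictionCol holds.
    eta = intercept + sum(x * b for x, b in zip(features, coefficients))
    # Mean response mu = inverse_link(eta): the value the regular prediction column holds.
    return eta, inverse_link(eta)


link, mean = glm_predict([1.0, 2.0], [0.5, 0.25], intercept=0.0)
print(link, mean)
```

Passing a different `inverse_link` (e.g. the identity for a Gaussian GLM) makes both columns coincide.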

## How was this patch tested?

doctests & built docs locally

Author: Holden Karau <holden@us.ibm.com>

Closes #13106 from holdenk/SPARK-15316-add-linkPredictionCol-toGeneralizedLinearRegression.

## What changes were proposed in this pull request?

I reviewed Scala and Python APIs for ml.feature and corrected discrepancies.

## How was this patch tested?

Built docs locally, ran style checks

Author: Bryan Cutler <cutlerb@gmail.com>

Closes #13159 from BryanCutler/ml.feature-api-sync.

This PR adds schema validation to `ml`'s ALS and ALSModel. Previously, no schema validation was performed because `transformSchema` was never called in `ALS.fit` or `ALSModel.transform`. Furthermore, without schema validation, Long (or Float, etc.) ids passed in by users were silently cast to Int with no warning or error.

With this PR, ALS supports all numeric types for the `user`, `item`, and `rating` columns. The rating column is cast to `Float` and the user and item columns are cast to `Int` (as before); however, for user/item the cast throws an error if a value is outside the integer range. Behavior for the rating column is unchanged (since casting it is not an issue).
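The casting rules above can be sketched in plain Python: ids must fit in a 32-bit signed integer before they are cast, while ratings are simply cast to float. The helper names are hypothetical, the error message is illustrative rather than Spark's, and truncating fractional ids with `int()` is an assumption of this sketch.

```python
INT_MIN, INT_MAX = -(2 ** 31), 2 ** 31 - 1  # range of a JVM Int


def cast_id(value):
    """Cast a numeric user/item id to int, failing loudly if it does not fit in an Int."""
    if not INT_MIN <= value <= INT_MAX:
        raise ValueError(f"ALS id {value} is outside Integer range")
    # int() truncates toward zero (sketch assumption for fractional ids).
    return int(value)


def cast_row(user, item, rating):
    """Apply the ALS column casts: ids to int (range-checked), rating to float."""
    return cast_id(user), cast_id(item), float(rating)


print(cast_row(1, 2, 4))  # (1, 2, 4.0)
```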

## How was this patch tested?

New test cases in `ALSSuite`.

Author: Nick Pentreath <nickp@za.ibm.com>

Closes #12762 from MLnick/SPARK-14891-als-validate-schema.

## What changes were proposed in this pull request?

This pull request adds support for `validationMetrics` to `TrainValidationSplitModel` in Python, along with a test for it.

## How was this patch tested?

Added a test in `python/pyspark/ml/tests.py`.

Author: Takuya Kuwahara <taakuu19@gmail.com>

Closes #12767 from taku-k/spark-14978.

## What changes were proposed in this pull request?

Once SPARK-14487 and SPARK-14549 are merged, we will migrate the new ML pipeline-based APIs to use the new vector and matrix types.

## How was this patch tested?

Unit tests

Author: DB Tsai <dbt@netflix.com>

Author: Liang-Chi Hsieh <simonh@tw.ibm.com>

Author: Xiangrui Meng <meng@databricks.com>

Closes #12627 from dbtsai/SPARK-14615-NewML.

## What changes were proposed in this pull request?

Copy the linalg classes (Vector/Matrix and VectorUDT/MatrixUDT) in PySpark to the new ML package.

## How was this patch tested?

Existing tests.

Author: Xiangrui Meng <meng@databricks.com>

Author: Liang-Chi Hsieh <simonh@tw.ibm.com>

Author: Liang-Chi Hsieh <viirya@gmail.com>

Closes #13099 from viirya/move-pyspark-vector-matrix-udt4.

## What changes were proposed in this pull request?

This patch adds a python API for generalized linear regression summaries (training and test). This helps provide feature parity for Python GLMs.

## How was this patch tested?

Added a unit test to `pyspark.ml.tests`

Author: sethah <seth.hendrickson16@gmail.com>

Closes #12961 from sethah/GLR_summary.

## What changes were proposed in this pull request?

1. Add argument checks for `tol` and `stepSize` to keep them in line with `SharedParamsCodeGen.scala`.

2. Fix one typo.
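A minimal sketch of the kind of argument checks meant here, modeled on Scala's `ParamValidators.gtEq` and `ParamValidators.gt`. It assumes `tol` must be >= 0 and `stepSize` must be > 0, as documented for the shared Scala params; the `VALIDATORS` table and `check_param` helper are hypothetical and not part of PySpark.

```python
from typing import Callable, Dict


def gt_eq(lower: float) -> Callable[[float], bool]:
    """Analogue of Scala's ParamValidators.gtEq: value >= lower."""
    return lambda value: value >= lower


def gt(lower: float) -> Callable[[float], bool]:
    """Analogue of Scala's ParamValidators.gt: value > lower."""
    return lambda value: value > lower


# Hypothetical validator table; constraints assumed from the shared Scala params.
VALIDATORS: Dict[str, Callable[[float], bool]] = {
    "tol": gt_eq(0.0),    # convergence tolerance, >= 0
    "stepSize": gt(0.0),  # optimization step size, > 0
}


def check_param(name: str, value: float) -> float:
    """Raise ValueError if value violates the constraint registered for name."""
    if not VALIDATORS[name](value):
        raise ValueError(f"Invalid value {value!r} for param {name}")
    return value
```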

## How was this patch tested?

local build

Author: Zheng RuiFeng <ruifengz@foxmail.com>

Closes #12996 from zhengruifeng/py_args_checking.

## What changes were proposed in this pull request?

Add the missing `thresholds` param to NaiveBayes.
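For reference, the `thresholds` param in Spark's classifiers rescales class probabilities: the predicted class is the one with the largest p/t, where p is the class probability and t its threshold. The plain-Python sketch below assumes every threshold is strictly positive (Spark also allows at most one zero threshold, which this sketch does not handle); `predict_with_thresholds` is a hypothetical name.

```python
from typing import Sequence


def predict_with_thresholds(probabilities: Sequence[float], thresholds: Sequence[float]) -> int:
    """Pick the class with the largest probability / threshold ratio."""
    if len(probabilities) != len(thresholds):
        raise ValueError("thresholds must have one entry per class")
    scaled = [p / t for p, t in zip(probabilities, thresholds)]
    # Index of the largest scaled score; ties go to the lowest class index.
    return max(range(len(scaled)), key=scaled.__getitem__)


print(predict_with_thresholds([0.6, 0.4], [0.9, 0.5]))  # 1
```

With equal thresholds this reduces to the plain argmax; lowering a class's threshold makes that class easier to predict.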

## How was this patch tested?

doctests

Author: Holden Karau <holden@us.ibm.com>

Closes #12963 from holdenk/SPARK-15188-add-missing-naive-bayes-param.

## What changes were proposed in this pull request?

Add the impurity param to GBTRegressor and mark the models and regressors in regression.py as experimental to match the Scaladoc.
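For regression trees such as those inside `GBTRegressor`, the impurity measure is variance. The plain-Python sketch below shows variance impurity and the weighted impurity decrease used to score a candidate split; the function names are hypothetical and this is not Spark's implementation.

```python
from typing import Sequence


def variance_impurity(labels: Sequence[float]) -> float:
    """Population variance of the labels in a node."""
    n = len(labels)
    mean = sum(labels) / n
    return sum((y - mean) ** 2 for y in labels) / n


def split_gain(left: Sequence[float], right: Sequence[float]) -> float:
    """Parent impurity minus the size-weighted impurity of the two children."""
    parent = list(left) + list(right)
    n = len(parent)
    children = (len(left) / n) * variance_impurity(left) + (len(right) / n) * variance_impurity(right)
    return variance_impurity(parent) - children


print(split_gain([0.0, 0.0], [2.0, 2.0]))  # 1.0
```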

## How was this patch tested?

Added default value to init, tested with unit/doc tests.

Author: Holden Karau <holden@us.ibm.com>

Closes #13071 from holdenk/SPARK-15281-GBTRegressor-impurity.

## What changes were proposed in this pull request?

Use SparkSession instead of SQLContext in Python TestSuites

## How was this patch tested?

Existing tests

Author: Sandeep Singh <sandeep@techaddict.me>

Closes #13044 from techaddict/SPARK-15037-python.

## What changes were proposed in this pull request?

Fix a doctest issue and a short param description, and tag items as Experimental.

## How was this patch tested?

Built docs locally and ran doctests.

Author: Holden Karau <holden@us.ibm.com>

Closes #12964 from holdenk/SPARK-15189-ml.Evaluation-PyDoc-issues.

## What changes were proposed in this pull request?

Tag classes in ml.tuning as experimental, add docs for the k-fold average metric, and copy the TrainValidationSplit Scaladoc for a more detailed explanation.
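The k-fold average metric is the per-ParamMap mean of the evaluation metric over all folds; `CrossValidatorModel.avgMetrics` reports one such value per param map, in `estimatorParamMaps` order. A plain-Python sketch with a hypothetical `average_metrics` helper and made-up metric values, assuming a larger-is-better metric when picking the best map:

```python
from typing import List, Sequence


def average_metrics(fold_metrics: Sequence[Sequence[float]]) -> List[float]:
    """fold_metrics[f][m] is the metric of param map m evaluated on fold f."""
    num_folds = len(fold_metrics)
    num_maps = len(fold_metrics[0])
    return [sum(fold[m] for fold in fold_metrics) / num_folds for m in range(num_maps)]


# Made-up metrics: 2 folds x 2 param maps.
avg = average_metrics([[0.5, 0.25], [1.0, 0.75]])
best = max(range(len(avg)), key=avg.__getitem__)  # assumes larger is better
print(avg, best)  # [0.75, 0.5] 0
```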

## How was this patch tested?

built docs locally

Author: Holden Karau <holden@us.ibm.com>

Closes #12967 from holdenk/SPARK-15195-pydoc-ml-tuning.

## What changes were proposed in this pull request?

PyDoc links in ml use a non-standard format. Switch to the standard Sphinx link format for better-formatted documentation. Also add a note about a default value in one place, and copy some extended docs from Scala for GBT.

## How was this patch tested?

Built docs locally.

Author: Holden Karau <holden@us.ibm.com>

Closes #12918 from holdenk/SPARK-15137-linkify-pyspark-ml-classification.

## What changes were proposed in this pull request?

This PR continues the work from #11871 with the following changes:

* load English stopwords as default

* convert stopwords to list in Python

* update some tests and doc
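Conceptually, `StopWordsRemover` drops every token that appears in its stop word list, comparing case-insensitively unless `caseSensitive` is set. The sketch below is plain Python; the five-word list is a hypothetical stand-in for the English list loaded by default, and `remove_stop_words` is not a PySpark function.

```python
from typing import Iterable, List

# Hypothetical stand-in for the default English stop word list.
ENGLISH_STOP_WORDS = ["a", "an", "and", "of", "the"]


def remove_stop_words(
    tokens: Iterable[str],
    stop_words: Iterable[str] = ENGLISH_STOP_WORDS,
    case_sensitive: bool = False,
) -> List[str]:
    """Filter out stop words, optionally ignoring case."""
    if case_sensitive:
        stops = set(stop_words)
        return [t for t in tokens if t not in stops]
    stops = {w.lower() for w in stop_words}
    return [t for t in tokens if t.lower() not in stops]


print(remove_stop_words(["The", "cat", "and", "a", "hat"]))  # ['cat', 'hat']
```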

## How was this patch tested?

Unit tests.

Closes #11871

cc: burakkose srowen

Author: Burak Köse <burakks41@gmail.com>

Author: Xiangrui Meng <meng@databricks.com>

Author: Burak KOSE <burakks41@gmail.com>

Closes #12843 from mengxr/SPARK-14050.

## What changes were proposed in this pull request?

Copy the package documentation for the ML package from Scala/Java to Python and remove the beta tags. I'm not entirely sure whether we want to keep the BETA tag, but since we are making this package the default, it seems like the right time to remove it (happy to put it back if we want to keep it BETA).

## How was this patch tested?

Python documentation built locally as HTML and text and verified output.

Author: Holden Karau <holden@us.ibm.com>

Closes #12883 from holdenk/SPARK-15106-add-pyspark-package-doc-for-ml.

## What changes were proposed in this pull request?

PySpark ML Params setter code clean up.

For example,

```setInputCol``` can be simplified from

```python
self._set(inputCol=value)
return self
```

to:

```python
return self._set(inputCol=value)
```

This is a fairly big sweep; we cleaned up setters wherever possible.
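The simplification works because `_set` returns `self`, so a setter can return its result directly and setter calls can be chained. A self-contained sketch with a simplified, hypothetical `Params` base class (not PySpark's actual implementation):

```python
class Params:
    """Simplified stand-in for a param container (hypothetical)."""

    def __init__(self):
        self._param_map = {}

    def _set(self, **kwargs):
        # Record each param value and return self so callers can chain.
        self._param_map.update(kwargs)
        return self


class HasInputOutputCols(Params):
    def setInputCol(self, value):
        return self._set(inputCol=value)

    def setOutputCol(self, value):
        return self._set(outputCol=value)


stage = HasInputOutputCols().setInputCol("text").setOutputCol("words")
print(stage._param_map)  # {'inputCol': 'text', 'outputCol': 'words'}
```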

## How was this patch tested?

Existing unit tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #12749 from yanboliang/spark-14971.

## What changes were proposed in this pull request?

This PR is an update to [#12738](https://github.com/apache/spark/pull/12738). It:

* Adds a generic unit test for JavaParams wrappers in pyspark.ml that checks default Param values against the defaults on the Scala side
* Various fixes for bugs found
    * This includes changing classes taking weightCol to treat unset and empty String Param values the same way.

Defaults changed:

* Scala
    * LogisticRegression: weightCol defaults to not set (instead of empty string)
    * StringIndexer: labels default to not set (instead of empty array)
    * GeneralizedLinearRegression:
        * maxIter always defaults to 25 (simpler than defaulting to 25 for a particular solver)
        * weightCol defaults to not set (instead of empty string)
    * LinearRegression: weightCol defaults to not set (instead of empty string)
* Python
    * MultilayerPerceptron: layers default to not set (instead of [1,1])
    * ChiSqSelector: numTopFeatures defaults to 50 (instead of not set)
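The idea behind the generic default-value test can be sketched in plain Python: collect the default of every param on each side, treating "not set" as a distinct value, and report any param whose defaults differ. The dictionaries, the `UNSET` sentinel and `default_mismatches` are hypothetical; the real test works against the JavaParams wrappers and their Scala counterparts.

```python
UNSET = object()  # sentinel meaning "param has no default"


def default_mismatches(python_defaults, scala_defaults):
    """Return {param: (python_default, scala_default)} for params whose defaults differ."""
    mismatches = {}
    for name in sorted(set(python_defaults) | set(scala_defaults)):
        py_value = python_defaults.get(name, UNSET)
        scala_value = scala_defaults.get(name, UNSET)
        # UNSET only equals itself, so "set vs. not set" is always reported.
        if py_value != scala_value:
            mismatches[name] = (py_value, scala_value)
    return mismatches


# Hypothetical example mirroring the ChiSqSelector case above.
result = default_mismatches({"maxIter": 25}, {"maxIter": 25, "numTopFeatures": 50})
print(sorted(result))  # ['numTopFeatures']
```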

## How was this patch tested?

Generic unit test. Also manually tested the unit test by changing defaults and verifying that the change broke the test.

Author: Joseph K. Bradley <joseph@databricks.com>

Author: yinxusen <yinxusen@gmail.com>

Closes #12816 from jkbradley/yinxusen-SPARK-14931.

## What changes were proposed in this pull request?

This PR removes three methods that were deprecated in 1.6.0:

- `PortableDataStream.close()`

- `LinearRegression.weights`

- `LogisticRegression.weights`

The rationale for doing this is that the impact is small and that Spark 2.0 is a major release.

## How was this patch tested?

Compilation succeeded.

Author: Herman van Hovell <hvanhovell@questtec.nl>

Closes #12732 from hvanhovell/SPARK-14952.

## What changes were proposed in this pull request?

This PR fixes the bug that generates infinite distances between word vectors. For example,

Before this PR, running

```scala
val synonyms = model.findSynonyms("who", 40)
```

gave the following results:

```
to Infinity
and Infinity
that Infinity
with Infinity
```

With this PR, the distance between words is a value between 0 and 1, as follows:

```

scala> model.findSynonyms("who", 10)