### What changes were proposed in this pull request?

This is a re-proposal of https://github.com/apache/spark/pull/23163. Currently Spark always requires a [local sort](https://github.com/apache/spark/blob/master/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/FileFormatWriter.scala#L188) before writing to an output table with dynamic partition/bucket columns. The sort can be unnecessary if the cardinality of partition/bucket values is small, and can be avoided by keeping multiple output writers open concurrently.

This PR introduces a config `spark.sql.maxConcurrentOutputFileWriters` (the feature is disabled by default), with which users can tune the maximum number of concurrent writers. The config is needed because we cannot keep an arbitrary number of writers in task memory, which can cause OOM (especially for the Parquet/ORC vectorization writer).

The feature first uses concurrent writers to write rows. If the number of writers exceeds the limit specified by the above config, the remaining rows are sorted and written one by one (see `DynamicPartitionDataConcurrentWriter.writeWithIterator()`).
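
The two-phase strategy described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Spark's implementation: `write_partitioned` and its arguments are hypothetical names, writers are modelled as plain lists, and the sketch simply closes every concurrent writer when it falls back to sorting.

```python
def write_partitioned(rows, max_concurrent_writers):
    """Return the (partition_key, value) pairs in write order and the peak
    number of writers open at once.

    `rows` is an iterable of (partition_key, value) tuples. All names are
    hypothetical; this is not Spark's code.
    """
    open_writers = {}   # partition_key -> list standing in for an open file
    written = []        # global write order, kept for inspection
    peak = 0
    it = iter(rows)

    # Phase 1: keep one writer per partition key until the limit is reached.
    for key, value in it:
        if key not in open_writers:
            if len(open_writers) >= max_concurrent_writers:
                # Limit exceeded: this row and all following rows take the
                # sort-based path.
                remaining = [(key, value)] + list(it)
                break
            open_writers[key] = []
        open_writers[key].append(value)
        written.append((key, value))
        peak = max(peak, len(open_writers))
    else:
        # Every row fit within the writer limit; no sort was needed.
        return written, peak

    # Phase 2 (assumption: concurrent writers are closed here): sort the rest
    # by key so that a single writer at a time is enough.
    open_writers.clear()
    remaining.sort(key=lambda kv: kv[0])
    written.extend(remaining)
    return written, peak


rows = [("a", 1), ("b", 2), ("a", 3), ("c", 4), ("b", 5), ("a", 6)]
written, peak = write_partitioned(rows, max_concurrent_writers=2)
```

With a limit of 2, the first three rows are written unsorted through two writers, and the remaining three are sorted by key before being written. With a limit of 3 or more, no sort happens at all.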

In addition, the interface `WriteTaskStatsTracker` and its implementation `BasicWriteTaskStatsTracker` are also changed, because they previously relied on the assumption that only one writer is active when writing dynamic partitions and bucketed tables.

### Why are the changes needed?

Avoid the sort before writing output for dynamically partitioned queries and bucketed tables.

Help improve CPU and IO performance for these queries.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added unit test in `DataFrameReaderWriterSuite.scala`.

Closes #32198 from c21/writer.

Authored-by: Cheng Su <chengsu@fb.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

1, remove existing agg, and use a new agg supporting virtual centering

2, add related testsuites

### Why are the changes needed?

Centering vectors should accelerate convergence and generate a solution closer to R's.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

updated testsuites and added testsuites

Closes #32124 from zhengruifeng/svc_agg_refactor.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Ruifeng Zheng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

This patch introduces a VectorizedBLAS class which implements hardware-accelerated BLAS operations on top of the Vector API. This feature is hidden behind the "vectorized" profile that you can enable by passing "-Pvectorized" to sbt or maven.

The Vector API has been introduced in JDK 16. Following discussion on the mailing list, this API is introduced transparently and needs to be enabled explicitly.

### Why are the changes needed?

Whenever a native BLAS implementation isn't available on the system, Spark automatically falls back onto a Java implementation. With the recent release of the Vector API in the OpenJDK [1], we can use hardware acceleration for such operations.

This change was also discussed on the mailing list. [2]

### Does this PR introduce _any_ user-facing change?

It introduces a build-time profile called `vectorized`. You can pass it to sbt and mvn with `-Pvectorized`. There is no change for end users of Spark, and it should only impact Spark developers. It is also disabled by default.

### How was this patch tested?

It passes `build/sbt mllib-local/test` with and without `-Pvectorized` with JDK 16. This patch also introduces benchmarks for BLAS.

The benchmark results are as follows:

```
[info] daxpy:        Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
[info] -------------------------------------------------------------------------------------------
[info] f2j                      37             37           0       271.5           3.7       1.0X
[info] vector                   24             25           4       416.1           2.4       1.5X
[info]
[info] ddot:         Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
[info] -------------------------------------------------------------------------------------------
[info] f2j                      70             70           0       143.2           7.0       1.0X
[info] vector                   35             35           2       288.7           3.5       2.0X
[info]
[info] sdot:         Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
[info] -------------------------------------------------------------------------------------------
[info] f2j                      50             51           1       199.8           5.0       1.0X
[info] vector                   15             15           0       648.7           1.5       3.2X
[info]
[info] dscal:        Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
[info] -------------------------------------------------------------------------------------------
[info] f2j                      34             34           0       295.6           3.4       1.0X
[info] vector                   19             19           0       531.2           1.9       1.8X
[info]
[info] sscal:        Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
[info] -------------------------------------------------------------------------------------------
[info] f2j                      25             25           1       399.0           2.5       1.0X
[info] vector                    8              9           1      1177.3           0.8       3.0X
[info]
[info] dgemv[N]:     Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
[info] -------------------------------------------------------------------------------------------
[info] f2j                      27             27           0         0.0       26651.5       1.0X
[info] vector                   21             21           0         0.0       20646.3       1.3X
[info]
[info] dgemv[T]:     Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
[info] -------------------------------------------------------------------------------------------
[info] f2j                      36             36           0         0.0       35501.4       1.0X
[info] vector                   22             22           0         0.0       21930.3       1.6X
[info]
[info] sgemv[N]:     Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
[info] -------------------------------------------------------------------------------------------
[info] f2j                      20             20           0         0.0       20283.3       1.0X
[info] vector                    9              9           0         0.1        8657.7       2.3X
[info]
[info] sgemv[T]:     Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
[info] -------------------------------------------------------------------------------------------
[info] f2j                      30             30           0         0.0       29845.8       1.0X
[info] vector                   10             10           1         0.1        9695.4       3.1X
[info]
[info] dgemm[N,N]:   Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
[info] -------------------------------------------------------------------------------------------
[info] f2j                     182            182           0         0.5        1820.0       1.0X
[info] vector                  160            160           1         0.6        1597.6       1.1X
[info]
[info] dgemm[N,T]:   Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
[info] -------------------------------------------------------------------------------------------
[info] f2j                     211            211           1         0.5        2106.2       1.0X
[info] vector                  156            157           0         0.6        1564.4       1.3X
[info]
[info] dgemm[T,N]:   Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
[info] -------------------------------------------------------------------------------------------
[info] f2j                     276            276           0         0.4        2757.8       1.0X
[info] vector                  137            137           0         0.7        1365.1       2.0X
```

/cc srowen xkrogen

[1] https://openjdk.java.net/jeps/338

[2] https://mail-archives.apache.org/mod_mbox/spark-dev/202012.mbox/%3cDM5PR2101MB11106162BB3AF32AD29C6C79B0C69DM5PR2101MB1110.namprd21.prod.outlook.com%3e

Closes #30810 from luhenry/master.

Lead-authored-by: Ludovic Henry <luhenry@microsoft.com>

Co-authored-by: Ludovic Henry <git@ludovic.dev>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

One of the main performance bottlenecks in query compilation is overly-generic tree transformation methods, namely `mapChildren` and `withNewChildren` (defined in `TreeNode`). These methods have an overly-generic implementation to iterate over the children and rely on reflection to create new instances. We have observed that, especially for queries with large query plans, a significant number of CPU cycles is wasted in these methods. In this PR we make these methods more efficient by delegating the iteration and instantiation to concrete node types. The benchmarks show that we can expect a significant improvement in total query compilation time: 30-80% for queries with large query plans, and about 20% on average.

#### Problem detail

The `mapChildren` method in `TreeNode` is overly generic and costly. To be more specific, this method:

- iterates over all the fields of a node using Scala’s product iterator. While the iteration is not reflection-based, thanks to the Scala compiler generating code for `Product`, we create many anonymous functions and visit many nested structures (recursive calls).

The anonymous functions (presumably compiled to Java anonymous inner classes) also show up quite high on the list in the object allocation profiles, so we are putting unnecessary pressure on GC here.

- does a lot of comparisons. Basically, for each element returned from the product iterator, we check if it is a child (contained in the list of children) and then transform it. We could avoid that by just iterating over the children, but in the current implementation we need to gather all the fields (transforming only the children) so that we can instantiate the object using reflection.

- creates objects using reflection, by delegating to the `makeCopy` method, which is several orders of magnitude slower than using the constructor.

#### Solution

The proposed solution in this PR is rather straightforward: we rewrite the `mapChildren` method using the `children` and `withNewChildren` methods. The default `withNewChildren` method suffers from the same problems as `mapChildren`, and we need to make it more efficient by specializing it in concrete classes. Similar to how each concrete query plan node already defines its children, it should also define how it can be constructed given a new list of children. In most cases the implementation is quite simple and is a one-liner thanks to the copy method present in Scala case classes. Note that we cannot abstract over the copy method: it is generated by the compiler for case classes if no other type higher in the hierarchy defines it. For most concrete nodes, the implementation of `withNewChildren` looks like this:

```
override def withNewChildren(newChildren: Seq[LogicalPlan]): LogicalPlan = copy(children = newChildren)
```

The current `withNewChildren` method has two properties that we should preserve:

- It returns the same instance if the provided children are the same as its children, i.e., it preserves referential equality.

- It copies tags and maintains the origin links when a new copy is created.

These properties are hard to enforce in the concrete node type implementations. Therefore, we propose a template method `withNewChildrenInternal` that should be implemented by the concrete classes, and let the `withNewChildren` method take care of referential equality and copying:

```
override def withNewChildren(newChildren: Seq[LogicalPlan]): LogicalPlan = {
  if (childrenFastEquals(children, newChildren)) {
    this
  } else {
    CurrentOrigin.withOrigin(origin) {
      val res = withNewChildrenInternal(newChildren)
      res.copyTagsFrom(this)
      res
    }
  }
}
```
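
For readers less familiar with the template-method pattern used here, the following is a minimal Python sketch of the same idea. It is a simplified stand-in, not Catalyst code: `Node`, `Leaf` and `Add` are hypothetical classes, tags are a plain dictionary, and origin tracking is left out entirely.

```python
class Node:
    """Hypothetical tree node; a simplified stand-in for a Catalyst TreeNode."""

    def __init__(self, children=()):
        self.children = tuple(children)
        self.tags = {}

    def with_new_children(self, new_children):
        new_children = tuple(new_children)
        # Fast path: identical child instances, so return this very instance
        # and preserve referential equality.
        if len(new_children) == len(self.children) and all(
            old is new for old, new in zip(self.children, new_children)
        ):
            return self
        # Slow path: the concrete class builds the copy, the base class
        # copies the tags onto it.
        res = self._with_new_children_internal(new_children)
        res.tags = dict(self.tags)
        return res

    def _with_new_children_internal(self, new_children):
        raise NotImplementedError


class Leaf(Node):
    def __init__(self, name):
        super().__init__()
        self.name = name

    def _with_new_children_internal(self, new_children):
        return self


class Add(Node):
    def __init__(self, left, right):
        super().__init__((left, right))

    def _with_new_children_internal(self, new_children):
        # Direct constructor call: no reflection, no scan over all fields.
        left, right = new_children
        return Add(left, right)


a, b, c = Leaf("a"), Leaf("b"), Leaf("c")
plus = Add(a, b)
plus.tags["hint"] = "broadcast"
same = plus.with_new_children([a, b])      # returns `plus` itself
changed = plus.with_new_children([a, c])   # new Add, tags copied over
```

The concrete class only has to know how to rebuild itself; the two invariants (same instance when nothing changed, tags carried over) live in one place in the base class.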

With the refactoring done in a previous PR (https://github.com/apache/spark/pull/31932) most tree node types fall in one of the categories of `Leaf`, `Unary`, `Binary` or `Ternary`. These traits have a more efficient implementation for `mapChildren` and define a more specialized version of `withNewChildrenInternal` that avoids creating unnecessary lists. For example, the `mapChildren` method in `UnaryLike` is defined as follows:

```
override final def mapChildren(f: T => T): T = {
  val newChild = f(child)
  if (newChild fastEquals child) {
    this.asInstanceOf[T]
  } else {
    CurrentOrigin.withOrigin(origin) {
      val res = withNewChildInternal(newChild)
      res.copyTagsFrom(this.asInstanceOf[T])
      res
    }
  }
}
```

#### Results

With this PR, we have observed significant performance improvements in query compilation time, more specifically in the analysis and optimization phases. The table below shows the TPC-DS queries that had more than 25% speedup in compilation times. The biggest speedups are observed in queries with large query plans.

| Query | Speedup |
| ------------- | ------------- |
| q4 | 29% |
| q9 | 81% |
| q14a | 31% |
| q14b | 28% |
| q22 | 33% |
| q33 | 29% |
| q34 | 25% |
| q39 | 27% |
| q41 | 27% |
| q44 | 26% |
| q47 | 28% |
| q48 | 76% |
| q49 | 46% |
| q56 | 26% |
| q58 | 43% |
| q59 | 46% |
| q60 | 50% |
| q65 | 59% |
| q66 | 46% |
| q67 | 52% |
| q69 | 31% |
| q70 | 30% |
| q96 | 26% |
| q98 | 32% |

#### Binary incompatibility

Changing `withNewChildren` in `TreeNode` breaks the binary compatibility of code compiled against older versions of Spark, because concrete `TreeNode` subclasses are now all expected to implement the `withNewChildrenInternal` method. This is a problem, for example, when users write custom expressions. This change is the right choice, since it forces all expressions newly added to Catalyst to implement it in an efficient manner and will prevent future regressions.

Please note that we have not completely removed the old implementation; instead, we renamed it to `legacyWithNewChildren`. This method will be removed in the future and for now helps the transition. There are expressions such as `UpdateFields` that have a complex way of defining children. Writing `withNewChildren` for them requires refactoring the expression. For now, these expressions use the old, slow method. A future PR will address these expressions.

### Does this PR introduce _any_ user-facing change?

This PR does not introduce user-facing changes but may break binary compatibility of code compiled against older versions. See the binary incompatibility section.

### How was this patch tested?

This PR is mainly a refactoring and passes existing tests.

Closes #32030 from dbaliafroozeh/ImprovedMapChildren.

Authored-by: Ali Afroozeh <ali.afroozeh@databricks.com>

Signed-off-by: herman <herman@databricks.com>

### What changes were proposed in this pull request?

This is a followup for https://github.com/apache/spark/pull/31932.

In this PR we:

- Introduce the `QuaternaryLike` trait for node types with 4 children.

- Specialize more node types

- Fix a number of style errors that were introduced in the original PR.

### Why are the changes needed?

### Does this PR introduce _any_ user-facing change?

### How was this patch tested?

This is a refactoring, passes existing tests.

Closes #32065 from dbaliafroozeh/FollowupSPARK-34906.

Authored-by: Ali Afroozeh <ali.afroozeh@databricks.com>

Signed-off-by: herman <herman@databricks.com>

### What changes were proposed in this pull request?

https://github.com/apache/spark/pull/32015 added a way to run benchmarks much more easily in the same GitHub Actions build. This PR updates the benchmark results using that approach.

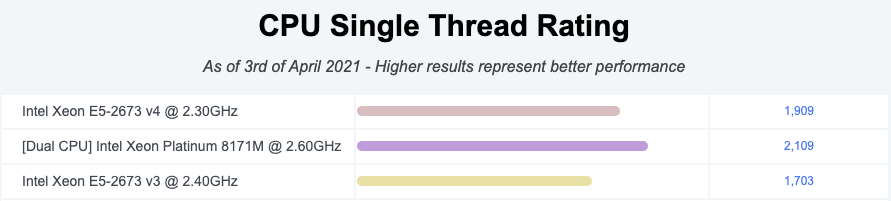

**NOTE**: from my observations, GitHub Actions appears to use four types of CPU:

- Intel(R) Xeon(R) Platinum 8171M CPU 2.60GHz

- Intel(R) Xeon(R) CPU E5-2673 v4 2.30GHz

- Intel(R) Xeon(R) CPU E5-2673 v3 2.40GHz

- Intel(R) Xeon(R) Platinum 8272CL CPU 2.60GHz

From some quick research, they seem to perform roughly similarly.

I couldn't find enough information about Intel(R) Xeon(R) Platinum 8272CL CPU 2.60GHz but the performance seems roughly similar given the numbers.

So this shouldn't be a big deal, especially given that this approach is much easier, encourages contributors to run benchmarks more often, and guarantees the same number of cores and the same memory with the same software.

### Why are the changes needed?

To establish a baseline for the benchmarks.

### Does this PR introduce _any_ user-facing change?

No, dev-only.

### How was this patch tested?

It was generated from:

- [Run benchmarks: * (JDK 11)](https://github.com/HyukjinKwon/spark/actions/runs/713575465)

- [Run benchmarks: * (JDK 8)](https://github.com/HyukjinKwon/spark/actions/runs/713154337)

Closes #32044 from HyukjinKwon/SPARK-34950.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Max Gekk <max.gekk@gmail.com>

### What changes were proposed in this pull request?

1, use the new `MultinomialLogisticBlockAggregator`, which supports virtual centering

2, remove the unused `BlockLogisticAggregator`

### Why are the changes needed?

1, for better convergence;

2, its solution is much closer to GLMNET's;

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

updated and new test suites

Closes #31985 from zhengruifeng/mlr_center.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Some `spark-submit` commands for running benchmarks in the user's guide are wrong, so benchmarks cannot be run successfully with them.

The major change in this PR is to correct these commands. For example, to run a benchmark that inherits from `SqlBasedBenchmark`, we must specify `--jars <spark core test jar>,<spark catalyst test jar>`, because a `SqlBasedBenchmark`-based benchmark extends `BenchmarkBase` (defined in the Spark core test jar) and `SQLHelper` (defined in the Spark catalyst test jar).

Another change in this PR removes the `scalatest Assertions` dependency from the benchmarks: the `scalatest-*.jar` files are not in the distribution package, so depending on them is troublesome.

### Why are the changes needed?

Make sure benchmarks can be run using the `spark-submit` commands described in the guide.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Use the corrected `spark-submit` commands to run benchmarks successfully.

Closes #31995 from LuciferYang/fix-benchmark-guide.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

For binary LR, perform centering by subtracting the mean, if possible;

### Why are the changes needed?

For a feature with a small std (e.g. 0.03), the scaled feature may have a large value (e.g. >30);

the underlying solvers (**OWLQN/LBFGS/LBFGSB**) cannot handle a feature with large values.
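
To make the magnitudes concrete, here is a small worked example. The feature values, mean and std below are made up for illustration (they are not taken from the PR or its tests); the point is only that dividing by a small std inflates values, while subtracting the mean first brings them back near zero.

```python
def scale(value, std):
    """Standardize by dividing by the standard deviation only."""
    return value / std


def center_and_scale(value, mean, std):
    """Subtract the mean before dividing by the standard deviation."""
    return (value - mean) / std


# Hypothetical feature: values clustered around 1.0 with std 0.03.
mean, std = 1.0, 0.03
values = [0.97, 1.0, 1.03]

scaled = [scale(v, std) for v in values]                      # all above 30
centered = [center_and_scale(v, mean, std) for v in values]   # about -1, 0, 1
```

Without centering, every scaled value sits above 30 even though the feature barely varies; with centering, the same values land at roughly -1, 0 and 1.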

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

added testsuite

Closes #31693 from zhengruifeng/blr_center.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

1, add `BinaryLogisticBlockAggregator` and `MultinomialLogisticBlockAggregator` and related testsuites;

2, impl `virtual centering` in standardization;

3, remove old `LogisticAggregator`

### Why are the changes needed?

The previous [pr](https://github.com/apache/spark/pull/31693) and related work are too large, so we need to split them into 3 PRs:

1, this one, impl new agg supporting `virtual centering`, remove old `LogisticAggregator`;

2, adopt new blor-agg in lor, add new test suite for small var features;

3, adopt new mlor-agg in lor, add new test suite for small var features, remove `BlockLogisticAggregator`;
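
The source does not define `virtual centering`. One plausible reading, sketched below as an assumption, is that the aggregator computes the same margin as if every feature had been centered, but never materializes the centered (and therefore dense) vectors. The function names and the dictionary-based sparse representation are hypothetical.

```python
def margin_explicit(coef, x_dense, mean, std):
    """Center and scale every feature, then take the dot product."""
    return sum(w * (x - m) / s for w, x, m, s in zip(coef, x_dense, mean, std))


def margin_virtual(coef, x_sparse, mean, std):
    """Same value, but only the non-zero entries of x are touched.

    `x_sparse` maps feature index -> value. The offset depends only on the
    coefficients, so in practice it could be computed once per iteration
    rather than once per row.
    """
    offset = sum(w * m / s for w, m, s in zip(coef, mean, std))
    return sum(coef[i] * v / std[i] for i, v in x_sparse.items()) - offset


coef = [0.5, -1.0, 2.0]
mean = [1.0, 0.0, 3.0]
std = [0.5, 2.0, 1.0]
x_sparse = {0: 2.0, 2: 1.0}      # feature 1 is zero and not stored
x_dense = [2.0, 0.0, 1.0]

explicit = margin_explicit(coef, x_dense, mean, std)
virtual = margin_virtual(coef, x_sparse, mean, std)
```

Both functions return the same margin because the sum over `w * (x - m) / s` splits into a data-dependent part and a constant offset; only the first part needs the row.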

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

added testsuites

Closes #31889 from zhengruifeng/blor_mlor_agg.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

1, add a new param `sorted` in `slice`;

2, in `VectorSlicer`, set `sorted = true` if input indices are ordered.

### Why are the changes needed?

The input indices of VectorSlicer are probably ordered.

VectorSlicer should use this attribute if possible.

I did a simple test, and `sorted = true` may be about 70% faster than the existing `slice`.
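
Why sorted indices help can be seen from a small sketch. The code below is not Spark's `slice`; it is a hypothetical illustration that assumes a sparse vector stored as parallel `indices`/`values` lists with increasing indices. When the selected indices are also increasing, a single merge-style pass is enough, with no lookup table.

```python
def slice_sparse_sorted(indices, values, selected):
    """Slice a sparse vector when `indices` and `selected` are both strictly
    increasing. Returns (new_indices, new_values), where new index j refers
    to selected[j].
    """
    new_indices, new_values = [], []
    i = j = 0
    while i < len(indices) and j < len(selected):
        if indices[i] == selected[j]:
            new_indices.append(j)
            new_values.append(values[i])
            i += 1
            j += 1
        elif indices[i] < selected[j]:
            i += 1      # stored entry not selected, skip it
        else:
            j += 1      # selected position holds an implicit zero
    return new_indices, new_values


def slice_sparse_generic(indices, values, selected):
    """Baseline for any order of `selected`, using a lookup table."""
    lookup = dict(zip(indices, values))
    pairs = [(j, lookup[s]) for j, s in enumerate(selected) if s in lookup]
    return [j for j, _ in pairs], [v for _, v in pairs]


indices, values = [1, 4, 7, 9], [1.0, 4.0, 7.0, 9.0]
selected = [0, 4, 5, 9]
fast = slice_sparse_sorted(indices, values, selected)
slow = slice_sparse_generic(indices, values, selected)
```

Both functions produce the same slice for sorted input; the sorted variant just avoids building and probing the lookup table.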

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

added testsuite

Closes #31588 from zhengruifeng/vector_slice_for_sorted_indices.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Ruifeng Zheng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

Document `mode` as a supported Imputer strategy in PySpark docs.

### Why are the changes needed?

Support was added in 3.1, and documented in Scala, but some Python docs were missed.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Existing tests.

Closes #31883 from srowen/ImputerModeDocs.

Authored-by: Sean Owen <srowen@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

pyrolite 4.21 introduced and enabled value comparison by default (`valueCompare=true`) during object memoization and serialization: https://github.com/irmen/Pyrolite/blob/pyrolite-4.21/java/src/main/java/net/razorvine/pickle/Pickler.java#L112-L122

This change has an undesired effect when we serialize a row (actually `GenericRowWithSchema`) to be passed to Python: https://github.com/apache/spark/blob/branch-3.0/sql/core/src/main/scala/org/apache/spark/sql/execution/python/EvaluatePython.scala#L60. A simple example is that

```
new GenericRowWithSchema(Array(1.0, 1.0), StructType(Seq(StructField("_1", DoubleType), StructField("_2", DoubleType))))
```

and

```
new GenericRowWithSchema(Array(1, 1), StructType(Seq(StructField("_1", IntegerType), StructField("_2", IntegerType))))
```

are currently equal, so the second instance is replaced with a short reference code to the first one during serialization.

### Why are the changes needed?

The above can cause nasty issues like the one in https://issues.apache.org/jira/browse/SPARK-34545 description:

```
>>> from pyspark.sql.functions import udf
>>> from pyspark.sql.types import *
>>>
>>> def udf1(data_type):
...     def u1(e):
...         return e[0]
...     return udf(u1, data_type)
...
>>>
>>> df = spark.createDataFrame([((1.0, 1.0), (1, 1))], ['c1', 'c2'])
>>>
>>> df = df.withColumn("c3", udf1(DoubleType())("c1"))
>>> df = df.withColumn("c4", udf1(IntegerType())("c2"))
>>>
>>> df.select("c3").show()
+---+
| c3|
+---+
|1.0|
+---+
>>> df.select("c4").show()
+---+
| c4|
+---+
|  1|
+---+
>>> df.select("c3", "c4").show()
+---+----+
| c3|  c4|
+---+----+
|1.0|null|
+---+----+
```

This is because during serialization from JVM to Python, `GenericRowWithSchema(1.0, 1.0)` (`c1`) is memoized first, and when `GenericRowWithSchema(1, 1)` (`c2`) comes next, it is replaced with a short code referring to `c1` (instead of serializing `c2` out) because the two rows are `equals()`. The Python function then runs, but the return type of `c4` is expected to be `IntegerType`, and if a different type (`DoubleType`) comes back from Python then it is discarded: https://github.com/apache/spark/blob/branch-3.0/sql/core/src/main/scala/org/apache/spark/sql/execution/python/EvaluatePython.scala#L108-L113
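
The effect of memoizing by value rather than by identity can be reproduced with a toy serializer. This is an analogy only, not Pyrolite's code: `serialize` is a hypothetical function, and Python tuples stand in for the two rows. It works as an analogy because `(1.0, 1.0) == (1, 1)` holds in Python, and the two tuples also hash identically.

```python
def serialize(objects, value_compare):
    """Toy memoizing serializer.

    Emits ("obj", value) the first time an object is seen and ("ref", n)
    afterwards, where n is the memo slot of the earlier object.
    """
    memo = {}
    out = []
    for obj in objects:
        # Value comparison keys the memo on the object itself (== / hash);
        # identity comparison keys it on id(obj).
        key = obj if value_compare else id(obj)
        if key in memo:
            out.append(("ref", memo[key]))
        else:
            memo[key] = len(memo)
            out.append(("obj", obj))
    return out


c1 = (1.0, 1.0)   # stands in for the DoubleType row
c2 = (1, 1)       # stands in for the IntegerType row

by_value = serialize([c1, c2], value_compare=True)
by_identity = serialize([c1, c2], value_compare=False)
```

With value comparison, `c2` is emitted as a reference to `c1`, so the receiver sees doubles where integers were expected. With identity comparison, both rows are written out with their own element types.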

After this PR:

```
>>> df.select("c3", "c4").show()
+---+---+
| c3| c4|
+---+---+
|1.0|  1|
+---+---+
```

### Does this PR introduce _any_ user-facing change?

Yes, fixes a correctness issue.

### How was this patch tested?

Added new UT + manual tests.

Closes #31682 from peter-toth/SPARK-34545-fix-row-comparison.

Authored-by: Peter Toth <peter.toth@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Code in the PR generates random parameters for hyperparameter tuning. A discussion with Sean Owen can be found on the dev mailing list here:

http://apache-spark-developers-list.1001551.n3.nabble.com/Hyperparameter-Optimization-via-Randomization-td30629.html

All code is entirely my own work and I license the work to the project under the project’s open source license.

### Why are the changes needed?

Randomization can be a more effective technique than a grid search, since min/max points can fall between the grid points and never be found. Randomization is not so restricted, although the probability of finding minima/maxima depends on the number of attempts.

Alice Zheng has an accessible description on how this technique works at https://www.oreilly.com/library/view/evaluating-machine-learning/9781492048756/ch04.html

Although there are Python libraries with more sophisticated techniques, not every Spark developer is using Python.

### Does this PR introduce _any_ user-facing change?

A new class (`ParamRandomBuilder.scala`) and its tests have been created but there is no change to existing code. This class offers an alternative to `ParamGridBuilder` and can be dropped into the code wherever `ParamGridBuilder` appears. Indeed, it extends `ParamGridBuilder` and is completely compatible with its interface. It merely adds one method that provides a range over which a hyperparameter will be randomly defined.
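
The contrast between the two approaches can be sketched in plain Python. This is not the API of `ParamRandomBuilder` or `ParamGridBuilder`: the function names, the uniform distribution and the parameter names are assumptions chosen for illustration.

```python
import random


def random_param_maps(ranges, n, seed=None):
    """Draw `n` parameter maps, sampling each value uniformly from its range.

    `ranges` maps a parameter name to a (low, high) tuple.
    """
    rng = random.Random(seed)
    return [
        {name: rng.uniform(low, high) for name, (low, high) in ranges.items()}
        for _ in range(n)
    ]


def grid_param_maps(grid):
    """Cartesian product of explicit candidate values, for comparison."""
    maps = [{}]
    for name, candidates in grid.items():
        maps = [dict(m, **{name: c}) for m in maps for c in candidates]
    return maps


ranges = {"regParam": (0.0, 1.0), "elasticNetParam": (0.0, 0.5)}
random_maps = random_param_maps(ranges, n=5, seed=42)

grid = {"regParam": [0.1, 1.0], "elasticNetParam": [0.0, 0.5]}
grid_maps = grid_param_maps(grid)
```

The grid only ever tries the listed candidates, whereas each random draw can land anywhere inside the range; how well the range is covered depends on the number of draws.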

### How was this patch tested?

Tests `ParamRandomBuilderSuite.scala` and `RandomRangesSuite.scala` were added.

`ParamRandomBuilderSuite` is the analogue of the already existing `ParamGridBuilderSuite` which tests the user-facing interface.

`RandomRangesSuite` uses ScalaCheck to test the random ranges over which hyperparameters are distributed.

Closes #31535 from PhillHenry/ParamRandomBuilder.

Authored-by: Phillip Henry <PhillHenry@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

UserDefinedType and UDTRegistration become public Developer APIs, not package-private to Spark.

### Why are the changes needed?

This proposes to simply open up the UserDefinedType class as a developer API. It was public in 1.x, but closed in 2.x for some possible redesign that does not seem to have happened.

Other libraries have managed to define UDTs anyway by inserting shims into the Spark namespace, and this evidently has worked OK. But package isolation in Java 9+ breaks this.

The logic here is mostly: this is de facto a stable API, so can at least be open to developers with the usual caveats about developer APIs.

Open questions:

- Is there in fact some important redesign that's needed before opening it? The comment to this effect is from 2016

- Is this all that needs to be opened up? Like PythonUserDefinedType?

- Should any of this be kept package-private?

This was first proposed in https://github.com/apache/spark/pull/16478. That was a larger change, but the other API issues it was fixing seem to have been addressed already (e.g. no need to return internal Spark types). It was never really reviewed.

My hunch is that there isn't much downside, and some upside, to just opening this as-is now.

### Does this PR introduce _any_ user-facing change?

UserDefinedType becomes visible to developers to subclass.

### How was this patch tested?

Existing tests; there is no change to the existing logic.

Closes #31461 from srowen/SPARK-7768.

Authored-by: Sean Owen <srowen@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This follows up #31160 to update the score function names in the documentation.

### Why are the changes needed?

Currently we use `f_classif`, `chi2`, `f_regression`, which sound to me like sklearn's naming. It is good to have them, but I think it would be nice to give the formal score function names alongside sklearn's.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

No, only doc change.

Closes #31531 from viirya/SPARK-34080-minor.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Increase the rel tol from 0.2 to 0.35.

### Why are the changes needed?

Fix flaky test

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

UT.

Closes #31536 from WeichenXu123/ES-65815.

Authored-by: Weichen Xu <weichen.xu@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

1, clear predictionCol & probabilityCol, use tmp rawPred col, to avoid potential column conflict;

2, use array instead of map, to keep in line with the python side;

3, simplify transform

### Why are the changes needed?

If the input dataset has a column whose name is `predictionCol`, `probabilityCol`, or `rawPredictionCol`, transform will fail.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

added testsuite

Closes #31472 from zhengruifeng/ovr_submodel_skip_pred_prob.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

`hashDistance` optimization: if two vectors in a pair are the same, directly return 0.0

### Why are the changes needed?

it should be faster than existing impl, because of short-circuit
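
A minimal sketch of the short-circuit follows. The distance definition here (minimum count of differing entries over paired hash vectors) is an assumption made for illustration, and `hash_distance` is a hypothetical function rather than Spark's implementation.

```python
def hash_distance(xs, ys):
    """Minimum number of differing entries over paired hash vectors.

    `xs` and `ys` are equal-length, non-empty lists of equal-length integer
    lists.
    """
    best = None
    for x, y in zip(xs, ys):
        if x == y:
            # Identical pair: the minimum cannot go below zero, so the
            # remaining pairs do not need to be examined.
            return 0.0
        diff = sum(1 for a, b in zip(x, y) if a != b)
        if best is None or diff < best:
            best = diff
    if best is None:
        raise ValueError("hash_distance needs at least one pair of vectors")
    return float(best)


near = hash_distance([[1, 2], [3, 4]], [[1, 5], [3, 4]])   # second pair equal
far = hash_distance([[1, 2], [3, 4]], [[9, 2], [8, 7]])    # no pair equal
```

The result is unchanged by the early return; it only skips work once a pair with distance zero has been found.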

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

existing testsuites

Closes #31394 from zhengruifeng/min_hash_distance_opt.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Param validation now throws `IllegalArgumentException`.

### Why are the changes needed?

Param Validation should throw `IllegalArgumentException` instead of `IllegalStateException`

### Does this PR introduce _any_ user-facing change?

Yes, the type of exception changed

### How was this patch tested?

existing testsuites

Closes #31469 from zhengruifeng/mllib_exceptions.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Ruifeng Zheng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

1, update checking of numFeatures;

2, update `toString` to take `names` into account;

### Why are the changes needed?

1, should use `inputAttr.size` instead of `inputAttr.numAttributes` to get numFeatures;

2, this check is necessary only if `$(indices).nonEmpty`; otherwise, `$(indices).max` will throw `java.lang.UnsupportedOperationException: empty.max`

3, in toString, should add length of names;

### Does this PR introduce _any_ user-facing change?

Yes, `toString` now counts `names`;

### How was this patch tested?

existing testsuites

Closes #31354 from zhengruifeng/vector_slicer_clean.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Ruifeng Zheng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

Use `count` to simplify the `filter + size (or length)` operation. It is semantically equivalent but simpler.

**Before**

```
seq.filter(p).size
```

**After**

```
seq.count(p)
```

### Why are the changes needed?

Code simplification.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Pass the Jenkins or GitHub Action

Closes #31374 from LuciferYang/SPARK-34275.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

1, use Guava `Ordering` instead of `BoundedPriorityQueue`;

2, use local variables;

3, avoid conversion: ml.vector -> mllib.vector

### Why are the changes needed?

this pr is about 30% faster than existing impl

### Does this PR introduce _any_ user-facing change?

NO

### How was this patch tested?

existing testsuites

Closes #31276 from zhengruifeng/w2v_findSynonyms_opt.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Ruifeng Zheng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

use GEMV instead of DOT

### Why are the changes needed?

1, better performance, could be 20% faster than existing impl

2, simplify model saving
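
The equivalence behind this change can be shown with a pure-Python sketch: many per-vector dot products against the same input give the same numbers as one matrix-vector product over the stacked vectors. The function names and the row-major flat layout are assumptions for illustration. The sketch demonstrates equivalence only; any speedup comes from a real BLAS GEMV call, which is not reproduced here.

```python
def dot(u, v):
    """Plain dot product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def scores_with_dot(vectors, query):
    """One DOT call per stored vector."""
    return [dot(row, query) for row in vectors]


def gemv(matrix_flat, num_rows, num_cols, x):
    """y = A * x for a row-major matrix stored in one flat list."""
    y = [0.0] * num_rows
    for r in range(num_rows):
        base = r * num_cols
        acc = 0.0
        for c in range(num_cols):
            acc += matrix_flat[base + c] * x[c]
        y[r] = acc
    return y


vectors = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
query = [10.0, 1.0]

# Stack the vectors into a single flat matrix once, then reuse it.
flat = [value for row in vectors for value in row]
per_row = scores_with_dot(vectors, query)
stacked = gemv(flat, num_rows=3, num_cols=2, x=query)
```

Keeping the vectors as one matrix also means there is a single object to persist, which is one way to read the "simplify model saving" point above.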

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

existing testsuites

Closes #31313 from zhengruifeng/random_project_opt.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Ruifeng Zheng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

1, update related doc;

2, MatrixFactorizationModel uses GEMV;

### Why are the changes needed?

see performance gain in https://github.com/apache/spark/pull/30468

### Does this PR introduce _any_ user-facing change?

NO

### How was this patch tested?

existing testsuites

Closes #31279 from zhengruifeng/als_follow_up.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Ruifeng Zheng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

1, make `silhouette` a method;

2, change return type of `setDistanceMeasure` to `this.type`;

### Why are the changes needed?

see comments in https://github.com/apache/spark/pull/28590

### Does this PR introduce _any_ user-facing change?

No, 3.1 has not been released

### How was this patch tested?

existing testsuites

Closes #31334 from zhengruifeng/31768-followup.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Weichen Xu <weichen.xu@databricks.com>

### What changes were proposed in this pull request?

determine the numParts by numNodes

### Why are the changes needed?

1, the current model saving may generate too many small files;

2, a tree model can be too large for a single partition (a RandomForestClassificationModel with numTrees=100 and depth=20 takes 226M)
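A minimal sketch of deriving the partition count from the node count, assuming a fixed per-partition node budget. The function name and the default budget of 1,000,000 nodes are hypothetical values chosen for illustration, not the values used in this PR.

```python
def infer_num_partitions(num_nodes: int, nodes_per_partition: int = 1_000_000) -> int:
    """Return the number of partitions needed to hold `num_nodes` nodes.

    `nodes_per_partition` is a hypothetical budget; tune it to the target file size.
    """
    if num_nodes <= 0:
        raise ValueError("num_nodes must be positive")
    # Integer ceiling division: small models get one file, large ones are split.
    return (num_nodes + nodes_per_partition - 1) // nodes_per_partition


small_model_parts = infer_num_partitions(1_500)
large_model_parts = infer_num_partitions(2_500_000)
```

A small model ends up in one partition (no swarm of tiny files), while a large one is spread across several.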

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

existing testsuites

Closes #31090 from zhengruifeng/treemodel_single_part.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Ruifeng Zheng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

This test fails flakily. I found it failing in 1 out of 80 runs.

```
Expected -0.35667494393873245 and -0.41914521201224453 to be within 0.15 using relative tolerance.
```

Increasing the relative tolerance to 0.2 should reduce flakiness.

```
0.2 * 0.35667494393873245 = 0.071 > 0.062 = |-0.35667494393873245 - (-0.41914521201224453)|
```
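The arithmetic above can be checked with a small sketch. It assumes the relative tolerance is scaled by the smaller of the two magnitudes, as in the calculation above; the helper name is made up for this example.

```python
def within_relative_tolerance(x: float, y: float, rel_tol: float) -> bool:
    """True if |x - y| is below rel_tol times the smaller magnitude (assumed rule)."""
    return abs(x - y) < rel_tol * min(abs(x), abs(y))


expected = -0.35667494393873245
actual = -0.41914521201224453

passes_at_015 = within_relative_tolerance(expected, actual, 0.15)  # old tolerance
passes_at_020 = within_relative_tolerance(expected, actual, 0.2)   # new tolerance
```

With 0.15 the allowed gap is about 0.054, below the observed 0.062, so the check fails; with 0.2 the allowed gap is about 0.071 and the check passes.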

### Why are the changes needed?

### Does this PR introduce _any_ user-facing change?

### How was this patch tested?

Closes #31266 from Loquats/NaiveBayesSuite-reltol.

Authored-by: Andy Zhang <yue.zhang@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Increase the timeout for StreamingLinearRegressionSuite to 60s to deflake the test.

### Why are the changes needed?

Reduce merge conflict.

### Does this PR introduce _any_ user-facing change?

### How was this patch tested?

Closes #31248 from liangz1/increase-timeout.

Authored-by: Liang Zhang <liang.zhang@databricks.com>

Signed-off-by: Weichen Xu <weichen.xu@databricks.com>

### What changes were proposed in this pull request?

update the ParamValidators of `maxDepth`

### Why are the changes needed?

the current implementation of tree models only supports maxDepth<=30

### Does this PR introduce _any_ user-facing change?

If `maxDepth`>30, fail quickly
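A sketch of the fail-fast behavior, assuming the validator is a simple inclusive range check. The lower bound of 0 and the names below are assumptions for illustration.

```python
MAX_SUPPORTED_DEPTH = 30  # upper bound stated in this PR


def validate_max_depth(max_depth: int) -> int:
    """Reject unsupported depths at parameter-setting time, before any training."""
    if not 0 <= max_depth <= MAX_SUPPORTED_DEPTH:
        raise ValueError(
            f"maxDepth must be in [0, {MAX_SUPPORTED_DEPTH}], got {max_depth}"
        )
    return max_depth


accepted = validate_max_depth(30)
try:
    validate_max_depth(31)
    rejected = False
except ValueError:
    rejected = True
```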

### How was this patch tested?

existing testsuites

Closes #31163 from zhengruifeng/param_maxDepth_upbound.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

1, make `getTopIndices`/`selectIndicesFromPValues` private;

2, avoid setting `selectionThreshold` in `fit`

3, move param checking to `transformSchema`

### Why are the changes needed?

`getTopIndices`/`selectIndicesFromPValues` should not be exposed to end users;

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

existing testsuites

Closes #31222 from zhengruifeng/selector_clean_up.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Add UnivariateFeatureSelector

### Why are the changes needed?

Have one UnivariateFeatureSelector, so we don't need to have three Feature Selectors.

### Does this PR introduce _any_ user-facing change?

Yes

```
selector = UnivariateFeatureSelector(featureCols=["x", "y", "z"], labelCol=["target"], featureType="categorical", labelType="continuous", selectorType="numTopFeatures", numTopFeatures=100)
```

Or

numTopFeatures

```
selector = UnivariateFeatureSelector(featureCols=["x", "y", "z"], labelCol=["target"], scoreFunction="f_classif", selectorType="numTopFeatures", numTopFeatures=100)
```

### How was this patch tested?

Add Unit test

Closes #31160 from huaxingao/UnivariateSelector.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Weichen Xu <weichen.xu@databricks.com>

### What changes were proposed in this pull request?

Some local variables are declared as `var` but are never reassigned, so they should be declared as `val`. This PR changes them from `var` to `val`, except for `mockito`-related cases.

### Why are the changes needed?

Use `val` instead of `var` when possible.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Pass the Jenkins or GitHub Action

Closes #31142 from LuciferYang/SPARK-33346.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Some redundant collection conversions can be removed. For version compatibility, this PR cleans them up under the Scala 2.13 profile.

### Why are the changes needed?

Remove redundant collection conversions.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

- Pass the Jenkins or GitHub Action

- Manual test `core`, `graphx`, `mllib`, `mllib-local`, `sql`, `yarn`,`kafka-0-10` in Scala 2.13 passed

Closes #31125 from LuciferYang/SPARK-34068.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

use a tmp model in `OneVsRestModel.transform`, to avoid calling the model's setters directly

### Why are the changes needed?

params of model (submodels) should not be changed in transform
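A toy sketch of the pattern, using a hypothetical `SubModel` class rather than Spark's API: `transform` sets the temporary column name on a copy, so the caller's model keeps its original params.

```python
import copy


class SubModel:
    """Hypothetical stand-in for a fitted sub-model with mutable params."""

    def __init__(self):
        self.params = {"predictionCol": "prediction"}

    def set_param(self, name, value):
        self.params[name] = value
        return self

    def transform(self, rows):
        col = self.params["predictionCol"]
        return [{**row, col: 1.0} for row in rows]


def transform_with_tmp_model(model, rows, tmp_col):
    # Copy first, then set params on the copy; `model` itself is untouched.
    tmp = copy.deepcopy(model).set_param("predictionCol", tmp_col)
    return tmp.transform(rows)


model = SubModel()
out = transform_with_tmp_model(model, [{"id": 1}], "tmp_pred")
```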

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

added testsuite

Closes #31086 from zhengruifeng/ovr_transform_tmp_model.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Ruifeng Zheng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

Improve flaky NaiveBayes test

The current test may sometimes fail under a different BLAS library because of some absTol checks, with an error like:

```
Expected 0.7 and 0.6485507246376814 to be within 0.05 using absolute tolerance...
```

* Change absTol to relTol: `absTol 0.05` allows a large relative difference in some cases (such as comparing 0.1 and 0.05).

* Remove the `exp` when comparing params. The `exp` amplifies the relative error.
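Both points can be seen numerically. The sketch below uses made-up values: an absolute gap of 0.05 between 0.1 and 0.05 is a 50% relative error, and a 5% relative error on a log-space value of -5 grows to about 22% after `exp` (the amplification applies when the log-space magnitude exceeds 1).

```python
import math


def relative_error(expected: float, actual: float) -> float:
    return abs(expected - actual) / abs(expected)


# Point 1: a gap of 0.05 looks small in absolute terms but is 50% of 0.1.
rel_err_small_values = relative_error(0.1, 0.05)

# Point 2: compare in log space vs. after exp (illustrative numbers).
log_expected = -5.0
log_actual = log_expected * 1.05  # 5% off in log space
rel_err_log = relative_error(log_expected, log_actual)
rel_err_exp = relative_error(math.exp(log_expected), math.exp(log_actual))
```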

### Why are the changes needed?

Flaky test

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

N/A

Closes #31004 from WeichenXu123/improve_bayes_tests.

Authored-by: Weichen Xu <weichen.xu@databricks.com>

Signed-off-by: Ruifeng Zheng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

Change visibility modifier of two case classes defined inside objects in mllib from private to private[OuterClass]

### Why are the changes needed?

Without this change when running tests for Scala 2.13 you get runtime code generation errors. These errors look like this:

```
[info] Cause: java.util.concurrent.ExecutionException: org.codehaus.commons.compiler.CompileException: File 'generated.java', Line 73, Column 65: failed to compile: org.codehaus.commons.compiler.CompileException: File 'generated.java', Line 73, Column 65: No applicable constructor/method found for zero actual parameters; candidates are: "public java.lang.String org.apache.spark.ml.feature.Word2VecModel$Data.word()"
```

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Existing tests now pass for Scala 2.13

Closes #31018 from koertkuipers/feat-visibility-scala213.

Authored-by: Koert Kuipers <koert@tresata.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

In https://github.com/apache/spark/pull/21632/files#diff-0fdae8a6782091746ed20ea43f77b639f9c6a5f072dd2f600fcf9a7b37db4f47, a new field `rawCount` was added to `NodeData`. As a result, a tree model trained in 2.4 cannot be loaded in 3.0/3.1/master.

The field `rawCount` is only used in training and is not used in `transform`/`predict`/`featureImportance`, so this PR sets it to -1L when loading an old model.
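A sketch of the backward-compatible load, using a simplified hypothetical record layout (only `id` and `prediction` besides `rawCount`): when the saved record lacks `rawCount`, the loader fills in -1.

```python
from dataclasses import dataclass


@dataclass
class NodeData:
    """Simplified stand-in for the saved node record; real records carry more fields."""
    id: int
    prediction: float
    raw_count: int


def load_node(record: dict) -> NodeData:
    return NodeData(
        id=record["id"],
        prediction=record["prediction"],
        # Old (2.4) records have no rawCount; -1 marks it as unknown.
        raw_count=record.get("rawCount", -1),
    )


old_node = load_node({"id": 0, "prediction": 1.0})
new_node = load_node({"id": 0, "prediction": 1.0, "rawCount": 7})
```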

### Why are the changes needed?

to support loading old tree models in 3.0/3.1/master

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

added testsuites

Closes #30889 from zhengruifeng/fix_tree_load.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

There has been a lot of work on improving ALS's recommendForAll.

I found that it may be further optimized by

1, using GEMV and sharing a pre-allocated buffer per task;

2, using guava.ordering instead of BoundedPriorityQueue;
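The two steps above can be sketched in pure Python, with `heapq.nlargest` standing in for the Guava Ordering-based top-k selection. The names and data are illustrative, not the Spark implementation: scores for each user are written into one reused buffer, then the top k are selected from it.

```python
import heapq
from typing import List, Tuple


def score_into(buffer: List[float], items: List[List[float]], user: List[float]) -> None:
    """Write item scores for one user into the pre-allocated buffer."""
    for i, item in enumerate(items):
        buffer[i] = sum(a * b for a, b in zip(item, user))


def top_k(scores: List[float], k: int) -> List[Tuple[int, float]]:
    """Return the k (item index, score) pairs with the highest scores, best first."""
    return heapq.nlargest(k, enumerate(scores), key=lambda pair: pair[1])


items = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
users = [[2.0, 1.0], [1.0, 3.0]]

buffer = [0.0] * len(items)  # allocated once, reused for every user
recommendations = []
for user in users:
    score_into(buffer, items, user)
    recommendations.append(top_k(buffer, 2))
```

`top_k` copies the selected pairs out of the buffer, so overwriting the buffer for the next user is safe.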

### Why are the changes needed?

In my test, using `f2jBLAS.sgemv`, it is about 2.3X faster than existing impl.

| Impl | Master | GEMM | GEMV | GEMV + array aggregator | GEMV + guava ordering + array aggregator | GEMV + guava ordering |
|------|--------|------|------|-------------------------|------------------------------------------|-----------------------|
| Duration | 341229 | 363741 | 191201 | 189790 | 148417 | 147222 |

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

existing testsuites

Closes #30468 from zhengruifeng/als_rec_opt.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Improve LogisticRegression test error tolerance

### Why are the changes needed?

When the BLAS version changes, some of the tests fail because their error tolerance is too strict.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

N/A

Closes #30587 from WeichenXu123/fix_lor_test.

Authored-by: Weichen Xu <weichen.xu@databricks.com>

Signed-off-by: Weichen Xu <weichen.xu@databricks.com>

### What changes were proposed in this pull request?

1, directly use float vectors instead of converting to double vectors, this is about 2x faster than using vec.axpy;

2, mark `wordList` and `wordVecNorms` lazy

3, avoid slicing in computation of `wordVecNorms`

### Why are the changes needed?

halve broadcast size
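The size claim follows from element width: a 32-bit float takes half the bytes of a 64-bit double. A small check with Python's `array` module (item sizes of 4 and 8 bytes hold on typical platforms; the data is made up):

```python
from array import array

vector = [0.1 * i for i in range(100)]

as_double = array("d", vector)  # 64-bit elements
as_float = array("f", vector)   # 32-bit elements

double_bytes = as_double.itemsize * len(as_double)
float_bytes = as_float.itemsize * len(as_float)
```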

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

existing testsuites

Closes #30548 from zhengruifeng/w2v_float32_transform.

Lead-authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Co-authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Ruifeng Zheng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

This PR aims to update `master` branch version to 3.2.0-SNAPSHOT.

### Why are the changes needed?

Start to prepare Apache Spark 3.2.0.

### Does this PR introduce _any_ user-facing change?

N/A.

### How was this patch tested?

Pass the CIs.

Closes #30606 from dongjoon-hyun/SPARK-3.2.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

On each call of `transform`, a head() job is triggered. This can be avoided by computing the value once in a lazy val.

### Why are the changes needed?

avoiding duplicate head() jobs
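A Python analog of the lazy-value pattern, using `functools.cached_property` (Python 3.8+). The class and the `head_calls` counter are hypothetical and only exist to show that the expensive lookup runs once across repeated `transform` calls.

```python
from functools import cached_property


class ImputerModelSketch:
    """Hypothetical model; `fetch_surrogate` stands in for the head() job."""

    def __init__(self, fetch_surrogate):
        self._fetch_surrogate = fetch_surrogate
        self.head_calls = 0

    @cached_property
    def surrogate(self):
        # Runs on first access only; later accesses reuse the stored value.
        self.head_calls += 1
        return self._fetch_surrogate()

    def transform(self, values):
        fill = self.surrogate
        return [fill if v is None else v for v in values]


model = ImputerModelSketch(lambda: 0.0)
first = model.transform([1.0, None])
second = model.transform([None, 2.0])
```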

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

existing tests

Closes #30550 from zhengruifeng/imputer_transform.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: Ruifeng Zheng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

Add array_to_vector function for dataframe column

### Why are the changes needed?

Utility function for array to vector conversion.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

scala unit test & doctest.

Closes #30498 from WeichenXu123/array_to_vec.

Lead-authored-by: Weichen Xu <weichen.xu@databricks.com>

Co-authored-by: Hyukjin Kwon <gurwls223@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR intends to fix typos in the sub-modules:

* `bin`

* `core`

* `docs`

* `external`

* `mllib`

* `repl`

* `pom.xml`

Split per srowen https://github.com/apache/spark/pull/30323#issuecomment-728981618

NOTE: The misspellings have been reported at 706a726f87 (commitcomment-44064356)

### Why are the changes needed?

Misspelled words make it harder to read / understand content.

### Does this PR introduce _any_ user-facing change?

There are various fixes to documentation, etc...

### How was this patch tested?

No testing was performed

Closes #30530 from jsoref/spelling-bin-core-docs-external-mllib-repl.

Authored-by: Josh Soref <jsoref@users.noreply.github.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This PR aims to do the following.

1. Upgrade from Scala 2.13.3 to 2.13.4 for Apache Spark 3.1

2. Fix exhaustivity issues in both Scala 2.12/2.13 (Scala 2.13.4 requires this for compilation.)

3. Enforce the improved exhaustive check by using the existing Scala 2.13 GitHub Action compilation job.

### Why are the changes needed?

Scala 2.13.4 is a maintenance release for 2.13 line and improves JDK 15 support.

- https://github.com/scala/scala/releases/tag/v2.13.4

Also, it improves exhaustivity check.

- https://github.com/scala/scala/pull/9140 (Check exhaustivity of pattern matches with "if" guards and custom extractors)

- https://github.com/scala/scala/pull/9147 (Check all bindings exhaustively, e.g. tuples components)

### Does this PR introduce _any_ user-facing change?

Yep. Although it's a maintenance version change, it's a Scala version change.

### How was this patch tested?

Pass the CIs and do the manual testing.

- Scala 2.12 CI jobs(GitHub Action/Jenkins UT/Jenkins K8s IT) to check the validity of code change.

- Scala 2.13 Compilation job to check the compilation

Closes #30455 from dongjoon-hyun/SCALA_3.13.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Implement a new strategy `mode`: replace missing values with the most frequent value in each column.
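A minimal single-column sketch of the `mode` strategy. Two assumptions are made for the example: missing values are represented as `None`, and ties are broken by taking the smallest value.

```python
from collections import Counter
from typing import List, Optional


def mode_surrogate(column: List[Optional[float]]) -> float:
    """Most frequent non-missing value; ties go to the smallest value (assumption)."""
    counts = Counter(v for v in column if v is not None)
    if not counts:
        raise ValueError("column has no non-missing values")
    best = max(counts.values())
    return min(v for v, c in counts.items() if c == best)


def impute_mode(column: List[Optional[float]]) -> List[float]:
    fill = mode_surrogate(column)
    return [fill if v is None else v for v in column]


imputed = impute_mode([1.0, None, 2.0, 2.0, None])
tie_break = mode_surrogate([3.0, 1.0, 3.0, 1.0])
```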

### Why are the changes needed?

It is highly scalable, and has long been available in [sklearn.impute.SimpleImputer](https://scikit-learn.org/stable/modules/generated/sklearn.impute.SimpleImputer.html#sklearn.impute.SimpleImputer).

### Does this PR introduce _any_ user-facing change?

Yes, a new strategy is added

### How was this patch tested?

updated testsuites

Closes #30397 from zhengruifeng/imputer_max_freq.

Lead-authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Co-authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This PR adds a new Scala compile arg to `pom.xml` to guard against new unused imports:

- `-Ywarn-unused-import` for Scala 2.12

- `-Wconf:cat=unused-imports:e` for Scala 2.13

The other file changes remove all unused imports in the Spark code.

### Why are the changes needed?

Clean up the code and add a guard against new unused imports.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Pass the Jenkins or GitHub Action

Closes #30351 from LuciferYang/remove-imports-core-module.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>