## What changes were proposed in this pull request?

Add docstring/doctest for `SCALAR_ITER` Pandas UDF. I explicitly mentioned that per-partition execution is an implementation detail, not guaranteed. I will submit another PR to add the same to user guide, just to keep this PR minimal.

I didn't add "doctest: +SKIP" in the first commit so it is easy to test locally.

cc: HyukjinKwon gatorsmile icexelloss BryanCutler WeichenXu123

## How was this patch tested?

doctest

Closes #25005 from mengxr/SPARK-28056.2.

Authored-by: Xiangrui Meng <meng@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

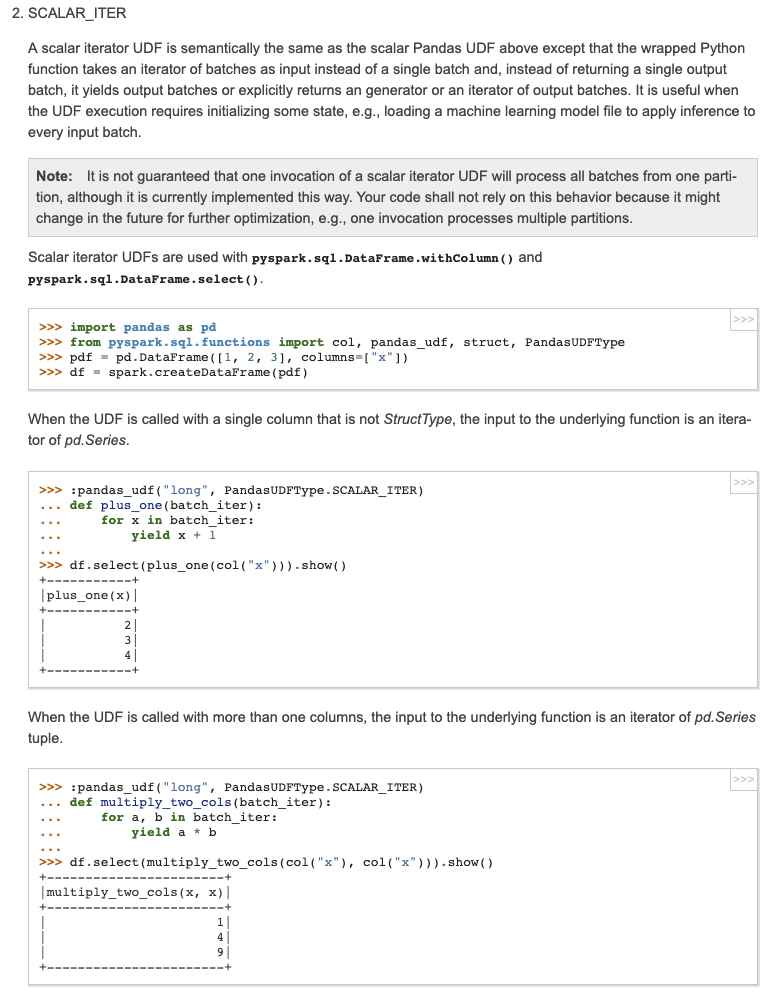

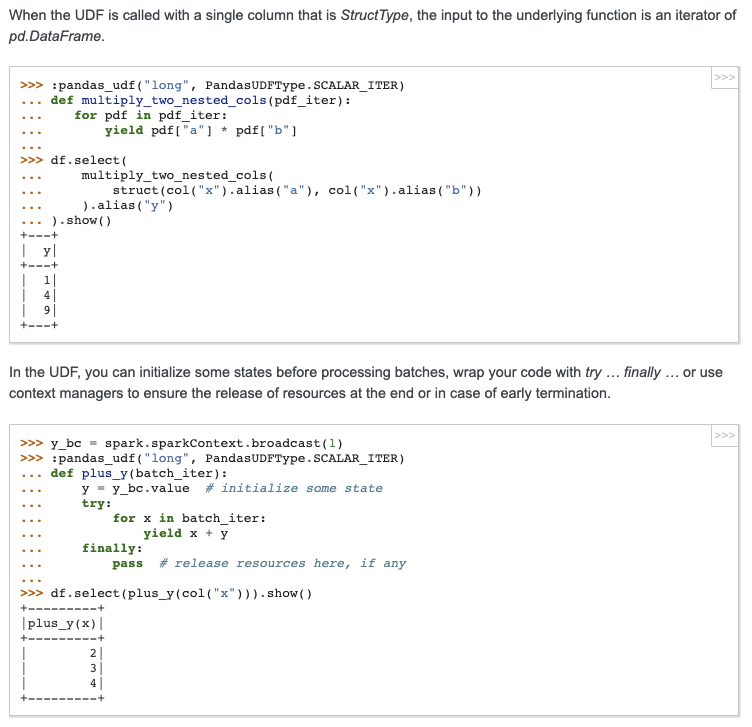

Allow Pandas UDF to take an iterator of pd.Series or an iterator of tuple of pd.Series.

Note that the UDF always takes a single iterator as its input argument:

* If the UDF takes only one column as input, each element of the iterator is a `pd.Series` (a batch of that column's values).

* If the UDF takes multiple columns as input, each element of the iterator is a tuple of `pd.Series`, one per input column, in the same order as the arguments. For example:

```python
@pandas_udf("int", PandasUDFType.SCALAR_ITER)
def the_udf(iterator):
    for col1_batch, col2_batch in iterator:
        yield col1_batch + col2_batch

df.select(the_udf("col1", "col2"))
```

The udf above will add col1 and col2.
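The calling convention can be sketched without Spark. This is a minimal, hypothetical stand-in: plain Python lists play the role of `pd.Series` batches, and `batches` is made-up data rather than anything Spark produces.

```python
def add_columns(iterator):
    # Each element is a tuple holding one batch per input column,
    # in the same order as the UDF arguments.
    for col1_batch, col2_batch in iterator:
        yield [a + b for a, b in zip(col1_batch, col2_batch)]

# Two hypothetical batches of two columns each (lists stand in for pd.Series).
batches = [([1, 2], [10, 20]), ([3], [30])]
result = list(add_columns(iter(batches)))
print(result)  # [[11, 22], [33]]
```

One output batch is yielded per input batch, which is the shape the iterator variant is expected to keep.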

I haven't added unit tests yet, but manual tests show that it works, so it is ready for a first-pass review.

We can test several typical cases:

```python
from pyspark.sql import SparkSession
from pyspark.sql.functions import pandas_udf, PandasUDFType
from pyspark.sql.functions import udf
from pyspark.taskcontext import TaskContext

# Reuse the active session (the pyspark shell already provides `spark`).
spark = SparkSession.builder.getOrCreate()
df = spark.createDataFrame([(1, 20), (3, 40)], ["a", "b"])

@pandas_udf("int", PandasUDFType.SCALAR_ITER)
def fi1(it):
    pid = TaskContext.get().partitionId()
    print("DBG: fi1: do init stuff, partitionId=" + str(pid))
    for batch in it:
        yield batch + 100
    print("DBG: fi1: do close stuff, partitionId=" + str(pid))

@pandas_udf("int", PandasUDFType.SCALAR_ITER)
def fi2(it):
    pid = TaskContext.get().partitionId()
    print("DBG: fi2: do init stuff, partitionId=" + str(pid))
    for batch in it:
        yield batch + 10000
    print("DBG: fi2: do close stuff, partitionId=" + str(pid))

@pandas_udf("int", PandasUDFType.SCALAR_ITER)
def fi3(it):
    pid = TaskContext.get().partitionId()
    print("DBG: fi3: do init stuff, partitionId=" + str(pid))
    for x, y in it:
        yield x + y * 10 + 100000
    print("DBG: fi3: do close stuff, partitionId=" + str(pid))

@pandas_udf("int", PandasUDFType.SCALAR)
def fp1(x):
    return x + 1000

@udf("int")
def fu1(x):
    return x + 10

# Test selecting "pandas iter udf/pandas udf/sql udf" expressions at the same time.
# Note that in this case `fi1("a"), fi2("b"), fi3("a", "b")` will generate only one plan,
# and `fu1("a")`, `fp1("a")` will generate another two separate plans.
df.select(fi1("a"), fi2("b"), fi3("a", "b"), fu1("a"), fp1("a")).show()

# Test chaining two pandas iter udfs together.
# Note that in this case `fi2(fi1("a"))` will generate only one plan.
# Also note that the init stuff/close stuff call order will be like:
# (debug output following)
# DBG: fi2: do init stuff, partitionId=0
# DBG: fi1: do init stuff, partitionId=0
# DBG: fi1: do close stuff, partitionId=0
# DBG: fi2: do close stuff, partitionId=0
df.select(fi2(fi1("a"))).show()

# Test a more complex chain.
# Note that in this case `fi1("a"), fi2("a")` will generate one plan,
# and `fi3(fi1_output, fi2_output)` will generate another plan.
df.select(fi3(fi1("a"), fi2("a"))).show()
```
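The init/close ordering noted for the chained case is what lazy Python generators produce. The following Spark-free sketch assumes the chained UDFs are evaluated as nested generators (an assumption about the mechanism, not a statement about Spark's internals), with lists standing in for `pd.Series` batches and a `log` list standing in for the debug prints.

```python
log = []

def fi1(it):
    log.append("fi1: init")
    for batch in it:
        yield [x + 100 for x in batch]
    log.append("fi1: close")

def fi2(it):
    log.append("fi2: init")
    for batch in it:
        yield [x + 10000 for x in batch]
    log.append("fi2: close")

# Nothing runs until the outer generator is consumed, so fi2 starts first,
# then pulls from fi1; fi1 finishes first when the input is exhausted.
output = list(fi2(fi1(iter([[1, 3]]))))
print(output)  # [[10101, 10103]]
print(log)     # ['fi2: init', 'fi1: init', 'fi1: close', 'fi2: close']
```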

## How was this patch tested?

To be added.

Closes #24643 from WeichenXu123/pandas_udf_iter.

Lead-authored-by: WeichenXu <weichen.xu@databricks.com>

Co-authored-by: Xiangrui Meng <meng@databricks.com>

Signed-off-by: Xiangrui Meng <meng@databricks.com>

## What changes were proposed in this pull request?

This PR addresses SPARK-23619: https://issues.apache.org/jira/browse/SPARK-23619

It adds additional comments indicating the default column names for the `explode` and `posexplode` functions in Spark-SQL.

Functions for which comments have been updated so far:

* stack

* inline

* explode

* posexplode

* explode_outer

* posexplode_outer

## How was this patch tested?

This is just a change in the comments. The package builds and tests successfully after the change.

Closes #23748 from jashgala/SPARK-23619.

Authored-by: Jash Gala <jashgala@amazon.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In this PR, I propose to deprecate `from_utc_timestamp()` and `to_utc_timestamp()`, and to disable them by default. The functions can be re-enabled via the SQL config `spark.sql.legacy.utcTimestampFunc.enabled`. By default, any call to these functions throws an analysis exception.

One of the reasons for the deprecation is that the functions violate the semantics of `TimestampType`, which is the number of microseconds since the epoch in the UTC time zone. Shifting microseconds since the epoch by a time zone offset doesn't make sense, because the result no longer represents microseconds since the epoch in UTC and cannot be considered a `TimestampType`.
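The argument can be illustrated with plain `datetime` arithmetic. This is only a sketch of the reasoning, not Spark code: the helper names and the fixed UTC-08:00 offset are hypothetical choices for the example.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
MICROSECOND = timedelta(microseconds=1)

def to_micros(dt):
    # Microseconds since the epoch in UTC, the stated meaning of TimestampType.
    return (dt - EPOCH) // MICROSECOND

def from_micros(micros):
    return EPOCH + micros * MICROSECOND

instant = datetime(2019, 1, 1, 12, 0, tzinfo=timezone.utc)
micros = to_micros(instant)

# Hypothetical fixed-offset zone used only for this example.
offset = timedelta(hours=-8)

# Rendering the instant in another zone leaves the stored value untouched.
rendered = from_micros(micros).astimezone(timezone(offset))

# Shifting the stored value by the offset yields a different instant.
shifted = from_micros(micros + offset // MICROSECOND)

print(rendered == instant)  # True: same instant, different wall clock
print(shifted == instant)   # False: the value now denotes 04:00 UTC
```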

## How was this patch tested?

The changes were tested by `DateExpressionsSuite` and `DateFunctionsSuite`.

Closes #24195 from MaxGekk/conv-utc-timestamp-deprecate.

Lead-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Hyukjin Kwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The `hashSeed` method allocates 64 bytes instead of 8. The extra bytes are always zero (the default behavior of `ByteBuffer`), so they can be excluded from the hash calculation because they don't differentiate inputs.
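A small sketch of why the extra bytes carry no information, using Python's `struct` in place of `ByteBuffer`. The seed value is hypothetical, and big-endian order is used because that is `ByteBuffer`'s default.

```python
import struct

seed = 42  # hypothetical seed value

# A 64-bit long occupies 8 bytes.
eight_bytes = struct.pack(">q", seed)

# A 64-byte buffer holding the same long is zero-filled after byte 8,
# mirroring a freshly allocated ByteBuffer.
oversized = eight_bytes.ljust(64, b"\x00")
padding = oversized[8:]

print(len(eight_bytes), len(oversized))  # 8 64
print(padding == bytes(56))              # True: 56 constant zero bytes
```

Because the padding is the same for every seed, hashing only the first 8 bytes distinguishes inputs just as well.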

## How was this patch tested?

By running the existing tests - XORShiftRandomSuite

Closes #20793 from MaxGekk/hash-buff-size.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This introduces a new SQL function 'xxhash64' for getting a 64-bit hash of an arbitrary number of columns.

This is designed to exactly mimic the 32-bit `hash`, which uses MurmurHash3. The name is designed to be more future-proof than the 'hash', by indicating the exact algorithm used, similar to md5 and the sha hashes.

## How was this patch tested?

The tests for the existing `hash` function were duplicated to run with `xxhash64`.

Closes #24019 from huonw/hash64.

Authored-by: Huon Wilson <Huon.Wilson@data61.csiro.au>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This is a followup of https://github.com/apache/spark/pull/23882 to handle binary math/string functions. For instance, see the cases below:

**Before:**

```python
>>> from pyspark.sql.functions import lit, ascii
>>> spark.range(1).select(lit('a').alias("value")).select(ascii("value"))
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
  File "/.../spark/python/pyspark/sql/functions.py", line 51, in _
    jc = getattr(sc._jvm.functions, name)(col._jc if isinstance(col, Column) else col)
  File "/.../spark/python/lib/py4j-0.10.8.1-src.zip/py4j/java_gateway.py", line 1286, in __call__
  File "/.../spark/python/pyspark/sql/utils.py", line 63, in deco
    return f(*a, **kw)
  File "/.../spark/python/lib/py4j-0.10.8.1-src.zip/py4j/protocol.py", line 332, in get_return_value
py4j.protocol.Py4JError: An error occurred while calling z:org.apache.spark.sql.functions.ascii. Trace:
py4j.Py4JException: Method ascii([class java.lang.String]) does not exist
    at py4j.reflection.ReflectionEngine.getMethod(ReflectionEngine.java:318)
    at py4j.reflection.ReflectionEngine.getMethod(ReflectionEngine.java:339)
    at py4j.Gateway.invoke(Gateway.java:276)
    at py4j.commands.AbstractCommand.invokeMethod(AbstractCommand.java:132)
    at py4j.commands.CallCommand.execute(CallCommand.java:79)
    at py4j.GatewayConnection.run(GatewayConnection.java:238)
    at java.lang.Thread.run(Thread.java:748)
```

```python
>>> from pyspark.sql.functions import atan2
>>> spark.range(1).select(atan2("id", "id"))
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
  File "/.../spark/python/pyspark/sql/functions.py", line 78, in _
    jc = getattr(sc._jvm.functions, name)(col1._jc if isinstance(col1, Column) else float(col1),
ValueError: could not convert string to float: id
```

**After:**

```python
>>> from pyspark.sql.functions import lit, ascii
>>> spark.range(1).select(lit('a').alias("value")).select(ascii("value"))
DataFrame[ascii(value): int]
```

```python
>>> from pyspark.sql.functions import atan2
>>> spark.range(1).select(atan2("id", "id"))
DataFrame[ATAN2(id, id): double]
```

Note that,

- This PR causes a slight behaviour change for math functions. For instance, numbers as strings (e.g., `"1"`) were previously supported as arguments of binary math functions. After this PR, they are treated as column names.

- I also intentionally didn't document this behaviour change, since we're heading towards Spark 3.0 and I don't think numbers as strings make much sense in math functions.

- There is another exception, `when`, which takes strings as literal values, as shown below. This PR doesn't fix this ambiguity.

```python
>>> from pyspark.sql.functions import col, lit, when
>>> spark.range(1).select(when(lit(True), col("id"))).show()
```

```
+--------------------------+
|CASE WHEN true THEN id END|
+--------------------------+
|                         0|
+--------------------------+
```

```python
>>> spark.range(1).select(when(lit(True), "id")).show()
```

```
+--------------------------+
|CASE WHEN true THEN id END|
+--------------------------+
|                        id|
+--------------------------+
```

This PR also changes the helper function names, as follows.

https://github.com/apache/spark/pull/23882 changed them to:

- Rename `_create_function` to `_create_name_function`.

- Define a new `_create_function` that takes strings as column names.

In this PR, I propose to:

- Revert the `_create_name_function` name to `_create_function`.

- Define a new `_create_function_over_column` that takes strings as column names.

## How was this patch tested?

Some unit tests were added for binary math / string functions.

Closes #24121 from HyukjinKwon/SPARK-26979.

Authored-by: Hyukjin Kwon <gurwls223@apache.org>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Most SQL functions defined in `spark.sql.functions` have two calling patterns, one with a Column object as input, and another with a string representing a column name, which is then converted into a Column object internally.

There are, however, a few notable exceptions:

- lower()

- upper()

- abs()

- bitwiseNOT()

- ltrim()

- rtrim()

- trim()

- ascii()

- base64()

- unbase64()

While this doesn't break anything, as you can easily create a Column object yourself prior to passing it to one of these functions, it has two undesirable consequences:

1. It is surprising - it breaks coder's expectations when they are first starting with Spark. Every API should be as consistent as possible, so as to make the learning curve smoother and to reduce causes for human error;

2. It gets in the way of stylistic conventions. Most of the time it makes Python code more readable to use literal names, and the API provides ample support for that, but these few exceptions prevent this pattern from being universally applicable.

This patch is meant to fix the aforementioned problem.

### Effect

This patch **enables** support for passing column names as input to those functions mentioned above.

### Side effects

This PR also **fixes** an issue with some functions being defined multiple times by using `_create_function()`.

### How it works

`_create_function()` was redefined to always convert the argument to a Column object. The old implementation has been kept under `_create_name_function()`, and is still being used to generate the following special functions:

- lit()

- col()

- column()

- asc()

- desc()

- asc_nulls_first()

- asc_nulls_last()

- desc_nulls_first()

- desc_nulls_last()

This is because these functions can only take a column name as their argument. This is not a problem, as their semantics require so.
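The idea behind the redefined `_create_function()` can be sketched in pure Python. This is not PySpark's implementation (which delegates to the JVM): the `Column` class below is a hypothetical stand-in that only records an expression string, and `_to_column` is a helper name invented for the example.

```python
class Column:
    """Hypothetical stand-in for a Column; it only records an expression string."""
    def __init__(self, expr):
        self.expr = expr

def _to_column(col):
    # Accept either an existing Column or a column name given as a string.
    return col if isinstance(col, Column) else Column(col)

def _create_function(name):
    """Build a function that accepts a Column or a column name."""
    def _(col):
        return Column("%s(%s)" % (name, _to_column(col).expr))
    _.__name__ = name
    return _

lower = _create_function("lower")
by_name = lower("value")            # column name as a string
by_column = lower(Column("value"))  # explicit Column object
print(by_name.expr, by_column.expr)  # lower(value) lower(value)
```

Both calling patterns end up with the same expression, which is the consistency the patch is after.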

## How was this patch tested?

Ran ./dev/run-tests and tested it manually.

Closes #23882 from asmello/col-name-support-pyspark.

Authored-by: André Sá de Mello <amello@palantir.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This change adds support for returning `StructType` from a scalar Pandas UDF, where the return value of the function is a `pandas.DataFrame`. Nested structs are not supported and will raise an error; child types can be any other currently supported type.

## How was this patch tested?

Added additional unit tests to `test_pandas_udf_scalar`

Closes #23900 from BryanCutler/pyspark-support-scalar_udf-StructType-SPARK-23836.

Authored-by: Bryan Cutler <cutlerb@gmail.com>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

## What changes were proposed in this pull request?

This change aligns the argument name with the one used in the Scala version and in the documentation.

## How was this patch tested?

Closes #23357 from deepyaman/patch-1.

Authored-by: deepyaman <deepyaman.datta@utexas.edu>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In this PR, I propose to switch `DateFormatClass`, `ToUnixTimestamp`, `FromUnixTime`, and `UnixTime` to the java.time API for parsing and formatting dates and timestamps. The API has already been implemented by the `Timestamp`/`DateFormatter` classes. One benefit is that those classes support parsing timestamps with microsecond precision. The old behaviour can be switched on via the SQL config `spark.sql.legacy.timeParser.enabled` (`false` by default).

## How was this patch tested?

It was tested by existing test suites - `DateFunctionsSuite`, `DateExpressionsSuite`, `JsonSuite`, `CsvSuite`, `SQLQueryTestSuite` as well as PySpark tests.

Closes #23358 from MaxGekk/new-time-cast.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR implements a new feature - window aggregation Pandas UDF for bounded window.

#### Doc:

https://docs.google.com/document/d/14EjeY5z4-NC27-SmIP9CsMPCANeTcvxN44a7SIJtZPc/edit#heading=h.c87w44wcj3wj

#### Example:

```python
from pyspark.sql.functions import pandas_udf, PandasUDFType
from pyspark.sql.window import Window

df = spark.range(0, 10, 2).toDF('v')

w1 = Window.partitionBy().orderBy('v').rangeBetween(-2, 2)

w2 = Window.partitionBy().orderBy('v').rowsBetween(-2, 2)

@pandas_udf('double', PandasUDFType.GROUPED_AGG)
def avg(v):
    return v.mean()

df.withColumn('v_mean', avg(df['v']).over(w1)).show()
# +---+------+
# |  v|v_mean|
# +---+------+
# |  0|   1.0|
# |  2|   2.0|
# |  4|   4.0|
# |  6|   6.0|
# |  8|   7.0|
# +---+------+

df.withColumn('v_mean', avg(df['v']).over(w2)).show()
# +---+------+
# |  v|v_mean|
# +---+------+
# |  0|   2.0|
# |  2|   3.0|
# |  4|   4.0|
# |  6|   5.0|
# |  8|   6.0|
# +---+------+
```

#### High level changes:

This PR modifies the existing `WindowInPandasExec` physical node to deal with bounded (growing, shrinking and sliding) windows.

* `WindowInPandasExec` now shares the same base class as `WindowExec` and shares utility functions. See `WindowExecBase`.

* `WindowFunctionFrame` now has two new functions, `currentLowerBound` and `currentUpperBound`, which return the lower and upper window bounds for the current output row. It is also modified to allow `AggregateProcessor` == null. A null aggregate processor is used for `WindowInPandasExec`, where we don't have an aggregator and only use the lower and upper bound functions from `WindowFunctionFrame`.

* The biggest change is in `WindowInPandasExec`, where it is modified to take `currentLowerBound` and `currentUpperBound` and write those values together with the input data to the python process for rolling window aggregation. See `WindowInPandasExec` for more details.
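The bound-passing idea in the bullets above can be sketched in plain Python for a `rowsBetween(-2, 2)` frame. This is an illustration only, not the Scala implementation; `rows_frame_bounds` is a hypothetical helper that plays the role of the per-row lower and upper bounds.

```python
def rows_frame_bounds(n_rows, lower, upper):
    """Yield (lower_bound, upper_bound) slice indices for each output row."""
    for i in range(n_rows):
        # Clamp the frame to the partition; the upper bound is exclusive.
        yield max(0, i + lower), min(n_rows, i + upper + 1)

values = [0, 2, 4, 6, 8]

# For every output row, aggregate only the slice between its two bounds.
v_mean = [
    sum(values[lo:hi]) / len(values[lo:hi])
    for lo, hi in rows_frame_bounds(len(values), -2, 2)
]
print(v_mean)  # [2.0, 3.0, 4.0, 5.0, 6.0]
```

Sending the data once together with per-row bounds lets the Python side slice the same batch repeatedly instead of receiving one copy of the data per row.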

#### Discussion

In benchmarking, I found that the numpy variant of the rolling window UDF is much faster than the pandas version:

* Spark SQL window function: 20s

* Pandas variant: ~80s

* Numpy variant: 10s

* Numpy variant with numba: 4s

Allowing a numpy variant of the vectorized UDFs is something I want to discuss because of the performance improvement, but it doesn't have to be in this PR.

## How was this patch tested?

New tests

Closes #22305 from icexelloss/SPARK-24561-bounded-window-udf.

Authored-by: Li Jin <ice.xelloss@gmail.com>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Currently, duplicated map keys are not handled consistently. For example, map lookup respects the duplicated key that appears first, `Dataset.collect` only keeps the duplicated key that appears last, `MapKeys` returns duplicated keys, etc.

This PR proposes to remove duplicated map keys with a last-wins policy, to follow Java/Scala and Presto. It only applies to built-in functions, as users can create maps with duplicated keys via private APIs anyway.

Updated functions: `CreateMap`, `MapFromArrays`, `MapFromEntries`, `StringToMap`, `MapConcat`, `TransformKeys`.
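A minimal sketch of the last-wins policy, using a Python `dict` rather than Spark's map data structures. The function name below is a hypothetical Python analogue, and the sketch says nothing about the entry order Spark keeps after deduplication.

```python
def map_from_arrays(keys, values):
    """Build a map from parallel key/value lists; a repeated key keeps its last value."""
    result = {}
    for key, value in zip(keys, values):
        result[key] = value  # a later occurrence overwrites an earlier one
    return result

deduped = map_from_arrays(["a", "b", "a"], [1, 2, 3])
print(deduped)       # {'a': 3, 'b': 2}
print(deduped["a"])  # 3: lookup and collected contents now agree
```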

For other places:

1. data source v1 doesn't have this problem, as users need to provide a java/scala map, which can't have duplicated keys.

2. data source v2 may have this problem. I've added a note to `ArrayBasedMapData` to ask the caller to take care of duplicated keys. In the future we should enforce it in the stable data APIs for data source v2.

3. UDF doesn't have this problem, as users need to provide a java/scala map. Same as data source v1.

4. file format. I checked all of them and only parquet does not enforce it. For backward compatibility reasons I change nothing but leave a note saying that the behavior will be undefined if users write map with duplicated keys to parquet files. Maybe we can add a config and fail by default if parquet files have map with duplicated keys. This can be done in followup.

## How was this patch tested?

updated tests and new tests

Closes #23124 from cloud-fan/map.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The following 5 functions were removed from branch-2.4:

- map_entries

- map_filter

- transform_values

- transform_keys

- map_zip_with

We should update the since version to 3.0.0.

## How was this patch tested?

Existing tests.

Closes #23082 from ueshin/issues/SPARK-26112/since.

Authored-by: Takuya UESHIN <ueshin@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

- Remove some AccumulableInfo .apply() methods

- Remove non-label-specific multiclass precision/recall/fScore in favor of accuracy

- Remove toDegrees/toRadians in favor of degrees/radians (SparkR: only deprecated)

- Remove approxCountDistinct in favor of approx_count_distinct (SparkR: only deprecated)

- Remove unused Python StorageLevel constants

- Remove Dataset unionAll in favor of union

- Remove unused multiclass option in libsvm parsing

- Remove references to deprecated spark configs like spark.yarn.am.port

- Remove TaskContext.isRunningLocally

- Remove ShuffleMetrics.shuffle* methods

- Remove BaseReadWrite.context in favor of session

- Remove Column.!== in favor of =!=

- Remove Dataset.explode

- Remove Dataset.registerTempTable

- Remove SQLContext.getOrCreate, setActive, clearActive, constructors

Not touched yet

- everything else in MLLib

- HiveContext

- Anything deprecated more recently than 2.0.0, generally

## How was this patch tested?

Existing tests

Closes #22921 from srowen/SPARK-25908.

Lead-authored-by: Sean Owen <sean.owen@databricks.com>

Co-authored-by: hyukjinkwon <gurwls223@apache.org>

Co-authored-by: Sean Owen <srowen@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

The new function `to_csv()` takes a struct and converts it to a CSV string using the passed CSV options. It accepts the same CSV options as the CSV data source.

## How was this patch tested?

Added `CsvExpressionsSuite`, `CsvFunctionsSuite` as well as R, Python and SQL tests similar to tests for `to_json()`

Closes #22626 from MaxGekk/to_csv.

Lead-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In this PR, I propose to add a new function, *schema_of_csv()*, which infers the schema of a CSV string literal. The result of the function is a string containing a schema in DDL format. For example:

```sql
select schema_of_csv('1|abc', map('delimiter', '|'))
```

```
struct<_c0:int,_c1:string>
```
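To make the shape of the result concrete, here is a toy Python analogue. It is not Spark's inference logic: `toy_schema_of_csv` and `infer_field_type` are hypothetical names, and the type rules (try int, then double, else string) are a deliberate simplification.

```python
def infer_field_type(token):
    """Toy inference: try int, then double, otherwise fall back to string."""
    for type_name, parser in (("int", int), ("double", float)):
        try:
            parser(token)
            return type_name
        except ValueError:
            pass
    return "string"

def toy_schema_of_csv(line, delimiter=","):
    # Fields get positional default names (_c0, _c1, ...) as in the example above.
    fields = [
        "_c%d:%s" % (i, infer_field_type(token))
        for i, token in enumerate(line.split(delimiter))
    ]
    return "struct<%s>" % ",".join(fields)

ddl = toy_schema_of_csv("1|abc", delimiter="|")
print(ddl)  # struct<_c0:int,_c1:string>
```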

## How was this patch tested?

Added new tests to `CsvFunctionsSuite`, `CsvExpressionsSuite` and SQL tests to `csv-functions.sql`

Closes #22666 from MaxGekk/schema_of_csv-function.

Lead-authored-by: hyukjinkwon <gurwls223@apache.org>

Co-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

The main purpose of `schema_of_json` is to be used in combination with `from_json` (to make up for the lack of schema inference), which takes its schema only as a literal; however, `schema_of_json` currently allows JSON input as non-literal expressions (e.g., a column).

This was mistakenly allowed. We don't have to take usages other than the main purpose into account for now.

This PR is a followup that only allows literals for `schema_of_json`'s JSON input. We can allow non-literal expressions later, when they are needed or there is a use case for them.

## How was this patch tested?

Unit tests were added.

Closes #22775 from HyukjinKwon/SPARK-25447-followup.

Lead-authored-by: hyukjinkwon <gurwls223@apache.org>

Co-authored-by: Hyukjin Kwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

See the detailed information at https://issues.apache.org/jira/browse/SPARK-25841 on why these APIs should be deprecated and redesigned.

This patch also reverts 8acb51f08b which applies to 2.4.

## How was this patch tested?

Only deprecation and doc changes.

Closes #22841 from rxin/SPARK-25842.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In this PR, I propose to switch `from_json` to `FailureSafeParser`, to make the function compatible with `PERMISSIVE` mode by default, and to support the `FAILFAST` mode as well. The `DROPMALFORMED` mode is not supported by `from_json`.
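The difference between the two supported modes can be sketched with Python's `json` module. This is a rough analogue, not Spark's parser: `parse_json` is a hypothetical helper, and returning `None` for a malformed record is an assumption standing in for whatever null-bearing result `PERMISSIVE` produces.

```python
import json

SUPPORTED_MODES = ("PERMISSIVE", "FAILFAST")

def parse_json(record, mode="PERMISSIVE"):
    if mode not in SUPPORTED_MODES:
        # Mirrors the statement that DROPMALFORMED is not supported.
        raise ValueError("unsupported mode: %s" % mode)
    try:
        return json.loads(record)
    except json.JSONDecodeError:
        if mode == "FAILFAST":
            raise       # surface the parsing error to the caller
        return None     # PERMISSIVE: a malformed record becomes a null result

good = parse_json('{"a": 1}')
bad = parse_json('{"a": ')
print(good, bad)  # {'a': 1} None
```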

## How was this patch tested?

It was tested by the existing `JsonSuite`/`CSVSuite`, `JsonFunctionsSuite` and `JsonExpressionsSuite`, as well as by new tests for `from_json` that check the different modes.

Closes #22237 from MaxGekk/from_json-failuresafe.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR adds a new function, `from_csv()`, similar to `from_json()`, to parse columns with CSV strings. I added the following method:

```Scala
def from_csv(e: Column, schema: StructType, options: Map[String, String]): Column
```

and this signature to call it from Python, R and Java:

```Scala
def from_csv(e: Column, schema: String, options: java.util.Map[String, String]): Column
```

## How was this patch tested?

Added new test suites `CsvExpressionsSuite`, `CsvFunctionsSuite` and sql tests.

Closes #22379 from MaxGekk/from_csv.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Hyukjin Kwon <gurwls223@gmail.com>

Co-authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

We are facing some problems with type conversions between Python data and SQL types in UDFs (Pandas UDFs as well).

It's even difficult to identify the problems (see https://github.com/apache/spark/pull/20163 and https://github.com/apache/spark/pull/22610).

This PR aims to internally document the type conversion table. Some of the conversions look buggy and we should fix them.

```python
import sys
import array
import datetime
from decimal import Decimal

from pyspark.sql import Row
from pyspark.sql.types import *
from pyspark.sql.functions import udf

if sys.version >= '3':
    long = int

data = [
    None,
    True,
    1,
    long(1),
    "a",
    u"a",
    datetime.date(1970, 1, 1),
    datetime.datetime(1970, 1, 1, 0, 0),
    1.0,
    array.array("i", [1]),
    [1],
    (1,),
    bytearray([65, 66, 67]),
    Decimal(1),
    {"a": 1},
    Row(kwargs=1),
    Row("namedtuple")(1),
]

types = [
    BooleanType(),
    ByteType(),
    ShortType(),
    IntegerType(),
    LongType(),
    StringType(),
    DateType(),
    TimestampType(),
    FloatType(),
    DoubleType(),
    ArrayType(IntegerType()),
    BinaryType(),
    DecimalType(10, 0),
    MapType(StringType(), IntegerType()),
    StructType([StructField("_1", IntegerType())]),
]

df = spark.range(1)
results = []
count = 0
total = len(types) * len(data)
spark.sparkContext.setLogLevel("FATAL")

# Try every (SQL type, Python value) pair and record the result, or "X" on failure.
for t in types:
    result = []
    for v in data:
        try:
            row = df.select(udf(lambda: v, t)()).first()
            ret_str = repr(row[0])
        except Exception:
            ret_str = "X"
        result.append(ret_str)
        progress = "SQL Type: [%s]\n Python Value: [%s(%s)]\n Result Python Value: [%s]" % (
            t.simpleString(), str(v), type(v).__name__, ret_str)
        count += 1
        print("%s/%s:\n %s" % (count, total, progress))
    results.append([t.simpleString()] + list(map(str, result)))

schema = ["SQL Type \\ Python Value(Type)"] + list(map(lambda v: "%s(%s)" % (str(v), type(v).__name__), data))
strings = spark.createDataFrame(results, schema=schema)._jdf.showString(20, 20, False)
print("\n".join(map(lambda line: " # %s # noqa" % line, strings.strip().split("\n"))))
```

This table was generated under Python 2 but the code above is Python 3 compatible as well.

## How was this patch tested?

Manually tested and lint check.

Closes #22655 from HyukjinKwon/SPARK-25666.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

For Pandas UDFs, we get the Arrow type from the defined Catalyst return data type of the UDF and use it to serialize the data. If the defined return data type doesn't match the actual type of the `pandas.Series` returned by the Pandas UDF, there is a risk of returning incorrect data from the Python side.

Currently we don't have a reliable approach to check whether the data conversion is safe. For now, we add documentation to notify users about this. When a future PyArrow upgrade makes such a check possible, we should add an option to enable it.

## How was this patch tested?

Only document change.

Closes #22610 from viirya/SPARK-25461.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Adds support for setting a limit in the SQL `split` function.
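A rough Python analogue of splitting with a limit. It assumes the semantics follow Java's `String.split(regex, limit)` for a positive limit (at most `limit` elements, with the remainder left in the last one) and that a non-positive limit applies the pattern as often as possible. `split_with_limit` is a hypothetical helper, and Python's `re` dialect is not identical to Java's.

```python
import re

def split_with_limit(text, pattern, limit=-1):
    if limit == 1:
        # re.split treats maxsplit=0 as "no limit", so handle this case explicitly.
        return [text]
    if limit > 1:
        # At most `limit` elements means at most `limit - 1` splits.
        return re.split(pattern, text, maxsplit=limit - 1)
    # Non-positive limit: split as many times as possible.
    return re.split(pattern, text)

limited = split_with_limit("oneAtwoBthreeC", "[ABC]", 2)
unlimited = split_with_limit("oneAtwoBthreeC", "[ABC]", -1)
print(limited)    # ['one', 'twoBthreeC']
print(unlimited)  # ['one', 'two', 'three', '']
```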

## How was this patch tested?

1. Updated unit tests

2. Tested using Scala spark shell

Closes #22227 from phegstrom/master.

Authored-by: Parker Hegstrom <phegstrom@palantir.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This patch is to bump the master branch version to 3.0.0-SNAPSHOT.

## How was this patch tested?

N/A

Closes #22606 from gatorsmile/bump3.0.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

In this PR, I propose to extend the `schema_of_json()` function to accept JSON options, since they can affect schema inference. The purpose is to support the same options that `from_json` can use during schema inference.

## How was this patch tested?

Added SQL, Python and Scala tests (`JsonExpressionsSuite` and `JsonFunctionsSuite`) that check that the JSON options are used.

Closes #22442 from MaxGekk/schema_of_json-options.

Authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

We have an agreement that the behavior of `from/to_utc_timestamp` is correct, although the function itself doesn't make much sense in Spark: https://issues.apache.org/jira/browse/SPARK-23715

This PR improves the document.

## How was this patch tested?

N/A

Closes #22543 from cloud-fan/doc.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR introduces a new JSON option, `pretty`, which turns on the `DefaultPrettyPrinter` of `Jackson`'s JSON generator. The new option is useful for exploring deeply nested columns and for converting JSON columns to a more readable representation (see the added test).

## How was this patch tested?

Added a round-trip test which converts a JSON string to a pretty representation via `from_json()` and `to_json()`.

Closes #22534 from MaxGekk/pretty-json.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

(This change is a subset of the changes needed for the JIRA; see https://github.com/apache/spark/pull/22231)

## What changes were proposed in this pull request?

Use raw strings and simpler regex syntax consistently in Python, which also avoids warnings from pycodestyle about accidentally relying on Python's non-escaping of non-reserved chars in normal strings. Also, fix a few long lines.
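A short illustration of the raw-string convention. The pattern and input below are made up for the example. In a normal string, an unrecognized escape such as `\d` is kept as a backslash followed by `d`, which is the non-escaping behaviour the linter warns about relying on.

```python
import re

# A raw string and an explicitly escaped normal string spell the same pattern.
pattern_raw = r"(\d+)\.(\d+)"
pattern_escaped = "(\\d+)\\.(\\d+)"
print(pattern_raw == pattern_escaped)  # True

# The raw form is easier to read and does not depend on Python leaving
# unrecognized escapes untouched in normal strings.
match = re.match(pattern_raw, "3.0")
print(match.groups())  # ('3', '0')
```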

## How was this patch tested?

Existing tests, and some manual double-checking of the behavior of regexes in Python 2/3 to be sure.

Closes #22400 from srowen/SPARK-25238.2.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Clarify docstring for Scalar functions

## How was this patch tested?

Adds a unit test showing usage similar to wordcount; there is an existing unit test for an array of floats as well.

Closes #20908 from holdenk/SPARK-23672-document-support-for-nested-return-types-in-scalar-with-arrow-udfs.

Authored-by: Holden Karau <holden@pigscanfly.ca>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

## What changes were proposed in this pull request?

This PR proposes to add another example for multiple grouping keys in the grouped aggregate pandas UDF, since this feature can still confuse users.
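
To make "multiple grouping keys" concrete, here is a plain-Python sketch of the idea: rows are grouped by a composite key and each group is reduced to one scalar. It does not use Spark or pandas; the rows and the choice of `mean` as the aggregate are assumptions for illustration only.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical rows of (id, id2, v); the first two fields are the grouping keys.
rows = [
    (1, "a", 1.0),
    (1, "a", 2.0),
    (1, "b", 3.0),
    (2, "a", 5.0),
    (2, "a", 10.0),
]

# Group values by the composite key (id, id2).
groups = defaultdict(list)
for id1, id2, v in rows:
    groups[(id1, id2)].append(v)

# Reduce each group to a single scalar, as a grouped aggregate does.
result = {key: mean(values) for key, values in groups.items()}
print(result)
```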

## How was this patch tested?

Manually tested and documentation built.

Closes #22329 from HyukjinKwon/SPARK-25328.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

## What changes were proposed in this pull request?

In this PR, I propose to extend `to_json` to support any type as the element type of input arrays. This allows converting arrays of primitive types and arrays of arrays. For example:

```
select to_json(array('1','2','3'))
> ["1","2","3"]

select to_json(array(array(1,2,3),array(4)))
> [[1,2,3],[4]]
```

## How was this patch tested?

Added a couple of SQL tests for arrays of primitive types and arrays of arrays. I also added a round-trip test `from_json` -> `to_json`.

Closes #22226 from MaxGekk/to_json-array.

Authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Include `PandasUDFType` in the wildcard import (`import *`) of `pyspark.sql.functions`.

## How was this patch tested?

Ran the test case from the JIRA [spark-25105](https://jira.apache.org/jira/browse/SPARK-25105?jql=project%20%3D%20SPARK%20AND%20component%20in%20(ML%2C%20PySpark%2C%20SQL%2C%20%22Structured%20Streaming%22)) in the pyspark shell.

I manually tested in the pyspark shell:

before:

```
>>> from pyspark.sql.functions import *
>>> foo = pandas_udf(lambda x: x, 'v int', PandasUDFType.GROUPED_MAP)
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
NameError: name 'PandasUDFType' is not defined
>>>
```

after:

```
>>> from pyspark.sql.functions import *
>>> foo = pandas_udf(lambda x: x, 'v int', PandasUDFType.GROUPED_MAP)
>>>
```
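
For readers unfamiliar with how a name can go missing from a wildcard import, the following sketch shows the general Python mechanism: when a module defines `__all__`, `import *` brings in only the names listed there. The module `demo_functions` and its contents are hypothetical stand-ins built at runtime; this is not the code of `pyspark.sql.functions`, and whether the fix edited `__all__` is an assumption here.

```python
import sys
import types

# Hypothetical module that defines __all__ but omits one public class.
demo = types.ModuleType("demo_functions")
exec(
    "__all__ = ['pandas_udf']\n"
    "def pandas_udf():\n"
    "    return 'udf'\n"
    "class PandasUDFType:\n"
    "    GROUPED_MAP = 'grouped_map'\n",
    demo.__dict__,
)
sys.modules["demo_functions"] = demo

# Wildcard import before the fix: only names in __all__ are imported.
before = {}
exec("from demo_functions import *", before)
missing_before = "PandasUDFType" not in before

# After adding the name to __all__, the wildcard import picks it up.
demo.__all__.append("PandasUDFType")
after = {}
exec("from demo_functions import *", after)
present_after = "PandasUDFType" in after

print(missing_before, present_after)
```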

Closes #22100 from kevinyu98/spark-25105.

Authored-by: Kevin Yu <qyu@us.ibm.com>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

## What changes were proposed in this pull request?

The PR removes a restriction on the element types of array types that exists in `from_json` for the root type. Currently, the function can handle only arrays of structs; even arrays of primitive types are disallowed. The PR allows arrays of any type currently supported by the JSON datasource. Here is an example of an array of a primitive type:

```
scala> import org.apache.spark.sql.functions._
scala> import org.apache.spark.sql.types._
scala> val df = Seq("[1, 2, 3]").toDF("a")
scala> val schema = new ArrayType(IntegerType, false)
scala> val arr = df.select(from_json($"a", schema))
scala> arr.printSchema
root
 |-- jsontostructs(a): array (nullable = true)
 |    |-- element: integer (containsNull = true)
```

and the result of converting the JSON string to the `ArrayType`:

```
scala> arr.show
+----------------+
|jsontostructs(a)|
+----------------+
|       [1, 2, 3]|
+----------------+
```

## How was this patch tested?

I added a few positive and negative tests:

- array of primitive types

- array of arrays

- array of structs

- array of maps

Closes #21439 from MaxGekk/from_json-array.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

The PR adds the SQL function `array_intersect`. The behavior of the function is based on Presto's.

This function returns an array of the elements in the intersection of array1 and array2.

Note: The order of elements in the result is not defined.
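
A plain-Python sketch of these semantics, not Spark's implementation. The helper name `array_intersect_sketch` is hypothetical, and dropping duplicates from the result is an assumption that the description above does not state explicitly. Because the result order is not defined, the usage compares sorted output.

```python
def array_intersect_sketch(array1, array2):
    """Return the distinct elements of array1 that also occur in array2."""
    lookup = set(array2)
    seen = set()
    result = []
    for x in array1:
        # Keep an element once, and only if it is present in both arrays.
        if x in lookup and x not in seen:
            seen.add(x)
            result.append(x)
    return result


intersection = array_intersect_sketch([1, 2, 2, 3], [2, 3, 4])
print(sorted(intersection))
```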

## How was this patch tested?

Added UTs

Author: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Closes #21102 from kiszk/SPARK-23913.

## What changes were proposed in this pull request?

The PR adds the SQL function `array_except`. The behavior of the function is based on Presto's.

This function returns an array of the elements in array1 but not in array2.

Note: The order of elements in the result is not defined.
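
The set-difference semantics can be sketched in plain Python as follows. This is an illustration rather than Spark's code: `array_except_sketch` is a hypothetical name, and removing duplicates is an assumption not spelled out in the description.

```python
def array_except_sketch(array1, array2):
    """Return the distinct elements of array1 that do not occur in array2."""
    excluded = set(array2)
    # dict.fromkeys drops duplicates while keeping first-seen order.
    return list(dict.fromkeys(x for x in array1 if x not in excluded))


difference = array_except_sketch([1, 2, 2, 3, 5], [2, 4])
print(sorted(difference))
```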

## How was this patch tested?

Added UTs.

Author: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Closes #21103 from kiszk/SPARK-23915.

## What changes were proposed in this pull request?

Update Pandas UDFs section in sql-programming-guide. Add section for grouped aggregate pandas UDF.

## How was this patch tested?

Author: Li Jin <ice.xelloss@gmail.com>

Closes #21887 from icexelloss/SPARK-23633-sql-programming-guide.

## What changes were proposed in this pull request?

This PR adds a new collection function: `shuffle`. It generates a random permutation of the given array. This implementation uses the "inside-out" version of the Fisher-Yates algorithm.
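
For reference, a minimal Python sketch of the inside-out Fisher-Yates shuffle, which builds the permutation in a new list and leaves the input untouched. The function name `shuffled` and the `rng` parameter are hypothetical; this is not the Scala code from the PR.

```python
import random


def shuffled(items, rng=random):
    """Return a random permutation of items without modifying the input."""
    result = []
    for i, item in enumerate(items):
        # Pick a slot j in [0, i]; randint is inclusive on both ends.
        j = rng.randint(0, i)
        if j == i:
            result.append(item)
        else:
            # Move the element at j to the new last slot, then put item at j.
            result.append(result[j])
            result[j] = item
    return result


source = list(range(10))
permutation = shuffled(source, random.Random(42))
print(permutation)
```

The inside-out variant fits a function that returns a new array, since it never needs to copy the input before shuffling.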

## How was this patch tested?

New tests are added to CollectionExpressionsSuite.scala and DataFrameFunctionsSuite.scala.

Author: Takuya UESHIN <ueshin@databricks.com>

Author: pkuwm <ihuizhi.lu@gmail.com>

Closes #21802 from ueshin/issues/SPARK-23928/shuffle.

## What changes were proposed in this pull request?

Add `sequence` to `functions.py`.

## How was this patch tested?

Add doctest.

Author: Huaxin Gao <huaxing@us.ibm.com>

Closes #21820 from huaxingao/spark-24868.

## What changes were proposed in this pull request?

The PR adds the SQL function `array_union`. The behavior of the function is based on Presto's.

This function returns an array of the elements in the union of array1 and array2.

Note: The order of elements in the result is not defined.
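
The union semantics, sketched in plain Python under the same caveats: `array_union_sketch` is a hypothetical helper, not Spark's implementation, and deduplication is an assumption beyond what the description states.

```python
def array_union_sketch(array1, array2):
    """Return the distinct elements that occur in array1 or array2."""
    # Concatenate, then drop duplicates while keeping first-seen order.
    return list(dict.fromkeys(list(array1) + list(array2)))


union = array_union_sketch([1, 2, 2], [2, 3])
print(sorted(union))
```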

## How was this patch tested?

Added UTs

Author: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Closes #21061 from kiszk/SPARK-23914.

## What changes were proposed in this pull request?

Implement map_concat high order function.

This implementation does not pick a winner when the specified maps have overlapping keys. Therefore, this implementation preserves existing duplicate keys in the maps and potentially introduces new duplicates (After discussion with ueshin, we settled on option 1 from [here](https://issues.apache.org/jira/browse/SPARK-23936?focusedCommentId=16464245&page=com.atlassian.jira.plugin.system.issuetabpanels%3Acomment-tabpanel#comment-16464245)).
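
A sketch of the "no winner" behavior described above. A Python `dict` cannot hold duplicate keys, so each map is modeled here as a list of `(key, value)` pairs; that representation and the name `map_concat_sketch` are assumptions made for illustration and do not reflect Spark's internal map layout.

```python
def map_concat_sketch(*maps):
    """Concatenate maps given as lists of (key, value) pairs, keeping duplicates."""
    entries = []
    for m in maps:
        # No deduplication: entries are appended in argument order.
        entries.extend(m)
    return entries


merged = map_concat_sketch([("a", 1), ("b", 2)], [("b", 3), ("c", 4)])
keys = [k for k, _ in merged]
print(merged)
```

The key `"b"` appears twice in the result, once from each input, which is the duplicate-preserving outcome the description refers to.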

## How was this patch tested?

- New tests

- Manual tests

- Run all sbt SQL tests

- Run all pyspark sql tests

Author: Bruce Robbins <bersprockets@gmail.com>

Closes #21073 from bersprockets/SPARK-23936.

## What changes were proposed in this pull request?

This PR supports column arguments for the timezone parameter of `from_utc_timestamp/to_utc_timestamp` (follow-up of #21693).

## How was this patch tested?

Added tests.

Author: Takeshi Yamamuro <yamamuro@apache.org>

Closes #21723 from maropu/SPARK-24673-FOLLOWUP.

## What changes were proposed in this pull request?

In this PR, I propose adding a new function, *schema_of_json()*, which infers the schema of a JSON string literal. The result of the function is a string containing the schema in DDL format.

One use case is using *schema_of_json()* in combination with *from_json()*. Currently, _from_json()_ requires a schema as a mandatory argument. The *schema_of_json()* function allows passing a JSON string as an example that has the same schema as the first argument of _from_json()_. For instance:

```sql
select from_json(json_column, schema_of_json('{"c1": [0], "c2": [{"c3":0}]}'))
from json_table;
```
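
To give a feel for what "infers a schema in DDL format" means, here is a deliberately simplified plain-Python sketch. The function `infer_ddl` is hypothetical and is not Spark's inference logic: the type names it emits, the rule of looking only at the first array element, and the fallback to `string` are all assumptions made for this example.

```python
import json


def infer_ddl(value):
    """Return a DDL-style type string for a parsed JSON value (simplified)."""
    # bool must be checked before int, since bool is a subclass of int.
    if isinstance(value, bool):
        return "boolean"
    if isinstance(value, int):
        return "bigint"
    if isinstance(value, float):
        return "double"
    if isinstance(value, str):
        return "string"
    if isinstance(value, list):
        # Assumption: the element type is taken from the first element only.
        element = infer_ddl(value[0]) if value else "string"
        return "array<" + element + ">"
    if isinstance(value, dict):
        fields = ",".join(k + ":" + infer_ddl(v) for k, v in value.items())
        return "struct<" + fields + ">"
    # null and anything else fall back to string in this sketch.
    return "string"


schema = infer_ddl(json.loads('{"c1": [0], "c2": [{"c3":0}]}'))
print(schema)
```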

## How was this patch tested?

Added new tests to `JsonFunctionsSuite` and `JsonExpressionsSuite`, and SQL tests to `json-functions.sql`.

Author: Maxim Gekk <maxim.gekk@databricks.com>

Closes #21686 from MaxGekk/infer_schema_json.