Make sure comma-separated paths get processed correctly in ResolvedDataSource for a HadoopFsRelationProvider

Author: Koert Kuipers <koert@tresata.com>

Closes #8416 from koertkuipers/feat-sql-comma-separated-paths.

Documentation for dropDuplicates() and drop_duplicates() is one and the same. Resolved the error in the example for drop_duplicates using the same approach used for groupby and groupBy, by indicating that dropDuplicates and drop_duplicates are aliases.

Author: asokadiggs <asoka.diggs@intel.com>

Closes #8930 from asokadiggs/jira-10782.

Python DataFrame.head/take now requires scanning all the partitions. This pull request changes them to delegate the actual implementation to Scala DataFrame (by calling DataFrame.take).

This is more of a hack to fix the issue in 1.5.1. A proper fix would be to change `executeCollect` and `executeTake` to return `InternalRow` rather than `Row`, which would eliminate the extra round-trip conversion.
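To illustrate why delegating to a take-style implementation helps, here is a simplified pure-Python model in which each partition is a plain list. `take` stops scanning as soon as it has `n` rows, while `collect_then_slice` materializes every partition first. Both helpers are hypothetical; Spark's actual `take` also grows the number of partitions it tries per round, which this sketch leaves out.

```python
def take(partitions, n):
    """Return the first n rows, scanning partitions only until n rows are found."""
    rows = []
    scanned = 0
    for part in partitions:
        if len(rows) >= n:
            break
        scanned += 1
        rows.extend(part[: n - len(rows)])
    return rows, scanned


def collect_then_slice(partitions, n):
    """The expensive alternative: materialize every partition, then slice."""
    rows = [row for part in partitions for row in part]
    return rows[:n], len(partitions)


parts = [[1, 2], [3], [4, 5]]
print(take(parts, 2))                # ([1, 2], 1): only the first partition is scanned
print(collect_then_slice(parts, 2))  # ([1, 2], 3): every partition is scanned
```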

Author: Reynold Xin <rxin@databricks.com>

Closes #8876 from rxin/SPARK-10731.

JIRA: https://issues.apache.org/jira/browse/SPARK-10446

Currently the method `join(right: DataFrame, usingColumns: Seq[String])` only supports inner join. It is more convenient to have it support other join types.
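As a rough model of what a using-columns join with a join type means, the sketch below joins two lists of dict rows on the `using` columns, keeps each using column only once, and fills the missing side with `None` for outer joins. The helper `join_using` and its `how` values are assumptions chosen for illustration, not the DataFrame API itself.

```python
def join_using(left, right, using, how="inner"):
    """Join two lists of dict rows on the columns in `using` (hypothetical helper)."""
    if how not in ("inner", "left_outer", "right_outer", "full_outer"):
        raise ValueError("unsupported join type: %s" % how)

    def key(row):
        return tuple(row[c] for c in using)

    # Non-join columns on each side, taken from the first row (rows share a schema).
    left_cols = [c for c in (left[0] if left else {}) if c not in using]
    right_cols = [c for c in (right[0] if right else {}) if c not in using]

    result, matched_right = [], set()
    for lrow in left:
        hits = [i for i, rrow in enumerate(right) if key(rrow) == key(lrow)]
        for i in hits:
            matched_right.add(i)
            row = dict(lrow)
            row.update((c, right[i][c]) for c in right_cols)
            result.append(row)
        if not hits and how in ("left_outer", "full_outer"):
            row = dict(lrow)
            row.update((c, None) for c in right_cols)
            result.append(row)

    if how in ("right_outer", "full_outer"):
        for i, rrow in enumerate(right):
            if i not in matched_right:
                # No left match: the using columns come from the right row.
                row = {c: rrow[c] for c in using}
                row.update((c, None) for c in left_cols)
                row.update((c, rrow[c]) for c in right_cols)
                result.append(row)
    return result


left = [{"id": 1, "a": "x"}, {"id": 2, "a": "y"}]
right = [{"id": 1, "b": 10}, {"id": 3, "b": 30}]
print(join_using(left, right, ["id"], how="left_outer"))
```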

Author: Liang-Chi Hsieh <viirya@appier.com>

Closes #8600 from viirya/usingcolumns_df.

Because `assertEquals` is deprecated, we need to change `assertEquals` to `assertEqual` in the existing Python unit tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #8814 from yanboliang/spark-10615.

Adding STDDEV support for DataFrame, using a one-pass online/parallel algorithm to compute variance. Please review the code change.
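For reference, here is a minimal pure-Python sketch of a one-pass, mergeable variance computation: Welford's update within a partition plus the pairwise merge formula of Chan et al. across partitions. This is the textbook technique the description refers to, not the Spark code itself; the `Moments` class and returning NaN when there are too few rows are assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class Moments:
    """Running count, mean and sum of squared deviations (M2) for one partition."""
    n: int = 0
    mean: float = 0.0
    m2: float = 0.0

    def update(self, x):
        # Welford's single-pass update for one new value.
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    def merge(self, other):
        # Chan et al.'s pairwise combination of two partial aggregates.
        if other.n == 0:
            return Moments(self.n, self.mean, self.m2)
        if self.n == 0:
            return Moments(other.n, other.mean, other.m2)
        n = self.n + other.n
        delta = other.mean - self.mean
        mean = self.mean + delta * other.n / n
        m2 = self.m2 + other.m2 + delta * delta * self.n * other.n / n
        return Moments(n, mean, m2)

    def stddev_samp(self):
        # Assumption: NaN when fewer than two rows are available.
        return math.sqrt(self.m2 / (self.n - 1)) if self.n > 1 else float("nan")

    def stddev_pop(self):
        return math.sqrt(self.m2 / self.n) if self.n > 0 else float("nan")


def stddev_parallel(partitions):
    """Aggregate each partition in one pass, then merge the partial results."""
    total = Moments()
    for part in partitions:
        partial = Moments()
        for x in part:
            partial.update(x)
        total = total.merge(partial)
    return total


m = stddev_parallel([[2, 4, 4], [4, 5, 5, 7, 9]])
print(round(m.stddev_pop(), 6))  # 2.0
```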

Author: JihongMa <linlin200605@gmail.com>

Author: Jihong MA <linlin200605@gmail.com>

Author: Jihong MA <jihongma@jihongs-mbp.usca.ibm.com>

Author: Jihong MA <jihongma@Jihongs-MacBook-Pro.local>

Closes #6297 from JihongMA/SPARK-SQL.

The `pyspark.sql.column.Column` object has a `__getitem__` method, which makes it iterable in Python. It has `__getitem__` so that, when a column holds a list or dict, you can access a particular element of it through the DataFrame API. Being iterable is just a side effect, and it can confuse people who are getting familiar with Spark DataFrames (for instance, because you can iterate over a column this way in a pandas DataFrame).

Issue reproduction:

```python
df = sqlContext.jsonRDD(sc.parallelize(['{"name": "El Magnifico"}']))
for i in df["name"]: print(i)  # loops forever: each index just yields another Column
```
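The loop in the reproduction never ends because Python falls back to calling `__getitem__(0)`, `__getitem__(1)`, and so on until an `IndexError` is raised, which a Column never does. Below is a minimal pure-Python sketch of the problem and of one way to block it, by defining `__iter__` to raise `TypeError`; `FakeColumn` and `SafeColumn` are hypothetical classes, and the actual patch may differ in detail.

```python
import itertools


class FakeColumn:
    """Hypothetical stand-in for Column: indexing always returns a new expression."""

    def __init__(self, expr):
        self.expr = expr

    def __getitem__(self, key):
        # Any key is accepted and IndexError is never raised, so the legacy
        # sequence-iteration protocol (__getitem__(0), (1), ...) never stops.
        return FakeColumn("%s[%r]" % (self.expr, key))


class SafeColumn(FakeColumn):
    def __iter__(self):
        # An explicit __iter__ takes precedence over the __getitem__ fallback.
        raise TypeError("Column is not iterable")


def is_iterable(obj):
    try:
        iter(obj)
    except TypeError:
        return False
    return True


# Take only three items: iterating FakeColumn without a bound would loop forever.
first_three = [c.expr for c in itertools.islice(FakeColumn("name"), 3)]
print(first_three)  # ['name[0]', 'name[1]', 'name[2]']
```

Element access keeps working on `SafeColumn`; only iteration is refused.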

Author: 0x0FFF <programmerag@gmail.com>

Closes #8574 from 0x0FFF/SPARK-10417.

This PR addresses issue [SPARK-10392](https://issues.apache.org/jira/browse/SPARK-10392)

The problem is that for the "start of epoch" date (1 Jan 1970), the PySpark class DateType returns `0` instead of a `datetime.date`, because of how its return statement is written: the internal value `0` is falsy and is returned as-is.

Issue reproduction on master:

```
>>> from pyspark.sql.types import *
>>> a = DateType()
>>> a.fromInternal(0)
0
>>> a.fromInternal(1)
datetime.date(1970, 1, 2)
```
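A minimal sketch of the pitfall and its fix, assuming (as the transcript suggests) that the internal value is the number of days since 1970-01-01 and that the original return statement has the form `v and ...`. Both function names are hypothetical.

```python
import datetime

EPOCH_ORDINAL = datetime.datetime(1970, 1, 1).toordinal()


def from_internal_buggy(v):
    # `v and ...` short-circuits: for v == 0 it returns 0 itself, not a date.
    return v and datetime.date.fromordinal(v + EPOCH_ORDINAL)


def from_internal_fixed(v):
    # Only None means "no value"; 0 is a legitimate day count (1970-01-01).
    if v is not None:
        return datetime.date.fromordinal(v + EPOCH_ORDINAL)
    return None


print(from_internal_buggy(0))  # 0
print(from_internal_fixed(0))  # 1970-01-01
```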

Author: 0x0FFF <programmerag@gmail.com>

Closes #8556 from 0x0FFF/SPARK-10392.

This PR addresses [SPARK-10162](https://issues.apache.org/jira/browse/SPARK-10162)

The issue is with the DataFrame `filter()` function when a `datetime.datetime` is passed to it:

* Timezone information of this datetime is ignored

* This datetime is assumed to be in local timezone, which depends on the OS timezone setting

The fix includes both a code change and a regression test. Problem reproduction code on master:

```python
import pytz
from datetime import datetime
from pyspark.sql import *
from pyspark.sql.types import *

# Run in the pyspark shell, where `sc` (the SparkContext) is predefined.
sqc = SQLContext(sc)
df = sqc.createDataFrame([], StructType([StructField("dt", TimestampType())]))
m1 = pytz.timezone('UTC')
m2 = pytz.timezone('Etc/GMT+3')  # POSIX sign convention: this zone is UTC-3
df.filter(df.dt > datetime(2000, 1, 1, tzinfo=m1)).explain()
df.filter(df.dt > datetime(2000, 1, 1, tzinfo=m2)).explain()
```

It gives the same timestamp ignoring time zone:

```
>>> df.filter(df.dt > datetime(2000, 01, 01, tzinfo=m1)).explain()
Filter (dt#0 > 946713600000000)
Scan PhysicalRDD[dt#0]
>>> df.filter(df.dt > datetime(2000, 01, 01, tzinfo=m2)).explain()
Filter (dt#0 > 946713600000000)
Scan PhysicalRDD[dt#0]
```

After the fix:

```
>>> df.filter(df.dt > datetime(2000, 01, 01, tzinfo=m1)).explain()
Filter (dt#0 > 946684800000000)
Scan PhysicalRDD[dt#0]
>>> df.filter(df.dt > datetime(2000, 01, 01, tzinfo=m2)).explain()
Filter (dt#0 > 946695600000000)
Scan PhysicalRDD[dt#0]
```
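A minimal sketch of a timezone-aware conversion that reproduces the after-fix values, assuming the internal timestamp is microseconds since the Unix epoch (as the plans show): an aware `datetime` goes through UTC with `calendar.timegm`, and only a naive one falls back to local time via `time.mktime`. The `to_internal` name is hypothetical, and the sketch uses the standard library's fixed-offset `timezone` in place of pytz.

```python
import calendar
import time
from datetime import datetime, timedelta, timezone


def to_internal(dt):
    """Convert a datetime to microseconds since the Unix epoch."""
    if dt.tzinfo is not None:
        # Aware datetime: honour its UTC offset by going through UTC.
        seconds = calendar.timegm(dt.utctimetuple())
    else:
        # Naive datetime: interpret it in the machine's local timezone.
        seconds = time.mktime(dt.timetuple())
    return int(seconds) * 1000000 + dt.microsecond


utc_midnight = datetime(2000, 1, 1, tzinfo=timezone.utc)
gmt_plus_3_midnight = datetime(2000, 1, 1, tzinfo=timezone(timedelta(hours=-3)))  # Etc/GMT+3 is UTC-3
print(to_internal(utc_midnight))         # 946684800000000
print(to_internal(gmt_plus_3_midnight))  # 946695600000000
```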

PR [8536](https://github.com/apache/spark/pull/8536) was accidentally closed when I deleted the repo.

Author: 0x0FFF <programmerag@gmail.com>

Closes #8555 from 0x0FFF/SPARK-10162.

PySpark DataFrameReader should accept an RDD of Strings for JSON (like the Scala version does), rather than only taking a path.

If this PR is merged, it should be duplicated to cover the other input types (not just JSON).

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #8444 from yanboliang/spark-9964.

Replace `JavaConversions` implicits with `JavaConverters`

Most occurrences I've seen so far are necessary conversions; a few have been avoidable. None are in critical code as far as I see, yet.

Author: Sean Owen <sowen@cloudera.com>

Closes #8033 from srowen/SPARK-9613.

DataFrame.withColumn in Python should be consistent with the Scala one (replacing the existing column that has the same name).
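A toy model of the intended semantics, using an insertion-ordered dict of column name to values as a stand-in for a DataFrame (the `with_column` helper is hypothetical): a new name appends a column, while an existing name replaces that column instead of adding a duplicate. In this sketch the replaced column keeps its position.

```python
def with_column(columns, name, values):
    """Return a new mapping with column `name` added, or replaced if it already exists."""
    result = dict(columns)  # copy: the input "DataFrame" is never mutated
    result[name] = values   # existing key: replaced in place; new key: appended at the end
    return result


df = {"a": [1, 2], "b": [3, 4]}
print(list(with_column(df, "a", [10, 20])))  # ['a', 'b']: no duplicate "a" column
print(list(with_column(df, "c", [5, 6])))    # ['a', 'b', 'c']
```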

cc marmbrus

Author: Davies Liu <davies@databricks.com>

Closes #8300 from davies/with_column.

This bug is caused by an incorrect column-existence check in `__getitem__` of the PySpark DataFrame. `DataFrame.apply` accepts not only top-level column names but also nested column names like `a.b`, so we should remove that check from `__getitem__`.
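To see why a top-level existence check is wrong, here is a small sketch that resolves dotted names against a nested dict standing in for a row; `resolve` and `top_level_check` are hypothetical helpers. `"a.b"` resolves fine, yet it is not a top-level name, so a check like the one being removed rejects it.

```python
def resolve(row, name):
    """Resolve a possibly nested column name such as "a.b" against a nested dict row."""
    value = row
    for part in name.split("."):
        if not isinstance(value, dict) or part not in value:
            raise KeyError(name)
        value = value[part]
    return value


def top_level_check(row, name):
    # The kind of check being removed: it only knows top-level names.
    return name in row


row = {"a": {"b": 1}, "c": 2}
print(resolve(row, "a.b"), top_level_check(row, "a.b"))  # 1 False
```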

Author: Wenchen Fan <cloud0fan@outlook.com>

Closes #8202 from cloud-fan/nested.

If pandas is broken (it can't be imported, or importing it raises an exception other than ImportError), pyspark can't be imported either, so we should ignore all exceptions raised while importing pandas.
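A minimal sketch of the defensive import; the `has_pandas` flag name is hypothetical. Catching `Exception` rather than only `ImportError` means a broken pandas installation just disables pandas support instead of breaking the import of the module that tries it.

```python
try:
    import pandas  # noqa: F401  (only checking whether it can be imported)
    has_pandas = True
except Exception:
    # Catch everything, not just ImportError: a broken install can fail with
    # other errors, and that must not make the importing module fail too.
    has_pandas = False

print(has_pandas)
```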

Author: Davies Liu <davies@databricks.com>

Closes #8173 from davies/fix_pandas.

rxin

First pull request for Spark, so let me know if I am missing anything.

The contribution is my original work and I license the work to the project under the project's open source license.

Author: Brennan Ashton <bashton@brennanashton.com>

Closes #8016 from btashton/patch-1.

Raise a read-only exception when a user tries to mutate a Row.
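A minimal pure-Python sketch of a read-only Row built on `tuple`; the class is hypothetical, storing fields in sorted name order is an assumption, and raising `AttributeError` here is also an assumption (the exception type the patch uses is not stated). Item assignment already fails because tuples are immutable, so only attribute writes need blocking.

```python
class Row(tuple):
    """Hypothetical minimal Row: a tuple with named fields that rejects attribute writes."""

    def __new__(cls, **kwargs):
        names = sorted(kwargs)  # assumption: fields are stored in sorted name order
        row = tuple.__new__(cls, [kwargs[n] for n in names])
        row.__fields__ = names  # the one attribute __setattr__ still allows
        return row

    def __getattr__(self, item):
        # Only reached when normal lookup fails, i.e. for field names.
        if item == "__fields__":
            raise AttributeError(item)
        try:
            return self[self.__fields__.index(item)]
        except ValueError:
            raise AttributeError(item)

    def __setattr__(self, key, value):
        if key != "__fields__":
            raise AttributeError("Row is read-only")
        self.__dict__[key] = value


def is_read_only(row, key):
    try:
        setattr(row, key, 0)
    except AttributeError:
        return True
    return False


r = Row(name="Alice", age=11)
print(r.name, r.age)           # Alice 11
print(is_read_only(r, "age"))  # True
```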

Author: Davies Liu <davies@databricks.com>

Closes #8009 from davies/readonly_row and squashes the following commits:

8722f3f [Davies Liu] add tests

05a3d36 [Davies Liu] Row should be read-only

Add an option `recursive` to `Row.asDict()`; when it is True (the default is False), nested Rows are converted into dicts as well.
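A pure-Python sketch of the option, with namedtuples standing in for Row; `as_dict` is a hypothetical helper, and recursing into lists and dicts (so rows nested inside them are converted too) is an assumption about how far "nested" goes.

```python
from collections import namedtuple


def _convert(value):
    # Rows (namedtuples here) become dicts; lists and dicts are walked so that
    # rows nested inside them are converted too.
    if isinstance(value, tuple) and hasattr(value, "_fields"):
        return {k: _convert(v) for k, v in zip(value._fields, value)}
    if isinstance(value, list):
        return [_convert(v) for v in value]
    if isinstance(value, dict):
        return {k: _convert(v) for k, v in value.items()}
    return value


def as_dict(row, recursive=False):
    """Convert a row to a dict; nested rows are converted only when recursive=True."""
    if not recursive:
        return dict(zip(row._fields, row))
    return _convert(row)


Address = namedtuple("Address", ["city"])
Person = namedtuple("Person", ["name", "addresses"])
p = Person("Alice", [Address("Paris")])
print(as_dict(p))                  # {'name': 'Alice', 'addresses': [Address(city='Paris')]}
print(as_dict(p, recursive=True))  # {'name': 'Alice', 'addresses': [{'city': 'Paris'}]}
```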

Author: Davies Liu <davies@databricks.com>

Closes #8006 from davies/as_dict and squashes the following commits:

922cc5a [Davies Liu] turn Row into dict recursively

All data sources show up as "PhysicalRDD" in the physical plan printed by `explain`. It would be better to show the name of the data source.

Without this patch:

```
== Physical Plan ==
NewAggregate with UnsafeHybridAggregationIterator ArrayBuffer(date#0, cat#1) ArrayBuffer((sum(CAST((CAST(count#2, IntegerType) + 1), LongType))2,mode=Final,isDistinct=false))
Exchange hashpartitioning(date#0,cat#1)
NewAggregate with UnsafeHybridAggregationIterator ArrayBuffer(date#0, cat#1) ArrayBuffer((sum(CAST((CAST(count#2, IntegerType) + 1), LongType))2,mode=Partial,isDistinct=false))
PhysicalRDD [date#0,cat#1,count#2], MapPartitionsRDD[3] at
```

With this patch:

```
== Physical Plan ==
TungstenAggregate(key=[date#0,cat#1], value=[(sum(CAST((CAST(count#2, IntegerType) + 1), LongType)),mode=Final,isDistinct=false)]
Exchange hashpartitioning(date#0,cat#1)
TungstenAggregate(key=[date#0,cat#1], value=[(sum(CAST((CAST(count#2, IntegerType) + 1), LongType)),mode=Partial,isDistinct=false)]
ConvertToUnsafe
Scan ParquetRelation[file:/scratch/rxin/spark/sales4][date#0,cat#1,count#2]
```

Author: Reynold Xin <rxin@databricks.com>

Closes #8024 from rxin/SPARK-9733 and squashes the following commits:

811b90e [Reynold Xin] Fixed Python test case.

52cab77 [Reynold Xin] Cast.

eea9ccc [Reynold Xin] Fix test case.

fcecb22 [Reynold Xin] [SPARK-9733][SQL] Improve explain message for data source scan node.

https://issues.apache.org/jira/browse/SPARK-9691

jkbradley rxin

Author: Yin Huai <yhuai@databricks.com>

Closes #7999 from yhuai/pythonRand and squashes the following commits:

4187e0c [Yin Huai] Regression test.

a985ef9 [Yin Huai] Use "if seed is not None" instead "if seed" because "if seed" returns false when seed is 0.
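The pitfall named in the commit above is easy to reproduce in plain Python; the two helpers below are hypothetical and only contrast the two conditions.

```python
import random


def resolve_seed_buggy(seed, fallback):
    # `if seed` is false for 0 as well as None, so an explicit seed of 0 is discarded.
    return seed if seed else fallback


def resolve_seed_fixed(seed, fallback):
    # Only a missing seed falls back; 0 is kept as a legitimate seed.
    return seed if seed is not None else fallback


rng = random.Random(resolve_seed_fixed(0, random.randrange(2 ** 32)))
print(rng.random() == random.Random(0).random())  # True: seed 0 is honoured
```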

Inspiration drawn from this blog post: https://lab.getbase.com/pandarize-spark-dataframes/

Author: Reynold Xin <rxin@databricks.com>

Closes #7977 from rxin/isin and squashes the following commits:

9b1d3d6 [Reynold Xin] Added return.

2197d37 [Reynold Xin] Fixed test case.

7c1b6cf [Reynold Xin] Import warnings.

4f4a35d [Reynold Xin] [SPARK-9659][SQL] Rename inSet to isin to match Pandas function.

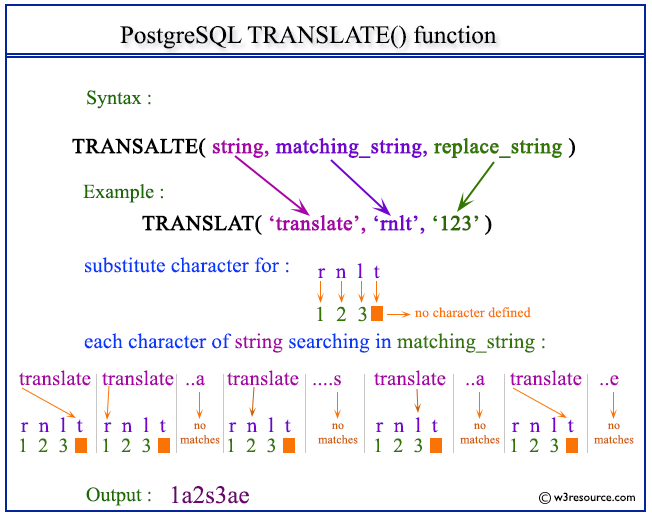

Author: zhichao.li <zhichao.li@intel.com>

Closes #7709 from zhichao-li/translate and squashes the following commits:

9418088 [zhichao.li] refine checking condition

f2ab77a [zhichao.li] clone string

9d88f2d [zhichao.li] fix indent

6aa2962 [zhichao.li] style

e575ead [zhichao.li] add python api

9d4bab0 [zhichao.li] add special case for fodable and refactor unittest

eda7ad6 [zhichao.li] update to use TernaryExpression

cdfd4be [zhichao.li] add function translate
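As a hedged illustration of the `translate` function added here, a pure-Python sketch with Hive-style semantics: each character of `matching` is replaced by the character at the same position in `replace`, and characters with no counterpart are deleted. The helper name and the first-mapping-wins rule for repeated characters are assumptions.

```python
def translate(src, matching, replace):
    """Map each char of `matching` to the char at the same index in `replace`.

    Characters of `matching` with no counterpart in `replace` are deleted.
    Assumption: if a character repeats in `matching`, its first mapping wins.
    """
    table = {}
    for i, ch in enumerate(matching):
        if ord(ch) not in table:
            # None in a str.translate table means "delete this character".
            table[ord(ch)] = replace[i] if i < len(replace) else None
    return src.translate(table)


print(translate("translate", "rnlt", "123"))  # 1a2s3ae
```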

This PR is based on #7580, thanks to EntilZha

PR for work on https://issues.apache.org/jira/browse/SPARK-8231

Currently, I have an initial implementation for contains. Based on the discussion on JIRA, it should behave the same as Hive: https://github.com/apache/hive/blob/master/ql/src/java/org/apache/hadoop/hive/ql/udf/generic/GenericUDFArrayContains.java#L102-L128

Main points are:

1. If the array is empty, null, or the value is null, return false

2. If there is a type mismatch, throw an error

3. If comparison is not supported, throw an error
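A toy model of the three rules; the Python type passed as `element_type` stands in for the SQL element type, and treating `dict` (a map) as the non-comparable case is an assumption. The type checks run before the null/empty check, mirroring analysis-time validation; that ordering is also an assumption.

```python
UNCOMPARABLE_TYPES = (dict,)  # assumption: maps cannot be compared for equality here


def array_contains(array, value, element_type):
    """Toy model of the three rules; `element_type` stands in for the SQL element type."""
    # Rule 3: reject element types whose values cannot be compared.
    if element_type in UNCOMPARABLE_TYPES:
        raise TypeError("array_contains does not support %s elements" % element_type.__name__)
    # Rule 2: the searched value must match the array's element type.
    if value is not None and not isinstance(value, element_type):
        raise TypeError("type mismatch: %r is not %s" % (value, element_type.__name__))
    # Rule 1: an empty or null array, or a null value, gives false.
    if not array or value is None:
        return False
    return any(item == value for item in array)


print(array_contains(["a", "b"], "b", str))  # True
print(array_contains([], "b", str))          # False
```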

Closes #7580

Author: Pedro Rodriguez <prodriguez@trulia.com>

Author: Pedro Rodriguez <ski.rodriguez@gmail.com>

Author: Davies Liu <davies@databricks.com>

Closes #7949 from davies/array_contains and squashes the following commits:

d3c08bc [Davies Liu] use foreach() to avoid copy

bc3d1fe [Davies Liu] fix array_contains

719e37d [Davies Liu] Merge branch 'master' of github.com:apache/spark into array_contains

e352cf9 [Pedro Rodriguez] fixed diff from master

4d5b0ff [Pedro Rodriguez] added docs and another type check

ffc0591 [Pedro Rodriguez] fixed unit test

7a22deb [Pedro Rodriguez] Changed test to use strings instead of long/ints which are different between python 2 an 3

b5ffae8 [Pedro Rodriguez] fixed pyspark test

4e7dce3 [Pedro Rodriguez] added more docs

3082399 [Pedro Rodriguez] fixed unit test

46f9789 [Pedro Rodriguez] reverted change

d3ca013 [Pedro Rodriguez] Fixed type checking to match hive behavior, then added tests to insure this

8528027 [Pedro Rodriguez] added more tests

686e029 [Pedro Rodriguez] fix scala style

d262e9d [Pedro Rodriguez] reworked type checking code and added more tests

2517a58 [Pedro Rodriguez] removed unused import

28b4f71 [Pedro Rodriguez] fixed bug with type conversions and re-added tests

12f8795 [Pedro Rodriguez] fix scala style checks

e8a20a9 [Pedro Rodriguez] added python df (broken atm)

65b562c [Pedro Rodriguez] made array_contains nullable false

33b45aa [Pedro Rodriguez] reordered test

9623c64 [Pedro Rodriguez] fixed test

4b4425b [Pedro Rodriguez] changed Arrays in tests to Seqs

72cb4b1 [Pedro Rodriguez] added checkInputTypes and docs

69c46fb [Pedro Rodriguez] added tests and codegen

9e0bfc4 [Pedro Rodriguez] initial attempt at implementation

This adds a Python API for the DataFrame functions introduced in 1.5.

There is an issue with serializing bytearray in Python 3, so some of the functions (for BinaryType) do not have tests.

cc rxin

Author: Davies Liu <davies@databricks.com>

Closes #7922 from davies/python_functions and squashes the following commits:

8ad942f [Davies Liu] fix test

5fb6ec3 [Davies Liu] fix bugs

3495ed3 [Davies Liu] fix issues

ea5f7bb [Davies Liu] Add python API for DataFrame functions

This PR is based on #7208, thanks to HuJiayin

Closes #7208

Author: HuJiayin <jiayin.hu@intel.com>

Author: Davies Liu <davies@databricks.com>

Closes #7850 from davies/initcap and squashes the following commits:

54472e9 [Davies Liu] fix python test

17ffe51 [Davies Liu] Merge branch 'master' of github.com:apache/spark into initcap

ca46390 [Davies Liu] Merge branch 'master' of github.com:apache/spark into initcap

3a906e4 [Davies Liu] implement title case in UTF8String

8b2506a [HuJiayin] Update functions.py

2cd43e5 [HuJiayin] fix python style check

b616c0e [HuJiayin] add python api

1f5a0ef [HuJiayin] add codegen

7e0c604 [HuJiayin] Merge branch 'master' of https://github.com/apache/spark into initcap

6a0b958 [HuJiayin] add column

c79482d [HuJiayin] support soundex

7ce416b [HuJiayin] support initcap rebase code
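A hedged pure-Python sketch of `initcap` (title case, as in the commit "implement title case in UTF8String"): the first letter of each word is upper-cased and the rest lower-cased. Treating single space characters as the only word delimiter is an assumption.

```python
def initcap(s):
    """Upper-case the first letter of each space-separated word and lower-case the rest."""
    # split(" ") rather than split(), so runs of spaces are preserved exactly.
    return " ".join(word[:1].upper() + word[1:].lower() for word in s.split(" "))


print(initcap("sPark sql"))  # Spark Sql
```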

This is based on #7641, thanks to zhichao-li

Closes #7641

Author: zhichao.li <zhichao.li@intel.com>

Author: Davies Liu <davies@databricks.com>

Closes #7848 from davies/substr and squashes the following commits:

461b709 [Davies Liu] remove bytearry from tests

b45377a [Davies Liu] Merge branch 'master' of github.com:apache/spark into substr

01d795e [zhichao.li] scala style

99aa130 [zhichao.li] add substring to dataframe

4f68bfe [zhichao.li] add binary type support for substring

This PR is based on #7581; it just fixes the conflict.

Author: Cheng Hao <hao.cheng@intel.com>

Author: Davies Liu <davies@databricks.com>

Closes #7851 from davies/sort_array and squashes the following commits:

a80ef66 [Davies Liu] fix conflict

7cfda65 [Davies Liu] Merge branch 'master' of github.com:apache/spark into sort_array

664c960 [Cheng Hao] update the sort_array by using the ArrayData

276d2d5 [Cheng Hao] add empty line

0edab9c [Cheng Hao] Add asending/descending support for sort_array

80fc0f8 [Cheng Hao] Add type checking

a42b678 [Cheng Hao] Add sort_array support

Add expression `sort_array` support.
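A pure-Python sketch of ascending/descending `sort_array`; where nulls land is not stated here, so placing `None` first in ascending order (and therefore last in descending order) is an assumption.

```python
def sort_array(values, asc=True):
    """Sort a list; assumption: None sorts first ascending and last descending."""
    # The key puts None before every real value; reverse=True flips that for descending.
    return sorted(values, key=lambda v: (v is not None, v), reverse=not asc)


print(sort_array([3, None, 1]))             # [None, 1, 3]
print(sort_array([3, None, 1], asc=False))  # [3, 1, None]
```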

Author: Cheng Hao <hao.cheng@intel.com>

This patch had conflicts when merged, resolved by

Committer: Davies Liu <davies.liu@gmail.com>

Closes #7581 from chenghao-intel/sort_array and squashes the following commits:

664c960 [Cheng Hao] update the sort_array by using the ArrayData

276d2d5 [Cheng Hao] add empty line

0edab9c [Cheng Hao] Add asending/descending support for sort_array

80fc0f8 [Cheng Hao] Add type checking

a42b678 [Cheng Hao] Add sort_array support

This PR is based on #7533, thanks to zhichao-li

Closes #7533

Author: zhichao.li <zhichao.li@intel.com>

Author: Davies Liu <davies@databricks.com>

Closes #7843 from davies/str_index and squashes the following commits:

391347b [Davies Liu] add python api

3ce7802 [Davies Liu] fix substringIndex

f2d29a1 [Davies Liu] Merge branch 'master' of github.com:apache/spark into str_index

515519b [zhichao.li] add foldable and remove null checking

9546991 [zhichao.li] scala style

67c253a [zhichao.li] hide some apis and clean code

b19b013 [zhichao.li] add codegen and clean code

ac863e9 [zhichao.li] reduce the calling of numChars

12e108f [zhichao.li] refine unittest

d92951b [zhichao.li] add lastIndexOf

52d7b03 [zhichao.li] add substring_index function
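A hedged sketch of `substring_index`, modeled on MySQL's `SUBSTRING_INDEX` (an assumption about the intended semantics): a positive `count` returns everything left of the `count`-th delimiter from the left, and a negative `count` returns everything right of the `count`-th delimiter from the right. Returning an empty string for a zero count or an empty delimiter is also an assumption.

```python
def substring_index(s, delim, count):
    """Text before the count-th delimiter (from the left if count > 0, from the right if < 0)."""
    if count == 0 or not delim:
        return ""
    parts = s.split(delim)
    if count > 0:
        return delim.join(parts[:count])
    return delim.join(parts[count:])


print(substring_index("www.apache.org", ".", 2))   # www.apache
print(substring_index("www.apache.org", ".", -2))  # apache.org
```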

This PR brings the SQL function soundex(); see https://issues.apache.org/jira/browse/HIVE-9738

It's based on #7115, thanks to HuJiayin

Author: HuJiayin <jiayin.hu@intel.com>

Author: Davies Liu <davies@databricks.com>

Closes #7812 from davies/soundex and squashes the following commits:

fa75941 [Davies Liu] Merge branch 'master' of github.com:apache/spark into soundex

a4bd6d8 [Davies Liu] fix soundex

2538908 [HuJiayin] add codegen soundex

d15d329 [HuJiayin] add back ut

ded1a14 [HuJiayin] Merge branch 'master' of https://github.com/apache/spark

e2dec2c [HuJiayin] support soundex rebase code
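For reference, a pure-Python sketch of the classic American Soundex code (first letter plus three digits), written from the standard algorithm rather than from the Spark or Hive sources; returning input that does not start with a letter unchanged is an assumption.

```python
_CODES = {}
for _letters, _digit in (("BFPV", "1"), ("CGJKQSXZ", "2"), ("DT", "3"),
                         ("L", "4"), ("MN", "5"), ("R", "6")):
    for _ch in _letters:
        _CODES[_ch] = _digit


def soundex(s):
    """Classic American Soundex: first letter followed by three digits."""
    if not s or not s[0].isalpha():
        return s  # assumption: input that does not start with a letter is returned unchanged
    s = s.upper()
    result = s[0]
    last = _CODES.get(s[0], "")
    for ch in s[1:]:
        if ch in "HW":
            continue  # H and W do not separate letters that share a code
        code = _CODES.get(ch, "")
        if code and code != last:
            result += code
            if len(result) == 4:
                break
        last = code  # vowels map to "", so a vowel lets a repeated code count again
    return (result + "000")[:4]


print(soundex("Robert"), soundex("Miller"))  # R163 M460
```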

This PR is based on #6988, thanks to adrian-wang.

This brings two SQL functions: to_date() and trunc().
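A simplified pure-Python sketch of the two functions: `to_date` keeps only the date part of a `yyyy-MM-dd[ HH:mm:ss]` string, and `trunc` rounds a date down to the first day of its year or month. The helper names mirror the SQL functions but are hypothetical Python; the accepted format names and returning `None` for any other format are assumptions.

```python
from datetime import date, datetime


def to_date(value):
    """Keep only the date part of a 'yyyy-MM-dd[ HH:mm:ss]' string (simplified)."""
    return datetime.strptime(value[:10], "%Y-%m-%d").date()


def trunc(d, fmt):
    """Truncate a date to the first day of its year or month; None for other formats."""
    fmt = fmt.lower()
    if fmt in ("year", "yyyy", "yy"):
        return d.replace(month=1, day=1)
    if fmt in ("month", "mon", "mm"):
        return d.replace(day=1)
    return None


print(to_date("2015-07-22 10:00:00"))      # 2015-07-22
print(trunc(date(2015, 7, 22), "year"))    # 2015-01-01
```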

Closes #6988

Author: Daoyuan Wang <daoyuan.wang@intel.com>

Author: Davies Liu <davies@databricks.com>

Closes #7805 from davies/to_date and squashes the following commits:

2c7beba [Davies Liu] Merge branch 'master' of github.com:apache/spark into to_date

310dd55 [Daoyuan Wang] remove dup test in rebase

980b092 [Daoyuan Wang] resolve rebase conflict

a476c5a [Daoyuan Wang] address comments from davies

d44ea5f [Daoyuan Wang] function to_date, trunc

This was previously committed but then reverted due to test failures (see #6769).

Author: Xiangrui Meng <meng@databricks.com>

Closes #7755 from rxin/SPARK-7157 and squashes the following commits:

fbf9044 [Xiangrui Meng] fix python test

542bd37 [Xiangrui Meng] update test

604fe6d [Xiangrui Meng] Merge remote-tracking branch 'apache/master' into SPARK-7157

f051afd [Xiangrui Meng] use udf instead of building expression

f4e9425 [Xiangrui Meng] Merge remote-tracking branch 'apache/master' into SPARK-7157

8fb990b [Xiangrui Meng] Merge remote-tracking branch 'apache/master' into SPARK-7157

103beb3 [Xiangrui Meng] add Java-friendly sampleBy

991f26f [Xiangrui Meng] fix seed

4a14834 [Xiangrui Meng] move sampleBy to stat

832f7cc [Xiangrui Meng] add sampleBy to DataFrame
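A hedged sketch of stratified sampling without replacement as `sampleBy` describes it: each row is kept independently with the probability given for its stratum, using a seeded generator so results are reproducible. The `sample_by` helper is hypothetical, and treating strata missing from `fractions` as fraction 0 is an assumption. Because each row is an independent draw, the sample size per stratum is only approximately `fraction * count`.

```python
import random


def sample_by(rows, key, fractions, seed=None):
    """Keep each row independently with the fraction given for its stratum (hypothetical helper)."""
    rng = random.Random(seed)
    # Strata not listed in `fractions` get fraction 0.0 and are never sampled.
    return [row for row in rows if rng.random() < fractions.get(key(row), 0.0)]


rows = [("a", i) for i in range(100)] + [("b", i) for i in range(100)]
sample = sample_by(rows, key=lambda r: r[0], fractions={"a": 0.5}, seed=42)
print(len(sample), {stratum for stratum, _ in sample})
```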

This is based on MechCoder's PR https://github.com/apache/spark/pull/7731. Hopefully it will pass tests. MechCoder, I tried to make minimal changes. If this passes Jenkins, we can merge this one first and then try to move `__init__.py` to `local.py` in a separate PR.

Closes #7731

Author: Xiangrui Meng <meng@databricks.com>

Closes #7746 from mengxr/SPARK-9408 and squashes the following commits:

0e05a3b [Xiangrui Meng] merge master

1135551 [Xiangrui Meng] add a comment for str(...)

c48cae0 [Xiangrui Meng] update tests

173a805 [Xiangrui Meng] move linalg.py to linalg/__init__.py

This PR is based on #7589, thanks to adrian-wang

Added the SQL functions date_add, date_sub, add_months and months_between, and also added a rule for adding/subtracting an interval to/from a date or timestamp.
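A minimal sketch of `date_add`, `date_sub` and `add_months` on `datetime.date`; the helper names mirror the SQL functions but are hypothetical Python. Clamping the day to the last day of the target month (so Jan 31 plus one month is Feb 28 in a non-leap year) is an assumption; whether a month-end start always maps to a month-end result is not specified here.

```python
import calendar
from datetime import date, timedelta


def date_add(d, days):
    return d + timedelta(days=days)


def date_sub(d, days):
    return d - timedelta(days=days)


def add_months(d, months):
    """Shift by whole months, clamping the day to the end of the target month."""
    total = d.year * 12 + (d.month - 1) + months
    year, month_index = divmod(total, 12)
    month = month_index + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


print(add_months(date(2015, 1, 31), 1))  # 2015-02-28
```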

Closes #7589

cc rxin

Author: Daoyuan Wang <daoyuan.wang@intel.com>

Author: Davies Liu <davies@databricks.com>

Closes #7754 from davies/date_add and squashes the following commits:

e8c633a [Davies Liu] Merge branch 'master' of github.com:apache/spark into date_add

9e8e085 [Davies Liu] Merge branch 'master' of github.com:apache/spark into date_add

6224ce4 [Davies Liu] fix conclict

bd18cd4 [Davies Liu] Merge branch 'master' of github.com:apache/spark into date_add

e47ff2c [Davies Liu] add python api, fix date functions

01943d0 [Davies Liu] Merge branch 'master' into date_add

522e91a [Daoyuan Wang] fix

e8a639a [Daoyuan Wang] fix

42df486 [Daoyuan Wang] fix style

87c4b77 [Daoyuan Wang] function add_months, months_between and some fixes

1a68e03 [Daoyuan Wang] poc of time interval calculation

c506661 [Daoyuan Wang] function date_add , date_sub

This also lets us create a Python UDT without a corresponding Scala one, which is important for Python users.

cc mengxr JoshRosen

Author: Davies Liu <davies@databricks.com>

Closes #7453 from davies/class_in_main and squashes the following commits:

4dfd5e1 [Davies Liu] add tests for Python and Scala UDT

793d9b2 [Davies Liu] Merge branch 'master' of github.com:apache/spark into class_in_main

dc65f19 [Davies Liu] address comment

a9a3c40 [Davies Liu] Merge branch 'master' of github.com:apache/spark into class_in_main

a86e1fc [Davies Liu] fix serialization

ad528ba [Davies Liu] Merge branch 'master' of github.com:apache/spark into class_in_main

63f52ef [Davies Liu] fix pylint check

655b8a9 [Davies Liu] Merge branch 'master' of github.com:apache/spark into class_in_main

316a394 [Davies Liu] support Python UDT with UTF

0bcb3ef [Davies Liu] fix bug in mllib

de986d6 [Davies Liu] fix test

83d65ac [Davies Liu] fix bug in StructType

55bb86e [Davies Liu] support Python UDT in __main__ (without Scala one)

`names` is not defined in this context; I think you meant `self.names`.

davies

Author: Alex Angelini <alex.louis.angelini@gmail.com>

Closes #7766 from angelini/fix_struct_type_names and squashes the following commits:

01543a1 [Alex Angelini] Fix reference to self.names in StructType

Author: JD <jd@csh.rit.edu>

Author: Joseph Batchik <josephbatchik@gmail.com>

Closes #7606 from JDrit/expr and squashes the following commits:

ad7f607 [Joseph Batchik] fixing python linter error

9d6daea [Joseph Batchik] removed order by per @rxin's comment

707d5c6 [Joseph Batchik] Added expr to fuctions.py

79df83c [JD] added example to the docs

b89eec8 [JD] moved function up as per @rxin's comment

4960909 [JD] updated per @JoshRosen's comment

2cb329c [JD] updated per @rxin's comment

9a9ad0c [JD] removing unused import

6dc26d0 [JD] removed split

7f2222c [JD] Adding expr function as per SPARK-8668

Remove Decimal.Unlimited (precision is now supported up to 38, to match Hive and other databases).

To keep backward source compatibility, Decimal.Unlimited is still there, but it is changed to Decimal(38, 18).

If no precision and scale are provided, it is Decimal(10, 0) as before.
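To make the precision/scale limits concrete, a small sketch using Python's `decimal` module: the hypothetical `fits` helper checks whether a value, rounded to `scale` fractional digits, needs at most `precision` digits in total. With Decimal(38, 18) that leaves 20 integer digits.

```python
from decimal import Decimal, localcontext


def fits(value, precision, scale):
    """True if `value`, rounded to `scale` fractional digits, has at most `precision` digits."""
    with localcontext() as ctx:
        ctx.prec = 100  # enough working precision that quantize() cannot fail here
        scaled = value.quantize(Decimal(1).scaleb(-scale))
    return len(scaled.as_tuple().digits) <= precision


largest_20_digit = Decimal(10) ** 20 - 1
print(fits(largest_20_digit, 38, 18))       # True: 20 integer + 18 fractional digits = 38
print(fits(largest_20_digit + 1, 38, 18))   # False: 21 integer digits do not fit
print(fits(Decimal("12345678901"), 10, 0))  # False under the default Decimal(10, 0)
```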

Author: Davies Liu <davies@databricks.com>

Closes #7605 from davies/decimal_unlimited and squashes the following commits:

aa3f115 [Davies Liu] fix tests and style

fb0d20d [Davies Liu] address comments

bfaae35 [Davies Liu] fix style

df93657 [Davies Liu] address comments and clean up

06727fd [Davies Liu] Merge branch 'master' of github.com:apache/spark into decimal_unlimited

4c28969 [Davies Liu] fix tests

8d783cc [Davies Liu] fix tests

788631c [Davies Liu] fix double with decimal in Union/except

1779bde [Davies Liu] fix scala style

c9c7c78 [Davies Liu] remove Decimal.Unlimited

We forgot to update the doc. brkyvz

Author: Xiangrui Meng <meng@databricks.com>

Closes #7608 from mengxr/SPARK-9243 and squashes the following commits:

0ea3236 [Xiangrui Meng] null -> zero in crosstab doc
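A minimal pure-Python sketch of the documented behavior: `crosstab` counts each pair of values, and pairs that never occur show up as 0 rather than null. The helper and its nested-dict output shape are assumptions for illustration.

```python
from collections import Counter


def crosstab(pairs):
    """Pair-wise frequency table; combinations that never occur get 0, not null."""
    counts = Counter(pairs)
    rows = sorted({a for a, _ in pairs})
    cols = sorted({b for _, b in pairs})
    return {r: {c: counts.get((r, c), 0) for c in cols} for r in rows}


print(crosstab([("a", "x"), ("a", "x"), ("b", "y")]))
# {'a': {'x': 2, 'y': 0}, 'b': {'x': 0, 'y': 1}}
```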