### What changes were proposed in this pull request?

https://github.com/apache/spark/pull/32015 added a much easier way to run benchmarks in the same GitHub Actions build. This PR updates the benchmark results using that approach.

**NOTE**: From my observations, GitHub Actions appears to use four types of CPU:

- Intel(R) Xeon(R) Platinum 8171M CPU @ 2.60GHz

- Intel(R) Xeon(R) CPU E5-2673 v4 @ 2.30GHz

- Intel(R) Xeon(R) CPU E5-2673 v3 @ 2.40GHz

- Intel(R) Xeon(R) Platinum 8272CL CPU @ 2.60GHz

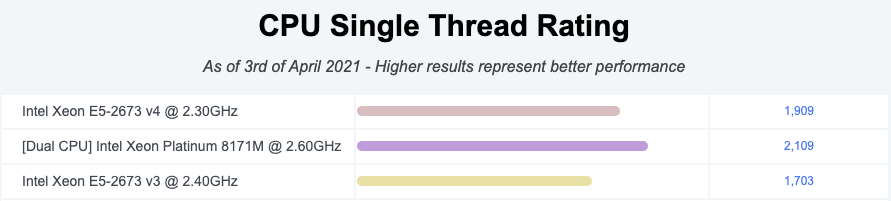

From my quick research, they seem to perform roughly similarly.

I couldn't find enough information about the Intel(R) Xeon(R) Platinum 8272CL CPU @ 2.60GHz, but its performance seems roughly similar given the numbers.

So this shouldn't be a big deal, especially given that this approach is much easier, encourages contributors to run benchmarks more often, and guarantees the same number of cores and the same memory with the same software.
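To see which CPU model a given run landed on, something like the following could be used. This is only a sketch: it assumes a Linux runner that exposes `/proc/cpuinfo`, the helper name `cpu_models` is hypothetical, and the sample text is made up to mirror that file's layout.

```python
from collections import Counter


def cpu_models(cpuinfo_text):
    """Count logical cores per CPU model in /proc/cpuinfo-style text."""
    models = Counter()
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        # Only "model name" entries carry the CPU brand string.
        if sep and key.strip() == "model name":
            models[value.strip()] += 1
    return dict(models)


# Hypothetical sample in the /proc/cpuinfo layout (two logical cores).
SAMPLE = """\
processor\t: 0
model name\t: Intel(R) Xeon(R) Platinum 8171M CPU @ 2.60GHz
processor\t: 1
model name\t: Intel(R) Xeon(R) Platinum 8171M CPU @ 2.60GHz
"""

if __name__ == "__main__":
    print(cpu_models(SAMPLE))
```

On a real runner, the text would come from reading `/proc/cpuinfo` instead of `SAMPLE`.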

### Why are the changes needed?

To establish a baseline for the benchmarks.

### Does this PR introduce _any_ user-facing change?

No, dev-only.

### How was this patch tested?

It was generated from:

- [Run benchmarks: * (JDK 11)](https://github.com/HyukjinKwon/spark/actions/runs/713575465)

- [Run benchmarks: * (JDK 8)](https://github.com/HyukjinKwon/spark/actions/runs/713154337)

Closes #32044 from HyukjinKwon/SPARK-34950.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Max Gekk <max.gekk@gmail.com>

### What changes were proposed in this pull request?

In this PR, I propose to replace `.collect()`, `.count()` and `.foreach(_ => ())` in SQL benchmarks with the `NoOp` datasource. I added an implicit class to `SqlBasedBenchmark` with the `.noop()` method. It can be used in benchmarks like `ds.noop()`, which expands to `ds.write.format("noop").mode(Overwrite).save()`.

### Why are the changes needed?

To avoid the additional overhead of `collect()` (and other actions). For example, `.collect()` has to convert values according to external types and pull data to the driver. This can hide actual performance regressions or improvements in the benchmarked operations.
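The masking effect can be illustrated with simple arithmetic. The sketch below is not a measurement: all timings are hypothetical, and it assumes the action's overhead is a fixed cost added to both the "before" and "after" runs.

```python
def observed_change(op_before_ms, op_after_ms, overhead_ms):
    """Relative change in measured time when a fixed overhead is added to both runs."""
    before = op_before_ms + overhead_ms
    after = op_after_ms + overhead_ms
    return (after - before) / before


# Hypothetical timings: the benchmarked operation regresses by 20%.
OP_BEFORE_MS, OP_AFTER_MS = 100.0, 120.0
COLLECT_OVERHEAD_MS = 300.0  # assumed cost of converting and pulling rows to the driver
NOOP_OVERHEAD_MS = 5.0       # assumed (much smaller) cost of a no-op sink

with_collect = observed_change(OP_BEFORE_MS, OP_AFTER_MS, COLLECT_OVERHEAD_MS)
with_noop = observed_change(OP_BEFORE_MS, OP_AFTER_MS, NOOP_OVERHEAD_MS)

if __name__ == "__main__":
    print(f"measured regression with large overhead: {with_collect:.1%}")
    print(f"measured regression with small overhead: {with_noop:.1%}")
```

Under these assumed numbers, the same 20% regression in the operation shows up as a much smaller change when the action's overhead dominates the measured time.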

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Re-ran all modified benchmarks on Amazon EC2.

| Item | Description |
| ---- | ---- |
| Region | us-west-2 (Oregon) |
| Instance | r3.xlarge (spot instance) |
| AMI | ami-06f2f779464715dc5 (ubuntu/images/hvm-ssd/ubuntu-bionic-18.04-amd64-server-20190722.1) |
| Java | OpenJDK8/10 |

- Run `TPCDSQueryBenchmark` using the instructions from PR #26049

```
# `spark-tpcds-datagen` needs this. (JDK8)
$ git clone https://github.com/apache/spark.git -b branch-2.4 --depth 1 spark-2.4
$ export SPARK_HOME=$PWD
$ ./build/mvn clean package -DskipTests

# Generate data. (JDK8)
$ git clone git@github.com:maropu/spark-tpcds-datagen.git
$ cd spark-tpcds-datagen/
$ build/mvn clean package
$ mkdir -p /data/tpcds
$ ./bin/dsdgen --output-location /data/tpcds/s1  # This needs `Spark 2.4`
```

- Other benchmarks were run with this script:

```
#!/usr/bin/env python3

import os
from sparktestsupport.shellutils import run_cmd

benchmarks = [
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.AggregateBenchmark'],
    ['avro/test', 'org.apache.spark.sql.execution.benchmark.AvroReadBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.BloomFilterBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.DataSourceReadBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.DateTimeBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.ExtractBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.FilterPushdownBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.InExpressionBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.IntervalBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.JoinBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.MakeDateTimeBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.MiscBenchmark'],
    ['hive/test', 'org.apache.spark.sql.execution.benchmark.ObjectHashAggregateExecBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.OrcNestedSchemaPruningBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.OrcV2NestedSchemaPruningBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.ParquetNestedSchemaPruningBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.RangeBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.UDFBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.WideSchemaBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.benchmark.WideTableBenchmark'],
    ['hive/test', 'org.apache.spark.sql.hive.orc.OrcReadBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.datasources.csv.CSVBenchmark'],
    ['sql/test', 'org.apache.spark.sql.execution.datasources.json.JsonBenchmark']
]

print('Set SPARK_GENERATE_BENCHMARK_FILES=1')
os.environ['SPARK_GENERATE_BENCHMARK_FILES'] = '1'

for b in benchmarks:
    print("Run benchmark: %s" % b[1])
    run_cmd(['build/sbt', '%s:runMain %s' % (b[0], b[1])])
```

Closes #27078 from MaxGekk/noop-in-benchmarks.

Lead-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR regenerates the `sql/core` benchmarks on JDK8/11 to compare the results. In general, we compare the ratio instead of the time. However, in this PR, the average time is compared, so this PR should be considered a rough comparison.
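A rough average-time comparison of this kind could be sketched as below. The function name, case names, and timings are all hypothetical and are not taken from the results files; the quotient it reports is only a cross-JDK comparison of average times, not the within-benchmark ratio mentioned above.

```python
def compare_avg_times(jdk8_avg_ms, jdk11_avg_ms):
    """Label each benchmark case by which JDK has the lower average time."""
    report = {}
    for case, t8 in jdk8_avg_ms.items():
        t11 = jdk11_avg_ms[case]
        if t11 < t8:
            verdict = "JDK11 faster"
        elif t11 > t8:
            verdict = "JDK11 slower"
        else:
            verdict = "same"
        # Quotient of average times: below 1 means JDK11 took less time.
        report[case] = (round(t11 / t8, 2), verdict)
    return report


# Hypothetical average times in milliseconds.
JDK8 = {"case A": 1000.0, "case B": 400.0}
JDK11 = {"case A": 900.0, "case B": 500.0}

if __name__ == "__main__":
    for case, (quotient, verdict) in compare_avg_times(JDK8, JDK11).items():
        print(f"{case}: JDK11/JDK8 = {quotient} ({verdict})")
```

Because absolute times vary between runs and machines, a comparison like this is only indicative, which is why the results should be read as rough.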

**A. EXPECTED CASES (JDK11 is faster in general)**

- [x] BloomFilterBenchmark (JDK11 is faster except one case)

- [x] BuiltInDataSourceWriteBenchmark (JDK11 is faster at CSV/ORC)

- [x] CSVBenchmark (JDK11 is faster except five cases)

- [x] ColumnarBatchBenchmark (JDK11 is faster at `boolean`/`string` and some cases in `int`/`array`)

- [x] DatasetBenchmark (JDK11 is faster with `string`, but is slower for `long` type)

- [x] ExternalAppendOnlyUnsafeRowArrayBenchmark (JDK11 is faster except two cases)

- [x] ExtractBenchmark (JDK11 is faster except HOUR/MINUTE/SECOND/MILLISECONDS/MICROSECONDS)

- [x] HashedRelationMetricsBenchmark (JDK11 is faster)

- [x] JSONBenchmark (JDK11 is much faster except eight cases)

- [x] JoinBenchmark (JDK11 is faster except five cases)

- [x] OrcNestedSchemaPruningBenchmark (JDK11 is faster in nine cases)

- [x] PrimitiveArrayBenchmark (JDK11 is faster)

- [x] SortBenchmark (JDK11 is faster except `Arrays.sort` case)

- [x] UDFBenchmark (N/A, values are too small)

- [x] UnsafeArrayDataBenchmark (JDK11 is faster except one case)

- [x] WideTableBenchmark (JDK11 is faster except two cases)

**B. CASES WE NEED TO INVESTIGATE MORE LATER**

- [x] AggregateBenchmark (JDK11 is slower in general)

- [x] CompressionSchemeBenchmark (JDK11 is slower in general except `string`)

- [x] DataSourceReadBenchmark (JDK11 is slower in general)

- [x] DateTimeBenchmark (JDK11 is slightly slower in general except `parsing`)

- [x] MakeDateTimeBenchmark (JDK11 is slower except two cases)

- [x] MiscBenchmark (JDK11 is slower except ten cases)

- [x] OrcV2NestedSchemaPruningBenchmark (JDK11 is slower)

- [x] ParquetNestedSchemaPruningBenchmark (JDK11 is slower except six cases)

- [x] RangeBenchmark (JDK11 is slower except one case)

`FilterPushdownBenchmark/InExpressionBenchmark/WideSchemaBenchmark` will be compared later because they take a long time to run.

### Why are the changes needed?

According to the results, there are some differences between JDK8 and JDK11.

This will be a baseline for future improvements and comparisons. Also, for reproducibility, the following environment is used.

- Instance: `r3.xlarge`

- OS: `CentOS Linux release 7.5.1804 (Core)`

- JDK:

  - `OpenJDK Runtime Environment (build 1.8.0_222-b10)`

  - `OpenJDK Runtime Environment 18.9 (build 11.0.4+11-LTS)`

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

This is a test-only PR. We need to run the benchmarks.

Closes #26003 from dongjoon-hyun/SPARK-29320.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>