### What changes were proposed in this pull request?

This PR proposes to use proper built-in exceptions instead of the plain `Exception` in Python.

While I am here, I also fixed another minor issue in `DataFrame.schema`:

```diff
- except AttributeError as e:
-     raise Exception(
-         "Unable to parse datatype from schema. %s" % e)
+ except Exception as e:
+     raise ValueError(
+         "Unable to parse datatype from schema. %s" % e) from e
```

Now it catches all exceptions raised during schema parsing and chains the original exception to the new `ValueError`. Previously it only caught `AttributeError`, which does not cover all failure cases.
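The chaining behaviour can be illustrated with a minimal sketch. The function `parse_datatype` and its dictionary input are hypothetical stand-ins, not PySpark's actual schema parser; only the `raise ... from e` pattern mirrors the change above.

```python
def parse_datatype(schema_json):
    """Hypothetical stand-in for schema parsing; not PySpark's parser."""
    try:
        # Any failure here (KeyError, AttributeError, TypeError, ...) is caught.
        return schema_json["type"].lower()
    except Exception as e:
        raise ValueError("Unable to parse datatype from schema. %s" % e) from e


try:
    parse_datatype({})  # the missing "type" key raises KeyError internally
except ValueError as err:
    # `from e` keeps the original error available on __cause__.
    print(type(err.__cause__).__name__)  # KeyError
```

Because the original exception stays attached as `__cause__`, the traceback shows both errors, which should make the root cause easier to find.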

### Why are the changes needed?

So that users get specific, appropriate exception types instead of the plain `Exception`.

### Does this PR introduce _any_ user-facing change?

Yes, the exception classes are different now, but the change should be compatible because the previous exception was the plain `Exception`, from which the other exceptions inherit.
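A small sketch of why existing handlers should keep working. The names `legacy_caller` and `new_style_failure` are hypothetical and only model user code written against the old behaviour.

```python
def legacy_caller(action):
    """Hypothetical user code written when plain Exception was raised."""
    try:
        action()
    except Exception as e:  # still matches any subclass such as ValueError
        return "handled: %s" % type(e).__name__
    return "ok"


def new_style_failure():
    raise ValueError("Unable to parse datatype from schema.")


print(legacy_caller(new_style_failure))  # handled: ValueError
```

Code that compares the type exactly, for example `type(e) is Exception`, would behave differently, so the compatibility holds only for handlers that rely on `except Exception` or `isinstance`.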

### How was this patch tested?

Existing unit tests should cover the change.

Closes #31238

Closes #32650 from HyukjinKwon/SPARK-32194.

Authored-by: Hyukjin Kwon <gurwls223@apache.org>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This is a follow-up of https://github.com/apache/spark/pull/30518 that should be backported to `branch-3.1` too.

`STOP_AT_DELIMITER` was mistakenly used twice. The duplicated `STOP_AT_DELIMITER` should be `SKIP_VALUE` in the documentation.

### Why are the changes needed?

To document the option values correctly.

### Does this PR introduce _any_ user-facing change?

Yes, it fixes the user-facing documentation.

### How was this patch tested?

I checked them via running linters.

Closes #32423 from HyukjinKwon/SPARK-35250.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Move the datetime rebase SQL configs out of the `legacy` namespace by:

1. Renaming the existing rebase configs, e.g. `spark.sql.legacy.parquet.datetimeRebaseModeInRead` -> `spark.sql.parquet.datetimeRebaseModeInRead`.

2. Adding the legacy configs as alternatives.

3. Deprecating the legacy rebase configs.
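The three steps can be modelled with a toy sketch. `ToyConf`, `DEPRECATED_ALTERNATIVES` and the logger name are hypothetical and greatly simplified; this is not how Spark's `SQLConf` is implemented, it only mimics the observable behaviour: the legacy key still works, logs a warning, and resolves to the new key.

```python
import logging

logger = logging.getLogger("toy_conf")

# Hypothetical mapping from deprecated legacy keys to their new names.
DEPRECATED_ALTERNATIVES = {
    "spark.sql.legacy.parquet.datetimeRebaseModeInRead":
        "spark.sql.parquet.datetimeRebaseModeInRead",
}


class ToyConf:
    """Toy config store; an illustration only, not Spark's SQLConf."""

    def __init__(self, defaults):
        self._values = dict(defaults)

    def set(self, key, value):
        new_key = DEPRECATED_ALTERNATIVES.get(key)
        if new_key is not None:
            # Warn about the deprecated key, then store under the new name.
            logger.warning(
                "The SQL config '%s' has been deprecated. Use '%s' instead.",
                key, new_key)
            key = new_key
        self._values[key] = value

    def get(self, key):
        # Legacy keys resolve to the same stored value as the new key.
        return self._values[DEPRECATED_ALTERNATIVES.get(key, key)]


conf = ToyConf({"spark.sql.parquet.datetimeRebaseModeInRead": "EXCEPTION"})
conf.set("spark.sql.legacy.parquet.datetimeRebaseModeInRead", "LEGACY")
print(conf.get("spark.sql.parquet.datetimeRebaseModeInRead"))  # LEGACY
```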

### Why are the changes needed?

The rebasing SQL configs like `spark.sql.legacy.parquet.datetimeRebaseModeInRead` can be used not only for migration from previous Spark versions but also to read/write datetime columns saved by other systems/frameworks/libs. So, the configs shouldn't be considered legacy configs.

### Does this PR introduce _any_ user-facing change?

Should not. Users will see a warning if they still use one of the legacy configs.

### How was this patch tested?

1. Manually checking new configs:

```scala
scala> spark.conf.get("spark.sql.parquet.datetimeRebaseModeInRead")
res0: String = EXCEPTION

scala> spark.conf.set("spark.sql.legacy.parquet.datetimeRebaseModeInRead", "LEGACY")
21/02/17 14:57:10 WARN SQLConf: The SQL config 'spark.sql.legacy.parquet.datetimeRebaseModeInRead' has been deprecated in Spark v3.2 and may be removed in the future. Use 'spark.sql.parquet.datetimeRebaseModeInRead' instead.

scala> spark.conf.get("spark.sql.parquet.datetimeRebaseModeInRead")
res2: String = LEGACY
```

2. By running a datetime rebasing test suite:

```

$ build/sbt "test:testOnly *ParquetRebaseDatetimeV1Suite"

```

Closes #31576 from MaxGekk/rebase-confs-alternatives.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose new options for the Parquet datasource:

1. `datetimeRebaseMode`

2. `int96RebaseMode`

Both options influence how ancient date and timestamp column values are loaded from parquet files. The `datetimeRebaseMode` option affects loading values of the `DATE`, `TIMESTAMP_MICROS` and `TIMESTAMP_MILLIS` types, while `int96RebaseMode` affects loading of `INT96` timestamps.

The options support the same values as the SQL configs `spark.sql.legacy.parquet.datetimeRebaseModeInRead` and `spark.sql.legacy.parquet.int96RebaseModeInRead`, namely:

- `"LEGACY"`, when an option is set to this value, Spark rebases dates/timestamps from the legacy hybrid calendar (Julian + Gregorian) to the Proleptic Gregorian calendar.

- `"CORRECTED"`, dates/timestamps are read AS IS from parquet files.

- `"EXCEPTION"`, when it is set as an option value, Spark will fail the reading if it sees ancient dates/timestamps that are ambiguous between the two calendars.
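Ancient values are ambiguous because the two calendars attach different labels to the same physical day before 1582-10-15. A minimal sketch of that discrepancy, using standard Julian Day Number arithmetic, is shown below. The helper names are hypothetical and this is not Spark's rebasing code; it only shows why a calendar must be chosen.

```python
from datetime import date


def julian_calendar_to_jdn(year, month, day):
    """Julian Day Number of a date given in the Julian calendar."""
    a = (14 - month) // 12
    y = year + 4800 - a
    m = month + 12 * a - 3
    return day + (153 * m + 2) // 5 + 365 * y + y // 4 - 32083


def julian_to_proleptic_gregorian(year, month, day):
    """Re-express a Julian-calendar date in the Proleptic Gregorian calendar."""
    # Python's date ordinals are proleptic Gregorian; ordinal 1 is JDN 1721426.
    return date.fromordinal(julian_calendar_to_jdn(year, month, day) - 1721425)


# The day after Julian 1582-10-04 is labelled 1582-10-15 in the Gregorian calendar.
print(julian_to_proleptic_gregorian(1582, 10, 5))  # 1582-10-15
```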

### Why are the changes needed?

1. The new options allow loading parquet files from at least two sources in different rebasing modes in the same query. For instance:

```scala
val df1 = spark.read.option("datetimeRebaseMode", "legacy").parquet(folder1)
val df2 = spark.read.option("datetimeRebaseMode", "corrected").parquet(folder2)
df1.join(df2, ...)
```

Before the changes, this was impossible because the SQL config `spark.sql.legacy.parquet.datetimeRebaseModeInRead` affects both reads.

2. Mixing of Dataset/DataFrame and RDD APIs should become possible. Since SQL configs are not propagated through RDDs, the following code fails on ancient timestamps:

```scala
spark.conf.set("spark.sql.legacy.parquet.datetimeRebaseModeInRead", "legacy")
spark.read.parquet(folder).distinct.rdd.collect()
```

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

By running the modified test suites:

```

$ build/sbt "sql/test:testOnly *ParquetRebaseDatetimeV1Suite"

$ build/sbt "sql/test:testOnly *ParquetRebaseDatetimeV2Suite"

```

Closes #31489 from MaxGekk/parquet-rebase-options.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Follow-up work for #30521. Document the following behaviors in the API doc:

- The effects when configurations (provider/partitionBy) conflict with the existing table.

- The lack of functionality for creating a v2 table, with guidance that users should ensure the table is created beforehand to avoid an unintended or insufficient table being created.

### Why are the changes needed?

The API does not yet have full support for creating V2 tables. (TODO SPARK-33638)

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Document only.

Closes #30885 from xuanyuanking/SPARK-33659.

Authored-by: Yuanjian Li <yuanjian.li@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR proposes to:

- Make doctests simpler to show the usage (since we're not running them now).

- Use the test utilities to drop the tables if they exist.

### Why are the changes needed?

Better docs and code readability.

### Does this PR introduce _any_ user-facing change?

No, dev-only. It includes some doc changes in unreleased branches.

### How was this patch tested?

Manually tested.

```bash

cd python

./run-tests --python-executable=python3.9,python3.8 --testnames "pyspark.sql.tests.test_streaming StreamingTests"

```

Closes #30873 from HyukjinKwon/SPARK-33836.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Jungtaek Lim <kabhwan.opensource@gmail.com>

### What changes were proposed in this pull request?

This PR proposes to expose `DataStreamReader.table` (SPARK-32885) and `DataStreamWriter.toTable` (SPARK-32896) to PySpark, which are the only ways to read from and write to a table in Structured Streaming.

### Why are the changes needed?

Please refer to SPARK-32885 and SPARK-32896 for the rationale behind these public APIs. This PR only exposes them to PySpark.

### Does this PR introduce _any_ user-facing change?

Yes, PySpark users will be able to read from and write to tables in Structured Streaming queries.

### How was this patch tested?

Manually tested.

> v1 table

>> create table A and ingest to the table A

```
spark.sql("""
  create table table_pyspark_parquet (
    value long,
    `timestamp` timestamp
  ) USING parquet
""")
df = spark.readStream.format('rate').option('rowsPerSecond', 100).load()
query = df.writeStream.toTable('table_pyspark_parquet', checkpointLocation='/tmp/checkpoint5')
query.lastProgress
query.stop()
```

>> read table A and ingest to the table B which doesn't exist

```

df2 = spark.readStream.table('table_pyspark_parquet')

query2 = df2.writeStream.toTable('table_pyspark_parquet_nonexist', format='parquet', checkpointLocation='/tmp/checkpoint2')

query2.lastProgress

query2.stop()

```

>> select tables

```

spark.sql("DESCRIBE TABLE table_pyspark_parquet").show()

spark.sql("SELECT * FROM table_pyspark_parquet").show()

spark.sql("DESCRIBE TABLE table_pyspark_parquet_nonexist").show()

spark.sql("SELECT * FROM table_pyspark_parquet_nonexist").show()

```

> v2 table (leveraging Apache Iceberg as it provides V2 table and custom catalog as well)

>> create table A and ingest to the table A

```
spark.sql("""
  create table iceberg_catalog.default.table_pyspark_v2table (
    value long,
    `timestamp` timestamp
  ) USING iceberg
""")
df = spark.readStream.format('rate').option('rowsPerSecond', 100).load()
query = df.select('value', 'timestamp').writeStream.toTable('iceberg_catalog.default.table_pyspark_v2table', checkpointLocation='/tmp/checkpoint_v2table_1')
query.lastProgress
query.stop()
```

>> ingest to the non-exist table B

```

df2 = spark.readStream.format('rate').option('rowsPerSecond', 100).load()

query2 = df2.select('value', 'timestamp').writeStream.toTable('iceberg_catalog.default.table_pyspark_v2table_nonexist', checkpointLocation='/tmp/checkpoint_v2table_2')

query2.lastProgress

query2.stop()

```

>> ingest to the non-exist table C partitioned by `value % 10`

```

df3 = spark.readStream.format('rate').option('rowsPerSecond', 100).load()

df3a = df3.selectExpr('value', 'timestamp', 'value % 10 AS partition').repartition('partition')

query3 = df3a.writeStream.partitionBy('partition').toTable('iceberg_catalog.default.table_pyspark_v2table_nonexist_partitioned', checkpointLocation='/tmp/checkpoint_v2table_3')

query3.lastProgress

query3.stop()

```

>> select tables

```

spark.sql("DESCRIBE TABLE iceberg_catalog.default.table_pyspark_v2table").show()

spark.sql("SELECT * FROM iceberg_catalog.default.table_pyspark_v2table").show()

spark.sql("DESCRIBE TABLE iceberg_catalog.default.table_pyspark_v2table_nonexist").show()

spark.sql("SELECT * FROM iceberg_catalog.default.table_pyspark_v2table_nonexist").show()

spark.sql("DESCRIBE TABLE iceberg_catalog.default.table_pyspark_v2table_nonexist_partitioned").show()

spark.sql("SELECT * FROM iceberg_catalog.default.table_pyspark_v2table_nonexist_partitioned").show()

```

Closes #30835 from HeartSaVioR/SPARK-33836.

Lead-authored-by: Jungtaek Lim <kabhwan.opensource@gmail.com>

Co-authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

There are some differences between Spark CSV, opencsv and commons-csv; a typical case is described in SPARK-33566. When a value contains both unescaped quotes and unescaped qualifiers, the parsing results differ.

The reason for the difference is that Spark uses `STOP_AT_DELIMITER` as the default `UnescapedQuoteHandling` when building `CsvParser`, and it is not configurable.

On the other hand, opencsv and commons-csv use a parsing mechanism similar to `STOP_AT_CLOSING_QUOTE` by default.

So this PR makes the `unescapedQuoteHandling` option configurable, so that users can get the same parsing result as opencsv and commons-csv.

### Why are the changes needed?

Make the `unescapedQuoteHandling` option configurable when reading CSV, to make parsing more flexible.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

- Pass the Jenkins or GitHub Actions checks

- Add a new case similar to that described in SPARK-33566

Closes #30518 from LuciferYang/SPARK-33566.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR proposes to migrate to [NumPy documentation style](https://numpydoc.readthedocs.io/en/latest/format.html), see also SPARK-33243.

While I am migrating, I also fixed some Python type hints accordingly.
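For readers unfamiliar with the target style, here is a minimal sketch of a NumPy-style docstring. The function `clip` is a hypothetical example and is not taken from the PySpark code base.

```python
def clip(value, lower=0.0, upper=1.0):
    """
    Limit a value to the closed interval ``[lower, upper]``.

    Parameters
    ----------
    value : float
        The number to clip.
    lower : float, optional
        Lower bound. Default is 0.0.
    upper : float, optional
        Upper bound. Default is 1.0.

    Returns
    -------
    float
        ``value`` if it lies within the bounds, otherwise the nearest bound.

    Examples
    --------
    >>> clip(1.5)
    1.0
    """
    return max(lower, min(upper, value))
```

The underlined section headers (`Parameters`, `Returns`, `Examples`) are what the numpydoc format uses to structure both the plain-text docstring and the generated HTML.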

### Why are the changes needed?

For better documentation, both as plain text and as generated HTML.

### Does this PR introduce _any_ user-facing change?

Yes, users will see better-formatted HTML pages and better-formatted docstring text. See SPARK-33243.

### How was this patch tested?

Manually tested via running `./dev/lint-python`.

Closes #30181 from HyukjinKwon/SPARK-33250.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR adjusts signatures of methods decorated with `keyword_only` to indicate using [Python 3 keyword-only syntax](https://www.python.org/dev/peps/pep-3102/).

__Note__:

For the moment the goal is not to replace `keyword_only`. For justification see https://github.com/apache/spark/pull/29591#discussion_r489402579

### Why are the changes needed?

Right now it is not clear that `keyword_only` methods are indeed keyword only. This proposal addresses that.
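As a minimal sketch of what the keyword-only syntax makes visible, consider the following. The class `Estimator` is hypothetical and is not a PySpark class.

```python
import inspect


class Estimator:
    """Hypothetical class used only to illustrate the syntax."""

    def __init__(self, *, featuresCol="features", maxIter=100):
        self.featuresCol = featuresCol
        self.maxIter = maxIter


# The bare `*` shows up in the introspected signature.
print(inspect.signature(Estimator.__init__))
# (self, *, featuresCol='features', maxIter=100)

try:
    Estimator("features")  # positional use is rejected by the interpreter
except TypeError as e:
    print("TypeError:", e)
```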

In practice we could probably capture `locals` and drop `keyword_only` completely, i.e.:

```python
@keyword_only
def __init__(self, *, featuresCol="features"):
    ...
    kwargs = self._input_kwargs
    self.setParams(**kwargs)
```

could be replaced with

```python
def __init__(self, *, featuresCol="features"):
    kwargs = locals()
    del kwargs["self"]
    ...
    self.setParams(**kwargs)
```
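A runnable version of the `locals()` idea is sketched below. `Model` and its simplified `setParams` are hypothetical; the real `setParams` in PySpark does more than store a dictionary.

```python
class Model:
    """Hypothetical class; `setParams` here just stores the values."""

    def __init__(self, *, featuresCol="features", maxIter=100):
        # Snapshot the arguments before any other local variable is created.
        kwargs = locals().copy()
        del kwargs["self"]
        self.setParams(**kwargs)

    def setParams(self, **kwargs):
        self.params = kwargs
        return self


print(Model(maxIter=10).params)  # {'featuresCol': 'features', 'maxIter': 10}
```

Note that `locals()` also includes parameters left at their defaults, so this form may not be exactly equivalent to the decorator if the distinction between explicitly passed and defaulted arguments matters.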

### Does this PR introduce _any_ user-facing change?

Docstrings and inspect tools will now indicate that `keyword_only` methods expect only keyword arguments.

For example, the signature of `LinearSVC.__init__` will change from

```

>>> from pyspark.ml.classification import LinearSVC

>>> ?LinearSVC.__init__

Signature:

LinearSVC.__init__(

self,

featuresCol='features',

labelCol='label',

predictionCol='prediction',

maxIter=100,

regParam=0.0,

tol=1e-06,

rawPredictionCol='rawPrediction',

fitIntercept=True,

standardization=True,

threshold=0.0,

weightCol=None,

aggregationDepth=2,

)

Docstring: __init__(self, featuresCol="features", labelCol="label", predictionCol="prediction", maxIter=100, regParam=0.0, tol=1e-6, rawPredictionCol="rawPrediction", fitIntercept=True, standardization=True, threshold=0.0, weightCol=None, aggregationDepth=2):

File: /path/to/python/pyspark/ml/classification.py

Type: function

```

to

```

>>> from pyspark.ml.classification import LinearSVC

>>> ?LinearSVC.__init__

Signature:

LinearSVC.__init__(

self,

*,

featuresCol='features',

labelCol='label',

predictionCol='prediction',

maxIter=100,

regParam=0.0,

tol=1e-06,

rawPredictionCol='rawPrediction',

fitIntercept=True,

standardization=True,

threshold=0.0,

weightCol=None,

aggregationDepth=2,

blockSize=1,

)

Docstring: __init__(self, \*, featuresCol="features", labelCol="label", predictionCol="prediction", maxIter=100, regParam=0.0, tol=1e-6, rawPredictionCol="rawPrediction", fitIntercept=True, standardization=True, threshold=0.0, weightCol=None, aggregationDepth=2, blockSize=1):

File: ~/Workspace/spark/python/pyspark/ml/classification.py

Type: function

```

### How was this patch tested?

Existing tests.

Closes #29799 from zero323/SPARK-32933.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

https://issues.apache.org/jira/browse/SPARK-32719

### What changes were proposed in this pull request?

Add a check to detect missing imports. This makes sure that if we use a specific class, it should be explicitly imported (not using a wildcard).
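To make the idea concrete, here is a minimal sketch that finds wildcard imports with Python's `ast` module. The helper `find_wildcard_imports` is hypothetical and is not how Flake8 implements its checks; the PR itself relies on Flake8.

```python
import ast


def find_wildcard_imports(source):
    """Return (line, module) pairs for every `from module import *`."""
    found = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ImportFrom):
            if any(alias.name == "*" for alias in node.names):
                found.append((node.lineno, node.module))
    return found


sample = "from os.path import *\nfrom json import dumps\n"
print(find_wildcard_imports(sample))  # [(1, 'os.path')]
```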

### Why are the changes needed?

To make sure that the quality of the Python code is up to standard.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Existing unit-tests and Flake8 static analysis

Closes #29563 from Fokko/fd-add-check-missing-imports.

Authored-by: Fokko Driesprong <fokko@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

Disallow unused imports:

- Unused imports unnecessarily increase the memory footprint of the application.

- Imports that are required only for the examples in a docstring are moved from the file scope into the example itself. This keeps the files themselves clean and gives a more complete example, as it also includes the imports :)

Before:

```

fokkodriesprongFan spark % flake8 python | grep -i "imported but unused"

python/pyspark/cloudpickle.py:46:1: F401 'functools.partial' imported but unused

python/pyspark/cloudpickle.py:55:1: F401 'traceback' imported but unused

python/pyspark/heapq3.py:868:5: F401 '_heapq.*' imported but unused

python/pyspark/__init__.py:61:1: F401 'pyspark.version.__version__' imported but unused

python/pyspark/__init__.py:62:1: F401 'pyspark._globals._NoValue' imported but unused

python/pyspark/__init__.py:115:1: F401 'pyspark.sql.SQLContext' imported but unused

python/pyspark/__init__.py:115:1: F401 'pyspark.sql.HiveContext' imported but unused

python/pyspark/__init__.py:115:1: F401 'pyspark.sql.Row' imported but unused

python/pyspark/rdd.py:21:1: F401 're' imported but unused

python/pyspark/rdd.py:29:1: F401 'tempfile.NamedTemporaryFile' imported but unused

python/pyspark/mllib/regression.py:26:1: F401 'pyspark.mllib.linalg.SparseVector' imported but unused

python/pyspark/mllib/clustering.py:28:1: F401 'pyspark.mllib.linalg.SparseVector' imported but unused

python/pyspark/mllib/clustering.py:28:1: F401 'pyspark.mllib.linalg.DenseVector' imported but unused

python/pyspark/mllib/classification.py:26:1: F401 'pyspark.mllib.linalg.SparseVector' imported but unused

python/pyspark/mllib/feature.py:28:1: F401 'pyspark.mllib.linalg.DenseVector' imported but unused

python/pyspark/mllib/feature.py:28:1: F401 'pyspark.mllib.linalg.SparseVector' imported but unused

python/pyspark/mllib/feature.py:30:1: F401 'pyspark.mllib.regression.LabeledPoint' imported but unused

python/pyspark/mllib/tests/test_linalg.py:18:1: F401 'sys' imported but unused

python/pyspark/mllib/tests/test_linalg.py:642:5: F401 'pyspark.mllib.tests.test_linalg.*' imported but unused

python/pyspark/mllib/tests/test_feature.py:21:1: F401 'numpy.random' imported but unused

python/pyspark/mllib/tests/test_feature.py:21:1: F401 'numpy.exp' imported but unused

python/pyspark/mllib/tests/test_feature.py:23:1: F401 'pyspark.mllib.linalg.Vector' imported but unused

python/pyspark/mllib/tests/test_feature.py:23:1: F401 'pyspark.mllib.linalg.VectorUDT' imported but unused

python/pyspark/mllib/tests/test_feature.py:185:5: F401 'pyspark.mllib.tests.test_feature.*' imported but unused

python/pyspark/mllib/tests/test_util.py:97:5: F401 'pyspark.mllib.tests.test_util.*' imported but unused

python/pyspark/mllib/tests/test_stat.py:23:1: F401 'pyspark.mllib.linalg.Vector' imported but unused

python/pyspark/mllib/tests/test_stat.py:23:1: F401 'pyspark.mllib.linalg.SparseVector' imported but unused

python/pyspark/mllib/tests/test_stat.py:23:1: F401 'pyspark.mllib.linalg.DenseVector' imported but unused

python/pyspark/mllib/tests/test_stat.py:23:1: F401 'pyspark.mllib.linalg.VectorUDT' imported but unused

python/pyspark/mllib/tests/test_stat.py:23:1: F401 'pyspark.mllib.linalg._convert_to_vector' imported but unused

python/pyspark/mllib/tests/test_stat.py:23:1: F401 'pyspark.mllib.linalg.DenseMatrix' imported but unused

python/pyspark/mllib/tests/test_stat.py:23:1: F401 'pyspark.mllib.linalg.SparseMatrix' imported but unused

python/pyspark/mllib/tests/test_stat.py:23:1: F401 'pyspark.mllib.linalg.MatrixUDT' imported but unused

python/pyspark/mllib/tests/test_stat.py:181:5: F401 'pyspark.mllib.tests.test_stat.*' imported but unused

python/pyspark/mllib/tests/test_streaming_algorithms.py:18:1: F401 'time.time' imported but unused

python/pyspark/mllib/tests/test_streaming_algorithms.py:18:1: F401 'time.sleep' imported but unused

python/pyspark/mllib/tests/test_streaming_algorithms.py:470:5: F401 'pyspark.mllib.tests.test_streaming_algorithms.*' imported but unused

python/pyspark/mllib/tests/test_algorithms.py:295:5: F401 'pyspark.mllib.tests.test_algorithms.*' imported but unused

python/pyspark/tests/test_serializers.py:90:13: F401 'xmlrunner' imported but unused

python/pyspark/tests/test_rdd.py:21:1: F401 'sys' imported but unused

python/pyspark/tests/test_rdd.py:29:1: F401 'pyspark.resource.ResourceProfile' imported but unused

python/pyspark/tests/test_rdd.py:885:5: F401 'pyspark.tests.test_rdd.*' imported but unused

python/pyspark/tests/test_readwrite.py:19:1: F401 'sys' imported but unused

python/pyspark/tests/test_readwrite.py:22:1: F401 'array.array' imported but unused

python/pyspark/tests/test_readwrite.py:309:5: F401 'pyspark.tests.test_readwrite.*' imported but unused

python/pyspark/tests/test_join.py:62:5: F401 'pyspark.tests.test_join.*' imported but unused

python/pyspark/tests/test_taskcontext.py:19:1: F401 'shutil' imported but unused

python/pyspark/tests/test_taskcontext.py:325:5: F401 'pyspark.tests.test_taskcontext.*' imported but unused

python/pyspark/tests/test_conf.py:36:5: F401 'pyspark.tests.test_conf.*' imported but unused

python/pyspark/tests/test_broadcast.py:148:5: F401 'pyspark.tests.test_broadcast.*' imported but unused

python/pyspark/tests/test_daemon.py:76:5: F401 'pyspark.tests.test_daemon.*' imported but unused

python/pyspark/tests/test_util.py:77:5: F401 'pyspark.tests.test_util.*' imported but unused

python/pyspark/tests/test_pin_thread.py:19:1: F401 'random' imported but unused

python/pyspark/tests/test_pin_thread.py:149:5: F401 'pyspark.tests.test_pin_thread.*' imported but unused

python/pyspark/tests/test_worker.py:19:1: F401 'sys' imported but unused

python/pyspark/tests/test_worker.py:26:5: F401 'resource' imported but unused

python/pyspark/tests/test_worker.py:203:5: F401 'pyspark.tests.test_worker.*' imported but unused

python/pyspark/tests/test_profiler.py:101:5: F401 'pyspark.tests.test_profiler.*' imported but unused

python/pyspark/tests/test_shuffle.py:18:1: F401 'sys' imported but unused

python/pyspark/tests/test_shuffle.py:171:5: F401 'pyspark.tests.test_shuffle.*' imported but unused

python/pyspark/tests/test_rddbarrier.py:43:5: F401 'pyspark.tests.test_rddbarrier.*' imported but unused

python/pyspark/tests/test_context.py:129:13: F401 'userlibrary.UserClass' imported but unused

python/pyspark/tests/test_context.py:140:13: F401 'userlib.UserClass' imported but unused

python/pyspark/tests/test_context.py:310:5: F401 'pyspark.tests.test_context.*' imported but unused

python/pyspark/tests/test_appsubmit.py:241:5: F401 'pyspark.tests.test_appsubmit.*' imported but unused

python/pyspark/streaming/dstream.py:18:1: F401 'sys' imported but unused

python/pyspark/streaming/tests/test_dstream.py:27:1: F401 'pyspark.RDD' imported but unused

python/pyspark/streaming/tests/test_dstream.py:647:5: F401 'pyspark.streaming.tests.test_dstream.*' imported but unused

python/pyspark/streaming/tests/test_kinesis.py:83:5: F401 'pyspark.streaming.tests.test_kinesis.*' imported but unused

python/pyspark/streaming/tests/test_listener.py:152:5: F401 'pyspark.streaming.tests.test_listener.*' imported but unused

python/pyspark/streaming/tests/test_context.py:178:5: F401 'pyspark.streaming.tests.test_context.*' imported but unused

python/pyspark/testing/utils.py:30:5: F401 'scipy.sparse' imported but unused

python/pyspark/testing/utils.py:36:5: F401 'numpy as np' imported but unused

python/pyspark/ml/regression.py:25:1: F401 'pyspark.ml.tree._TreeEnsembleParams' imported but unused

python/pyspark/ml/regression.py:25:1: F401 'pyspark.ml.tree._HasVarianceImpurity' imported but unused

python/pyspark/ml/regression.py:29:1: F401 'pyspark.ml.wrapper.JavaParams' imported but unused

python/pyspark/ml/util.py:19:1: F401 'sys' imported but unused

python/pyspark/ml/__init__.py:25:1: F401 'pyspark.ml.pipeline' imported but unused

python/pyspark/ml/pipeline.py:18:1: F401 'sys' imported but unused

python/pyspark/ml/stat.py:22:1: F401 'pyspark.ml.linalg.DenseMatrix' imported but unused

python/pyspark/ml/stat.py:22:1: F401 'pyspark.ml.linalg.Vectors' imported but unused

python/pyspark/ml/tests/test_training_summary.py:18:1: F401 'sys' imported but unused

python/pyspark/ml/tests/test_training_summary.py:364:5: F401 'pyspark.ml.tests.test_training_summary.*' imported but unused

python/pyspark/ml/tests/test_linalg.py:381:5: F401 'pyspark.ml.tests.test_linalg.*' imported but unused

python/pyspark/ml/tests/test_tuning.py:427:9: F401 'pyspark.sql.functions as F' imported but unused

python/pyspark/ml/tests/test_tuning.py:757:5: F401 'pyspark.ml.tests.test_tuning.*' imported but unused

python/pyspark/ml/tests/test_wrapper.py:120:5: F401 'pyspark.ml.tests.test_wrapper.*' imported but unused

python/pyspark/ml/tests/test_feature.py:19:1: F401 'sys' imported but unused

python/pyspark/ml/tests/test_feature.py:304:5: F401 'pyspark.ml.tests.test_feature.*' imported but unused

python/pyspark/ml/tests/test_image.py:19:1: F401 'py4j' imported but unused

python/pyspark/ml/tests/test_image.py:22:1: F401 'pyspark.testing.mlutils.PySparkTestCase' imported but unused

python/pyspark/ml/tests/test_image.py:71:5: F401 'pyspark.ml.tests.test_image.*' imported but unused

python/pyspark/ml/tests/test_persistence.py:456:5: F401 'pyspark.ml.tests.test_persistence.*' imported but unused

python/pyspark/ml/tests/test_evaluation.py:56:5: F401 'pyspark.ml.tests.test_evaluation.*' imported but unused

python/pyspark/ml/tests/test_stat.py:43:5: F401 'pyspark.ml.tests.test_stat.*' imported but unused

python/pyspark/ml/tests/test_base.py:70:5: F401 'pyspark.ml.tests.test_base.*' imported but unused

python/pyspark/ml/tests/test_param.py:20:1: F401 'sys' imported but unused

python/pyspark/ml/tests/test_param.py:375:5: F401 'pyspark.ml.tests.test_param.*' imported but unused

python/pyspark/ml/tests/test_pipeline.py:62:5: F401 'pyspark.ml.tests.test_pipeline.*' imported but unused

python/pyspark/ml/tests/test_algorithms.py:333:5: F401 'pyspark.ml.tests.test_algorithms.*' imported but unused

python/pyspark/ml/param/__init__.py:18:1: F401 'sys' imported but unused

python/pyspark/resource/tests/test_resources.py:17:1: F401 'random' imported but unused

python/pyspark/resource/tests/test_resources.py:20:1: F401 'pyspark.resource.ResourceProfile' imported but unused

python/pyspark/resource/tests/test_resources.py:75:5: F401 'pyspark.resource.tests.test_resources.*' imported but unused

python/pyspark/sql/functions.py:32:1: F401 'pyspark.sql.udf.UserDefinedFunction' imported but unused

python/pyspark/sql/functions.py:34:1: F401 'pyspark.sql.pandas.functions.pandas_udf' imported but unused

python/pyspark/sql/session.py:30:1: F401 'pyspark.sql.types.Row' imported but unused

python/pyspark/sql/session.py:30:1: F401 'pyspark.sql.types.StringType' imported but unused

python/pyspark/sql/readwriter.py:1084:5: F401 'pyspark.sql.Row' imported but unused

python/pyspark/sql/context.py:26:1: F401 'pyspark.sql.types.IntegerType' imported but unused

python/pyspark/sql/context.py:26:1: F401 'pyspark.sql.types.Row' imported but unused

python/pyspark/sql/context.py:26:1: F401 'pyspark.sql.types.StringType' imported but unused

python/pyspark/sql/context.py:27:1: F401 'pyspark.sql.udf.UDFRegistration' imported but unused

python/pyspark/sql/streaming.py:1212:5: F401 'pyspark.sql.Row' imported but unused

python/pyspark/sql/tests/test_utils.py:55:5: F401 'pyspark.sql.tests.test_utils.*' imported but unused

python/pyspark/sql/tests/test_pandas_map.py:18:1: F401 'sys' imported but unused

python/pyspark/sql/tests/test_pandas_map.py:22:1: F401 'pyspark.sql.functions.pandas_udf' imported but unused

python/pyspark/sql/tests/test_pandas_map.py:22:1: F401 'pyspark.sql.functions.PandasUDFType' imported but unused

python/pyspark/sql/tests/test_pandas_map.py:119:5: F401 'pyspark.sql.tests.test_pandas_map.*' imported but unused

python/pyspark/sql/tests/test_catalog.py:193:5: F401 'pyspark.sql.tests.test_catalog.*' imported but unused

python/pyspark/sql/tests/test_group.py:39:5: F401 'pyspark.sql.tests.test_group.*' imported but unused

python/pyspark/sql/tests/test_session.py:361:5: F401 'pyspark.sql.tests.test_session.*' imported but unused

python/pyspark/sql/tests/test_conf.py:49:5: F401 'pyspark.sql.tests.test_conf.*' imported but unused

python/pyspark/sql/tests/test_pandas_cogrouped_map.py:19:1: F401 'sys' imported but unused

python/pyspark/sql/tests/test_pandas_cogrouped_map.py:21:1: F401 'pyspark.sql.functions.sum' imported but unused

python/pyspark/sql/tests/test_pandas_cogrouped_map.py:21:1: F401 'pyspark.sql.functions.PandasUDFType' imported but unused

python/pyspark/sql/tests/test_pandas_cogrouped_map.py:29:5: F401 'pandas.util.testing.assert_series_equal' imported but unused

python/pyspark/sql/tests/test_pandas_cogrouped_map.py:32:5: F401 'pyarrow as pa' imported but unused

python/pyspark/sql/tests/test_pandas_cogrouped_map.py:248:5: F401 'pyspark.sql.tests.test_pandas_cogrouped_map.*' imported but unused

python/pyspark/sql/tests/test_udf.py:24:1: F401 'py4j' imported but unused

python/pyspark/sql/tests/test_pandas_udf_typehints.py:246:5: F401 'pyspark.sql.tests.test_pandas_udf_typehints.*' imported but unused

python/pyspark/sql/tests/test_functions.py:19:1: F401 'sys' imported but unused

python/pyspark/sql/tests/test_functions.py:362:9: F401 'pyspark.sql.functions.exists' imported but unused

python/pyspark/sql/tests/test_functions.py:387:5: F401 'pyspark.sql.tests.test_functions.*' imported but unused

python/pyspark/sql/tests/test_pandas_udf_scalar.py:21:1: F401 'sys' imported but unused

python/pyspark/sql/tests/test_pandas_udf_scalar.py:45:5: F401 'pyarrow as pa' imported but unused

python/pyspark/sql/tests/test_pandas_udf_window.py:355:5: F401 'pyspark.sql.tests.test_pandas_udf_window.*' imported but unused

python/pyspark/sql/tests/test_arrow.py:38:5: F401 'pyarrow as pa' imported but unused

python/pyspark/sql/tests/test_pandas_grouped_map.py:20:1: F401 'sys' imported but unused

python/pyspark/sql/tests/test_pandas_grouped_map.py:38:5: F401 'pyarrow as pa' imported but unused

python/pyspark/sql/tests/test_dataframe.py:382:9: F401 'pyspark.sql.DataFrame' imported but unused

python/pyspark/sql/avro/functions.py:125:5: F401 'pyspark.sql.Row' imported but unused

python/pyspark/sql/pandas/functions.py:19:1: F401 'sys' imported but unused

```

After:

```

fokkodriesprongFan spark % flake8 python | grep -i "imported but unused"

fokkodriesprongFan spark %

```
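Moving an import from the file scope into the docstring example can be sketched as follows. The function `hypotenuse` is a hypothetical example and is not taken from PySpark.

```python
import doctest


def hypotenuse(a, b):
    """
    Length of the hypotenuse of a right triangle.

    >>> from math import sqrt  # the example carries its own import
    >>> hypotenuse(3, 4) == sqrt(25)
    True
    """
    return (a * a + b * b) ** 0.5


# Run the docstring example; nothing is printed when it passes.
doctest.run_docstring_examples(hypotenuse, {"hypotenuse": hypotenuse})
```

Since `sqrt` is only needed by the example, the module no longer has to import it at the top level, and a reader copying the example gets everything required to run it.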

### What changes were proposed in this pull request?

Removing unused imports from the Python files to keep everything nice and tidy.

### Why are the changes needed?

Cleaning up of the imports that aren't used, and suppressing the imports that are used as references to other modules, preserving backward compatibility.
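One common way to suppress the warning for an intentional re-export is Flake8's per-line `# noqa: F401` comment. The sketch below assumes that mechanism; the text above does not state which suppression mechanism the PR actually uses, and the imported names are placeholders.

```python
# Hypothetical module that exists mainly to re-export names.
# The names are unused here, but callers may import them from this module,
# so the F401 warning is suppressed per line instead of deleting the import.
from json import dumps  # noqa: F401
from json import loads  # noqa: F401
```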

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Adding the rule to the existing Flake8 checks.

Closes #29121 from Fokko/SPARK-32319.

Authored-by: Fokko Driesprong <fokko@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

1. Describe the JSON option `allowNonNumericNumbers` which is used in read

2. Add new test cases for allowed JSON field values: NaN, +INF, +Infinity, Infinity, -INF and -Infinity

### Why are the changes needed?

To improve UX with Spark SQL and to provide users full info about the supported option.

### Does this PR introduce _any_ user-facing change?

Yes, in PySpark.

### How was this patch tested?

Added new test to `JsonParsingOptionsSuite`

Closes #29275 from MaxGekk/allowNonNumericNumbers-doc.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR proposes to redesign the PySpark documentation.

I made a demo site to make it easier to review: https://hyukjin-spark.readthedocs.io/en/stable/reference/index.html.

Here is the initial draft for the final PySpark docs shape: https://hyukjin-spark.readthedocs.io/en/latest/index.html.

In more detail, this PR proposes:

1. Use [pydata_sphinx_theme](https://github.com/pandas-dev/pydata-sphinx-theme) theme - [pandas](https://pandas.pydata.org/docs/) and [Koalas](https://koalas.readthedocs.io/en/latest/) use this theme. The CSS overwrite is ported from Koalas. The colours in the CSS were actually chosen by designers to use in Spark.

2. Use the Sphinx option to separate `source` and `build` directories as the documentation pages will likely grow.

3. Port current API documentation into the new style. It mimics Koalas and pandas to use the theme most effectively.

One disadvantage of this approach is that you have to list APIs and classes explicitly; however, I think this isn't a big issue in PySpark since we're being conservative about adding APIs. I also intentionally listed only classes, not functions, in ML and MLlib to make it relatively easier to manage.

### Why are the changes needed?

I often hear complaints from users that the current PySpark documentation (https://spark.apache.org/docs/latest/api/python/index.html) is pretty messy to read compared to other projects such as [pandas](https://pandas.pydata.org/docs/) and [Koalas](https://koalas.readthedocs.io/en/latest/).

It would be nicer if we can make it more organised instead of just listing all classes, methods and attributes to make it easier to navigate.

Also, the documentation has been there from almost the very first version of PySpark. Maybe it's time to update it.

### Does this PR introduce _any_ user-facing change?

Yes, PySpark API documentation will be redesigned.

### How was this patch tested?

Manually tested, and the demo site was made to show.

Closes #29188 from HyukjinKwon/SPARK-32179.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR aims to drop Python 2.7, 3.4 and 3.5.

Roughly speaking, it removes all the widely known Python 2 compatibility workarounds such as `sys.version` comparisons and `__future__` imports. It also removes Python 2 specific code such as `ArrayConstructor` in Spark.
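
The kind of workaround being removed typically looks like the sketch below. It is an illustrative pattern rather than code copied from Spark; `text_type` and `is_text` are hypothetical names.

```python
import sys

# Version check of the sort that becomes dead code once Python 2 is dropped.
if sys.version_info[0] >= 3:
    text_type = str
else:
    text_type = unicode  # noqa: F821 (only defined on Python 2)


def is_text(value):
    """Return True for text strings on either major Python version."""
    return isinstance(value, text_type)
```

With Python 3 as the only target, the whole branch collapses to a plain `isinstance(value, str)`.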

### Why are the changes needed?

1. Drop support for EOL Python versions.

2. Reduce maintenance overhead and remove a bit of legacy code and hacks for Python 2.

3. According to my testing and investigation, PyPy2 has a critical bug that causes a flaky test (SPARK-28358).

4. Users can use Python type hints with Pandas UDFs without thinking about the Python version.

5. Users can rely on a single, up-to-date cloudpickle, https://github.com/apache/spark/pull/28950. With Python 3.8+ it can also leverage C pickle.

### Does this PR introduce _any_ user-facing change?

Yes, users cannot use Python 2.7, 3.4 and 3.5 in the upcoming Spark version.

### How was this patch tested?

Manually tested and also tested in Jenkins.

Closes #28957 from HyukjinKwon/SPARK-32138.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

This commit is published into the public domain.

### What changes were proposed in this pull request?

Some syntax issues in docstrings have been fixed.

### Why are the changes needed?

In some places, the documentation did not render as intended, e.g. parameter documentations were not formatted as such.

### Does this PR introduce any user-facing change?

Slight improvements in documentation.

### How was this patch tested?

Manual testing and `dev/lint-python` run. No new Sphinx warnings arise due to this change.

Closes #28559 from DavidToneian/SPARK-31739.

Authored-by: David Toneian <david@toneian.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Update the default datetime pattern from `yyyy-MM-dd'T'HH:mm:ss.SSSXXX` to `yyyy-MM-dd'T'HH:mm:ss[.SSS][XXX]` in the JSON/CSV API documentation.

### Why are the changes needed?

doc fix

### Does this PR introduce any user-facing change?

Yes, the documentation will change

### How was this patch tested?

Passing Jenkins

Closes #28204 from yaooqinn/SPARK-31414-F.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

In the PR, I propose to update the doc for the `timeZone` option in JSON/CSV datasources and for the `tz` parameter of the `from_utc_timestamp()`/`to_utc_timestamp()` functions, and to restrict format of config's values to 2 forms:

1. Geographical regions, such as `America/Los_Angeles`.

2. Fixed offsets - a fully resolved offset from UTC. For example, `-08:00`.
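
The two accepted forms can be told apart mechanically. The sketch below is a simplified classifier under stated assumptions: both regular expressions are approximations written for illustration, `classify_time_zone` is a hypothetical helper, and anything outside the two listed forms is reported as unsupported.

```python
import re

# Region IDs look like "Area/City"; fixed offsets look like "+HH:mm" or "-HH:mm".
# Both patterns are simplifications, not the rules Spark or the JDK apply.
REGION_ID = re.compile(r"^[A-Za-z_]+(/[A-Za-z0-9_+\-]+)+$")
FIXED_OFFSET = re.compile(r"^[+-]\d{2}:\d{2}$")


def classify_time_zone(value):
    """Classify a time zone string as 'fixed-offset', 'region' or 'unsupported'."""
    if FIXED_OFFSET.match(value):
        return "fixed-offset"
    if REGION_ID.match(value):
        return "region"
    return "unsupported"
```

A three-letter ID such as `CST` falls into the `unsupported` bucket here.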

### Why are the changes needed?

Other formats such as three-letter time zone IDs are ambiguous and depend on the locale. For example, `CST` could be U.S. `Central Standard Time` or `China Standard Time`. Such formats have already been deprecated in the JDK, see [Three-letter time zone IDs](https://docs.oracle.com/javase/8/docs/api/java/util/TimeZone.html).

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running `./dev/scalastyle`, and manual testing.

Closes #28051 from MaxGekk/doc-time-zone-option.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The link for `partition discovery` is malformed because, for releases, the full URL contains `/docs/<version>/`.

### Why are the changes needed?

fix doc

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

`SKIP_SCALADOC=1 SKIP_RDOC=1 SKIP_SQLDOC=1 jekyll serve` locally verified

Closes #28017 from yaooqinn/doc.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

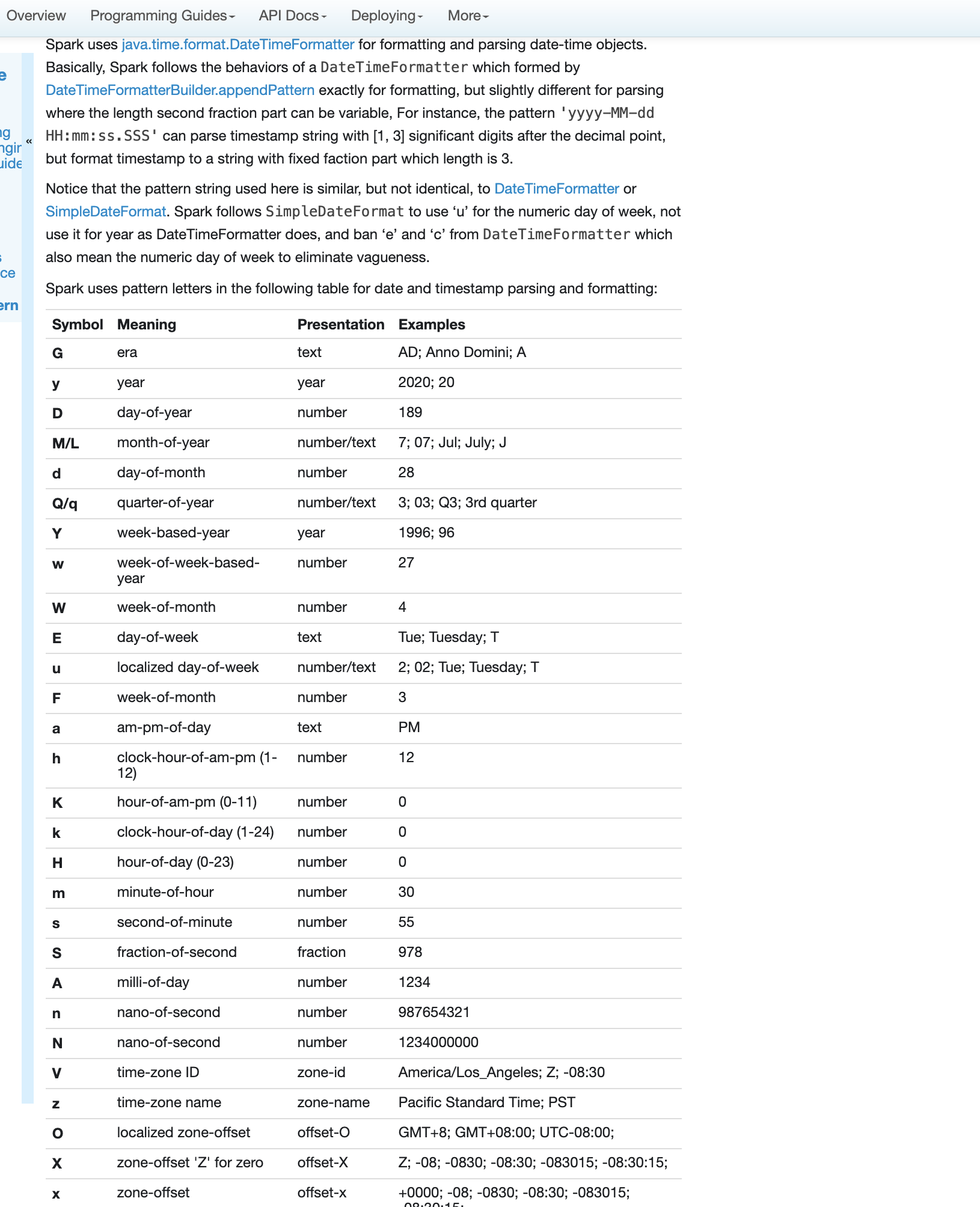

### What changes were proposed in this pull request?

Fix errors and missing parts for datetime pattern document

1. The pattern we use is similar to DateTimeFormatter and SimpleDateFormat but not identical. So we shouldn't use any of them in the API docs but use a link to the doc of our own.

2. Some pattern letters are missing

3. Some pattern letters are explicitly banned - Set('A', 'c', 'e', 'n', 'N')

4. The second-fraction pattern uses different logic for parsing and formatting
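
To illustrate the banned-letter rule from item 3, a pattern can be scanned for those letters while skipping text quoted with single quotes. This is a hypothetical sketch, not Spark's validation code; `find_banned_letters` is an invented helper, and the quoting rule assumed here is the single-quote convention used by patterns such as `yyyy-MM-dd'T'HH:mm:ss`.

```python
# Letters the description lists as explicitly banned.
BANNED_LETTERS = set("AcenN")


def find_banned_letters(pattern):
    """Return the banned letters used in `pattern`, ignoring quoted literal text."""
    found = []
    in_quote = False
    for ch in pattern:
        if ch == "'":
            # An escaped quote ('') toggles twice, so the state is unchanged.
            in_quote = not in_quote
        elif not in_quote and ch in BANNED_LETTERS and ch not in found:
            found.append(ch)
    return found
```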

### Why are the changes needed?

fix and improve doc

### Does this PR introduce any user-facing change?

yes, new and updated doc

### How was this patch tested?

pass Jenkins

viewed locally with `jekyll serve`

Closes #27956 from yaooqinn/SPARK-31189.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

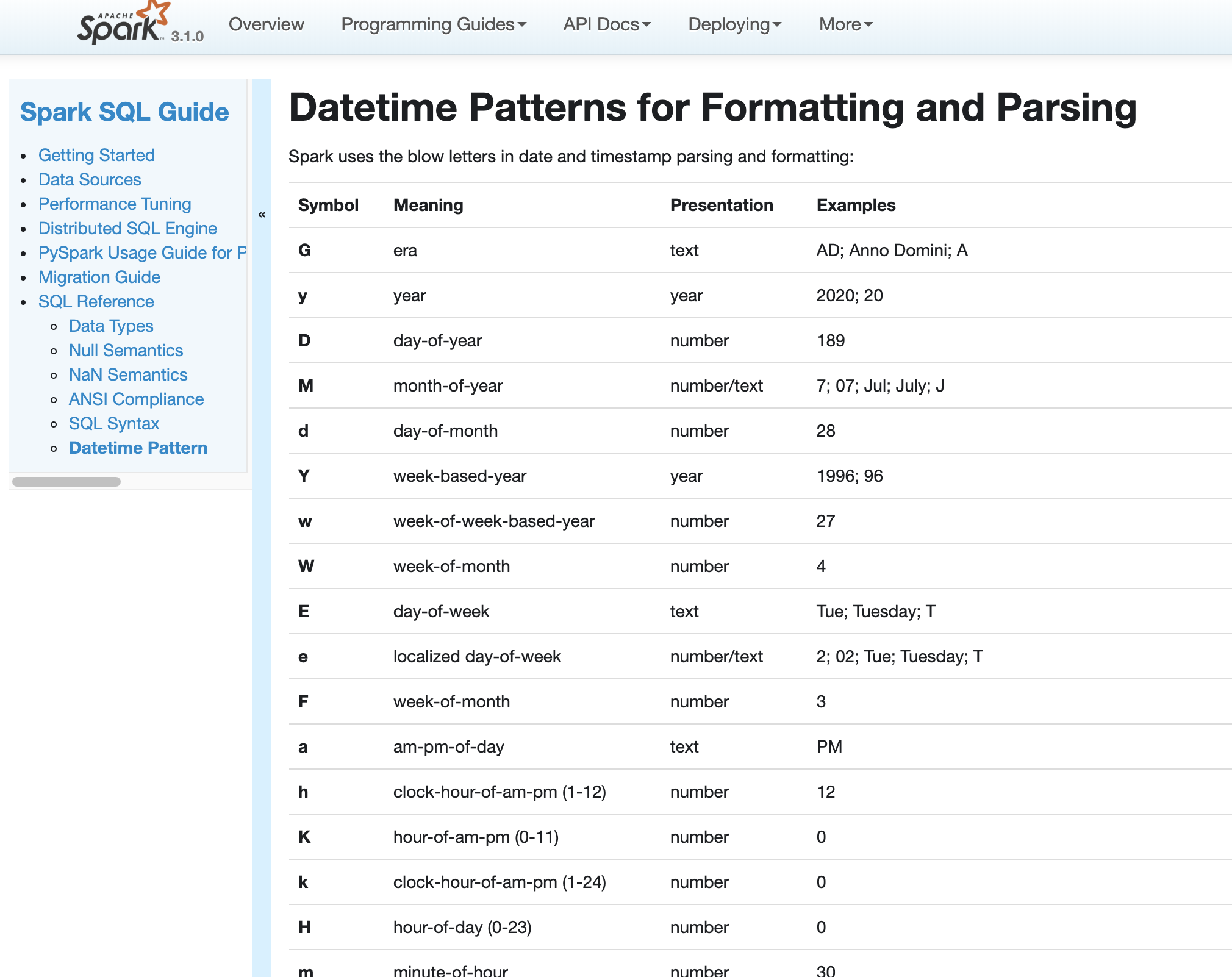

### What changes were proposed in this pull request?

In Spark version 2.4 and earlier, datetime parsing, formatting and conversion are performed by using the hybrid calendar (Julian + Gregorian).

Since the Proleptic Gregorian calendar is the de facto calendar worldwide, as well as the one chosen in the ANSI SQL standard, Spark 3.0 switches to it by using Java 8 API classes (the java.time packages that are based on ISO chronology). The switch was completed in SPARK-26651.

However, after the switch, some patterns are not compatible between Java 8 and Java 7, so Spark needs its own definition of the patterns rather than depending on the Java API.

In this PR, we achieve this by writing the documentation and shadowing the incompatible letters. See more details in [SPARK-31030](https://issues.apache.org/jira/browse/SPARK-31030)

### Why are the changes needed?

For backward compatibility.

### Does this PR introduce any user-facing change?

No.

After we define our own datetime parsing and formatting patterns, the behavior is the same as in older Spark versions.

### How was this patch tested?

Existing and newly added unit tests.

Locally document test:

Closes #27830 from xuanyuanking/SPARK-31030.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

This commit is published into the public domain.

### What changes were proposed in this pull request?

Some syntax issues in docstrings have been fixed.

### Why are the changes needed?

In some places, the documentation did not render as intended, e.g. parameter documentations were not formatted as such.

### Does this PR introduce any user-facing change?

Slight improvements in documentation.

### How was this patch tested?

Manual testing. No new Sphinx warnings arise due to this change.

Closes #27613 from DavidToneian/SPARK-30859.

Authored-by: David Toneian <david@toneian.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

This commit is published into the public domain.

### What changes were proposed in this pull request?

This PR fixes the documentation of `DataFrameReader`, `DataFrameWriter`, `DataStreamReader`, and `DataStreamWriter`, where attributes of other classes were misrepresented as functions. Additionally, creation of hyperlinks across modules was fixed in these instances.

### Why are the changes needed?

The old state produced documentation that suggested invalid usage of PySpark objects (accessing attributes as though they were callable.)

### Does this PR introduce any user-facing change?

No, except for improved documentation.

### How was this patch tested?

No test added; documentation build runs through.

Closes #27553 from DavidToneian/docfix-DataFrameReader-DataFrameWriter-DataStreamReader-DataStreamWriter.

Authored-by: David Toneian <david@toneian.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR adds the options `recursiveFileLookup` and `pathGlobFilter` in file sources, and `mergeSchema` in ORC, to the documentation.

- `recursiveFileLookup` at file sources: https://github.com/apache/spark/pull/24830 ([SPARK-27627](https://issues.apache.org/jira/browse/SPARK-27627))

- `pathGlobFilter` at file sources: https://github.com/apache/spark/pull/24518 ([SPARK-27990](https://issues.apache.org/jira/browse/SPARK-27990))

- `mergeSchema` at ORC: https://github.com/apache/spark/pull/24043 ([SPARK-11412](https://issues.apache.org/jira/browse/SPARK-11412))

**Note that** `timeZone` option was not moved from `DataFrameReader.options` as I assume it will likely affect other datasources as well once DSv2 is complete.

### Why are the changes needed?

To document available options in sources properly.

### Does this PR introduce any user-facing change?

In PySpark, `pathGlobFilter` can be set via `DataFrameReader.(text|orc|parquet|json|csv)` and `DataStreamReader.(text|orc|parquet|json|csv)`.

### How was this patch tested?

Manually built the doc and checked the output. Option setting in PySpark is rather a logical change. I manually tested one only:

```bash

$ ls -al tmp

...

-rw-r--r-- 1 hyukjin.kwon staff 3 Dec 20 12:19 aa

-rw-r--r-- 1 hyukjin.kwon staff 3 Dec 20 12:19 ab

-rw-r--r-- 1 hyukjin.kwon staff 3 Dec 20 12:19 ac

-rw-r--r-- 1 hyukjin.kwon staff 3 Dec 20 12:19 cc

```

```python

>>> spark.read.text("tmp", pathGlobFilter="*c").show()

```

```

+-----+

|value|

+-----+

| ac|

| cc|

+-----+

```
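
The filtering seen above can be approximated in plain Python. The sketch uses the standard `fnmatch` module as a stand-in for the glob matching; Spark's actual glob syntax may differ in details, and `glob_filter` is a hypothetical helper.

```python
from fnmatch import fnmatchcase

# The four file names from the `ls` output above.
file_names = ["aa", "ab", "ac", "cc"]


def glob_filter(names, pattern):
    """Keep only the names that match the glob pattern (case-sensitive)."""
    return [name for name in names if fnmatchcase(name, pattern)]


matched = glob_filter(file_names, "*c")
```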

Closes #26958 from HyukjinKwon/doc-followup.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR is a follow-up to #24043 and cousin of #26730. It exposes the `mergeSchema` option directly in the ORC APIs.

### Why are the changes needed?

So the Python API matches the Scala API.

### Does this PR introduce any user-facing change?

Yes, it adds a new option directly in the ORC reader method signatures.

### How was this patch tested?

I tested this manually as follows:

```

>>> spark.range(3).write.orc('test-orc')

>>> spark.range(3).withColumnRenamed('id', 'name').write.orc('test-orc/nested')

>>> spark.read.orc('test-orc', recursiveFileLookup=True, mergeSchema=True)

DataFrame[id: bigint, name: bigint]

>>> spark.read.orc('test-orc', recursiveFileLookup=True, mergeSchema=False)

DataFrame[id: bigint]

>>> spark.conf.set('spark.sql.orc.mergeSchema', True)

>>> spark.read.orc('test-orc', recursiveFileLookup=True)

DataFrame[id: bigint, name: bigint]

>>> spark.read.orc('test-orc', recursiveFileLookup=True, mergeSchema=False)

DataFrame[id: bigint]

```

Closes #26755 from nchammas/SPARK-30113-ORC-mergeSchema.

Authored-by: Nicholas Chammas <nicholas.chammas@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This change properly documents the `mergeSchema` option directly in the Python APIs for reading Parquet data.

### Why are the changes needed?

The docstring for `DataFrameReader.parquet()` mentions `mergeSchema` but doesn't show it in the API. It seems like a simple oversight.

Before this PR, you'd have to do this to use `mergeSchema`:

```python

spark.read.option('mergeSchema', True).parquet('test-parquet').show()

```

After this PR, you can use the option as (I believe) it was intended to be used:

```python

spark.read.parquet('test-parquet', mergeSchema=True).show()

```

### Does this PR introduce any user-facing change?

Yes, this PR changes the signatures of `DataFrameReader.parquet()` and `DataStreamReader.parquet()` to match their docstrings.

### How was this patch tested?

Testing the `mergeSchema` option directly seems to be left to the Scala side of the codebase. I tested my change manually to confirm the API works.

I also confirmed that setting `spark.sql.parquet.mergeSchema` at the session does not get overridden by leaving `mergeSchema` at its default when calling `parquet()`:

```

>>> spark.conf.set('spark.sql.parquet.mergeSchema', True)

>>> spark.range(3).write.parquet('test-parquet/id')

>>> spark.range(3).withColumnRenamed('id', 'name').write.parquet('test-parquet/name')

>>> spark.read.option('recursiveFileLookup', True).parquet('test-parquet').show()

+----+----+

| id|name|

+----+----+

|null| 1|

|null| 2|

|null| 0|

| 1|null|

| 2|null|

| 0|null|

+----+----+

>>> spark.read.option('recursiveFileLookup', True).parquet('test-parquet', mergeSchema=False).show()

+----+

| id|

+----+

|null|

|null|

|null|

| 1|

| 2|

| 0|

+----+

```

Closes #26730 from nchammas/parquet-merge-schema.

Authored-by: Nicholas Chammas <nicholas.chammas@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

As a follow-up to #24830, this PR adds the `recursiveFileLookup` option to the Python DataFrameReader API.

### Why are the changes needed?

This PR maintains Python feature parity with Scala.

### Does this PR introduce any user-facing change?

Yes.

Before this PR, you'd only be able to use this option as follows:

```python

spark.read.option("recursiveFileLookup", True).text("test-data").show()

```

With this PR, you can reference the option from within the format-specific method:

```python

spark.read.text("test-data", recursiveFileLookup=True).show()

```

This option now also shows up in the Python API docs.

### How was this patch tested?

I tested this manually by creating the following directories with dummy data:

```

test-data

├── 1.txt

└── nested

└── 2.txt

test-parquet

├── nested

│ ├── _SUCCESS

│ ├── part-00000-...-.parquet

├── _SUCCESS

├── part-00000-...-.parquet

```

I then ran the following tests and confirmed the output looked good:

```python

spark.read.parquet("test-parquet", recursiveFileLookup=True).show()

spark.read.text("test-data", recursiveFileLookup=True).show()

spark.read.csv("test-data", recursiveFileLookup=True).show()

```
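
Conceptually, the option switches file listing from "top level only" to "walk the whole tree". The sketch below illustrates that difference with the standard library on a copy of the `test-data` layout; it is not Spark's implementation, and `list_files` is a hypothetical helper.

```python
import os
import tempfile


def list_files(root, recursive):
    """List files under `root`; descend into subdirectories only if `recursive`."""
    if not recursive:
        return sorted(entry.path for entry in os.scandir(root) if entry.is_file())
    return sorted(
        os.path.join(dirpath, name)
        for dirpath, _dirs, names in os.walk(root)
        for name in names
    )


# Recreate the test-data layout from above in a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    root = os.path.join(tmp, "test-data")
    os.makedirs(os.path.join(root, "nested"))
    for rel in ("1.txt", os.path.join("nested", "2.txt")):
        with open(os.path.join(root, rel), "w") as f:
            f.write("dummy\n")
    flat = [os.path.relpath(p, root) for p in list_files(root, recursive=False)]
    deep = [os.path.relpath(p, root) for p in list_files(root, recursive=True)]
```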

`python/pyspark/sql/tests/test_readwriter.py` seems pretty sparse. I'm happy to add my tests there, though it seems we have been deferring testing like this to the Scala side of things.

Closes #26718 from nchammas/SPARK-27990-recursiveFileLookup-python.

Authored-by: Nicholas Chammas <nicholas.chammas@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

I propose that we change the example code in the documentation to call the proper function.

For example, under the `foreachBatch` function, the example code was calling the `foreach()` function by mistake.

### Why are the changes needed?

I suppose it could confuse some people, and it is a typo.

### Does this PR introduce any user-facing change?

No, there is no "meaningful" code being changed, simply the documentation.

### How was this patch tested?

I made the change on a fork and it still worked

Closes #26299 from mstill3/patch-1.

Authored-by: Matt Stillwell <18670089+mstill3@users.noreply.github.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

Updating univocity-parsers version to 2.8.3, which adds support for multiple character delimiters

Moving univocity-parsers version to spark-parent pom dependencyManagement section

Adding new utility method to build multi-char delimiter string, which delegates to existing one

Adding tests for multiple character delimited CSV

### What changes were proposed in this pull request?

Adds support for parsing CSV data using multiple-character delimiters. Existing logic for converting the input delimiter string to characters was kept and invoked in a loop. Project dependencies were updated to remove redundant declaration of `univocity-parsers` version, and also to change that version to the latest.

### Why are the changes needed?

It is quite common for people to have delimited data, where the delimiter is not a single character, but rather a sequence of characters. Currently, it is difficult to handle such data in Spark (typically needs pre-processing).

### Does this PR introduce any user-facing change?

Yes. Specifying the "delimiter" option for the DataFrame read, and providing more than one character, will no longer result in an exception. Instead, it will be converted as before and passed to the underlying library (Univocity), which has accepted multiple character delimiters since 2.8.0.
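
For comparison, the pre-processing that multi-character delimiters previously required might look like the sketch below. It is a deliberately naive illustration that ignores quoting and escaping; `split_line` and the sample rows are made up for this example.

```python
def split_line(line, delimiter):
    """Split one unquoted line on a delimiter of any length."""
    return line.split(delimiter)


# Sample data using the two-character delimiter "||".
lines = ["id||name", "1||alice", "2||bob"]
rows = [split_line(line, "||") for line in lines]
```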

### How was this patch tested?

The `CSVSuite` tests were confirmed passing (including new methods), and `sbt` tests for `sql` were executed.

Closes #26027 from jeff303/SPARK-24540.

Authored-by: Jeff Evans <jeffrey.wayne.evans@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Right now, batch DataFrame always changes the schema to nullable automatically (See this line: 325bc8e9c6/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/DataSource.scala (L399)). But streaming file source is missing this.

This PR updates the streaming file source schema to force it to be nullable. I also added a flag `spark.sql.streaming.fileSource.schema.forceNullable` to disable this change since some users may rely on the old behavior.

## How was this patch tested?

The new unit test.

Closes #25382 from zsxwing/SPARK-28651.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In the PR, I propose to use `uuuu` for years instead of `yyyy` in date/timestamp patterns without the era pattern `G` (https://docs.oracle.com/javase/8/docs/api/java/time/format/DateTimeFormatter.html). **Parsing/formatting of positive years (current era) will be the same.** The difference is in formatting negative years, which belong to the previous era, BC (Before Christ).

I replaced the `yyyy` pattern by `uuuu` everywhere except:

1. Test, Suite & Benchmark. Existing tests must work as is.

2. `SimpleDateFormat` because it doesn't support the `uuuu` pattern.

3. Comments and examples (except comments related to already replaced patterns).

Before the changes, the common-era year `100` and the BC-era year `-99` were both shown as `100`. After the changes, negative years are formatted with a `-` sign.

Before:

```Scala

scala> Seq(java.time.LocalDate.of(-99, 1, 1)).toDF().show

+----------+

| value|

+----------+

|0100-01-01|

+----------+

```

After:

```Scala

scala> Seq(java.time.LocalDate.of(-99, 1, 1)).toDF().show

+-----------+

| value|

+-----------+

|-0099-01-01|

+-----------+

```
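
The before/after outputs follow from the difference between year-of-era and the signed proleptic year. The sketch below reproduces the arithmetic in plain Python; the function names are hypothetical and this is not the formatter Spark uses.

```python
def year_of_era(proleptic_year):
    """Map an ISO proleptic year to (era, year-of-era)."""
    if proleptic_year >= 1:
        return ("AD", proleptic_year)
    # Proleptic year 0 is 1 BC, -1 is 2 BC, and so on.
    return ("BC", 1 - proleptic_year)


def format_yyyy(proleptic_year):
    # `yyyy`-style output: year-of-era only, so the era is silently lost.
    return "%04d" % year_of_era(proleptic_year)[1]


def format_uuuu(proleptic_year):
    # `uuuu`-style output: the signed proleptic year.
    sign = "-" if proleptic_year < 0 else ""
    return "%s%04d" % (sign, abs(proleptic_year))
```

For the year `-99` this gives `0100` in the `yyyy` style and `-0099` in the `uuuu` style, matching the two outputs above.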

## How was this patch tested?

By existing test suites, and added tests for negative years to `DateFormatterSuite` and `TimestampFormatterSuite`.

Closes #25230 from MaxGekk/year-pattern-uuuu.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

### Background:

The data source option `pathGlobFilter` was introduced for the Binary file format: https://github.com/apache/spark/pull/24354 , which can be used for filtering file names, e.g. reading only `.png` files while there are `.json` files in the same directory.

### Proposal:

Make `pathGlobFilter` a general option for all file sources. The path filtering should happen during path globbing on the driver.

### Motivation:

Filtering the file path names in file scan tasks on executors is kind of ugly.

### Impact:

1. The splitting of file partitions will be more balanced.

2. The metrics of file scan will be more accurate.

3. Users can use the option for reading other file sources.

## How was this patch tested?

Unit tests

Closes #24518 from gengliangwang/globFilter.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

There is a MemorySink v2 already so v1 can be removed. In this PR I've removed it completely.

What this PR contains:

* V1 memory sink removal

* V2 memory sink renamed to become the only implementation

* Since DSv2 sends exceptions in a chained format (linking them with cause field) I've made python side compliant

* Adapted all the tests
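
As an analogy for the chained format mentioned above, Python links exceptions through `__cause__`, and finding the original failure means walking that chain. This is a generic sketch and not the Python-side change made in this PR; `cause_chain` and `root_cause_message` are hypothetical helpers.

```python
def cause_chain(exc):
    """Yield `exc` and every exception linked to it through `__cause__`."""
    seen = set()
    while exc is not None and id(exc) not in seen:
        seen.add(id(exc))  # guard against cyclic chains
        yield exc
        exc = exc.__cause__


def root_cause_message(exc):
    """Return the message of the innermost exception in the chain."""
    return str(list(cause_chain(exc))[-1])


try:
    try:
        raise ValueError("original failure")
    except ValueError as inner:
        raise RuntimeError("query terminated") from inner
except RuntimeError as outer:
    messages = [str(e) for e in cause_chain(outer)]
    root = root_cause_message(outer)
```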

## How was this patch tested?

Existing unit tests.

Closes #24403 from gaborgsomogyi/SPARK-23014.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Clarify that text DataSource read/write, and RDD methods that read text, always use UTF-8 as they use Hadoop's implementation underneath. I think these are all the places that this needs a mention in the user-facing docs.

## How was this patch tested?

Doc tests.

Closes #23962 from srowen/SPARK-26016.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

The PRs #23150 and #23196 switched the JSON and CSV datasources to a new formatter for dates/timestamps which is based on `DateTimeFormatter`. In this PR, I replaced `SimpleDateFormat` by `DateTimeFormatter` to reflect the changes.

Closes #23374 from MaxGekk/java-time-docs.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In the PR, I propose to return partial results from JSON datasource and JSON functions in the PERMISSIVE mode if some of JSON fields are parsed and converted to desired types successfully. The changes are made only for `StructType`. Whole bad JSON records are placed into the corrupt column specified by the `columnNameOfCorruptRecord` option or SQL config.

Partial results are not returned for malformed JSON input.
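
The behavior described above can be sketched in plain Python. This is a simplified model under stated assumptions, not Spark's parser: `parse_permissive` is a hypothetical helper, the schema is modeled as a mapping from field name to a converter such as `int`, and the corrupt column name `_corrupt_record` is assumed for illustration.

```python
import json


def parse_permissive(text, schema, corrupt_column="_corrupt_record"):
    """Convert `text` to a row; `schema` maps field name -> converter (e.g. int)."""
    row = {name: None for name in schema}
    row[corrupt_column] = None
    try:
        record = json.loads(text)
        if not isinstance(record, dict):
            raise ValueError("expected a JSON object")
    except ValueError:
        # Malformed input: no partial result, only the raw record is kept.
        row[corrupt_column] = text
        return row
    for name, convert in schema.items():
        if name not in record:
            continue
        try:
            row[name] = convert(record[name])
        except (TypeError, ValueError):
            # Keep the fields that did convert and flag the whole record as bad.
            row[corrupt_column] = text
    return row
```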

## How was this patch tested?

Added new UT which checks converting JSON strings with one invalid and one valid field at the end of the string.

Closes #23253 from MaxGekk/json-bad-record.

Lead-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In the PR, I propose a new option for the CSV datasource, `lineSep`, similar to the one in the Text and JSON datasources. The option allows specifying a custom line separator with a maximum length of 2 characters (because of a restriction in the `uniVocity` parser). The new option can be used when reading and writing CSV files.

## How was this patch tested?

Added a few tests with custom `lineSep` for enabled/disabled `multiLine` in read as well as tests in write. Also I added roundtrip tests.

Closes #23080 from MaxGekk/csv-line-sep.

Lead-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Added JSON options for `json()` in streaming.py that are present in the similar method in readwriter.py. In particular, the missing options are `dropFieldIfAllNull` and `encoding`.

Closes #22973 from MaxGekk/streaming-missed-options.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In the PR, I propose to add a new option `locale` to CSVOptions/JSONOptions to make parsing dates/timestamps in local languages possible. Currently the locale is hard-coded to `Locale.US`.

## How was this patch tested?

Added two tests for parsing a date from CSV/JSON - `ноя 2018`.

Closes #22951 from MaxGekk/locale.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

(This change is a subset of the changes needed for the JIRA; see https://github.com/apache/spark/pull/22231)

## What changes were proposed in this pull request?

Use raw strings and simpler regex syntax consistently in Python, which also avoids warnings from pycodestyle about accidentally relying on Python's non-escaping of non-reserved chars in normal strings. Also, fix a few long lines.
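
A small illustration of the raw-string style (the pattern and sample text are made up for this example, not taken from the Spark code base):

```python
import re

# Without the r prefix, each backslash must be doubled to reach the regex engine.
escaped = re.compile("\\d+\\.\\d+")
# A raw string passes backslashes through unchanged, so the pattern reads as written.
raw = re.compile(r"\d+\.\d+")

same_pattern = escaped.pattern == raw.pattern
match = raw.search("version 2.4 released")
```

Both objects compile the same pattern; the raw form is simply easier to read and avoids invalid-escape warnings.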

## How was this patch tested?

Existing tests, and some manual double-checking of the behavior of regexes in Python 2/3 to be sure.

Closes #22400 from srowen/SPARK-25238.2.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In the PR, I propose new CSV option `emptyValue` and an update in the SQL Migration Guide which describes how to revert previous behavior when empty strings were not written at all. Since Spark 2.4, empty strings are saved as `""` to distinguish them from saved `null`s.

Closes #22234

Closes #22367

## How was this patch tested?

It was tested by `CSVSuite` and new tests added in the PR #22234

Closes #22389 from MaxGekk/csv-empty-value-master.

Lead-authored-by: Mario Molina <mmolimar@gmail.com>

Co-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Fix issues arising from the fact that builtins __file__, __long__, __raw_input()__, __unicode__, __xrange()__, etc. were all removed from Python 3. __Undefined names__ have the potential to raise [NameError](https://docs.python.org/3/library/exceptions.html#NameError) at runtime.
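
One common way to resolve such names on both major versions is a `try`/`except NameError` shim, sketched below. This shows the general technique only and is not necessarily how each occurrence was fixed in this PR; `input_fn`, `int_types` and `range_fn` are hypothetical names.

```python
# Python 2 builtins that no longer exist in Python 3; resolve each one to its
# Python 3 equivalent up front so later code never hits a NameError.
try:
    input_fn = raw_input  # Python 2
except NameError:
    input_fn = input  # Python 3

try:
    int_types = (int, long)  # Python 2
except NameError:
    int_types = (int,)  # Python 3

try:
    range_fn = xrange  # Python 2
except NameError:
    range_fn = range  # Python 3
```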

## How was this patch tested?

* $ __python2 -m flake8 . --count --select=E9,F82 --show-source --statistics__

* $ __python3 -m flake8 . --count --select=E9,F82 --show-source --statistics__

holdenk

flake8 testing of https://github.com/apache/spark on Python 3.6.3

$ __python3 -m flake8 . --count --select=E901,E999,F821,F822,F823 --show-source --statistics__

```

./dev/merge_spark_pr.py:98:14: F821 undefined name 'raw_input'

result = raw_input("\n%s (y/n): " % prompt)

^

./dev/merge_spark_pr.py:136:22: F821 undefined name 'raw_input'

primary_author = raw_input(

^

./dev/merge_spark_pr.py:186:16: F821 undefined name 'raw_input'

pick_ref = raw_input("Enter a branch name [%s]: " % default_branch)

^

./dev/merge_spark_pr.py:233:15: F821 undefined name 'raw_input'

jira_id = raw_input("Enter a JIRA id [%s]: " % default_jira_id)

^

./dev/merge_spark_pr.py:278:20: F821 undefined name 'raw_input'

fix_versions = raw_input("Enter comma-separated fix version(s) [%s]: " % default_fix_versions)

^

./dev/merge_spark_pr.py:317:28: F821 undefined name 'raw_input'

raw_assignee = raw_input(

^

./dev/merge_spark_pr.py:430:14: F821 undefined name 'raw_input'

pr_num = raw_input("Which pull request would you like to merge? (e.g. 34): ")

^

./dev/merge_spark_pr.py:442:18: F821 undefined name 'raw_input'

result = raw_input("Would you like to use the modified title? (y/n): ")

^

./dev/merge_spark_pr.py:493:11: F821 undefined name 'raw_input'

while raw_input("\n%s (y/n): " % pick_prompt).lower() == "y":

^

./dev/create-release/releaseutils.py:58:16: F821 undefined name 'raw_input'

response = raw_input("%s [y/n]: " % msg)

^

./dev/create-release/releaseutils.py:152:38: F821 undefined name 'unicode'

author = unidecode.unidecode(unicode(author, "UTF-8")).strip()

^

./python/setup.py:37:11: F821 undefined name '__version__'

VERSION = __version__

^

./python/pyspark/cloudpickle.py:275:18: F821 undefined name 'buffer'

dispatch[buffer] = save_buffer

^

./python/pyspark/cloudpickle.py:807:18: F821 undefined name 'file'

dispatch[file] = save_file

^

./python/pyspark/sql/conf.py:61:61: F821 undefined name 'unicode'

if not isinstance(obj, str) and not isinstance(obj, unicode):

^

./python/pyspark/sql/streaming.py:25:21: F821 undefined name 'long'

intlike = (int, long)

^

./python/pyspark/streaming/dstream.py:405:35: F821 undefined name 'long'

return self._sc._jvm.Time(long(timestamp * 1000))

^

./sql/hive/src/test/resources/data/scripts/dumpdata_script.py:21:10: F821 undefined name 'xrange'

for i in xrange(50):

^

./sql/hive/src/test/resources/data/scripts/dumpdata_script.py:22:14: F821 undefined name 'xrange'

for j in xrange(5):

^

./sql/hive/src/test/resources/data/scripts/dumpdata_script.py:23:18: F821 undefined name 'xrange'

for k in xrange(20022):

^

20 F821 undefined name 'raw_input'

20

```

Closes #20838 from cclauss/fix-undefined-names.

Authored-by: cclauss <cclauss@bluewin.ch>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

## What changes were proposed in this pull request?

Currently, the micro-batches in the MicroBatchExecution are not exposed to the user through any public API. This was because we did not want to expose the micro-batches, so that every API we expose could eventually be supported in the Continuous engine. But now that we have a better sense of building a ContinuousExecution, I am considering adding APIs which will run only in the MicroBatchExecution. I have quite a few use cases where exposing the micro-batch output as a DataFrame is useful.

- Pass the output rows of each batch to a library that is designed only for batch jobs (for example, many ML libraries need to `collect()` while learning).

- Reuse batch data sources for output whose streaming version does not exist (e.g. the Redshift data source).

- Write the output rows to multiple places by writing twice for each batch. This is not the most elegant thing to do for multiple-output streaming queries but is likely to be better than running two streaming queries processing the same data twice.

The proposal is to add a method `foreachBatch(f: Dataset[T] => Unit)` to Scala/Java/Python `DataStreamWriter`.

## How was this patch tested?

New unit tests.

Author: Tathagata Das <tathagata.das1565@gmail.com>

Closes #21571 from tdas/foreachBatch.

## What changes were proposed in this pull request?

This PR adds `foreach` for streaming queries in Python. Users will be able to specify their processing logic in two different ways.

- As a function that takes a row as input.

- As an object that has `open`, `process`, and `close` methods.

See the python docs in this PR for more details.
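
The two forms can be contrasted with a plain-Python simulation. Nothing below touches Spark: `run_foreach` is a hypothetical stand-in for the engine, `CollectingWriter` is an invented example class, and the method parameters (`partition_id`, `epoch_id`, `row`, `error`) are assumptions made for this sketch.

```python
class CollectingWriter:
    """Object form: a handler with `open`, `process` and `close` methods."""

    def __init__(self):
        self.events = []

    def open(self, partition_id, epoch_id):
        self.events.append(("open", partition_id, epoch_id))
        return True  # in this sketch, returning False skips the partition

    def process(self, row):
        self.events.append(("process", row))

    def close(self, error):
        self.events.append(("close", error))


def run_foreach(handler, rows, partition_id=0, epoch_id=0):
    """Hypothetical stand-in for the engine: feed `rows` to either handler form."""
    if callable(handler):
        # Function form: call it once per row.
        for row in rows:
            handler(row)
        return
    error = None
    try:
        if handler.open(partition_id, epoch_id):
            for row in rows:
                handler.process(row)
    except Exception as exc:
        error = exc
        raise
    finally:
        # Always close, passing along any error that occurred.
        handler.close(error)
```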

## How was this patch tested?

Added java and python unit tests

Author: Tathagata Das <tathagata.das1565@gmail.com>

Closes #21477 from tdas/SPARK-24396.

## What changes were proposed in this pull request?

Currently, column names in the headers of CSV files are not checked against the provided schema of the CSV data. This can cause errors like those shown in [SPARK-23786](https://issues.apache.org/jira/browse/SPARK-23786) and https://github.com/apache/spark/pull/20894#issuecomment-375957777. I introduced a new CSV option, `enforceSchema`. If it is enabled (`true` by default), Spark forcibly applies the provided or inferred schema to CSV files. In that case, CSV headers are ignored and not checked against the schema. If `enforceSchema` is set to `false`, additional checks can be performed. For example, if the columns in the CSV header and in the schema are ordered differently, the following exception is thrown:

```

java.lang.IllegalArgumentException: CSV file header does not contain the expected fields

Header: depth, temperature

Schema: temperature, depth

CSV file: marina.csv

```
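
The check behind this exception can be sketched in plain Python. This is a hypothetical model rather than Spark's code: `check_header` is an invented helper, it raises `ValueError` instead of the Java exception, and details such as case sensitivity are left out.

```python
def check_header(header, schema_fields, enforce_schema=True, file_name=None):
    """Validate CSV header names against the schema unless the schema is enforced."""
    if enforce_schema:
        return  # the header is ignored and the schema is applied as-is
    if list(header) != list(schema_fields):
        raise ValueError(
            "CSV file header does not contain the expected fields\n"
            " Header: %s\n Schema: %s\n CSV file: %s"
            % (", ".join(header), ", ".join(schema_fields), file_name)
        )
```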

## How was this patch tested?

The changes were tested by existing tests of CSVSuite and by 2 new tests.

Author: Maxim Gekk <maxim.gekk@databricks.com>

Author: Maxim Gekk <max.gekk@gmail.com>

Closes #20894 from MaxGekk/check-column-names.