### What changes were proposed in this pull request?

This PR fixes the LIMIT code-gen bug in https://issues.apache.org/jira/browse/SPARK-34796, where the counter variable from `BaseLimitExec` is used in code-gen without being initialized. The limit counter variable is used by upstream operators (LIMIT's child plan, e.g. the `ColumnarToRowExec` operator, for early termination). However, in the same stage some operators can take a shortcut and never call `BaseLimitExec`'s `doConsume()`, e.g. [HashJoin.codegenInner](https://github.com/apache/spark/blob/master/sql/core/src/main/scala/org/apache/spark/sql/execution/joins/HashJoin.scala#L402). So if a query has `LocalLimit - BroadcastHashJoin - FileScan` in the same stage, whole-stage code-gen compilation fails.

Here is an example:

```scala
test("failed limit query") {
  withTable("left_table", "empty_right_table", "output_table") {
    spark.range(5).toDF("k").write.saveAsTable("left_table")
    spark.range(0).toDF("k").write.saveAsTable("empty_right_table")

    withSQLConf(SQLConf.ADAPTIVE_EXECUTION_ENABLED.key -> "false") {
      spark.sql("CREATE TABLE output_table (k INT) USING parquet")
      spark.sql(
        s"""
           |INSERT INTO TABLE output_table
           |SELECT t1.k FROM left_table t1
           |JOIN empty_right_table t2
           |ON t1.k = t2.k
           |LIMIT 3
           |""".stripMargin)
    }
  }
}
```

Query plan:

```
Execute InsertIntoHadoopFsRelationCommand file:/Users/chengsu/spark/sql/core/spark-warehouse/org.apache.spark.sql.SQLQuerySuite/output_table, false, Parquet, Map(path -> file:/Users/chengsu/spark/sql/core/spark-warehouse/org.apache.spark.sql.SQLQuerySuite/output_table), Append, CatalogTable(
Database: default
Table: output_table
Created Time: Thu Mar 18 21:46:26 PDT 2021
Last Access: UNKNOWN
Created By: Spark 3.2.0-SNAPSHOT
Type: MANAGED
Provider: parquet
Location: file:/Users/chengsu/spark/sql/core/spark-warehouse/org.apache.spark.sql.SQLQuerySuite/output_table
Schema: root
 |-- k: integer (nullable = true)
), org.apache.spark.sql.execution.datasources.InMemoryFileIndex@b25d08b, [k]
+- *(3) Project [ansi_cast(k#228L as int) AS k#231]
   +- *(3) GlobalLimit 3
      +- Exchange SinglePartition, ENSURE_REQUIREMENTS, [id=#179]
         +- *(2) LocalLimit 3
            +- *(2) Project [k#228L]
               +- *(2) BroadcastHashJoin [k#228L], [k#229L], Inner, BuildRight, false
                  :- *(2) Filter isnotnull(k#228L)
                  :  +- *(2) ColumnarToRow
                  :     +- FileScan parquet default.left_table[k#228L] Batched: true, DataFilters: [isnotnull(k#228L)], Format: Parquet, Location: InMemoryFileIndex(1 paths)[file:/Users/chengsu/spark/sql/core/spark-warehouse/org.apache.spark.sq..., PartitionFilters: [], PushedFilters: [IsNotNull(k)], ReadSchema: struct<k:bigint>
                  +- BroadcastExchange HashedRelationBroadcastMode(List(input[0, bigint, false]),false), [id=#173]
                     +- *(1) Filter isnotnull(k#229L)
                        +- *(1) ColumnarToRow
                           +- FileScan parquet default.empty_right_table[k#229L] Batched: true, DataFilters: [isnotnull(k#229L)], Format: Parquet, Location: InMemoryFileIndex(1 paths)[file:/Users/chengsu/spark/sql/core/spark-warehouse/org.apache.spark.sq..., PartitionFilters: [], PushedFilters: [IsNotNull(k)], ReadSchema: struct<k:bigint>
```

Codegen failure: https://gist.github.com/c21/ea760c75b546d903247582be656d9d66.

The uninitialized variable `_limit_counter_1` from `LocalLimitExec` is referenced in `ColumnarToRowExec`, but `BroadcastHashJoinExec` does not call `LocalLimitExec.doConsume()` to initialize the counter variable.

The fix is to move the counter variable initialization to `doProduce()`: in the whole-stage code-gen framework, `doProduce()` is guaranteed to be called whenever the upstream operators' `doProduce()`/`doConsume()` are called.
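The failure mode and the fix can be illustrated with a toy model. This is a minimal sketch, not Spark's `CodegenSupport` API: `ToyCodegenContext`, `ToyLimit`, and `ToyInnerJoin` are hypothetical stand-ins that only record which generated variables are declared and which are referenced. The join plays the role of `BroadcastHashJoinExec` over a `ColumnarToRow` scan, and the limit's `initInProduce` flag switches between the old behavior (declare in `consume`) and the fixed one (declare in `produce`).

```scala
import scala.collection.mutable

// Toy model of whole-stage code-gen (NOT Spark's real API): it only tracks
// which generated variables are declared and which are referenced.
final class ToyCodegenContext {
  val declared: mutable.Set[String] = mutable.Set.empty
  val referenced: mutable.Set[String] = mutable.Set.empty
}

// Hypothetical stand-in for LocalLimitExec.
final class ToyLimit(initInProduce: Boolean) {
  val counterVar = "_limit_counter_1"

  def produce(ctx: ToyCodegenContext, child: ToyInnerJoin): Unit = {
    if (initInProduce) ctx.declared += counterVar // fixed: always declared
    child.produce(ctx, this)
  }

  def consume(ctx: ToyCodegenContext): Unit = {
    if (!initInProduce) ctx.declared += counterVar // old: declared only if consume() runs
  }
}

// Hypothetical stand-in for an inner broadcast hash join over a columnar scan.
final class ToyInnerJoin(buildSideEmpty: Boolean) {
  def produce(ctx: ToyCodegenContext, parent: ToyLimit): Unit = {
    // The scan checks the parent's limit counter for early termination.
    ctx.referenced += parent.counterVar
    // With an empty build side, the inner join emits nothing and never calls parent.consume().
    if (!buildSideEmpty) parent.consume(ctx)
  }
}

object ToyWholeStageCodegen {
  // Variables that the generated code references but never declares.
  def undeclaredVars(initInProduce: Boolean, buildSideEmpty: Boolean): Set[String] = {
    val ctx = new ToyCodegenContext
    new ToyLimit(initInProduce).produce(ctx, new ToyInnerJoin(buildSideEmpty))
    (ctx.referenced diff ctx.declared).toSet
  }
}
```

Because `produce` runs top-down before any child code is generated, declaring the counter there makes it visible regardless of whether a child later short-circuits `consume`.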

Note: this only happens when AQE is disabled, because with AQE enabled the optimization rule [EliminateUnnecessaryJoin](https://github.com/apache/spark/blob/master/sql/core/src/main/scala/org/apache/spark/sql/execution/adaptive/EliminateUnnecessaryJoin.scala#L69) changes the whole query to an empty `LocalRelation` if the inner join's broadcast side is empty.

### Why are the changes needed?

Fix query failure.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added unit test in `SQLQuerySuite.scala`.

Closes #31892 from c21/limit-fix.

Authored-by: Cheng Su <chengsu@fb.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This patch proposes to fix a bug related to `NestedColumnAliasing`. The root cause is that `Window` doesn't override `producedAttributes`, so the `NestedColumnAliasing` rule wrongly prunes attributes produced by `Window`.

Both master and branch-3.1 have this issue.

### Why are the changes needed?

To fix a bug in nested column pruning.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Unit test.

Closes #31897 from viirya/SPARK-34776.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR aims to support remote driver/executor template files.

### Why are the changes needed?

Currently, `KubernetesUtils.loadPodFromTemplate` supports only local files.

With this PR, we can do the following.

```bash
bin/spark-submit \
  ...
  -c spark.kubernetes.driver.podTemplateFile=s3a://dongjoon/driver.yml \
  -c spark.kubernetes.executor.podTemplateFile=s3a://dongjoon/executor.yml \
  ...
```

### Does this PR introduce _any_ user-facing change?

Yes, this is an improvement.

### How was this patch tested?

Manual testing.

Closes #31877 from dongjoon-hyun/SPARK-34783-2.

Lead-authored-by: Dongjoon Hyun <dhyun@apple.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

For all built-in data sources, prohibit saving the year-month and day-time intervals that were introduced by SPARK-27793. We plan to support saving such types in milestone 2; see SPARK-27790.

### Why are the changes needed?

To improve the user experience with Spark SQL by printing a clearer error message. The current error message might confuse users:

```
scala> Seq(java.time.Period.ofMonths(1)).toDF.write.mode("overwrite").json("/Users/maximgekk/tmp/123")
21/03/18 22:44:35 ERROR FileFormatWriter: Aborting job 8de402d7-ab69-4dc0-aa8e-14ef06bd2d6b.
org.apache.spark.SparkException: Job aborted due to stage failure: Task 0 in stage 1.0 failed 1 times, most recent failure: Lost task 0.0 in stage 1.0 (TID 1) (192.168.1.66 executor driver): org.apache.spark.SparkException: Task failed while writing rows.
at org.apache.spark.sql.errors.QueryExecutionErrors$.taskFailedWhileWritingRowsError(QueryExecutionErrors.scala:418)
at org.apache.spark.sql.execution.datasources.FileFormatWriter$.executeTask(FileFormatWriter.scala:298)
at org.apache.spark.sql.execution.datasources.FileFormatWriter$.$anonfun$write$15(FileFormatWriter.scala:211)
at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:90)
at org.apache.spark.scheduler.Task.run(Task.scala:131)
at org.apache.spark.executor.Executor$TaskRunner.$anonfun$run$3(Executor.scala:498)
at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1437)
at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:501)
at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
at java.lang.Thread.run(Thread.java:748)
Caused by: java.lang.RuntimeException: Failed to convert value 1 (class of class java.lang.Integer}) with the type of YearMonthIntervalType to JSON.
at scala.sys.package$.error(package.scala:30)
at org.apache.spark.sql.catalyst.json.JacksonGenerator.$anonfun$makeWriter$23(JacksonGenerator.scala:179)
at org.apache.spark.sql.catalyst.json.JacksonGenerator.$anonfun$makeWriter$23$adapted(JacksonGenerator.scala:176)
```

### Does this PR introduce _any_ user-facing change?

Yes. After the changes, the example above:

```
scala> Seq(java.time.Period.ofMonths(1)).toDF.write.mode("overwrite").json("/Users/maximgekk/tmp/123")
org.apache.spark.sql.AnalysisException: Cannot save interval data type into external storage.
```
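Because intervals can also appear inside structs, arrays, or maps, such a check has to walk the schema recursively. A minimal sketch over a hypothetical, simplified type hierarchy (`DType`, `StructT`, `ArrayT`, and friends are illustrative stand-ins, not Spark's `DataType` classes); Spark itself reports the failure as an `AnalysisException`, whereas this sketch throws `IllegalArgumentException` to stay dependency-free:

```scala
// Hypothetical, simplified stand-ins for Spark's DataType hierarchy (not Spark's API).
sealed trait DType
case object LongT extends DType
case object StringT extends DType
case object YearMonthIntervalT extends DType
case object DayTimeIntervalT extends DType
final case class ArrayT(elementType: DType) extends DType
final case class MapT(keyType: DType, valueType: DType) extends DType
final case class StructT(fields: Seq[(String, DType)]) extends DType

object IntervalWriteCheck {
  // Recursively looks for an interval type, including inside nested types.
  def containsInterval(dt: DType): Boolean = dt match {
    case YearMonthIntervalT | DayTimeIntervalT => true
    case ArrayT(elementType) => containsInterval(elementType)
    case MapT(keyType, valueType) => containsInterval(keyType) || containsInterval(valueType)
    case StructT(fields) => fields.exists { case (_, fieldType) => containsInterval(fieldType) }
    case _ => false
  }

  // Fails fast, before any write job is started.
  def verifyWritable(schema: StructT): Unit =
    if (containsInterval(schema)) {
      throw new IllegalArgumentException("Cannot save interval data type into external storage.")
    }
}
```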

### How was this patch tested?

1. Checked nested intervals:

```
scala> spark.range(1).selectExpr("""struct(timestamp'2021-01-02 00:01:02' - timestamp'2021-01-01 00:00:00')""").write.mode("overwrite").parquet("/Users/maximgekk/tmp/123")
org.apache.spark.sql.AnalysisException: Cannot save interval data type into external storage.

scala> Seq(Seq(java.time.Period.ofMonths(1))).toDF.write.mode("overwrite").json("/Users/maximgekk/tmp/123")
org.apache.spark.sql.AnalysisException: Cannot save interval data type into external storage.
```

2. By running existing test suites:

```
$ build/sbt -Phive-2.3 -Phive-thriftserver "test:testOnly *DataSourceV2DataFrameSuite"
$ build/sbt -Phive-2.3 -Phive-thriftserver "test:testOnly *DataSourceV2SQLSuite"
```

Closes #31884 from MaxGekk/ban-save-intervals.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Max Gekk <max.gekk@gmail.com>

### What changes were proposed in this pull request?

The join condition `'a.attr == 'c.attr` uses Scala's `==`, which compares the two attribute objects directly and always evaluates to `false` instead of building an equality expression. We need to use `===` instead.
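The difference can be reproduced with a hypothetical mini expression DSL (`Expr`, `Attr`, `EqualTo`, and `Lit` are illustrative, not Catalyst's classes). The sketch assumes an implicit Boolean-to-literal conversion is in scope, which is what lets the buggy condition compile silently:

```scala
import scala.language.implicitConversions

// Hypothetical mini expression DSL (not Catalyst's API).
sealed trait Expr
final case class Lit(value: Boolean) extends Expr
final case class EqualTo(left: Expr, right: Expr) extends Expr
final case class Attr(name: String) extends Expr {
  // Builds an expression node; nothing is compared yet.
  def ===(other: Expr): Expr = EqualTo(this, other)
}

object JoinConditionDemo {
  // Assumption: the DSL lifts plain Booleans into literal expressions.
  implicit def booleanToLit(b: Boolean): Expr = Lit(b)

  val a = Attr("a")
  val c = Attr("c")

  // Scala's `==` compares the two objects immediately and yields `false`,
  // which is then silently lifted into the constant condition Lit(false).
  val wrong: Expr = a == c

  // `===` builds the intended equality predicate for the join.
  val intended: Expr = a === c
}
```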

### Why are the changes needed?

Although this always-false join condition doesn't break the test, it is not what we intended. We should fix it to avoid future confusion.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

UT

Closes #31890 from opensky142857/SPARK-34798.

Authored-by: Hongyi Zhang <hongyzhang@ebay.com>

Signed-off-by: Yuming Wang <yumwang@ebay.com>

### What changes were proposed in this pull request?

This is a follow-up of https://github.com/apache/spark/pull/31671. https://github.com/apache/spark/pull/31671 introduced an unexpected behavior change: it uses a different Hadoop conf (`sparkContext.hadoopConfiguration`) to instantiate the `FileSystem` that is used to qualify the warehouse path. Before https://github.com/apache/spark/pull/31671, the Hadoop conf used to instantiate `FileSystem` was `session.sessionState.newHadoopConf()`.

More specifically, `session.sessionState.newHadoopConf()` has more conf entries:

1. it includes configs from `SharedState.initialConfigs`

2. it includes configs from `sparkContext.conf`

This PR updates `SharedState` to use the final Hadoop conf to instantiate `FileSystem`.

### Why are the changes needed?

Fix the behavior change.

### Does this PR introduce _any_ user-facing change?

Yes, the behavior is restored to what it was before https://github.com/apache/spark/pull/31671.

### How was this patch tested?

Manually checked the `FileSystem` log and verified the configs passed in.

Closes #31868 from cloud-fan/followup.

Lead-authored-by: Wenchen Fan <wenchen@databricks.com>

Co-authored-by: Wenchen Fan <cloud0fan@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Change the context classloader to the Spark classloader in `RebaseDateTime.loadRebaseRecords`.

### Why are the changes needed?

With custom `spark.sql.hive.metastore.version` and `spark.sql.hive.metastore.jars`, Spark uses a date formatter in `HiveShim` to convert `date` values to `string`. If we set `spark.sql.legacy.timeParserPolicy=LEGACY` and the partition column type is `date`, the `RebaseDateTime` code is invoked. If `RebaseDateTime` is initialized for the first time at that moment, the context classloader is `IsolatedClientLoader`, and errors like the following are thrown:

```
java.lang.IllegalArgumentException: argument "src" is null
at com.fasterxml.jackson.databind.ObjectMapper._assertNotNull(ObjectMapper.java:4413)
at com.fasterxml.jackson.databind.ObjectMapper.readValue(ObjectMapper.java:3157)
at com.fasterxml.jackson.module.scala.ScalaObjectMapper.readValue(ScalaObjectMapper.scala:187)
at com.fasterxml.jackson.module.scala.ScalaObjectMapper.readValue$(ScalaObjectMapper.scala:186)
at org.apache.spark.sql.catalyst.util.RebaseDateTime$$anon$1.readValue(RebaseDateTime.scala:267)
at org.apache.spark.sql.catalyst.util.RebaseDateTime$.loadRebaseRecords(RebaseDateTime.scala:269)
at org.apache.spark.sql.catalyst.util.RebaseDateTime$.<init>(RebaseDateTime.scala:291)
at org.apache.spark.sql.catalyst.util.RebaseDateTime$.<clinit>(RebaseDateTime.scala)
at org.apache.spark.sql.catalyst.util.DateTimeUtils$.toJavaDate(DateTimeUtils.scala:109)
at org.apache.spark.sql.catalyst.util.LegacyDateFormatter.format(DateFormatter.scala:95)
at org.apache.spark.sql.catalyst.util.LegacyDateFormatter.format$(DateFormatter.scala:94)
at org.apache.spark.sql.catalyst.util.LegacySimpleDateFormatter.format(DateFormatter.scala:138)
at org.apache.spark.sql.hive.client.Shim_v0_13$ExtractableLiteral$1$.unapply(HiveShim.scala:661)
at org.apache.spark.sql.hive.client.Shim_v0_13.convert$1(HiveShim.scala:785)
at org.apache.spark.sql.hive.client.Shim_v0_13.$anonfun$convertFilters$4(HiveShim.scala:826)
```

```
java.lang.NoClassDefFoundError: Could not initialize class org.apache.spark.sql.catalyst.util.RebaseDateTime$
at org.apache.spark.sql.catalyst.util.DateTimeUtils$.toJavaDate(DateTimeUtils.scala:109)
at org.apache.spark.sql.catalyst.util.LegacyDateFormatter.format(DateFormatter.scala:95)
at org.apache.spark.sql.catalyst.util.LegacyDateFormatter.format$(DateFormatter.scala:94)
at org.apache.spark.sql.catalyst.util.LegacySimpleDateFormatter.format(DateFormatter.scala:138)
at org.apache.spark.sql.hive.client.Shim_v0_13$ExtractableLiteral$1$.unapply(HiveShim.scala:661)
at org.apache.spark.sql.hive.client.Shim_v0_13.convert$1(HiveShim.scala:785)
at org.apache.spark.sql.hive.client.Shim_v0_13.$anonfun$convertFilters$4(HiveShim.scala:826)
at scala.collection.immutable.Stream.flatMap(Stream.scala:493)
at org.apache.spark.sql.hive.client.Shim_v0_13.convertFilters(HiveShim.scala:826)
at org.apache.spark.sql.hive.client.Shim_v0_13.getPartitionsByFilter(HiveShim.scala:848)
at org.apache.spark.sql.hive.client.HiveClientImpl.$anonfun$getPartitionsByFilter$1(HiveClientImpl.scala:749)
at org.apache.spark.sql.hive.client.HiveClientImpl.$anonfun$withHiveState$1(HiveClientImpl.scala:291)
at org.apache.spark.sql.hive.client.HiveClientImpl.liftedTree1$1(HiveClientImpl.scala:224)
at org.apache.spark.sql.hive.client.HiveClientImpl.retryLocked(HiveClientImpl.scala:223)
at org.apache.spark.sql.hive.client.HiveClientImpl.withHiveState(HiveClientImpl.scala:273)
at org.apache.spark.sql.hive.client.HiveClientImpl.getPartitionsByFilter(HiveClientImpl.scala:747)
at org.apache.spark.sql.hive.HiveExternalCatalog.$anonfun$listPartitionsByFilter$1(HiveExternalCatalog.scala:1273)
```

Steps to reproduce:

1. Set custom `spark.sql.hive.metastore.version` and `spark.sql.hive.metastore.jars`.

2. `CREATE TABLE t (c int) PARTITIONED BY (p date)`

3. `SET spark.sql.legacy.timeParserPolicy=LEGACY`

4. `SELECT * FROM t WHERE p='2021-01-01'`
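The general shape of this kind of fix is to make resource loading independent of whatever context classloader the calling thread happens to carry. A minimal sketch of a scoped swap; `withContextClassLoader` is a hypothetical helper for illustration, and the PR's actual change may be structured differently:

```scala
object ContextClassLoaderUtil {
  // Hypothetical helper: runs `body` with `loader` as the current thread's
  // context classloader and restores the previous loader afterwards,
  // even if `body` throws.
  def withContextClassLoader[T](loader: ClassLoader)(body: => T): T = {
    val thread = Thread.currentThread()
    val original = thread.getContextClassLoader
    thread.setContextClassLoader(loader)
    try body
    finally thread.setContextClassLoader(original)
  }
}
```

For example, wrapping a resource lookup in `withContextClassLoader(getClass.getClassLoader) { ... }` would make it resolve against the loader that loaded the calling class, rather than an isolated loader such as `IsolatedClientLoader` that may not see the resource.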

### Does this PR introduce _any_ user-facing change?

Yes, bug fix.

### How was this patch tested?

Passed `org.apache.spark.sql.catalyst.util.RebaseDateTimeSuite` and added a new unit test to `HiveSparkSubmitSuite.scala`.

Closes #31864 from ulysses-you/SPARK-34772.

Authored-by: ulysses-you <ulyssesyou18@gmail.com>

Signed-off-by: Yuming Wang <yumwang@ebay.com>

### What changes were proposed in this pull request?

Support `timestamp +/- day-time interval`. In this PR, I propose to extend the `TimeAdd` expression to support `DayTimeIntervalType` as the `interval` parameter. The expression invokes the new method `DateTimeUtils.timestampAddDayTime()`, which splits the input day-time interval into `days` and a `microsecond adjustment` within a day, and adds the `days` (and the microseconds) to a local timestamp derived from the given timestamp in the given time zone. The resulting local timestamp is converted back to an offset in microseconds since the epoch.

I also updated the rules that handle `CalendarIntervalType` and produce `TimeAdd` to take into account the new type `DayTimeIntervalType` for the `interval` parameter of `TimeAdd`.
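The arithmetic described above can be sketched with `java.time`. This is an illustrative re-implementation that follows the description, not the code of `DateTimeUtils.timestampAddDayTime()`; the object and helper names are hypothetical. Because whole days are added to the *local* date-time, adding one day across a DST transition can move the instant by 23 or 25 hours rather than exactly 24:

```scala
import java.time.{Instant, LocalDateTime, ZoneId}
import java.time.temporal.ChronoUnit

object TimestampDayTimeAdd {
  val MicrosPerDay: Long = 24L * 60 * 60 * 1000 * 1000

  // Microseconds since the epoch -> Instant (handles negative values).
  def microsToInstant(micros: Long): Instant =
    Instant.ofEpochSecond(Math.floorDiv(micros, 1000000L), Math.floorMod(micros, 1000000L) * 1000L)

  // Instant -> microseconds since the epoch.
  def instantToMicros(instant: Instant): Long =
    Math.addExact(Math.multiplyExact(instant.getEpochSecond, 1000000L), instant.getNano / 1000L)

  // Adds a day-time interval (in microseconds) to a timestamp (microseconds since the epoch).
  def timestampAddDayTime(micros: Long, intervalMicros: Long, zone: ZoneId): Long = {
    // Split the interval into whole days and the remaining microseconds of a day.
    val days = intervalMicros / MicrosPerDay
    val microsOfDay = intervalMicros % MicrosPerDay
    // Shift the local date-time in `zone`, then convert back to an instant.
    val local = LocalDateTime.ofInstant(microsToInstant(micros), zone)
    val shifted = local.plusDays(days).plus(microsOfDay, ChronoUnit.MICROS)
    instantToMicros(shifted.atZone(zone).toInstant)
  }
}
```

For instance, in `America/Los_Angeles`, adding `INTERVAL '1' DAY` to `2021-03-13 12:00` yields `2021-03-14 12:00` local time, which is only 23 hours later in absolute terms.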

### Why are the changes needed?

To conform to the ANSI SQL standard, which requires support for such operations over timestamps and intervals:

<img width="811" alt="Screenshot 2021-03-12 at 11 36 14" src="https://user-images.githubusercontent.com/1580697/111081674-865d4900-8515-11eb-86c8-3538ecaf4804.png">

### Does this PR introduce _any_ user-facing change?

Should not, since the new interval types have not been released yet.

### How was this patch tested?

By running new tests:

```
$ build/sbt "test:testOnly *DateTimeUtilsSuite"
$ build/sbt "test:testOnly *DateExpressionsSuite"
$ build/sbt "test:testOnly *ColumnExpressionSuite"
```

Closes #31855 from MaxGekk/timestamp-add-day-time-interval.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In `EliminateJoinToEmptyRelation.scala`, we can extend the rule to cover more cases for LEFT SEMI and LEFT ANTI joins:

* Join is left semi join, join right side is non-empty and condition is empty. Eliminate join to its left side.

* Join is left anti join, join right side is empty. Eliminate join to its left side.

Since the join can now be eliminated to its left side rather than only to an empty relation, the rule is renamed to `EliminateUnnecessaryJoin`.

In addition, use `checkRowCount()` to check the runtime row count instead of relying on `EmptyHashedRelation`. This also covers `BroadcastNestedLoopJoin`, whose broadcast side is an `Array[InternalRow]` rather than a `HashedRelation`.
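The decision table can be sketched over a toy plan model. `EliminateJoinSketch`, `Scan`, `EmptyRelation`, and the string-typed condition are hypothetical simplifications, and `rightRowCount` stands in for the runtime row count that the rule obtains via `checkRowCount()`. The first case is the semantic fact that an inner or left semi join with an empty right side returns no rows; the next two are the new cases listed above:

```scala
object EliminateJoinSketch {
  sealed trait JoinType
  case object Inner extends JoinType
  case object LeftSemi extends JoinType
  case object LeftAnti extends JoinType

  sealed trait Plan
  final case class Scan(table: String) extends Plan
  case object EmptyRelation extends Plan
  final case class Join(left: Plan, right: Plan, joinType: JoinType, condition: Option[String]) extends Plan

  // `rightRowCount` plays the role of the runtime row count of the right side.
  def eliminate(join: Join, rightRowCount: Long): Plan =
    (join.joinType, join.condition) match {
      // An inner or left semi join with an empty right side produces no rows.
      case (Inner | LeftSemi, _) if rightRowCount == 0 => EmptyRelation
      // Left semi join, non-empty right side, no condition: every left row matches.
      case (LeftSemi, None) if rightRowCount > 0 => join.left
      // Left anti join with an empty right side: every left row is kept.
      case (LeftAnti, _) if rightRowCount == 0 => join.left
      case _ => join
    }
}
```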

### Why are the changes needed?

Cover more join cases, and improve query performance for affected queries.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added unit tests in `AdaptiveQueryExecSuite.scala`.

Closes #31873 from c21/aqe-join.

Authored-by: Cheng Su <chengsu@fb.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

In `JavaSparkSQLExample`, executing `peopleDF.write().partitionBy("favorite_color").bucketBy(42,"name").saveAsTable("people_partitioned_bucketed");`

throws an exception: `Exception in thread "main" org.apache.spark.sql.AnalysisException: partition column favorite_color is not defined in table people_partitioned_bucketed, defined table columns are: age, name;`

This PR changes the partition column from `favorite_color` to `age`.

### Why are the changes needed?

So that `JavaSparkSQLExample` runs successfully.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Tested by running `JavaSparkSQLExample`.

Closes #31851 from zengruios/SPARK-34760.

Authored-by: zengruios <578395184@qq.com>

Signed-off-by: Kent Yao <yao@apache.org>

### What changes were proposed in this pull request?

After SPARK-34507, executing the `change-scala-version.sh` script updates `scala.version` in the parent POM. However, if we execute the following commands in order:

```
dev/change-scala-version.sh 2.13
dev/change-scala-version.sh 2.12
git status
```

the following git diff is generated:

```
diff --git a/pom.xml b/pom.xml
index ddc4ce2f68..f43d8c8f78 100644
--- a/pom.xml
+++ b/pom.xml
@@ -162,7 +162,7 @@
<commons.math3.version>3.4.1</commons.math3.version>
<commons.collections.version>3.2.2</commons.collections.version>
- <scala.version>2.12.10</scala.version>
+ <scala.version>2.13.5</scala.version>
<scala.binary.version>2.12</scala.binary.version>
<scalatest-maven-plugin.version>2.0.0</scalatest-maven-plugin.version>
<scalafmt.parameters>--test</scalafmt.parameters>
```

It seems the `scala.version` property was not updated correctly.

So this PR adds an extra `scala.version` property to the `scala-2.12` profile to ensure that `change-scala-version.sh` can update the public `scala.version` property correctly.

### Why are the changes needed?

Bug fix.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

**Manual test**

Execute the following commands in order:

```
dev/change-scala-version.sh 2.13
dev/change-scala-version.sh 2.12
git status
```

**Before**

```
diff --git a/pom.xml b/pom.xml
index ddc4ce2f68..f43d8c8f78 100644
--- a/pom.xml
+++ b/pom.xml
@@ -162,7 +162,7 @@
<commons.math3.version>3.4.1</commons.math3.version>
<commons.collections.version>3.2.2</commons.collections.version>
- <scala.version>2.12.10</scala.version>
+ <scala.version>2.13.5</scala.version>
<scala.binary.version>2.12</scala.binary.version>
<scalatest-maven-plugin.version>2.0.0</scalatest-maven-plugin.version>
<scalafmt.parameters>--test</scalafmt.parameters>
```

**After**

No git diff.

Closes #31865 from LuciferYang/SPARK-34774.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This PR proposes to deduplicate the source table when there are conflicting attributes between the target table and the source table.

### Why are the changes needed?

When resolving the `UpdateAction`, which could reference attributes from both the target and source tables, Spark should know clearly where an attribute comes from when there are conflicting attributes, instead of picking an arbitrary one.
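The deduplication idea can be sketched with a toy attribute model, assuming (as in Catalyst) that attributes are identified by expression ids and that conflicts arise when both sides carry the same ids, e.g. in a self-merge such as `MERGE INTO t USING t`. `DedupSourceSketch.Attr` and `dedupSource` are hypothetical names, not the code added by this PR:

```scala
object DedupSourceSketch {
  // Hypothetical attribute: a name plus a unique expression id.
  final case class Attr(name: String, exprId: Long)

  // Gives every source attribute whose id also appears in the target a fresh id,
  // so a reference to the source column can no longer be confused with the target one.
  def dedupSource(target: Seq[Attr], source: Seq[Attr], freshId: () => Long): Seq[Attr] = {
    val targetIds = target.map(_.exprId).toSet
    source.map(a => if (targetIds.contains(a.exprId)) a.copy(exprId = freshId()) else a)
  }
}
```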

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added a unit test and updated existing tests.

Closes #31835 from Ngone51/dedup-MergeIntoTable.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This change is to ignore cache for SNAPSHOT dependencies in spark-submit.

### Why are the changes needed?

When spark-submit is executed with --packages, it will not download the dependency jars when they are available in cache (e.g. ivy cache), even when the dependencies are SNAPSHOT.

This might block developers who work on external modules in Spark (e.g. spark-avro), since they need to remove the cache manually every time they update the code during development (which generates SNAPSHOT jars). Without knowing this, they could be blocked wondering why their code changes are not reflected in spark-submit executions.

### Does this PR introduce _any_ user-facing change?

Yes. With this change, developers/users who run spark-submit with SNAPSHOT dependencies do not need to remove the cache every time the SNAPSHOT dependencies are updated.

### How was this patch tested?

Added a unit test.

Closes #31849 from bozhang2820/spark-submit-cache-ignore.

Authored-by: Bo Zhang <bo.zhang@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Replace all `SQLConf.get` calls with `conf` in classes that extend `SQLConfHelper`.

### Why are the changes needed?

Clean up code.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Existing unit tests.

Closes #31822 from leoluan2009/SPARK-34728.

Authored-by: Luan <luanxuedong2009@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Change DAGScheduler to pass a clone of the Properties object, rather than the original object, to the SparkListenerJobStart event.

### Why are the changes needed?

DAGScheduler might modify the Properties object (e.g., in addPySparkConfigsToProperties) after firing off the SparkListenerJobStart event. Since the handler for that event (onJobStart in EventLoggingListener) iterates over the elements of the Properties object, this sometimes results in a ConcurrentModificationException.

This can be demonstrated using these steps:

```
$ bin/spark-shell --conf spark.ui.showConsoleProgress=false \
--conf spark.executor.cores=1 --driver-memory 4g --conf \
"spark.ui.showConsoleProgress=false" \
--conf spark.eventLog.enabled=true \
--conf spark.eventLog.dir=/tmp/spark-events
...
scala> (0 to 500).foreach { i =>
     |   val df = spark.range(0, 20000).toDF("a")
     |   df.filter("a > 12").count
     | }
21/03/12 18:16:44 ERROR AsyncEventQueue: Listener EventLoggingListener threw an exception
java.util.ConcurrentModificationException
at java.util.Hashtable$Enumerator.next(Hashtable.java:1387)
```

I've not actually seen a ConcurrentModificationException in onStageSubmitted, only in onJobStart. However, they both iterate over the Properties object, so for safety's sake I pass a clone to SparkListenerStageSubmitted as well.
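A minimal sketch of the snapshot idea. `PropertiesSnapshot.snapshot` is a hypothetical helper, not the method this PR uses; it relies on `java.util.Properties.clone()`, which copies the entries into an independent table, so later `setProperty` calls on the original (e.g. by the scheduler thread) are not visible to a listener iterating over the copy:

```scala
import java.util.Properties

object PropertiesSnapshot {
  // Hypothetical helper: hand listeners an independent copy so that later
  // mutations of the original cannot race with a listener's iteration.
  def snapshot(props: Properties): Properties =
    if (props == null) null
    else props.clone().asInstanceOf[Properties]
}
```

The copy has to be taken on the thread that mutates the original, before the event is posted; cloning inside the listener would race in the same way.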

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

By repeatedly running the reproduction steps from above.

Closes #31826 from bersprockets/elconcurrent.

Authored-by: Bruce Robbins <bersprockets@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR proposes an alternative way to fix the memory leak of `ExecutionListenerBus`, which cleans up the leaked buses automatically.

Basically, the idea is to add `registerSparkListenerForCleanup` to `ContextCleaner`, so we can remove the `ExecutionListenerBus` from `LiveListenerBus` when the `SparkSession` is GC'ed.

On the other hand, to make the `SparkSession` GC-able, we need to remove the reference to `SparkSession` from `ExecutionListenerBus`. Therefore, we introduce `sessionUUID`, a unique identifier for a `SparkSession`, to replace the `SparkSession` object.

Note that the proposal doesn't take effect when `spark.cleaner.referenceTracking=false`, since it depends on `ContextCleaner`.

### Why are the changes needed?

Fix the memory leak caused by `ExecutionListenerBus` mentioned in SPARK-34087.

### Does this PR introduce _any_ user-facing change?

Yes, it saves memory for users.

### How was this patch tested?

Added unit test.

Closes #31839 from Ngone51/fix-mem-leak-of-ExecutionListenerBus.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR fixes the build failure with Scala 2.13 which is related to `commons-cli`.

For the last few days, the Scala 2.13 build on GA (GitHub Actions) has kept failing with an error message like the following:

```
[error] /home/runner/work/spark/spark/sql/hive-thriftserver/src/main/java/org/apache/hive/service/server/HiveServer2.java:26:1: error: package org.apache.commons.cli does not exist
[error] import org.apache.commons.cli.GnuParser;
```

The reason is that `mvn help` in `change-scala-version.sh` downloads the POM file of `commons-cli` but not the JAR file, leading to the build failure.

This PR also adds `commons-cli` to the dependencies explicitly because HiveThriftServer depends on it.

### Why are the changes needed?

To fix the build failure with Scala 2.13.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

I confirmed that build successfully finishes with Scala 2.13 on my laptop.

```
find ~/.m2 -name commons-cli -exec rm -rf {} \;
find ~/.ivy2 -name commons-cli -exec rm -rf {} \;
find ~/.cache/ -name commons-cli -exec rm -rf {} \; # For Linux
find ~/Library/Caches -name commons-cli -exec rm -rf {} \; # For macOS
dev/change-scala-version.sh 2.13
./build/sbt -Pyarn -Pmesos -Pkubernetes -Phive -Phive-thriftserver -Phadoop-cloud -Pkinesis-asl -Pdocker-integration-tests -Pkubernetes-integration-tests -Pspark-ganglia-lgpl -Pscala-2.13 clean compile test:compile
```

Closes #31862 from sarutak/commons-cli.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR groups the exception messages in `/core/src/main/scala/org/apache/spark/sql/execution/datasources`.

### Why are the changes needed?

It will greatly help with the standardization and maintenance of error messages.

### Does this PR introduce _any_ user-facing change?

No. Error messages remain unchanged.

### How was this patch tested?

No new tests - pass all original tests to make sure it doesn't break any existing behavior.

Closes #31757 from beliefer/SPARK-33602.

Authored-by: gengjiaan <gengjiaan@360.cn>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR simplifies `Analyzer.resolveLiteralFunction` to always create the `Alias`. The caller side will remove the `Alias` if it's not necessary.

### Why are the changes needed?

code simplification.

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

existing tests

Closes #31844 from cloud-fan/minor.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This is a follow-up of https://github.com/apache/spark/pull/31808 and simplifies its fix to one line (excluding comments).

### Why are the changes needed?

code simplification

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

N/A

Closes #31843 from cloud-fan/simplify.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

Skip capturing the Maven repo config for views.

### Why are the changes needed?

Due to poor network connectivity, we always use a third-party Maven repo to run tests, e.g.,

```
build/sbt "test:testOnly *SQLQueryTestSuite" -Dspark.sql.maven.additionalRemoteRepositories=xxxxx
```

It fails with an error message like this:

```
[info] - show-tblproperties.sql *** FAILED *** (128 milliseconds)
[info] show-tblproperties.sql
[info] Expected "...rredTempViewNames [][]", but got "...rredTempViewNames [][
[info] view.sqlConfig.spark.sql.maven.additionalRemoteRepositories xxxxx]" Result did not match for query #6
[info] SHOW TBLPROPERTIES view (SQLQuerySuite.scala:464)
```

It's not necessary to capture the Maven config in the view, since it's a session-level config.
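A minimal sketch of the filtering idea, assuming captured session configs are stored as `view.sqlConfig.<key>` properties (the prefix is taken from the failing test output above) and that the Maven key is simply skipped. `ViewConfCapture` and `SkippedKeys` are hypothetical names, not Spark's implementation:

```scala
object ViewConfCapture {
  // Prefix modeled on the property name shown in the failing test output.
  val ViewSqlConfigPrefix = "view.sqlConfig."

  // Session-level keys that should not be frozen into a view (assumed list).
  val SkippedKeys: Set[String] = Set("spark.sql.maven.additionalRemoteRepositories")

  // Turns the current SQL configs into view properties, dropping skipped keys.
  def viewProperties(sqlConf: Map[String, String]): Map[String, String] =
    sqlConf.collect {
      case (key, value) if !SkippedKeys.contains(key) => (ViewSqlConfigPrefix + key, value)
    }
}
```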

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Manual test passed:

```
build/sbt "test:testOnly *SQLQueryTestSuite" -Dspark.sql.maven.additionalRemoteRepositories=xxx
```

Closes #31856 from ulysses-you/skip-maven-config.

Authored-by: ulysses-you <ulyssesyou18@gmail.com>

Signed-off-by: Kent Yao <yao@apache.org>

### What changes were proposed in this pull request?

This PR proposes to follow Univocity's default input buffer size.

### Why are the changes needed?

- Firstly, it's best to trust their judgement on the default values. Also 128 is too low.

- Default values arguably have more test coverage in Univocity.

- It will also fix https://github.com/uniVocity/univocity-parsers/issues/449

- The issue above is a regression compared to Spark 2.4.

### Does this PR introduce _any_ user-facing change?

No. In addition, it fixes a regression.

### How was this patch tested?

Manually tested, and added a unit test.

Closes #31858 from HyukjinKwon/SPARK-34768.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR updates `InMemoryCatalog.tableExists` to return false if database doesn't exist, instead of failing. The new behavior is consistent with `HiveExternalCatalog` which is used in production, so this bug mostly only affects tests.
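A toy model of the new `tableExists` contract, assuming a catalog that simply stores table names per database. `ToyInMemoryCatalog` is hypothetical and much simpler than Spark's `InMemoryCatalog`; the point is only that a missing database yields `false` instead of an error:

```scala
import scala.collection.mutable

// Hypothetical in-memory catalog (not Spark's InMemoryCatalog).
final class ToyInMemoryCatalog {
  private val databases = mutable.Map.empty[String, mutable.Set[String]]

  def createDatabase(db: String): Unit = {
    databases.getOrElseUpdate(db, mutable.Set.empty[String])
    ()
  }

  def createTable(db: String, table: String): Unit = databases.get(db) match {
    case Some(tables) => tables += table
    case None => throw new NoSuchElementException(s"Database '$db' not found")
  }

  // Old behavior: fail when the database is missing. New behavior: answer `false`.
  def tableExists(db: String, table: String): Boolean =
    databases.get(db).exists(_.contains(table))
}
```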

### Why are the changes needed?

bug fix

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

a new test

Closes #31860 from cloud-fan/catalog.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

`TmpOutputFile` and `TmpErrOutputFile` are registered in `o.a.h.u.ShutdownHookManager` during creation. `state.close()` deletes them if they are not null and tries to remove them from `o.a.h.u.ShutdownHookManager`, which causes an `IllegalStateException` when `state.close()` is itself called from a hook run by Spark's `ShutdownHookManager`.

In this PR, we delete them ahead with a high priority hook in Spark and set them to null to bypass the deletion and canceling in `state.close()`
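The ordering trick can be sketched as follows. All names here (`ExternalHookManager`, `Session`, `runHooks`, `deleteAhead`) are hypothetical stand-ins for the Hadoop/Hive and Spark classes, assuming hooks run from the highest priority to the lowest: once the high-priority hook has deleted the files and nulled the fields, the later `close()` finds nothing to cancel and no longer hits the `IllegalStateException`.

```scala
import java.io.File
import java.nio.file.Files

// Hypothetical model of the fix; these are not the Hadoop, Hive or Spark classes.
object HookOrderingSketch {
  // Stand-in for Hadoop's ShutdownHookManager: once shutdown has started,
  // cancelling a delete-on-exit registration throws.
  class ExternalHookManager {
    @volatile var shutdownInProgress = false
    def cancelDeleteOnExit(f: File): Unit =
      if (shutdownInProgress)
        throw new IllegalStateException("Shutdown in progress, cannot cancel a deleteOnExit")
  }

  // Stand-in for a Hive session that owns two temp files.
  class Session(hooks: ExternalHookManager) {
    var tmpOutputFile: File = Files.createTempFile("out", ".pipeout").toFile
    var tmpErrOutputFile: File = Files.createTempFile("err", ".pipeout").toFile

    // Delete each non-null file, then cancel its delete-on-exit registration.
    def close(): Unit =
      Seq(tmpOutputFile, tmpErrOutputFile).filter(_ != null).foreach { f =>
        f.delete()
        hooks.cancelDeleteOnExit(f)
      }
  }

  // Runs hooks from the highest priority to the lowest.
  def runHooks(hooks: Seq[(Int, () => Unit)]): Unit =
    hooks.sortBy(-_._1).foreach { case (_, hook) => hook() }

  // The fix: a higher-priority hook deletes the files first and clears the fields,
  // so the lower-priority close() has nothing left to cancel.
  def deleteAhead(s: Session): Unit = {
    Seq(s.tmpOutputFile, s.tmpErrOutputFile).filter(_ != null).foreach(_.delete())
    s.tmpOutputFile = null
    s.tmpErrOutputFile = null
  }
}
```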

### Why are the changes needed?

With or without this PR, the deletion of these files is not affected; we just mute an undesirable error log here.

### Does this PR introduce _any_ user-facing change?

no, this is a follow-up

### How was this patch tested?

#### the undesirable error log is gone

```
spark-sql> 21/03/16 18:41:31 ERROR Utils: Uncaught exception in thread shutdown-hook-0
java.lang.IllegalStateException: Shutdown in progress, cannot cancel a deleteOnExit
at org.apache.hive.common.util.ShutdownHookManager.cancelDeleteOnExit(ShutdownHookManager.java:106)
at org.apache.hadoop.hive.common.FileUtils.deleteTmpFile(FileUtils.java:861)
at org.apache.hadoop.hive.ql.session.SessionState.deleteTmpErrOutputFile(SessionState.java:325)
at org.apache.hadoop.hive.ql.session.SessionState.dropSessionPaths(SessionState.java:829)
at org.apache.hadoop.hive.ql.session.SessionState.close(SessionState.java:1585)
at org.apache.hadoop.hive.cli.CliSessionState.close(CliSessionState.java:66)
at org.apache.spark.sql.hive.client.HiveClientImpl.closeState(HiveClientImpl.scala:172)
at org.apache.spark.sql.hive.client.HiveClientImpl.$anonfun$new$1(HiveClientImpl.scala:175)
at org.apache.spark.util.SparkShutdownHook.run(ShutdownHookManager.scala:214)
at org.apache.spark.util.SparkShutdownHookManager.$anonfun$runAll$2(ShutdownHookManager.scala:188)
at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)
at org.apache.spark.util.Utils$.logUncaughtExceptions(Utils.scala:1994)
at org.apache.spark.util.SparkShutdownHookManager.$anonfun$runAll$1(ShutdownHookManager.scala:188)
at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)
at scala.util.Try$.apply(Try.scala:213)
at org.apache.spark.util.SparkShutdownHookManager.runAll(ShutdownHookManager.scala:188)
at org.apache.spark.util.SparkShutdownHookManager$$anon$2.run(ShutdownHookManager.scala:178)
at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)
at java.util.concurrent.FutureTask.run(FutureTask.java:266)
at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
at java.lang.Thread.run(Thread.java:748)
(python) ✘ kentyaohulk ~/Downloads/spark/spark-3.2.0-SNAPSHOT-bin-20210316 cd ..
(python) kentyaohulk ~/Downloads/spark tar zxf spark-3.2.0-SNAPSHOT-bin-20210316.tgz
(python) kentyaohulk ~/Downloads/spark cd -
~/Downloads/spark/spark-3.2.0-SNAPSHOT-bin-20210316
(python) kentyaohulk ~/Downloads/spark/spark-3.2.0-SNAPSHOT-bin-20210316 bin/spark-sql --conf spark.local.dir=./local --conf spark.hive.exec.local.scratchdir=./local
21/03/16 18:42:15 WARN Utils: Your hostname, hulk.local resolves to a loopback address: 127.0.0.1; using 10.242.189.214 instead (on interface en0)
21/03/16 18:42:15 WARN Utils: Set SPARK_LOCAL_IP if you need to bind to another address
Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties
Setting default log level to "WARN".
To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).
21/03/16 18:42:15 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
21/03/16 18:42:16 WARN SparkConf: Note that spark.local.dir will be overridden by the value set by the cluster manager (via SPARK_LOCAL_DIRS in mesos/standalone/kubernetes and LOCAL_DIRS in YARN).
21/03/16 18:42:18 WARN HiveConf: HiveConf of name hive.stats.jdbc.timeout does not exist
21/03/16 18:42:18 WARN HiveConf: HiveConf of name hive.stats.retries.wait does not exist
21/03/16 18:42:19 WARN ObjectStore: Version information not found in metastore. hive.metastore.schema.verification is not enabled so recording the schema version 2.3.0
21/03/16 18:42:19 WARN ObjectStore: setMetaStoreSchemaVersion called but recording version is disabled: version = 2.3.0, comment = Set by MetaStore kentyao@127.0.0.1
Spark master: local[*], Application Id: local-1615891336877
spark-sql> %
```

#### and the deletion is still fine

```shell
kentyaohulk ~/Downloads/spark/spark-3.2.0-SNAPSHOT-bin-20210316
ls -al local
total 0
drwxr-xr-x 7 kentyao staff 224 3 16 18:42 .
drwxr-xr-x 19 kentyao staff 608 3 16 18:42 ..
drwx------ 2 kentyao staff 64 3 16 18:42 16cc5238-e25e-4c0f-96ef-0c4bdecc7e51
-rw-r--r-- 1 kentyao staff 0 3 16 18:42 16cc5238-e25e-4c0f-96ef-0c4bdecc7e51219959790473242539.pipeout
-rw-r--r-- 1 kentyao staff 0 3 16 18:42 16cc5238-e25e-4c0f-96ef-0c4bdecc7e518816377057377724129.pipeout
drwxr-xr-x 2 kentyao staff 64 3 16 18:42 blockmgr-37a52ad2-eb56-43a5-8803-8f58d08fe9ad
drwx------ 3 kentyao staff 96 3 16 18:42 spark-101971df-f754-47c2-8764-58c45586be7e
kentyaohulk ~/Downloads/spark/spark-3.2.0-SNAPSHOT-bin-20210316 ls -al local
total 0
drwxr-xr-x 2 kentyao staff 64 3 16 19:22 .
drwxr-xr-x 19 kentyao staff 608 3 16 18:42 ..
kentyaohulk ~/Downloads/spark/spark-3.2.0-SNAPSHOT-bin-20210316
```

Closes #31850 from yaooqinn/followup.

Authored-by: Kent Yao <yao@apache.org>

Signed-off-by: Kent Yao <yao@apache.org>

### What changes were proposed in this pull request?

Push down limit through `Window` when the partitionSpec of all window functions is empty and the same order is used. This is a real case from production.

This PR supports 2 cases:

1. All window functions have the same orderSpec:

```sql
SELECT *, ROW_NUMBER() OVER(ORDER BY a) AS rn, RANK() OVER(ORDER BY a) AS rk FROM t1 LIMIT 5;

== Optimized Logical Plan ==
Window [row_number() windowspecdefinition(a#9L ASC NULLS FIRST, specifiedwindowframe(RowFrame, unboundedpreceding$(), currentrow$())) AS rn#4, rank(a#9L) windowspecdefinition(a#9L ASC NULLS FIRST, specifiedwindowframe(RowFrame, unboundedpreceding$(), currentrow$())) AS rk#5], [a#9L ASC NULLS FIRST]
+- GlobalLimit 5
   +- LocalLimit 5
      +- Sort [a#9L ASC NULLS FIRST], true
         +- Relation default.t1[A#9L,B#10L,C#11L] parquet
```

2. There is a window function with a different orderSpec:

```sql
SELECT a, ROW_NUMBER() OVER(ORDER BY a) AS rn, RANK() OVER(ORDER BY b DESC) AS rk FROM t1 LIMIT 5;

== Optimized Logical Plan ==
Project [a#9L, rn#4, rk#5]
+- Window [rank(b#10L) windowspecdefinition(b#10L DESC NULLS LAST, specifiedwindowframe(RowFrame, unboundedpreceding$(), currentrow$())) AS rk#5], [b#10L DESC NULLS LAST]
   +- GlobalLimit 5
      +- LocalLimit 5
         +- Sort [b#10L DESC NULLS LAST], true
            +- Window [row_number() windowspecdefinition(a#9L ASC NULLS FIRST, specifiedwindowframe(RowFrame, unboundedpreceding$(), currentrow$())) AS rn#4], [a#9L ASC NULLS FIRST]
               +- Project [a#9L, b#10L]
                  +- Relation default.t1[A#9L,B#10L,C#11L] parquet
```
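Why is this safe? With an empty partitionSpec, each window function sees a single global partition, and for functions like `ROW_NUMBER()` and `RANK()` the value of a row depends only on the rows that precede it in the ordering. The toy model below (plain Scala collections with a deterministic sort and hypothetical names, not the optimizer rule itself) checks that computing the window after a sort-and-limit yields the same rows as computing it first and limiting afterwards.

```scala
// Toy model of LIMIT pushdown through a window with an empty partitionSpec.
// Hypothetical names; this is not Spark's optimizer rule.
object LimitThroughWindowSketch {
  final case class WindowRow(a: Long, rn: Int, rk: Int)

  // ROW_NUMBER() and RANK() over one global partition ordered by `a`.
  def window(input: Seq[Long]): Seq[WindowRow] = {
    val sorted = input.sorted
    sorted.zipWithIndex.map { case (a, i) =>
      // RANK = 1 + number of rows strictly smaller than `a`, so ties share a rank.
      WindowRow(a, rn = i + 1, rk = sorted.indexOf(a) + 1)
    }
  }

  // Original plan: window over all rows, then LIMIT n.
  def windowThenLimit(input: Seq[Long], n: Int): Seq[WindowRow] = window(input).take(n)

  // Rewritten plan: Sort + LIMIT n first, then the window over only n rows.
  def limitThenWindow(input: Seq[Long], n: Int): Seq[WindowRow] = window(input.sorted.take(n))
}
```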

### Why are the changes needed?

Improve query performance.

```scala
spark.range(500000000L).selectExpr("id AS a", "id AS b").write.saveAsTable("t1")
spark.sql("SELECT *, ROW_NUMBER() OVER(ORDER BY a) AS rowId FROM t1 LIMIT 5").show
```

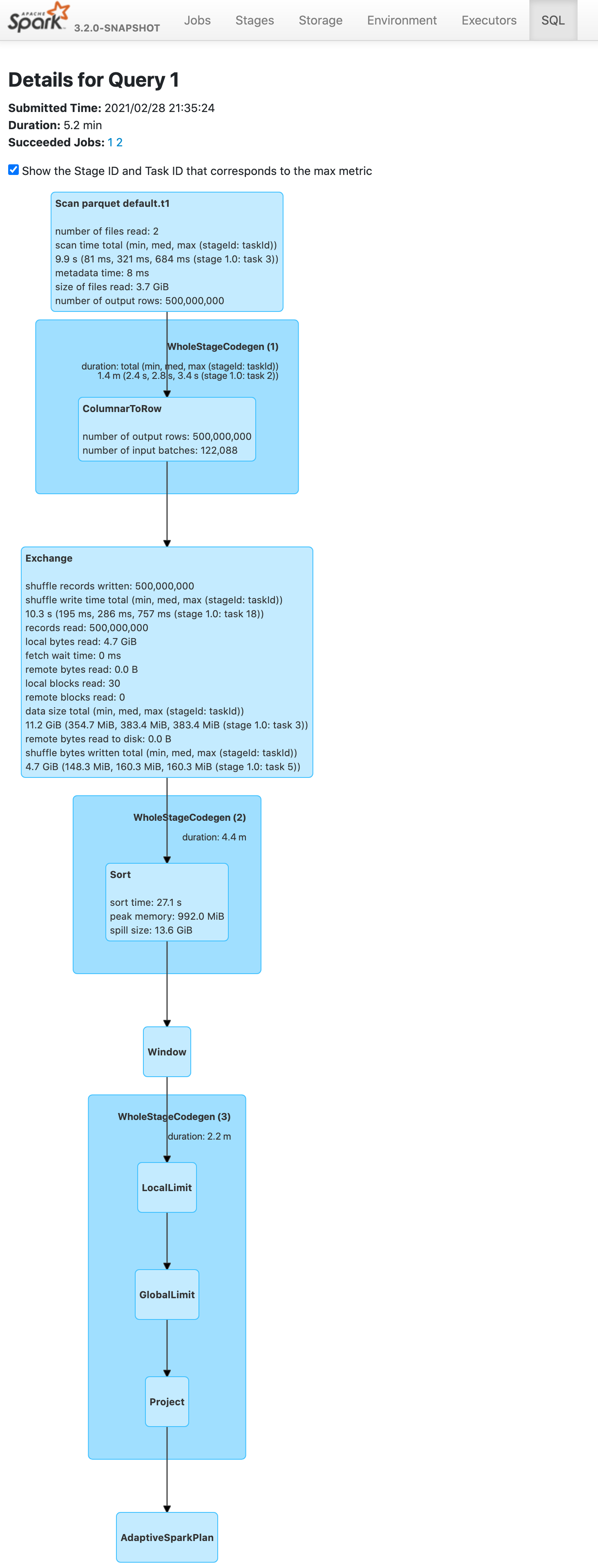

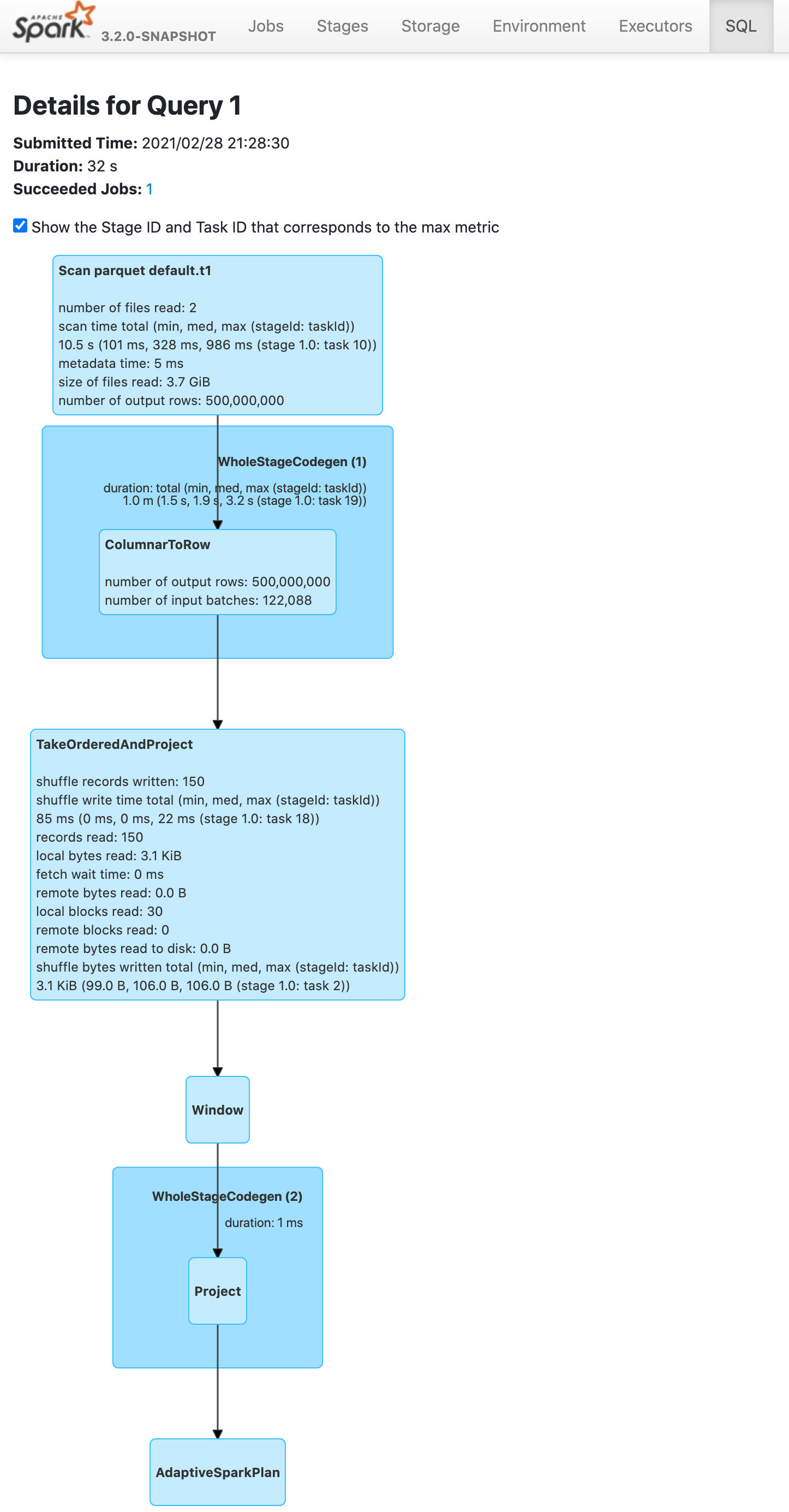

Before this pr | After this pr

-- | --

|

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Unit test.

Closes #31691 from wangyum/SPARK-34575.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

For the following cases, ABS should throw exceptions since the results are out of the range of the result data types in ANSI mode.

```
SELECT abs(${Int.MinValue});
SELECT abs(${Long.MinValue});
```
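These two inputs are special because, in two's complement, negating the minimum value overflows, so Scala's `math.abs` (like Java's `Math.abs`) silently returns the input unchanged. The `ansiAbs` helpers below are hypothetical and only model the overflow check; the exception type and message are illustrative, not Spark's.

```scala
// Hypothetical sketch of an overflow-checking ABS; not Spark's Abs expression.
object AnsiAbsSketch {
  // Non-ANSI behavior: wraps around, so abs(Int.MinValue) == Int.MinValue.
  def legacyAbs(x: Int): Int = math.abs(x)

  // ANSI-style behavior: fail when the result does not fit in the type.
  def ansiAbs(x: Int): Int =
    if (x == Int.MinValue) throw new ArithmeticException(s"abs($x) overflows int")
    else math.abs(x)

  def ansiAbs(x: Long): Long =
    if (x == Long.MinValue) throw new ArithmeticException(s"abs($x) overflows long")
    else math.abs(x)
}
```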

### Why are the changes needed?

Better ANSI compliance

### Does this PR introduce _any_ user-facing change?

Yes, `abs` throws an exception in ANSI mode if the result is out of the range of its data type.

### How was this patch tested?

Unit test

Closes #31836 from gengliangwang/ansiAbs.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR proposes:

1. `CREATE OR REPLACE TEMP VIEW USING` should use `TemporaryViewRelation` to store temp views.

2. By doing so, it fixes the issue where the temp view being replaced is not uncached.

### Why are the changes needed?

This is a part of an ongoing work to wrap all the temporary views with `TemporaryViewRelation`: [SPARK-34698](https://issues.apache.org/jira/browse/SPARK-34698).

This also fixes a bug where the temp view being replaced is not uncached.

### Does this PR introduce _any_ user-facing change?

Yes, the temp view being replaced with `CREATE OR REPLACE TEMP VIEW USING` is correctly uncached if the temp view is cached.

### How was this patch tested?

Added new tests.

Closes #31825 from imback82/create_temp_view_using.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

DDL commands like DROP VIEW don't really need to resolve the view (parse and analyze the view SQL text); they just need to get the view metadata.

This PR fixes the rule `ResolveTempViews` to only resolve the temp view for `UnresolvedRelation`. This also fixes a bug for DROP VIEW, as previously it tried to resolve the view and failed to drop invalid views.

### Why are the changes needed?

bug fix

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

new test

Closes #31853 from cloud-fan/view-resolve.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This is a minor follow-up to https://github.com/apache/spark/pull/31821#discussion_r594110622, where we change from using `execute()` to `executeTake()`. Performance-wise there's no difference; we are just using a different API to align with the code path of `Dataset`.

### Why are the changes needed?

To align with other code paths in SQL/Dataset.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Existing unit tests, same as https://github.com/apache/spark/pull/31821.

Closes #31845 from c21/join-followup.

Authored-by: Cheng Su <chengsu@fb.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

We initialized a Hive `SessionState` to interact with the external hive metastore server but left it behind after we finished.

We should close the metastore client explicitly to avoid connection leaks with HMS, and we should make the `SessionState` close itself to clean up the residual dirs, fixing the issues reported by SPARK-21449 and SPARK-23745.

`hive.downloaded.resources.dir` contains transient files, such as UDF jars, which are no longer used after Spark applications exit.

### Why are the changes needed?

1. prevent potential metastore client leak

2. clean `hive.downloaded.resources.dir`

```
DOWNLOADED_RESOURCES_DIR("hive.downloaded.resources.dir", "${system:java.io.tmpdir}" + File.separator + "${hive.session.id}_resources", "Temporary local directory for added resources in the remote file system."),
```

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

Passing Jenkins and verifying locally.

Closes #31833 from yaooqinn/SPARK-21449-2.

Authored-by: Kent Yao <yao@apache.org>

Signed-off-by: Yuming Wang <yumwang@ebay.com>

### What changes were proposed in this pull request?

This PR removes one unused variable in `NewInstance.constructor`.

### Why are the changes needed?

This looks like a debugging variable left over from the initial commit of SPARK-23584.

- 1b08c4393c (diff-2a36e31684505fd22e2d12a864ce89fd350656d716a3f2d7789d2cdbe38e15fbR461)

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Pass the CIs.

Closes #31838 from dongjoon-hyun/minor-object.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Support `timestamp +/- year-month interval`. In the PR, I propose to introduce a new binary expression `TimestampAddYMInterval`, similar to `DateAddYMInterval`. It invokes the new method `timestampAddMonths` from `DateTimeUtils`, passing a timestamp as an offset in microseconds since the epoch, the number of months from the given year-month interval, and the time zone ID in which the operation is performed. The `timestampAddMonths()` method converts the input microseconds to a local timestamp, adds the months to it, and converts the result back to an instant in microseconds at the given time zone.
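The conversion steps described above can be sketched with `java.time`, assuming microseconds since the epoch as input and a `ZoneId` for the session time zone. This is an illustrative re-implementation with a hypothetical object name, not necessarily the code in `DateTimeUtils`; it also shows why the time zone matters when the month shift crosses a daylight-saving transition.

```scala
import java.time.{Instant, ZoneId}
import java.time.temporal.ChronoUnit

// Illustrative sketch of "timestamp + N months"; not Spark's DateTimeUtils code.
object TimestampAddMonthsSketch {
  def timestampAddMonths(micros: Long, months: Int, zoneId: ZoneId): Long = {
    // microseconds since the epoch -> instant -> local date-time in the given zone
    val local = Instant.EPOCH.plus(micros, ChronoUnit.MICROS).atZone(zoneId).toLocalDateTime
    // month arithmetic happens on the local (wall-clock) timestamp
    val shifted = local.plusMonths(months.toLong)
    // back to an instant in the same zone, then to microseconds since the epoch
    ChronoUnit.MICROS.between(Instant.EPOCH, shifted.atZone(zoneId).toInstant)
  }
}
```

Adding one month to 10:00 on 2021-03-01 in `America/Los_Angeles` keeps the wall-clock time at 10:00 on 2021-04-01, so the underlying instant moves by one hour less than 31 days.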

### Why are the changes needed?

To conform to the ANSI SQL standard, which requires support for such operations over timestamps and intervals:

<img width="811" alt="Screenshot 2021-03-12 at 11 36 14" src="https://user-images.githubusercontent.com/1580697/111081674-865d4900-8515-11eb-86c8-3538ecaf4804.png">

### Does this PR introduce _any_ user-facing change?

Should not, since the new intervals have not been released yet.

### How was this patch tested?

By running new tests:

```
$ build/sbt "test:testOnly *DateTimeUtilsSuite"
$ build/sbt "test:testOnly *DateExpressionsSuite"
$ build/sbt "test:testOnly *ColumnExpressionSuite"
```

Closes #31832 from MaxGekk/timestamp-add-year-month-interval.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Max Gekk <max.gekk@gmail.com>

### What changes were proposed in this pull request?

This PR aims to make `ExpressionEncoderSuite` use `deepEquals` instead of `equals` when `input` is `array of array`.

This comparison code itself was added by SPARK-11727 at Apache Spark 1.6.0.

### Why are the changes needed?

Currently, the interpreted mode fails for `array of array` because the following line is used.

```
Arrays.equals(b1.asInstanceOf[Array[AnyRef]], b2.asInstanceOf[Array[AnyRef]])
```
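The difference is easy to reproduce: JVM arrays inherit reference equality, so `Arrays.equals` on an array of arrays compares the inner arrays by identity, while `Arrays.deepEquals` recurses into them. A minimal sketch (the object name is hypothetical):

```scala
import java.util.Arrays

object NestedArrayEquality {
  // Shallow: compares elements with equals(), which for inner arrays is reference identity.
  def shallowEquals(x: Array[AnyRef], y: Array[AnyRef]): Boolean = Arrays.equals(x, y)

  // Deep: recurses into nested arrays (including primitive ones) and compares contents.
  def deepEquals(x: Array[AnyRef], y: Array[AnyRef]): Boolean = Arrays.deepEquals(x, y)
}
```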

### Does this PR introduce _any_ user-facing change?

No. This is a test-only PR.

### How was this patch tested?

Pass the existing CIs.

Closes #31837 from dongjoon-hyun/SPARK-34743.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

In `Analyzer.resolveExpression`, we have a parameter to decide whether we should remove unnecessary `Alias` or not. This is overcomplicated, and we can always remove unnecessary `Alias`.

This PR simplifies this part and removes the parameter.

### Why are the changes needed?

code cleanup

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

existing tests

Closes #31758 from cloud-fan/resolve.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

For `BroadcastNestedLoopJoinExec` left semi and left anti joins without a condition, if we broadcast the left side, we currently check whether every row from the broadcast side has a match by [iterating the broadcast side many times](https://github.com/apache/spark/blob/master/sql/core/src/main/scala/org/apache/spark/sql/execution/joins/BroadcastNestedLoopJoinExec.scala#L256-L275). This is unnecessary and very inefficient when there's no condition, as we only need to check whether the stream side is empty. This PR adds that optimization, which can significantly improve the execution performance of the affected queries.
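Without a join condition, the result of these existence joins no longer depends on individual row pairs, only on whether the stream side has any row at all. A minimal sketch over local Scala collections (hypothetical names, not the `BroadcastNestedLoopJoinExec` implementation) comparing the shortcut with a naive pairwise check:

```scala
// Hypothetical sketch of the no-condition shortcut for left semi / left anti joins
// when the left side is broadcast.
object ExistenceJoinSketch {
  // LEFT SEMI without a condition: every broadcast row matches iff the stream side is non-empty.
  def leftSemi[L](broadcast: Seq[L], stream: Iterator[Any]): Seq[L] =
    if (stream.hasNext) broadcast else Seq.empty

  // LEFT ANTI without a condition: the complement of LEFT SEMI.
  def leftAnti[L](broadcast: Seq[L], stream: Iterator[Any]): Seq[L] =
    if (stream.hasNext) Seq.empty else broadcast

  // Naive version for comparison: looks for a matching stream row for every broadcast row.
  def naiveLeftSemi[L, R](broadcast: Seq[L], stream: Seq[R])(cond: (L, R) => Boolean): Seq[L] =
    broadcast.filter(l => stream.exists(r => cond(l, r)))
}
```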

In addition, this PR creates a common method `getMatchedBroadcastRowsBitSet()` shared by several methods.

It also refactors `defaultJoin()` to move

* left semi and left anti join related logic to `leftExistenceJoin`

* existence join related logic to `existenceJoin`.

After this, `defaultJoin()` holds logic only for outer joins (left outer, right outer and full outer), which in my opinion is much easier to read.

### Why are the changes needed?

Improve the affected query performance a lot.

Test with a simple query by modifying `JoinBenchmark.scala` locally:

```
val N = 20 << 20
val M = 1 << 4
val dim = broadcast(spark.range(M).selectExpr("id as k"))
val df = dim.join(spark.range(N), Seq.empty, "left_semi")
df.noop()
```

We see a >30x run time improvement. Note that the stream side is only `spark.range(N)`; for complicated queries with a non-trivial stream side, the savings would be much larger.

```
Running benchmark: broadcast nested loop left semi join
Running case: broadcast nested loop left semi join optimization off
Stopped after 2 iterations, 3163 ms
Running case: broadcast nested loop left semi join optimization on
Stopped after 5 iterations, 366 ms
Java HotSpot(TM) 64-Bit Server VM 1.8.0_181-b13 on Mac OS X 10.15.7
Intel(R) Core(TM) i9-9980HK CPU @ 2.40GHz
broadcast nested loop left semi join:                  Best Time(ms)   Avg Time(ms)   Stdev(ms)    Rate(M/s)   Per Row(ns)   Relative
-------------------------------------------------------------------------------------------------------------------------------------
broadcast nested loop left semi join optimization off           1568           1582          19         13.4          74.8       1.0X
broadcast nested loop left semi join optimization on              46             73          18        456.0           2.2      34.1X
```

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added unit test in `ExistenceJoinSuite.scala`.

Closes #31821 from c21/nested-join.

Authored-by: Cheng Su <chengsu@fb.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

The main changes of this PR are as follows:

- Use `org.junit.Assert.assertThrows(String, Class, ThrowingRunnable)` method instead of `ExpectedException.none()`

- Use `org.hamcrest.MatcherAssert.assertThat()` method instead of `org.junit.Assert.assertThat(T, org.hamcrest.Matcher<? super T>)`

### Why are the changes needed?

Clean up deprecated API usage

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Pass the Jenkins or GitHub Action

Closes #31815 from LuciferYang/SPARK-34722.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

In the PR, I propose to cast the input float to double in the `SecondsToTimestamp` expression in the same way as in the `Cast` expression.

### Why are the changes needed?

To have the same results from `CAST(<float> AS TIMESTAMP)` and from `TIMESTAMP_SECONDS`:

```sql
spark-sql> SELECT CAST(16777215.0f AS TIMESTAMP);
1970-07-14 07:20:15
spark-sql> SELECT TIMESTAMP_SECONDS(16777215.0f);
1970-07-14 07:20:14.951424
```

### Does this PR introduce _any_ user-facing change?

Yes. After the changes:

```sql
spark-sql> SELECT TIMESTAMP_SECONDS(16777215.0f);
1970-07-14 07:20:15
```

### How was this patch tested?

By running new test:

```
$ build/sbt "test:testOnly *DateExpressionsSuite"
```

Closes #31831 from MaxGekk/adjust-SecondsToTimestamp.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR aims to update SBT from 1.4.7 to 1.4.9.

### Why are the changes needed?

This will bring the following bug fixes and improvements.

- https://github.com/sbt/sbt/releases/tag/v1.4.9

- https://github.com/sbt/sbt/releases/tag/v1.4.8

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Pass the CIs.

Closes #31828 from williamhyun/sbt149.

Authored-by: William Hyun <williamhyun3@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

In non-ANSI mode, casting float to timestamp has different implementations with codegen on and off.

Codegen on:

1. Multiply float input by MICROS_PER_SECOND

2. Cast resulting float value to long

Codegen off:

1. CAST float input to double input

2. Multiply double input by MICROS_PER_SECOND

3. Cast resulting double value to long

In the PR, I propose to align the codegen path with the non-codegen path, and cast the input float to double in codegen.
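The precision difference between the two paths can be reproduced with plain JVM arithmetic, assuming `MICROS_PER_SECOND` is 1,000,000. In Scala, as in Java, `Float * Long` is evaluated in `Float`, which has only a 24-bit significand, so the product is rounded before the conversion to `Long`. The object and method names are hypothetical.

```scala
// Hypothetical sketch of the two float-to-microseconds conversions.
object FloatToTimestampSketch {
  val MicrosPerSecond = 1000000L

  // Codegen path before the fix: Float * Long is computed (and rounded) in Float precision.
  def viaFloat(f: Float): Long = (f * MicrosPerSecond).toLong

  // Non-codegen path (and codegen after the fix): widen to Double first.
  def viaDouble(f: Float): Long = (f.toDouble * MicrosPerSecond).toLong
}
```

For `16777215.0f`, the `Float` product rounds to 16777214951424 microseconds, which carries the `.951424` fractional second seen in the cached-view output in the example below, while widening to `Double` first keeps the exact 16777215000000.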

### Why are the changes needed?

This fixes the issue which is demonstrated by the code:

```sql
spark-sql> CREATE TEMP VIEW v1 AS SELECT 16777215.0f AS f;
spark-sql> SELECT * FROM v1;
1.6777215E7
spark-sql> SELECT CAST(f AS TIMESTAMP) FROM v1;
1970-07-14 07:20:15
spark-sql> CACHE TABLE v1;
spark-sql> SELECT * FROM v1;
1.6777215E7
spark-sql> SELECT CAST(f AS TIMESTAMP) FROM v1;
1970-07-14 07:20:14.951424
```

The result from the cached view **1970-07-14 07:20:14.951424** is different from un-cached view **1970-07-14 07:20:15**.

### Does this PR introduce _any_ user-facing change?

Yes. After the changes, the example above outputs the same timestamp for the cached view:

```sql
spark-sql> CACHE TABLE v1;
spark-sql> SELECT * FROM v1;
1.6777215E7
spark-sql> SELECT CAST(f AS TIMESTAMP) FROM v1;
1970-07-14 07:20:15
```

### How was this patch tested?

By running new test:

```
$ build/sbt "test:testOnly *CastSuite"
```

Closes #31819 from MaxGekk/fix-float-to-timestamp.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Fixing `IndexOutOfBoundsException` in `logForFailedTest` method when driver is not started.
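The general pattern is to check for emptiness before reading the first element. A minimal sketch with hypothetical names (`firstUnsafe`, `firstPodLog`), not the actual `KubernetesSuite` code:

```scala
import java.util.{List => JList}

// Hypothetical sketch; not the KubernetesSuite implementation.
object SafeFirstItemSketch {
  // Unsafe: get(0) throws IndexOutOfBoundsException on an empty list.
  def firstUnsafe(pods: JList[String]): String = pods.get(0)

  // Safe: produce a log line only when there is a first element to report.
  def firstPodLog(pods: JList[String]): Option[String] =
    if (pods.isEmpty) None else Some(s"driver pod: ${pods.get(0)}")
}
```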

### Why are the changes needed?

Before this PR, when the driver is not started, an `IndexOutOfBoundsException` is thrown because the first item is accessed from an empty list:

```
- PVs with local storage *** FAILED ***
java.lang.IndexOutOfBoundsException: Index: 0, Size: 0
at java.util.ArrayList.rangeCheck(ArrayList.java:659)
at java.util.ArrayList.get(ArrayList.java:435)
at org.apache.spark.deploy.k8s.integrationtest.KubernetesSuite.logForFailedTest(KubernetesSuite.scala:83)
at org.apache.spark.SparkFunSuite.withFixture(SparkFunSuite.scala:181)
at org.scalatest.funsuite.AnyFunSuiteLike.invokeWithFixture$1(AnyFunSuiteLike.scala:188)
at org.scalatest.funsuite.AnyFunSuiteLike.$anonfun$runTest$1(AnyFunSuiteLike.scala:200)
at org.scalatest.SuperEngine.runTestImpl(Engine.scala:306)
at org.scalatest.funsuite.AnyFunSuiteLike.runTest(AnyFunSuiteLike.scala:200)
at org.scalatest.funsuite.AnyFunSuiteLike.runTest$(AnyFunSuiteLike.scala:182)
at org.apache.spark.SparkFunSuite.org$scalatest$BeforeAndAfterEach$$super$runTest(SparkFunSuite.scala:61)
...
```

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Running integration tests.

After this change, the above error becomes:

```
- PVs with local storage *** FAILED ***
java.io.IOException: No such file or directory
at java.io.UnixFileSystem.createFileExclusively(Native Method)
at java.io.File.createTempFile(File.java:2026)
at org.apache.spark.deploy.k8s.integrationtest.Utils$.createTempFile(Utils.scala:103)
at org.apache.spark.deploy.k8s.integrationtest.PVTestsSuite.$anonfun$$init$$1(PVTestsSuite.scala:135)
at org.scalatest.OutcomeOf.outcomeOf(OutcomeOf.scala:85)
at org.scalatest.OutcomeOf.outcomeOf$(OutcomeOf.scala:83)
at org.scalatest.OutcomeOf$.outcomeOf(OutcomeOf.scala:104)
at org.scalatest.Transformer.apply(Transformer.scala:22)
at org.scalatest.Transformer.apply(Transformer.scala:20)
at org.scalatest.funsuite.AnyFunSuiteLike$$anon$1.apply(AnyFunSuiteLike.scala:190)
...
```

Closes #31824 from attilapiros/SPARK-34732.

Authored-by: “attilapiros” <piros.attila.zsolt@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This patch proposes to fix an incorrect parameter type for subexpression elimination under whole-stage codegen.

### Why are the changes needed?

If the parameter is a byte array, subexpression elimination under whole-stage codegen uses an incorrect parameter type and causes a compile error. Although Spark can automatically fall back to interpreted mode, we should fix it.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Manually tested with a customer application. Unit test.

Closes #31814 from viirya/SPARK-34723.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Liang-Chi Hsieh <viirya@gmail.com>

### What changes were proposed in this pull request?

Support `date +/- year-month interval`. In the PR, I propose to reuse the existing code from the `AddMonths` expression and extract it into the common base class `AddMonthsBase`. That base class is used in the new expression `DateAddYMInterval` and in the existing one, `AddMonths` (the `add_months` function).
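For dates stored as days since the epoch, the same month arithmetic can be sketched with `java.time.LocalDate`. This is a hypothetical helper, not the `AddMonthsBase` code, and it assumes `plusMonths` semantics, which clamp to the last valid day of the target month.

```scala
import java.time.LocalDate

// Hypothetical sketch of "date + N months" on days since 1970-01-01.
object DateAddMonthsSketch {
  def dateAddMonths(days: Int, months: Int): Int =
    // days -> LocalDate -> plusMonths -> days, failing if the result overflows Int
    Math.toIntExact(LocalDate.ofEpochDay(days.toLong).plusMonths(months.toLong).toEpochDay)
}
```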

### Why are the changes needed?

To conform to the ANSI SQL standard, which requires support for such operations over dates and intervals:

<img width="811" alt="Screenshot 2021-03-12 at 11 36 14" src="https://user-images.githubusercontent.com/1580697/110914390-5f412480-8327-11eb-9f8b-e92e73c0b9cd.png">

### Does this PR introduce _any_ user-facing change?

Should not, since the new intervals have not been released yet.

### How was this patch tested?

By running new tests:

```
$ build/sbt "test:testOnly *ColumnExpressionSuite"
$ build/sbt "test:testOnly *DateExpressionsSuite"
```

Closes #31812 from MaxGekk/date-add-year-month-interval.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This bug was introduced by SPARK-23583 at Apache Spark 2.4.0.

This PR aims to use `getMethod` instead of `getDeclaredMethod`.

```scala
- obj.getClass.getDeclaredMethod(functionName, argClasses: _*)
+ obj.getClass.getMethod(functionName, argClasses: _*)
```

### Why are the changes needed?

`getDeclaredMethod` does not search the superclass's methods. To invoke `GenericArrayData.toIntArray`, we need to use `getMethod` because it's declared in the superclass `ArrayData`.
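The difference can be reproduced with two hypothetical classes: `BaseData`, which declares `toIntArray`, and `GenericData`, which inherits it. They stand in for `ArrayData` and `GenericArrayData`.

```scala
import java.lang.reflect.Method

// Hypothetical stand-ins: the method is declared only on the superclass.
class BaseData {
  def toIntArray(): Array[Int] = Array(1, 2, 3)
}
class GenericData extends BaseData

object ReflectionLookupSketch {
  // Searches only methods declared directly on the runtime class, so it misses inherited ones.
  def viaDeclared(obj: AnyRef, name: String): Option[Method] =
    try Some(obj.getClass.getDeclaredMethod(name))
    catch { case _: NoSuchMethodException => None }

  // Searches public methods, including those inherited from superclasses.
  def viaPublic(obj: AnyRef, name: String): Option[Method] =
    try Some(obj.getClass.getMethod(name))
    catch { case _: NoSuchMethodException => None }
}
```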

```
[info] - encode/decode for array of int: [I@74655d03 (interpreted path) *** FAILED *** (14 milliseconds)
[info] Exception thrown while decoding
[info] Converted: [0,1000000020,3,0,ffffff850000001f,4]
[info] Schema: value#680
[info] root
[info] -- value: array (nullable = true)
[info] |-- element: integer (containsNull = false)
[info]
[info]
[info] Encoder:
[info] class[value[0]: array<int>] (ExpressionEncoderSuite.scala:578)
[info] org.scalatest.exceptions.TestFailedException:
[info] at org.scalatest.Assertions.newAssertionFailedException(Assertions.scala:472)
[info] at org.scalatest.Assertions.newAssertionFailedException$(Assertions.scala:471)
[info] at org.scalatest.funsuite.AnyFunSuite.newAssertionFailedException(AnyFunSuite.scala:1563)
[info] at org.scalatest.Assertions.fail(Assertions.scala:949)
[info] at org.scalatest.Assertions.fail$(Assertions.scala:945)
[info] at org.scalatest.funsuite.AnyFunSuite.fail(AnyFunSuite.scala:1563)
[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.$anonfun$encodeDecodeTest$1(ExpressionEncoderSuite.scala:578)
[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.verifyNotLeakingReflectionObjects(ExpressionEncoderSuite.scala:656)
[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.$anonfun$testAndVerifyNotLeakingReflectionObjects$2(ExpressionEncoderSuite.scala:669)
[info] at org.apache.spark.sql.catalyst.plans.CodegenInterpretedPlanTest.$anonfun$test$4(PlanTest.scala:50)
[info] at org.apache.spark.sql.catalyst.plans.SQLHelper.withSQLConf(SQLHelper.scala:54)
[info] at org.apache.spark.sql.catalyst.plans.SQLHelper.withSQLConf$(SQLHelper.scala:38)
[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.withSQLConf(ExpressionEncoderSuite.scala:118)
[info] at org.apache.spark.sql.catalyst.plans.CodegenInterpretedPlanTest.$anonfun$test$3(PlanTest.scala:50)
...
[info] Cause: java.lang.RuntimeException: Error while decoding: java.lang.NoSuchMethodException: org.apache.spark.sql.catalyst.util.GenericArrayData.toIntArray()
[info] mapobjects(lambdavariable(MapObject, IntegerType, false, -1), assertnotnull(lambdavariable(MapObject, IntegerType, false, -1)), input[0, array<int>, true], None).toIntArray
[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoder$Deserializer.apply(ExpressionEncoder.scala:186)
[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.$anonfun$encodeDecodeTest$1(ExpressionEncoderSuite.scala:576)
[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.verifyNotLeakingReflectionObjects(ExpressionEncoderSuite.scala:656)
[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.$anonfun$testAndVerifyNotLeakingReflectionObjects$2(ExpressionEncoderSuite.scala:669)
```

### Does this PR introduce _any_ user-facing change?

This causes a runtime exception when we use the interpreted mode.

### How was this patch tested?

Pass the modified unit test case.

Closes #31816 from dongjoon-hyun/SPARK-34724.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR fixes an issue where the `sql` method of the following classes, which take qualified names, doesn't quote the qualified names properly.

* UnresolvedAttribute

* AttributeReference

* Alias

One instance caused by this issue is reported in SPARK-34626.

```
UnresolvedAttribute("a" :: "b" :: Nil).sql
`a.b` // expected: `a`.`b`
```

Other instances are as follows:

```
UnresolvedAttribute("a`b"::"c.d"::Nil).sql
a`b.`c.d` // expected: `a``b`.`c.d`
AttributeReference("a.b", IntegerType)(qualifier = "c.d"::Nil).sql
c.d.`a.b` // expected: `c.d`.`a.b`
Alias(AttributeReference("a", IntegerType)(), "b.c")(qualifier = "d.e"::Nil).sql
`a` AS d.e.`b.c` // expected: `a` AS `d.e`.`b.c`
```
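Each expected output quotes every name part separately and escapes embedded backticks by doubling them. A minimal sketch of that rule, using hypothetical helper names rather than Spark's implementation:

```scala
// Hypothetical sketch of per-part identifier quoting.
object QuoteNameParts {
  // Wraps one name part in backticks, doubling any backticks it contains.
  def quoteIdentifier(part: String): String = "`" + part.replace("`", "``") + "`"

  // Quotes each part separately, then joins them with dots, so a dot inside a part
  // stays within its backticks instead of looking like a qualifier separator.
  def quoteNameParts(parts: Seq[String]): String = parts.map(quoteIdentifier).mkString(".")
}
```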

### Why are the changes needed?

This is a bug.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

New test.

Closes #31754 from sarutak/fix-qualified-names.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to override the `typeName()` method in `YearMonthIntervalType` and `DayTimeIntervalType`, and assign them names according to the ANSI SQL standard:

<img width="836" alt="Screenshot 2021-03-11 at 17 29 04" src="https://user-images.githubusercontent.com/1580697/110802854-a54aa980-828f-11eb-956d-dd4fbf14aa72.png">

but keep the type names singular, following the existing naming convention for other types.

### Why are the changes needed?

To improve Spark SQL user experience, and have readable types in error messages.

### Does this PR introduce _any_ user-facing change?

Should not, since the types have not been released yet.

### How was this patch tested?

By running the modified tests:

```
$ build/sbt "test:testOnly *ExpressionTypeCheckingSuite"
$ build/sbt "sql/testOnly *SQLQueryTestSuite -- -z windowFrameCoercion.sql"
$ build/sbt "sql/testOnly *SQLQueryTestSuite -- -z literals.sql"
```

Closes #31810 from MaxGekk/interval-types-name.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>