### What changes were proposed in this pull request?

A small documentation change to clarify that the `rand()` function produces values in `[0.0, 1.0)`.

### Why are the changes needed?

`rand()` uses `Rand()`, which generates values in [0, 1) ([documented here](a1dbcd13a3/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/expressions/randomExpressions.scala (L71))). The existing documentation suggests that 1.0 is a possible value returned by `rand` (i.e., for a distribution written as `X ~ U(a, b)`, x can be a or b, so `U[0.0, 1.0]` suggests the value returned could include 1.0).
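As a plain-Python illustration of a half-open interval, the sketch below uses the standard library's `random.random()`, which is likewise documented to return values in `[0.0, 1.0)`. It is an analogy only and does not call Spark's `Rand`; the helper name `sample_uniform` is made up for this example.

```python
import random


def sample_uniform(n, seed=42):
    """Draw n floats from Python's standard generator (documented range: [0.0, 1.0))."""
    rng = random.Random(seed)
    return [rng.random() for _ in range(n)]


samples = sample_uniform(10_000)

# The lower bound is inclusive, the upper bound is exclusive.
assert all(0.0 <= x < 1.0 for x in samples)
```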

### Does this PR introduce any user-facing change?

Only documentation changes.

### How was this patch tested?

Documentation changes only.

Closes #28071 from Smeb/master.

Authored-by: Ben Ryves <benjamin.ryves@getyourguide.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

In this PR, I propose to update the docs for the `timeZone` option in JSON/CSV datasources and for the `tz` parameter of the `from_utc_timestamp()`/`to_utc_timestamp()` functions, and to restrict the format of the config's values to two forms:

1. Geographical regions, such as `America/Los_Angeles`.

2. Fixed offsets - a fully resolved offset from UTC. For example, `-08:00`.

### Why are the changes needed?

Other formats, such as three-letter time zone IDs, are ambiguous and depend on the locale. For example, `CST` could be U.S. `Central Standard Time` or `China Standard Time`. Such formats have already been deprecated in the JDK, see [Three-letter time zone IDs](https://docs.oracle.com/javase/8/docs/api/java/util/TimeZone.html).
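A minimal sketch of how the two accepted forms can be told apart from other IDs. The regular expressions and the `classify_time_zone_id` helper are hypothetical and only illustrate the shapes described above; Spark's actual validation may differ.

```python
import re

# Hypothetical patterns for illustration only; not Spark's validation logic.
REGION_ID = re.compile(r"^[A-Za-z_]+(/[A-Za-z0-9_+\-]+)+$")  # e.g. America/Los_Angeles
FIXED_OFFSET = re.compile(r"^[+-]\d{2}:\d{2}$")              # e.g. -08:00


def classify_time_zone_id(tz):
    """Return 'region', 'offset', or 'other' for a time zone ID string."""
    if REGION_ID.match(tz):
        return "region"
    if FIXED_OFFSET.match(tz):
        return "offset"
    return "other"


print(classify_time_zone_id("America/Los_Angeles"))  # region
print(classify_time_zone_id("-08:00"))               # offset
print(classify_time_zone_id("CST"))                  # other
```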

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running `./dev/scalastyle`, and manual testing.

Closes #28051 from MaxGekk/doc-time-zone-option.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Based on the discussion in the mailing list [[Proposal] Modification to Spark's Semantic Versioning Policy](http://apache-spark-developers-list.1001551.n3.nabble.com/Proposal-Modification-to-Spark-s-Semantic-Versioning-Policy-td28938.html), this PR adds back the following APIs, whose maintenance costs are relatively small.

- functions.toDegrees/toRadians

- functions.approxCountDistinct

- functions.monotonicallyIncreasingId

- Column.!==

- Dataset.explode

- Dataset.registerTempTable

- SQLContext.getOrCreate, setActive, clearActive, constructors

The following APIs were also removed in the original PR [https://issues.apache.org/jira/browse/SPARK-25908], but are not added back in this PR:

- Remove some AccumulableInfo .apply() methods

- Remove non-label-specific multiclass precision/recall/fScore in favor of accuracy

- Remove unused Python StorageLevel constants

- Remove unused multiclass option in libsvm parsing

- Remove references to deprecated spark configs like spark.yarn.am.port

- Remove TaskContext.isRunningLocally

- Remove ShuffleMetrics.shuffle* methods

- Remove BaseReadWrite.context in favor of session

### Why are the changes needed?

Avoid breaking the APIs that are commonly used.

### Does this PR introduce any user-facing change?

Adding back APIs that were removed in the 3.0 branch does not introduce user-facing changes, because Spark 3.0 has not been released.

### How was this patch tested?

Added a new test suite for these APIs.

Author: gatorsmile <gatorsmile@gmail.com>

Author: yi.wu <yi.wu@databricks.com>

Closes #27821 from gatorsmile/addAPIBackV2.

### What changes were proposed in this pull request?

This PR proposes to make pandas function APIs (`groupby.(cogroup.)applyInPandas` and `mapInPandas`) ignore Python type hints.

### Why are the changes needed?

Python type hints are optional. They shouldn't have any effect where pandas UDFs are not used.

Supporting other type hints in these APIs is also future work. For now, we should at least not throw an exception.

### Does this PR introduce any user-facing change?

No, it's a master-only change.

```python
import pandas as pd

def pandas_plus_one(pdf: pd.DataFrame) -> pd.DataFrame:
    return pdf + 1

spark.range(10).groupby('id').applyInPandas(pandas_plus_one, schema="id long").show()
```

```python
import pandas as pd

def pandas_plus_one(left: pd.DataFrame, right: pd.DataFrame) -> pd.DataFrame:
    return left + 1

spark.range(10).groupby('id').cogroup(spark.range(10).groupby("id")).applyInPandas(pandas_plus_one, schema="id long").show()
```

```python
from typing import Iterator

import pandas as pd

def pandas_plus_one(iter: Iterator[pd.DataFrame]) -> Iterator[pd.DataFrame]:
    return map(lambda v: v + 1, iter)

spark.range(10).mapInPandas(pandas_plus_one, schema="id long").show()
```

**Before:**

Exception

**After:**

```
+---+
| id|
+---+
|  1|
|  2|
|  3|
|  4|
|  5|
|  6|
|  7|
|  8|
|  9|
| 10|
+---+
```

### How was this patch tested?

Closes #28052 from HyukjinKwon/SPARK-31287.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Based on the discussion in the mailing list [[Proposal] Modification to Spark's Semantic Versioning Policy](http://apache-spark-developers-list.1001551.n3.nabble.com/Proposal-Modification-to-Spark-s-Semantic-Versioning-Policy-td28938.html), this PR adds back the following APIs, whose maintenance costs are relatively small.

- HiveContext

- createExternalTable APIs

### Why are the changes needed?

Avoid breaking the APIs that are commonly used.

### Does this PR introduce any user-facing change?

Adding back APIs that were removed in the 3.0 branch does not introduce user-facing changes, because Spark 3.0 has not been released.

### How was this patch tested?

Added a new test suite for the createExternalTable APIs.

Closes #27815 from gatorsmile/addAPIsBack.

Lead-authored-by: gatorsmile <gatorsmile@gmail.com>

Co-authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

Add ANOVATest and FValueTest to PySpark

### Why are the changes needed?

Parity between Scala and Python.

### Does this PR introduce any user-facing change?

Yes. Python ANOVATest and FValueTest

### How was this patch tested?

doctest

Closes #28012 from huaxingao/stats-python.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

When the `toPandas` API works on duplicate column names produced by operators like join, we see an error like:

```

ValueError: The truth value of a Series is ambiguous. Use a.empty, a.bool(), a.item(), a.any() or a.all().

```

This patch fixes the error in the `toPandas` API.

### Why are the changes needed?

To make `toPandas` work on a DataFrame with duplicate column names.

### Does this PR introduce any user-facing change?

Yes. Previously, calling the `toPandas` API on a DataFrame with duplicate column names would fail. After this patch, it produces the correct result.

### How was this patch tested?

Unit test.

Closes #28025 from viirya/SPARK-31186.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

The link for `partition discovery` is malformed because, for releases, the full URL contains `/docs/<version>/`.

### Why are the changes needed?

fix doc

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

`SKIP_SCALADOC=1 SKIP_RDOC=1 SKIP_SQLDOC=1 jekyll serve` locally verified

Closes #28017 from yaooqinn/doc.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

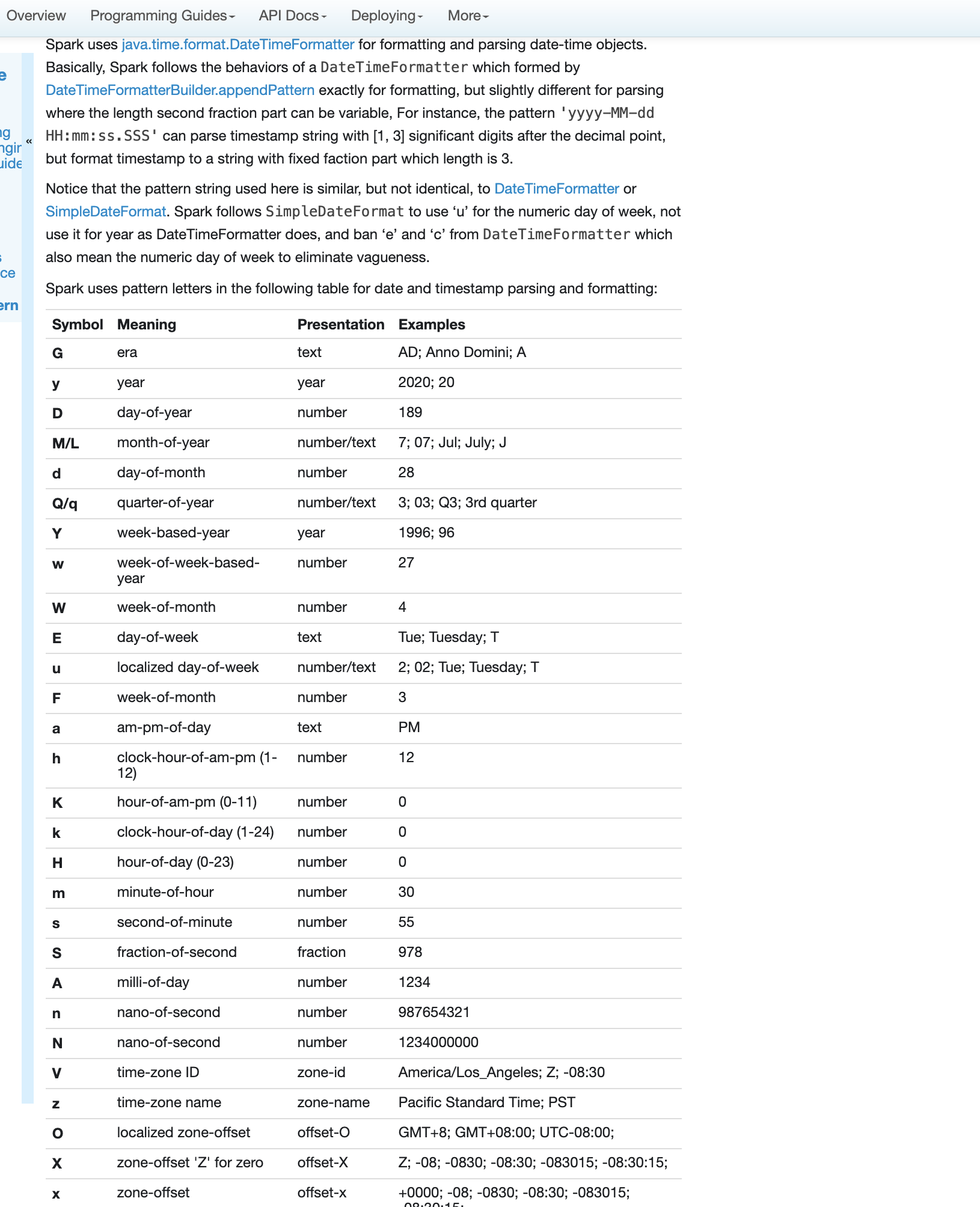

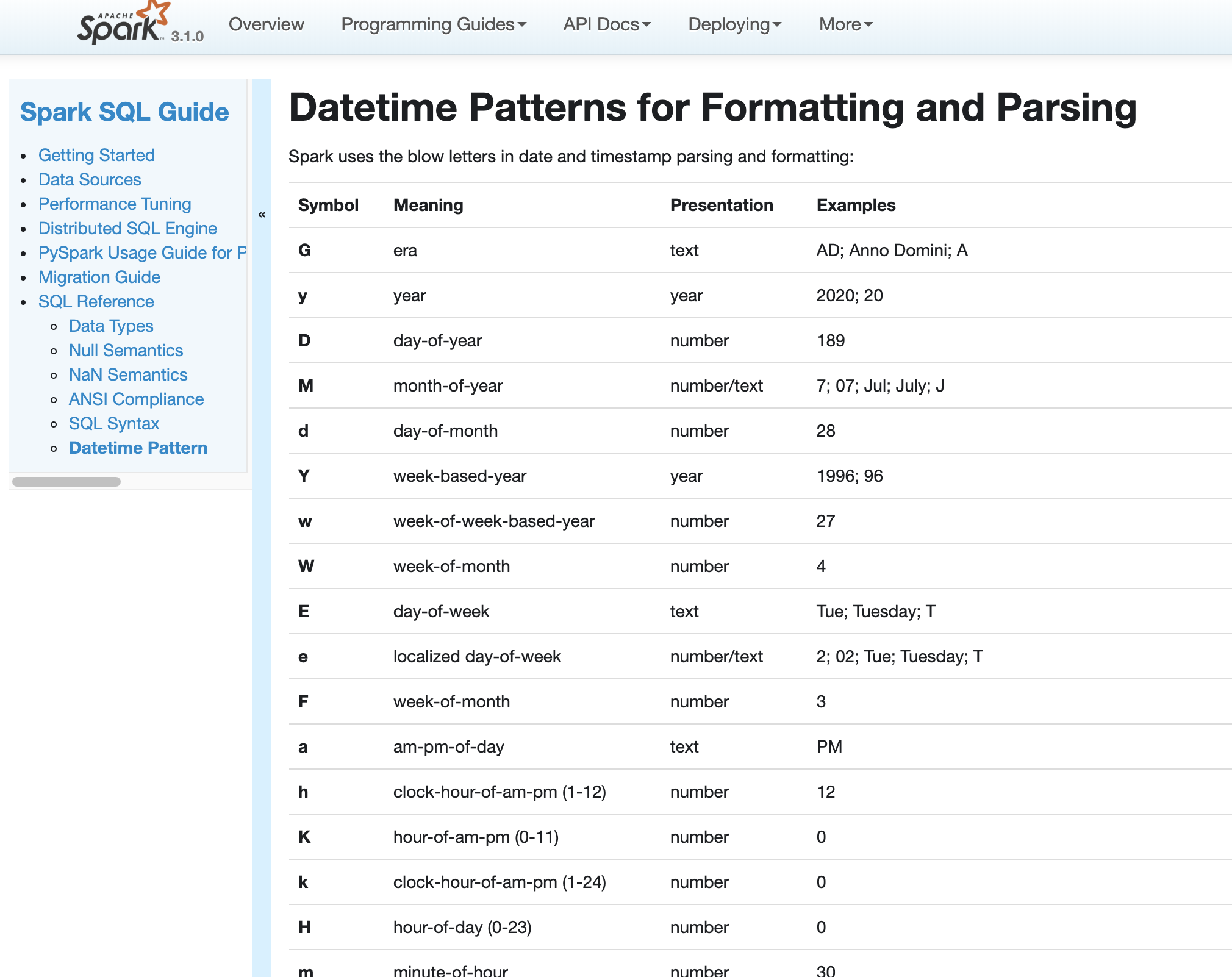

### What changes were proposed in this pull request?

Fix errors and missing parts in the datetime pattern document:

1. The pattern we use is similar to DateTimeFormatter and SimpleDateFormat but not identical. So we shouldn't reference either of them in the API docs, but link to our own doc instead.

2. Some pattern letters are missing.

3. Some pattern letters are explicitly banned: Set('A', 'c', 'e', 'n', 'N').

4. The second-fraction pattern uses different logic for parsing and formatting.

### Why are the changes needed?

fix and improve doc

### Does this PR introduce any user-facing change?

yes, new and updated doc

### How was this patch tested?

pass Jenkins

viewed locally with `jekyll serve`

Closes #27956 from yaooqinn/SPARK-31189.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

- Adds the following overloaded variants to Scala `o.a.s.sql.functions`:

  - `percentile_approx(e: Column, percentage: Array[Double], accuracy: Long): Column`

  - `percentile_approx(columnName: String, percentage: Array[Double], accuracy: Long): Column`

  - `percentile_approx(e: Column, percentage: Double, accuracy: Long): Column`

  - `percentile_approx(columnName: String, percentage: Double, accuracy: Long): Column`

  - `percentile_approx(e: Column, percentage: Seq[Double], accuracy: Long): Column` (primarily for Python interop).

  - `percentile_approx(columnName: String, percentage: Seq[Double], accuracy: Long): Column`

- Adds `percentile_approx` to `pyspark.sql.functions`.

- Adds `percentile_approx` function to SparkR.

### Why are the changes needed?

Currently we support `percentile_approx` only in SQL expressions. This is inconvenient and makes the function relatively unknown.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

New unit tests for SparkR and PySpark.

For now there are no additional tests for the Scala API: `ApproximatePercentile` is well tested, and the Python (including docstrings) and R tests provide additional coverage, so it seems unnecessary.

Closes #27278 from zero323/SPARK-30569.

Lead-authored-by: zero323 <mszymkiewicz@gmail.com>

Co-authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

jira link: https://issues.apache.org/jira/browse/SPARK-30930

Remove ML/MLLIB DeveloperApi annotations.

### Why are the changes needed?

The Developer APIs in ML/MLLIB have been there for a long time. They are stable now and are very unlikely to be changed or removed, so I unmark these Developer APIs in this PR.

### Does this PR introduce any user-facing change?

Yes. DeveloperApi annotations are removed from docs.

### How was this patch tested?

existing tests

Closes #27859 from huaxingao/spark-30930.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

In Spark version 2.4 and earlier, datetime parsing, formatting and conversion are performed using the hybrid calendar (Julian + Gregorian).

Since the Proleptic Gregorian calendar is the de facto calendar worldwide, as well as the one chosen in the ANSI SQL standard, Spark 3.0 switches to it by using Java 8 API classes (the java.time packages, which are based on ISO chronology). The switch was completed in SPARK-26651.

But after the switch, some patterns are not compatible between Java 8 and Java 7, so Spark needs its own definition of the patterns rather than depending on the Java API.

In this PR, we achieve this by writing the document and shadowing the incompatible letters. See more details in [SPARK-31030](https://issues.apache.org/jira/browse/SPARK-31030).

### Why are the changes needed?

For backward compatibility.

### Does this PR introduce any user-facing change?

No.

After we define our own datetime parsing and formatting patterns, the behavior is the same as in old Spark versions.

### How was this patch tested?

Existing and newly added UTs.

Locally document test:

Closes #27830 from xuanyuanking/SPARK-31030.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Adding a note to document `Row.asDict` behavior when there are duplicate fields.

### Why are the changes needed?

When a row contains duplicate fields, `asDict` and `__getitem__` behave differently. We should document this to let users know the difference explicitly.
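The sketch below shows one way such a difference can arise. `SimpleRow` is a toy stand-in written for this example, not `pyspark.sql.Row`; it assumes that lookup by name finds the first matching field while the dictionary conversion keeps the last value for a repeated key.

```python
class SimpleRow(tuple):
    """Toy row with named fields (hypothetical; not pyspark.sql.Row)."""

    def __new__(cls, fields, values):
        row = super().__new__(cls, values)
        row.fields = list(fields)
        return row

    def __getitem__(self, item):
        if isinstance(item, str):
            # list.index returns the position of the FIRST matching name.
            return super().__getitem__(self.fields.index(item))
        return super().__getitem__(item)

    def as_dict(self):
        # dict() keeps the LAST value seen for a repeated key.
        return dict(zip(self.fields, self))


row = SimpleRow(["id", "id"], [1, 2])
print(row["id"])      # 1
print(row.as_dict())  # {'id': 2}
```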

### Does this PR introduce any user-facing change?

No. Only document change.

### How was this patch tested?

Existing test.

Closes #27853 from viirya/SPARK-30941.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Updating ML docs for 3.0 changes

### Why are the changes needed?

While auditing the 3.0 ML changes, I found that some docs are missing or out of date. These need to be updated.

### Does this PR introduce any user-facing change?

Yes, doc changes

### How was this patch tested?

Manually build and check

Closes #27762 from huaxingao/spark-doc.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Implement common base ML classes (`Predictor`, `PredictionModel`, `Classifier`, `ClassificationModel`, `ProbabilisticClassifier`, `ProbabilisticClassificationModel`, `Regressor`, `RegressionModel`) for non-Java backends.

Note

- `Predictor` and `JavaClassifier` should be abstract as `_fit` method is not implemented.

- `PredictionModel` should be abstract as `_transform` is not implemented.

### Why are the changes needed?

To provide extension points for non-JVM algorithms, as well as a public (as opposed to `Java*` variants, which are commonly described in docstrings as private) hierarchy which can be used to distinguish between different classes of predictors.

For longer discussion see [SPARK-29212](https://issues.apache.org/jira/browse/SPARK-29212) and / or https://github.com/apache/spark/pull/25776.

### Does this PR introduce any user-facing change?

It adds new base classes as listed above, but effective interfaces (method resolution order notwithstanding) stay the same.

Additionally, "private" `Java*` classes in `ml.regression` and `ml.classification` have been renamed to follow PEP-8 conventions (added leading underscore).

Whether the same should be done for the equivalent classes in `ml.wrapper` is open for discussion.

If we take `JavaClassifier` as an example, type hierarchy will change from

to

Similarly the old model

will become

### How was this patch tested?

Existing unit tests.

Closes #27245 from zero323/SPARK-29212.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

Current impl needs to convert ml.Vector to breeze.Vector, which can be skipped.

### Why are the changes needed?

avoid unnecessary vector conversions

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

existing testsuites

Closes #27519 from zhengruifeng/gmm_transform_opt.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

Remove automatic resource coordination support from Standalone.

### Why are the changes needed?

Resource coordination is mainly designed for the scenario where multiple workers are launched on the same host. However, this scenario effectively no longer exists in today's Spark: Spark can now start multiple executors in a single Worker, whereas it allowed only one executor per Worker at the very beginning. So launching multiple workers on the same host no longer helps users. Thus, it is not worth carrying an over-complicated implementation and a potentially high maintenance cost for such an unlikely scenario.

### Does this PR introduce any user-facing change?

No, it's a Spark 3.0 feature.

### How was this patch tested?

Pass Jenkins.

Closes #27722 from Ngone51/abandon_coordination.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Xingbo Jiang <xingbo.jiang@databricks.com>

### What changes were proposed in this pull request?

This PR adds a Python API for invoking the following higher-order functions:

- `transform`

- `exists`

- `forall`

- `filter`

- `aggregate`

- `zip_with`

- `transform_keys`

- `transform_values`

- `map_filter`

- `map_zip_with`

to `pyspark.sql`. Each of these accepts plain Python functions of one of the following types

- `(Column) -> Column: ...`

- `(Column, Column) -> Column: ...`

- `(Column, Column, Column) -> Column: ...`

Internally this proposal piggybacks on objects supporting the Scala implementation ([SPARK-27297](https://issues.apache.org/jira/browse/SPARK-27297)) by:

1. Creating the required `UnresolvedNamedLambdaVariables` and exposing these as PySpark `Columns`.

2. Invoking Python function with these columns as arguments.

3. Using the result, and underlying JVM objects from 1., to create `expressions.LambdaFunction` which is passed to desired expression, and repacked as Python `Column`.
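The three steps above can be sketched in plain Python. This is a toy model under stated assumptions: `Expr` is a hypothetical stand-in that renders SQL-like strings, not `pyspark.sql.Column`, and this `transform` only mimics the flow (create placeholders, call the Python function, wrap the result); it does not touch JVM objects.

```python
import inspect


class Expr:
    """Toy expression rendering to a SQL-like string (hypothetical; not a PySpark Column)."""

    def __init__(self, sql):
        self.sql = sql

    def __add__(self, other):
        other_sql = other.sql if isinstance(other, Expr) else repr(other)
        return Expr(f"({self.sql} + {other_sql})")


def transform(column_name, f):
    # Step 1: create one placeholder variable per parameter of the Python function.
    names = ["x", "y", "z"][: len(inspect.signature(f).parameters)]
    variables = [Expr(name) for name in names]
    # Step 2: invoke the Python function with the placeholders as arguments.
    body = f(*variables)
    # Step 3: combine the result and the placeholders into a lambda expression.
    return Expr(f"transform({column_name}, ({', '.join(names)}) -> {body.sql})")


print(transform("values", lambda x: x + 1).sql)
# transform(values, (x) -> (x + 1))
```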

### Why are the changes needed?

Currently higher order functions are available only using SQL and Scala API and can use only SQL expressions

```python

df.selectExpr("transform(values, x -> x + 1)")

```

This works reasonably well for simple functions, but can get really ugly with complex ones (complex functions, casts): the resulting objects are somewhat verbose and we don't get any IDE support. Additionally, the DSL used, though very simple, is not documented.

With the changes proposed here, the above query could be rewritten as:

```python

df.select(transform("values", lambda x: x + 1))

```

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

- For positive cases this PR adds doctest strings covering possible usage patterns.

- For negative cases (unsupported function types) this PR adds unit tests.

### Notes

If approved, the same approach can be used in SparkR.

Closes #27406 from zero323/SPARK-30681.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This patch is to bump the master branch version to 3.1.0-SNAPSHOT.

### Why are the changes needed?

N/A

### Does this PR introduce any user-facing change?

N/A

### How was this patch tested?

N/A

Closes #27698 from gatorsmile/updateVersion.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

Fix for #27395

### What changes were proposed in this pull request?

The `allGather` method is added to the `BarrierTaskContext`. This method contains the same functionality as the `BarrierTaskContext.barrier` method; it blocks the task until all tasks make the call, at which time they may continue execution. In addition, the `allGather` method takes an input message. Upon returning from the `allGather` the task receives a list of all the messages sent by all the tasks that made the `allGather` call.

### Why are the changes needed?

There are many situations where having the tasks communicate in a synchronized way is useful. One simple example is if each task needs to start a server to serve requests from one another; first the tasks must find a free port (the result of which is undetermined beforehand) and then start making requests, but to do so they each must know the port chosen by the other task. An `allGather` method would allow them to inform each other of the port they will run on.
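A thread-based sketch of the idea, assuming nothing about Spark's implementation: `ToyAllGather` is a hypothetical class built on `threading.Barrier`, and the port numbers are made up. Each task posts a message, blocks until all tasks have arrived, and then receives every message. Unlike the Spark example output, this toy returns messages ordered by task ID.

```python
import threading


class ToyAllGather:
    """Thread-based stand-in for a barrier with message exchange (hypothetical)."""

    def __init__(self, num_tasks):
        self._barrier = threading.Barrier(num_tasks)
        self._messages = [None] * num_tasks

    def all_gather(self, task_id, message):
        self._messages[task_id] = message
        # Block until every task has posted its message.
        self._barrier.wait()
        return list(self._messages)


def run_tasks(num_tasks):
    ctx = ToyAllGather(num_tasks)
    results = [None] * num_tasks

    def task(task_id):
        # Each task shares a made-up port number with the others.
        results[task_id] = ctx.all_gather(task_id, f"port-{5000 + task_id}")

    threads = [threading.Thread(target=task, args=(i,)) for i in range(num_tasks)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


results = run_tasks(4)
# Every task sees the same complete list of messages.
assert all(r == results[0] for r in results)
```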

### Does this PR introduce any user-facing change?

Yes, a `BarrierTaskContext.allGather` method will be available through the Scala, Java, and Python APIs.

### How was this patch tested?

Most of the code path is already covered by tests for the `barrier` method, since this PR includes a refactor so that much code is shared by the `barrier` and `allGather` methods. However, a test is added to assert that an all gather on each task's partition ID will return a list of every partition ID.

An example through the Python API:

```python
>>> from pyspark import BarrierTaskContext
>>>
>>> def f(iterator):
...     context = BarrierTaskContext.get()
...     return [context.allGather('{}'.format(context.partitionId()))]
...
>>> sc.parallelize(range(4), 4).barrier().mapPartitions(f).collect()[0]
[u'3', u'1', u'0', u'2']
```

Closes #27640 from sarthfrey/master.

Lead-authored-by: sarthfrey-db <sarth.frey@databricks.com>

Co-authored-by: sarthfrey <sarth.frey@gmail.com>

Signed-off-by: Xingbo Jiang <xingbo.jiang@databricks.com>

### What changes were proposed in this pull request?

The style of `EXPLAIN FORMATTED` output needs to be improved. We’ve already got some observations/ideas in

https://github.com/apache/spark/pull/27368#discussion_r376694496

https://github.com/apache/spark/pull/27368#discussion_r376927143

Observations/Ideas:

1. Using a comma as the separator is not clear, especially since commas are also used inside expressions.

2. Show the column counts first? For example, `Results [4]: …`

3. Currently the attribute names are automatically generated; this needs to be refined.

4. Add an arguments field in common implementations, as `EXPLAIN EXTENDED` does, by calling `argString` in `TreeNode.simpleString`. This will eliminate most existing minor differences between `EXPLAIN EXTENDED` and `EXPLAIN FORMATTED`.

5. Another improvement we can make: the generated alias shouldn't include the attribute ID. `collect_set(val, 0, 0)#123` looks clearer than `collect_set(val#456, 0, 0)#123`.

This PR currently addresses comments 2 & 4, and is open for more discussion on improving readability.

### Why are the changes needed?

The readability of `EXPLAIN FORMATTED` needs to be improved, which will help users better understand the query plan.

### Does this PR introduce any user-facing change?

Yes, `EXPLAIN FORMATTED` output style changed.

### How was this patch tested?

Updated the expected results of the test cases in explain.sql.

Closes #27509 from Eric5553/ExplainFormattedRefine.

Authored-by: Eric Wu <492960551@qq.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

Split off from #27534.

### What changes were proposed in this pull request?

This PR deletes some unused cruft from the Sphinx Makefiles.

### Why are the changes needed?

To remove dead code and the associated maintenance burden.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Tested locally by building the Python docs and reviewing them in my browser.

Closes #27625 from nchammas/SPARK-30731-makefile-cleanup.

Lead-authored-by: Nicholas Chammas <nicholas.chammas@liveramp.com>

Co-authored-by: Nicholas Chammas <nicholas.chammas@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR aims to upgrade Py4J to `0.10.9` for better Python 3.7 support in Apache Spark 3.0.0 (master/branch-3.0). This is not for `branch-2.4`.

### Why are the changes needed?

- Apache Spark 3.0.0 is using `Py4J 0.10.8.1` (released on 2018-10-21) because `0.10.8.1` was the first official release to support Python 3.7.

  - https://www.py4j.org/changelog.html#py4j-0-10-8-and-py4j-0-10-8-1

- `Py4J 0.10.9` was released on January 25th 2020 with better Python 3.7 support and `magic_member` bug fix.

  - https://github.com/bartdag/py4j/releases/tag/0.10.9

  - https://www.py4j.org/changelog.html#py4j-0-10-9

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Pass the Jenkins with the existing tests.

Closes #27641 from dongjoon-hyun/SPARK-30884.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

As discussed on the Jira ticket, this change clears the SQLContext._instantiatedContext class attribute when the SparkSession is stopped. That way, the attribute will be reset with a new, usable SQLContext when a new SparkSession is started.

### Why are the changes needed?

When the underlying SQLContext is instantiated for a SparkSession, the instance is saved as a class attribute and returned from subsequent calls to SQLContext.getOrCreate(). If the SparkContext is stopped and a new one started, the SQLContext class attribute is never cleared so any code which calls SQLContext.getOrCreate() will get a SQLContext with a reference to the old, unusable SparkContext.

A similar issue was identified and fixed for SparkSession in [SPARK-19055](https://issues.apache.org/jira/browse/SPARK-19055), but the fix did not change SQLContext as well. I ran into this because mllib still [uses](https://github.com/apache/spark/blob/master/python/pyspark/mllib/common.py#L105) SQLContext.getOrCreate() under the hood.
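The stale-cache problem and the fix can be sketched with a minimal class-level cache. `ToyContext` and `ToySQLContext` are hypothetical stand-ins for this example, not the PySpark classes; the point is only that a class attribute survives a restart unless it is explicitly cleared.

```python
class ToyContext:
    """Stand-in for a context that becomes unusable once stopped (hypothetical)."""

    def __init__(self):
        self.stopped = False

    def stop(self):
        self.stopped = True


class ToySQLContext:
    _instantiated = None  # class-level cache, shared by all callers

    def __init__(self, context):
        self.context = context

    @classmethod
    def get_or_create(cls, context):
        if cls._instantiated is None:
            cls._instantiated = cls(context)
        return cls._instantiated

    @classmethod
    def clear(cls):
        cls._instantiated = None


first = ToyContext()
stale = ToySQLContext.get_or_create(first)
first.stop()

second = ToyContext()
# Without clearing, the cached instance still points at the stopped context.
assert ToySQLContext.get_or_create(second).context is first

# Clearing the cache on stop lets the next call build a usable instance.
ToySQLContext.clear()
fresh = ToySQLContext.get_or_create(second)
assert fresh.context is second
```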

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

A new test was added. I verified that the test fails without the included change.

Closes #27610 from afavaro/restart-sqlcontext.

Authored-by: Alex Favaro <alex.favaro@affirm.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

The `allGather` method is added to the `BarrierTaskContext`. This method contains the same functionality as the `BarrierTaskContext.barrier` method; it blocks the task until all tasks make the call, at which time they may continue execution. In addition, the `allGather` method takes an input message. Upon returning from the `allGather` the task receives a list of all the messages sent by all the tasks that made the `allGather` call.

### Why are the changes needed?

There are many situations where having the tasks communicate in a synchronized way is useful. One simple example is if each task needs to start a server to serve requests from one another; first the tasks must find a free port (the result of which is undetermined beforehand) and then start making requests, but to do so they each must know the port chosen by the other task. An `allGather` method would allow them to inform each other of the port they will run on.

### Does this PR introduce any user-facing change?

Yes, a `BarrierTaskContext.allGather` method will be available through the Scala, Java, and Python APIs.

### How was this patch tested?

Most of the code path is already covered by tests for the `barrier` method, since this PR includes a refactor so that much code is shared by the `barrier` and `allGather` methods. However, a test is added to assert that an all gather on each task's partition ID will return a list of every partition ID.

An example through the Python API:

```python
>>> from pyspark import BarrierTaskContext
>>>
>>> def f(iterator):
...     context = BarrierTaskContext.get()
...     return [context.allGather('{}'.format(context.partitionId()))]
...
>>> sc.parallelize(range(4), 4).barrier().mapPartitions(f).collect()[0]
[u'3', u'1', u'0', u'2']
```

Closes #27395 from sarthfrey/master.

Lead-authored-by: sarthfrey-db <sarth.frey@databricks.com>

Co-authored-by: sarthfrey <sarth.frey@gmail.com>

Signed-off-by: Xiangrui Meng <meng@databricks.com>

(cherry picked from commit 57254c9719)

Signed-off-by: Xiangrui Meng <meng@databricks.com>

### What changes were proposed in this pull request?

This PR proposes to deprecate the APIs at `SQLContext` removed in SPARK-25908. We should remove the equivalent APIs; however, it seems we missed deprecating them.

While I am here, I fix one more issue. After SPARK-25908, `sc._jvm.SQLContext.getOrCreate` does not exist anymore. So,

```python
from pyspark.sql import SQLContext
from pyspark import SparkContext

sc = SparkContext.getOrCreate()
SQLContext.getOrCreate(sc).range(10).show()
```

throws an exception as below:

```
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
  File "/.../spark/python/pyspark/sql/context.py", line 110, in getOrCreate
    jsqlContext = sc._jvm.SQLContext.getOrCreate(sc._jsc.sc())
  File "/.../spark/python/lib/py4j-0.10.8.1-src.zip/py4j/java_gateway.py", line 1516, in __getattr__
py4j.protocol.Py4JError: org.apache.spark.sql.SQLContext.getOrCreate does not exist in the JVM
```

After this PR:

```
/.../spark/python/pyspark/sql/context.py:113: DeprecationWarning: Deprecated in 3.0.0. Use SparkSession.builder.getOrCreate() instead.
  DeprecationWarning)
+---+
| id|
+---+
|  0|
|  1|
|  2|
|  3|
|  4|
|  5|
|  6|
|  7|
|  8|
|  9|
+---+
```

In case of the constructor of `SQLContext`, after this PR:

```python
from pyspark import SparkContext
from pyspark.sql import SQLContext

sc = SparkContext.getOrCreate()
SQLContext(sc)
```

```
/.../spark/python/pyspark/sql/context.py:77: DeprecationWarning: Deprecated in 3.0.0. Use SparkSession.builder.getOrCreate() instead.
  DeprecationWarning)
```

### Why are the changes needed?

To promote the use of SparkSession, and to keep API parity with the Scala side.

### Does this PR introduce any user-facing change?

Yes, it will show a deprecation warning to users.
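For readers unfamiliar with how such a warning is emitted, here is a minimal sketch using the standard `warnings` module. The function `get_or_create_legacy` and its return value are hypothetical; only the message text is taken from the output shown above.

```python
import warnings


def get_or_create_legacy():
    """Hypothetical legacy entry point that still works but warns the caller."""
    warnings.warn(
        "Deprecated in 3.0.0. Use SparkSession.builder.getOrCreate() instead.",
        DeprecationWarning,
        stacklevel=2,
    )
    return "legacy-context"


# Record warnings so the example can inspect what was emitted.
with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    value = get_or_create_legacy()

print(value)                        # legacy-context
print(caught[0].category.__name__)  # DeprecationWarning
```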

### How was this patch tested?

Manually tested as described above. Unit tests were also added.

Closes #27614 from HyukjinKwon/SPARK-30861.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

This commit is published into the public domain.

### What changes were proposed in this pull request?

Some syntax issues in docstrings have been fixed.

### Why are the changes needed?

In some places, the documentation did not render as intended, e.g. parameter documentations were not formatted as such.

### Does this PR introduce any user-facing change?

Slight improvements in documentation.

### How was this patch tested?

Manual testing. No new Sphinx warnings arise due to this change.

Closes #27613 from DavidToneian/SPARK-30859.

Authored-by: David Toneian <david@toneian.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR added two DeveloperApis to the Dataset[T] class. Both methods are just exposing lower-level methods to the Dataset[T] class.

### Why are the changes needed?

They are useful for checking whether two dataframes are the same when implementing dataframe caching in Python, and for getting a unique ID. They are easier to use if we wrap the lower-level APIs.

### Does this PR introduce any user-facing change?

```
scala> val df1 = Seq((1,2),(4,5)).toDF("col1", "col2")
df1: org.apache.spark.sql.DataFrame = [col1: int, col2: int]

scala> val df2 = Seq((1,2),(4,5)).toDF("col1", "col2")
df2: org.apache.spark.sql.DataFrame = [col1: int, col2: int]

scala> val df3 = Seq((0,2),(4,5)).toDF("col1", "col2")
df3: org.apache.spark.sql.DataFrame = [col1: int, col2: int]

scala> val df4 = Seq((0,2),(4,5)).toDF("col0", "col2")
df4: org.apache.spark.sql.DataFrame = [col0: int, col2: int]

scala> df1.semanticHash
res0: Int = 594427822

scala> df2.semanticHash
res1: Int = 594427822

scala> df1.sameSemantics(df2)
res2: Boolean = true

scala> df1.sameSemantics(df3)
res3: Boolean = false

scala> df3.semanticHash
res4: Int = -1592702048

scala> df4.semanticHash
res5: Int = -1592702048

scala> df4.sameSemantics(df3)
res6: Boolean = true
```

### How was this patch tested?

Unit test in Scala and doctest in Python.

Note: comments are copied from the corresponding lower-level APIs.

Note: There are some issues to be fixed that would improve the hash collision rate: https://github.com/apache/spark/pull/27565#discussion_r379881028

Closes #27565 from liangz1/df-same-result.

Authored-by: Liang Zhang <liang.zhang@databricks.com>

Signed-off-by: WeichenXu <weichen.xu@databricks.com>

### What changes were proposed in this pull request?

This is a follow-up for #23124. It adds a new config, `spark.sql.legacy.allowDuplicatedMapKeys`, to control the behavior of removing duplicated map keys in built-in functions. With the default value `false`, Spark will throw a RuntimeException when duplicated keys are found.
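A minimal plain-Python sketch of the two behaviors. `build_map` and its `allow_duplicated_keys` flag are hypothetical names that mirror the config for illustration; the last-value-wins choice when duplicates are allowed is an assumption of this sketch, and the error message is made up.

```python
def build_map(keys, values, allow_duplicated_keys=False):
    """Toy map builder (hypothetical; not Spark's implementation)."""
    result = {}
    for key, value in zip(keys, values):
        if key in result and not allow_duplicated_keys:
            raise RuntimeError(f"Duplicate map key {key!r} was found")
        # Assumption: when duplicates are allowed, the last value wins.
        result[key] = value
    return result


print(build_map(["a", "b", "a"], [1, 2, 3], allow_duplicated_keys=True))
# {'a': 3, 'b': 2}

try:
    build_map(["a", "b", "a"], [1, 2, 3])
except RuntimeError as error:
    print(error)  # Duplicate map key 'a' was found
```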

### Why are the changes needed?

Prevent silent behavior changes.

### Does this PR introduce any user-facing change?

Yes, new config added and the default behavior for duplicated map keys changed to RuntimeException thrown.

### How was this patch tested?

Modified existing unit tests.

Closes#27478 from xuanyuanking/SPARK-25892-follow.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

This commit is published into the public domain.

### What changes were proposed in this pull request?

In analogy to `python/docs/Makefile`, which has

> export PYTHONPATH=$(realpath ..):$(realpath ../lib/py4j-0.10.8.1-src.zip)

on line 10, this PR adds

> set PYTHONPATH=..;..\lib\py4j-0.10.8.1-src.zip

to `make2.bat`.

Since there is no `realpath` in default installations of Windows, I left the relative paths unresolved. Per the instructions on how to build docs, `make.bat` is supposed to be run from `python/docs` as the working directory, so this should probably not cause issues (`%BUILDDIR%` is a relative path as well.)

### Why are the changes needed?

When building the PySpark documentation on Windows, by changing directory to `python/docs` and running `make.bat` (which runs `make2.bat`), the majority of the documentation may not be built if pyspark is not in the default `%PYTHONPATH%`. Sphinx then reports that `pyspark` (and possibly dependencies) cannot be imported.

If `pyspark` is in the default `%PYTHONPATH%`, I suppose it is that version of `pyspark` – as opposed to the version found above the `python/docs` directory – that is considered when building the documentation, which may result in documentation that does not correspond to the development version one is trying to build.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Manual tests on my Windows 10 machine. Additional tests with other environments very welcome!

Closes#27569 from DavidToneian/SPARK-30823.

Authored-by: David Toneian <david@toneian.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

This commit is published into the public domain.

### What changes were proposed in this pull request?

This PR fixes the documentation of `DataFrameReader`, `DataFrameWriter`, `DataStreamReader`, and `DataStreamWriter`, where attributes of other classes were misrepresented as functions. Additionally, creation of hyperlinks across modules was fixed in these instances.

### Why are the changes needed?

The old state produced documentation that suggested invalid usage of PySpark objects (accessing attributes as though they were callable.)

### Does this PR introduce any user-facing change?

No, except for improved documentation.

### How was this patch tested?

No test added; documentation build runs through.

Closes#27553 from DavidToneian/docfix-DataFrameReader-DataFrameWriter-DataStreamReader-DataStreamWriter.

Authored-by: David Toneian <david@toneian.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

The `allGather` method is added to the `BarrierTaskContext`. This method contains the same functionality as the `BarrierTaskContext.barrier` method; it blocks the task until all tasks make the call, at which time they may continue execution. In addition, the `allGather` method takes an input message. Upon returning from the `allGather` the task receives a list of all the messages sent by all the tasks that made the `allGather` call.

### Why are the changes needed?

There are many situations where having the tasks communicate in a synchronized way is useful. One simple example is if each task needs to start a server to serve requests from one another; first the tasks must find a free port (the result of which is undetermined beforehand) and then start making requests, but to do so they each must know the port chosen by the other task. An `allGather` method would allow them to inform each other of the port they will run on.
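
The port-exchange scenario can be sketched with plain Python threads. This is only an illustration of the all-gather pattern, not the Spark API: `ToyBarrierContext`, `all_gather`, and the port numbers are hypothetical, and the context supports a single `all_gather` round.

```python
import threading


class ToyBarrierContext:
    """Hypothetical stand-in for a barrier stage shared by num_tasks threads."""

    def __init__(self, num_tasks):
        self._messages = [None] * num_tasks
        self._barrier = threading.Barrier(num_tasks)

    def all_gather(self, task_id, message):
        # Publish this task's message, then block until every task has done the same.
        self._messages[task_id] = message
        self._barrier.wait()
        # After the barrier every slot is filled, so each task sees all messages.
        return list(self._messages)


def run_tasks(num_tasks):
    """Run num_tasks threads that each announce a made-up port to all others."""
    context = ToyBarrierContext(num_tasks)
    results = [None] * num_tasks

    def task(task_id):
        port = 5000 + task_id  # placeholder for a port found at runtime
        results[task_id] = context.all_gather(task_id, str(port))

    threads = [threading.Thread(target=task, args=(i,)) for i in range(num_tasks)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return results


gathered = run_tasks(4)
```

Every entry of `gathered` holds the same list of four port strings, so each task knows where to reach the others.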

### Does this PR introduce any user-facing change?

Yes, a `BarrierTaskContext.allGather` method will be available through the Scala, Java, and Python APIs.

### How was this patch tested?

Most of the code path is already covered by tests for the `barrier` method, since this PR includes a refactor so that much of the code is shared by the `barrier` and `allGather` methods. However, a test is added to assert that an all gather on each task's partition ID returns a list of every partition ID.

An example through the Python API:

```python
>>> from pyspark import BarrierTaskContext
>>>
>>> def f(iterator):
...     context = BarrierTaskContext.get()
...     return [context.allGather('{}'.format(context.partitionId()))]
...
>>> sc.parallelize(range(4), 4).barrier().mapPartitions(f).collect()[0]
[u'3', u'1', u'0', u'2']
```

Closes#27395 from sarthfrey/master.

Lead-authored-by: sarthfrey-db <sarth.frey@databricks.com>

Co-authored-by: sarthfrey <sarth.frey@gmail.com>

Signed-off-by: Xiangrui Meng <meng@databricks.com>

### What changes were proposed in this pull request?

In this PR, we add a parameter to the Python function `vector_to_array(col)` that allows converting to a column of arrays of Float (32 bits) in Scala, which would be mapped to a NumPy array of `dtype=float32`.

### Why are the changes needed?

In the downstream ML training, using float32 instead of float64 (default) would allow a larger batch size, i.e., allow more data to fit in the memory.
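
The memory argument is simple arithmetic, sketched below with the standard-library `array` module. The batch shape is hypothetical, and the item sizes are those of C `float` and `double` on typical platforms.

```python
from array import array


def buffer_bytes(num_values, typecode):
    """Bytes needed to store num_values numbers of the given array typecode."""
    return num_values * array(typecode).itemsize


rows = 10_000      # hypothetical batch size
features = 1024    # hypothetical vector length

float64_bytes = buffer_bytes(rows * features, "d")  # C double, the default precision
float32_bytes = buffer_bytes(rows * features, "f")  # C float

# Halving the element width doubles the number of rows that fit in the same memory.
rows_that_fit_as_float32 = float64_bytes // (features * array("f").itemsize)
```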

### Does this PR introduce any user-facing change?

Yes.

Old: `vector_to_array()` takes only one param

```
df.select(vector_to_array("colA"), ...)
```

New: `vector_to_array()` can take an additional optional param: `dtype` = "float32" (or "float64")

```
df.select(vector_to_array("colA", "float32"), ...)
```

### How was this patch tested?

Unit test in scala.

doctest in python.

Closes#27522 from liangz1/udf-float32.

Authored-by: Liang Zhang <liang.zhang@databricks.com>

Signed-off-by: WeichenXu <weichen.xu@databricks.com>

### What changes were proposed in this pull request?

This is another PR for stage level scheduling. In particular, it adds changes to the dynamic allocation manager and the scheduler backend so they can track which executors are needed per ResourceProfile. Note that the API is still private to Spark until the entire feature gets in, so this functionality will be there but only usable by tests for profiles other than the DefaultProfile.

The main changes here are simply tracking things on a ResourceProfile basis as well as sending the executor requests to the scheduler backend for all ResourceProfiles.

I introduce a ResourceProfileManager in this PR that will track all the actual ResourceProfile objects, so that we can keep them all in a single place and just pass around the resource profile id and use it in data structures. The resource profile id can be used with the ResourceProfileManager to get the actual ResourceProfile contents.

There are various places in the code that use executor "slots". The ResourceProfile adds functionality to keep that calculation in it. This logic is more complex than it should be because standalone mode and Mesos coarse-grained mode do not set the executor cores config. They default to all cores on the worker, so calculating slots is harder there.

This PR keeps the functionality to make the cores the limiting resource because the scheduler still uses that for "slots" for a few things.
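
The id-based lookup and the slot calculation can be pictured with the following Python sketch. It is not the Scala code in this PR: the class and method names mirror the description only loosely, and the formula (executor cores divided by CPUs per task) is an assumption that holds only when cores are the limiting resource.

```python
import itertools


class ResourceProfile:
    """Hypothetical profile: only the fields needed for a slot calculation."""

    _ids = itertools.count()

    def __init__(self, executor_cores, task_cpus=1):
        self.id = next(ResourceProfile._ids)
        self.executor_cores = executor_cores
        self.task_cpus = task_cpus

    def slots_per_executor(self):
        # Assumption: cores are the limiting resource, as described above.
        return self.executor_cores // self.task_cpus


class ResourceProfileManager:
    """Keeps every profile in one place; callers pass around only the id."""

    def __init__(self):
        self._profiles = {}

    def add(self, profile):
        self._profiles[profile.id] = profile
        return profile.id

    def get(self, profile_id):
        return self._profiles[profile_id]


manager = ResourceProfileManager()
profile_id = manager.add(ResourceProfile(executor_cores=8, task_cpus=2))
slots = manager.get(profile_id).slots_per_executor()
```

Passing the integer id instead of the full object keeps the scheduler's data structures small, and the manager remains the single source for profile contents.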

This PR also adds the resource profile id to the Stage and stage info classes to make testing easier. The full set of those changes will come with the scheduler PR that follows this one.

The PR stops at the scheduler backend pieces for the cluster manager; real YARN support is not added here and will come in a separate PR. This PR therefore includes a few of the API changes up to the cluster manager and then just uses the default profile requests to continue.

The code for the entire feature is here for reference: https://github.com/apache/spark/pull/27053/files although it needs to be upmerged again as well.

### Why are the changes needed?

Needed for stage level scheduling feature.

### Does this PR introduce any user-facing change?

No user-facing API changes are added here.

### How was this patch tested?

Lots of unit tests and manual testing. I tested on YARN, k8s, standalone, and local modes, and ran both failure and success cases.

Closes#27313 from tgravescs/SPARK-29148.

Authored-by: Thomas Graves <tgraves@nvidia.com>

Signed-off-by: Thomas Graves <tgraves@apache.org>

### What changes were proposed in this pull request?

This PR aims to document the Pandas UDF redesign with type hints introduced in SPARK-28264.

It is mostly self-describing; however, there are a few things to note for reviewers.

1. This PR replaces the existing documentation of pandas UDFs with the newer redesign to promote the Python type hints. I added some words noting that Spark 3.0 still keeps compatibility, though.

2. This PR proposes to name non-pandas UDFs as "Pandas Function API"

3. SCALAR_ITER becomes two separate sections to reduce confusion:

- `Iterator[pd.Series]` -> `Iterator[pd.Series]`

- `Iterator[Tuple[pd.Series, ...]]` -> `Iterator[pd.Series]`

4. I removed some examples that looked like overkill to me.

5. I also removed some information in the doc that seemed duplicated or excessive.

### Why are the changes needed?

To document the new redesign of pandas UDFs.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing tests should cover.

Closes#27466 from HyukjinKwon/SPARK-30722.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Fix PySpark test failures for using Pandas >= 1.0.0.

### Why are the changes needed?

Pandas 1.0.0 has recently been released and has API changes that result in PySpark test failures. This PR fixes the broken tests.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Manually tested with Pandas 1.0.1 and PyArrow 0.16.0

Closes#27529 from BryanCutler/pandas-fix-tests-1.0-SPARK-30777.

Authored-by: Bryan Cutler <cutlerb@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Add ```HasBlockSize``` in shared Params in both Scala and Python.

Make ALS/MLP extend ```HasBlockSize```

### Why are the changes needed?

Add ```HasBlockSize``` in ALS, so users can specify the blockSize.

Make ```HasBlockSize``` a shared param so both ALS and MLP can use it.

### Does this PR introduce any user-facing change?

Yes

```ALS.setBlockSize/getBlockSize```

```ALSModel.setBlockSize/getBlockSize```

### How was this patch tested?

Manually tested. Also added doctest.

Closes#27501 from huaxingao/spark_30662.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

Revert

#27360, #27396, #27374, #27389

### Why are the changes needed?

BLAS needs more performance tests, especially on sparse datasets.

A performance test of LogisticRegression (https://github.com/apache/spark/pull/27374) on a sparse dataset shows that blockifying vectors into matrices and using BLAS causes a performance regression.

LinearSVC and LinearRegression were also updated in the same way as LogisticRegression, so we need to revert them as well to make sure there is no regression.

### Does this PR introduce any user-facing change?

Yes, it removes the newly added param `blockSize`.

### How was this patch tested?

reverted testsuites

Closes#27487 from zhengruifeng/revert_blockify_ii.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

This PR fixes some typos in `python/pyspark/sql/types.py` file.

### Why are the changes needed?

To deliver correct wording in documentation and code.

### Does this PR introduce any user-facing change?

Yes, it fixes some typos in user-facing API documentation.

### How was this patch tested?

Locally tested the linter.

Closes#27475 from sharifahmad2061/master.

Lead-authored-by: sharif ahmad <sharifahmad2061@gmail.com>

Co-authored-by: Sharif ahmad <sharifahmad2061@users.noreply.github.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR renames `spark.sql.pandas.udf.buffer.size` to `spark.sql.execution.pandas.udf.buffer.size` to be more consistent with other pandas configuration prefixes, given:

- `spark.sql.execution.pandas.arrowSafeTypeConversion`

- `spark.sql.execution.pandas.respectSessionTimeZone`

- `spark.sql.legacy.execution.pandas.groupedMap.assignColumnsByName`

- other configurations like `spark.sql.execution.arrow.*`.

### Why are the changes needed?

To make configuration names consistent.

### Does this PR introduce any user-facing change?

No, because this configuration has not been released yet.

### How was this patch tested?

Existing tests should cover.

Closes#27450 from HyukjinKwon/SPARK-27870-followup.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR aims to increase the timeout of `StreamingLogisticRegressionWithSGDTests.test_parameter_accuracy` from 30s (default) to 60s.

In this PR, before increasing the timeout,

1. I verified that this is not a JDK11 environmental issue by repeating 3 times first.

2. I reproduced the accuracy failure by reducing the timeout in Jenkins (https://github.com/apache/spark/pull/27424#issuecomment-580981262)

Then, the final commit passed Jenkins.

### Why are the changes needed?

This seems to happen when the Jenkins environment is congested and jobs slow down. The streaming job seems unable to complete the designed number of iterations (`numIteration=25`) in 30 seconds. Since the error decreases with each iteration, stopping early leaves it above the asserted threshold and the test fails.

By reducing the timeout, we can reproduce a similar failure locally.

```python
- eventually(condition, catch_assertions=True)
+ eventually(condition, timeout=10.0, catch_assertions=True)
```

```
$ python/run-tests --testname 'pyspark.mllib.tests.test_streaming_algorithms StreamingLogisticRegressionWithSGDTests.test_parameter_accuracy' --python-executables=python
...
======================================================================
FAIL: test_parameter_accuracy (pyspark.mllib.tests.test_streaming_algorithms.StreamingLogisticRegressionWithSGDTests)
----------------------------------------------------------------------
Traceback (most recent call last):
  File "/Users/dongjoon/PRS/SPARK-TEST/python/pyspark/mllib/tests/test_streaming_algorithms.py", line 229, in test_parameter_accuracy
    eventually(condition, timeout=10.0, catch_assertions=True)
  File "/Users/dongjoon/PRS/SPARK-TEST/python/pyspark/testing/utils.py", line 86, in eventually
    raise lastValue
Reproduce the error
  File "/Users/dongjoon/PRS/SPARK-TEST/python/pyspark/testing/utils.py", line 77, in eventually
    lastValue = condition()
  File "/Users/dongjoon/PRS/SPARK-TEST/python/pyspark/mllib/tests/test_streaming_algorithms.py", line 226, in condition
    self.assertAlmostEqual(rel, 0.1, 1)
AssertionError: 0.25749106949322637 != 0.1 within 1 places (0.15749106949322636 difference)

----------------------------------------------------------------------
Ran 1 test in 14.814s

FAILED (failures=1)
```
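
The interaction between the timeout and a slowly converging job can be sketched with a simplified polling helper. This is not the `eventually` function from `pyspark/testing/utils.py`: the `interval` parameter and the `ShrinkingError` class are hypothetical, and the halving error is a made-up stand-in for a model whose error drops with each iteration.

```python
import time


def eventually(condition, timeout=30.0, catch_assertions=False, interval=0.01):
    """Poll condition until it succeeds or timeout seconds have elapsed."""
    deadline = time.monotonic() + timeout
    last_value = None
    while time.monotonic() < deadline:
        if catch_assertions:
            try:
                condition()
                return  # no assertion failed, so the condition holds
            except AssertionError as error:
                last_value = error  # remember the failure and retry
        else:
            last_value = condition()
            if last_value is True:
                return
        time.sleep(interval)
    if isinstance(last_value, AssertionError):
        raise last_value
    raise AssertionError(
        "condition not met within {} s (last value: {!r})".format(timeout, last_value))


class ShrinkingError:
    """Made-up model whose error halves every time it is checked."""

    def __init__(self):
        self.error = 1.0

    def check(self):
        self.error *= 0.5
        assert self.error < 0.1, "error is still {}".format(self.error)


# With a generous timeout the error has time to fall below the threshold.
model = ShrinkingError()
eventually(model.check, timeout=5.0, catch_assertions=True)
```

If the timeout expires before enough checks have run, the last `AssertionError` is re-raised, which matches the shape of the failure in the traceback above.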

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Passed Jenkins (and manually checked by reducing the timeout).

Since this is a flakiness issue depending on the Jenkins job situation, it's difficult to reproduce there.

Closes#27424 from dongjoon-hyun/SPARK-TEST.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

1, use blocks instead of vectors for performance improvement

2, use Level-2 BLAS

3, move standardization of input vectors outside of gradient computation

### Why are the changes needed?

1, less RAM to persist training data; (save ~40%)

2, faster than existing impl; (30% ~ 102%)

### Does this PR introduce any user-facing change?

add a new expert param `blockSize`

### How was this patch tested?

updated testsuites

Closes#27396 from zhengruifeng/blockify_lireg.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

remove duplicate setter in ```BucketedRandomProjectionLSH```

### Why are the changes needed?

Remove the duplicate ```setInputCol/setOutputCol``` in ```BucketedRandomProjectionLSH``` because these two setters are already defined in the superclass ```LSH```

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Manually checked.

Closes#27397 from huaxingao/spark-29093.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Make ALS/MLP extend ```HasBlockSize```

### Why are the changes needed?

Currently, MLP has its own ```blockSize``` param. We should make MLP extend ```HasBlockSize``` since ```HasBlockSize``` was added in ```sharedParams.scala``` recently.

ALS doesn't have a ```blockSize``` param now. We can make it extend ```HasBlockSize``` so users can specify the ```blockSize```.
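
The shared-param idea can be sketched as a Python mixin. This is an illustration only, not the generated code in `sharedParams.scala` or PySpark's `Params` machinery: `ToyALS`, `ToyMLP`, and the default values are hypothetical.

```python
class HasBlockSize:
    """Hypothetical shared-parameter mixin that stores a blockSize value."""

    def __init__(self, block_size):
        self._block_size = block_size

    def setBlockSize(self, value):
        if value <= 0:
            raise ValueError("blockSize must be positive")
        self._block_size = value
        return self  # returning self allows chained setter calls

    def getBlockSize(self):
        return self._block_size


class ToyALS(HasBlockSize):
    def __init__(self):
        super().__init__(block_size=1024)  # arbitrary default for this sketch


class ToyMLP(HasBlockSize):
    def __init__(self):
        super().__init__(block_size=64)  # arbitrary default for this sketch


als = ToyALS().setBlockSize(2048)
mlp = ToyMLP()
```

Both estimators get the same getter and setter from one definition, so neither needs its own copy of the parameter code.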

### Does this PR introduce any user-facing change?

Yes

```ALS.setBlockSize``` and ```ALS.getBlockSize```

```ALSModel.setBlockSize``` and ```ALSModel.getBlockSize```

### How was this patch tested?

Manually tested. Also added doctest.

Closes#27389 from huaxingao/spark-30662.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

1, use blocks instead of vectors

2, use Level-2 BLAS for binary, use Level-3 BLAS for multinomial

### Why are the changes needed?

1, less RAM to persist training data; (save ~40%)

2, faster than existing impl; (40% ~ 92%)

### Does this PR introduce any user-facing change?

add a new expert param `blockSize`

### How was this patch tested?

updated testsuites

Closes#27374 from zhengruifeng/blockify_lor.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This PR removes any dependencies on pypandoc. It also makes related tweaks to the docs README to clarify the dependency on pandoc (not pypandoc).

### Why are the changes needed?

We are using pypandoc to convert the Spark README from Markdown to ReST for PyPI. PyPI now natively supports Markdown, so we don't need pypandoc anymore. The dependency on pypandoc also sometimes causes issues when installing Python packages that depend on PySpark, as described in #18981.
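
For context, setuptools lets a package declare a Markdown README through the `long_description_content_type` argument. The sketch below only builds the keyword arguments and does not call `setup()`; the helper name `readme_metadata` is hypothetical, and whether this PR uses exactly this form is an assumption.

```python
def readme_metadata(readme_text):
    """Keyword arguments that declare a Markdown long description to setuptools."""
    return {
        "long_description": readme_text,
        # Tells PyPI to render the text as Markdown; no conversion to ReST is needed.
        "long_description_content_type": "text/markdown",
    }


metadata = readme_metadata("# Example project\n\nA short description.")
```

The resulting dictionary could be passed as `setup(..., **metadata)` in a `setup.py`.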

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Manually:

```sh
python -m venv venv
source venv/bin/activate
pip install -U pip
cd python/
python setup.py sdist
pip install dist/pyspark-3.0.0.dev0.tar.gz
pyspark --version
```

I also built the PySpark and R API docs with `jekyll` and reviewed them locally.

It would be good if a maintainer could also test this by creating a PySpark distribution and uploading it to [Test PyPI](https://test.pypi.org) to confirm the README looks as it should.

Closes#27376 from nchammas/SPARK-30665-pypandoc.

Authored-by: Nicholas Chammas <nicholas.chammas@liveramp.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

1, stack input vectors to blocks (like ALS/MLP);

2, add new param `blockSize`;

3, add a new class `InstanceBlock`

4, standardize the input outside of optimization procedure;
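
The stacking step can be pictured with the pure-Python sketch below. It does not use BLAS or the `InstanceBlock` class from this PR: the helper names are hypothetical, and the per-block product only shows the matrix-vector shape that a Level-2 routine would compute.

```python
def stack_into_blocks(vectors, block_size):
    """Group consecutive row vectors into blocks of at most block_size rows."""
    return [vectors[i:i + block_size] for i in range(0, len(vectors), block_size)]


def block_dot(block, coefficients):
    """Matrix-vector product for one block: one dot product per row."""
    return [sum(x * w for x, w in zip(row, coefficients)) for row in block]


vectors = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
coefficients = [1.0, -1.0]

blocks = stack_into_blocks(vectors, block_size=2)
# One call per block replaces one call per vector.
margins = [block_dot(block, coefficients) for block in blocks]
```

The last block may hold fewer than `block_size` rows, as it does here with three vectors and a block size of two.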

### Why are the changes needed?

1, reduce RAM to persist training dataset; (save ~40% in test)

2, use Level-2 BLAS routines; (12% ~ 28% faster, without native BLAS)

### Does this PR introduce any user-facing change?

a new param `blockSize`

### How was this patch tested?

existing and updated testsuites

Closes#27360 from zhengruifeng/blockify_svc.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>