## What changes were proposed in this pull request?

In `SparkPlan.getByteArrayRdd`, we should only call `iter.hasNext` when the limit is not hit, as `iter.hasNext` may produce one row and buffer it, which causes wrong metrics.
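
The effect of the evaluation order can be illustrated with a minimal, self-contained sketch. This is not the actual `SparkPlan.getByteArrayRdd` code: `CountingIterator`, `takeHasNextFirst` and `takeLimitFirst` are hypothetical names, and the wrapper is assumed to buffer one row inside `hasNext`, as described above.

```scala
object HasNextOrderSketch {
  // Hypothetical iterator that produces and buffers a row inside hasNext.
  final class CountingIterator[T](underlying: Iterator[T]) extends Iterator[T] {
    var produced = 0 // stands in for a "number of output rows" metric
    private var buffered: Option[T] = None

    override def hasNext: Boolean = {
      if (buffered.isEmpty && underlying.hasNext) {
        buffered = Some(underlying.next())
        produced += 1
      }
      buffered.isDefined
    }

    override def next(): T = {
      if (!hasNext) throw new NoSuchElementException("next on empty iterator")
      val row = buffered.get
      buffered = None
      row
    }
  }

  // Checks hasNext before the limit: one extra row is produced once the limit is hit.
  def takeHasNextFirst[T](iter: Iterator[T], n: Int): Int = {
    var count = 0
    while (iter.hasNext && (n < 0 || count < n)) { iter.next(); count += 1 }
    count
  }

  // Checks the limit first: && short-circuits, so hasNext is not called after the limit.
  def takeLimitFirst[T](iter: Iterator[T], n: Int): Int = {
    var count = 0
    while ((n < 0 || count < n) && iter.hasNext) { iter.next(); count += 1 }
    count
  }
}
```

With a limit of 2 over five rows, both loops return two rows, but only the second one leaves the `produced` counter at 2.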

## How was this patch tested?

new tests

Closes #22621 from cloud-fan/range.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Refactor `UnsafeArrayDataBenchmark` to use main method.

Generate benchmark result:

```sh

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.UnsafeArrayDataBenchmark"

```

## How was this patch tested?

manual tests

Closes #22491 from wangyum/SPARK-25483.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This PR aims to add `BloomFilterBenchmark`. For the ORC data source, Apache Spark has supported bloom filters for a long time. For the Parquet data source, support is expected to be added with the next Parquet release update.

## How was this patch tested?

Manual.

```sh
SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.BloomFilterBenchmark"
```

Closes #22605 from dongjoon-hyun/SPARK-25589.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Rename the method `benchmark` in `BenchmarkBase` to `runBenchmarkSuite`. Also add comments.

Currently the method name `benchmark` is a bit confusing. Also the name is the same as instances of `Benchmark`:

f246813afb/sql/hive/src/test/scala/org/apache/spark/sql/hive/orc/OrcReadBenchmark.scala (L330-L339)
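
The naming clash can be sketched as follows. This is a simplified illustration, not Spark's actual classes: the `Benchmark` stand-in, its `addCase`/`run` signatures and `ExampleBenchmark` are assumptions made for the example.

```scala
object BenchmarkNamingSketch {
  // Hypothetical stand-in for a `Benchmark` class; only what the sketch needs.
  final class Benchmark(val name: String) {
    private var cases = Vector.empty[(String, () => Unit)]
    def addCase(caseName: String)(f: () => Unit): Unit = cases :+= ((caseName, f))
    def run(): Seq[String] = cases.map { case (caseName, f) => f(); s"$name/$caseName" }
  }

  abstract class BenchmarkBase {
    // Entry point for a whole suite; before the rename this method was called `benchmark`.
    def runBenchmarkSuite(): Unit
    def main(args: Array[String]): Unit = runBenchmarkSuite()
  }

  object ExampleBenchmark extends BenchmarkBase {
    var finished: Seq[String] = Nil
    override def runBenchmarkSuite(): Unit = {
      // `benchmark` now only names the Benchmark instance, not the enclosing method as well.
      val benchmark = new Benchmark("example")
      benchmark.addCase("noop") { () => () }
      finished = benchmark.run()
    }
  }
}
```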

## How was this patch tested?

Unit test.

Closes #22599 from gengliangwang/renameBenchmarkSuite.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This patch is to bump the master branch version to 3.0.0-SNAPSHOT.

## How was this patch tested?

N/A

Closes #22606 from gatorsmile/bump3.0.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

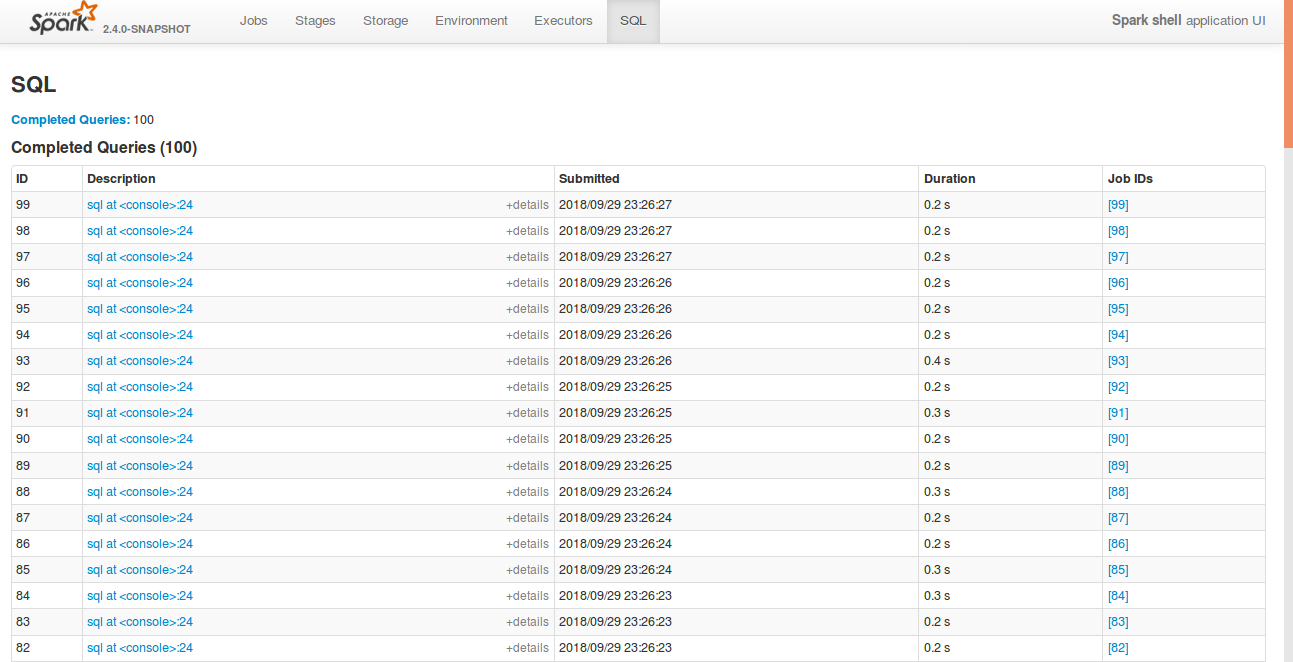

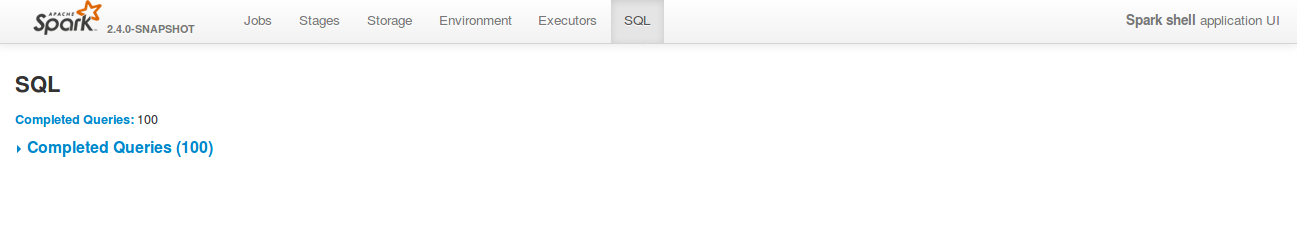

## What changes were proposed in this pull request?

Currently, the SQL tab in the web UI doesn't support hiding tables. Other tabs in the web UI, such as Jobs and Stages, support hiding tables (see SPARK-23024, https://github.com/apache/spark/pull/20216).

This PR adds support for hiding tables in the SQL tab as well.

## How was this patch tested?

bin/spark-shell

```
sql("create table a (id int)")
for(i <- 1 to 100) sql(s"insert into a values ($i)")
```

Open SQL tab in the web UI

**Before fix:**

**After fix:** Consistent with the other tabs.

Closes #22592 from shahidki31/SPARK-25575.

Authored-by: Shahid <shahidki31@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This PR does 2 things:

1. Add a new trait (`SqlBasedBenchmark`) to better support the Dataset and DataFrame APIs.

2. Refactor `AggregateBenchmark` to use main method. Generate benchmark result:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.AggregateBenchmark"

```

## How was this patch tested?

manual tests

Closes #22484 from wangyum/SPARK-25476.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

#22519 introduced a bug when the attributes in the pivot clause are cosmetically different from the output ones (e.g. different case). In particular, the problem is that the PR used a `Set[Attribute]` instead of an `AttributeSet`.
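
A minimal sketch of why the choice of set matters, assuming (as the description implies) that an attribute-aware set ignores cosmetic differences such as the case of the name. `Attr`, `inPlainSet` and `inIdSet` are hypothetical names and do not reproduce Catalyst's classes.

```scala
object AttributeSetSketch {
  // Hypothetical, simplified stand-in for an attribute: a display name plus a unique id.
  final case class Attr(name: String, exprId: Long)

  // A plain Set uses case-class equality, so a cosmetic difference in `name` is a mismatch.
  def inPlainSet(attrs: Seq[Attr], a: Attr): Boolean = attrs.toSet.contains(a)

  // Comparing by id only, which is the idea behind an attribute-aware set.
  def inIdSet(attrs: Seq[Attr], a: Attr): Boolean =
    attrs.map(_.exprId).toSet.contains(a.exprId)
}
```

Here `Attr("Course", 1L)` is not found in a plain set built from `Attr("course", 1L)`, while the id-based lookup finds it.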

## How was this patch tested?

added UT

Closes #22582 from mgaido91/SPARK-25505_followup.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This PR adds a rule to force `.toLowerCase(Locale.ROOT)` or `.toUpperCase(Locale.ROOT)`.

It produces an error as below:

```
[error] Are you sure that you want to use toUpperCase or toLowerCase without the root locale? In most cases, you
[error] should use toUpperCase(Locale.ROOT) or toLowerCase(Locale.ROOT) instead.
[error] If you must use toUpperCase or toLowerCase without the root locale, wrap the code block with
[error] // scalastyle:off caselocale
[error] .toUpperCase
[error] .toLowerCase
[error] // scalastyle:on caselocale
```

This PR excludes, in the way shown above, the SQL code paths that handle external names such as table names and column names.

For test suites, or where it is clear that there is no locale problem such as the Turkish locale problem, it uses `Locale.ROOT`.

One minor problem is that `UTF8String` has both `toLowerCase` and `toUpperCase` methods, and the new rule detects them as well. These are ignored.
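
The Turkish locale problem mentioned above can be reproduced with the JDK alone. In this small sketch, `lowerTurkish` and `lowerRoot` are hypothetical helper names; only `String.toLowerCase(Locale)` and `Locale.forLanguageTag` come from the standard library.

```scala
import java.util.Locale

object LocaleSketch {
  private val turkish: Locale = Locale.forLanguageTag("tr-TR")

  // In the Turkish locale, upper-case 'I' lower-cases to the dotless 'ı' (U+0131).
  def lowerTurkish(s: String): String = s.toLowerCase(turkish)

  // Locale.ROOT gives locale-independent results, which is what identifiers need.
  def lowerRoot(s: String): String = s.toLowerCase(Locale.ROOT)
}
```

A keyword or column name such as `TITLE` therefore no longer matches `title` if it is lower-cased under a Turkish default locale.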

## How was this patch tested?

Manually tested, and Jenkins tests.

Closes #22581 from HyukjinKwon/SPARK-25565.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In the PR, I propose to extend the implementation of the existing method:

```

def pivot(pivotColumn: Column, values: Seq[Any]): RelationalGroupedDataset

```

to support values of the struct type. This allows pivoting by multiple columns combined by `struct`:

```
trainingSales
  .groupBy($"sales.year")
  .pivot(
    pivotColumn = struct(lower($"sales.course"), $"training"),
    values = Seq(
      struct(lit("dotnet"), lit("Experts")),
      struct(lit("java"), lit("Dummies")))
  ).agg(sum($"sales.earnings"))
```

## How was this patch tested?

Added a test for values specified via `struct` in Java and Scala.

Closes #22316 from MaxGekk/pivoting-by-multiple-columns2.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In the PR, I propose to extend the `schema_of_json()` function to accept JSON options, since they can affect schema inference. The purpose is to support the same options that `from_json` can use during schema inference.

## How was this patch tested?

Added SQL, Python and Scala tests (`JsonExpressionsSuite` and `JsonFunctionsSuite`) that check that the JSON options are used.

Closes #22442 from MaxGekk/schema_of_json-options.

Authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Currently, in `ParquetFilters`, if one of the child predicates is not supported by Parquet, the entire predicate is thrown away. In fact, if the unsupported predicate is in the top-level `And` condition, or in a child `And` reached before hitting a `Not` or `Or` condition, it can be safely removed.
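
The rule can be sketched on a tiny predicate tree. This is an illustration of the idea only, not the `ParquetFilters` code: the `Pred` hierarchy, the `supported` flag and the `canDropOneSideOfAnd` parameter are hypothetical.

```scala
object PredicatePruningSketch {
  sealed trait Pred
  final case class Leaf(name: String, supported: Boolean) extends Pred
  final case class And(left: Pred, right: Pred) extends Pred
  final case class Or(left: Pred, right: Pred) extends Pred
  final case class Not(child: Pred) extends Pred

  // Returns the part of `p` that can be pushed down, or None if nothing can.
  def convert(p: Pred, canDropOneSideOfAnd: Boolean = true): Option[Pred] = p match {
    case leaf @ Leaf(_, supported) => if (supported) Some(leaf) else None
    case And(l, r) =>
      (convert(l, canDropOneSideOfAnd), convert(r, canDropOneSideOfAnd)) match {
        case (Some(a), Some(b)) => Some(And(a, b))
        // Dropping one conjunct only loosens the filter, which is safe at this level.
        case (Some(a), None) if canDropOneSideOfAnd => Some(a)
        case (None, Some(b)) if canDropOneSideOfAnd => Some(b)
        case _ => None
      }
    case Or(l, r) =>
      // Below Or, partial conversion is no longer allowed in this sketch.
      for {
        a <- convert(l, canDropOneSideOfAnd = false)
        b <- convert(r, canDropOneSideOfAnd = false)
      } yield Or(a, b)
    case Not(c) =>
      // Below Not, dropping a conjunct would tighten the filter and lose rows.
      convert(c, canDropOneSideOfAnd = false).map(Not(_))
  }
}
```

For example, `a AND unsupported` converts to `a`, while `NOT (a AND unsupported)` converts to nothing, because pushing `NOT a` would wrongly discard rows.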

## How was this patch tested?

Tests are added.

Closes #22574 from dbtsai/removeUnsupportedPredicatesInParquet.

Lead-authored-by: DB Tsai <d_tsai@apple.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Co-authored-by: DB Tsai <dbtsai@dbtsai.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Use `Set` instead of `Array` to improve `accumulatorIds.contains(acc.id)` performance.

This PR closes https://github.com/apache/spark/pull/22420

## How was this patch tested?

manual tests.

Benchmark code:

```scala
def benchmark(func: () => Unit): Long = {
  val start = System.currentTimeMillis()
  func()
  val end = System.currentTimeMillis()
  end - start
}

val range = Range(1, 1000000)
val set = range.toSet
val array = range.toArray

for (i <- 0 until 5) {
  val setExecutionTime =
    benchmark(() => for (i <- 0 until 500) { set.contains(scala.util.Random.nextInt()) })
  val arrayExecutionTime =
    benchmark(() => for (i <- 0 until 500) { array.contains(scala.util.Random.nextInt()) })
  println(s"set execution time: $setExecutionTime, array execution time: $arrayExecutionTime")
}
```

Benchmark result:

```
set execution time: 4, array execution time: 2760
set execution time: 1, array execution time: 1911
set execution time: 3, array execution time: 2043
set execution time: 12, array execution time: 2214
set execution time: 6, array execution time: 1770
```

Closes #22579 from wangyum/SPARK-25429.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

**Description from the JIRA:**

Currently, to collect the statistics of all the columns, users need to specify the names of all the columns when calling the command "ANALYZE TABLE ... FOR COLUMNS...". This is not user friendly. Instead, we can introduce the following SQL command to achieve it without specifying the column names.

```

ANALYZE TABLE [db_name.]tablename COMPUTE STATISTICS FOR ALL COLUMNS;

```

## How was this patch tested?

Added new tests in SparkSqlParserSuite and StatisticsSuite

Closes #22566 from dilipbiswal/SPARK-25458.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The grouping columns from a Pivot query are inferred as "input columns - pivot columns - pivot aggregate columns", where input columns are the output of the child relation of Pivot. The grouping columns will be the leading columns in the pivot output and they should preserve the same order as specified by the input. For example,

```

SELECT * FROM (
  SELECT course, earnings, "a" as a, "z" as z, "b" as b, "y" as y, "c" as c, "x" as x, "d" as d, "w" as w
  FROM courseSales
)
PIVOT (
  sum(earnings)
  FOR course IN ('dotNET', 'Java')
)
```

The output columns should be "a, z, b, y, c, x, d, w, ..." but currently they are "a, b, c, d, w, x, y, z, ..."

The fix is to use the child plan's `output` instead of `outputSet` so that the order can be preserved.
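
A minimal sketch of the difference, using plain Scala collections in place of the plan's `output` and `outputSet`. The column names follow the example query; `groupingFromOutput` and `groupingFromOutputSet` are hypothetical names.

```scala
object PivotGroupingOrderSketch {
  // Child output as an ordered sequence, as in the example query.
  val childOutput: Seq[String] =
    Seq("course", "earnings", "a", "z", "b", "y", "c", "x", "d", "w")

  // Columns consumed by the pivot itself: the pivot column and the aggregate input.
  val pivotAndAggColumns: Set[String] = Set("course", "earnings")

  // Filtering the ordered output keeps the input order of the remaining columns.
  def groupingFromOutput: Seq[String] =
    childOutput.filterNot(pivotAndAggColumns.contains)

  // Going through a set first gives no ordering guarantee for the result.
  def groupingFromOutputSet: Seq[String] =
    (childOutput.toSet -- pivotAndAggColumns).toSeq
}
```

Both variants contain the same columns, but only the first is guaranteed to return them in the order given by the input.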

## How was this patch tested?

Added UT.

Closes #22519 from maryannxue/spark-25505.

Authored-by: maryannxue <maryannxue@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

`show create table` shows a lot of generated attributes for views that were created by older Spark versions. This PR basically reverts https://issues.apache.org/jira/browse/SPARK-19272, so that `DESC [FORMATTED|EXTENDED] view` shows the original view DDL text.

## How was this patch tested?

Unit test.

Closes #22458 from zheyuan28/testbranch.

Lead-authored-by: Chris Zhao <chris.zhao@databricks.com>

Co-authored-by: Christopher Zhao <chris.zhao@databricks.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

There are 2 places we check for problematic `InSubquery`: the rule `ResolveSubquery` and `InSubquery.checkInputDataTypes`. We should unify them.

## How was this patch tested?

existing tests

Closes #22563 from cloud-fan/followup.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

As per the discussion in https://github.com/apache/spark/pull/22553#pullrequestreview-159192221,

overriding `filterKeys` violates the documented semantics.

This PR is to remove it and add documentation.

Also fix one potential non-serializable map in `FileStreamOptions`.

The only remaining call of `CaseInsensitiveMap`'s `filterKeys` is

c3c45cbd76/sql/hive/src/main/scala/org/apache/spark/sql/hive/execution/HiveOptions.scala (L88-L90)

But this one is OK.

## How was this patch tested?

Existing unit tests.

Closes #22562 from gengliangwang/SPARK-25541-FOLLOWUP.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The PR removes the `InSubquery` expression which was introduced a long time ago and its only usage was removed in 4ce970d714. Hence it is not used anymore.

## How was this patch tested?

existing UTs

Closes #22556 from mgaido91/minor_insubq.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In ElementAt, when the first argument is a MapType, we should coerce the key type and the second argument based on findTightestCommonType. This is not happening currently. We may produce wrong output, as we incorrectly downcast the right-hand-side double expression to int.

```SQL

spark-sql> select element_at(map(1,"one", 2, "two"), 2.2);

two

```

Also, when the first argument is an ArrayType, the second argument should be an integer type or a smaller integral type that can be safely cast to an integer type. Currently we may do an unsafe cast. In the following case, we should fail with an error, as 1.24 is not an integer index. Instead, we currently downcast it to int and return a result.

```SQL

spark-sql> select element_at(array(1,2), 1.24D);

1

```

This PR also supports implicit cast between two MapTypes. I have followed similar logic that exists today to do implicit casts between two array types.
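
The map case can be mimicked in plain Scala. This is a sketch only: `lookupWithDowncast` and `lookupWithWidening` are hypothetical helpers, and it assumes that the common type of int and double is double.

```scala
object ElementAtCoercionSketch {
  val m: Map[Int, String] = Map(1 -> "one", 2 -> "two")

  // Unsafe: force the lookup key down to the map's key type, so 2.2 becomes 2.
  def lookupWithDowncast(key: Double): Option[String] = m.get(key.toInt)

  // Safer: widen the map keys to the common type (Double here) and compare there.
  def lookupWithWidening(key: Double): Option[String] =
    m.map { case (k, v) => k.toDouble -> v }.get(key)
}
```

With the downcast, looking up `2.2` returns `two`, matching the wrong output shown earlier; with widening, `2.2` finds nothing while `2.0` still finds `two`.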

## How was this patch tested?

Added new tests in DataFrameFunctionsSuite, TypeCoercionSuite.

Closes #22544 from dilipbiswal/SPARK-25522.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Refactor `ColumnarBatchBenchmark` to use main method.

Generate benchmark result:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.vectorized.ColumnarBatchBenchmark"

```

## How was this patch tested?

manual tests

Closes #22490 from yucai/SPARK-25481.

Lead-authored-by: yucai <yyu1@ebay.com>

Co-authored-by: Yucai Yu <yucai.yu@foxmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

https://github.com/apache/spark/pull/20023 proposed to allow precision loss during decimal operations, to reduce the possibility of overflow. This is a behavior change and is protected by the DECIMAL_OPERATIONS_ALLOW_PREC_LOSS config. However, that PR introduced another behavior change: picking a minimum precision for integral literals, which is not protected by a config. This PR adds a new config for it: `spark.sql.literal.pickMinimumPrecision`.

This allows users to work around the issue in SPARK-25454, which is caused by a long-standing bug of negative scale.

## How was this patch tested?

a new test

Closes #22494 from cloud-fan/decimal.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Currently, Spark has 7 `withTempPath` and 6 `withSQLConf` functions. This PR aims to remove duplicated and inconsistent code and reduce them to the following meaningful implementations.

**withTempPath**

- `SQLHelper.withTempPath`: The one which was used in `SQLTestUtils`.

**withSQLConf**

- `SQLHelper.withSQLConf`: The one which was used in `PlanTest`.

- `ExecutorSideSQLConfSuite.withSQLConf`: The one which doesn't throw `AnalysisException` on StaticConf changes.

- `SQLTestUtils.withSQLConf`: The one which overrides intentionally to change the active session.

```scala
protected override def withSQLConf(pairs: (String, String)*)(f: => Unit): Unit = {
  SparkSession.setActiveSession(spark)
  super.withSQLConf(pairs: _*)(f)
}
```
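
For readers unfamiliar with the pattern, the general shape of a `withSQLConf`-style helper can be sketched as below. This is not the `SQLHelper` implementation: the mutable `conf` map and the `withConf` name are hypothetical, and the real helper works against a `SQLConf` rather than a map.

```scala
import scala.collection.mutable

object WithConfSketch {
  // Hypothetical stand-in for a session configuration.
  val conf: mutable.Map[String, String] = mutable.Map.empty

  // Sets the given pairs, runs `f`, then restores the previous values even if `f` throws.
  def withConf(pairs: (String, String)*)(f: => Unit): Unit = {
    val previous = pairs.map { case (k, _) => k -> conf.get(k) }
    pairs.foreach { case (k, v) => conf(k) = v }
    try f finally {
      previous.foreach {
        case (k, Some(old)) => conf(k) = old
        case (k, None) => conf.remove(k)
      }
    }
  }
}
```

Keeping one shared implementation of this set, run, restore logic is what makes the remaining overrides small, as in the `SQLTestUtils` override above.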

## How was this patch tested?

Pass the Jenkins with the existing tests.

Closes #22548 from dongjoon-hyun/SPARK-25534.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

The PR introduces a new JSON option `pretty`, which turns on the `DefaultPrettyPrinter` of `Jackson`'s JSON generator. The new option is useful for exploring deeply nested columns and for converting JSON columns into a more readable representation (see the added test).

## How was this patch tested?

Added a round-trip test which converts a JSON string to a pretty representation via `from_json()` and `to_json()`.

Closes #22534 from MaxGekk/pretty-json.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Add the legacy prefix to `spark.sql.execution.pandas.groupedMap.assignColumnsByPosition` and rename it to `spark.sql.legacy.execution.pandas.groupedMap.assignColumnsByName`.

## How was this patch tested?

The existing tests.

Closes #22540 from gatorsmile/renameAssignColumnsByPosition.

Lead-authored-by: gatorsmile <gatorsmile@gmail.com>

Co-authored-by: Hyukjin Kwon <gurwls223@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Refactor SortBenchmark to use main method.

Generate benchmark result:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.SortBenchmark"

```

## How was this patch tested?

manual tests

Closes #22495 from yucai/SPARK-25486.

Authored-by: yucai <yyu1@ebay.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This patch entirely reverts all the regr_* functions added in SPARK-23907. These were added by mgaido91 (and proposed by gatorsmile) to improve compatibility with other database systems, without any actual use cases. However, they are very rarely used, and in Spark there are much better ways to compute these functions, due to Spark's flexibility in exposing real programming APIs.

I'm going through all the APIs added in Spark 2.4 and I think we should revert these. If there are strong enough demands and more use cases, we can add them back in the future pretty easily.

## How was this patch tested?

Reverted test cases also.

Closes #22541 from rxin/SPARK-23907.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In ArrayRemove, we currently cast the right-hand-side expression to match the element type of the left-hand-side Array. This may result in downcasting and may return a wrong or questionable result.

Example:

```SQL

spark-sql> select array_remove(array(1,2,3), 1.23D);

[2,3]

```

```SQL

spark-sql> select array_remove(array(1,2,3), 'foo');

NULL

```

We should safely coerce both left and right hand side expressions.
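
The first example can be mimicked with plain Scala collections. This is a sketch only: `removeWithDowncast` and `removeWithWidening` are hypothetical helpers, and it assumes that safe coercion means widening both sides to double.

```scala
object ArrayRemoveCoercionSketch {
  // Casting the right-hand side down to the element type: 1.23 becomes 1 and matches.
  def removeWithDowncast(arr: Seq[Int], value: Double): Seq[Int] =
    arr.filterNot(_ == value.toInt)

  // Widening the elements to the common type instead: 1.23 matches nothing.
  def removeWithWidening(arr: Seq[Int], value: Double): Seq[Double] =
    arr.map(_.toDouble).filterNot(_ == value)
}
```

The downcast variant reproduces the `[2,3]` result shown above, whereas the widening variant leaves all three elements in place.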

## How was this patch tested?

Added tests in DataFrameFunctionsSuite

Closes #22542 from dilipbiswal/SPARK-25519.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In ArrayPosition, we currently cast the right-hand-side expression to match the element type of the left-hand-side Array. This may result in downcasting and may return a wrong or questionable result.

Example:

```SQL

spark-sql> select array_position(array(1), 1.34);

1

```

```SQL

spark-sql> select array_position(array(1), 'foo');

null

```

We should safely coerce both left and right hand side expressions.

## How was this patch tested?

Added tests in DataFrameFunctionsSuite

Closes #22407 from dilipbiswal/SPARK-25416.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Refactor `CompressionSchemeBenchmark` to use main method.

Generate benchmark result:

```sh

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.columnar.compression.CompressionSchemeBenchmark"

```

## How was this patch tested?

manual tests

Closes #22486 from wangyum/SPARK-25478.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Currently there are two classes with the same name, `BenchmarkBase`:

1. `org.apache.spark.util.BenchmarkBase`

2. `org.apache.spark.sql.execution.benchmark.BenchmarkBase`

This is very confusing. Moreover, the benchmark object `org.apache.spark.sql.execution.benchmark.FilterPushdownBenchmark` uses `org.apache.spark.util.BenchmarkBase`, while there is another class `BenchmarkBase` in its own package.

Here I propose:

1. The class `org.apache.spark.util.BenchmarkBase` should be in the test package of the core module. Move it to package `org.apache.spark.benchmark`.

2. Move `org.apache.spark.util.Benchmark` to the test package of the core module, in package `org.apache.spark.benchmark`.

3. Rename the class `org.apache.spark.sql.execution.benchmark.BenchmarkBase` to `BenchmarkWithCodegen`.

## How was this patch tested?

Unit test

Closes #22513 from gengliangwang/refactorBenchmarkBase.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Refactor PrimitiveArrayBenchmark to use main method and print the output as a separate file.

Run the command below to generate benchmark results:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.PrimitiveArrayBenchmark"

```

## How was this patch tested?

Manual tests.

Closes #22497 from seancxmao/SPARK-25487.

Authored-by: seancxmao <seancxmao@gmail.com>

Signed-off-by: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

## What changes were proposed in this pull request?

This reverts commit 417ad92502.

We decided to keep the current behaviors unchanged and will consider whether to deprecate these functions in 3.0. For more details, see the discussion in https://issues.apache.org/jira/browse/SPARK-23715

## How was this patch tested?

The existing tests.

Closes #22505 from gatorsmile/revertSpark-23715.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Refactor `DataSourceWriteBenchmark` and add write benchmark for AVRO.

## How was this patch tested?

Build and run the benchmark.

Closes #22451 from gengliangwang/avroWriteBenchmark.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Legitimate stops of streams may actually cause an exception to be captured by stream execution, because the job throws a SparkException regarding job cancellation during a stop. This PR makes the stop more graceful by swallowing this cancellation error.

## How was this patch tested?

This is pretty hard to test. The existing tests should make sure that we're not swallowing other specific SparkExceptions. I've also run the `KafkaSourceStressForDontFailOnDataLossSuite` 100 times, and it didn't fail, whereas it used to be flaky.

Closes #22478 from brkyvz/SPARK-25472.

Authored-by: Burak Yavuz <brkyvz@gmail.com>

Signed-off-by: Burak Yavuz <brkyvz@gmail.com>

## What changes were proposed in this pull request?

In the PR, I propose to add an overloaded method for `sampleBy` which accepts a first argument of the `Column` type. This allows sampling by any complex column as well as by multiple columns. For example:

```Scala
spark.createDataFrame(Seq(("Bob", 17), ("Alice", 10), ("Nico", 8), ("Bob", 17),
  ("Alice", 10))).toDF("name", "age")
  .stat
  .sampleBy(struct($"name", $"age"), Map(Row("Alice", 10) -> 0.3, Row("Nico", 8) -> 1.0), 36L)
  .show()

+-----+---+
| name|age|
+-----+---+
| Nico|  8|
|Alice| 10|
+-----+---+
```

## How was this patch tested?

Added a new Scala test for sampling by multiple columns, and Java and Python tests to check that `sampleBy` is able to sample by a `Column` type argument.

Closes #22365 from MaxGekk/sample-by-column.

Authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In ArrayContains, we currently cast the right-hand-side expression to match the element type of the left-hand-side Array. This may result in downcasting and may return a wrong or questionable result.

Example:

```SQL

spark-sql> select array_contains(array(1), 1.34);

true

```

```SQL

spark-sql> select array_contains(array(1), 'foo');

null

```

We should safely coerce both left and right hand side expressions.

## How was this patch tested?

Added tests in DataFrameFunctionsSuite

Closes #22408 from dilipbiswal/SPARK-25417.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR proposes to respect `SessionConfigSupport` in SS datasources as well. Currently these are only respected in batch sources:

e06da95cd9/sql/core/src/main/scala/org/apache/spark/sql/DataFrameReader.scala (L198-L203)

e06da95cd9/sql/core/src/main/scala/org/apache/spark/sql/DataFrameWriter.scala (L244-L249)

If a developer makes a datasource V2 that supports both structured streaming and batch jobs, batch jobs respect a specific configuration, let's say, a URL to connect to and fetch data from (which end users might not be aware of); however, structured streaming ends up not supporting this (so it must be set explicitly in the options).

## How was this patch tested?

Unit tests were added.

Closes #22462 from HyukjinKwon/SPARK-25460.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This reverts a sequence of PRs, based on the discussion and comments at https://github.com/apache/spark/pull/16677#issuecomment-422650759.

#22344, #22330, #22239, #16677

## How was this patch tested?

Existing tests.

Closes #22481 from viirya/revert-SPARK-19355-1.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Refactor `FilterPushdownBenchmark` to use a `main` method. There are now 3 ways to run this benchmark:

1. `bin/spark-submit --class org.apache.spark.sql.execution.benchmark.FilterPushdownBenchmark spark-sql_2.11-2.5.0-SNAPSHOT-tests.jar`

2. `build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.FilterPushdownBenchmark"`

3. `SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.FilterPushdownBenchmark"`

Methods 2 and 3 do not need the `spark-sql_*-tests.jar` package to be built, so they are mainly for developers who want to run the benchmark quickly.

## How was this patch tested?

manual tests

Closes #22443 from wangyum/SPARK-25339.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The PR proposes to return the data type of the operands as the result type of the `div` operator. Before the PR, `bigint` was always returned. It also introduces a `spark.sql.legacy.integralDivide.returnBigint` config to let users restore the legacy behavior.

## How was this patch tested?

added UTs

Closes #22465 from mgaido91/SPARK-25457.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

For self-join/self-union, Spark will produce a physical plan which has multiple `DataSourceV2ScanExec` instances referring to the same `ReadSupport` instance. In this case, the streaming source is indeed scanned multiple times, and the `numInputRows` metrics should be counted for each scan.

Actually we already have 2 test cases to verify the behavior:

1. `StreamingQuerySuite.input row calculation with same V2 source used twice in self-join`

2. `KafkaMicroBatchSourceSuiteBase.ensure stream-stream self-join generates only one offset in log and correct metrics`.

However, in these 2 tests, the expected results are different, which is super confusing. It turns out that the first test doesn't trigger exchange reuse, so the source is scanned twice. The second test triggers exchange reuse, and the source is scanned only once.

This PR proposes to improve these 2 tests, to test with/without exchange reuse.

## How was this patch tested?

test only change

Closes #22402 from cloud-fan/bug.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In SPARK-23711, `UnsafeProjection` gained support for falling back to an interpreted mode. Therefore, this PR adds support for the same fallback mode in `MutableProjection`, based on `CodeGeneratorWithInterpretedFallback`.
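
The fallback idea can be sketched independently of Catalyst. The names below (`FactoryWithFallback`, `AddProjection`) are hypothetical and the sketch is not the actual `CodeGeneratorWithInterpretedFallback` API; it only shows the try-generated-then-interpreted shape.

```scala
import scala.util.control.NonFatal

object InterpretedFallbackSketch {
  // Hypothetical factory shape: try the code-generated variant, fall back to the interpreted one.
  abstract class FactoryWithFallback[IN, OUT] {
    protected def createCodeGeneratedObject(in: IN): OUT
    protected def createInterpretedObject(in: IN): OUT

    def createObject(in: IN): OUT =
      try createCodeGeneratedObject(in)
      catch { case NonFatal(_) => createInterpretedObject(in) }
  }

  // Toy "projection" that adds a constant; the generated path is made to fail on purpose.
  object AddProjection extends FactoryWithFallback[Int, Int => Int] {
    override protected def createCodeGeneratedObject(in: Int): Int => Int =
      throw new IllegalStateException("simulated compilation failure")

    override protected def createInterpretedObject(in: Int): Int => Int = x => x + in
  }
}
```

Callers only use `createObject`, so they get a working projection whether or not code generation succeeds.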

## How was this patch tested?

Added tests in `CodeGeneratorWithInterpretedFallbackSuite`.

Closes #22355 from maropu/SPARK-25358.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

PythonForeachWriterSuite was failing because RowQueue now needs to have a handle on a SparkEnv with a SerializerManager, so added a mock env with a serializer manager.

Also fixed a typo in the `finally` that was hiding the real exception.

Tested PythonForeachWriterSuite locally, full tests via jenkins.

Closes #22452 from squito/SPARK-25456.

Authored-by: Imran Rashid <irashid@cloudera.com>

Signed-off-by: Imran Rashid <irashid@cloudera.com>

## What changes were proposed in this pull request?

The PR takes over #14036 and introduces a new expression, `IntegralDivide`, in order to avoid the several unneeded casts added previously.

In order to prove the performance gain, the following benchmark has been run:

```
test("Benchmark IntegralDivide") {
  val r = new scala.util.Random(91)
  val nData = 1000000
  val testDataInt = (1 to nData).map(_ => (r.nextInt(), r.nextInt()))
  val testDataLong = (1 to nData).map(_ => (r.nextLong(), r.nextLong()))
  val testDataShort = (1 to nData).map(_ => (r.nextInt().toShort, r.nextInt().toShort))

  // old code
  val oldExprsInt = testDataInt.map(x =>
    Cast(Divide(Cast(Literal(x._1), DoubleType), Cast(Literal(x._2), DoubleType)), LongType))
  val oldExprsLong = testDataLong.map(x =>
    Cast(Divide(Cast(Literal(x._1), DoubleType), Cast(Literal(x._2), DoubleType)), LongType))
  val oldExprsShort = testDataShort.map(x =>
    Cast(Divide(Cast(Literal(x._1), DoubleType), Cast(Literal(x._2), DoubleType)), LongType))

  // new code
  val newExprsInt = testDataInt.map(x => IntegralDivide(x._1, x._2))
  val newExprsLong = testDataLong.map(x => IntegralDivide(x._1, x._2))
  val newExprsShort = testDataShort.map(x => IntegralDivide(x._1, x._2))

  // each "new" row uses the new expressions for its own data type
  Seq(("Long", "old", oldExprsLong),
    ("Long", "new", newExprsLong),
    ("Int", "old", oldExprsInt),
    ("Int", "new", newExprsInt),
    ("Short", "old", oldExprsShort),
    ("Short", "new", newExprsShort)).foreach { case (dt, t, ds) =>
    val start = System.nanoTime()
    ds.foreach(e => e.eval(EmptyRow))
    val endNoCodegen = System.nanoTime()
    println(s"Running $nData op with $t code on $dt (no-codegen): ${(endNoCodegen - start) / 1000000} ms")
  }
}
```

The results on my laptop are:

```
Running 1000000 op with old code on Long (no-codegen): 600 ms
Running 1000000 op with new code on Long (no-codegen): 112 ms
Running 1000000 op with old code on Int (no-codegen): 560 ms
Running 1000000 op with new code on Int (no-codegen): 135 ms
Running 1000000 op with old code on Short (no-codegen): 317 ms
Running 1000000 op with new code on Short (no-codegen): 153 ms
```

This shows a 2-5X improvement. The benchmark doesn't include code generation because performance is hard to measure there: for such simple operations, most of the time is spent in the code generation/compilation process.

## How was this patch tested?

added UTs

Closes #22395 from mgaido91/SPARK-16323.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Output `dataFilters` in `DataSourceScanExec.metadata`.

## How was this patch tested?

unit tests

Closes #22435 from wangyum/SPARK-25423.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Spark supports BloomFilter creation for ORC files. This PR aims to add test coverage to prevent accidental regressions like [SPARK-12417](https://issues.apache.org/jira/browse/SPARK-12417).

## How was this patch tested?

Pass the Jenkins with newly added test cases.

Closes #22418 from dongjoon-hyun/SPARK-25427.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Fixes TPCH DDL datatype of `customer.c_nationkey` from `STRING` to `BIGINT` according to spec and `nation.nationkey` in `TPCHQuerySuite.scala`. The rest of the keys are OK.

Note, this will make **previous results non-comparable** with new runs involving the customer table.

## How was this patch tested?

Manual tests

Author: npoggi <npmnpm@gmail.com>

Closes #22430 from npoggi/SPARK-25439_Fix-TPCH-customer-c_nationkey.