### What changes were proposed in this pull request?

https://github.com/apache/spark/pull/32015 added a way to run benchmarks much more easily in the same GitHub Actions build. This PR updates the benchmark results using that workflow.

**NOTE** that GitHub Actions appears to use four types of CPU, based on my observations:

- Intel(R) Xeon(R) Platinum 8171M CPU 2.60GHz

- Intel(R) Xeon(R) CPU E5-2673 v4 2.30GHz

- Intel(R) Xeon(R) CPU E5-2673 v3 2.40GHz

- Intel(R) Xeon(R) Platinum 8272CL CPU 2.60GHz

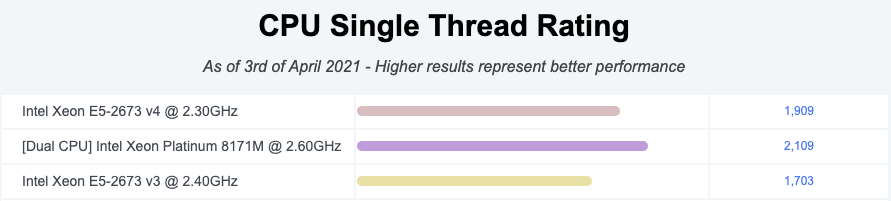

Based on my quick research, they seem to perform roughly similarly.

I couldn't find enough information about Intel(R) Xeon(R) Platinum 8272CL CPU 2.60GHz but the performance seems roughly similar given the numbers.

So it shouldn't be a big deal, especially given that this approach is much easier, encourages contributors to run benchmarks more often, and guarantees the same number of cores and the same memory with the same software.

### Why are the changes needed?

To establish a baseline for the benchmarks.

### Does this PR introduce _any_ user-facing change?

No, dev-only.

### How was this patch tested?

It was generated from:

- [Run benchmarks: * (JDK 11)](https://github.com/HyukjinKwon/spark/actions/runs/713575465)

- [Run benchmarks: * (JDK 8)](https://github.com/HyukjinKwon/spark/actions/runs/713154337)

Closes #32044 from HyukjinKwon/SPARK-34950.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Max Gekk <max.gekk@gmail.com>

### What changes were proposed in this pull request?

1. Refactored `WithFields` Expression to make it more extensible (now `UpdateFields`).

2. Added a new `dropFields` method to the `Column` class. This method should allow users to drop a `StructField` in a `StructType` column (with similar semantics to the `drop` method on `Dataset`).

### Why are the changes needed?

Often Spark users have to work with deeply nested data e.g. to fix a data quality issue with an existing `StructField`. To do this with the existing Spark APIs, users have to rebuild the entire struct column.

For example, let's say you have the following deeply nested data structure which has a data quality issue (`5` is missing):

```
import org.apache.spark.sql._
import org.apache.spark.sql.functions._
import org.apache.spark.sql.types._

val data = spark.createDataFrame(sc.parallelize(
      Seq(Row(Row(Row(1, 2, 3), Row(Row(4, null, 6), Row(7, 8, 9), Row(10, 11, 12)), Row(13, 14, 15))))),
      StructType(Seq(
        StructField("a", StructType(Seq(
          StructField("a", StructType(Seq(
            StructField("a", IntegerType),
            StructField("b", IntegerType),
            StructField("c", IntegerType)))),
          StructField("b", StructType(Seq(
            StructField("a", StructType(Seq(
              StructField("a", IntegerType),
              StructField("b", IntegerType),
              StructField("c", IntegerType)))),
            StructField("b", StructType(Seq(
              StructField("a", IntegerType),
              StructField("b", IntegerType),
              StructField("c", IntegerType)))),
            StructField("c", StructType(Seq(
              StructField("a", IntegerType),
              StructField("b", IntegerType),
              StructField("c", IntegerType))))
          ))),
          StructField("c", StructType(Seq(
            StructField("a", IntegerType),
            StructField("b", IntegerType),
            StructField("c", IntegerType))))
        )))))).cache

data.show(false)
+-------------------------------------------------------------+
|a                                                            |
+-------------------------------------------------------------+
|[[1, 2, 3], [[4,, 6], [7, 8, 9], [10, 11, 12]], [13, 14, 15]]|
+-------------------------------------------------------------+
```

Currently, to drop the missing value users would have to do something like this:

```
val result = data.withColumn("a",
  struct(
    $"a.a",
    struct(
      struct(
        $"a.b.a.a",
        $"a.b.a.c"
      ).as("a"),
      $"a.b.b",
      $"a.b.c"
    ).as("b"),
    $"a.c"
  ))

result.show(false)
+------------------------------------------------------------+
|a                                                           |
+------------------------------------------------------------+
|[[1, 2, 3], [[4, 6], [7, 8, 9], [10, 11, 12]], [13, 14, 15]]|
+------------------------------------------------------------+
```

As you can see above, with the existing methods users must call the `struct` function and list all fields, including fields they don't want to change. This is not ideal as:

>this leads to complex, fragile code that cannot survive schema evolution.

[SPARK-16483](https://issues.apache.org/jira/browse/SPARK-16483)

In contrast, with the method added in this PR, a user could simply do something like this to get the same result:

```
val result = data.withColumn("a", 'a.dropFields("b.a.b"))

result.show(false)
+------------------------------------------------------------+
|a                                                           |
+------------------------------------------------------------+
|[[1, 2, 3], [[4, 6], [7, 8, 9], [10, 11, 12]], [13, 14, 15]]|
+------------------------------------------------------------+
```
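To make the dotted-path semantics of a call such as `dropFields("b.a.b")` concrete, here is a minimal sketch in plain Scala with no Spark dependency. It is a toy model, not the `UpdateFields` implementation from this PR: the `Value`, `IntValue`, `Struct`, and `DropFieldsSketch` names are hypothetical, and the no-op behaviour for unresolved paths is an assumption modelled on `Dataset.drop`.

```scala
// Toy model of a nested struct value. Hypothetical types for illustration only;
// this is NOT how Spark represents rows or implements dropFields.
sealed trait Value
final case class IntValue(v: Option[Int]) extends Value // None models a null
final case class Struct(fields: Vector[(String, Value)]) extends Value

object DropFieldsSketch {
  // Builds a struct with integer fields "a", "b", "c".
  def ints(a: Option[Int], b: Option[Int], c: Option[Int]): Struct =
    Struct(Vector("a" -> IntValue(a), "b" -> IntValue(b), "c" -> IntValue(c)))

  // Mirrors the contents of column "a" in the example data above.
  val example: Struct = Struct(Vector(
    "a" -> ints(Some(1), Some(2), Some(3)),
    "b" -> Struct(Vector(
      "a" -> ints(Some(4), None, Some(6)),
      "b" -> ints(Some(7), Some(8), Some(9)),
      "c" -> ints(Some(10), Some(11), Some(12)))),
    "c" -> ints(Some(13), Some(14), Some(15))))

  // Drops the field addressed by a dotted path such as "b.a.b".
  // Assumption: a path that does not resolve leaves the struct unchanged.
  def dropField(struct: Struct, path: String): Struct =
    dropPath(struct, path.split('.').toList)

  private def dropPath(struct: Struct, path: List[String]): Struct = path match {
    case Nil => struct
    case name :: Nil =>
      // Last path segment: remove the matching field at this level.
      Struct(struct.fields.filterNot { case (n, _) => n == name })
    case name :: rest =>
      // Intermediate segment: rebuild only the matching nested struct and
      // pass every sibling field through untouched.
      Struct(struct.fields.map {
        case (n, child: Struct) if n == name => n -> dropPath(child, rest)
        case other => other
      })
  }
}
```

Calling `DropFieldsSketch.dropField(DropFieldsSketch.example, "b.a.b")` removes only the innermost `b` field; all sibling fields are carried over without being listed, which is the property the hand-written `struct(...)` version lacks.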

This is the second of maybe 3 methods that could be added to the `Column` class to make it easier to manipulate nested data.

Other methods under discussion in [SPARK-22231](https://issues.apache.org/jira/browse/SPARK-22231) include `withFieldRenamed`.

However, this should be added in a separate PR.

### Does this PR introduce _any_ user-facing change?

The documentation for the `Column.withField` method has changed to include an additional note about how to write optimized queries when adding multiple nested columns directly.

### How was this patch tested?

New unit tests were added. Jenkins must pass them.

### Related JIRAs:

More discussion on this topic can be found here:

- https://issues.apache.org/jira/browse/SPARK-22231

- https://issues.apache.org/jira/browse/SPARK-16483

Closes #29795 from fqaiser94/SPARK-32511-dropFields-second-try.

Authored-by: fqaiser94@gmail.com <fqaiser94@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>