### What changes were proposed in this pull request?

Update `InsertAdaptiveSparkPlan` to not log warning if AQE is skipped intentionally.

This PR also add a config to not skip AQE.

### Why are the changes needed?

It's not a warning at all if we intentionally skip AQE.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

run `AdaptiveQueryExecSuite` locally and verify that there is no warning logs.

Closes#27452 from cloud-fan/aqe.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

Add examples and parameter description for these Scala functions:

* transform

* exists

* forall

* aggregate

* zip_with

* transform_keys

* transform_values

* map_filter

* map_zip_with

### Why are the changes needed?

Better documentation for UX.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Pass Jenkins.

Closes#27449 from Ngone51/doc-funcs.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Use `CommandUtils.calculateTotalLocationSize` for `AnalyzePartitionCommand` in order to calculate location sizes in parallel.

### Why are the changes needed?

For better performance.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Pass Jenkins.

Closes#27471 from Ngone51/dev_calculate_in_parallel.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

When I running the `window_part2.sql` tests find it lack insert sql. Therefore, the output is empty.

I checked the postgresql and reference https://github.com/postgres/postgres/blob/master/src/test/regress/sql/window.sql

Although `window_part1.sql` and `window_part3.sql` exists the insert sql, I think should also add it into `window_part2.sql`.

Because only one case reference the table `empsalary` and it throws `AnalysisException`.

```

-- !query

select last(salary) over(order by salary range between 1000 preceding and 1000 following),

lag(salary) over(order by salary range between 1000 preceding and 1000 following),

salary from empsalary

-- !query schema

struct<>

-- !query output

org.apache.spark.sql.AnalysisException

Window Frame specifiedwindowframe(RangeFrame, -1000, 1000) must match the required frame specifiedwindowframe(RowFrame, -1, -1);

```

So we should do four work:

1. comment out the only one case and create a new ticket.

2. Add `INSERT INTO empsalary`.

Note: window_part4.sql not use the table `empsalary`.

### Why are the changes needed?

Supplementary test data.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

New test case

Closes#27439 from beliefer/add-insert-to-window.

Authored-by: beliefer <beliefer@163.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR fixes the issue where queries with qualified columns like `SELECT t.a FROM t` would fail to resolve for v2 tables.

This PR would allow qualified column names in query as following:

```SQL

SELECT testcat.ns1.ns2.tbl.foo FROM testcat.ns1.ns2.tbl

SELECT ns1.ns2.tbl.foo FROM testcat.ns1.ns2.tbl

SELECT ns2.tbl.foo FROM testcat.ns1.ns2.tbl

SELECT tbl.foo FROM testcat.ns1.ns2.tbl

```

### Why are the changes needed?

This is a bug because you cannot qualify column names in queries.

### Does this PR introduce any user-facing change?

Yes, now users can qualify column names for v2 tables.

### How was this patch tested?

Added new tests.

Closes#27391 from imback82/qualified_col.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

update `DataFrameAggregateSuite` to make it pass with AQE

### Why are the changes needed?

We don't need to turn off AQE in `DataFrameAggregateSuite`

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

run `DataFrameAggregateSuite` locally with AQE on.

Closes#27451 from cloud-fan/aqe-test.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Follow up work for SPARK-29864, reference the config `spark.sql.legacy.fromDayTimeString.enabled` in error message.

### Why are the changes needed?

For better usability.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing tests.

Closes#27464 from xuanyuanking/SPARK-29864-follow.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

When spark.sql.ansi.enabled is true, for the statement:

```

select cast(cast(2147483648 as Float) as Integer) //result is 2147483647

```

Its result is 2147483647 and does not throw `ArithmeticException`.

The root cause is that, the below code does not work for some corner cases.

94fc0e3235/sql/catalyst/src/main/scala/org/apache/spark/sql/types/numerics.scala (L129-L141)

For example:

In this PR, I fix it by comparing Math.floor(x) with Int.MaxValue directly.

### Why are the changes needed?

Result corrupt.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Added Unit test.

Closes#27151 from turboFei/SPARK-26218-follow-up-int-overflow.

Authored-by: turbofei <fwang12@ebay.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to partially revert the commit 51a6ba0181, and provide a legacy parser based on `FastDateFormat` which is compatible to `SimpleDateFormat`.

To enable the legacy parser, set `spark.sql.legacy.timeParser.enabled` to `true`.

### Why are the changes needed?

To allow users to restore old behavior in parsing timestamps/dates using `SimpleDateFormat` patterns. The main reason for restoring is `DateTimeFormatter`'s patterns are not fully compatible to `SimpleDateFormat` patterns, see https://issues.apache.org/jira/browse/SPARK-30668

### Does this PR introduce any user-facing change?

Yes

### How was this patch tested?

- Added new test to `DateFunctionsSuite`

- Restored additional test cases in `JsonInferSchemaSuite`.

Closes#27441 from MaxGekk/support-simpledateformat.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR renames `spark.sql.pandas.udf.buffer.size` to `spark.sql.execution.pandas.udf.buffer.size` to be more consistent with other pandas configuration prefixes, given:

- `spark.sql.execution.pandas.arrowSafeTypeConversion`

- `spark.sql.execution.pandas.respectSessionTimeZone`

- `spark.sql.legacy.execution.pandas.groupedMap.assignColumnsByName`

- other configurations like `spark.sql.execution.arrow.*`.

### Why are the changes needed?

To make configuration names consistent.

### Does this PR introduce any user-facing change?

No because this configuration was not released yet.

### How was this patch tested?

Existing tests should cover.

Closes#27450 from HyukjinKwon/SPARK-27870-followup.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This reverts commit b89c3de1a4.

### Why are the changes needed?

`FIRST_VALUE` is used only for window expression. Please see the discussion on https://github.com/apache/spark/pull/25082 .

### Does this PR introduce any user-facing change?

Yes.

### How was this patch tested?

Pass the Jenkins.

Closes#27458 from dongjoon-hyun/SPARK-28310.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This is a follow-up for #22787. In #22787 we disallowed empty strings for json parser except for string and binary types. This follow-up adds a legacy config for restoring previous behavior of allowing empty string.

### Why are the changes needed?

Adding a legacy config to make migration easy for Spark users.

### Does this PR introduce any user-facing change?

Yes. If set this legacy config to true, the users can restore previous behavior prior to Spark 3.0.0.

### How was this patch tested?

Unit test.

Closes#27456 from viirya/SPARK-25040-followup.

Lead-authored-by: Liang-Chi Hsieh <liangchi@uber.com>

Co-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

All legacy SQL configs are marked as internal configs. In particular, the following configs are updated as internals:

- spark.sql.legacy.sizeOfNull

- spark.sql.legacy.replaceDatabricksSparkAvro.enabled

- spark.sql.legacy.typeCoercion.datetimeToString.enabled

- spark.sql.legacy.looseUpcast

- spark.sql.legacy.arrayExistsFollowsThreeValuedLogic

### Why are the changes needed?

In general case, users shouldn't change legacy configs, so, they can be marked as internals.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Should be tested by jenkins build and run tests.

Closes#27448 from MaxGekk/legacy-internal-sql-conf.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR is to fix a potential bug in AQE where an `ExecSubqueryExpression` could be mistakenly replaced with another `ExecSubqueryExpression` with the same `ListQuery` but a different `child` expression.

This is because a ListQuery's id can only identify the ListQuery itself, not the parent expression `InSubquery`, but right now the `subqueryMap` in `InsertAdaptiveSparkPlan` uses the `ListQuery`'s id as key and the corresponding `InSubqueryExec` for the `ListQuery`'s parent expression as value. So the fix uses the corresponding `SubqueryExec` for the `ListQuery` itself as the map's value.

### Why are the changes needed?

This logical bug could potentially cause a wrong query plan, which could throw an exception related to unresolved columns.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Passed existing UTs.

Closes#27446 from maryannxue/spark-30717.

Authored-by: maryannxue <maryannxue@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

HiveTableScanExec does not prune partitions again after SessionCatalog.listPartitionsByFilter called.

### Why are the changes needed?

In HiveTableScanExec, it will push down to hive metastore for partition pruning if spark.sql.hive.metastorePartitionPruning is true, and then it will prune the returned partitions again using partition filters, because some predicates, eg. "b like 'xyz'", are not supported in hive metastore. But now this problem is already fixed in HiveExternalCatalog.listPartitionsByFilter, the HiveExternalCatalog.listPartitionsByFilter can return exactly what we want now. So it is not necessary any more to double prune in HiveTableScanExec.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

Existing unit tests.

Closes#27232 from fuwhu/SPARK-30525.

Authored-by: fuwhu <bestwwg@163.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Put the configs below needed by Structured Streaming UI into StaticSQLConf:

- spark.sql.streaming.ui.enabled

- spark.sql.streaming.ui.retainedProgressUpdates

- spark.sql.streaming.ui.retainedQueries

### Why are the changes needed?

Make all SS UI configs consistent with other similar configs in usage and naming.

### Does this PR introduce any user-facing change?

Yes, add new static config `spark.sql.streaming.ui.retainedProgressUpdates`.

### How was this patch tested?

Existing UT.

Closes#27425 from xuanyuanking/SPARK-29543-follow.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Shixiong Zhu <zsxwing@gmail.com>

### What changes were proposed in this pull request?

Adds NoSuchDatabaseException and NoSuchNamespaceException to the `isView` method for SessionCatalog.

### Why are the changes needed?

This method prevents specialized resolutions from kicking in within Analysis when using V2 Catalogs if the identifier is a specialized identifier.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Added test to DataSourceV2SessionCatalogSuite

Closes#27423 from brkyvz/isViewF.

Authored-by: Burak Yavuz <brkyvz@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This patch adds a DDL command `SHOW CREATE TABLE AS SERDE`. It is used to generate Hive DDL for a Hive table.

For original `SHOW CREATE TABLE`, it now shows Spark DDL always. If given a Hive table, it tries to generate Spark DDL.

For Hive serde to data source conversion, this uses the existing mapping inside `HiveSerDe`. If can't find a mapping there, throws an analysis exception on unsupported serde configuration.

It is arguably that some Hive fileformat + row serde might be mapped to Spark data source, e.g., CSV. It is not included in this PR. To be conservative, it may not be supported.

For Hive serde properties, for now this doesn't save it to Spark DDL because it may not useful to keep Hive serde properties in Spark table.

## How was this patch tested?

Added test.

Closes#24938 from viirya/SPARK-27946.

Lead-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Co-authored-by: Liang-Chi Hsieh <liangchi@uber.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

This PR adds `SparkSession.executeCommand` API for external datasource to execute a random command like

```

val df = spark.executeCommand("xxxCommand", "xxxSource", "xxxOptions")

```

Note that the command doesn't execute in Spark, but inside an external execution engine depending on data source. And it will be eagerly executed after `executeCommand` called and the returned `DataFrame` will contain the output of the command(if any).

### Why are the changes needed?

This can be useful when user wants to execute some commands out of Spark. For example, executing custom DDL/DML command for JDBC, creating index for ElasticSearch, creating cores for Solr and so on(as HyukjinKwon suggested).

Previously, user needs to use an option to achieve the goal, e.g. `spark.read.format("xxxSource").option("command", "xxxCommand").load()`, which is kind of cumbersome. With this change, it can be more convenient for user to achieve the same goal.

### Does this PR introduce any user-facing change?

Yes, new API from `SparkSession` and a new interface `ExternalCommandRunnableProvider`.

### How was this patch tested?

Added a new test suite.

Closes#27199 from Ngone51/dev-executeCommand.

Lead-authored-by: yi.wu <yi.wu@databricks.com>

Co-authored-by: Xiao Li <gatorsmile@gmail.com>

Co-authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

- Replace `new Integer(0)` by a serializable instance in RDD.scala

- Use `.valueOf()` instead of constructors of `java.lang.Integer` and `java.lang.Double` because constructors has been deprecated, see https://docs.oracle.com/javase/9/docs/api/java/lang/Integer.html

### Why are the changes needed?

This fixes the following warnings:

1. RDD.scala:240: constructor Integer in class Integer is deprecated: see corresponding Javadoc for more information.

2. MutableProjectionSuite.scala:63: constructor Integer in class Integer is deprecated: see corresponding Javadoc for more information.

3. UDFSuite.scala:446: constructor Integer in class Integer is deprecated: see corresponding Javadoc for more information.

4. UDFSuite.scala:451: constructor Double in class Double is deprecated: see corresponding Javadoc for more information.

5. HiveUserDefinedTypeSuite.scala:71: constructor Double in class Double is deprecated: see corresponding Javadoc for more information.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

- By RDDSuite, MutableProjectionSuite, UDFSuite and HiveUserDefinedTypeSuite

Closes#27399 from MaxGekk/eliminate-warning-part4.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Added description for metrics shown in the WholeStageCodegen-node in DAG viz.

This is before the change is applied.

And following is after change.

For this change, I also modify the layout of DAG viz.

Actually, I noticed it's not enough to just added the description.

Following is without changing the layout.

### Why are the changes needed?

Users can't understand what those metrics mean.

### Does this PR introduce any user-facing change?

Yes. The layout is a little bit changed.

### How was this patch tested?

I confirm the result of DAG viz with following 3 operations.

`sc.parallelize(1 to 10).toDF.sort("value").filter("value > 1").selectExpr("value * 2").show`

`sc.parallelize(1 to 10).toDF.sort("value").filter("value > 1").selectExpr("value * 2").write.format("json").mode("overwrite").save("/tmp/test_output")`

`sc.parallelize(1 to 10).toDF.write.format("json").mode("append").save("/tmp/test_output")`

Closes#27405 from sarutak/sql-dag-metrics.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR reflects review comments on post-hoc review among PRs for SPARK-29779 (#27085), SPARK-30479 (#27164). The list of review comments this PR addresses are below:

* https://github.com/apache/spark/pull/27085#discussion_r373304218

* https://github.com/apache/spark/pull/27164#discussion_r373300793

* https://github.com/apache/spark/pull/27164#discussion_r373301193

* https://github.com/apache/spark/pull/27164#discussion_r373301351

I also applied review comments to the CORE module (BasicEventFilterBuilder.scala) as well, as the review comments for SQL/core module (SQLEventFilterBuilder.scala) can be applied there as well.

### Why are the changes needed?

There're post-hoc reviews on PRs for such issues, like links in above section.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing UTs.

Closes#27414 from HeartSaVioR/SPARK-28869-SPARK-29779-SPARK-30479-FOLLOWUP-posthoc-reviews.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

This PR solves two bugs related to streaming limits

**Bug 1 (SPARK-30658)**: Limit before a streaming aggregate (i.e. `df.limit(5).groupBy().count()`) in complete mode was not being planned as a stateful streaming limit. The planner rule planned a logical limit with a stateful streaming limit plan only if the query is in append mode. As a result, instead of allowing max 5 rows across batches, the planned streaming query was allowing 5 rows in every batch thus producing incorrect results.

**Solution**: Change the planner rule to plan the logical limit with a streaming limit plan even when the query is in complete mode if the logical limit has no stateful operator before it.

**Bug 2 (SPARK-30657)**: `LocalLimitExec` does not consume the iterator of the child plan. So if there is a limit after a stateful operator like streaming dedup in append mode (e.g. `df.dropDuplicates().limit(5)`), the state changes of streaming duplicate may not be committed (most stateful ops commit state changes only after the generated iterator is fully consumed).

**Solution**: Change the planner rule to always use a new `StreamingLocalLimitExec` which always fully consumes the iterator. This is the safest thing to do. However, this will introduce a performance regression as consuming the iterator is extra work. To minimize this performance impact, add an additional post-planner optimization rule to replace `StreamingLocalLimitExec` with `LocalLimitExec` when there is no stateful operator before the limit that could be affected by it.

No

Updated incorrect unit tests and added new ones

Closes#27373 from tdas/SPARK-30657.

Authored-by: Tathagata Das <tathagata.das1565@gmail.com>

Signed-off-by: Shixiong Zhu <zsxwing@gmail.com>

All credit to Ngone51, Closes#27293.

### What changes were proposed in this pull request?

This PR improves `AlterTableAddPartitionCommand` by:

1. adds an internal config for partitions batch size to avoid hard code

2. reuse `InMemoryFileIndex.bulkListLeafFiles` to perform parallel file listing to improve code reuse

### Why are the changes needed?

Improve code quality.

### Does this PR introduce any user-facing change?

Yes. We renamed `spark.sql.statistics.parallelFileListingInStatsComputation.enabled` to `spark.sql.parallelFileListingInCommands.enabled` as a side effect of this change.

### How was this patch tested?

Pass Jenkins.

Closes#27413 from xuanyuanking/SPARK-29938.

Lead-authored-by: yi.wu <yi.wu@databricks.com>

Co-authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Adds an Analyzer rule to normalize the column names used in V2 AlterTable table changes. We need to handle all ColumnChange operations. We add an extra match statement for future proofing new changes that may be added. This prevents downstream consumers (e.g. catalogs) to deal about case sensitivity or check that columns exist, etc.

We also fix the behavior for ALTER TABLE CHANGE COLUMN (Hive style syntax) for adding comments to complex data types. Currently, the data type needs to be provided as part of the Hive style syntax. This assumes that the data type as changed when it may have not and the user only wants to add a comment, which fails in CheckAnalysis.

### Why are the changes needed?

Currently we do not handle case sensitivity correctly for ALTER TABLE ALTER COLUMN operations.

### Does this PR introduce any user-facing change?

No, fixes a bug.

### How was this patch tested?

Introduced v2CommandsCaseSensitivitySuite and added a test around HiveStyle Change columns to PlanResolutionSuite

Closes#27350 from brkyvz/normalizeAlter.

Authored-by: Burak Yavuz <brkyvz@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR makes `SparkSession.emptyDataFrame` use an empty local relation instead of an empty RDD. This allows to optimizer to recognize this as an empty relation, and creates the opportunity to do some more aggressive optimizations.

### Why are the changes needed?

It allows us to optimize empty dataframes better.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Added a test case to `DataFrameSuite`.

Closes#27400 from hvanhovell/SPARK-30671.

Authored-by: herman <herman@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR renames a variable `SINGLETON` to `INSTANCE`.

### Why are the changes needed?

This is a minor change for consistency with the other parts.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Pass the existing tests.

Closes#27409 from dongjoon-hyun/SPARK-30192.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

We propose to add a new interface `SupportsAdmissionControl` and `ReadLimit`. A ReadLimit defines how much data should be read in the next micro-batch. `SupportsAdmissionControl` specifies that a source can rate limit its ingest into the system. The source can tell the system what the user specified as a read limit, and the system can enforce this limit within each micro-batch or impose its own limit if the Trigger is Trigger.Once() for example.

We then use this interface in FileStreamSource, KafkaSource, and KafkaMicroBatchStream.

### Why are the changes needed?

Sources currently have no information around execution semantics such as whether the stream is being executed in Trigger.Once() mode. This interface will pass this information into the sources as part of planning. With a trigger like Trigger.Once(), the semantics are to process all the data available to the datasource in a single micro-batch. However, this semantic can be broken when data source options such as `maxOffsetsPerTrigger` (in the Kafka source) rate limit the amount of data read for that micro-batch without this interface.

### Does this PR introduce any user-facing change?

DataSource developers can extend this interface for their streaming sources to add admission control into their system and correctly support Trigger.Once().

### How was this patch tested?

Existing tests, as this API is mostly internal

Closes#27380 from brkyvz/rateLimit.

Lead-authored-by: Burak Yavuz <brkyvz@gmail.com>

Co-authored-by: Burak Yavuz <burak@databricks.com>

Signed-off-by: Burak Yavuz <brkyvz@gmail.com>

### What changes were proposed in this pull request?

Instead of having several overloads of `getTable` method in `TableProvider`, it's better to have 2 methods explicitly: `inferSchema` and `inferPartitioning`. With a single `getTable` method that takes everything: schema, partitioning and properties.

This PR also adds a `supportsExternalMetadata` method in `TableProvider`, to indicate if the source support external table metadata. If this flag is false:

1. spark.read.schema... is disallowed and fails

2. when we support creating v2 tables in session catalog, spark only keeps table properties in the catalog.

### Why are the changes needed?

API improvement.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

existing tests

Closes#26868 from cloud-fan/provider2.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Credit to uncleGen for discovering the problem and providing simple reproducer as UT. New UT in this patch is borrowed from #26156 and I'm retaining a commit from #26156 (except unnecessary part on this path) to properly give a credit.

This patch fixes the issue that partition ID could be mis-assigned when the query contains UNION and stream-stream join is placed on the right side. We assume the range of partition IDs as `(0 ~ number of shuffle partitions - 1)` for stateful operators, but when we use stream-stream join on the right side of UNION, the range of partition ID of task goes to `(number of partitions in left side, number of partitions in left side + number of shuffle partitions - 1)`, which `number of partitions in left side` can be changed in some cases (new UT points out the one of the cases).

The root reason of bug is that stream-stream join picks the partition ID from TaskContext, which wouldn't be same as partition ID from source if union is being used. Hopefully we can pick the right partition ID from source in StateStoreAwareZipPartitionsRDD - this patch leverages that partition ID.

### Why are the changes needed?

This patch will fix the broken of assumption of partition range on stateful operator, as well as fix the issue reported in JIRA issue SPARK-29438.

### Does this PR introduce any user-facing change?

Yes, if their query is using UNION and stream-stream join is placed on the right side. They may encounter the problem to read state from checkpoint and may need to discard checkpoint to continue.

### How was this patch tested?

Added UT which fails on current master branch, and passes with this patch.

Closes#26162 from HeartSaVioR/SPARK-29438.

Lead-authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Co-authored-by: uncleGen <hustyugm@gmail.com>

Signed-off-by: Tathagata Das <tathagata.das1565@gmail.com>

### What changes were proposed in this pull request?

This patch makes the test cases in HiveShowCreateTableSuite create Hive table instead of data source table.

### Why are the changes needed?

Because SparkSQL now creates data source table if no provider is specified in SQL command, some test cases in HiveShowCreateTableSuite don't create Hive table, but data source table.

It is confusing and not good for the purpose of this test suite.

### Does this PR introduce any user-facing change?

No, only test case.

### How was this patch tested?

Unit test.

Closes#27393 from viirya/SPARK-30673.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Liang-Chi Hsieh <liangchi@uber.com>

### What changes were proposed in this pull request?

Update `ResolvedNamespace` to accept catalog as `CatalogPlugin` not `SupportsNamespaces`.

This is extracted from https://github.com/apache/spark/pull/27345

### Why are the changes needed?

not all commands that need to resolve namespaces require a namespace catalog. For example, `SHOW TABLE` is implemented by `TableCatalog.listTables`, and is nothing to do with `SupportsNamespace`.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

existing tests

Closes#27403 from cloud-fan/ns.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

override `Command.stats` to return a dummy statistics (Long.Max).

### Why are the changes needed?

Commands are eagerly executed. They will be converted to LocalRelation after the DataFrame is created. That said, the statistics of a command is useless. We should avoid unnecessary statistics calculation of command's children.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

new test

Closes#27344 from cloud-fan/command.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR is a follow-up of #25728. #25728 introduces additional arguments to determine sort order. Thus, this function does not sort only in ascending order. However, the description was not updated.

This PR updates the description to follow the latest feature.

### Why are the changes needed?

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing tests since this PR just updates description text.

Closes#27404 from kiszk/SPARK-29020-followup.

Authored-by: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Fix following typos:

- tranformation -> transformation

- the boolean -> the Boolean

- signle -> single

### Why are the changes needed?

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Scala linter.

Closes#27382 from zero323/functions-typos.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

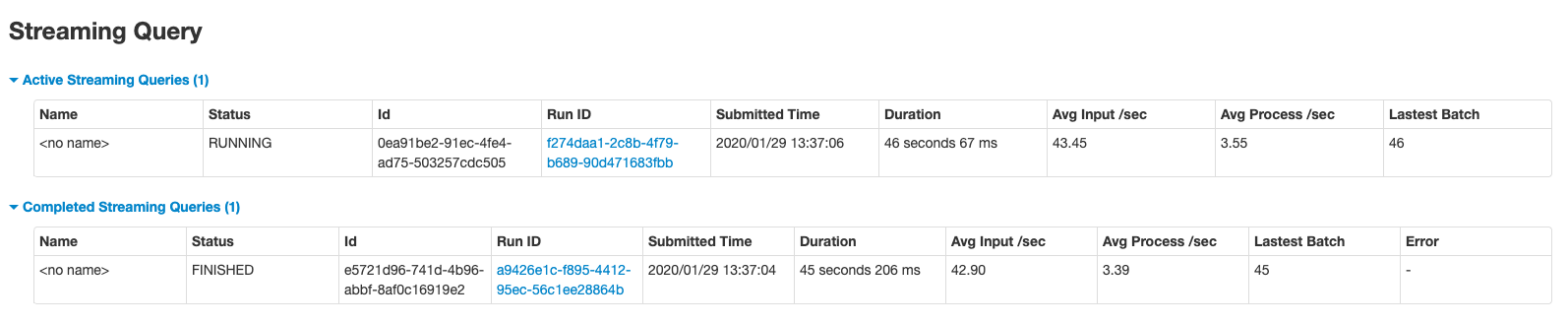

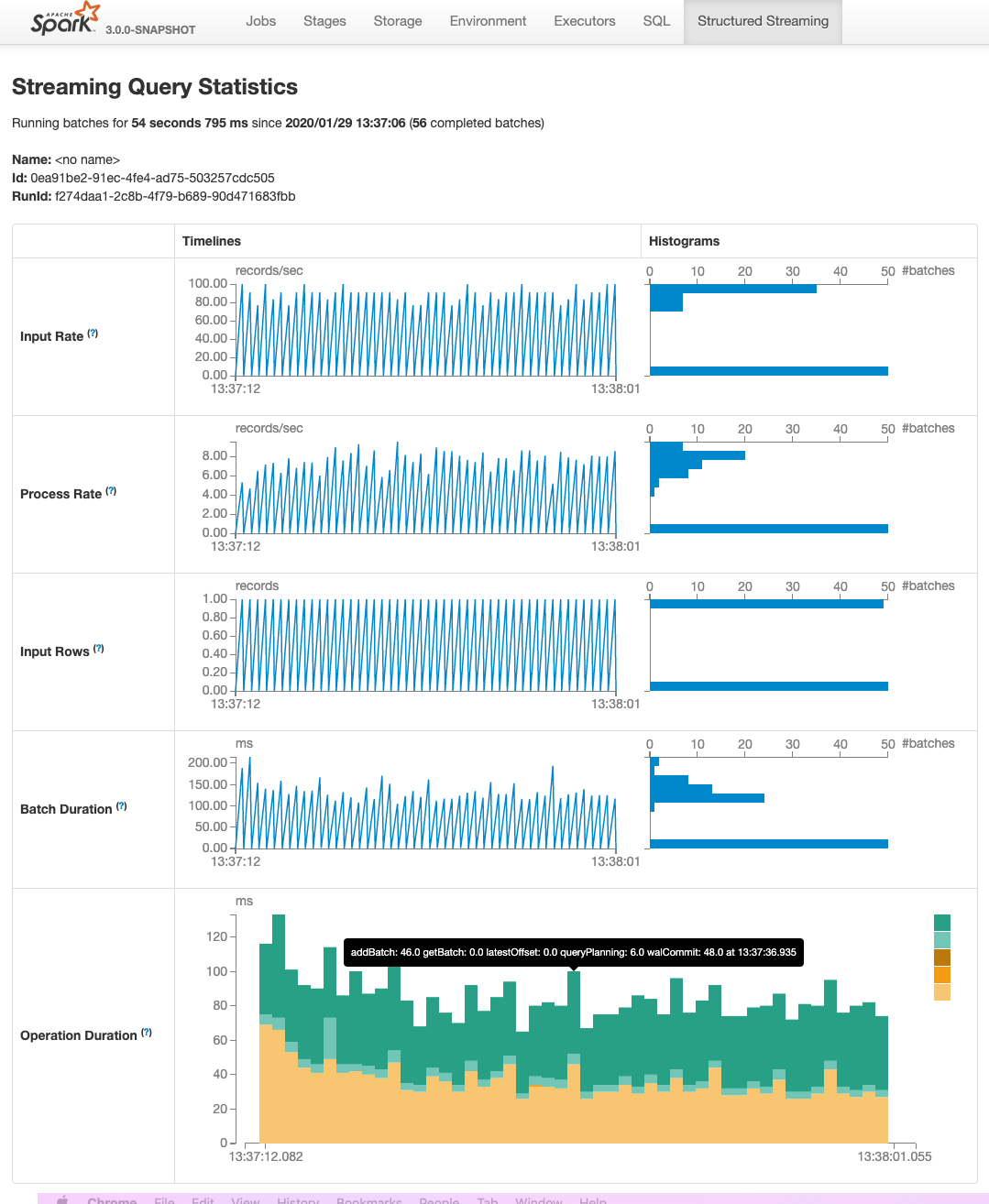

This PR adds two pages to Web UI for Structured Streaming:

- "/streamingquery": Streaming Query Page, providing some aggregate information for running/completed streaming queries.

- "/streamingquery/statistics": Streaming Query Statistics Page, providing detailed information for streaming query, including `Input Rate`, `Process Rate`, `Input Rows`, `Batch Duration` and `Operation Duration`

### Why are the changes needed?

It helps users to better monitor Structured Streaming query.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

- new added and existing UTs

- manual test

Closes#26201 from uncleGen/SPARK-29543.

Lead-authored-by: uncleGen <hustyugm@gmail.com>

Co-authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Co-authored-by: Genmao Yu <hustyugm@gmail.com>

Signed-off-by: Shixiong Zhu <zsxwing@gmail.com>

### What changes were proposed in this pull request?

This pr intends to rename `spark.sql.legacy.addDirectory.recursive` into `spark.sql.legacy.addDirectory.recursive.enabled`.

### Why are the changes needed?

For consistent option names.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

N/A

Closes#27372 from maropu/SPARK-30234-FOLLOWUP.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This is a follow-up for https://github.com/apache/spark/pull/24098 to refactor to build string once according to the [review comment](https://github.com/apache/spark/pull/24098#discussion_r369845234)

### Why are the changes needed?

Previously, we chose the minimal change way.

In this PR, we choose a more robust way than the previous post-step string processing.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

The test case is extended with more cases.

Closes#27353 from dongjoon-hyun/SPARK-27166-2.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

In the PR, I propose to transform the `Like` expression to `TernaryExpression`, and add third parameter `escape`. So, the `like` function will have feature parity with `LIKE ... ESCAPE` syntax supported by 187f3c1773.

### Why are the changes needed?

The `like` functions can be called with 2 or 3 parameters, and functionally equivalent to `LIKE` and `LIKE ... ESCAPE` SQL expressions.

### Does this PR introduce any user-facing change?

Yes, before `like` fails with the exception:

```sql

spark-sql> SELECT like('_Apache Spark_', '__%Spark__', '_');

Error in query: Invalid number of arguments for function like. Expected: 2; Found: 3; line 1 pos 7

```

After:

```sql

spark-sql> SELECT like('_Apache Spark_', '__%Spark__', '_');

true

```

### How was this patch tested?

- Add new example for the `like` function which is checked by `SQLQuerySuite`

- Run `RegexpExpressionsSuite` and `ExpressionParserSuite`.

Closes#27355 from MaxGekk/like-3-args.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Allow for using longs as seed for xxHash.

### Why are the changes needed?

Codegen fails when passing a seed to xxHash that is > 2^31.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing tests pass. Should more be added?

Closes#27354 from patrickcording/fix_xxhash_seed_bug.

Authored-by: Patrick Cording <patrick.cording@datarobot.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This patch converts CR/LF into LF in 3 source files, which most files are only using LF. This patch also add rules to enforce EOL as LF for all java, scala, xml, py, R files.

### Why are the changes needed?

The majority of source code files are using LF and only three files are CR/LF. While using IDE would let us don't bother with the difference, it still has a chance to make unnecessary diff if the file is modified with the editor which doesn't handle it automatically.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

```

grep -IUrl --color "^M" . | grep "\.java\|\.scala\|\.xml\|\.py\|\.R" | grep -v "/target/" | grep -v "/build/" | grep -v "/dist/" | grep -v "dependency-reduced-pom.xml" | grep -v ".pyc"

```

(Please note you'll need to type CTRL+V -> CTRL+M in bash shell to get `^M` because it's representing CR/LF, not a combination of `^` and `M`.)

Before the patch, the result is:

```

./sql/core/src/main/java/org/apache/spark/sql/execution/columnar/ColumnDictionary.java

./sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/optimizer/complexTypesSuite.scala

./sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/optimizer/ComplexTypes.scala

```

and after the patch, the result is None.

And git shows WARNING message if EOL of any of source files in given types are modified to CR/LF, like below:

```

warning: CRLF will be replaced by LF in sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/Analyzer.scala.

The file will have its original line endings in your working directory.

```

Closes#27365 from HeartSaVioR/MINOR-remove-CRLF-in-source-codes.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add identifier and catalog information in DataSourceV2Relation so it would be possible to do richer checks in checkAnalysis step.

### Why are the changes needed?

In data source v2, table implementations are all customized so we may not be able to get the resolved identifier from tables them selves. Therefore we encode the table and catalog information in DSV2Relation so no external changes are needed to make sure this information is available.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Unit tests in the following suites:

CatalogManagerSuite.scala

CatalogV2UtilSuite.scala

SupportsCatalogOptionsSuite.scala

PlanResolutionSuite.scala

Closes#26957 from yuchenhuo/SPARK-30314.

Authored-by: Yuchen Huo <yuchen.huo@databricks.com>

Signed-off-by: Burak Yavuz <brkyvz@gmail.com>

### What changes were proposed in this pull request?

This PR is to remove query index from the golden files of SQLQueryTestSuite

### Why are the changes needed?

Because the SQLQueryTestSuite's golden files have the query index for each query, removal of any query statement [except the last one] will generate many unneeded difference. This will make code review harder. The number of changed lines is misleading.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

N/A

Closes#27361 from gatorsmile/removeIndexNum.

Authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

This reverts commit 1d20d13149.

Closes#27351 from gatorsmile/revertSPARK25496.

Authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This patch proposes to prune unnecessary nested fields from Generate which has no Project on top of it.

### Why are the changes needed?

In Optimizer, we can prune nested columns from Project(projectList, Generate). However, unnecessary columns could still possibly be read in Generate, if no Project on top of it. We should prune it too.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Unit test.

Closes#26978 from viirya/SPARK-29721.

Lead-authored-by: Liang-Chi Hsieh <liangchi@uber.com>

Co-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Disable all the V2 file sources in Spark 3.0 by default.

### Why are the changes needed?

There are still some missing parts in the file source V2 framework:

1. It doesn't support reporting file scan metrics such as "numOutputRows"/"numFiles"/"fileSize" like `FileSourceScanExec`. This requires another patch in the data source V2 framework. Tracked by [SPARK-30362](https://issues.apache.org/jira/browse/SPARK-30362)

2. It doesn't support partition pruning with subqueries(including dynamic partition pruning) for now. Tracked by [SPARK-30628](https://issues.apache.org/jira/browse/SPARK-30628)

As we are going to code freeze on Jan 31st, this PR proposes to disable all the V2 file sources in Spark 3.0 by default.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing tests.

Closes#27348 from gengliangwang/disableFileSourceV2.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR is a follow-up PR https://github.com/apache/spark/pull/25666 for adding the description and example for the Scala function API `filter`.

### Why are the changes needed?

It is hard to tell which parameter is the index column.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

N/A

Closes#27336 from gatorsmile/spark28962.

Authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR is to rename spark.sql.subquery.reuse to spark.sql.execution.subquery.reuse.enabled

### Why are the changes needed?

Make it consistent and clear.

### Does this PR introduce any user-facing change?

N/A. This is a [new conf added in Spark 3.0](https://github.com/apache/spark/pull/23998)

### How was this patch tested?

N/A

Closes#27346 from gatorsmile/spark27083.

Authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>