## What changes were proposed in this pull request?

Try testing time zones in parallel in `CastSuite` instead of using random sampling.

See also #22631

## How was this patch tested?

Existing test.

Closes #22672 from srowen/SPARK-25605.2.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This is a follow up of https://github.com/apache/spark/pull/22574. Renamed the parameter and added comments.

## How was this patch tested?

N/A

Closes #22679 from gatorsmile/followupSPARK-25559.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: DB Tsai <d_tsai@apple.com>

## What changes were proposed in this pull request?

This PR is inspired by https://github.com/apache/spark/pull/22524, but proposes a safer fix.

The current whole-stage codegen for limit has 2 problems:

1. It's only applied to `InputAdapter`, so many leaf nodes can't stop early w.r.t. the limit.

2. It needs to override a method, which will break if we have more than one limit in the whole-stage.

The first problem is easy to fix: figure out which nodes can stop early w.r.t. the limit, and update them. This PR updates `RangeExec`, `ColumnarBatchScan`, `SortExec`, `HashAggregateExec`.

The second problem is hard to fix. This PR proposes to propagate the limit counter variable name upstream, so that the upstream leaf/blocking nodes can check the limit counter and quit the loop earlier.

For better performance, the implementation here follows `CodegenSupport.needStopCheck`, so that we codegen the check only if there is a limit in the query. For columnar nodes like range, we check the limit counter per batch instead of per row, to keep the inner loop tight and fast.
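
The per-batch check described above can be sketched in plain Scala. This is only an illustration of the idea, not the generated code: `LimitCounterSketch`, `run`, and the `limitCounter` variable are hypothetical names, and the downstream limit operator is simulated inline.

```scala
object LimitCounterSketch {
  // Simulates a range-like producer feeding a downstream limit.
  // Returns (rows accepted by the limit, rows produced upstream).
  def run(total: Int, batchSize: Int, limit: Int): (Int, Int) = {
    var limitCounter = 0 // hypothetical counter owned by the downstream limit
    var produced = 0
    var next = 0
    // The producer checks the limit counter once per batch, not once per row.
    while (next < total && limitCounter < limit) {
      val end = math.min(next + batchSize, total)
      var i = next
      while (i < end) { // tight inner loop without a stop check
        produced += 1
        // Simulated downstream limit: accept rows until the limit is reached.
        if (limitCounter < limit) limitCounter += 1
        i += 1
      }
      next = end
    }
    (limitCounter, produced)
  }
}
```

With `run(1000, 100, 5)` the producer finishes its first batch of 100 rows and then stops, instead of producing all 1000 rows. Skipping the check would only cost performance, not correctness, which matches the safety argument below.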

Why is this safer?

1. The leaf/blocking nodes don't have to check the limit counter and stop early. It's only for performance. (This is the same as before.)

2. The blocking operators can stop propagating the limit counter name, because the counter of limit after blocking operators will never increase, before blocking operators consume all the data from upstream operators. So the upstream operators don't care about limit after blocking operators. This is also for performance only, it's OK if we forget to do it for some new blocking operators.

## How was this patch tested?

a new test

Closes #22630 from cloud-fan/limit.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

## What changes were proposed in this pull request?

Currently, the first row of a dataset of CSV strings is compared to the field names of the user-specified or inferred schema regardless of whether a CSV header is present. This causes false-positive error messages. For example, parsing `"1,2"` outputs the error:

```

java.lang.IllegalArgumentException: CSV header does not conform to the schema.

Header: 1, 2

Schema: _c0, _c1

Expected: _c0 but found: 1

```

In the PR, I propose:

- Checking the CSV header only when it exists

- Filtering the header from the input dataset only if it exists
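
The two proposed rules can be sketched in plain Scala as follows. This is a simplified illustration under assumptions, not the parser code from this PR: `CsvHeaderCheckSketch`, `prepare`, and the `hasHeader` flag are hypothetical names, and fields are split naively on commas.

```scala
object CsvHeaderCheckSketch {
  // Validates and drops the first line only when a header is expected;
  // otherwise every line is treated as data.
  def prepare(
      lines: Seq[String],
      schemaFields: Seq[String],
      hasHeader: Boolean): Seq[String] = {
    if (!hasHeader || lines.isEmpty) {
      lines
    } else {
      val header = lines.head.split(",").map(_.trim).toSeq
      require(
        header == schemaFields,
        s"CSV header does not conform to the schema. " +
          s"Header: ${header.mkString(", ")} Schema: ${schemaFields.mkString(", ")}")
      lines.tail
    }
  }
}
```

With `hasHeader = false`, the input `Seq("1,2")` passes through unchanged and no header comparison is attempted, so the false-positive error above cannot occur.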

## How was this patch tested?

Added a test to `CSVSuite` which reproduces the issue.

Closes #22656 from MaxGekk/inferred-header-check.

Authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

There are 5 suites extending `HadoopFsRelationTest`, for testing "orc"/"parquet"/"text"/"json" data sources.

This PR refactors the base trait `HadoopFsRelationTest`:

1. Remove the unnecessary loop for setting the Parquet conf

2. The test case `SPARK-8406: Avoids name collision while writing files` takes about 14 to 20 seconds. Since all file-format data sources now share common code for creating result files, we can test only one data source (Parquet) to reduce test time.

To run the 5 related suites:

```

./build/sbt "hive/testOnly *HadoopFsRelationSuite"

```

The total test run time is reduced from 5 minutes 40 seconds to 3 minutes 50 seconds.

## How was this patch tested?

Unit test

Closes #22643 from gengliangwang/refactorHadoopFsRelationTest.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

While working on another PR, I noticed that there is quite a lot of legacy Java code that can be cleaned up, for example by using features from Java 8, such as:

- Collection libraries

- Try-with-resource blocks

No logic has been changed. I think it is important to have a solid codebase with examples that will inspire future PRs to follow best practices.

What are your thoughts on this?

This makes the code easier to read, and using try-with-resources makes it less likely that we forget to close something.

## What changes were proposed in this pull request?

No changes in the logic of Spark, but more in the aesthetics of the code.

## How was this patch tested?

Using the existing unit tests. Since no logic is changed, the existing unit tests should pass.

Closes #22637 from Fokko/SPARK-25408.

Authored-by: Fokko Driesprong <fokkodriesprong@godatadriven.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Refactor `HashBenchmark` to use main method.

1. use `spark-submit`:

```console

bin/spark-submit --class org.apache.spark.sql.HashBenchmark --jars ./core/target/spark-core_2.11-3.0.0-SNAPSHOT-tests.jar ./sql/catalyst/target/spark-catalyst_2.11-3.0.0-SNAPSHOT-tests.jar

```

2. Generate benchmark result:

```console

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "catalyst/test:runMain org.apache.spark.sql.HashBenchmark"

```

## How was this patch tested?

manual tests

Closes #22651 from wangyum/SPARK-25657.

Lead-authored-by: Yuming Wang <wgyumg@gmail.com>

Co-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Refactor `HashByteArrayBenchmark` to use main method.

1. use `spark-submit`:

```console

bin/spark-submit --class org.apache.spark.sql.HashByteArrayBenchmark --jars ./core/target/spark-core_2.11-3.0.0-SNAPSHOT-tests.jar ./sql/catalyst/target/spark-catalyst_2.11-3.0.0-SNAPSHOT-tests.jar

```

2. Generate benchmark result:

```console

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "catalyst/test:runMain org.apache.spark.sql.HashByteArrayBenchmark"

```

## How was this patch tested?

manual tests

Closes #22652 from wangyum/SPARK-25658.

Lead-authored-by: Yuming Wang <wgyumg@gmail.com>

Co-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

`InMemoryFileIndex` contains a cache of `LocatedFileStatus` objects. Each `LocatedFileStatus` object can contain several `BlockLocation`s or instances of some subclass of it. This cache is filled by listing files recursively, either on the driver or on the executors, depending on the parallel discovery threshold (`spark.sql.sources.parallelPartitionDiscovery.threshold`). If the listing happens on the executors, block location objects are converted to simple `BlockLocation` objects to meet serialization requirements. If it happens on the driver, there is no conversion, and depending on the file system a `BlockLocation` object can be a subclass such as `HdfsBlockLocation` and consume more memory. This PR adds the conversion to the latter case and decreases memory consumption.

## How was this patch tested?

Added unit test.

Closes #22603 from peter-toth/SPARK-25062.

Authored-by: Peter Toth <peter.toth@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This PR fixes the Scala-2.12 build error due to ambiguity in `foreachBatch` test cases.

- https://amplab.cs.berkeley.edu/jenkins/view/Spark%20QA%20Test%20(Dashboard)/job/spark-master-test-maven-hadoop-2.7-ubuntu-scala-2.12/428/console

```

[error] /home/jenkins/workspace/spark-master-test-maven-hadoop-2.7-ubuntu-scala-2.12/sql/core/src/test/scala/org/apache/spark/sql/execution/streaming/sources/ForeachBatchSinkSuite.scala:102: ambiguous reference to overloaded definition,

[error] both method foreachBatch in class DataStreamWriter of type (function: org.apache.spark.api.java.function.VoidFunction2[org.apache.spark.sql.Dataset[Int],Long])org.apache.spark.sql.streaming.DataStreamWriter[Int]

[error] and method foreachBatch in class DataStreamWriter of type (function: (org.apache.spark.sql.Dataset[Int], Long) => Unit)org.apache.spark.sql.streaming.DataStreamWriter[Int]

[error] match argument types ((org.apache.spark.sql.Dataset[Int], Any) => Unit)

[error] ds.writeStream.foreachBatch((_, _) => {}).trigger(Trigger.Continuous("1 second")).start()

[error] ^

[error] /home/jenkins/workspace/spark-master-test-maven-hadoop-2.7-ubuntu-scala-2.12/sql/core/src/test/scala/org/apache/spark/sql/execution/streaming/sources/ForeachBatchSinkSuite.scala:106: ambiguous reference to overloaded definition,

[error] both method foreachBatch in class DataStreamWriter of type (function: org.apache.spark.api.java.function.VoidFunction2[org.apache.spark.sql.Dataset[Int],Long])org.apache.spark.sql.streaming.DataStreamWriter[Int]

[error] and method foreachBatch in class DataStreamWriter of type (function: (org.apache.spark.sql.Dataset[Int], Long) => Unit)org.apache.spark.sql.streaming.DataStreamWriter[Int]

[error] match argument types ((org.apache.spark.sql.Dataset[Int], Any) => Unit)

[error] ds.writeStream.foreachBatch((_, _) => {}).partitionBy("value").start()

[error] ^

```

## How was this patch tested?

Manual.

Since this failure occurs in Scala-2.12 profile and test cases, Jenkins will not test this. We need to build with Scala-2.12 and run the tests.

Closes #22649 from dongjoon-hyun/SPARK-SCALA212.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Clean up the `joinCriteria` parsing in the parser by directly using `identifierList`.

## How was this patch tested?

N/A

Closes #22648 from gatorsmile/cleanupJoinCriteria.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Refactor `MiscBenchmark` to use main method.

Generate benchmark result:

```sh

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.MiscBenchmark"

```

## How was this patch tested?

manual tests

Closes #22500 from wangyum/SPARK-25488.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Yuming Wang <wgyumg@gmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Reduce the test time by replacing loops over all possible values with a randomly picked value.

- `read partitioning bucketed tables with bucket pruning filters`: reduced from 55s to 7s

- `read partitioning bucketed tables having composite filters`: reduced from 54s to 8s

- total time: reduced from 288s to 192s

## How was this patch tested?

Unit test

Closes #22640 from gengliangwang/fastenBucketedReadSuite.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Currently, the CSV schema inference code inlines `TypeCoercion.findTightestCommonType`. This is a minor refactor to make use of the common type coercion code when applicable. This way we can take advantage of any improvement to the base method.

Thanks to MaxGekk for finding this while reviewing another PR.

## How was this patch tested?

This is a minor refactor. Existing tests are used to verify the change.

Closes #22619 from dilipbiswal/csv_minor.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Adds support for setting a limit in the SQL `split` function.
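
As a rough illustration of what a split limit does, the sketch below uses Java's `String.split(regex, limit)` from Scala. It is an assumption that the SQL function behaves similarly; this description does not spell out its exact semantics, and `SplitLimitSketch` is a hypothetical name.

```scala
object SplitLimitSketch {
  // A positive limit caps the number of resulting elements; the last element
  // keeps the unsplit remainder of the input.
  val capped: Seq[String] = "one,two,three".split(",", 2).toSeq

  // A negative limit applies the pattern as many times as possible.
  val uncapped: Seq[String] = "one,two,three".split(",", -1).toSeq
}
```

Here `capped` holds `one` and `two,three`, while `uncapped` holds all three fields.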

## How was this patch tested?

1. Updated unit tests

2. Tested using Scala spark shell

Closes #22227 from phegstrom/master.

Authored-by: Parker Hegstrom <phegstrom@palantir.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In this test case, we verify that the result of a UDF is cached when the underlying DataFrame is cached, and that the UDF is not evaluated again when the cached DataFrame is used.

To reduce the runtime, we:

1) Use a single-partition DataFrame, so the total execution time of the UDF is more deterministic.

2) Cut down the size of the DataFrame from 10 to 2.

3) Reduce the sleep time in the UDF from 5 seconds to 2 seconds.

4) Reduce the `failAfter` condition from 3 to 2.

With the above changes, it takes about 4 seconds to cache the first DataFrame, and the subsequent check takes a few hundred milliseconds.

The new runtime for 5 consecutive runs of this test is as follows:

```

[info] - cache UDF result correctly (4 seconds, 906 milliseconds)

[info] - cache UDF result correctly (4 seconds, 281 milliseconds)

[info] - cache UDF result correctly (4 seconds, 288 milliseconds)

[info] - cache UDF result correctly (4 seconds, 355 milliseconds)

[info] - cache UDF result correctly (4 seconds, 280 milliseconds)

```

## How was this patch tested?

This is a test fix.

Closes #22638 from dilipbiswal/SPARK-25610.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The total run time of `HiveSparkSubmitSuite` is about 10 minutes.

Since the related code is stable, add the tag `ExtendedHiveTest` to it.

## How was this patch tested?

Unit test.

Closes #22642 from gengliangwang/addTagForHiveSparkSubmitSuite.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Before ORC 1.5.3, `orc.dictionary.key.threshold` and `hive.exec.orc.dictionary.key.size.threshold` were applied to all columns. This has been a big hurdle to enabling dictionary encoding. From ORC 1.5.3, `orc.column.encoding.direct` is added to enforce direct encoding selectively in a column-wise manner. This PR aims to add that feature by upgrading ORC from 1.5.2 to 1.5.3.

The following are the patches in ORC 1.5.3; this feature is the only one directly related to Spark.

```

ORC-406: ORC: Char(n) and Varchar(n) writers truncate to n bytes & corrupts multi-byte data (gopalv)

ORC-403: [C++] Add checks to avoid invalid offsets in InputStream

ORC-405: Remove calcite as a dependency from the benchmarks.

ORC-375: Fix libhdfs on gcc7 by adding #include <functional> two places.

ORC-383: Parallel builds fails with ConcurrentModificationException

ORC-382: Apache rat exclusions + add rat check to travis

ORC-401: Fix incorrect quoting in specification.

ORC-385: Change RecordReader to extend Closeable.

ORC-384: [C++] fix memory leak when loading non-ORC files

ORC-391: [c++] parseType does not accept underscore in the field name

ORC-397: Allow selective disabling of dictionary encoding. Original patch was by Mithun Radhakrishnan.

ORC-389: Add ability to not decode Acid metadata columns

```

## How was this patch tested?

Pass the Jenkins with newly added test cases.

Closes #22622 from dongjoon-hyun/SPARK-25635.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Improve the runtime by reducing the number of partitions created in the test. The number of partitions is reduced from 280 to 60.

Here are the test times for the `getPartitionsByFilter returns all partitions` test on my laptop.

```

[info] - 0.13: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (4 seconds, 230 milliseconds)

[info] - 0.14: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (3 seconds, 576 milliseconds)

[info] - 1.0: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (3 seconds, 495 milliseconds)

[info] - 1.1: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (6 seconds, 728 milliseconds)

[info] - 1.2: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (7 seconds, 260 milliseconds)

[info] - 2.0: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (8 seconds, 270 milliseconds)

[info] - 2.1: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (6 seconds, 856 milliseconds)

[info] - 2.2: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (7 seconds, 587 milliseconds)

[info] - 2.3: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (7 seconds, 230 milliseconds)

```

## How was this patch tested?

Test only.

Closes #22644 from dilipbiswal/SPARK-25626.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The Java `foreachBatch` API in `DataStreamWriter` should accept `java.lang.Long` rather than `scala.Long`.

## How was this patch tested?

New java test.

Closes #22633 from zsxwing/fix-java-foreachbatch.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: Shixiong Zhu <zsxwing@gmail.com>

## What changes were proposed in this pull request?

When constructing a DataFrame from a Java bean, using nested beans throws an error despite [documentation](http://spark.apache.org/docs/latest/sql-programming-guide.html#inferring-the-schema-using-reflection) stating otherwise. This PR aims to add that support.

This PR does not yet add nested beans support in array or List fields. This can be added later or in another PR.

## How was this patch tested?

Nested bean was added to the appropriate unit test.

Also manually tested in Spark shell on code emulating the referenced JIRA:

```

scala> import scala.beans.BeanProperty

import scala.beans.BeanProperty

scala> class SubCategory(@BeanProperty var id: String, @BeanProperty var name: String) extends Serializable

defined class SubCategory

scala> class Category(@BeanProperty var id: String, @BeanProperty var subCategory: SubCategory) extends Serializable

defined class Category

scala> import scala.collection.JavaConverters._

import scala.collection.JavaConverters._

scala> spark.createDataFrame(Seq(new Category("s-111", new SubCategory("sc-111", "Sub-1"))).asJava, classOf[Category])

java.lang.IllegalArgumentException: The value (SubCategory@65130cf2) of the type (SubCategory) cannot be converted to struct<id:string,name:string>

at org.apache.spark.sql.catalyst.CatalystTypeConverters$StructConverter.toCatalystImpl(CatalystTypeConverters.scala:262)

at org.apache.spark.sql.catalyst.CatalystTypeConverters$StructConverter.toCatalystImpl(CatalystTypeConverters.scala:238)

at org.apache.spark.sql.catalyst.CatalystTypeConverters$CatalystTypeConverter.toCatalyst(CatalystTypeConverters.scala:103)

at org.apache.spark.sql.catalyst.CatalystTypeConverters$$anonfun$createToCatalystConverter$2.apply(CatalystTypeConverters.scala:396)

at org.apache.spark.sql.SQLContext$$anonfun$beansToRows$1$$anonfun$apply$1.apply(SQLContext.scala:1108)

at org.apache.spark.sql.SQLContext$$anonfun$beansToRows$1$$anonfun$apply$1.apply(SQLContext.scala:1108)

at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:234)

at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:234)

at scala.collection.IndexedSeqOptimized$class.foreach(IndexedSeqOptimized.scala:33)

at scala.collection.mutable.ArrayOps$ofRef.foreach(ArrayOps.scala:186)

at scala.collection.TraversableLike$class.map(TraversableLike.scala:234)

at scala.collection.mutable.ArrayOps$ofRef.map(ArrayOps.scala:186)

at org.apache.spark.sql.SQLContext$$anonfun$beansToRows$1.apply(SQLContext.scala:1108)

at org.apache.spark.sql.SQLContext$$anonfun$beansToRows$1.apply(SQLContext.scala:1106)

at scala.collection.Iterator$$anon$11.next(Iterator.scala:410)

at scala.collection.Iterator$class.toStream(Iterator.scala:1320)

at scala.collection.AbstractIterator.toStream(Iterator.scala:1334)

at scala.collection.TraversableOnce$class.toSeq(TraversableOnce.scala:298)

at scala.collection.AbstractIterator.toSeq(Iterator.scala:1334)

at org.apache.spark.sql.SparkSession.createDataFrame(SparkSession.scala:423)

... 51 elided

```

New behavior:

```

scala> spark.createDataFrame(Seq(new Category("s-111", new SubCategory("sc-111", "Sub-1"))).asJava, classOf[Category])

res0: org.apache.spark.sql.DataFrame = [id: string, subCategory: struct<id: string, name: string>]

scala> res0.show()

+-----+---------------+

| id| subCategory|

+-----+---------------+

|s-111|[sc-111, Sub-1]|

+-----+---------------+

```

Closes #22527 from michalsenkyr/SPARK-17952.

Authored-by: Michal Senkyr <mike.senkyr@gmail.com>

Signed-off-by: Takuya UESHIN <ueshin@databricks.com>

Hi all,

Jackson is incompatible with upstream versions, therefore bump the Jackson version to a more recent one. I ran into some issues with Azure CosmosDB, which uses a more recent version of Jackson. This can be fixed by adding exclusions, and then it works without any issues, so there are no breaking changes in the APIs.

I would also consider bumping the version of Jackson in Spark. I would suggest keeping the dependencies up to date, since in the future this issue will pop up more frequently.

## What changes were proposed in this pull request?

Bump Jackson to 2.9.6

## How was this patch tested?

Compiled and tested it locally to see if anything broke.

Closes #21596 from Fokko/fd-bump-jackson.

Authored-by: Fokko Driesprong <fokkodriesprong@godatadriven.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

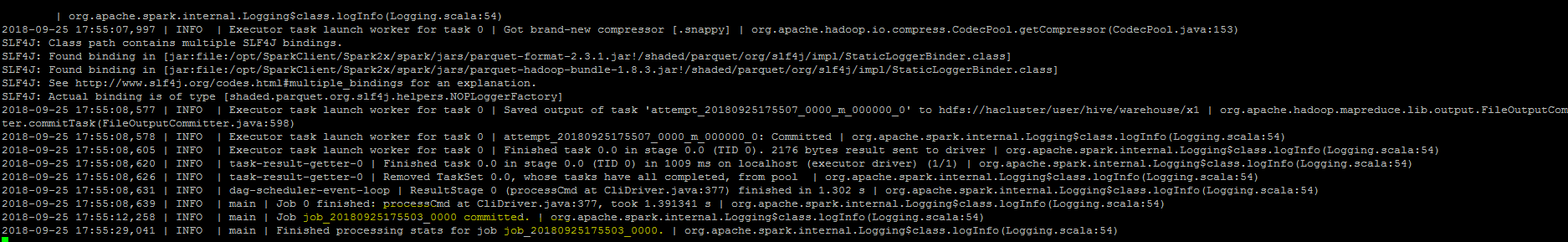

As part of the insert command in `FileFormatWriter`, a job context is created to handle the write operation. While initializing the job context using the `setupJob()` API in `HadoopMapReduceCommitProtocol`, we set the job ID in the `JobContext` configuration. Since `FileFormatWriter` gets the job ID directly from the MapReduce `JobContext`, the job ID comes back as null when it is added to the log. As a solution, we get the job ID from the configuration of the MapReduce `JobContext`.

## How was this patch tested?

Manually, verified the logs after the changes.

Closes #22572 from sujith71955/master_log_issue.

Authored-by: s71955 <sujithchacko.2010@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

While working on another PR, I noticed that there is quite a lot of legacy Java code that can be cleaned up, for example by using features from Java 8, such as:

- Collection libraries

- Try-with-resource blocks

No code has been changed

What are your thoughts on this?

This makes the code easier to read, and using try-with-resources makes it less likely that we forget to close something.

Closes #22399 from Fokko/SPARK-25408.

Authored-by: Fokko Driesprong <fokkodriesprong@godatadriven.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

The test `cast string to timestamp` used to run for all time zones, so it ran more than 600 times. Running the test for a significant subset of time zones is probably good enough, and doing this in a randomized manner means that different runs cover different time zones, so all of them are eventually tested.
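
A minimal sketch of randomized sampling over the JVM's time zone IDs is shown below. It is an illustration only: `TimeZoneSamplingSketch`, `sample`, and the sample size are hypothetical, and the actual helper used by the test suite may differ.

```scala
import java.util.TimeZone
import scala.util.Random

object TimeZoneSamplingSketch {
  // All time zone IDs known to the running JVM.
  val allIds: Seq[String] = TimeZone.getAvailableIDs.toSeq

  // Picks up to n distinct IDs at random; each test run sees a different
  // subset, so coverage accumulates over many runs.
  def sample(n: Int, rng: Random = new Random()): Seq[String] =
    rng.shuffle(allIds).take(n)
}
```

A test could then loop over `TimeZoneSamplingSketch.sample(50)` instead of over every available ID. Logging the random seed would make a failing subset reproducible.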

## How was this patch tested?

The test time is reduced from more than 2 minutes to 11 seconds.

Closes #22631 from mgaido91/SPARK-25605.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Reduce the `DateExpressionsSuite.Hour` test time in Jenkins by reducing the number of iterations.

## How was this patch tested?

Manual tests on my local machine.

before:

```

- Hour (34 seconds, 54 milliseconds)

```

after:

```

- Hour (2 seconds, 697 milliseconds)

```

Closes #22632 from wangyum/SPARK-25606.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The PR changes the test introduced for SPARK-22226, so that we don't run analysis and optimization on the plan. The scope of the test is code generation, and running analysis and optimization is expensive and unnecessary for the test.

The UT was also moved to the `CodeGenerationSuite` which is a better place given the scope of the test.

## How was this patch tested?

Running the UT before SPARK-22226 fails; after it, the UT passes. The execution time is about 50% of the original one. On my laptop this means that the test now runs in about 23 seconds (instead of 50 seconds).

Closes #22629 from mgaido91/SPARK-25609.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Refactor `DatasetBenchmark` to use main method.

Generate benchmark result:

```sh

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.DatasetBenchmark"

```

## How was this patch tested?

manual tests

Closes #22488 from wangyum/SPARK-25479.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

In `SparkPlan.getByteArrayRdd`, we should only call `iter.hasNext` when the limit is not hit, as `iter.hasNext` may produce one row and buffer it, which causes wrong metrics.
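
The effect of the check order can be shown with a small plain-Scala sketch. `BufferingIterator` is a hypothetical stand-in for an iterator whose `hasNext` has to pull a row from upstream; it is not the iterator used in `SparkPlan`.

```scala
object HasNextOrderSketch {
  // Hypothetical iterator that must pull and buffer one upstream row in
  // order to answer hasNext, and counts how many rows it has pulled.
  final class BufferingIterator(upstream: Iterator[Int]) extends Iterator[Int] {
    var pulled = 0
    private var buffered: Option[Int] = None

    def hasNext: Boolean = {
      if (buffered.isEmpty && upstream.hasNext) {
        buffered = Some(upstream.next())
        pulled += 1
      }
      buffered.nonEmpty
    }

    def next(): Int = {
      if (!hasNext) throw new NoSuchElementException("empty iterator")
      val row = buffered.get
      buffered = None
      row
    }
  }

  // Calls hasNext before checking the limit: one extra row gets pulled.
  def pulledWhenHasNextFirst(n: Int): Int = {
    val iter = new BufferingIterator(Iterator.range(0, 10))
    var count = 0
    while (iter.hasNext && count < n) { iter.next(); count += 1 }
    iter.pulled
  }

  // Checks the limit first: hasNext is never called once the limit is hit.
  def pulledWhenLimitFirst(n: Int): Int = {
    val iter = new BufferingIterator(Iterator.range(0, 10))
    var count = 0
    while (count < n && iter.hasNext) { iter.next(); count += 1 }
    iter.pulled
  }
}
```

With a limit of 2, the first variant pulls 3 rows from upstream while the second pulls exactly 2, which is the kind of off-by-one in row metrics the description refers to.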

## How was this patch tested?

new tests

Closes #22621 from cloud-fan/range.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In #20850 when writing non-null decimals, instead of zero-ing all the 16 allocated bytes, we zero-out only the padding bytes. Since we always allocate 16 bytes, if the number of bytes needed for a decimal is lower than 9, then this means that the bytes between 8 and 16 are not zero-ed.

I see 2 solutions here:

- we can zero-out all the bytes in advance as it was done before #20850 (safer solution IMHO);

- we can allocate only the needed bytes (may be a bit more efficient in terms of memory used, but I have not investigated the feasibility of this option).

Hence I propose the first solution here in order to fix the correctness issue. We can switch to the second one later if we think it is more efficient.
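
The first solution can be illustrated with a plain byte array. This is a sketch under assumptions, not the actual writer code: `DecimalSlotSketch`, `writeZeroingFirst`, and the use of `BigInteger.toByteArray` as the decimal's byte representation are all illustrative choices.

```scala
import java.math.BigInteger
import java.util.Arrays

object DecimalSlotSketch {
  val SlotSize = 16 // bytes always reserved per decimal, as described above

  // Zeroes the whole 16-byte slot before copying the value, so that stale
  // bytes from a reused buffer cannot leak into the unused part of the slot.
  def writeZeroingFirst(buf: Array[Byte], offset: Int, unscaled: BigInteger): Int = {
    val bytes = unscaled.toByteArray
    require(bytes.length <= SlotSize, "value does not fit in the slot")
    Arrays.fill(buf, offset, offset + SlotSize, 0.toByte)
    System.arraycopy(bytes, 0, buf, offset, bytes.length)
    bytes.length
  }
}
```

If the buffer previously held other data, every byte of the slot beyond the written value is zero after this call, regardless of how few bytes the value needs.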

## How was this patch tested?

Running the test attached in the JIRA + added UT

Closes #22602 from mgaido91/SPARK-25582.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Refactor `UnsafeArrayDataBenchmark` to use main method.

Generate benchmark result:

```sh

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.UnsafeArrayDataBenchmark"

```

## How was this patch tested?

manual tests

Closes #22491 from wangyum/SPARK-25483.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This PR aims to add `BloomFilterBenchmark`. Apache Spark has supported bloom filters for the ORC data source for a long time. For the Parquet data source, support is expected to be added with the next Parquet release update.

## How was this patch tested?

Manual.

```sh

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.BloomFilterBenchmark"

```

Closes #22605 from dongjoon-hyun/SPARK-25589.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Rename the method `benchmark` in `BenchmarkBase` to `runBenchmarkSuite`. Also add comments.

Currently the method name `benchmark` is a bit confusing. The name is also the same as that of instances of `Benchmark`:

f246813afb/sql/hive/src/test/scala/org/apache/spark/sql/hive/orc/OrcReadBenchmark.scala (L330-L339)

## How was this patch tested?

Unit test.

Closes #22599 from gengliangwang/renameBenchmarkSuite.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This patch is to bump the master branch version to 3.0.0-SNAPSHOT.

## How was this patch tested?

N/A

Closes #22606 from gatorsmile/bump3.0.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

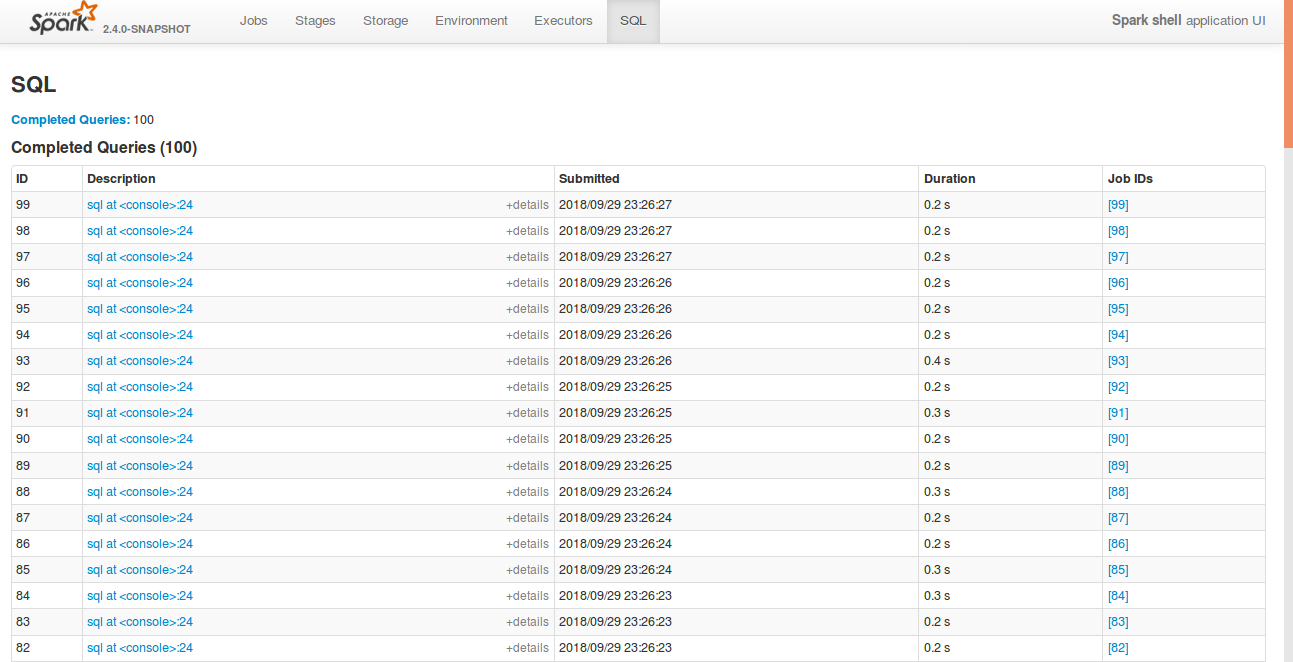

## What changes were proposed in this pull request?

Currently, the SQL tab in the web UI doesn't support hiding tables. Other tabs in the web UI, such as Jobs and Stages, support hiding tables (refer to SPARK-23024 https://github.com/apache/spark/pull/20216).

This PR adds support for hiding tables in the SQL tab as well.

## How was this patch tested?

bin/spark-shell

```

sql("create table a (id int)")

for(i <- 1 to 100) sql(s"insert into a values ($i)")

```

Open SQL tab in the web UI

**Before fix:**

**After fix:** Consistent with the other tabs.

Closes #22592 from shahidki31/SPARK-25575.

Authored-by: Shahid <shahidki31@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This PR does 2 things:

1. Add a new trait(`SqlBasedBenchmark`) to better support Dataset and DataFrame API.

2. Refactor `AggregateBenchmark` to use main method. Generate benchmark result:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.AggregateBenchmark"

```

## How was this patch tested?

manual tests

Closes #22484 from wangyum/SPARK-25476.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

#22519 introduced a bug when the attributes in the pivot clause are cosmetically different from the output ones (e.g. different case). In particular, the problem is that the PR used a `Set[Attribute]` instead of an `AttributeSet`.
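
The difference can be sketched with simplified stand-ins. `Attr` and `AttrSetById` below are hypothetical models, not Spark's `Attribute` and `AttributeSet`; the sketch assumes only that the ID-based set compares attributes by expression ID rather than by full structural equality.

```scala
object AttributeSetSketch {
  // Simplified attribute: a display name plus a unique expression ID.
  final case class Attr(name: String, exprId: Long)

  // Simplified ID-based set: membership depends on exprId only.
  final class AttrSetById(attrs: Iterable[Attr]) {
    private val ids: Set[Long] = attrs.map(_.exprId).toSet
    def contains(a: Attr): Boolean = ids.contains(a.exprId)
  }

  val pivotAttr = Attr("Year", 1L)  // as written in the pivot clause
  val outputAttr = Attr("year", 1L) // same attribute, different case

  val plainSet: Set[Attr] = Set(pivotAttr)
  val idSet = new AttrSetById(Seq(pivotAttr))
}
```

Here `plainSet.contains(outputAttr)` is false because case-class equality also compares the name, while `idSet.contains(outputAttr)` is true.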

## How was this patch tested?

added UT

Closes #22582 from mgaido91/SPARK-25505_followup.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This PR adds a rule to force `.toLowerCase(Locale.ROOT)` or `toUpperCase(Locale.ROOT)`.

It produces an error as below:

```

[error] Are you sure that you want to use toUpperCase or toLowerCase without the root locale? In most cases, you

[error] should use toUpperCase(Locale.ROOT) or toLowerCase(Locale.ROOT) instead.

[error] If you must use toUpperCase or toLowerCase without the root locale, wrap the code block with

[error] // scalastyle:off caselocale

[error] .toUpperCase

[error] .toLowerCase

[error] // scalastyle:on caselocale

```

This PR excludes the cases above in the SQL code path for external calls such as table names and column names.

For test suites, or when it's clear there's no locale problem such as the Turkish locale problem, it uses `Locale.ROOT`.

One minor problem is, `UTF8String` has both methods, `toLowerCase` and `toUpperCase`, and the new rule detects them as well. They are ignored.
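For readers unfamiliar with the Turkish locale problem, here is a minimal sketch using only `java.util.Locale`. The object and method names are made up for illustration.

```scala
import java.util.Locale

object CaseLocaleSketch {
  private val turkish: Locale = Locale.forLanguageTag("tr-TR")

  // Locale-sensitive: under Turkish rules 'I' lowercases to dotless 'ı' (U+0131).
  def lowerTurkish(s: String): String = s.toLowerCase(turkish)

  // Locale-independent: the result does not depend on the JVM default locale.
  def lowerRoot(s: String): String = s.toLowerCase(Locale.ROOT)
}
```

A call to `toLowerCase` without an argument uses the JVM default locale, so on a machine configured for Turkish it behaves like `lowerTurkish`, and a comparison against `"title"` fails.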

## How was this patch tested?

Manually tested, and Jenkins tests.

Closes #22581 from HyukjinKwon/SPARK-25565.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Refactor OrcReadBenchmark to use main method.

Generate benchmark result:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "hive/test:runMain org.apache.spark.sql.hive.orc.OrcReadBenchmark"

```

## How was this patch tested?

manual tests

Closes #22580 from yucai/SPARK-25508.

Lead-authored-by: yucai <yyu1@ebay.com>

Co-authored-by: Yucai Yu <yucai.yu@foxmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

In this PR, I propose to extend the implementation of the existing method:

```

def pivot(pivotColumn: Column, values: Seq[Any]): RelationalGroupedDataset

```

to support values of the struct type. This allows pivoting by multiple columns combined by `struct`:

```

trainingSales
  .groupBy($"sales.year")
  .pivot(
    pivotColumn = struct(lower($"sales.course"), $"training"),
    values = Seq(
      struct(lit("dotnet"), lit("Experts")),
      struct(lit("java"), lit("Dummies")))
  ).agg(sum($"sales.earnings"))

```

## How was this patch tested?

Added a test for values specified via `struct` in Java and Scala.

Closes #22316 from MaxGekk/pivoting-by-multiple-columns2.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In this PR, I propose to extend the `schema_of_json()` function to accept JSON options, since they can affect schema inference. The purpose is to support the same options that `from_json` can use during schema inference.

## How was this patch tested?

Added SQL, Python and Scala tests (`JsonExpressionsSuite` and `JsonFunctionsSuite`) that check that JSON options are used.

Closes #22442 from MaxGekk/schema_of_json-options.

Authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR aims to prevent test slowdowns at `HiveExternalCatalogVersionsSuite` by using the latest Apache Spark 2.3.2 link because the Apache mirrors will remove the old Spark 2.3.1 binaries eventually. `HiveExternalCatalogVersionsSuite` will not fail because [SPARK-24813](https://issues.apache.org/jira/browse/SPARK-24813) implements a fallback logic. However, it will cause many trials and fallbacks in all builds over `branch-2.3/branch-2.4/master`. We had better fix this issue.

## How was this patch tested?

Pass the Jenkins with the updated version.

Closes #22587 from dongjoon-hyun/SPARK-25570.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Currently, in `ParquetFilters`, if one of the child predicates is not supported by Parquet, the entire predicate is thrown away. In fact, if the unsupported predicate is in a top-level `And` condition, or in a child reached before hitting a `Not` or `Or` condition, it can be safely removed.
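The rule above can be sketched with a toy predicate tree. This is not the `ParquetFilters` code: the `Pred` types and the `supported` flag are hypothetical. It assumes the full predicate is still evaluated after the scan, so a pushed-down filter only has to keep a superset of the matching rows.

```scala
sealed trait Pred
final case class Leaf(name: String, supported: Boolean) extends Pred
final case class And(left: Pred, right: Pred) extends Pred
final case class Or(left: Pred, right: Pred) extends Pred
final case class Not(child: Pred) extends Pred

object PushdownSketch {
  // canDropUnsupported stays true only while every ancestor is an And.
  def convert(p: Pred, canDropUnsupported: Boolean = true): Option[Pred] = p match {
    case Leaf(_, ok) => if (ok) Some(p) else None
    case And(l, r) =>
      (convert(l, canDropUnsupported), convert(r, canDropUnsupported)) match {
        case (Some(a), Some(b)) => Some(And(a, b))
        // Dropping one conjunct only widens the result, which is safe at the top level.
        case (Some(a), None) if canDropUnsupported => Some(a)
        case (None, Some(b)) if canDropUnsupported => Some(b)
        case _ => None
      }
    case Or(l, r) =>
      for {
        a <- convert(l, canDropUnsupported = false)
        b <- convert(r, canDropUnsupported = false)
      } yield Or(a, b)
    // Under Not, a widened child would become a narrowed result, so nothing may be dropped.
    case Not(c) => convert(c, canDropUnsupported = false).map(x => Not(x))
  }
}
```

For example, `And(a, u)` with an unsupported `u` converts to just `a`, while `Not(And(a, u))` is not pushed down at all.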

## How was this patch tested?

Tests are added.

Closes #22574 from dbtsai/removeUnsupportedPredicatesInParquet.

Lead-authored-by: DB Tsai <d_tsai@apple.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Co-authored-by: DB Tsai <dbtsai@dbtsai.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Use `Set` instead of `Array` to improve `accumulatorIds.contains(acc.id)` performance.

This PR closes https://github.com/apache/spark/pull/22420

## How was this patch tested?

manual tests.

Benchmark code:

```scala

def benchmark(func: () => Unit): Long = {
  val start = System.currentTimeMillis()
  func()
  val end = System.currentTimeMillis()
  end - start
}

val range = Range(1, 1000000)
val set = range.toSet
val array = range.toArray

for (i <- 0 until 5) {
  val setExecutionTime =
    benchmark(() => for (i <- 0 until 500) { set.contains(scala.util.Random.nextInt()) })
  val arrayExecutionTime =
    benchmark(() => for (i <- 0 until 500) { array.contains(scala.util.Random.nextInt()) })
  println(s"set execution time: $setExecutionTime, array execution time: $arrayExecutionTime")
}

```

Benchmark result:

```

set execution time: 4, array execution time: 2760

set execution time: 1, array execution time: 1911

set execution time: 3, array execution time: 2043

set execution time: 12, array execution time: 2214

set execution time: 6, array execution time: 1770

```

Closes #22579 from wangyum/SPARK-25429.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

**Description from the JIRA :**

Currently, to collect the statistics of all the columns, users need to specify the names of all the columns when calling the command "ANALYZE TABLE ... FOR COLUMNS...". This is not user friendly. Instead, we can introduce the following SQL command to achieve it without specifying the column names.

```

ANALYZE TABLE [db_name.]tablename COMPUTE STATISTICS FOR ALL COLUMNS;

```

## How was this patch tested?

Added new tests in SparkSqlParserSuite and StatisticsSuite

Closes #22566 from dilipbiswal/SPARK-25458.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The grouping columns from a Pivot query are inferred as "input columns - pivot columns - pivot aggregate columns", where input columns are the output of the child relation of Pivot. The grouping columns will be the leading columns in the pivot output and they should preserve the same order as specified by the input. For example,

```

SELECT * FROM (
  SELECT course, earnings, "a" as a, "z" as z, "b" as b, "y" as y, "c" as c, "x" as x, "d" as d, "w" as w
  FROM courseSales
)
PIVOT (
  sum(earnings)
  FOR course IN ('dotNET', 'Java')
)

```

The output columns should be "a, z, b, y, c, x, d, w, ..." but currently they are "a, b, c, d, w, x, y, z, ...".

The fix is to use the child plan's `output` instead of `outputSet` so that the order can be preserved.

## How was this patch tested?

Added UT.

Closes #22519 from maryannxue/spark-25505.

Authored-by: maryannxue <maryannxue@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

`show create table` shows a lot of generated attributes for views that were created by older Spark versions. This PR basically reverts https://issues.apache.org/jira/browse/SPARK-19272, so that `DESC [FORMATTED|EXTENDED] view` shows the original view DDL text.

## How was this patch tested?

Unit test.

Closes #22458 from zheyuan28/testbranch.

Lead-authored-by: Chris Zhao <chris.zhao@databricks.com>

Co-authored-by: Christopher Zhao <chris.zhao@databricks.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

There are 2 places we check for problematic `InSubquery`: the rule `ResolveSubquery` and `InSubquery.checkInputDataTypes`. We should unify them.

## How was this patch tested?

existing tests

Closes #22563 from cloud-fan/followup.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

As per the discussion in https://github.com/apache/spark/pull/22553#pullrequestreview-159192221, overriding `filterKeys` violates the documented semantics.

This PR removes the override and adds documentation.

It also fixes one potentially non-serializable map in `FileStreamOptions`.

The only remaining call of `CaseInsensitiveMap`'s `filterKeys` is

c3c45cbd76/sql/hive/src/main/scala/org/apache/spark/sql/hive/execution/HiveOptions.scala (L88-L90)

But this one is OK.

## How was this patch tested?

Existing unit tests.

Closes #22562 from gengliangwang/SPARK-25541-FOLLOWUP.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The PR removes the `InSubquery` expression, which was introduced a long time ago and whose only usage was removed in 4ce970d714. Hence it is not used anymore.

## How was this patch tested?

existing UTs

Closes #22556 from mgaido91/minor_insubq.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Thanks to bahchis for reporting this. This is a follow-up to #16581: this PR fixes the scenario where a Python UDF in a join condition accesses attributes from both sides of the join.

## How was this patch tested?

Add regression tests in PySpark and `BatchEvalPythonExecSuite`.

Closes #22326 from xuanyuanking/SPARK-25314.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In ElementAt, when the first argument is a MapType, we should coerce the key type and the second argument based on findTightestCommonType. This does not happen currently. We may produce wrong output because we incorrectly downcast the right-hand-side double expression to int.

```SQL

spark-sql> select element_at(map(1,"one", 2, "two"), 2.2);

two

```

Also, when the first argument is an ArrayType, the second argument should be an integer type or a smaller integral type that can be safely cast to an integer type. Currently we may do an unsafe cast. In the following case, we should fail with an error, as 1.24 is not an integer index. Instead, we currently downcast it to int and return a result.

```SQL

spark-sql> select element_at(array(1,2), 1.24D);

1

```

This PR also supports implicit cast between two MapTypes. I have followed similar logic that exists today to do implicit casts between two array types.
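The map-key problem described above can be reproduced in plain Scala, outside Spark. The following sketch is only an analogy: `lookupByDowncast` mimics casting the lookup key down to the map's key type, while `lookupByWidening` mimics coercing both sides to a common wider type. Neither is Spark's type-coercion code.

```scala
object ElementAtSketch {
  val m: Map[Int, String] = Map(1 -> "one", 2 -> "two")

  // Down-casting the lookup key truncates 2.2 to 2 and finds a value that should not match.
  def lookupByDowncast(key: Double): Option[String] = m.get(key.toInt)

  // Widening the map keys to the common type (Double) keeps 2.2 distinct from 2.0.
  def lookupByWidening(key: Double): Option[String] =
    m.map { case (k, v) => k.toDouble -> v }.get(key)
}
```

Here `lookupByDowncast(2.2)` returns `Some("two")`, matching the wrong output shown above, while `lookupByWidening(2.2)` finds nothing.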

## How was this patch tested?

Added new tests in DataFrameFunctionsSuite, TypeCoercionSuite.

Closes #22544 from dilipbiswal/SPARK-25522.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

We have an agreement that the behavior of `from/to_utc_timestamp` is correct, although the function itself doesn't make much sense in Spark: https://issues.apache.org/jira/browse/SPARK-23715

This PR improves the documentation.

## How was this patch tested?

N/A

Closes #22543 from cloud-fan/doc.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Refactor `UnsafeProjectionBenchmark` to use main method.

Generate benchmark result:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "catalyst/test:runMain org.apache.spark.sql.UnsafeProjectionBenchmark"

```

## How was this patch tested?

manual test

Closes #22493 from yucai/SPARK-25485.

Lead-authored-by: yucai <yyu1@ebay.com>

Co-authored-by: Yucai Yu <yucai.yu@foxmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Refactor `ColumnarBatchBenchmark` to use main method.

Generate benchmark result:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.vectorized.ColumnarBatchBenchmark"

```

## How was this patch tested?

manual tests

Closes #22490 from yucai/SPARK-25481.

Lead-authored-by: yucai <yyu1@ebay.com>

Co-authored-by: Yucai Yu <yucai.yu@foxmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

https://github.com/apache/spark/pull/20023 proposed to allow precision loss during decimal operations, to reduce the possibility of overflow. This is a behavior change and is protected by the DECIMAL_OPERATIONS_ALLOW_PREC_LOSS config. However, that PR introduced another behavior change: picking a minimum precision for integral literals, which is not protected by a config. This PR adds a new config for it: `spark.sql.literal.pickMinimumPrecision`.

This allows users to work around the issue in SPARK-25454, which is caused by a long-standing bug with negative scale.

## How was this patch tested?

a new test

Closes #22494 from cloud-fan/decimal.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Describe spark.sql.parquet.writeLegacyFormat property in Spark SQL, DataFrames and Datasets Guide.

## How was this patch tested?

N/A

Closes #22453 from seancxmao/SPARK-20937.

Authored-by: seancxmao <seancxmao@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR contains 3 optimizations:

1) It significantly improves the `--` operation on `AttributeSet`. As a benchmark for the `--` operation, the following code was run:

```

test("AttributeSet -- benchmark") {
  val attrSetA = AttributeSet((1 to 100).map { i => AttributeReference(s"c$i", IntegerType)() })
  val attrSetB = AttributeSet(attrSetA.take(80).toSeq)
  val attrSetC = AttributeSet((1 to 100).map { i => AttributeReference(s"c2_$i", IntegerType)() })
  val attrSetD = AttributeSet((attrSetA.take(50) ++ attrSetC.take(50)).toSeq)
  val attrSetE = AttributeSet((attrSetC.take(50) ++ attrSetA.take(50)).toSeq)
  val n_iter = 1000000
  val t0 = System.nanoTime()
  (1 to n_iter) foreach { _ =>
    val r1 = attrSetA -- attrSetB
    val r2 = attrSetA -- attrSetC
    val r3 = attrSetA -- attrSetD
    val r4 = attrSetA -- attrSetE
  }
  val t1 = System.nanoTime()
  val totalTime = t1 - t0
  println(s"Average time: ${totalTime / n_iter} us")
}

```

The results are:

```

Before PR - Average time: 67674 us (100 %)

After PR - Average time: 28827 us (42.6 %)

```

2) In `ColumnPruning`, it replaces the occurrences of `(attributeSet1 -- attributeSet2).nonEmpty` with the equivalent `!attributeSet1.subsetOf(attributeSet2)`, which is orders of magnitude more efficient (especially when there are many attributes). Running the previous benchmark with `--` replaced by `subsetOf` returns:

```

Average time: 67 us (0.1 %)

```
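The equivalence behind optimization (2) holds for any set type: a difference is non-empty exactly when the left side is not a subset of the right side. A minimal sketch with plain Scala `Set`s (not `AttributeSet`) is:

```scala
object SubsetSketch {
  // Builds the whole difference only to test whether it is empty.
  def viaDifference[A](a: Set[A], b: Set[A]): Boolean = (a -- b).nonEmpty

  // Same answer without materializing a new set; note the negation.
  def viaSubsetOf[A](a: Set[A], b: Set[A]): Boolean = !a.subsetOf(b)
}
```

Both functions return the same result for every pair of sets; only the amount of work differs.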

3) Provides a more efficient way of building `AttributeSet`s, which can greatly improve the performance of the methods `references` and `outputSet` of `Expression` and `QueryPlan`. This basically avoids unneeded operations (eg. creating many `AttributeEqual` wrapper classes which could be avoided)

The overall effect of those optimizations has been tested on `ColumnPruning` with the following benchmark:

```

test("ColumnPruning benchmark") {
  val attrSetA = (1 to 100).map { i => AttributeReference(s"c$i", IntegerType)() }
  val attrSetB = attrSetA.take(80)
  val attrSetC = attrSetA.take(20).map(a => Alias(Add(a, Literal(1)), s"${a.name}_1")())
  val input = LocalRelation(attrSetA)
  val query1 = Project(attrSetB, Project(attrSetA, input)).analyze
  val query2 = Project(attrSetC, Project(attrSetA, input)).analyze
  val query3 = Project(attrSetA, Project(attrSetA, input)).analyze
  val nIter = 100000
  val t0 = System.nanoTime()
  (1 to nIter).foreach { _ =>
    ColumnPruning(query1)
    ColumnPruning(query2)
    ColumnPruning(query3)
  }
  val t1 = System.nanoTime()
  val totalTime = t1 - t0
  println(s"Average time: ${totalTime / nIter} us")
}

```

The output of the test is:

```

Before PR - Average time: 733471 us (100 %)

After PR - Average time: 362455 us (49.4 %)

```

The performance improvement has been evaluated also on the `SQLQueryTestSuite`'s queries:

```

(before) org.apache.spark.sql.catalyst.optimizer.ColumnPruning 518413198 / 1377707172 2756 / 15717

(after) org.apache.spark.sql.catalyst.optimizer.ColumnPruning 415432579 / 1121147950 2756 / 15717

% Running time 80.1% / 81.3%

```

Other rules also benefit, especially from (3), although the impact is lower, e.g.:

```

(before) org.apache.spark.sql.catalyst.analysis.Analyzer$ResolveReferences 307341442 / 623436806 2154 / 16480

(after) org.apache.spark.sql.catalyst.analysis.Analyzer$ResolveReferences 290511312 / 560962495 2154 / 16480

% Running time 94.5% / 90.0%

```

The reason why the impact on the `SQLQueryTestSuite`'s queries is lower compared to the other benchmark is that the optimizations are more significant when the number of attributes involved is higher. Since in the tests we often have very few attributes, the effect there is lower.

## How was this patch tested?

run benchmarks + existing UTs

Closes #22364 from mgaido91/SPARK-25379.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

`CaseInsensitiveMap` is declared as Serializable. However, it is not serializable after the `-` operator or the `filterKeys` method is applied.

This PR fixes the issue by overriding the operator `-` and the method `filterKeys`, so that we can avoid a potential `NotSerializableException` when using `CaseInsensitiveMap`.
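As background, and as far as I understand the Scala 2.11/2.12 collections, `filterKeys` returns a lazy wrapper around the original map rather than a new map, and that wrapper is not serializable. The sketch below shows a strict alternative in plain Scala together with a small Java-serialization round trip to check the result. The object and method names are hypothetical and this is not the `CaseInsensitiveMap` code.

```scala
import java.io.{ByteArrayInputStream, ByteArrayOutputStream, ObjectInputStream, ObjectOutputStream}

object SerializableMapSketch {
  // Strict filtering materializes a new immutable Map instead of returning a lazy view.
  def dropKey(m: Map[String, String], key: String): Map[String, String] =
    m.filter { case (k, _) => !k.equalsIgnoreCase(key) }

  // Round-trips a value through Java serialization; throws NotSerializableException on failure.
  def roundTrip[T <: AnyRef](value: T): T = {
    val bytes = new ByteArrayOutputStream()
    val out = new ObjectOutputStream(bytes)
    out.writeObject(value)
    out.close()
    val in = new ObjectInputStream(new ByteArrayInputStream(bytes.toByteArray))
    try in.readObject().asInstanceOf[T] finally in.close()
  }
}
```

A round trip like this is a cheap way to assert in a unit test that a derived map can still be shipped to executors.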

## How was this patch tested?

New test suite.

Closes #22553 from gengliangwang/fixCaseInsensitiveMap.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Currently, Spark has 7 `withTempPath` and 6 `withSQLConf` functions. This PR aims to remove duplicated and inconsistent code and reduce them to the following meaningful implementations.

**withTempPath**

- `SQLHelper.withTempPath`: The one which was used in `SQLTestUtils`.

**withSQLConf**

- `SQLHelper.withSQLConf`: The one which was used in `PlanTest`.

- `ExecutorSideSQLConfSuite.withSQLConf`: The one which doesn't throw `AnalysisException` on StaticConf changes.

- `SQLTestUtils.withSQLConf`: The one which overrides intentionally to change the active session.

```scala

protected override def withSQLConf(pairs: (String, String)*)(f: => Unit): Unit = {
  SparkSession.setActiveSession(spark)
  super.withSQLConf(pairs: _*)(f)
}

```
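All of these helpers follow the same "set, run, restore" pattern. A minimal sketch of that pattern over a plain mutable map is shown below; `ConfSketch` and `withConf` are hypothetical names, and the real `withSQLConf` implementations operate on `SQLConf` instead.

```scala
import scala.collection.mutable

object ConfSketch {
  // Hypothetical stand-in for a session's mutable configuration.
  val conf: mutable.Map[String, String] = mutable.Map.empty

  // Sets the given pairs, runs `f`, then restores the previous state even if `f` throws.
  def withConf[T](pairs: (String, String)*)(f: => T): T = {
    val previous = pairs.map { case (k, _) => k -> conf.get(k) }
    pairs.foreach { case (k, v) => conf(k) = v }
    try f finally {
      previous.foreach {
        case (k, Some(old)) => conf(k) = old
        case (k, None) => conf.remove(k)
      }
    }
  }
}
```

Keys that were unset before the call are removed again afterwards, so tests cannot leak configuration into each other.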

## How was this patch tested?

Pass the Jenkins with the existing tests.

Closes #22548 from dongjoon-hyun/SPARK-25534.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

The PR introduces a new JSON option, `pretty`, which turns on the `DefaultPrettyPrinter` of `Jackson`'s JSON generator. The new option is useful for exploring deeply nested columns and for converting JSON columns to a more readable representation (see the added test).

## How was this patch tested?

Added a round-trip test which converts a JSON string to its pretty representation via `from_json()` and `to_json()`.

Closes #22534 from MaxGekk/pretty-json.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

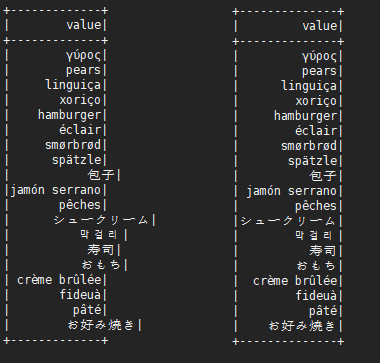

Add the legacy prefix for `spark.sql.execution.pandas.groupedMap.assignColumnsByPosition` and rename it to `spark.sql.legacy.execution.pandas.groupedMap.assignColumnsByName`.

## How was this patch tested?

The existing tests.

Closes #22540 from gatorsmile/renameAssignColumnsByPosition.

Lead-authored-by: gatorsmile <gatorsmile@gmail.com>

Co-authored-by: Hyukjin Kwon <gurwls223@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Refactor SortBenchmark to use main method.

Generate benchmark result:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.SortBenchmark"

```

## How was this patch tested?

manual tests

Closes #22495 from yucai/SPARK-25486.

Authored-by: yucai <yyu1@ebay.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This patch reverts entirely all the regr_* functions added in SPARK-23907. These were added by mgaido91 (and proposed by gatorsmile) to improve compatibility with other database systems, without any actual use cases. However, they are very rarely used, and in Spark there are much better ways to compute these functions, due to Spark's flexibility in exposing real programming APIs.

I'm going through all the APIs added in Spark 2.4 and I think we should revert these. If there are strong enough demands and more use cases, we can add them back in the future pretty easily.

## How was this patch tested?

Reverted test cases also.

Closes #22541 from rxin/SPARK-23907.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In ArrayRemove, we currently cast the right-hand-side expression to match the element type of the left-hand-side Array. This may result in down casting and may return a wrong or questionable result.

Example:

```SQL

spark-sql> select array_remove(array(1,2,3), 1.23D);

[2,3]

```

```SQL

spark-sql> select array_remove(array(1,2,3), 'foo');

NULL

```

We should safely coerce both left and right hand side expressions.

## How was this patch tested?

Added tests in DataFrameFunctionsSuite

Closes #22542 from dilipbiswal/SPARK-25519.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In ArrayPosition, we currently cast the right-hand-side expression to match the element type of the left-hand-side Array. This may result in down casting and may return a wrong or questionable result.

Example:

```SQL

spark-sql> select array_position(array(1), 1.34);

1

```

```SQL

spark-sql> select array_position(array(1), 'foo');

null

```

We should safely coerce both left and right hand side expressions.

## How was this patch tested?

Added tests in DataFrameFunctionsSuite

Closes #22407 from dilipbiswal/SPARK-25416.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The function actually exists in the currently selected database, but it fails to initialize during `lookupFunction`. However, the exception message is:

```

This function is neither a registered temporary function nor a permanent function registered in the database 'default'.

```

This makes the problem hard to locate. This PR fixes the message.

## How was this patch tested?

new test case + manual tests

Closes #18544 from stanzhai/fix-udf-error-message.

Authored-by: Stan Zhai <mail@stanzhai.site>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Refactor `CompressionSchemeBenchmark` to use main method.

Generate benchmark result:

```sh

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.columnar.compression.CompressionSchemeBenchmark"

```

## How was this patch tested?

manual tests

Closes #22486 from wangyum/SPARK-25478.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Add `Locale.ROOT` when `toUpperCase`.

## How was this patch tested?

manual tests

Closes #22531 from wangyum/SPARK-25415.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Currently the file [parquetSuites.scala](f29c2b5287/sql/hive/src/test/scala/org/apache/spark/sql/hive/parquetSuites.scala) is not easy to recognize.

When I tried to find the test suites for built-in Parquet conversions for Hive serde, I could only find [HiveParquetSuite](f29c2b5287/sql/hive/src/test/scala/org/apache/spark/sql/hive/HiveParquetSuite.scala) in the first few minutes.

This PR is to:

1. Rename `ParquetMetastoreSuite` to `HiveParquetMetastoreSuite`, and create a single file for it.

2. Rename `ParquetSourceSuite` to `HiveParquetSourceSuite`, and create a single file for it.

3. Create a single file for `ParquetPartitioningTest`.

4. Delete `parquetSuites.scala`.

## How was this patch tested?

Unit test

Closes #22467 from gengliangwang/refactor_parquet_suites.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

One more legacy config to go ...

Closes #22515 from rxin/allowCreatingManagedTableUsingNonemptyLocation.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Currently there are two classes with the same name, `BenchmarkBase`:

1. `org.apache.spark.util.BenchmarkBase`

2. `org.apache.spark.sql.execution.benchmark.BenchmarkBase`

This is very confusing. The benchmark object `org.apache.spark.sql.execution.benchmark.FilterPushdownBenchmark` uses `org.apache.spark.util.BenchmarkBase`, while there is another class `BenchmarkBase` in its own package.

Here I propose:

1. The class `org.apache.spark.util.BenchmarkBase` should be in the test package of the core module. Move it to package `org.apache.spark.benchmark`.

2. Move `org.apache.spark.util.Benchmark` to the test package of the core module, in package `org.apache.spark.benchmark`.

3. Rename the class `org.apache.spark.sql.execution.benchmark.BenchmarkBase` to `BenchmarkWithCodegen`.

## How was this patch tested?

Unit test

Closes #22513 from gengliangwang/refactorBenchmarkBase.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The PR proposes to avoid pattern matching on each call of the `eval` method within:

- `Concat`

- `Reverse`

- `ElementAt`
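The idea can be sketched as follows: decide once, based on the data type, which function to use, and let `eval` just call it. The types and the `ReverseSketch` class below are hypothetical simplifications, not the Catalyst expression classes.

```scala
sealed trait DataType
case object StringType extends DataType
case object BinaryType extends DataType

// Hypothetical expression that reverses its input; not Spark's actual `Reverse`.
final class ReverseSketch(dataType: DataType) {
  // The match runs once, when the function is first needed, not on every row.
  private lazy val doReverse: Any => Any = dataType match {
    case StringType => (in: Any) => in.asInstanceOf[String].reverse
    case BinaryType => (in: Any) => in.asInstanceOf[Array[Byte]].reverse
  }

  // Per-row path: a plain function call with no pattern matching.
  def eval(input: Any): Any = doReverse(input)
}
```

The data type of an expression does not change between rows, so hoisting the match out of `eval` does not change the result.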

## How was this patch tested?

Ran the existing tests for the `Concat`, `Reverse` and `ElementAt` expression classes.

Closes #22471 from mn-mikke/SPARK-25470.

Authored-by: Marek Novotny <mn.mikke@gmail.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

## What changes were proposed in this pull request?

See title. Makes our legacy backward compatibility configs more consistent.

## How was this patch tested?

Make sure all references have been updated:

```

> git grep compareDateTimestampInTimestamp

docs/sql-programming-guide.md: - Since Spark 2.4, Spark compares a DATE type with a TIMESTAMP type after promotes both sides to TIMESTAMP. To set `false` to `spark.sql.legacy.compareDateTimestampInTimestamp` restores the previous behavior. This option will be removed in Spark 3.0.

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/TypeCoercion.scala: // if conf.compareDateTimestampInTimestamp is true

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/TypeCoercion.scala: => if (conf.compareDateTimestampInTimestamp) Some(TimestampType) else Some(StringType)

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/TypeCoercion.scala: => if (conf.compareDateTimestampInTimestamp) Some(TimestampType) else Some(StringType)

sql/catalyst/src/main/scala/org/apache/spark/sql/internal/SQLConf.scala: buildConf("spark.sql.legacy.compareDateTimestampInTimestamp")

sql/catalyst/src/main/scala/org/apache/spark/sql/internal/SQLConf.scala: def compareDateTimestampInTimestamp : Boolean = getConf(COMPARE_DATE_TIMESTAMP_IN_TIMESTAMP)

sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/analysis/TypeCoercionSuite.scala: "spark.sql.legacy.compareDateTimestampInTimestamp" -> convertToTS.toString) {

```

Closes #22508 from rxin/SPARK-23549.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

See above. This should go into the 2.4 release.

Closes #22509 from rxin/SPARK-25384.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Refactor PrimitiveArrayBenchmark to use a main method and print the output to a separate file.

Run the command below to generate benchmark results:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.PrimitiveArrayBenchmark"

```

## How was this patch tested?

Manual tests.

Closes #22497 from seancxmao/SPARK-25487.

Authored-by: seancxmao <seancxmao@gmail.com>

Signed-off-by: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

## What changes were proposed in this pull request?

This reverts commit 417ad92502.

We decided to keep the current behavior unchanged and will consider whether to deprecate these functions in 3.0. For more details, see the discussion in https://issues.apache.org/jira/browse/SPARK-23715

## How was this patch tested?

The existing tests.

Closes #22505 from gatorsmile/revertSpark-23715.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Refactor `DataSourceWriteBenchmark` and add write benchmark for AVRO.

## How was this patch tested?

Build and run the benchmark.

Closes #22451 from gengliangwang/avroWriteBenchmark.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Legitimate stops of streams may actually cause an exception to be captured by stream execution, because the job throws a SparkException regarding job cancellation during a stop. This PR makes the stop more graceful by swallowing this cancellation error.

## How was this patch tested?

This is pretty hard to test. The existing tests should make sure that we're not swallowing other specific SparkExceptions. I've also run the `KafkaSourceStressForDontFailOnDataLossSuite` 100 times, and it didn't fail, whereas it used to be flaky.

Closes #22478 from brkyvz/SPARK-25472.

Authored-by: Burak Yavuz <brkyvz@gmail.com>

Signed-off-by: Burak Yavuz <brkyvz@gmail.com>

## What changes were proposed in this pull request?

In this PR, I propose to add an overloaded method for `sampleBy` which accepts a first argument of the `Column` type. This allows sampling by any complex column as well as sampling by multiple columns. For example:

```Scala

spark.createDataFrame(Seq(("Bob", 17), ("Alice", 10), ("Nico", 8), ("Bob", 17),
    ("Alice", 10))).toDF("name", "age")
  .stat
  .sampleBy(struct($"name", $"age"), Map(Row("Alice", 10) -> 0.3, Row("Nico", 8) -> 1.0), 36L)
  .show()

+-----+---+
| name|age|
+-----+---+
| Nico|  8|
|Alice| 10|
+-----+---+

```
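For intuition, stratified sampling of this kind can be sketched on a local collection: each row is kept with the probability assigned to its key, and keys missing from the map are treated as fraction zero. This is a simplified local analogy with hypothetical names, not Spark's implementation, and it will not reproduce the exact rows shown above for a given seed.

```scala
import scala.util.Random

object SampleBySketch {
  // Keeps each row with the probability assigned to its stratum key; unlisted keys get 0.
  def sampleBy[K, R](rows: Seq[R], key: R => K, fractions: Map[K, Double], seed: Long): Seq[R] = {
    val rng = new Random(seed)
    rows.filter(r => rng.nextDouble() < fractions.getOrElse(key(r), 0.0))
  }
}
```

Because the key is an arbitrary function of the row, a composite key such as a `(name, age)` tuple plays the role of the `struct` column in the example above.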

## How was this patch tested?

Added a new test for sampling by multiple columns in Scala, and tests in Java and Python to check that `sampleBy` is able to sample by a `Column` type argument.

Closes #22365 from MaxGekk/sample-by-column.

Authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

The problem was caused by the PushProjectThroughUnion rule, which, when creating a new Project for each child of Union, uses the same exprId for expressions at the same position. This is wrong because the expressions for each child of Union are independent, and it can lead to a wrong result if other rules like FoldablePropagation kick in and treat two different expressions as the same.

This fix is to create new expressions in the new Project for each child of Union.
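The difference between reusing and regenerating expression IDs can be modeled in plain Scala. The sketch below is a simplified illustration and not Catalyst code: `Alias`, `newAlias`, and `pushToChild` are hypothetical stand-ins for the real expression classes and the rule's rewrite step.

```scala
import java.util.concurrent.atomic.AtomicLong

object FreshExprIds {
  private val counter = new AtomicLong(0L)

  // Simplified model of a named expression that carries a unique ID.
  final case class Alias(name: String, childExpr: String, exprId: Long)

  // Every call hands out a new ID, so two aliases never collide by accident.
  def newAlias(name: String, childExpr: String): Alias =
    Alias(name, childExpr, counter.getAndIncrement())

  // Rewrite the project list for one union child, creating new aliases
  // instead of copying the IDs from the original project list.
  def pushToChild(projectList: Seq[Alias], childName: String): Seq[Alias] =
    projectList.map(a => newAlias(a.name, s"$childName.${a.childExpr}"))
}
```

With this shape, the projections pushed into two different children keep the same output names but get distinct IDs, so a later rule cannot mistake one for the other.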

## How was this patch tested?

Added UT.

Closes #22447 from maryannxue/push-project-thru-union-bug.

Authored-by: maryannxue <maryannxue@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

In ArrayContains, we currently cast the right-hand side expression to match the element type of the left-hand side array. This may result in a down cast and return a wrong or questionable result.

Examples:

```SQL
spark-sql> select array_contains(array(1), 1.34);
true
```

```SQL
spark-sql> select array_contains(array(1), 'foo');
null
```

We should safely coerce both left and right hand side expressions.
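To see why the down cast is a problem, the two strategies can be modeled with plain Scala collections. This is an illustration only and does not use Spark's type coercion rules: the helper names are hypothetical, and `toInt` / `toDouble` stand in for the casts.

```scala
object ArrayContainsCoercion {
  // Modeled old behavior: cast the probe value down to the element type.
  // 1.34 is truncated to 1, so the lookup succeeds unexpectedly.
  def containsWithDowncast(arr: Seq[Int], value: Double): Boolean =
    arr.contains(value.toInt)

  // Modeled safer behavior: widen the elements to the common type instead,
  // so no information is lost from either side.
  def containsWithWidening(arr: Seq[Int], value: Double): Boolean =
    arr.map(_.toDouble).contains(value)

  def main(args: Array[String]): Unit = {
    println(containsWithDowncast(Seq(1), 1.34)) // true
    println(containsWithWidening(Seq(1), 1.34)) // false
  }
}
```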

## How was this patch tested?

Added tests in `DataFrameFunctionsSuite`.

Closes #22408 from dilipbiswal/SPARK-25417.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR proposes to respect `SessionConfigSupport` in Structured Streaming (SS) data sources as well. Currently it is only respected in batch sources:

e06da95cd9/sql/core/src/main/scala/org/apache/spark/sql/DataFrameReader.scala (L198-L203)

e06da95cd9/sql/core/src/main/scala/org/apache/spark/sql/DataFrameWriter.scala (L244-L249)

If a developer implements a Data Source V2 source that supports both structured streaming and batch jobs, batch jobs respect a session-level configuration, for example a URL to connect to and fetch data from (which end users might not be aware of). Structured streaming, however, does not pick it up, so the value has to be set explicitly in the options.

## How was this patch tested?

Unit tests were added.

Closes #22462 from HyukjinKwon/SPARK-25460.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This reverts a sequence of PRs, based on the discussion and comments at https://github.com/apache/spark/pull/16677#issuecomment-422650759.

#22344, #22330, #22239, #16677

## How was this patch tested?

Existing tests.

Closes #22481 from viirya/revert-SPARK-19355-1.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Refactor `FilterPushdownBenchmark` to use a `main` method. The benchmark can now be run in 3 ways:

1. bin/spark-submit --class org.apache.spark.sql.execution.benchmark.FilterPushdownBenchmark spark-sql_2.11-2.5.0-SNAPSHOT-tests.jar

2. build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.FilterPushdownBenchmark"

3. SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.FilterPushdownBenchmark"

Methods 2 and 3 do not need to compile the `spark-sql_*-tests.jar` package, so they are mainly for developers who want to run the benchmark quickly.

## How was this patch tested?

Manual tests.

Closes #22443 from wangyum/SPARK-25339.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This patch adds an "optimizer" prefix to nested schema pruning.

## How was this patch tested?

Should be covered by existing tests.

Closes #22475 from rxin/SPARK-4502.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The PR proposes that the `div` operator return the data type of its operands. Before the PR, `bigint` was always returned. It also introduces a `spark.sql.legacy.integralDivide.returnBigint` config to let users restore the legacy behavior.

## How was this patch tested?

Added UTs.

Closes #22465 from mgaido91/SPARK-25457.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This patch renames the config option `spark.sql.streaming.noDataMicroBatchesEnabled` to `spark.sql.streaming.noDataMicroBatches.enabled` to be more consistent with the rest of the configs. Unfortunately there is one other streaming config with the old naming style, `spark.sql.streaming.metricsEnabled`. For that one we should just use a fallback config and change it in a separate patch.
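The fallback approach mentioned for `spark.sql.streaming.metricsEnabled` can be sketched in plain Scala as a lookup that prefers a new key and falls back to the old one. This is an illustration only, not Spark's `SQLConf` implementation; the key `spark.sql.streaming.metrics.enabled` and the `lookup` helper are hypothetical.

```scala
object ConfigFallback {
  // Hypothetical new-style key; the text above only names the old one.
  val NewKey = "spark.sql.streaming.metrics.enabled"
  val OldKey = "spark.sql.streaming.metricsEnabled"

  // Prefer the new key, then the old key, then the default value.
  def lookup(
      conf: Map[String, String],
      key: String,
      fallbackKey: String,
      default: String): String =
    conf.get(key).orElse(conf.get(fallbackKey)).getOrElse(default)
}
```

A session that still sets only the old key keeps working, while a session that sets both sees the new key win.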

## How was this patch tested?

Made sure no other references to this config are in the code base:

```
> git grep "noDataMicro"
sql/catalyst/src/main/scala/org/apache/spark/sql/internal/SQLConf.scala: buildConf("spark.sql.streaming.noDataMicroBatches.enabled")
```

Closes #22476 from rxin/SPARK-24157.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: Reynold Xin <rxin@databricks.com>

## What changes were proposed in this pull request?

For self-join/self-union, Spark will produce a physical plan which has multiple `DataSourceV2ScanExec` instances referring to the same `ReadSupport` instance. In this case, the streaming source is indeed scanned multiple times, and the `numInputRows` metrics should be counted for each scan.

We already have 2 test cases that verify this behavior:

1. `StreamingQuerySuite.input row calculation with same V2 source used twice in self-join`

2. `KafkaMicroBatchSourceSuiteBase.ensure stream-stream self-join generates only one offset in log and correct metrics`.

However, the expected results in these 2 tests differ, which is confusing. It turns out that the first test doesn't trigger exchange reuse, so the source is scanned twice. The second test triggers exchange reuse, so the source is scanned only once.

This PR proposes to improve these 2 tests so that each runs both with and without exchange reuse.

## How was this patch tested?

Test-only change.

Closes #22402 from cloud-fan/bug.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

SPARK-23711 added support in `UnsafeProjection` for falling back to an interpreted mode. This PR adds the same fallback to `MutableProjection`, based on `CodeGeneratorWithInterpretedFallback`.

## How was this patch tested?

Added tests in `CodeGeneratorWithInterpretedFallbackSuite`.

Closes #22355 from maropu/SPARK-25358.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

To be more consistent with other statistics based configs.

## How was this patch tested?

N/A - straightforward rename of config option. Used `git grep` to make sure there are no remaining mentions of it.

Closes #22457 from rxin/SPARK-24626.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

`spark.sql.fromJsonForceNullableSchema` -> `spark.sql.function.fromJson.forceNullable`

## How was this patch tested?

Made sure there are no more references to `spark.sql.fromJsonForceNullableSchema`.

Closes #22459 from rxin/SPARK-23173.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

PythonForeachWriterSuite was failing because RowQueue now needs to have a handle on a SparkEnv with a SerializerManager, so added a mock env with a serializer manager.

Also fixed a typo in the `finally` that was hiding the real exception.

Tested PythonForeachWriterSuite locally, full tests via Jenkins.

Closes #22452 from squito/SPARK-25456.

Authored-by: Imran Rashid <irashid@cloudera.com>

Signed-off-by: Imran Rashid <irashid@cloudera.com>

## What changes were proposed in this pull request?

SPARK-22333 introduced a regression in the resolution of `CURRENT_DATE` and `CURRENT_TIMESTAMP`. Before that ticket, these 2 functions were resolved in a case-insensitive way. After it, the resolution depends on the value of `spark.sql.caseSensitive`.

The PR restores the previous behavior and makes their resolution case insensitive regardless of that setting. The PR takes over #21217, therefore it closes #21217 and credit for this patch should be given to jamesthomp.
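The restored behavior amounts to matching these two names without regard to case, independent of any case-sensitivity setting. A minimal plain-Scala sketch of that check follows; it is not the analyzer code, and `CurrentFunctionResolution` and `isCurrentFunction` are hypothetical names.

```scala
import java.util.Locale

object CurrentFunctionResolution {
  // The two names that should resolve regardless of how they are capitalized.
  private val names = Set("current_date", "current_timestamp")

  // Normalize with a fixed locale so the result does not depend on the JVM default.
  def isCurrentFunction(name: String): Boolean =
    names.contains(name.toLowerCase(Locale.ROOT))
}
```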

## How was this patch tested?

Added UT.

Closes #22440 from mgaido91/SPARK-24151.

Lead-authored-by: James Thompson <jamesthomp@users.noreply.github.com>

Co-authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This PR simplifies the `GenArrayData.genCodeToCreateArrayData` method by using the `ArrayData.createArrayData` method.

Before this PR, the `genCodeToCreateArrayData` method was complicated. It

* Generated a temporary Java array to create `ArrayData`

* Had separate code generation path to assign values for `GenericArrayData` and `UnsafeArrayData`

After this PR, the method

* Directly generates `GenericArrayData` or `UnsafeArrayData` without a temporary array

* Has only one code generation path to assign values

## How was this patch tested?

Existing UTs

Closes #22439 from kiszk/SPARK-25444.

Authored-by: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Signed-off-by: Takuya UESHIN <ueshin@databricks.com>

## What changes were proposed in this pull request?