### What changes were proposed in this pull request?

Add a new class, HybridStore, to make the history server faster when loading event files. When rebuilding the application state from event logs, HybridStore first writes data to InMemoryStore and then uses a background thread to dump the data to LevelDB once writing to InMemoryStore is complete. HybridStore makes content serving faster at the cost of using more memory. It is only safe to enable when the cluster is not under heavy load.
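The write-then-dump flow described above can be sketched roughly as follows. This is a toy illustration in Python, not the actual HybridStore code: the class name `HybridStoreSketch`, its methods, and the use of plain dictionaries in place of InMemoryStore and LevelDB are all assumptions made for the example.

```python
import threading


class HybridStoreSketch:
    """Toy model only: dicts stand in for InMemoryStore and LevelDB."""

    def __init__(self):
        self._memory = {}             # stand-in for InMemoryStore
        self._disk = {}               # stand-in for LevelDB
        self._active = self._memory   # store that currently serves reads
        self._dump_thread = None

    def write(self, key, value):
        # While event logs are being replayed, every write goes to memory.
        self._memory[key] = value

    def read(self, key):
        return self._active[key]

    def finish_writing(self):
        # Once replay is complete, copy everything to the disk store in a
        # background thread, then switch reads over with one reference swap.
        def dump():
            self._disk.update(self._memory)
            self._active = self._disk

        self._dump_thread = threading.Thread(target=dump, daemon=True)
        self._dump_thread.start()

    def wait_for_dump(self):
        if self._dump_thread is not None:
            self._dump_thread.join()

    def serving_from_disk(self):
        return self._active is self._disk
```

Reads stay available during the dump because they keep hitting the in-memory copy until the swap happens; that is also why the approach trades memory for speed.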

### Why are the changes needed?

HybridStore can greatly reduce the event logs loading time, especially for large log files. In general, it has 4x - 6x UI loading speed improvement for large log files. The detailed result is shown in comments.

### Does this PR introduce any user-facing change?

This PR adds new configs `spark.history.store.hybridStore.enabled` and `spark.history.store.hybridStore.maxMemoryUsage`.

### How was this patch tested?

A test suite for HybridStore is added. I also manually tested it on 3.1.0 on macOS.

This is a follow-up for the work done by Hieu Huynh in 2019.

Closes #28412 from baohe-zhang/SPARK-31608.

Authored-by: Baohe Zhang <baohe.zhang@verizonmedia.com>

Signed-off-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

### What changes were proposed in this pull request?

This PR aims to drop Python 2.7, 3.4 and 3.5.

Roughly speaking, it removes all the widely known Python 2 compatibility workarounds, such as `sys.version` comparisons and `__future__` imports. It also removes Python 2-specific code such as `ArrayConstructor` in Spark.

### Why are the changes needed?

1. Drop support for EOL Python versions.

2. Reduce maintenance overhead and remove a bit of legacy code and hacks for Python 2.

3. Based on my testing and investigation, PyPy2 has a critical bug that causes a flaky test (SPARK-28358).

4. Users can use Python type hints with Pandas UDFs without thinking about the Python version.

5. Users can rely on a single, up-to-date cloudpickle (https://github.com/apache/spark/pull/28950). With Python 3.8+ it can also leverage C pickle.

### Does this PR introduce _any_ user-facing change?

Yes, users cannot use Python 2.7, 3.4 and 3.5 in the upcoming Spark version.

### How was this patch tested?

Manually tested and also tested in Jenkins.

Closes #28957 from HyukjinKwon/SPARK-32138.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This change replaces the word slave with alternatives matching the context.

### Why are the changes needed?

There is no need to call things slave; we might as well use better, clearer names.

### Does this PR introduce _any_ user-facing change?

Yes, the output JSON does change. To allow backwards compatibility, this is an additive change.

The shell scripts for starting & stopping workers are renamed, and for backwards compatibility old scripts are added to call through to the new ones while printing a deprecation message to stderr.

### How was this patch tested?

Existing tests.

Closes #28864 from holdenk/SPARK-32004-drop-references-to-slave.

Lead-authored-by: Holden Karau <hkarau@apple.com>

Co-authored-by: Holden Karau <holden@pigscanfly.ca>

Signed-off-by: Holden Karau <hkarau@apple.com>

### What changes were proposed in this pull request?

This PR changes `true` to `True` in the python code.

### Why are the changes needed?

The previous example is a syntax error.

### Does this PR introduce _any_ user-facing change?

Yes, but this is a doc-only typo fix.

### How was this patch tested?

Manually ran the example.

Closes #29073 from ChuliangXiao/patch-1.

Authored-by: Chuliang Xiao <ChuliangX@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This PR improves the test to make sure all the SQL keywords are documented correctly. It fixes several issues:

1. some keywords are not documented

2. some keywords are not ANSI SQL keywords but documented as reserved/non-reserved.

### Why are the changes needed?

To make sure the implementation matches the doc.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

new test

Closes #29055 from cloud-fan/keyword.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

In this PR, I propose that we support 'f'-suffixed float literals, e.g. `select 1.1f`.

### Why are the changes needed?

This is a very common feature across platforms; I checked pg, presto, hive, MySQL...

### Does this PR introduce _any_ user-facing change?

Yes.

`select 1.1f` results in the float value 1.1 instead of throwing an AnalysisException: `Can't extract value from 1: need struct type but got int;`

### How was this patch tested?

Added unit tests.

Closes #29022 from yaooqinn/SPARK-32207.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

I resolved some of the inconsistencies of AWS env variables. They're fixed in the documentation as well as in the examples. I grep-ed through the repo to try & find any more instances but nothing popped up.

### Why are the changes needed?

As previously mentioned, there is a JIRA request, SPARK-32035, which encapsulates all the issues. But, in summary, the naming of items was inconsistent.

### Does this PR introduce _any_ user-facing change?

Correct names:

AWS_ACCESS_KEY_ID

AWS_SECRET_ACCESS_KEY

AWS_SESSION_TOKEN

These are the same names that AWS uses in their libraries.

However, looking through the Spark documentation and comments, I see that these are not denoted correctly across the board:

docs/cloud-integration.md

106:1. `spark-submit` reads the `AWS_ACCESS_KEY`, `AWS_SECRET_KEY` <-- both different

107:and `AWS_SESSION_TOKEN` environment variables and sets the associated authentication options

docs/streaming-kinesis-integration.md

232:- Set up the environment variables `AWS_ACCESS_KEY_ID` and `AWS_SECRET_KEY` with your AWS credentials. <-- secret key different

external/kinesis-asl/src/main/python/examples/streaming/kinesis_wordcount_asl.py

34: $ export AWS_ACCESS_KEY_ID=<your-access-key>

35: $ export AWS_SECRET_KEY=<your-secret-key> <-- different

48: Environment Variables - AWS_ACCESS_KEY_ID and AWS_SECRET_KEY <-- secret key different

core/src/main/scala/org/apache/spark/deploy/SparkHadoopUtil.scala

438: val keyId = System.getenv("AWS_ACCESS_KEY_ID")

439: val accessKey = System.getenv("AWS_SECRET_ACCESS_KEY")

448: val sessionToken = System.getenv("AWS_SESSION_TOKEN")

external/kinesis-asl/src/main/scala/org/apache/spark/examples/streaming/KinesisWordCountASL.scala

53: * $ export AWS_ACCESS_KEY_ID=<your-access-key>

54: * $ export AWS_SECRET_KEY=<your-secret-key> <-- different

65: * Environment Variables - AWS_ACCESS_KEY_ID and AWS_SECRET_KEY <-- secret key different

external/kinesis-asl/src/main/java/org/apache/spark/examples/streaming/JavaKinesisWordCountASL.java

59: * $ export AWS_ACCESS_KEY_ID=[your-access-key]

60: * $ export AWS_SECRET_KEY=<your-secret-key> <-- different

71: * Environment Variables - AWS_ACCESS_KEY_ID and AWS_SECRET_KEY <-- secret key different

These were all fixed to match names listed under the "correct names" heading.
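As a small illustration of the corrected names, the sketch below reads the three variables the same way the `SparkHadoopUtil.scala` lines quoted above do, but in Python. The function name `read_aws_credentials` and the keys of the returned dictionary are hypothetical and exist only for this example.

```python
import os


def read_aws_credentials(env=None):
    """Return credentials from the environment, or None if incomplete.

    Only the three variable names come from the PR; everything else
    here is an illustrative assumption.
    """
    env = os.environ if env is None else env
    key_id = env.get("AWS_ACCESS_KEY_ID")
    secret = env.get("AWS_SECRET_ACCESS_KEY")
    token = env.get("AWS_SESSION_TOKEN")  # optional

    if key_id is None or secret is None:
        return None

    creds = {"access_key_id": key_id, "secret_access_key": secret}
    if token is not None:
        creds["session_token"] = token
    return creds
```

With this lookup, an environment that only sets the old `AWS_ACCESS_KEY` / `AWS_SECRET_KEY` names yields no credentials, which is the kind of mismatch the documentation fix is meant to prevent.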

### How was this patch tested?

I built the documentation using jekyll and verified that the changes were present & accurate.

Closes #29058 from Moovlin/SPARK-32035.

Authored-by: moovlin <richjoerger@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Update REGEXP usage and examples in sql-ref-syntax-qry-select-like.md.

### Why are the changes needed?

Make the usage of REGEXP known to more users.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

No tests

Closes #29009 from GuoPhilipse/update-migrate-guide.

Lead-authored-by: GuoPhilipse <46367746+GuoPhilipse@users.noreply.github.com>

Co-authored-by: GuoPhilipse <guofei_ok@126.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

1. Set the SQL config `spark.sql.legacy.allowCastNumericToTimestamp` to `true` by default

2. Remove explicit sets of `spark.sql.legacy.allowCastNumericToTimestamp` to `true` in the cast suites.

### Why are the changes needed?

To avoid breaking changes in minor versions (in the upcoming Spark 3.1.0) according to the semantic versioning guidelines (https://spark.apache.org/versioning-policy.html).

### Does this PR introduce _any_ user-facing change?

Yes

### How was this patch tested?

By `CastSuite`.

Closes #29012 from MaxGekk/allow-cast-numeric-to-timestamp.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

docs/sql-ref-syntax-qry-select-usedb.md -> docs/sql-ref-syntax-ddl-usedb.md

docs/sql-ref-syntax-aux-refresh-table.md -> docs/sql-ref-syntax-aux-cache-refresh-table.md

### Why are the changes needed?

usedb belongs to DDL. Its location should be consistent with the file locations of the other DDL commands.

The same reasoning applies to refresh table.

### Does this PR introduce _any_ user-facing change?

Before the change, when clicking USE DATABASE, the sidebar menu shows the select commands:

<img width="1200" alt="Screen Shot 2020-07-04 at 9 05 35 AM" src="https://user-images.githubusercontent.com/13592258/86516696-b45f8a80-bdd7-11ea-8dba-3a5cca22aad3.png">

After the change, when clicking USE DATABASE, the sidebar menu shows the DDL commands:

<img width="1120" alt="Screen Shot 2020-07-04 at 9 06 06 AM" src="https://user-images.githubusercontent.com/13592258/86516703-bf1a1f80-bdd7-11ea-8a90-ae7eaaafd44c.png">

Before the change, when clicking refresh table, the sidebar menu shows the Auxiliary statements:

<img width="1200" alt="Screen Shot 2020-07-04 at 9 30 40 AM" src="https://user-images.githubusercontent.com/13592258/86516877-3d2af600-bdd9-11ea-9568-0a6f156f57da.png">

After the change, when clicking refresh table, the sidebar menu shows the Cache statements:

<img width="1199" alt="Screen Shot 2020-07-04 at 9 35 21 AM" src="https://user-images.githubusercontent.com/13592258/86516937-b4f92080-bdd9-11ea-8ad1-5f5a7f58d76b.png">

### How was this patch tested?

Manually build and check

Closes #28995 from huaxingao/docs_fix.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Huaxin Gao <huaxing@us.ibm.com>

### What changes were proposed in this pull request?

Set the JSON option `inferTimestamp` to `false` if a user doesn't pass it as a datasource option.

### Why are the changes needed?

To prevent a perf regression while inferring schemas from JSON with potential timestamp fields.

### Does this PR introduce _any_ user-facing change?

Yes

### How was this patch tested?

- Modified existing tests in `JsonSuite` and `JsonInferSchemaSuite`.

- Regenerated results of `JsonBenchmark` in the environment:

| Item | Description |
| ---- | ---- |
| Region | us-west-2 (Oregon) |
| Instance | r3.xlarge |
| AMI | ubuntu/images/hvm-ssd/ubuntu-bionic-18.04-amd64-server-20190722.1 (ami-06f2f779464715dc5) |
| Java | OpenJDK 64-Bit Server VM 1.8.0_252 and OpenJDK 64-Bit Server VM 11.0.7+10 |

Closes #28966 from MaxGekk/json-inferTimestamps-disable-by-default.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This PR fixes a typo for a configuration property in the `spark-standalone.md`.

`spark.driver.resourcesfile` should be `spark.driver.resourcesFile`.

I looked for similar typos, but this is the only one.

### Why are the changes needed?

The property name is wrong.

### Does this PR introduce _any_ user-facing change?

Yes. The property name is corrected.

### How was this patch tested?

I confirmed the spelling of the property name against the property name defined in o.a.s.internal.config.package.scala.

Closes #28958 from sarutak/fix-resource-typo.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

The 3rd link in `IBM Cloud Object Storage connector for Apache Spark` is broken. The PR removes this link.

### Why are the changes needed?

broken link

### Does this PR introduce _any_ user-facing change?

yes, the broken link is removed from the doc.

### How was this patch tested?

doc generation passes successfully as before

Closes #28927 from guykhazma/spark32099.

Authored-by: Guy Khazma <guykhag@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This PR is to add a redirect to sql-ref.html.

### Why are the changes needed?

Before the Spark 3.0 release, we used sql-reference.md, which has since been replaced by sql-ref.md. A number of Google searches I’ve done today have turned up https://spark.apache.org/docs/latest/sql-reference.html, which does not exist any more. Thus, we should add a redirect to sql-ref.html.

### Does this PR introduce _any_ user-facing change?

https://spark.apache.org/docs/latest/sql-reference.html will be redirected to https://spark.apache.org/docs/latest/sql-ref.html

### How was this patch tested?

Built it in my local environment, and it works well. The sql-reference.html file was generated. Its contents look like:

```
<!DOCTYPE html>
<html lang="en-US">
<meta charset="utf-8">
<title>Redirecting…</title>
<link rel="canonical" href="http://localhost:4000/sql-ref.html">
<script>location="http://localhost:4000/sql-ref.html"</script>
<meta http-equiv="refresh" content="0; url=http://localhost:4000/sql-ref.html">
<meta name="robots" content="noindex">
<h1>Redirecting…</h1>
<a href="http://localhost:4000/sql-ref.html">Click here if you are not redirected.</a>
</html>
```

Closes #28914 from gatorsmile/addRedirectSQLRef.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Spark 3.0 accidentally dropped R < 3.5. It is built with R 3.6.3, which does not support R < 3.5:

```
Error in readRDS(pfile) : cannot read workspace version 3 written by R 3.6.3; need R 3.5.0 or newer version.
```

In fact, with SPARK-31918, we will have to drop R < 3.5 entirely to support R 4.0.0. This is unavoidable for releasing on CRAN, because CRAN requires the tests to pass with the latest R.

### Why are the changes needed?

To show the supported versions correctly, and support R 4.0.0 to unblock the releases.

### Does this PR introduce _any_ user-facing change?

In fact, no because Spark 3.0.0 already does not work with R < 3.5.

Compared to Spark 2.4, yes. R < 3.5 would not work.

### How was this patch tested?

Jenkins should test it out.

Closes #28908 from HyukjinKwon/SPARK-32073.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Spark uses an old, deprecated API, `KafkaConsumer.poll(long)`, which never returns and stays in a live lock if metadata is not updated (for instance, when a broker disappears at consumer creation). Please see the [Kafka documentation](https://kafka.apache.org/25/javadoc/org/apache/kafka/clients/consumer/KafkaConsumer.html#poll-long-) and this [standalone test application](https://github.com/gaborgsomogyi/kafka-get-assignment) for further details.

In this PR I've applied the new `KafkaConsumer.poll(Duration)` API on the executor side. Please note that the driver side still uses the old API, which will be fixed in SPARK-32032.
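The gap between an unbounded wait and a time-bounded poll can be illustrated with a generic sketch. This is not Kafka client code: `poll_with_timeout` and its `fetch` callback are hypothetical names used only to show why a deadline prevents the caller from hanging when nothing ever arrives.

```python
import time


def poll_with_timeout(fetch, timeout_s, interval_s=0.01):
    """Call fetch() until it returns a non-None value or the deadline passes.

    Returns the fetched value, or None if nothing arrived in time. An
    unbounded variant of this loop would spin forever when fetch() never
    succeeds, which is the failure mode described above.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        result = fetch()
        if result is not None:
            return result
        if time.monotonic() >= deadline:
            return None  # give control back to the caller
        time.sleep(interval_s)
```

With a deadline in place, the caller regains control and can decide whether to retry, fail the task, or report an error.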

### Why are the changes needed?

Infinite wait in `KafkaConsumer.poll(long)`.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Existing unit tests.

Closes #28871 from gaborgsomogyi/SPARK-32033.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Emphasize that the Streaming tab is for the DStream API.

### Why are the changes needed?

Some users reported that the difference between the Streaming tab and the Structured Streaming tab is a little confusing.

### Does this PR introduce _any_ user-facing change?

Document change.

### How was this patch tested?

N/A

Closes #28854 from xuanyuanking/minor-doc.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Fix executor container name typo. `executor` should be `spark-kubernetes-executor`.

### Why are the changes needed?

The Executor pod container name the users actually get from their Kubernetes clusters is different from that described in the documentation.

For example, below is what a user gets from an executor pod.

```
Containers:
spark-kubernetes-executor:
Container ID: docker://aaaabbbbccccddddeeeeffff
Image: <imagename>
Image ID: docker-pullable://0000.dkr.ecr.us-east-0.amazonaws.com/spark
Port: 7079/TCP
Host Port: 0/TCP
Args:
executor
State: Running
Started: Thu, 28 May 2020 05:54:04 -0700
Ready: True
Restart Count: 0
Limits:
memory: 16Gi
```

### Does this PR introduce _any_ user-facing change?

Document change.

### How was this patch tested?

N/A

Closes #28862 from yuj/patch-1.

Authored-by: James Yu <yuj@users.noreply.github.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

If a Spark distribution has a built-in Hadoop runtime, Spark will not populate the Hadoop classpath from `yarn.application.classpath` and `mapreduce.application.classpath` when a job is submitted to Yarn. Users can override this behavior by setting `spark.yarn.populateHadoopClasspath` to `true`.
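The decision described above can be sketched as a small function. This is an illustration of the rule as stated in this description, not Spark's implementation: the helper name `should_populate_hadoop_classpath` and the `is_with_hadoop_build` flag are assumptions made for the example.

```python
def should_populate_hadoop_classpath(conf, is_with_hadoop_build):
    """Decide whether to add the Yarn/MapReduce classpath entries.

    conf is a plain dict of Spark settings; an explicit value for
    spark.yarn.populateHadoopClasspath always wins. Otherwise the
    default depends on whether the build bundles Hadoop.
    """
    explicit = conf.get("spark.yarn.populateHadoopClasspath")
    if explicit is not None:
        return explicit.strip().lower() == "true"
    # with-hadoop build: skip by default to avoid jar conflicts
    # no-hadoop build: populate, since Hadoop classes must come from Yarn
    return not is_with_hadoop_build
```

For example, a with-hadoop build with no explicit setting returns `False`, while the same build with the setting forced to `"true"` returns `True`.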

### Why are the changes needed?

Without this, Spark will populate the Hadoop classpath from `yarn.application.classpath` and `mapreduce.application.classpath` even if the Spark distribution has built-in Hadoop. This results in jar conflicts and many unexpected behaviors at runtime.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Manually tested with two builds: with-hadoop and no-hadoop.

Closes #28788 from dbtsai/yarn-classpath.

Authored-by: DB Tsai <d_tsai@apple.com>

Signed-off-by: DB Tsai <d_tsai@apple.com>

## What changes were proposed in this pull request?

Casting from numeric to timestamp now fails by default.

## Why are the changes needed?

Casting from numeric to timestamp is non-standard; moreover, it may produce different results in Spark and other systems, for example Hive.

## Does this PR introduce any user-facing change?

Yes. Users cannot cast numeric to timestamp directly; they have to use one of the following functions to achieve the same effect: TIMESTAMP_SECONDS/TIMESTAMP_MILLIS/TIMESTAMP_MICROS.

## How was this patch tested?

unit test added

Closes #28593 from GuoPhilipse/31710-fix-compatibility.

Lead-authored-by: GuoPhilipse <guofei_ok@126.com>

Co-authored-by: GuoPhilipse <46367746+GuoPhilipse@users.noreply.github.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

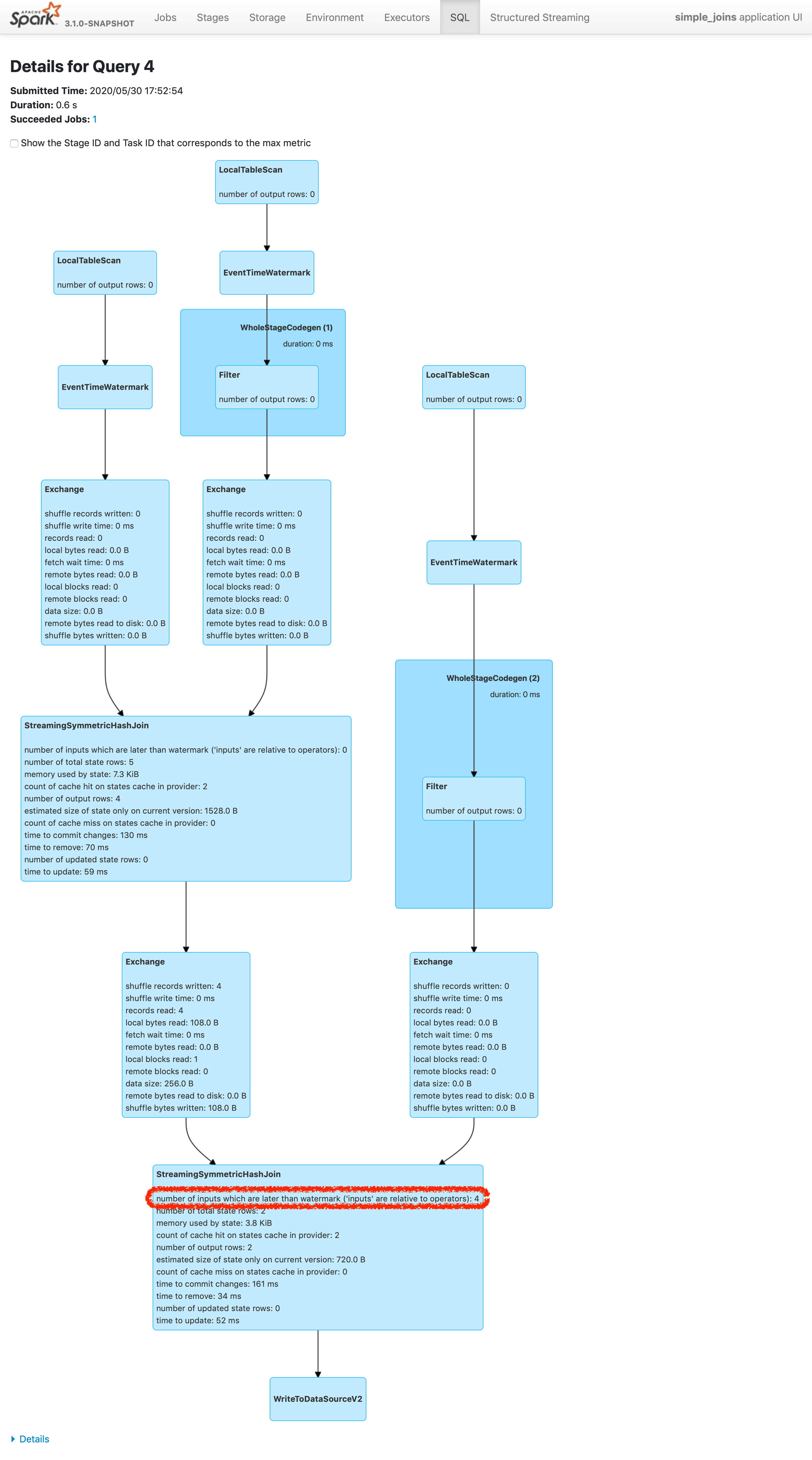

### What changes were proposed in this pull request?

This PR renames the variable from "numLateInputs" to "numRowsDroppedByWatermark" so that it becomes self-explanatory.

### Why are the changes needed?

This originated from post-review feedback; see https://github.com/apache/spark/pull/28607#discussion_r439853232

### Does this PR introduce _any_ user-facing change?

No, as SPARK-24634 is not introduced in any release yet.

### How was this patch tested?

Existing UTs.

Closes #28828 from HeartSaVioR/SPARK-24634-v3-followup.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR intends to move keywords `ANTI`, `SEMI`, and `MINUS` from reserved to non-reserved.

### Why are the changes needed?

To comply with the ANSI/SQL standard.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added tests.

Closes #28807 from maropu/SPARK-26905-2.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This PR proposes to use "mdc.XXX" as the consistent key for both `sc.setLocalProperty` and `log4j.properties` when setting up configurations for MDC.

### Why are the changes needed?

It's weird that we use "mdc.XXX" as key to set MDC value via `sc.setLocalProperty` while we use "XXX" as key to set MDC pattern in log4j.properties. It could also bring extra burden to the user.

### Does this PR introduce _any_ user-facing change?

No, as MDC feature is added in version 3.1, which hasn't been released.

### How was this patch tested?

Tested manually.

Closes #28801 from Ngone51/consistent-mdc.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Please refer to https://issues.apache.org/jira/browse/SPARK-24634 for the rationale behind this issue.

This patch adds a new metric to count the number of inputs that arrived later than the watermark plus allowed delay. To keep the change simple, this patch doesn't count the exact number of input rows that are later than the watermark plus allowed delay. Instead, it counts the inputs that are dropped by the operator's logic. The difference between the two shows up in streaming aggregation: to optimize the calculation, streaming aggregation "pre-aggregates" the input rows and only later checks the lateness against the "pre-aggregated" inputs, hence the number might be reduced.
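A toy model makes the difference between the two counts concrete. The sketch below is not Spark code: it assumes rows are `(key, event_time)` pairs, treats "watermark plus allowed delay" as a single numeric threshold, and models pre-aggregation as grouping identical pairs. The function name `count_late` is hypothetical.

```python
from collections import defaultdict


def count_late(rows, threshold):
    """Return (late input rows, rows dropped after pre-aggregation).

    rows: list of (key, event_time) pairs.
    threshold: stands in for "watermark plus allowed delay".
    """
    # Exact count: every raw input row older than the threshold.
    late_input_rows = sum(1 for _, ts in rows if ts < threshold)

    # Pre-aggregation collapses rows with the same grouping key first...
    pre_aggregated = defaultdict(int)
    for key, ts in rows:
        pre_aggregated[(key, ts)] += 1

    # ...and lateness is only checked on the collapsed rows, so several
    # late inputs can be reported as a single dropped row.
    dropped = sum(1 for (_, ts) in pre_aggregated if ts < threshold)
    return late_input_rows, dropped
```

In this model, three late inputs that share the same key and event time count as three late input rows but only one dropped row, which is the reduction mentioned above.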

The new metric will be provided via two places:

1. On Spark UI: check the metrics in stateful operator nodes in query execution details page in SQL tab

2. On Streaming Query Listener: check "numLateInputs" in "stateOperators" in QueryProgressEvent.

### Why are the changes needed?

Dropping late inputs means that end users might not get the expected outputs. End users may be aware of this and tolerate the result (that's what allowed lateness is for), but they should be able to observe whether the current value of allowed lateness drops inputs or not, so that they can adjust the value.

Also, if they happen to have multiple stateful operators in a single query and Spark drops late inputs "between" these operators, it becomes a "correctness" issue. Spark should disallow such a possibility, but given that we already provide the flexibility, we should at least provide a way to observe the correctness issue so that users can decide whether to correct their query.

### Does this PR introduce _any_ user-facing change?

Yes. End users will be able to retrieve the information of late inputs via two ways:

1. SQL tab in Spark UI

2. Streaming Query Listener

### How was this patch tested?

New UTs added & existing UTs are modified to reflect the change.

And ran manual test reproducing SPARK-28094.

I've picked the specific case on "B outer C outer D" which is enough to represent the "intermediate late row" issue due to global watermark.

https://gist.github.com/jammann/b58bfbe0f4374b89ecea63c1e32c8f17

Spark logs warning message on the query which means SPARK-28074 is working correctly,

```

20/05/30 17:52:47 WARN UnsupportedOperationChecker: Detected pattern of possible 'correctness' issue due to global watermark. The query contains stateful operation which can emit rows older than the current watermark plus allowed late record delay, which are "late rows" in downstream stateful operations and these rows can be discarded. Please refer the programming guide doc for more details.;

Join LeftOuter, ((D_FK#28 = D_ID#87) AND (B_LAST_MOD#26-T30000ms = D_LAST_MOD#88-T30000ms))

:- Join LeftOuter, ((C_FK#27 = C_ID#58) AND (B_LAST_MOD#26-T30000ms = C_LAST_MOD#59-T30000ms))

: :- EventTimeWatermark B_LAST_MOD#26: timestamp, 30 seconds

: : +- Project [v#23.B_ID AS B_ID#25, v#23.B_LAST_MOD AS B_LAST_MOD#26, v#23.C_FK AS C_FK#27, v#23.D_FK AS D_FK#28]

: : +- Project [from_json(StructField(B_ID,StringType,false), StructField(B_LAST_MOD,TimestampType,false), StructField(C_FK,StringType,true), StructField(D_FK,StringType,true), value#21, Some(UTC)) AS v#23]

: : +- Project [cast(value#8 as string) AS value#21]

: : +- StreamingRelationV2 org.apache.spark.sql.kafka010.KafkaSourceProvider3a7fd18c, kafka, org.apache.spark.sql.kafka010.KafkaSourceProvider$KafkaTable396d2958, org.apache.spark.sql.util.CaseInsensitiveStringMapa51ee61a, [key#7, value#8, topic#9, partition#10, offset#11L, timestamp#12, timestampType#13], StreamingRelation DataSource(org.apache.spark.sql.SparkSessiond221af8,kafka,List(),None,List(),None,Map(inferSchema -> true, startingOffsets -> earliest, subscribe -> B, kafka.bootstrap.servers -> localhost:9092),None), kafka, [key#0, value#1, topic#2, partition#3, offset#4L, timestamp#5, timestampType#6]

: +- EventTimeWatermark C_LAST_MOD#59: timestamp, 30 seconds

: +- Project [v#56.C_ID AS C_ID#58, v#56.C_LAST_MOD AS C_LAST_MOD#59]

: +- Project [from_json(StructField(C_ID,StringType,false), StructField(C_LAST_MOD,TimestampType,false), value#54, Some(UTC)) AS v#56]

: +- Project [cast(value#41 as string) AS value#54]

: +- StreamingRelationV2 org.apache.spark.sql.kafka010.KafkaSourceProvider3f507373, kafka, org.apache.spark.sql.kafka010.KafkaSourceProvider$KafkaTable7b6736a4, org.apache.spark.sql.util.CaseInsensitiveStringMapa51ee61b, [key#40, value#41, topic#42, partition#43, offset#44L, timestamp#45, timestampType#46], StreamingRelation DataSource(org.apache.spark.sql.SparkSessiond221af8,kafka,List(),None,List(),None,Map(inferSchema -> true, startingOffsets -> earliest, subscribe -> C, kafka.bootstrap.servers -> localhost:9092),None), kafka, [key#33, value#34, topic#35, partition#36, offset#37L, timestamp#38, timestampType#39]

+- EventTimeWatermark D_LAST_MOD#88: timestamp, 30 seconds

+- Project [v#85.D_ID AS D_ID#87, v#85.D_LAST_MOD AS D_LAST_MOD#88]

+- Project [from_json(StructField(D_ID,StringType,false), StructField(D_LAST_MOD,TimestampType,false), value#83, Some(UTC)) AS v#85]

+- Project [cast(value#70 as string) AS value#83]

+- StreamingRelationV2 org.apache.spark.sql.kafka010.KafkaSourceProvider2b90e779, kafka, org.apache.spark.sql.kafka010.KafkaSourceProvider$KafkaTable36f8cd29, org.apache.spark.sql.util.CaseInsensitiveStringMapa51ee620, [key#69, value#70, topic#71, partition#72, offset#73L, timestamp#74, timestampType#75], StreamingRelation DataSource(org.apache.spark.sql.SparkSessiond221af8,kafka,List(),None,List(),None,Map(inferSchema -> true, startingOffsets -> earliest, subscribe -> D, kafka.bootstrap.servers -> localhost:9092),None), kafka, [key#62, value#63, topic#64, partition#65, offset#66L, timestamp#67, timestampType#68]

```

and we can find the late inputs from the batch 4 as follows:

which shows that intermediate inputs are being lost, resulting in a correctness issue.

Closes #28607 from HeartSaVioR/SPARK-24634-v3.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Currently, `date_format` and the functions `from_unixtime`, `unix_timestamp`, `to_unix_timestamp`, `to_timestamp`, and `to_date` have different exception handling behavior when formatting datetime values.

In this PR, we apply the exception handling behavior of `date_format` to `from_unixtime`, `unix_timestamp`,`to_unix_timestamp`, `to_timestamp` and `to_date`.

In the phase of creating the datetime formatter or formatting, exceptions will be raised.

e.g.

```java
spark-sql> select date_format(make_timestamp(1, 1 ,1,1,1,1), 'yyyyyyyyyyy-MM-aaa');
20/05/28 15:25:38 ERROR SparkSQLDriver: Failed in [select date_format(make_timestamp(1, 1 ,1,1,1,1), 'yyyyyyyyyyy-MM-aaa')]
org.apache.spark.SparkUpgradeException: You may get a different result due to the upgrading of Spark 3.0: Fail to recognize 'yyyyyyyyyyy-MM-aaa' pattern in the DateTimeFormatter. 1) You can set spark.sql.legacy.timeParserPolicy to LEGACY to restore the behavior before Spark 3.0. 2) You can form a valid datetime pattern with the guide from https://spark.apache.org/docs/latest/sql-ref-datetime-pattern.html
```

```java
spark-sql> select date_format(make_timestamp(1, 1 ,1,1,1,1), 'yyyyyyyyyyy-MM-AAA');
20/05/28 15:26:10 ERROR SparkSQLDriver: Failed in [select date_format(make_timestamp(1, 1 ,1,1,1,1), 'yyyyyyyyyyy-MM-AAA')]
java.lang.IllegalArgumentException: Illegal pattern character: A
```

```java
spark-sql> select date_format(make_timestamp(1,1,1,1,1,1), 'yyyyyyyyyyy-MM-dd');
20/05/28 15:23:23 ERROR SparkSQLDriver: Failed in [select date_format(make_timestamp(1,1,1,1,1,1), 'yyyyyyyyyyy-MM-dd')]
java.lang.ArrayIndexOutOfBoundsException: 11
at java.time.format.DateTimeFormatterBuilder$NumberPrinterParser.format(DateTimeFormatterBuilder.java:2568)
```

In the phase of parsing, `DateTimeParseException | DateTimeException | ParseException` will be suppressed, but `SparkUpgradeException` will still be raised.

e.g.

```java
spark-sql> set spark.sql.legacy.timeParserPolicy=exception;
spark.sql.legacy.timeParserPolicy exception
spark-sql> select to_timestamp("2020-01-27T20:06:11.847-0800", "yyyy-MM-dd'T'HH:mm:ss.SSSz");
20/05/28 15:31:15 ERROR SparkSQLDriver: Failed in [select to_timestamp("2020-01-27T20:06:11.847-0800", "yyyy-MM-dd'T'HH:mm:ss.SSSz")]
org.apache.spark.SparkUpgradeException: You may get a different result due to the upgrading of Spark 3.0: Fail to parse '2020-01-27T20:06:11.847-0800' in the new parser. You can set spark.sql.legacy.timeParserPolicy to LEGACY to restore the behavior before Spark 3.0, or set to CORRECTED and treat it as an invalid datetime string.
```

```java
spark-sql> set spark.sql.legacy.timeParserPolicy=corrected;
spark.sql.legacy.timeParserPolicy corrected
spark-sql> select to_timestamp("2020-01-27T20:06:11.847-0800", "yyyy-MM-dd'T'HH:mm:ss.SSSz");
NULL
spark-sql> set spark.sql.legacy.timeParserPolicy=legacy;
spark.sql.legacy.timeParserPolicy legacy
spark-sql> select to_timestamp("2020-01-27T20:06:11.847-0800", "yyyy-MM-dd'T'HH:mm:ss.SSSz");
2020-01-28 12:06:11.847
```
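The three parse-phase outcomes shown above (an upgrade exception, `NULL`, or a legacy result) can be modeled with a small sketch. It is a simplified illustration, not Spark's code: `UpgradeError`, `new_parser`, `legacy_parser`, and `parse_with_policy` are hypothetical names, the two toy parsers only mimic a strict and a lenient parser, and the rule that the upgrade error is raised only when the legacy parser would have succeeded is an assumption about how the `exception` policy decides.

```python
from datetime import datetime


class UpgradeError(Exception):
    """Hypothetical stand-in for SparkUpgradeException."""


def new_parser(text):
    # Strict toy parser: the whole string must match the pattern.
    return datetime.strptime(text, "%Y-%m-%d")


def legacy_parser(text):
    # Lenient toy parser: ignores anything after the first 10 characters.
    return datetime.strptime(text[:10], "%Y-%m-%d")


def parse_with_policy(text, policy):
    policy = policy.upper()
    if policy == "LEGACY":
        try:
            return legacy_parser(text)
        except ValueError:
            return None  # ordinary parse failures are suppressed
    try:
        return new_parser(text)
    except ValueError:
        if policy == "EXCEPTION":
            try:
                legacy_parser(text)
            except ValueError:
                return None  # both parsers reject the input
            # Assumed rule: the old parser accepted it, so warn loudly.
            raise UpgradeError(f"Fail to parse {text!r} in the new parser")
        return None  # CORRECTED: treat as an invalid datetime string
```

Under these assumptions, an input that only the lenient parser accepts raises under `exception`, yields `None` under `corrected`, and yields a value under `legacy`, matching the shape of the session above.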

### Why are the changes needed?

Consistency

### Does this PR introduce _any_ user-facing change?

Yes, invalid datetime patterns will now cause `from_unixtime`, `unix_timestamp`, `to_unix_timestamp`, `to_timestamp` and `to_date` to fail instead of returning `NULL`.

### How was this patch tested?

Added more tests.

Closes #28650 from yaooqinn/SPARK-31830.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

These changes implement an application wait mechanism which will allow spark-submit to wait until the application finishes in Standalone Spark Mode. This will delay the exit of spark-submit JVM until the job is completed. This implementation will keep monitoring the application until it is either finished, failed or killed. This will be controlled via a flag (spark.submit.waitForCompletion) which will be set to false by default.
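The monitoring loop described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the code in this PR: `wait_for_completion`, the `get_state` callback, and the state strings are hypothetical, with the terminal states taken from the "finished, failed or killed" wording.

```python
import time

# Terminal states, following the description: finished, failed or killed.
TERMINAL_STATES = {"FINISHED", "FAILED", "KILLED"}


def wait_for_completion(get_state, interval_s=1.0):
    """Poll get_state() until the application reaches a terminal state.

    get_state is any zero-argument callable returning the current state
    as a string; the final state is returned to the caller.
    """
    while True:
        state = get_state()
        if state in TERMINAL_STATES:
            return state
        time.sleep(interval_s)
```

A submitter that calls this before exiting stays alive for as long as the application runs, which is what lets an external client infer completion from the process lifetime.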

### Why are the changes needed?

Currently, the Livy API for Standalone Cluster Mode doesn't know when the job has finished. If this flag is enabled, the Livy API (/batches/{batchId}/state) can use it to find out when the application has finished or failed. This flag is similar to spark.yarn.submit.waitAppCompletion.

### Does this PR introduce any user-facing change?

Yes, this PR introduces a new flag but it will be disabled by default.

### How was this patch tested?

Couldn't implement unit tests since the pollAndReportStatus method has System.exit() calls. Please provide any suggestions.

Tested spark-submit locally for the following scenarios:

1. With the flag enabled, spark-submit exits once the job is finished.

2. With the flag enabled and job failed, spark-submit exits when the job fails.

3. With the flag disabled, spark-submit exits after submitting the job (existing behavior).

4. Existing behavior is unchanged when the flag is not added explicitly.

Closes #28258 from akshatb1/master.

Lead-authored-by: Akshat Bordia <akshat.bordia31@gmail.com>

Co-authored-by: Akshat Bordia <akshat.bordia@citrix.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

SPARK-28199 (#24996) exposed the trigger-related public API only through static methods of the `Trigger` class. This is a backward-incompatible change, so some users may hit compilation errors after upgrading to Spark 3.0.0.

While we plan to mention the change in the release notes, it's good to mention it in the migration guide as well, since the purpose of that doc is to collect the major changes and incompatibilities between versions, and end users will refer to it.

### Why are the changes needed?

SPARK-28199 is technically a backward-incompatible change, and we should guide users through it.

### Does this PR introduce _any_ user-facing change?

Doc change.

### How was this patch tested?

N/A, as it's just a doc change.

Closes #28763 from HeartSaVioR/SPARK-28199-FOLLOWUP-doc.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The `fileNameOnly` parameter description was split into 2 pieces in [this](dbb8143501) commit. This PR reunites them.

### Why are the changes needed?

The parameter description is split in the doc.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

```

cd docs/

SKIP_API=1 jekyll build

```

Manual webpage check.

Closes #28739 from gaborgsomogyi/datasettxtfix.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

After all these attempts (https://github.com/apache/spark/pull/28692, https://github.com/apache/spark/pull/28719 and https://github.com/apache/spark/pull/28727), each of which has limitations as mentioned in its discussion, maybe the only way is to forbid these week-based fields altogether.

### Why are the changes needed?

These week-based fields need a Locale to express their semantics, because the first day of the week varies from country to country.

From the Javadoc of WeekFields:

```java
/**
 * Gets the first day-of-week.
 * <p>
 * The first day-of-week varies by culture.
 * For example, the US uses Sunday, while France and the ISO-8601 standard use Monday.
 * This method returns the first day using the standard {@code DayOfWeek} enum.
 *
 * @return the first day-of-week, not null
 */
public DayOfWeek getFirstDayOfWeek() {
    return firstDayOfWeek;
}
```

But for SimpleDateFormat, the day-of-week is not localized:

```

u Day number of week (1 = Monday, ..., 7 = Sunday) Number 1

```

Currently, the default locale we use is the US, so the result moves backward by a day, a week, or a year.

For example, the date `2019-12-29` (Sunday) belongs to week-based-year 2020 in a Sunday-start system (e.g. en-US) but to 2019 in a Monday-start system (e.g. en-GB). The week-of-week-based-year (w) is affected too:

```sql

spark-sql> SELECT to_csv(named_struct('time', to_timestamp('2019-12-29', 'yyyy-MM-dd')), map('timestampFormat', 'YYYY', 'locale', 'en-US'));

2020

spark-sql> SELECT to_csv(named_struct('time', to_timestamp('2019-12-29', 'yyyy-MM-dd')), map('timestampFormat', 'YYYY', 'locale', 'en-GB'));

2019

spark-sql> SELECT to_csv(named_struct('time', to_timestamp('2019-12-29', 'yyyy-MM-dd')), map('timestampFormat', 'YYYY-ww-uu', 'locale', 'en-US'));

2020-01-01

spark-sql> SELECT to_csv(named_struct('time', to_timestamp('2019-12-29', 'yyyy-MM-dd')), map('timestampFormat', 'YYYY-ww-uu', 'locale', 'en-GB'));

2019-52-07

spark-sql> SELECT to_csv(named_struct('time', to_timestamp('2020-01-05', 'yyyy-MM-dd')), map('timestampFormat', 'YYYY-ww-uu', 'locale', 'en-US'));

2020-02-01

spark-sql> SELECT to_csv(named_struct('time', to_timestamp('2020-01-05', 'yyyy-MM-dd')), map('timestampFormat', 'YYYY-ww-uu', 'locale', 'en-GB'));

2020-01-07

```
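The same locale dependence can be reproduced outside Spark. The following is a minimal sketch, assuming `WeekFields.SUNDAY_START` and `WeekFields.ISO` as stand-ins for the en-US and en-GB week definitions; the class `WeekYearDemo` and its `describe` helper are hypothetical names for illustration and are not part of this PR.

```java
import java.time.LocalDate;
import java.time.temporal.WeekFields;
import java.util.Locale;

public class WeekYearDemo {
    // Renders week-based-year, week-of-week-based-year and localized
    // day-of-week, mirroring the 'YYYY-ww-uu' pattern used above.
    static String describe(LocalDate date, WeekFields wf) {
        int year = date.get(wf.weekBasedYear());
        int week = date.get(wf.weekOfWeekBasedYear());
        int day = date.get(wf.dayOfWeek());
        return String.format(Locale.ROOT, "%04d-%02d-%02d", year, week, day);
    }

    public static void main(String[] args) {
        LocalDate d = LocalDate.of(2019, 12, 29); // a Sunday
        // Sunday-start weeks, minimum 1 day in the first week
        System.out.println(describe(d, WeekFields.SUNDAY_START)); // 2020-01-01
        // Monday-start weeks, minimum 4 days in the first week
        System.out.println(describe(d, WeekFields.ISO));          // 2019-52-07
    }
}
```

The only input that differs between the two calls is the week definition, which is why the same calendar date lands in different week-based years.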

For other countries, please refer to [First Day of the Week in Different Countries](http://chartsbin.com/view/41671)

### Does this PR introduce _any_ user-facing change?

With this change, users can no longer use the pattern letters 'Y', 'w', 'u' and 'W', but can use 'e' in place of 'u'. At least this no longer results in a silent data change.

### How was this patch tested?

add unit tests

Closes #28728 from yaooqinn/SPARK-31879-NEW2.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The PySpark Migration Guide needs to mention a breaking change of the PySpark ML API.

### Why are the changes needed?

In SPARK-29093, all setters have been removed from `Params` mixins in `pyspark.ml.param.shared`. Those setters had been part of the public PySpark ML API, hence this is a breaking change.

### Does this PR introduce _any_ user-facing change?

Only documentation.

### How was this patch tested?

Visually.

Closes #28663 from EnricoMi/branch-pyspark-migration-guide-setters.

Authored-by: Enrico Minack <github@enrico.minack.dev>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This PR disables week-based date fields for parsing.

Closes #28674

### Why are the changes needed?

1. It's an un-fixable behavior change to fill the gap between SimpleDateFormat and DateTimeFormatter and to keep backward compatibility across different JDKs. A lot of effort has been made to prove it at https://github.com/apache/spark/pull/28674

2. The existing behavior itself in 2.4 is confusing, e.g.

```sql

spark-sql> select to_timestamp('1', 'w');

1969-12-28 00:00:00

spark-sql> select to_timestamp('1', 'u');

1970-01-05 00:00:00

```

Here 'u' goes neither to the Monday of the first week in week-based form nor to the first day of the year in non-week-based form; instead it seems to go to the Monday of the second week in week-based form.

And, e.g.

```sql

spark-sql> select to_timestamp('2020 2020', 'YYYY yyyy');

2020-01-01 00:00:00

spark-sql> select to_timestamp('2020 2020', 'yyyy YYYY');

2019-12-29 00:00:00

spark-sql> select to_timestamp('2020 2020 1', 'YYYY yyyy w');

NULL

spark-sql> select to_timestamp('2020 2020 1', 'yyyy YYYY w');

2019-12-29 00:00:00

```

I think we don't need to introduce all the weird behavior from Java

3. The current test coverage for week-based date fields is almost 0%, which indicates that we've never imagined using it.

4. Avoiding JDK bugs

https://issues.apache.org/jira/browse/SPARK-31880

### Does this PR introduce _any_ user-facing change?

Yes, the 'Y/W/w/u/F/E' patterns cannot be used in datetime parsing functions.

### How was this patch tested?

more tests added

Closes #28706 from yaooqinn/SPARK-31892.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The SQL REST API exposes query execution details and metrics as a public API. Its documentation will be useful for end users.

### Why are the changes needed?

SQL REST API documentation does not exist under the Spark REST API documentation.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Manually build and check

Closes #28354 from erenavsarogullari/SPARK-31566.

Lead-authored-by: Eren Avsarogullari <eren.avsarogullari@gmail.com>

Co-authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Co-authored-by: Eren Avsarogullari <erenavsarogullari@gmail.com>

Signed-off-by: Gengliang Wang <gengliang.wang@databricks.com>

### What changes were proposed in this pull request?

We should use `dataType.catalogString` to unify the data type mismatch message.

Before:

```sql

spark-sql> create table SPARK_31834(a int) using parquet;

spark-sql> insert into SPARK_31834 select '1';

Error in query: Cannot write incompatible data to table '`default`.`spark_31834`':

- Cannot safely cast 'a': StringType to IntegerType;

```

After:

```sql

spark-sql> create table SPARK_31834(a int) using parquet;

spark-sql> insert into SPARK_31834 select '1';

Error in query: Cannot write incompatible data to table '`default`.`spark_31834`':

- Cannot safely cast 'a': string to int;

```

### How was this patch tested?

UT.

Closes #28654 from lipzhu/SPARK-31834.

Authored-by: lipzhu <lipzhu@ebay.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

As mentioned in https://github.com/apache/spark/pull/28673 and suggested by cloud-fan at https://github.com/apache/spark/pull/28673#discussion_r432817075:

In this PR, we disable datetime patterns of the form `y..y` and `Y..Y` whose lengths are greater than 10, to avoid the JDK bug described below.

The new datetime formatter introduces a silent data change like:

```sql

spark-sql> select from_unixtime(1, 'yyyyyyyyyyy-MM-dd');

NULL

spark-sql> set spark.sql.legacy.timeParserPolicy=legacy;

spark.sql.legacy.timeParserPolicy legacy

spark-sql> select from_unixtime(1, 'yyyyyyyyyyy-MM-dd');

00000001970-01-01

spark-sql>

```

For patterns that support `SignStyle.EXCEEDS_PAD`, e.g. `y..y` (len >= 4), the `NumberPrinterParser` formats the value as follows:

```java
switch (signStyle) {
    case EXCEEDS_PAD:
        if (minWidth < 19 && value >= EXCEED_POINTS[minWidth]) {
            buf.append(decimalStyle.getPositiveSign());
        }
        break;
    // ....
```

Here `minWidth` == `len(y..y)`, and `EXCEED_POINTS` is:

```java
/**
 * Array of 10 to the power of n.
 */
static final long[] EXCEED_POINTS = new long[] {
    0L,
    10L,
    100L,
    1000L,
    10000L,
    100000L,
    1000000L,
    10000000L,
    100000000L,
    1000000000L,
    10000000000L,
};
```

So when `len(y..y)` is greater than 10, an `ArrayIndexOutOfBoundsException` will be raised.

On the caller side, for `from_unixtime`, the exception is suppressed and a silent data change occurs. For `date_format`, the `ArrayIndexOutOfBoundsException` propagates.
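The failure mode can be sketched in isolation. This is a simplified model of the lookup, not the JDK source: the class `ExceedPointsDemo` and the methods `needsPositiveSign` and `lookupFails` are hypothetical names, and only the 11-entry table is copied from the excerpt quoted in this description.

```java
public class ExceedPointsDemo {
    // Copy of the 11-entry table quoted above (valid indices are 0..10).
    static final long[] EXCEED_POINTS = new long[] {
        0L, 10L, 100L, 1000L, 10000L, 100000L, 1000000L, 10000000L,
        100000000L, 1000000000L, 10000000000L,
    };

    // Simplified model of the EXCEEDS_PAD branch: true if a '+' would be printed.
    static boolean needsPositiveSign(int minWidth, long value) {
        return minWidth < 19 && value >= EXCEED_POINTS[minWidth];
    }

    // True if the table lookup itself throws for the given pattern length.
    static boolean lookupFails(int minWidth, long value) {
        try {
            needsPositiveSign(minWidth, value);
            return false;
        } catch (ArrayIndexOutOfBoundsException e) {
            return true;
        }
    }

    public static void main(String[] args) {
        System.out.println(lookupFails(10, 1970L)); // false: index 10 is the last entry
        System.out.println(lookupFails(11, 1970L)); // true: index 11 is past the end
    }
}
```

The guard `minWidth < 19` admits widths 11 through 18, but the table stops at index 10, which is the gap that the pattern length check in this PR closes.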

### Why are the changes needed?

fix silent data change

### Does this PR introduce _any_ user-facing change?

Yes, a SparkUpgradeException will replace the `null` result when the pattern contains more than 10 consecutive 'y' or 'Y'.

### How was this patch tested?

new tests

Closes #28684 from yaooqinn/SPARK-31867-2.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

If `LLL`/`qqq` is used in the datetime pattern string, and the current JDK in use has a bug for the stand-alone form (see https://bugs.openjdk.java.net/browse/JDK-8114833), throw an exception with a clear error message.

### Why are the changes needed?

to keep backward compatibility with Spark 2.4

### Does this PR introduce _any_ user-facing change?

Yes

Spark 2.4

```

scala> sql("select date_format('1990-1-1', 'LLL')").show

+---------------------------------------------+

|date_format(CAST(1990-1-1 AS TIMESTAMP), LLL)|

+---------------------------------------------+

| Jan|

+---------------------------------------------+

```

Spark 3.0 with Java 11

```

scala> sql("select date_format('1990-1-1', 'LLL')").show

+---------------------------------------------+

|date_format(CAST(1990-1-1 AS TIMESTAMP), LLL)|

+---------------------------------------------+

| Jan|

+---------------------------------------------+

```

Spark 3.0 with Java 8

```

// before this PR

+---------------------------------------------+

|date_format(CAST(1990-1-1 AS TIMESTAMP), LLL)|

+---------------------------------------------+

| 1|

+---------------------------------------------+

// after this PR

scala> sql("select date_format('1990-1-1', 'LLL')").show

org.apache.spark.SparkUpgradeException

```

### How was this patch tested?

manual test with java 8 and 11

Closes #28646 from cloud-fan/format.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR adds the structured streaming UI introduction to the Web UI doc.

### Why are the changes needed?

The structured streaming web UI introduced before was missing from the Web UI documentation.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

N.A.

Closes #28609 from xccui/ss-ui-doc.

Authored-by: Xingcan Cui <xccui@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Since 3.0.0 we use `java.time.DateTimeFormatterBuilder`, in which five consecutive pattern characters of 'G/M/L/E/u/Q/q' mean Narrow-Text Style, which outputs only the leading letter of the value, e.g. `December` becomes `D`. In Spark 2.4 they mean Full-Text Style.

In this PR, we explicitly disable Narrow-Text Style for these pattern characters.
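The difference between the two formatters can be seen with plain JDK classes. The sketch below assumes `Locale.US`; the class `NarrowTextDemo` and its two helpers are hypothetical names for illustration, not Spark code.

```java
import java.text.SimpleDateFormat;
import java.time.LocalDate;
import java.time.ZoneId;
import java.time.format.DateTimeFormatter;
import java.util.Date;
import java.util.Locale;

public class NarrowTextDemo {
    // java.time formatter, the family Spark 3.0 builds on.
    static String newFormatter(LocalDate date, String pattern) {
        return DateTimeFormatter.ofPattern(pattern, Locale.US).format(date);
    }

    // Legacy formatter, the family Spark 2.4 relied on.
    static String legacyFormatter(LocalDate date, String pattern) {
        Date legacy = Date.from(date.atStartOfDay(ZoneId.systemDefault()).toInstant());
        return new SimpleDateFormat(pattern, Locale.US).format(legacy);
    }

    public static void main(String[] args) {
        LocalDate d = LocalDate.of(2020, 12, 15);
        System.out.println(newFormatter(d, "MMMMM"));    // narrow form: D
        System.out.println(legacyFormatter(d, "MMMMM")); // full form: December
        System.out.println(newFormatter(d, "MMMM"));     // full form: December
    }
}
```

With the same five-letter pattern, the two APIs return different strings without raising any error, which is the silent data change this PR turns into an explicit failure.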

### Why are the changes needed?

Without this change, there will be a silent data change.

### Does this PR introduce _any_ user-facing change?

Yes, queries with datetime operations using datetime patterns such as `G/M/L/E/u` will fail if the pattern length is 5. Other patterns, e.g. 'k' and 'm', also accept only a certain number of letters.

1. Datetime patterns that are supported by the legacy parser but not by the new one will get SparkUpgradeException, e.g. "GGGGG", "MMMMM", "LLLLL", "EEEEE", "uuuuu", "aa", "aaa". End users are given two options: use legacy mode, or follow the new online doc for correct datetime patterns.

2. Datetime patterns that are supported by neither the new parser nor the legacy one, e.g. "QQQQQ", "qqqqq", will get IllegalArgumentException, which is caught by Spark internally and results in NULL for end users.

### How was this patch tested?

add unit tests

Closes #28592 from yaooqinn/SPARK-31771.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add docs to the SQL migration guide.

### Why are the changes needed?

Let users know more about the cast scenarios in which Hive and Spark generate different results.

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

no need to test

Closes #28605 from GuoPhilipse/spark-docs.

Lead-authored-by: GuoPhilipse <guofei_ok@126.com>

Co-authored-by: GuoPhilipse <46367746+GuoPhilipse@users.noreply.github.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Added MDC support in all thread pools.

ThreadUtils creates new pools that pass over the MDC.
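The general idea of passing a logging context into pool threads can be sketched as follows. This is not the Spark implementation: it uses a plain `ThreadLocal` map as a stand-in for a logging MDC, and `ContextPropagationDemo`, `CONTEXT`, `withContext` and `lookupOnWorker` are hypothetical names.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

public class ContextPropagationDemo {
    // Stand-in for a logging MDC: a per-thread key/value map (assumption, not SLF4J).
    static final ThreadLocal<Map<String, String>> CONTEXT =
        ThreadLocal.withInitial(HashMap::new);

    // Wraps a task so it runs with a snapshot of the submitting thread's context.
    static Runnable withContext(Runnable task) {
        Map<String, String> snapshot = new HashMap<>(CONTEXT.get());
        return () -> {
            Map<String, String> previous = CONTEXT.get();
            CONTEXT.set(snapshot);
            try {
                task.run();
            } finally {
                CONTEXT.set(previous); // restore the worker's own context
            }
        };
    }

    // Runs a lookup on a pool thread and returns the value the worker saw.
    static String lookupOnWorker(String key, boolean propagate) {
        ExecutorService pool = Executors.newSingleThreadExecutor();
        try {
            String[] seen = new String[1];
            Runnable task = () -> seen[0] = CONTEXT.get().get(key);
            pool.submit(propagate ? withContext(task) : task).get();
            return seen[0];
        } catch (Exception e) {
            throw new RuntimeException(e);
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        CONTEXT.get().put("jobId", "job-42");
        System.out.println(lookupOnWorker("jobId", false)); // null: worker has no context
        System.out.println(lookupOnWorker("jobId", true));  // job-42: snapshot was passed over
    }
}
```

Capturing the snapshot at submission time, rather than inside the worker, is what ties each log line back to the thread that triggered the work.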

### Why are the changes needed?

In many cases, it is very hard to tell which actions the executor logs come from when you do multi-threaded work in the driver and send actions in parallel.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

No tests were added because no new functionality is added; it is a thread pool change, and all current tests pass.

Closes #26624 from igreenfield/master.

Authored-by: Izek Greenfield <igreenfield@axiomsl.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

1. Describe standard 'M' and stand-alone 'L' text forms

2. Add examples for all supported number of month letters

<img width="1047" alt="Screenshot 2020-05-18 at 08 57 31" src="https://user-images.githubusercontent.com/1580697/82178856-b16f1000-98e5-11ea-87c0-456ef94dcd43.png">

### Why are the changes needed?

To improve docs and show how to use month patterns.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

By building the docs and checking them visually.

Closes #28558 from MaxGekk/describe-L-M-date-pattern.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This patch effectively reverts SPARK-30098 via below changes:

* Removed the config

* Removed the changes done in parser rule

* Removed the usage of config in tests

* Removed tests which depend on the config

* Rolled back some tests to before SPARK-30098 which were affected by SPARK-30098

* Reflected the change in docs (migration doc, create table syntax)

### Why are the changes needed?

SPARK-30098 brought confusion and frustration when using the create table DDL query, and we agreed that the change had a bad effect.

Please go through the [discussion thread](http://apache-spark-developers-list.1001551.n3.nabble.com/DISCUSS-Resolve-ambiguous-parser-rule-between-two-quot-create-table-quot-s-td29051i20.html) to see the details.

### Does this PR introduce _any_ user-facing change?

No, compared to Spark 2.4.x. End users who experimented with the Spark 3.0.0 previews will see the behavior going back to Spark 2.4.x, but I believe we don't guarantee compatibility for preview releases.

### How was this patch tested?

Existing UTs.

Closes #28517 from HeartSaVioR/revert-SPARK-30098.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR is a follow-up to fix the version in the configuration document.

### Why are the changes needed?

The original PR is backported to branch-3.0.

### Does this PR introduce _any_ user-facing change?

Yes.

### How was this patch tested?

Manual.

Closes #28530 from dongjoon-hyun/SPARK-31696-2.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This PR aims to add `spark.kubernetes.driver.service.annotation` like `spark.kubernetes.driver.service.annotation`.

### Why are the changes needed?

Annotations are used in many ways. One example is that the Prometheus monitoring system discovers metric endpoints via annotations.

- https://github.com/helm/charts/tree/master/stable/prometheus#scraping-pod-metrics-via-annotations

### Does this PR introduce _any_ user-facing change?

Yes. The documentation is added.

### How was this patch tested?

Pass Jenkins with the updated unit tests.

Closes #28518 from dongjoon-hyun/SPARK-31696.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This PR proposes to set the minimum Arrow version to 0.15.1 to be consistent with the PySpark side.

### Why are the changes needed?

It will reduce the maintenance overhead of matching the Arrow versions, and minimize the supported range. SparkR Arrow optimization is still experimental.

### Does this PR introduce _any_ user-facing change?

No, it's the change in unreleased branches only.

### How was this patch tested?

0.15.x was already tested at SPARK-29378, and we're testing the latest version of SparkR currently in AppVeyor. I already manually tested too.

Closes #28520 from HyukjinKwon/SPARK-31701.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This changes the docs to make it clearer that order preservation is not guaranteed when saving an RDD to disk and reading it back ([SPARK-5300](https://issues.apache.org/jira/browse/SPARK-5300)).

I added two sentences about this in the RDD Programming Guide.

The issue was discussed on the dev mailing list:

http://apache-spark-developers-list.1001551.n3.nabble.com/RDD-order-guarantees-td10142.html

### Why are the changes needed?

Because RDDs are order-aware collections, it is natural to expect that if I use `saveAsTextFile` and then load the resulting file with `sparkContext.textFile`, I obtain an RDD in the same order.

This is unfortunately not the case at the moment and there is no agreed upon way to fix this in Spark itself (see PR #4204 which attempted to fix this). Users should be aware of this.

### Does this PR introduce _any_ user-facing change?

Yes, two new sentences in the documentation.

### How was this patch tested?

By checking that the documentation looks good.

Closes #28465 from wetneb/SPARK-5300-docs.

Authored-by: Antonin Delpeuch <antonin@delpeuch.eu>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This PR aims to make the new Prometheus-format metric endpoints experimental in Apache Spark 3.0.0.

### Why are the changes needed?

Although the new metrics are disabled by default, we had better make it experimental explicitly in Apache Spark 3.0.0 since the output format is still not fixed. We can finalize it in Apache Spark 3.1.0.

### Does this PR introduce _any_ user-facing change?

Only doc-change is visible to the users.

### How was this patch tested?

Manually check the code since this is a documentation and class annotation change.

Closes #28495 from dongjoon-hyun/SPARK-31674.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>