### What changes were proposed in this pull request?

This PR proposes to ask users whether they want to download and install Spark when they use SparkR installed from CRAN.

A `SPARKR_ASK_INSTALLATION` environment variable was also added so the prompt can be turned off in case other notebook projects are affected.

### Why are the changes needed?

This is required by CRAN. SparkR has currently been removed from CRAN: https://cran.r-project.org/web/packages/SparkR/index.html.

See also https://lists.apache.org/thread.html/r02b9046273a518e347dfe85f864d23d63d3502c6c1edd33df17a3b86%40%3Cdev.spark.apache.org%3E

### Does this PR introduce _any_ user-facing change?

Yes. When Spark is not found, `sparkR.session(...)` now asks whether the user wants to download and install the Spark package, if it is running in a plain R shell or via `Rscript`.
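The prompt behaves as a simple confirm loop: any answer other than `y` or `n` repeats the question, `n` stops with an error, and `y` proceeds to the download. The shell function below is only an illustrative sketch of that flow, not SparkR's R implementation. The function name `ask_install`, its exit codes, and the end-of-input handling are hypothetical choices made for this example; the prompt text is copied from the test logs.

```bash
# Illustrative sketch only; this is NOT SparkR's implementation.
# ask_install (hypothetical name) keeps asking until the answer is exactly
# "y" or "n", mirroring how the logs re-prompt after an invalid answer.
# Exit codes (also hypothetical): 0 = install, 1 = declined, 2 = input ended.
ask_install() {
  local cache_dir="$1"
  local answer=""
  while [ "$answer" != "y" ] && [ "$answer" != "n" ]; do
    # Prompt text copied from the test logs; written to stderr.
    printf 'Will you download and install (or reuse if it exists) Spark package under the cache [%s]? (y/n): ' "$cache_dir" >&2
    # Give up on end of input instead of re-prompting forever.
    if ! IFS= read -r answer; then
      return 2
    fi
  done
  if [ "$answer" = "n" ]; then
    echo "Please make sure Spark package is installed in this machine." >&2
    return 1
  fi
  return 0
}
```

For example, `printf 'abc\ny\n' | ask_install /tmp/spark-cache` asks twice and then returns 0. Stopping on end of input is a deliberate choice in this sketch so that a non-interactive run with no answer cannot loop forever.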

### How was this patch tested?

**R shell**

Valid input (`n`):

```
> sparkR.session(master="local")
Spark not found in SPARK_HOME:
Will you download and install (or reuse if it exists) Spark package under the cache [/.../Caches/spark]? (y/n): n
```

```
Error in sparkCheckInstall(sparkHome, master, deployMode) :
Please make sure Spark package is installed in this machine.
- If there is one, set the path in sparkHome parameter or environment variable SPARK_HOME.
- If not, you may run install.spark function to do the job.
```

Invalid input:

```
> sparkR.session(master="local")
Spark not found in SPARK_HOME:
Will you download and install (or reuse if it exists) Spark package under the cache [/.../Caches/spark]? (y/n): abc
```

```
Will you download and install (or reuse if it exists) Spark package under the cache [/.../Caches/spark]? (y/n):
```

Valid input (`y`):

```
> sparkR.session(master="local")
Will you download and install (or reuse if it exists) Spark package under the cache [/.../Caches/spark]? (y/n): y
Spark not found in the cache directory. Installation will start.
MirrorUrl not provided.
Looking for preferred site from apache website...
Preferred mirror site found: https://ftp.riken.jp/net/apache/spark
Downloading spark-3.3.0 for Hadoop 2.7 from:
- https://ftp.riken.jp/net/apache/spark/spark-3.3.0/spark-3.3.0-bin-hadoop2.7.tgz
trying URL 'https://ftp.riken.jp/net/apache/spark/spark-3.3.0/spark-3.3.0-bin-hadoop2.7.tgz'
...
```

**Rscript**

```
cat tmp.R
```

```
library(SparkR, lib.loc = c(file.path(".", "R", "lib")))
sparkR.session(master="local")
```

```
Rscript tmp.R
```

Valid input (`n`):

```
Spark not found in SPARK_HOME:
Will you download and install (or reuse if it exists) Spark package under the cache [/.../Caches/spark]? (y/n): n
```

```
Error in sparkCheckInstall(sparkHome, master, deployMode) :
Please make sure Spark package is installed in this machine.
- If there is one, set the path in sparkHome parameter or environment variable SPARK_HOME.
- If not, you may run install.spark function to do the job.
Calls: sparkR.session -> sparkCheckInstall
```

Invalid input:

```
Spark not found in SPARK_HOME:
Will you download and install (or reuse if it exists) Spark package under the cache [/.../Caches/spark]? (y/n): abc
```

```
Will you download and install (or reuse if it exists) Spark package under the cache [/.../Caches/spark]? (y/n):
```

Valid input (`y`):

```
...
Spark not found in SPARK_HOME:
Will you download and install (or reuse if it exists) Spark package under the cache [/.../Caches/spark]? (y/n): y
Spark not found in the cache directory. Installation will start.
MirrorUrl not provided.
Looking for preferred site from apache website...
Preferred mirror site found: https://ftp.riken.jp/net/apache/spark
Downloading spark-3.3.0 for Hadoop 2.7 from:
...
```

`bin/sparkR` and `bin/spark-submit *.R` are not affected (tested).

Closes #33887 from HyukjinKwon/SPARK-36631.

Authored-by: Hyukjin Kwon <gurwls223@apache.org>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

(cherry picked from commit e983ba8fce)

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Add back the deprecated R APIs removed by https://github.com/apache/spark/pull/22843/ and https://github.com/apache/spark/pull/22815.

These APIs are:

- `sparkR.init`

- `sparkRSQL.init`

- `sparkRHive.init`

- `registerTempTable`

- `createExternalTable`

- `dropTempTable`

There is no need to port functions such as

```r
createExternalTable <- function(x, ...) {
  dispatchFunc("createExternalTable(tableName, path = NULL, source = NULL, ...)", x, ...)
}
```

because, judging from https://github.com/apache/spark/pull/9192, this was for backward compatibility from the time when `SQLContext` still existed. It no longer seems necessary, since SparkR replaced `SQLContext` with `SparkSession` in https://github.com/apache/spark/pull/13635.

### Why are the changes needed?

To follow the amended Spark Semantic Versioning Policy.

### Does this PR introduce any user-facing change?

Yes. The removed R APIs are added back.

### How was this patch tested?

Added back the removed tests.

Closes #28058 from huaxingao/r.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

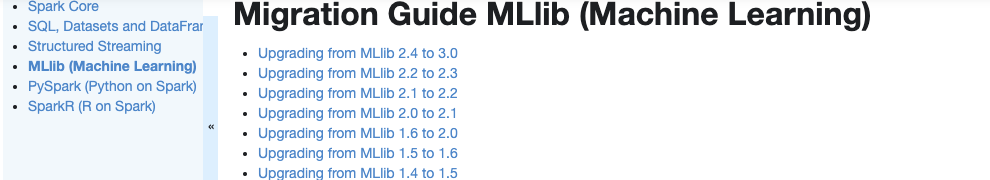

### What changes were proposed in this pull request?

Currently, there is no migration section for PySpark, Spark Core and Structured Streaming, so it is difficult for users to know what to do when they upgrade.

This PR proposes to create a "Migration Guide" tab in the Spark documentation.

This page will contain migration guides for Spark SQL, PySpark, SparkR, MLlib, Structured Streaming and Core. It is mostly a refactoring of existing content.

Some new information is also added; I will leave inline comments on it for easier review.

1. **MLlib**

Merge [ml-guide.html#migration-guide](https://spark.apache.org/docs/latest/ml-guide.html#migration-guide) and [ml-migration-guides.html](https://spark.apache.org/docs/latest/ml-migration-guides.html)

```
'docs/ml-guide.md'
        ↓  Merge new/old migration guides
'docs/ml-migration-guide.md'
```

2. **PySpark**

Extract PySpark-specific items from https://spark.apache.org/docs/latest/sql-migration-guide-upgrade.html

```
'docs/sql-migration-guide-upgrade.md'
        ↓  Extract PySpark specific items
'docs/pyspark-migration-guide.md'
```

3. **SparkR**

Move [sparkr.html#migration-guide](https://spark.apache.org/docs/latest/sparkr.html#migration-guide) into a separate file, and extract SparkR-specific items from [sql-migration-guide-upgrade.html](https://spark.apache.org/docs/latest/sql-migration-guide-upgrade.html)

```
          'docs/sparkr.md'                 'docs/sql-migration-guide-upgrade.md'
Move migration guide section ↘                 ↙ Extract SparkR specific items
                     'docs/sparkr-migration-guide.md'
```

4. **Core**

Newly created at `'docs/core-migration-guide.md'`. I skimmed the JIRAs resolved for 3.0.0 and found some items worth noting.

5. **Structured Streaming**

Newly created at `'docs/ss-migration-guide.md'`. I skimmed the JIRAs resolved for 3.0.0 and found some items worth noting.

6. **SQL**

Merge [sql-migration-guide-upgrade.html](https://spark.apache.org/docs/latest/sql-migration-guide-upgrade.html) and [sql-migration-guide-hive-compatibility.html](https://spark.apache.org/docs/latest/sql-migration-guide-hive-compatibility.html)

```
'docs/sql-migration-guide-hive-compatibility.md'        'docs/sql-migration-guide-upgrade.md'
   Move Hive compatibility section ↘          ↙ Left over after filtering PySpark and SparkR items
                         'docs/sql-migration-guide.md'
```

### Why are the changes needed?

To help users in production migrate to newer versions effectively, and to let them detect behavior changes or breaking changes before upgrading or migrating.

### Does this PR introduce any user-facing change?

Yes, this changes Spark's documentation at https://spark.apache.org/docs/latest/index.html.

### How was this patch tested?

Manually built the docs. The result can be checked as follows:

```bash
cd docs
SKIP_API=1 jekyll build
open _site/index.html
```
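A quick post-build check can confirm that each migration guide listed above was actually rendered. This is a minimal sketch, assuming Jekyll's default mapping of `docs/<name>.md` to `_site/<name>.html`; the function name `check_migration_pages` is hypothetical and not part of the Spark docs build.

```bash
# Sketch: after `SKIP_API=1 jekyll build`, verify that the migration guide
# pages from this PR exist. Assumes docs/<name>.md renders to _site/<name>.html.
# check_migration_pages is a hypothetical helper, not part of Spark.
check_migration_pages() {
  local site_dir="${1:-_site}"
  local missing=0
  local page
  for page in sql-migration-guide pyspark-migration-guide sparkr-migration-guide \
      ml-migration-guide ss-migration-guide core-migration-guide; do
    if [ ! -f "$site_dir/$page.html" ]; then
      # Report every missing page rather than stopping at the first one.
      echo "missing: $site_dir/$page.html" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

Run from `docs` after the build, for example `check_migration_pages _site && open _site/index.html`; it returns 0 only when all six pages are present.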

Closes #25757 from HyukjinKwon/migration-doc.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>