### What changes were proposed in this pull request?

Use `dataTypes.foreach` instead of accessing elements by index in the `def this(dataTypes: Seq[DataType])` constructor of `SpecificInternalRow`, because random access performs poorly when the input argument is not an `IndexedSeq`.
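To illustrate why the access pattern matters, here is a minimal sketch. It is not Spark's `SpecificInternalRow` code, and the object and method names are hypothetical. On a `List`, `seq(i)` walks `i` nodes, so an index-based loop over all elements is O(n²), while `foreach` visits each element once and is O(n) for any `Seq`.

```scala
// Hypothetical helpers illustrating the access pattern; not Spark's SpecificInternalRow code.
object SeqAccessDemo {
  // Index-based loop: each seq(i) on a LinearSeq such as List walks i nodes,
  // so the whole loop is O(n^2) for a List and O(n) only for an IndexedSeq.
  def fillIndexed[A](seq: Seq[A]): Array[Any] = {
    val n = seq.length
    val out = new Array[Any](n)
    var i = 0
    while (i < n) {
      out(i) = seq(i)
      i += 1
    }
    out
  }

  // foreach traverses the collection once, so it is O(n) for any Seq.
  def fillForeach[A](seq: Seq[A]): Array[Any] = {
    val out = new Array[Any](seq.length)
    var i = 0
    seq.foreach { elem =>
      out(i) = elem
      i += 1
    }
    out
  }
}
```

With a `List` of about 200,000 elements (close to the 209716 buffer attributes in the HyperLogLog++ case), `fillIndexed` performs on the order of 10^10 node hops, while `fillForeach` performs about 2×10^5.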

This PR follows srowen's advice.

### Why are the changes needed?

I found that SPARK-32550 had a negative impact on performance. A typical case is "deterministic cardinality estimation" in `HyperLogLogPlusPlusSuite` with rsd 0.001, where the significantly slower code is line 41 in `HyperLogLogPlusPlusSuite`: `new SpecificInternalRow(hll.aggBufferAttributes.map(_.dataType))`

08b951b1cb/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/aggregate/HyperLogLogPlusPlusSuite.scala (L40-L44)

The size of `hll.aggBufferAttributes` in this case is 209716. The results before and after SPARK-32550 was merged are as follows (unit: ns):

| | After SPARK-32550 createBuffer | After SPARK-32550 end to end | Before SPARK-32550 createBuffer | Before SPARK-32550 end to end |
| -- | -- | -- | -- | -- |
| rsd 0.001, n 1000 | 52715513243 | 53004810687 | 195807999 | 773977677 |
| rsd 0.001, n 5000 | 51881246165 | 52519358215 | 13689949 | 249974855 |
| rsd 0.001, n 10000 | 52234282788 | 52374639172 | 14199071 | 183452846 |
| rsd 0.001, n 50000 | 55503517122 | 55664035449 | 15219394 | 584477125 |
| rsd 0.001, n 100000 | 51862662845 | 52116774177 | 19662834 | 166483678 |
| rsd 0.001, n 500000 | 51619226715 | 52183189526 | 178048012 | 16681330 |
| rsd 0.001, n 1000000 | 54861366981 | 54976399142 | 226178708 | 18826340 |
| rsd 0.001, n 5000000 | 52023602143 | 52354615149 | 388173579 | 15446409 |
| rsd 0.001, n 10000000 | 53008591660 | 53601392304 | 533454460 | 16033032 |

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

`mvn test -pl sql/catalyst -DwildcardSuites=org.apache.spark.sql.catalyst.expressions.aggregate.HyperLogLogPlusPlusSuite -Dtest=none`

**Before**:

```
Run completed in 8 minutes, 18 seconds.
Total number of tests run: 5
Suites: completed 2, aborted 0
Tests: succeeded 5, failed 0, canceled 0, ignored 0, pending 0
```

**After**:

```
Run completed in 7 seconds, 65 milliseconds.
Total number of tests run: 5
Suites: completed 2, aborted 0
Tests: succeeded 5, failed 0, canceled 0, ignored 0, pending 0
```

Closes #29529 from LuciferYang/revert-spark-32550.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR proposes to make the behavior of the `path` option consistent when loading DataFrames with a single path (e.g., `option("path", path).format("parquet").load(path)` vs. `option("path", path).parquet(path)`) by disallowing the `path` option from coexisting with `load`'s path parameters.

### Why are the changes needed?

The current behavior is inconsistent:

```scala
scala> Seq(1).toDF.write.mode("overwrite").parquet("/tmp/test")

scala> spark.read.option("path", "/tmp/test").format("parquet").load("/tmp/test").show
+-----+
|value|
+-----+
|    1|
+-----+

scala> spark.read.option("path", "/tmp/test").parquet("/tmp/test").show
+-----+
|value|
+-----+
|    1|
|    1|
+-----+
```

### Does this PR introduce _any_ user-facing change?

Yes. Now, if the `path` option is specified along with `load`'s path parameters, the read fails:

```scala
scala> Seq(1).toDF.write.mode("overwrite").parquet("/tmp/test")

scala> spark.read.option("path", "/tmp/test").format("parquet").load("/tmp/test").show
org.apache.spark.sql.AnalysisException: There is a path option set and load() is called with path parameters. Either remove the path option or move it into the load() parameters.;
  at org.apache.spark.sql.DataFrameReader.verifyPathOptionDoesNotExist(DataFrameReader.scala:310)
  at org.apache.spark.sql.DataFrameReader.load(DataFrameReader.scala:232)
  ... 47 elided

scala> spark.read.option("path", "/tmp/test").parquet("/tmp/test").show
org.apache.spark.sql.AnalysisException: There is a path option set and load() is called with path parameters. Either remove the path option or move it into the load() parameters.;
  at org.apache.spark.sql.DataFrameReader.verifyPathOptionDoesNotExist(DataFrameReader.scala:310)
  at org.apache.spark.sql.DataFrameReader.load(DataFrameReader.scala:250)
  at org.apache.spark.sql.DataFrameReader.parquet(DataFrameReader.scala:778)
  at org.apache.spark.sql.DataFrameReader.parquet(DataFrameReader.scala:756)
  ... 47 elided
```

The user can restore the previous behavior by setting `spark.sql.legacy.pathOptionBehavior.enabled` to `true`.
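A minimal model of the new check, as a sketch under simplifying assumptions: `PathOptionCheck` and `resolvePaths` are hypothetical names, option keys are matched case-sensitively here (Spark's are not), and an `IllegalArgumentException` stands in for `AnalysisException`. In legacy mode the sketch combines both paths, which is what `parquet(path)` did in the example above; it does not model every pre-change code path.

```scala
// Hypothetical model of the check described above; the real check lives in
// DataFrameReader and throws AnalysisException.
object PathOptionCheck {
  def resolvePaths(
      options: Map[String, String],
      loadPaths: Seq[String],
      legacyPathOptionBehavior: Boolean): Seq[String] = {
    // Simplification: Spark treats option keys case-insensitively; this sketch does not.
    val pathOption = options.get("path")
    if (pathOption.isDefined && loadPaths.nonEmpty && !legacyPathOptionBehavior) {
      throw new IllegalArgumentException(
        "There is a path option set and load() is called with path parameters. " +
          "Either remove the path option or move it into the load() parameters.")
    }
    if (loadPaths.isEmpty) pathOption.toSeq // only the option supplies a path
    else if (legacyPathOptionBehavior) pathOption.toSeq ++ loadPaths // pre-change combination
    else loadPaths
  }
}
```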

### How was this patch tested?

Added a test

Closes #29328 from imback82/dfw_option.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

For broadcast hash join and shuffled hash join, whenever the build-side hashed relation turns out to be empty, we don't need to execute the stream-side plan at all and can return an empty iterator (for inner join and left semi join), because we know for sure that no stream-side row can be output when there is no match.
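A minimal sketch of the short-circuit, assuming a simplified in-memory hash join over `(key, value)` pairs. The object, join-type, and method names are hypothetical and this is not the code of `BroadcastHashJoinExec` or `ShuffledHashJoinExec`. For inner and left semi joins, an empty hashed relation returns an empty iterator before the stream side is pulled; join types that must emit unmatched stream rows, such as left outer, still consume the stream.

```scala
// Simplified hash join over (key, value) rows; hypothetical names, not Spark's operators.
object EmptyBuildSideJoin {
  sealed trait JoinType
  case object Inner extends JoinType
  case object LeftSemi extends JoinType
  case object LeftOuter extends JoinType

  def hashJoin(
      stream: Iterator[(Int, String)],
      build: Seq[(Int, String)],
      joinType: JoinType): Iterator[(Int, String, Option[String])] = {
    // Build the hashed relation: key -> all build-side values with that key.
    val hashed: Map[Int, Seq[String]] =
      build.groupBy(_._1).map { case (k, rows) => (k, rows.map(_._2)) }

    if (hashed.isEmpty && (joinType == Inner || joinType == LeftSemi)) {
      // No build rows, so no stream row can match: return without pulling the stream side.
      Iterator.empty
    } else {
      joinType match {
        case Inner =>
          stream.flatMap { case (k, v) => hashed.getOrElse(k, Nil).iterator.map(b => (k, v, Some(b))) }
        case LeftSemi =>
          stream.collect { case (k, v) if hashed.contains(k) => (k, v, None) }
        case LeftOuter =>
          stream.flatMap { case (k, v) =>
            hashed.get(k) match {
              case Some(bs) => bs.iterator.map(b => (k, v, Some(b)))
              case None => Iterator.single((k, v, None))
            }
          }
      }
    }
  }
}
```

Because `Iterator.empty` is returned before `stream` is touched, whatever feeds the stream iterator (a scan, for example) never runs for the short-circuited join types.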

### Why are the changes needed?

This is a minor optimization for a rare use case, but when the build side turns out to be empty, we can leverage it to short-circuit the stream side and save CPU and IO.

Example broadcast hash join query similar to `JoinBenchmark` with empty hashed relation:

```scala
def broadcastHashJoinLongKey(): Unit = {
  val N = 20 << 20
  val M = 1 << 16
  val dim = broadcast(spark.range(0).selectExpr("id as k", "cast(id as string) as v"))
  codegenBenchmark("Join w long", N) {
    val df = spark.range(N).join(dim, (col("id") % M) === col("k"))
    assert(df.queryExecution.sparkPlan.find(_.isInstanceOf[BroadcastHashJoinExec]).isDefined)
    df.noop()
  }
}
```

Comparing wall-clock time with this PR's optimization enabled and disabled (for the non-codegen code path) shows roughly an 8x improvement.

```
Java HotSpot(TM) 64-Bit Server VM 1.8.0_181-b13 on Mac OS X 10.15.4
Intel(R) Core(TM) i9-9980HK CPU 2.40GHz
Join w long:                              Best Time(ms)   Avg Time(ms)   Stdev(ms)    Rate(M/s)   Per Row(ns)   Relative
------------------------------------------------------------------------------------------------------------------------
Join PR disabled                                    637            646          12         32.9          30.4       1.0X
Join PR enabled                                      77             78           2        271.8           3.7       8.3X
```

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added unit test in `JoinSuite`.

Closes #29484 from c21/empty-relation.

Authored-by: Cheng Su <chengsu@fb.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR fixes a bug introduced by #29406, which partially pushes down data filters even when they are mixed with partition filters. In some cases, partition columns can also be data columns, so a predicate on a partition column could be pushed down to the data source.
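One way to express the invariant the fix needs, as a sketch under a simplified model where a predicate is represented only by the column names it references (`Predicate` and `pushableDataFilters` are hypothetical, not Spark's API): a predicate is safe to push to the data source only if it references no partition column, even when that column also appears among the data columns.

```scala
// Hypothetical, simplified model; not Spark's filter classes.
object DataFilterPushdown {
  // A predicate is modeled only by its SQL text and the column names it references.
  final case class Predicate(sql: String, references: Set[String])

  // Keep only predicates that reference no partition column; a partition column that
  // also appears in the data schema must still be excluded from the pushed-down set.
  def pushableDataFilters(filters: Seq[Predicate], partitionColumns: Set[String]): Seq[Predicate] =
    filters.filter(_.references.intersect(partitionColumns).isEmpty)
}
```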

### Why are the changes needed?

The test "org.apache.spark.sql.hive.orc.HiveOrcHadoopFsRelationSuite.save()/load() - partitioned table - simple queries - partition columns in data" currently fails with the hive-1.2 profile on the master branch.

```
[info] - save()/load() - partitioned table - simple queries - partition columns in data *** FAILED *** (1 second, 457 milliseconds)
[info]   java.util.NoSuchElementException: key not found: p1
[info]   at scala.collection.immutable.Map$Map2.apply(Map.scala:138)
[info]   at org.apache.spark.sql.hive.orc.OrcFilters$.buildLeafSearchArgument(OrcFilters.scala:250)
[info]   at org.apache.spark.sql.hive.orc.OrcFilters$.convertibleFiltersHelper$1(OrcFilters.scala:143)
[info]   at org.apache.spark.sql.hive.orc.OrcFilters$.$anonfun$convertibleFilters$4(OrcFilters.scala:146)
[info]   at scala.collection.TraversableLike.$anonfun$flatMap$1(TraversableLike.scala:245)
[info]   at scala.collection.IndexedSeqOptimized.foreach(IndexedSeqOptimized.scala:36)
[info]   at scala.collection.IndexedSeqOptimized.foreach$(IndexedSeqOptimized.scala:33)
[info]   at scala.collection.mutable.WrappedArray.foreach(WrappedArray.scala:38)
[info]   at scala.collection.TraversableLike.flatMap(TraversableLike.scala:245)
[info]   at scala.collection.TraversableLike.flatMap$(TraversableLike.scala:242)
[info]   at scala.collection.AbstractTraversable.flatMap(Traversable.scala:108)
[info]   at org.apache.spark.sql.hive.orc.OrcFilters$.convertibleFilters(OrcFilters.scala:145)
[info]   at org.apache.spark.sql.hive.orc.OrcFilters$.createFilter(OrcFilters.scala:83)
[info]   at org.apache.spark.sql.hive.orc.OrcFileFormat.buildReader(OrcFileFormat.scala:142)
```

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Unit test.

Closes #29526 from viirya/SPARK-32352-followup.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

Copy the unit test added for branch-2.4 (https://github.com/apache/spark/pull/29430) to the master branch.

### Why are the changes needed?

The unit test passes on the master branch, indicating that the issue reported in https://issues.apache.org/jira/browse/SPARK-32609 is already fixed there. We add the test anyway to catch possible future regressions.

### Does this PR introduce _any_ user-facing change?

no.

### How was this patch tested?

sbt test run

Closes #29435 from mingjialiu/master.

Authored-by: mingjial <mingjial@google.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Removing unused DELETE_ACTION in FileStreamSinkLog.

### Why are the changes needed?

DELETE_ACTION is not used anywhere in the code.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Tests were not added, because code was removed.

Closes #29505 from michal-wieleba/SPARK-32648.

Authored-by: majsiel <majsiel@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Override `def get: Date` in `DaysWritable` to use `daysToMillis(int d)` from the parent class `DateWritable` instead of `long daysToMillis(int d, boolean doesTimeMatter)`.

### Why are the changes needed?

It fixes failures of `HiveSerDeReadWriteSuite` with the `hive-1.2` profile. With that profile, the parent class `DateWritable` has the implementation from before Hive commit da3ed68eda. In particular, its `get()` calls `new Date(daysToMillis(daysSinceEpoch))` instead of the overridden `def get(doesTimeMatter: Boolean): Date` in the child class. As a result, `get()` returns the wrong result, `1970-01-01`, because it uses `daysSinceEpoch`, which has not been updated.
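The failure mode can be reproduced with a small model of the class hierarchy. All class names below are hypothetical stand-ins, not the real Hive `DateWritable` or Spark `DaysWritable`, and dates are represented as epoch milliseconds for brevity: the inherited `get()` reads the parent's field, which the child never updates, so it returns the epoch; overriding `get()` in the child fixes it.

```scala
// Hypothetical stand-in for Hive 1.2's DateWritable; dates are epoch millis for brevity.
class ParentWritable {
  protected var daysSinceEpoch: Int = 0
  def daysToMillis(d: Int): Long = d.toLong * 24L * 60L * 60L * 1000L
  def get(doesTimeMatter: Boolean): Long = daysToMillis(daysSinceEpoch)
  // Hive 1.2-style get(): reads the parent's own field instead of calling get(Boolean).
  def get(): Long = daysToMillis(daysSinceEpoch)
}

// Hypothetical stand-in for DaysWritable before the fix: it keeps its own state and
// overrides only get(Boolean), so the inherited get() still sees daysSinceEpoch == 0.
class BuggyChildWritable(days: Int) extends ParentWritable {
  override def get(doesTimeMatter: Boolean): Long = daysToMillis(days)
}

// After the fix: get() is overridden too and uses the parent's daysToMillis(Int).
class FixedChildWritable(days: Int) extends ParentWritable {
  override def get(doesTimeMatter: Boolean): Long = daysToMillis(days)
  override def get(): Long = daysToMillis(days)
}
```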

### Does this PR introduce _any_ user-facing change?

Yes.

### How was this patch tested?

By running the test suite `HiveSerDeReadWriteSuite`:

```

$ build/sbt -Phive-1.2 -Phadoop-2.7 "test:testOnly org.apache.spark.sql.hive.execution.HiveSerDeReadWriteSuite"

```

and

```

$ build/sbt -Phive-2.3 -Phadoop-2.7 "test:testOnly org.apache.spark.sql.hive.execution.HiveSerDeReadWriteSuite"

```

Closes #29523 from MaxGekk/insert-date-into-hive-table-1.2.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Liang-Chi Hsieh <viirya@gmail.com>

### What changes were proposed in this pull request?

As mentioned by viirya in https://github.com/apache/spark/pull/29428#issuecomment-678735163, fix a bug in the unit test: in script transformation no-serde mode, the decimal output is the same for both hive-1.2 and hive-2.3.

### Why are the changes needed?

Fix the unit test.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Existing unit tests.

Closes #29520 from AngersZhuuuu/SPARK-32608-FOLLOW.

Authored-by: angerszhu <angers.zhu@gmail.com>

Signed-off-by: Liang-Chi Hsieh <viirya@gmail.com>

### What changes were proposed in this pull request?

This reverts commit e277ef1a83.

### Why are the changes needed?

Both master and branch-3.0 have a few tests failing under the hive-1.2 profile, and PR #29457 missed a change in the hive-1.2 code, which causes a compilation error. That makes debugging the failing tests harder, so I'd like to revert #29457 first to unblock it.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Unit test

Closes #29519 from viirya/revert-SPARK-32646.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Liang-Chi Hsieh <viirya@gmail.com>

### What changes were proposed in this pull request?

Some code refinements:

1. rename EmptyHashedRelationWithAllNullKeys to HashedRelationWithAllNullKeys.

2. simplify generated code for BHJ NAAJ.

### Why are the changes needed?

To refine the code and naming so they are less confusing.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Existing test.

Closes #29503 from leanken/leanken-SPARK-32678.

Authored-by: xuewei.linxuewei <xuewei.linxuewei@alibaba-inc.com>

Signed-off-by: Liang-Chi Hsieh <viirya@gmail.com>

### What changes were proposed in this pull request?

The purpose of this PR is to resolve [SPARK-32526](https://issues.apache.org/jira/browse/SPARK-32526); all remaining failed cases are fixed.

The main changes in this PR are as follows:

- Change `ExecutorAllocationManager.scala` so that the core module compiles with Scala 2.13; this was a blocking problem

- Change `Seq[_]` to `scala.collection.Seq[_]` where the failed cases require it

- Add a different expected plan for `Test 4: Star with several branches` in StarJoinCostBasedReorderSuite for Scala 2.13, because the candidate plans:

```
Join Inner, (d1_pk#5 = f1_fk1#0)
:- Join Inner, (f1_fk2#1 = d2_pk#8)
:  :- Join Inner, (f1_fk3#2 = d3_pk#11)
```

and

```
Join Inner, (f1_fk2#1 = d2_pk#8)
:- Join Inner, (d1_pk#5 = f1_fk1#0)
:  :- Join Inner, (f1_fk3#2 = d3_pk#11)
```

have the same cost `Cost(200,9200)`, but `HashMap` was rewritten in Scala 2.13, and its different iteration order leads to a different result.
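The `Seq[_]` to `scala.collection.Seq[_]` change in the list above matters because in Scala 2.13 the default `Seq` alias points to `scala.collection.immutable.Seq`, whereas in Scala 2.12 it points to `scala.collection.Seq`. A small sketch (the object and method names are hypothetical) of how a type pattern behaves on a mutable buffer:

```scala
import scala.collection.mutable.ArrayBuffer

object SeqCompat {
  // Matches mutable and immutable sequences alike on both Scala 2.12 and 2.13.
  def isAnySeq(x: Any): Boolean = x match {
    case _: scala.collection.Seq[_] => true
    case _ => false
  }

  // Equivalent to a bare `case _: Seq[_]` on Scala 2.13: mutable buffers do not match.
  def isImmutableSeq(x: Any): Boolean = x match {
    case _: scala.collection.immutable.Seq[_] => true
    case _ => false
  }

  val buffer: ArrayBuffer[Int] = ArrayBuffer(1, 2, 3)
}
```

Spelling out `scala.collection.Seq[_]` keeps the match behaving the same way under both Scala versions.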

This PR fixes the following test cases:

- LiteralExpressionSuite (1 FAILED -> PASS)

- StarJoinCostBasedReorderSuite (1 FAILED -> PASS)

- ObjectExpressionsSuite (2 FAILED -> PASS)

- ScalaReflectionSuite (1 FAILED -> PASS)

- RowEncoderSuite (10 FAILED -> PASS)

- ExpressionEncoderSuite (ABORTED -> PASS)

### Why are the changes needed?

We need to support a Scala 2.13 build.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?


- Scala 2.12: Pass the Jenkins or GitHub Actions checks

- Scala 2.13: Do the following:

```
dev/change-scala-version.sh 2.13
mvn clean install -DskipTests -pl sql/catalyst -Pscala-2.13 -am
mvn test -pl sql/catalyst -Pscala-2.13
```

**Before**

```
Tests: succeeded 4035, failed 17, canceled 0, ignored 6, pending 0
*** 1 SUITE ABORTED ***
*** 15 TESTS FAILED ***
```

**After**

```
Tests: succeeded 4338, failed 0, canceled 0, ignored 6, pending 0
All tests passed.
```

Closes #29434 from LuciferYang/sql-catalyst-tests.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Rephrase the description for some operations to make it clearer.

### Why are the changes needed?

To add more detail to the documentation.

### Does this PR introduce _any_ user-facing change?

No, document only.

### How was this patch tested?

Document only.

Closes #29269 from xuanyuanking/SPARK-31792-follow.

Authored-by: Yuanjian Li <yuanjian.li@databricks.com>

Signed-off-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

### What changes were proposed in this pull request?

This fixes SPARK-32672, a data corruption bug. The BooleanBitSet compression scheme could miss nulls at the end of a compressed batch, and those values would then default to false.
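A simplified model of the invariant involved. This is not Spark's `BooleanBitSet` encoder or decoder; the layout and names are hypothetical. Non-null booleans are packed 64 per `Long` and null positions are stored separately. The decoder has to walk every row position up to the batch's row count, so nulls that come after the last non-null value are still reported as null rather than defaulting to `false`.

```scala
// Hypothetical layout: non-null values packed 64 per Long, null row ids kept separately.
object BooleanBitSetSketch {
  final case class Encoded(rowCount: Int, nullRows: Set[Int], words: Array[Long])

  def encode(values: Seq[Option[Boolean]]): Encoded = {
    val nonNull = values.flatten
    val words = new Array[Long]((nonNull.length + 63) / 64)
    nonNull.zipWithIndex.foreach { case (b, i) =>
      if (b) words(i / 64) |= 1L << (i % 64)
    }
    val nulls = values.zipWithIndex.collect { case (None, i) => i }.toSet
    Encoded(values.length, nulls, words)
  }

  // Decode by walking every row position up to rowCount, so nulls that come
  // after the last non-null value are still reported as nulls (not as false).
  def decode(e: Encoded): Seq[Option[Boolean]] = {
    var bit = 0 // index of the next packed non-null value
    (0 until e.rowCount).map { row =>
      if (e.nullRows.contains(row)) None
      else {
        val v = (e.words(bit / 64) & (1L << (bit % 64))) != 0
        bit += 1
        Some(v)
      }
    }
  }
}
```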

### Why are the changes needed?

It fixes data corruption.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

I manually tested it against the original issue that was producing errors for me. I also added in a unit test.

Closes #29506 from revans2/SPARK-32672.

Authored-by: Robert (Bobby) Evans <bobby@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Fix typos in docs, log messages and comments.

### Why are the changes needed?

Typo fixes increase readability.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

manual test has been performed to test the updated

Closes #29443 from brandonJY/spell-fix-doc.

Authored-by: Brandon Jiang <Brandon.jiang.a@outlook.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

Add document to `ExpressionEvalHelper`, and ask people to explore all the cases that can lead to null results (including null in struct fields, array elements and map values).

This PR also fixes `ComplexTypeSuite.GetArrayStructFields` to explore all the null cases.

### Why are the changes needed?

Several times, we have hit correctness bugs caused by wrong expression nullability. When writing unit tests, we usually don't test the nullability flag directly, and it's too late to add such tests for all expressions.

In https://github.com/apache/spark/pull/22375, we extended the expression test framework, which checks the nullability flag when the expected result/field/element is null.

This requires the test cases to explore all the cases that can lead to null results.
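The idea behind checking the nullability flag can be sketched with a toy type model. The `DType` hierarchy and the `consistent` function are hypothetical and are not `ExpressionEvalHelper`'s API: a null anywhere in the expected value, including inside a struct field or array element, is only legal if the corresponding nullable/containsNull flag is set. A test that never produces such a null cannot catch a wrong flag.

```scala
// Hypothetical toy type model; not Spark's DataType classes or test helpers.
object NullabilityCheck {
  sealed trait DType
  case object IntT extends DType
  final case class ArrayT(elem: DType, containsNull: Boolean) extends DType
  final case class StructT(fields: Seq[(DType, Boolean)]) extends DType // (type, nullable)

  // True if `value` fits `dt` and every null it contains is allowed by the declared flags.
  def consistent(value: Any, dt: DType, nullable: Boolean): Boolean =
    if (value == null) nullable
    else dt match {
      case IntT => value.isInstanceOf[Int]
      case ArrayT(elem, containsNull) =>
        value.asInstanceOf[Seq[Any]].forall(v => consistent(v, elem, containsNull))
      case StructT(fields) =>
        val vs = value.asInstanceOf[Seq[Any]]
        vs.length == fields.length &&
          vs.zip(fields).forall { case (v, (t, n)) => consistent(v, t, n) }
    }
}
```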

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

I reverted 5d296ed39e locally, and `ComplexTypeSuite` can catch the bug.

Closes #29493 from cloud-fan/small.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This PR proposes to fix ORC predicate pushdown under case-insensitive analysis. When case-insensitive analysis is enabled, the field names in pushed-down predicates don't need to match the letter case of the physical field names in ORC files.

### Why are the changes needed?

Currently, ORC predicate pushdown doesn't work with case-insensitive analysis: a predicate "a < 0" cannot be pushed down to an ORC file with field name "A".

Parquet predicate pushdown already works in this case, so we should make ORC predicate pushdown work with case-insensitive analysis too.
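A minimal sketch of the name resolution involved, assuming a flat list of physical ORC field names (`resolve` is a hypothetical helper, not Spark's `OrcFilters` API). Under case-insensitive analysis the predicate column `a` resolves to the physical field `A`. When several physical fields differ only in case, this sketch declines to push the predicate down; that is one conservative policy, not a description of Spark's exact behavior.

```scala
// Hypothetical helper; not Spark's OrcFilters code.
object CaseInsensitiveFieldResolver {
  // Resolve a predicate column name against physical ORC field names.
  // None means "do not push this predicate down".
  def resolve(name: String, physicalFields: Seq[String], caseSensitive: Boolean): Option[String] =
    if (caseSensitive) physicalFields.find(_ == name)
    else physicalFields.filter(_.equalsIgnoreCase(name)) match {
      case Seq(single) => Some(single) // exactly one match: safe to use the physical name
      case _ => None                   // no match, or ambiguous (e.g. both "a" and "A")
    }
}
```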

### Does this PR introduce _any_ user-facing change?

Yes, after this PR, under case-insensitive analysis, ORC predicate pushdown will work.

### How was this patch tested?

Unit tests.

Closes #29457 from viirya/fix-orc-pushdown.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In Hive no-serde mode, when the script outputs fewer columns than the specified output columns, Hive pads the missing columns with null values. Spark should do the same:

```
hive> SELECT TRANSFORM(a, b)
> ROW FORMAT DELIMITED
> FIELDS TERMINATED BY '|'
> LINES TERMINATED BY '\n'
> NULL DEFINED AS 'NULL'
> USING 'cat' as (a string, b string, c string, d string)
> ROW FORMAT DELIMITED
> FIELDS TERMINATED BY '|'
> LINES TERMINATED BY '\n'
> NULL DEFINED AS 'NULL'
> FROM (
> select 1 as a, 2 as b
> ) tmp ;
OK
1 2 NULL NULL
Time taken: 24.626 seconds, Fetched: 1 row(s)
```
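A sketch of the padding behavior shown above, assuming one script output line and the `FIELDS TERMINATED BY` / `NULL DEFINED AS` settings from the example. `parse` is a hypothetical helper, not Spark's script-transformation code. Note the `-1` limit passed to `String.split`, which keeps trailing empty fields instead of silently dropping them.

```scala
import java.util.regex.Pattern

// Hypothetical helper; not Spark's script-transformation code.
object NoSerdeRowParser {
  // Split one script output line, map the NULL marker to null, and pad missing
  // trailing columns with null so the row always has numOutputCols values.
  def parse(line: String, fieldDelim: String, nullDefinedAs: String, numOutputCols: Int): Seq[String] = {
    // limit = -1 keeps trailing empty strings that split would otherwise drop
    val raw = line.split(Pattern.quote(fieldDelim), -1).toSeq
    val values = raw.take(numOutputCols).map(v => if (v == nullDefinedAs) null else v)
    values ++ Seq.fill[String](numOutputCols - values.length)(null)
  }
}
```

For the Hive example above, `parse("1|2", "|", "NULL", 4)` yields `1`, `2`, and two nulls.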

### Why are the changes needed?

To keep the same behavior as Hive.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Added UT

Closes #29500 from AngersZhuuuu/SPARK-32667.

Authored-by: angerszhu <angers.zhu@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR reverts https://github.com/apache/spark/pull/27860 to downgrade Janino, as the new version has a bug.

### Why are the changes needed?

The symptom involves NaN comparison. Consider the following code:

```java
if (double_value <= 0.0) {
  ...
} else {
  ...
}
```

If `double_value` is NaN, `NaN <= 0.0` is false and we should go to the else branch. However, current Spark goes to the if branch and causes correctness issues like SPARK-32640.
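The expected semantics follow IEEE 754: every ordered comparison with NaN is `false`. The short sketch below (hypothetical names) also shows why a compiler must not rewrite `if (x <= 0.0) A else B` as `if (x > 0.0) B else A`: the two forms agree for ordinary doubles but disagree for NaN. We don't know that this is the exact transformation the Janino version performs; the sketch only illustrates this class of bug.

```scala
object NaNComparison {
  // Correct semantics: NaN <= 0.0 is false, so NaN takes the else branch.
  def branch(doubleValue: Double): String =
    if (doubleValue <= 0.0) "if" else "else"

  // An "equivalent-looking" rewrite that negates the test and swaps the branches.
  // It is wrong for NaN, because NaN > 0.0 is also false.
  def negatedBranch(doubleValue: Double): String =
    if (doubleValue > 0.0) "else" else "if"
}
```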

One way to fix it is:

```java
boolean cond = double_value <= 0.0;
if (cond) {
  ...
} else {
  ...
}
```

I'm not familiar with Janino so I don't know what's going on there.

### Does this PR introduce _any_ user-facing change?

Yes, fix correctness bugs.

### How was this patch tested?

A new test.

Closes #29495 from cloud-fan/revert.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This is a follow-up of https://github.com/apache/spark/pull/29469.

Instead of passing the physical plan directly to the fallback v1 source and skipping analysis, optimization, and planning altogether, this PR proposes to pass the optimized plan.

### Why are the changes needed?

It's a bit risky to pass the physical plan directly. When the fallback v1 source applies more operations to the input DataFrame, it will re-apply the post-planning physical rules like `CollapseCodegenStages`, `InsertAdaptiveSparkPlan`, etc., which is very tricky.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Existing test suite with some new tests.

Closes #29489 from cloud-fan/follow.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

If a previous `SQLQueryTestSuite` run was killed, the next run fails with the following error:

```
[info] org.apache.spark.sql.SQLQueryTestSuite *** ABORTED *** (17 seconds, 483 milliseconds)
[info]   org.apache.spark.sql.AnalysisException: Can not create the managed table('`testdata`'). The associated location('file:/Users/maropu/Repositories/spark/spark-master/sql/core/spark-warehouse/org.apache.spark.sql.SQLQueryTestSuite/testdata') already exists.;
[info]   at org.apache.spark.sql.catalyst.catalog.SessionCatalog.validateTableLocation(SessionCatalog.scala:355)
[info]   at org.apache.spark.sql.execution.command.CreateDataSourceTableAsSelectCommand.run(createDataSourceTables.scala:170)
[info]   at org.apache.spark.sql.execution.command.DataWritingCommandExec.sideEffectResult$lzycompute(commands.scala:108)
```

This PR adds code to delete orphan directories under the warehouse directory in `SQLQueryTestSuite` before creating test tables.
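A minimal sketch of such a cleanup step using `java.io.File`. The helper names and the idea of passing explicit table names are assumptions for illustration; the actual change may locate orphan directories differently. Before creating test tables, any leftover table directories under the warehouse directory are deleted.

```scala
import java.io.File

// Hypothetical cleanup helpers; not the code added to SQLQueryTestSuite.
object WarehouseCleanup {
  // Delete a file, or a directory and everything under it.
  def deleteRecursively(f: File): Unit = {
    if (f.isDirectory) {
      // listFiles() returns null on I/O errors, so guard with Option.
      Option(f.listFiles()).getOrElse(Array.empty[File]).foreach(deleteRecursively)
    }
    f.delete()
  }

  // Remove leftover table directories (e.g. from a killed run) before recreating the tables.
  def cleanOrphanTableDirs(warehouseDir: File, tableNames: Seq[String]): Unit =
    tableNames
      .map(name => new File(warehouseDir, name))
      .filter(_.exists())
      .foreach(deleteRecursively)
}
```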

### Why are the changes needed?

To make running the tests more convenient.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Manually checked

Closes #29488 from maropu/DeleteDirs.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

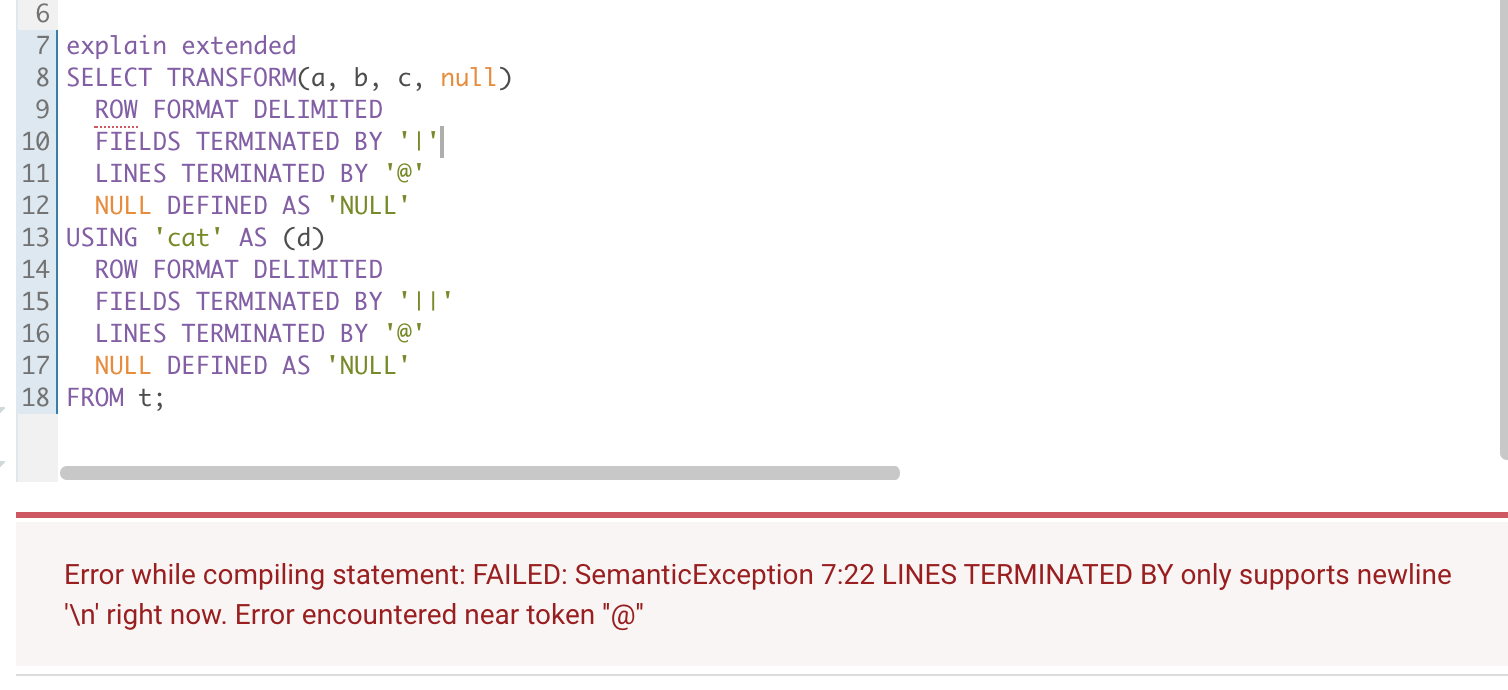

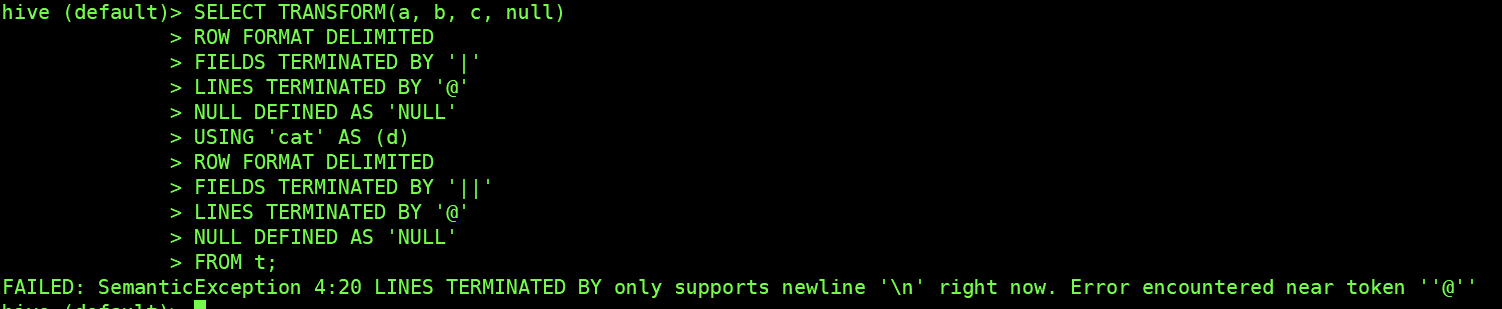

### What changes were proposed in this pull request?

Script Transform no-serde (`ROW FORMAT DELIMITED`) mode only supports `\n` for `LINES TERMINATED BY`.

Tested in Hive 1.1 and Hive 2.3.7.

### Why are the changes needed?

To strictly limit usage and ensure data correctness.

### Does this PR introduce _any_ user-facing change?

Yes. Using Script Transform no-serde (`ROW FORMAT DELIMITED`) mode with a `LINES TERMINATED BY` value other than `'\n'` now throws an error.
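A sketch of the validation rule, assuming the parsed `LINES TERMINATED BY` value is available as an `Option[String]`. The object, method, and error text are hypothetical, and Spark's actual error message may differ: an absent clause or `\n` is accepted, and anything else is rejected.

```scala
// Hypothetical validation helper; not Spark's parser code or error message.
object LinesTerminatedByCheck {
  // Returns the line delimiter to use, or an error message for unsupported values.
  def validate(linesTerminatedBy: Option[String]): Either[String, String] =
    linesTerminatedBy match {
      case None | Some("\n") => Right("\n")
      case Some(other) =>
        Left(s"LINES TERMINATED BY only supports '\\n' in no-serde mode, got: '$other'")
    }
}
```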

### How was this patch tested?

Added UT

Closes #29438 from AngersZhuuuu/SPARK-32607.

Authored-by: angerszhu <angers.zhu@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Many operations on CompactibleFileStreamLog read a metadata log file and materialize all of its entries in memory. Due to the nature of compaction, CompactibleFileStreamLog may have a huge compact log file containing many entries, and since entries only accumulate, the amount of memory needed to materialize them keeps growing. This puts pressure on GC.

This patch proposes to streamline the logic in the file stream source and sink wherever possible to avoid the memory issue. To make this possible, we have to break the existing behavior of excluding entries: currently the `compactLogs` method is called with all entries, which forces us to materialize all of them in memory. This should have no effect on end users, because only the file stream sink has a condition for excluding entries, and that condition has never been true (DELETE_ACTION has never been set).
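The difference between materializing and streaming can be sketched as follows (a hypothetical helper, not `CompactibleFileStreamLog`'s API). Each batch's entries are read lazily through an iterator and handed to the writer one at a time, so memory use does not grow with the size of the compact file. The trade-off is that not all entries are available at once, which is roughly why the entry-exclusion hook described above has to go.

```scala
// Hypothetical helper; not CompactibleFileStreamLog's API.
object StreamingCompaction {
  // Each batch is opened lazily and read as an iterator; entries are written as they
  // are read instead of first being collected into an in-memory collection.
  def compact[A](batches: Seq[() => Iterator[A]])(write: A => Unit): Long = {
    var written = 0L
    batches.iterator.flatMap(open => open()).foreach { entry =>
      write(entry)
      written += 1
    }
    written
  }
}
```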

Based on this observation, this patch also slightly changes an existing unit test that simulates file "A" being added and another batch marking file "A" as deleted. This situation simply doesn't work with the change, but as mentioned earlier, it has never been used. (I'm not sure the unit test reflects an actual run; I guess not.)

### Why are the changes needed?

The memory issue (OOME) has been reported both in a JIRA issue and on the user mailing list.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

* Existing UTs

* Manual test done

The manual test uses a simple app that continuously writes the file stream sink metadata log.

bea7680e4c

The test is configured so that each batch metadata log file is 1.9M (10,000 entries), while other Spark configurations use their defaults (compact interval = 10). The app runs as the driver, with the driver heap memory set to 3g.

> before the patch

<img width="1094" alt="Screen Shot 2020-06-23 at 3 37 44 PM" src="https://user-images.githubusercontent.com/1317309/85375841-d94f3480-b571-11ea-817b-c6b48b34888a.png">

It ran for only 40 minutes, with the latest compact batch file reaching 1.3G. The process struggled with GC and eventually threw an OOME.

> after the patch

<img width="1094" alt="Screen Shot 2020-06-23 at 3 53 29 PM" src="https://user-images.githubusercontent.com/1317309/85375901-eff58b80-b571-11ea-837e-30d107f677f9.png">

It sustained a 2-hour run (manually stopped, as it was expected to keep running), with the latest compact batch file reaching 2.2G. The actual memory usage never exceeded 1.2G and was cleaned up quickly without notable GC activity.

Closes #28904 from HeartSaVioR/SPARK-30462.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Address the [review comment](https://github.com/apache/spark/pull/28840#discussion_r471172006).

### Why are the changes needed?

To make the code more robust.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Unit tests.

Closes #29453 from ulysses-you/SPARK-31999-FOLLOWUP.

Authored-by: ulysses <youxiduo@weidian.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR introduces a logical node, `AlreadyPlanned`, along with a related physical plan node and preparation rule.

With the DataSourceV2 write operations, we have a way to fall back to the V1 writer APIs using InsertableRelation. The gross part is that we're in physical land, but InsertableRelation takes a logical plan, so we have to pass the logical plans to these physical nodes and then potentially go through re-planning. This re-planning can cause issues for an already optimized plan.

A useful primitive could be specifying that a plan is ready for execution through a logical node AlreadyPlanned. This would wrap a physical plan, and then we can go straight to execution.
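A toy model of the idea, using hypothetical plan classes rather than Spark's `LogicalPlan`/`SparkPlan` or the actual `AlreadyPlanned` node: the planner pattern-matches the wrapper and returns the wrapped physical plan as-is, so that plan is never re-analyzed, re-optimized, or re-planned.

```scala
// Hypothetical, tiny plan ADTs; not Spark's classes.
object AlreadyPlannedSketch {
  sealed trait LogicalPlan
  final case class Scan(table: String) extends LogicalPlan
  final case class Filter(cond: String, child: LogicalPlan) extends LogicalPlan

  sealed trait PhysicalPlan
  final case class ScanExec(table: String) extends PhysicalPlan
  final case class FilterExec(cond: String, child: PhysicalPlan) extends PhysicalPlan

  // Logical wrapper around a physical plan that must not be planned again.
  final case class AlreadyPlanned(physical: PhysicalPlan) extends LogicalPlan

  def plan(p: LogicalPlan): PhysicalPlan = p match {
    case AlreadyPlanned(physical) => physical // go straight to execution
    case Scan(t) => ScanExec(t)
    case Filter(c, child) => FilterExec(c, plan(child))
  }
}
```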

### Why are the changes needed?

To avoid having a physical plan that is disconnected from the physical plan being executed in V1WriteFallback execution. When a physical plan node executes a logical plan, the inner query is not connected to the running physical plan: the physical plan that actually runs is not visible in the Spark UI, its metrics are not exposed, and in some cases the EXPLAIN output doesn't show it.

### Does this PR introduce _any_ user-facing change?

Nope

### How was this patch tested?

V1FallbackWriterSuite tests that writes still work.

Closes #29469 from brkyvz/alreadyAnalyzed2.

Lead-authored-by: Burak Yavuz <brkyvz@gmail.com>

Co-authored-by: Burak Yavuz <burak@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

When CSV/JSON data sources infer a schema (e.g., `def inferSchema(files: Seq[FileStatus])`), they use `files` along with the original options. `files` in `inferSchema` may already have been derived from the "path" option, if it was present, so this can cause issues (e.g., reading more data, listing the path again) since the "path" option is **added** to `files`.
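One way to avoid the double listing, sketched under the assumption that the options are a plain `Map[String, String]`. `optionsForInference` is a hypothetical helper, and this is not necessarily how the fix is implemented: drop the `path` key, matched case-insensitively, from the options passed to schema inference, since `files` has already been derived from it.

```scala
// Hypothetical helper; not necessarily how Spark implements the fix.
object InferSchemaOptions {
  // Remove the "path" option (case-insensitively) before schema inference, because the
  // input files have already been resolved from it; keeping it would add the root again.
  def optionsForInference(options: Map[String, String]): Map[String, String] =
    options.filter { case (key, _) => !key.equalsIgnoreCase("path") }
}
```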

### Why are the changes needed?

The current behavior can cause the following issue:

```scala
import org.apache.hadoop.fs.{Path, PathFilter}

class TestFileFilter extends PathFilter {
  override def accept(path: Path): Boolean = path.getParent.getName != "p=2"
}

val path = "/tmp"
val df = spark.range(2)
df.write.json(path + "/p=1")
df.write.json(path + "/p=2")

val extraOptions = Map(
  "mapred.input.pathFilter.class" -> classOf[TestFileFilter].getName,
  "mapreduce.input.pathFilter.class" -> classOf[TestFileFilter].getName
)

// This works fine.
assert(spark.read.options(extraOptions).json(path).count == 2)

// The following with the "path" option fails with:
//   assertion failed: Conflicting directory structures detected. Suspicious paths
//     file:/tmp
//     file:/tmp/p=1
assert(spark.read.options(extraOptions).format("json").option("path", path).load.count() == 2)
```

### Does this PR introduce _any_ user-facing change?

Yes. The above failure no longer happens, and you get a consistent experience whether you use `spark.read.csv(path)` or `spark.read.format("csv").option("path", path).load`.

### How was this patch tested?

Updated existing tests.

Closes #29437 from imback82/path_bug.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

For the following SQL:

```
SELECT TRANSFORM(a, b, c)
ROW FORMAT DELIMITED
FIELDS TERMINATED BY ','
LINES TERMINATED BY '\n'
NULL DEFINED AS 'null'
USING 'cat' AS (a, b, c)
ROW FORMAT DELIMITED
FIELDS TERMINATED BY ','
LINES TERMINATED BY '\n'
NULL DEFINED AS 'NULL'
FROM testData
```

The correct value of `TOK_TABLEROWFORMATFIELD` should be `,`, but it is actually `','`.

The correct value of `TOK_TABLEROWFORMATLINES` should be `\n`, but it is actually `'\n'`.
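The expected values correspond to the SQL string literals with their quotes removed and escapes resolved. Here is a simplified sketch. The `unquote` helper is hypothetical and handles only `\n` and `\t`; a real parser must handle every escape sequence.

```scala
// Hypothetical, simplified helper; a real SQL parser handles all escape sequences.
object UnquoteSqlString {
  // Turn raw token text such as ',' or '\n' (including the quotes) into the string value.
  def unquote(raw: String): String = {
    val quoted = raw.length >= 2 &&
      (raw.head == '\'' || raw.head == '"') && raw.last == raw.head
    val body = if (quoted) raw.substring(1, raw.length - 1) else raw
    // Simplified escape handling: only \n and \t.
    body.replace("\\n", "\n").replace("\\t", "\t")
  }
}
```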

### Why are the changes needed?

To fix the format of these string values.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Added UT

Closes #29428 from AngersZhuuuu/SPARK-32608.

Authored-by: angerszhu <angers.zhu@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Change the `map` calls in two constructors of `SpecificInternalRow` to `while` loops.

### Why are the changes needed?

This was originally noticed in https://github.com/apache/spark/pull/29353 and https://github.com/apache/spark/pull/29354, and it affects the performance of reading ORC and Avro files. We ran AvroReadBenchmarks with the new nested-struct and array-of-struct cases from https://github.com/apache/spark/pull/29352. We haven't run benchmarks for ORC but can do so if needed.

**Before:**

```
Nested Struct Scan:                       Best Time(ms)   Avg Time(ms)   Stdev(ms)    Rate(M/s)   Per Row(ns)   Relative
Nested Struct                                     74674          75319         912          0.0      142429.1       1.0X

Array of Struct Scan:                     Best Time(ms)   Avg Time(ms)   Stdev(ms)    Rate(M/s)   Per Row(ns)   Relative
Array of Structs                                  34193          34339         206          0.0       65217.9       1.0X
```

**After:**

```
Nested Struct Scan:                       Best Time(ms)   Avg Time(ms)   Stdev(ms)    Rate(M/s)   Per Row(ns)   Relative
Nested Struct                                     48451          48619         237          0.0       92413.2       1.0X

Array of Struct Scan:                     Best Time(ms)   Avg Time(ms)   Stdev(ms)    Rate(M/s)   Per Row(ns)   Relative
Array of Structs                                  18518          18683         234          0.0       35319.6       1.0X
```

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Ran AvroReadBenchmarks with the new cases of nested and array'd structs in https://github.com/apache/spark/pull/29352.

Closes #29366 from msamirkhan/spark-32550.

Lead-authored-by: Samir Khan <muhammad.samir.khan@gmail.com>

Co-authored-by: skhan04 <samirkhan@verizonmedia.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Update `ObjectSerializerPruning.alignNullTypeInIf`, to consider the isNull check generated in `RowEncoder`, which is `Invoke(inputObject, "isNullAt", BooleanType, Literal(index) :: Nil)`.
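A toy sketch of the matching change, assuming a simplified expression tree whose case classes only loosely mirror Catalyst's (`NullCheckSketch` and everything in it is hypothetical): an extractor recognizes both `IsNull(...)` and a RowEncoder-style `isNullAt` call, and the rule then re-types the `null` branch of the `If` so that both branches agree.

```scala
object NullCheckSketch {
  // Toy expression tree; data types are plain strings for brevity.
  sealed trait Expr { def dataType: String }
  final case class Ref(name: String, dataType: String) extends Expr
  final case class Lit(value: Any, dataType: String) extends Expr
  final case class IsNull(child: Expr) extends Expr { val dataType = "boolean" }
  final case class Invoke(target: Expr, functionName: String, dataType: String, args: Seq[Expr])
    extends Expr
  final case class If(cond: Expr, trueValue: Expr, falseValue: Expr) extends Expr {
    def dataType: String = falseValue.dataType
  }

  // Matches both null-check shapes: IsNull(...) and an `isNullAt` invocation.
  object IsNullCondition {
    def unapply(e: Expr): Boolean = e match {
      case _: IsNull => true
      case Invoke(_, "isNullAt", _, _) => true
      case _ => false
    }
  }

  // If the branch taken on null is a typed `null` literal whose type differs from
  // the other branch, re-type the literal so both branches have the same type.
  def alignNullTypeInIf(e: Expr): Expr = e match {
    case If(c @ IsNullCondition(), Lit(null, dt), other) if dt != other.dataType =>
      If(c, Lit(null, other.dataType), other)
    case _ => e
  }
}
```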

### Why are the changes needed?

Without this fix, the query fails due to a type mismatch in `If`.

### Does this PR introduce _any_ user-facing change?

Yes, queries that previously failed can run after this fix.

### How was this patch tested?

new tests

Closes #29467 from cloud-fan/bug.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

While working on SPARK-25557, we found that ORC predicate pushdown has no case-sensitivity test. This PR proposes to add a case-sensitivity test for ORC predicate pushdown.

### Why are the changes needed?

Increasing test coverage for ORC predicate pushdown.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Pass Jenkins tests.

Closes #29427 from viirya/SPARK-25557-followup3.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

We found `java.util.NoSuchElementException` in the UT log of `AdaptiveQueryExecSuite`. During AQE, when a sub-plan changes, `LiveExecutionData` uses the new sub-plan's SQLMetrics to override the old ones. But in the final `aggregateMetrics`, since the plan was updated, the old metrics throw `NoSuchElementException` when they are matched against the new `metricTypes`. To sum up, we need to filter out those outdated metrics to avoid throwing `java.util.NoSuchElementException`, which causes the Spark UI SQL tab to render abnormally.
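A minimal sketch of the filtering step, assuming simplified shapes (accumulator id to collected values, and accumulator id to metric type for the current plan); `MetricsFilterSketch.aggregate` is hypothetical and not the listener's actual code. The key point is to skip ids that are absent from the current `metricTypes` instead of looking them up with `Map.apply`, which throws `java.util.NoSuchElementException` for a missing key.

```scala
object MetricsFilterSketch {
  // collected: accumulator id -> values reported for it (possibly from a replaced sub-plan).
  // metricTypes: accumulator id -> metric type, for the metrics of the current plan only.
  def aggregate(collected: Map[Long, Seq[Long]], metricTypes: Map[Long, String]): Map[Long, String] =
    collected.collect {
      // Skip outdated ids instead of calling metricTypes(id), which would throw
      // java.util.NoSuchElementException for a key that is not in the map.
      case (id, values) if metricTypes.contains(id) =>
        id -> s"${metricTypes(id)}: total ${values.sum}"
    }
}
```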

### Why are the changes needed?

SQL metrics are incorrect in some AQE cases, and they break the Spark UI SQL tab when a NAAJ (null-aware anti join) is rewritten to a `LocalRelation`.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

* Added a case in `SQLAppStatusListenerSuite`.

* Ran `AdaptiveQueryExecSuite` and confirmed that no `java.util.NoSuchElementException` appears.

* Validated on the Spark web UI.

Closes #29431 from leanken/leanken-SPARK-32615.

Authored-by: xuewei.linxuewei <xuewei.linxuewei@alibaba-inc.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR proposes to detect possible regressions inside `SparkPlan`. To achieve this goal, this PR adds a base test suite called `PlanStabilitySuite`. The basic workflow of this test suite is similar to `SQLQueryTestSuite`. It also uses `SPARK_GENERATE_GOLDEN_FILES` to decide whether to regenerate the golden files or to compare against the golden result for each input query. The difference is that `PlanStabilitySuite` uses the serialized explain result (in .txt format) of the `SparkPlan` as the output of a query, instead of the data result.

Since the `SparkPlan` is non-deterministic for various reasons (e.g., expression IDs and expression order can change), we reduce the plan to a simplified version that contains only node names and references. In the simplified plan, we only identify important nodes, e.g., `Exchange` and `SubqueryExec`.
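One way to make explain output comparable across runs is to renumber expression IDs in order of first appearance. The sketch below is a hypothetical illustration of that idea (`PlanNormalizeSketch.normalize` is not part of `PlanStabilitySuite`), and the suite's actual simplification may differ.

```scala
import scala.collection.mutable

object PlanNormalizeSketch {
  // Expression IDs in explain output look like `k1#12` or `id#0L`.
  private val ExprId = "#\\d+".r

  // Renumber expression IDs in order of first appearance, so two runs that differ only
  // in the concrete IDs produce the same text while distinct references stay distinct.
  def normalize(explain: String): String = {
    val seen = mutable.HashMap.empty[String, Int]
    ExprId.replaceAllIn(explain, m => "#" + seen.getOrElseUpdate(m.matched, seen.size + 1))
  }
}
```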

We reuse TPC-DS queries (v1.4, v2.7, modified) to test plan stability. Currently, each TPC-DS query can have only one corresponding simplified golden plan.

This PR also does some refactoring: it extracts `TPCDSBase` from `TPCDSQuerySuite` so that `PlanStabilitySuite` can use the TPC-DS queries as well.

### Why are the changes needed?

Spark keeps getting more complex, and any change might unintentionally cause a regression. Spark already has some benchmarks to catch performance regressions, but it has no way to detect regressions inside `SparkPlan`. It would be good to detect possible regressions early, during the compile phase, before the runtime phase.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added `PlanStabilitySuite` and its subclasses.

Closes #29270 from Ngone51/plan-stable.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add support for full outer join inside shuffled hash join. Currently, if the query is a full outer join, we only use sort merge join as the physical operator. However, sort merge join can be CPU and IO intensive when the input table is large. Shuffled hash join, on the other hand, saves the sort's CPU and IO compared to sort merge join, especially when the table is large.

This PR implements the full outer join as follows:

* Process rows from the stream side by looking up the hash relation, and mark the matched rows from the build side:
  * for joining with a unique key, a `BitSet` is used to record matched rows from the build side (the `key index` represents each row);
  * for joining with a non-unique key, a `HashSet[Long]` is used to record matched rows from the build side (the `key index` + `value index` represents each row).

  `key index` is defined as the index into the key addressing array `longArray` in `BytesToBytesMap`.

  `value index` is defined as the iterator index of values for the same key.

* Process rows from the build side by iterating the hash relation, and filter out rows from the build side that were already looked up (done in `ShuffledHashJoinExec.fullOuterJoin`).
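The unique-key case above can be sketched on plain Scala collections, assuming in-memory `(key, payload)` rows and unique build-side keys. `FullOuterHashJoinSketch` is hypothetical: a `Map` from key to row position stands in for the hash relation, and that position plays the role of the `key index`. The non-unique case would instead record `key index` + `value index` pairs in a `HashSet[Long]`, which is not shown here.

```scala
import scala.collection.mutable

object FullOuterHashJoinSketch {
  type Joined = (Option[String], Option[String])

  // Toy full outer join of (key, payload) rows, assuming build-side keys are unique.
  def fullOuterUnique(stream: Seq[(Int, String)], build: Seq[(Int, String)]): Seq[Joined] = {
    val buildRows = build.toIndexedSeq
    // Stand-in for the hash relation: key -> position of its row ("key index").
    val keyIndex: Map[Int, Int] = buildRows.map(_._1).zipWithIndex.toMap
    val matched = mutable.BitSet.empty
    val out = mutable.ArrayBuffer.empty[Joined]

    // Pass over the stream side: look up each key, emit the joined row, mark the match.
    for ((k, payload) <- stream) keyIndex.get(k) match {
      case Some(i) =>
        matched += i
        out += ((Some(payload), Some(buildRows(i)._2)))
      case None =>
        out += ((Some(payload), None))
    }

    // Pass over the build side: emit build rows that were never matched.
    for (i <- buildRows.indices if !matched(i)) out += ((None, Some(buildRows(i)._2)))
    out.toSeq
  }
}
```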

For context, this PR was originally implemented as follows (up to commit e3322766d4):

1. Construct a hash relation from the build side, with an extra boolean value at the end of each row to track look-up information (done in `ShuffledHashJoinExec.buildHashedRelation` and `UnsafeHashedRelation.apply`).

2. Process rows from the stream side by looking up the hash relation, and mark the matched rows from the build side as looked up (done in `ShuffledHashJoinExec.fullOuterJoin`).

3. Process rows from the build side by iterating the hash relation, and filter out rows from the build side that were already looked up (done in `ShuffledHashJoinExec.fullOuterJoin`).

See discussion of pros and cons between these two approaches [here](https://github.com/apache/spark/pull/29342#issuecomment-672275450), [here](https://github.com/apache/spark/pull/29342#issuecomment-672288194) and [here](https://github.com/apache/spark/pull/29342#issuecomment-672640531).

TODO: codegen for full outer shuffled hash join can be implemented in another followup PR.

### Why are the changes needed?

As implemented in this PR, full outer shuffled hash join has the overhead of iterating the build side twice (once to build the hash map, and once to output non-matching rows) and iterating the stream side once. However, full outer sort merge join needs to iterate both sides twice, and sorting a large table can be more CPU and IO intensive. So full outer shuffled hash join can be more efficient than sort merge join when the stream side is much larger than the build side.

For the example query below, full outer SHJ saved 30% wall clock time compared to full outer SMJ.

```scala
def shuffleHashJoin(): Unit = {
  val N: Long = 4 << 22
  withSQLConf(
    SQLConf.SHUFFLE_PARTITIONS.key -> "2",
    SQLConf.AUTO_BROADCASTJOIN_THRESHOLD.key -> "20000000") {
    codegenBenchmark("shuffle hash join", N) {
      val df1 = spark.range(N).selectExpr(s"cast(id as string) as k1")
      val df2 = spark.range(N / 10).selectExpr(s"cast(id * 10 as string) as k2")
      val df = df1.join(df2, col("k1") === col("k2"), "full_outer")
      df.noop()
    }
  }
}
```

```
Running benchmark: shuffle hash join
Running case: shuffle hash join off
Stopped after 2 iterations, 16602 ms
Running case: shuffle hash join on
Stopped after 5 iterations, 31911 ms

Java HotSpot(TM) 64-Bit Server VM 1.8.0_181-b13 on Mac OS X 10.15.4
Intel(R) Core(TM) i9-9980HK CPU 2.40GHz
shuffle hash join:      Best Time(ms)   Avg Time(ms)   Stdev(ms)   Rate(M/s)   Per Row(ns)   Relative
------------------------------------------------------------------------------------------------------------------------
shuffle hash join off            7900           8301         567         2.1         470.9       1.0X
shuffle hash join on             6250           6382          95         2.7         372.5       1.3X
```

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added unit test in `JoinSuite.scala`, `AbstractBytesToBytesMapSuite.java` and `HashedRelationSuite.scala`.

Closes #29342 from c21/full-outer-shj.

Authored-by: Cheng Su <chengsu@fb.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This PR logs the error message when falling back to interpreter mode.
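A minimal sketch of the fallback pattern, assuming a hypothetical `createObject` that takes the codegen and interpreted constructors as functions and a hypothetical in-memory logger. The point is that the caught exception itself is passed to the log call, so errors raised outside `CodeGenerator` are no longer lost.

```scala
import scala.collection.mutable
import scala.util.control.NonFatal

object CodegenFallbackSketch {
  // Hypothetical logger stand-in that records messages so they can be inspected.
  val warnings = mutable.ArrayBuffer.empty[String]
  def logWarning(msg: String, e: Throwable): Unit = warnings += s"$msg: $e"

  // Try the code-generated implementation first; on a non-fatal error, log the error
  // itself (not just a generic message) and fall back to the interpreted implementation.
  def createObject[T](codegen: () => T, interpreted: () => T): T =
    try codegen()
    catch {
      case NonFatal(e) =>
        logWarning("Expr codegen error and falling back to interpreter mode", e)
        interpreted()
    }
}
```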

### Why are the changes needed?

Not all error messages come from `CodeGenerator`, for example:

```
21:48:44.612 WARN org.apache.spark.sql.catalyst.expressions.Predicate: Expr codegen error and falling back to interpreter mode
java.lang.IllegalArgumentException: Can not interpolate org.apache.spark.sql.types.Decimal into code block.
	at org.apache.spark.sql.catalyst.expressions.codegen.Block$BlockHelper$.$anonfun$code$1(javaCode.scala:240)
	at org.apache.spark.sql.catalyst.expressions.codegen.Block$BlockHelper$.$anonfun$code$1$adapted(javaCode.scala:236)
	at scala.collection.IndexedSeqOptimized.foreach(IndexedSeqOptimized.scala:36)
	at scala.collection.IndexedSeqOptimized.foreach$(IndexedSeqOptimized.scala:33)
```

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Manual test.

Closes #29440 from wangyum/SPARK-32625.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Use the `LinkedHashMap` instead of `immutable.Map` to hold the `Window` expressions in `ExtractWindowExpressions.addWindow`.

### Why are the changes needed?

This is a bug fix for https://github.com/apache/spark/pull/29270. In that PR, the plans generated on Jenkins (especially for queries q47, q49, and q57) never matched the golden plans generated on my laptop.

It happens because `ExtractWindowExpressions.addWindow` currently uses an `immutable.Map` to hold the `Window` expressions keyed by `(spec.partitionSpec, spec.orderSpec, WindowFunctionType.functionType(expr))`, and converts the map to a `Seq` at the end. The `Seq` is then used to add Window operators on top of the child plan. However, for the same query, the order of the Window expressions inside the `Seq` can be nondeterministic when the expression IDs change (which can affect the key). As a result, the same query could get different plans because of the nondeterministic order of Window operators.

Therefore, we use `LinkedHashMap`, which records the insertion order of entries, to make the order in which Window operators are added deterministic.
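A small sketch of why `LinkedHashMap` helps, using a hypothetical `WindowExpr` whose string `spec` stands in for the real grouping key. Grouping into a `LinkedHashMap` keeps the specs in first-seen order, whereas an immutable `Map` with more than four entries iterates in an order derived from the keys' hashes rather than from insertion.

```scala
import scala.collection.mutable

object WindowGroupingSketch {
  // Hypothetical window expression: `spec` stands in for the (partitionSpec, orderSpec, type) key.
  final case class WindowExpr(spec: String, fn: String)

  // Group expressions by spec. LinkedHashMap iterates in insertion order, so the order of
  // the groups (and of any Window operators built from them) is deterministic.
  def groupInOrder(exprs: Seq[WindowExpr]): Seq[(String, Seq[String])] = {
    val groups = mutable.LinkedHashMap.empty[String, mutable.ArrayBuffer[String]]
    exprs.foreach { e =>
      groups.getOrElseUpdate(e.spec, mutable.ArrayBuffer.empty[String]) += e.fn
    }
    groups.toSeq.map { case (spec, fns) => (spec, fns.toSeq) }
  }
}
```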

### Does this PR introduce _any_ user-facing change?

Possibly yes: users now always see the same plan for the same query with multiple Window operators.

### How was this patch tested?

It's really hard to make a reproducible demo. I tested manually with https://github.com/apache/spark/pull/29270, and it looks good.

Closes #29432 from Ngone51/fix-addWindow.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the docs concerning the approx_count_distinct I have changed the description of the rsd parameter from **_maximum estimation error allowed_** to _**maximum relative standard deviation allowed**_

### Why are the changes needed?

Maximum estimation error allowed can be misleading. You can set the target relative standard deviation, which affects the estimation error, but on given runs the estimation error can still be above the rsd parameter.
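To make the distinction concrete: in Spark's HyperLogLog++ implementation, `rsd` only chooses the number of registers, which in turn fixes the expected relative standard deviation. The sketch below reproduces that sizing rule as we understand it from `HyperLogLogPlusPlusHelper` (precision p = ceil(2 log2(1.106 / rsd)), m = 2^p registers); `RsdSketch` itself is hypothetical. Individual estimates are random, so a single run can still miss by more than `rsd`.

```scala
object RsdSketch {
  // Index bits p for a target relative standard deviation:
  // p = ceil(2 * log2(1.106 / rsd)).
  def precision(rsd: Double): Int = math.ceil(2.0 * math.log(1.106 / rsd) / math.log(2.0)).toInt

  // Number of registers m = 2^p.
  def registers(rsd: Double): Int = 1 << precision(rsd)

  // Expected relative standard deviation for that many registers: 1.106 / sqrt(m).
  // It is at most `rsd` by construction, but it describes the spread of the estimates,
  // not a bound on the error of any single estimate.
  def expectedRsd(rsd: Double): Double = 1.106 / math.sqrt(registers(rsd).toDouble)
}
```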

### Does this PR introduce _any_ user-facing change?

This PR should make it easier for users reading the docs to understand that the rsd parameter in approx_count_distinct doesn't cap the estimation error but only sets the target deviation.

### How was this patch tested?

No tests, as no code changes were made.

Closes #29424 from Comonut/fix-approx_count_distinct-rsd-param-description.

Authored-by: alexander-daskalov <alexander.daskalov@adevinta.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

Remove `fullOutput` from `RowDataSourceScanExec`

### Why are the changes needed?

`RowDataSourceScanExec` requires the full output instead of the scan output after column pruning. However, in v2 code path, we don't have the full output anymore so we just pass the pruned output. `RowDataSourceScanExec.fullOutput` is actually meaningless so we should remove it.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

existing tests

Closes #29415 from huaxingao/rm_full_output.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Following the discussion in this [comment](https://github.com/apache/spark/pull/29244#issuecomment-671746329).

Add a `HiveVoidType` class in `HiveClientImpl` so that we can replace `NullType` with `HiveVoidType` before calling the Hive client.
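The replacement can be pictured as a recursive rewrite over nested types. The sketch below uses a toy type hierarchy (`NullT`, `VoidT`, and the other names are hypothetical stand-ins for `NullType`, `HiveVoidType`, and Spark's complex types) and is not the actual `HiveClientImpl` code.

```scala
object VoidTypeSketch {
  // Toy type hierarchy; VoidT plays the role of a Hive-compatible void type.
  sealed trait DType
  case object NullT extends DType
  case object IntT extends DType
  case object VoidT extends DType
  final case class ArrayT(element: DType) extends DType
  final case class MapT(key: DType, value: DType) extends DType
  final case class StructT(fields: Seq[(String, DType)]) extends DType

  // Replace every NullT, including ones nested in complex types, with VoidT.
  def replaceNull(t: DType): DType = t match {
    case NullT => VoidT
    case ArrayT(e) => ArrayT(replaceNull(e))
    case MapT(k, v) => MapT(replaceNull(k), replaceNull(v))
    case StructT(fs) => StructT(fs.map { case (name, ft) => (name, replaceNull(ft)) })
    case other => other
  }
}
```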

### Why are the changes needed?

Better compatibility with Hive.

More details in [#29244](https://github.com/apache/spark/pull/29244).

### Does this PR introduce _any_ user-facing change?

Yes, users can create a view with a null-type column in Hive.

### How was this patch tested?

New test.

Closes #29423 from ulysses-you/add-HiveVoidType.

Authored-by: ulysses <youxiduo@weidian.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The purpose of this PR is to partially resolve [SPARK-32526](https://issues.apache.org/jira/browse/SPARK-32526). A total of 88 failed and 2 aborted test cases were fixed; the related suites are as follows:

- `DataSourceV2AnalysisBaseSuite` related test cases (71 FAILED -> Pass)

- `TreeNodeSuite` (1 FAILED -> Pass)

- `MetadataSuite` (1 FAILED -> Pass)

- `InferFiltersFromConstraintsSuite` (3 FAILED -> Pass)

- `StringExpressionsSuite` (1 FAILED -> Pass)

- `JacksonParserSuite` (1 FAILED -> Pass)

- `HigherOrderFunctionsSuite` (1 FAILED -> Pass)

- `ExpressionParserSuite` (1 FAILED -> Pass)

- `CollectionExpressionsSuite` (6 FAILED -> Pass)

- `SchemaUtilsSuite` (2 FAILED -> Pass)

- `ExpressionSetSuite` (ABORTED -> Pass)

- `ArrayDataIndexedSeqSuite` (ABORTED -> Pass)

The main changes in this PR are as follows:

- `Optimizer` and `Analyzer` are changed so that they compile: `ArrayBuffer` is not a `Seq` in Scala 2.13, so `toSeq` is called explicitly, which stays compatible with Scala 2.12.

- The `m.mapValues().view.force` pattern returns a `Map` in Scala 2.12 but an `IndexedSeq` in Scala 2.13, so `toMap` is called explicitly for compatibility with Scala 2.12. `TreeNode` is changed to fix the `DataSourceV2AnalysisBaseSuite`-related test cases and the failed `TreeNodeSuite` case.

- Call `toMap` in the `case map` branch of `Metadata#hash`, because `map.mapValues` returns a `Map` in Scala 2.12 but a `MapView` in Scala 2.13.

- Implement the `concat` method of the Scala 2.13 version of `ExpressionSet`, following the Scala 2.12 `ExpressionSet`, so that `++` conforms to `ExpressionSet` semantics.

- `GenericArrayData` does not accept `ArrayBuffer` input, so call `toSeq` when constructing `GenericArrayData` from an `ArrayBuffer`, for Scala version compatibility.

- Call `toSeq` in `RandomDataGenerator#randomRow` to ensure that the contents of `fields` are a `Seq`, not an `ArrayBuffer`.

- Call `toSeq` so that `JacksonParser#parse` still returns a `Seq`, because the check in `JacksonParserSuite#"skipping rows using pushdown filters"` depends on the `Seq` type.

- Call `toSeq` in `AstBuilder#visitFunctionCall`; otherwise `ctx.argument.asScala.map(expression)` is a `Buffer` in Scala 2.13.

- Add a `LongType` match to `ArraySetLike.nullValueHolder`.

- Add a `sorted` to ensure that the `duplicateColumns` string in the `SchemaUtils.checkColumnNameDuplication` error message has a deterministic order.
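A few of the cross-version patterns above, in a form that should compile on both Scala 2.12 and 2.13 (on 2.13, `mapValues` only emits a deprecation warning). `SeqCompatSketch` and its methods are illustrative, not code from this PR.

```scala
import scala.collection.mutable.ArrayBuffer

object SeqCompatSketch {
  def total(xs: Seq[Int]): Int = xs.sum

  def collect(): Seq[Int] = {
    val buf = ArrayBuffer.empty[Int]
    buf += 1
    buf += 2
    buf += 3
    // In Scala 2.13 `Seq` is `immutable.Seq`, so returning `buf` itself would not compile;
    // `toSeq` produces an immutable copy there, while in 2.12 the buffer already is a `Seq`.
    buf.toSeq
  }

  // `mapValues` returns a `MapView` in 2.13 (a lazy `Map` view in 2.12);
  // `toMap` materializes a strict `Map` in both versions.
  def doubled(m: Map[String, Int]): Map[String, Int] = m.mapValues(_ * 2).toMap

  // Sorting makes a message that lists duplicates independent of hash-map ordering.
  def duplicates(names: Seq[String]): String =
    names.groupBy(identity).collect { case (n, occ) if occ.size > 1 => n }.toSeq.sorted.mkString(", ")
}
```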

### Why are the changes needed?

We need to support a Scala 2.13 build.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

- Scala 2.12: Pass the Jenkins or GitHub Action

- Scala 2.13: Do the following:

```shell
dev/change-scala-version.sh 2.13
mvn clean install -DskipTests -pl sql/catalyst -Pscala-2.13 -am
mvn test -pl sql/catalyst -Pscala-2.13
```

**Before**

```
Tests: succeeded 3853, failed 103, canceled 0, ignored 6, pending 0
*** 3 SUITES ABORTED ***
*** 103 TESTS FAILED ***
```

**After**

```
Tests: succeeded 4035, failed 17, canceled 0, ignored 6, pending 0
*** 1 SUITE ABORTED ***
*** 15 TESTS FAILED ***
```

Closes #29370 from LuciferYang/fix-DataSourceV2AnalysisBaseSuite.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Added a new `dropFields` method to the `Column` class.

This method should allow users to drop a `StructField` in a `StructType` column (with similar semantics to the `drop` method on `Dataset`).

### Why are the changes needed?

Often Spark users have to work with deeply nested data e.g. to fix a data quality issue with an existing `StructField`. To do this with the existing Spark APIs, users have to rebuild the entire struct column.

For example, let's say you have the following deeply nested data structure which has a data quality issue (`5` is missing):

```scala
import org.apache.spark.sql._
import org.apache.spark.sql.functions._
import org.apache.spark.sql.types._

val data = spark.createDataFrame(sc.parallelize(
  Seq(Row(Row(Row(1, 2, 3), Row(Row(4, null, 6), Row(7, 8, 9), Row(10, 11, 12)), Row(13, 14, 15))))),
  StructType(Seq(
    StructField("a", StructType(Seq(
      StructField("a", StructType(Seq(
        StructField("a", IntegerType),
        StructField("b", IntegerType),
        StructField("c", IntegerType)))),
      StructField("b", StructType(Seq(
        StructField("a", StructType(Seq(
          StructField("a", IntegerType),
          StructField("b", IntegerType),
          StructField("c", IntegerType)))),
        StructField("b", StructType(Seq(
          StructField("a", IntegerType),
          StructField("b", IntegerType),
          StructField("c", IntegerType)))),
        StructField("c", StructType(Seq(
          StructField("a", IntegerType),
          StructField("b", IntegerType),
          StructField("c", IntegerType))))
      ))),
      StructField("c", StructType(Seq(
        StructField("a", IntegerType),
        StructField("b", IntegerType),
        StructField("c", IntegerType))))
    )))))).cache

data.show(false)
+---------------------------------+
|a |
+---------------------------------+
|[[1, 2, 3], [[4,, 6], [7, 8, 9]]]|
+---------------------------------+
```

Currently, to drop the missing value users would have to do something like this:

```scala
val result = data.withColumn("a",
  struct(
    $"a.a",
    struct(
      struct(
        $"a.b.a.a",
        $"a.b.a.c"
      ).as("a"),
      $"a.b.b",
      $"a.b.c"
    ).as("b"),
    $"a.c"
  ))

result.show(false)
+---------------------------------------------------------------+
|a |
+---------------------------------------------------------------+
|[[1, 2, 3], [[4, 6], [7, 8, 9], [10, 11, 12]], [13, 14, 15]]|
+---------------------------------------------------------------+
```

As you can see above, with the existing methods users must call the `struct` function and list all fields, including fields they don't want to change. This is not ideal as:

>this leads to complex, fragile code that cannot survive schema evolution.

[SPARK-16483](https://issues.apache.org/jira/browse/SPARK-16483)

In contrast, with the method added in this PR, a user could simply do something like this to get the same result:

```scala
val result = data.withColumn("a", 'a.dropFields("b.a.b"))

result.show(false)
+---------------------------------------------------------------+
|a |
+---------------------------------------------------------------+
|[[1, 2, 3], [[4, 6], [7, 8, 9], [10, 11, 12]], [13, 14, 15]]|
+---------------------------------------------------------------+
```
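Conceptually, `dropFields("b.a.b")` walks the dotted path and removes the last field from the struct it reaches. The sketch below models that on a toy value type; `DropFieldsSketch` is hypothetical and ignores details the real `Column.dropFields` may handle, such as case sensitivity or missing fields.

```scala
object DropFieldsSketch {
  // Toy nested value: a struct is an ordered list of named fields; leaves hold optional ints.
  sealed trait Value
  final case class Leaf(v: Option[Int]) extends Value
  final case class Struct(fields: List[(String, Value)]) extends Value

  // Drop the field at `path` (one name per nesting level) from `s`.
  def dropField(s: Struct, path: List[String]): Struct = path match {
    case Nil => s
    case name :: Nil => Struct(s.fields.filterNot(_._1 == name))
    case name :: rest =>
      Struct(s.fields.map {
        case (`name`, child: Struct) => (name, dropField(child, rest): Value)
        case other => other
      })
  }

  def dropFields(s: Struct, dottedPath: String): Struct =
    dropField(s, dottedPath.split('.').toList)
}
```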

This is the second of maybe 3 methods that could be added to the `Column` class to make it easier to manipulate nested data.

Other methods under discussion in [SPARK-22231](https://issues.apache.org/jira/browse/SPARK-22231) include `withFieldRenamed`.

However, this should be added in a separate PR.

### Does this PR introduce _any_ user-facing change?

Only one minor change. If the user submits the following query:

```scala
df.withColumn("a", $"a".withField(null, null))
```

instead of throwing:

```
java.lang.IllegalArgumentException: requirement failed: fieldName cannot be null
```

it will now throw:

```
java.lang.IllegalArgumentException: requirement failed: col cannot be null
```

I don't believe this change should be an issue because:

- neither message is incorrect

- Spark 3.1.0 has yet to be released

but please feel free to correct me if I am wrong.

### How was this patch tested?

New unit tests were added. Jenkins must pass them.

### Related JIRAs:

More discussion on this topic can be found here:

- https://issues.apache.org/jira/browse/SPARK-22231

- https://issues.apache.org/jira/browse/SPARK-16483

Closes #29322 from fqaiser94/SPARK-32511.

Lead-authored-by: fqaiser94@gmail.com <fqaiser94@gmail.com>

Co-authored-by: fqaiser94 <fqaiser94@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Update URL of the parquet project in code comment.

### Why are the changes needed?

The original url is not available.

### Does this PR introduce _any_ user-facing change?

NO

### How was this patch tested?

No test needed.

Closes #29416 from izchen/Update-Parquet-URL.

Authored-by: Chen Zhang <izchen@126.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

DataSourceV2 does not support partition commands such as `AlterTableAddPartition`, which are widely used with MySQL, Hive, and other data sources. Thus, it is necessary to define a partition API to support these commands.

We define the partition API as part of the Table API, because these commands sometimes change table data. A partition is composed of an identifier and properties: the identifier is defined as an `InternalRow`, and the properties are defined as a `Map`.
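A minimal sketch of the identifier-plus-properties model, assuming a hypothetical `PartitionOps` trait and an in-memory table. A `Seq[Any]` stands in for the `InternalRow` identifier, and the method names are illustrative rather than the API added by this PR.

```scala
import scala.collection.mutable

object PartitionApiSketch {
  // Stand-in for an InternalRow holding the partition-column values, e.g. Seq("2020-08-01", 1).
  type PartitionIdent = Seq[Any]

  // Hypothetical partition operations on a table.
  trait PartitionOps {
    def createPartition(ident: PartitionIdent, properties: Map[String, String]): Unit
    def dropPartition(ident: PartitionIdent): Boolean
    def partitionExists(ident: PartitionIdent): Boolean
    def partitionProperties(ident: PartitionIdent): Option[Map[String, String]]
  }

  final class InMemoryPartitionedTable extends PartitionOps {
    private val partitions = mutable.LinkedHashMap.empty[PartitionIdent, Map[String, String]]

    def createPartition(ident: PartitionIdent, properties: Map[String, String]): Unit = {
      require(!partitions.contains(ident), s"Partition already exists: $ident")
      partitions(ident) = properties
    }
    def dropPartition(ident: PartitionIdent): Boolean = partitions.remove(ident).isDefined
    def partitionExists(ident: PartitionIdent): Boolean = partitions.contains(ident)
    def partitionProperties(ident: PartitionIdent): Option[Map[String, String]] = partitions.get(ident)
  }
}
```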

### Does this PR introduce _any_ user-facing change?

Yes. This PR enables users to use some partition commands.

### How was this patch tested?

Ran all tests and added some partition API tests.

Closes #28617 from stczwd/SPARK-31694.

Authored-by: stczwd <qcsd2011@163.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

We have supported partially pushing down partition filters since SPARK-28169. We can also support partially pushing down data filters when partition filters and data filters are mixed. For example:

```scala
spark.sql(
  s"""
     |CREATE TABLE t(i INT, p STRING)
     |USING parquet
     |PARTITIONED BY (p)""".stripMargin)

spark.range(0, 1000).selectExpr("id as col").createOrReplaceTempView("temp")

for (part <- Seq(1, 2, 3, 4)) {
  spark.sql(s"""
               |INSERT OVERWRITE TABLE t PARTITION (p='$part')
               |SELECT col FROM temp""".stripMargin)
}

spark.sql("SELECT * FROM t WHERE (p = '1' AND i = 1) OR (p = '2' AND i = 2)").explain()
```

We can also push down `i = 1 or i = 2`.
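The derivation of `i = 1 or i = 2` can be sketched on a toy predicate tree without `NOT` (`PushDataFilterSketch` and its case classes are hypothetical): for an `AND`, keep whichever sides reference only data columns; for an `OR`, a data filter exists only if both sides yield one. The extracted predicate is implied by the original one, so it can prune rows early, but the original filter still has to be evaluated.

```scala
object PushDataFilterSketch {
  // Toy predicate tree (no NOT), a hypothetical stand-in for Catalyst expressions.
  sealed trait Pred
  final case class Eq(column: String, value: String) extends Pred
  final case class And(left: Pred, right: Pred) extends Pred
  final case class Or(left: Pred, right: Pred) extends Pred

  // Returns a predicate over data columns only that is implied by `p`, if one exists.
  def extractDataFilter(p: Pred, partitionCols: Set[String]): Option[Pred] = p match {
    case Eq(c, _) => if (partitionCols.contains(c)) None else Some(p)
    case And(l, r) =>
      (extractDataFilter(l, partitionCols), extractDataFilter(r, partitionCols)) match {
        case (Some(a), Some(b)) => Some(And(a, b))
        // (A AND B) implies B, so dropping a side that cannot be pushed keeps it implied by p.
        case (a, b) => a.orElse(b)
      }
    case Or(l, r) =>
      // A disjunction yields a data filter only if both sides do.
      for {
        a <- extractDataFilter(l, partitionCols)
        b <- extractDataFilter(r, partitionCols)
      } yield Or(a, b)
  }
}
```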

### Why are the changes needed?

Extract more data filters to `FileSourceScanExec`.

### Does this PR introduce _any_ user-facing change?

NO

### How was this patch tested?

Added UT

Closes #29406 from AngersZhuuuu/SPARK-32352.

Authored-by: angerszhu <angers.zhu@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

- Test TEXTFILE together with the PARQUET and ORC file formats in `HiveSerDeReadWriteSuite`

- Remove the "SPARK-32594: insert dates to a Hive table" added by #29409

### Why are the changes needed?

- To improve test coverage, and to test another row SerDe: `org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe`.

- The removed test is not needed anymore because the bug reported in SPARK-32594 is triggered by the TEXTFILE file format too.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

By running the modified test suite `HiveSerDeReadWriteSuite`.

Closes #29417 from MaxGekk/textfile-HiveSerDeReadWriteSuite.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR fixes the issue introduced during SPARK-26985.

SPARK-26985 changes the `putDoubles()` and `putFloats()` methods to respect the platform's endian-ness. However, that causes the RLE paths in VectorizedRleValuesReader.java to read the RLE entries in parquet as BIG_ENDIAN on big endian platforms (i.e., as is), even though parquet data is always in little endian format.

The comments in `WriteableColumnVector.java` say those methods are used for "ieee formatted doubles in platform native endian" (or floats), but since the data in parquet is always in little endian format, use of those methods appears to be inappropriate.

To demonstrate the problem with spark-shell:

```scala
import org.apache.spark.sql._

val data = Seq(
  (1.0, 0.1),
  (2.0, 0.2),
  (0.3, 3.0),
  (4.0, 4.0),
  (5.0, 5.0))

// Write the data to Parquet, then read it back.
spark.createDataFrame(data).write.mode(SaveMode.Overwrite).parquet("/tmp/data.parquet2")

val df2 = spark.read.parquet("/tmp/data.parquet2")
df2.show()
```

Result:

```
+--------------------+--------------------+
|                  _1|                  _2|
+--------------------+--------------------+
|           3.16E-322|-1.54234871366845...|
|         2.0553E-320|         2.0553E-320|
|          2.561E-320|          2.561E-320|
|4.66726145843124E-62|         1.0435E-320|
|        3.03865E-319|-1.54234871366757...|
+--------------------+--------------------+
```

Also tests in ParquetIOSuite that involve float/double data would fail, e.g.,

- basic data types (without binary)

- read raw Parquet file

/examples/src/main/python/mllib/isotonic_regression_example.py would fail as well.

The proposed code change is to add `putDoublesLittleEndian()` and `putFloatsLittleEndian()` methods for Parquet to invoke, just like the existing `putIntsLittleEndian()` and `putLongsLittleEndian()`. On little endian platforms they call `putDoubles()` and `putFloats()`; on big endian platforms they read the entries as little endian, as was done before SPARK-26985.
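The core issue is decoding bytes with a fixed byte order regardless of the platform. A minimal sketch using `java.nio.ByteBuffer` (the helper names are hypothetical, not Spark's column vector methods): decoding explicitly as little endian round-trips correctly, while decoding the same bytes as big endian turns `1.0` into a tiny subnormal number, the same kind of garbage shown in the output above.

```scala
import java.nio.{ByteBuffer, ByteOrder}

object LittleEndianSketch {
  // Decode `count` IEEE 754 doubles stored little endian in `src`, starting at byte `srcIndex`.
  // The byte order is set explicitly, so the result does not depend on the platform's native order.
  def readDoublesLittleEndian(src: Array[Byte], srcIndex: Int, count: Int): Array[Double] = {
    val buf = ByteBuffer.wrap(src, srcIndex, count * 8).order(ByteOrder.LITTLE_ENDIAN)
    Array.fill(count)(buf.getDouble())
  }

  // Encode doubles little endian, the byte order Parquet data uses.
  def encodeLittleEndian(values: Array[Double]): Array[Byte] = {
    val buf = ByteBuffer.allocate(values.length * 8).order(ByteOrder.LITTLE_ENDIAN)
    values.foreach(v => buf.putDouble(v))
    buf.array()
  }
}
```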

No new unit-test is introduced as the existing ones are actually sufficient.

### Why are the changes needed?

RLE float/double data in parquet files will not be read back correctly on big endian platforms.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

All unit tests (`mvn test`) were run and passed.

Closes #29383 from tinhto-000/SPARK-31703.

Authored-by: Tin Hang To <tinto@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

1. Extract common test cases (no serde) into `BasicScriptTransformationExecSuite`.

2. Add more test cases for no-serde mode covering supported data types and behavior in `BasicScriptTransformationExecSuite`.

3. Add more test cases for Hive serde mode covering supported types and behavior in `HiveScriptTransformationExecSuite`.

### Why are the changes needed?

Improve test coverage of Script Transformation

### Does this PR introduce _any_ user-facing change?

NO

### How was this patch tested?

Added UT

Closes #29401 from AngersZhuuuu/SPARK-32400.

Authored-by: angerszhu <angers.zhu@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Fix `DaysWritable` by overriding the parent's method `def get(doesTimeMatter: Boolean): Date` from `DateWritable` instead of `Date get()`, because `Date get()` delegates to the former. The bug occurs because `HiveOutputWriter.write()` transitively calls `def get(doesTimeMatter: Boolean): Date` with the default implementation from the parent class `DateWritable`, which doesn't respect date rebasing and uses the uninitialized `daysSinceEpoch` (0, which is `1970-01-01`).
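The override pitfall can be reproduced with two hypothetical classes shaped like the description above, where the parent's `get()` delegates to `get(Boolean)` (an assumption about `DateWritable`, consistent with the described fix). Overriding only `get()` leaves callers of `get(Boolean)` with the parent's uninitialized value; overriding `get(Boolean)` covers both. The value 18485 is the day count since the epoch for 2020-08-11.

```scala
object OverrideSketch {
  // Stand-in for the parent class: `get()` delegates to `get(Boolean)`,
  // which reads the parent's own field.
  class ParentWritable {
    protected var daysSinceEpoch: Int = 0
    def get(): Int = get(true)
    def get(doesTimeMatter: Boolean): Int = daysSinceEpoch
  }

  // Buggy: overrides only `get()`, so callers of `get(Boolean)` still see the parent's 0.
  class BuggyDays(days: Int) extends ParentWritable {
    override def get(): Int = days
  }

  // Fixed: overrides `get(Boolean)`, which also covers `get()` through the delegation.
  class FixedDays(days: Int) extends ParentWritable {
    override def get(doesTimeMatter: Boolean): Int = days
  }
}
```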

### Why are the changes needed?

The changes fix the bug:

```sql
spark-sql> CREATE TABLE table1 (d date);
spark-sql> INSERT INTO table1 VALUES (date '2020-08-11');
spark-sql> SELECT * FROM table1;
1970-01-01
```

The last SQL statement should return **2020-08-11**, but it returns **1970-01-01**.

### Does this PR introduce _any_ user-facing change?

Yes. After the fix, `INSERT` works correctly:

```sql
spark-sql> SELECT * FROM table1;
2020-08-11
```

### How was this patch tested?

Add new test to `HiveSerDeReadWriteSuite`

Closes #29409 from MaxGekk/insert-date-into-hive-table.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR fixes the error message of `NumberFormatException` when casting invalid input to `FractionalType` with ANSI mode enabled:

```
spark-sql> set spark.sql.ansi.enabled=true;
spark.sql.ansi.enabled true
spark-sql> create table SPARK_32586 using parquet as select 's' s;
spark-sql> select * from SPARK_32586 where s > 1.13D;
java.lang.NumberFormatException: invalid input syntax for type numeric: columnartorow_value_0
```

After this PR:

```
spark-sql> select * from SPARK_32586 where s > 1.13D;
java.lang.NumberFormatException: invalid input syntax for type numeric: s
```
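The old message, which printed the generated variable name `columnartorow_value_0`, is consistent with that variable's name being spliced inside a Java string literal in the generated code instead of being concatenated with it. A hypothetical sketch of the two variants (these helpers are illustrative, not Spark's `Cast` codegen):

```scala
object ErrorMessageCodegenSketch {
  // `valueVar` is the *name* of a Java variable in the generated code,
  // e.g. "columnartorow_value_0".

  // Buggy: the name is placed inside the string literal, so the message prints the name.
  def buggy(valueVar: String): String =
    s"""throw new NumberFormatException("invalid input syntax for type numeric: $valueVar");"""

  // Fixed: the literal is closed and the variable is concatenated, so the message prints its value.
  def fixed(valueVar: String): String =
    s"""throw new NumberFormatException("invalid input syntax for type numeric: " + $valueVar);"""
}
```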

### Why are the changes needed?

Improve error message.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Unit test.

Closes #29405 from wangyum/SPARK-32586.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Spark SQL supports the filter clause in aggregate functions, for example:

`select sum(distinct id) filter (where sex = 'man') from student;`

But sometimes we can eliminate the filter clause in aggregate.

`SELECT COUNT(DISTINCT 1) FILTER (WHERE true) FROM testData;`

could be transformed to

`SELECT COUNT(DISTINCT 1) FROM testData;`

`SELECT COUNT(DISTINCT 1) FILTER (WHERE false) FROM testData;`

could be transformed to

`SELECT 0 FROM testData;`
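A toy sketch of such a rewrite on a hypothetical aggregate node: `FILTER (WHERE true)` is dropped, and with `FILTER (WHERE false)` no row reaches the aggregate, so it can be replaced by the value the function returns for empty input. The empty-input values used here (`0` for `count`, `null` otherwise) are assumptions for the sketch, not Spark's full rule.

```scala
object EliminateFilterSketch {
  sealed trait Expr
  final case class Lit(value: Any) extends Expr
  final case class Col(name: String) extends Expr
  // Hypothetical aggregate node: function name, argument, DISTINCT flag, optional FILTER predicate.
  final case class Agg(fn: String, arg: Expr, distinct: Boolean, filter: Option[Expr]) extends Expr

  // Assumed result of each function on empty input: 0 for count, null otherwise.
  private def emptyResult(fn: String): Any = if (fn == "count") 0L else null

  def eliminate(e: Expr): Expr = e match {
    // FILTER (WHERE true) keeps every row, so the clause can simply be dropped.
    case a @ Agg(_, _, _, Some(Lit(true))) => a.copy(filter = None)
    // FILTER (WHERE false) keeps no row, so the aggregate always yields its empty-input value.
    case Agg(fn, _, _, Some(Lit(false))) => Lit(emptyResult(fn))
    case other => other
  }
}
```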

### Why are the changes needed?

Optimize the filter clause in aggregation

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

New test.

Closes #29369 from beliefer/eliminate-filter-clause.

Lead-authored-by: gengjiaan <gengjiaan@360.cn>

Co-authored-by: beliefer <beliefer@163.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>