### What changes were proposed in this pull request?

To push the built jars to maven release repository, we need to remove the 'SNAPSHOT' tag from the version name.

Made the following changes in this PR:

* Update all `3.0.0-SNAPSHOT` version names to `3.0.0-preview`

* Update the PySpark version from `3.0.0.dev0` to `3.0.0`
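As a rough illustration of the two substitutions listed above, the following sketch rewrites version strings in a piece of text. The helper name and the sample lines are hypothetical; only the four version strings come from this description, and the real change was applied to the build files directly.

```python
def to_release_version(text: str) -> str:
    """Rewrite development version strings to their release form (illustrative only)."""
    # Maven-style version: 3.0.0-SNAPSHOT -> 3.0.0-preview
    text = text.replace("3.0.0-SNAPSHOT", "3.0.0-preview")
    # PySpark-style version: 3.0.0.dev0 -> 3.0.0
    text = text.replace("3.0.0.dev0", "3.0.0")
    return text


# Hypothetical sample lines, not copied from the Spark source tree.
pom_line = "<version>3.0.0-SNAPSHOT</version>"
py_line = '__version__ = "3.0.0.dev0"'

print(to_release_version(pom_line))
print(to_release_version(py_line))
```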

**Please note that these changes were generated by the release script in the past, but this time, since we manually add tags on the master branch, we need to apply them manually too.**

We will revert these changes after the 3.0.0-preview release passes.

### Why are the changes needed?

So that the Maven release repository accepts the built jars.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

N/A

Closes #26243 from jiangxb1987/3.0.0-preview-prepare.

Lead-authored-by: Xingbo Jiang <xingbo.jiang@databricks.com>

Co-authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Xingbo Jiang <xingbo.jiang@databricks.com>

### What changes were proposed in this pull request?

https://issues.apache.org/jira/browse/SPARK-29500

`KafkaRowWriter` now supports setting the Kafka partition by reading a "partition" column in the input dataframe.

Code changes in commit nr. 1.

Test changes in commit nr. 2.

Doc changes in commit nr. 3.

tcondie dongjinleekr srowen

### Why are the changes needed?

While it is possible to configure a custom Kafka Partitioner with `.option("kafka.partitioner.class", "my.custom.Partitioner")`, this is not enough for certain use cases. See the Jira issue.

### Does this PR introduce any user-facing change?

No, as this behaviour is optional.

### How was this patch tested?

Two new unit tests were added and one was updated.

Closes #26153 from redsk/feature/SPARK-29500.

Authored-by: redsk <nicola.bova@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

This PR aims to deprecate old Java 8 versions prior to 8u92.

### Why are the changes needed?

This prepares for using the JVM option `ExitOnOutOfMemoryError`.

- https://www.oracle.com/technetwork/java/javase/8u92-relnotes-2949471.html

### Does this PR introduce any user-facing change?

Yes. It's highly recommended for users to use the latest JDK versions of Java 8/11.

### How was this patch tested?

NA (This is a doc change).

Closes #26249 from dongjoon-hyun/SPARK-29597.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR fixes our documentation build to copy the minified jQuery file instead.

The original file `jquery.js` appears to be missing as of the Scala 2.12 upgrade; Scala 2.12 seems to have started using the minified `jquery.min.js` instead.

Since we dropped Scala 2.11, we no longer need to handle the legacy `jquery.js`.

Note that there seem to be several oddities in the current ScalaDoc (e.g., some pages look odd, it starts from `scala.collection.*`, some pages are missing, some docs are truncated, and some badges appear to be missing). These need a separate check and investigation.

This PR aims to make the documentation generation pass in order to unblock the Spark 3.0 preview.

### Why are the changes needed?

To fix our official documentation build so that it can run.

### Does this PR introduce any user-facing change?

It makes it possible to build the documentation in our official way.

**Before:**

```
Making directory api/scala
cp -r ../target/scala-2.12/unidoc/. api/scala
Making directory api/java
cp -r ../target/javaunidoc/. api/java
Updating JavaDoc files for badge post-processing
Copying jquery.js from Scala API to Java API for page post-processing of badges
jekyll 3.8.6 | Error: No such file or directory rb_sysopen - ./api/scala/lib/jquery.js
```

**After:**

```
Making directory api/scala
cp -r ../target/scala-2.12/unidoc/. api/scala
Making directory api/java
cp -r ../target/javaunidoc/. api/java
Updating JavaDoc files for badge post-processing
Copying jquery.min.js from Scala API to Java API for page post-processing of badges
Copying api_javadocs.js to Java API for page post-processing of badges
Appending content of api-javadocs.css to JavaDoc stylesheet.css for badge styles
...
```

### How was this patch tested?

Manually tested via:

```
SKIP_PYTHONDOC=1 SKIP_RDOC=1 SKIP_SQLDOC=1 jekyll build
```

Closes #26228 from HyukjinKwon/SPARK-29569.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Xingbo Jiang <xingbo.jiang@databricks.com>

### What changes were proposed in this pull request?

This PR adds `CREATE NAMESPACE` support for V2 catalogs.

### Why are the changes needed?

Currently, you cannot explicitly create namespaces for v2 catalogs.

### Does this PR introduce any user-facing change?

The user can now perform the following:

```SQL
CREATE NAMESPACE mycatalog.ns
```

to create a namespace `ns` inside the `mycatalog` V2 catalog.

### How was this patch tested?

Added unit tests.

Closes #26166 from imback82/create_namespace.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR removes the unnecessary ORC version and Hive version from the docs.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

N/A.

Closes #26146 from denglingang/SPARK-24576.

Lead-authored-by: denglingang <chitin1027@gmail.com>

Co-authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR fixes the incorrect `EqualNullSafe` symbol in `sql-migration-guide.md`.

### Why are the changes needed?

Fix documentation error.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

N/A

Closes #26163 from wangyum/EqualNullSafe-symbol.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

This patch is a part of [SPARK-28594](https://issues.apache.org/jira/browse/SPARK-28594) and design doc for SPARK-28594 is linked here: https://docs.google.com/document/d/12bdCC4nA58uveRxpeo8k7kGOI2NRTXmXyBOweSi4YcY/edit?usp=sharing

This patch proposes adding a new feature to event logging: rolling event log files based on a configured file size.

Previously, event logging was done with a single file, and the related codebase (`EventLoggingListener`/`FsHistoryProvider`) was tightly coupled to it. This patch adds a layer on both the reader (`EventLogFileReader`) and the writer (`EventLogFileWriter`) to decouple "handling events" from "how to read/write events from/to file".

This patch adds two properties, `spark.eventLog.rollLog` and `spark.eventLog.rollLog.maxFileSize`, which configure the rolling behavior. The feature is disabled by default, as we only expect huge event logs for huge or long-running applications. For other cases, a single event log file is sufficient and simpler.
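To make the idea of size-based rolling concrete, here is a minimal in-memory sketch. It is not the `EventLogFileWriter` implementation: the class name, the method name, and the choice to roll over *before* a write that would exceed the limit are all assumptions made for illustration.

```python
class RollingLogWriter:
    """Toy writer that starts a new 'file' once the current one would exceed
    max_file_size bytes. Hypothetical names; files are kept in memory as lists."""

    def __init__(self, max_file_size: int):
        self.max_file_size = max_file_size
        self.files = [[]]        # one list of lines per rolled file
        self.current_size = 0    # bytes written to the current file

    def write_event(self, event_json: str) -> None:
        line = event_json + "\n"
        size = len(line.encode("utf-8"))
        # Roll over if this event would overflow a non-empty file.
        if self.current_size > 0 and self.current_size + size > self.max_file_size:
            self.files.append([])
            self.current_size = 0
        self.files[-1].append(line)
        self.current_size += size


writer = RollingLogWriter(max_file_size=40)
for i in range(5):
    writer.write_event('{"Event":"E%d"}' % i)   # each line is 15 bytes

print([len(f) for f in writer.files])  # -> [2, 2, 1]
```

Because older files are closed once a roll happens, they are the ones that could be removed while the application keeps writing to the newest file.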

### Why are the changes needed?

This is a part of SPARK-28594, which addresses event logs growing without bound for long-running applications.

This patch by itself also provides an option for the situation where the event log file gets huge and consumes storage. End users may give up on replaying their events and want to delete the event log file, but while the application is still running and writing to the file, it is not safe to delete it. With rolling event logs enabled, end users will be able to delete some of the old files.

### Does this PR introduce any user-facing change?

No, as the new feature is turned off by default.

### How was this patch tested?

Added unit tests, as well as basic manual tests.

Basic manual tests: ran the SHS, ran a structured streaming query with rolling event logs enabled, and verified that split files are generated and that the SHS can load these files, handling the app status as incomplete/complete.

Closes #25670 from HeartSaVioR/SPARK-28869.

Lead-authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Co-authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

### What changes were proposed in this pull request?

This PR fixes a few typos:

1. Sparks => Spark's

2. parallize => parallelize

3. doesnt => doesn't

Closes #26140 from plusplusjiajia/fix-typos.

Authored-by: Jiajia Li <jiajia.li@intel.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

When inserting a value into a column with a different data type, Spark performs type coercion. Currently, we support 3 policies for the store assignment rules: ANSI, legacy and strict, which can be set via the option "spark.sql.storeAssignmentPolicy":

1. ANSI: Spark performs the type coercion as per ANSI SQL. In practice, the behavior is mostly the same as PostgreSQL. It disallows certain unreasonable type conversions such as converting `string` to `int` and `double` to `boolean`. It will throw a runtime exception if the value is out of range (overflow).

2. Legacy: Spark allows the type coercion as long as it is a valid `Cast`, which is very loose. E.g., converting either `string` to `int` or `double` to `boolean` is allowed. It is the current behavior in Spark 2.x for compatibility with Hive. When inserting an out-of-range value into an integral field, the low-order bits of the value are inserted (the same as Java/Scala numeric type casting). For example, if 257 is inserted into a field of Byte type, the result is 1.

3. Strict: Spark doesn't allow any possible precision loss or data truncation in store assignment, e.g., converting either `double` to `int` or `decimal` to `double` is not allowed. The rules were originally for the Dataset encoder. As far as I know, no mainstream DBMS uses this policy by default.

Currently, the V1 data source uses "Legacy" policy by default, while V2 uses "Strict". This proposal is to use "ANSI" policy by default for both V1 and V2 in Spark 3.0.
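The difference between the Legacy and ANSI handling of an out-of-range integral value can be sketched in plain Python. This is only an emulation of the arithmetic described above (keeping the low-order bits versus raising an error); the function names are hypothetical and this is not Spark's implementation.

```python
BYTE_MIN, BYTE_MAX = -128, 127


def legacy_cast_to_byte(value: int) -> int:
    """Keep only the low-order 8 bits, like a Java/Scala numeric cast to Byte."""
    low = value & 0xFF
    # Reinterpret the 8-bit pattern as a signed value.
    return low - 256 if low > BYTE_MAX else low


def ansi_cast_to_byte(value: int) -> int:
    """Fail on out-of-range values instead of silently truncating."""
    if not BYTE_MIN <= value <= BYTE_MAX:
        raise ArithmeticError(f"{value} is out of range for a byte")
    return value


print(legacy_cast_to_byte(257))  # -> 1, matching the example in the Legacy policy
try:
    ansi_cast_to_byte(257)
except ArithmeticError as e:
    print("ANSI-style failure:", e)
```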

### Why are the changes needed?

Following the ANSI SQL standard is the most reasonable choice among the 3 policies.

### Does this PR introduce any user-facing change?

Yes.

The default store assignment policy is ANSI for both V1 and V2 data sources.

### How was this patch tested?

Unit test

Closes #26107 from gengliangwang/ansiPolicyAsDefault.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add documentation to SQL programming guide to use PyArrow >= 0.15.0 with current versions of Spark.

### Why are the changes needed?

Arrow 0.15.0 introduced a change in format which requires an environment variable to maintain compatibility.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Ran pandas_udfs tests using PyArrow 0.15.0 with environment variable set.

Closes #26045 from BryanCutler/arrow-document-legacy-IPC-fix-SPARK-29367.

Authored-by: Bryan Cutler <cutlerb@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

This adds an entry about PrometheusServlet to the documentation, following SPARK-29032.

### Why are the changes needed?

The monitoring documentation lists all the available metrics sinks; this one should be added to the list for completeness.

Closes #26081 from LucaCanali/FollowupSpark29032.

Authored-by: Luca Canali <luca.canali@cern.ch>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

This is just a followup on https://github.com/apache/spark/pull/26062 -- see it for more detail.

I think we will eventually find more cases of this. It's hard to get them all at once as there are many different types of compile errors in earlier modules. I'm trying to address them in as big a chunk as possible.

Closes #26074 from srowen/SPARK-29401.2.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

The commit 4e6d31f570 changed the default behavior of `size()` for the `NULL` input. In this PR, I propose to update the SQL migration guide.

### Why are the changes needed?

To inform users about new behavior of the `size()` function for the `NULL` input.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

N/A

Closes #26066 from MaxGekk/size-null-migration-guide.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Invocations like `sc.parallelize(Array((1,2)))` cause a compile error in 2.13, like:

```
[ERROR] [Error] /Users/seanowen/Documents/spark_2.13/core/src/test/scala/org/apache/spark/ShuffleSuite.scala:47: overloaded method value apply with alternatives:
(x: Unit,xs: Unit*)Array[Unit] <and>
(x: Double,xs: Double*)Array[Double] <and>
(x: Float,xs: Float*)Array[Float] <and>
(x: Long,xs: Long*)Array[Long] <and>
(x: Int,xs: Int*)Array[Int] <and>
(x: Char,xs: Char*)Array[Char] <and>
(x: Short,xs: Short*)Array[Short] <and>
(x: Byte,xs: Byte*)Array[Byte] <and>
(x: Boolean,xs: Boolean*)Array[Boolean]
cannot be applied to ((Int, Int), (Int, Int), (Int, Int), (Int, Int))
```

Using a `Seq` instead appears to resolve it, and is effectively equivalent.

### Why are the changes needed?

To better cross-build for 2.13.

### Does this PR introduce any user-facing change?

None.

### How was this patch tested?

Existing tests.

Closes #26062 from srowen/SPARK-29401.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Fix a config name typo in the resource scheduling user docs. Users might get confused by the wrong config name, so we should fix this typo.

### How was this patch tested?

Document change, no need to run test.

Closes #26047 from jiangxb1987/doc.

Authored-by: Xingbo Jiang <xingbo.jiang@databricks.com>

Signed-off-by: Xingbo Jiang <xingbo.jiang@databricks.com>

### What changes were proposed in this pull request?

Document the SHOW CREATE TABLE statement in the SQL Reference.

### Why are the changes needed?

To complete the SQL reference.

### Does this PR introduce any user-facing change?

Yes.

after the change:

### How was this patch tested?

Tested using `jekyll build --serve`

Closes #25885 from huaxingao/spark-28813.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

This PR proposes:

1. To use `is.data.frame` to check if it is a DataFrame.

2. To install Arrow and test Arrow optimization in the AppVeyor build. We're currently not testing this in CI.

### Why are the changes needed?

1. To support SparkR with Arrow 0.14

2. To check if there's any regression and if it works correctly.

### Does this PR introduce any user-facing change?

```r
df <- createDataFrame(mtcars)
collect(dapply(df, function(rdf) { data.frame(rdf$gear + 1) }, structType("gear double")))
```

**Before:**

```
Error in readBin(con, raw(), as.integer(dataLen), endian = "big") :
  invalid 'n' argument
```

**After:**

```
  gear
1    5
2    5
3    5
4    4
5    4
6    4
7    4
8    5
9    5
...
```

### How was this patch tested?

AppVeyor

Closes #25993 from HyukjinKwon/arrow-r-appveyor.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR exposes USE CATALOG/USE SQL commands as described in this [SPIP](https://docs.google.com/document/d/1jEcvomPiTc5GtB9F7d2RTVVpMY64Qy7INCA_rFEd9HQ/edit#)

It also exposes `currentCatalog` in `CatalogManager`.

Finally, it changes `SHOW NAMESPACES` and `SHOW TABLES` to use the current catalog if no catalog is specified (instead of default catalog).

### Why are the changes needed?

There is currently no mechanism to change the current catalog/namespace through SQL commands.

### Does this PR introduce any user-facing change?

Yes, you can perform the following:

```scala
// Sets the current catalog to 'testcat'
spark.sql("USE CATALOG testcat")

// Sets the current catalog to 'testcat' and current namespace to 'ns1.ns2'.
spark.sql("USE ns1.ns2 IN testcat")

// Now, the following will use 'testcat' as the current catalog and 'ns1.ns2' as the current namespace.
spark.sql("SHOW NAMESPACES")
```

### How was this patch tested?

Added new unit tests.

Closes #25771 from imback82/use_namespace.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Please refer to [the thread on the dev mailing list](https://lists.apache.org/thread.html/cc6489a19316e7382661d305fabd8c21915e5faf6a928b4869ac2b4a%3Cdev.spark.apache.org%3E) for the rationale behind this patch.

This patch adds the functionality to detect possible correctness issues with multiple stateful operations in a single streaming query, and logs a warning message to inform end users.

This patch also documents the caveats of using multiple stateful operations in a single query, and provides one known alternative.

## How was this patch tested?

Added new UTs in UnsupportedOperationsSuite to test various combinations of stateful operators in a streaming query.

Closes #24890 from HeartSaVioR/SPARK-28074.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

Updated the SQL migration guide regarding the recently supported special date and timestamp values; see https://github.com/apache/spark/pull/25716 and https://github.com/apache/spark/pull/25708.

Closes #25834

### Why are the changes needed?

To let users know about the new feature in Spark 3.0.

### Does this PR introduce any user-facing change?

No

Closes #25948 from MaxGekk/special-values-migration-guide.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Changed 'Phive-thriftserver' to ' -Phive-thriftserver'.

### Why are the changes needed?

Typo

### Does this PR introduce any user-facing change?

Yes.

### How was this patch tested?

Manually tested.

Closes #25937 from TomokoKomiyama/fix-build-doc.

Authored-by: Tomoko Komiyama <btkomiyamatm@oss.nttdata.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

Copy any "spark.hive.foo=bar" Spark properties into the Hadoop conf as "hive.foo=bar".
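The mapping described above amounts to stripping the leading `spark.` from every property whose key starts with `spark.hive.`. A minimal sketch using plain dictionaries follows; the function name is hypothetical and the dictionaries merely stand in for the Spark properties and the Hadoop configuration.

```python
def copy_hive_props(spark_props: dict) -> dict:
    """Return {"hive.foo": "bar"} for every {"spark.hive.foo": "bar"} entry."""
    hadoop_conf = {}
    for key, value in spark_props.items():
        if key.startswith("spark.hive."):
            # Drop only the leading "spark." so the key becomes "hive.<rest>".
            hadoop_conf[key[len("spark."):]] = value
    return hadoop_conf


props = {"spark.hive.foo": "bar", "spark.app.name": "demo"}
print(copy_hive_props(props))  # -> {'hive.foo': 'bar'}
```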

### Why are the changes needed?

To provide a Spark-side config entry for Hive configurations.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

UT.

Closes #25661 from WeichenXu123/add_hive_conf.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This patch introduces new options "startingOffsetsByTimestamp" and "endingOffsetsByTimestamp" to set a specific timestamp per topic (since we're unlikely to set a different value per partition), so that the source starts reading from offsets which have an equal or greater timestamp, and stops reading at offsets which have an equal or greater timestamp.

The new options are optional, of course, and take precedence over the existing offset options.

## How was this patch tested?

New unit tests added. Also manually tested basic functionality with Kafka 2.0.0 server.

Running query below

```
val df = spark.read.format("kafka")
  .option("kafka.bootstrap.servers", "localhost:9092")
  .option("subscribe", "spark_26848_test_v1,spark_26848_test_2_v1")
  .option("startingOffsetsByTimestamp", """{"spark_26848_test_v1": 1549669142193, "spark_26848_test_2_v1": 1549669240965}""")
  .option("endingOffsetsByTimestamp", """{"spark_26848_test_v1": 1549669265676, "spark_26848_test_2_v1": 1549699265676}""")
  .load().selectExpr("CAST(value AS STRING)")

df.show()
```

with the records below (each a single string whose numeric part indicates the timestamp after which the record was put) in

topic `spark_26848_test_v1`

```
hello1 1549669142193
world1 1549669142193
hellow1 1549669240965
world1 1549669240965
hello1 1549669265676
world1 1549669265676
```

topic `spark_26848_test_2_v1`

```
hello2 1549669142193
world2 1549669142193
hello2 1549669240965
world2 1549669240965
hello2 1549669265676
world2 1549669265676
```

the result of `df.show()` follows:

```
+--------------------+
|               value|
+--------------------+
|world1 1549669240965|
|world1 1549669142193|
|world2 1549669240965|
|hello2 1549669240965|
|hellow1 154966924...|
|hello2 1549669265676|
|hello1 1549669142193|
|world2 1549669265676|
+--------------------+
```

Note that endingOffsets (as well as endingOffsetsByTimestamp) are exclusive.
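The "equal or greater timestamp" rule and the exclusive end can be sketched with a binary search over one partition's record timestamps. This is only an illustration: the function name is hypothetical, timestamps are assumed to be non-decreasing by offset, and what the source does when no record matches is left to the caller here. The timestamps are the ones used for `spark_26848_test_v1` in the manual test above.

```python
from bisect import bisect_left


def offset_for_timestamp(record_timestamps, target_ts):
    """Return the first offset whose timestamp is >= target_ts, or None if none exists.

    record_timestamps[i] is the timestamp of the record at offset i.
    """
    idx = bisect_left(record_timestamps, target_ts)
    return idx if idx < len(record_timestamps) else None


timestamps = [
    1549669142193, 1549669142193,
    1549669240965, 1549669240965,
    1549669265676, 1549669265676,
]

start = offset_for_timestamp(timestamps, 1549669142193)  # inclusive start
end = offset_for_timestamp(timestamps, 1549669265676)    # exclusive end
print(start, end)  # -> 0 4, i.e. the four records at offsets 0..3 are read
```

With these values the range covers the first four records of the topic, which agrees with the four `spark_26848_test_v1` rows in the `df.show()` output.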

Closes #23747 from HeartSaVioR/SPARK-26848.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

This PR supports UPDATE in the parser and adds the corresponding logical plan. The SQL syntax is a standard UPDATE statement:

```
UPDATE tableName tableAlias SET colName=value [, colName=value]+ WHERE predicate?
```

### Why are the changes needed?

With this change, we can start to implement UPDATE in builtin sources and think about how to design the update API in DS v2.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

New test cases added.

Closes #25626 from xianyinxin/SPARK-28892.

Authored-by: xy_xin <xianyin.xxy@alibaba-inc.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Credit to vanzin as he found and commented on this while reviewing #25670 - [comment](https://github.com/apache/spark/pull/25670#discussion_r325383512).

This patch proposes to specify UTF-8 explicitly when reading/writing event log files.

### Why are the changes needed?

The event log file is read/written using the default character set of the JVM process, which may cause problems when reading event log files from other machines. Spark's de facto standard character set is UTF-8, so it should be set explicitly.
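The kind of problem an implicit default charset can cause is easy to reproduce outside the JVM. The sketch below is an analogy in Python, not Spark code: the event line is made up, and Latin-1 stands in for whatever non-UTF-8 default another machine might have.

```python
# A made-up event line containing a non-ASCII character.
event = '{"Event":"demo","user":"café"}'

# Writing with an explicit charset makes the bytes independent of any platform default.
raw = event.encode("utf-8")

# Reading with the same explicit charset round-trips the text.
assert raw.decode("utf-8") == event

# Reading the same bytes with a different charset garbles the non-ASCII part.
garbled = raw.decode("latin-1")
print(garbled)
```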

### Does this PR introduce any user-facing change?

Yes, if end users have been running Spark processes (especially their driver JVM processes) with a default charset other than "UTF-8". Otherwise, no.

### How was this patch tested?

Existing UTs, as ReplayListenerSuite contains "end-to-end" event logging/reading tests (both uncompressed/compressed).

Closes #25845 from HeartSaVioR/SPARK-29160.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR adds the Java 11 version to the documentation.

### Why are the changes needed?

Apache Spark 3.0.0 starts to support JDK11 officially.

### Does this PR introduce any user-facing change?

Yes.

### How was this patch tested?

Manually. Doc generation.

Closes #25875 from dongjoon-hyun/SPARK-29196.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Added a documentation reference for the USE database SQL command.

### Why are the changes needed?

To document the usage of the USE database command.

### Does this PR introduce any user-facing change?

It adds the USE database SQL command reference information to the docs.

### How was this patch tested?

Attached the test snap

Closes #25572 from shivusondur/jiraUSEDaBa1.

Lead-authored-by: shivusondur <shivusondur@gmail.com>

Co-authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

This PR aims to increase the JVM CodeCacheSize from 0.5G to 1G.

### Why are the changes needed?

After upgrading to `Scala 2.12.10`, the following is observed during the build.

```
2019-09-18T20:49:23.5030586Z OpenJDK 64-Bit Server VM warning: CodeCache is full. Compiler has been disabled.
2019-09-18T20:49:23.5032920Z OpenJDK 64-Bit Server VM warning: Try increasing the code cache size using -XX:ReservedCodeCacheSize=
2019-09-18T20:49:23.5034959Z CodeCache: size=524288Kb used=521399Kb max_used=521423Kb free=2888Kb
2019-09-18T20:49:23.5035472Z bounds [0x00007fa62c000000, 0x00007fa64c000000, 0x00007fa64c000000]
2019-09-18T20:49:23.5035781Z total_blobs=156549 nmethods=155863 adapters=592
2019-09-18T20:49:23.5036090Z compilation: disabled (not enough contiguous free space left)
```

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Manually check the Jenkins or GitHub Action build log (which should not have the above).

Closes #25836 from dongjoon-hyun/SPARK-CODE-CACHE-1G.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Currently, there are new configurations for compatibility with ANSI SQL:

* `spark.sql.parser.ansi.enabled`

* `spark.sql.decimalOperations.nullOnOverflow`

* `spark.sql.failOnIntegralTypeOverflow`

This PR adds a new configuration, `spark.sql.ansi.enabled`, and removes the 3 options above. When the configuration is true, Spark tries to conform to the ANSI SQL specification. It is disabled by default.

### Why are the changes needed?

Make it simple and straightforward.

### Does this PR introduce any user-facing change?

The new features for ANSI compatibility will be set via one configuration `spark.sql.ansi.enabled`.

### How was this patch tested?

Existing unit tests.

Closes #25693 from gengliangwang/ansiEnabled.

Lead-authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Co-authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This PR upgrades Scala to **2.12.10**.

Release notes:

- Fix regression in large string interpolations with non-String typed splices

- Revert "Generate shallower ASTs in pattern translation"

- Fix regression in classpath when JARs have 'a.b' entries beside 'a/b'

- Faster compiler: 5–10% faster since 2.12.8

- Improved compatibility with JDK 11, 12, and 13

- Experimental support for build pipelining and outline type checking

More details:

https://github.com/scala/scala/releases/tag/v2.12.10

https://github.com/scala/scala/releases/tag/v2.12.9

## How was this patch tested?

Existing tests

Closes #25404 from wangyum/SPARK-28683.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This proposes to improve Spark instrumentation by adding a hook for user-defined metrics, extending Spark’s Dropwizard/Codahale metrics system.

The original motivation of this work was to add instrumentation for S3 filesystem access metrics by Spark job. Currently, [[ExecutorSource]] instruments HDFS and local filesystem metrics. Rather than extending the code there, we propose with this JIRA to add a metrics plugin system, which is more flexible and of more general use.

Context: The Spark metrics system provides a large variety of metrics that are useful for monitoring and troubleshooting Spark workloads. A typical workflow is to sink the metrics to a storage system and build dashboards on top of that.

Highlights:

- The metric plugin system makes it easy to implement instrumentation for S3 access by Spark jobs.

- The metrics plugin system allows for easy extensions of how Spark collects HDFS-related workload metrics. This is currently done using the Hadoop Filesystem GetAllStatistics method, which is deprecated in recent versions of Hadoop. Recent versions of Hadoop Filesystem recommend using the method GetGlobalStorageStatistics, which also provides several additional metrics. GetGlobalStorageStatistics is not available in Hadoop 2.7 (it was introduced in Hadoop 2.8). Using a metric plugin for Spark would allow an easy way to “opt in” to using such new API calls for those deploying suitable Hadoop versions.

- We also have the use case of adding Hadoop filesystem monitoring for a custom Hadoop compliant filesystem in use in our organization (EOS using the XRootD protocol). The metrics plugin infrastructure makes this easy to do. Others may have similar use cases.

- More generally, this method makes it straightforward to plug in Filesystem and other metrics to the Spark monitoring system. Future work on plugin implementation can address extending monitoring to measure usage of external resources (OS, filesystem, network, accelerator cards, etc), that maybe would not normally be considered general enough for inclusion in Apache Spark code, but that can be nevertheless useful for specialized use cases, tests or troubleshooting.

Implementation:

The proposed implementation extends and modifies the work on Executor Plugin of SPARK-24918. Additionally, this is related to recent work on extending Spark executor metrics, such as SPARK-25228.

As discussed during the review, the implementation of this feature modifies the Developer API for Executor Plugins, such that the new version is incompatible with the original version in Spark 2.4.

## How was this patch tested?

This modifies existing tests for ExecutorPluginSuite to adapt them to the API changes. In addition, the new functionality for registering pluginMetrics has been manually tested running Spark on YARN and K8S clusters, in particular for monitoring S3 and for extending HDFS instrumentation with the Hadoop Filesystem “GetGlobalStorageStatistics” metrics. Executor metric plugin example and code used for testing are available, for example at: https://github.com/cerndb/SparkExecutorPlugins

Closes #24901 from LucaCanali/executorMetricsPlugin.

Authored-by: Luca Canali <luca.canali@cern.ch>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

HDFS doesn't update the file size reported by the NM if you just keep writing to the file; this makes the SHS believe the file is inactive, and so it may delete it after the configured max age for log files.

This change uses hsync to keep the log file as up to date as possible when using HDFS. It also disables erasure coding by default for these logs, since hsync (& friends) does not work with EC.

Tested with a SHS configured to aggressively clean up logs; verified a spark-shell session kept updating the log, which was not deleted by the SHS.

Closes #25819 from vanzin/SPARK-29105.

Authored-by: Marcelo Vanzin <vanzin@cloudera.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

### What changes were proposed in this pull request?

Updating the unit description in configurations, in order to maintain consistency across configurations.

### Why are the changes needed?

The description does not mention the suffix that can be specified when configuring this value. This change is for better user understanding.

### Does this PR introduce any user-facing change?

yes. Doc description

### How was this patch tested?

generated document and checked.

Closes #25689 from PavithraRamachandran/heapsize_config.

Authored-by: Pavithra Ramachandran <pavi.rams@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

Document CREATE DATABASE statement in SQL Reference Guide.

### Why are the changes needed?

Currently Spark lacks documentation on the supported SQL constructs, causing confusion among users who sometimes have to look at the code to understand the usage. This is aimed at addressing this issue.

### Does this PR introduce any user-facing change?

Yes.

### Before:

There was no documentation for this.

### After:

### How was this patch tested?

Manual review, and tested using `jekyll build --serve`

Closes#25595 from sharangk/createDbDoc.

Lead-authored-by: sharangk <sharan.gk@gmail.com>

Co-authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

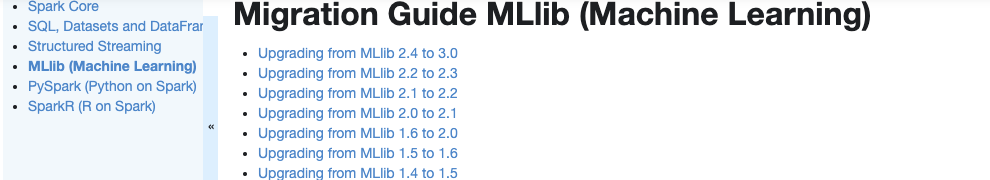

Currently, there is no migration section for PySpark, SparkCore and Structured Streaming.

It is difficult for users to know what to do when they upgrade.

This PR proposes to create a "Migration Guide" tab in the Spark documentation.

This page will contain migration guides for Spark SQL, PySpark, SparkR, MLlib, Structured Streaming and Core. Basically it is a refactoring.

Some new information has been added, which I will point out in inline comments for easier review.

1. **MLlib**

Merge [ml-guide.html#migration-guide](https://spark.apache.org/docs/latest/ml-guide.html#migration-guide) and [ml-migration-guides.html](https://spark.apache.org/docs/latest/ml-migration-guides.html)

```
'docs/ml-guide.md'
        ↓ Merge new/old migration guides
'docs/ml-migration-guide.md'
```

2. **PySpark**

Extract PySpark specific items from https://spark.apache.org/docs/latest/sql-migration-guide-upgrade.html

```
'docs/sql-migration-guide-upgrade.md'
        ↓ Extract PySpark specific items
'docs/pyspark-migration-guide.md'
```

3. **SparkR**

Move [sparkr.html#migration-guide](https://spark.apache.org/docs/latest/sparkr.html#migration-guide) into a separate file, and extract from [sql-migration-guide-upgrade.html](https://spark.apache.org/docs/latest/sql-migration-guide-upgrade.html)

```
'docs/sparkr.md'                     'docs/sql-migration-guide-upgrade.md'
  Move migration guide section ↘   ↙ Extract SparkR specific items
                     docs/sparkr-migration-guide.md
```

4. **Core**

Newly created at `'docs/core-migration-guide.md'`. I skimmed resolved JIRAs at 3.0.0 and found some items to note.

5. **Structured Streaming**

Newly created at `'docs/ss-migration-guide.md'`. I skimmed resolved JIRAs at 3.0.0 and found some items to note.

6. **SQL**

Merged [sql-migration-guide-upgrade.html](https://spark.apache.org/docs/latest/sql-migration-guide-upgrade.html) and [sql-migration-guide-hive-compatibility.html](https://spark.apache.org/docs/latest/sql-migration-guide-hive-compatibility.html)

```
'docs/sql-migration-guide-hive-compatibility.md'     'docs/sql-migration-guide-upgrade.md'
               Move Hive compatibility section ↘   ↙ Left over after filtering PySpark and SparkR items
                                  'docs/sql-migration-guide.md'
```

### Why are the changes needed?

So that users in production can migrate to higher versions effectively and detect behaviour or breaking changes before upgrading or migrating.

### Does this PR introduce any user-facing change?

Yes, this changes Spark's documentation at https://spark.apache.org/docs/latest/index.html.

### How was this patch tested?

Manually built the docs. This can be verified as below:

```bash
cd docs
SKIP_API=1 jekyll build
open _site/index.html
```

Closes #25757 from HyukjinKwon/migration-doc.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This update adds support for Kafka Headers functionality in Structured Streaming.

## How was this patch tested?

With the following unit tests:

- KafkaRelationSuite: "default starting and ending offsets with headers" (new)

- KafkaSinkSuite: "batch - write to kafka" (updated)
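As a rough illustration of reading headers from a batch Kafka source in PySpark, the sketch below builds the reader options and selects a `headers` column. It assumes the source option is named `includeHeaders` and that the result exposes a `headers` column, as described in the Spark 3.0 Kafka integration guide; this PR description itself does not name them. The helper functions, broker address and topic name are hypothetical.

```python
def kafka_source_options(bootstrap_servers, topic, include_headers=True):
    """Build reader options for a Kafka source.

    'includeHeaders' is assumed from the Spark 3.0 Kafka integration guide.
    """
    return {
        "kafka.bootstrap.servers": bootstrap_servers,
        "subscribe": topic,
        "includeHeaders": str(include_headers).lower(),
    }


def read_with_headers(spark, bootstrap_servers, topic):
    """Batch-read a topic and keep key, value and headers.

    `spark` is an existing SparkSession with the Kafka connector on its
    classpath; nothing here starts a session or contacts a broker by itself.
    """
    options = kafka_source_options(bootstrap_servers, topic)
    return (
        spark.read.format("kafka")
        .options(**options)
        .load()
        .selectExpr("CAST(key AS STRING)", "CAST(value AS STRING)", "headers")
    )


if __name__ == "__main__":
    # Hypothetical broker and topic, shown only to print the option map.
    print(kafka_source_options("localhost:9092", "events"))
```

Keeping the option map in a separate function makes it easy to check the configuration without a running cluster.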

Closes #22282 from dongjinleekr/feature/SPARK-23539.

Lead-authored-by: Lee Dongjin <dongjin@apache.org>

Co-authored-by: Jungtaek Lim <kabhwan@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

Added documentation for the CREATE VIEW command.

### Why are the changes needed?

As a reference to syntax and examples of CREATE VIEW command.

### How was this patch tested?

Documentation update. Verified manually.

Closes #25543 from amanomer/spark-28795.

Lead-authored-by: aman_omer <amanomer1996@gmail.com>

Co-authored-by: Xiao Li <gatorsmile@gmail.com>

Co-authored-by: Aman Omer <amanomer1996@gmail.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

Document DROP DATABASE statement in SQL Reference

### Why are the changes needed?

Currently Spark has no complete SQL guide, so it is better to document all the SQL commands. This JIRA is a sub-task of that effort.

### Does this PR introduce any user-facing change?

Yes. Before, there was no documentation about the DROP DATABASE syntax.

After Fix

### How was this patch tested?

Tested with jekyll build

Closes #25554 from sandeep-katta/dropDbDoc.

Authored-by: sandeep katta <sandeep.katta2007@gmail.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>