## What changes were proposed in this pull request?

This change extends YARN resource allocation handling with blacklisting functionality.

This handles cases when node is messed up or misconfigured such that a container won't launch on it. Before this change backlisting only focused on task execution but this change introduces YarnAllocatorBlacklistTracker which tracks allocation failures per host (when enabled via "spark.yarn.blacklist.executor.launch.blacklisting.enabled").

## How was this patch tested?

### With unit tests

Including a new suite: YarnAllocatorBlacklistTrackerSuite.

#### Manually

It was tested on a cluster by deleting the Spark jars on one of the node.

#### Behaviour before these changes

Starting Spark as:

```

spark2-shell --master yarn --deploy-mode client --num-executors 4 --conf spark.executor.memory=4g --conf "spark.yarn.max.executor.failures=6"

```

Log is:

```

18/04/12 06:49:36 INFO yarn.ApplicationMaster: Final app status: FAILED, exitCode: 11, (reason: Max number of executor failures (6) reached)

18/04/12 06:49:39 INFO yarn.ApplicationMaster: Unregistering ApplicationMaster with FAILED (diag message: Max number of executor failures (6) reached)

18/04/12 06:49:39 INFO impl.AMRMClientImpl: Waiting for application to be successfully unregistered.

18/04/12 06:49:39 INFO yarn.ApplicationMaster: Deleting staging directory hdfs://apiros-1.gce.test.com:8020/user/systest/.sparkStaging/application_1523459048274_0016

18/04/12 06:49:39 INFO util.ShutdownHookManager: Shutdown hook called

```

#### Behaviour after these changes

Starting Spark as:

```

spark2-shell --master yarn --deploy-mode client --num-executors 4 --conf spark.executor.memory=4g --conf "spark.yarn.max.executor.failures=6" --conf "spark.yarn.blacklist.executor.launch.blacklisting.enabled=true"

```

And the log is:

```

18/04/13 05:37:43 INFO yarn.YarnAllocator: Will request 1 executor container(s), each with 1 core(s) and 4505 MB memory (including 409 MB of overhead)

18/04/13 05:37:43 INFO yarn.YarnAllocator: Submitted 1 unlocalized container requests.

18/04/13 05:37:43 INFO yarn.YarnAllocator: Launching container container_1523459048274_0025_01_000008 on host apiros-4.gce.test.com for executor with ID 6

18/04/13 05:37:43 INFO yarn.YarnAllocator: Received 1 containers from YARN, launching executors on 1 of them.

18/04/13 05:37:43 INFO yarn.YarnAllocator: Completed container container_1523459048274_0025_01_000007 on host: apiros-4.gce.test.com (state: COMPLETE, exit status: 1)

18/04/13 05:37:43 INFO yarn.YarnAllocatorBlacklistTracker: blacklisting host as YARN allocation failed: apiros-4.gce.test.com

18/04/13 05:37:43 INFO yarn.YarnAllocatorBlacklistTracker: adding nodes to YARN application master's blacklist: List(apiros-4.gce.test.com)

18/04/13 05:37:43 WARN yarn.YarnAllocator: Container marked as failed: container_1523459048274_0025_01_000007 on host: apiros-4.gce.test.com. Exit status: 1. Diagnostics: Exception from container-launch.

Container id: container_1523459048274_0025_01_000007

Exit code: 1

Stack trace: ExitCodeException exitCode=1:

at org.apache.hadoop.util.Shell.runCommand(Shell.java:604)

at org.apache.hadoop.util.Shell.run(Shell.java:507)

at org.apache.hadoop.util.Shell$ShellCommandExecutor.execute(Shell.java:789)

at org.apache.hadoop.yarn.server.nodemanager.DefaultContainerExecutor.launchContainer(DefaultContainerExecutor.java:213)

at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:302)

at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:82)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

```

Where the most important part is:

```

18/04/13 05:37:43 INFO yarn.YarnAllocatorBlacklistTracker: blacklisting host as YARN allocation failed: apiros-4.gce.test.com

18/04/13 05:37:43 INFO yarn.YarnAllocatorBlacklistTracker: adding nodes to YARN application master's blacklist: List(apiros-4.gce.test.com)

```

And execution was continued (no shutdown called).

### Testing the backlisting of the whole cluster

Starting Spark with YARN blacklisting enabled then removing a the Spark core jar one by one from all the cluster nodes. Then executing a simple spark job which fails checking the yarn log the expected exit status is contained:

```

18/06/15 01:07:10 INFO yarn.ApplicationMaster: Final app status: FAILED, exitCode: 11, (reason: Due to executor failures all available nodes are blacklisted)

18/06/15 01:07:13 INFO util.ShutdownHookManager: Shutdown hook called

```

Author: “attilapiros” <piros.attila.zsolt@gmail.com>

Closes#21068 from attilapiros/SPARK-16630.

## What changes were proposed in this pull request?

When a `union` is invoked on several RDDs of which one is an empty RDD, the result of the operation is a `UnionRDD`. This causes an unneeded extra-shuffle when all the other RDDs have the same partitioning.

The PR ignores incoming empty RDDs in the union method.

## How was this patch tested?

added UT

Author: Marco Gaido <marcogaido91@gmail.com>

Closes#21333 from mgaido91/SPARK-23778.

## What changes were proposed in this pull request?

We don't require specific ordering of the input data, the sort action is not necessary and misleading.

## How was this patch tested?

Existing test suite.

Author: Xingbo Jiang <xingbo.jiang@databricks.com>

Closes#21536 from jiangxb1987/sorterSuite.

## What changes were proposed in this pull request?

When user create a aggregator object in scala and pass the aggregator to Spark Dataset's agg() method, Spark's will initialize TypedAggregateExpression with the nodeName field as aggregator.getClass.getSimpleName. However, getSimpleName is not safe in scala environment, depending on how user creates the aggregator object. For example, if the aggregator class full qualified name is "com.my.company.MyUtils$myAgg$2$", the getSimpleName will throw java.lang.InternalError "Malformed class name". This has been reported in scalatest https://github.com/scalatest/scalatest/pull/1044 and discussed in many scala upstream jiras such as SI-8110, SI-5425.

To fix this issue, we follow the solution in https://github.com/scalatest/scalatest/pull/1044 to add safer version of getSimpleName as a util method, and TypedAggregateExpression will invoke this util method rather than getClass.getSimpleName.

## How was this patch tested?

added unit test

Author: Fangshi Li <fli@linkedin.com>

Closes#21276 from fangshil/SPARK-24216.

## What changes were proposed in this pull request?

Currently we only clean up the local directories on application removed. However, when executors die and restart repeatedly, many temp files are left untouched in the local directories, which is undesired behavior and could cause disk space used up gradually.

We can detect executor death in the Worker, and clean up the non-shuffle files (files not ended with ".index" or ".data") in the local directories, we should not touch the shuffle files since they are expected to be used by the external shuffle service.

Scope of this PR is limited to only implement the cleanup logic on a Standalone cluster, we defer to experts familiar with other cluster managers(YARN/Mesos/K8s) to determine whether it's worth to add similar support.

## How was this patch tested?

Add new test suite to cover.

Author: Xingbo Jiang <xingbo.jiang@databricks.com>

Closes#21390 from jiangxb1987/cleanupNonshuffleFiles.

## What changes were proposed in this pull request?

Improve the exception messages when retrieving Spark conf values to include the key name when the value is invalid.

## How was this patch tested?

Unit tests for all get* operations in SparkConf that require a specific value format

Author: William Sheu <william.sheu@databricks.com>

Closes#21454 from PenguinToast/SPARK-24337-spark-config-errors.

## What changes were proposed in this pull request?

For some Spark applications, though they're a java program, they require not only jar dependencies, but also python dependencies. One example is Livy remote SparkContext application, this application is actually an embedded REPL for Scala/Python/R, it will not only load in jar dependencies, but also python and R deps, so we should specify not only "--jars", but also "--py-files".

Currently for a Spark application, --py-files can only be worked for a pyspark application, so it will not be worked in the above case. So here propose to remove such restriction.

Also we tested that "spark.submit.pyFiles" only supports quite limited scenario (client mode with local deps), so here also expand the usage of "spark.submit.pyFiles" to be alternative of --py-files.

## How was this patch tested?

UT added.

Author: jerryshao <sshao@hortonworks.com>

Closes#21420 from jerryshao/SPARK-24377.

## What changes were proposed in this pull request?

The ultimate goal is for listeners to onTaskEnd to receive metrics when a task is killed intentionally, since the data is currently just thrown away. This is already done for ExceptionFailure, so this just copies the same approach.

## How was this patch tested?

Updated existing tests.

This is a rework of https://github.com/apache/spark/pull/17422, all credits should go to noodle-fb

Author: Xianjin YE <advancedxy@gmail.com>

Author: Charles Lewis <noodle@fb.com>

Closes#21165 from advancedxy/SPARK-20087.

## What changes were proposed in this pull request?

The PR retrieves the proxyBase automatically from the header `X-Forwarded-Context` (if available). This is the header used by Knox to inform the proxied service about the base path.

This provides 0-configuration support for Knox gateway (instead of having to properly set `spark.ui.proxyBase`) and it allows to access directly SHS when it is proxied by Knox. In the previous scenario, indeed, after setting `spark.ui.proxyBase`, direct access to SHS was not working fine (due to bad link generated).

## How was this patch tested?

added UT + manual tests

Author: Marco Gaido <marcogaido91@gmail.com>

Closes#21268 from mgaido91/SPARK-24209.

EventListeners can interrupt the event queue thread. In particular,

when the EventLoggingListener writes to hdfs, hdfs can interrupt the

thread. When there is an interrupt, the queue should be removed and stop

accepting any more events. Before this change, the queue would continue

to take more events (till it was full), and then would not stop when the

application was complete because the PoisonPill couldn't be added.

Added a unit test which failed before this change.

Author: Imran Rashid <irashid@cloudera.com>

Closes#21356 from squito/SPARK-24309.

## What changes were proposed in this pull request?

There's a period of time when an accumulator has been garbage collected, but hasn't been removed from AccumulatorContext.originals by ContextCleaner. When an update is received for such accumulator it will throw an exception and kill the whole job. This can happen when a stage completes, but there're still running tasks from other attempts, speculation etc. Since AccumulatorContext.get() returns an option we can just return None in such case.

## How was this patch tested?

Unit test.

Author: Artem Rudoy <artem.rudoy@gmail.com>

Closes#21114 from artemrd/SPARK-22371.

## What changes were proposed in this pull request?

```

~/spark-2.3.0-bin-hadoop2.7$ bin/spark-sql --num-executors 0 --conf spark.dynamicAllocation.enabled=true

Java HotSpot(TM) 64-Bit Server VM warning: ignoring option PermSize=1024m; support was removed in 8.0

Java HotSpot(TM) 64-Bit Server VM warning: ignoring option MaxPermSize=1024m; support was removed in 8.0

Error: Number of executors must be a positive number

Run with --help for usage help or --verbose for debug output

```

Actually, we could start up with min executor number with 0 before if dynamically

## How was this patch tested?

ut added

Author: Kent Yao <yaooqinn@hotmail.com>

Closes#21290 from yaooqinn/SPARK-24241.

## What changes were proposed in this pull request?

When multiple clients attempt to resolve artifacts via the `--packages` parameter, they could run into race condition when they each attempt to modify the dummy `org.apache.spark-spark-submit-parent-default.xml` file created in the default ivy cache dir.

This PR changes the behavior to encode UUID in the dummy module descriptor so each client will operate on a different resolution file in the ivy cache dir. In addition, this patch changes the behavior of when and which resolution files are cleaned to prevent accumulation of resolution files in the default ivy cache dir.

Since this PR is a successor of #18801, close#18801. Many codes were ported from #18801. **Many efforts were put here. I think this PR should credit to Victsm .**

## How was this patch tested?

added UT into `SparkSubmitUtilsSuite`

Author: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Closes#21251 from kiszk/SPARK-10878.

## What changes were proposed in this pull request?

Sometimes "SparkListenerSuite.local metrics" test fails because the average of executorDeserializeTime is too short. As squito suggested to avoid these situations in one of the task a reference introduced to an object implementing a custom Externalizable.readExternal which sleeps 1ms before returning.

## How was this patch tested?

With unit tests (and checking the effect of this change to the average with a much larger sleep time).

Author: “attilapiros” <piros.attila.zsolt@gmail.com>

Author: Attila Zsolt Piros <2017933+attilapiros@users.noreply.github.com>

Closes#21280 from attilapiros/SPARK-19181.

It was missing the jax-rs annotation.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes#21245 from vanzin/SPARK-24188.

Change-Id: Ib338e34b363d7c729cc92202df020dc51033b719

## What changes were proposed in this pull request?

SPARK-24160 is causing a compilation failure (after SPARK-24143 was merged). This fixes the issue.

## How was this patch tested?

building successfully

Author: Marco Gaido <marcogaido91@gmail.com>

Closes#21256 from mgaido91/SPARK-24160_FOLLOWUP.

## What changes were proposed in this pull request?

This patch modifies `ShuffleBlockFetcherIterator` so that the receipt of zero-size blocks is treated as an error. This is done as a preventative measure to guard against a potential source of data loss bugs.

In the shuffle layer, we guarantee that zero-size blocks will never be requested (a block containing zero records is always 0 bytes in size and is marked as empty such that it will never be legitimately requested by executors). However, the existing code does not fully take advantage of this invariant in the shuffle-read path: the existing code did not explicitly check whether blocks are non-zero-size.

Additionally, our decompression and deserialization streams treat zero-size inputs as empty streams rather than errors (EOF might actually be treated as "end-of-stream" in certain layers (longstanding behavior dating to earliest versions of Spark) and decompressors like Snappy may be tolerant to zero-size inputs).

As a result, if some other bug causes legitimate buffers to be replaced with zero-sized buffers (due to corruption on either the send or receive sides) then this would translate into silent data loss rather than an explicit fail-fast error.

This patch addresses this problem by adding a `buf.size != 0` check. See code comments for pointers to tests which guarantee the invariants relied on here.

## How was this patch tested?

Existing tests (which required modifications, since some were creating empty buffers in mocks). I also added a test to make sure we fail on zero-size blocks.

To test that the zero-size blocks are indeed a potential corruption source, I manually ran a workload in `spark-shell` with a modified build which replaces all buffers with zero-size buffers in the receive path.

Author: Josh Rosen <joshrosen@databricks.com>

Closes#21219 from JoshRosen/SPARK-24160.

## What changes were proposed in this pull request?

In current code(`MapOutputTracker.convertMapStatuses`), mapstatus are converted to (blockId, size) pair for all blocks – no matter the block is empty or not, which result in OOM when there are lots of consecutive empty blocks, especially when adaptive execution is enabled.

(blockId, size) pair is only used in `ShuffleBlockFetcherIterator` to control shuffle-read and only non-empty block request is sent. Can we just filter out the empty blocks in MapOutputTracker.convertMapStatuses and save memory?

## How was this patch tested?

not added yet.

Author: jinxing <jinxing6042@126.com>

Closes#21212 from jinxing64/SPARK-24143.

## What changes were proposed in this pull request?

It's possible that Accumulators of Spark 1.x may no longer work with Spark 2.x. This is because `LegacyAccumulatorWrapper.isZero` may return wrong answer if `AccumulableParam` doesn't define equals/hashCode.

This PR fixes this by using reference equality check in `LegacyAccumulatorWrapper.isZero`.

## How was this patch tested?

a new test

Author: Wenchen Fan <wenchen@databricks.com>

Closes#21229 from cloud-fan/accumulator.

Fetch failure lead to multiple tasksets which are active for a given

stage. While there is only one "active" version of the taskset, the

earlier attempts can still have running tasks, which can complete

successfully. So a task completion needs to update every taskset

so that it knows the partition is completed. That way the final active

taskset does not try to submit another task for the same partition,

and so that it knows when it is completed and when it should be

marked as a "zombie".

Added a regression test.

Author: Imran Rashid <irashid@cloudera.com>

Closes#21131 from squito/SPARK-23433.

JIRA Issue: https://issues.apache.org/jira/browse/SPARK-24107?jql=text%20~%20%22ChunkedByteBuffer%22

ChunkedByteBuffer.writeFully method has not reset the limit value. When

chunks larger than bufferWriteChunkSize, such as 80 * 1024 * 1024 larger than

config.BUFFER_WRITE_CHUNK_SIZE(64 * 1024 * 1024),only while once, will lost 16 * 1024 * 1024 byte

Author: WangJinhai02 <jinhai.wang02@ele.me>

Closes#21175 from manbuyun/bugfix-ChunkedByteBuffer.

## What changes were proposed in this pull request?

By default, the dynamic allocation will request enough executors to maximize the

parallelism according to the number of tasks to process. While this minimizes the

latency of the job, with small tasks this setting can waste a lot of resources due to

executor allocation overhead, as some executor might not even do any work.

This setting allows to set a ratio that will be used to reduce the number of

target executors w.r.t. full parallelism.

The number of executors computed with this setting is still fenced by

`spark.dynamicAllocation.maxExecutors` and `spark.dynamicAllocation.minExecutors`

## How was this patch tested?

Units tests and runs on various actual workloads on a Yarn Cluster

Author: Julien Cuquemelle <j.cuquemelle@criteo.com>

Closes#19881 from jcuquemelle/AddTaskPerExecutorSlot.

## What changes were proposed in this pull request?

SparkContextSuite.test("Cancelling stages/jobs with custom reasons.") could stay in an infinite loop because of the problem found and fixed in [SPARK-23775](https://issues.apache.org/jira/browse/SPARK-23775).

This PR solves this mentioned flakyness by removing shared variable usages when cancel happens in a loop and using wait and CountDownLatch for synhronization.

## How was this patch tested?

Existing unit test.

Author: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Closes#21105 from gaborgsomogyi/SPARK-24022.

## What changes were proposed in this pull request?

There‘s a miswrite in BlacklistTracker's updateBlacklistForFetchFailure:

```

val blacklistedExecsOnNode =

nodeToBlacklistedExecs.getOrElseUpdate(exec, HashSet[String]())

blacklistedExecsOnNode += exec

```

where first **exec** should be **host**.

## How was this patch tested?

adjust existed test.

Author: wuyi <ngone_5451@163.com>

Closes#21104 from Ngone51/SPARK-24021.

## What changes were proposed in this pull request?

SparkContext submitted a map stage from `submitMapStage` to `DAGScheduler`,

`markMapStageJobAsFinished` is called only in (https://github.com/apache/spark/blob/master/core/src/main/scala/org/apache/spark/scheduler/DAGScheduler.scala#L933 and https://github.com/apache/spark/blob/master/core/src/main/scala/org/apache/spark/scheduler/DAGScheduler.scala#L1314);

But think about below scenario:

1. stage0 and stage1 are all `ShuffleMapStage` and stage1 depends on stage0;

2. We submit stage1 by `submitMapStage`;

3. When stage 1 running, `FetchFailed` happened, stage0 and stage1 got resubmitted as stage0_1 and stage1_1;

4. When stage0_1 running, speculated tasks in old stage1 come as succeeded, but stage1 is not inside `runningStages`. So even though all splits(including the speculated tasks) in stage1 succeeded, job listener in stage1 will not be called;

5. stage0_1 finished, stage1_1 starts running. When `submitMissingTasks`, there is no missing tasks. But in current code, job listener is not triggered.

We should call the job listener for map stage in `5`.

## How was this patch tested?

Not added yet.

Author: jinxing <jinxing6042@126.com>

Closes#21019 from jinxing64/SPARK-23948.

## What changes were proposed in this pull request?

In current code, it will scanning all partition paths when spark.sql.hive.verifyPartitionPath=true.

e.g. table like below:

```

CREATE TABLE `test`(

`id` int,

`age` int,

`name` string)

PARTITIONED BY (

`A` string,

`B` string)

load data local inpath '/tmp/data0' into table test partition(A='00', B='00')

load data local inpath '/tmp/data1' into table test partition(A='01', B='01')

load data local inpath '/tmp/data2' into table test partition(A='10', B='10')

load data local inpath '/tmp/data3' into table test partition(A='11', B='11')

```

If I query with SQL – "select * from test where A='00' and B='01' ", current code will scan all partition paths including '/data/A=00/B=00', '/data/A=00/B=00', '/data/A=01/B=01', '/data/A=10/B=10', '/data/A=11/B=11'. It costs much time and memory cost.

This pr proposes to avoid iterating all partition paths. Add a config `spark.files.ignoreMissingFiles` and ignore the `file not found` when `getPartitions/compute`(for hive table scan). This is much like the logic brought by

`spark.sql.files.ignoreMissingFiles`(which is for datasource scan).

## How was this patch tested?

UT

Author: jinxing <jinxing6042@126.com>

Closes#19868 from jinxing64/SPARK-22676.

The current in-process launcher implementation just calls the SparkSubmit

object, which, in case of errors, will more often than not exit the JVM.

This is not desirable since this launcher is meant to be used inside other

applications, and that would kill the application.

The change turns SparkSubmit into a class, and abstracts aways some of

the functionality used to print error messages and abort the submission

process. The default implementation uses the logging system for messages,

and throws exceptions for errors. As part of that I also moved some code

that doesn't really belong in SparkSubmit to a better location.

The command line invocation of spark-submit now uses a special implementation

of the SparkSubmit class that overrides those behaviors to do what is expected

from the command line version (print to the terminal, exit the JVM, etc).

A lot of the changes are to replace calls to methods such as "printErrorAndExit"

with the new API.

As part of adding tests for this, I had to fix some small things in the

launcher option parser so that things like "--version" can work when

used in the launcher library.

There is still code that prints directly to the terminal, like all the

Ivy-related code in SparkSubmitUtils, and other areas where some re-factoring

would help, like the CommandLineUtils class, but I chose to leave those

alone to keep this change more focused.

Aside from existing and added unit tests, I ran command line tools with

a bunch of different arguments to make sure messages and errors behave

like before.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes#20925 from vanzin/SPARK-22941.

This change introduces two optimizations to help speed up generation

of listing data when parsing events logs.

The first one allows the parser to be stopped when enough data to

create the listing entry has been read. This is currently the start

event plus environment info, to capture UI ACLs. If the end event is

needed, the code will skip to the end of the log to try to find that

information, instead of parsing the whole log file.

Unfortunately this works better with uncompressed logs. Skipping bytes

on compressed logs only saves the work of parsing lines and some events,

so not a lot of gains are observed.

The second optimization deals with in-progress logs. It works in two

ways: first, it completely avoids parsing the rest of the log for

these apps when enough listing data is read. This, unlike the above,

also speeds things up for compressed logs, since only the very beginning

of the log has to be read.

On top of that, the code that decides whether to re-parse logs to get

updated listing data will ignore in-progress applications until they've

completed.

Both optimizations can be disabled but are enabled by default.

I tested this on some fake event logs to see the effect. I created

500 logs of about 60M each (so ~30G uncompressed; each log was 1.7M

when compressed with zstd). Below, C = completed, IP = in-progress,

the size means the amount of data re-parsed at the end of logs

when necessary.

```

none/C none/IP zstd/C zstd/IP

On / 16k 2s 2s 22s 2s

On / 1m 3s 2s 24s 2s

Off 1.1m 1.1m 26s 24s

```

This was with 4 threads on a single local SSD. As expected from the

previous explanations, there are considerable gains for in-progress

logs, and for uncompressed logs, but not so much when looking at the

full compressed log.

As a side note, I removed the custom code to get the scan time by

creating a file on HDFS; since file mod times are not used to detect

changed logs anymore, local time is enough for the current use of

the SHS.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes#20952 from vanzin/SPARK-6951.

SPARK-19276 ensured that FetchFailures do not get swallowed by other

layers of exception handling, but it also meant that a killed task could

look like a fetch failure. This is particularly a problem with

speculative execution, where we expect to kill tasks as they are reading

shuffle data. The fix is to ensure that we always check for killed

tasks first.

Added a new unit test which fails before the fix, ran it 1k times to

check for flakiness. Full suite of tests on jenkins.

Author: Imran Rashid <irashid@cloudera.com>

Closes#20987 from squito/SPARK-23816.

## What changes were proposed in this pull request?

The test case JobCancellationSuite."interruptible iterator of shuffle reader" has been flaky because `KillTask` event is handled asynchronously, so it can happen that the semaphore is released but the task is still running.

Actually we only have to check if the total number of processed elements is less than the input elements number, so we know the task get cancelled.

## How was this patch tested?

The new test case still fails without the purposed patch, and succeeded in current master.

Author: Xingbo Jiang <xingbo.jiang@databricks.com>

Closes#20993 from jiangxb1987/JobCancellationSuite.

## What changes were proposed in this pull request?

This PR avoids possible overflow at an operation `long = (long)(int * int)`. The multiplication of large positive integer values may set one to MSB. This leads to a negative value in long while we expected a positive value (e.g. `0111_0000_0000_0000 * 0000_0000_0000_0010`).

This PR performs long cast before the multiplication to avoid this situation.

## How was this patch tested?

Existing UTs

Author: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Closes#21002 from kiszk/SPARK-23893.

## What changes were proposed in this pull request?

This PR allows us to use one of several types of `MemoryBlock`, such as byte array, int array, long array, or `java.nio.DirectByteBuffer`. To use `java.nio.DirectByteBuffer` allows to have off heap memory which is automatically deallocated by JVM. `MemoryBlock` class has primitive accessors like `Platform.getInt()`, `Platform.putint()`, or `Platform.copyMemory()`.

This PR uses `MemoryBlock` for `OffHeapColumnVector`, `UTF8String`, and other places. This PR can improve performance of operations involving memory accesses (e.g. `UTF8String.trim`) by 1.8x.

For now, this PR does not use `MemoryBlock` for `BufferHolder` based on cloud-fan's [suggestion](https://github.com/apache/spark/pull/11494#issuecomment-309694290).

Since this PR is a successor of #11494, close#11494. Many codes were ported from #11494. Many efforts were put here. **I think this PR should credit to yzotov.**

This PR can achieve **1.1-1.4x performance improvements** for operations in `UTF8String` or `Murmur3_x86_32`. Other operations are almost comparable performances.

Without this PR

```

OpenJDK 64-Bit Server VM 1.8.0_121-8u121-b13-0ubuntu1.16.04.2-b13 on Linux 4.4.0-22-generic

Intel(R) Xeon(R) CPU E5-2667 v3 3.20GHz

OpenJDK 64-Bit Server VM 1.8.0_121-8u121-b13-0ubuntu1.16.04.2-b13 on Linux 4.4.0-22-generic

Intel(R) Xeon(R) CPU E5-2667 v3 3.20GHz

Hash byte arrays with length 268435487: Best/Avg Time(ms) Rate(M/s) Per Row(ns) Relative

------------------------------------------------------------------------------------------------

Murmur3_x86_32 526 / 536 0.0 131399881.5 1.0X

UTF8String benchmark: Best/Avg Time(ms) Rate(M/s) Per Row(ns) Relative

------------------------------------------------------------------------------------------------

hashCode 525 / 552 1022.6 1.0 1.0X

substring 414 / 423 1298.0 0.8 1.3X

```

With this PR

```

OpenJDK 64-Bit Server VM 1.8.0_121-8u121-b13-0ubuntu1.16.04.2-b13 on Linux 4.4.0-22-generic

Intel(R) Xeon(R) CPU E5-2667 v3 3.20GHz

Hash byte arrays with length 268435487: Best/Avg Time(ms) Rate(M/s) Per Row(ns) Relative

------------------------------------------------------------------------------------------------

Murmur3_x86_32 474 / 488 0.0 118552232.0 1.0X

UTF8String benchmark: Best/Avg Time(ms) Rate(M/s) Per Row(ns) Relative

------------------------------------------------------------------------------------------------

hashCode 476 / 480 1127.3 0.9 1.0X

substring 287 / 291 1869.9 0.5 1.7X

```

Benchmark program

```

test("benchmark Murmur3_x86_32") {

val length = 8192 * 32768 + 31

val seed = 42L

val iters = 1 << 2

val random = new Random(seed)

val arrays = Array.fill[MemoryBlock](numArrays) {

val bytes = new Array[Byte](length)

random.nextBytes(bytes)

new ByteArrayMemoryBlock(bytes, Platform.BYTE_ARRAY_OFFSET, length)

}

val benchmark = new Benchmark("Hash byte arrays with length " + length,

iters * numArrays, minNumIters = 20)

benchmark.addCase("HiveHasher") { _: Int =>

var sum = 0L

for (_ <- 0L until iters) {

sum += HiveHasher.hashUnsafeBytesBlock(

arrays(i), Platform.BYTE_ARRAY_OFFSET, length)

}

}

benchmark.run()

}

test("benchmark UTF8String") {

val N = 512 * 1024 * 1024

val iters = 2

val benchmark = new Benchmark("UTF8String benchmark", N, minNumIters = 20)

val str0 = new java.io.StringWriter() { { for (i <- 0 until N) { write(" ") } } }.toString

val s0 = UTF8String.fromString(str0)

benchmark.addCase("hashCode") { _: Int =>

var h: Int = 0

for (_ <- 0L until iters) { h += s0.hashCode }

}

benchmark.addCase("substring") { _: Int =>

var s: UTF8String = null

for (_ <- 0L until iters) { s = s0.substring(N / 2 - 5, N / 2 + 5) }

}

benchmark.run()

}

```

I run [this benchmark program](https://gist.github.com/kiszk/94f75b506c93a663bbbc372ffe8f05de) using [the commit](ee5a79861c). I got the following results:

```

OpenJDK 64-Bit Server VM 1.8.0_151-8u151-b12-0ubuntu0.16.04.2-b12 on Linux 4.4.0-66-generic

Intel(R) Xeon(R) CPU E5-2667 v3 3.20GHz

Memory access benchmarks: Best/Avg Time(ms) Rate(M/s) Per Row(ns) Relative

------------------------------------------------------------------------------------------------

ByteArrayMemoryBlock get/putInt() 220 / 221 609.3 1.6 1.0X

Platform get/putInt(byte[]) 220 / 236 610.9 1.6 1.0X

Platform get/putInt(Object) 492 / 494 272.8 3.7 0.4X

OnHeapMemoryBlock get/putLong() 322 / 323 416.5 2.4 0.7X

long[] 221 / 221 608.0 1.6 1.0X

Platform get/putLong(long[]) 321 / 321 418.7 2.4 0.7X

Platform get/putLong(Object) 561 / 563 239.2 4.2 0.4X

```

I also run [this benchmark program](https://gist.github.com/kiszk/5fdb4e03733a5d110421177e289d1fb5) for comparing performance of `Platform.copyMemory()`.

```

OpenJDK 64-Bit Server VM 1.8.0_151-8u151-b12-0ubuntu0.16.04.2-b12 on Linux 4.4.0-66-generic

Intel(R) Xeon(R) CPU E5-2667 v3 3.20GHz

Platform copyMemory: Best/Avg Time(ms) Rate(M/s) Per Row(ns) Relative

------------------------------------------------------------------------------------------------

Object to Object 1961 / 1967 8.6 116.9 1.0X

System.arraycopy Object to Object 1917 / 1921 8.8 114.3 1.0X

byte array to byte array 1961 / 1968 8.6 116.9 1.0X

System.arraycopy byte array to byte array 1909 / 1937 8.8 113.8 1.0X

int array to int array 1921 / 1990 8.7 114.5 1.0X

double array to double array 1918 / 1923 8.7 114.3 1.0X

Object to byte array 1961 / 1967 8.6 116.9 1.0X

Object to short array 1965 / 1972 8.5 117.1 1.0X

Object to int array 1910 / 1915 8.8 113.9 1.0X

Object to float array 1971 / 1978 8.5 117.5 1.0X

Object to double array 1919 / 1944 8.7 114.4 1.0X

byte array to Object 1959 / 1967 8.6 116.8 1.0X

int array to Object 1961 / 1970 8.6 116.9 1.0X

double array to Object 1917 / 1924 8.8 114.3 1.0X

```

These results show three facts:

1. According to the second/third or sixth/seventh results in the first experiment, if we use `Platform.get/putInt(Object)`, we achieve more than 2x worse performance than `Platform.get/putInt(byte[])` with concrete type (i.e. `byte[]`).

2. According to the second/third or fourth/fifth/sixth results in the first experiment, the fastest way to access an array element on Java heap is `array[]`. **Cons of `array[]` is that it is not possible to support unaligned-8byte access.**

3. According to the first/second/third or fourth/sixth/seventh results in the first experiment, `getInt()/putInt() or getLong()/putLong()` in subclasses of `MemoryBlock` can achieve comparable performance to `Platform.get/putInt()` or `Platform.get/putLong()` with concrete type (second or sixth result). There is no overhead regarding virtual call.

4. According to results in the second experiment, for `Platform.copy()`, to pass `Object` can achieve the same performance as to pass any type of primitive array as source or destination.

5. According to second/fourth results in the second experiment, `Platform.copy()` can achieve the same performance as `System.arrayCopy`. **It would be good to use `Platform.copy()` since `Platform.copy()` can take any types for src and dst.**

We are incrementally replace `Platform.get/putXXX` with `MemoryBlock.get/putXXX`. This is because we have two advantages.

1) Achieve better performance due to having a concrete type for an array.

2) Use simple OO design instead of passing `Object`

It is easy to use `MemoryBlock` in `InternalRow`, `BufferHolder`, `TaskMemoryManager`, and others that are already abstracted. It is not easy to use `MemoryBlock` in utility classes related to hashing or others.

Other candidates are

- UnsafeRow, UnsafeArrayData, UnsafeMapData, SpecificUnsafeRowJoiner

- UTF8StringBuffer

- BufferHolder

- TaskMemoryManager

- OnHeapColumnVector

- BytesToBytesMap

- CachedBatch

- classes for hash

- others.

## How was this patch tested?

Added `UnsafeMemoryAllocator`

Author: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Closes#19222 from kiszk/SPARK-10399.

These tests can fail with a timeout if the remote repos are not responding,

or slow. The tests don't need anything from those repos, so use an empty

ivy config file to avoid setting up the defaults.

The tests are passing reliably for me locally now, and failing more often

than not today without this change since http://dl.bintray.com/spark-packages/maven

doesn't seem to be loading from my machine.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes#20916 from vanzin/SPARK-19964.

This particular test assumed that Hadoop libraries did not support

http as a file system. Hadoop 2.9 does, so the test failed. The test

now forces a non-existent implementation for the http fs, which

forces the expected error.

There were also a couple of other issues in the same test: SparkSubmit

arguments in the wrong order, and the wrong check later when asserting,

which was being masked by the previous issues.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes#20895 from vanzin/SPARK-23787.

Firstly, glob resolution will not result in swallowing the remote name part (that is preceded by the `#` sign) in case of `--files` or `--archives` options

Moreover in the special case of multiple resolutions when the remote naming does not make sense and error is returned.

Enhanced current test and wrote additional test for the error case

Author: Mihaly Toth <misutoth@gmail.com>

Closes#20853 from misutoth/glob-with-remote-name.

## What changes were proposed in this pull request?

With SPARK-20236, `FileCommitProtocol.instantiate()` looks for a three argument constructor, passing in the `dynamicPartitionOverwrite` parameter. If there is no such constructor, it falls back to the classic two-arg one.

When `InsertIntoHadoopFsRelationCommand` passes down that `dynamicPartitionOverwrite` flag `to FileCommitProtocol.instantiate(`), it assumes that the instantiated protocol supports the specific requirements of dynamic partition overwrite. It does not notice when this does not hold, and so the output generated may be incorrect.

This patch changes `FileCommitProtocol.instantiate()` so when `dynamicPartitionOverwrite == true`, it requires the protocol implementation to have a 3-arg constructor. Classic two arg constructors are supported when it is false.

Also it adds some debug level logging for anyone trying to understand what's going on.

## How was this patch tested?

Unit tests verify that

* classes with only 2-arg constructor cannot be used with dynamic overwrite

* classes with only 2-arg constructor can be used without dynamic overwrite

* classes with 3 arg constructors can be used with both.

* the fallback to any two arg ctor takes place after the attempt to load the 3-arg ctor,

* passing in invalid class types fail as expected (regression tests on expected behavior)

Author: Steve Loughran <stevel@hortonworks.com>

Closes#20824 from steveloughran/stevel/SPARK-23683-protocol-instantiate.

A few different things going on:

- Remove unused methods.

- Move JSON methods to the only class that uses them.

- Move test-only methods to TestUtils.

- Make getMaxResultSize() a config constant.

- Reuse functionality from existing libraries (JRE or JavaUtils) where possible.

The change also includes changes to a few tests to call `Utils.createTempFile` correctly,

so that temp dirs are created under the designated top-level temp dir instead of

potentially polluting git index.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes#20706 from vanzin/SPARK-23550.

These options were used to configure the built-in JRE SSL libraries

when downloading files from HTTPS servers. But because they were also

used to set up the now (long) removed internal HTTPS file server,

their default configuration chose convenience over security by having

overly lenient settings.

This change removes the configuration options that affect the JRE SSL

libraries. The JRE trust store can still be configured via system

properties (or globally in the JRE security config). The only lost

functionality is not being able to disable the default hostname

verifier when using spark-submit, which should be fine since Spark

itself is not using https for any internal functionality anymore.

I also removed the HTTP-related code from the REPL class loader, since

we haven't had a HTTP server for REPL-generated classes for a while.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes#20723 from vanzin/SPARK-23538.

## What changes were proposed in this pull request?

Before this commit, a non-interruptible iterator is returned if aggregator or ordering is specified.

This commit also ensures that sorter is closed even when task is cancelled(killed) in the middle of sorting.

## How was this patch tested?

Add a unit test in JobCancellationSuite

Author: Xianjin YE <advancedxy@gmail.com>

Closes#20449 from advancedxy/SPARK-23040.

## What changes were proposed in this pull request?

The algorithm in `DefaultPartitionCoalescer.setupGroups` is responsible for picking preferred locations for coalesced partitions. It analyzes the preferred locations of input partitions. It starts by trying to create one partition for each unique location in the input. However, if the the requested number of coalesced partitions is higher that the number of unique locations, it has to pick duplicate locations.

Previously, the duplicate locations would be picked by iterating over the input partitions in order, and copying their preferred locations to coalesced partitions. If the input partitions were clustered by location, this could result in severe skew.

With the fix, instead of iterating over the list of input partitions in order, we pick them at random. It's not perfectly balanced, but it's much better.

## How was this patch tested?

Unit test reproducing the behavior was added.

Author: Ala Luszczak <ala@databricks.com>

Closes#20664 from ala/SPARK-23496.

## What changes were proposed in this pull request?

When the shuffle dependency specifies aggregation ,and `dependency.mapSideCombine=false`, in the map side,there is no need for aggregation and sorting, so we should be able to use serialized sorting.

## How was this patch tested?

Existing unit test

Author: liuxian <liu.xian3@zte.com.cn>

Closes#20576 from 10110346/mapsidecombine.

The ExecutorAllocationManager should not adjust the target number of

executors when killing idle executors, as it has already adjusted the

target number down based on the task backlog.

The name `replace` was misleading with DynamicAllocation on, as the target number

of executors is changed outside of the call to `killExecutors`, so I adjusted that name. Also separated out the logic of `countFailures` as you don't always want that tied to `replace`.

While I was there I made two changes that weren't directly related to this:

1) Fixed `countFailures` in a couple cases where it was getting an incorrect value since it used to be tied to `replace`, eg. when killing executors on a blacklisted node.

2) hard error if you call `sc.killExecutors` with dynamic allocation on, since that's another way the ExecutorAllocationManager and the CoarseGrainedSchedulerBackend would get out of sync.

Added a unit test case which verifies that the calls to ExecutorAllocationClient do not adjust the number of executors.

Author: Imran Rashid <irashid@cloudera.com>

Closes#20604 from squito/SPARK-23365.

## What changes were proposed in this pull request?

If spark is run with "spark.authenticate=true", then it will fail to start in local mode.

This PR generates secret in local mode when authentication on.

## How was this patch tested?

Modified existing unit test.

Manually started spark-shell.

Author: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Closes#20652 from gaborgsomogyi/SPARK-23476.

## What changes were proposed in this pull request?

SPARK-20648 introduced the status `SKIPPED` for the stages. On the UI, previously, skipped stages were shown as `PENDING`; after this change, they are not shown on the UI.

The PR introduce a new section in order to show also `SKIPPED` stages in a proper table.

## How was this patch tested?

manual tests

Author: Marco Gaido <marcogaido91@gmail.com>

Closes#20651 from mgaido91/SPARK-23475.

## What changes were proposed in this pull request?

The root cause of missing completed stages is because `cleanupStages` will never remove skipped stages.

This PR changes the logic to always remove skipped stage first. This is safe since the job itself contains enough information to render skipped stages in the UI.

## How was this patch tested?

The new unit tests.

Author: Shixiong Zhu <zsxwing@gmail.com>

Closes#20656 from zsxwing/SPARK-23475.

## What changes were proposed in this pull request?

The issue here is `AppStatusStore.lastStageAttempt` will return the next available stage in the store when a stage doesn't exist.

This PR adds `last(stageId)` to ensure it returns a correct `StageData`

## How was this patch tested?

The new unit test.

Author: Shixiong Zhu <zsxwing@gmail.com>

Closes#20654 from zsxwing/SPARK-23481.

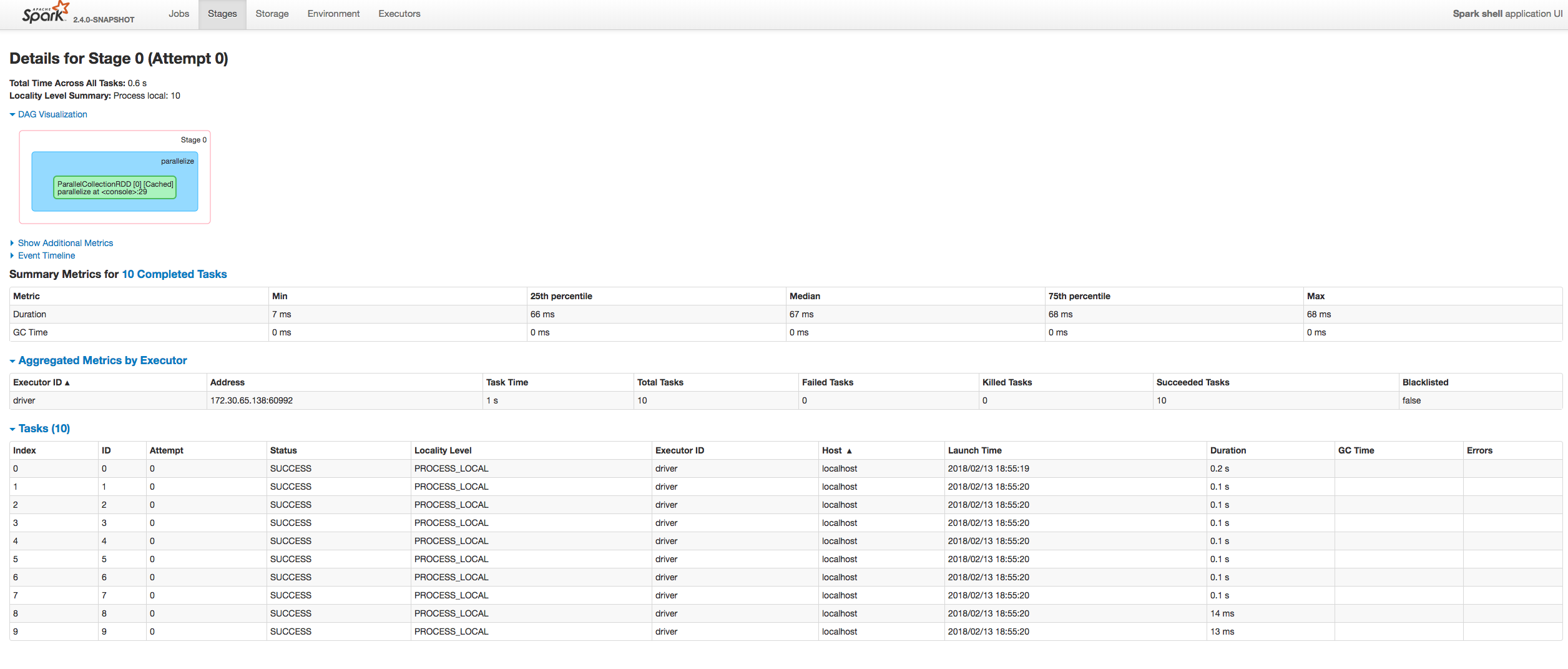

## What changes were proposed in this pull request?

Fixing exception got at sorting tasks by Host / Executor ID:

```

java.lang.IllegalArgumentException: Invalid sort column: Host

at org.apache.spark.ui.jobs.ApiHelper$.indexName(StagePage.scala:1017)

at org.apache.spark.ui.jobs.TaskDataSource.sliceData(StagePage.scala:694)

at org.apache.spark.ui.PagedDataSource.pageData(PagedTable.scala:61)

at org.apache.spark.ui.PagedTable$class.table(PagedTable.scala:96)

at org.apache.spark.ui.jobs.TaskPagedTable.table(StagePage.scala:708)

at org.apache.spark.ui.jobs.StagePage.liftedTree1$1(StagePage.scala:293)

at org.apache.spark.ui.jobs.StagePage.render(StagePage.scala:282)

at org.apache.spark.ui.WebUI$$anonfun$2.apply(WebUI.scala:82)

at org.apache.spark.ui.WebUI$$anonfun$2.apply(WebUI.scala:82)

at org.apache.spark.ui.JettyUtils$$anon$3.doGet(JettyUtils.scala:90)

at javax.servlet.http.HttpServlet.service(HttpServlet.java:687)

at javax.servlet.http.HttpServlet.service(HttpServlet.java:790)

at org.spark_project.jetty.servlet.ServletHolder.handle(ServletHolder.java:848)

at org.spark_project.jetty.servlet.ServletHandler.doHandle(ServletHandler.java:584)

```

Moreover some refactoring to avoid similar problems by introducing constants for each header name and reusing them at the identification of the corresponding sorting index.

## How was this patch tested?

Manually:

Author: “attilapiros” <piros.attila.zsolt@gmail.com>

Closes#20601 from attilapiros/SPARK-23413.

## What changes were proposed in this pull request?

`ReadAheadInputStream` was introduced in https://github.com/apache/spark/pull/18317/ to optimize reading spill files from disk.

However, from the profiles it seems that the hot path of reading small amounts of data (like readInt) is inefficient - it involves taking locks, and multiple checks.

Optimize locking: Lock is not needed when simply accessing the active buffer. Only lock when needing to swap buffers or trigger async reading, or get information about the async state.

Optimize short-path single byte reads, that are used e.g. by Java library DataInputStream.readInt.

The asyncReader used to call "read" only once on the underlying stream, that never filled the underlying buffer when it was wrapping an LZ4BlockInputStream. If the buffer was returned unfilled, that would trigger the async reader to be triggered to fill the read ahead buffer on each call, because the reader would see that the active buffer is below the refill threshold all the time.

However, filling the full buffer all the time could introduce increased latency, so also add an `AtomicBoolean` flag for the async reader to return earlier if there is a reader waiting for data.

Remove `readAheadThresholdInBytes` and instead immediately trigger async read when switching the buffers. It allows to simplify code paths, especially the hot one that then only has to check if there is available data in the active buffer, without worrying if it needs to retrigger async read. It seems to have positive effect on perf.

## How was this patch tested?

It was noticed as a regression in some workloads after upgrading to Spark 2.3.

It was particularly visible on TPCDS Q95 running on instances with fast disk (i3 AWS instances).

Running with profiling:

* Spark 2.2 - 5.2-5.3 minutes 9.5% in LZ4BlockInputStream.read

* Spark 2.3 - 6.4-6.6 minutes 31.1% in ReadAheadInputStream.read

* Spark 2.3 + fix - 5.3-5.4 minutes 13.3% in ReadAheadInputStream.read - very slightly slower, practically within noise.

We didn't see other regressions, and many workloads in general seem to be faster with Spark 2.3 (not investigated if thanks to async readed, or unrelated).

Author: Juliusz Sompolski <julek@databricks.com>

Closes#20555 from juliuszsompolski/SPARK-23366.