## What changes were proposed in this pull request?

It looks like we intentionally set `null` for the upper/lower bounds of complex types and do not use them. However, these bounds appear to be used in in-memory partition pruning, which leads to incorrect results.

This PR proposes to explicitly whitelist the supported types.

```scala
val df = Seq(Array("a", "b"), Array("c", "d")).toDF("arrayCol")
df.cache().filter("arrayCol > array('a', 'b')").show()
```

```scala
val df = sql("select cast('a' as binary) as a")
df.cache().filter("a == cast('a' as binary)").show()
```

**Before:**

```
+--------+
|arrayCol|
+--------+
+--------+
```

```
+---+
|  a|
+---+
+---+
```

**After:**

```
+--------+
|arrayCol|
+--------+
|  [c, d]|
+--------+
```

```
+----+
|   a|
+----+
|[61]|
+----+
```
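The pruning problem can be illustrated with a small Python sketch. It is a toy model and not Spark's in-memory columnar code: `SUPPORTED_TYPES`, `may_contain` and `scan` are hypothetical names, and an equality filter is used for simplicity. The point is that a batch whose bounds are `None` must never be pruned.

```python
# Hypothetical whitelist of types whose min/max statistics are trusted.
SUPPORTED_TYPES = (int, float, str)


def may_contain(stats, value):
    """Return False only when the batch provably cannot contain `value`."""
    lower, upper = stats
    if not isinstance(value, SUPPORTED_TYPES) or lower is None or upper is None:
        # No usable bounds (e.g. arrays or binary): keep the batch.
        return True
    return lower <= value <= upper


def scan(batches, value):
    """Yield rows equal to `value`, skipping batches that pruning rules out."""
    for stats, rows in batches:
        if may_contain(stats, value):
            for row in rows:
                if row == value:
                    yield row
```

With this guard, an array column whose statistics are `(None, None)` is always scanned, while numeric batches can still be skipped based on their bounds.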

## How was this patch tested?

Unit tests were added, and the change was also tested manually.

Author: hyukjinkwon <gurwls223@apache.org>

Closes #21882 from HyukjinKwon/stats-filter.

## What changes were proposed in this pull request?

Implements the INTERSECT ALL clause through query rewrites using existing operators in Spark. Please refer to [Link](https://drive.google.com/open?id=1nyW0T0b_ajUduQoPgZLAsyHK8s3_dko3ulQuxaLpUXE) for the design.

Input Query

```SQL
SELECT c1 FROM ut1 INTERSECT ALL SELECT c1 FROM ut2
```

Rewritten Query

```SQL
SELECT c1
FROM (
  SELECT replicate_rows(min_count, c1)
  FROM (
    SELECT c1,
           IF (vcol1_cnt > vcol2_cnt, vcol2_cnt, vcol1_cnt) AS min_count
    FROM (
      SELECT c1, count(vcol1) as vcol1_cnt, count(vcol2) as vcol2_cnt
      FROM (
        SELECT c1, true as vcol1, null as vcol2 FROM ut1
        UNION ALL
        SELECT c1, null as vcol1, true as vcol2 FROM ut2
      ) AS union_all
      GROUP BY c1
      HAVING vcol1_cnt >= 1 AND vcol2_cnt >= 1
    )
  )
)
```
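As a rough illustration of what the rewritten query computes (this is not Spark code), the following Python sketch mirrors its stages on plain lists: count the rows per key on each side, keep the keys present on both sides, and replicate each key `min_count` times. The function name `intersect_all` is hypothetical.

```python
from collections import Counter


def intersect_all(left, right):
    """Multiset intersection: each value appears min(left count, right count) times."""
    # Equivalent of count(vcol1) / count(vcol2) after UNION ALL + GROUP BY c1.
    left_cnt = Counter(left)
    right_cnt = Counter(right)
    result = []
    for c1, vcol1_cnt in left_cnt.items():
        vcol2_cnt = right_cnt.get(c1, 0)
        # HAVING vcol1_cnt >= 1 AND vcol2_cnt >= 1
        if vcol1_cnt >= 1 and vcol2_cnt >= 1:
            min_count = min(vcol1_cnt, vcol2_cnt)
            # Replicate the row min_count times.
            result.extend([c1] * min_count)
    return result


print(sorted(intersect_all([1, 1, 1, 2, 3], [1, 1, 2, 2, 4])))  # [1, 1, 2]
```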

## How was this patch tested?

Added test cases in SQLQueryTestSuite, DataFrameSuite, SetOperationSuite

Author: Dilip Biswal <dbiswal@us.ibm.com>

Closes #21886 from dilipbiswal/dkb_intersect_all_final.

## What changes were proposed in this pull request?

This PR proposes to address the comment at https://github.com/apache/spark/pull/21318#discussion_r187843125.

This is rather a nit, but it looks like we had better avoid updating the link for each release, since it always points to the latest version (on the other hand, it does not seem worth updating the release guide for this either).

## How was this patch tested?

N/A

Author: hyukjinkwon <gurwls223@apache.org>

Closes #21907 from HyukjinKwon/minor-fix.

## What changes were proposed in this pull request?

This PR proposes to remove the `-Phive-thriftserver` profile, which does not seem to affect the SparkR tests in AppVeyor.

I originally wanted to check whether this gives a meaningful decrease in build time, but it seems not: there is some decrease, but it is not significant.

## How was this patch tested?

AppVeyor tests:

```

[00:40:49] Attaching package: 'SparkR'

[00:40:49]

[00:40:49] The following objects are masked from 'package:testthat':

[00:40:49]

[00:40:49] describe, not

[00:40:49]

[00:40:49] The following objects are masked from 'package:stats':

[00:40:49]

[00:40:49] cov, filter, lag, na.omit, predict, sd, var, window

[00:40:49]

[00:40:49] The following objects are masked from 'package:base':

[00:40:49]

[00:40:49] as.data.frame, colnames, colnames<-, drop, endsWith, intersect,

[00:40:49] rank, rbind, sample, startsWith, subset, summary, transform, union

[00:40:49]

[00:40:49] Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:41:43] basic tests for CRAN: .............

[00:41:43]

[00:41:43] DONE ===========================================================================

[00:41:43] binary functions: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:42:05] ...........

[00:42:05] functions on binary files: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:42:10] ....

[00:42:10] broadcast variables: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:42:12] ..

[00:42:12] functions in client.R: .....

[00:42:30] test functions in sparkR.R: ..............................................

[00:42:30] include R packages: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:42:31]

[00:42:31] JVM API: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:42:31] ..

[00:42:31] MLlib classification algorithms, except for tree-based algorithms: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:48:48] ......................................................................

[00:48:48] MLlib clustering algorithms: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:50:12] .....................................................................

[00:50:12] MLlib frequent pattern mining: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:50:18] .....

[00:50:18] MLlib recommendation algorithms: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:50:27] ........

[00:50:27] MLlib regression algorithms, except for tree-based algorithms: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:56:00] ................................................................................................................................

[00:56:00] MLlib statistics algorithms: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:56:04] ........

[00:56:04] MLlib tree-based algorithms: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:58:20] ..............................................................................................

[00:58:20] parallelize() and collect(): Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[00:58:20] .............................

[00:58:20] basic RDD functions: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[01:03:35] ............................................................................................................................................................................................................................................................................................................................................................................................................................................

[01:03:35] SerDe functionality: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[01:03:39] ...............................

[01:03:39] partitionBy, groupByKey, reduceByKey etc.: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[01:04:20] ....................

[01:04:20] functions in sparkR.R: ....

[01:04:20] SparkSQL functions: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[01:04:50] ........................................................................................................................................-chgrp: 'APPVYR-WIN\None' does not match expected pattern for group

[01:04:50] Usage: hadoop fs [generic options] -chgrp [-R] GROUP PATH...

[01:04:50] -chgrp: 'APPVYR-WIN\None' does not match expected pattern for group

[01:04:50] Usage: hadoop fs [generic options] -chgrp [-R] GROUP PATH...

[01:04:51] -chgrp: 'APPVYR-WIN\None' does not match expected pattern for group

[01:04:51] Usage: hadoop fs [generic options] -chgrp [-R] GROUP PATH...

[01:06:13] ............................................................................................................................................................................................................................................................................................................................................................-chgrp: 'APPVYR-WIN\None' does not match expected pattern for group

[01:06:13] Usage: hadoop fs [generic options] -chgrp [-R] GROUP PATH...

[01:06:14] .-chgrp: 'APPVYR-WIN\None' does not match expected pattern for group

[01:06:14] Usage: hadoop fs [generic options] -chgrp [-R] GROUP PATH...

[01:06:14] ....-chgrp: 'APPVYR-WIN\None' does not match expected pattern for group

[01:06:14] Usage: hadoop fs [generic options] -chgrp [-R] GROUP PATH...

[01:12:30] ...................................................................................................................................................................................................................................................................................................................................................................................................................................................................................................................................................................................................................................................

[01:12:30] Structured Streaming: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[01:14:27] ..........................................

[01:14:27] tests RDD function take(): Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[01:14:28] ................

[01:14:28] the textFile() function: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[01:14:44] .............

[01:14:44] functions in utils.R: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

[01:14:46] ............................................

[01:14:46] Windows-specific tests: .

[01:14:46]

[01:14:46] DONE ===========================================================================

[01:15:29] Build success

```

Author: hyukjinkwon <gurwls223@apache.org>

Closes #21894 from HyukjinKwon/wip-build.

When the join key is a long or an int in a broadcast join, Spark uses `LongToUnsafeRowMap` to store the key-values of the table which will be broadcast. However, when `LongToUnsafeRowMap` is broadcast to executors and is too big to hold in memory, it is stored on disk. At that point, because `write` uses the variable `cursor` to determine how many bytes of the `page` of `LongToUnsafeRowMap` will be written out, and `cursor` was not restored when deserializing, the executor writes nothing from the page to disk.

## What changes were proposed in this pull request?

Restore cursor value when deserializing.
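The failure mode can be reproduced with a toy model in Python. This is a sketch under stated assumptions, not the actual `LongToUnsafeRowMap` code: `TinyRowMap`, its 8-byte length header and the `restore_cursor` switch are all hypothetical and exist only to show why a second serialization loses the data when `cursor` is not restored.

```python
import io
import struct


class TinyRowMap:
    """Toy stand-in for a map that stores row bytes in a page up to `cursor`."""

    def __init__(self):
        self.page = bytearray(64)
        self.cursor = 0  # number of used bytes in `page`

    def append(self, data):
        self.page[self.cursor:self.cursor + len(data)] = data
        self.cursor += len(data)

    def write(self, out):
        # Only the used part of the page is written, as determined by `cursor`.
        used = bytes(self.page[:self.cursor])
        out.write(struct.pack(">q", len(used)))
        out.write(used)

    @classmethod
    def read(cls, inp, restore_cursor=True):
        m = cls()
        (length,) = struct.unpack(">q", inp.read(8))
        m.page[:length] = inp.read(length)
        if restore_cursor:
            # The fix: without this, a later write() emits zero bytes.
            m.cursor = length
        return m


def roundtrip_twice(restore_cursor):
    """Serialize, deserialize, serialize again, and return the final payload."""
    original = TinyRowMap()
    original.append(b"key-values")
    first = io.BytesIO()
    original.write(first)
    first.seek(0)
    # First deserialization (e.g. on an executor).
    copy = TinyRowMap.read(first, restore_cursor=restore_cursor)
    # Second serialization (e.g. storing the broadcast value on disk).
    second = io.BytesIO()
    copy.write(second)
    second.seek(0)
    return bytes(TinyRowMap.read(second).page[:10])
```

Calling `roundtrip_twice(False)` returns ten zero bytes, because the copy writes an empty page, while `roundtrip_twice(True)` returns the original payload.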

Author: liulijia <liutang123@yeah.net>

Closes #21772 from liutang123/SPARK-24809.

## What changes were proposed in this pull request?

This PR updates the Maven version from 3.3.9 to 3.5.4. The current build process uses Maven 3.3.9, which was released in 2015 and looks pretty old.

We hit [an issue](https://issues.apache.org/jira/browse/SPARK-24895) that requires Maven 3.5.2 or later.

The release notes for 3.5.4 are [here](https://maven.apache.org/docs/3.5.4/release-notes.html). Note that version 3.4 was skipped.

From [the release notes for 3.5.0](https://maven.apache.org/docs/3.5.0/release-notes.html), the following are new features:

1. ANSI color logging for improved output visibility

1. add support for module name != artifactId in every calculated URLs (project, SCM, site): special project.directory property

1. create a slf4j-simple provider extension that supports level color rendering

1. ModelResolver interface enhancement: addition of resolveModel(Dependency) supporting version ranges

## How was this patch tested?

Existing tests

Author: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Closes #21905 from kiszk/SPARK-24956.

## What changes were proposed in this pull request?

- Update DateTimeUtilsSuite so that multiple skip dates can be specified when testing round-tripping in daysToMillis and millisToDays.

- Update the test so that both New Year's Eve 2014 and New Year's Day 2015 are skipped for Kiribati time zones. This is necessary because Java versions before 181-b13 considered New Year's Day 2015 to be skipped, while subsequent versions corrected this to New Year's Eve.

## How was this patch tested?

Unit tests

Author: Chris Martin <chris@cmartinit.co.uk>

Closes #21901 from d80tb7/SPARK-24950_datetimeUtilsSuite_failures.

## What changes were proposed in this pull request?

Add one more test case for `com.databricks.spark.avro`.

## How was this patch tested?

N/A

Author: Xiao Li <gatorsmile@gmail.com>

Closes #21906 from gatorsmile/avro.

## What changes were proposed in this pull request?

Implements EXCEPT ALL clause through query rewrites using existing operators in Spark. In this PR, an internal UDTF (replicate_rows) is added to aid in preserving duplicate rows. Please refer to [Link](https://drive.google.com/open?id=1nyW0T0b_ajUduQoPgZLAsyHK8s3_dko3ulQuxaLpUXE) for the design.

**Note** This proposed UDTF is kept as an internal function that is used purely to aid this particular rewrite, which gives us the flexibility to change to a more generalized UDTF in the future.

Input Query

```SQL
SELECT c1 FROM ut1 EXCEPT ALL SELECT c1 FROM ut2
```

Rewritten Query

```SQL
SELECT c1
FROM (
  SELECT replicate_rows(sum_val, c1)
  FROM (
    SELECT c1, sum_val
    FROM (
      SELECT c1, sum(vcol) AS sum_val
      FROM (
        SELECT 1L as vcol, c1 FROM ut1
        UNION ALL
        SELECT -1L as vcol, c1 FROM ut2
      ) AS union_all
      GROUP BY union_all.c1
    )
    WHERE sum_val > 0
  )
)
```
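To make the semantics of the rewrite concrete, here is a small Python sketch that follows the same stages on plain lists (it is not Spark code, and the name `except_all` is hypothetical): rows from the left side count as +1, rows from the right side as -1, and each key with a positive sum is replicated that many times.

```python
from collections import defaultdict


def except_all(left, right):
    """Multiset difference: keep each value (left count - right count) times."""
    sum_val = defaultdict(int)
    for c1 in left:   # SELECT 1L as vcol, c1 FROM ut1
        sum_val[c1] += 1
    for c1 in right:  # SELECT -1L as vcol, c1 FROM ut2
        sum_val[c1] -= 1
    result = []
    for c1, total in sum_val.items():
        if total > 0:  # WHERE sum_val > 0
            # Replicate the row sum_val times.
            result.extend([c1] * total)
    return result


print(sorted(except_all([1, 1, 1, 2, 3], [1, 2, 2, 4])))  # [1, 1, 3]
```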

## How was this patch tested?

Added test cases in SQLQueryTestSuite, DataFrameSuite and SetOperationSuite

Author: Dilip Biswal <dbiswal@us.ibm.com>

Closes #21857 from dilipbiswal/dkb_except_all_final.

**Description**

Currently, speculative tasks that didn't commit can show up as successful (depending on the timing of the commit). This is a bit confusing because such a task didn't really succeed, in the sense that it didn't write anything.

I think these tasks should be marked as KILLED or something that makes it more obvious to the user exactly what happened. If a task happens to hit the timing where it gets a commit denied exception, it shows up as failed and counts against your task failures. It shouldn't count against task failures since that failure really doesn't matter.

MapReduce handles this situation, so perhaps we can look there for a model.

<img width="1420" alt="unknown" src="https://user-images.githubusercontent.com/15680678/42013170-99db48c2-7a61-11e8-8c7b-ef94c84e36ea.png">

**How can this issue happen?**

When both attempts of a task finish before the driver sends the command to kill one of them, both of them send the status update FINISHED to the driver. The driver calls TaskSchedulerImpl to handle one successful task at a time. When it handles the first successful task, it sends the command to kill the other copy of the task; however, because that task has already finished, the executor ignores the command. After handling the first attempt, the driver processes the second one. Although all actions on the result of this task are skipped, this copy of the task is still marked as SUCCESS. As a result, even though this issue does not affect the result of the job, it might confuse users because both attempts appear to be successful.

**How does this PR fix the issue?**

The simple way to fix this issue is that when taskSetManager handles a successful task, it checks whether any other attempt has succeeded. If this is the case, it calls handleFailedTask with state==KILLED and reason==TaskKilled("another attempt succeeded") to handle this task as being killed.
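The check described above can be sketched as follows. This is a deliberately simplified Python model, not the real `TaskSetManager`: `ToyTaskSetManager` and its fields are hypothetical, and only the "has another attempt already succeeded?" decision is modeled.

```python
class ToyTaskSetManager:
    """Hypothetical, simplified model of per-attempt bookkeeping."""

    def __init__(self, num_tasks):
        self.successful = [False] * num_tasks
        self.attempt_state = {}  # attempt id -> (state, reason)

    def handle_successful_task(self, attempt_id, task_index):
        if self.successful[task_index]:
            # Another attempt of this task already succeeded: record this one
            # as killed instead of as a second success.
            self.handle_failed_task(attempt_id, "KILLED", "another attempt succeeded")
            return
        self.successful[task_index] = True
        self.attempt_state[attempt_id] = ("SUCCESS", None)

    def handle_failed_task(self, attempt_id, state, reason):
        self.attempt_state[attempt_id] = (state, reason)
```

Feeding two FINISHED updates for the same task index into `handle_successful_task` leaves exactly one attempt in the SUCCESS state, which matches the behavior the unit test in this PR checks for.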

**How was this patch tested?**

I tested this manually by running applications that caused the issue before a few times, and observed that the issue does not happen again. I also added a unit test in TaskSetManagerSuite to check that if we call handleSuccessfulTask to handle status updates for 2 copies of a task, only the one that is handled first is marked as SUCCESS.

Author: Hieu Huynh <Hieu.huynh@oath.com>

Author: hthuynh2 <hthieu96@gmail.com>

Closes #21653 from hthuynh2/SPARK_13343.

## What changes were proposed in this pull request?

Removes check that `spark.executor.instances` is set to 0 when using Streaming DRA.

## How was this patch tested?

Manual tests

My only concern with this PR is that `spark.executor.instances` (or the actual initial number of executors that the cluster manager gives Spark) can be outside of `spark.streaming.dynamicAllocation.minExecutors` to `spark.streaming.dynamicAllocation.maxExecutors`. I don't see a good way around that, because this code only runs after the SparkContext has been created.

Author: Karthik Palaniappan <karthikpal@google.com>

Closes #19183 from karth295/master.

## What changes were proposed in this pull request?

In the PR, I added a new option for the Avro datasource: `compression`. The option allows specifying the compression codec for saved Avro files. It is similar to the `compression` option in other datasources like `JSON` and `CSV`.

I also added the SQL configs `spark.sql.avro.compression.codec` and `spark.sql.avro.deflate.level`, and put them into `SQLConf`. If the `compression` option is not specified by a user, the first SQL config is taken into account.
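The precedence between the per-write option and the SQL config can be sketched in Python as below. This is an illustration only: `resolve_avro_codec` is a hypothetical helper, and both the case-insensitive option lookup and the `"snappy"` fallback are assumptions rather than facts taken from this PR.

```python
def resolve_avro_codec(write_options, sql_conf, fallback="snappy"):
    """Pick the codec: the write option wins, then the SQL config, then a fallback."""
    # Assumption: option keys are matched case-insensitively.
    options = {key.lower(): value for key, value in write_options.items()}
    if "compression" in options:
        return options["compression"]
    # `fallback` is a placeholder default for this sketch.
    return sql_conf.get("spark.sql.avro.compression.codec", fallback)
```

For example, `resolve_avro_codec({"compression": "deflate"}, {"spark.sql.avro.compression.codec": "uncompressed"})` picks the per-write value `"deflate"`.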

## How was this patch tested?

I added a new test which reads the meta info from written Avro files and checks the `avro.codec` property.

Author: Maxim Gekk <maxim.gekk@databricks.com>

Closes #21837 from MaxGekk/avro-compression.

## What changes were proposed in this pull request?

Please see [SPARK-24927][1] for more details.

[1]: https://issues.apache.org/jira/browse/SPARK-24927

## How was this patch tested?

Manually tested.

Author: Cheng Lian <lian.cs.zju@gmail.com>

Closes #21879 from liancheng/spark-24927.

(cherry picked from commit d5f340f277)

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This PR adds a new collection function: shuffle. It generates a random permutation of the given array. This implementation uses the "inside-out" version of Fisher-Yates algorithm.
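For reference, the "inside-out" variant builds the shuffled copy while reading the source, so the input is never modified. The following Python sketch shows the general algorithm; it is not the Spark implementation, and `shuffle_inside_out` is a hypothetical name.

```python
import random


def shuffle_inside_out(source, rng=random):
    """Return a uniformly random permutation of `source` without mutating it."""
    result = []
    for i, item in enumerate(source):
        # Pick a slot j in [0, i]; randint is inclusive on both ends.
        j = rng.randint(0, i)
        if j == i:
            result.append(item)
        else:
            # Move the element currently at j to the end, then place the
            # new item at position j.
            result.append(result[j])
            result[j] = item
    return result


print(shuffle_inside_out([1, 2, 3, 4, 5]))
```

Passing a seeded `random.Random` instance as `rng` makes the permutation reproducible, which is convenient in tests.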

## How was this patch tested?

New tests are added to CollectionExpressionsSuite.scala and DataFrameFunctionsSuite.scala.

Author: Takuya UESHIN <ueshin@databricks.com>

Author: pkuwm <ihuizhi.lu@gmail.com>

Closes #21802 from ueshin/issues/SPARK-23928/shuffle.

## What changes were proposed in this pull request?

Add a JDBC Option "pushDownPredicate" (default `true`) to allow/disallow predicate push-down in JDBC data source.

## How was this patch tested?

Add a test in `JDBCSuite`

Author: maryannxue <maryannxue@apache.org>

Closes #21875 from maryannxue/spark-24288.

## What changes were proposed in this pull request?

AnalysisBarrier was introduced in SPARK-20392 to improve analysis speed (don't re-analyze nodes that have already been analyzed).

Before AnalysisBarrier, we already had some infrastructure in place, with analysis-specific functions (resolveOperators and resolveExpressions). These functions do not recursively traverse down subplans that are already analyzed (tracked with a mutable boolean flag _analyzed). The issue with the old system was that developers started using transformDown, which does a top-down traversal of the plan tree, because there was no top-down resolution function, and as a result analyzer performance became pretty bad.

In order to fix the issue in SPARK-20392, AnalysisBarrier was introduced as a special node, and for this special node transform/transformUp/transformDown don't traverse down. However, the introduction of this special node caused a lot more trouble than it solved. This implicit node breaks assumptions and code in a few places, and it's hard to know when an analysis barrier would exist and when it wouldn't. Just a simple search for AnalysisBarrier in PR discussions demonstrates that it is a source of bugs and additional complexity.

Instead, this pull request removes AnalysisBarrier and reverts back to the old approach. We added infrastructure in tests that fail explicitly if transform methods are used in the analyzer.
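The idea of skipping already-analyzed subplans can be sketched with a toy plan tree in Python. The `Plan` class and the `resolve_operators` function below are hypothetical stand-ins that only illustrate the traversal; they are not the Catalyst API.

```python
class Plan:
    """Toy logical plan node with a mutable `analyzed` flag."""

    def __init__(self, name, children=(), analyzed=False):
        self.name = name
        self.children = list(children)
        self.analyzed = analyzed


def resolve_operators(plan, rule, visited):
    """Bottom-up rewrite that does not descend into analyzed subtrees."""
    if plan.analyzed:
        # Already analyzed: return as-is without visiting its children.
        return plan
    visited.append(plan.name)
    plan.children = [resolve_operators(c, rule, visited) for c in plan.children]
    return rule(plan)
```

In a tree such as `Project -> Filter -> (analyzed subtree)`, only `Project` and `Filter` are visited, which is the saving that a plain `transformDown` does not provide.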

## How was this patch tested?

Added a test suite AnalysisHelperSuite for testing the resolve* methods and transform* methods.

Author: Reynold Xin <rxin@databricks.com>

Author: Xiao Li <gatorsmile@gmail.com>

Closes #21822 from rxin/SPARK-24865.

## What changes were proposed in this pull request?

If you try to get out of the loop that assigns the JIRA user with command+c (KeyboardInterrupt), you are unable to get out. I faced this problem when the user did not have a contributor role and I just wanted to cancel and take action on the JIRA manually.

**Before:**

```

JIRA is unassigned, choose assignee

[0] todd.chen (Reporter)

Enter number of user, or userid, to assign to (blank to leave unassigned):Traceback (most recent call last):

File "./dev/merge_spark_pr.py", line 322, in choose_jira_assignee

"Enter number of user, or userid, to assign to (blank to leave unassigned):")

KeyboardInterrupt

Error assigning JIRA, try again (or leave blank and fix manually)

JIRA is unassigned, choose assignee

[0] todd.chen (Reporter)

Enter number of user, or userid, to assign to (blank to leave unassigned):Traceback (most recent call last):

File "./dev/merge_spark_pr.py", line 322, in choose_jira_assignee

"Enter number of user, or userid, to assign to (blank to leave unassigned):")

KeyboardInterrupt

```

**After:**

```

JIRA is unassigned, choose assignee

[0] Dongjoon Hyun (Reporter)

Enter number of user, or userid to assign to (blank to leave unassigned):Traceback (most recent call last):

File "./dev/merge_spark_pr.py", line 322, in choose_jira_assignee

"Enter number of user, or userid to assign to (blank to leave unassigned):")

KeyboardInterrupt

Restoring head pointer to master

git checkout master

Already on 'master'

git branch

```
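The behavior change can be sketched as follows. This is not the actual `merge_spark_pr.py` code: `choose_assignee`, `prompt` and `max_attempts` are hypothetical names. The sketch only shows the pattern of re-raising `KeyboardInterrupt` ahead of a catch-all handler that retries.

```python
def choose_assignee(prompt, max_attempts=3):
    """Retry on ordinary errors, but let Ctrl+C (KeyboardInterrupt) escape."""
    for _ in range(max_attempts):
        try:
            return prompt()
        except KeyboardInterrupt:
            # Re-raise so the caller can clean up and exit instead of looping.
            raise
        except:  # noqa: E722 - stands in for a catch-all retry handler
            print("Error assigning JIRA, try again (or leave blank and fix manually)")
    return None
```

Without the explicit `except KeyboardInterrupt` branch, the bare `except:` would swallow the interrupt and the loop would simply prompt again, which matches the "Before" output.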

## How was this patch tested?

I tested this manually (I use my own merging script with a few fixes).

Author: hyukjinkwon <gurwls223@apache.org>

Closes #21880 from HyukjinKwon/key-error.

## What changes were proposed in this pull request?

Initialize SaslEncryption$EncryptedMessage.byteChannel lazily, so that empty, not yet used instances of ByteArrayWritableChannel referenced by this field don't use up memory.

I analyzed a heap dump from a YARN NodeManager where this code is used, and found that there are over 40,000 of the above objects in memory, each with a big empty byte[] array. They are all there because Netty queued up a large number of messages in memory before transferTo() is called. There is a small number of Netty ChannelOutboundBuffer objects, and collectively, via linked lists starting from their flushedEntry data fields, they end up referencing over 40K ChannelOutboundBuffer$Entry objects, which ultimately reference EncryptedMessage objects.
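The lazy-initialization pattern itself is simple; a minimal Python sketch is shown below. `EncryptedMessageSketch` and `max_chunk_size` are hypothetical names, and a `bytearray` stands in for the buffer behind the channel.

```python
class EncryptedMessageSketch:
    """Toy message whose buffer is allocated on first use, not on creation."""

    def __init__(self, max_chunk_size):
        self.max_chunk_size = max_chunk_size
        self._byte_channel = None  # nothing allocated while the message is queued

    @property
    def byte_channel(self):
        if self._byte_channel is None:
            # Allocate only when the message is actually being transferred.
            self._byte_channel = bytearray(self.max_chunk_size)
        return self._byte_channel
```

With this shape, tens of thousands of queued messages hold only a `None` reference each until they are written out.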

## How was this patch tested?

Ran all the tests locally.

Author: Misha Dmitriev <misha@cloudera.com>

Closes #21811 from countmdm/misha/spark-24801.

## What changes were proposed in this pull request?

In most cases, we should use `spark.sessionState.newHadoopConf()` instead of `sparkContext.hadoopConfiguration`, so that the Hadoop configurations specified in the Spark session configuration will come into effect.

Add a rule matching `spark.sparkContext.hadoopConfiguration` or `spark.sqlContext.sparkContext.hadoopConfiguration` to prevent the usage.

## How was this patch tested?

Unit test

Author: Gengliang Wang <gengliang.wang@databricks.com>

Closes #21873 from gengliangwang/linterRule.

In case there are any issues in converting FileSegmentManagedBuffer to ChunkedByteBuffer, add a conf to go back to the old code path.

Followup to 7e847646d1

Author: Imran Rashid <irashid@cloudera.com>

Closes #21867 from squito/SPARK-24307-p2.

## What changes were proposed in this pull request?

Propose new APIs and modify job/task scheduling to support barrier execution mode, which requires all tasks in the same barrier stage to start at the same time, and retries all tasks if some tasks fail in the middle. The barrier execution mode is useful for some ML/DL workloads.

The proposed API changes include:

- `RDDBarrier` that marks an RDD as barrier (Spark must launch all the tasks together for the current stage).

- `BarrierTaskContext` that supports global sync of all tasks in a barrier stage, and provides extra `BarrierTaskInfo`s.

In DAGScheduler, we retry all tasks of a barrier stage if some tasks fail in the middle. This is achieved by unregistering map outputs for a shuffleId (for ShuffleMapStage) or clearing the finished partitions in an active job (for ResultStage).

## How was this patch tested?

Add `RDDBarrierSuite` to ensure we convert RDDs correctly;

Add new test cases in `DAGSchedulerSuite` to ensure we do task scheduling correctly;

Add new test cases in `SparkContextSuite` to ensure the barrier execution mode actually works (both under local mode and local cluster mode).

Add new test cases in `TaskSchedulerImplSuite` to ensure we schedule tasks for barrier taskSet together.

Author: Xingbo Jiang <xingbo.jiang@databricks.com>

Closes #21758 from jiangxb1987/barrier-execution-mode.

## What changes were proposed in this pull request?

This is an extension to the original PR, in which rule exclusion did not work for classes derived from Optimizer, e.g., SparkOptimizer.

To solve this issue, Optimizer and its derived classes will define/override `defaultBatches` and `nonExcludableRules` in order to define its default rule set as well as rules that cannot be excluded by the SQL config. In the meantime, Optimizer's `batches` method is dedicated to the rule exclusion logic and is defined "final".
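The split of responsibilities can be sketched in Python as below. All class and rule names (`OptimizerSketch`, `SparkOptimizerSketch`, `RuleA`, and so on) are hypothetical. Only the structure is meant to mirror the description: subclasses override the default batches and the non-excludable rules, while a single `batches` method, treated as final here, applies the exclusion logic.

```python
class OptimizerSketch:
    def default_batches(self):
        return [("Main", ["RuleA", "RuleB"]), ("Finish", ["RuleC"])]

    def non_excludable_rules(self):
        return {"RuleC"}

    def batches(self, excluded):
        """Exclusion logic; subclasses are not expected to override this."""
        to_exclude = set(excluded) - self.non_excludable_rules()
        result = []
        for name, rules in self.default_batches():
            kept = [rule for rule in rules if rule not in to_exclude]
            if kept:  # drop batches that end up empty
                result.append((name, kept))
        return result


class SparkOptimizerSketch(OptimizerSketch):
    def default_batches(self):
        # A derived optimizer extends the default rule set.
        return super().default_batches() + [("Extra", ["ExtraRule"])]
```

Because the exclusion is applied on top of whatever `default_batches` returns, rules added by a derived class can be excluded as well, which is the case the original PR missed.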

## How was this patch tested?

Added UT.

Author: maryannxue <maryannxue@apache.org>

Closes #21876 from maryannxue/rule-exclusion.

## What changes were proposed in this pull request?

This PR aims to do the following.

1. Like `com.databricks.spark.csv` mapping, we had better map `com.databricks.spark.avro` to built-in Avro data source.

2. Remove incorrect error message, `Please find an Avro package at ...`.

## How was this patch tested?

Pass the newly added tests.

Author: Dongjoon Hyun <dongjoon@apache.org>

Closes #21878 from dongjoon-hyun/SPARK-24924.

## What changes were proposed in this pull request?

If we use the `reverse` function on an array of a primitive type that contains `null`, and the child array is `UnsafeArrayData`, the function returns a wrong result because `UnsafeArrayData` doesn't define the behavior of re-assignment; in particular, we can't set a valid value after we set `null`.

## How was this patch tested?

Added some tests.

Author: Takuya UESHIN <ueshin@databricks.com>

Closes #21830 from ueshin/issues/SPARK-24878/fix_reverse.

## What changes were proposed in this pull request?

```Scala

val udf1 = udf({(x: Int, y: Int) => x + y})

val df = spark.range(0, 3).toDF("a")

.withColumn("b", udf1($"a", udf1($"a", lit(10))))

df.cache()

df.write.saveAsTable("t")

```

Cache is not being used because the plans do not match with the cached plan. This is a regression caused by the changes we made in AnalysisBarrier, since not all the Analyzer rules are idempotent.

## How was this patch tested?

Added a test.

Also found a bug in the DSV1 write path. This is not a regression. Thus, opened a separate JIRA https://issues.apache.org/jira/browse/SPARK-24869

Author: Xiao Li <gatorsmile@gmail.com>

Closes #21821 from gatorsmile/testMaster22.

## What changes were proposed in this pull request?

Besides the Spark setting `spark.sql.sources.partitionOverwriteMode`, also allow setting `partitionOverWriteMode` per write.

## How was this patch tested?

Added unit test in InsertSuite

Author: Koert Kuipers <koert@tresata.com>

Closes #21818 from koertkuipers/feat-partition-overwrite-mode-per-write.

## What changes were proposed in this pull request?

Enable client mode integration test after merging from master.

## How was this patch tested?

Check the integration test runs in the build.

Author: mcheah <mcheah@palantir.com>

Closes #21874 from mccheah/enable-client-mode-test.

## What changes were proposed in this pull request?

In the PR, I propose to extend the `StructType`/`StructField` classes with a new method `toDDL`, which converts a value of the `StructType`/`StructField` type to a string formatted in DDL style. The resulting string can be used in a table creation.

The `toDDL` method of `StructField` is reused in `SHOW CREATE TABLE`. In this way the PR fixes the bug of unquoted names of nested fields.
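To show why quoting matters for nested field names, here is a small Python sketch of DDL-style formatting. It is a loose illustration, not the `StructType.toDDL` implementation: the helper names, the exact separators and the list-of-tuples schema representation are all assumptions made for this example.

```python
def quote(name):
    """Quote an identifier with backticks, escaping embedded backticks."""
    return "`" + name.replace("`", "``") + "`"


def type_to_ddl(data_type):
    """`data_type` is either a type name or a list of (name, type) fields."""
    if isinstance(data_type, str):
        return data_type
    inner = ", ".join(f"{quote(n)}: {type_to_ddl(t)}" for n, t in data_type)
    return f"STRUCT<{inner}>"


def struct_to_ddl(fields):
    """Format top-level fields as a comma-separated column list."""
    return ",".join(f"{quote(n)} {type_to_ddl(t)}" for n, t in fields)


print(struct_to_ddl([("id", "INT"), ("my col", [("x.y", "STRING")])]))
```

Because every level goes through `quote`, a nested field such as `x.y` stays a single identifier instead of being read as a path.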

## How was this patch tested?

I added a test for checking the new method and 2 round trip tests: `fromDDL` -> `toDDL` and `toDDL` -> `fromDDL`

Author: Maxim Gekk <maxim.gekk@databricks.com>

Closes #21803 from MaxGekk/to-ddl.

## What changes were proposed in this pull request?

Support client mode for the Kubernetes scheduler.

Client mode works more or less identically to cluster mode. However, in client mode, the Spark Context needs to be manually bootstrapped with certain properties which would have otherwise been set up by spark-submit in cluster mode. Specifically:

- If the user doesn't provide a driver pod name, we don't add an owner reference. This is for usage when the driver is not running in a pod in the cluster. In such a case, the driver can only provide a best effort to clean up the executors when the driver exits, but cleaning up the resources is not guaranteed. The executor JVMs should exit if the driver JVM exits, but the pods will still remain in the cluster in a COMPLETED or FAILED state.

- The user must provide a host (spark.driver.host) and port (spark.driver.port) that the executors can connect to. When using spark-submit in cluster mode, spark-submit generates the headless service automatically; in client mode, the user is responsible for setting up their own connectivity.

We also change the authentication configuration prefixes for client mode.

## How was this patch tested?

Adding an integration test to exercise client mode support.

Author: mcheah <mcheah@palantir.com>

Closes #21748 from mccheah/k8s-client-mode.

## What changes were proposed in this pull request?

After rethinking the artifactId, I think it should be `spark-avro` instead of `spark-sql-avro`, which is simpler and consistent with the previous artifactId. I think we need to change it before the Spark 2.4 release.

Also a tiny change: use `spark.sessionState.newHadoopConf()` to get the hadoop configuration, thus the related hadoop configurations in SQLConf will come into effect.

## How was this patch tested?

Unit test

Author: Gengliang Wang <gengliang.wang@databricks.com>

Closes #21866 from gengliangwang/avro_followup.

## What changes were proposed in this pull request?

Improve the type-mismatch message of the `IN` predicate. Before this patch:

```sql

Mismatched columns:

[(, t, 4, ., `, t, 4, a, `, :, d, o, u, b, l, e, ,, , t, 5, ., `, t, 5, a, `, :, d, e, c, i, m, a, l, (, 1, 8, ,, 0, ), ), (, t, 4, ., `, t, 4, c, `, :, s, t, r, i, n, g, ,, , t, 5, ., `, t, 5, c, `, :, b, i, g, i, n, t, )]

```

After this patch:

```sql

Mismatched columns:

[(t4.`t4a`:double, t5.`t5a`:decimal(18,0)), (t4.`t4c`:string, t5.`t5c`:bigint)]

```
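The garbled "before" output is what one gets when a string is accidentally treated as a sequence of characters before being joined. That is an assumption about the cause, not something stated in this PR. The effect is easy to reproduce in Python with a hypothetical `format_mismatched` helper:

```python
def format_mismatched(columns):
    """Join already-formatted column pairs into a bracketed list."""
    return "[" + ", ".join(columns) + "]"


pairs = ["(a:double, b:decimal(18,0))", "(c:string, d:bigint)"]

# Intended: join whole strings.
good = format_mismatched(pairs)

# Accidental: each string is flattened into characters before joining.
flattened = [ch for pair in pairs for ch in pair]
bad = format_mismatched(flattened)
```

Here `good` is a readable list of column pairs, while `bad` starts with `[(, a, :, d, ...`, mirroring the shape of the message before the patch.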

## How was this patch tested?

unit tests

Author: Yuming Wang <yumwang@ebay.com>

Closes #21863 from wangyum/SPARK-18874.

## What changes were proposed in this pull request?

Add support for custom encoding on csv writer, see https://issues.apache.org/jira/browse/SPARK-19018

## How was this patch tested?

Added two unit tests in CSVSuite

Author: crafty-coder <carlospb86@gmail.com>

Author: Carlos <crafty-coder@users.noreply.github.com>

Closes #20949 from crafty-coder/master.

## What changes were proposed in this pull request?

Thanks to henryr for the original idea at https://github.com/apache/spark/pull/21049

Description from the original PR:

Subqueries (at least in SQL) have 'bag of tuples' semantics. Ordering them is therefore redundant (unless combined with a limit).

This patch removes the top sort operators from the subquery plans.

This closes https://github.com/apache/spark/pull/21049.

## How was this patch tested?

Added test cases in SubquerySuite to cover in, exists and scalar subqueries.

Author: Dilip Biswal <dbiswal@us.ibm.com>

Closes #21853 from dilipbiswal/SPARK-23957.

## What changes were proposed in this pull request?

When `trueValue` and `falseValue` are semantically equivalent, the condition expression in `if` can be removed to avoid extra computation at runtime.
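A minimal Python sketch of such a simplification is shown below. The `Lit` and `If` classes are toy expression nodes, and structural equality of frozen dataclasses stands in for semantic equivalence. Both are assumptions of this example, which also presumes that the predicate has no side effects and so can be dropped safely.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lit:
    value: object


@dataclass(frozen=True)
class If:
    predicate: object
    true_value: object
    false_value: object


def simplify(expr):
    """Replace If(p, x, x) with x, recursively."""
    if isinstance(expr, If):
        true_value = simplify(expr.true_value)
        false_value = simplify(expr.false_value)
        if true_value == false_value:
            # Both branches agree, so the predicate never needs evaluating.
            return true_value
        return If(simplify(expr.predicate), true_value, false_value)
    return expr
```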

## How was this patch tested?

Test added.

Author: DB Tsai <d_tsai@apple.com>

Closes #21848 from dbtsai/short-circuit-if.

## What changes were proposed in this pull request?

The `HandleNullInputsForUDF` rule always adds a new `If` node every time it is applied. This makes a plan that is analyzed once differ from the same plan analyzed twice (or more), which causes issues such as plans not matching in the cache manager. The solution is to mark the arguments as null-checked by adding a `KnownNotNull` node above them when the UDF is placed under an `If` node, because the UDF is clearly not called when any of those arguments is null.
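The effect of the marker can be sketched with a toy rule. All classes below are hypothetical stand-ins defined for this example, not Spark's analyzer. Once the arguments are wrapped in `KnownNotNull`, a second application of the rule finds nothing left to guard and leaves the expression unchanged.

```scala
object NullCheckedUdf {
  // Toy expression nodes (illustrative only).
  sealed trait Expr
  final case class Attr(name: String) extends Expr
  final case class IsNull(child: Expr) extends Expr
  final case class Or(left: Expr, right: Expr) extends Expr
  case object NullLit extends Expr
  final case class KnownNotNull(child: Expr) extends Expr
  final case class Udf(name: String, args: Seq[Expr]) extends Expr
  final case class If(cond: Expr, trueValue: Expr, falseValue: Expr) extends Expr

  // Guards a UDF with a null check unless every argument is already marked.
  def handleNullInputs(e: Expr): Expr = e match {
    case Udf(name, args) if args.nonEmpty && !args.forall(_.isInstanceOf[KnownNotNull]) =>
      val unchecked = args.filterNot(_.isInstanceOf[KnownNotNull])
      val anyNull = unchecked.map(a => IsNull(a): Expr).reduce(Or(_, _))
      val marked = args.map {
        case k: KnownNotNull => k
        case a               => KnownNotNull(a)
      }
      If(anyNull, NullLit, Udf(name, marked))
    case If(c, t, f) => If(handleNullInputs(c), handleNullInputs(t), handleNullInputs(f))
    case other       => other
  }
}
```

Applying `handleNullInputs` twice yields the same tree as applying it once, which is the property the cache manager relies on when comparing plans.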

## How was this patch tested?

Add new tests under sql/UDFSuite and AnalysisSuite.

Author: maryannxue <maryannxue@apache.org>

Closes #21851 from maryannxue/spark-24891.

Fetch-to-mem is guaranteed to fail if the message is bigger than 2 GB, so we might as well use fetch-to-disk in that case. The message includes some metadata in addition to the block data itself (in particular UploadBlock has a lot of metadata), so we leave a little room.
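The policy can be sketched as a cap on the fetch-to-memory threshold. The object, the function names, and the 512-byte headroom below are assumptions made for illustration; the text above only says that "a little room" is left for metadata.

```scala
object FetchPolicy {
  // Assumed headroom reserved for message metadata (illustrative value).
  val MetadataHeadroomBytes: Long = 512L

  // Largest block that can still be fetched into memory in a single message under 2 GB.
  val MaxFetchToMemBytes: Long = Int.MaxValue.toLong - MetadataHeadroomBytes

  // The configured threshold is capped so it can never exceed the hard limit.
  def effectiveThreshold(configured: Long): Long =
    math.min(configured, MaxFetchToMemBytes)

  // Blocks above the effective threshold are streamed to disk instead.
  def fetchToDisk(blockSize: Long, configured: Long): Boolean =
    blockSize > effectiveThreshold(configured)
}
```
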

Author: Imran Rashid <irashid@cloudera.com>

Closes #21474 from squito/SPARK-24297.

## What changes were proposed in this pull request?

during my travails in porting spark builds to run on our centos worker, i managed to recreate (as best i could) the centos environment on our new ubuntu-testing machine.

while running my initial builds, lintr was crashing on some extraneous spaces in test_basic.R (see: https://amplab.cs.berkeley.edu/jenkins/job/spark-master-test-sbt-hadoop-2.6-ubuntu-test/862/console)

after removing those spaces, the ubuntu build happily passed the lintr tests.

## How was this patch tested?

i then tested this against a modified spark-master-test-sbt-hadoop-2.6 build (see https://amplab.cs.berkeley.edu/jenkins/view/RISELab%20Infra/job/testing-spark-master-test-with-updated-R-crap/4/), which scp'ed a copy of test_basic.R in to the repo after the git clone. everything seems to be working happily.

Author: shane knapp <incomplete@gmail.com>

Closes #21864 from shaneknapp/fixing-R-lint-spacing.

## What changes were proposed in this pull request?

The SpotBugs Maven plugin was added shortly before the 2.4.0 snapshot artifacts broke. To ensure it does not affect the Maven deploy plugin, this change removes it.

## How was this patch tested?

A local build was run, but this patch will actually be tested by monitoring the Apache repo artifacts and making sure the metadata is correctly uploaded after this job is run: https://amplab.cs.berkeley.edu/jenkins/view/Spark%20Packaging/job/spark-master-maven-snapshots/

Author: Eric Chang <eric.chang@databricks.com>

Closes #21865 from ericfchang/SPARK-24895.

## What changes were proposed in this pull request?

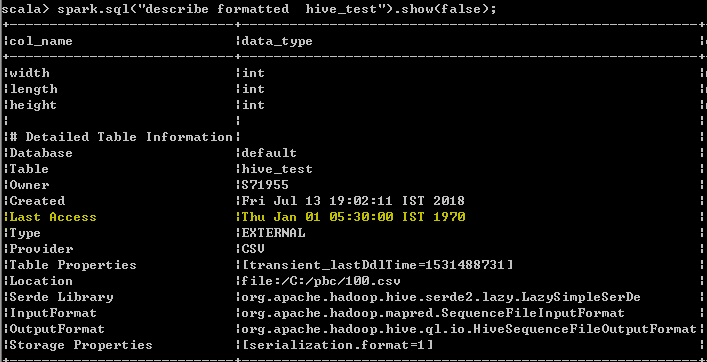

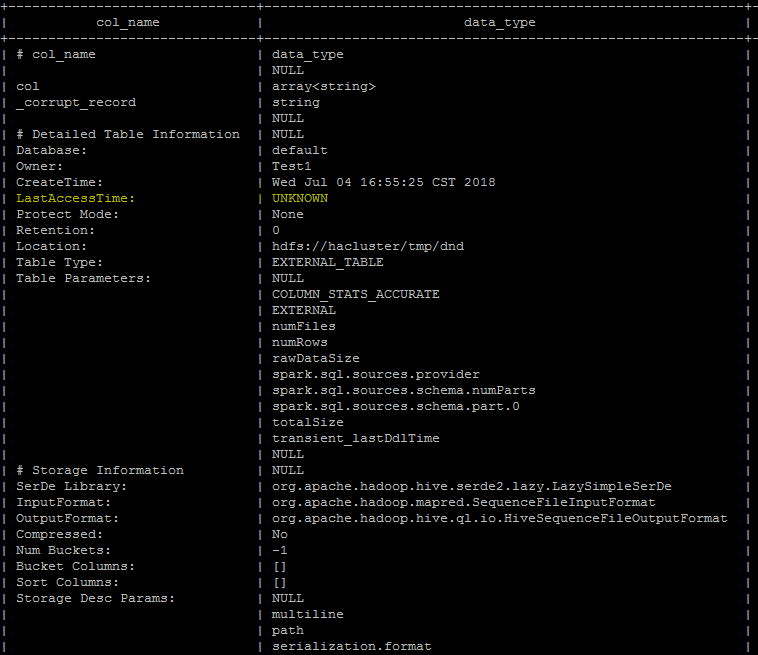

Last Access Time will always displayed wrong date Thu Jan 01 05:30:00 IST 1970 when user run DESC FORMATTED table command

In hive its displayed as "UNKNOWN" which makes more sense than displaying wrong date. seems to be a limitation as of now even from hive, better we can follow the hive behavior unless the limitation has been resolved from hive.

spark client output

Hive client output
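The intended display logic can be sketched as follows. This is a minimal illustration, assuming that a non-positive timestamp means the access time was never recorded; the object and method names are hypothetical and this is not the code changed by the patch.

```scala
import java.util.Date

object LastAccess {
  // Assumption: a value <= 0 means "never recorded", so the epoch date is not shown.
  def format(lastAccessMillis: Long): String =
    if (lastAccessMillis <= 0L) "UNKNOWN" else new Date(lastAccessMillis).toString
}
```
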

## How was this patch tested?

A unit test has been added to make sure that the wrong date "Thu Jan 01 05:30:00 IST 1970" is not set as the value of the Last Access property.

Author: s71955 <sujithchacko.2010@gmail.com>

Closes #21775 from sujith71955/master_hive.

## What changes were proposed in this pull request?

This updates the DataSourceV2 API to use InternalRow instead of Row for the default case with no scan mix-ins.

Support for readers that produce Row is added through SupportsDeprecatedScanRow, which matches the previous API. Readers that used Row now implement this class and should be migrated to InternalRow.

Readers that previously implemented SupportsScanUnsafeRow have been migrated to use no SupportsScan mix-ins and produce InternalRow.

## How was this patch tested?

This uses existing tests.

Author: Ryan Blue <blue@apache.org>

Closes #21118 from rdblue/SPARK-23325-datasource-v2-internal-row.

## What changes were proposed in this pull request?

This is minor and trivial, but an input of 2000 looks good enough to reproduce and test the issue in SPARK-22499.

## How was this patch tested?

Applied the change manually and tested.

Locally tested:

Before: 3m 21s 288ms

After: 1m 29s 134ms

Given the latest successful build took:

```

ArithmeticExpressionSuite:

- SPARK-22499: Least and greatest should not generate codes beyond 64KB (7 minutes, 49 seconds)

```

I expect it's going to save 4ish mins.

Author: hyukjinkwon <gurwls223@apache.org>

Closes #21855 from HyukjinKwon/minor-fix-suite.

## What changes were proposed in this pull request?

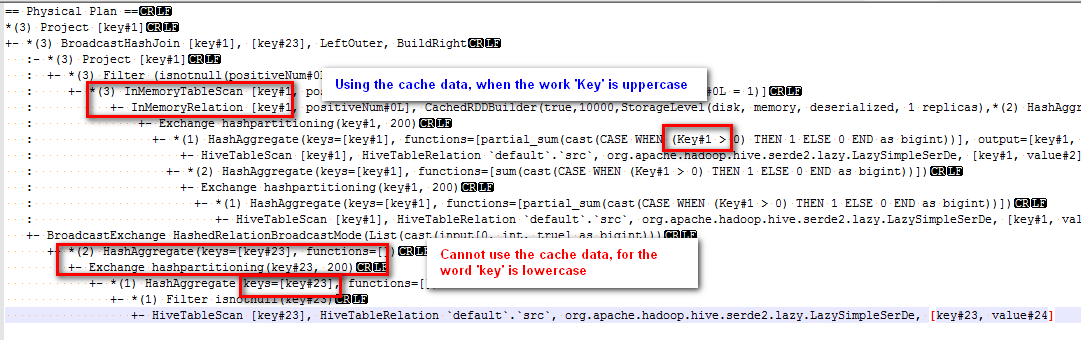

Modified the canonicalized to not case-insensitive.

Before the PR, cache can't work normally if there are case letters in SQL,

for example:

sql("CREATE TABLE IF NOT EXISTS src (key INT, value STRING) USING hive")

sql("select key, sum(case when Key > 0 then 1 else 0 end) as positiveNum " +

"from src group by key").cache().createOrReplaceTempView("src_cache")

sql(

s"""select a.key

from

(select key from src_cache where positiveNum = 1)a

left join

(select key from src_cache )b

on a.key=b.key

""").explain

The physical plan of the sql is:

The subquery "select key from src_cache where positiveNum = 1" on the left of join can use the cache data, but the subquery "select key from src_cache" on the right of join cannot use the cache data.

## How was this patch tested?

Added a new test.

Author: 10129659 <chen.yanshan@zte.com.cn>

Closes #21823 from eatoncys/canonicalized.

## What changes were proposed in this pull request?

This PR introduces metrics for YARN. Because the YARN module had no metrics until now, a new metric system named "applicationMaster" is created.

To support both client and cluster mode the metric system lifecycle is bound to the AM.

## How was this patch tested?

Both client and cluster modes were tested manually.

Before the test, spark-core was removed from one of the YARN nodes to cause allocation failures.

Spark was started as (in case of client mode):

```
spark2-submit \
--class org.apache.spark.examples.SparkPi \
--conf "spark.yarn.blacklist.executor.launch.blacklisting.enabled=true" \
--conf "spark.blacklist.application.maxFailedExecutorsPerNode=2" \
--conf "spark.dynamicAllocation.enabled=true" \
--conf "spark.metrics.conf.*.sink.console.class=org.apache.spark.metrics.sink.ConsoleSink" \
--master yarn \
--deploy-mode client \
original-spark-examples_2.11-2.4.0-SNAPSHOT.jar \
1000
```

In both cases the YARN logs contained the new metrics as:

```

$ yarn logs --applicationId application_1529926424933_0015

...

-- Gauges ----------------------------------------------------------------------

application_1531751594108_0046.applicationMaster.numContainersPendingAllocate

value = 0

application_1531751594108_0046.applicationMaster.numExecutorsFailed

value = 3

application_1531751594108_0046.applicationMaster.numExecutorsRunning

value = 9

application_1531751594108_0046.applicationMaster.numLocalityAwareTasks

value = 0

application_1531751594108_0046.applicationMaster.numReleasedContainers

value = 0

...

```

Author: “attilapiros” <piros.attila.zsolt@gmail.com>

Author: Attila Zsolt Piros <2017933+attilapiros@users.noreply.github.com>

Closes #21635 from attilapiros/SPARK-24594.

## What changes were proposed in this pull request?

Modify the strategy in ColumnPruning to add a Project between ScriptTransformation and its child. This can reduce the scan time, especially when the table has many columns.
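A rough sketch of the rewrite on a toy plan tree is shown below. The `Plan` classes and `prune` are hypothetical and defined only for this example, not Spark's Catalyst classes. The sketch inserts a `Project` that keeps only the columns the script reads, and does nothing when the child already outputs only those columns.

```scala
object ScriptPruning {
  // Toy logical plan nodes; `output` lists the column names a node produces.
  sealed trait Plan { def output: Seq[String] }
  final case class Scan(table: String, output: Seq[String]) extends Plan
  final case class Project(output: Seq[String], child: Plan) extends Plan
  final case class ScriptTransformation(
      input: Seq[String], script: String, output: Seq[String], child: Plan) extends Plan

  // Adds a Project below ScriptTransformation when the child produces unused columns.
  def prune(plan: Plan): Plan = plan match {
    case st @ ScriptTransformation(input, _, _, child)
        if child.output.exists(c => !input.contains(c)) =>
      st.copy(child = Project(child.output.filter(c => input.contains(c)), child))
    case other => other
  }
}
```

With a wide table, the underlying scan then only needs to materialize the projected columns.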

## How was this patch tested?

Added unit tests in ColumnPruningSuite and ScriptTransformationSuite.

Author: Yuanjian Li <xyliyuanjian@gmail.com>

Closes #21839 from xuanyuanking/SPARK-24339.

## What changes were proposed in this pull request?

Streaming queries with watermarks do not work with Trigger.Once for the following reasons.

- The watermark is updated in driver memory after a batch completes, but it is persisted to the checkpoint (in the offset log) only when the next batch is planned.

- With Trigger.Once, the query terminates as soon as one batch has completed. Hence, the updated watermark is never persisted anywhere.

The simple solution is to persist the updated watermark value in the commit log when a batch is marked as completed. The next batch, in the next Trigger.Once run, can then pick it up from the commit log.
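The difference can be illustrated with a small simulation. The `Checkpoint` class and `runOnce` below are in-memory stand-ins invented for this sketch, not Spark's checkpoint implementation. Each call models one Trigger.Once run and returns the watermark that run started with.

```scala
object TriggerOnceWatermark {
  // Hypothetical in-memory stand-ins for the two checkpoint logs.
  final class Checkpoint {
    var offsetLogWatermark: Long = 0L           // written when a batch is planned
    var commitLogWatermark: Option[Long] = None // written when a batch completes
  }

  // Runs a single batch and then "terminates", as Trigger.Once does.
  def runOnce(cp: Checkpoint, maxEventTime: Long, delay: Long, persistOnCommit: Boolean): Long = {
    val start = cp.commitLogWatermark.getOrElse(cp.offsetLogWatermark)
    cp.offsetLogWatermark = start                       // planning records the watermark in use
    val updated = math.max(start, maxEventTime - delay) // known only in driver memory
    if (persistOnCommit) cp.commitLogWatermark = Some(updated)
    start
  }
}
```

Without `persistOnCommit`, every run starts again from the old watermark; with it, the second run starts from the value computed by the first.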

## How was this patch tested?

New unit tests.

Co-authored-by: Tathagata Das <tathagata.das1565@gmail.com>

Co-authored-by: c-horn <chorn4033@gmail.com>

Author: Tathagata Das <tathagata.das1565@gmail.com>

Closes #21746 from tdas/SPARK-24699.