## What changes were proposed in this pull request?

1. Remove the parameter `isReadPath`. The supported data types for reading and writing should be the same.

2. Disallow reading `NullType` for the ORC data source. In #21667 and #21389, it was assumed that ORC supports reading `NullType` but can't write it. This doesn't make sense. After reading the docs and running some tests, I found that ORC doesn't support `NullType`.

## How was this patch tested?

Unit test

Closes #23639 from gengliangwang/supportDataType.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

When reading a Hive table and creating RDDs in `TableReader`, Spark throws `java.lang.ClassCastException: org.apache.hadoop.mapreduce.lib.input.TextInputFormat cannot be cast to org.apache.hadoop.mapred.InputFormat` if the table's input format class comes from the `mapreduce` package.

This PR uses `NewHadoopRDD` for input formats from the new `mapreduce` API and keeps `HadoopRDD` for the old `mapred` API.

This PR is based on #23506. The issue can be reproduced by running the new test against the old code. When creating a table with an `org.apache.hadoop.mapreduce.....` input format, the exception is thrown in `org.apache.spark.rdd.HadoopRDD.getInputFormat(HadoopRDD.scala:190)`.
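The core idea is to choose the RDD type from the input format class instead of blindly casting it to the old API. The sketch below shows that dispatch. It assumes hypothetical stand-in traits `OldApiInputFormat` and `NewApiInputFormat` in place of Hadoop's `org.apache.hadoop.mapred.InputFormat` and `org.apache.hadoop.mapreduce.InputFormat`, so it runs without Hadoop on the classpath. The real `TableReader` change may be structured differently.

```scala
// Hypothetical stand-ins for Hadoop's two InputFormat APIs, so the sketch runs without Hadoop.
trait OldApiInputFormat // plays the role of org.apache.hadoop.mapred.InputFormat
trait NewApiInputFormat // plays the role of org.apache.hadoop.mapreduce.InputFormat

class OldTextInputFormatSketch extends OldApiInputFormat
class NewTextInputFormatSketch extends NewApiInputFormat

object InputFormatDispatch {
  /** Picks which RDD kind to build for a table's input format class, instead of casting it. */
  def rddKindFor(inputFormatClass: Class[_]): String = {
    if (classOf[NewApiInputFormat].isAssignableFrom(inputFormatClass)) {
      "NewHadoopRDD" // new mapreduce API
    } else if (classOf[OldApiInputFormat].isAssignableFrom(inputFormatClass)) {
      "HadoopRDD" // old mapred API
    } else {
      throw new IllegalArgumentException(s"Not an input format: ${inputFormatClass.getName}")
    }
  }
}
```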

## How was this patch tested?

Added a new test.

Closes #23559 from Deegue/fix-hadoopRDD.

Lead-authored-by: heguozi <zyzzxycj@gmail.com>

Co-authored-by: Yizhong Zhang <zyzzxycj@163.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Spark core has undesirable `provided` dependencies on the following:

* Hive

* Kafka

This PR extracts them out of core. It contains the following changes:

* Token providers are now loaded with `ServiceLoader`

* Hive token provider moved to hive project

* Kafka token provider extracted into a new project
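Loading providers with a service loader means that core only needs the provider interface. Each module's jar registers its own implementations. The sketch below shows the pattern.

- `TokenProviderSketch` is a hypothetical trait standing in for Spark's token provider interface.
- A real provider would be registered by listing its class name in a `META-INF/services/<fully-qualified trait name>` file inside the Hive or Kafka module's jar.

```scala
import java.util.ServiceLoader
import scala.collection.mutable.ArrayBuffer

// Hypothetical provider interface; implementations need a public no-arg constructor.
trait TokenProviderSketch {
  def serviceName: String
}

object TokenProviderLoading {
  /** Loads every implementation registered under META-INF/services for TokenProviderSketch. */
  def loadProviders(
      loader: ClassLoader = Thread.currentThread().getContextClassLoader): Seq[TokenProviderSketch] = {
    val it = ServiceLoader.load(classOf[TokenProviderSketch], loader).iterator()
    val found = ArrayBuffer.empty[TokenProviderSketch]
    while (it.hasNext) {
      found += it.next()
    }
    found.toList
  }
}
```

A provider only becomes visible when its jar is on the classpath. With nothing registered, as in this stand-alone sketch, the result is simply empty.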

## How was this patch tested?

Existing + newly added unit tests.

Additionally tested on cluster.

Closes #23499 from gaborgsomogyi/SPARK-26254.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

When reading from empty tables, the optimization `OptimizeMetadataOnlyQuery` may return wrong results:

```scala
sql("CREATE TABLE t (col1 INT, p1 INT) USING PARQUET PARTITIONED BY (p1)")
sql("INSERT INTO TABLE t PARTITION (p1 = 5) SELECT ID FROM range(1, 1)")
sql("SELECT MAX(p1) FROM t")
```

The result is supposed to be `null`. However, with the optimization the result is `5`.

The rule was originally ported from https://issues.apache.org/jira/browse/HIVE-1003 in #13494. Hive later disabled the rule by default (https://issues.apache.org/jira/browse/HIVE-15397) due to the same problem.

It is hard to avoid this correctness issue completely. Files of data sources like Parquet can contain only metadata and no rows, and Spark can't tell whether such a partition is empty without actually reading it. This PR disables the optimization by default.
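A toy model in plain Scala, with no Spark APIs, shows why answering `MAX(p1)` from partition metadata alone goes wrong. The partition `p1 = 5` is registered even though the insert wrote zero rows. The `partitions` map is a hypothetical stand-in for the catalog's partition listing.

```scala
object MetadataOnlyPitfall {
  // Partition value -> rows in that partition. The INSERT above registers p1 = 5 with no rows.
  val partitions: Map[Int, Seq[Int]] = Map(5 -> Seq.empty[Int])

  /** What a metadata-only rewrite computes: the max over the partition values it can see. */
  def metadataOnlyMax: Option[Int] =
    partitions.keys.reduceOption(_ max _)

  /** What a full scan computes: the max of p1 over rows that actually exist. */
  def scannedMax: Option[Int] =
    partitions.toSeq.flatMap { case (p1, rows) => rows.map(_ => p1) }.reduceOption(_ max _)
}
```

Here `metadataOnlyMax` is `Some(5)` and `scannedMax` is `None`. These match the wrong result (`5`) and the expected SQL `null` described above.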

## How was this patch tested?

Unit test

Closes #23635 from gengliangwang/optimizeMetadata.

Lead-authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Co-authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The explain output of the Hive CTAS command always shows `InsertIntoHiveTable`, because the name is hardcoded. This happens regardless of whether the command actually writes via Hive's SerDe or is converted into using Spark's data source.

For example:

```
Execute OptimizedCreateHiveTableAsSelectCommand [Database:default, TableName: foo, InsertIntoHiveTable]
```

This CTAS is converted into using Spark's data source, but it still says `InsertIntoHiveTable` in the explain output.

It's better to show the actual class name of the writing command used. For the example above, it'd be:

```
Execute OptimizedCreateHiveTableAsSelectCommand [Database:default, TableName: foo, InsertIntoHadoopFsRelationCommand]
```

## How was this patch tested?

Added test case in `HiveExplainSuite`

Closes #23582 from rednaxelafx/fix-explain-1.

Authored-by: Kris Mok <kris.mok@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This PR converts the hardcoded `spark.dynamicAllocation`, `spark.scheduler`, `spark.rpc`, `spark.task`, `spark.speculation`, and `spark.cleaner` configs to use `ConfigEntry`.
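Moving from hardcoded string keys to `ConfigEntry` puts the key, the value parser, and the default in one typed definition. The sketch below illustrates the benefit with a deliberately simplified, hypothetical `TypedConfigEntry` in plain Scala. Spark's real `ConfigEntry` and `ConfigBuilder` classes are internal and have a richer API. The `spark.speculation` entry is only an example.

```scala
// Hypothetical, simplified stand-in for a typed config entry.
final case class TypedConfigEntry[T](key: String, default: T, parse: String => T) {
  /** Reads the value from a raw settings map, falling back to the default. */
  def readFrom(settings: Map[String, String]): T =
    settings.get(key).map(parse).getOrElse(default)
}

object SchedulerConfigSketch {
  // Callers reference this constant instead of repeating the string key and default.
  val SPECULATION_ENABLED: TypedConfigEntry[Boolean] =
    TypedConfigEntry[Boolean]("spark.speculation", default = false, parse = _.trim.toBoolean)
}
```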

## How was this patch tested?

Existing tests

Closes #23416 from kiszk/SPARK-26463.

Authored-by: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This PR converts the hardcoded `spark.unsafe` configs to use `ConfigEntry` and puts them in the `config` package.

## How was this patch tested?

Existing UTs

Closes #23412 from kiszk/SPARK-26477.

Authored-by: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Create a framework for file source V2 based on the data source V2 API.

As an example that demonstrates the framework, this PR also migrates the ORC source. ORC was chosen because the ORC file source supports both row scans and columnar scans, and its implementation is simpler than Parquet's.

Note: currently only the read path of the V2 API is done, so this framework and the migration cover only the read path.

It supports the following:

- Scan ColumnarBatch

- Scan UnsafeRow

- Push down filters

- Push down required columns

Not supported (due to limitations of the data source V2 API):

- Stats metrics

- Catalog table

- Writes

## How was this patch tested?

Unit test

Closes #23383 from gengliangwang/latest_orcV2.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Make sure broadcast hint is applied to partitioned tables.

## How was this patch tested?

- A new unit test in PruneFileSourcePartitionsSuite

- Unit test suites touched by SPARK-14581: JoinOptimizationSuite, FilterPushdownSuite, ColumnPruningSuite, and PruneFiltersSuite

Closes #23507 from jzhuge/SPARK-26576.

Closes #23530 from jzhuge/SPARK-26576-master.

Authored-by: John Zhuge <jzhuge@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The vote on the final release of `branch-2.2` passed, and the branch has reached EOL. This PR removes Spark 2.2.x from the test coverage.

## How was this patch tested?

Pass the Jenkins.

Closes #23526 from dongjoon-hyun/SPARK-26607.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This PR converts the hardcoded configs below to use `ConfigEntry`.

* spark.ui

* spark.ssl

* spark.authenticate

* spark.master.rest

* spark.master.ui

* spark.metrics

* spark.admin

* spark.modify.acl

This patch doesn't change configs which are not relevant to SparkConf (e.g. system properties).

## How was this patch tested?

Existing tests.

Closes #23423 from HeartSaVioR/SPARK-26466.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Per the discussion in #23391 (comment), this PR proposes to simply remove the old pre-Spark-3 time parsing behavior.

This is a rebase of https://github.com/apache/spark/pull/23411

## How was this patch tested?

Existing tests.

Closes #23495 from srowen/SPARK-26503.2.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This PR removes an internal ORC configuration to simplify the code path for Spark 3.0.0. It removes the configuration `spark.sql.orc.copyBatchToSpark` and the related ORC code, including tests and benchmarks.

## How was this patch tested?

Pass the Jenkins with the reduced test coverage.

Closes #23503 from dongjoon-hyun/SPARK-26584.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

In https://github.com/apache/spark/pull/23043, we introduced a behavior change: Spark users can no longer distinguish 0.0 from -0.0.

This PR proposes an alternative fix to the original bug that retains the difference between 0.0 and -0.0 inside Spark.

The idea is to rewrite the window partition keys, join keys, and grouping keys during the logical phase to normalize special floating-point numbers. This way, only the operators that care about special floating-point numbers pay the performance overhead, and end users can still distinguish -0.0.
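The plain-Scala sketch below shows the problem the rewrite targets and the kind of normalization it applies.

- `0.0 == -0.0` is numerically true, but the two values have different bit patterns. Any comparison on the binary form of a key therefore treats them as different keys.
- NaN has a similar issue, because many bit patterns are NaN.

`normalizeKey` is a hypothetical helper illustrating the idea. It is not Spark's actual rule.

```scala
object FloatKeyNormalization {
  /** Maps -0.0 to 0.0 and every NaN to the canonical NaN, leaving other values unchanged. */
  def normalizeKey(d: Double): Double =
    if (d.isNaN) Double.NaN
    else if (d == 0.0) 0.0 // true for both 0.0 and -0.0
    else d

  /** Raw IEEE 754 bits, i.e. what a byte-wise key comparison effectively looks at. */
  def bits(d: Double): Long = java.lang.Double.doubleToRawLongBits(d)
}
```

Normalizing only the keys of joins, aggregations, and window partitions leaves the values themselves untouched. That is why users can still observe -0.0 in results.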

## How was this patch tested?

Existing tests.

Closes #23388 from cloud-fan/minor.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Currently, Spark tables maintain the Hive catalog storage format so that Hive clients can read them. In `HiveSerDe.scala`, Spark uses a mapping from its data sources to Hive SerDes. The mapping is outdated and needs to be updated with the latest canonical names of the Parquet and ORC `FileFormat` classes.

Otherwise, the following queries result in a wrong SerDe value in the Hive table (the default value `org.apache.hadoop.mapred.SequenceFileInputFormat`), and Hive clients fail to read the output table:

```scala
df.write.format("org.apache.spark.sql.execution.datasources.parquet.ParquetFileFormat").saveAsTable(..)
```

```scala
df.write.format("org.apache.spark.sql.execution.datasources.orc.OrcFileFormat").saveAsTable(..)
```

This minor PR is to fix the mapping.

## How was this patch tested?

Unit test.

Closes #23491 from gengliangwang/fixHiveSerdeMap.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The `toHiveString()` and `toHiveStructString` methods were removed from `HiveUtils` because they are already implemented in `HiveResult`. One related test was moved to `HiveResultSuite`.

## How was this patch tested?

By tests from `hive-thriftserver`.

Closes #23466 from MaxGekk/dedup-hive-result-string.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Increase test memory to avoid OOM in TimSort-related tests.

## How was this patch tested?

Existing tests.

Closes #23425 from srowen/SPARK-26306.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

In this PR, I propose to move `hiveResultString()` out of `QueryExecution` and put it in a separate object.

Closes #23409 from MaxGekk/hive-result-string.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Herman van Hovell <hvanhovell@databricks.com>

## What changes were proposed in this pull request?

The default timestamp pattern defined in `JSONOptions` doesn't allow saving/loading timestamps whose time zone offsets have seconds precision. Because of that, the round-trip test failed for timestamps before 1582. In this PR, I propose to extend the zone offset section of the pattern from `XXX` to `XXXXX`, which allows saving/loading zone offsets like `-07:52:48`.
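The effect of the pattern change can be checked directly with `java.time.format.DateTimeFormatter`, which the `X` pattern letters come from.

- With `XXX`, the offset is printed as hours and minutes only, so the seconds part of an offset such as `-07:52:48` is lost on output.
- With `XXXXX`, the seconds are kept and the value round-trips.

The full pattern strings below are illustrative. They are not necessarily the exact default in `JSONOptions`.

```scala
import java.time.{LocalDateTime, OffsetDateTime, ZoneOffset}
import java.time.format.DateTimeFormatter

object OffsetPatternRoundTrip {
  // An offset with seconds precision, like the ones that occur for timestamps before 1582.
  val offset: ZoneOffset = ZoneOffset.ofHoursMinutesSeconds(-7, -52, -48)
  val ts: OffsetDateTime = OffsetDateTime.of(LocalDateTime.of(1500, 1, 1, 0, 0, 0), offset)

  val shortOffset: DateTimeFormatter = DateTimeFormatter.ofPattern("yyyy-MM-dd'T'HH:mm:ss.SSSXXX")
  val fullOffset: DateTimeFormatter = DateTimeFormatter.ofPattern("yyyy-MM-dd'T'HH:mm:ss.SSSXXXXX")

  /** Formats `ts` with the given formatter and parses the text back. */
  def roundTrip(f: DateTimeFormatter): OffsetDateTime =
    OffsetDateTime.parse(ts.format(f), f)
}
```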

## How was this patch tested?

It was tested by `JsonHadoopFsRelationSuite` and `TimestampFormatterSuite`.

Closes #23417 from MaxGekk/hadoopfsrelationtest-new-formatter.

Lead-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Consider the following operations:

```
0: jdbc:hive2://xxx/> create function funnel_analysis as 'com.xxx.hive.extend.udf.UapFunnelAnalysis';
```

```
0: jdbc:hive2://xxx/> select funnel_analysis(1,",",1,'');
Error: org.apache.spark.sql.AnalysisException: Undefined function: 'funnel_analysis'. This function is neither a registered temporary function nor a permanent function registered in the database 'xxx'.; line 1 pos 7 (state=,code=0)
```

```
0: jdbc:hive2://xxx/> describe function funnel_analysis;
+-----------------------------------------------------------+--+
| function_desc |
+-----------------------------------------------------------+--+
| Function: xxx.funnel_analysis |
| Class: com.xxx.hive.extend.udf.UapFunnelAnalysis |
| Usage: N/A. |
+-----------------------------------------------------------+--+
```

`DESCRIBE FUNCTION` returns the correct information, but actually using the function fails with an "undefined function" exception.

This is really misleading. The real cause is:

```
No handler for Hive UDF 'com.xxx.xxx.hive.extend.udf.UapFunnelAnalysis': java.lang.IllegalStateException: Should not be called directly;
    at org.apache.hadoop.hive.ql.udf.generic.GenericUDTF.initialize(GenericUDTF.java:72)
    at org.apache.spark.sql.hive.HiveGenericUDTF.outputInspector$lzycompute(hiveUDFs.scala:204)
    at org.apache.spark.sql.hive.HiveGenericUDTF.outputInspector(hiveUDFs.scala:204)
    at org.apache.spark.sql.hive.HiveGenericUDTF.elementSchema$lzycompute(hiveUDFs.scala:212)
    at org.apache.spark.sql.hive.HiveGenericUDTF.elementSchema(hiveUDFs.scala:212)
```

This patch prints the actual failure for quicker debugging.

## How was this patch tested?

UT

Closes #21790 from caneGuy/zhoukang/print-warning1.

Authored-by: zhoukang <zhoukang199191@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

In this PR, I propose to add a `maxFields` parameter to all functions involved in creating the textual representation of Spark plans, such as `simpleString` and `verboseString`. The new parameter limits the number of fields converted to truncated strings. Any elements beyond the limit are dropped and replaced by a `"... N more fields"` placeholder. The threshold is raised to `Int.MaxValue` for `toFile()`.
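The truncation behavior described above can be sketched on its own. `truncatedFields` is a hypothetical helper written for illustration. It keeps `maxFields - 1` elements and uses the last slot for the placeholder. That is one reasonable convention, not necessarily the exact one Spark uses.

```scala
object FieldTruncation {
  /** Renders `fields` between `start` and `end`, keeping at most `maxFields` entries (placeholder included). */
  def truncatedFields(fields: Seq[String], start: String, sep: String, end: String, maxFields: Int): String = {
    if (fields.length > maxFields) {
      val kept = math.max(0, maxFields - 1)
      val dropped = fields.length - kept
      fields.take(kept).map(_ + sep).mkString(start, "", s"... $dropped more fields$end")
    } else {
      fields.mkString(start, sep, end)
    }
  }
}
```

For example, `truncatedFields(Seq("a", "b", "c", "d"), "[", ", ", "]", 3)` yields `[a, b, ... 2 more fields]`. Passing `Int.MaxValue`, as described for `toFile()`, disables truncation.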

## How was this patch tested?

Added a test to `QueryExecutionSuite` that checks the impact of `maxFields` on the number of truncated fields in `LocalRelation`.

Closes #23159 from MaxGekk/to-file-max-fields.

Lead-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: Herman van Hovell <hvanhovell@databricks.com>

## What changes were proposed in this pull request?

Spark SQL doesn't support creating a partitioned table using Hive CTAS in SQL syntax. However, it is supported through the `DataFrameWriter` API.

```scala
val df = Seq(("a", 1)).toDF("part", "id")
df.write.format("hive").partitionBy("part").saveAsTable("t")
```

Newer Hive versions support this syntax (https://issues.apache.org/jira/browse/HIVE-20241):

```sql
CREATE TABLE t PARTITIONED BY (part) AS SELECT 1 as id, "a" as part
```

This patch adds this support to SQL syntax.

## How was this patch tested?

Added tests.

Closes #23376 from viirya/hive-ctas-partitioned-table.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In Spark 2.3.0 and earlier versions, the Hive CTAS command is converted to use a data source to write data into the table when the table is convertible. This behavior is controlled by configs like `HiveUtils.CONVERT_METASTORE_ORC` and `HiveUtils.CONVERT_METASTORE_PARQUET`.

In 2.3.1, we dropped this optimization by mistake in the PR [SPARK-22977](https://github.com/apache/spark/pull/20521/files#r217254430). Since then, the Hive CTAS command only uses Hive SerDe to write data.

This patch adds the optimization back to the Hive CTAS command by introducing `OptimizedCreateHiveTableAsSelectCommand`, which uses a data source to write data.

## How was this patch tested?

Added test.

Closes #22514 from viirya/SPARK-25271-2.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In this PR, I propose to switch to the **java.time API** for parsing timestamps and dates with microsecond precision from JSON inputs. The SQL config `spark.sql.legacy.timeParser.enabled` allows switching back to the previous behavior, which uses `java.text.SimpleDateFormat`/`FastDateFormat` for parsing/generating timestamps/dates.
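The key difference is precision. `java.text.SimpleDateFormat` works in milliseconds, while `java.time` carries nanoseconds, so microseconds survive parsing. The sketch below is minimal: the pattern is illustrative, and `toEpochMicros` is a hypothetical helper. Spark's own parser is more involved.

```scala
import java.time.OffsetDateTime
import java.time.format.DateTimeFormatter
import java.util.concurrent.TimeUnit

object MicrosParsing {
  // Illustrative pattern with six fractional digits; not necessarily Spark's default JSON pattern.
  val formatter: DateTimeFormatter = DateTimeFormatter.ofPattern("yyyy-MM-dd'T'HH:mm:ss.SSSSSSXXX")

  /** Parses a timestamp string and returns microseconds since the epoch. */
  def toEpochMicros(text: String): Long = {
    val instant = OffsetDateTime.parse(text, formatter).toInstant
    TimeUnit.SECONDS.toMicros(instant.getEpochSecond) + instant.getNano / 1000
  }
}
```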

## How was this patch tested?

It was tested by `JsonExpressionsSuite`, `JsonFunctionsSuite` and `JsonSuite`.

Closes #23196 from MaxGekk/json-time-parser.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Add a unit test for `appendReadColumns` to `HiveShimSuite`.

## How was this patch tested?

```
$ build/sbt
> project hive
> testOnly *HiveShimSuite
```

Closes #23268 from sadhen/refactor/hiveshim.

Authored-by: Darcy Shen <sadhen@zoho.com>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This is a Spark 2.3 regression introduced in https://github.com/apache/spark/pull/20521. We should add the partition info for `InsertIntoHiveTable` in `CreateHiveTableAsSelectCommand`. Otherwise, running the newly added test case hits the following error:

```
[info] - CTAS: INSERT a partitioned table using Hive serde *** FAILED *** (829 milliseconds)
[info] org.apache.spark.SparkException: Requested partitioning does not match the tab1 table:
[info] Requested partitions:
[info] Table partitions: part
[info] at org.apache.spark.sql.hive.execution.InsertIntoHiveTable.processInsert(InsertIntoHiveTable.scala:179)
[info] at org.apache.spark.sql.hive.execution.InsertIntoHiveTable.run(InsertIntoHiveTable.scala:107)
```

## How was this patch tested?

Added a test case.

Closes #23255 from gatorsmile/fixCTAS.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In SPARK-23711, we implemented the logic for expressions to fall back to an interpreted mode. This PR adds the same fallback mode to `SafeProjection`, based on `CodeGeneratorWithInterpretedFallback`.
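The fallback pattern can be sketched in a few lines of plain Scala. It tries the code-generated implementation first and builds the interpreted one if generation fails. `GeneratorWithFallback`, `Projector`, and `SafeProjectionSketch` are hypothetical names that only model the idea behind `CodeGeneratorWithInterpretedFallback`. The real classes may differ in their details.

```scala
import scala.util.control.NonFatal

// Hypothetical projection interface used only for this sketch.
trait Projector {
  def project(row: Seq[Any]): Seq[Any]
}

abstract class GeneratorWithFallback[IN, OUT] {
  protected def createCodeGeneratedObject(in: IN): OUT
  protected def createInterpretedObject(in: IN): OUT

  /** Prefers the code-generated object, falling back to the interpreted one on non-fatal errors. */
  def createObject(in: IN): OUT =
    try createCodeGeneratedObject(in)
    catch { case NonFatal(_) => createInterpretedObject(in) }
}

object SafeProjectionSketch extends GeneratorWithFallback[Seq[Int], Projector] {
  // Simulates a code-generation failure, such as a compile error in generated code.
  override protected def createCodeGeneratedObject(ordinals: Seq[Int]): Projector =
    throw new IllegalStateException("codegen failed")

  // Interpreted path: selects the requested ordinals from the input row.
  override protected def createInterpretedObject(ordinals: Seq[Int]): Projector =
    new Projector {
      def project(row: Seq[Any]): Seq[Any] = ordinals.map(row)
    }
}
```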

## How was this patch tested?

Added tests in `CodeGeneratorWithInterpretedFallbackSuite` and `UnsafeRowConverterSuite`.

Closes #22468 from maropu/SPARK-25374-3.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Currently, `hasMinMaxStats` returns the same result as `hasCountStats`, which is clearly not the expected behavior.
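For context, here is a simplified, hypothetical model of column statistics. It shows what the two predicates are meant to check: count stats need the distinct and null counts, while min/max stats need the min and max values. The case class and its field names are assumptions for illustration.

```scala
// Simplified, hypothetical column statistics; every statistic is optional.
final case class ColumnStatSketch(
    distinctCount: Option[BigInt] = None,
    nullCount: Option[BigInt] = None,
    min: Option[Any] = None,
    max: Option[Any] = None) {

  def hasCountStats: Boolean = distinctCount.isDefined && nullCount.isDefined

  // Copying the count check into this method would reproduce the bug described above.
  def hasMinMaxStats: Boolean = min.isDefined && max.isDefined
}
```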

## How was this patch tested?

Existing tests.

Closes #23152 from adrian-wang/minmaxstats.

Authored-by: Daoyuan Wang <me@daoyuan.wang>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Currently, the common `withTempDir` function is used in Spark SQL test cases to handle `val dir = Utils.createTempDir()` and `Utils.deleteRecursively(dir)`. Unfortunately, `withTempDir` cannot be used in Spark Core test cases. This PR shares the `withTempDir` function between Spark SQL and Spark Core to clean up Spark Core test cases. Thanks.
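`withTempDir` follows the loan pattern: it creates the directory, lends it to the test body, and always deletes it afterwards. A minimal sketch of that pattern appears below. It is written against `java.nio.file` rather than Spark's `Utils.createTempDir()`/`Utils.deleteRecursively()` so it runs on its own. The shared Spark helper may differ in details such as where the directory is created.

```scala
import java.io.IOException
import java.nio.file.{FileVisitResult, Files, Path, SimpleFileVisitor}
import java.nio.file.attribute.BasicFileAttributes

object TempDirs {
  /** Creates a temporary directory, passes it to `f`, and always deletes it afterwards. */
  def withTempDir[T](f: Path => T): T = {
    val dir = Files.createTempDirectory("spark-test")
    try f(dir) finally deleteRecursively(dir)
  }

  // Deletes files first, then each directory once its contents are gone.
  private def deleteRecursively(root: Path): Unit = {
    Files.walkFileTree(root, new SimpleFileVisitor[Path] {
      override def visitFile(file: Path, attrs: BasicFileAttributes): FileVisitResult = {
        Files.delete(file)
        FileVisitResult.CONTINUE
      }
      override def postVisitDirectory(dir: Path, exc: IOException): FileVisitResult = {
        if (exc != null) throw exc
        Files.delete(dir)
        FileVisitResult.CONTINUE
      }
    })
  }
}
```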

## How was this patch tested?

N/A

Closes #23151 from heary-cao/withCreateTempDir.

Authored-by: caoxuewen <cao.xuewen@zte.com.cn>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

When I ran spark-shell on JDK 11+28 (2018-09-25), it failed with the error below.

```
Exception in thread "main" java.lang.ExceptionInInitializerError
    at org.apache.hadoop.util.StringUtils.<clinit>(StringUtils.java:80)
    at org.apache.hadoop.security.SecurityUtil.getAuthenticationMethod(SecurityUtil.java:611)
    at org.apache.hadoop.security.UserGroupInformation.initialize(UserGroupInformation.java:273)
    at org.apache.hadoop.security.UserGroupInformation.ensureInitialized(UserGroupInformation.java:261)
    at org.apache.hadoop.security.UserGroupInformation.loginUserFromSubject(UserGroupInformation.java:791)
    at org.apache.hadoop.security.UserGroupInformation.getLoginUser(UserGroupInformation.java:761)
    at org.apache.hadoop.security.UserGroupInformation.getCurrentUser(UserGroupInformation.java:634)
    at org.apache.spark.util.Utils$.$anonfun$getCurrentUserName$1(Utils.scala:2427)
    at scala.Option.getOrElse(Option.scala:121)
    at org.apache.spark.util.Utils$.getCurrentUserName(Utils.scala:2427)
    at org.apache.spark.SecurityManager.<init>(SecurityManager.scala:79)
    at org.apache.spark.deploy.SparkSubmit.secMgr$lzycompute$1(SparkSubmit.scala:359)
    at org.apache.spark.deploy.SparkSubmit.secMgr$1(SparkSubmit.scala:359)
    at org.apache.spark.deploy.SparkSubmit.$anonfun$prepareSubmitEnvironment$9(SparkSubmit.scala:367)
    at scala.Option.map(Option.scala:146)
    at org.apache.spark.deploy.SparkSubmit.prepareSubmitEnvironment(SparkSubmit.scala:367)
    at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:143)
    at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:86)
    at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:927)
    at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:936)
    at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)
Caused by: java.lang.StringIndexOutOfBoundsException: begin 0, end 3, length 2
    at java.base/java.lang.String.checkBoundsBeginEnd(String.java:3319)
    at java.base/java.lang.String.substring(String.java:1874)
    at org.apache.hadoop.util.Shell.<clinit>(Shell.java:52)
```

This is a Hadoop issue: Hadoop fails to parse some `java.version` values. It has been fixed since Hadoop 2.7.4 (see [HADOOP-14586](https://issues.apache.org/jira/browse/HADOOP-14586)).

Note that Hadoop 2.7.5 and later have another problem with Spark ([SPARK-25330](https://issues.apache.org/jira/browse/SPARK-25330)), so upgrading to 2.7.4 is fine for now.
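The `begin 0, end 3, length 2` message suggests that the failing static initializer takes the first three characters of `java.version`. On JDK 11 that property is just `"11"`, which is only two characters long. The sketch below reproduces that failure mode and shows a more tolerant parse. Both functions are illustrative assumptions, not Hadoop's actual code.

```scala
object JavaVersionParsing {
  /** Fragile check in the style the stack trace points to: assumes a version string like "1.x...". */
  def naiveIsJava7OrAbove(javaVersion: String): Boolean =
    javaVersion.substring(0, 3).compareTo("1.7") >= 0

  /** Tolerant check: handles both the legacy "1.8.0_191" scheme and the newer "11" / "11.0.1" scheme. */
  def majorVersion(javaVersion: String): Int = {
    val parts = javaVersion.split("[._-]")
    if (parts(0) == "1" && parts.length > 1) parts(1).toInt else parts(0).toInt
  }
}
```

Calling `naiveIsJava7OrAbove("11")` throws `StringIndexOutOfBoundsException`, while `majorVersion("11")` returns `11` and `majorVersion("1.8.0_191")` returns `8`.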

## How was this patch tested?

Existing tests.

Closes #23101 from tasanuma/SPARK-26134.

Authored-by: Takanobu Asanuma <tasanuma@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

We currently don't have good visibility into query planning time (analysis vs optimization vs physical planning). This patch adds a simple utility to track the runtime of various rules and various planning phases.
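A simplified sketch of such a tracker wraps each phase in a timing block and accumulates elapsed nanoseconds per phase name. `PlanningTimer` and its methods are hypothetical. The utility added by this patch also tracks individual rules and may expose a different API.

```scala
import scala.collection.mutable

// Hypothetical tracker: accumulates wall-clock time per planning phase.
final class PlanningTimer {
  private val phaseNanos = mutable.LinkedHashMap.empty[String, Long]

  /** Runs `body` and adds its elapsed time to `phase`, even if `body` throws. */
  def measurePhase[T](phase: String)(body: => T): T = {
    val start = System.nanoTime()
    try body
    finally {
      val elapsed = System.nanoTime() - start
      phaseNanos(phase) = phaseNanos.getOrElse(phase, 0L) + elapsed
    }
  }

  /** Snapshot of the accumulated time per phase, in first-seen order. */
  def phases: Seq[(String, Long)] = phaseNanos.toList
}
```

A caller would wrap analysis, optimization, and physical planning in separate `measurePhase` calls and report `phases` at the end.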

## How was this patch tested?

Added unit tests and end-to-end integration tests.

Closes #23096 from rxin/SPARK-26129.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: Reynold Xin <rxin@databricks.com>

## What changes were proposed in this pull request?

This restores scaladoc artifact generation, which was dropped with the Scala 2.12 update. The change looks large, but it is almost entirely due to needing to make the `InterfaceStability` annotations top-level classes (e.g. `InterfaceStability.Stable` -> `Stable`), unfortunately. A few inner class references had to be qualified too.

Many scaladoc warnings now reappear. Later, we can choose to disable generation by default and enable it only for releases.

## How was this patch tested?

N/A; build runs scaladoc now.

Closes #23069 from srowen/SPARK-26026.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

[Hive 2.3.4 is released on Nov. 7th](https://hive.apache.org/downloads.html#7-november-2018-release-234-available). This PR aims to support that version.

## How was this patch tested?

Pass the Jenkins with the updated version

Closes #23059 from dongjoon-hyun/SPARK-26091.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Since [Spark 1.6.0](56d7da14ab (diff-6f38a103058a6e233b7ad80718452387R96)), there has been a redundant `}` character in the database argument of the CTAS string plan: `default}`. This PR fixes it.

**BEFORE**

```scala
scala> sc.version
res1: String = 1.6.0

scala> sql("create table t as select 1").explain
== Physical Plan ==
ExecutedCommand CreateTableAsSelect [Database:default}, TableName: t, InsertIntoHiveTable]
+- Project [1 AS _c0#3]
   +- OneRowRelation$
```

**AFTER**

```scala
scala> sql("create table t as select 1").explain
== Physical Plan ==
Execute CreateHiveTableAsSelectCommand CreateHiveTableAsSelectCommand [Database:default, TableName: t, InsertIntoHiveTable]
+- *(1) Project [1 AS 1#4]
   +- Scan OneRowRelation[]
```

## How was this patch tested?

Manual.

Closes #23064 from dongjoon-hyun/SPARK-FIX.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR makes Scala 2.12 Spark's default Scala version, with Scala 2.11 as the alternative version. This implies that Scala 2.12 will be used by our CI builds, including pull request builds.

We'll update Jenkins to include a new compile-only job for Scala 2.11 to ensure the code can still be compiled with Scala 2.11.

## How was this patch tested?

Existing tests.

Closes #22967 from dbtsai/scala2.12.

Authored-by: DB Tsai <d_tsai@apple.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Replace `Class.newInstance` with `Class.getConstructor().newInstance()`, and replace primitive wrapper class constructors with `valueOf` or an equivalent, because these APIs are deprecated in Java 11.
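A short sketch of both replacements follows. Reflective construction goes through the explicit no-arg `Constructor`, and boxing uses the `valueOf` factories instead of the deprecated wrapper constructors. `java.lang.StringBuilder` below is just an example class with a public no-arg constructor, and the helper names are hypothetical.

```scala
object DeprecatedApiReplacements {
  /** Instead of clazz.newInstance(): look up the public no-arg constructor explicitly. */
  def instantiate[T](clazz: Class[T]): T =
    clazz.getConstructor().newInstance()

  /** Instead of new java.lang.Integer(i): use the valueOf factory, which may return a cached instance. */
  def boxInt(i: Int): java.lang.Integer =
    java.lang.Integer.valueOf(i)
}
```

One difference worth knowing is how failures surface. `Class.newInstance` could propagate checked exceptions from the constructor unchecked. `Constructor.newInstance` wraps them in `InvocationTargetException`.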

## How was this patch tested?

Existing tests.

Closes #22988 from srowen/SPARK-25984.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Currently, Spark writes Spark version number into Hive Table properties with `spark.sql.create.version`.

```
parameters:{
  spark.sql.sources.schema.part.0={
    "type":"struct",
    "fields":[{"name":"a","type":"integer","nullable":true,"metadata":{}}]
  },
  transient_lastDdlTime=1541142761,
  spark.sql.sources.schema.numParts=1,
  spark.sql.create.version=2.4.0
}
```

This PR writes the Spark version into ORC/Parquet file metadata under the key `org.apache.spark.sql.create.version`, because we already use the `org.apache.` prefix in Parquet metadata. This key differs from the Hive table property key `spark.sql.create.version`, but it seems that we cannot change the Hive table property because of backward compatibility.

After this PR, ORC and Parquet files generated by Spark will have the following metadata.

**ORC (`native` and `hive` implementation)**

```
$ orc-tools meta /tmp/o
File Version: 0.12 with ...
...
User Metadata:
org.apache.spark.sql.create.version=3.0.0
```

**PARQUET**

```
$ parquet-tools meta /tmp/p
...
creator: parquet-mr version 1.10.0 (build 031a6654009e3b82020012a18434c582bd74c73a)
extra: org.apache.spark.sql.create.version = 3.0.0
extra: org.apache.spark.sql.parquet.row.metadata = {"type":"struct","fields":[{"name":"id","type":"long","nullable":false,"metadata":{}}]}
```

## How was this patch tested?

Pass the Jenkins with newly added test cases.

This closes #22255.

Closes #22932 from dongjoon-hyun/SPARK-25102.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Since Spark 2.4.0 has been released, we should test it in `HiveExternalCatalogVersionsSuite`.

## How was this patch tested?

N/A

Closes #22984 from cloud-fan/minor.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

1. `OrcReadBenchmark` is in the hive module, so it should be run with:

```
build/sbt "hive/test:runMain <this class>"
```

2. The "String with Nulls Scan" benchmark cases should be named "String with Nulls Scan (5%/50%/95%)", not "(0.05%/0.5%/0.95%)".

3. Add the null value percentages to the test case names of the "String with Nulls Scan" benchmark in `DataSourceReadBenchmark`.

## How was this patch tested?

Re-run benchmarks

Closes #22965 from gengliangwang/fixHiveOrcReadBenchmark.

Lead-authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Co-authored-by: Gengliang Wang <ltnwgl@gmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Fix typos and misspellings, per https://github.com/apache/spark-website/pull/158#issuecomment-435790366

## How was this patch tested?

Existing tests.

Closes #22950 from srowen/Typos.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This is a follow-up PR of #22892, which moved `sample.json` from the hive module to the sql module. However, the hive module still needs the file.

## How was this patch tested?

Existing tests.

Closes #22942 from ueshin/issues/SPARK-25884/sample.json.

Authored-by: Takuya UESHIN <ueshin@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Unfortunately, it seems that we missed this in 2.4.0. In Spark 2.4, if the default file system is not the local file system, `LOAD DATA LOCAL INPATH` only works with absolute paths. This is a regression in 2.4.0, and this PR fixes it to support relative paths.

```scala
$ ls kv1.txt
kv1.txt

scala> spark.sql("LOAD DATA LOCAL INPATH 'kv1.txt' INTO TABLE t")
org.apache.spark.sql.AnalysisException: LOAD DATA input path does not exist: kv1.txt;
```

## How was this patch tested?

Pass the Jenkins

Closes #22927 from dongjoon-hyun/SPARK-LOAD.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

When running `SHOW CREATE TABLE` on data source tables, the output is missing `TBLPROPERTIES` and `COMMENT`. It should also use `LOCATION` instead of the path in `OPTIONS`.

## How was this patch tested?

Split `ShowCreateTableSuite` to confirm that it works with both `InMemoryCatalog` and `HiveExternalCatalog`, and added some tests.

Closes #22892 from ueshin/issues/SPARK-25884/show_create_table.

Authored-by: Takuya UESHIN <ueshin@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

When `spark.sql.hive.metastore.version` is misconfigured, we should give a more helpful error message.

**BEFORE**

```scala
scala> sql("show databases").show
scala.MatchError: 2.4 (of class java.lang.String)
```

**AFTER**

```scala
scala> sql("show databases").show
java.lang.UnsupportedOperationException: Unsupported Hive Metastore version (2.4).
Please set spark.sql.hive.metastore.version with a valid version.
```
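The change replaces an unhandled `MatchError` with an explicit error, and the pattern can be sketched briefly. The list of accepted version strings below is hypothetical and deliberately incomplete. The real list of supported Hive metastore versions is longer.

```scala
object MetastoreVersionCheck {
  /** Maps a user-supplied version string to a canonical one, failing with a clear message otherwise. */
  def resolve(version: String): String = version match {
    case "1.2" | "1.2.0" | "1.2.1" | "1.2.2" => "1.2"
    case "2.3" | "2.3.0" | "2.3.1" | "2.3.2" | "2.3.3" | "2.3.4" => "2.3"
    case other =>
      // Without this catch-all case, the match would throw scala.MatchError for unknown input.
      throw new UnsupportedOperationException(
        s"Unsupported Hive Metastore version ($other). " +
          "Please set spark.sql.hive.metastore.version with a valid version.")
  }
}
```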

## How was this patch tested?

Manual.

Closes #22902 from dongjoon-hyun/SPARK-25893.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Set the main args correctly in `BenchmarkBase` to make them accessible to its subclasses.

It will benefit:

- BuiltInDataSourceWriteBenchmark

- AvroWriteBenchmark

## How was this patch tested?

Manual tests.

Closes #22872 from yucai/main_args.

Authored-by: yucai <yyu1@ebay.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The `FileSplit` instance is redundant in the `ParquetFileFormat` and `hive\orc\OrcFileFormat` classes.

## How was this patch tested?

Existing unit tests in `ParquetQuerySuite.scala` and `HiveOrcQuerySuite.scala`

Closes #22802 from 10110346/FileSplitnotneed.

Authored-by: liuxian <liu.xian3@zte.com.cn>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Change the version number in the comment of `HiveUtils.newClientForExecution` from `13` to `1.2.1`.

## How was this patch tested?

N/A

Closes #22850 from laskfla/HiveUtils-Comment.

Authored-by: laskfla <wwlsax11@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Refactor `ObjectHashAggregateExecBenchmark` to use a main method.

## How was this patch tested?

Manually tested:

```
bin/spark-submit --class org.apache.spark.sql.execution.benchmark.ObjectHashAggregateExecBenchmark --jars sql/catalyst/target/spark-catalyst_2.11-3.0.0-SNAPSHOT-tests.jar,core/target/spark-core_2.11-3.0.0-SNAPSHOT-tests.jar,sql/hive/target/spark-hive_2.11-3.0.0-SNAPSHOT.jar --packages org.spark-project.hive:hive-exec:1.2.1.spark2 sql/hive/target/spark-hive_2.11-3.0.0-SNAPSHOT-tests.jar
```

Generated results with:

```
SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "hive/test:runMain org.apache.spark.sql.execution.benchmark.ObjectHashAggregateExecBenchmark"
```

Closes #22804 from peter-toth/SPARK-25665.

Lead-authored-by: Peter Toth <peter.toth@gmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

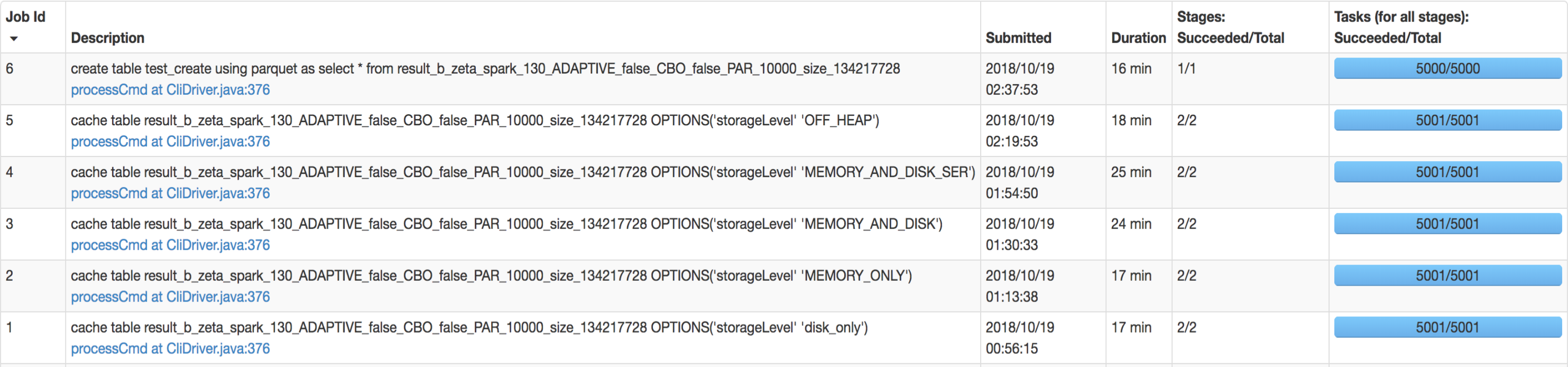

## What changes were proposed in this pull request?

The SQL interface now supports specifying a `StorageLevel` when caching a table. The syntax is:

```sql
CACHE TABLE tableName OPTIONS('storageLevel' 'DISK_ONLY');
```

All supported `StorageLevel` values are listed here:

eefdf9f9dd/core/src/main/scala/org/apache/spark/storage/StorageLevel.scala (L172-L183)

## How was this patch tested?

Unit tests and manual tests.

Manual test configuration:

```
--executor-memory 15G --executor-cores 5 --num-executors 50
```

Data:

Input Size / Records: 1037.7 GB / 11732805788

Result:

Closes #22263 from wangyum/SPARK-25269.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>