## What changes were proposed in this pull request?

Added the number of iterations to `ClusteringSummary`. This is helpful information when evaluating how to adjust the parameters to get a better model.

## How was this patch tested?

modified existing UTs

Author: Marco Gaido <marcogaido91@gmail.com>

Closes #20701 from mgaido91/SPARK-23528.

These changes allow an RpcHandler to receive an upload as a stream of data, without having to buffer the entire message in the FrameDecoder.

The primary use case is for replicating large blocks. By itself, this change is adding dead-code that is not being used -- it is a step towards SPARK-24296.

Added unit tests for handling streaming data, including successfully sending data, and failures in reading the stream with concurrent requests.

Summary of changes:

* Introduce a new UploadStream RPC which is sent to push a large payload as a stream (in contrast, the pre-existing StreamRequest and StreamResponse RPCs are used for pull-based streaming).

* Generalize RpcHandler.receive() to support requests which contain streams.

* Generalize StreamInterceptor to handle both request and response messages (previously it only handled responses).

* Introduce StdChannelListener to abstract away common logging logic in ChannelFuture listeners.

Author: Imran Rashid <irashid@cloudera.com>

Closes #21346 from squito/upload_stream.

## What changes were proposed in this pull request?

The ultimate goal is for listeners to onTaskEnd to receive metrics when a task is killed intentionally, since the data is currently just thrown away. This is already done for ExceptionFailure, so this just copies the same approach.

## How was this patch tested?

Updated existing tests.

This is a rework of https://github.com/apache/spark/pull/17422; all credit should go to noodle-fb.

Author: Xianjin YE <advancedxy@gmail.com>

Author: Charles Lewis <noodle@fb.com>

Closes #21165 from advancedxy/SPARK-20087.

## What changes were proposed in this pull request?

Add fit with validation set to spark.ml GBT

## How was this patch tested?

Will add later.

Author: WeichenXu <weichen.xu@databricks.com>

Closes #21129 from WeichenXu123/gbt_fit_validation.

## What changes were proposed in this pull request?

We save ML's user-supplied params and default params as one entity in metadata. When loading saved models, we set all the loaded params on the created ML model instances as user-supplied params.

This causes some problems. For example, if we strictly disallow some params from being set at the same time, a default param can fail the param check because it is treated as a user-supplied param after loading.

The loaded default params should not be set as user-supplied params. We should save ML default params separately in metadata.

For backward compatibility, when loading a metadata file written by a previous Spark version, we shouldn't raise an error if we can't find the default param field.
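The idea can be sketched in plain Scala. This is only an illustration under assumed names: `Metadata`, `Params`, and `ParamsReader` are hypothetical stand-ins, not Spark's actual persistence classes or metadata format. It shows user-supplied and default params being saved as separate fields, and a loader that tolerates older metadata with no default-param field.

```scala
// Hypothetical, simplified model; not Spark's real ML persistence API.
final case class Metadata(
    paramMap: Map[String, String],                 // user-supplied params
    defaultParamMap: Option[Map[String, String]])  // None for older metadata

final class Params {
  private var userSet = Map.empty[String, String]
  private var defaults = Map.empty[String, String]

  def set(k: String, v: String): this.type = { userSet = userSet + (k -> v); this }
  def setDefault(k: String, v: String): this.type = { defaults = defaults + (k -> v); this }

  // True only for params the user set explicitly.
  def isSet(k: String): Boolean = userSet.contains(k)
  // User-supplied values win over defaults.
  def get(k: String): Option[String] = userSet.get(k).orElse(defaults.get(k))

  // Save the two kinds of params separately.
  def save(): Metadata = Metadata(userSet, Some(defaults))
}

object ParamsReader {
  def load(m: Metadata): Params = {
    val p = new Params
    m.paramMap.foreach { case (k, v) => p.set(k, v) }
    // Older metadata has no default-param field: skip it instead of raising an error.
    m.defaultParamMap.getOrElse(Map.empty[String, String])
      .foreach { case (k, v) => p.setDefault(k, v) }
    p
  }
}
```

With this split, a default value survives a save/load round trip without being reported as user-supplied, so a check that forbids setting two params together is not tripped by a default.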

## How was this patch tested?

Pass existing tests and added tests.

Author: Liang-Chi Hsieh <viirya@gmail.com>

Closes #20633 from viirya/save-ml-default-params.

The current in-process launcher implementation just calls the SparkSubmit object, which, in case of errors, will more often than not exit the JVM. This is not desirable since this launcher is meant to be used inside other applications, and that would kill the application.

The change turns SparkSubmit into a class, and abstracts away some of the functionality used to print error messages and abort the submission process. The default implementation uses the logging system for messages, and throws exceptions for errors. As part of that I also moved some code that doesn't really belong in SparkSubmit to a better location.

The command line invocation of spark-submit now uses a special implementation of the SparkSubmit class that overrides those behaviors to do what is expected from the command line version (print to the terminal, exit the JVM, etc).

A lot of the changes are to replace calls to methods such as "printErrorAndExit" with the new API.

As part of adding tests for this, I had to fix some small things in the launcher option parser so that things like "--version" can work when used in the launcher library.

There is still code that prints directly to the terminal, like all the Ivy-related code in SparkSubmitUtils, and other areas where some re-factoring would help, like the CommandLineUtils class, but I chose to leave those alone to keep this change more focused.

Aside from existing and added unit tests, I ran command line tools with a bunch of different arguments to make sure messages and errors behave like before.
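The pattern described above (a default implementation that logs and throws, plus a command-line variant that prints and exits) can be sketched roughly as follows. All names here (`Submitter`, `CommandLineSubmitter`, `SubmitException`, `fail`) are hypothetical and chosen for illustration; they are not the actual SparkSubmit API. The exit action is passed in as a function so the sketch can be exercised without killing the JVM.

```scala
// Hypothetical names throughout; a sketch of the pattern, not SparkSubmit itself.
class SubmitException(msg: String) extends RuntimeException(msg)

class Submitter {
  // Default behavior: report through a log-like hook and throw on errors,
  // so an embedding application stays alive.
  protected def logInfo(msg: String): Unit = println(s"[log] $msg")
  protected def fail(msg: String): Nothing = throw new SubmitException(msg)

  def submit(args: Seq[String]): String = {
    if (args.isEmpty) fail("no arguments given")
    logInfo(s"submitting ${args.mkString(" ")}")
    args.head
  }
}

// Command-line flavor: print to the terminal and exit the process.
// `exit` would be something like `sys.exit` in a real main method.
class CommandLineSubmitter(exit: Int => Unit) extends Submitter {
  override protected def logInfo(msg: String): Unit = Console.err.println(msg)
  override protected def fail(msg: String): Nothing = {
    Console.err.println(s"Error: $msg")
    exit(1)
    // Only reached if `exit` returns, e.g. when a test passes a recording stub.
    throw new SubmitException(msg)
  }
}
```

An in-process caller would use `new Submitter` and catch `SubmitException`, while a `main` method would construct the command-line variant with a real exit function.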

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes #20925 from vanzin/SPARK-22941.

## What changes were proposed in this pull request?

The PR adds the option to specify a distance measure in BisectingKMeans. Moreover, it introduces the ability to use the cosine distance measure in it.

## How was this patch tested?

added UTs + existing UTs

Author: Marco Gaido <marcogaido91@gmail.com>

Closes #20600 from mgaido91/SPARK-23412.

## What changes were proposed in this pull request?

In this PR, StorageStatus is made private and simplified a bit, and the SparkContext.getExecutorStorageStatus method is removed. StorageStatus is kept because it is still used by SparkContext.getRDDStorageInfo.

In place of the SparkContext.getExecutorStorageStatus method, executor infos are extended with additional memory metrics such as usedOnHeapStorageMemory, usedOffHeapStorageMemory, totalOnHeapStorageMemory, totalOffHeapStorageMemory.

## How was this patch tested?

By running existing unit tests.

Author: “attilapiros” <piros.attila.zsolt@gmail.com>

Author: Attila Zsolt Piros <2017933+attilapiros@users.noreply.github.com>

Closes #20546 from attilapiros/SPARK-20659.

## What changes were proposed in this pull request?

Bump previousSparkVersion in MimaBuild.scala to be 2.2.0 and add the missing exclusions to `v23excludes` in `MimaExcludes`. No item can be un-excluded in `v23excludes`.

## How was this patch tested?

The existing tests.

Author: gatorsmile <gatorsmile@gmail.com>

Closes #20264 from gatorsmile/bump22.

## What changes were proposed in this pull request?

This patch bumps the master branch version to `2.4.0-SNAPSHOT`.

## How was this patch tested?

N/A

Author: gatorsmile <gatorsmile@gmail.com>

Closes #20222 from gatorsmile/bump24.

## What changes were proposed in this pull request?

Added stageAttemptId to TaskContext, with the corresponding changes to its construction.

## How was this patch tested?

Added a new test in TaskContextSuite, two cases are tested:

1. Normal case without failure

2. Exception case with resubmitted stages

Link to [SPARK-22897](https://issues.apache.org/jira/browse/SPARK-22897)

Author: Xianjin YE <advancedxy@gmail.com>

Closes #20082 from advancedxy/SPARK-22897.

## What changes were proposed in this pull request?

Basic continuous execution, supporting map/flatMap/filter, with commits and advancement through RPC.

## How was this patch tested?

new unit-ish tests (exercising execution end to end)

Author: Jose Torres <jose@databricks.com>

Closes #19984 from jose-torres/continuous-impl.

## What changes were proposed in this pull request?

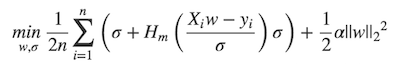

MLlib ```LinearRegression``` supports _huber_ loss addition to _leastSquares_ loss. The huber loss objective function is:

Refer Eq.(6) and Eq.(8) in [A robust hybrid of lasso and ridge regression](http://statweb.stanford.edu/~owen/reports/hhu.pdf). This objective is jointly convex as a function of (w, σ) ∈ R × (0,∞), we can use L-BFGS-B to solve it.

The current implementation is a straight forward porting for Python scikit-learn [```HuberRegressor```](http://scikit-learn.org/stable/modules/generated/sklearn.linear_model.HuberRegressor.html). There are some differences:

* We use mean loss (```lossSum/weightSum```), but sklearn uses total loss (```lossSum```).

* We multiply the loss function and L2 regularization by 1/2. Multiplying the whole formula by a constant factor does not affect the result; we do it to stay consistent with _leastSquares_ loss.

So when fitting without regularization, MLlib and sklearn produce the same output. When fitting with regularization, MLlib's ```regParam``` should be set to sklearn's regularization strength divided by the number of instances to match the output of sklearn.
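The scaling rule can be checked with a short derivation. The two objective forms below are assumptions: they are my reading of the two differences listed above and of the referenced paper, not formulas copied from the patch. Here $H$ is the Huber function from the paper, $\alpha$ denotes sklearn's L2 penalty, $\lambda$ denotes MLlib's `regParam`, and all instance weights are taken to be 1 so that the weight sum equals $n$.

$$
\begin{aligned}
\text{sklearn:}\quad & \min_{w,\sigma}\ \sum_{i=1}^{n}\left(\sigma + H\!\left(\frac{x_i^\top w - y_i}{\sigma}\right)\sigma\right) + \alpha\,\lVert w\rVert_2^2 \\
\text{MLlib:}\quad & \min_{w,\sigma}\ \frac{1}{2n}\sum_{i=1}^{n}\left(\sigma + H\!\left(\frac{x_i^\top w - y_i}{\sigma}\right)\sigma\right) + \frac{\lambda}{2}\,\lVert w\rVert_2^2
\end{aligned}
$$

Multiplying the MLlib objective by the positive constant $2n$ does not change its minimizer and gives

$$
\sum_{i=1}^{n}\left(\sigma + H\!\left(\frac{x_i^\top w - y_i}{\sigma}\right)\sigma\right) + n\lambda\,\lVert w\rVert_2^2 ,
$$

which matches the sklearn objective exactly when $\alpha = n\lambda$, that is, $\lambda = \alpha / n$. With $\alpha = \lambda = 0$ the two objectives differ only by the constant factor, which is why the unregularized fits agree.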

## How was this patch tested?

Unit tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #19020 from yanboliang/spark-3181.

The main goal of this change is to allow multiple cluster-mode submissions from the same JVM, without having them end up with mixed configuration. That is done by extending the SparkApplication trait, and doing so was reasonably trivial for standalone and mesos modes.

For YARN mode, there was a complication. YARN used a "SPARK_YARN_MODE" system property to control behavior indirectly in a whole bunch of places, mainly in the SparkHadoopUtil / YarnSparkHadoopUtil classes. Most of the changes here are removing that.

Since we removed support for Hadoop 1.x, some methods that lived in YarnSparkHadoopUtil can now live in SparkHadoopUtil. The remaining methods don't need to be part of the class, and can be called directly from the YarnSparkHadoopUtil object, so now there's a single implementation of SparkHadoopUtil.

There were two places in the code that relied on SPARK_YARN_MODE to make decisions about YARN-specific functionality, and now explicitly check the master from the configuration for that instead:

* fetching the external shuffle service port, which can come from the YARN configuration.

* propagation of the authentication secret using Hadoop credentials. This also was cleaned up a little to not need so many methods in `SparkHadoopUtil`.

With those out of the way, actually changing the YARN client to extend SparkApplication was easy.

Tested with existing unit tests, and also by running YARN apps with auth and kerberos both on and off in a real cluster.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes #19631 from vanzin/SPARK-22372.

The only remaining use of this class was the SparkStatusTracker, which was modified to use the new status store. The test code to wait for executors was moved to TestUtils and now uses the SparkStatusTracker API.

Indirectly, ConsoleProgressBar also uses this data. Because it has some lower latency requirements, a shortcut to efficiently get the active stages from the active listener was added to the AppStateStore.

Now that all UI code goes through the status store to get its data, the FsHistoryProvider can be cleaned up to only replay event logs when needed - that is, when there is no pre-existing disk store for the application.

As part of this change I also modified the streaming UI to read the needed data from the store, which was missed in the previous patch that made JobProgressListener redundant.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes #19750 from vanzin/SPARK-20650.

## What changes were proposed in this pull request?

We add a parameter that controls whether to collect the full model list during CrossValidator/TrainValidationSplit training. It is off by default, so that the change does not cause OOM.

- Add a method to CrossValidatorModel/TrainValidationSplitModel that lets users get the model list.

- Add an "option" to CrossValidatorModelWriter that lets users control whether to persist the model list to disk (it is persisted by default).

- Note: when persisting the model list, indices are used as the sub-model paths.

## How was this patch tested?

Test cases added.

Author: WeichenXu <weichen.xu@databricks.com>

Closes #19208 from WeichenXu123/expose-model-list.

This change is a little larger because there's a whole lot of logic behind these pages, all really tied to internal types and listeners, and some of that logic had to be implemented in the new listener and the needed data exposed through the API types.

- Added missing StageData and ExecutorStageSummary fields which are used by the UI. Some json golden files needed to be updated to account for new fields.

- Save RDD graph data in the store. This tries to re-use existing types as much as possible, so that the code doesn't need to be re-written. So it's probably not very optimal.

- Some old classes (e.g. JobProgressListener) still remain, since they're used in other parts of the code; they're not used by the UI anymore, though, and will be cleaned up in a separate change.

- Save information about active pools in the store. This data is not really used in the SHS, but it's not a lot of data so it's still recorded when replaying applications.

- Because the new store sorts things slightly differently from the previous code, some json golden files had some elements within them shuffled around.

- The retention unit test in UISeleniumSuite was disabled because the code to throw away old stages / tasks hasn't been added yet.

- The job description field in the API tries to follow the old behavior, which makes it be empty most of the time, even though there's information to fill it in. For stages, a new field was added to hold the description (which is basically the job description), so that the UI can be rendered in the old way.

- A new stage status ("SKIPPED") was added to account for the fact that the API couldn't represent that state before. Without this, the stage would show up as "PENDING" in the UI, which is now based on API types.

- The API used to expose "executorRunTime" as the value of the task's duration, which wasn't really correct (also because that value was easily available from the metrics object); this change fixes that by storing the correct duration, which also means a few expectation files needed to be updated to account for the new durations and sorting differences due to the changed values.

- Added changes to implement SPARK-20713 and SPARK-21922 in the new code.

Tested with existing unit tests (and by using the UI a lot).

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes #19698 from vanzin/SPARK-20648.

## What changes were proposed in this pull request?

`excludePackage` is deprecated, as shown in the [following](https://github.com/lightbend/migration-manager/blob/master/core/src/main/scala/com/typesafe/tools/mima/core/Filters.scala#L33-L36) snippet, and now produces deprecation warnings. This PR uses `exclude[Problem](packageName + ".*")` instead.

```scala
@deprecated("Replace with ProblemFilters.exclude[Problem](\"my.package.*\")", "0.1.15")
def excludePackage(packageName: String): ProblemFilter = {
  exclude[Problem](packageName + ".*")
}
```

## How was this patch tested?

Pass the Jenkins MiMa.

Author: Dongjoon Hyun <dongjoon@apache.org>

Closes #19710 from dongjoon-hyun/SPARK-22485.

This required adding information about StreamBlockId to the store, which is not available yet via the API. So an internal type was added until there's a need to expose that information in the API.

The UI only lists RDDs that have cached partitions, and that information wasn't being correctly captured in the listener, so that's also fixed, along with some minor (internal) API adjustments so that the UI can get the correct data.

Because of the way partitions are cached, some optimizations w.r.t. how often the data is flushed to the store could not be applied to this code; because of that, some different ways to make the code more performant were added to the data structures tracking RDD blocks, with the goal of avoiding expensive copies when lots of blocks are being updated.

Tested with existing and updated unit tests.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes #19679 from vanzin/SPARK-20647.

The executors page is built on top of the REST API, so the page itself was easy to hook up to the new code.

Some other pages depend on the `ExecutorListener` class that is being removed, though, so they needed to be modified to use data from the new store. Fortunately, all they seemed to need is the map of executor logs, so that was somewhat easy too.

The executor timeline graph required adding some properties to the ExecutorSummary API type. Instead of following the previous code, which stored all the listener events in memory, the timeline is now created based on the data available from the API.

I had to change some of the test golden files because the old code would return executors in "random" order (since it used a mutable Map instead of something that returns a sorted list), and the new code returns executors in id order.

Tested with existing unit tests.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes #19678 from vanzin/SPARK-20646.

This change modifies the status listener to collect the information needed to render the environment page, and populates that page and the API with information collected by the listener.

Tested with existing and added unit tests.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes #19677 from vanzin/SPARK-20645.

There are two somewhat unrelated things going on in this patch, but both are meant to make integration of individual UI pages later on much easier.

The first part is some tweaking of the code in the listener so that it does fewer updates of the kvstore for data that changes fast; for example, it avoids writing changes down to the store for every task-related event, since those can arrive very quickly at times. Instead, for these kinds of events, it chooses to only flush things if a certain interval has passed. The interval is based on how often the current spark-shell code updates the progress bar for jobs, so that users can get reasonably accurate data.

The code also delays as much as possible hitting the underlying kvstore when replaying apps in the history server. This is to avoid unnecessary writes to disk.
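A minimal sketch of the interval-based flushing idea, assuming a hypothetical `ThrottledWriter` helper (the name, the explicit `now` argument, and the `write` callback are all illustrative and not taken from the listener code). Every update is remembered, but the store is only written when enough time has passed since the last write, or when a flush is forced.

```scala
// Hypothetical helper; illustrates the technique, not the actual listener.
final class ThrottledWriter[T](minIntervalNanos: Long, write: T => Unit) {
  private var lastWrite: Option[Long] = None   // time of the last store write
  private var pending: Option[T] = None        // latest value not yet written

  // Record the newest value; write it only if the interval has elapsed
  // (or if nothing has been written yet).
  def update(value: T, now: Long): Unit = {
    pending = Some(value)
    if (lastWrite.forall(now - _ >= minIntervalNanos)) flush(now)
  }

  // Unconditionally write whatever is pending, e.g. when a job or stage ends.
  def flush(now: Long): Unit = {
    pending.foreach(write)
    pending = None
    lastWrite = Some(now)
  }
}
```

Passing the clock value in explicitly keeps the sketch deterministic; real code would more likely read `System.nanoTime()` itself.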

The second set of changes prepares the history server and SparkUI for integrating with the kvstore. A new class, AppStatusStore, is used for translating between the stored data and the types used in the UI / API. The SHS now populates a kvstore with data loaded from event logs when an application UI is requested.

Because this store can hold references to disk-based resources, the code was modified to retrieve data from the store under a read lock. This allows the SHS to detect when the store is still being used, and only update it (e.g. because an updated event log was detected) when there is no other thread using the store.
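One way to get this behavior is a read/write lock where readers take the read lock and the updater only proceeds if it can take the write lock immediately. The sketch below uses `java.util.concurrent.locks.ReentrantReadWriteLock`; the `GuardedStore` class and its method names are hypothetical and are not the history server's actual code.

```scala
import java.util.concurrent.locks.ReentrantReadWriteLock

// Hypothetical wrapper; a sketch of the locking scheme only.
final class GuardedStore[S](private var store: S) {
  private val lock = new ReentrantReadWriteLock()

  // Readers access the store under the read lock; many may hold it at once.
  def withStore[T](fn: S => T): T = {
    lock.readLock().lock()
    try fn(store) finally lock.readLock().unlock()
  }

  // Replace the store only if nobody is reading it right now.
  // Returns false (and does not evaluate `newStore`) when the store is in use.
  def tryReplace(newStore: => S): Boolean = {
    if (lock.writeLock().tryLock()) {
      try { store = newStore; true } finally lock.writeLock().unlock()
    } else false
  }
}
```

A caller that gets `false` back can simply retry later, which matches the "only update it when there is no other thread using the store" behavior described above.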

This change ended up creating a lot of churn in the ApplicationCache code, which was cleaned up a lot in the process. I also removed some metrics which don't make too much sense with the new code.

Tested with existing and added unit tests, and by making sure the SHS still works on a real cluster.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes #19582 from vanzin/SPARK-20644.

The initial listener code is based on the existing JobProgressListener (and others), and tries to mimic their behavior as much as possible. The change also includes some minor code movement so that some types and methods from the initial history server code can be reused.

The code introduces a few mutable versions of public API types, used internally, to make it easier to update information without ugly copy methods, and also to make certain updates cheaper.

Note the code here is not 100% correct. This is meant as a building ground for the UI integration in the next milestones. As different parts of the UI are ported, fixes will be made to the different parts of this code to account for the needed behavior.

I also added annotations to API types so that Jackson is able to correctly deserialize options, sequences and maps that store primitive types.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes #19383 from vanzin/SPARK-20643.

…build; fix some things that will be warnings or errors in 2.12; restore Scala 2.12 profile infrastructure

## What changes were proposed in this pull request?

This change adds back the infrastructure for a Scala 2.12 build, but does not enable it in the release or Python test scripts.

In order to make that meaningful, it also resolves compile errors that the code hits in 2.12 only, in a way that still works with 2.11.

It also updates dependencies to the earliest minor release of dependencies whose current version does not yet support Scala 2.12. This is in a sense covered by other JIRAs under the main umbrella, but implemented here. The versions below still work with 2.11, and are the _latest_ maintenance release in the _earliest_ viable minor release.

- Scalatest 2.x -> 3.0.3

- Chill 0.8.0 -> 0.8.4

- Clapper 1.0.x -> 1.1.2

- json4s 3.2.x -> 3.4.2

- Jackson 2.6.x -> 2.7.9 (required by json4s)

This change does _not_ fully enable a Scala 2.12 build:

- It will also require dropping support for Kafka before 0.10. Easy enough, just didn't do it yet here

- It will require recreating `SparkILoop` and `Main` for REPL 2.12, which is SPARK-14650. Possible to do here too.

What it does do is make changes that resolve much of the remaining gap without affecting the current 2.11 build.

## How was this patch tested?

Existing tests and build. Manually tested with `./dev/change-scala-version.sh 2.12` to verify it compiles, modulo the exceptions above.

Author: Sean Owen <sowen@cloudera.com>

Closes #18645 from srowen/SPARK-14280.

## What changes were proposed in this pull request?

add an "asBinary" method to LogisticRegressionSummary for convenient casting to BinaryLogisticRegressionSummary.

## How was this patch tested?

Testcase updated.

Author: WeichenXu <weichen.xu@databricks.com>

Closes #19072 from WeichenXu123/mlor_summary_as_binary.

## What changes were proposed in this pull request?

Add 4 traits, using the following hierarchy:

LogisticRegressionSummary

LogisticRegressionTrainingSummary: LogisticRegressionSummary

BinaryLogisticRegressionSummary: LogisticRegressionSummary

BinaryLogisticRegressionTrainingSummary: LogisticRegressionTrainingSummary, BinaryLogisticRegressionSummary

Public methods such as `def summary` return only the trait types listed above.

Then implement 4 concrete classes:

LogisticRegressionSummaryImpl (multiclass case)

LogisticRegressionTrainingSummaryImpl (multiclass case)

BinaryLogisticRegressionSummaryImpl (binary case).

BinaryLogisticRegressionTrainingSummaryImpl (binary case).
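The hierarchy can be sketched as below. The trait and class names are the ones listed above; everything else is an assumption made for illustration, including the members (`numClasses`, `objectiveHistory`, `areaUnderROC`), the constructor shapes, the dummy metric value, and the `Model.summary` factory.

```scala
// Trait and class names follow the description; members are placeholders.
trait LogisticRegressionSummary { def numClasses: Int }

trait LogisticRegressionTrainingSummary extends LogisticRegressionSummary {
  def objectiveHistory: Seq[Double]
}

trait BinaryLogisticRegressionSummary extends LogisticRegressionSummary {
  def areaUnderROC: Double
}

trait BinaryLogisticRegressionTrainingSummary
  extends BinaryLogisticRegressionSummary with LogisticRegressionTrainingSummary

class LogisticRegressionSummaryImpl(val numClasses: Int)
  extends LogisticRegressionSummary

class LogisticRegressionTrainingSummaryImpl(k: Int, val objectiveHistory: Seq[Double])
  extends LogisticRegressionSummaryImpl(k) with LogisticRegressionTrainingSummary

class BinaryLogisticRegressionSummaryImpl(val areaUnderROC: Double)
  extends LogisticRegressionSummaryImpl(2) with BinaryLogisticRegressionSummary

class BinaryLogisticRegressionTrainingSummaryImpl(auc: Double, val objectiveHistory: Seq[Double])
  extends BinaryLogisticRegressionSummaryImpl(auc) with BinaryLogisticRegressionTrainingSummary

object Model {
  // The public method exposes only a trait type; callers never see the Impl classes.
  def summary(numClasses: Int, history: Seq[Double]): LogisticRegressionTrainingSummary =
    if (numClasses == 2) new BinaryLogisticRegressionTrainingSummaryImpl(0.5, history) // dummy AUC
    else new LogisticRegressionTrainingSummaryImpl(numClasses, history)
}
```

Because the binary training trait mixes in both parents, a binary training summary can be used wherever a plain training summary or a binary summary is expected.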

## How was this patch tested?

Existing tests & added tests.

Author: WeichenXu <WeichenXu123@outlook.com>

Closes #15435 from WeichenXu123/mlor_summary.

## What changes were proposed in this pull request?

When using Vector.compressed to convert a Vector to a SparseVector, performance is much lower than with Vector.toSparse.

This is because Vector.compressed scans the values three times, whereas Vector.toSparse needs only two scans.

When the vector is long, there is a significant performance difference between the two methods.
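One plausible way to save a scan is to reuse the nonzero count that was already computed for the dense-versus-sparse decision when building the sparse form. The sketch below illustrates that idea with simplified stand-in types; `Vec`, `Dense`, `Sparse`, and `Compress` are hypothetical, and the size heuristic in `compressed` is an assumption rather than a quote from the patch.

```scala
// Simplified stand-ins; not Spark's Vector classes.
sealed trait Vec
final case class Dense(values: Array[Double]) extends Vec
final case class Sparse(size: Int, indices: Array[Int], values: Array[Double]) extends Vec

object Compress {
  // One scan: count the nonzero entries.
  def numNonzeros(values: Array[Double]): Int = values.count(_ != 0.0)

  // One more scan: fill pre-sized arrays using the already-known count.
  def toSparseWithSize(values: Array[Double], nnz: Int): Sparse = {
    val idx = new Array[Int](nnz)
    val vs = new Array[Double](nnz)
    var i = 0
    var k = 0
    while (i < values.length) {
      if (values(i) != 0.0) { idx(k) = i; vs(k) = values(i); k += 1 }
      i += 1
    }
    Sparse(values.length, idx, vs)
  }

  def compressed(values: Array[Double]): Vec = {
    val nnz = numNonzeros(values)                        // scan 1
    // Assumed heuristic: go sparse only when it is clearly smaller.
    if (1.5 * (nnz + 1.0) < values.length) toSparseWithSize(values, nnz) // scan 2
    else Dense(values)
  }
}
```

Counting again inside the sparse conversion would add a third pass over the values, which is the cost the description points at.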

## How was this patch tested?

The existing UT

Author: Peng Meng <peng.meng@intel.com>

Closes #18899 from mpjlu/optVectorCompress.

## What changes were proposed in this pull request?

This PR updates `lz4-java` to the latest version (v1.4.0) and removes the custom `LZ4BlockInputStream`. We currently use the custom `LZ4BlockInputStream` to read concatenated byte streams in shuffle, but this functionality has been implemented in the latest lz4-java (https://github.com/lz4/lz4-java/pull/105). So we can update to the latest version and remove the custom `LZ4BlockInputStream`.

Major diffs between the latest release and v1.3.0 in the master are as follows (62f7547abb...6d4693f562):

- fixed NPE in XXHashFactory similarly

- Don't place resources in default package to support shading

- Fixes ByteBuffer methods failing to apply arrayOffset() for array-backed

- Try to load lz4-java from java.library.path, then fallback to bundled

- Add ppc64le binary

- Add s390x JNI binding

- Add basic LZ4 Frame v1.5.0 support

- enable aarch64 support for lz4-java

- Allow unsafeInstance() for ppc64le architecture

- Add unsafeInstance support for AArch64

- Support 64-bit JNI build on Solaris

- Avoid over-allocating a buffer

- Allow EndMark to be incompressible for LZ4FrameInputStream.

- Concat byte stream

## How was this patch tested?

Existing tests.

Author: Takeshi Yamamuro <yamamuro@apache.org>

Closes #18883 from maropu/SPARK-21276.

In the current code (https://github.com/apache/spark/pull/16989), big blocks are shuffled to disk.

This PR proposes to collect metrics for remote bytes fetched to disk.

Author: jinxing <jinxing6042@126.com>

Closes #18249 from jinxing64/SPARK-19937.

## What changes were proposed in this pull request?

The Spark version of a specific application is currently not displayed on the history page. It would be nice to show the Spark version on the UI when we click on a specific application.

There seems to be a way to do this, since SparkListenerLogStart records the application version. So it should be trivial to listen to this event and surface the version on the UI.

For Example

<img width="1439" alt="screen shot 2017-04-06 at 3 23 41 pm" src="https://cloud.githubusercontent.com/assets/8295799/25092650/41f3970a-2354-11e7-9b0d-4646d0adeb61.png">

<img width="1399" alt="screen shot 2017-04-17 at 9 59 33 am" src="https://cloud.githubusercontent.com/assets/8295799/25092743/9f9e2f28-2354-11e7-9605-f2f1c63f21fe.png">

{"Event":"SparkListenerLogStart","Spark Version":"2.0.0"}


Modified the SparkUI for the History Server to listen to the SparkListenerLogStart event, extract the version, and print it.

## How was this patch tested?

Manual testing of UI page. Attaching the UI screenshot changes here


Author: Sanket <schintap@untilservice-lm>

Closes #17658 from redsanket/SPARK-20355.

## What changes were proposed in this pull request?

Remove ML methods we deprecated in 2.1.

## How was this patch tested?

Existing tests.

Author: Yanbo Liang <ybliang8@gmail.com>

Closes #17867 from yanboliang/spark-20606.

## What changes were proposed in this pull request?

Currently the cacheTable API only supports MEMORY_AND_DISK. This PR adds an additional API that takes a storage level.

## How was this patch tested?

unit tests

Author: madhu <phatak.dev@gmail.com>

Closes #17802 from phatak-dev/cacheTableAPI.

## What changes were proposed in this pull request?

With [SPARK-13992](https://issues.apache.org/jira/browse/SPARK-13992), Spark supports persisting data into off-heap memory, but the usage of on-heap and off-heap memory is not currently exposed, which makes it inconvenient for users to monitor and profile. This PR proposes to expose off-heap as well as on-heap memory usage in various places:

1. Spark UI's executor page will display both on-heap and off-heap memory usage.

2. REST requests return both on-heap and off-heap memory.

3. The usage is also available from MetricsSystem.

4. Finally, the usage can be obtained programmatically from SparkListener.

Attach the UI changes:

Backward compatibility is also considered for the event log and REST API. Old event logs can still be replayed, with off-heap usage displayed as 0. The REST API only adds new fields, so JSON backward compatibility is kept.

## How was this patch tested?

Unit test added and manual verification.

Author: jerryshao <sshao@hortonworks.com>

Closes #14617 from jerryshao/SPARK-17019.

## What changes were proposed in this pull request?

This patch adds a `compressed` method to ML `Matrix` class, which returns the minimal storage representation of the matrix - either sparse or dense. Because the space occupied by a sparse matrix is dependent upon its layout (i.e. column major or row major), this method must consider both cases. It may also be useful to force the layout to be column or row major beforehand, so an overload is added which takes in a `columnMajor: Boolean` parameter.

The compressed implementation relies upon two new abstract methods `toDense(columnMajor: Boolean)` and `toSparse(columnMajor: Boolean)`, similar to the compressed method implemented in the `Vector` class. These methods also allow the layout of the resulting matrix to be specified via the `columnMajor` parameter. More detail on the new methods is given below.

## How was this patch tested?

Added many new unit tests

## New methods (summary, not exhaustive list)

**Matrix trait**

- `private[ml] def toDenseMatrix(columnMajor: Boolean): DenseMatrix` (abstract) - converts the matrix (either sparse or dense) to dense format

- `private[ml] def toSparseMatrix(columnMajor: Boolean): SparseMatrix` (abstract) - converts the matrix (either sparse or dense) to sparse format

- `def toDense: DenseMatrix = toDense(true)` - converts the matrix (either sparse or dense) to dense format in column major layout

- `def toSparse: SparseMatrix = toSparse(true)` - converts the matrix (either sparse or dense) to sparse format in column major layout

- `def compressed: Matrix` - finds the minimum space representation of this matrix, considering both column and row major layouts, and converts it

- `def compressed(columnMajor: Boolean): Matrix` - finds the minimum space representation of this matrix considering only column OR row major, and converts it

**DenseMatrix class**

- `private[ml] def toDenseMatrix(columnMajor: Boolean): DenseMatrix` - converts the dense matrix to a dense matrix, optionally changing the layout (data is NOT duplicated if the layouts are the same)

- `private[ml] def toSparseMatrix(columnMajor: Boolean): SparseMatrix` - converts the dense matrix to sparse matrix, using the specified layout

**SparseMatrix class**

- `private[ml] def toDenseMatrix(columnMajor: Boolean): DenseMatrix` - converts the sparse matrix to a dense matrix, using the specified layout

- `private[ml] def toSparseMatrix(columnMajor: Boolean): SparseMatrix` - converts the sparse matrix to sparse matrix. If the sparse matrix contains any explicit zeros, they are removed. If the layout requested does not match the current layout, data is copied to a new representation. If the layouts match and no explicit zeros exist, the current matrix is returned.

Author: sethah <seth.hendrickson16@gmail.com>

Closes #15628 from sethah/matrix_compress.

This commit adds a killTaskAttempt method to SparkContext, to allow users to kill tasks so that they can be re-scheduled elsewhere.

This also refactors the task kill path to allow specifying a reason for the task kill. The reason is propagated opaquely through events, and will show up in the UI automatically as `(N killed: $reason)` and `TaskKilled: $reason`. Without this change, there is no way to provide the user feedback through the UI.

Currently used reasons are "stage cancelled", "another attempt succeeded", and "killed via SparkContext.killTask". The user can also specify a custom reason through `SparkContext.killTask`.

cc rxin

In the stage overview UI the reasons are summarized:

Within the stage UI you can see individual task kill reasons:

Existing tests, tried killing some stages in the UI and verified the messages are as expected.

Author: Eric Liang <ekl@databricks.com>

Author: Eric Liang <ekl@google.com>

Closes #17166 from ericl/kill-reason.

## What changes were proposed in this pull request?

An additional trigger and trigger executor that will execute a single trigger only. One can use this OneTime trigger to have more control over the scheduling of triggers.

In addition, this patch requires an optimization to StreamExecution that logs a commit record at the end of successfully processing a batch. This new commit log will be used to determine the next batch (offsets) to process after a restart, instead of using the offset log itself to determine what batch to process next after restart; using the offset log to determine this would process the previously logged batch, always, thus not permitting a OneTime trigger feature.
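The restart decision described above can be sketched as a small pure function. `Resume`, `Restart`, and `resumePoint` are hypothetical names for illustration and not part of StreamExecution; the inputs are the latest batch id found in the offset log and in the commit log, if any.

```scala
// Hypothetical helper; illustrates the decision, not StreamExecution's code.
final case class Resume(batchId: Long, reprocess: Boolean)

object Restart {
  def resumePoint(lastOffsetBatch: Option[Long], lastCommittedBatch: Option[Long]): Resume =
    lastOffsetBatch match {
      // Nothing was ever logged: start from the first batch.
      case None => Resume(0L, reprocess = false)
      // The last logged batch was committed: move on to a new batch.
      case Some(n) if lastCommittedBatch.exists(_ >= n) => Resume(n + 1, reprocess = false)
      // Offsets were logged but the commit is missing: run that batch again.
      case Some(n) => Resume(n, reprocess = true)
    }
}
```

Without the commit log only the last case would be available, so the previously logged batch would always be re-run on restart, which is what prevents a run-once trigger from working.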

## How was this patch tested?

A number of existing tests have been revised. These tests all assumed that when restarting a stream, the last batch in the offset log is to be re-processed. Given that we now have a commit log that will tell us if that last batch was processed successfully, the results/assumptions of those tests needed to be revised accordingly.

In addition, a OneTime trigger test was added to StreamingQuerySuite, which tests:

- The semantics of OneTime trigger (i.e., on start, execute a single batch, then stop).

- The case when the commit log was not able to successfully log the completion of a batch before restart, which would mean that we should fall back to what's in the offset log.

- A OneTime trigger execution that results in an exception being thrown.
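The restart behaviour described above (consult the commit log first, and fall back to the offset log when the last batch was not committed) can be sketched as a pure function. The `BatchRecovery` object and its parameters are hypothetical names for this illustration; the real logic lives in StreamExecution and is more involved.

```scala
object BatchRecovery {
  /**
   * @param latestOffsetBatch    id of the last batch written to the offset log, if any
   * @param latestCommittedBatch id of the last batch written to the commit log, if any
   * @return (batchId, rerun): the batch to run next and whether it re-runs a logged batch
   */
  def nextBatch(
      latestOffsetBatch: Option[Long],
      latestCommittedBatch: Option[Long]): (Long, Boolean) =
    (latestOffsetBatch, latestCommittedBatch) match {
      // Nothing was ever logged: start from the first batch.
      case (None, _) => (0L, false)
      // The last logged batch was committed: move on to the next one.
      case (Some(logged), Some(committed)) if committed == logged => (logged + 1, false)
      // No matching commit record: fall back to what is in the offset log.
      case (Some(logged), _) => (logged, true)
    }
}
```

With only an offset log, the third case would apply on every restart, which is why a trigger that runs exactly one batch needs the commit record.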

marmbrus tdas zsxwing

Author: Tyson Condie <tcondie@gmail.com>

Author: Tathagata Das <tathagata.das1565@gmail.com>

Closes #17219 from tcondie/stream-commit.

## What changes were proposed in this pull request?

This PR is an enhancement to ML StringIndexer.

Before this PR, StringIndexer supported only the "skip" and "error" options for dealing with unseen records.

However, those unseen records might still be useful, and in certain use cases users would like to keep the unseen labels. This PR enables StringIndexer to keep unseen labels by assigning them the index [numLabels].

Before:

`StringIndexer().setHandleInvalid("skip")`

`StringIndexer().setHandleInvalid("error")`

After (adds a third option, "keep"):

`StringIndexer().setHandleInvalid("keep")`
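To make the three options concrete, here is a minimal, Spark-free sketch of the indexing rule. The `HandleInvalidSketch` object is a hypothetical helper written for this description; it only mirrors the semantics stated above, where unseen labels share the index `numLabels` under "keep".

```scala
object HandleInvalidSketch {
  /** Indexes `values` against the fitted `labels`, applying the given handleInvalid policy. */
  def index(labels: Seq[String], values: Seq[String], handleInvalid: String): Seq[Double] = {
    val labelToIndex = labels.zipWithIndex.toMap
    values.flatMap { v =>
      labelToIndex.get(v) match {
        case Some(i) => Some(i.toDouble)
        case None =>
          handleInvalid match {
            case "keep" => Some(labels.size.toDouble) // all unseen labels map to numLabels
            case "skip" => None                       // the row is dropped
            case "error" => throw new IllegalArgumentException(s"Unseen label: $v")
            case other => throw new IllegalArgumentException(s"Unsupported option: $other")
          }
      }
    }
  }
}
```

For example, with labels `a` and `b`, the unseen value `c` gets index 2.0 under "keep", is dropped under "skip", and raises an exception under "error".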

## How was this patch tested?

Test added in StringIndexerSuite

Signed-off-by: VinceShieh <vincent.xie@intel.com>

Author: VinceShieh <vincent.xie@intel.com>

Closes #16883 from VinceShieh/spark-17498.

## What changes were proposed in this pull request?

Fault tolerance in Spark requires special handling of shuffle fetch failures. The Executor would catch FetchFailedException and send a special message back to the driver.

However, intervening user code could intercept that exception and wrap it with something else. This even happens in SparkSQL. So rather than checking only the thrown exception, we'll store the fetch failure directly in the TaskContext, where users can't touch it.
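The idea can be shown with a small, self-contained model. `FetchFailed`, `TaskCtx`, and `ExecutorSketch` below are simplified, hypothetical stand-ins (not the real FetchFailedException, TaskContext, or Executor); the point is only that the outcome is decided from the context rather than from the exception that reaches the executor.

```scala
import scala.util.control.NonFatal

// Hypothetical, simplified stand-ins; not Spark's real classes.
final class FetchFailed(msg: String) extends Exception(msg)

final class TaskCtx {
  @volatile private var fetchFailure: Option[FetchFailed] = None
  def setFetchFailed(e: FetchFailed): Unit = { fetchFailure = Some(e) }
  def fetchFailed: Option[FetchFailed] = fetchFailure
}

object ExecutorSketch {
  /** Simulates a shuffle read that fails and records the failure before throwing. */
  def fetch(ctx: TaskCtx): Nothing = {
    val e = new FetchFailed("block missing")
    ctx.setFetchFailed(e)
    throw e
  }

  /** Runs the task and classifies the outcome from the context, not the exception type. */
  def run(ctx: TaskCtx)(task: TaskCtx => Unit): String =
    try {
      task(ctx)
      "success"
    } catch {
      // Even if user code wrapped the exception, the recorded failure is still visible.
      case NonFatal(_) if ctx.fetchFailed.isDefined => "fetch failed"
      case NonFatal(_) => "task failed"
    }
}
```

In this model, a task that catches `FetchFailed` and rethrows it inside a `RuntimeException` is still reported as "fetch failed", because the failure was recorded in the context before user code could see it.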

## How was this patch tested?

Added a test case which failed before the fix. Full test suite via jenkins.

Author: Imran Rashid <irashid@cloudera.com>

Closes #16639 from squito/SPARK-19276.

The REST API has a security filter that performs auth checks based on the UI root's security manager. That works fine when the UI root is the app's UI, but not when it's the history server.

In the SHS case, all users would be allowed to see all applications through the REST API, even if the UI itself wouldn't be available to them.

This change adds auth checks for each app access through the API too, so that only authorized users can see the app's data.
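A per-application check of this kind could look roughly like the sketch below. `AppAcls` and `ApiAccess` are hypothetical names, the ACL model is deliberately simplified, and treating an unauthenticated (null) user as unauthorized is a choice made for this sketch rather than a statement about the actual security manager.

```scala
// Hypothetical per-application ACL record; field names are illustrative only.
final case class AppAcls(owner: String, viewAcls: Set[String], aclsEnabled: Boolean)

object ApiAccess {
  /**
   * @param remoteUser the value HttpServletRequest.getRemoteUser() would return,
   *                   which is null when the request is not authenticated
   */
  def canView(remoteUser: String, acls: AppAcls): Boolean =
    !acls.aclsEnabled ||
      (remoteUser != null &&
        (remoteUser == acls.owner || acls.viewAcls.contains(remoteUser)))
}
```

The check is evaluated against the ACLs of the requested application, so a user who can view one application in the history server does not automatically see the others.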

The change also modifies the existing security filter to use `HttpServletRequest.getRemoteUser()`, which is used in other places. That is not necessarily the same as the principal's name; for example, when using Hadoop's SPNEGO auth filter, the remote user strips the realm information, which then matches the user name registered as the owner of the application.

I also renamed the UIRootFromServletContext trait to a more generic name since I'm using it to store more context information now.

Tested manually with an authentication filter enabled.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes #16978 from vanzin/SPARK-19652.

## What changes were proposed in this pull request?

This PR adds a convenience function to the Python `JavaWrapper` that makes it easy to create a Py4J JavaArray compatible with existing class constructors that take a Scala `Array` as input, so that a separate Java/Python-friendly constructor is not necessary. The function takes a Java class as input, which Py4J uses to create a Java array of that class. As an example, `OneVsRest` has been updated to use this function, and its alternate constructor has been removed.

## How was this patch tested?

Added unit tests for the new convenience function and updated `OneVsRest` doctests which use this to persist the model.

Author: Bryan Cutler <cutlerb@gmail.com>

Closes #14725 from BryanCutler/pyspark-new_java_array-CountVectorizer-SPARK-17161.

## What changes were proposed in this pull request?

Although the Spark history server UI shows the task ‘status’ and ‘duration’ fields, it does not expose them in the REST API response, so API users cannot determine task status and duration. The history server already has access to both values from the event log. This patch exposes the task ‘status’ and ‘duration’ fields in the Spark history server REST API.

## How was this patch tested?

Modified existing test cases in org.apache.spark.deploy.history.HistoryServerSuite.

Author: Parag Chaudhari <paragpc@amazon.com>

Closes #16473 from paragpc/expose_task_status.

## What changes were proposed in this pull request?

Add the log-likelihood to GMM.summary.

## How was this patch tested?

added tests

Author: Zheng RuiFeng <ruifengz@foxmail.com>

Author: Ruifeng Zheng <ruifengz@foxmail.com>

Closes #12064 from zhengruifeng/gmm_metric.

## What changes were proposed in this pull request?

In https://github.com/apache/spark/pull/16296, we reached a consensus that we should hide the external/managed table concept from users and expose only a custom table path.

This PR renames `Catalog.createExternalTable` to `createTable` (the old versions are kept for backward compatibility), and sets the table type to EXTERNAL only if `path` is specified in the options.
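The table-type rule can be summarized in a few lines. `TableTypeSketch` and its `TableType` values are hypothetical names for this illustration, not the catalog classes touched by this PR.

```scala
object TableTypeSketch {
  sealed trait TableType
  case object Managed extends TableType
  case object External extends TableType

  /** A table is EXTERNAL only when the caller supplies a custom `path` option. */
  def tableTypeFor(options: Map[String, String]): TableType =
    if (options.contains("path")) External else Managed
}
```

Users therefore never choose a table type directly; supplying or omitting a path is the only decision they make.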

## How was this patch tested?

new tests in `CatalogSuite`

Author: Wenchen Fan <wenchen@databricks.com>

Closes #16528 from cloud-fan/create-table.

## What changes were proposed in this pull request?

This PR follows on from #16000 and completes #15904.

**Description**

- Augment the `org.apache.spark.status.api.v1` package for serving streaming information.

- Retrieve the streaming information through StreamingJobProgressListener.

> This API should cover exactly the same amount of information as you can get from the web interface. The implementation is based on the current REST implementation of spark-core and will be available for running applications only.
>
> https://issues.apache.org/jira/browse/SPARK-18537

## How was this patch tested?

Local test.

Author: saturday_s <shi.indetail@gmail.com>

Author: Chan Chor Pang <ChorPang.Chan@access-company.com>

Author: peterCPChan <universknight@gmail.com>

Closes #16253 from saturday-shi/SPARK-18537.

### What changes were proposed in this pull request?

Currently, we only have a SQL interface for recovering all the partitions in the directory of a table and updating the catalog: `MSCK REPAIR TABLE` or `ALTER TABLE table RECOVER PARTITIONS`. (Actually, it is very hard for me to remember `MSCK`, and I have no clue what it means.)

After the new "Scalable Partition Handling", table repair becomes much more important for making the data in a created partitioned data source table visible.

Thus, this PR adds it to the Catalog interface. After this PR, users can repair the table by calling:

```Scala

spark.catalog.recoverPartitions("testTable")

```

### How was this patch tested?

Modified the existing test cases.

Author: gatorsmile <gatorsmile@gmail.com>

Closes #16356 from gatorsmile/repairTable.

Based on an informal survey, users find this option easier to understand / remember.

Author: Michael Armbrust <michael@databricks.com>

Closes #16182 from marmbrus/renameRecentProgress.

## What changes were proposed in this pull request?

Here are the major changes in this PR.

- Added the ability to recover `StreamingQuery.id` from checkpoint location, by writing the id to `checkpointLoc/metadata`.

- Added `StreamingQuery.runId` which is unique for every query started and does not persist across restarts. This is to identify each restart of a query separately (same as earlier behavior of `id`).

- Removed auto-generation of `StreamingQuery.name`. The purpose of the name was to provide an identifier that is stable across restarts, but since `id` is precisely that, there is no need for an auto-generated name. This means the name becomes purely cosmetic and is null by default.

- Added `runId` to `StreamingQueryListener` events and `StreamingQueryProgress`.

Implementation details

- Renamed existing `StreamExecutionMetadata` to `OffsetSeqMetadata`, and moved it to the file `OffsetSeq.scala`, because that is what this metadata is tied to. Also did some refactoring to make the code cleaner (got rid of a lot of `.json` and `.getOrElse("{}")`).

- Added the `id` as the new `StreamMetadata`.

- When a StreamingQuery is created it gets or writes the `StreamMetadata` from `checkpointLoc/metadata`.

- All internal logging in `StreamExecution` uses `(name, id, runId)` instead of just `name`
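The difference between `id` and `runId` can be sketched without Spark. The `QueryIds` object below is a hypothetical illustration: it stores the id as plain text in `checkpointLoc/metadata`, which is an assumption of this sketch and not necessarily the on-disk format used by `StreamMetadata`.

```scala
import java.nio.charset.StandardCharsets.UTF_8
import java.nio.file.{Files, Path}
import java.util.UUID

object QueryIds {
  /** Reads the persisted id from `checkpointLoc/metadata`, or creates and stores a new one. */
  def getOrCreateId(checkpointLoc: Path): UUID = {
    val metadata = checkpointLoc.resolve("metadata")
    if (Files.exists(metadata)) {
      UUID.fromString(new String(Files.readAllBytes(metadata), UTF_8).trim)
    } else {
      val id = UUID.randomUUID()
      Files.createDirectories(checkpointLoc)
      Files.write(metadata, id.toString.getBytes(UTF_8))
      id
    }
  }

  /** Returns (id, runId): id survives restarts, runId is fresh for every start. */
  def start(checkpointLoc: Path): (UUID, UUID) =
    (getOrCreateId(checkpointLoc), UUID.randomUUID())
}
```

Starting twice against the same checkpoint location yields the same `id` but two different `runId` values, which is what lets listeners tell individual restarts apart.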

TODO

- [x] Test handling of name=null in json generation of StreamingQueryProgress

- [x] Test handling of name=null in json generation of StreamingQueryListener events

- [x] Test python API of runId

## How was this patch tested?

Updated unit tests and new unit tests

Author: Tathagata Das <tathagata.das1565@gmail.com>

Closes #16113 from tdas/SPARK-18657.