## What changes were proposed in this pull request?

Refactor `MiscBenchmark` to use main method.

Generate benchmark result:

```sh

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.MiscBenchmark"

```

## How was this patch tested?

manual tests

Closes #22500 from wangyum/SPARK-25488.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Yuming Wang <wgyumg@gmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Speed up the tests by replacing exhaustive loops over all possible values with a single randomly chosen value.

- `read partitioning bucketed tables with bucket pruning filters`: reduced from 55s to 7s

- `read partitioning bucketed tables having composite filters`: reduced from 54s to 8s

- total time: reduced from 288s to 192s
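
The idea can be sketched roughly as follows: instead of running the check for every candidate value, each run picks one value at random, so coverage accumulates over repeated CI runs. All names here (`RandomizedCheck`, `checkBucket`, `numBuckets`) are illustrative assumptions and are not taken from the patch.

```scala
import scala.util.Random

object RandomizedCheck {
  // Hypothetical stand-in for an expensive per-value test body.
  def checkBucket(bucketValue: Int, numBuckets: Int): Boolean =
    bucketValue >= 0 && bucketValue < numBuckets

  // Before: exhaustive loop, cost grows with numBuckets.
  def exhaustive(numBuckets: Int): Boolean =
    (0 until numBuckets).forall(v => checkBucket(v, numBuckets))

  // After: a single randomly chosen value per run.
  def randomized(numBuckets: Int, rng: Random = new Random()): Boolean =
    checkBucket(rng.nextInt(numBuckets), numBuckets)
}
```

The trade-off is that a single run no longer proves every value works; a failure may only show up on some runs.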

## How was this patch tested?

Unit test

Closes #22640 from gengliangwang/fastenBucketedReadSuite.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Currently, the CSV schema inference code inlines the logic of `TypeCoercion.findTightestCommonType`. This is a minor refactor that calls the common type coercion code where applicable, so that CSV inference benefits from any improvement to the base method.
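
A minimal sketch of the shape of such a refactor, using a deliberately simplified type lattice rather than Spark's `DataType` hierarchy. The names `SimpleType`, `mergeInferredTypes` and the fallback to a string type are assumptions made for illustration only.

```scala
object TightestTypeSketch {
  // Simplified, hypothetical type lattice; not Spark's DataType hierarchy.
  sealed trait SimpleType
  case object NullT extends SimpleType
  case object IntT extends SimpleType
  case object LongT extends SimpleType
  case object DoubleT extends SimpleType
  case object StringT extends SimpleType

  private val numericPrecedence: Seq[SimpleType] = Seq(IntT, LongT, DoubleT)

  // Shared helper: the narrowest type both inputs can be widened to, if any.
  def findTightestCommonType(a: SimpleType, b: SimpleType): Option[SimpleType] = (a, b) match {
    case (x, y) if x == y => Some(x)
    case (NullT, y) => Some(y)
    case (x, NullT) => Some(x)
    case (x, y) if numericPrecedence.contains(x) && numericPrecedence.contains(y) =>
      Some(numericPrecedence(math.max(numericPrecedence.indexOf(x), numericPrecedence.indexOf(y))))
    case _ => None
  }

  // CSV-style inference delegates to the shared helper and only adds its own fallback.
  def mergeInferredTypes(a: SimpleType, b: SimpleType): SimpleType =
    findTightestCommonType(a, b).getOrElse(StringT)
}
```

Keeping only the fallback in the caller means any new rule added to the shared helper is picked up automatically.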

Thanks to MaxGekk for finding this while reviewing another PR.

## How was this patch tested?

This is a minor refactor. Existing tests are used to verify the change.

Closes #22619 from dilipbiswal/csv_minor.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Adds support for setting a limit in the SQL `split` function.
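
For orientation, this is how a limit behaves in the JVM's own `String.split(regex, limit)`. Whether the SQL function mirrors these semantics in every detail is an assumption here; `SplitLimitSketch` and `splitWithLimit` are illustrative names.

```scala
object SplitLimitSketch {
  // java.lang.String.split(regex, limit): a positive limit caps the number of
  // resulting elements, and the last element keeps the unsplit remainder.
  // A negative limit applies the pattern as many times as possible.
  def splitWithLimit(input: String, regex: String, limit: Int): List[String] =
    input.split(regex, limit).toList
}
```

For example, `SplitLimitSketch.splitWithLimit("a,b,c,d", ",", 2)` yields `List("a", "b,c,d")`.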

## How was this patch tested?

1. Updated unit tests

2. Tested using Scala spark shell


Closes #22227 from phegstrom/master.

Authored-by: Parker Hegstrom <phegstrom@palantir.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This test case verifies that the result of a UDF is cached when the underlying DataFrame is cached, and that the UDF is not evaluated again when the cached DataFrame is used.

To reduce the runtime, we:

1) Use a single-partition DataFrame, so the total execution time of the UDF is more deterministic.

2) Cut down the size of the DataFrame from 10 to 2.

3) Reduce the sleep time in the UDF from 5 seconds to 2 seconds.

4) Reduce the `failAfter` condition from 3 to 2.

With the above changes, it takes about 4 seconds to cache the first DataFrame, and the subsequent check takes a few hundred milliseconds.

The new runtime for 5 consecutive runs of this test is as follows:

```

[info] - cache UDF result correctly (4 seconds, 906 milliseconds)

[info] - cache UDF result correctly (4 seconds, 281 milliseconds)

[info] - cache UDF result correctly (4 seconds, 288 milliseconds)

[info] - cache UDF result correctly (4 seconds, 355 milliseconds)

[info] - cache UDF result correctly (4 seconds, 280 milliseconds)

```

## How was this patch tested?

This is a test fix.

Closes #22638 from dilipbiswal/SPARK-25610.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The total run time of `HiveSparkSubmitSuite` is about 10 minutes.

Since the related code is stable, add the `ExtendedHiveTest` tag to it.

## How was this patch tested?

Unit test.

Closes #22642 from gengliangwang/addTagForHiveSparkSubmitSuite.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Before ORC 1.5.3, `orc.dictionary.key.threshold` and `hive.exec.orc.dictionary.key.size.threshold` are applied to all columns. This has been a big hurdle to enabling dictionary encoding. Starting with ORC 1.5.3, `orc.column.encoding.direct` is added to enforce direct encoding selectively, column by column. This PR aims to add that feature by upgrading ORC from 1.5.2 to 1.5.3.

The following are the patches in ORC 1.5.3; this feature is the only one directly related to Spark.

```

ORC-406: ORC: Char(n) and Varchar(n) writers truncate to n bytes & corrupts multi-byte data (gopalv)

ORC-403: [C++] Add checks to avoid invalid offsets in InputStream

ORC-405: Remove calcite as a dependency from the benchmarks.

ORC-375: Fix libhdfs on gcc7 by adding #include <functional> two places.

ORC-383: Parallel builds fails with ConcurrentModificationException

ORC-382: Apache rat exclusions + add rat check to travis

ORC-401: Fix incorrect quoting in specification.

ORC-385: Change RecordReader to extend Closeable.

ORC-384: [C++] fix memory leak when loading non-ORC files

ORC-391: [c++] parseType does not accept underscore in the field name

ORC-397: Allow selective disabling of dictionary encoding. Original patch was by Mithun Radhakrishnan.

ORC-389: Add ability to not decode Acid metadata columns

```

## How was this patch tested?

Pass the Jenkins with newly added test cases.

Closes #22622 from dongjoon-hyun/SPARK-25635.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Improve the runtime by reducing the number of partitions created in the test. The number of partitions is reduced from 280 to 60.

Here are the test times for the `getPartitionsByFilter returns all partitions` test on my laptop.

```

[info] - 0.13: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (4 seconds, 230 milliseconds)

[info] - 0.14: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (3 seconds, 576 milliseconds)

[info] - 1.0: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (3 seconds, 495 milliseconds)

[info] - 1.1: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (6 seconds, 728 milliseconds)

[info] - 1.2: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (7 seconds, 260 milliseconds)

[info] - 2.0: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (8 seconds, 270 milliseconds)

[info] - 2.1: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (6 seconds, 856 milliseconds)

[info] - 2.2: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (7 seconds, 587 milliseconds)

[info] - 2.3: getPartitionsByFilter returns all partitions when hive.metastore.try.direct.sql=false (7 seconds, 230 milliseconds)

```

## How was this patch tested?

Test only.

Closes #22644 from dilipbiswal/SPARK-25626.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The Java `foreachBatch` API in `DataStreamWriter` should accept `java.lang.Long` rather than `scala.Long`.

## How was this patch tested?

New java test.

Closes #22633 from zsxwing/fix-java-foreachbatch.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: Shixiong Zhu <zsxwing@gmail.com>

## What changes were proposed in this pull request?

When constructing a DataFrame from a Java bean, using nested beans throws an error despite [documentation](http://spark.apache.org/docs/latest/sql-programming-guide.html#inferring-the-schema-using-reflection) stating otherwise. This PR aims to add that support.

This PR does not yet add nested beans support in array or List fields. This can be added later or in another PR.

## How was this patch tested?

Nested bean was added to the appropriate unit test.

Also manually tested in Spark shell on code emulating the referenced JIRA:

```

scala> import scala.beans.BeanProperty

import scala.beans.BeanProperty

scala> class SubCategory(@BeanProperty var id: String, @BeanProperty var name: String) extends Serializable

defined class SubCategory

scala> class Category(@BeanProperty var id: String, @BeanProperty var subCategory: SubCategory) extends Serializable

defined class Category

scala> import scala.collection.JavaConverters._

import scala.collection.JavaConverters._

scala> spark.createDataFrame(Seq(new Category("s-111", new SubCategory("sc-111", "Sub-1"))).asJava, classOf[Category])

java.lang.IllegalArgumentException: The value (SubCategory@65130cf2) of the type (SubCategory) cannot be converted to struct<id:string,name:string>

at org.apache.spark.sql.catalyst.CatalystTypeConverters$StructConverter.toCatalystImpl(CatalystTypeConverters.scala:262)

at org.apache.spark.sql.catalyst.CatalystTypeConverters$StructConverter.toCatalystImpl(CatalystTypeConverters.scala:238)

at org.apache.spark.sql.catalyst.CatalystTypeConverters$CatalystTypeConverter.toCatalyst(CatalystTypeConverters.scala:103)

at org.apache.spark.sql.catalyst.CatalystTypeConverters$$anonfun$createToCatalystConverter$2.apply(CatalystTypeConverters.scala:396)

at org.apache.spark.sql.SQLContext$$anonfun$beansToRows$1$$anonfun$apply$1.apply(SQLContext.scala:1108)

at org.apache.spark.sql.SQLContext$$anonfun$beansToRows$1$$anonfun$apply$1.apply(SQLContext.scala:1108)

at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:234)

at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:234)

at scala.collection.IndexedSeqOptimized$class.foreach(IndexedSeqOptimized.scala:33)

at scala.collection.mutable.ArrayOps$ofRef.foreach(ArrayOps.scala:186)

at scala.collection.TraversableLike$class.map(TraversableLike.scala:234)

at scala.collection.mutable.ArrayOps$ofRef.map(ArrayOps.scala:186)

at org.apache.spark.sql.SQLContext$$anonfun$beansToRows$1.apply(SQLContext.scala:1108)

at org.apache.spark.sql.SQLContext$$anonfun$beansToRows$1.apply(SQLContext.scala:1106)

at scala.collection.Iterator$$anon$11.next(Iterator.scala:410)

at scala.collection.Iterator$class.toStream(Iterator.scala:1320)

at scala.collection.AbstractIterator.toStream(Iterator.scala:1334)

at scala.collection.TraversableOnce$class.toSeq(TraversableOnce.scala:298)

at scala.collection.AbstractIterator.toSeq(Iterator.scala:1334)

at org.apache.spark.sql.SparkSession.createDataFrame(SparkSession.scala:423)

... 51 elided

```

New behavior:

```

scala> spark.createDataFrame(Seq(new Category("s-111", new SubCategory("sc-111", "Sub-1"))).asJava, classOf[Category])

res0: org.apache.spark.sql.DataFrame = [id: string, subCategory: struct<id: string, name: string>]

scala> res0.show()

+-----+---------------+

|   id|    subCategory|

+-----+---------------+

|s-111|[sc-111, Sub-1]|

+-----+---------------+

```

Closes #22527 from michalsenkyr/SPARK-17952.

Authored-by: Michal Senkyr <mike.senkyr@gmail.com>

Signed-off-by: Takuya UESHIN <ueshin@databricks.com>

Hi all,

The Jackson version in Spark is incompatible with more recent upstream versions, so this bumps Jackson to a newer release. I ran into issues with Azure CosmosDB, which uses a more recent version of Jackson. These can be worked around by adding exclusions, after which everything works without issues, so there are no breaking changes in the APIs.

I would also consider bumping the version of Jackson in Spark. I would suggest keeping dependencies up to date, since this issue will come up more frequently in the future.

## What changes were proposed in this pull request?

Bump Jackson to 2.9.6

## How was this patch tested?

Compiled and tested it locally to see if anything broke.


Closes #21596 from Fokko/fd-bump-jackson.

Authored-by: Fokko Driesprong <fokkodriesprong@godatadriven.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

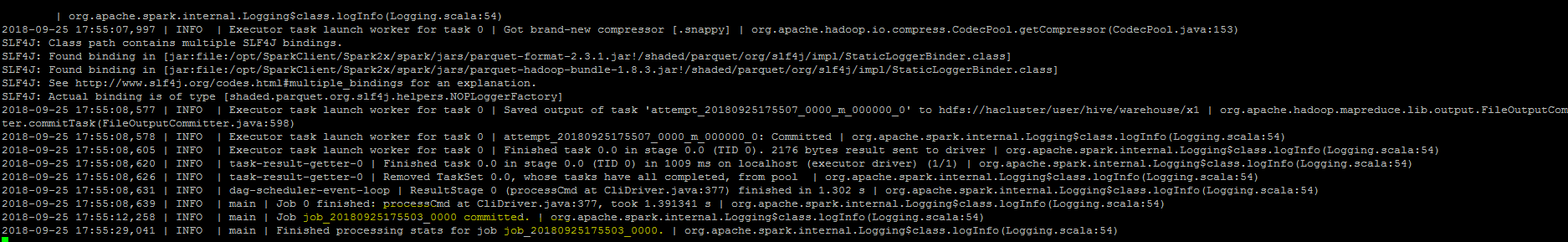

As part of the insert command in `FileFormatWriter`, a job context is created for handling the write operation. While initializing the job context using the `setupJob()` API in `HadoopMapReduceCommitProtocol`, we set the job ID in the `JobContext` configuration. Since `FileFormatWriter` gets the job ID directly from the MapReduce `JobContext`, the job ID comes back as null when it is written to the log. As a solution, we get the job ID from the configuration of the MapReduce `JobContext`.

## How was this patch tested?

Manually, verified the logs after the changes.

Closes #22572 from sujith71955/master_log_issue.

Authored-by: s71955 <sujithchacko.2010@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

While working on another PR, I noticed that there is quite a lot of legacy Java in there that can be cleaned up, for example by using Java 8 features such as:

- Collection libraries

- Try-with-resource blocks

No code has been changed

What are your thoughts on this?

This makes the code easier to read, and using try-with-resources makes it less likely that we forget to close something.


Closes #22399 from Fokko/SPARK-25408.

Authored-by: Fokko Driesprong <fokkodriesprong@godatadriven.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

The test `cast string to timestamp` used to run for all time zones, so it ran more than 600 times. Running the test for a significant subset of time zones is probably good enough, and choosing the subset randomly means that all time zones are still covered across different runs.
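
A minimal sketch of such randomized sampling using only the JDK and the Scala standard library. The sample size of 50 and the names `TimeZoneSampling` and `sampleTimeZones` are illustrative assumptions, not values taken from the patch.

```scala
import java.util.TimeZone
import scala.util.Random

object TimeZoneSampling {
  // Every zone ID known to the JVM; the count varies by JDK version.
  val allTimeZoneIds: List[String] = TimeZone.getAvailableIDs.toList

  // Pick `sampleSize` zones at random from the shuffled ID list.
  // The default of 50 is an illustrative choice.
  def sampleTimeZones(sampleSize: Int = 50, rng: Random = new Random()): List[TimeZone] =
    rng.shuffle(allTimeZoneIds).take(sampleSize).map(id => TimeZone.getTimeZone(id))
}
```

Passing a seeded `Random` makes a failing sample reproducible, which helps when a failure only appears for a specific zone.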

## How was this patch tested?

The test time is reduced from more than 2 minutes to 11 seconds.

Closes #22631 from mgaido91/SPARK-25605.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Reduce the time cost of the `DateExpressionsSuite.Hour` test in Jenkins by reducing the number of iterations.

## How was this patch tested?

Manual tests on my local machine.

before:

```

- Hour (34 seconds, 54 milliseconds)

```

after:

```

- Hour (2 seconds, 697 milliseconds)

```

Closes #22632 from wangyum/SPARK-25606.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The PR changes the test introduced for SPARK-22226 so that it no longer runs analysis and optimization on the plan. The scope of the test is code generation, and running those phases is expensive and unnecessary for the test.

The UT was also moved to the `CodeGenerationSuite` which is a better place given the scope of the test.

## How was this patch tested?

Running the UT fails before SPARK-22226 and passes after it. The execution time is about 50% of the original: on my laptop the test now runs in about 23 seconds instead of 50 seconds.

Closes #22629 from mgaido91/SPARK-25609.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Refactor `DatasetBenchmark` to use main method.

Generate benchmark result:

```sh

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.DatasetBenchmark"

```

## How was this patch tested?

manual tests

Closes #22488 from wangyum/SPARK-25479.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

In `SparkPlan.getByteArrayRdd`, we should only call `iter.hasNext` when the limit is not hit, because `iter.hasNext` may produce one row and buffer it, which causes wrong metrics.
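
The effect of the evaluation order can be sketched with a toy iterator that eagerly produces (and counts) a row whenever `hasNext` is asked. `CountingIterator` and both helper methods are hypothetical and only model the behavior described above; they are not the code in `SparkPlan`.

```scala
object HasNextSketch {
  // Hypothetical upstream iterator that computes and buffers a row as soon as
  // hasNext is asked, counting every row it produces.
  final class CountingIterator(total: Int) extends Iterator[Int] {
    var rowsProduced = 0
    private var buffered: Option[Int] = None

    override def hasNext: Boolean = {
      if (buffered.isEmpty && rowsProduced < total) {
        rowsProduced += 1
        buffered = Some(rowsProduced)
      }
      buffered.isDefined
    }

    override def next(): Int = {
      if (!hasNext) throw new NoSuchElementException("exhausted")
      val row = buffered.get
      buffered = None
      row
    }
  }

  // Checks hasNext first: when the limit is reached, one extra row was already produced.
  def takeHasNextFirst(iter: Iterator[Int], limit: Int): Int = {
    var count = 0
    while (iter.hasNext && count < limit) { iter.next(); count += 1 }
    count
  }

  // Checks the limit first: hasNext is never called once the limit is hit.
  def takeLimitFirst(iter: Iterator[Int], limit: Int): Int = {
    var count = 0
    while (count < limit && iter.hasNext) { iter.next(); count += 1 }
    count
  }
}
```

With a limit of 3 over 10 available rows, both variants return 3 rows, but the first leaves `rowsProduced` at 4 while the second leaves it at 3.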

## How was this patch tested?

new tests

Closes #22621 from cloud-fan/range.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In #20850 when writing non-null decimals, instead of zero-ing all the 16 allocated bytes, we zero-out only the padding bytes. Since we always allocate 16 bytes, if the number of bytes needed for a decimal is lower than 9, then this means that the bytes between 8 and 16 are not zero-ed.

I see 2 solutions here:

- we can zero-out all the bytes in advance as it was done before #20850 (safer solution IMHO);

- we can allocate only the needed bytes (may be a bit more efficient in terms of memory used, but I have not investigated the feasibility of this option).

Hence I propose the first solution here in order to fix the correctness issue. We can switch to the second later if we find it to be more efficient.
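
The problem and the first solution can be modeled with a plain byte array standing in for the 16-byte slot. This is a simplified sketch under assumed names (`DecimalSlotSketch`, `writePaddingOnly`, `writeZeroFirst`); it does not reproduce the actual row writer code.

```scala
import java.math.BigInteger
import java.util.Arrays

object DecimalSlotSketch {
  val SlotSize = 16

  private def roundUpToWord(n: Int): Int = (n + 7) / 8 * 8

  // Models the problematic behavior: only the padding up to the next 8-byte word
  // is cleared, so bytes 8 to 15 keep stale data when the value needs fewer than 9 bytes.
  def writePaddingOnly(slot: Array[Byte], value: BigInteger): Unit = {
    val bytes = value.toByteArray
    require(slot.length == SlotSize && bytes.length <= SlotSize)
    System.arraycopy(bytes, 0, slot, 0, bytes.length)
    Arrays.fill(slot, bytes.length, roundUpToWord(bytes.length), 0.toByte)
  }

  // Models the first solution: clear the whole slot, then copy the value in.
  def writeZeroFirst(slot: Array[Byte], value: BigInteger): Unit = {
    val bytes = value.toByteArray
    require(slot.length == SlotSize && bytes.length <= SlotSize)
    Arrays.fill(slot, 0.toByte)
    System.arraycopy(bytes, 0, slot, 0, bytes.length)
  }
}
```

Stale bytes matter because two rows holding the same decimal can then differ byte for byte, which breaks any comparison or hashing done on the raw bytes.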

## How was this patch tested?

Running the test attached in the JIRA + added UT

Closes #22602 from mgaido91/SPARK-25582.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Refactor `UnsafeArrayDataBenchmark` to use main method.

Generate benchmark result:

```sh

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.UnsafeArrayDataBenchmark"

```

## How was this patch tested?

manual tests

Closes #22491 from wangyum/SPARK-25483.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This PR aims to add `BloomFilterBenchmark`. Apache Spark has supported Bloom filters for the ORC data source for a long time. For the Parquet data source, support is expected to be added with the next Parquet release update.

## How was this patch tested?

Manual.

```sh

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.BloomFilterBenchmark"

```

Closes #22605 from dongjoon-hyun/SPARK-25589.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Rename method `benchmark` in `BenchmarkBase` to `runBenchmarkSuite`. Also add comments.

Currently the method name `benchmark` is a bit confusing. It is also the same name used for instances of `Benchmark`:

f246813afb/sql/hive/src/test/scala/org/apache/spark/sql/hive/orc/OrcReadBenchmark.scala (L330-L339)

## How was this patch tested?

Unit test.

Closes #22599 from gengliangwang/renameBenchmarkSuite.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This patch is to bump the master branch version to 3.0.0-SNAPSHOT.

## How was this patch tested?

N/A

Closes #22606 from gatorsmile/bump3.0.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

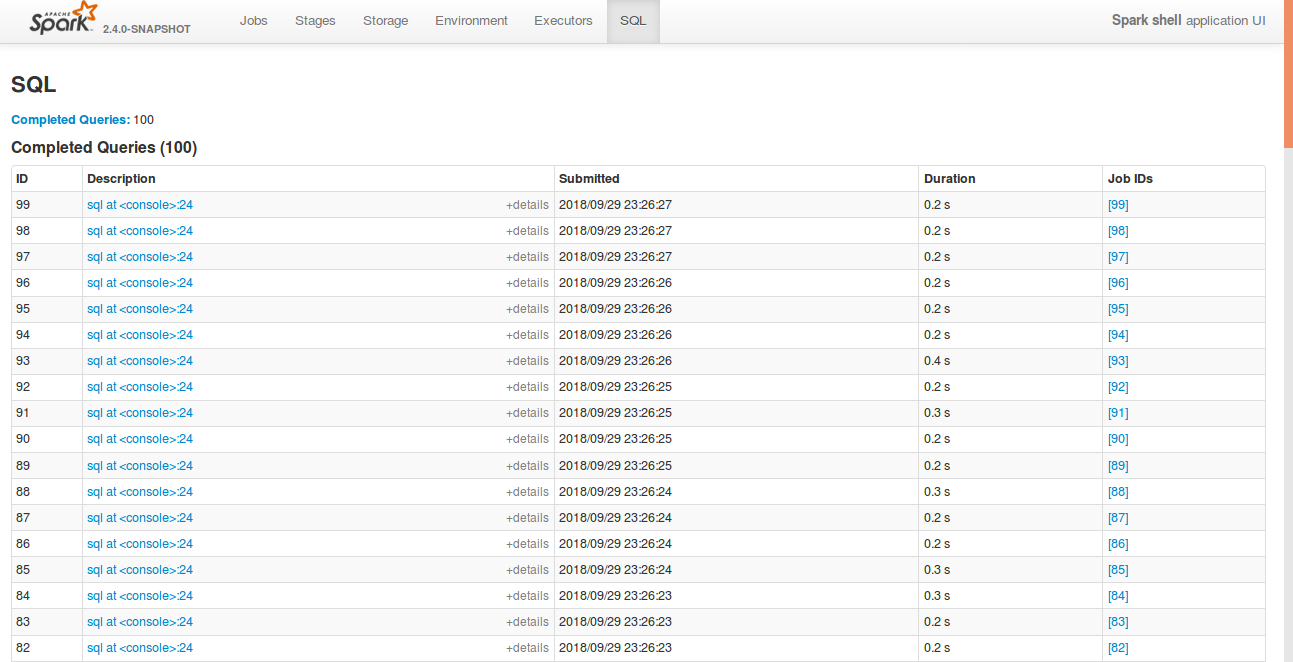

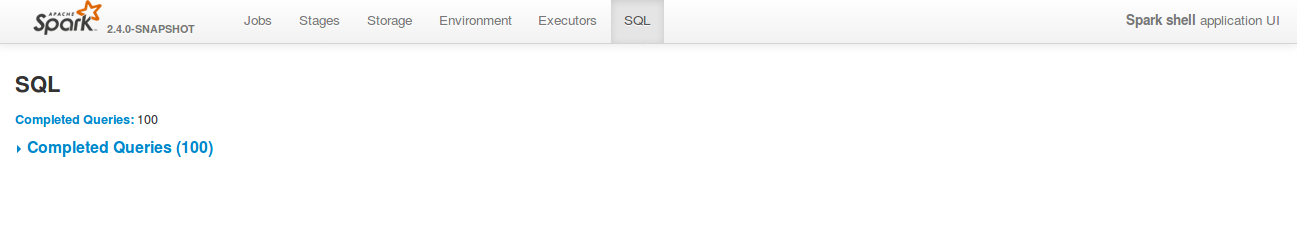

## What changes were proposed in this pull request?

Currently, the SQL tab in the web UI doesn't support hiding tables. Other tabs in the web UI, such as Jobs and Stages, support hiding tables (see SPARK-23024, https://github.com/apache/spark/pull/20216).

This PR adds support for hiding tables in the SQL tab as well.

## How was this patch tested?

bin/spark-shell

```

sql("create table a (id int)")

for(i <- 1 to 100) sql(s"insert into a values ($i)")

```

Open SQL tab in the web UI

**Before fix:**

**After fix:** Consistent with the other tabs.


Closes #22592 from shahidki31/SPARK-25575.

Authored-by: Shahid <shahidki31@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This PR does 2 things:

1. Add a new trait(`SqlBasedBenchmark`) to better support Dataset and DataFrame API.

2. Refactor `AggregateBenchmark` to use main method. Generate benchmark result:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.AggregateBenchmark"

```

## How was this patch tested?

manual tests

Closes #22484 from wangyum/SPARK-25476.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

#22519 introduced a bug that appears when the attributes in the pivot clause are cosmetically different from the output ones (e.g., different case). In particular, the problem is that the PR used a `Set[Attribute]` instead of an `AttributeSet`.
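
The difference can be sketched with a toy attribute type in which `name` is cosmetic and `exprId` carries the identity. `Attr` and both lookup helpers are hypothetical simplifications, not Catalyst classes.

```scala
object AttributeSetSketch {
  // Hypothetical, simplified attribute: `name` is cosmetic, `exprId` is the identity.
  final case class Attr(name: String, exprId: Long)

  // A plain Set compares all fields, so a case difference in the name defeats the lookup.
  def containsByEquality(attrs: Seq[Attr], a: Attr): Boolean =
    attrs.toSet.contains(a)

  // An AttributeSet-like lookup compares only the expression ID.
  def containsByExprId(attrs: Seq[Attr], a: Attr): Boolean =
    attrs.map(_.exprId).toSet.contains(a.exprId)
}
```

With `Seq(Attr("course", 1L))` as the output, looking up `Attr("COURSE", 1L)` fails in the first variant and succeeds in the second.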

## How was this patch tested?

added UT

Closes #22582 from mgaido91/SPARK-25505_followup.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This PR adds a rule to force `.toLowerCase(Locale.ROOT)` or `.toUpperCase(Locale.ROOT)`.

It produces an error as below:

```

[error] Are you sure that you want to use toUpperCase or toLowerCase without the root locale? In most cases, you

[error] should use toUpperCase(Locale.ROOT) or toLowerCase(Locale.ROOT) instead.

[error] If you must use toUpperCase or toLowerCase without the root locale, wrap the code block with

[error] // scalastyle:off caselocale

[error] .toUpperCase

[error] .toLowerCase

[error] // scalastyle:on caselocale

```

This PR excludes the cases above in the SQL code path for externally supplied names such as table names and column names.

For test suites, or when it is clear there is no locale problem such as the Turkish locale problem, it uses `Locale.ROOT`.

One minor problem is that `UTF8String` has both `toLowerCase` and `toUpperCase` methods, and the new rule detects them as well. They are ignored.
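
The Turkish locale problem mentioned above can be reproduced with the JDK alone. The object and method names below are illustrative.

```scala
import java.util.Locale

object CaseLocaleSketch {
  private val turkish: Locale = Locale.forLanguageTag("tr")

  // Locale-sensitive: under Turkish rules "I" lowercases to dotless "ı" (U+0131).
  def lowerTurkish(s: String): String = s.toLowerCase(turkish)

  // Locale-independent: the same result on every machine.
  def lowerRoot(s: String): String = s.toLowerCase(Locale.ROOT)
}
```

A bare `toLowerCase` uses the JVM's default locale, so code comparing `"TITLE".toLowerCase` against `"title"` passes on most machines and fails on one configured for Turkish.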

## How was this patch tested?

Manually tested, and Jenkins tests.

Closes #22581 from HyukjinKwon/SPARK-25565.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Refactor OrcReadBenchmark to use main method.

Generate benchmark result:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "hive/test:runMain org.apache.spark.sql.hive.orc.OrcReadBenchmark"

```

## How was this patch tested?

manual tests

Closes #22580 from yucai/SPARK-25508.

Lead-authored-by: yucai <yyu1@ebay.com>

Co-authored-by: Yucai Yu <yucai.yu@foxmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

In the PR, I propose to extend implementation of existing method:

```

def pivot(pivotColumn: Column, values: Seq[Any]): RelationalGroupedDataset

```

to support values of the struct type. This allows pivoting by multiple columns combined by `struct`:

```

trainingSales

.groupBy($"sales.year")

.pivot(

pivotColumn = struct(lower($"sales.course"), $"training"),

values = Seq(

struct(lit("dotnet"), lit("Experts")),

struct(lit("java"), lit("Dummies")))

).agg(sum($"sales.earnings"))

```

## How was this patch tested?

Added a test for values specified via `struct` in Java and Scala.

Closes #22316 from MaxGekk/pivoting-by-multiple-columns2.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In this PR, I propose to extend the `schema_of_json()` function to accept JSON options, since they can affect schema inference. The purpose is to support the same options that `from_json` can use during schema inference.

## How was this patch tested?

Added SQL, Python and Scala tests (`JsonExpressionsSuite` and `JsonFunctionsSuite`) that check that JSON options are used.

Closes #22442 from MaxGekk/schema_of_json-options.

Authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR aims to prevent test slowdowns at `HiveExternalCatalogVersionsSuite` by using the latest Apache Spark 2.3.2 link because the Apache mirrors will remove the old Spark 2.3.1 binaries eventually. `HiveExternalCatalogVersionsSuite` will not fail because [SPARK-24813](https://issues.apache.org/jira/browse/SPARK-24813) implements a fallback logic. However, it will cause many trials and fallbacks in all builds over `branch-2.3/branch-2.4/master`. We had better fix this issue.

## How was this patch tested?

Pass the Jenkins with the updated version.

Closes #22587 from dongjoon-hyun/SPARK-25570.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Currently, in `ParquetFilters`, if one of the child predicates is not supported by Parquet, the entire predicate is thrown away. In fact, if the unsupported predicate is in a top-level `And` condition, or in a child reached before hitting a `Not` or `Or` condition, it can be safely removed.
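
The rule can be sketched on a toy predicate tree. The types and the `convert` function below are hypothetical and follow the conservative rule as worded above; they are not the `ParquetFilters` implementation.

```scala
object PushdownSketch {
  sealed trait Pred
  final case class Leaf(name: String, supported: Boolean) extends Pred
  final case class And(left: Pred, right: Pred) extends Pred
  final case class Or(left: Pred, right: Pred) extends Pred
  final case class Not(child: Pred) extends Pred

  // Returns a predicate that can be pushed down, or None if nothing can be pushed.
  // `canDropConjunct` stays true only while we are under top-level Ands, that is,
  // before passing through an Or or a Not.
  def convert(p: Pred, canDropConjunct: Boolean = true): Option[Pred] = p match {
    case l @ Leaf(_, supported) => if (supported) Some(l) else None
    case And(l, r) =>
      (convert(l, canDropConjunct), convert(r, canDropConjunct)) match {
        case (Some(a), Some(b)) => Some(And(a, b))
        case (Some(a), None) if canDropConjunct => Some(a)
        case (None, Some(b)) if canDropConjunct => Some(b)
        case _ => None
      }
    case Or(l, r) =>
      for {
        a <- convert(l, canDropConjunct = false)
        b <- convert(r, canDropConjunct = false)
      } yield Or(a, b)
    case Not(c) => convert(c, canDropConjunct = false).map(Not(_))
  }
}
```

Dropping a conjunct only weakens the pushed filter, so the data source may return extra rows but never loses matching ones; under a `Not`, dropping a conjunct would instead strengthen the filter, which is why it is disallowed there.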

## How was this patch tested?

Tests are added.

Closes #22574 from dbtsai/removeUnsupportedPredicatesInParquet.

Lead-authored-by: DB Tsai <d_tsai@apple.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Co-authored-by: DB Tsai <dbtsai@dbtsai.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Use `Set` instead of `Array` to improve `accumulatorIds.contains(acc.id)` performance.

This PR closes https://github.com/apache/spark/pull/22420

## How was this patch tested?

manual tests.

Benchmark code:

```scala

def benchmark(func: () => Unit): Long = {

val start = System.currentTimeMillis()

func()

val end = System.currentTimeMillis()

end - start

}

val range = Range(1, 1000000)

val set = range.toSet

val array = range.toArray

for (i <- 0 until 5) {

val setExecutionTime =

benchmark(() => for (i <- 0 until 500) { set.contains(scala.util.Random.nextInt()) })

val arrayExecutionTime =

benchmark(() => for (i <- 0 until 500) { array.contains(scala.util.Random.nextInt()) })

println(s"set execution time: $setExecutionTime, array execution time: $arrayExecutionTime")

}

```

Benchmark result:

```

set execution time: 4, array execution time: 2760

set execution time: 1, array execution time: 1911

set execution time: 3, array execution time: 2043

set execution time: 12, array execution time: 2214

set execution time: 6, array execution time: 1770

```

Closes #22579 from wangyum/SPARK-25429.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

**Description from the JIRA :**

Currently, to collect the statistics of all the columns, users need to specify the names of all the columns when calling the command "ANALYZE TABLE ... FOR COLUMNS...". This is not user friendly. Instead, we can introduce the following SQL command to achieve it without specifying the column names.

```

ANALYZE TABLE [db_name.]tablename COMPUTE STATISTICS FOR ALL COLUMNS;

```

## How was this patch tested?

Added new tests in SparkSqlParserSuite and StatisticsSuite

Closes #22566 from dilipbiswal/SPARK-25458.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

The grouping columns from a Pivot query are inferred as "input columns - pivot columns - pivot aggregate columns", where input columns are the output of the child relation of Pivot. The grouping columns will be the leading columns in the pivot output and they should preserve the same order as specified by the input. For example,

```

SELECT * FROM (

SELECT course, earnings, "a" as a, "z" as z, "b" as b, "y" as y, "c" as c, "x" as x, "d" as d, "w" as w

FROM courseSales

)

PIVOT (

sum(earnings)

FOR course IN ('dotNET', 'Java')

)

```

The output columns should be "a, z, b, y, c, x, d, w, ..." but now it is "a, b, c, d, w, x, y, z, ..."

The fix is to use the child plan's `output` instead of `outputSet` so that the order can be preserved.
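
The ordering issue can be sketched with plain column names. The helper names are illustrative, and the sort in the second variant is an assumption that mimics a set being turned back into a sequence in some deterministic but input-independent order.

```scala
object GroupingOrderSketch {
  // Keeps the child's column order: filtering a Seq is order-preserving.
  def groupingFromOutput(output: Seq[String], excluded: Set[String]): Seq[String] =
    output.filterNot(excluded.contains)

  // Goes through a Set first: the input order is lost, and the result is
  // re-sorted (here by name) to get a deterministic order.
  def groupingFromOutputSet(output: Seq[String], excluded: Set[String]): Seq[String] =
    (output.toSet -- excluded).toSeq.sorted
}
```

Applied to the columns of the query above with `course` and `earnings` excluded, the first variant yields `a, z, b, y, c, x, d, w` and the second yields `a, b, c, d, w, x, y, z`.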

## How was this patch tested?

Added UT.

Closes #22519 from maryannxue/spark-25505.

Authored-by: maryannxue <maryannxue@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

`show create table` shows a lot of generated attributes for views that were created by older Spark versions. This PR basically reverts https://issues.apache.org/jira/browse/SPARK-19272, so that `DESC [FORMATTED|EXTENDED] view` shows the original view DDL text.

## How was this patch tested?

Unit test.

Closes #22458 from zheyuan28/testbranch.

Lead-authored-by: Chris Zhao <chris.zhao@databricks.com>

Co-authored-by: Christopher Zhao <chris.zhao@databricks.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

There are 2 places we check for problematic `InSubquery`: the rule `ResolveSubquery` and `InSubquery.checkInputDataTypes`. We should unify them.

## How was this patch tested?

existing tests

Closes #22563 from cloud-fan/followup.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

As per the discussion in https://github.com/apache/spark/pull/22553#pullrequestreview-159192221, overriding `filterKeys` violates the documented semantics.

This PR removes the override and adds documentation.

It also fixes one potentially non-serializable map in `FileStreamOptions`.

The only remaining call of `CaseInsensitiveMap`'s `filterKeys` is

c3c45cbd76/sql/hive/src/main/scala/org/apache/spark/sql/hive/execution/HiveOptions.scala (L88-L90)

But this one is OK.

## How was this patch tested?

Existing unit tests.

Closes #22562 from gengliangwang/SPARK-25541-FOLLOWUP.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The PR removes the `InSubquery` expression which was introduced a long time ago and its only usage was removed in 4ce970d714. Hence it is not used anymore.

## How was this patch tested?

existing UTs

Closes #22556 from mgaido91/minor_insubq.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Thanks to bahchis for reporting this. This is a follow-up to #16581: this PR fixes the scenario of a Python UDF accessing attributes from both sides of a join in the join condition.

## How was this patch tested?

Add regression tests in PySpark and `BatchEvalPythonExecSuite`.

Closes #22326 from xuanyuanking/SPARK-25314.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In `ElementAt`, when the first argument is a `MapType`, we should coerce the key type and the second argument based on `findTightestCommonType`. This is not happening currently. We may produce wrong output because we incorrectly downcast the right-hand-side double expression to int.

```SQL
spark-sql> select element_at(map(1,"one", 2, "two"), 2.2);
two
```

Also, when the first argument is ArrayType, the second argument should be an integer type or a smaller integral type that can be safely cast to an integer type. Currently we may do an unsafe cast. In the following case, we should fail with an error because 1.24 is not an integer index. Instead, we currently downcast it to int and return a result.

```SQL
spark-sql> select element_at(array(1,2), 1.24D);
1
```

This PR also supports implicit casts between two MapTypes. It follows the same logic that exists today for implicit casts between two array types.
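The intended lookup behavior can be sketched with plain Scala collections. This is an illustration only, not Spark's implementation: `ElementAtSketch` and its methods are hypothetical, the map case hard-codes `Int` keys widened to `Double`, and the array case ignores negative indices.

```scala
object ElementAtSketch {
  // Map lookup: widen the Int keys to Double (the common type of Int and Double)
  // instead of narrowing the Double argument to Int.
  def mapElementAt(m: Map[Int, String], key: Double): Option[String] =
    m.map { case (k, v) => k.toDouble -> v }.get(key)

  // Array lookup: 1-based, and the index parameter is an Int, so a fractional
  // index such as 1.24 is rejected by the type checker rather than truncated.
  def arrayElementAt[T](xs: Seq[T], index: Int): Option[T] =
    if (index >= 1 && index <= xs.length) Some(xs(index - 1)) else None
}
```

With widened keys, looking up `2.2` finds nothing, whereas truncating `2.2` to `2` would wrongly return `"two"`.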

## How was this patch tested?

Added new tests in DataFrameFunctionSuite, TypeCoercionSuite.

Closes #22544 from dilipbiswal/SPARK-25522.

Authored-by: Dilip Biswal <dbiswal@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

We have an agreement that the behavior of `from/to_utc_timestamp` is correct, although the function itself doesn't make much sense in Spark: https://issues.apache.org/jira/browse/SPARK-23715

This PR improves the documentation.
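As a rough illustration of the wall-clock shift these functions perform, here is a sketch built on `java.time`. It is not Spark's implementation; `UtcShiftSketch`, `fromUtc` and `toUtc` are hypothetical names, and the input is modeled as a zone-less `LocalDateTime`.

```scala
import java.time.{LocalDateTime, ZoneId, ZoneOffset}

object UtcShiftSketch {
  // Treat `ts` as a UTC wall-clock time and return the wall-clock time in `zone`.
  def fromUtc(ts: LocalDateTime, zone: String): LocalDateTime =
    ts.atZone(ZoneOffset.UTC).withZoneSameInstant(ZoneId.of(zone)).toLocalDateTime

  // Treat `ts` as a wall-clock time in `zone` and return the UTC wall-clock time.
  def toUtc(ts: LocalDateTime, zone: String): LocalDateTime =
    ts.atZone(ZoneId.of(zone)).withZoneSameInstant(ZoneOffset.UTC).toLocalDateTime
}
```

For example, midnight on 2016-08-31 shifted from UTC to `Asia/Seoul` becomes 09:00 on the same day.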

## How was this patch tested?

N/A

Closes #22543 from cloud-fan/doc.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Refactor `UnsafeProjectionBenchmark` to use main method.

Generate benchmark result:

```sh
SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "catalyst/test:runMain org.apache.spark.sql.UnsafeProjectionBenchmark"
```
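The general shape of a benchmark driven by a main method can be sketched as follows. This is a simplified illustration and not Spark's benchmark base class: `ToyBenchmark`, `runBenchmarkSuite` and the output file name are hypothetical, and only the `SPARK_GENERATE_BENCHMARK_FILES` variable is taken from the command above.

```scala
import java.io.{FileOutputStream, PrintStream}

object ToyBenchmark {
  // The measured workload; results are written to whichever stream is passed in.
  def runBenchmarkSuite(out: PrintStream): Unit = {
    val n = 1000000
    val start = System.nanoTime()
    var sum = 0L
    var i = 0
    while (i < n) { sum += i; i += 1 }
    val elapsedMs = (System.nanoTime() - start) / 1e6
    out.println(f"sum of 0 until $n took $elapsedMs%.3f ms (result=$sum)")
  }

  def main(args: Array[String]): Unit = {
    // Assumption: write a results file only when the variable is set to "1",
    // otherwise print to the console.
    val generateFiles = sys.env.get("SPARK_GENERATE_BENCHMARK_FILES").contains("1")
    val out =
      if (generateFiles) new PrintStream(new FileOutputStream("ToyBenchmark-results.txt"))
      else System.out
    try runBenchmarkSuite(out) finally if (generateFiles) out.close()
  }
}
```

Keeping the workload in a method that takes the output stream makes it easy to run the same benchmark from a main method, a test, or a script.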

## How was this patch tested?

manual test

Closes #22493 from yucai/SPARK-25485.

Lead-authored-by: yucai <yyu1@ebay.com>

Co-authored-by: Yucai Yu <yucai.yu@foxmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Refactor `ColumnarBatchBenchmark` to use main method.

Generate benchmark result:

```sh
SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.vectorized.ColumnarBatchBenchmark"
```

## How was this patch tested?

manual tests

Closes #22490 from yucai/SPARK-25481.

Lead-authored-by: yucai <yyu1@ebay.com>

Co-authored-by: Yucai Yu <yucai.yu@foxmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

https://github.com/apache/spark/pull/20023 proposed to allow precision loss during decimal operations, to reduce the possibility of overflow. This is a behavior change and is protected by the DECIMAL_OPERATIONS_ALLOW_PREC_LOSS config. However, that PR introduced another behavior change: picking a minimum precision for integral literals, which is not protected by a config. This PR adds a new config for it: `spark.sql.literal.pickMinimumPrecision`.

This allows users to work around the issue in SPARK-25454, which is caused by a long-standing bug with negative scale.
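To make "minimum precision for integral literals" concrete, here is a minimal sketch. It is not Spark's code: `LiteralPrecisionSketch` and `minimumPrecision` are hypothetical, and the sketch only computes how many decimal digits a given integral value needs at scale 0.

```scala
object LiteralPrecisionSketch {
  // Smallest decimal precision (number of digits) that can hold the value at scale 0.
  // The sign does not count towards the precision.
  def minimumPrecision(value: Long): Int =
    java.math.BigDecimal.valueOf(value).precision()
}
```

Under this reading, the literal `2` needs a precision of 1 and `123` needs 3, instead of a fixed precision wide enough for every value of the literal's integral type.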

## How was this patch tested?

a new test

Closes #22494 from cloud-fan/decimal.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Describe the `spark.sql.parquet.writeLegacyFormat` property in the Spark SQL, DataFrames and Datasets Guide.

## How was this patch tested?

N/A

Closes #22453 from seancxmao/SPARK-20937.

Authored-by: seancxmao <seancxmao@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR contains 3 optimizations:

1) It significantly improves the `--` operation on `AttributeSet`. As a benchmark for the `--` operation, the following code was run:

```scala
test("AttributeSet -- benchmark") {
  val attrSetA = AttributeSet((1 to 100).map { i => AttributeReference(s"c$i", IntegerType)() })
  val attrSetB = AttributeSet(attrSetA.take(80).toSeq)
  val attrSetC = AttributeSet((1 to 100).map { i => AttributeReference(s"c2_$i", IntegerType)() })
  val attrSetD = AttributeSet((attrSetA.take(50) ++ attrSetC.take(50)).toSeq)
  val attrSetE = AttributeSet((attrSetC.take(50) ++ attrSetA.take(50)).toSeq)
  val n_iter = 1000000
  val t0 = System.nanoTime()
  (1 to n_iter) foreach { _ =>
    val r1 = attrSetA -- attrSetB
    val r2 = attrSetA -- attrSetC
    val r3 = attrSetA -- attrSetD
    val r4 = attrSetA -- attrSetE
  }
  val t1 = System.nanoTime()
  val totalTime = t1 - t0
  // System.nanoTime() deltas are in nanoseconds, so the value printed below is
  // nanoseconds per iteration despite the "us" label.
  println(s"Average time: ${totalTime / n_iter} us")
}
```

The results are:

```
Before PR - Average time: 67674 us (100 %)
After PR  - Average time: 28827 us (42.6 %)
```

2) In `ColumnPruning`, it replaces the occurrences of `(attributeSet1 -- attributeSet2).nonEmpty` with `!attributeSet1.subsetOf(attributeSet2)`, which is orders of magnitude more efficient (especially when there are many attributes). Running the previous benchmark with `--` replaced by `subsetOf` returns:

```
Average time: 67 us (0.1 %)
```

3) It provides a more efficient way of building `AttributeSet`s, which can greatly improve the performance of the `references` and `outputSet` methods of `Expression` and `QueryPlan`. This avoids unneeded operations (e.g. creating many `AttributeEqual` wrapper objects).
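The rewrite in item 2 rests on a simple set identity: a difference is non-empty exactly when the left set is not a subset of the right one. The sketch below shows this with plain Scala `Set`s instead of `AttributeSet`; the object and method names are hypothetical.

```scala
object SubsetCheckSketch {
  // Builds the whole difference set just to test whether it is empty.
  def hasExtraViaDiff[A](a: Set[A], b: Set[A]): Boolean = (a -- b).nonEmpty

  // Same answer without allocating a new set.
  def hasExtraViaSubset[A](a: Set[A], b: Set[A]): Boolean = !a.subsetOf(b)
}
```

The subset check does not need to build an intermediate set, which is a likely reason for the large gap in the measurements above.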

The overall effect of those optimizations has been tested on `ColumnPruning` with the following benchmark:

```scala
test("ColumnPruning benchmark") {
  val attrSetA = (1 to 100).map { i => AttributeReference(s"c$i", IntegerType)() }
  val attrSetB = attrSetA.take(80)
  val attrSetC = attrSetA.take(20).map(a => Alias(Add(a, Literal(1)), s"${a.name}_1")())
  val input = LocalRelation(attrSetA)
  val query1 = Project(attrSetB, Project(attrSetA, input)).analyze
  val query2 = Project(attrSetC, Project(attrSetA, input)).analyze
  val query3 = Project(attrSetA, Project(attrSetA, input)).analyze
  val nIter = 100000
  val t0 = System.nanoTime()
  (1 to nIter).foreach { _ =>
    ColumnPruning(query1)
    ColumnPruning(query2)
    ColumnPruning(query3)
  }
  val t1 = System.nanoTime()
  val totalTime = t1 - t0
  // System.nanoTime() deltas are in nanoseconds, so the value printed below is
  // nanoseconds per iteration despite the "us" label.
  println(s"Average time: ${totalTime / nIter} us")
}
```

The output of the test is:

```
Before PR - Average time: 733471 us (100 %)
After PR  - Average time: 362455 us (49.4 %)
```
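For reference, a small timing helper that reports a per-iteration average with an explicit unit conversion might look like the sketch below. `TimingSketch` and `averageMicros` are hypothetical names, and this is not the harness used for the numbers above.

```scala
object TimingSketch {
  // Runs `body` `iterations` times and returns the average time per iteration
  // in microseconds (System.nanoTime() reports nanoseconds, hence the / 1000.0).
  def averageMicros(iterations: Int)(body: => Unit): Double = {
    require(iterations > 0, "iterations must be positive")
    val start = System.nanoTime()
    var i = 0
    while (i < iterations) { body; i += 1 }
    val elapsedNanos = System.nanoTime() - start
    elapsedNanos / 1000.0 / iterations
  }
}
```

A call such as `TimingSketch.averageMicros(100000) { ColumnPruning(query1) }` would then yield a value that can be labeled in microseconds directly.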

The performance improvement has also been evaluated on the `SQLQueryTestSuite`'s queries:

```
(before) org.apache.spark.sql.catalyst.optimizer.ColumnPruning 518413198 / 1377707172 2756 / 15717
(after)  org.apache.spark.sql.catalyst.optimizer.ColumnPruning 415432579 / 1121147950 2756 / 15717
% Running time 80.1% / 81.3%
```

Other rules also benefit, especially from (3), although the impact is lower, e.g.:

```
(before) org.apache.spark.sql.catalyst.analysis.Analyzer$ResolveReferences 307341442 / 623436806 2154 / 16480
(after)  org.apache.spark.sql.catalyst.analysis.Analyzer$ResolveReferences 290511312 / 560962495 2154 / 16480
% Running time 94.5% / 90.0%
```

The reason why the impact on the `SQLQueryTestSuite`'s queries is lower compared to the other benchmark is that the optimizations are more significant when the number of attributes involved is higher. Since in the tests we often have very few attributes, the effect there is lower.

## How was this patch tested?

run benchmarks + existing UTs

Closes #22364 from mgaido91/SPARK-25379.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

`CaseInsensitiveMap` is declared as Serializable. However, it is not serializable after applying the `-` operator or the `filterKeys` method.

This PR fixes the issue by overriding the `-` operator and the `filterKeys` method, so that we can avoid a potential `NotSerializableException` when using `CaseInsensitiveMap`.
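The idea of keeping the result type stable after removal can be sketched with a toy wrapper. This is a simplified stand-in, not Spark's `CaseInsensitiveMap` (which extends `Map`): `ToyCaseInsensitiveMap` is hypothetical, and as a case class it is serializable by construction.

```scala
import java.util.Locale

// Hypothetical stand-in for illustration only.
final case class ToyCaseInsensitiveMap(original: Map[String, String]) {
  // Lower-cased copy of the keys, used for case-insensitive lookups.
  private val lowered: Map[String, String] =
    original.map { case (k, v) => k.toLowerCase(Locale.ROOT) -> v }

  def get(key: String): Option[String] = lowered.get(key.toLowerCase(Locale.ROOT))

  // Removal rebuilds a strict map and re-wraps it, so the result keeps the
  // wrapper type instead of degrading to a generic Map or a lazy view.
  def -(key: String): ToyCaseInsensitiveMap =
    ToyCaseInsensitiveMap(original.filter { case (k, _) => !k.equalsIgnoreCase(key) })

  // Same approach for key filtering: strict filter, then re-wrap.
  def filterKeys(p: String => Boolean): ToyCaseInsensitiveMap =
    ToyCaseInsensitiveMap(original.filter { case (k, _) => p(k) })
}
```

Because both operations return the wrapper itself, a caller that serializes the result never sees an intermediate collection type.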

## How was this patch tested?

New test suite.

Closes #22553 from gengliangwang/fixCaseInsensitiveMap.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Currently, Spark has 7 `withTempPath` and 6 `withSQLConf` functions. This PR aims to remove duplicated and inconsistent code and reduce them to the following meaningful implementations.

**withTempPath**

- `SQLHelper.withTempPath`: The one which was used in `SQLTestUtils`.

**withSQLConf**

- `SQLHelper.withSQLConf`: The one which was used in `PlanTest`.

- `ExecutorSideSQLConfSuite.withSQLConf`: The one which doesn't throw `AnalysisException` on StaticConf changes.

- `SQLTestUtils.withSQLConf`: The one which is intentionally overridden to set the active session.

```scala
protected override def withSQLConf(pairs: (String, String)*)(f: => Unit): Unit = {
  SparkSession.setActiveSession(spark)
  super.withSQLConf(pairs: _*)(f)
}
```
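The set-then-restore pattern behind a `withSQLConf`-style helper can be sketched without Spark. Everything here is hypothetical: `ToyConf` stands in for a session configuration, and `ConfHelperSketch.withConf` is an illustration of the pattern rather than the code in `SQLHelper`.

```scala
import scala.collection.mutable

// Hypothetical stand-in for a mutable session configuration.
final class ToyConf {
  private val settings = mutable.Map.empty[String, String]
  def get(key: String): Option[String] = settings.get(key)
  def set(key: String, value: String): Unit = settings(key) = value
  def unset(key: String): Unit = { settings.remove(key); () }
}

object ConfHelperSketch {
  // Applies the given settings, runs `f`, then restores the previous values
  // (or removes keys that were not set before), even if `f` throws.
  def withConf(conf: ToyConf)(pairs: (String, String)*)(f: => Unit): Unit = {
    val previous = pairs.map { case (k, _) => k -> conf.get(k) }
    pairs.foreach { case (k, v) => conf.set(k, v) }
    try f finally {
      previous.foreach {
        case (k, Some(old)) => conf.set(k, old)
        case (k, None) => conf.unset(k)
      }
    }
  }
}
```

Restoring in a `finally` block is what lets tests change settings freely without leaking them into later tests.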

## How was this patch tested?

Pass the Jenkins with the existing tests.

Closes #22548 from dongjoon-hyun/SPARK-25534.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>