## What changes were proposed in this pull request?

https://github.com/apache/spark/pull/7355 added support for casting between `IntervalType` and `StringType` in the Scala interface:

```scala
import org.apache.spark.sql.types._
import org.apache.spark.sql.catalyst.expressions._

Cast(Literal("interval 3 month 1 hours"), CalendarIntervalType).eval()
res0: Any = interval 3 months 1 hours
```

However, the SQL interface does not support it:

```scala
scala> spark.sql("SELECT CAST('interval 3 month 1 hour' AS interval)").show
org.apache.spark.sql.catalyst.parser.ParseException:
DataType interval is not supported.(line 1, pos 41)

== SQL ==
SELECT CAST('interval 3 month 1 hour' AS interval)
-----------------------------------------^^^

  at org.apache.spark.sql.catalyst.parser.AstBuilder.$anonfun$visitPrimitiveDataType$1(AstBuilder.scala:1931)
  at org.apache.spark.sql.catalyst.parser.ParserUtils$.withOrigin(ParserUtils.scala:108)
  at org.apache.spark.sql.catalyst.parser.AstBuilder.visitPrimitiveDataType(AstBuilder.scala:1909)
  at org.apache.spark.sql.catalyst.parser.AstBuilder.visitPrimitiveDataType(AstBuilder.scala:52)
  ...
```

This PR adds support for the `interval` keyword in the schema string, so that the SQL interface supports this cast as well.
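Conceptually, the change adds one more primitive type name to the set the parser accepts. The sketch below is a hypothetical, simplified resolver (`SimpleType`, `SchemaTypeNames.resolve` and the case objects are made up for illustration and are not Spark's `AstBuilder` API) showing `interval` moving from the "not supported" branch to a recognized type.

```scala
// Hypothetical, simplified model of resolving primitive type names in a
// schema string. This is NOT Spark's AstBuilder code.
sealed trait SimpleType
case object SimpleInt extends SimpleType
case object SimpleString extends SimpleType
case object SimpleInterval extends SimpleType

object SchemaTypeNames {
  /** Maps a (case-insensitive) type name to a type, or an error message. */
  def resolve(name: String): Either[String, SimpleType] =
    name.toLowerCase match {
      case "int" | "integer" => Right(SimpleInt)
      case "string"          => Right(SimpleString)
      // Without this case, "interval" would fall through to the error branch,
      // mirroring "DataType interval is not supported."
      case "interval"        => Right(SimpleInterval)
      case other             => Left(s"DataType $other is not supported.")
    }
}
```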

## How was this patch tested?

unit tests

Closes #25189 from wangyum/SPARK-28435.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR proposes to use `InheritableThreadLocal` instead of `ThreadLocal` for the current epoch in `EpochTracker`. Python UDFs use separate threads to write data to and read it from the Python processes; a plain `ThreadLocal` value is not visible in those new threads, so the previously set epoch was lost.

After this PR, Python UDFs can be used in Structured Streaming's continuous mode.
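The difference between the two classes can be seen in a small, self-contained JVM sketch (the object and field names are hypothetical; this is not `EpochTracker`'s code): a value set in the parent thread is visible in a newly created child thread only through `InheritableThreadLocal`.

```scala
object EpochInheritanceDemo {
  // Plain ThreadLocal: a value set in the parent is invisible in child threads.
  val plainEpoch = new ThreadLocal[java.lang.Long]
  // InheritableThreadLocal: a child thread receives a copy of the parent's
  // value at the moment the child Thread object is constructed.
  val inheritedEpoch = new InheritableThreadLocal[java.lang.Long]

  /** Creates a new thread and reads both thread-locals from inside it. */
  def readFromChild(): (Option[Long], Option[Long]) = {
    var seen: (Option[Long], Option[Long]) = (None, None)
    val child = new Thread(() => {
      seen = (Option(plainEpoch.get).map(_.longValue),
              Option(inheritedEpoch.get).map(_.longValue))
    })
    child.start()
    child.join() // join() makes the child's write to `seen` visible here
    seen
  }

  def main(args: Array[String]): Unit = {
    plainEpoch.set(42L)
    inheritedEpoch.set(42L)
    println(readFromChild()) // prints (None,Some(42))
  }
}
```

Because the value is copied when the child `Thread` is constructed, threads created before the epoch is set (for example, reused pool threads) would not see it.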

## How was this patch tested?

The test cases were written on top of https://github.com/apache/spark/pull/24945.

Unit tests were added.

Manual tests.

Closes #24946 from HyukjinKwon/SPARK-27234.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR adds some tests converted from 'pgSQL/select_implicit.sql' to test UDFs.

<details><summary>Diff comparing to 'pgSQL/select_implicit.sql'</summary>

<p>

```diff

... diff --git a/sql/core/src/test/resources/sql-tests/results/pgSQL/select_implicit.sql.out b/sql/core/src/test/resources/sql-tests/results/udf/pgSQL/udf-select_implicit.sql.out

index 0675820..e6a5995 100755

--- a/sql/core/src/test/resources/sql-tests/results/pgSQL/select_implicit.sql.out

+++ b/sql/core/src/test/resources/sql-tests/results/udf/pgSQL/udf-select_implicit.sql.out

@@ -91,9 +91,11 @@ struct<>

-- !query 11

-SELECT c, count(*) FROM test_missing_target GROUP BY test_missing_target.c ORDER BY c

+SELECT udf(c), udf(count(*)) FROM test_missing_target GROUP BY

+test_missing_target.c

+ORDER BY udf(c)

-- !query 11 schema

-struct<c:string,count(1):bigint>

+struct<CAST(udf(cast(c as string)) AS STRING):string,CAST(udf(cast(count(1) as string)) AS BIGINT):bigint>

-- !query 11 output

ABAB 2

BBBB 2

@@ -104,9 +106,10 @@ cccc 2

-- !query 12

-SELECT count(*) FROM test_missing_target GROUP BY test_missing_target.c ORDER BY c

+SELECT udf(count(*)) FROM test_missing_target GROUP BY test_missing_target.c

+ORDER BY udf(c)

-- !query 12 schema

-struct<count(1):bigint>

+struct<CAST(udf(cast(count(1) as string)) AS BIGINT):bigint>

-- !query 12 output

2

2

@@ -117,18 +120,18 @@ struct<count(1):bigint>

-- !query 13

-SELECT count(*) FROM test_missing_target GROUP BY a ORDER BY b

+SELECT udf(count(*)) FROM test_missing_target GROUP BY a ORDER BY udf(b)

-- !query 13 schema

struct<>

-- !query 13 output

org.apache.spark.sql.AnalysisException

-cannot resolve '`b`' given input columns: [count(1)]; line 1 pos 61

+cannot resolve '`b`' given input columns: [CAST(udf(cast(count(1) as string)) AS BIGINT)]; line 1 pos 70

-- !query 14

-SELECT count(*) FROM test_missing_target GROUP BY b ORDER BY b

+SELECT udf(count(*)) FROM test_missing_target GROUP BY b ORDER BY udf(b)

-- !query 14 schema

-struct<count(1):bigint>

+struct<CAST(udf(cast(count(1) as string)) AS BIGINT):bigint>

-- !query 14 output

1

2

@@ -137,10 +140,10 @@ struct<count(1):bigint>

-- !query 15

-SELECT test_missing_target.b, count(*)

- FROM test_missing_target GROUP BY b ORDER BY b

+SELECT udf(test_missing_target.b), udf(count(*))

+ FROM test_missing_target GROUP BY b ORDER BY udf(b)

-- !query 15 schema

-struct<b:int,count(1):bigint>

+struct<CAST(udf(cast(b as string)) AS INT):int,CAST(udf(cast(count(1) as string)) AS BIGINT):bigint>

-- !query 15 output

1 1

2 2

@@ -149,9 +152,9 @@ struct<b:int,count(1):bigint>

-- !query 16

-SELECT c FROM test_missing_target ORDER BY a

+SELECT udf(c) FROM test_missing_target ORDER BY udf(a)

-- !query 16 schema

-struct<c:string>

+struct<CAST(udf(cast(c as string)) AS STRING):string>

-- !query 16 output

XXXX

ABAB

@@ -166,9 +169,9 @@ CCCC

-- !query 17

-SELECT count(*) FROM test_missing_target GROUP BY b ORDER BY b desc

+SELECT udf(count(*)) FROM test_missing_target GROUP BY b ORDER BY udf(b) desc

-- !query 17 schema

-struct<count(1):bigint>

+struct<CAST(udf(cast(count(1) as string)) AS BIGINT):bigint>

-- !query 17 output

4

3

@@ -177,17 +180,17 @@ struct<count(1):bigint>

-- !query 18

-SELECT count(*) FROM test_missing_target ORDER BY 1 desc

+SELECT udf(count(*)) FROM test_missing_target ORDER BY udf(1) desc

-- !query 18 schema

-struct<count(1):bigint>

+struct<CAST(udf(cast(count(1) as string)) AS BIGINT):bigint>

-- !query 18 output

10

-- !query 19

-SELECT c, count(*) FROM test_missing_target GROUP BY 1 ORDER BY 1

+SELECT udf(c), udf(count(*)) FROM test_missing_target GROUP BY 1 ORDER BY 1

-- !query 19 schema

-struct<c:string,count(1):bigint>

+struct<CAST(udf(cast(c as string)) AS STRING):string,CAST(udf(cast(count(1) as string)) AS BIGINT):bigint>

-- !query 19 output

ABAB 2

BBBB 2

@@ -198,18 +201,18 @@ cccc 2

-- !query 20

-SELECT c, count(*) FROM test_missing_target GROUP BY 3

+SELECT udf(c), udf(count(*)) FROM test_missing_target GROUP BY 3

-- !query 20 schema

struct<>

-- !query 20 output

org.apache.spark.sql.AnalysisException

-GROUP BY position 3 is not in select list (valid range is [1, 2]); line 1 pos 53

+GROUP BY position 3 is not in select list (valid range is [1, 2]); line 1 pos 63

-- !query 21

-SELECT count(*) FROM test_missing_target x, test_missing_target y

- WHERE x.a = y.a

- GROUP BY b ORDER BY b

+SELECT udf(count(*)) FROM test_missing_target x, test_missing_target y

+ WHERE udf(x.a) = udf(y.a)

+ GROUP BY b ORDER BY udf(b)

-- !query 21 schema

struct<>

-- !query 21 output

@@ -218,10 +221,10 @@ Reference 'b' is ambiguous, could be: x.b, y.b.; line 3 pos 10

-- !query 22

-SELECT a, a FROM test_missing_target

- ORDER BY a

+SELECT udf(a), udf(a) FROM test_missing_target

+ ORDER BY udf(a)

-- !query 22 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int,CAST(udf(cast(a as string)) AS INT):int>

-- !query 22 output

0 0

1 1

@@ -236,10 +239,10 @@ struct<a:int,a:int>

-- !query 23

-SELECT a/2, a/2 FROM test_missing_target

- ORDER BY a/2

+SELECT udf(udf(a)/2), udf(udf(a)/2) FROM test_missing_target

+ ORDER BY udf(udf(a)/2)

-- !query 23 schema

-struct<(a div 2):int,(a div 2):int>

+struct<CAST(udf(cast((cast(udf(cast(a as string)) as int) div 2) as string)) AS INT):int,CAST(udf(cast((cast(udf(cast(a as string)) as int) div 2) as string)) AS INT):int>

-- !query 23 output

0 0

0 0

@@ -254,10 +257,10 @@ struct<(a div 2):int,(a div 2):int>

-- !query 24

-SELECT a/2, a/2 FROM test_missing_target

- GROUP BY a/2 ORDER BY a/2

+SELECT udf(a/2), udf(a/2) FROM test_missing_target

+ GROUP BY a/2 ORDER BY udf(a/2)

-- !query 24 schema

-struct<(a div 2):int,(a div 2):int>

+struct<CAST(udf(cast((a div 2) as string)) AS INT):int,CAST(udf(cast((a div 2) as string)) AS INT):int>

-- !query 24 output

0 0

1 1

@@ -267,11 +270,11 @@ struct<(a div 2):int,(a div 2):int>

-- !query 25

-SELECT x.b, count(*) FROM test_missing_target x, test_missing_target y

- WHERE x.a = y.a

- GROUP BY x.b ORDER BY x.b

+SELECT udf(x.b), udf(count(*)) FROM test_missing_target x, test_missing_target y

+ WHERE udf(x.a) = udf(y.a)

+ GROUP BY x.b ORDER BY udf(x.b)

-- !query 25 schema

-struct<b:int,count(1):bigint>

+struct<CAST(udf(cast(b as string)) AS INT):int,CAST(udf(cast(count(1) as string)) AS BIGINT):bigint>

-- !query 25 output

1 1

2 2

@@ -280,11 +283,11 @@ struct<b:int,count(1):bigint>

-- !query 26

-SELECT count(*) FROM test_missing_target x, test_missing_target y

- WHERE x.a = y.a

- GROUP BY x.b ORDER BY x.b

+SELECT udf(count(*)) FROM test_missing_target x, test_missing_target y

+ WHERE udf(x.a) = udf(y.a)

+ GROUP BY x.b ORDER BY udf(x.b)

-- !query 26 schema

-struct<count(1):bigint>

+struct<CAST(udf(cast(count(1) as string)) AS BIGINT):bigint>

-- !query 26 output

1

2

@@ -293,22 +296,22 @@ struct<count(1):bigint>

-- !query 27

-SELECT a%2, count(b) FROM test_missing_target

+SELECT a%2, udf(count(udf(b))) FROM test_missing_target

GROUP BY test_missing_target.a%2

-ORDER BY test_missing_target.a%2

+ORDER BY udf(test_missing_target.a%2)

-- !query 27 schema

-struct<(a % 2):int,count(b):bigint>

+struct<(a % 2):int,CAST(udf(cast(count(cast(udf(cast(b as string)) as int)) as string)) AS BIGINT):bigint>

-- !query 27 output

0 5

1 5

-- !query 28

-SELECT count(c) FROM test_missing_target

+SELECT udf(count(c)) FROM test_missing_target

GROUP BY lower(test_missing_target.c)

-ORDER BY lower(test_missing_target.c)

+ORDER BY udf(lower(test_missing_target.c))

-- !query 28 schema

-struct<count(c):bigint>

+struct<CAST(udf(cast(count(c) as string)) AS BIGINT):bigint>

-- !query 28 output

2

3

@@ -317,18 +320,18 @@ struct<count(c):bigint>

-- !query 29

-SELECT count(a) FROM test_missing_target GROUP BY a ORDER BY b

+SELECT udf(count(udf(a))) FROM test_missing_target GROUP BY a ORDER BY udf(b)

-- !query 29 schema

struct<>

-- !query 29 output

org.apache.spark.sql.AnalysisException

-cannot resolve '`b`' given input columns: [count(a)]; line 1 pos 61

+cannot resolve '`b`' given input columns: [CAST(udf(cast(count(cast(udf(cast(a as string)) as int)) as string)) AS BIGINT)]; line 1 pos 75

-- !query 30

-SELECT count(b) FROM test_missing_target GROUP BY b/2 ORDER BY b/2

+SELECT udf(count(b)) FROM test_missing_target GROUP BY b/2 ORDER BY udf(b/2)

-- !query 30 schema

-struct<count(b):bigint>

+struct<CAST(udf(cast(count(b) as string)) AS BIGINT):bigint>

-- !query 30 output

1

5

@@ -336,10 +339,10 @@ struct<count(b):bigint>

-- !query 31

-SELECT lower(test_missing_target.c), count(c)

- FROM test_missing_target GROUP BY lower(c) ORDER BY lower(c)

+SELECT udf(lower(test_missing_target.c)), udf(count(udf(c)))

+ FROM test_missing_target GROUP BY lower(c) ORDER BY udf(lower(c))

-- !query 31 schema

-struct<lower(c):string,count(c):bigint>

+struct<CAST(udf(cast(lower(c) as string)) AS STRING):string,CAST(udf(cast(count(cast(udf(cast(c as string)) as string)) as string)) AS BIGINT):bigint>

-- !query 31 output

abab 2

bbbb 3

@@ -348,9 +351,9 @@ xxxx 1

-- !query 32

-SELECT a FROM test_missing_target ORDER BY upper(d)

+SELECT udf(a) FROM test_missing_target ORDER BY udf(upper(udf(d)))

-- !query 32 schema

-struct<a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int>

-- !query 32 output

0

1

@@ -365,19 +368,19 @@ struct<a:int>

-- !query 33

-SELECT count(b) FROM test_missing_target

- GROUP BY (b + 1) / 2 ORDER BY (b + 1) / 2 desc

+SELECT udf(count(b)) FROM test_missing_target

+ GROUP BY (b + 1) / 2 ORDER BY udf((b + 1) / 2) desc

-- !query 33 schema

-struct<count(b):bigint>

+struct<CAST(udf(cast(count(b) as string)) AS BIGINT):bigint>

-- !query 33 output

7

3

-- !query 34

-SELECT count(x.a) FROM test_missing_target x, test_missing_target y

- WHERE x.a = y.a

- GROUP BY b/2 ORDER BY b/2

+SELECT udf(count(udf(x.a))) FROM test_missing_target x, test_missing_target y

+ WHERE udf(x.a) = udf(y.a)

+ GROUP BY b/2 ORDER BY udf(b/2)

-- !query 34 schema

struct<>

-- !query 34 output

@@ -386,11 +389,12 @@ Reference 'b' is ambiguous, could be: x.b, y.b.; line 3 pos 10

-- !query 35

-SELECT x.b/2, count(x.b) FROM test_missing_target x, test_missing_target y

- WHERE x.a = y.a

- GROUP BY x.b/2 ORDER BY x.b/2

+SELECT udf(x.b/2), udf(count(udf(x.b))) FROM test_missing_target x,

+test_missing_target y

+ WHERE udf(x.a) = udf(y.a)

+ GROUP BY x.b/2 ORDER BY udf(x.b/2)

-- !query 35 schema

-struct<(b div 2):int,count(b):bigint>

+struct<CAST(udf(cast((b div 2) as string)) AS INT):int,CAST(udf(cast(count(cast(udf(cast(b as string)) as int)) as string)) AS BIGINT):bigint>

-- !query 35 output

0 1

1 5

@@ -398,14 +402,14 @@ struct<(b div 2):int,count(b):bigint>

-- !query 36

-SELECT count(b) FROM test_missing_target x, test_missing_target y

- WHERE x.a = y.a

+SELECT udf(count(udf(b))) FROM test_missing_target x, test_missing_target y

+ WHERE udf(x.a) = udf(y.a)

GROUP BY x.b/2

-- !query 36 schema

struct<>

-- !query 36 output

org.apache.spark.sql.AnalysisException

-Reference 'b' is ambiguous, could be: x.b, y.b.; line 1 pos 13

+Reference 'b' is ambiguous, could be: x.b, y.b.; line 1 pos 21

-- !query 37

```

</p>

</details>
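The schema strings in the diff, such as `CAST(udf(cast(c as string)) AS STRING)`, indicate that the test UDF receives its argument as a string and that its result is cast back to the original type. Assuming that UDF is an identity function (consistent with the unchanged query outputs in the diff), the round trip can be modeled as below; `StringRoundTripUdf` is hypothetical, and `None` stands in for SQL `NULL`.

```scala
object StringRoundTripUdf {
  // Hypothetical identity UDF that operates on strings.
  def identityUdf(s: String): String = s

  /** Models CAST(udf(cast(x as string)) AS T): to string, through the UDF, back to T. */
  def roundTrip[A](value: Option[A])(parse: String => A): Option[A] =
    value.map(_.toString).map(identityUdf).map(parse)
}
```

Under this assumption, wrapping a column in `udf(...)` leaves its values unchanged; only the generated column names and the error positions differ, as the diff shows.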

## How was this patch tested?

Tested as guided in SPARK-27921.

Closes #25233 from Udbhav30/master.

Authored-by: Udbhav30 <u.agrawal30@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Query plans were designed to be immutable, but because of the complexity of the SQL system we sometimes allow them to carry mutable state. One example is `TreeNodeTag`: it is state attached to a `TreeNode` and can be carried over during copy and transform. The adaptive execution framework relies on it to link the logical and physical plans.

This leads to a problem: the plan returned by `QueryExecution#analyzed` can be changed unexpectedly later, because it is mutable. I hit a real issue in https://github.com/apache/spark/pull/25107: I use `TreeNodeTag` to carry the dataset id in logical plans. However, the analyzed plan ends up with duplicated dataset id tags on many different nodes. It turns out that the optimizer transforms the logical plan and adds the tag to more nodes.

For example, the logical plan is `SubqueryAlias(Filter(...))`, and I expect only the `SubqueryAlias` to have the dataset id tag. However, the optimizer removes `SubqueryAlias` and carries the dataset id tag over to `Filter`. When I go back to the analyzed plan, both `SubqueryAlias` and `Filter` have the dataset id tag, which breaks my assumption.

Since query plans are now effectively mutable, I think it's better to limit the life cycle of a query plan instance. We can clone the query plan between the analyzer, optimizer and planner, so that each instance's life cycle is limited to one stage.
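A toy model of why cloning between stages helps is sketched below. `Node`, `deepClone` and `removeSubqueryAlias` are hypothetical stand-ins, not Spark's `TreeNode` or optimizer rules: the rule copies the parent's tags onto the child it keeps, mutating that child in place, so running it directly on the analyzed plan leaks the tag back into it, while running it on a deep clone leaves the analyzed plan untouched.

```scala
import scala.collection.mutable

// Hypothetical plan node with mutable tags, loosely mirroring TreeNodeTag.
final class Node(val name: String, val children: Seq[Node]) {
  val tags: mutable.Map[String, String] = mutable.Map.empty

  /** Deep copy: new nodes all the way down, each with its own tag map. */
  def deepClone(): Node = {
    val copy = new Node(name, children.map(_.deepClone()))
    copy.tags ++= tags
    copy
  }
}

object ToyOptimizer {
  /** Drops a SubqueryAlias and carries its tags over to the (mutated) child. */
  def removeSubqueryAlias(plan: Node): Node =
    if (plan.name == "SubqueryAlias" && plan.children.size == 1) {
      val child = plan.children.head
      child.tags ++= plan.tags
      child
    } else plan
}
```

For example, with `analyzed = SubqueryAlias(Filter)` tagged on the alias only, `ToyOptimizer.removeSubqueryAlias(analyzed.deepClone())` leaves the original `Filter` untagged, whereas calling it on `analyzed` itself tags the original `Filter`.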

## How was this patch tested?

new test

Closes #25111 from cloud-fan/clone.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This PR changes `CalendarIntervalType`'s readable string representation from `calendarinterval` to `interval`.

## How was this patch tested?

Existing UT

Closes #25225 from wangyum/SPARK-28469.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Fix the CSV datasource so that it no longer throws `com.univocity.parsers.common.TextParsingException` with an oversized message, which can make log output consume a large amount of disk space.

This is troublesome when we need to parse CSV files with very large columns.

This PR proposes to call `setErrorContentLength(1000)` on the CSV parser/writer settings to limit the length of the error message content.
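The effect of limiting the error content can be sketched with a hypothetical helper. `ErrorContent.restrict` is made up for illustration, and univocity's own truncation logic may differ in details; here it keeps the last `maxLength` characters prefixed with `...`, which matches the shape of the message shown under **After:**.

```scala
object ErrorContent {
  /** Hypothetical helper: caps the parsed content embedded in an error message. */
  def restrict(content: String, maxLength: Int): String = {
    require(maxLength >= 0, "maxLength must be non-negative")
    if (content.length <= maxLength) content
    // Keep the tail, i.e. the content closest to where parsing failed.
    else "..." + content.takeRight(maxLength)
  }
}
```

With a 30-million-character column, the message then carries at most `maxLength` characters of content plus the `...` marker, instead of the whole column.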

## How was this patch tested?

Manually.

```scala
val s = "a" * 40 * 1000000
Seq(s).toDF.write.mode("overwrite").csv("/tmp/bogdan/es4196.csv")

spark.read
  .option("maxCharsPerColumn", 30000000)
  .csv("/tmp/bogdan/es4196.csv")
  .count
```

**Before:**

The thrown message includes about 30MB of error content: the column size exceeds the 30MB maximum, so the error content contains the whole parsed content.

**After:**

The thrown message includes error content like "...aaa...aa" (1024 'a' characters), i.e. the content size is limited to 1024.

Closes #25184 from WeichenXu123/limit_csv_exception_size.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

The new function `make_date()` takes three columns, `year`, `month` and `day`, and produces a new column of the `DATE` type. If values in the input columns are `null` or out of their valid ranges, the function returns `null`. Valid ranges are:

- `year` - `[1, 9999]`

- `month` - `[1, 12]`

- `day` - `[1, 31]`

The constructed date must also be valid; otherwise `make_date` returns `null`.

The function is implemented similarly to `make_date` in PostgreSQL (https://www.postgresql.org/docs/11/functions-datetime.html) to maintain feature parity with it.

Here is an example:

```sql
select make_date(2013, 7, 15);
-- 2013-07-15
```
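The `null` and range semantics described above can be sketched in Scala with `java.time.LocalDate`. This is an illustration of the rules, not Spark's implementation: `None` stands in for SQL `NULL`, and an invalid calendar date such as February 30 is caught through the `DateTimeException` that `LocalDate.of` throws.

```scala
import java.time.LocalDate
import scala.util.Try

object MakeDateSketch {
  /** Returns None for null inputs, out-of-range fields, or invalid dates. */
  def makeDate(year: Option[Int], month: Option[Int], day: Option[Int]): Option[LocalDate] =
    for {
      y    <- year if y >= 1 && y <= 9999
      m    <- month if m >= 1 && m <= 12
      d    <- day if d >= 1 && d <= 31
      // The field ranges alone do not rule out e.g. 2019-02-30.
      date <- Try(LocalDate.of(y, m, d)).toOption
    } yield date
}
```

For example, `makeDate(Some(2013), Some(7), Some(15))` yields `Some(2013-07-15)`, while `makeDate(Some(2019), Some(2), Some(30))` yields `None`.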

## How was this patch tested?

Added new tests to `DateExpressionsSuite`.

Closes #25210 from MaxGekk/make_date-timestamp.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR adds some tests converted from `group-by.sql` to test UDFs. Please see the contribution guide in the umbrella ticket [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

<details><summary>Diff comparing to 'group-by.sql'</summary>

<p>

```diff

diff --git a/sql/core/src/test/resources/sql-tests/results/udf/udf-group-by.sql.out b/sql/core/src/test/resources/sql-tests/results/udf/udf-group-by.sql.out

index 3a5df254f2..0118c05b1d 100644

--- a/sql/core/src/test/resources/sql-tests/results/udf/udf-group-by.sql.out

+++ b/sql/core/src/test/resources/sql-tests/results/udf/udf-group-by.sql.out

@@ -13,26 +13,26 @@ struct<>

-- !query 1

-SELECT a, COUNT(b) FROM testData

+SELECT udf(a), udf(COUNT(b)) FROM testData

-- !query 1 schema

struct<>

-- !query 1 output

org.apache.spark.sql.AnalysisException

-grouping expressions sequence is empty, and 'testdata.`a`' is not an aggregate function. Wrap '(count(testdata.`b`) AS `count(b)`)' in windowing function(s) or wrap 'testdata.`a`' in first() (or first_value) if you don't care which value you get.;

+grouping expressions sequence is empty, and 'testdata.`a`' is not an aggregate function. Wrap '(CAST(udf(cast(count(b) as string)) AS BIGINT) AS `CAST(udf(cast(count(b) as string)) AS BIGINT)`)' in windowing function(s) or wrap 'testdata.`a`' in first() (or first_value) if you don't care which value you get.;

-- !query 2

-SELECT COUNT(a), COUNT(b) FROM testData

+SELECT COUNT(udf(a)), udf(COUNT(b)) FROM testData

-- !query 2 schema

-struct<count(a):bigint,count(b):bigint>

+struct<count(CAST(udf(cast(a as string)) AS INT)):bigint,CAST(udf(cast(count(b) as string)) AS BIGINT):bigint>

-- !query 2 output

7 7

-- !query 3

-SELECT a, COUNT(b) FROM testData GROUP BY a

+SELECT udf(a), COUNT(udf(b)) FROM testData GROUP BY a

-- !query 3 schema

-struct<a:int,count(b):bigint>

+struct<CAST(udf(cast(a as string)) AS INT):int,count(CAST(udf(cast(b as string)) AS INT)):bigint>

-- !query 3 output

1 2

2 2

@@ -41,7 +41,7 @@ NULL 1

-- !query 4

-SELECT a, COUNT(b) FROM testData GROUP BY b

+SELECT udf(a), udf(COUNT(udf(b))) FROM testData GROUP BY b

-- !query 4 schema

struct<>

-- !query 4 output

@@ -50,9 +50,9 @@ expression 'testdata.`a`' is neither present in the group by, nor is it an aggre

-- !query 5

-SELECT COUNT(a), COUNT(b) FROM testData GROUP BY a

+SELECT COUNT(udf(a)), COUNT(udf(b)) FROM testData GROUP BY udf(a)

-- !query 5 schema

-struct<count(a):bigint,count(b):bigint>

+struct<count(CAST(udf(cast(a as string)) AS INT)):bigint,count(CAST(udf(cast(b as string)) AS INT)):bigint>

-- !query 5 output

0 1

2 2

@@ -61,15 +61,15 @@ struct<count(a):bigint,count(b):bigint>

-- !query 6

-SELECT 'foo', COUNT(a) FROM testData GROUP BY 1

+SELECT 'foo', COUNT(udf(a)) FROM testData GROUP BY 1

-- !query 6 schema

-struct<foo:string,count(a):bigint>

+struct<foo:string,count(CAST(udf(cast(a as string)) AS INT)):bigint>

-- !query 6 output

foo 7

-- !query 7

-SELECT 'foo' FROM testData WHERE a = 0 GROUP BY 1

+SELECT 'foo' FROM testData WHERE a = 0 GROUP BY udf(1)

-- !query 7 schema

struct<foo:string>

-- !query 7 output

@@ -77,25 +77,25 @@ struct<foo:string>

-- !query 8

-SELECT 'foo', APPROX_COUNT_DISTINCT(a) FROM testData WHERE a = 0 GROUP BY 1

+SELECT 'foo', udf(APPROX_COUNT_DISTINCT(udf(a))) FROM testData WHERE a = 0 GROUP BY 1

-- !query 8 schema

-struct<foo:string,approx_count_distinct(a):bigint>

+struct<foo:string,CAST(udf(cast(approx_count_distinct(cast(udf(cast(a as string)) as int), 0.05, 0, 0) as string)) AS BIGINT):bigint>

-- !query 8 output

-- !query 9

-SELECT 'foo', MAX(STRUCT(a)) FROM testData WHERE a = 0 GROUP BY 1

+SELECT 'foo', MAX(STRUCT(udf(a))) FROM testData WHERE a = 0 GROUP BY 1

-- !query 9 schema

-struct<foo:string,max(named_struct(a, a)):struct<a:int>>

+struct<foo:string,max(named_struct(col1, CAST(udf(cast(a as string)) AS INT))):struct<col1:int>>

-- !query 9 output

-- !query 10

-SELECT a + b, COUNT(b) FROM testData GROUP BY a + b

+SELECT udf(a + b), udf(COUNT(b)) FROM testData GROUP BY a + b

-- !query 10 schema

-struct<(a + b):int,count(b):bigint>

+struct<CAST(udf(cast((a + b) as string)) AS INT):int,CAST(udf(cast(count(b) as string)) AS BIGINT):bigint>

-- !query 10 output

2 1

3 2

@@ -105,7 +105,7 @@ NULL 1

-- !query 11

-SELECT a + 2, COUNT(b) FROM testData GROUP BY a + 1

+SELECT udf(a + 2), udf(COUNT(b)) FROM testData GROUP BY a + 1

-- !query 11 schema

struct<>

-- !query 11 output

@@ -114,37 +114,35 @@ expression 'testdata.`a`' is neither present in the group by, nor is it an aggre

-- !query 12

-SELECT a + 1 + 1, COUNT(b) FROM testData GROUP BY a + 1

+SELECT udf(a + 1 + 1), udf(COUNT(b)) FROM testData GROUP BY udf(a + 1)

-- !query 12 schema

-struct<((a + 1) + 1):int,count(b):bigint>

+struct<>

-- !query 12 output

-3 2

-4 2

-5 2

-NULL 1

+org.apache.spark.sql.AnalysisException

+expression 'testdata.`a`' is neither present in the group by, nor is it an aggregate function. Add to group by or wrap in first() (or first_value) if you don't care which value you get.;

-- !query 13

-SELECT SKEWNESS(a), KURTOSIS(a), MIN(a), MAX(a), AVG(a), VARIANCE(a), STDDEV(a), SUM(a), COUNT(a)

+SELECT SKEWNESS(udf(a)), udf(KURTOSIS(a)), udf(MIN(a)), MAX(udf(a)), udf(AVG(udf(a))), udf(VARIANCE(a)), STDDEV(udf(a)), udf(SUM(a)), udf(COUNT(a))

FROM testData

-- !query 13 schema

-struct<skewness(CAST(a AS DOUBLE)):double,kurtosis(CAST(a AS DOUBLE)):double,min(a):int,max(a):int,avg(a):double,var_samp(CAST(a AS DOUBLE)):double,stddev_samp(CAST(a AS DOUBLE)):double,sum(a):bigint,count(a):bigint>

+struct<skewness(CAST(CAST(udf(cast(a as string)) AS INT) AS DOUBLE)):double,CAST(udf(cast(kurtosis(cast(a as double)) as string)) AS DOUBLE):double,CAST(udf(cast(min(a) as string)) AS INT):int,max(CAST(udf(cast(a as string)) AS INT)):int,CAST(udf(cast(avg(cast(cast(udf(cast(a as string)) as int) as bigint)) as string)) AS DOUBLE):double,CAST(udf(cast(var_samp(cast(a as double)) as string)) AS DOUBLE):double,stddev_samp(CAST(CAST(udf(cast(a as string)) AS INT) AS DOUBLE)):double,CAST(udf(cast(sum(cast(a as bigint)) as string)) AS BIGINT):bigint,CAST(udf(cast(count(a) as string)) AS BIGINT):bigint>

-- !query 13 output

-0.2723801058145729 -1.5069204152249134 1 3 2.142857142857143 0.8095238095238094 0.8997354108424372 15 7

-- !query 14

-SELECT COUNT(DISTINCT b), COUNT(DISTINCT b, c) FROM (SELECT 1 AS a, 2 AS b, 3 AS c) GROUP BY a

+SELECT COUNT(DISTINCT udf(b)), udf(COUNT(DISTINCT b, c)) FROM (SELECT 1 AS a, 2 AS b, 3 AS c) GROUP BY a

-- !query 14 schema

-struct<count(DISTINCT b):bigint,count(DISTINCT b, c):bigint>

+struct<count(DISTINCT CAST(udf(cast(b as string)) AS INT)):bigint,CAST(udf(cast(count(distinct b, c) as string)) AS BIGINT):bigint>

-- !query 14 output

1 1

-- !query 15

-SELECT a AS k, COUNT(b) FROM testData GROUP BY k

+SELECT a AS k, COUNT(udf(b)) FROM testData GROUP BY k

-- !query 15 schema

-struct<k:int,count(b):bigint>

+struct<k:int,count(CAST(udf(cast(b as string)) AS INT)):bigint>

-- !query 15 output

1 2

2 2

@@ -153,21 +151,21 @@ NULL 1

-- !query 16

-SELECT a AS k, COUNT(b) FROM testData GROUP BY k HAVING k > 1

+SELECT a AS k, udf(COUNT(b)) FROM testData GROUP BY k HAVING k > 1

-- !query 16 schema

-struct<k:int,count(b):bigint>

+struct<k:int,CAST(udf(cast(count(b) as string)) AS BIGINT):bigint>

-- !query 16 output

2 2

3 2

-- !query 17

-SELECT COUNT(b) AS k FROM testData GROUP BY k

+SELECT udf(COUNT(b)) AS k FROM testData GROUP BY k

-- !query 17 schema

struct<>

-- !query 17 output

org.apache.spark.sql.AnalysisException

-aggregate functions are not allowed in GROUP BY, but found count(testdata.`b`);

+aggregate functions are not allowed in GROUP BY, but found CAST(udf(cast(count(b) as string)) AS BIGINT);

-- !query 18

@@ -180,7 +178,7 @@ struct<>

-- !query 19

-SELECT k AS a, COUNT(v) FROM testDataHasSameNameWithAlias GROUP BY a

+SELECT k AS a, udf(COUNT(udf(v))) FROM testDataHasSameNameWithAlias GROUP BY a

-- !query 19 schema

struct<>

-- !query 19 output

@@ -197,32 +195,32 @@ spark.sql.groupByAliases false

-- !query 21

-SELECT a AS k, COUNT(b) FROM testData GROUP BY k

+SELECT a AS k, udf(COUNT(udf(b))) FROM testData GROUP BY k

-- !query 21 schema

struct<>

-- !query 21 output

org.apache.spark.sql.AnalysisException

-cannot resolve '`k`' given input columns: [testdata.a, testdata.b]; line 1 pos 47

+cannot resolve '`k`' given input columns: [testdata.a, testdata.b]; line 1 pos 57

-- !query 22

-SELECT a, COUNT(1) FROM testData WHERE false GROUP BY a

+SELECT a, COUNT(udf(1)) FROM testData WHERE false GROUP BY a

-- !query 22 schema

-struct<a:int,count(1):bigint>

+struct<a:int,count(CAST(udf(cast(1 as string)) AS INT)):bigint>

-- !query 22 output

-- !query 23

-SELECT COUNT(1) FROM testData WHERE false

+SELECT udf(COUNT(1)) FROM testData WHERE false

-- !query 23 schema

-struct<count(1):bigint>

+struct<CAST(udf(cast(count(1) as string)) AS BIGINT):bigint>

-- !query 23 output

0

-- !query 24

-SELECT 1 FROM (SELECT COUNT(1) FROM testData WHERE false) t

+SELECT 1 FROM (SELECT udf(COUNT(1)) FROM testData WHERE false) t

-- !query 24 schema

struct<1:int>

-- !query 24 output

@@ -232,7 +230,7 @@ struct<1:int>

-- !query 25

SELECT 1 from (

SELECT 1 AS z,

- MIN(a.x)

+ udf(MIN(a.x))

FROM (select 1 as x) a

WHERE false

) b

@@ -244,32 +242,32 @@ struct<1:int>

-- !query 26

-SELECT corr(DISTINCT x, y), corr(DISTINCT y, x), count(*)

+SELECT corr(DISTINCT x, y), udf(corr(DISTINCT y, x)), count(*)

FROM (VALUES (1, 1), (2, 2), (2, 2)) t(x, y)

-- !query 26 schema

-struct<corr(DISTINCT CAST(x AS DOUBLE), CAST(y AS DOUBLE)):double,corr(DISTINCT CAST(y AS DOUBLE), CAST(x AS DOUBLE)):double,count(1):bigint>

+struct<corr(DISTINCT CAST(x AS DOUBLE), CAST(y AS DOUBLE)):double,CAST(udf(cast(corr(distinct cast(y as double), cast(x as double)) as string)) AS DOUBLE):double,count(1):bigint>

-- !query 26 output

1.0 1.0 3

-- !query 27

-SELECT 1 FROM range(10) HAVING true

+SELECT udf(1) FROM range(10) HAVING true

-- !query 27 schema

-struct<1:int>

+struct<CAST(udf(cast(1 as string)) AS INT):int>

-- !query 27 output

1

-- !query 28

-SELECT 1 FROM range(10) HAVING MAX(id) > 0

+SELECT udf(udf(1)) FROM range(10) HAVING MAX(id) > 0

-- !query 28 schema

-struct<1:int>

+struct<CAST(udf(cast(cast(udf(cast(1 as string)) as int) as string)) AS INT):int>

-- !query 28 output

1

-- !query 29

-SELECT id FROM range(10) HAVING id > 0

+SELECT udf(id) FROM range(10) HAVING id > 0

-- !query 29 schema

struct<>

-- !query 29 output

@@ -291,33 +289,33 @@ struct<>

-- !query 31

-SELECT every(v), some(v), any(v) FROM test_agg WHERE 1 = 0

+SELECT udf(every(v)), udf(some(v)), any(v) FROM test_agg WHERE 1 = 0

-- !query 31 schema

-struct<every(v):boolean,some(v):boolean,any(v):boolean>

+struct<CAST(udf(cast(every(v) as string)) AS BOOLEAN):boolean,CAST(udf(cast(some(v) as string)) AS BOOLEAN):boolean,any(v):boolean>

-- !query 31 output

NULL NULL NULL

-- !query 32

-SELECT every(v), some(v), any(v) FROM test_agg WHERE k = 4

+SELECT udf(every(udf(v))), some(v), any(v) FROM test_agg WHERE k = 4

-- !query 32 schema

-struct<every(v):boolean,some(v):boolean,any(v):boolean>

+struct<CAST(udf(cast(every(cast(udf(cast(v as string)) as boolean)) as string)) AS BOOLEAN):boolean,some(v):boolean,any(v):boolean>

-- !query 32 output

NULL NULL NULL

-- !query 33

-SELECT every(v), some(v), any(v) FROM test_agg WHERE k = 5

+SELECT every(v), udf(some(v)), any(v) FROM test_agg WHERE k = 5

-- !query 33 schema

-struct<every(v):boolean,some(v):boolean,any(v):boolean>

+struct<every(v):boolean,CAST(udf(cast(some(v) as string)) AS BOOLEAN):boolean,any(v):boolean>

-- !query 33 output

false true true

-- !query 34

-SELECT k, every(v), some(v), any(v) FROM test_agg GROUP BY k

+SELECT k, every(v), udf(some(v)), any(v) FROM test_agg GROUP BY k

-- !query 34 schema

-struct<k:int,every(v):boolean,some(v):boolean,any(v):boolean>

+struct<k:int,every(v):boolean,CAST(udf(cast(some(v) as string)) AS BOOLEAN):boolean,any(v):boolean>

-- !query 34 output

1 false true true

2 true true true

@@ -327,9 +325,9 @@ struct<k:int,every(v):boolean,some(v):boolean,any(v):boolean>

-- !query 35

-SELECT k, every(v) FROM test_agg GROUP BY k HAVING every(v) = false

+SELECT udf(k), every(v) FROM test_agg GROUP BY k HAVING every(v) = false

-- !query 35 schema

-struct<k:int,every(v):boolean>

+struct<CAST(udf(cast(k as string)) AS INT):int,every(v):boolean>

-- !query 35 output

1 false

3 false

@@ -337,16 +335,16 @@ struct<k:int,every(v):boolean>

-- !query 36

-SELECT k, every(v) FROM test_agg GROUP BY k HAVING every(v) IS NULL

+SELECT k, udf(every(v)) FROM test_agg GROUP BY k HAVING every(v) IS NULL

-- !query 36 schema

-struct<k:int,every(v):boolean>

+struct<k:int,CAST(udf(cast(every(v) as string)) AS BOOLEAN):boolean>

-- !query 36 output

4 NULL

-- !query 37

SELECT k,

- Every(v) AS every

+ udf(Every(v)) AS every

FROM test_agg

WHERE k = 2

AND v IN (SELECT Any(v)

@@ -360,7 +358,7 @@ struct<k:int,every:boolean>

-- !query 38

-SELECT k,

+SELECT udf(udf(k)),

Every(v) AS every

FROM test_agg

WHERE k = 2

@@ -369,45 +367,45 @@ WHERE k = 2

WHERE k = 1)

GROUP BY k

-- !query 38 schema

-struct<k:int,every:boolean>

+struct<CAST(udf(cast(cast(udf(cast(k as string)) as int) as string)) AS INT):int,every:boolean>

-- !query 38 output

-- !query 39

-SELECT every(1)

+SELECT every(udf(1))

-- !query 39 schema

struct<>

-- !query 39 output

org.apache.spark.sql.AnalysisException

-cannot resolve 'every(1)' due to data type mismatch: Input to function 'every' should have been boolean, but it's [int].; line 1 pos 7

+cannot resolve 'every(CAST(udf(cast(1 as string)) AS INT))' due to data type mismatch: Input to function 'every' should have been boolean, but it's [int].; line 1 pos 7

-- !query 40

-SELECT some(1S)

+SELECT some(udf(1S))

-- !query 40 schema

struct<>

-- !query 40 output

org.apache.spark.sql.AnalysisException

-cannot resolve 'some(1S)' due to data type mismatch: Input to function 'some' should have been boolean, but it's [smallint].; line 1 pos 7

+cannot resolve 'some(CAST(udf(cast(1 as string)) AS SMALLINT))' due to data type mismatch: Input to function 'some' should have been boolean, but it's [smallint].; line 1 pos 7

-- !query 41

-SELECT any(1L)

+SELECT any(udf(1L))

-- !query 41 schema

struct<>

-- !query 41 output

org.apache.spark.sql.AnalysisException

-cannot resolve 'any(1L)' due to data type mismatch: Input to function 'any' should have been boolean, but it's [bigint].; line 1 pos 7

+cannot resolve 'any(CAST(udf(cast(1 as string)) AS BIGINT))' due to data type mismatch: Input to function 'any' should have been boolean, but it's [bigint].; line 1 pos 7

-- !query 42

-SELECT every("true")

+SELECT udf(every("true"))

-- !query 42 schema

struct<>

-- !query 42 output

org.apache.spark.sql.AnalysisException

-cannot resolve 'every('true')' due to data type mismatch: Input to function 'every' should have been boolean, but it's [string].; line 1 pos 7

+cannot resolve 'every('true')' due to data type mismatch: Input to function 'every' should have been boolean, but it's [string].; line 1 pos 11

-- !query 43

@@ -428,9 +426,9 @@ struct<k:int,v:boolean,every(v) OVER (PARTITION BY k ORDER BY v ASC NULLS FIRST

-- !query 44

-SELECT k, v, some(v) OVER (PARTITION BY k ORDER BY v) FROM test_agg

+SELECT k, udf(udf(v)), some(v) OVER (PARTITION BY k ORDER BY v) FROM test_agg

-- !query 44 schema

-struct<k:int,v:boolean,some(v) OVER (PARTITION BY k ORDER BY v ASC NULLS FIRST RANGE BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW):boolean>

+struct<k:int,CAST(udf(cast(cast(udf(cast(v as string)) as boolean) as string)) AS BOOLEAN):boolean,some(v) OVER (PARTITION BY k ORDER BY v ASC NULLS FIRST RANGE BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW):boolean>

-- !query 44 output

1 false false

1 true true

@@ -445,9 +443,9 @@ struct<k:int,v:boolean,some(v) OVER (PARTITION BY k ORDER BY v ASC NULLS FIRST R

-- !query 45

-SELECT k, v, any(v) OVER (PARTITION BY k ORDER BY v) FROM test_agg

+SELECT udf(udf(k)), v, any(v) OVER (PARTITION BY k ORDER BY v) FROM test_agg

-- !query 45 schema

-struct<k:int,v:boolean,any(v) OVER (PARTITION BY k ORDER BY v ASC NULLS FIRST RANGE BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW):boolean>

+struct<CAST(udf(cast(cast(udf(cast(k as string)) as int) as string)) AS INT):int,v:boolean,any(v) OVER (PARTITION BY k ORDER BY v ASC NULLS FIRST RANGE BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW):boolean>

-- !query 45 output

1 false false

1 true true

@@ -462,17 +460,17 @@ struct<k:int,v:boolean,any(v) OVER (PARTITION BY k ORDER BY v ASC NULLS FIRST RA

-- !query 46

-SELECT count(*) FROM test_agg HAVING count(*) > 1L

+SELECT udf(count(*)) FROM test_agg HAVING count(*) > 1L

-- !query 46 schema

-struct<count(1):bigint>

+struct<CAST(udf(cast(count(1) as string)) AS BIGINT):bigint>

-- !query 46 output

10

-- !query 47

-SELECT k, max(v) FROM test_agg GROUP BY k HAVING max(v) = true

+SELECT k, udf(max(v)) FROM test_agg GROUP BY k HAVING max(v) = true

-- !query 47 schema

-struct<k:int,max(v):boolean>

+struct<k:int,CAST(udf(cast(max(v) as string)) AS BOOLEAN):boolean>

-- !query 47 output

1 true

2 true

@@ -480,7 +478,7 @@ struct<k:int,max(v):boolean>

-- !query 48

-SELECT * FROM (SELECT COUNT(*) AS cnt FROM test_agg) WHERE cnt > 1L

+SELECT * FROM (SELECT udf(COUNT(*)) AS cnt FROM test_agg) WHERE cnt > 1L

-- !query 48 schema

struct<cnt:bigint>

-- !query 48 output

@@ -488,7 +486,7 @@ struct<cnt:bigint>

-- !query 49

-SELECT count(*) FROM test_agg WHERE count(*) > 1L

+SELECT udf(count(*)) FROM test_agg WHERE count(*) > 1L

-- !query 49 schema

struct<>

-- !query 49 output

@@ -500,7 +498,7 @@ Invalid expressions: [count(1)];

-- !query 50

-SELECT count(*) FROM test_agg WHERE count(*) + 1L > 1L

+SELECT udf(count(*)) FROM test_agg WHERE count(*) + 1L > 1L

-- !query 50 schema

struct<>

-- !query 50 output

@@ -512,7 +510,7 @@ Invalid expressions: [count(1)];

-- !query 51

-SELECT count(*) FROM test_agg WHERE k = 1 or k = 2 or count(*) + 1L > 1L or max(k) > 1

+SELECT udf(count(*)) FROM test_agg WHERE k = 1 or k = 2 or count(*) + 1L > 1L or max(k) > 1

-- !query 51 schema

struct<>

-- !query 51 output

```

</p>

</details>

## How was this patch tested?

Tested as guided in [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

Verified pandas & pyarrow versions:

```
$ python3
Python 3.6.8 (default, Jan 14 2019, 11:02:34)
[GCC 8.0.1 20180414 (experimental) [trunk revision 259383]] on linux
Type "help", "copyright", "credits" or "license" for more information.
>>> import pandas
>>> import pyarrow
>>> pyarrow.__version__
'0.14.0'
>>> pandas.__version__
'0.24.2'
```

From the SQL output, the statements appear to be evaluated correctly, given that the UDF returns a string and may change results: `NULL` is returned as `None` and is counted in the returned values.
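The `NULL`/`None` caveat can be illustrated with a toy Python model of the `CAST(udf(cast(x as string)) AS T)` wrapping that appears in the schemas. This is a sketch under stated assumptions, not the test harness itself. It assumes the test UDF stringifies its input with `str(x)`. The helpers `naive_udf`, `null_safe_udf`, `cast_from_string`, and `apply_wrapped` are hypothetical and only approximate Spark's non-ANSI cast.

```python
from typing import Callable, Iterable, Optional

# Hypothetical test UDFs. Assumption: the harness stringifies its input with str().
def naive_udf(x: Optional[str]) -> Optional[str]:
    return str(x)  # str(None) == "None": a NULL input becomes a non-NULL string

def null_safe_udf(x: Optional[str]) -> Optional[str]:
    return None if x is None else str(x)  # keeps NULL as NULL

def cast_from_string(s: Optional[str], target: str):
    """Toy model of a non-ANSI CAST(<string> AS target): unparsable input becomes NULL."""
    if s is None:
        return None
    if target == "string":
        return s
    if target == "int":
        try:
            return int(s)
        except ValueError:
            return None
    raise NotImplementedError(target)

def apply_wrapped(udf: Callable[[Optional[str]], Optional[str]], value, target: str):
    """Model CAST(udf(cast(value AS string)) AS target), the shape seen in the schemas."""
    as_string = None if value is None else str(value)
    return cast_from_string(udf(as_string), target)

def count_non_null(values: Iterable) -> int:
    """Like SQL count(expr): NULLs (None) are skipped."""
    return sum(1 for v in values if v is not None)

names = ["one", None, "two"]
print(count_non_null(apply_wrapped(naive_udf, v, "string") for v in names))      # 3
print(count_non_null(apply_wrapped(null_safe_udf, v, "string") for v in names))  # 2
```

In this model, the `'None'` string fails the cast back for an integer column and becomes `NULL` again. The extra counted value therefore shows up mainly for string-typed columns.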

Closes #25098 from skonto/group-by.sql.

Authored-by: Stavros Kontopoulos <st.kontopoulos@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Implements the `REPLACE TABLE` and `REPLACE TABLE AS SELECT` logical plans. `REPLACE TABLE` is now a valid operation in spark-sql provided that the tables being modified are managed by V2 catalogs.

This also introduces an atomic mix-in, `TransactionalTableCatalog`, that table catalogs can choose to implement. Its semantics are that table creation and replacement can be "staged" and then "committed".

On the execution of `REPLACE TABLE AS SELECT`, `REPLACE TABLE`, and `CREATE TABLE AS SELECT`, if the catalog implements transactional operations, the physical plan uses that functionality. Otherwise, these operations fall back to non-atomic variants. For `REPLACE TABLE` in particular, using non-atomic operations can unfortunately leave the table in an inconsistent state.
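A toy in-memory catalog in Python can sketch the difference between a staged replace and the non-atomic fallback. `InMemoryCatalog`, `replace_staged`, and `replace_non_atomic` are hypothetical names, not Spark's catalog API. The sketch assumes only that a staged replace builds the new table before committing it in one step.

```python
from typing import Callable, Dict, List

Rows = List[int]

class InMemoryCatalog:
    """Hypothetical catalog: table name -> rows. Not Spark's TableCatalog API."""

    def __init__(self) -> None:
        self.tables: Dict[str, Rows] = {}

    def replace_non_atomic(self, name: str, produce_rows: Callable[[], Rows]) -> None:
        # Fallback: drop, re-create empty, then write.
        # If the write fails, the old rows are gone and the new ones never arrive.
        self.tables.pop(name, None)
        self.tables[name] = []
        self.tables[name].extend(produce_rows())

    def replace_staged(self, name: str, produce_rows: Callable[[], Rows]) -> None:
        # Staged: build the replacement off to the side ("stage"), then swap it in
        # with a single assignment ("commit"). A failed write commits nothing.
        staged = list(produce_rows())
        self.tables[name] = staged


def failing_write() -> Rows:
    raise RuntimeError("write failed")


for method in ("replace_staged", "replace_non_atomic"):
    catalog = InMemoryCatalog()
    catalog.tables["t"] = [1, 2]
    try:
        getattr(catalog, method)("t", failing_write)
    except RuntimeError:
        pass
    print(method, catalog.tables["t"])  # staged keeps [1, 2]; non-atomic leaves []
```

In this model, a failed write during the staged path leaves the original table intact. The same failure during the fallback leaves an empty table behind.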

## How was this patch tested?

Unit tests - multiple additions to `DataSourceV2SQLSuite`.

Closes #24798 from mccheah/spark-27724.

Authored-by: mcheah <mcheah@palantir.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR removes an unnecessary test in `DataFrameSuite`.

## How was this patch tested?

N/A

Closes #25216 from maropu/SPARK-28189-FOLLOWUP.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR adds some tests converted from `inline-table.sql` to test UDFs. Please see the contribution guide of this umbrella ticket - [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

<details><summary>Diff comparing to 'inline-table.sql'</summary>

<p>

```diff

diff --git a/sql/core/src/test/resources/sql-tests/results/inline-table.sql.out b/sql/core/src/test/resources/sql-tests/results/udf/udf-inline-table.sql.out

index 4e80f0bda5..2cf24e50c8 100644

--- a/sql/core/src/test/resources/sql-tests/results/inline-table.sql.out

+++ b/sql/core/src/test/resources/sql-tests/results/udf/udf-inline-table.sql.out

@@ -3,33 +3,33 @@

-- !query 0

-select * from values ("one", 1)

+select udf(col1), udf(col2) from values ("one", 1)

-- !query 0 schema

-struct<col1:string,col2:int>

+struct<CAST(udf(cast(col1 as string)) AS STRING):string,CAST(udf(cast(col2 as string)) AS INT):int>

-- !query 0 output

one 1

-- !query 1

-select * from values ("one", 1) as data

+select udf(col1), udf(udf(col2)) from values ("one", 1) as data

-- !query 1 schema

-struct<col1:string,col2:int>

+struct<CAST(udf(cast(col1 as string)) AS STRING):string,CAST(udf(cast(cast(udf(cast(col2 as string)) as int) as string)) AS INT):int>

-- !query 1 output

one 1

-- !query 2

-select * from values ("one", 1) as data(a, b)

+select udf(a), b from values ("one", 1) as data(a, b)

-- !query 2 schema

-struct<a:string,b:int>

+struct<CAST(udf(cast(a as string)) AS STRING):string,b:int>

-- !query 2 output

one 1

-- !query 3

-select * from values 1, 2, 3 as data(a)

+select udf(a) from values 1, 2, 3 as data(a)

-- !query 3 schema

-struct<a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int>

-- !query 3 output

1

2

@@ -37,9 +37,9 @@ struct<a:int>

-- !query 4

-select * from values ("one", 1), ("two", 2), ("three", null) as data(a, b)

+select udf(a), b from values ("one", 1), ("two", 2), ("three", null) as data(a, b)

-- !query 4 schema

-struct<a:string,b:int>

+struct<CAST(udf(cast(a as string)) AS STRING):string,b:int>

-- !query 4 output

one 1

three NULL

@@ -47,107 +47,107 @@ two 2

-- !query 5

-select * from values ("one", null), ("two", null) as data(a, b)

+select udf(a), b from values ("one", null), ("two", null) as data(a, b)

-- !query 5 schema

-struct<a:string,b:null>

+struct<CAST(udf(cast(a as string)) AS STRING):string,b:null>

-- !query 5 output

one NULL

two NULL

-- !query 6

-select * from values ("one", 1), ("two", 2L) as data(a, b)

+select udf(a), b from values ("one", 1), ("two", 2L) as data(a, b)

-- !query 6 schema

-struct<a:string,b:bigint>

+struct<CAST(udf(cast(a as string)) AS STRING):string,b:bigint>

-- !query 6 output

one 1

two 2

-- !query 7

-select * from values ("one", 1 + 0), ("two", 1 + 3L) as data(a, b)

+select udf(udf(a)), udf(b) from values ("one", 1 + 0), ("two", 1 + 3L) as data(a, b)

-- !query 7 schema

-struct<a:string,b:bigint>

+struct<CAST(udf(cast(cast(udf(cast(a as string)) as string) as string)) AS STRING):string,CAST(udf(cast(b as string)) AS BIGINT):bigint>

-- !query 7 output

one 1

two 4

-- !query 8

-select * from values ("one", array(0, 1)), ("two", array(2, 3)) as data(a, b)

+select udf(a), b from values ("one", array(0, 1)), ("two", array(2, 3)) as data(a, b)

-- !query 8 schema

-struct<a:string,b:array<int>>

+struct<CAST(udf(cast(a as string)) AS STRING):string,b:array<int>>

-- !query 8 output

one [0,1]

two [2,3]

-- !query 9

-select * from values ("one", 2.0), ("two", 3.0D) as data(a, b)

+select udf(a), b from values ("one", 2.0), ("two", 3.0D) as data(a, b)

-- !query 9 schema

-struct<a:string,b:double>

+struct<CAST(udf(cast(a as string)) AS STRING):string,b:double>

-- !query 9 output

one 2.0

two 3.0

-- !query 10

-select * from values ("one", rand(5)), ("two", 3.0D) as data(a, b)

+select udf(a), b from values ("one", rand(5)), ("two", 3.0D) as data(a, b)

-- !query 10 schema

struct<>

-- !query 10 output

org.apache.spark.sql.AnalysisException

-cannot evaluate expression rand(5) in inline table definition; line 1 pos 29

+cannot evaluate expression rand(5) in inline table definition; line 1 pos 37

-- !query 11

-select * from values ("one", 2.0), ("two") as data(a, b)

+select udf(a), udf(b) from values ("one", 2.0), ("two") as data(a, b)

-- !query 11 schema

struct<>

-- !query 11 output

org.apache.spark.sql.AnalysisException

-expected 2 columns but found 1 columns in row 1; line 1 pos 14

+expected 2 columns but found 1 columns in row 1; line 1 pos 27

-- !query 12

-select * from values ("one", array(0, 1)), ("two", struct(1, 2)) as data(a, b)

+select udf(a), udf(b) from values ("one", array(0, 1)), ("two", struct(1, 2)) as data(a, b)

-- !query 12 schema

struct<>

-- !query 12 output

org.apache.spark.sql.AnalysisException

-incompatible types found in column b for inline table; line 1 pos 14

+incompatible types found in column b for inline table; line 1 pos 27

-- !query 13

-select * from values ("one"), ("two") as data(a, b)

+select udf(a), udf(b) from values ("one"), ("two") as data(a, b)

-- !query 13 schema

struct<>

-- !query 13 output

org.apache.spark.sql.AnalysisException

-expected 2 columns but found 1 columns in row 0; line 1 pos 14

+expected 2 columns but found 1 columns in row 0; line 1 pos 27

-- !query 14

-select * from values ("one", random_not_exist_func(1)), ("two", 2) as data(a, b)

+select udf(a), udf(b) from values ("one", random_not_exist_func(1)), ("two", 2) as data(a, b)

-- !query 14 schema

struct<>

-- !query 14 output

org.apache.spark.sql.AnalysisException

-Undefined function: 'random_not_exist_func'. This function is neither a registered temporary function nor a permanent function registered in the database 'default'.; line 1 pos 29

+Undefined function: 'random_not_exist_func'. This function is neither a registered temporary function nor a permanent function registered in the database 'default'.; line 1 pos 42

-- !query 15

-select * from values ("one", count(1)), ("two", 2) as data(a, b)

+select udf(a), udf(b) from values ("one", count(1)), ("two", 2) as data(a, b)

-- !query 15 schema

struct<>

-- !query 15 output

org.apache.spark.sql.AnalysisException

-cannot evaluate expression count(1) in inline table definition; line 1 pos 29

+cannot evaluate expression count(1) in inline table definition; line 1 pos 42

-- !query 16

-select * from values (timestamp('1991-12-06 00:00:00.0'), array(timestamp('1991-12-06 01:00:00.0'), timestamp('1991-12-06 12:00:00.0'))) as data(a, b)

+select udf(a), b from values (timestamp('1991-12-06 00:00:00.0'), array(timestamp('1991-12-06 01:00:00.0'), timestamp('1991-12-06 12:00:00.0'))) as data(a, b)

-- !query 16 schema

-struct<a:timestamp,b:array<timestamp>>

+struct<CAST(udf(cast(a as string)) AS TIMESTAMP):timestamp,b:array<timestamp>>

-- !query 16 output

1991-12-06 00:00:00 [1991-12-06 01:00:00.0,1991-12-06 12:00:00.0]

```

</p>

</details>

## How was this patch tested?

Tested as guided in [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

Closes #25124 from imback82/inline-table-sql.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR adds some tests converted from `group-analytics.sql` to test UDFs. Please see the contribution guide of this umbrella ticket - SPARK-27921.

<details><summary>Diff comparing to 'group-analytics.sql'</summary>

<p>

```diff

diff --git a/sql/core/src/test/resources/sql-tests/results/udf/udf-group-analytics.sql.out b/sql/core/src/test/resources/sql-tests/results/udf/udf-group-analytics.sql.out

index 31e9e08e2c..3439a05727 100644

--- a/sql/core/src/test/resources/sql-tests/results/udf/udf-group-analytics.sql.out

+++ b/sql/core/src/test/resources/sql-tests/results/udf/udf-group-analytics.sql.out

@@ -13,9 +13,9 @@ struct<>

-- !query 1

-SELECT a + b, b, udf(SUM(a - b)) FROM testData GROUP BY a + b, b WITH CUBE

+SELECT a + b, b, SUM(a - b) FROM testData GROUP BY a + b, b WITH CUBE

-- !query 1 schema

-struct<(a + b):int,b:int,CAST(udf(cast(sum(cast((a - b) as bigint)) as string)) AS BIGINT):bigint>

+struct<(a + b):int,b:int,sum((a - b)):bigint>

-- !query 1 output

2 1 0

2 NULL 0

@@ -33,9 +33,9 @@ NULL NULL 3

-- !query 2

-SELECT a, udf(b), SUM(b) FROM testData GROUP BY a, b WITH CUBE

+SELECT a, b, SUM(b) FROM testData GROUP BY a, b WITH CUBE

-- !query 2 schema

-struct<a:int,CAST(udf(cast(b as string)) AS INT):int,sum(b):bigint>

+struct<a:int,b:int,sum(b):bigint>

-- !query 2 output

1 1 1

1 2 2

@@ -52,9 +52,9 @@ NULL NULL 9

-- !query 3

-SELECT udf(a + b), b, SUM(a - b) FROM testData GROUP BY a + b, b WITH ROLLUP

+SELECT a + b, b, SUM(a - b) FROM testData GROUP BY a + b, b WITH ROLLUP

-- !query 3 schema

-struct<CAST(udf(cast((a + b) as string)) AS INT):int,b:int,sum((a - b)):bigint>

+struct<(a + b):int,b:int,sum((a - b)):bigint>

-- !query 3 output

2 1 0

2 NULL 0

@@ -70,9 +70,9 @@ NULL NULL 3

-- !query 4

-SELECT a, b, udf(SUM(b)) FROM testData GROUP BY a, b WITH ROLLUP

+SELECT a, b, SUM(b) FROM testData GROUP BY a, b WITH ROLLUP

-- !query 4 schema

-struct<a:int,b:int,CAST(udf(cast(sum(cast(b as bigint)) as string)) AS BIGINT):bigint>

+struct<a:int,b:int,sum(b):bigint>

-- !query 4 output

1 1 1

1 2 2

@@ -97,7 +97,7 @@ struct<>

-- !query 6

-SELECT course, year, SUM(earnings) FROM courseSales GROUP BY ROLLUP(course, year) ORDER BY udf(course), year

+SELECT course, year, SUM(earnings) FROM courseSales GROUP BY ROLLUP(course, year) ORDER BY course, year

-- !query 6 schema

struct<course:string,year:int,sum(earnings):bigint>

-- !query 6 output

@@ -111,7 +111,7 @@ dotNET 2013 48000

-- !query 7

-SELECT course, year, SUM(earnings) FROM courseSales GROUP BY CUBE(course, year) ORDER BY course, udf(year)

+SELECT course, year, SUM(earnings) FROM courseSales GROUP BY CUBE(course, year) ORDER BY course, year

-- !query 7 schema

struct<course:string,year:int,sum(earnings):bigint>

-- !query 7 output

@@ -127,9 +127,9 @@ dotNET 2013 48000

-- !query 8

-SELECT course, udf(year), SUM(earnings) FROM courseSales GROUP BY course, year GROUPING SETS(course, year)

+SELECT course, year, SUM(earnings) FROM courseSales GROUP BY course, year GROUPING SETS(course, year)

-- !query 8 schema

-struct<course:string,CAST(udf(cast(year as string)) AS INT):int,sum(earnings):bigint>

+struct<course:string,year:int,sum(earnings):bigint>

-- !query 8 output

Java NULL 50000

NULL 2012 35000

@@ -138,26 +138,26 @@ dotNET NULL 63000

-- !query 9

-SELECT course, year, udf(SUM(earnings)) FROM courseSales GROUP BY course, year GROUPING SETS(course)

+SELECT course, year, SUM(earnings) FROM courseSales GROUP BY course, year GROUPING SETS(course)

-- !query 9 schema

-struct<course:string,year:int,CAST(udf(cast(sum(cast(earnings as bigint)) as string)) AS BIGINT):bigint>

+struct<course:string,year:int,sum(earnings):bigint>

-- !query 9 output

Java NULL 50000

dotNET NULL 63000

-- !query 10

-SELECT udf(course), year, SUM(earnings) FROM courseSales GROUP BY course, year GROUPING SETS(year)

+SELECT course, year, SUM(earnings) FROM courseSales GROUP BY course, year GROUPING SETS(year)

-- !query 10 schema

-struct<CAST(udf(cast(course as string)) AS STRING):string,year:int,sum(earnings):bigint>

+struct<course:string,year:int,sum(earnings):bigint>

-- !query 10 output

NULL 2012 35000

NULL 2013 78000

-- !query 11

-SELECT course, udf(SUM(earnings)) AS sum FROM courseSales

-GROUP BY course, earnings GROUPING SETS((), (course), (course, earnings)) ORDER BY course, udf(sum)

+SELECT course, SUM(earnings) AS sum FROM courseSales

+GROUP BY course, earnings GROUPING SETS((), (course), (course, earnings)) ORDER BY course, sum

-- !query 11 schema

struct<course:string,sum:bigint>

-- !query 11 output

@@ -173,7 +173,7 @@ dotNET 63000

-- !query 12

SELECT course, SUM(earnings) AS sum, GROUPING_ID(course, earnings) FROM courseSales

-GROUP BY course, earnings GROUPING SETS((), (course), (course, earnings)) ORDER BY udf(course), sum

+GROUP BY course, earnings GROUPING SETS((), (course), (course, earnings)) ORDER BY course, sum

-- !query 12 schema

struct<course:string,sum:bigint,grouping_id(course, earnings):int>

-- !query 12 output

@@ -188,10 +188,10 @@ dotNET 63000 1

-- !query 13

-SELECT udf(course), udf(year), GROUPING(course), GROUPING(year), GROUPING_ID(course, year) FROM courseSales

+SELECT course, year, GROUPING(course), GROUPING(year), GROUPING_ID(course, year) FROM courseSales

GROUP BY CUBE(course, year)

-- !query 13 schema

-struct<CAST(udf(cast(course as string)) AS STRING):string,CAST(udf(cast(year as string)) AS INT):int,grouping(course):tinyint,grouping(year):tinyint,grouping_id(course, year):int>

+struct<course:string,year:int,grouping(course):tinyint,grouping(year):tinyint,grouping_id(course, year):int>

-- !query 13 output

Java 2012 0 0 0

Java 2013 0 0 0

@@ -205,7 +205,7 @@ dotNET NULL 0 1 1

-- !query 14

-SELECT course, udf(year), GROUPING(course) FROM courseSales GROUP BY course, year

+SELECT course, year, GROUPING(course) FROM courseSales GROUP BY course, year

-- !query 14 schema

struct<>

-- !query 14 output

@@ -214,7 +214,7 @@ grouping() can only be used with GroupingSets/Cube/Rollup;

-- !query 15

-SELECT course, udf(year), GROUPING_ID(course, year) FROM courseSales GROUP BY course, year

+SELECT course, year, GROUPING_ID(course, year) FROM courseSales GROUP BY course, year

-- !query 15 schema

struct<>

-- !query 15 output

@@ -223,7 +223,7 @@ grouping_id() can only be used with GroupingSets/Cube/Rollup;

-- !query 16

-SELECT course, year, grouping__id FROM courseSales GROUP BY CUBE(course, year) ORDER BY grouping__id, course, udf(year)

+SELECT course, year, grouping__id FROM courseSales GROUP BY CUBE(course, year) ORDER BY grouping__id, course, year

-- !query 16 schema

struct<course:string,year:int,grouping__id:int>

-- !query 16 output

@@ -240,7 +240,7 @@ NULL NULL 3

-- !query 17

SELECT course, year FROM courseSales GROUP BY CUBE(course, year)

-HAVING GROUPING(year) = 1 AND GROUPING_ID(course, year) > 0 ORDER BY course, udf(year)

+HAVING GROUPING(year) = 1 AND GROUPING_ID(course, year) > 0 ORDER BY course, year

-- !query 17 schema

struct<course:string,year:int>

-- !query 17 output

@@ -250,7 +250,7 @@ dotNET NULL

-- !query 18

-SELECT course, udf(year) FROM courseSales GROUP BY course, year HAVING GROUPING(course) > 0

+SELECT course, year FROM courseSales GROUP BY course, year HAVING GROUPING(course) > 0

-- !query 18 schema

struct<>

-- !query 18 output

@@ -259,7 +259,7 @@ grouping()/grouping_id() can only be used with GroupingSets/Cube/Rollup;

-- !query 19

-SELECT course, udf(udf(year)) FROM courseSales GROUP BY course, year HAVING GROUPING_ID(course) > 0

+SELECT course, year FROM courseSales GROUP BY course, year HAVING GROUPING_ID(course) > 0

-- !query 19 schema

struct<>

-- !query 19 output

@@ -268,9 +268,9 @@ grouping()/grouping_id() can only be used with GroupingSets/Cube/Rollup;

-- !query 20

-SELECT udf(course), year FROM courseSales GROUP BY CUBE(course, year) HAVING grouping__id > 0

+SELECT course, year FROM courseSales GROUP BY CUBE(course, year) HAVING grouping__id > 0

-- !query 20 schema

-struct<CAST(udf(cast(course as string)) AS STRING):string,year:int>

+struct<course:string,year:int>

-- !query 20 output

Java NULL

NULL 2012

@@ -281,7 +281,7 @@ dotNET NULL

-- !query 21

SELECT course, year, GROUPING(course), GROUPING(year) FROM courseSales GROUP BY CUBE(course, year)

-ORDER BY GROUPING(course), GROUPING(year), course, udf(year)

+ORDER BY GROUPING(course), GROUPING(year), course, year

-- !query 21 schema

struct<course:string,year:int,grouping(course):tinyint,grouping(year):tinyint>

-- !query 21 output

@@ -298,7 +298,7 @@ NULL NULL 1 1

-- !query 22

SELECT course, year, GROUPING_ID(course, year) FROM courseSales GROUP BY CUBE(course, year)

-ORDER BY GROUPING(course), GROUPING(year), course, udf(year)

+ORDER BY GROUPING(course), GROUPING(year), course, year

-- !query 22 schema

struct<course:string,year:int,grouping_id(course, year):int>

-- !query 22 output

@@ -314,7 +314,7 @@ NULL NULL 3

-- !query 23

-SELECT course, udf(year) FROM courseSales GROUP BY course, udf(year) ORDER BY GROUPING(course)

+SELECT course, year FROM courseSales GROUP BY course, year ORDER BY GROUPING(course)

-- !query 23 schema

struct<>

-- !query 23 output

@@ -323,7 +323,7 @@ grouping()/grouping_id() can only be used with GroupingSets/Cube/Rollup;

-- !query 24

-SELECT course, udf(year) FROM courseSales GROUP BY course, udf(year) ORDER BY GROUPING_ID(course)

+SELECT course, year FROM courseSales GROUP BY course, year ORDER BY GROUPING_ID(course)

-- !query 24 schema

struct<>

-- !query 24 output

@@ -332,7 +332,7 @@ grouping()/grouping_id() can only be used with GroupingSets/Cube/Rollup;

-- !query 25

-SELECT course, year FROM courseSales GROUP BY CUBE(course, year) ORDER BY grouping__id, udf(course), year

+SELECT course, year FROM courseSales GROUP BY CUBE(course, year) ORDER BY grouping__id, course, year

-- !query 25 schema

struct<course:string,year:int>

-- !query 25 output

@@ -348,7 +348,7 @@ NULL NULL

-- !query 26

-SELECT udf(a + b) AS k1, udf(b) AS k2, SUM(a - b) FROM testData GROUP BY CUBE(k1, k2)

+SELECT a + b AS k1, b AS k2, SUM(a - b) FROM testData GROUP BY CUBE(k1, k2)

-- !query 26 schema

struct<k1:int,k2:int,sum((a - b)):bigint>

-- !query 26 output

@@ -368,7 +368,7 @@ NULL NULL 3

-- !query 27

-SELECT udf(udf(a + b)) AS k, b, SUM(a - b) FROM testData GROUP BY ROLLUP(k, b)

+SELECT a + b AS k, b, SUM(a - b) FROM testData GROUP BY ROLLUP(k, b)

-- !query 27 schema

struct<k:int,b:int,sum((a - b)):bigint>

-- !query 27 output

@@ -386,9 +386,9 @@ NULL NULL 3

-- !query 28

-SELECT udf(a + b), udf(udf(b)) AS k, SUM(a - b) FROM testData GROUP BY a + b, k GROUPING SETS(k)

+SELECT a + b, b AS k, SUM(a - b) FROM testData GROUP BY a + b, k GROUPING SETS(k)

-- !query 28 schema

-struct<CAST(udf(cast((a + b) as string)) AS INT):int,k:int,sum((a - b)):bigint>

+struct<(a + b):int,k:int,sum((a - b)):bigint>

-- !query 28 output

NULL 1 3

NULL 2 0

```

</p>

</details>

## How was this patch tested?

Tested as guided in SPARK-27921.

Verified pandas & pyarrow versions:

```
$ python3
Python 3.6.8 (default, Jan 14 2019, 11:02:34)
[GCC 8.0.1 20180414 (experimental) [trunk revision 259383]] on linux
Type "help", "copyright", "credits" or "license" for more information.
>>> import pandas
>>> import pyarrow
>>> pyarrow.__version__
'0.14.0'
>>> pandas.__version__
'0.24.2'
```

From the SQL output, the statements appear to be evaluated correctly, given that the UDF returns a string and may change results: `NULL` is returned as `None` and is counted in the returned values.

Closes #25196 from skonto/group-analytics.sql.

Authored-by: Stavros Kontopoulos <st.kontopoulos@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

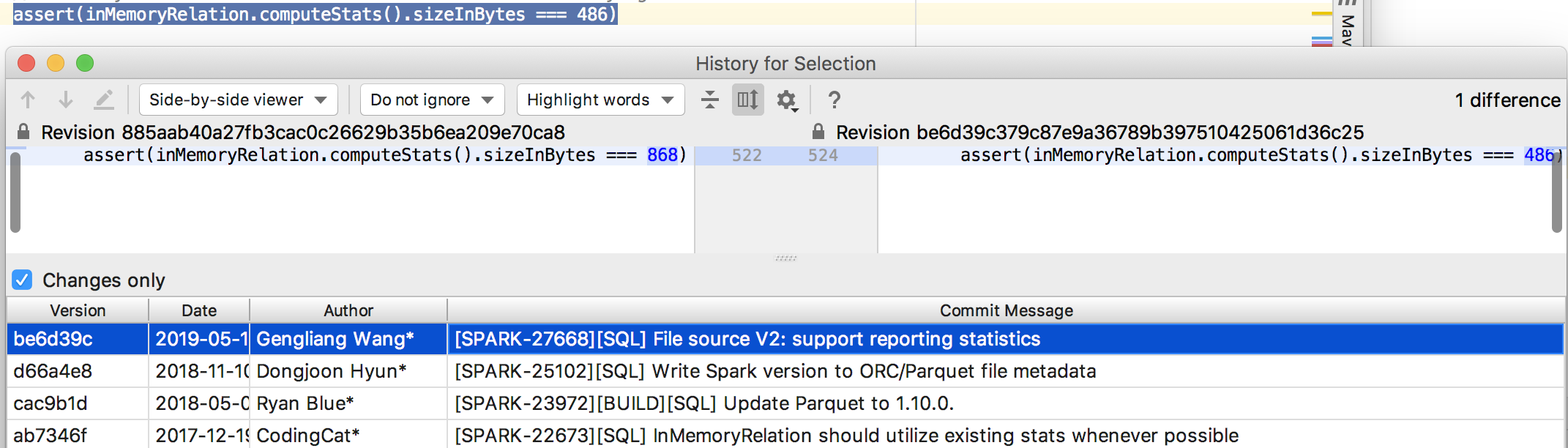

## What changes were proposed in this pull request?

This PR removes a few hardware-dependent assertions that can fail on `aarch64`.

**x86_64**

```
root@donotdel-openlab-allinone-l00242678:/home/ubuntu# uname -a
Linux donotdel-openlab-allinone-l00242678 4.4.0-154-generic #181-Ubuntu SMP Tue Jun 25 05:29:03 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux
scala> import java.lang.Float.floatToRawIntBits
import java.lang.Float.floatToRawIntBits
scala> floatToRawIntBits(0.0f/0.0f)
res0: Int = -4194304
scala> floatToRawIntBits(Float.NaN)
res1: Int = 2143289344
```

**aarch64**

```
[root@arm-huangtianhua spark]# uname -a
Linux arm-huangtianhua 4.14.0-49.el7a.aarch64 #1 SMP Tue Apr 10 17:22:26 UTC 2018 aarch64 aarch64 aarch64 GNU/Linux
scala> import java.lang.Float.floatToRawIntBits
import java.lang.Float.floatToRawIntBits
scala> floatToRawIntBits(0.0f/0.0f)
res1: Int = 2143289344
scala> floatToRawIntBits(Float.NaN)
res2: Int = 2143289344
```
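Python's `struct` module can sketch why the removed assertions depend on the hardware. Both bit patterns reported above decode to NaN and differ only in the sign bit, so a test that compares raw bits ends up encoding one architecture's default NaN. The helper names are illustrative, not part of Spark. `canonical_int_bits` mirrors the documented behavior of Java's `Float.floatToIntBits`, which maps every NaN to `0x7fc00000`.

```python
import math
import struct

# Bit patterns taken from the REPL sessions above.
X86_DEFAULT_NAN_BITS = -4194304   # 0xFFC00000: floatToRawIntBits(0.0f/0.0f) on x86_64
CANONICAL_NAN_BITS = 2143289344   # 0x7FC00000: floatToRawIntBits(Float.NaN)

def float_from_int_bits(bits: int) -> float:
    """Reinterpret a signed 32-bit int as an IEEE 754 single-precision float."""
    return struct.unpack("<f", struct.pack("<i", bits))[0]

def raw_int_bits(value: float) -> int:
    """Rough analogue of Float.floatToRawIntBits (NaN payloads may not round-trip)."""
    return struct.unpack("<i", struct.pack("<f", value))[0]

def canonical_int_bits(value: float) -> int:
    """Analogue of Float.floatToIntBits: every NaN collapses to 0x7FC00000."""
    if math.isnan(value):
        return CANONICAL_NAN_BITS
    return raw_int_bits(value)

def sign_bit_set(bits: int) -> bool:
    return bits < 0  # for a signed 32-bit int, negative means the sign bit is 1

print(hex(X86_DEFAULT_NAN_BITS & 0xFFFFFFFF), hex(CANONICAL_NAN_BITS))  # 0xffc00000 0x7fc00000
print(math.isnan(float_from_int_bits(X86_DEFAULT_NAN_BITS)),
      math.isnan(float_from_int_bits(CANONICAL_NAN_BITS)))              # True True
```

Under this model, assertions on NaN-ness or on canonicalized bits hold on both architectures. Assertions on raw NaN bits do not.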

## How was this patch tested?

Pass the Jenkins (this removes the test coverage).

Closes #25186 from huangtianhua/special-test-case-for-aarch64.

Authored-by: huangtianhua <huangtianhua@huawei.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

SPARK-28199 (#24996) hid the Trigger implementations behind `private[sql]` and encouraged end users to use the `Trigger.xxx` methods instead.

Based on a post-review comment on 7548a8826d (r34366934), this PR removes the annotations that are meant for public APIs, since these implementations are no longer public.

## How was this patch tested?

N/A

Closes #25200 from HeartSaVioR/SPARK-28199-FOLLOWUP.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR adds some tests converted from `join-empty-relation.sql` to test UDFs. Please see the contribution guide of this umbrella ticket - [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

<details><summary>Diff comparing to 'join-empty-relation.sql'</summary>

<p>

```diff

diff --git a/sql/core/src/test/resources/sql-tests/results/join-empty-relation.sql.out b/sql/core/src/test/resources/sql-tests/results/udf/udf-join-empty-relation.sql.out

index 857073a827..e79d01fb14 100644

--- a/sql/core/src/test/resources/sql-tests/results/join-empty-relation.sql.out

+++ b/sql/core/src/test/resources/sql-tests/results/udf/udf-join-empty-relation.sql.out

@@ -27,111 +27,111 @@ struct<>

-- !query 3

-SELECT * FROM t1 INNER JOIN empty_table

+SELECT udf(t1.a), udf(empty_table.a) FROM t1 INNER JOIN empty_table ON (udf(t1.a) = udf(udf(empty_table.a)))

-- !query 3 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int,CAST(udf(cast(a as string)) AS INT):int>

-- !query 3 output

-- !query 4

-SELECT * FROM t1 CROSS JOIN empty_table

+SELECT udf(t1.a), udf(udf(empty_table.a)) FROM t1 CROSS JOIN empty_table ON (udf(udf(t1.a)) = udf(empty_table.a))

-- !query 4 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int,CAST(udf(cast(cast(udf(cast(a as string)) as int) as string)) AS INT):int>

-- !query 4 output

-- !query 5

-SELECT * FROM t1 LEFT OUTER JOIN empty_table

+SELECT udf(udf(t1.a)), empty_table.a FROM t1 LEFT OUTER JOIN empty_table ON (udf(t1.a) = udf(empty_table.a))

-- !query 5 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(cast(udf(cast(a as string)) as int) as string)) AS INT):int,a:int>

-- !query 5 output

1 NULL

-- !query 6

-SELECT * FROM t1 RIGHT OUTER JOIN empty_table

+SELECT udf(t1.a), udf(empty_table.a) FROM t1 RIGHT OUTER JOIN empty_table ON (udf(t1.a) = udf(empty_table.a))

-- !query 6 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int,CAST(udf(cast(a as string)) AS INT):int>

-- !query 6 output

-- !query 7

-SELECT * FROM t1 FULL OUTER JOIN empty_table

+SELECT udf(t1.a), empty_table.a FROM t1 FULL OUTER JOIN empty_table ON (udf(t1.a) = udf(empty_table.a))

-- !query 7 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int,a:int>

-- !query 7 output

1 NULL

-- !query 8

-SELECT * FROM t1 LEFT SEMI JOIN empty_table

+SELECT udf(udf(t1.a)) FROM t1 LEFT SEMI JOIN empty_table ON (udf(t1.a) = udf(udf(empty_table.a)))

-- !query 8 schema

-struct<a:int>

+struct<CAST(udf(cast(cast(udf(cast(a as string)) as int) as string)) AS INT):int>

-- !query 8 output

-- !query 9

-SELECT * FROM t1 LEFT ANTI JOIN empty_table

+SELECT udf(t1.a) FROM t1 LEFT ANTI JOIN empty_table ON (udf(t1.a) = udf(empty_table.a))

-- !query 9 schema

-struct<a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int>

-- !query 9 output

1

-- !query 10

-SELECT * FROM empty_table INNER JOIN t1

+SELECT udf(empty_table.a), udf(t1.a) FROM empty_table INNER JOIN t1 ON (udf(udf(empty_table.a)) = udf(t1.a))

-- !query 10 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int,CAST(udf(cast(a as string)) AS INT):int>

-- !query 10 output

-- !query 11

-SELECT * FROM empty_table CROSS JOIN t1

+SELECT udf(empty_table.a), udf(udf(t1.a)) FROM empty_table CROSS JOIN t1 ON (udf(empty_table.a) = udf(udf(t1.a)))

-- !query 11 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int,CAST(udf(cast(cast(udf(cast(a as string)) as int) as string)) AS INT):int>

-- !query 11 output

-- !query 12

-SELECT * FROM empty_table LEFT OUTER JOIN t1

+SELECT udf(udf(empty_table.a)), udf(t1.a) FROM empty_table LEFT OUTER JOIN t1 ON (udf(empty_table.a) = udf(t1.a))

-- !query 12 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(cast(udf(cast(a as string)) as int) as string)) AS INT):int,CAST(udf(cast(a as string)) AS INT):int>

-- !query 12 output

-- !query 13

-SELECT * FROM empty_table RIGHT OUTER JOIN t1

+SELECT empty_table.a, udf(t1.a) FROM empty_table RIGHT OUTER JOIN t1 ON (udf(empty_table.a) = udf(t1.a))

-- !query 13 schema

-struct<a:int,a:int>

+struct<a:int,CAST(udf(cast(a as string)) AS INT):int>

-- !query 13 output

NULL 1

-- !query 14

-SELECT * FROM empty_table FULL OUTER JOIN t1

+SELECT empty_table.a, udf(udf(t1.a)) FROM empty_table FULL OUTER JOIN t1 ON (udf(empty_table.a) = udf(t1.a))

-- !query 14 schema

-struct<a:int,a:int>

+struct<a:int,CAST(udf(cast(cast(udf(cast(a as string)) as int) as string)) AS INT):int>

-- !query 14 output

NULL 1

-- !query 15

-SELECT * FROM empty_table LEFT SEMI JOIN t1

+SELECT udf(udf(empty_table.a)) FROM empty_table LEFT SEMI JOIN t1 ON (udf(empty_table.a) = udf(udf(t1.a)))

-- !query 15 schema

-struct<a:int>

+struct<CAST(udf(cast(cast(udf(cast(a as string)) as int) as string)) AS INT):int>

-- !query 15 output

-- !query 16

-SELECT * FROM empty_table LEFT ANTI JOIN t1

+SELECT empty_table.a FROM empty_table LEFT ANTI JOIN t1 ON (udf(empty_table.a) = udf(t1.a))

-- !query 16 schema

struct<a:int>

-- !query 16 output

@@ -139,56 +139,56 @@ struct<a:int>

-- !query 17

-SELECT * FROM empty_table INNER JOIN empty_table

+SELECT udf(empty_table.a) FROM empty_table INNER JOIN empty_table AS empty_table2 ON (udf(empty_table.a) = udf(udf(empty_table2.a)))

-- !query 17 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int>

-- !query 17 output

-- !query 18

-SELECT * FROM empty_table CROSS JOIN empty_table

+SELECT udf(udf(empty_table.a)) FROM empty_table CROSS JOIN empty_table AS empty_table2 ON (udf(udf(empty_table.a)) = udf(empty_table2.a))

-- !query 18 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(cast(udf(cast(a as string)) as int) as string)) AS INT):int>

-- !query 18 output

-- !query 19

-SELECT * FROM empty_table LEFT OUTER JOIN empty_table

+SELECT udf(empty_table.a) FROM empty_table LEFT OUTER JOIN empty_table AS empty_table2 ON (udf(empty_table.a) = udf(empty_table2.a))

-- !query 19 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int>

-- !query 19 output

-- !query 20

-SELECT * FROM empty_table RIGHT OUTER JOIN empty_table

+SELECT udf(udf(empty_table.a)) FROM empty_table RIGHT OUTER JOIN empty_table AS empty_table2 ON (udf(empty_table.a) = udf(udf(empty_table2.a)))

-- !query 20 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(cast(udf(cast(a as string)) as int) as string)) AS INT):int>

-- !query 20 output

-- !query 21

-SELECT * FROM empty_table FULL OUTER JOIN empty_table

+SELECT udf(empty_table.a) FROM empty_table FULL OUTER JOIN empty_table AS empty_table2 ON (udf(empty_table.a) = udf(empty_table2.a))

-- !query 21 schema

-struct<a:int,a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int>

-- !query 21 output

-- !query 22

-SELECT * FROM empty_table LEFT SEMI JOIN empty_table

+SELECT udf(udf(empty_table.a)) FROM empty_table LEFT SEMI JOIN empty_table AS empty_table2 ON (udf(empty_table.a) = udf(empty_table2.a))

-- !query 22 schema

-struct<a:int>

+struct<CAST(udf(cast(cast(udf(cast(a as string)) as int) as string)) AS INT):int>

-- !query 22 output

-- !query 23

-SELECT * FROM empty_table LEFT ANTI JOIN empty_table

+SELECT udf(empty_table.a) FROM empty_table LEFT ANTI JOIN empty_table AS empty_table2 ON (udf(empty_table.a) = udf(empty_table2.a))

-- !query 23 schema

-struct<a:int>

+struct<CAST(udf(cast(a as string)) AS INT):int>

-- !query 23 output

```

</p>

</details>
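The generated column names in the updated golden schemas follow a regular pattern. As a reading aid, here is a sketch that reproduces them. It assumes, from the names alone and not from the test framework's source, three things. First, each `udf(...)` call renders as a cast of its argument to string, with the result cast back to int. Second, the outermost cast is upper-cased while nested ones stay lower-case. Third, table qualifiers such as `empty_table.` and `t1.` are dropped. `UdfColumnNames`, `Col`, and `Udf` are hypothetical names.

```scala
// Hypothetical model of how the udf-wrapped column names above are rendered.
// Assumption (inferred from the schema strings only): udf(x) appears as
// cast(udf(cast(x as string)) as int), upper-cased only at the top level.
object UdfColumnNames {
  sealed trait Expr
  final case class Col(name: String) extends Expr // qualifier already dropped
  final case class Udf(child: Expr) extends Expr

  // Rendering used for expressions nested inside another udf call.
  private def inner(e: Expr): String = e match {
    case Col(n) => n
    case Udf(c) => s"cast(udf(cast(${inner(c)} as string)) as int)"
  }

  // Rendering used for the top-level output column name.
  def name(e: Expr): String = e match {
    case Col(n) => n
    case Udf(c) => s"CAST(udf(cast(${inner(c)} as string)) AS INT)"
  }

  def main(args: Array[String]): Unit = {
    println(name(Udf(Col("a"))))      // udf(t1.a)
    println(name(Udf(Udf(Col("a"))))) // udf(udf(empty_table.a))
  }
}
```

For example, `udf(udf(empty_table.a))` and `empty_table.a` in query 14 produce the two field names of its new schema.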

## How was this patch tested?

Tested as guided in [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

Closes #25127 from imback82/join-empty-relation-sql.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

A performance issue with `explode` was found when a complex field that contains a huge array gets duplicated once per exploded array element. For example:

```scala
import spark.implicits._

// M (the array length) is not given here; 60000 is an assumed value for illustration.
val M = 60000

val df = spark.sparkContext.parallelize(Seq(("1",
  Array.fill(M)({
    val i = math.random
    (i.toString, (i + 1).toString, (i + 2).toString, (i + 3).toString)
  })))).toDF("col", "arr")
  .selectExpr("col", "struct(col, arr) as st")
  .selectExpr("col", "st.col as col1", "explode(st.arr) as arr_col")
```

The `explode` causes `st` to be duplicated once for every exploded element.
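A rough count of what this duplication costs, as a sketch. Each output row keeps its own copy of `st`, as stated above, and `st.arr` holds M four-field tuples, so the exploded rows carry M * M tuples in total. M is not stated in this PR. The sketch assumes M = 60000, which is roughly the row count implied by the benchmark's Best Time and Per Row columns (52668 ms / 877803.4 ns is about 60000). `ExplodeDuplication` is a hypothetical name.

```scala
object ExplodeDuplication {
  // Every row emitted by explode(st.arr) keeps a copy of `st`, and `st.arr`
  // holds all M tuples, so the emitted rows hold M * M tuples in total.
  def tuplesCopied(m: Long): Long = m * m

  // Each tuple in the example has four string fields.
  def arrayStringsCopied(m: Long): Long = 4L * tuplesCopied(m)

  def main(args: Array[String]): Unit = {
    val m = 60000L // assumed array length, for illustration only
    println(s"tuples: ${tuplesCopied(m)}, strings inside arr: ${arrayStringsCopied(m)}")
  }
}
```

Under these assumptions the output carries about 3.6 billion tuples, even though each output row only needs one element of `arr` plus `col`.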

Benchmark results before the change:

```
[info] Java HotSpot(TM) 64-Bit Server VM 1.8.0_202-b08 on Mac OS X 10.14.4
[info] Intel(R) Core(TM) i7-8750H CPU 2.20GHz
[info] generate big nested struct array:                Best Time(ms)  Avg Time(ms)  Stdev(ms)   Rate(M/s)  Per Row(ns)  Relative
[info] ------------------------------------------------------------------------------------------------------------------------
[info] generate big nested struct array wholestage off          52668         53162        699         0.0     877803.4      1.0X
[info] generate big nested struct array wholestage on           47261         49093       1125         0.0     787690.2      1.1X
[info]
```

The query plan before the change:

```
== Physical Plan ==
Project [col#508, st#512.col AS col1#515, arr_col#519]
+- Generate explode(st#512.arr), [col#508, st#512], false, [arr_col#519]
   +- Project [_1#503 AS col#508, named_struct(col, _1#503, arr, _2#504) AS st#512]
      +- SerializeFromObject [staticinvoke(class org.apache.spark.unsafe.types.UTF8String, StringType, fromString, knownnotnull(assertnotnull(input[0, scala.Tuple2, true]))._1, true, false) AS _1#503, mapobjects(MapObjects_loopValue84, MapObjects_loopIsNull84, ObjectType(class scala.Tuple4), if (isnull(lambdavariable(MapObjects_loopValue84, MapObjects_loopIsNull84, ObjectType(class scala.Tuple4), true))) null else named_struct(_1, staticinvoke(class org.apache.spark.unsafe.types.UTF8String, StringType, fromString, knownnotnull(lambdavariable(MapObjects_loopValue84, MapObjects_loopIsNull84, ObjectType(class scala.Tuple4), true))._1, true, false), _2, staticinvoke(class org.apache.spark.unsafe.types.UTF8String, StringType, fromString, knownnotnull(lambdavariable(MapObjects_loopValue84, MapObjects_loopIsNull84, ObjectType(class scala.Tuple4), true))._2, true, false), _3, staticinvoke(class org.apache.spark.unsafe.types.UTF8String, StringType, fromString, knownnotnull(lambdavariable(MapObjects_loopValue84, MapObjects_loopIsNull84, ObjectType(class scala.Tuple4), true))._3, true, false), _4, staticinvoke(class org.apache.spark.unsafe.types.UTF8String, StringType, fromString, knownnotnull(lambdavariable(MapObjects_loopValue84, MapObjects_loopIsNull84, ObjectType(class scala.Tuple4), true))._4, true, false)), knownnotnull(assertnotnull(input[0, scala.Tuple2, true]))._2, None) AS _2#504]
         +- Scan[obj#534]
```

This patch takes a nested column pruning approach to prune unnecessary nested fields. It adds a projection of the needed nested fields, as aliases, on the child of `Generate`, and substitutes the alias attributes for those fields in the projection on top of `Generate`.
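The rewrite can be illustrated on a toy plan model. This is a sketch under simplifying assumptions. It handles only a `Project` directly on top of a `Generate`, and only one-level field accesses (`struct.field`) on columns that `Generate` passes through. All class and method names are hypothetical and only loosely mirror Catalyst. This is not Spark's actual optimizer rule.

```scala
// Toy model of the rewrite; not Catalyst classes and not Spark's rule.
object GenerateNestedPruningSketch {
  sealed trait Expr
  final case class Attr(name: String) extends Expr
  final case class Field(struct: String, field: String) extends Expr // struct.field
  final case class Alias(child: Expr, name: String) extends Expr

  sealed trait Plan
  final case class Relation(output: Seq[String]) extends Plan
  final case class Project(list: Seq[Expr], child: Plan) extends Plan
  // Explodes `input`, passes `requiredChildOutput` through, appends `generated`.
  final case class Generate(input: Field, requiredChildOutput: Seq[String],
                            generated: String, child: Plan) extends Plan

  def outputName(e: Expr): String = e match {
    case Attr(n)     => n
    case Field(s, f) => s"$s.$f"
    case Alias(_, n) => n
  }

  def output(p: Plan): Seq[String] = p match {
    case Relation(o)            => o
    case Project(list, _)       => list.map(outputName)
    case Generate(_, req, g, _) => req :+ g
  }

  private def fieldsIn(e: Expr): Seq[Field] = e match {
    case f: Field    => Seq(f)
    case Alias(c, _) => fieldsIn(c)
    case Attr(_)     => Nil
  }

  private def attrsIn(e: Expr): Seq[String] = e match {
    case Attr(n)     => Seq(n)
    case Alias(c, _) => attrsIn(c)
    case Field(_, _) => Nil
  }

  private def substitute(e: Expr, aliases: Map[Field, String]): Expr = e match {
    case f: Field if aliases.contains(f) => Attr(aliases(f))
    case Alias(c, n)                     => Alias(substitute(c, aliases), n)
    case other                           => other
  }

  def pruneThroughGenerate(plan: Plan): Plan = plan match {
    case Project(top, g @ Generate(_, required, _, child)) =>
      // Nested fields used above Generate on structs that Generate passes through.
      val fields = top.flatMap(fieldsIn).distinct.filter(f => required.contains(f.struct))
      if (fields.isEmpty) plan
      else {
        val aliases = fields.zipWithIndex.map { case (f, i) => f -> s"_gen_alias_$i" }.toMap
        // A struct leaves the pass-through list unless it is still used whole above.
        val usedWhole = top.flatMap(attrsIn).toSet
        val keep = required.filter(a => usedWhole(a) || !fields.exists(_.struct == a))
        // 1. Project the needed nested fields as aliases on the child of Generate.
        val newChild = Project(output(child).map(Attr(_)) ++ fields.map(f => Alias(f, aliases(f))), child)
        // 2. Substitute the alias attributes for those fields above Generate.
        Project(top.map(substitute(_, aliases)),
          g.copy(requiredChildOutput = keep ++ fields.map(aliases), child = newChild))
      }
    case other => other
  }
}
```

Applied to a toy version of the example (`col`, `st.col AS col1`, `arr_col` over `Generate explode(st.arr)`), it yields the same shape as the optimized plan in this description. `st` leaves the pass-through list of `Generate` and `_gen_alias_0` takes its place. The top projection then reads `_gen_alias_0 AS col1`. `st` itself stays in the child projection because the generator still reads `st.arr`.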

Benchmark results after the change:

```
[info] Java HotSpot(TM) 64-Bit Server VM 1.8.0_202-b08 on Mac OS X 10.14.4
[info] Intel(R) Core(TM) i7-8750H CPU 2.20GHz
[info] generate big nested struct array:                Best Time(ms)  Avg Time(ms)  Stdev(ms)   Rate(M/s)  Per Row(ns)  Relative
[info] ------------------------------------------------------------------------------------------------------------------------
[info] generate big nested struct array wholestage off            311           331         28         0.2       5188.6      1.0X
[info] generate big nested struct array wholestage on             297           312         15         0.2       4947.3      1.0X
[info]
```
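The PR does not state the overall speedup. Dividing the Best Time values of the two runs gives it directly. This is simple arithmetic on the numbers above from a single machine, not an additional measurement. `BenchmarkSpeedup` is a hypothetical name.

```scala
object BenchmarkSpeedup {
  // Best Time(ms) values copied from the two benchmark runs above.
  val before = Map("wholestage off" -> 52668.0, "wholestage on" -> 47261.0)
  val after  = Map("wholestage off" -> 311.0, "wholestage on" -> 297.0)

  // Ratio of best times before and after the change.
  def speedup(mode: String): Double = before(mode) / after(mode)

  def main(args: Array[String]): Unit =
    for (mode <- Seq("wholestage off", "wholestage on"))
      println(f"$mode: ${speedup(mode)}%.1fx faster")
}
```

That is roughly 169x with whole-stage codegen off and 159x with it on, for this benchmark.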

The query plan after the change:

```
== Physical Plan ==
Project [col#592, _gen_alias_608#608 AS col1#599, arr_col#603]
+- Generate explode(st#596.arr), [col#592, _gen_alias_608#608], false, [arr_col#603]
   +- Project [_1#587 AS col#592, named_struct(col, _1#587, arr, _2#588) AS st#596, _1#587 AS _gen_alias_608#608]
      +- SerializeFromObject [staticinvoke(class org.apache.spark.unsafe.types.UTF8String, StringType, fromString, knownnotnull(assertnotnull(input[0, scala.Tuple2, true]))._1, true, false) AS _1#587, mapobjects(MapObjects_loopValue102, MapObjects_loopIsNull102, ObjectType(class scala.Tuple4), if (isnull(lambdavariable(MapObjects_loopValue102, MapObjects_loopIsNull102, ObjectType(class scala.Tuple4), true))) null else named_struct(_1, staticinvoke(class org.apache.spark.unsafe.types.UTF8String, StringType, fromString, knownnotnull(lambdavariable(MapObjects_loopValue102, MapObjects_loopIsNull102, ObjectType(class scala.Tuple4), true))._1, true, false), _2, staticinvoke(class org.apache.spark.unsafe.types.UTF8String, StringType, fromString, knownnotnull(lambdavariable(MapObjects_loopValue102, MapObjects_loopIsNull102, ObjectType(class scala.Tuple4), true))._2, true, false), _3, staticinvoke(class org.apache.spark.unsafe.types.UTF8String, StringType, fromString, knownnotnull(lambdavariable(MapObjects_loopValue102, MapObjects_loopIsNull102, ObjectType(class scala.Tuple4), true))._3, true, false), _4, staticinvoke(class org.apache.spark.unsafe.types.UTF8String, StringType, fromString, knownnotnull(lambdavariable(MapObjects_loopValue102, MapObjects_loopIsNull102, ObjectType(class scala.Tuple4), true))._4, true, false)), knownnotnull(assertnotnull(input[0, scala.Tuple2, true]))._2, None) AS _2#588]
         +- Scan[obj#586]
```

This behavior is controlled by a SQL config `spark.sql.optimizer.expression.nestedPruning.enabled`.
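The plans with and without this behavior can be compared in spark-shell, where `spark` and its implicits are already in scope. This is a sketch that assumes the flag can be set per session like other non-static SQL configs. A Dataset may keep the optimized plan it computed first, so the sketch sets the flag before building each DataFrame. `explodePlan` is a hypothetical helper, and the single-row input is a small stand-in for the benchmark data.

```scala
// spark-shell sketch: `spark` and `spark.implicits._` are assumed to be in scope.
// explodePlan is a hypothetical helper, not part of Spark.
def explodePlan(nestedPruning: Boolean): Unit = {
  // Set the flag first so that the new DataFrame is optimized with it.
  spark.conf.set("spark.sql.optimizer.expression.nestedPruning.enabled", nestedPruning)
  spark.sparkContext.parallelize(Seq(("1", Seq(("a", "b", "c", "d")))))
    .toDF("col", "arr")
    .selectExpr("col", "struct(col, arr) as st")
    .selectExpr("col", "st.col as col1", "explode(st.arr) as arr_col")
    .explain()
}

explodePlan(nestedPruning = false)
explodePlan(nestedPruning = true)
```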