### What changes were proposed in this pull request?

`KafkaDelegationTokenSuite` fails on different platforms with the following problem:

```
19/09/11 11:07:42.690 pool-1-thread-1-SendThread(localhost:44965) DEBUG ZooKeeperSaslClient: creating sasl client: Client=zkclient/localhost@EXAMPLE.COM;service=zookeeper;serviceHostname=localhost.localdomain
...
NIOServerCxn.Factory:localhost/127.0.0.1:0: Zookeeper Server failed to create a SaslServer to interact with a client during session initiation:
javax.security.sasl.SaslException: Failure to initialize security context [Caused by GSSException: No valid credentials provided (Mechanism level: Failed to find any Kerberos credentails)]
	at com.sun.security.sasl.gsskerb.GssKrb5Server.<init>(GssKrb5Server.java:125)
	at com.sun.security.sasl.gsskerb.FactoryImpl.createSaslServer(FactoryImpl.java:85)
	at javax.security.sasl.Sasl.createSaslServer(Sasl.java:524)
	at org.apache.zookeeper.util.SecurityUtils$2.run(SecurityUtils.java:233)
	at org.apache.zookeeper.util.SecurityUtils$2.run(SecurityUtils.java:229)
	at java.security.AccessController.doPrivileged(Native Method)
	at javax.security.auth.Subject.doAs(Subject.java:422)
	at org.apache.zookeeper.util.SecurityUtils.createSaslServer(SecurityUtils.java:228)
	at org.apache.zookeeper.server.ZooKeeperSaslServer.createSaslServer(ZooKeeperSaslServer.java:44)
	at org.apache.zookeeper.server.ZooKeeperSaslServer.<init>(ZooKeeperSaslServer.java:38)
	at org.apache.zookeeper.server.NIOServerCnxn.<init>(NIOServerCnxn.java:100)
	at org.apache.zookeeper.server.NIOServerCnxnFactory.createConnection(NIOServerCnxnFactory.java:186)
	at org.apache.zookeeper.server.NIOServerCnxnFactory.run(NIOServerCnxnFactory.java:227)
	at java.lang.Thread.run(Thread.java:748)
Caused by: GSSException: No valid credentials provided (Mechanism level: Failed to find any Kerberos credentails)
	at sun.security.jgss.krb5.Krb5AcceptCredential.getInstance(Krb5AcceptCredential.java:87)
	at sun.security.jgss.krb5.Krb5MechFactory.getCredentialElement(Krb5MechFactory.java:127)
	at sun.security.jgss.GSSManagerImpl.getCredentialElement(GSSManagerImpl.java:193)
	at sun.security.jgss.GSSCredentialImpl.add(GSSCredentialImpl.java:427)
	at sun.security.jgss.GSSCredentialImpl.<init>(GSSCredentialImpl.java:62)
	at sun.security.jgss.GSSManagerImpl.createCredential(GSSManagerImpl.java:154)
	at com.sun.security.sasl.gsskerb.GssKrb5Server.<init>(GssKrb5Server.java:108)
	... 13 more
NIOServerCxn.Factory:localhost/127.0.0.1:0: Client attempting to establish new session at /127.0.0.1:33742
SyncThread:0: Creating new log file: log.1
SyncThread:0: Established session 0x100003736ae0000 with negotiated timeout 10000 for client /127.0.0.1:33742
pool-1-thread-1-SendThread(localhost:35625): Session establishment complete on server localhost/127.0.0.1:35625, sessionid = 0x100003736ae0000, negotiated timeout = 10000
pool-1-thread-1-SendThread(localhost:35625): ClientCnxn:sendSaslPacket:length=0
pool-1-thread-1-SendThread(localhost:35625): saslClient.evaluateChallenge(len=0)
pool-1-thread-1-EventThread: zookeeper state changed (SyncConnected)
NioProcessor-1: No server entry found for kerberos principal name zookeeper/localhost.localdomain@EXAMPLE.COM
NioProcessor-1: No server entry found for kerberos principal name zookeeper/localhost.localdomain@EXAMPLE.COM
NioProcessor-1: Server not found in Kerberos database (7)
NioProcessor-1: Server not found in Kerberos database (7)
```

The problem is reproducible if the order of `localhost` and `localhost.localdomain` is swapped in `/etc/hosts`:

```
[systestgsomogyi-build spark]$ cat /etc/hosts
127.0.0.1 localhost.localdomain localhost localhost4 localhost4.localdomain4
::1 localhost.localdomain localhost localhost6 localhost6.localdomain6
```

The main problem is that `ZkClient` connects to the canonical loopback address (which is not necessarily `localhost`).
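The sketch below shows why the order in `/etc/hosts` matters. It uses plain Scala on the JDK and builds a Kerberos service principal of the form `service/host@REALM` from the canonical name of the loopback address. The object name `LoopbackPrincipal` and the realm `EXAMPLE.COM` are illustrative assumptions. The hostname actually returned depends on the local resolver configuration.

```scala
import java.net.InetAddress

// Hypothetical helper: shows how a resolved hostname ends up inside a Kerberos principal.
object LoopbackPrincipal {
  // Kerberos service principals have the form service/host@REALM.
  def servicePrincipal(service: String, host: String, realm: String): String =
    s"$service/$host@$realm"

  // The canonical name of the loopback address comes from the resolver (e.g. /etc/hosts),
  // so with "127.0.0.1 localhost.localdomain localhost ..." it may be "localhost.localdomain".
  def loopbackCanonicalName(): String =
    InetAddress.getLoopbackAddress.getCanonicalHostName

  def main(args: Array[String]): Unit = {
    // If the KDC only registered zookeeper/localhost@EXAMPLE.COM, any other host name
    // produces a principal the KDC cannot find ("Server not found in Kerberos database").
    println(servicePrincipal("zookeeper", loopbackCanonicalName(), "EXAMPLE.COM"))
  }
}
```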

### Why are the changes needed?

`KafkaDelegationTokenSuite` failed in some environments.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing unit tests on different platforms.

Closes #25803 from gaborgsomogyi/SPARK-29027.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

### What changes were proposed in this pull request?

This patch adds a new UT to ensure that an existing query (from before Spark 3.0.0) with a checkpoint doesn't break under the default configuration of `includeHeaders`, which was introduced by SPARK-23539.

This patch also modifies the existing test that checks column types so that it checks the headers column as well.

### Why are the changes needed?

The patch adds missing tests which guarantee the backward compatibility of the SPARK-23539 change.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

UT passed.

Closes #25792 from HeartSaVioR/SPARK-23539-FOLLOWUP.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This update adds support for Kafka Headers functionality in Structured Streaming.

## How was this patch tested?

With the following unit tests:

- KafkaRelationSuite: "default starting and ending offsets with headers" (new)

- KafkaSinkSuite: "batch - write to kafka" (updated)

Closes #22282 from dongjinleekr/feature/SPARK-23539.

Lead-authored-by: Lee Dongjin <dongjin@apache.org>

Co-authored-by: Jungtaek Lim <kabhwan@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

Reorganize the packages of DS v2 interfaces/classes:

1. `org.apache.spark.sql.connector.catalog`: `TableCatalog`, `Table` and other related interfaces/classes

2. `org.apache.spark.sql.connector.expression`: `Expression`, `Transform` and other related interfaces/classes

3. `org.apache.spark.sql.connector.read`: `ScanBuilder`, `Scan` and other related interfaces/classes

4. `org.apache.spark.sql.connector.write`: `WriteBuilder`, `BatchWrite` and other related interfaces/classes

### Why are the changes needed?

Data Source V2 has evolved a lot. It's inconsistent that `Expression` is in `org.apache.spark.sql.catalog.v2` while `Table` is in `org.apache.spark.sql.sources.v2`.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

existing tests

Closes #25700 from cloud-fan/package.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

At the moment there are 3 places where the communication protocol with the Kafka cluster has to be set when a delegation token is used:

* On delegation token

* On source

* On sink

Most of the time users use the same protocol in all these places (within one Kafka cluster). It would be better to declare it in one place (the delegation token side) and let Kafka sources/sinks take this config over.

In this PR I've modified the code so that Kafka sources/sinks take over the delegation token side `security.protocol` configuration when the token and the source/sink match in the `bootstrap.servers` configuration. This default can be overridden on each source/sink independently with the `kafka.security.protocol` configuration.
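The precedence described above works like this: an explicit `kafka.security.protocol` on the source/sink wins, otherwise the protocol is taken over from the delegation token side when `bootstrap.servers` match. A minimal sketch of that rule is below. `TokenClusterConf` and `SecurityProtocolResolver` are hypothetical names, not Spark classes. Matching is simplified to exact string equality, which may differ from the real implementation.

```scala
// Hypothetical model of a delegation token cluster configuration (not Spark's actual class).
final case class TokenClusterConf(bootstrapServers: String, securityProtocol: String)

object SecurityProtocolResolver {
  // Per-source/sink option that overrides the token-side protocol.
  val OverrideKey = "kafka.security.protocol"

  def resolve(
      sourceBootstrapServers: String,
      sourceOptions: Map[String, String],
      tokenClusters: Seq[TokenClusterConf]): Option[String] =
    // 1. An explicit per-source/sink setting always wins.
    sourceOptions.get(OverrideKey).orElse(
      // 2. Otherwise take over the protocol of the token cluster with matching bootstrap servers.
      tokenClusters.find(_.bootstrapServers == sourceBootstrapServers).map(_.securityProtocol))
}
```

If nothing matches, `None` is returned and the caller would fall back to Kafka's own default.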

### Why are the changes needed?

The current default behavior covers only a minority of use cases and is inconvenient.

### Does this PR introduce any user-facing change?

Yes, with this change users need to provide fewer configuration parameters by default.

### How was this patch tested?

Existing + additional unit tests.

Closes #25631 from gaborgsomogyi/SPARK-28928.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

This patch pools both Kafka consumers and fetched data. The overall benefits of the patch are the following:

* Both pools support evicting idle objects, which helps close invalid idle objects whose topic or partition is no longer assigned to any task.

* It also enables applying a different policy to each pool, which helps optimize pooling per pool.

* We were concerned about multiple tasks pointing to the same topic partition with the same group id. The existing code can't handle this, so excess seeks and fetches could happen. This patch handles the case properly.

* It also makes it always safe for the code to leverage the cache, so there is no need to maintain the `reuseCache` parameter.

Moreover, pooling of Kafka consumers is implemented with Apache Commons Pool, which also gives a couple of benefits:

* We can get rid of synchronizing on the `KafkaDataConsumer` object while acquiring and returning `InternalKafkaConsumer`.

* We can extract the object pool feature out of the class, so that the pool's behavior can be tested easily.

* We can get various statistics for the object pool and can also enable JMX for it.

`FetchedData` instances are pooled by a custom pool implementation instead of Apache Commons Pool. They use `CacheKey` as the first key and the "desired offset" as a second key, and the desired offset keeps changing. I haven't found any general pool implementation that supports this.
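A general-purpose pool does not fit `FetchedData` because the lookup needs a second, changing key. The sketch below shows a minimal pool of that shape. It assumes a hypothetical `CacheKey` and a `FetchedData` that only tracks its next offset. The real classes also buffer records and handle idle eviction, which is omitted here.

```scala
import scala.collection.mutable

// Hypothetical key; the real CacheKey may carry different fields.
final case class CacheKey(groupId: String, topic: String, partition: Int)

// Hypothetical stand-in for buffered records; nextOffset is the offset it would serve next.
final class FetchedData(var nextOffset: Long)

// Entries are grouped by CacheKey; the second "key" (nextOffset) changes on every use.
final class FetchedDataPool {
  private val idle = mutable.Map.empty[CacheKey, mutable.ListBuffer[FetchedData]]

  // Reuse an idle entry whose nextOffset equals the desired offset, otherwise create a new one.
  def acquire(key: CacheKey, desiredOffset: Long): FetchedData = synchronized {
    val buffers = idle.getOrElseUpdate(key, mutable.ListBuffer.empty[FetchedData])
    buffers.indexWhere(_.nextOffset == desiredOffset) match {
      case -1 => new FetchedData(desiredOffset)
      case i  => buffers.remove(i)
    }
  }

  // Return an entry after the caller has advanced its nextOffset.
  def release(key: CacheKey, data: FetchedData): Unit = synchronized {
    idle.getOrElseUpdate(key, mutable.ListBuffer.empty[FetchedData]) += data
  }

  def idleCount(key: CacheKey): Int = synchronized {
    idle.get(key).map(_.size).getOrElse(0)
  }
}
```

A task that continues reading where a previous task stopped gets the already-positioned buffer back. A task asking for a different offset gets a fresh one, so no seek is wasted on the shared entry.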

This patch adds a new dependency, Apache Commons Pool 2.6.0, to the `spark-sql-kafka-0-10` module.

## How was this patch tested?

Existing unit tests as well as new tests for the object pool.

I also ran an experiment to demonstrate concurrent access of consumers to the same topic partition.

* Made changes on both sides (master and patch) to log when a Kafka consumer is created or records are fetched from Kafka.

* Branches:
    * master: https://github.com/HeartSaVioR/spark/tree/SPARK-25151-master-ref-debugging
    * patch: https://github.com/HeartSaVioR/spark/tree/SPARK-25151-debugging

* Test query (doing self-join):
    * https://gist.github.com/HeartSaVioR/d831974c3f25c02846f4b15b8d232cc2

* Ran the query from spark-shell using `local[*]` to maximize the chance of concurrent access

* Collected the count of Kafka consumer creations via command: `grep "creating new Kafka consumer" logfile | wc -l`

* Collected the count of fetch requests to Kafka via command: `grep "fetching data from Kafka consumer" logfile | wc -l`

The topic and data distribution are as follows:

```
truck_speed_events_stream_spark_25151_v1:0:99440
truck_speed_events_stream_spark_25151_v1:1:99489
truck_speed_events_stream_spark_25151_v1:2:397759
truck_speed_events_stream_spark_25151_v1:3:198917
truck_speed_events_stream_spark_25151_v1:4:99484
truck_speed_events_stream_spark_25151_v1:5:497320
truck_speed_events_stream_spark_25151_v1:6:99430
truck_speed_events_stream_spark_25151_v1:7:397887
truck_speed_events_stream_spark_25151_v1:8:397813
truck_speed_events_stream_spark_25151_v1:9:0
```

The experiment used only the four smallest non-empty partitions (0, 1, 4, 6) so that the query would finish earlier.

The results of the experiment are below:

| Branch | Kafka consumers created | Fetch requests |
| -- | -- | -- |
| master | 1986 | 2837 |
| patch | 8 | 1706 |

Closes #22138 from HeartSaVioR/SPARK-25151.

Lead-authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Co-authored-by: Jungtaek Lim <kabhwan@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

### What changes were proposed in this pull request?

At the moment no end-to-end Kafka delegation token test exists, mainly because an embedded KDC is missing. A KDC is missing from the testing side in general, so I explored the available options. The most obvious choice is the MiniKDC in the Hadoop library, which runs Apache Kerby in the background. What this PR contains:

* Added MiniKDC as test dependency from Hadoop

* Added `maven-bundle-plugin` because a couple of dependencies come in bundle format

* Added security mode to `KafkaTestUtils`. Namely start KDC -> start Zookeeper in secure mode -> start Kafka in secure mode

* Added a roundtrip test (saves and reads back data from Kafka)

### Why are the changes needed?

No such test exists, and security testing with a KDC is completely missing.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing + additional unit tests.

I ran the additional test in a loop; it took ~10 seconds on average.

Closes #25477 from gaborgsomogyi/SPARK-28760.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Currently we have 2 configs to specify which v2 sources should fall back to the v1 code path: one for the read path and one for the write path.

However, I found it's awkward to work with these 2 configs:

1. for `CREATE TABLE USING format`, should this be read path or write path?

2. for `V2SessionCatalog.loadTable`, we need to return `UnresolvedTable` if it's a DS v1 source or if we need to fall back to the v1 code path. However, at that point we don't know whether the returned table will be used for read or write.

We don't have any new features or perf improvements in file source v2. The fallback API is just a safeguard in case we have bugs in v2 implementations. There is little benefit in supporting fallback to v1 separately for the read and write paths.

This PR proposes to merge these 2 configs into one.

## How was this patch tested?

existing tests

Closes #25465 from cloud-fan/merge-conf.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This patch proposes to reuse KafkaSourceInitialOffsetWriter to remove identical code in KafkaSource.

Credit to jaceklaskowski for finding this.

https://lists.apache.org/thread.html/7faa6ac29d871444eaeccefc520e3543a77f4362af4bb0f12a3f7cb2%3Cdev.spark.apache.org%3E

### Why are the changes needed?

The code is duplicated verbatim, which opens the chance of the copies being maintained separately and ending up with bugs fixed on only one side.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing UTs, as it's a simple refactor.

Closes #25583 from HeartSaVioR/MINOR-SS-reuse-KafkaSourceInitialOffsetWriter.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

### What changes were proposed in this pull request?

When a task retry happens with the Kafka source, it's not known whether the consumer is the issue, so the old consumer is removed from the cache and a new consumer is created. The feature works fine but is not covered by tests.

In this PR I've added such tests for DStreams and Structured Streaming.

### Why are the changes needed?

No such tests exist.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing + new unit tests.

Closes #25582 from gaborgsomogyi/SPARK-28875.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

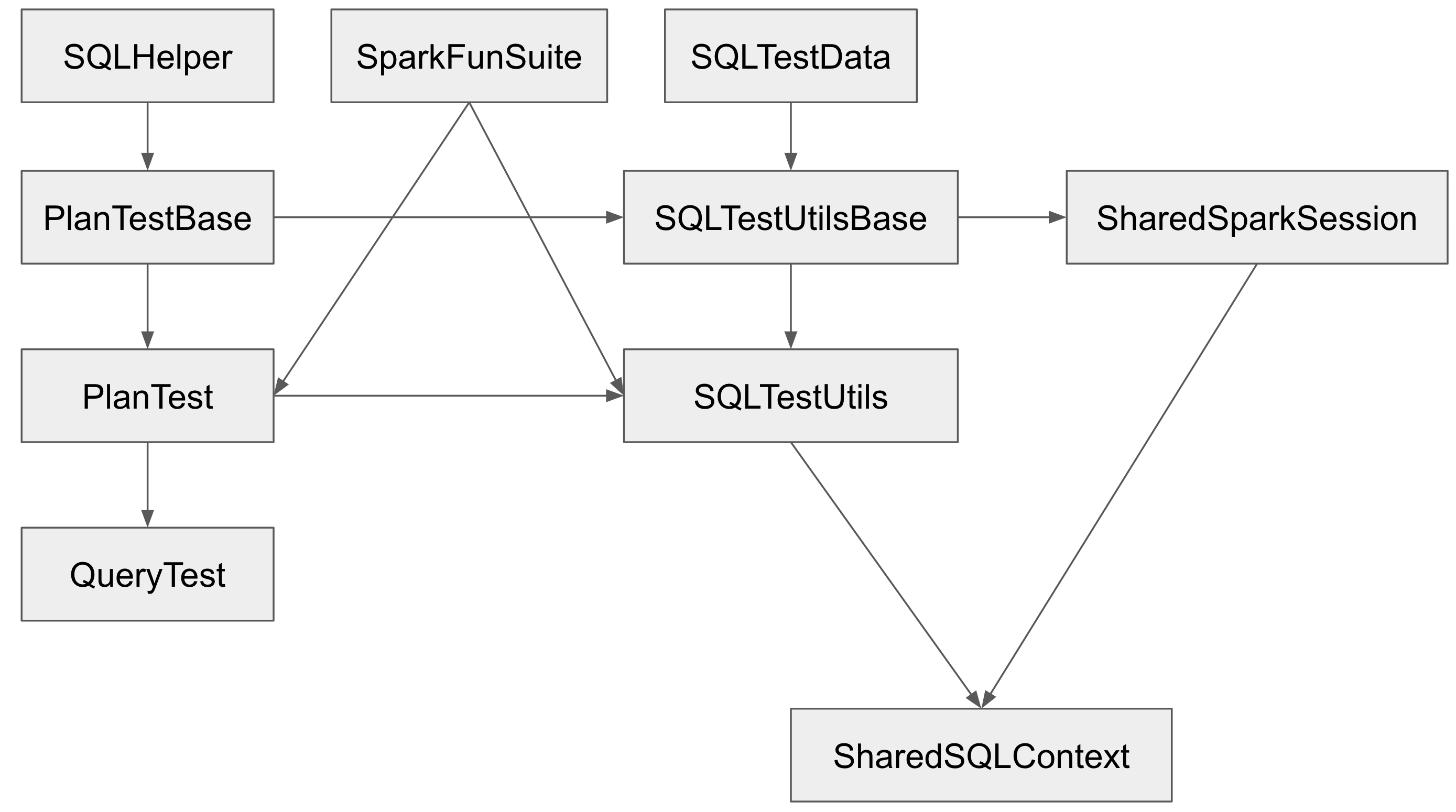

## What changes were proposed in this pull request?

The Spark SQL test framework needs to support 2 kinds of tests:

1. tests inside Spark to test Spark itself (extends `SparkFunSuite`)

2. tests outside of Spark to test Spark applications (introduced in b57ed2245c)

The class hierarchy of the major testing traits:

`PlanTestBase`, `SQLTestUtilsBase` and `SharedSparkSession` intentionally don't extend `SparkFunSuite`, so that they can be used for tests outside of Spark. Tests in Spark should extend `QueryTest` and/or `SharedSQLContext` in most cases.

However, the names are a little confusing. As a result, some test suites extend `SharedSparkSession` instead of `SharedSQLContext`. `SharedSparkSession` doesn't work well with `SparkFunSuite` because it lacks the special handling of thread auditing in `SharedSQLContext`. For example, you will see a warning starting with `===== POSSIBLE THREAD LEAK IN SUITE` when you run `DataFrameSelfJoinSuite`.

This PR proposes to rename `SharedSparkSession` to `SharedSparkSessionBase`, and rename `SharedSQLContext` to `SharedSparkSession`.

## How was this patch tested?


Closes #25463 from cloud-fan/minor.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

[SPARK-28163](https://issues.apache.org/jira/browse/SPARK-28163) fixed a bug, and during the analysis we concluded it would be more robust to use `CaseInsensitiveMap` inside the Kafka source. This way, fewer lower/upper case problems would arise in the future.

Please note this PR doesn't intend to solve any actual problem but to finish the concept added in [SPARK-28163](https://issues.apache.org/jira/browse/SPARK-28163) (I didn't want to add overly invasive changes in a fix PR). In this PR I've changed `Map[String, String]` to `CaseInsensitiveMap[String]` to enforce its usage. These are the main use cases:

* `contains` => `CaseInsensitiveMap` solves it

* `get...` => `CaseInsensitiveMap` solves it

* `filter` => keys must be converted to lowercase because there is no guarantee that the incoming map's keys are lowercase

* `find` => keys must be converted to lowercase because there is no guarantee that the incoming map's keys are lowercase

* passing parameters to Kafka consumer/producer => keys must be converted to lowercase because there is no guarantee that the incoming map's keys are lowercase
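The sketch below illustrates the difference between the cases above. `get`/`contains` can be made case-insensitive by the map itself. `filter`/`find` operate on the caller's original keys, so the predicate (and anything forwarded to Kafka) has to lowercase keys explicitly. `SimpleCaseInsensitiveMap` and `KafkaParamsFilter` are hypothetical stand-ins, not Spark's `CaseInsensitiveMap`.

```scala
import java.util.Locale

// Hypothetical minimal case-insensitive map (not Spark's CaseInsensitiveMap).
final class SimpleCaseInsensitiveMap(val originalMap: Map[String, String]) {
  private val lowered: Map[String, String] =
    originalMap.map { case (k, v) => k.toLowerCase(Locale.ROOT) -> v }

  // contains/get lowercase the lookup key, so callers may use any casing.
  def contains(key: String): Boolean = lowered.contains(key.toLowerCase(Locale.ROOT))
  def get(key: String): Option[String] = lowered.get(key.toLowerCase(Locale.ROOT))
}

object KafkaParamsFilter {
  // filter sees the original keys, so the predicate and the forwarded keys are lowercased explicitly.
  def kafkaParams(options: SimpleCaseInsensitiveMap): Map[String, String] =
    options.originalMap
      .filter { case (k, _) => k.toLowerCase(Locale.ROOT).startsWith("kafka.") }
      .map { case (k, v) => k.toLowerCase(Locale.ROOT).stripPrefix("kafka.") -> v }
}
```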

## How was this patch tested?

Existing unit tests.

Closes #25418 from gaborgsomogyi/SPARK-28695.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

There are "unsafe" conversions in the Kafka connector.

A `CaseInsensitiveStringMap` comes in, which is then converted the following way:

```
...
options.asScala.toMap
...
```

The main problem is that the result loses its case-insensitive nature (a case-insensitive map converts the key to lowercase when `get`/`contains` is called).

In this PR I'm using `CaseInsensitiveMap` to solve this problem.
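The pitfall can be reproduced without Spark. The sketch below assumes a stand-in Java map that stores its keys in lowercase, as the case-insensitive options map described above does. After `asScala.toMap`, lookups are case-sensitive again, so a mixed-case key such as `kafkaConsumer.pollTimeoutMs` is silently not found. `ToMapPitfall` and its helpers are hypothetical names.

```scala
import java.util.Locale
import scala.jdk.CollectionConverters._

object ToMapPitfall {
  // Stand-in for a case-insensitive options map: keys are stored in lowercase.
  def lowerCaseKeyed(entries: (String, String)*): java.util.Map[String, String] = {
    val m = new java.util.HashMap[String, String]()
    entries.foreach { case (k, v) => m.put(k.toLowerCase(Locale.ROOT), v) }
    m
  }

  // The "unsafe" conversion: a plain Scala Map with lowercase keys and case-sensitive lookups.
  def unsafeConvert(options: java.util.Map[String, String]): Map[String, String] =
    options.asScala.toMap

  // What a case-insensitive wrapper does for you: lowercase the key before looking it up.
  def safeGet(options: Map[String, String], key: String): Option[String] =
    options.get(key.toLowerCase(Locale.ROOT))
}
```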

## How was this patch tested?

Existing + additional unit tests.

Closes #24967 from gaborgsomogyi/SPARK-28163.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

`KafkaOffsetRangeCalculator.getRanges` may drop offsets due to round-off errors. The test added in this PR is one example.

This PR rewrites the logic in `KafkaOffsetRangeCalculator.getRanges` to ensure it never drops offsets.
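The round-off problem can be illustrated by splitting one offset range into equal parts. This is a hypothetical sketch of the general technique, not the actual rewritten `getRanges` logic: compute every boundary from the total size instead of multiplying a truncated step. `OffsetRange` and `RangeSplitter` are illustrative names.

```scala
// Hypothetical range type; Spark's real offset range carries more fields.
final case class OffsetRange(fromOffset: Long, untilOffset: Long) {
  def size: Long = untilOffset - fromOffset
}

object RangeSplitter {
  // Naive split: the truncated step loses size % parts offsets at the end of the range.
  def naiveSplit(range: OffsetRange, parts: Int): Seq[OffsetRange] = {
    val step = range.size / parts
    (0 until parts).map { i =>
      OffsetRange(range.fromOffset + i * step, range.fromOffset + (i + 1) * step)
    }
  }

  // Safe split: each boundary is derived from the total size, so adjacent parts share
  // boundaries and the last boundary is exactly untilOffset (size * parts / parts == size).
  def split(range: OffsetRange, parts: Int): Seq[OffsetRange] = {
    require(parts > 0, "parts must be positive")
    (0 until parts).map { i =>
      OffsetRange(
        range.fromOffset + range.size * i / parts,
        range.fromOffset + range.size * (i + 1) / parts)
    }
  }
}
```

For a range of 10 offsets split into 3 parts, the naive version covers only 9 offsets, while the safe version yields parts of sizes 3, 3 and 4.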

## How was this patch tested?

The regression test.

Closes #25237 from zsxwing/fix-range.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This patch proposes moving all Trigger implementations to `Triggers.scala`, to avoid exposing these implementations to end users and to let end users deal only with the `Trigger.xxx` static methods. This fits the intention behind deprecating `ProcessingTime`, and we agreed to move the others without deprecation since this patch will ship in a major version (Spark 3.0.0).

## How was this patch tested?

UTs modified to work with the newly introduced class.

Closes #24996 from HeartSaVioR/SPARK-28199.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

There are some hardcoded configs; this PR replaces them with config entries.

## How was this patch tested?

Existing UT

Closes #25059 from WangGuangxin/ConfigEntry.

Authored-by: wangguangxin.cn <wangguangxin.cn@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This patch adds a missing UT which tests the changed behavior of the original patch #24942.

## How was this patch tested?

Newly added UT.

Closes #24999 from HeartSaVioR/SPARK-28142-FOLLOWUP.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

According to the documentation `groupIdPrefix` should be available for `streaming and batch`.

This is not the case, because the batch part is missing.

In this PR I've added:

* Structured Streaming test for v1 and v2 to cover `groupIdPrefix`

* Batch test for v1 and v2 to cover `groupIdPrefix`

* Added `groupIdPrefix` usage in batch

## How was this patch tested?

Additional + existing unit tests.

Closes #25030 from gaborgsomogyi/SPARK-28232.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The Kafka batch data source uses v1 at the moment. In this PR I've migrated it to v2. The majority of the change is moving code.

What this PR contains:

* useV1Sources usage fixed in `DataFrameReader` and `DataFrameWriter`

* `KafkaBatch` added to handle DSv2 batch reading

* `KafkaBatchWrite` added to handle DSv2 batch writing

* `KafkaBatchPartitionReader` extracted to share between batch and microbatch

* `KafkaDataWriter` extracted to share between batch, microbatch and continuous

* Batch related source/sink tests are now executing on v1 and v2 connectors

* A couple of classes are now hidden, functions moved, plus a couple of minor fixes

## How was this patch tested?

Existing + added unit tests.

Closes #24738 from gaborgsomogyi/SPARK-23098.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This patch addresses a missed spot where the Map should be passed as `CaseInsensitiveStringMap`: `KafkaContinuousStream` seems to be the only one that was missed.

Before this fix, there was a related bug where `pollTimeoutMs` was always set to the default value. The value of `KafkaSourceProvider.CONSUMER_POLL_TIMEOUT` is `kafkaConsumer.pollTimeoutMs` (mixed case), while a key-lowercased map had been provided as `sourceOptions`, so the lookup never matched.

## How was this patch tested?

N/A.

Closes #24942 from HeartSaVioR/MINOR-use-case-insensitive-map-for-kafka-continuous-source.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Kafka parameters are logged in several places, and the following parameters have to be redacted:

* Delegation token

* `ssl.truststore.password`

* `ssl.keystore.password`

* `ssl.key.password`

This PR contains:

* Spark's central redaction framework used to redact passwords (`spark.redaction.regex`)

* Custom redaction added to handle `sasl.jaas.config` (delegation token)

* Redaction code added into consumer/producer code

* Test refactor
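The two redaction paths listed above can be sketched as follows. Generic redaction replaces the whole value of any key matching a sensitive pattern. Custom redaction masks only the password inside `sasl.jaas.config`, whose key does not look sensitive. The regex `(?i)secret|password` is an assumed pattern for illustration; Spark reads its real pattern from `spark.redaction.regex`. `KafkaParamRedactor` is a hypothetical name.

```scala
import scala.util.matching.Regex

object KafkaParamRedactor {
  val Redacted = "*********(redacted)"

  // Assumed pattern for illustration only; Spark's real one comes from spark.redaction.regex.
  val SensitiveKey: Regex = "(?i)secret|password".r

  // Matches password="..." inside a JAAS configuration string.
  private val JaasPassword: Regex = "password=\"[^\"]*\"".r

  def redact(params: Map[String, String]): Map[String, String] = params.map {
    // Custom handling: sasl.jaas.config carries the token, but its key does not look sensitive.
    case ("sasl.jaas.config", value) =>
      "sasl.jaas.config" -> JaasPassword.replaceAllIn(
        value, Regex.quoteReplacement("password=\"" + Redacted + "\""))
    // Generic handling: redact the whole value when the key matches the sensitive pattern.
    case (key, _) if SensitiveKey.findFirstIn(key).isDefined => key -> Redacted
    case other => other
  }
}
```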

## How was this patch tested?

Existing + additional unit tests.

Closes #24627 from gaborgsomogyi/SPARK-27748.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Kafka-related Spark parameters have to start with `spark.kafka.` and not with `spark.sql.`. Because of this I've renamed `spark.sql.kafkaConsumerCache.capacity`.

Since Kafka consumer caching is not documented, I've added documentation for it as well.

## How was this patch tested?

Existing + added unit test.

```
cd docs
SKIP_API=1 jekyll build
```

and manual webpage check.

Closes #24590 from gaborgsomogyi/SPARK-27687.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

[SPARK-27343](https://issues.apache.org/jira/projects/SPARK/issues/SPARK-27343)

Based on the previous PR: https://github.com/apache/spark/pull/24270

Extract parameters and build `ConfigEntry` objects for them.

For example:

For the parameter `spark.kafka.producer.cache.timeout`, we build:

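```scala
import java.util.concurrent.TimeUnit

import org.apache.spark.internal.config.ConfigBuilder

// Declared inside the kafka010 package (e.g. its package object), hence private[kafka010].
private[kafka010] val PRODUCER_CACHE_TIMEOUT =
  ConfigBuilder("spark.kafka.producer.cache.timeout")
    .doc("The expire time to remove the unused producers.")
    .timeConf(TimeUnit.MILLISECONDS)
    .createWithDefaultString("10m")
```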

Closes #24574 from hehuiyuan/hehuiyuan-patch-9.

Authored-by: hehuiyuan <hehuiyuan@ZBMAC-C02WD3K5H.local>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

To move DS v2 to the catalyst module, we can't make v2 offset rely on v1 offset, as v1 offset is in sql/core.

## How was this patch tested?

existing tests

Closes #24538 from cloud-fan/offset.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

For historical reasons, Structured Streaming still uses `spark.network.timeout` the old way, even though `org.apache.spark.internal.config.Network.NETWORK_TIMEOUT` is now available.

## How was this patch tested?

Existing UTs.

Closes #24545 from beliefer/unify-spark-network-timeout.

Authored-by: gengjiaan <gengjiaan@360.cn>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The current implementation doesn't support multi-cluster Kafka connections with delegation tokens. In this PR I've added this functionality.

What this PR contains:

* New way of configuration

* Multiple delegation token obtain/store/use functionality

* Documentation

* The change works on DStreams also
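The new configuration groups settings per cluster identifier. The sketch below assumes the `spark.kafka.clusters.<identifier>.<property>` key layout described in the Spark 3.x Kafka integration guide; check the documentation for your version. It groups flat Spark configuration entries into one property map per cluster. `ClusterConfigs` is a hypothetical name.

```scala
object ClusterConfigs {
  // Assumed key layout: spark.kafka.clusters.<identifier>.<property>
  val Prefix = "spark.kafka.clusters."

  // Groups flat configuration entries into one property map per cluster identifier.
  def byCluster(conf: Map[String, String]): Map[String, Map[String, String]] = {
    val entries: Seq[(String, String, String)] = conf.toSeq.flatMap { case (key, value) =>
      if (!key.startsWith(Prefix)) None
      else {
        val rest = key.stripPrefix(Prefix)
        val dot = rest.indexOf('.')
        // Skip malformed keys with no identifier or no property part.
        if (dot <= 0 || dot == rest.length - 1) None
        else Some((rest.substring(0, dot), rest.substring(dot + 1), value))
      }
    }
    entries.groupBy(_._1).map { case (cluster, es) =>
      cluster -> es.map { case (_, property, v) => property -> v }.toMap
    }
  }
}
```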

## How was this patch tested?

Existing + additional unit tests.

Additionally tested on cluster.

Test scenario:

* 2 clusters of 4 nodes each

* The two clusters are in different Kerberos realms

* Cross-Realm trust between the 2 realms

* Yarn

* Kafka broker version 2.1.0

* security.protocol = SASL_SSL

* sasl.mechanism = SCRAM-SHA-512

* Artificial exceptions during processing

* Source reads from realm1, sink writes to realm2

Kafka broker settings:

* delegation.token.expiry.time.ms=600000 (10 min)

* delegation.token.max.lifetime.ms=1200000 (20 min)

* delegation.token.expiry.check.interval.ms=300000 (5 min)

Closes #24305 from gaborgsomogyi/SPARK-27294.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

`BaseStreamingSource` and `BaseStreamingSink` are used to unify the v1 and v2 streaming data source APIs in some code paths.

This PR removes these 2 interfaces and lets the v1 API extend the v2 API to keep API compatibility.

The motivation is https://github.com/apache/spark/pull/24416 . We want to move data source v2 to catalyst module, but `BaseStreamingSource` and `BaseStreamingSink` are in sql/core.

## How was this patch tested?

existing tests

Closes #24471 from cloud-fan/streaming.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

There is a MemorySink v2 already so v1 can be removed. In this PR I've removed it completely.

What this PR contains:

* V1 memory sink removal

* V2 memory sink renamed to become the only implementation

* Since DSv2 sends exceptions in a chained format (linking them with the cause field), I've made the Python side compliant

* Adapted all the tests

## How was this patch tested?

Existing unit tests.

Closes #24403 from gaborgsomogyi/SPARK-23014.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

This is a followup of https://github.com/apache/spark/pull/24012 , to add the corresponding capabilities for streaming.

## How was this patch tested?

existing tests

Closes #24129 from cloud-fan/capability.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Right now Kafka source v2 doesn't support null values. The issue is in `org.apache.spark.sql.kafka010.KafkaRecordToUnsafeRowConverter.toUnsafeRow`, which doesn't handle null values.

## How was this patch tested?

Added new unit tests.

Closes #24441 from uncleGen/SPARK-27494.

Authored-by: uncleGen <hustyugm@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Fix build warnings -- see some details below.

But mostly, remove uses of postfix syntax where they cause warnings without the `scala.language.postfixOps` import. This is mostly in expressions like `120000 milliseconds`, which I'd like to simplify to things like `2.minutes` anyway.
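A small before/after of the duration style described above, using `scala.concurrent.duration`. The object name `DurationStyle` is only for illustration.

```scala
import scala.concurrent.duration._

object DurationStyle {
  // Postfix form (`120000 milliseconds`) needs `import scala.language.postfixOps`,
  // otherwise Scala 2 reports a feature warning; the method-call forms below do not.
  val timeout: FiniteDuration = 2.minutes
  val sameTimeout: FiniteDuration = 120000.milliseconds
}
```

Both values represent the same duration, so the shorter form can replace the longer one without changing behavior.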

## How was this patch tested?

Existing tests.

Closes #24314 from srowen/SPARK-27404.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Use Single Abstract Method syntax where possible (and minor related cleanup). Comments below. No logic should change here.
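Single Abstract Method (SAM) conversion, available since Scala 2.12, lets a function literal stand in for an anonymous class implementing a Java functional interface. A minimal before/after, with `SamSyntax` as an illustrative name:

```scala
import java.util.Comparator
import java.util.concurrent.Callable

object SamSyntax {
  // Before: an anonymous class implementing a single-abstract-method interface.
  val verboseCallable: Callable[Int] = new Callable[Int] {
    override def call(): Int = 42
  }

  // After: the function literal is converted to the SAM type directly.
  val conciseCallable: Callable[Int] = () => 42

  // The same conversion works for other Java functional interfaces, e.g. Comparator.
  val byLength: Comparator[String] = (a, b) => Integer.compare(a.length, b.length)
}
```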

## How was this patch tested?

Existing tests.

Closes #24241 from srowen/SPARK-27323.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR aims to update the Kafka dependency to 2.2.0 to bring the following improvements and bug fixes.

- https://issues.apache.org/jira/projects/KAFKA/versions/12344063

Due to [KAFKA-4453](https://issues.apache.org/jira/browse/KAFKA-4453), data plane API and controller plane API are separated. Apache Spark needs the following changes.

```scala
- servers.head.apis.metadataCache
+ servers.head.dataPlaneRequestProcessor.metadataCache
```

## How was this patch tested?

Pass the Jenkins with the existing tests.

Closes #24190 from dongjoon-hyun/SPARK-27260.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This adds a new method, `capabilities`, to `v2.Table` that returns a set of `TableCapability`. Capabilities are used to fail queries during analysis checks (`V2WriteSupportCheck`) when the table does not support an operation, such as truncation.
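The check amounts to a set difference between the capabilities an operation requires and those the table reports. The sketch below is hypothetical: `Capability`, `SimpleTable` and `WriteSupportCheck` only mirror the idea, while Spark's real type is the `TableCapability` enum.

```scala
// Hypothetical mirror of the idea; not Spark's TableCapability enum.
sealed trait Capability
object Capability {
  case object BatchRead extends Capability
  case object BatchWrite extends Capability
  case object Truncate extends Capability
}

final case class SimpleTable(name: String, capabilities: Set[Capability])

object WriteSupportCheck {
  // Fails "analysis" when a truncating write needs a capability the table does not report.
  def checkTruncate(table: SimpleTable): Either[String, Unit] = {
    val required = Set[Capability](Capability.BatchWrite, Capability.Truncate)
    val missing = required.diff(table.capabilities)
    if (missing.isEmpty) Right(())
    else Left(s"Table ${table.name} does not support: ${missing.mkString(", ")}")
  }
}
```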

## How was this patch tested?

Existing tests for regressions, added new analysis suite, `V2WriteSupportCheckSuite`, for new capability checks.

Closes #24012 from rdblue/SPARK-26811-add-capabilities.

Authored-by: Ryan Blue <blue@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR changes the calls to `AdminUtils`, currently used to create and delete topics in Kafka tests, so that they rely on `AdminClient`, the recommended way from now on.

## How was this patch tested?

I ran all unit tests and they pass. Since the code is already well tested, I thought the API changes wouldn't require new tests, as long as the current tests keep passing.

Closes #24071 from DylanGuedes/spark-27138.

Authored-by: DylanGuedes <djmgguedes@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

It's a little awkward to have 2 different classes (`CaseInsensitiveStringMap` and `DataSourceOptions`) to represent the options in the data source and catalog APIs.

This PR merges these 2 classes, while keeping the name `CaseInsensitiveStringMap`, which is more precise.

## How was this patch tested?

existing tests

Closes #24025 from cloud-fan/option.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

According to the [design](https://docs.google.com/document/d/1vI26UEuDpVuOjWw4WPoH2T6y8WAekwtI7qoowhOFnI4/edit?usp=sharing), the life cycle of `StreamingWrite` should be the same as the read side `MicroBatch/ContinuousStream`, i.e. each run of the stream query, instead of each epoch.

This PR fixes it.

## How was this patch tested?

existing tests

Closes #23981 from cloud-fan/dsv2.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

It adds Kafka delegation token support for DStreams. Because a native Kafka sink is not available for DStreams, this PR uses delegation tokens only on the consumer side.

What this PR contains:

* Usage of token through dynamic JAAS configuration

* `KafkaConfigUpdater` moved to `kafka-0-10-token-provider`

* `KafkaSecurityHelper` functionality moved into `KafkaTokenUtil`

* Documentation
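Kafka clients authenticate with a delegation token over SCRAM by passing the token ID as the username, the token HMAC as the password, and `tokenauth="true"` in `sasl.jaas.config`. A minimal sketch of building that property dynamically, assuming the token ID and HMAC are already available; `DynamicJaas` and its methods are hypothetical helpers, not Spark's `KafkaTokenUtil` code.

```scala
// Hypothetical helpers for a per-client (dynamic) JAAS configuration.
object DynamicJaas {
  private val ScramLoginModule = "org.apache.kafka.common.security.scram.ScramLoginModule"

  // Builds a sasl.jaas.config value that logs in with a delegation token over SCRAM.
  def jaasConfigForToken(tokenId: String, hmac: String): String =
    s"""$ScramLoginModule required username="$tokenId" password="$hmac" tokenauth="true";"""

  // Consumer properties carrying the dynamic JAAS config (other settings omitted).
  def consumerSecurityProps(tokenId: String, hmac: String, mechanism: String): Map[String, String] =
    Map(
      "security.protocol" -> "SASL_SSL",
      "sasl.mechanism"    -> mechanism,
      "sasl.jaas.config"  -> jaasConfigForToken(tokenId, hmac)
    )
}
```

Because the value is set per client rather than through a JVM-wide JAAS file, each consumer can be given whichever token is current when it is created.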

## How was this patch tested?

Existing unit tests, plus tests on a cluster.

Long-running Kafka-to-file tests on a 4-node cluster with randomly thrown artificial exceptions.

Test scenario:

* 4 node cluster

* Yarn

* Kafka broker version 2.1.0

* security.protocol = SASL_SSL

* sasl.mechanism = SCRAM-SHA-512

Kafka broker settings:

* delegation.token.expiry.time.ms=600000 (10 min)

* delegation.token.max.lifetime.ms=1200000 (20 min)

* delegation.token.expiry.check.interval.ms=300000 (5 min)

Every 7.5 minutes (10 min * 0.75), a new delegation token is obtained from the Kafka broker.

When a token expired after 10 minutes (Spark obtains a new token and does not renew the old one), the broker's expiry thread, which runs every 5 minutes, invalidated it. An artificial exception was then thrown inside the Spark application (in which case Spark closes the connection), and the latest delegation token was picked up correctly.
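The timing in this scenario can be checked with simple arithmetic. A minimal sketch, assuming the broker's expiry thread runs at fixed multiples of the check interval starting at t = 0 (the real schedule depends on when the broker started); `TokenTimeline` is hypothetical, and the ratio 0.75 is the `10 min * 0.75` factor above.

```scala
// Hypothetical model of the token timeline in the test scenario.
object TokenTimeline {
  val ExpiryTimeMs: Long = 600000L          // delegation.token.expiry.time.ms
  val ExpiryCheckIntervalMs: Long = 300000L // delegation.token.expiry.check.interval.ms
  val RenewalRatio: Double = 0.75           // fraction of lifetime before a new token is obtained

  // Delay before the next token is obtained: 10 min * 0.75 = 7.5 min.
  def nextTokenDelayMs: Long = (ExpiryTimeMs * RenewalRatio).toLong

  // Assumption: the expiry thread runs at multiples of the check interval from t = 0
  // and removes a token on the first run at or after its expiration time.
  def invalidatedAtMs(issuedAtMs: Long): Long = {
    val expiresAt = issuedAtMs + ExpiryTimeMs
    val runs = (expiresAt + ExpiryCheckIntervalMs - 1) / ExpiryCheckIntervalMs
    runs * ExpiryCheckIntervalMs
  }
}
```

Under this assumption, a token issued at 7.5 minutes expires at 17.5 minutes but is only purged by the 20-minute check, so an expired token can remain on the broker for up to one check interval.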

Documentation was built with `cd docs/` followed by `SKIP_API=1 jekyll build`, and the web pages were checked manually.

Closes #23929 from gaborgsomogyi/SPARK-27022.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Similar to `SaveMode`, we should remove the streaming `OutputMode` from the data source v2 API and use operations that have clear semantics.

The changes are:

1. append mode: create `StreamingWrite` directly. By default, the `WriteBuilder` will create `Write` to append data.

2. complete mode: call `SupportsTruncate#truncate`. Complete mode means truncating all the old data and appending the new data of the current epoch, which is exactly the semantics of `SupportsTruncate`.

3. update mode: fail. The current streaming framework can't propagate the update keys, so v2 sinks are not able to implement update mode. In the future we can introduce a `SupportsUpdate` trait.
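The mapping above can be sketched with a simplified, hypothetical model. The trait names echo those in this PR (`StreamingWrite`, `WriteBuilder`, `SupportsTruncate`), but they are stand-ins written for illustration, not Spark's actual interfaces or signatures.

```scala
// Hypothetical, simplified model of mapping output modes to v2 write operations.
sealed trait OutputMode
object OutputMode {
  case object Append   extends OutputMode
  case object Complete extends OutputMode
  case object Update   extends OutputMode
}

trait StreamingWrite { def description: String }
trait WriteBuilder { def buildForStreaming(): StreamingWrite }
// A builder that can discard existing data before writing.
trait SupportsTruncate extends WriteBuilder { def truncate(): WriteBuilder }

object OutputModePlanner {
  def plan(builder: WriteBuilder, mode: OutputMode): StreamingWrite = mode match {
    case OutputMode.Append => builder.buildForStreaming() // append is the default
    case OutputMode.Complete =>
      builder match {
        case t: SupportsTruncate => t.truncate().buildForStreaming() // truncate, then append
        case _ => throw new UnsupportedOperationException("complete mode requires truncation support")
      }
    case OutputMode.Update =>
      throw new UnsupportedOperationException("update mode is not supported")
  }
}

// Example sinks used to exercise the planner.
final class AppendOnlyBuilder extends WriteBuilder {
  def buildForStreaming(): StreamingWrite = new StreamingWrite { val description = "append" }
}

final class TruncatingBuilder(truncated: Boolean = false) extends SupportsTruncate {
  def truncate(): WriteBuilder = new TruncatingBuilder(truncated = true)
  def buildForStreaming(): StreamingWrite =
    new StreamingWrite { val description = if (truncated) "truncate then append" else "append" }
}
```

An append-only sink, like the kafka sink described below, is rejected in complete mode because it does not mix in the truncation trait.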

The behavior changes:

1. None of the v2 sinks (foreach, console, memory, kafka, noop) support update mode. In fact, all the v2 sinks previously implemented update mode incorrectly; none of them could really support it.

2. The kafka sink doesn't support complete mode, because it can only append data.

## How was this patch tested?

existing tests

Closes #23859 from cloud-fan/update.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Remove a redundant `get` when getting a value from a `Map` by key.
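The pattern being removed, illustrated with a hypothetical map: `map.get(key).get` wraps the value in an `Option` only to unwrap it again, while `map(key)` returns it directly. `MapLookup` and its contents are illustrative only.

```scala
// Hypothetical example of the redundant-get pattern and its replacements.
object MapLookup {
  val partitionsByTopic: Map[String, Int] = Map("events" -> 8, "logs" -> 2)

  // Redundant: Option-returning get followed by an unsafe .get.
  def redundant(topic: String): Int = partitionsByTopic.get(topic).get

  // Same result for present keys; a missing key also throws NoSuchElementException.
  def direct(topic: String): Int = partitionsByTopic(topic)

  // When a missing key is expected, getOrElse avoids the exception altogether.
  def withDefault(topic: String): Int = partitionsByTopic.getOrElse(topic, 1)
}
```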

## How was this patch tested?

N/A

Closes #23901 from 10110346/removegetfrommap.

Authored-by: liuxian <liu.xian3@zte.com.cn>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Spark does not always pick up the latest Kafka delegation tokens, even if a new one was properly obtained.

This PR sets delegation tokens right before `KafkaConsumer` and `KafkaProducer` creation to be on the safe side.
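Why the timing matters can be sketched with a hypothetical token holder: parameters captured when a wrapper object is constructed keep the old token, while parameters computed right before the client is created see the latest one. `TokenStore`, `ClientParamsFactory`, and the `token` key are illustrative placeholders, not Spark or Kafka APIs.

```scala
import java.util.concurrent.atomic.AtomicReference

// Hypothetical holder updated whenever a new delegation token is obtained.
object TokenStore {
  private val latest = new AtomicReference[String]("token-1")
  def update(token: String): Unit = latest.set(token)
  def current: String = latest.get()
}

// Hypothetical factory contrasting cached and just-in-time parameters.
final class ClientParamsFactory(baseParams: Map[String, String]) {
  // Captured once at construction: goes stale after the token is replaced.
  private val cachedAtConstruction: Map[String, String] =
    baseParams + ("token" -> TokenStore.current)

  def staleParams: Map[String, String] = cachedAtConstruction

  // Read right before the consumer or producer would be created.
  def freshParams: Map[String, String] = baseParams + ("token" -> TokenStore.current)
}
```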

## How was this patch tested?

Long-running Kafka-to-Kafka tests on a 4-node cluster with randomly thrown artificial exceptions.

Test scenario:

* 4 node cluster

* Yarn

* Kafka broker version 2.1.0

* security.protocol = SASL_SSL

* sasl.mechanism = SCRAM-SHA-512

Kafka broker settings:

* delegation.token.expiry.time.ms=600000 (10 min)

* delegation.token.max.lifetime.ms=1200000 (20 min)

* delegation.token.expiry.check.interval.ms=300000 (5 min)

Every 7.5 minutes (10 min * 0.75), a new delegation token is obtained from the Kafka broker.

However, when a token expired after 10 minutes (Spark obtains a new token and does not renew the old one), the broker's expiry thread, which runs every 5 minutes, invalidated it. When an artificial exception was then thrown inside the Spark application (in which case Spark closes the connection), the latest delegation token was not always picked up.

Closes #23906 from gaborgsomogyi/SPARK-27002.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Continue the API refactor for streaming write, according to the [doc](https://docs.google.com/document/d/1vI26UEuDpVuOjWw4WPoH2T6y8WAekwtI7qoowhOFnI4/edit?usp=sharing).

The major changes:

1. rename `StreamingWriteSupport` to `StreamingWrite`

2. add `WriteBuilder.buildForStreaming`

3. update existing sinks to move the creation of `StreamingWrite` to `Table`

## How was this patch tested?

existing tests

Closes #23702 from cloud-fan/stream-write.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

In the PR, I propose to use `System.nanoTime()` instead of `System.currentTimeMillis()` in measurements of time intervals.

`System.currentTimeMillis()` returns current wallclock time and will follow changes to the system clock. Thus, negative wallclock adjustments can cause timeouts to "hang" for a long time (until wallclock time has caught up to its previous value again). This can happen when ntpd does a "step" after the network has been disconnected for some time. The most canonical example is during system bootup when DHCP takes longer than usual. This can lead to failures that are really hard to understand/reproduce. `System.nanoTime()` is guaranteed to be monotonically increasing irrespective of wallclock changes.
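A minimal sketch of the pattern, with hypothetical helper names: only the difference between two `System.nanoTime()` readings is meaningful, so it is used for elapsed time and timeouts, while `System.currentTimeMillis()` remains appropriate for timestamps.

```scala
import java.util.concurrent.TimeUnit

// Hypothetical helpers for interval measurement on the monotonic clock.
object Elapsed {
  // Runs `body` and returns its result together with the elapsed milliseconds.
  def timeMs[T](body: => T): (T, Long) = {
    val start = System.nanoTime()
    val result = body
    val elapsedNs = System.nanoTime() - start // only differences of nanoTime are meaningful
    (result, TimeUnit.NANOSECONDS.toMillis(elapsedNs))
  }

  // A timeout check that is unaffected by wall-clock steps (e.g. an ntpd adjustment).
  def hasTimedOut(startNs: Long, timeoutMs: Long): Boolean =
    System.nanoTime() - startNs >= TimeUnit.MILLISECONDS.toNanos(timeoutMs)
}
```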

## How was this patch tested?

By existing test suites.

Closes #23727 from MaxGekk/system-nanotime.

Lead-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Fix the integer overflow issue in rateLimit.
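The actual fix is not reproduced here. As a generic illustration of this bug class, assuming a limit computed from `Int` operands (`maxRatePerPartition` and `batchSeconds` are hypothetical names), 32-bit multiplication silently wraps, while widening to `Long` first or using `Math.multiplyExact` avoids the silent wrap.

```scala
// Hypothetical illustration of Int overflow in a rate-limit computation.
object RateLimitOverflow {
  val maxRatePerPartition: Int = 1000000 // records per second
  val batchSeconds: Int = 3600

  // Int * Int is computed in 32 bits and wraps around past Int.MaxValue.
  def overflowingLimit: Int = maxRatePerPartition * batchSeconds

  // Widening one operand to Long first keeps the whole product in 64 bits.
  def safeLimit: Long = maxRatePerPartition.toLong * batchSeconds

  // Alternatively, fail loudly with ArithmeticException instead of wrapping.
  def checkedLimit: Int = Math.multiplyExact(maxRatePerPartition, batchSeconds)
}
```

Here the true product, 3,600,000,000, exceeds `Int.MaxValue` (2,147,483,647), so the `Int` version wraps to a negative number.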

## How was this patch tested?

Passes Jenkins with a newly added unit test for the case where the integer could overflow.

Closes #23666 from linehrr/master.

Authored-by: ryne.yang <ryne.yang@acuityads.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Following https://github.com/apache/spark/pull/23430, this PR does the API refactor for continuous read, w.r.t. the [doc](https://docs.google.com/document/d/1uUmKCpWLdh9vHxP7AWJ9EgbwB_U6T3EJYNjhISGmiQg/edit?usp=sharing)

The major changes:

1. rename `XXXContinuousReadSupport` to `XXXContinuousStream`

2. at the beginning of continuous streaming execution, convert `StreamingRelationV2` to `StreamingDataSourceV2Relation` directly, instead of `StreamingExecutionRelation`.

3. remove all the hacks, as the read-side API refactor is now finished

## How was this patch tested?

existing tests

Closes #23619 from cloud-fan/continuous.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Core contains unwanted `provided` dependencies on the following:

* Hive

* Kafka

This PR extracts them out of core. It contains the following:

* Token providers are now loaded with service loader

* Hive token provider moved to hive project

* Kafka token provider extracted into a new project
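A minimal sketch of service-loader discovery, assuming a hypothetical `TokenProvider` trait (Spark's real provider interface and registration file are not reproduced here). `java.util.ServiceLoader` finds implementations listed in `META-INF/services` files on the classpath, so core needs only the interface, not compile-time dependencies on Hive or Kafka.

```scala
import java.util.ServiceLoader
import scala.jdk.CollectionConverters._

// Hypothetical service interface; implementations would be listed in
// META-INF/services/<fully qualified name of TokenProvider>.
trait TokenProvider {
  def serviceName: String
}

object TokenProviders {
  // Discovers every registered implementation visible to the given class loader.
  def load(loader: ClassLoader = Thread.currentThread().getContextClassLoader): Seq[TokenProvider] =
    ServiceLoader.load(classOf[TokenProvider], loader).asScala.toSeq

  // Indexes the discovered providers by the service they handle.
  def byName(loader: ClassLoader = Thread.currentThread().getContextClassLoader): Map[String, TokenProvider] =
    load(loader).map(p => p.serviceName -> p).toMap
}
```

With this design, a provider is only picked up when the module that contains it (and its registration file) is on the classpath.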

## How was this patch tested?

Existing + newly added unit tests.

Additionally tested on cluster.

Closes #23499 from gaborgsomogyi/SPARK-26254.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Following https://github.com/apache/spark/pull/23086, this PR does the API refactor for micro-batch read, w.r.t. the [doc](https://docs.google.com/document/d/1uUmKCpWLdh9vHxP7AWJ9EgbwB_U6T3EJYNjhISGmiQg/edit?usp=sharing)

The major changes:

1. rename `XXXMicroBatchReadSupport` to `XXXMicroBatchReadStream`

2. implement `TableProvider`, `Table`, `ScanBuilder` and `Scan` for streaming sources

3. at the beginning of micro-batch streaming execution, convert `StreamingRelationV2` to `StreamingDataSourceV2Relation` directly, instead of `StreamingExecutionRelation`.

Follow-up: support operator pushdown for streaming sources.

## How was this patch tested?

existing tests

Closes #23430 from cloud-fan/micro-batch.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This patch adds a check to verify that the consumer group id is set correctly when a custom group id is provided as a Kafka parameter.

## How was this patch tested?

Modified UT.

Closes #23544 from HeartSaVioR/SPARK-26350-follow-up-actual-verification-on-UT.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Shixiong Zhu <zsxwing@gmail.com>

## What changes were proposed in this pull request?

This PR allows the user to override `kafka.group.id` for better monitoring or security. The user needs to make sure that multiple queries or sources do not use the same group id.

It also fixes a bug where the `groupIdPrefix` option could not be retrieved.
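The resolution order can be sketched as follows, assuming options arrive as a plain `Map` (real source options are case-insensitive) and that the generated id is the prefix plus a random UUID; Spark's actual generated id may include other components. `GroupIdResolver` is hypothetical; the default prefix `spark-kafka-source` matches the documented default of `groupIdPrefix`.

```scala
import java.util.UUID

// Hypothetical, simplified group id resolution.
object GroupIdResolver {
  val DefaultPrefix = "spark-kafka-source"

  // A user-supplied kafka.group.id wins; otherwise a unique id is generated
  // from groupIdPrefix so that concurrent queries do not share a group.
  def resolve(options: Map[String, String]): String =
    options.get("kafka.group.id") match {
      case Some(groupId) => groupId
      case None =>
        val prefix = options.getOrElse("groupIdPrefix", DefaultPrefix)
        s"$prefix-${UUID.randomUUID()}"
    }
}
```

A fixed `kafka.group.id` is exactly the case where uniqueness is no longer guaranteed, which is why the user must avoid reusing it across queries.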

## How was this patch tested?

Newly added unit tests.

Closes #23301 from zsxwing/SPARK-26350.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: Shixiong Zhu <zsxwing@gmail.com>

## What changes were proposed in this pull request?

This PR upgrades Mockito from 1.10.19 to 2.23.4. The following changes are required.

- Replace `org.mockito.Matchers` with `org.mockito.ArgumentMatchers`

- Replace `anyObject` with `any`

- Replace `getArgumentAt` with `getArgument` and add type annotations.

- Use the `isNull` matcher when `null` is passed as the argument.

```diff
 saslHandler.channelInactive(null);
-verify(handler).channelInactive(any(TransportClient.class));
+verify(handler).channelInactive(isNull());
```

- Add and use a `doReturn` wrapper to avoid [SI-4775](https://issues.scala-lang.org/browse/SI-4775)

```scala

private def doReturn(value: Any) = org.mockito.Mockito.doReturn(value, Seq.empty: _*)

```

## How was this patch tested?

Passes Jenkins with the existing tests.

Closes #23452 from dongjoon-hyun/SPARK-26536.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>