## What changes were proposed in this pull request?

When Arrow optimization is enabled in Python 2.7,

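```python
# Run in the PySpark shell, where `spark` is the active SparkSession.
import pandas

# Enable Arrow optimization (config name used by Spark 2.x).
spark.conf.set("spark.sql.execution.arrow.enabled", "true")

pdf = pandas.DataFrame(["test1", "test2"])
df = spark.createDataFrame(pdf)
df.show()
```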

I got the following output:

```
+----------------+
|               0|
+----------------+
|[74 65 73 74 31]|
|[74 65 73 74 32]|
+----------------+
```
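The cells above are not garbled: they are the raw bytes of `"test1"` and `"test2"` printed in hex. A minimal sketch of that rendering, assuming `show()` prints a binary value as space-separated two-digit hex bytes inside square brackets; `render_binary` is a hypothetical helper for illustration, not a PySpark API:

```python
def render_binary(value):
    """Hypothetical helper: render a value the way show() displays a binary cell."""
    # Text (`unicode` on Python 2, `str` on Python 3) is encoded to bytes first;
    # byte strings (which include Python 2's plain `str`) are used as-is.
    data = value.encode("utf-8") if isinstance(value, type(u"")) else value
    # Each byte becomes two uppercase hex digits, joined by spaces inside brackets.
    return "[" + " ".join("%02X" % b for b in bytearray(data)) + "]"


print(render_binary(b"test1"))
print(render_binary(u"test2"))
```

The first call prints `[74 65 73 74 31]`, matching the first row of the output above.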

This happens because, in Python 2, `str` and `bytes` are the same type, so the output is arguably correct:

```python
>>> str == bytes
True
>>> isinstance("a", bytes)
True
```

To cut it short:

1. Python 2 treats `str` as `bytes`.

2. PySpark adds special-case code so that `str` is recognized as a string type.

3. PyArrow and pandas follow Python 2's semantics and treat `str` as bytes (binary).
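To make points 2 and 3 concrete, here is a simplified model of the two inference rules. It is an assumption for illustration only: `pyspark_style_type` and `arrow_style_type` are hypothetical functions, not the actual PySpark or PyArrow code. The two rules differ only in which check runs first, so on Python 2, where `"a"` is both `str` and `bytes`, they disagree; on Python 3 they agree.

```python
TEXT_TYPE = type(u"")  # `unicode` on Python 2, `str` on Python 3


def pyspark_style_type(value):
    """Hypothetical model of PySpark's rule: the native `str` always means string."""
    if isinstance(value, (str, TEXT_TYPE)):
        return "string"
    if isinstance(value, (bytes, bytearray)):
        return "binary"
    raise TypeError("unsupported type: %r" % type(value))


def arrow_style_type(value):
    """Hypothetical model of the PyArrow/pandas rule: anything that is bytes is binary."""
    if isinstance(value, (bytes, bytearray)):
        return "binary"
    if isinstance(value, TEXT_TYPE):
        return "string"
    raise TypeError("unsupported type: %r" % type(value))


# On Python 2 the "test1" row disagrees (string vs binary); on Python 3 it agrees.
for v in ["test1", u"test1", b"test1"]:
    print(repr(v), pyspark_style_type(v), arrow_style_type(v))
```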

To fix, we have two options:

1. Fix it so that the behaviour matches PySpark's

2. Note the differences

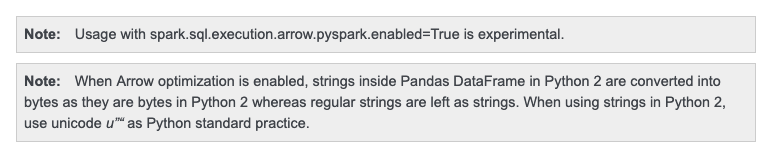

However, Python 2 is deprecated anyway, so I think it's better to just note the difference and go for option 2.
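Since the difference is documented rather than fixed, a Python 2 user who wants string columns with Arrow enabled can pass text (`unicode`) values instead of plain `str`. A minimal sketch, assuming UTF-8 input; `to_text_values` is a hypothetical helper, not part of PySpark or pandas:

```python
def to_text_values(values, encoding="utf-8"):
    """Hypothetical helper: decode byte strings to text, leaving other values unchanged."""
    # On Python 2 a plain "test1" literal is bytes, so it is decoded to unicode here;
    # on Python 3 only real bytes/bytearray values are decoded.
    return [v.decode(encoding) if isinstance(v, (bytes, bytearray)) else v
            for v in values]


print(to_text_values([b"test1", b"test2", None]))
```

For example, on Python 2 one could build the frame with `pandas.DataFrame(to_text_values(["test1", "test2"]))`, or simply write `u"test1"` literals, so that the column should be inferred as a string type rather than binary.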

## How was this patch tested?

Manually tested.

The documentation change was checked too.

Closes #24838 from HyukjinKwon/SPARK-27995.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>