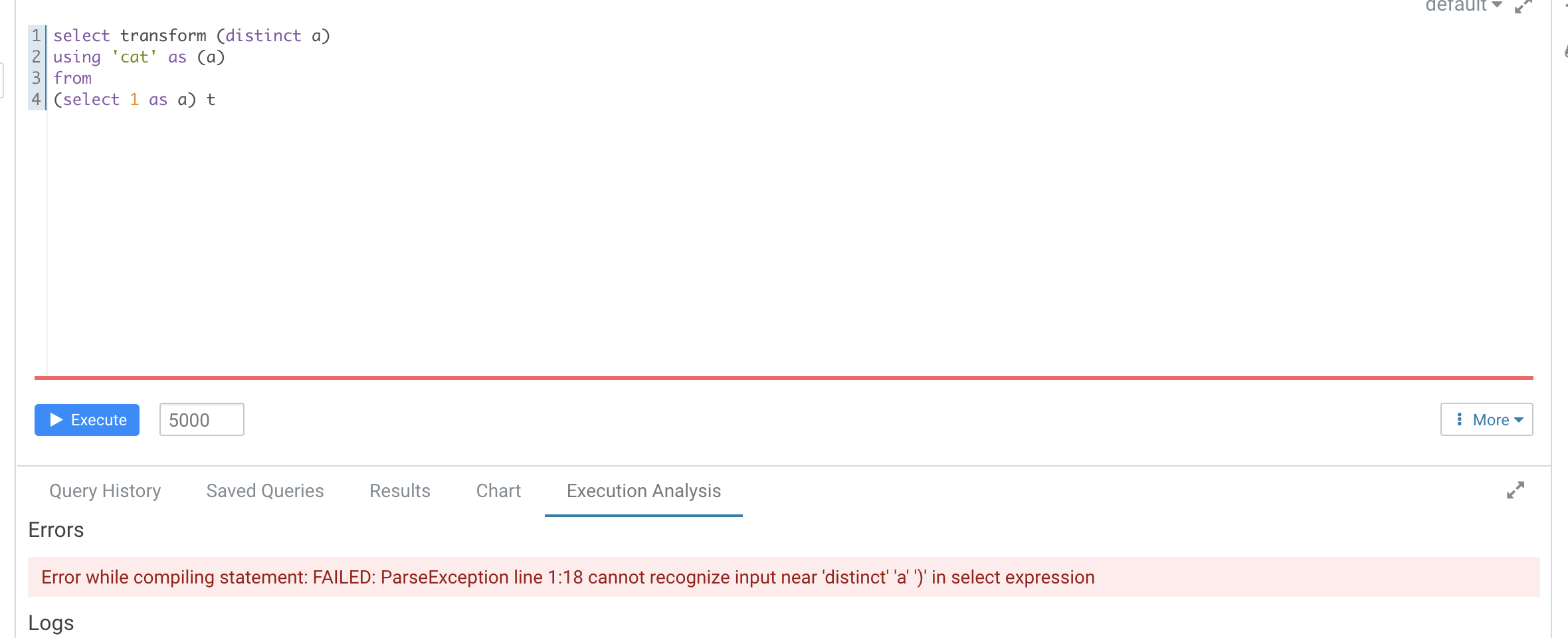

### What changes were proposed in this pull request?

According to https://github.com/apache/spark/pull/29087#discussion_r612267050, add UT in `transform.sql`.

It seems that distinct is not recognized as a reserved word here:

```
-- !query
explain extended SELECT TRANSFORM(distinct b, a, c) USING 'cat' AS (a, b, c) FROM script_trans WHERE a <= 4
-- !query schema
struct<plan:string>
-- !query output
== Parsed Logical Plan ==
'ScriptTransformation [*], cat, [a#x, b#x, c#x], ScriptInputOutputSchema(List(),List(),None,None,List(),List(),None,None,false)
+- 'Project ['distinct AS b#x, 'a, 'c]
   +- 'Filter ('a <= 4)
      +- 'UnresolvedRelation [script_trans], [], false

== Analyzed Logical Plan ==
org.apache.spark.sql.AnalysisException: cannot resolve 'distinct' given input columns: [script_trans.a, script_trans.b, script_trans.c]; line 1 pos 34;
'ScriptTransformation [*], cat, [a#x, b#x, c#x], ScriptInputOutputSchema(List(),List(),None,None,List(),List(),None,None,false)
+- 'Project ['distinct AS b#x, a#x, c#x]
   +- Filter (a#x <= 4)
      +- SubqueryAlias script_trans
         +- View (`script_trans`, [a#x,b#x,c#x])
            +- Project [cast(a#x as int) AS a#x, cast(b#x as int) AS b#x, cast(c#x as int) AS c#x]
               +- Project [a#x, b#x, c#x]
                  +- SubqueryAlias script_trans
                     +- LocalRelation [a#x, b#x, c#x]
```

Hive's error

### Why are the changes needed?

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Added UT

Closes #32149 from AngersZhuuuu/SPARK-28227-new-followup.

Authored-by: Angerszhuuuu <angers.zhu@gmail.com>
Signed-off-by: Wenchen Fan <wenchen@databricks.com>


# Spark SQL

This module provides support for executing relational queries expressed in either SQL or the DataFrame/Dataset API.

Spark SQL is broken up into four subprojects:

- Catalyst (sql/catalyst) - An implementation-agnostic framework for manipulating trees of relational operators and expressions.

- Execution (sql/core) - A query planner / execution engine for translating Catalyst's logical query plans into Spark RDDs. This component also includes a new public interface, SQLContext, that allows users to execute SQL or LINQ statements against existing RDDs and Parquet files.

- Hive Support (sql/hive) - Includes extensions that allow users to write queries using a subset of HiveQL and access data from a Hive Metastore using Hive SerDes. There are also wrappers that allow users to run queries that include Hive UDFs, UDAFs, and UDTFs.

- HiveServer and CLI support (sql/hive-thriftserver) - Includes support for the SQL CLI (bin/spark-sql) and a HiveServer2 (for JDBC/ODBC) compatible server.
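To make the Catalyst description above more concrete, here is a minimal sketch of what "manipulating trees of expressions" can look like. It is a toy model written in plain Python, not Catalyst's actual API: the node classes (`Literal`, `Attribute`, `Add`), the `transform_up` helper, and the `fold_constants` rule are all hypothetical names chosen for illustration. The sketch rewrites an expression tree bottom-up and folds additions of two constants into a single literal.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Union


# Toy expression nodes (hypothetical; these are not Catalyst's real classes).
@dataclass(frozen=True)
class Literal:
    value: int


@dataclass(frozen=True)
class Attribute:
    name: str


@dataclass(frozen=True)
class Add:
    left: "Expr"
    right: "Expr"


Expr = Union[Literal, Attribute, Add]
Rule = Callable[[Expr], Optional[Expr]]


def transform_up(expr: Expr, rule: Rule) -> Expr:
    """Rewrite the children first, then apply `rule` to the rebuilt node."""
    if isinstance(expr, Add):
        expr = Add(transform_up(expr.left, rule), transform_up(expr.right, rule))
    rewritten = rule(expr)
    # A rule returns None when it does not apply to the node.
    return expr if rewritten is None else rewritten


def fold_constants(expr: Expr) -> Optional[Expr]:
    """Replace `Literal + Literal` with a single Literal; otherwise do nothing."""
    if (
        isinstance(expr, Add)
        and isinstance(expr.left, Literal)
        and isinstance(expr.right, Literal)
    ):
        return Literal(expr.left.value + expr.right.value)
    return None


# a + (1 + 2) is rewritten to a + 3
tree = Add(Attribute("a"), Add(Literal(1), Literal(2)))
optimized = transform_up(tree, fold_constants)
print(optimized)
```

Keeping nodes immutable and expressing each optimization as a small function from node to replacement node means rules can be written and tested independently of each other. That is the general idea behind rule-based tree rewriting; the real framework in sql/catalyst is considerably richer than this sketch.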

Running ./sql/create-docs.sh generates SQL documentation for built-in functions under sql/site, and SQL configuration documentation that gets included as part of configuration.md in the main docs directory.