## What changes were proposed in this pull request?

Add a non-intrusive button to the Python API documentation that removes the ">>>" prompts and the code outputs, making code easier to copy.

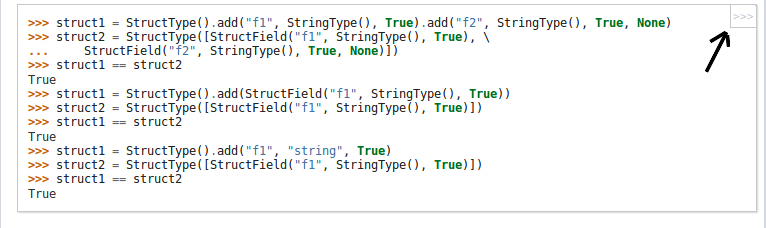

For example, the code snippet below is difficult to copy from the documentation because of the ">>>" prompts:

```
>>> l = [('Alice', 1)]
>>> spark.createDataFrame(l).collect()
[Row(_1='Alice', _2=1)]
```

After the copy button in the corner of the code block is pressed, it becomes this, which is easier to copy:

```
l = [('Alice', 1)]
spark.createDataFrame(l).collect()
```
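The text transformation described above can be illustrated with a short Python sketch. This is not the code in copybutton.js, which works on the rendered HTML page. The function name `strip_prompts` is hypothetical, and the sketch assumes that every code line starts with a ">>> " or "... " prompt and that every other line is interpreter output.

```python
def strip_prompts(snippet: str) -> str:
    """Keep only the code lines of a doctest-style snippet (illustrative helper)."""
    kept = []
    for line in snippet.splitlines():
        if line.startswith(">>> ") or line.startswith("... "):
            # Drop the 4-character prompt and keep the code.
            kept.append(line[4:])
        elif line in (">>>", "..."):
            # A bare prompt stands for an empty code line.
            kept.append("")
        # Any other line is assumed to be interpreter output and is dropped.
    return "\n".join(kept)


snippet = """>>> l = [('Alice', 1)]
>>> spark.createDataFrame(l).collect()
[Row(_1='Alice', _2=1)]"""

print(strip_prompts(snippet))
```

Running it on the snippet from the example prints the two code lines without prompts and without the `Row` output.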

## File changes

The changes are limited to python/docs/conf.py and copybutton.js, so only the Sphinx frontend is modified. No changes were made to the documentation itself, and the build process for the documentation remains the same.

copybutton.js: this JS snippet was taken from the official python.org documentation site.

## How was this patch tested?

NA

Closes #24456 from sangramga/copybutton.

Authored-by: sangramga <sangramga@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

# Apache Spark

Spark is a fast and general cluster computing system for Big Data. It provides high-level APIs in Scala, Java, Python, and R, and an optimized engine that supports general computation graphs for data analysis. It also supports a rich set of higher-level tools including Spark SQL for SQL and DataFrames, MLlib for machine learning, GraphX for graph processing, and Spark Streaming for stream processing.

## Online Documentation

You can find the latest Spark documentation, including a programming guide, on the project web page.

## Python Packaging

This README file only contains basic information related to pip-installed PySpark. This packaging is currently experimental and may change in future versions (although we will do our best to keep compatibility). Using PySpark requires the Spark JARs; if you are building this from source, please see the builder instructions at "Building Spark".

The Python packaging for Spark is not intended to replace all of the other use cases. This Python packaged version of Spark is suitable for interacting with an existing cluster (be it Spark standalone, YARN, or Mesos) - but does not contain the tools required to set up your own standalone Spark cluster. You can download the full version of Spark from the Apache Spark downloads page.

NOTE: If you are using this with a Spark standalone cluster, you must ensure that the version (including the minor version) matches, or you may experience odd errors.
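One way to guard against such a mismatch is to compare version strings before submitting work. The helper below is a hypothetical sketch, not part of PySpark. It assumes that "matching" means the major and minor components are equal, and that versions are dotted strings such as `2.4.3`.

```python
def same_minor_version(client: str, cluster: str) -> bool:
    """Return True if two dotted version strings share major and minor parts."""
    # Compare only the first two components, e.g. "2.4" from "2.4.3".
    return client.split(".")[:2] == cluster.split(".")[:2]


# With a live session, the two inputs could come from
# pyspark.__version__ and spark.version respectively.
print(same_minor_version("2.4.3", "2.4.0"))
print(same_minor_version("2.4.3", "2.3.3"))
```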

## Python Requirements

At its core, PySpark depends on Py4J (currently version 0.10.8.1), but some additional sub-packages have their own extra requirements for some features (including numpy, pandas, and pyarrow).
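To see which of these packages are present in the current environment, a small check with the standard library can help. This is an illustrative sketch: the function name `available` is hypothetical, and the check only tests whether a package can be found, not whether its version is sufficient.

```python
import importlib.util


def available(packages):
    """Map each top-level package name to whether it can be imported."""
    return {name: importlib.util.find_spec(name) is not None for name in packages}


# Py4J is the core dependency; the others are needed only for some features.
print(available(["py4j", "numpy", "pandas", "pyarrow"]))
```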