## What changes were proposed in this pull request?

The `TRIM` function accepts these patterns:

```sql
TRIM(str)
TRIM(trimStr, str)
TRIM(BOTH trimStr FROM str)
TRIM(LEADING trimStr FROM str)
TRIM(TRAILING trimStr FROM str)
```

This PR adds support for three more patterns:

```sql
TRIM(BOTH FROM str)
TRIM(LEADING FROM str)
TRIM(TRAILING FROM str)
```
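As a rough illustration of the intended semantics (not Spark's implementation), the forms without `trimStr` can be read as trimming space characters from the chosen side(s), which is consistent with the database outputs quoted in this description. The Python sketch below assumes exactly that default; `sql_trim` is a hypothetical helper name used only for this example.

```python
def sql_trim(s: str, mode: str = "BOTH") -> str:
    """Emulate TRIM(<mode> FROM s), assuming the implied trim character is a space."""
    mode = mode.upper()
    if mode == "BOTH":
        return s.strip(" ")    # remove spaces from both ends
    if mode == "LEADING":
        return s.lstrip(" ")   # remove spaces from the start only
    if mode == "TRAILING":
        return s.rstrip(" ")   # remove spaces from the end only
    raise ValueError(f"unsupported trim mode: {mode}")


print(repr(sql_trim(" SparkSQL ", "BOTH")))      # 'SparkSQL'
print(repr(sql_trim(" SparkSQL ", "LEADING")))   # 'SparkSQL '
print(repr(sql_trim(" SparkSQL ", "TRAILING")))  # ' SparkSQL'
```

Only space characters are stripped here; tabs and newlines are left in place, which is an assumption of the sketch rather than a statement about any particular engine.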

PostgreSQL, Vertica, MySQL, Teradata, Oracle and DB2 support these patterns. Hive, Presto and SQL Server do not support this feature.

**PostgreSQL**:

```sql
postgres=# select substr(version(), 0, 16), trim(BOTH from ' SparkSQL '), trim(LEADING FROM ' SparkSQL '), trim(TRAILING FROM ' SparkSQL ');
substr | btrim | ltrim | rtrim
-----------------+----------+-------------+--------------
PostgreSQL 11.3 | SparkSQL | SparkSQL | SparkSQL
(1 row)
```

**Vertica**:

```
dbadmin=> select version(), trim(BOTH from ' SparkSQL '), trim(LEADING FROM ' SparkSQL '), trim(TRAILING FROM ' SparkSQL ');
version | btrim | ltrim | rtrim
------------------------------------+----------+-------------+--------------
Vertica Analytic Database v9.1.1-0 | SparkSQL | SparkSQL | SparkSQL
(1 row)
```

**MySQL**:

```
mysql> select version(), trim(BOTH from ' SparkSQL '), trim(LEADING FROM ' SparkSQL '), trim(TRAILING FROM ' SparkSQL ');
+-----------+-----------------------------------+--------------------------------------+---------------------------------------+
| version() | trim(BOTH from ' SparkSQL ') | trim(LEADING FROM ' SparkSQL ') | trim(TRAILING FROM ' SparkSQL ') |
+-----------+-----------------------------------+--------------------------------------+---------------------------------------+
| 5.7.26 | SparkSQL | SparkSQL | SparkSQL |
+-----------+-----------------------------------+--------------------------------------+---------------------------------------+
1 row in set (0.01 sec)
```

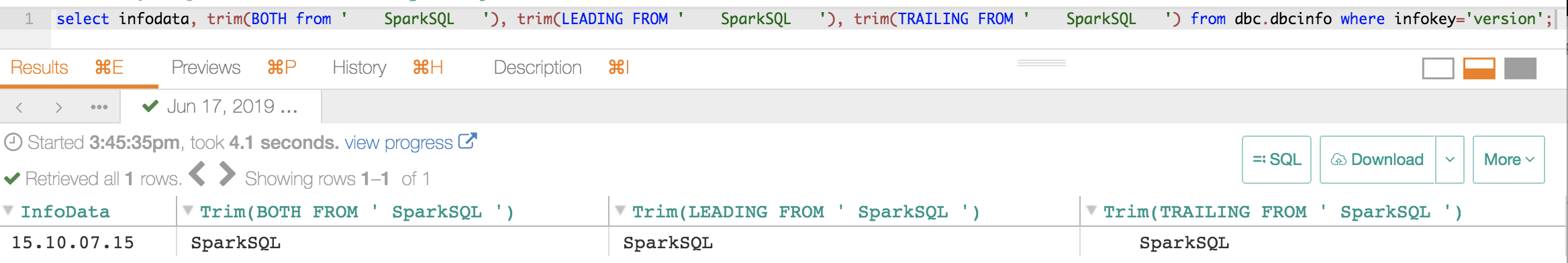

**Teradata**:

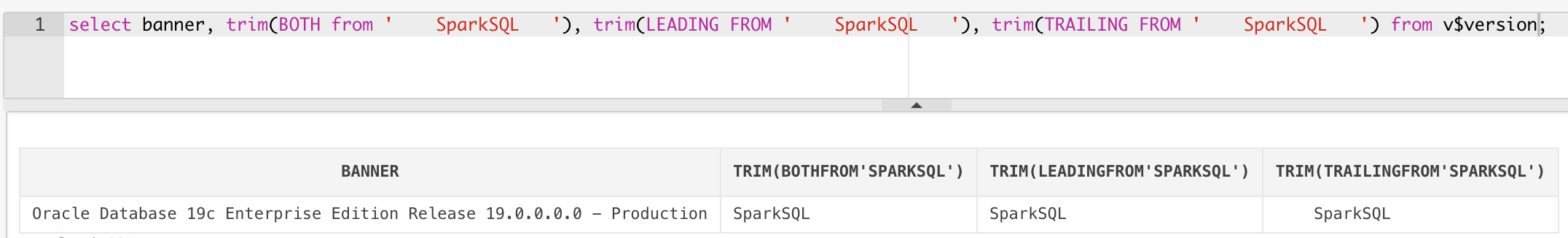

**Oracle**:

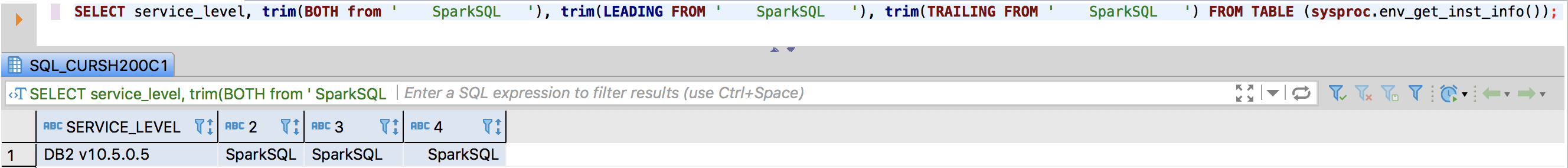

**DB2**:

## How was this patch tested?

unit tests

Closes #24891 from wangyum/SPARK-28075.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>


## Spark SQL

This module provides support for executing relational queries expressed in either SQL or the DataFrame/Dataset API.

Spark SQL is broken up into four subprojects:

- Catalyst (sql/catalyst) - An implementation-agnostic framework for manipulating trees of relational operators and expressions.

- Execution (sql/core) - A query planner / execution engine for translating Catalyst's logical query plans into Spark RDDs. This component also includes a new public interface, SQLContext, that allows users to execute SQL or LINQ statements against existing RDDs and Parquet files.

- Hive Support (sql/hive) - Includes an extension of SQLContext called HiveContext that allows users to write queries using a subset of HiveQL and access data from a Hive Metastore using Hive SerDes. There are also wrappers that allow users to run queries that include Hive UDFs, UDAFs, and UDTFs.

- HiveServer and CLI support (sql/hive-thriftserver) - Includes support for the SQL CLI (bin/spark-sql) and a HiveServer2 (for JDBC/ODBC) compatible server.

Running sql/create-docs.sh generates SQL documentation for built-in functions under sql/site.