### What changes were proposed in this pull request?

This patch proposes to preserve the existing permissions/ACLs of paths when truncating a table/partition.

### Why are the changes needed?

When Spark SQL truncates a table, it deletes the paths of the table/partitions and then re-creates them. If permissions/ACLs were set on those paths, they are lost.

We should preserve the permissions/ACLs if possible.
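
The delete-and-recreate behavior described above, with the permission saved and restored, can be sketched roughly as follows. This is a minimal illustration and not the code from this patch: the helper name `recreatePreservingPermission` is hypothetical, it assumes Hadoop's `FileSystem` API is on the classpath, and it handles only the basic permission bits.

```scala
import org.apache.hadoop.fs.{FileSystem, Path}
import org.apache.hadoop.fs.permission.FsPermission

// Hypothetical helper, not the code from this patch: empties `path` by
// deleting and re-creating it, then restores the permission it had before.
def recreatePreservingPermission(fs: FileSystem, path: Path): Unit = {
  // Capture the permission bits before the directory is removed.
  val permission: FsPermission = fs.getFileStatus(path).getPermission

  // Recursive delete followed by mkdirs; the new directory gets default
  // permissions rather than the old ones.
  fs.delete(path, true)
  fs.mkdirs(path)

  // Put the saved permission back on the new directory.
  fs.setPermission(path, permission)
}
```

ACLs could presumably be handled along the same lines with `FileSystem.getAclStatus` and `FileSystem.setAcl` on file systems that support them; that part is left out of this sketch.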

### Does this PR introduce any user-facing change?

Yes. When truncating a table/partition, Spark will keep the permissions/ACLs of the paths.

### How was this patch tested?

Unit test.

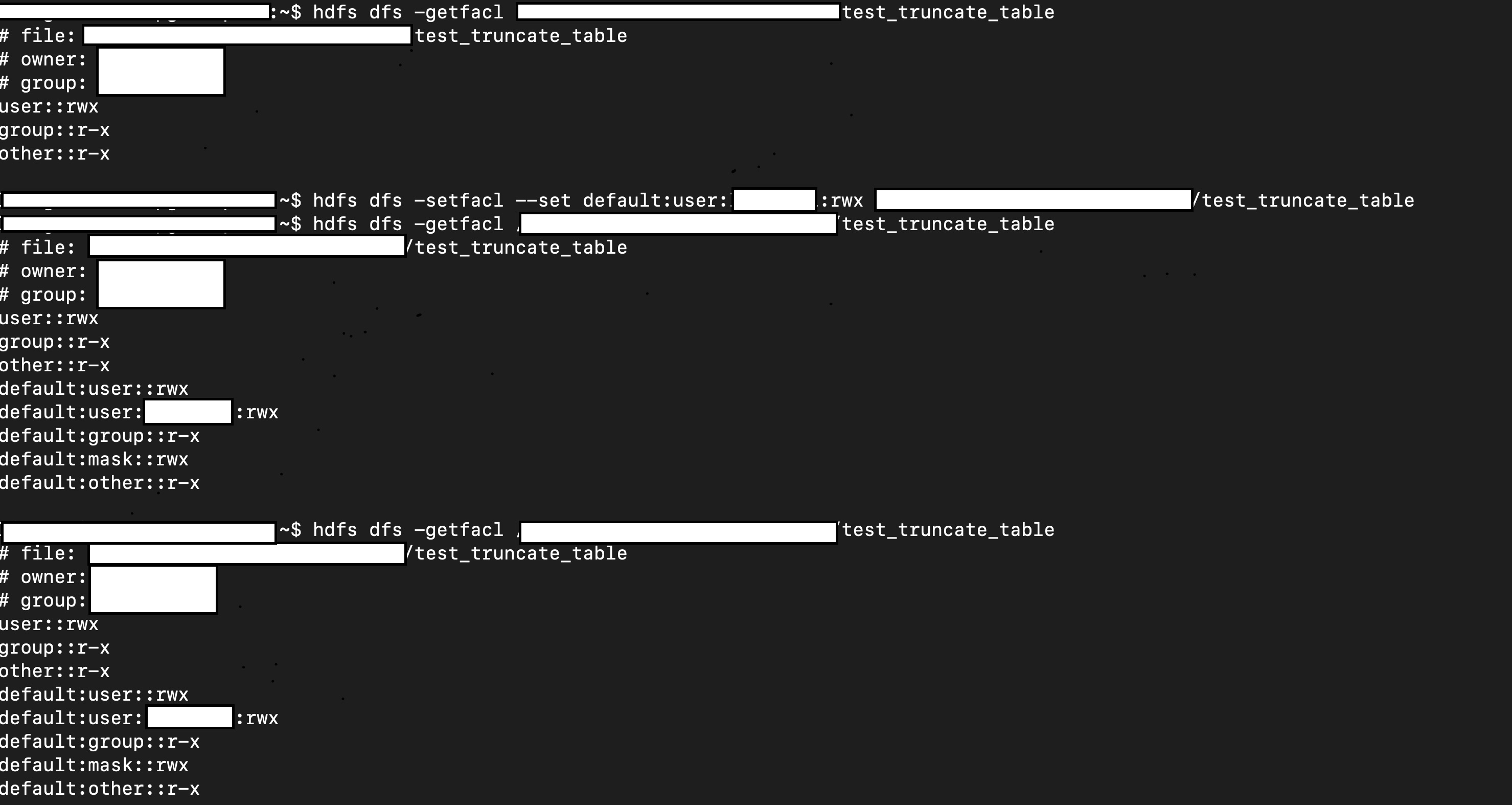

Manual test:

1. Create a table.

2. Manually change its permission/ACL.

3. Truncate the table.

4. Check the permission/ACL.

```scala
val df = Seq(1, 2, 3).toDF
df.write.mode("overwrite").saveAsTable("test.test_truncate_table")
val testTable = spark.table("test.test_truncate_table")
testTable.show()
// +-----+
// |value|
// +-----+
// |    1|
// |    2|
// |    3|
// +-----+

// hdfs dfs -setfacl ...
// hdfs dfs -getfacl ...
sql("truncate table test.test_truncate_table")
// hdfs dfs -getfacl ...
val testTable2 = spark.table("test.test_truncate_table")
testTable2.show()
// +-----+
// |value|
// +-----+
// +-----+
```

Closes #26956 from viirya/truncate-table-permission.

Lead-authored-by: Liang-Chi Hsieh <liangchi@uber.com>

Co-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>


Spark SQL

This module provides support for executing relational queries expressed in either SQL or the DataFrame/Dataset API.
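
As a rough illustration of the two styles, the sketch below runs the same filter once as SQL and once through the DataFrame API. The view name `numbers`, the app name, and the local master setting are assumptions made for this example; in `spark-shell` a `spark` session is already available and the builder lines can be skipped.

```scala
import org.apache.spark.sql.SparkSession

// Local session for the example; spark-shell already provides `spark`.
val spark = SparkSession.builder()
  .master("local[1]")
  .appName("sql-vs-dataframe")
  .getOrCreate()
import spark.implicits._

// A small single-column DataFrame, registered as a temporary view
// so that it can be queried by name from SQL.
val numbers = Seq(1, 2, 3).toDF("value")
numbers.createOrReplaceTempView("numbers")

// The same relational query, expressed in SQL and in the DataFrame API.
val viaSql = spark.sql("SELECT value FROM numbers WHERE value > 1")
val viaApi = numbers.filter($"value" > 1).select("value")

viaSql.show()
viaApi.show()
```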

Spark SQL is broken up into four subprojects:

- Catalyst (sql/catalyst) - An implementation-agnostic framework for manipulating trees of relational operators and expressions.

- Execution (sql/core) - A query planner / execution engine for translating Catalyst's logical query plans into Spark RDDs. This component also includes a new public interface, SQLContext, that allows users to execute SQL or LINQ statements against existing RDDs and Parquet files.

- Hive Support (sql/hive) - Includes extensions that allow users to write queries using a subset of HiveQL and access data from a Hive Metastore using Hive SerDes. There are also wrappers that allow users to run queries that include Hive UDFs, UDAFs, and UDTFs.

- HiveServer and CLI support (sql/hive-thriftserver) - Includes support for the SQL CLI (bin/spark-sql) and a HiveServer2 (for JDBC/ODBC) compatible server.

Running ./sql/create-docs.sh generates SQL documentation for built-in functions under sql/site.