## What changes were proposed in this pull request?

It seems we generated the PySpark API documentation with Epydoc from almost the very beginning (see 85b8f2c64f).

This fixes an actual issue:

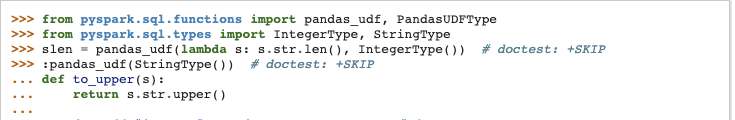

Before:

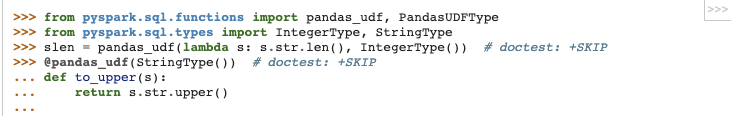

After:

This appears to be a bug in the `epytext` plugin, in the conversion between `param` and `:param` syntax. See also [Epydoc syntax](http://epydoc.sourceforge.net/manual-epytext.html).

Epydoc syntax also violates [PEP-257](https://www.python.org/dev/peps/pep-0257/), IIRC, and blocks us from enabling some rules for the doctest linter.

We should remove this legacy syntax, and I think Spark 3 is a good time to do it.
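As a rough illustration of what converting epytext markup to reST involves, here is a minimal sketch. It is not the plugin's code, and it is not how this PR made its changes (those were manual edits). The function name `epytext_to_rst`, the list of field tags, and the choice to map `L{...}` to the `:obj:` role are all assumptions made for this example.

```python
import re

# Hypothetical helper for illustration only; real docstrings need manual review,
# e.g. to choose :class:, :meth: or :func: instead of the generic :obj: role.
FIELD = re.compile(r"^([ \t]*)@(param|type|return|rtype|raise)\b", re.MULTILINE)
LINK = re.compile(r"\bL\{([^}]*)\}")
CODE = re.compile(r"\bC\{([^}]*)\}")


def epytext_to_rst(doc):
    """Naively rewrite a few epytext constructs as reST equivalents."""
    doc = FIELD.sub(r"\1:\2", doc)      # "@param x:" becomes ":param x:"
    doc = LINK.sub(r":obj:`\1`", doc)   # "L{Foo}" becomes ":obj:`Foo`"
    doc = CODE.sub(r"``\1``", doc)      # "C{foo}" becomes "``foo``"
    return doc


before = "@param filename: name\nSee L{SparkFiles} and C{get}."
print(epytext_to_rst(before))
```

A purely mechanical rewrite like this cannot pick the right cross-reference role, which is one reason to edit the docstrings by hand.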

## How was this patch tested?

Manually built the docs and checked each change.

I had to find the Epydoc syntax manually, for instance with `git grep -r "{L"`.
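The same kind of search can be sketched in Python. This is only an approximation of the manual process: the patterns below are an assumed, non-exhaustive set of epytext constructs, `find_epytext` is a hypothetical helper, and the sample text is made up for the example.

```python
import re

# Assumed, non-exhaustive patterns for leftover epytext markup.
INLINE = re.compile(r"\b[LCIB]\{[^}]*\}")  # inline markup such as L{...} or C{...}
FIELD = re.compile(r"^\s*@(param|type|return|rtype|raise)\b")  # field tags


def find_epytext(text):
    """Return (line_number, stripped_line) pairs that look like epytext."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        if INLINE.search(line) or FIELD.search(line):
            hits.append((number, line.strip()))
    return hits


# Made-up sample: two epytext lines and their reST counterparts.
sample = """\
Resolves paths to files added through
L{SparkContext.addFile}.
@param filename: name of the file
:param filename: name of the file
"""

for number, line in find_epytext(sample):
    print(number, line)
```

Decorators such as `@classmethod` are not reported, because the field pattern only matches the listed tag names.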

Closes #25060 from HyukjinKwon/SPARK-28206.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Xiangrui Meng <meng@databricks.com>

59 lines

1.8 KiB

Python

    #
    # Licensed to the Apache Software Foundation (ASF) under one or more
    # contributor license agreements. See the NOTICE file distributed with
    # this work for additional information regarding copyright ownership.
    # The ASF licenses this file to You under the Apache License, Version 2.0
    # (the "License"); you may not use this file except in compliance with
    # the License. You may obtain a copy of the License at
    #
    # http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    # limitations under the License.
    #

    import os


    __all__ = ['SparkFiles']


    class SparkFiles(object):

        """
        Resolves paths to files added through :meth:`SparkContext.addFile`.

        SparkFiles contains only classmethods; users should not create SparkFiles
        instances.
        """

        _root_directory = None
        _is_running_on_worker = False
        _sc = None

        def __init__(self):
            raise NotImplementedError("Do not construct SparkFiles objects")

        @classmethod
        def get(cls, filename):
            """
            Get the absolute path of a file added through :meth:`SparkContext.addFile`.
            """
            path = os.path.join(SparkFiles.getRootDirectory(), filename)
            return os.path.abspath(path)

        @classmethod
        def getRootDirectory(cls):
            """
            Get the root directory that contains files added through
            :meth:`SparkContext.addFile`.
            """
            if cls._is_running_on_worker:
                return cls._root_directory
            else:
                # This will have to change if we support multiple SparkContexts:
                return cls._sc._jvm.org.apache.spark.SparkFiles.getRootDirectory()