### What changes were proposed in this pull request?

Rename `QueryPlan.collectInPlanAndSubqueries` to `collectWithSubqueries`.

### Why are the changes needed?

The old name is too verbose. `QueryPlan` is internal but it's the core of catalyst and we'd better make the API name clearer before we release it.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

N/A

Closes #28092 from cloud-fan/rename.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

In the PR, I propose to add new benchmarks to `DateTimeRebaseBenchmark` for saving and loading dates/timestamps to/from ORC files. I extracted common code from the benchmark for the Parquet datasource and placed it in the methods `caseName()` and `getPath()`. I added benchmarks for saving/loading ORC dates before and after 1582-10-15 because an implementation may perform differently for dates before the Julian calendar cutover day; see #28067 as an example.

### Why are the changes needed?

To have a baseline for future optimizations of `fromJavaDate()`/`toJavaDate()` and `toJavaTimestamp()`/`fromJavaTimestamp()` in `DateTimeUtils`. The methods are used by the ORC datasource while saving/loading dates/timestamps.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running the updated benchmark `DateTimeRebaseBenchmark` via the command:

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.DateTimeRebaseBenchmark"

```

in the environment:

| Item | Description |
| ---- | ----|
| Region | us-west-2 (Oregon) |
| Instance | r3.xlarge |
| AMI | ubuntu/images/hvm-ssd/ubuntu-bionic-18.04-amd64-server-20190722.1 (ami-06f2f779464715dc5) |
| Java | OpenJDK 1.8.0_242-8u242/11.0.6+10 |

Closes #28076 from MaxGekk/rebase-benchmark-orc.

Lead-authored-by: Max Gekk <max.gekk@gmail.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to replace the following SQL configs:

1. `spark.sql.legacy.parquet.rebaseDateTime.enabled` by

- `spark.sql.legacy.parquet.rebaseDateTimeInWrite.enabled` (`false` by default). The config enables rebasing dates/timestamps while saving to Parquet files. If it is set to `true`, dates/timestamps are converted to local date-time in the Proleptic Gregorian calendar, and the extracted date-time fields are used to build a new local date-time in the hybrid calendar (Julian + Gregorian). The resulting local date-time is converted to days or microseconds since the epoch.

- `spark.sql.legacy.parquet.rebaseDateTimeInRead.enabled` (`false` by default). The config enables rebasing of dates/timestamps in reading from Parquet files.

2. `spark.sql.legacy.avro.rebaseDateTime.enabled` by

- `spark.sql.legacy.avro.rebaseDateTimeInWrite.enabled` (`false` by default). It enables dates/timestamps rebasing from Proleptic Gregorian calendar to the hybrid calendar via local date/timestamps.

- `spark.sql.legacy.avro.rebaseDateTimeInRead.enabled` (`false` by default). It enables rebasing dates/timestamps from the hybrid calendar to the Proleptic Gregorian calendar in read. The rebasing is performed by converting micros/millis/days to a local date/timestamp in the source calendar, interpreting the resulting date/timestamp in the target calendar, and getting the number of micros/millis/days since the epoch 1970-01-01 00:00:00Z.
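
The read-side rebasing described above can be sketched for days with plain JDK classes. This is a minimal illustration, not the code from the PR: the object name `LocalDateRebaseSketch` is hypothetical, only dates in the common era are handled, and the hybrid calendar is assumed to be the JDK's `GregorianCalendar` with its default 1582-10-15 cutover.

```scala
import java.time.LocalDate
import java.util.{Calendar, TimeZone}

object LocalDateRebaseSketch {
  private val MillisPerDay: Long = 24L * 60 * 60 * 1000

  // Rebases days since the epoch from the hybrid (Julian + Gregorian)
  // calendar to the Proleptic Gregorian calendar via a local date.
  def rebaseJulianToGregorianDays(days: Int): Int = {
    // 1. Interpret the day count in the source (hybrid) calendar.
    val hybridCal = new Calendar.Builder()
      .setCalendarType("gregory")
      .setTimeZone(TimeZone.getTimeZone("UTC"))
      .setInstant(days * MillisPerDay)
      .build()
    // 2. Re-interpret the same year/month/day in the target calendar.
    //    The day of month is added separately so that Julian-only dates
    //    such as 1000-02-29 roll over instead of failing.
    val localDate = LocalDate
      .of(hybridCal.get(Calendar.YEAR), hybridCal.get(Calendar.MONTH) + 1, 1)
      .plusDays(hybridCal.get(Calendar.DAY_OF_MONTH) - 1)
    // 3. Count days since 1970-01-01 in the target calendar.
    Math.toIntExact(localDate.toEpochDay)
  }
}
```

For instance, 0001-01-01 is day -719164 in the hybrid calendar and day -719162 in the Proleptic Gregorian calendar, so the sketch maps the former to the latter.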

### Why are the changes needed?

This allows loading dates/timestamps saved by Spark 2.4 and saving them to Parquet/Avro files without rebasing. It also covers the reverse use case: loading data saved by Spark 3.0 and saving it in a form that is compatible with Spark 2.4.

### Does this PR introduce any user-facing change?

Yes, users have to use new SQL configs. Old SQL configs are removed by the PR.

### How was this patch tested?

By existing test suites `AvroV1Suite`, `AvroV2Suite` and `ParquetIOSuite`.

Closes #28082 from MaxGekk/split-rebase-configs.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add SQL metrics to the AQE shuffle reader (`CustomShuffleReaderExec`)

### Why are the changes needed?

To make the AQE shuffle reader more UI-friendly.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

new test

Closes #28022 from cloud-fan/metrics.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

Added a Java UDF suggestion to the error message of the untyped Scala UDF.

### Why are the changes needed?

To help users migrate their use cases from the deprecated untyped Scala UDF to other supported UDFs.

### Does this PR introduce any user-facing change?

No. It hasn't been released.

### How was this patch tested?

Pass Jenkins.

Closes #28070 from Ngone51/spark_31010.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Create statement plans in `DataFrameWriter(V2)`, like the SQL API.

### Why are the changes needed?

It's better to leave all the resolution work to the analyzer.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

existing tests

Closes #27992 from cloud-fan/statement.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to replace the current implementation of the `rebaseGregorianToJulianDays()` and `rebaseJulianToGregorianDays()` functions in `DateTimeUtils` with a new one based on the fact that the difference between the Proleptic Gregorian and the hybrid (Julian+Gregorian) calendars changes only 14 times over the entire supported range of valid dates `[0001-01-01, 9999-12-31]`:

| date | Proleptic Greg. days | Hybrid (Julian+Greg) days | diff |
| ---- | ---- | ---- | ---- |
| 0001-01-01 | -719162 | -719164 | -2 |
| 0100-03-01 | -682944 | -682945 | -1 |
| 0200-03-01 | -646420 | -646420 | 0 |
| 0300-03-01 | -609896 | -609895 | 1 |
| 0500-03-01 | -536847 | -536845 | 2 |
| 0600-03-01 | -500323 | -500320 | 3 |
| 0700-03-01 | -463799 | -463795 | 4 |
| 0900-03-01 | -390750 | -390745 | 5 |
| 1000-03-01 | -354226 | -354220 | 6 |
| 1100-03-01 | -317702 | -317695 | 7 |
| 1300-03-01 | -244653 | -244645 | 8 |
| 1400-03-01 | -208129 | -208120 | 9 |
| 1500-03-01 | -171605 | -171595 | 10 |
| 1582-10-15 | -141427 | -141427 | 0 |

For the given days since the epoch, the proposed implementation finds the range of days to which the input belongs and adds that range's diff in days between the calendars to the input. The result is the rebased days since the epoch in the target calendar.

For example, suppose we need to rebase -650000 days from the Proleptic Gregorian calendar to the hybrid calendar. The input falls into the bucket [-682944, -646420), and the diff associated with that range is -1. To get the rebased days in the Julian calendar, we add -1 to -650000, and the result is -650001.
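
The lookup can be sketched as follows. This is a minimal sketch built only from the table above, not the code from the PR: the object name `RebaseSketch` and the array names are illustrative, the search is a plain linear scan, and inputs earlier than the first switch day simply reuse the first diff.

```scala
object RebaseSketch {
  // Proleptic Gregorian days since the epoch at which the diff changes
  // (second column of the table above).
  private val gregSwitchDays: Array[Int] = Array(
    -719162, -682944, -646420, -609896, -536847, -500323, -463799,
    -390750, -354226, -317702, -244653, -208129, -171605, -141427)

  // Diff (hybrid days minus Proleptic Gregorian days) that applies from
  // the corresponding switch day onwards (last column of the table).
  private val gregToJulianDiffs: Array[Int] =
    Array(-2, -1, 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 0)

  def rebaseGregorianToJulianDays(days: Int): Int = {
    // Walk back from the last bucket to the one whose start is <= days.
    var i = gregSwitchDays.length - 1
    while (i > 0 && days < gregSwitchDays(i)) i -= 1
    days + gregToJulianDiffs(i)
  }
}
```

With these arrays, `RebaseSketch.rebaseGregorianToJulianDays(-650000)` lands in the bucket starting at -682944 and returns -650001, matching the worked example.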

### Why are the changes needed?

To make dates rebasing faster.

### Does this PR introduce any user-facing change?

No, the results should be the same for the valid range of the `DATE` type `[0001-01-01, 9999-12-31]`.

### How was this patch tested?

- Added 2 tests to `DateTimeUtilsSuite` for the `rebaseGregorianToJulianDays()` and `rebaseJulianToGregorianDays()` functions. The tests check that results of old and new implementation (optimized version) are the same for all supported dates.

- Re-run `DateTimeRebaseBenchmark` on:

| Item | Description |
| ---- | ----|
| Region | us-west-2 (Oregon) |
| Instance | r3.xlarge |
| AMI | ubuntu/images/hvm-ssd/ubuntu-bionic-18.04-amd64-server-20190722.1 (ami-06f2f779464715dc5) |
| Java | OpenJDK8/11 |

Closes #28067 from MaxGekk/optimize-rebasing.

Lead-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

A small documentation change to clarify that the `rand()` function produces values in `[0.0, 1.0)`.

### Why are the changes needed?

`rand()` uses `Rand()`, which generates values in [0, 1) ([documented here](a1dbcd13a3/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/expressions/randomExpressions.scala (L71))). The existing documentation suggests that 1.0 is a possible value returned by `rand` (i.e., for a distribution written as `X ~ U(a, b)`, x can be a or b, so `U[0.0, 1.0]` suggests the returned value could include 1.0).

### Does this PR introduce any user-facing change?

Only documentation changes.

### How was this patch tested?

Documentation changes only.

Closes #28071 from Smeb/master.

Authored-by: Ben Ryves <benjamin.ryves@getyourguide.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

In the PR, I propose to add a new benchmark `DateTimeRebaseBenchmark` which measures the performance of rebasing dates/timestamps from/to the hybrid calendar (Julian+Gregorian) to/from the Proleptic Gregorian calendar:

1. In write, it separately saves dates and timestamps before and after the year 1582, with and without rebasing.

2. In read, it loads the previously saved Parquet files with the vectorized reader and with the regular reader.

Here is the summary of benchmarking:

- Saving timestamps is **~6 times slower**

- Loading timestamps w/ vectorized **off** is **~4 times slower**

- Loading timestamps w/ vectorized **on** is **~10 times slower**

### Why are the changes needed?

To know the impact of date-time rebasing introduced by #27915, #27953, #27807.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Run the `DateTimeRebaseBenchmark` benchmark using Amazon EC2:

| Item | Description |
| ---- | ----|
| Region | us-west-2 (Oregon) |
| Instance | r3.xlarge |
| AMI | ubuntu/images/hvm-ssd/ubuntu-bionic-18.04-amd64-server-20190722.1 (ami-06f2f779464715dc5) |
| Java | OpenJDK8/11 |

Closes #28057 from MaxGekk/rebase-bechmark.

Lead-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

`DataFrameStatFunctions` now works correctly with fully qualified column name (Table.Column syntax) by properly resolving the name instead of relying on field names from schema, notably:

* `approxQuantile`

* `freqItems`

* `cov`

* `corr`

(other functions from `DataFrameStatFunctions` already work correctly).

See code examples below.

### Why are the changes needed?

With the current implementation, some stat functions are impossible to use when joining datasets with similar column names.

### Does this PR introduce any user-facing change?

Yes. Before the change, the following code would fail with `AnalysisException`.

```scala

scala> val df1 = sc.parallelize(0 to 10).toDF("num").as("table1")

df1: org.apache.spark.sql.Dataset[org.apache.spark.sql.Row] = [num: int]

scala> val df2 = sc.parallelize(0 to 10).toDF("num").as("table2")

df2: org.apache.spark.sql.Dataset[org.apache.spark.sql.Row] = [num: int]

scala> val dfx = df2.crossJoin(df1)

dfx: org.apache.spark.sql.DataFrame = [num: int, num: int]

scala> dfx.stat.approxQuantile("table1.num", Array(0.1), 0.0)

res0: Array[Double] = Array(1.0)

scala> dfx.stat.corr("table1.num", "table2.num")

res1: Double = 1.0

scala> dfx.stat.cov("table1.num", "table2.num")

res2: Double = 11.0

scala> dfx.stat.freqItems(Array("table1.num", "table2.num"))

res3: org.apache.spark.sql.DataFrame = [table1.num_freqItems: array<int>, table2.num_freqItems: array<int>]

```

### How was this patch tested?

Corresponding unit tests are added to `DataFrameStatSuite.scala` (marked as "SPARK-30532").

Closes #27916 from kachayev/fix-spark-30532.

Authored-by: Oleksii Kachaiev <kachayev@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to update the doc for the `timeZone` option in JSON/CSV datasources and for the `tz` parameter of the `from_utc_timestamp()`/`to_utc_timestamp()` functions, and to restrict format of config's values to 2 forms:

1. Geographical regions, such as `America/Los_Angeles`.

2. Fixed offsets - a fully resolved offset from UTC. For example, `-08:00`.

### Why are the changes needed?

Other formats, such as three-letter time zone IDs, are ambiguous and depend on the locale. For example, `CST` could be U.S. `Central Standard Time` or `China Standard Time`. Such formats have already been deprecated in the JDK, see [Three-letter time zone IDs](https://docs.oracle.com/javase/8/docs/api/java/util/TimeZone.html).
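
As a small illustration with plain `java.time` (not Spark's own parsing code; the object name `TimeZoneFormsSketch` is hypothetical), both recommended forms parse directly, while the JDK's legacy short-ID table has to pick a single meaning for `CST`:

```scala
import java.time.ZoneId

object TimeZoneFormsSketch {
  // Form 1: a geographical region ID.
  val region: ZoneId = ZoneId.of("America/Los_Angeles")

  // Form 2: a fully resolved fixed offset from UTC.
  val fixedOffset: ZoneId = ZoneId.of("-08:00")

  // The JDK's legacy mapping resolves the ambiguous `CST` to one region only.
  val legacyCst: String = ZoneId.SHORT_IDS.get("CST")
}
```

Here `legacyCst` is `America/Chicago`, so a user who meant China Standard Time would silently get the wrong zone when relying on the three-letter ID.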

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running `./dev/scalastyle`, and manual testing.

Closes #28051 from MaxGekk/doc-time-zone-option.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

```sql

scala> spark.sql(" select * from values(1), (2) t(key) where key in (select 1 as key where 1=0)").queryExecution

res15: org.apache.spark.sql.execution.QueryExecution =

== Parsed Logical Plan ==

'Project [*]

+- 'Filter 'key IN (list#39 [])

: +- Project [1 AS key#38]

: +- Filter (1 = 0)

: +- OneRowRelation

+- 'SubqueryAlias t

+- 'UnresolvedInlineTable [key], [List(1), List(2)]

== Analyzed Logical Plan ==

key: int

Project [key#40]

+- Filter key#40 IN (list#39 [])

: +- Project [1 AS key#38]

: +- Filter (1 = 0)

: +- OneRowRelation

+- SubqueryAlias t

+- LocalRelation [key#40]

== Optimized Logical Plan ==

Join LeftSemi, (key#40 = key#38)

:- LocalRelation [key#40]

+- LocalRelation <empty>, [key#38]

== Physical Plan ==

*(1) BroadcastHashJoin [key#40], [key#38], LeftSemi, BuildRight

:- *(1) LocalTableScan [key#40]

+- Br...

```

`LocalRelation <empty>` should be able to propagate after subqueries are lifted up to joins.

### Why are the changes needed?

optimize query

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

add new tests

Closes #28043 from yaooqinn/SPARK-31280.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Based on the discussion in the mailing list [[Proposal] Modification to Spark's Semantic Versioning Policy](http://apache-spark-developers-list.1001551.n3.nabble.com/Proposal-Modification-to-Spark-s-Semantic-Versioning-Policy-td28938.html), this PR adds back the following APIs, whose maintenance costs are relatively small.

- functions.toDegrees/toRadians

- functions.approxCountDistinct

- functions.monotonicallyIncreasingId

- Column.!==

- Dataset.explode

- Dataset.registerTempTable

- SQLContext.getOrCreate, setActive, clearActive, constructors

Below are the other APIs removed in the original PR [https://issues.apache.org/jira/browse/SPARK-25908] that are not added back in this PR:

- Remove some AccumulableInfo .apply() methods

- Remove non-label-specific multiclass precision/recall/fScore in favor of accuracy

- Remove unused Python StorageLevel constants

- Remove unused multiclass option in libsvm parsing

- Remove references to deprecated spark configs like spark.yarn.am.port

- Remove TaskContext.isRunningLocally

- Remove ShuffleMetrics.shuffle* methods

- Remove BaseReadWrite.context in favor of session

### Why are the changes needed?

Avoid breaking the APIs that are commonly used.

### Does this PR introduce any user-facing change?

Adding back the APIs that were removed in the 3.0 branch does not introduce user-facing changes, because Spark 3.0 has not been released.

### How was this patch tested?

Added a new test suite for these APIs.

Author: gatorsmile <gatorsmile@gmail.com>

Author: yi.wu <yi.wu@databricks.com>

Closes #27821 from gatorsmile/addAPIBackV2.

### What changes were proposed in this pull request?

SPARK-25387 avoids an NPE for bad CSV input, but when reading bad CSV input with `columnNameCorruptRecord` specified, `getCurrentInput` is called and it still throws an NPE.

### Why are the changes needed?

Bug fix.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Add a test.

Closes #28029 from wzhfy/corrupt_column_npe.

Authored-by: Zhenhua Wang <wzh_zju@163.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR replaces the method calls of `toSet.toSeq` with `distinct`.

### Why are the changes needed?

`toSet.toSeq` is used to make the elements unique, but it is a bit verbose. Using `distinct` instead is easier to understand and improves readability.
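
A minimal comparison of the two idioms on a plain Scala collection (the object and value names are illustrative only):

```scala
object DistinctSketch {
  val xs: Seq[Int] = Seq(3, 1, 3, 2, 1)

  // Old idiom: goes through an intermediate Set, whose iteration order
  // is not guaranteed to follow the input order.
  val viaSet: Seq[Int] = xs.toSet.toSeq

  // New idiom: removes duplicates and keeps the first occurrence of
  // each element in its original position.
  val viaDistinct: Seq[Int] = xs.distinct
}
```

Both results contain the same elements. `distinct` additionally keeps first-occurrence order, which is worth keeping in mind wherever the surrounding code is order-sensitive.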

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Tested with the existing unit tests and found no problem.

Closes #28062 from sekikn/SPARK-31292.

Authored-by: Kengo Seki <sekikn@apache.org>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This PR aims to copy a test resource file to a local file in `OrcTest` suite before reading it.

### Why are the changes needed?

SPARK-31238 and SPARK-31284 added test cases that access the resource file in the `sql/core` module from the `sql/hive` module. In the **Maven** test environment, this causes a failure.

```

- SPARK-31238: compatibility with Spark 2.4 in reading dates *** FAILED ***

java.lang.IllegalArgumentException: java.net.URISyntaxException: Relative path in absolute URI:

jar:file:/home/jenkins/workspace/spark-master-test-maven-hadoop-3.2-hive-2.3-jdk-11/sql/core/target/spark-sql_2.12-3.1.0-SNAPSHOT-tests.jar!/test-data/before_1582_date_v2_4.snappy.orc

```

```

- SPARK-31284: compatibility with Spark 2.4 in reading timestamps *** FAILED ***

java.lang.IllegalArgumentException: java.net.URISyntaxException: Relative path in absolute URI:

jar:file:/home/jenkins/workspace/spark-master-test-maven-hadoop-3.2-hive-2.3/sql/core/target/spark-sql_2.12-3.1.0-SNAPSHOT-tests.jar!/test-data/before_1582_ts_v2_4.snappy.orc

```

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Pass the Jenkins with Maven.

Closes #28059 from dongjoon-hyun/SPARK-31238.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This patch proposes to prune unnecessary nested fields from Generate which has no Project on top of it.

### Why are the changes needed?

In the Optimizer, we can prune nested columns from Project(projectList, Generate). However, unnecessary columns could still be read in Generate if there is no Project on top of it. We should prune them too.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Unit test.

Closes #27517 from viirya/SPARK-29721-2.

Lead-authored-by: Liang-Chi Hsieh <liangchi@uber.com>

Co-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

In the PR, I propose 2 tests to check that rebasing of timestamps from/to the hybrid calendar (Julian + Gregorian) to/from Proleptic Gregorian calendar works correctly.

1. The test `compatibility with Spark 2.4 in reading timestamps` loads an ORC file saved by Spark 2.4.5 via:

```shell

$ export TZ="America/Los_Angeles"

```

```scala

scala> spark.conf.set("spark.sql.session.timeZone", "America/Los_Angeles")

scala> val df = Seq("1001-01-01 01:02:03.123456").toDF("tsS").select($"tsS".cast("timestamp").as("ts"))

df: org.apache.spark.sql.DataFrame = [ts: timestamp]

scala> df.write.orc("/Users/maxim/tmp/before_1582/2_4_5_ts_orc")

scala> spark.read.orc("/Users/maxim/tmp/before_1582/2_4_5_ts_orc").show(false)

+--------------------------+

|ts |

+--------------------------+

|1001-01-01 01:02:03.123456|

+--------------------------+

```

2. The test `rebasing timestamps in write` is a round-trip test. Since the previous test confirms correct rebasing of timestamps in read, this test should pass only if rebasing works correctly in write.

### Why are the changes needed?

To guarantee that rebasing works correctly for timestamps in ORC datasource.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running `OrcSourceSuite` for Hive 1.2 and 2.3 via the commands:

```

$ build/sbt -Phive-2.3 "test:testOnly *OrcSourceSuite"

```

and

```

$ build/sbt -Phive-1.2 "test:testOnly *OrcSourceSuite"

```

Closes #28047 from MaxGekk/rebase-ts-orc-test.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

In the PR, I propose to change the types of `DateTimeTestUtils` values and functions by replacing `java.util.TimeZone` with `java.time.ZoneId`. In particular:

1. Type of `ALL_TIMEZONES` is changed to `Seq[ZoneId]`.

2. Remove `val outstandingTimezones: Seq[TimeZone]`.

3. Change the type of the time zone parameter in `withDefaultTimeZone` to `ZoneId`.

4. Modify affected test suites.

### Why are the changes needed?

Currently, Spark SQL's date-time expressions and functions have already been ported to the Java 8 time API, but tests still use the old time APIs. In particular, `DateTimeTestUtils` exposes functions that accept only `TimeZone` instances. This is inconvenient and CPU-consuming because `TimeZone` instances need to be converted to `ZoneId` instances via strings (zone ids).

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By affected test suites executed by Jenkins builds.

Closes #28033 from MaxGekk/with-default-time-zone.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

`OuterReference` is a `LeafExpression`, so its children are `Nil`, which makes its SQL representation always `outer()`. This makes our explain command and error messages unclear when an `OuterReference` exists.

e.g.

```scala

org.apache.spark.sql.AnalysisException:

Aggregate/Window/Generate expressions are not valid in where clause of the query.

Expression in where clause: [(in.`value` = max(outer()))]

Invalid expressions: [max(outer())];;

```

This PR overrides its `sql` method to combine its `prettyName` with the `sql` method of its single argument `e`.
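
The idea can be sketched on a stripped-down expression tree. The types below (`Expr`, `Attr`, `OuterRef`) are hypothetical stand-ins rather than Catalyst's real classes, and the exact string format is an assumption made for illustration:

```scala
object OuterReferenceSketch {
  // Hypothetical, minimal expression hierarchy; not Catalyst's API.
  sealed trait Expr { def sql: String }

  final case class Attr(name: String) extends Expr {
    def sql: String = s"`$name`"
  }

  final case class OuterRef(e: Expr) extends Expr {
    def prettyName: String = "outer"
    // Render the wrapped expression instead of an empty argument list.
    def sql: String = s"$prettyName(${e.sql})"
  }
}
```

In this sketch, `OuterRef(Attr("value")).sql` renders the wrapped attribute inside `outer(...)` rather than printing a bare `outer()`.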

### Why are the changes needed?

To improve the error message.

### Does this PR introduce any user-facing change?

Yes, the error message caused by `OuterReference` has changed.

### How was this patch tested?

Modified the unit test results.

Closes #27985 from yaooqinn/SPARK-31225.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Based on the discussion in the mailing list [[Proposal] Modification to Spark's Semantic Versioning Policy](http://apache-spark-developers-list.1001551.n3.nabble.com/Proposal-Modification-to-Spark-s-Semantic-Versioning-Policy-td28938.html), this PR adds back the following APIs, whose maintenance costs are relatively small.

- HiveContext

- createExternalTable APIs

### Why are the changes needed?

Avoid breaking the APIs that are commonly used.

### Does this PR introduce any user-facing change?

Adding back the APIs that were removed in the 3.0 branch does not introduce user-facing changes, because Spark 3.0 has not been released.

### How was this patch tested?

Added a new test suite for the createExternalTable APIs.

Closes #27815 from gatorsmile/addAPIsBack.

Lead-authored-by: gatorsmile <gatorsmile@gmail.com>

Co-authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

Based on the discussion in the mailing list [[Proposal] Modification to Spark's Semantic Versioning Policy](http://apache-spark-developers-list.1001551.n3.nabble.com/Proposal-Modification-to-Spark-s-Semantic-Versioning-Policy-td28938.html), this PR adds back the following APIs, whose maintenance costs are relatively small.

- SQLContext.applySchema

- SQLContext.parquetFile

- SQLContext.jsonFile

- SQLContext.jsonRDD

- SQLContext.load

- SQLContext.jdbc

### Why are the changes needed?

Avoid breaking the APIs that are commonly used.

### Does this PR introduce any user-facing change?

Adding back the APIs that were removed in the 3.0 branch does not introduce user-facing changes, because Spark 3.0 has not been released.

### How was this patch tested?

The existing tests.

Closes #27839 from gatorsmile/addAPIBackV3.

Lead-authored-by: gatorsmile <gatorsmile@gmail.com>

Co-authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

1. `DataSourceStrategy.scala` is extended to create `org.apache.spark.sql.sources.Filter` from nested expressions.

2. Translation from nested `org.apache.spark.sql.sources.Filter` to `org.apache.parquet.filter2.predicate.FilterPredicate` is implemented to support nested predicate pushdown for Parquet.

### Why are the changes needed?

Better performance for handling nested predicate pushdown.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

New tests are added.

Closes #27728 from dbtsai/SPARK-17636.

Authored-by: DB Tsai <d_tsai@apple.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

`HiveResult` performs some conversions for commands to be compatible with Hive output, e.g.:

```

// If it is a describe command for a Hive table, we want to have the output format be similar with Hive.

case ExecutedCommandExec(_: DescribeCommandBase) =>

...

// SHOW TABLES in Hive only output table names, while ours output database, table name, isTemp.

case command @ ExecutedCommandExec(s: ShowTablesCommand) if !s.isExtended =>

```

This conversion is needed for DatasourceV2 commands as well and this PR proposes to add the conversion for v2 commands `SHOW TABLES` and `DESCRIBE TABLE`.

### Why are the changes needed?

This is a bug where conversion is not applied to v2 commands.

### Does this PR introduce any user-facing change?

Yes, now the outputs for v2 commands `SHOW TABLES` and `DESCRIBE TABLE` are compatible with Hive output.

For example, with a table created as:

```

CREATE TABLE testcat.ns.tbl (id bigint COMMENT 'col1') USING foo

```

The output of `SHOW TABLES` has changed from

```

ns table

```

to

```

table

```

And the output of `DESCRIBE TABLE` has changed from

```

id bigint col1

# Partitioning

Not partitioned

```

to

```

id bigint col1

# Partitioning

Not partitioned

```

### How was this patch tested?

Added unit tests.

Closes #28004 from imback82/hive_result.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the Spark CLI, we create a Hive `CliSessionState`, and it does not load the `hive-site.xml`. So the configurations in `hive-site.xml` will not take effect as they do in other Spark-Hive integration apps.

Also, the warehouse directory is not correctly picked. If the `default` database does not exist, the `CliSessionState` will create one the first time it talks to the metastore. The `Location` of the default DB will be neither the value of `spark.sql.warehouse.dir` nor the user-specified value of `hive.metastore.warehouse.dir`, but the default value of `hive.metastore.warehouse.dir`, which will always be `/user/hive/warehouse`.

This PR fixes the CliSuite failure with the hive-1.2 profile in https://github.com/apache/spark/pull/27933.

In https://github.com/apache/spark/pull/27933, we fixed the issue in JIRA by deciding the warehouse dir using all properties from the Spark conf and Hadoop conf, but properties from `--hiveconf` were not included; they are applied to the `CliSessionState` instance after it is initialized. When this command-line option key is `hive.metastore.warehouse.dir`, the actual warehouse dir is overridden. Because the logic in Hive for creating the non-existing default database changed, that test passed with `Hive 2.3.6` but failed with `1.2`. So in this PR, Hadoop/Hive configurations are ordered by:

`spark.hive.xxx > spark.hadoop.xxx > --hiveconf xxx > hive-site.xml` through `SharedState.loadHiveConfFile` before the session state starts.
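
The ordering can be pictured as a layered map merge in which later layers win. This is only a sketch of the precedence rule, not the code in the PR: the object and parameter names are hypothetical, and keys are assumed to have already been stripped of their `spark.hive.` / `spark.hadoop.` prefixes.

```scala
object HiveConfPrecedenceSketch {
  // Merges the four sources from lowest to highest precedence.
  // `++` on immutable Maps keeps the right-hand value on key conflicts.
  def resolve(
      hiveSite: Map[String, String],
      hiveconfOptions: Map[String, String],
      sparkHadoopConf: Map[String, String],
      sparkHiveConf: Map[String, String]): Map[String, String] =
    hiveSite ++ hiveconfOptions ++ sparkHadoopConf ++ sparkHiveConf
}
```

For example, a `hive.metastore.warehouse.dir` value passed via `--hiveconf` overrides the one from `hive-site.xml`, and is itself overridden by the same key set through either Spark prefix.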

### Why are the changes needed?

Bugfix for Spark SQL CLI to pick right confs

### Does this PR introduce any user-facing change?

Yes:

1. The non-existent default database will be created in the location specified by the users via `spark.sql.warehouse.dir` or `hive.metastore.warehouse.dir`, or in the default value of `spark.sql.warehouse.dir` if neither is specified.

2. Configurations from `hive-site.xml` will not override command-line options or the properties defined with the `spark.hadoop.` or `spark.hive.` prefix in the Spark conf.

### How was this patch tested?

Added CLI unit tests.

Closes #27969 from yaooqinn/SPARK-31170-2.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR is related to https://github.com/apache/spark/pull/27481.

If test case A uses `--IMPORT` to import test case B, which contains bracketed comments, the golden files cannot display the bracketed comments correctly.

The content of `nested-comments.sql` is shown below:

```

-- This test case just used to test imported bracketed comments.

-- the first case of bracketed comment

--QUERY-DELIMITER-START

/* This is the first example of bracketed comment.

SELECT 'ommented out content' AS first;

*/

SELECT 'selected content' AS first;

--QUERY-DELIMITER-END

```

The test case `comments.sql` imports `nested-comments.sql` below:

`--IMPORT nested-comments.sql`

Before this PR, the output will be:

```

-- !query

/* This is the first example of bracketed comment.

SELECT 'ommented out content' AS first

-- !query schema

struct<>

-- !query output

org.apache.spark.sql.catalyst.parser.ParseException

mismatched input '/' expecting {'(', 'ADD', 'ALTER', 'ANALYZE', 'CACHE', 'CLEAR', 'COMMENT', 'COMMIT', 'CREATE', 'DELETE', 'DESC', 'DESCRIBE', 'DFS', 'DROP', 'EXPLAIN', 'EXPORT', 'FROM', 'GRANT', 'IMPORT', 'INSERT', 'LIST', 'LOAD', 'LOCK', 'MAP', 'MERGE', 'MSCK', 'REDUCE', 'REFRESH', 'REPLACE', 'RESET', 'REVOKE', 'ROLLBACK', 'SELECT', 'SET', 'SHOW', 'START', 'TABLE', 'TRUNCATE', 'UNCACHE', 'UNLOCK', 'UPDATE', 'USE', 'VALUES', 'WITH'}(line 1, pos 0)

== SQL ==

/* This is the first example of bracketed comment.

^^^

SELECT 'ommented out content' AS first

-- !query

*/

SELECT 'selected content' AS first

-- !query schema

struct<>

-- !query output

org.apache.spark.sql.catalyst.parser.ParseException

extraneous input '*/' expecting {'(', 'ADD', 'ALTER', 'ANALYZE', 'CACHE', 'CLEAR', 'COMMENT', 'COMMIT', 'CREATE', 'DELETE', 'DESC', 'DESCRIBE', 'DFS', 'DROP', 'EXPLAIN', 'EXPORT', 'FROM', 'GRANT', 'IMPORT', 'INSERT', 'LIST', 'LOAD', 'LOCK', 'MAP', 'MERGE', 'MSCK', 'REDUCE', 'REFRESH', 'REPLACE', 'RESET', 'REVOKE', 'ROLLBACK', 'SELECT', 'SET', 'SHOW', 'START', 'TABLE', 'TRUNCATE', 'UNCACHE', 'UNLOCK', 'UPDATE', 'USE', 'VALUES', 'WITH'}(line 1, pos 0)

== SQL ==

*/

^^^

SELECT 'selected content' AS first

```

After this PR, the output will be:

```

-- !query

/* This is the first example of bracketed comment.

SELECT 'ommented out content' AS first;

*/

SELECT 'selected content' AS first

-- !query schema

struct<first:string>

-- !query output

selected content

```

### Why are the changes needed?

Golden files can't display the bracketed comments in imported test cases.

### Does this PR introduce any user-facing change?

'No'.

### How was this patch tested?

New UT.

Closes #28018 from beliefer/fix-bug-tests-imported-bracketed-comments.

Authored-by: beliefer <beliefer@163.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This PR (SPARK-31238) does the following:

1. Modified the ORC Vectorized Reader, in particular, OrcColumnVector v1.2 and v2.3. After the changes, it uses `DateTimeUtils.rebaseJulianToGregorianDays()` added by https://github.com/apache/spark/pull/27915. The method rebases days from the hybrid calendar (Julian + Gregorian) to the Proleptic Gregorian calendar. It builds a local date in the original calendar, extracts the date fields `year`, `month` and `day` from the local date, and builds another local date in the target calendar. After that, it calculates the days since the epoch `1970-01-01` for the resulting local date.

2. Introduced rebasing of dates while saving ORC files. In particular, I modified `OrcShimUtils.getDateWritable` v1.2 and v2.3 to return `DaysWritable` instead of Hive's `DateWritable`. The `DaysWritable` class was added by the PR https://github.com/apache/spark/pull/27890 (and fixed by https://github.com/apache/spark/pull/27962). I moved `DaysWritable` from `sql/hive` to `sql/core` to reuse it in the ORC datasource.
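
The rebasing steps described in item 1 can be sketched with the JDK alone. This is a simplified illustration, not the code in `DateTimeUtils`: the object name is hypothetical, only common-era dates are handled, and a hybrid-calendar date that does not exist in the Proleptic Gregorian calendar (for example, 1500-02-29) makes `LocalDate.of` throw.

```scala
import java.time.LocalDate
import java.util.{Calendar, GregorianCalendar, TimeZone}

object RebaseDaysSketch {
  private val MillisPerDay = 24L * 60 * 60 * 1000

  // Days since 1970-01-01 in the hybrid (Julian + Gregorian) calendar
  // -> days since 1970-01-01 in the Proleptic Gregorian calendar.
  def rebaseJulianToGregorianDays(days: Int): Int = {
    // java.util.GregorianCalendar is a hybrid calendar whose default
    // cutover from Julian to Gregorian is 1582-10-15.
    val hybrid = new GregorianCalendar(TimeZone.getTimeZone("UTC"))
    hybrid.clear()
    hybrid.setTimeInMillis(days * MillisPerDay)
    // Reinterpret the same year/month/day in the Proleptic Gregorian calendar.
    val local = LocalDate.of(
      hybrid.get(Calendar.YEAR),
      hybrid.get(Calendar.MONTH) + 1, // Calendar months are 0-based
      hybrid.get(Calendar.DAY_OF_MONTH))
    Math.toIntExact(local.toEpochDay)
  }

  def main(args: Array[String]): Unit = {
    // The day number that a Proleptic Gregorian reader shows as 1200-01-08.
    val stored = Math.toIntExact(LocalDate.of(1200, 1, 8).toEpochDay)
    val rebased = rebaseJulianToGregorianDays(stored)
    println(LocalDate.ofEpochDay(rebased.toLong)) // prints 1200-01-01
  }
}
```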

### Why are the changes needed?

For backward compatibility with Spark 2.4 and earlier versions. The changes allow users to read dates/timestamps saved by previous versions and get the same result.

### Does this PR introduce any user-facing change?

Yes. Before the changes, loading the date `1200-01-01` saved by Spark 2.4.5 returns the following:

```scala

scala> spark.read.orc("/Users/maxim/tmp/before_1582/2_4_5_date_orc").show(false)

+----------+

|dt        |

+----------+

|1200-01-08|

+----------+

```

After the changes:

```scala

scala> spark.read.orc("/Users/maxim/tmp/before_1582/2_4_5_date_orc").show(false)

+----------+

|dt        |

+----------+

|1200-01-01|

+----------+

```

### How was this patch tested?

- By running `OrcSourceSuite` and `HiveOrcSourceSuite`.

- Add new test `SPARK-31238: compatibility with Spark 2.4 in reading dates` to `OrcSuite` which reads an ORC file saved by Spark 2.4.5 via the commands:

```shell

$ export TZ="America/Los_Angeles"

```

```scala

scala> sql("select cast('1200-01-01' as date) dt").write.mode("overwrite").orc("/Users/maxim/tmp/before_1582/2_4_5_date_orc")

scala> spark.read.orc("/Users/maxim/tmp/before_1582/2_4_5_date_orc").show(false)

+----------+

|dt        |

+----------+

|1200-01-01|

+----------+

```

- Add round trip test `SPARK-31238: rebasing dates in write`. The test `SPARK-31238: compatibility with Spark 2.4 in reading dates` confirms rebasing in read. So, we can check rebasing in write.

Closes #28016 from MaxGekk/rebase-date-orc.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Skew join handling comes with an overhead: we need to read some data repeatedly. We should treat a partition as skewed if it's large enough so that it's beneficial to do so.

Currently the size threshold is the advisory partition size, which is 64 MB by default. This is not large enough for the skewed partition size threshold.

This PR adds a new config for the threshold and sets its default value to 256 MB.
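
A rough sketch of how such a threshold could gate skew detection is shown below. Only the 64 MB and 256 MB defaults come from the description above; the names, and the assumption that a partition must also exceed the median size by some factor, are hypothetical.

```scala
object SkewThresholdSketch {
  val MB: Long = 1024L * 1024L

  // Hypothetical constants mirroring the defaults mentioned above.
  val advisoryPartitionSize: Long = 64 * MB
  val skewedPartitionThreshold: Long = 256 * MB

  // Assumption: a partition counts as skewed only if it is much larger than
  // the median partition AND larger than the dedicated threshold.
  def isSkewed(size: Long, medianSize: Long, factor: Int): Boolean =
    size > medianSize * factor && size > skewedPartitionThreshold

  def main(args: Array[String]): Unit = {
    // Ten times the median, but under 256 MB: not worth the repeated reads.
    println(isSkewed(100 * MB, 10 * MB, 5)) // prints false
    println(isSkewed(300 * MB, 10 * MB, 5)) // prints true
  }
}
```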

### Why are the changes needed?

Avoid skew join handling that may introduce a perf regression.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

existing tests

Closes #27967 from cloud-fan/aqe.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Make `mergeSparkConf` in `WithTestConf` respect `spark.sql.legacy.sessionInitWithConfigDefaults`.

### Why are the changes needed?

Without the fix, a conf specified by `withSQLConf` can be reverted to its original value in a cloned SparkSession. For example, the test below fails without the fix:

```

withSQLConf(SQLConf.CODEGEN_FALLBACK.key -> "true") {
  val cloned = spark.cloneSession()
  SparkSession.setActiveSession(cloned)
  assert(SQLConf.get.getConf(SQLConf.CODEGEN_FALLBACK) === true)
}

```

So we should fix it just as #24540 did before.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Added tests.

Closes #28014 from Ngone51/sparksession_clone.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to define `timestampFormatter`, `dateFormatter` and `zoneId` as methods of the `HiveResult` object. This should guarantee that the formatters pick up the current session time zone in `toHiveString()`.

### Why are the changes needed?

Currently, date/timestamp formatters in `HiveResult.toHiveString` are initialized once on instantiation of the `HiveResult` object, and pick up the session time zone at that moment. If the session's time zone is changed, the formatters still use the previous one.
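
The difference between a `val` that is evaluated once and a method that is evaluated on every call can be sketched as follows. The mutable `sessionTimeZone` variable is a hypothetical stand-in for the session conf, and none of the names below are taken from `HiveResult`.

```scala
import java.time.ZoneId

object FormatterInitSketch {
  // Hypothetical stand-in for the session time zone setting.
  @volatile var sessionTimeZone: String = "UTC"

  object Before {
    // Evaluated once, when the object is first initialized.
    val zoneId: ZoneId = ZoneId.of(sessionTimeZone)
  }

  object After {
    // Re-evaluated on every call, so it follows later changes.
    def zoneId: ZoneId = ZoneId.of(sessionTimeZone)
  }

  def main(args: Array[String]): Unit = {
    println(Before.zoneId) // prints UTC
    sessionTimeZone = "America/Los_Angeles"
    println(Before.zoneId) // still prints UTC
    println(After.zoneId)  // prints America/Los_Angeles
  }
}
```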

### Does this PR introduce any user-facing change?

Yes

### How was this patch tested?

By existing test suites, in particular, by `HiveResultSuite`

Closes #28024 from MaxGekk/hive-result-datetime-formatters.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR makes a non-nullable null type in complex types no longer coerce to a nullable type.

Non-nullable null-typed fields in a struct, elements in an array and entries in a map can only come from an empty struct, array or map. Because they are empty, there is no need to force nullability when we find common types.
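
The rule for arrays can be illustrated with toy types. The classes below are simplified stand-ins that only borrow the names of Spark's types, and `widerArrayType` is a hypothetical helper, not the actual type-coercion code.

```scala
object NullTypeCoercionSketch {
  // Toy stand-ins for illustration only; not Spark's classes.
  sealed trait DataType
  case object NullType extends DataType
  case object IntegerType extends DataType
  final case class ArrayType(elementType: DataType, containsNull: Boolean) extends DataType

  def widerArrayType(a: ArrayType, b: ArrayType): Option[ArrayType] = (a, b) match {
    // A non-nullable null element type can only come from an empty array,
    // so the other side's element type and nullability are kept unchanged.
    case (ArrayType(NullType, false), other) => Some(other)
    case (other, ArrayType(NullType, false)) => Some(other)
    // A nullable null element type may hold actual nulls: force nullability.
    case (ArrayType(NullType, true), ArrayType(t, _)) => Some(ArrayType(t, containsNull = true))
    case (ArrayType(t, _), ArrayType(NullType, true)) => Some(ArrayType(t, containsNull = true))
    case (ArrayType(t1, n1), ArrayType(t2, n2)) if t1 == t2 => Some(ArrayType(t1, n1 || n2))
    case _ => None
  }

  def main(args: Array[String]): Unit = {
    val empty = ArrayType(NullType, containsNull = false)
    val ints = ArrayType(IntegerType, containsNull = false)
    println(widerArrayType(empty, ints)) // prints Some(ArrayType(IntegerType,false))
  }
}
```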

This PR also reverts and supersedes d7b97a1d0d.

### Why are the changes needed?

To make type coercion coherent and consistent. Currently, we correctly keep the nullability even between non-nullable fields:

```scala

import org.apache.spark.sql.types._

import org.apache.spark.sql.functions._

spark.range(1).select(array(lit(1)).cast(ArrayType(IntegerType, false))).printSchema()

spark.range(1).select(array(lit(1)).cast(ArrayType(DoubleType, false))).printSchema()

```

```scala

spark.range(1).selectExpr("concat(array(1), array(1)) as arr").printSchema()

```

### Does this PR introduce any user-facing change?

Yes.

```scala

import org.apache.spark.sql.types._

import org.apache.spark.sql.functions._

spark.range(1).select(array().cast(ArrayType(IntegerType, false))).printSchema()

```

```scala

spark.range(1).selectExpr("concat(array(), array(1)) as arr").printSchema()

```

**Before:**

```

org.apache.spark.sql.AnalysisException: cannot resolve 'array()' due to data type mismatch: cannot cast array<null> to array<int>;;

'Project [cast(array() as array<int>) AS array()#68]

+- Range (0, 1, step=1, splits=Some(12))

at org.apache.spark.sql.catalyst.analysis.package$AnalysisErrorAt.failAnalysis(package.scala:42)

at org.apache.spark.sql.catalyst.analysis.CheckAnalysis$$anonfun$$nestedInanonfun$checkAnalysis$1$2.applyOrElse(CheckAnalysis.scala:149)

at org.apache.spark.sql.catalyst.analysis.CheckAnalysis$$anonfun$$nestedInanonfun$checkAnalysis$1$2.applyOrElse(CheckAnalysis.scala:140)

at org.apache.spark.sql.catalyst.trees.TreeNode.$anonfun$transformUp$2(TreeNode.scala:333)

at org.apache.spark.sql.catalyst.trees.CurrentOrigin$.withOrigin(TreeNode.scala:72)

at org.apache.spark.sql.catalyst.trees.TreeNode.transformUp(TreeNode.scala:333)

at org.apache.spark.sql.catalyst.trees.TreeNode.$anonfun$transformUp$1(TreeNode.scala:330)

at org.apache.spark.sql.catalyst.trees.TreeNode.$anonfun$mapChildren$1(TreeNode.scala:399)

at org.apache.spark.sql.catalyst.trees.TreeNode.mapProductIterator(TreeNode.scala:237)

```

```

root

|-- arr: array (nullable = false)

| |-- element: integer (containsNull = true)

```

**After:**

```

root

|-- array(): array (nullable = false)

| |-- element: integer (containsNull = false)

```

```

root

|-- arr: array (nullable = false)

| |-- element: integer (containsNull = false)

```

### How was this patch tested?

Unit tests were added, and the change was also tested manually.

Closes #27991 from HyukjinKwon/SPARK-31227.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Currently, ResetCommand clears all configurations, including SQL configs, static SQL configs and Spark context level configs.

For example:

```sql

spark-sql> set xyz=abc;

xyz abc

spark-sql> set;

spark.app.id local-1585055396930

spark.app.name SparkSQL::10.242.189.214

spark.driver.host 10.242.189.214

spark.driver.port 65094

spark.executor.id driver

spark.jars

spark.master local[*]

spark.sql.catalogImplementation hive

spark.sql.hive.version 1.2.1

spark.submit.deployMode client

xyz abc

spark-sql> reset;

spark-sql> set;

spark-sql> set spark.sql.hive.version;

spark.sql.hive.version 1.2.1

spark-sql> set spark.app.id;

spark.app.id <undefined>

```

In this PR, we restore the Spark confs to RuntimeConfig after it is cleared.
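
A minimal sketch of "clear, then restore" is shown below, using a mutable map as a hypothetical stand-in for the runtime config. The names are illustrative and do not mirror the `ResetCommand` implementation.

```scala
import scala.collection.mutable

object ResetSketch {
  // sparkConf: settings fixed at startup; runtime: the session's mutable settings.
  def reset(sparkConf: Map[String, String], runtime: mutable.Map[String, String]): Unit = {
    runtime.clear()      // drops everything, including session-level overrides
    runtime ++= sparkConf // puts the startup settings back
  }

  def main(args: Array[String]): Unit = {
    val sparkConf = Map("spark.app.id" -> "local-1585055396930")
    val runtime = mutable.Map("spark.app.id" -> "local-1585055396930", "xyz" -> "abc")
    reset(sparkConf, runtime)
    println(runtime.get("xyz"))          // prints None
    println(runtime.get("spark.app.id")) // prints Some(local-1585055396930)
  }
}
```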

### Why are the changes needed?

The reset command goes too far: it also clears configs which are static.

### Does this PR introduce any user-facing change?

Yes, the ResetCommand does not change static configs now.

### How was this patch tested?

add ut

Closes #28003 from yaooqinn/SPARK-31234.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to add a few `ZoneId` constant values to the `DateTimeTestUtils` object, and reuse the constants in tests. Proposed the following constants:

- PST = -08:00

- UTC = +00:00

- CEST = +02:00

- CET = +01:00

- JST = +09:00

- MIT = -09:30

- LA = America/Los_Angeles

### Why are the changes needed?

All proposed constant values (except `LA`) are initialized by zone offsets according to their definitions. This allows us to avoid:

- Use of 3-letter time zone IDs that are already deprecated in the JDK, see _Three-letter time zone IDs_ in https://docs.oracle.com/javase/8/docs/api/java/util/TimeZone.html

- Incorrect mapping of 3-letter time zones to zone offsets, see SPARK-31237. For example, `PST` is mapped to `America/Los_Angeles` instead of the `-08:00` zone offset.
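
The mapping problem can be reproduced with `java.time` directly. The sketch below resolves `PST` through the JDK's `ZoneId.SHORT_IDS` map and compares it with a fixed offset; the object and value names are illustrative and are not the constants added to `DateTimeTestUtils`.

```scala
import java.time.{Instant, ZoneId}

object ZoneConstantsSketch {
  // A constant defined by its zone offset: no daylight saving time.
  val PST: ZoneId = ZoneId.of("-08:00")
  // The 3-letter ID resolved through the JDK's short-ID table.
  val legacyPst: ZoneId = ZoneId.of("PST", ZoneId.SHORT_IDS)

  def main(args: Array[String]): Unit = {
    val summer = Instant.parse("2020-07-01T00:00:00Z")
    println(legacyPst)                            // prints America/Los_Angeles
    println(legacyPst.getRules.getOffset(summer)) // prints -07:00
    println(PST.getRules.getOffset(summer))       // prints -08:00
  }
}
```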

Also this should improve stability and maintainability of test suites.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running affected test suites.

Closes #28001 from MaxGekk/replace-pst.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Spark introduced the CHAR type for Hive compatibility, but it only works for Hive tables. The CHAR type was never documented and is treated as the STRING type for non-Hive tables.

However, this leads to confusing behaviors:

**Apache Spark 3.0.0-preview2**

```

spark-sql> CREATE TABLE t(a CHAR(3));

spark-sql> INSERT INTO TABLE t SELECT 'a ';

spark-sql> SELECT a, length(a) FROM t;

a 2

```

**Apache Spark 2.4.5**

```

spark-sql> CREATE TABLE t(a CHAR(3));

spark-sql> INSERT INTO TABLE t SELECT 'a ';

spark-sql> SELECT a, length(a) FROM t;

a 3

```

According to the SQL standard, `CHAR(3)` should guarantee that all values are of length 3. Since `CHAR(3)` is treated as STRING, Spark doesn't guarantee it.
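
As a small illustration of the fixed-length guarantee, the sketch below pads a value with spaces to exactly `n` characters. The helper name is hypothetical, and this is not how any Spark or Hive code path is implemented.

```scala
object CharPaddingSketch {
  // Fixed-length semantics: every stored value has exactly n characters.
  def toChar(value: String, n: Int): String = {
    require(value.length <= n, s"value too long for CHAR($n)")
    value.padTo(n, ' ')
  }

  def main(args: Array[String]): Unit = {
    println(toChar("a ", 3).length) // prints 3
  }
}
```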

This PR forbids CHAR type in non-Hive tables as it's not supported correctly.

### Why are the changes needed?

avoid confusing/wrong behavior

### Does this PR introduce any user-facing change?

yes, now users can't create/alter non-Hive tables with CHAR type.

### How was this patch tested?

new tests

Closes #27902 from cloud-fan/char.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This is another solution for `SPARK-31081` and #27849 .

I added a checkbox which toggles the display of stageId/taskId in the SQL's DAG page.

Mainly, I implemented the toggleable texts in boxes with the HTML label feature provided by `dagre-d3`.

The additional metrics are enclosed in `<span>` elements, which control the appearance of the text.

The exception is additional metrics in clusters.

We can use an HTML label for a cluster, but the layout would be broken, so I chose a normal text label for clusters.

Because of that, this solution contains slightly tricky code in `spark-sql-viz.js` to manipulate the metric texts and generate DOM elements.

### Why are the changes needed?

Metrics became harder to read after #26843, and users may not be interested in the extra info (stageId/StageAttemptId/taskId) when they do not need to debug.

#27849 controls the appearance with a new configuration property, but providing a checkbox is more flexible.

### Does this PR introduce any user-facing change?

Yes.

[Additional metrics shown]

[Additional metrics hidden]

### How was this patch tested?

Tested manually with a simple DataFrame operation.

* The appearance of additional metrics in the boxes is controlled by the newly added checkbox.

* No error found with JS-debugger.

* Checked/not-checked state is preserved after reloading.

Closes #27927 from sarutak/SPARK-31081.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Gengliang Wang <gengliang.wang@databricks.com>

### What changes were proposed in this pull request?

Support case class parameters for typed Scala UDFs, e.g.:

```

case class TestData(key: Int, value: String)

val f = (d: TestData) => d.key * d.value.toInt

val myUdf = udf(f)

val df = Seq(("data", TestData(50, "2"))).toDF("col1", "col2")

checkAnswer(df.select(myUdf(Column("col2"))), Row(100) :: Nil)

```

### Why are the changes needed?

Currently, a Spark UDF can only work on data types like java.lang.String, o.a.s.sql.Row, Seq[_], etc. This is inconvenient if a user wants to apply an operation to one column and the column is of struct type: you must access data from a Row object instead of a domain object, as you would in Dataset operations. It would be great if UDFs could work on types that are supported by Dataset, e.g. case classes.

Here is the benchmark result of using a case class compared to a row:

```scala

// case class: 58ms 65ms 59ms 64ms 61ms

// row: 59ms 64ms 73ms 84ms 69ms

val f1 = (d: TestData) => s"${d.key}, ${d.value}"

val f2 = (r: Row) => s"${r.getInt(0)}, ${r.getString(1)}"

val udf1 = udf(f1)

// set spark.sql.legacy.allowUntypedScalaUDF=true

val udf2 = udf(f2, StringType)

val df = spark.range(100000).selectExpr("cast (id as int) as id")

  .select(struct('id, lit("str")).as("col"))

df.cache().collect()

// warmup to exclude some extra influence

df.select(udf1('col)).write.mode(SaveMode.Overwrite).format("noop").save()

df.select(udf2('col)).write.mode(SaveMode.Overwrite).format("noop").save()

var start = System.currentTimeMillis()

df.select(udf1('col)).write.mode(SaveMode.Overwrite).format("noop").save()

println(System.currentTimeMillis() - start)

start = System.currentTimeMillis()

df.select(udf2('col)).write.mode(SaveMode.Overwrite).format("noop").save()

println(System.currentTimeMillis() - start)

```

### Does this PR introduce any user-facing change?

Yes. Users can now use a typed Scala UDF with a case class as the input parameter.

### How was this patch tested?

Added unit tests.

Closes #27937 from Ngone51/udf_caseclass_support.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

https://github.com/apache/spark/pull/26412 introduced a behavior change that `date_add`/`date_sub` functions can't accept string and double values in the second parameter. This is reasonable as it's error-prone to cast string/double to int at runtime.

However, using string literals as function arguments is very common in SQL databases. To avoid breaking valid use cases where the string literal is indeed an integer, this PR proposes to add ansi_cast for string literals in the date_add/date_sub functions. If the string value is not a valid integer, the query fails at compile time because of constant folding.
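
The intended behavior, accepting `'1'` but rejecting a literal that is not an integer, can be sketched with a strict conversion. The helper below is hypothetical and its error message is invented for illustration; it is not Spark's `ansi_cast`.

```scala
object StringLiteralCastSketch {
  // Hypothetical strict cast: fail loudly instead of silently producing null.
  def strictToInt(literal: String): Int =
    try literal.toInt
    catch {
      case _: NumberFormatException =>
        throw new IllegalArgumentException(s"'$literal' is not a valid integer")
    }

  def main(args: Array[String]): Unit = {
    println(strictToInt("1")) // prints 1
    // strictToInt("1.2") would throw IllegalArgumentException
  }
}
```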

### Why are the changes needed?

avoid breaking changes

### Does this PR introduce any user-facing change?

Yes, now 3.0 can run `date_add('2011-11-11', '1')` like 2.4

### How was this patch tested?

new tests.

Closes #27965 from cloud-fan/string.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to refactor reading of timestamps of the `TIMESTAMP_MILLIS` logical type from Parquet files in `VectorizedColumnReader`, and move checking of the `rebaseDateTime` flag out of the internal loop.
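
Hoisting a loop-invariant flag check can be sketched as follows. The two functions are hypothetical and operate on plain arrays; they only illustrate the shape of the refactoring, not the code in `VectorizedColumnReader`.

```scala
object HoistFlagSketch {
  // The flag is tested once per value inside the loop.
  def readPerValueCheck(values: Array[Long], rebase: Boolean, f: Long => Long): Array[Long] = {
    val out = new Array[Long](values.length)
    var i = 0
    while (i < values.length) {
      out(i) = if (rebase) f(values(i)) else values(i)
      i += 1
    }
    out
  }

  // The flag is tested once, and each branch runs its own tight loop.
  def readHoisted(values: Array[Long], rebase: Boolean, f: Long => Long): Array[Long] = {
    val out = new Array[Long](values.length)
    if (rebase) {
      var i = 0
      while (i < values.length) {
        out(i) = f(values(i))
        i += 1
      }
    } else {
      System.arraycopy(values, 0, out, 0, values.length)
    }
    out
  }
}
```

Both versions return the same values; the second one simply keeps the branch out of the per-value path.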

### Why are the changes needed?

To avoid any additional overhead of checking the SQL config `spark.sql.legacy.parquet.rebaseDateTime.enabled` introduced by the PR https://github.com/apache/spark/pull/27915.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running the test suite `ParquetIOSuite`.

Closes #27973 from MaxGekk/rebase-parquet-datetime-followup.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

The cached RDD for the plan "select 1" stays in memory until the session closes. This cached data cannot be used since the view temp1 has been replaced by another plan. It's a memory leak.

We can reproduce it with the commands below:

```

Welcome to
      ____              __
     / __/__  ___ _____/ /__
    _\ \/ _ \/ _ `/ __/  '_/
   /___/ .__/\_,_/_/ /_/\_\   version 3.0.0-SNAPSHOT
      /_/

Using Scala version 2.12.10 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_201)

Type in expressions to have them evaluated.

Type :help for more information.

scala> spark.sql("create or replace temporary view temp1 as select 1")

scala> spark.sql("cache table temp1")

scala> spark.sql("create or replace temporary view temp1 as select 1, 2")

scala> spark.sql("cache table temp1")

scala> assert(spark.sharedState.cacheManager.lookupCachedData(sql("select 1, 2")).isDefined)

scala> assert(spark.sharedState.cacheManager.lookupCachedData(sql("select 1")).isDefined)

```

### Why are the changes needed?

Fix the memory leak, especially in long-running mode.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Added a unit test.

Closes #27185 from LantaoJin/SPARK-30494.

Authored-by: LantaoJin <jinlantao@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

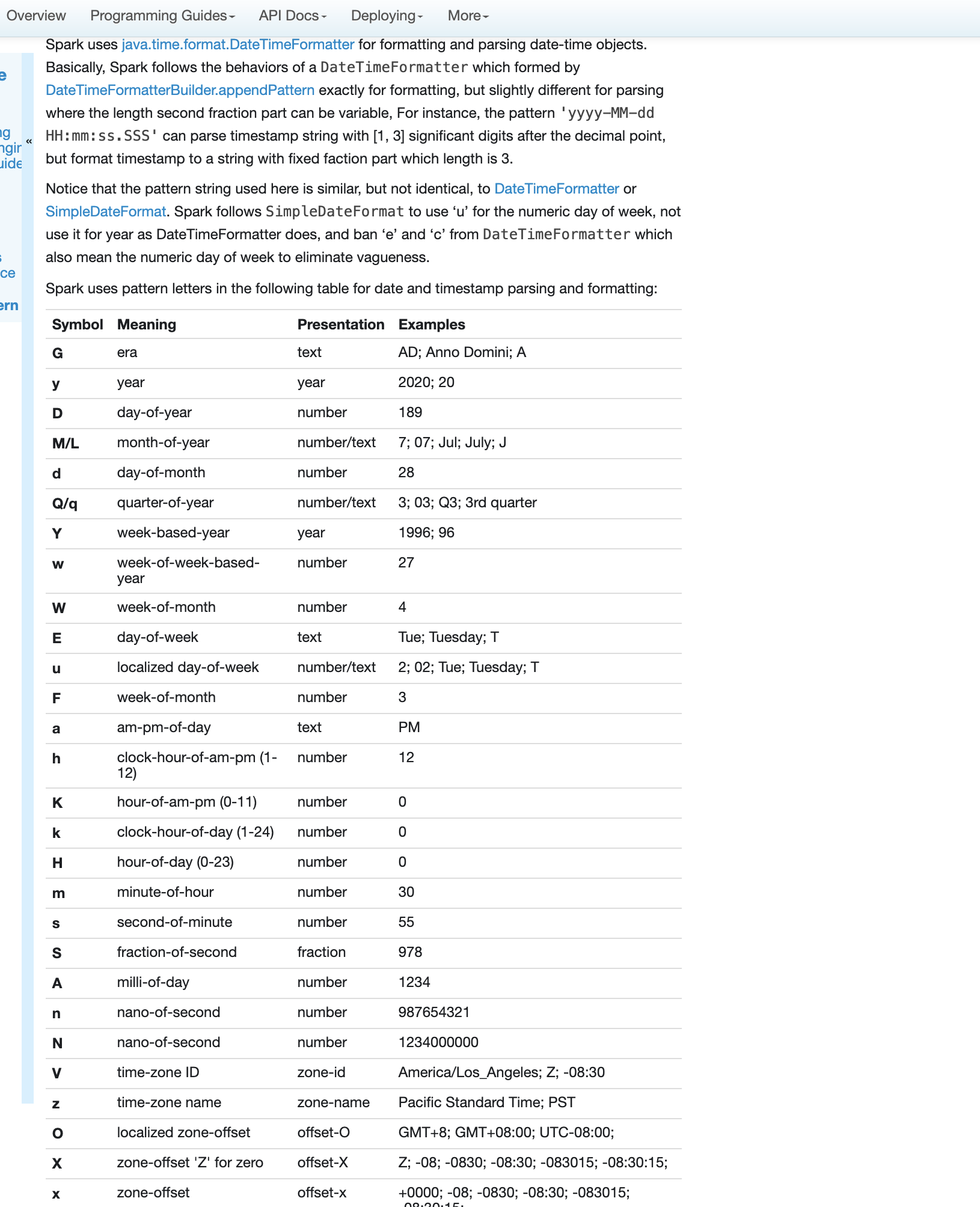

### What changes were proposed in this pull request?

Fix errors and missing parts in the datetime pattern document.

1. The pattern we use is similar to DateTimeFormatter and SimpleDateFormat but not identical, so we shouldn't refer to either of them in the API docs but link to our own doc instead.

2. Some pattern letters are missing.

3. Some pattern letters are explicitly banned: Set('A', 'c', 'e', 'n', 'N').

4. The second-fraction pattern uses different logic for parsing and formatting.

### Why are the changes needed?

fix and improve doc

### Does this PR introduce any user-facing change?

yes, new and updated doc

### How was this patch tested?

pass Jenkins

viewed locally with `jekyll serve`

Closes #27956 from yaooqinn/SPARK-31189.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

A few `CREATE TABLE` test cases make assumptions about the default value of `LEGACY_CREATE_HIVE_TABLE_BY_DEFAULT_ENABLED`. This PR (SPARK-31181) makes the test cases more explicit on the test-case side.

The configuration change was tested via https://github.com/apache/spark/pull/27894 while discussing SPARK-31136. This PR contains only the test-case part of that PR.

### Why are the changes needed?

This makes our test cases more robust with respect to the default value of `LEGACY_CREATE_HIVE_TABLE_BY_DEFAULT_ENABLED`. Even if we switch the conf value, that will be a one-liner with no test case changes.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Pass the Jenkins with the existing tests.

Closes #27946 from dongjoon-hyun/SPARK-EXPLICIT-TEST.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Spark SQL's whole-stage codegen (WSCG) supports dumping the generated code to help with debugging. One way to get the generated code is through `df.queryExecution.debug.codegen`, or SQL `EXPLAIN CODEGEN` statement.

The generated code is currently printed without specific ordering, which can make debugging a bit annoying. This PR makes a minor improvement to sort the codegen dump by the `codegenStageId`, ascending.

After this change, the following query:

```scala

spark.range(10).agg(sum('id)).queryExecution.debug.codegen

```

will always dump the generated code in a natural, stable order. A version of this example with shorter output is:

```

spark.range(10).agg(sum('id)).queryExecution.debug.codegenToSeq.map(_._1).foreach(println)

*(1) HashAggregate(keys=[], functions=[partial_sum(id#8L)], output=[sum#15L])

+- *(1) Range (0, 10, step=1, splits=16)

*(2) HashAggregate(keys=[], functions=[sum(id#8L)], output=[sum(id)#12L])

+- Exchange SinglePartition, true, [id=#30]

   +- *(1) HashAggregate(keys=[], functions=[partial_sum(id#8L)], output=[sum#15L])

      +- *(1) Range (0, 10, step=1, splits=16)

```

The number of codegen stages within a single SQL query tends to be very small, most likely < 50, so the overhead of adding the sorting shouldn't be significant.
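
The ordering itself amounts to a sort by stage ID. The sketch below uses a hypothetical `Subtree` record in place of the real plan nodes and their generated code.

```scala
object CodegenDumpOrderSketch {
  // Hypothetical record: one codegen stage with its plan text and generated source.
  final case class Subtree(codegenStageId: Int, plan: String, source: String)

  // Sort ascending by stage ID; sortBy is stable, so ties keep their order.
  def ordered(subtrees: Seq[Subtree]): Seq[Subtree] =
    subtrees.sortBy(_.codegenStageId)

  def main(args: Array[String]): Unit = {
    val dump = Seq(
      Subtree(2, "*(2) HashAggregate", "/* generated code for stage 2 */"),
      Subtree(1, "*(1) HashAggregate", "/* generated code for stage 1 */"))
    ordered(dump).foreach(s => println(s.plan))
    // prints *(1) HashAggregate, then *(2) HashAggregate
  }
}
```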

### Why are the changes needed?

Minor improvement to aid WSCG debugging.

### Does this PR introduce any user-facing change?

No user-facing change for end-users; minor change for developers who debug WSCG generated code.

### How was this patch tested?

Manually tested the output; all other tests still pass.

Closes #27955 from rednaxelafx/codegen.

Authored-by: Kris Mok <kris.mok@databricks.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

The PR addresses compatibility with Spark 2.4 and earlier versions in reading/writing dates and timestamps via the Parquet datasource. Previous releases are based on a hybrid calendar (Julian + Gregorian). Since Spark 3.0, the Proleptic Gregorian calendar is used by default, see SPARK-26651. In particular, the issue shows up for dates/timestamps before 1582-10-15, when the hybrid calendar switches between the Julian and Gregorian calendars. The same local date in different calendars is converted to a different number of days since the epoch 1970-01-01. For example, the 0001-01-01 date is converted to:

- -719164 in Julian calendar. Spark 2.4 saves the number as a value of DATE type into parquet.

- -719162 in Proleptic Gregorian calendar. Spark 3.0 saves the number as a date value.
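
Day numbers of this kind can be computed with the JDK alone: `java.util.GregorianCalendar` implements the hybrid calendar, and `java.time.LocalDate` implements the Proleptic Gregorian one. The helper names below are illustrative, and only common-era dates are handled. For the local date 0001-01-01 the sketch yields -719164 and -719162.

```scala
import java.time.LocalDate
import java.util.{GregorianCalendar, TimeZone}

object EpochDaysSketch {
  private val MillisPerDay = 24L * 60 * 60 * 1000

  // Days since 1970-01-01 when year/month/day is read in the hybrid calendar.
  def hybridEpochDay(year: Int, month: Int, day: Int): Long = {
    val cal = new GregorianCalendar(TimeZone.getTimeZone("UTC"))
    cal.clear()
    cal.set(year, month - 1, day) // Calendar months are 0-based
    Math.floorDiv(cal.getTimeInMillis, MillisPerDay)
  }

  // Days since 1970-01-01 when the same fields are read as Proleptic Gregorian.
  def prolepticEpochDay(year: Int, month: Int, day: Int): Long =
    LocalDate.of(year, month, day).toEpochDay

  def main(args: Array[String]): Unit = {
    println(hybridEpochDay(1, 1, 1))    // prints -719164
    println(prolepticEpochDay(1, 1, 1)) // prints -719162
  }
}
```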

According to the parquet spec, parquet timestamps of the `TIMESTAMP_MILLIS` and `TIMESTAMP_MICROS` output types and parquet dates should be based on the Proleptic Gregorian calendar, but the `INT96` timestamps should be stored as Julian days. Since version 3.0, Spark conforms to the spec, but for backward compatibility with previous versions, the PR proposes rebasing from/to the Proleptic Gregorian calendar to/from the hybrid one under the SQL config:

```

spark.sql.legacy.parquet.rebaseDateTime.enabled

```

which is set to `false` by default, meaning that the rebasing is not performed by default.

The details of the implementation:

1. Added 2 methods to `DateTimeUtils` for rebasing microseconds. `rebaseGregorianToJulianMicros()` builds a local timestamp in the Proleptic Gregorian calendar, extracts the date-time fields `year`, `month`, ..., `second fraction` from the local timestamp and uses them to build another local timestamp based on the hybrid calendar (using the `java.util.Calendar` API). After that, it calculates the number of microseconds since the epoch using the resulting local timestamp. The function performs the conversion via the system JVM time zone for compatibility with Spark 2.4 and earlier versions. The `rebaseJulianToGregorianMicros()` function does the reverse conversion.

2. Added 2 methods to `DateTimeUtils` for rebasing days. `rebaseGregorianToJulianDays()` builds a local date from the passed number of days since the epoch in the Proleptic Gregorian calendar, interprets the resulting date as a local date in the hybrid calendar and gets the number of days since the epoch from that local date. The conversion is performed via the `UTC` time zone because the conversion is independent of time zones, and `UTC` is selected to avoid rounding issues when casting days to milliseconds and back. The `rebaseJulianToGregorianDays()` function does the reverse conversion.

3. Use `rebaseGregorianToJulianMicros()` and `rebaseGregorianToJulianDays()` while saving timestamps/dates to parquet files if the SQL config is on.

4. Use `rebaseJulianToGregorianMicros()` and `rebaseJulianToGregorianDays()` while loading timestamps/dates from parquet files if the SQL config is on.

5. The SQL config `spark.sql.legacy.parquet.rebaseDateTime.enabled` controls conversions from/to dates, timestamps of `TIMESTAMP_MILLIS`, `TIMESTAMP_MICROS`, see the SQL config `spark.sql.parquet.outputTimestampType`.

6. The rebasing is always performed for `INT96` timestamps, independently from `spark.sql.legacy.parquet.rebaseDateTime.enabled`.

7. Supported the vectorized parquet reader, see the SQL config `spark.sql.parquet.enableVectorizedReader`.

### Why are the changes needed?

- For backward compatibility with Spark 2.4 and earlier versions. The changes allow users to read dates/timestamps saved by previous versions and get the same result. Also, after the changes, users can enable the rebasing in write, and save dates/timestamps that can be loaded correctly by Spark 2.4 and earlier versions.

- It fixes the bug of incorrect saving/loading of timestamps of the `INT96` type.

### Does this PR introduce any user-facing change?

Yes, the timestamp `1001-01-01 01:02:03.123456` saved by Spark 2.4.5 as `TIMESTAMP_MICROS` is interpreted by Spark 3.0.0-preview2 differently:

```scala

scala> spark.read.parquet("/Users/maxim/tmp/before_1582/2_4_5_ts_micros").show(false)

+--------------------------+

|ts                        |

+--------------------------+

|1001-01-07 11:32:20.123456|

+--------------------------+

```

After the changes:

```scala

scala> spark.conf.set("spark.sql.legacy.parquet.rebaseDateTime.enabled", true)

scala> spark.read.parquet("/Users/maxim/tmp/before_1582/2_4_5_ts_micros").show(false)

+--------------------------+

|ts                        |

+--------------------------+

|1001-01-01 01:02:03.123456|

+--------------------------+

```

### How was this patch tested?

1. Added tests to `ParquetIOSuite` to check rebasing in read for regular reader and vectorized parquet reader. The test reads back parquet files saved by Spark 2.4.5 via:

```shell

$ export TZ="America/Los_Angeles"

```

```scala

scala> spark.conf.set("spark.sql.session.timeZone", "America/Los_Angeles")

scala> val df = Seq("1001-01-01").toDF("dateS").select($"dateS".cast("date").as("date"))

df: org.apache.spark.sql.DataFrame = [date: date]

scala> df.write.parquet("/Users/maxim/tmp/before_1582/2_4_5_date")

scala> val df = Seq("1001-01-01 01:02:03.123456").toDF("tsS").select($"tsS".cast("timestamp").as("ts"))

df: org.apache.spark.sql.DataFrame = [ts: timestamp]

scala> spark.conf.set("spark.sql.parquet.outputTimestampType", "TIMESTAMP_MICROS")

scala> df.write.parquet("/Users/maxim/tmp/before_1582/2_4_5_ts_micros")

scala> spark.conf.set("spark.sql.parquet.outputTimestampType", "TIMESTAMP_MILLIS")

scala> df.write.parquet("/Users/maxim/tmp/before_1582/2_4_5_ts_millis")

scala> spark.conf.set("spark.sql.parquet.outputTimestampType", "INT96")

scala> df.write.parquet("/Users/maxim/tmp/before_1582/2_4_5_ts_int96")

```

2. Manually check the write code path. Save date/timestamps (TIMESTAMP_MICROS, TIMESTAMP_MILLIS, INT96) by Spark 3.1.0-SNAPSHOT (after the changes):

```bash

$ export TZ="America/Los_Angeles"

```

```scala

scala> spark.conf.set("spark.sql.session.timeZone", "America/Los_Angeles")

scala> spark.conf.set("spark.sql.legacy.parquet.rebaseDateTime.enabled", true)

scala> spark.conf.set("spark.sql.parquet.outputTimestampType", "TIMESTAMP_MICROS")

scala> val df = Seq(("1001-01-01", "1001-01-01 01:02:03.123456")).toDF("dateS", "tsS").select($"dateS".cast("date").as("d"), $"tsS".cast("timestamp").as("ts"))

df: org.apache.spark.sql.DataFrame = [d: date, ts: timestamp]

scala> df.write.parquet("/Users/maxim/tmp/before_1582/3_0_0_micros")

scala> spark.read.parquet("/Users/maxim/tmp/before_1582/3_0_0_micros").show(false)

+----------+--------------------------+

|d         |ts                        |

+----------+--------------------------+

|1001-01-01|1001-01-01 01:02:03.123456|

+----------+--------------------------+

```

Read the saved date/timestamp by Spark 2.4.5:

```scala

scala> spark.conf.set("spark.sql.session.timeZone", "America/Los_Angeles")

scala> spark.read.parquet("/Users/maxim/tmp/before_1582/3_0_0_micros").show(false)

+----------+--------------------------+

|d         |ts                        |

+----------+--------------------------+

|1001-01-01|1001-01-01 01:02:03.123456|

+----------+--------------------------+

```

Closes #27915 from MaxGekk/rebase-parquet-datetime.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR prevents the execution of V2 DataSource exec nodes multiple times when `collect()` is called on them. For V1 DataSources, commands would be executed as a RunnableCommand, which would cache the result as part of the `ExecutedCommandExec` node. We extend `V2CommandExec` for all the data writing commands so that they only get executed once as well.
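
The run-once behavior can be sketched with a `lazy val` that caches the result of the command. The trait and class below are simplified, hypothetical stand-ins and do not reproduce the actual `V2CommandExec` signatures.

```scala
object RunOnceSketch {
  trait CommandExec {
    // The side-effecting work, e.g. inserting data or creating a table.
    protected def run(): Seq[String]

    // Evaluated at most once; later calls reuse the cached result.
    private lazy val result: Seq[String] = run()

    def executeCollect(): Seq[String] = result
  }

  // Hypothetical command that counts how often it is actually executed.
  final class CountingInsert extends CommandExec {
    var runs = 0
    protected def run(): Seq[String] = {
      runs += 1
      Seq.empty
    }
  }

  def main(args: Array[String]): Unit = {
    val cmd = new CountingInsert
    cmd.executeCollect()
    cmd.executeCollect()
    println(cmd.runs) // prints 1
  }
}
```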

### Why are the changes needed?

Otherwise, a SQL command that inserts data or creates a table is executed multiple times when `collect()` is called on it.

### Does this PR introduce any user-facing change?

Fixes a bug

### How was this patch tested?

Unit tests

Closes #27941 from brkyvz/doubleInsert.

Authored-by: Burak Yavuz <brkyvz@gmail.com>

Signed-off-by: Burak Yavuz <brkyvz@gmail.com>