### What changes were proposed in this pull request?

This pull request adds a SparkR wrapper for `FMClassifier`:

- Supporting `org.apache.spark.ml.r.FMClassifierWrapper`.

- `FMClassificationModel` S4 class.

- Corresponding `spark.fmClassifier`, `predict`, `summary` and `write.ml` generics.

- Corresponding docs and tests.

### Why are the changes needed?

Feature parity.

### Does this PR introduce any user-facing change?

No (new API).

### How was this patch tested?

New unit tests.

Closes #27570 from zero323/SPARK-30820.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Add back the deprecated R APIs removed by https://github.com/apache/spark/pull/22843/ and https://github.com/apache/spark/pull/22815.

These APIs are

- `sparkR.init`

- `sparkRSQL.init`

- `sparkRHive.init`

- `registerTempTable`

- `createExternalTable`

- `dropTempTable`

There is no need to port functions such as

```r

createExternalTable <- function(x, ...) {

dispatchFunc("createExternalTable(tableName, path = NULL, source = NULL, ...)", x, ...)

}

```

because this existed for backward compatibility with the time when SQLContext was in use (judging from https://github.com/apache/spark/pull/9192). It no longer seems necessary, since SparkR replaced SQLContext with SparkSession in https://github.com/apache/spark/pull/13635.

### Why are the changes needed?

Amend Spark's Semantic Versioning Policy

### Does this PR introduce any user-facing change?

Yes

The removed R APIs are put back.

### How was this patch tested?

Add back the removed tests

Closes #28058 from huaxingao/r.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

A small documentation change to clarify that the `rand()` function produces values in `[0.0, 1.0)`.

### Why are the changes needed?

`rand()` uses `Rand()`, which generates values in [0, 1) ([documented here](a1dbcd13a3/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/expressions/randomExpressions.scala (L71))). The existing documentation suggests that 1.0 is a possible value returned by `rand` (i.e., for a distribution written as `X ~ U(a, b)`, x can be a or b, so `U[0.0, 1.0]` suggests the returned value could include 1.0).

### Does this PR introduce any user-facing change?

Only documentation changes.

### How was this patch tested?

Documentation changes only.

Closes #28071 from Smeb/master.

Authored-by: Ben Ryves <benjamin.ryves@getyourguide.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

In this PR, I propose to update the docs for the `timeZone` option in JSON/CSV datasources and for the `tz` parameter of the `from_utc_timestamp()`/`to_utc_timestamp()` functions, and to restrict the format of the config's values to two forms:

1. Geographical regions, such as `America/Los_Angeles`.

2. Fixed offsets - a fully resolved offset from UTC. For example, `-08:00`.
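The two accepted forms can be told apart with a small classifier. The following is a minimal sketch, not Spark's actual parsing logic: the helper name `classify_zone_id` and both regular expressions are assumptions made for illustration, and the offset range check is only approximate.

```python
import re

# Hypothetical patterns for illustration only; Spark's real parser is not shown here.
REGION_ID = re.compile(r"^[A-Za-z_]+(/[A-Za-z0-9_+\-]+)+$")   # e.g. America/Los_Angeles
FIXED_OFFSET = re.compile(r"^[+-](\d{2}):(\d{2})$")           # e.g. -08:00


def classify_zone_id(zone_id: str) -> str:
    """Return 'fixed-offset', 'region', or 'other' for a time zone string."""
    match = FIXED_OFFSET.match(zone_id)
    # Rough sanity check on the offset: hours up to 18, minutes up to 59.
    if match and int(match.group(1)) <= 18 and int(match.group(2)) <= 59:
        return "fixed-offset"
    if REGION_ID.match(zone_id):
        return "region"
    # Three-letter IDs such as CST land here.
    return "other"


for candidate in ["America/Los_Angeles", "-08:00", "CST"]:
    print(candidate, "->", classify_zone_id(candidate))
```

A three-letter ID such as `CST` falls into the `other` bucket, which is the category this PR's documentation steers users away from.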

### Why are the changes needed?

Other formats, such as three-letter time zone IDs, are ambiguous and depend on the locale. For example, `CST` could be U.S. `Central Standard Time` or `China Standard Time`. Such formats have already been deprecated in the JDK; see [Three-letter time zone IDs](https://docs.oracle.com/javase/8/docs/api/java/util/TimeZone.html).

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running `./dev/scalastyle`, and manual testing.

Closes #28051 from MaxGekk/doc-time-zone-option.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

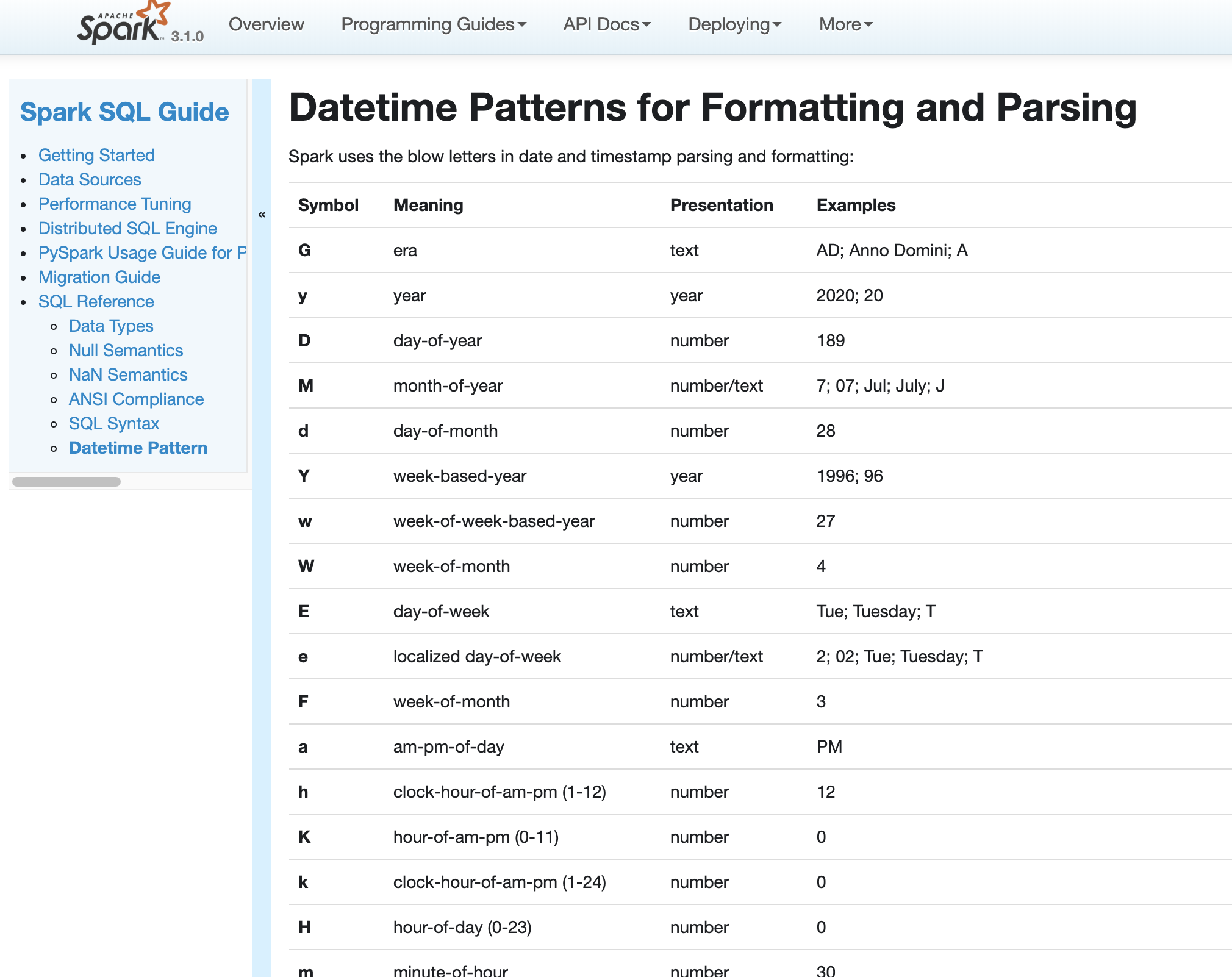

### What changes were proposed in this pull request?

Use our own docs for datetime pattern instructions to replace the Java docs.

### Why are the changes needed?

fix doc

### Does this PR introduce any user-facing change?

yes. doc changed

### How was this patch tested?

pass jenkins

Closes #27975 from yaooqinn/SPARK-31189-2.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

- Adds the following overloaded variants to Scala `o.a.s.sql.functions`:

  - `percentile_approx(e: Column, percentage: Array[Double], accuracy: Long): Column`

  - `percentile_approx(columnName: String, percentage: Array[Double], accuracy: Long): Column`

  - `percentile_approx(e: Column, percentage: Double, accuracy: Long): Column`

  - `percentile_approx(columnName: String, percentage: Double, accuracy: Long): Column`

  - `percentile_approx(e: Column, percentage: Seq[Double], accuracy: Long): Column` (primarily for Python interop).

  - `percentile_approx(columnName: String, percentage: Seq[Double], accuracy: Long): Column`

- Adds `percentile_approx` to `pyspark.sql.functions`.

- Adds `percentile_approx` function to SparkR.

### Why are the changes needed?

Currently we support `percentile_approx` only in SQL expressions. This is inconvenient and makes the function relatively unknown.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

New unit tests for SparkR and PySpark.

As of now there are no additional tests in the Scala API: `ApproximatePercentile` is well tested, and the Python (including docstrings) and R tests provide additional coverage, so it seems unnecessary.

Closes #27278 from zero323/SPARK-30569.

Lead-authored-by: zero323 <mszymkiewicz@gmail.com>

Co-authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

In Spark version 2.4 and earlier, datetime parsing, formatting and conversion are performed by using the hybrid calendar (Julian + Gregorian).

Since the Proleptic Gregorian calendar is the de facto calendar worldwide, as well as the one chosen in the ANSI SQL standard, Spark 3.0 switches to it by using Java 8 API classes (the java.time packages, which are based on ISO chronology). The switch was completed in SPARK-26651.

However, after the switch, some patterns are not compatible between Java 8 and Java 7, so Spark needs its own definition of the patterns rather than depending on the Java API.

In this PR, we achieve this by documenting the patterns and shadowing the incompatible letters. See more details in [SPARK-31030](https://issues.apache.org/jira/browse/SPARK-31030).
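The difference between the hybrid and Proleptic Gregorian calendars mentioned above can be illustrated outside Spark. This is a minimal sketch using Python's standard `datetime.date`, which itself uses the proleptic Gregorian calendar; it does not touch Spark's parsing code, and the variable names are illustrative.

```python
from datetime import date

# In the hybrid (Julian + Gregorian) calendar, Julian 1582-10-04 is followed
# directly by Gregorian 1582-10-15; the labels in between do not exist.
last_julian_label = date(1582, 10, 4)
first_gregorian_label = date(1582, 10, 15)

# In the proleptic Gregorian calendar the same two labels are 11 days apart,
# and the "missing" labels are ordinary valid dates.
gap_in_days = (first_gregorian_label - last_julian_label).days
in_between = date(1582, 10, 10)

print("days between the two labels:", gap_in_days)
print("valid proleptic Gregorian date:", in_between.isoformat())
```

The same textual date can therefore refer to different physical days depending on the calendar, which is why dates before the 1582 cutover are the sensitive case.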

### Why are the changes needed?

For backward compatibility.

### Does this PR introduce any user-facing change?

No.

After we define our own datetime parsing and formatting patterns, the behavior is the same as in older Spark versions.

### How was this patch tested?

Existing and newly added unit tests.

Locally document test:

Closes #27830 from xuanyuanking/SPARK-31030.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR adds an R API for invoking the following higher-order functions:

- `transform` -> `array_transform` (to avoid conflict with `base::transform`).

- `exists` -> `array_exists` (to avoid conflict with `base::exists`).

- `forall` -> `array_forall` (no conflicts, renamed for consistency)

- `filter` -> `array_filter` (to avoid conflict with `stats::filter`).

- `aggregate` -> `array_aggregate` (to avoid conflict with `stats::aggregate`).

- `zip_with` -> `arrays_zip_with` (no conflicts, renamed for consistency)

- `transform_keys`

- `transform_values`

- `map_filter`

- `map_zip_with`

The overall implementation follows the same pattern as proposed for PySpark (#27406) and reuses the object supporting the Scala implementation (SPARK-27297).

### Why are the changes needed?

Currently higher order functions are available only using SQL and Scala API and can use only SQL expressions:

```r

select(df, expr("transform(xs, x -> x + 1)"))

```

This is error-prone, and hard to do right, when complex logic is used (`when` / `otherwise`, complex objects).

If this PR is accepted, above function could be simply rewritten as:

```r

select(df, array_transform("xs", function(x) x + 1))

```

### Does this PR introduce any user-facing change?

No (but new user-facing functions are added).

### How was this patch tested?

Added new unit tests.

Closes #27433 from zero323/SPARK-30682.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This patch is to bump the master branch version to 3.1.0-SNAPSHOT.

### Why are the changes needed?

N/A

### Does this PR introduce any user-facing change?

N/A

### How was this patch tested?

N/A

Closes #27698 from gatorsmile/updateVersion.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

There are currently R test failures after upgrading `testthat` to 2.0.0 and R to version 3.5.2 as of SPARK-23435. This PR aims to fix the tests and make them pass. See the explanations and causes below:

```

test_context.R:49: failure: Check masked functions

length(maskedCompletely) not equal to length(namesOfMaskedCompletely).

1/1 mismatches

[1] 6 - 4 == 2

test_context.R:53: failure: Check masked functions

sort(maskedCompletely, na.last = TRUE) not equal to sort(namesOfMaskedCompletely, na.last = TRUE).

5/6 mismatches

x[2]: "endsWith"

y[2]: "filter"

x[3]: "filter"

y[3]: "not"

x[4]: "not"

y[4]: "sample"

x[5]: "sample"

y[5]: NA

x[6]: "startsWith"

y[6]: NA

```

From my cursory look, R base and R's version are mismatched. I fixed accordingly and Jenkins will test it out.

```

test_includePackage.R:31: error: include inside function

package or namespace load failed for ‘plyr’:

package ‘plyr’ was installed by an R version with different internals; it needs to be reinstalled for use with this R version

Seems it's a package installation issue. Looks like plyr has to be re-installed.

```

From my cursory look, previously installed `plyr` remains and it's not compatible with the new R version. I fixed accordingly and Jenkins will test it out.

```

test_sparkSQL.R:499: warning: SPARK-17811: can create DataFrame containing NA as date and time

Your system is mis-configured: ‘/etc/localtime’ is not a symlink

```

Seems to be an environment problem. I suppressed the warnings for now.

```

test_sparkSQL.R:499: warning: SPARK-17811: can create DataFrame containing NA as date and time

It is strongly recommended to set envionment variable TZ to ‘America/Los_Angeles’ (or equivalent)

```

Seems to be an environment problem. I suppressed the warnings for now.

```

test_sparkSQL.R:1814: error: string operators

unable to find an inherited method for function ‘startsWith’ for signature ‘"character"’

1: expect_true(startsWith("Hello World", "Hello")) at /home/jenkins/workspace/SparkPullRequestBuilder2/R/pkg/tests/fulltests/test_sparkSQL.R:1814

2: quasi_label(enquo(object), label)

3: eval_bare(get_expr(quo), get_env(quo))

4: startsWith("Hello World", "Hello")

5: (function (classes, fdef, mtable)

{

methods <- .findInheritedMethods(classes, fdef, mtable)

if (length(methods) == 1L)

return(methods[[1L]])

else if (length(methods) == 0L) {

cnames <- paste0("\"", vapply(classes, as.character, ""), "\"", collapse = ", ")

stop(gettextf("unable to find an inherited method for function %s for signature %s",

sQuote(fdef@generic), sQuote(cnames)), domain = NA)

}

else stop("Internal error in finding inherited methods; didn't return a unique method",

domain = NA)

})(list("character"), new("nonstandardGenericFunction", .Data = function (x, prefix)

{

standardGeneric("startsWith")

}, generic = structure("startsWith", package = "SparkR"), package = "SparkR", group = list(),

valueClass = character(0), signature = c("x", "prefix"), default = NULL, skeleton = (function (x,

prefix)

stop("invalid call in method dispatch to 'startsWith' (no default method)", domain = NA))(x,

prefix)), <environment>)

6: stop(gettextf("unable to find an inherited method for function %s for signature %s",

sQuote(fdef@generic), sQuote(cnames)), domain = NA)

```

From my cursory look, R base and R's version are mismatched. I fixed accordingly and Jenkins will test it out.

Also, this PR causes a CRAN check failure as below:

```

* creating vignettes ... ERROR

Error: processing vignette 'sparkr-vignettes.Rmd' failed with diagnostics:

package ‘htmltools’ was installed by an R version with different internals; it needs to be reinstalled for use with this R version

```

This PR disables it for now.

### Why are the changes needed?

To unblock other PRs.

### Does this PR introduce any user-facing change?

No. Test only and dev only.

### How was this patch tested?

No. I am going to use Jenkins to test.

Closes #27460 from HyukjinKwon/r-test-failure.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Remove duplicate `name` tags from `read.df` and `read.stream`.

### Why are the changes needed?

These tags are already present in

1adf3520e3/R/pkg/R/SQLContext.R (L546)

and

1adf3520e3/R/pkg/R/SQLContext.R (L678)

for `read.df` and `read.stream` respectively.

As only one `name` tag per block is allowed, this causes build warnings with recent `roxygen2` versions:

```

Warning: [/path/to/spark/R/pkg/R/SQLContext.R:559] name May only use one name per block

Warning: [/path/to/spark/R/pkg/R/SQLContext.R:690] name May only use one name per block

```

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing tests.

Closes #27437 from zero323/roxygen-warnings-names.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

- Update `testthat` to >= 2.0.0

- Replace `testthat:::run_tests` with `testthat:::test_package_dir`

- Add trivial assertions for tests, without any expectations, to avoid skipping.

- Update related docs.

### Why are the changes needed?

`testthat` version has been frozen by [SPARK-22817](https://issues.apache.org/jira/browse/SPARK-22817) / https://github.com/apache/spark/pull/20003, but 1.0.2 is pretty old, and we shouldn't keep things in this state forever.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

- Existing CI pipeline:

- Windows build on AppVeyor, R 3.6.2, testthat 2.3.1

- Linux build on Jenkins, R 3.1.x, testthat 1.0.2

- Additional builds with testthat 2.3.1 using [sparkr-build-sandbox](https://github.com/zero323/sparkr-build-sandbox) on c7ed64af9e697b3619779857dd820832176b3be3

R 3.4.4 (image digest ec9032f8cf98)

```

docker pull zero323/sparkr-build-sandbox:3.4.4

docker run zero323/sparkr-build-sandbox:3.4.4 zero323 --branch SPARK-23435 --commit c7ed64af9e697b3619779857dd820832176b3be3 --public-key https://keybase.io/zero323/pgp_keys.asc

```

3.5.3 (image digest 0b1759ee4d1d)

```

docker pull zero323/sparkr-build-sandbox:3.5.3

docker run zero323/sparkr-build-sandbox:3.5.3 zero323 --branch SPARK-23435 --commit c7ed64af9e697b3619779857dd820832176b3be3 --public-key https://keybase.io/zero323/pgp_keys.asc

```

and 3.6.2 (image digest 6594c8ceb72f)

```

docker pull zero323/sparkr-build-sandbox:3.6.2

docker run zero323/sparkr-build-sandbox:3.6.2 zero323 --branch SPARK-23435 --commit c7ed64af9e697b3619779857dd820832176b3be3 --public-key https://keybase.io/zero323/pgp_keys.asc

```

Corresponding [asciicast](https://asciinema.org/) are available as 10.5281/zenodo.3629431

[10.5281/zenodo.3629431](https://doi.org/10.5281/zenodo.3629431)

(a bit too large to burden asciinema.org, but can run locally via `asciinema play`).

----------------------------

Continued from #27328

Closes #27359 from zero323/SPARK-23435.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

This reverts commit 1d20d13149.

Closes #27351 from gatorsmile/revertSPARK25496.

Authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Disabling test for cleaning closure of recursive function.

### Why are the changes needed?

As of 9514b822a7 this test is no longer valid, and recursive calls, even simple ones:

```r

f <- function(x) {

if(x > 0) {

f(x - 1)

} else {

x

}

}

```

lead to

```

Error: node stack overflow

```

This issue is silenced when tested with `testthat` 1.x (reason unknown), but causes failures when using `testthat` 2.x (the issue can be reproduced outside the test context).

The problem is known and tracked by [SPARK-30629](https://issues.apache.org/jira/browse/SPARK-30629).

Therefore, keeping this test active doesn't make sense, as it will lead to continuous test failures, when `testthat` is updated (https://github.com/apache/spark/pull/27359 / SPARK-23435).

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing tests.

CC falaki

Closes #27363 from zero323/SPARK-29777-FOLLOWUP.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add a param `bootstrap` to control whether bootstrap samples are used.

### Why are the changes needed?

Currently, RF with numTrees=1 builds a tree directly on the original dataset, while with numTrees>1 it uses bootstrap samples to build trees.

This design exists so that a DecisionTreeModel can be trained through the RandomForest implementation; however, it is somewhat strange.

In Scikit-Learn, there is a param [bootstrap](https://scikit-learn.org/stable/modules/generated/sklearn.ensemble.RandomForestClassifier.html#sklearn.ensemble.RandomForestClassifier) to control whether bootstrap samples are used.
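The effect of such a flag can be sketched in a few lines. This is an illustrative toy, not Spark's sampling code: the helper `draw_training_sample` and its `seed` argument are assumptions, and it uses plain sampling with replacement to stand in for whatever sampling scheme the real implementation uses.

```python
import random


def draw_training_sample(rows, bootstrap, seed=0):
    """Return the rows a single tree would be trained on (toy illustration)."""
    if not bootstrap:
        # No bootstrap: every tree sees the original dataset unchanged.
        return list(rows)
    # Bootstrap: draw len(rows) rows with replacement, so some rows repeat
    # and others are left out.
    rng = random.Random(seed)
    return [rng.choice(rows) for _ in rows]


data = [10, 20, 30, 40, 50]
print("bootstrap=False:", draw_training_sample(data, bootstrap=False))
print("bootstrap=True: ", draw_training_sample(data, bootstrap=True, seed=42))
```

With an explicit flag, the choice between these two branches no longer depends on the number of trees.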

### Does this PR introduce any user-facing change?

Yes, new param is added

### How was this patch tested?

existing testsuites

Closes #27254 from zhengruifeng/add_bootstrap.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

This PR adds:

- `pyspark.sql.functions.overlay` function to PySpark

- `overlay` function to SparkR

### Why are the changes needed?

Feature parity. At the moment R and Python users can access this function only using SQL or `expr` / `selectExpr`.
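For readers unfamiliar with `overlay`, its behavior can be sketched in plain Python. This is a rough model of the SQL function's semantics (1-based position, replaced length defaulting to the length of the replacement), not the PySpark or SparkR implementation; the helper name `overlay_sketch` is hypothetical and edge cases such as out-of-range positions are not handled.

```python
def overlay_sketch(src, replace, pos, length=None):
    """Replace `length` characters of `src`, starting at 1-based `pos`, with `replace`."""
    if length is None:
        # By default the replaced span is as long as the replacement itself.
        length = len(replace)
    start = pos - 1  # convert the 1-based SQL position to a 0-based index
    return src[:start] + replace + src[start + length:]


print(overlay_sketch("Spark SQL", "_", 6))
print(overlay_sketch("Spark SQL", "CORE", 7))
print(overlay_sketch("Spark SQL", "ANSI ", 7, 0))
print(overlay_sketch("Spark SQL", "tructured", 2, 4))
```

Passing a length of 0 turns the call into a pure insertion, while a length longer than the replacement shrinks the string.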

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

New unit tests.

Closes #27325 from zero323/SPARK-30607.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Fix all the tests that fail when AQE is enabled.

### Why are the changes needed?

Run more tests with AQE to catch bugs, and make it easier to enable AQE by default in the future.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing unit tests

Closes #26813 from JkSelf/enableAQEDefault.

Authored-by: jiake <ke.a.jia@intel.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR adds a note that `first` and `last` can be non-deterministic to the SQL function docs as well.

This is already documented in `functions.scala`.

### Why are the changes needed?

Some people read only the SQL docs.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Jenkins will test.

Closes #27099 from HyukjinKwon/SPARK-30335.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

1. Revert "Preparing development version 3.0.1-SNAPSHOT": 56dcd79

2. Revert "Preparing Spark release v3.0.0-preview2-rc2": c216ef1

### Why are the changes needed?

Shouldn't change master.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

manual test:

https://github.com/apache/spark/compare/5de5e46..wangyum:revert-master

Closes #26915 from wangyum/revert-master.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

This PR proposes to fix documentation for slide function. Fixed the spacing issue and added some parameter related info.

### Why are the changes needed?

Documentation improvement

### Does this PR introduce any user-facing change?

No (doc-only change).

### How was this patch tested?

Manually tested by documentation build.

Closes #26896 from bboutkov/pyspark_doc_fix.

Authored-by: Boris Boutkov <boris.boutkov@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

The implementation for walking through the user function AST and picking referenced variables and functions had an optimization to skip a branch if it had already seen it. This runs into an interesting problem in the following example:

```

df <- createDataFrame(data.frame(x=1))

f1 <- function(x) x + 1

f2 <- function(x) f1(x) + 2

dapplyCollect(df, function(x) { f1(x); f2(x) })

```

Results in error:

```

org.apache.spark.SparkException: R computation failed with

Error in f1(x) : could not find function "f1"

Calls: compute -> computeFunc -> f2

```
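The failure mode above can be modelled with a toy dependency walk. This is a hypothetical simplification, not SparkR's closure-cleaning code: `DEFS` and `capture_env` are made-up names, function bodies are reduced to lists of referenced function names, and the sketch has no cycle handling (a self-recursive definition would recurse forever here).

```python
# Toy model: each function name maps to the function names its body references.
DEFS = {"user": ["f1", "f2"], "f1": [], "f2": ["f1"]}


def capture_env(name, defs, seen=None):
    """Build the nested environment that `name` needs: {callee: callee's env}."""
    env = {}
    for callee in defs[name]:
        if seen is not None:
            if callee in seen:
                continue  # the "already seen" shortcut: skip this branch entirely
            seen.add(callee)
        env[callee] = capture_env(callee, defs, seen)
    return env


# With a shared `seen` set, f1 is captured for the user function but is
# skipped while processing f2, so f2's own environment ends up without f1.
with_shortcut = capture_env("user", DEFS, seen=set())
without_shortcut = capture_env("user", DEFS)

print("with shortcut:   ", with_shortcut)
print("without shortcut:", without_shortcut)
```

In this model the shortcut leaves `f2` with an empty environment, which mirrors the `could not find function "f1"` error raised from inside `f2`.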

### Why are the changes needed?

Bug fix

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Unit tests in `test_utils.R`

Closes #26429 from falaki/SPARK-29777.

Authored-by: Hossein <hossein@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR fixes SparkR lint errors and adds `lint-r` GitHub Action to protect the branch.

### Why are the changes needed?

It turns out that we currently don't run it. It was recovered yesterday. However, since then, our Jenkins linter jobs (`master`/`branch-2.4`) have been broken on `lint-r` tasks.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Pass the GitHub Action on this PR in addition to Jenkins R and AppVeyor R.

Closes #26564 from dongjoon-hyun/SPARK-29936.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

[[SPARK-29376] Upgrade Apache Arrow to version 0.15.1](https://github.com/apache/spark/pull/26133) upgrades to Arrow 0.15 at Scala/Java/Python. This PR aims to upgrade `SparkR` to use Arrow 0.15 API. Currently, it's broken.

### Why are the changes needed?

First of all, it turns out that our Jenkins jobs (including the PR builder) ignore the Arrow tests. Arrow 0.15 has a breaking R API change at [ARROW-5505](https://issues.apache.org/jira/browse/ARROW-5505) and we missed that. AppVeyor was the only one running SparkR Arrow tests, but it's broken now.

**Jenkins**

```

Skipped ------------------------------------------------------------------------

1. createDataFrame/collect Arrow optimization (test_sparkSQL_arrow.R#25)

- arrow not installed

```

Second, Arrow throws OOM on AppVeyor environment (Windows JDK8) like the following because it still has Arrow 0.14.

```

Warnings -----------------------------------------------------------------------

1. createDataFrame/collect Arrow optimization (test_sparkSQL_arrow.R#39) - createDataFrame attempted Arrow optimization because 'spark.sql.execution.arrow.sparkr.enabled' is set to true; however, failed, attempting non-optimization. Reason: Error in handleErrors(returnStatus, conn): java.lang.OutOfMemoryError: Java heap space

at java.nio.HeapByteBuffer.<init>(HeapByteBuffer.java:57)

at java.nio.ByteBuffer.allocate(ByteBuffer.java:335)

at org.apache.arrow.vector.ipc.message.MessageSerializer.readMessage(MessageSerializer.java:669)

at org.apache.spark.sql.execution.arrow.ArrowConverters$$anon$3.readNextBatch(ArrowConverters.scala:243)

```

It is due to the version mismatch.

```java

int messageLength = MessageSerializer.bytesToInt(buffer.array());

if (messageLength == IPC_CONTINUATION_TOKEN) {

buffer.clear();

// ARROW-6313, if the first 4 bytes are continuation message, read the next 4 for the length

if (in.readFully(buffer) == 4) {

messageLength = MessageSerializer.bytesToInt(buffer.array());

}

}

// Length of 0 indicates end of stream

if (messageLength != 0) {

// Read the message into the buffer.

ByteBuffer messageBuffer = ByteBuffer.allocate(messageLength);

```

After upgrading this to 0.15, we are hitting ARROW-5505. This PR upgrades the Arrow version in AppVeyor and fixes the issue.
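The length-prefix handling in the Java excerpt above can be restated as a small standalone sketch. It is a Python paraphrase for illustration, not Arrow's code: the function name `read_message_length` is hypothetical, and it assumes the continuation token is the 32-bit value `-1` (`0xFFFFFFFF`) and that lengths are little-endian 32-bit integers.

```python
import io
import struct

# Assumption: the continuation token is the int32 value -1 (bytes ff ff ff ff).
IPC_CONTINUATION_TOKEN = -1


def read_message_length(stream):
    """Read a message length prefix, skipping a leading continuation token if present."""
    raw = stream.read(4)
    if len(raw) < 4:
        return 0  # treat a truncated stream as end of stream in this sketch
    (length,) = struct.unpack("<i", raw)
    if length == IPC_CONTINUATION_TOKEN:
        # Newer framing: the real length follows the 4-byte token.
        raw = stream.read(4)
        if len(raw) == 4:
            (length,) = struct.unpack("<i", raw)
    return length  # 0 indicates end of stream


new_framing = io.BytesIO(b"\xff\xff\xff\xff" + struct.pack("<i", 16))
old_framing = io.BytesIO(struct.pack("<i", 16))
print(read_message_length(new_framing), read_message_length(old_framing))
```

The value returned here is what the Java code passes to `ByteBuffer.allocate`, so a reader and writer that disagree on the framing could plausibly end up allocating a buffer of the wrong size; the exact path to the heap-space error is not detailed in this description.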

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Pass the AppVeyor.

This PR passed here.

- https://ci.appveyor.com/project/ApacheSoftwareFoundation/spark/builds/28909044

```

SparkSQL Arrow optimization: Spark package found in SPARK_HOME: C:\projects\spark\bin\..

................

```

Closes #26555 from dongjoon-hyun/SPARK-R-TEST.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR aims to add `io.netty.tryReflectionSetAccessible=true` to the testing configuration for JDK11 because this is an officially documented requirement of Apache Arrow.

Apache Arrow community documented this requirement at `0.15.0` ([ARROW-6206](https://github.com/apache/arrow/pull/5078)).

> #### For java 9 or later, should set "-Dio.netty.tryReflectionSetAccessible=true".

> This fixes `java.lang.UnsupportedOperationException: sun.misc.Unsafe or java.nio.DirectByteBuffer.<init>(long, int) not available`. thrown by netty.

### Why are the changes needed?

After ARROW-3191, the Arrow Java library requires the property `io.netty.tryReflectionSetAccessible` to be set to true for JDK >= 9. After https://github.com/apache/spark/pull/26133, the JDK11 Jenkins jobs seem to fail.

- https://amplab.cs.berkeley.edu/jenkins/view/Spark%20QA%20Test%20(Dashboard)/job/spark-master-test-maven-hadoop-3.2-jdk-11/676/

- https://amplab.cs.berkeley.edu/jenkins/view/Spark%20QA%20Test%20(Dashboard)/job/spark-master-test-maven-hadoop-3.2-jdk-11/677/

- https://amplab.cs.berkeley.edu/jenkins/view/Spark%20QA%20Test%20(Dashboard)/job/spark-master-test-maven-hadoop-3.2-jdk-11/678/

```scala

Previous exception in task:

sun.misc.Unsafe or java.nio.DirectByteBuffer.<init>(long, int) not available

io.netty.util.internal.PlatformDependent.directBuffer(PlatformDependent.java:473)

io.netty.buffer.NettyArrowBuf.getDirectBuffer(NettyArrowBuf.java:243)

io.netty.buffer.NettyArrowBuf.nioBuffer(NettyArrowBuf.java:233)

io.netty.buffer.ArrowBuf.nioBuffer(ArrowBuf.java:245)

org.apache.arrow.vector.ipc.message.ArrowRecordBatch.computeBodyLength(ArrowRecordBatch.java:222)

```

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Pass the Jenkins with JDK11.

Closes #26552 from dongjoon-hyun/SPARK-ARROW-JDK11.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This is a followup of https://github.com/apache/spark/pull/23977. I made a mistake related to this line: 3725b1324f (diff-71c2cad03f08cb5f6c70462aa4e28d3aL112)

Previously,

1. the reader iterator for R worker read some initial data eagerly during RDD materialization. So it read the data before actual execution. For some reasons, in this case, it showed standard error from R worker.

2. After that, when error happens during actual execution, stderr wasn't shown: 3725b1324f (diff-71c2cad03f08cb5f6c70462aa4e28d3aL260)

After my change 3725b1324f (diff-71c2cad03f08cb5f6c70462aa4e28d3aL112), it now ignores case 1 and only follows case 2 of the previous code path, because case 1 no longer happens: I removed that eager execution (which is consistent with the PySpark code path).

This PR proposes to always do what case 1 did, both before and after execution, because the R worker may well fail during actual execution, and it is best to show the stderr from the R worker whenever possible.

### Why are the changes needed?

It currently swallows standard error from R worker which makes debugging harder.

### Does this PR introduce any user-facing change?

Yes,

```R

df <- createDataFrame(list(list(n=1)))

collect(dapply(df, function(x) {

stop("asdkjasdjkbadskjbsdajbk")

x

}, structType("a double")))

```

**Before:**

```

Error in handleErrors(returnStatus, conn) :

org.apache.spark.SparkException: Job aborted due to stage failure: Task 0 in stage 13.0 failed 1 times, most recent failure: Lost task 0.0 in stage 13.0 (TID 13, 192.168.35.193, executor driver): org.apache.spark.SparkException: R worker exited unexpectedly (cranshed)

at org.apache.spark.api.r.RRunner$$anon$1.read(RRunner.scala:130)

at org.apache.spark.api.r.BaseRRunner$ReaderIterator.hasNext(BaseRRunner.scala:118)

at scala.collection.Iterator$$anon$10.hasNext(Iterator.scala:458)

at scala.collection.Iterator$$anon$10.hasNext(Iterator.scala:458)

at org.apache.spark.sql.catalyst.expressions.GeneratedClass$GeneratedIteratorForCodegenStage2.processNext(Unknown Source)

at org.apache.spark.sql.execution.BufferedRowIterator.hasNext(BufferedRowIterator.java:43)

at org.apache.spark.sql.execution.WholeStageCodegenExec$$anon$1.hasNext(WholeStageCodegenExec.scala:726)

at org.apache.spark.sql.execution.SparkPlan.$anonfun$getByteArrayRdd$1(SparkPlan.scala:337)

at org.apache.spark.

```

**After:**

```

Error in handleErrors(returnStatus, conn) :

org.apache.spark.SparkException: Job aborted due to stage failure: Task 0 in stage 1.0 failed 1 times, most recent failure: Lost task 0.0 in stage 1.0 (TID 1, 192.168.35.193, executor driver): org.apache.spark.SparkException: R unexpectedly exited.

R worker produced errors: Error in computeFunc(inputData) : asdkjasdjkbadskjbsdajbk

at org.apache.spark.api.r.BaseRRunner$ReaderIterator$$anonfun$1.applyOrElse(BaseRRunner.scala:144)

at org.apache.spark.api.r.BaseRRunner$ReaderIterator$$anonfun$1.applyOrElse(BaseRRunner.scala:137)

at scala.runtime.AbstractPartialFunction.apply(AbstractPartialFunction.scala:38)

at org.apache.spark.api.r.RRunner$$anon$1.read(RRunner.scala:128)

at org.apache.spark.api.r.BaseRRunner$ReaderIterator.hasNext(BaseRRunner.scala:113)

at scala.collection.Iterator$$anon$10.hasNext(Iterator.scala:458)

at scala.collection.Iterator$$anon$10.hasNext(Iterator.scala:458)

at org.apache.spark.sql.catalyst.expressions.GeneratedClass$GeneratedIteratorForCodegen

```

### How was this patch tested?

Manually tested, and a unit test was added.

Closes #26517 from HyukjinKwon/SPARK-26923-followup.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This reverts commit 91d990162f.

### Why are the changes needed?

The CRAN check is pretty important for an R package, so we should enable it.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Unit tests.

Closes #26381 from viirya/revert-SPARK-24152.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR aims to remove `check-cran` from `run-tests.sh`.

We had better add an independent Jenkins job to run `check-cran`.

### Why are the changes needed?

CRAN instability has been a blocker for our daily dev process.

The following simple check causes consecutive failures in 4 of 9 Jenkins jobs + PR builder.

```

* checking CRAN incoming feasibility ...Error in

.check_package_CRAN_incoming(pkgdir) :

dims [product 24] do not match the length of object [0]

```

- spark-branch-2.4-test-sbt-hadoop-2.6

- spark-branch-2.4-test-sbt-hadoop-2.7

- spark-master-test-sbt-hadoop-2.7

- spark-master-test-sbt-hadoop-3.2

- PRBuilder

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Currently, the PR builder is failing due to the above issue. This PR should pass Jenkins.

Closes #26375 from dongjoon-hyun/SPARK-24152.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

To push the built jars to the Maven release repository, we need to remove the 'SNAPSHOT' tag from the version name.

Made the following changes in this PR:

* Update all `3.0.0-SNAPSHOT` version names to `3.0.0-preview`

* Update the SparkR version number check logic to allow JVM versions like `3.0.0-preview`
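The relaxed version check in the second bullet can be sketched as follows. This is a minimal illustration in Java, not the actual SparkR code: the class and method names (`VersionCheck`, `baseVersion`, `versionsMatch`) are hypothetical, and it assumes the check only needs to compare the numeric part of the JVM version with the package version.

```java
public class VersionCheck {
    // Drop any suffix starting with '-', e.g. "3.0.0-SNAPSHOT" or
    // "3.0.0-preview" both become "3.0.0". (Assumed behavior, for illustration.)
    static String baseVersion(String version) {
        int dash = version.indexOf('-');
        return dash < 0 ? version : version.substring(0, dash);
    }

    // True when the JVM-side version, ignoring its suffix, equals the package version.
    static boolean versionsMatch(String jvmVersion, String packageVersion) {
        return baseVersion(jvmVersion).equals(packageVersion);
    }

    public static void main(String[] args) {
        System.out.println(versionsMatch("3.0.0-preview", "3.0.0"));  // true
        System.out.println(versionsMatch("3.0.0-SNAPSHOT", "3.0.0")); // true
        System.out.println(versionsMatch("2.4.4", "3.0.0"));          // false
    }
}
```

Stripping everything after the first `-` rather than a fixed `-SNAPSHOT` string is one way to accept both suffixes without listing them.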

**Please note those changes were generated by the release script in the past, but this time since we manually add tags on master branch, we need to manually apply those changes too.**

We will revert the changes after the 3.0.0-preview release passes.

### Why are the changes needed?

To make the Maven release repository accept the built jars.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

N/A

### What changes were proposed in this pull request?

To push the built jars to the Maven release repository, we need to remove the 'SNAPSHOT' tag from the version name.

Made the following changes in this PR:

* Update all `3.0.0-SNAPSHOT` version names to `3.0.0-preview`

* Update the PySpark version from `3.0.0.dev0` to `3.0.0`

**Please note those changes were generated by the release script in the past, but this time since we manually add tags on master branch, we need to manually apply those changes too.**

We will revert the changes after the 3.0.0-preview release passes.

### Why are the changes needed?

To make the Maven release repository accept the built jars.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

N/A

Closes #26243 from jiangxb1987/3.0.0-preview-prepare.

Lead-authored-by: Xingbo Jiang <xingbo.jiang@databricks.com>

Co-authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Xingbo Jiang <xingbo.jiang@databricks.com>

### What changes were proposed in this pull request?

This PR proposes:

1. To use `is.data.frame` to check if it is a DataFrame.

2. To install Arrow and test Arrow optimization in the AppVeyor build. We're currently not testing this in CI.

### Why are the changes needed?

1. To support SparkR with Arrow 0.14

2. To check if there's any regression and if it works correctly.

### Does this PR introduce any user-facing change?

```r

df <- createDataFrame(mtcars)

collect(dapply(df, function(rdf) { data.frame(rdf$gear + 1) }, structType("gear double")))

```

**Before:**

```

Error in readBin(con, raw(), as.integer(dataLen), endian = "big") :

invalid 'n' argument

```

**After:**

```
  gear
1    5
2    5
3    5
4    4
5    4
6    4
7    4
8    5
9    5
...
```

### How was this patch tested?

AppVeyor

Closes #25993 from HyukjinKwon/arrow-r-appveyor.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Added "array indices start at 1" to the annotation to clarify the usage of the `slice` function in the R, Scala, and Python components.

### Why are the changes needed?

It throws an exception if the value of `start` is 0, whereas array indices start at 0 in most other scenarios.
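The 1-based convention can be illustrated with a small stand-alone sketch. This is not Spark's implementation: `sliceOneBased` is a hypothetical helper, and it only covers positive `start` values plus the rejected `start = 0` case mentioned above.

```java
import java.util.Arrays;
import java.util.List;

public class SliceExample {
    // Returns up to `length` elements beginning at the 1-based position `start`.
    static <T> List<T> sliceOneBased(List<T> xs, int start, int length) {
        if (start == 0) {
            // Mirrors the documented rule: indices start at 1, so 0 is rejected.
            throw new IllegalArgumentException("array indices start at 1");
        }
        if (start < 0 || length < 0) {
            // Simplification of this sketch, not a statement about Spark.
            throw new IllegalArgumentException("sketch handles only positive start and non-negative length");
        }
        int from = Math.min(start - 1, xs.size());
        int to = (int) Math.min((long) from + length, xs.size());
        return xs.subList(from, to);
    }

    public static void main(String[] args) {
        List<Integer> xs = Arrays.asList(10, 20, 30, 40);
        System.out.println(sliceOneBased(xs, 1, 2)); // [10, 20]
        System.out.println(sliceOneBased(xs, 3, 5)); // [30, 40]
    }
}
```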

### Does this PR introduce any user-facing change?

Yes, more information is provided to users.

### How was this patch tested?

No tests added, only doc change.

Closes #25704 from sheepstop/master.

Authored-by: sheepstop <yangting617@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Fitting an ALS model can fail due to nondeterministic input data. Currently the failure surfaces as an ArrayIndexOutOfBoundsException, which does not tell end users what went wrong in fitting.

This patch catches this exception and rethrows a more explanatory one when the input data is nondeterministic.

Because we may not know exactly the output deterministic level of RDDs produced by user code, this patch also adds a note to the Scala/Python/R ALS documentation about the deterministic level of the training data.

### Why are the changes needed?

An ArrayIndexOutOfBoundsException was observed while fitting an ALS model. It was caused by a mismatch between in/out user/item blocks while computing ratings.

If the training RDD output is nondeterministic, then when a fetch failure happens, rerunning part of the training RDD can produce inconsistent user/item blocks.

This patch is needed to notify users about ALS fitting on nondeterministic input.

### Does this PR introduce any user-facing change?

Yes. When fitting an ALS model on nondeterministic input data, previously if a rerun happened, users would see an ArrayIndexOutOfBoundsException caused by a mismatch between in/out user/item blocks.

After this patch, a SparkException with a clearer message is thrown, and the original ArrayIndexOutOfBoundsException is wrapped.
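The catch-and-rethrow pattern described here can be sketched in a self-contained way. The names below (`RethrowExample`, `lookup`, `lookupWithContext`) are hypothetical, and `IllegalStateException` stands in for `SparkException` so the example compiles without Spark on the classpath; it is an illustration of the pattern, not the patch itself.

```java
public class RethrowExample {
    // Hypothetical stand-in for a step that indexes into a block and can
    // fail when blocks are inconsistent.
    static int lookup(int[] block, int index) {
        return block[index];
    }

    static int lookupWithContext(int[] block, int index, boolean inputIsNondeterministic) {
        try {
            return lookup(block, index);
        } catch (ArrayIndexOutOfBoundsException e) {
            if (inputIsNondeterministic) {
                // Wrap the original exception so the cause is preserved,
                // while giving the user a message that points at the input data.
                throw new IllegalStateException(
                    "Fitting failed, possibly because the training data is nondeterministic", e);
            }
            // Otherwise leave the original exception untouched.
            throw e;
        }
    }

    public static void main(String[] args) {
        try {
            lookupWithContext(new int[2], 5, true);
        } catch (IllegalStateException e) {
            System.out.println(e.getMessage());
            System.out.println(e.getCause() instanceof ArrayIndexOutOfBoundsException); // true
        }
    }
}
```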

### How was this patch tested?

Tested on development cluster.

Closes #25789 from viirya/als-indeterminate-input.

Lead-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Co-authored-by: Liang-Chi Hsieh <liangchi@uber.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

JIRA: https://issues.apache.org/jira/browse/SPARK-29050

'a hdfs' changed to 'an hdfs'

'an unique' changed to 'a unique'

'an url' changed to 'a url'

'a error' changed to 'an error'

Closes #25756 from dengziming/feature_fix_typos.

Authored-by: dengziming <dengziming@growingio.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

- Remove SQLContext.createExternalTable and Catalog.createExternalTable, deprecated in favor of createTable since 2.2.0, plus tests of deprecated methods

- Remove HiveContext, deprecated in 2.0.0, in favor of `SparkSession.builder.enableHiveSupport`

- Remove deprecated KinesisUtils.createStream methods, plus tests of deprecated methods, deprecated in 2.2.0

- Remove deprecated MLlib (not Spark ML) linear method support, mostly utility constructors and 'train' methods, and associated docs. This includes methods in LinearRegression, LogisticRegression, Lasso, RidgeRegression. These have been deprecated since 2.0.0

- Remove deprecated Pyspark MLlib linear method support, including LogisticRegressionWithSGD, LinearRegressionWithSGD, LassoWithSGD

- Remove 'runs' argument in KMeans.train() method, which has been a no-op since 2.0.0

- Remove deprecated ChiSqSelector isSorted protected method

- Remove deprecated 'yarn-cluster' and 'yarn-client' master argument in favor of 'yarn' and deploy mode 'cluster', etc

Notes:

- I was not able to remove deprecated DataFrameReader.json(RDD) in favor of DataFrameReader.json(Dataset); the former was deprecated in 2.2.0, but, it is still needed to support Pyspark's .json() method, which can't use a Dataset.

- Looks like SQLContext.createExternalTable was not actually deprecated in Pyspark, but, almost certainly was meant to be? Catalog.createExternalTable was.

- I afterwards noted that the toDegrees, toRadians functions were almost removed fully in SPARK-25908, but Felix suggested keeping just the R version as they hadn't been technically deprecated. I'd like to revisit that. Do we really want the inconsistency? I'm not against reverting it again, but then that implies leaving SQLContext.createExternalTable just in Pyspark too, which seems weird.

- I *kept* LogisticRegressionWithSGD, LinearRegressionWithSGD, LassoWithSGD, RidgeRegressionWithSGD in Pyspark, though deprecated, as it is hard to remove them (still used by StreamingLogisticRegressionWithSGD?) and they are not fully removed in Scala. Maybe should not have been deprecated.

### Why are the changes needed?

Deprecated items are easiest to remove in a major release, so we should do so as much as possible for Spark 3. This does not target items deprecated 'recently' as of Spark 2.3, which is still 18 months old.

### Does this PR introduce any user-facing change?

Yes, in that deprecated items are removed from some public APIs.

### How was this patch tested?

Existing tests.

Closes #25684 from srowen/SPARK-28980.

Lead-authored-by: Sean Owen <sean.owen@databricks.com>

Co-authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

`DataFrameReader.jdbc()` accepts a partition column that is of numeric, date or timestamp type, according to the implementation in `JDBCRelation.scala`. Update the scaladoc accordingly, so that it also matches the documentation in `sql-data-sources-jdbc.md`.

### Why are the changes needed?

scaladoc is incorrect.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

N/A

Closes #25687 from srowen/SPARK-28977.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR adds three more pieces of information:

- Mentions that `bash` must be in `PATH` to build.

- Specifies supported JDK and Maven versions

- Explicitly mentions that building on Windows is not officially supported

### Why are the changes needed?

To enable SparkR developers to work on Windows, and to describe what is needed for the AppVeyor build.

### Does this PR introduce any user-facing change?

No. It just adds some information in `R/WINDOWS.md`

### How was this patch tested?

This is already being tested this way in AppVeyor. I also tested it this way (long ago though).

Closes #25647 from HyukjinKwon/SPARK-28946.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Make the default value of `spark.sql.crossJoin.enabled` true.

### Why are the changes needed?

For implicit cross joins, we can set up a watchdog to cancel the query if it runs for a long time.

When "spark.sql.crossJoin.enabled" is false, because `CheckCartesianProducts` is implemented in the logical plan stage, it may generate mismatched errors that can confuse end users:

* It is done in the logical phase, so we may fail queries that can be executed via broadcast join, which is very fast.

* If we move the check to the physical phase, then a query may succeed at the beginning and start to fail when the table size gets larger (other people insert data into the table). This can be quite confusing.

* The CROSS JOIN syntax doesn't work well if join reordering happens.

* Some non-equi-joins will generate a plan using a cartesian product, but `CheckCartesianProducts` does not detect it and raise an error.

So, to address this in a simpler way, we can turn off showing this cross-join error by default.

For reference, I list some cases that raise mismatched errors here:

Providing:

```

spark.range(2).createOrReplaceTempView("sm1") // can be broadcast

spark.range(50000000).createOrReplaceTempView("bg1") // cannot be broadcast

spark.range(60000000).createOrReplaceTempView("bg2") // cannot be broadcast

```

1) Some joins could be converted to a broadcast nested loop join, but CheckCartesianProducts raises an error, e.g.

```

select sm1.id, bg1.id from bg1 join sm1 where sm1.id < bg1.id

```

2) Some joins will run as CartesianJoin, but CheckCartesianProducts DOES NOT raise an error, e.g.

```

select bg1.id, bg2.id from bg1 join bg2 where bg1.id < bg2.id

```

### Does this PR introduce any user-facing change?

### How was this patch tested?

Closes #25520 from WeichenXu123/SPARK-28621.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR proposes to set minimum and maximum Java version specification. (see https://cran.r-project.org/doc/manuals/r-release/R-exts.html#Writing-portable-packages).

There seems to be no standard way to specify both, given the documentation and other packages (see https://gist.github.com/glin/bd36cf1eb0c7f8b1f511e70e2fb20f8d).

I found two ways from existing packages on CRAN.

```

Package (<= 1 & > 2)

Package (<= 1, > 2)

```

The latter seems closer to other standard notations such as `R (>= 2.14.0), R (>= r56550)`. So I have chosen the latter way.

### Why are the changes needed?

It seems the package might be rejected by CRAN. See https://github.com/apache/spark/pull/25472#issuecomment-522405742

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

JDK 8

```bash
./build/mvn -DskipTests -Psparkr clean package
./R/run-tests.sh
...
basic tests for CRAN: .............
...
```

JDK 11

```bash
./build/mvn -DskipTests -Psparkr -Phadoop-3.2 clean package
./R/run-tests.sh
...
basic tests for CRAN: .............
...
```

Closes #25490 from HyukjinKwon/SPARK-28756.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This updates a URL in the R doc to fix `Had CRAN check errors; see logs`.

### Why are the changes needed?

Currently, this invalid link causes a warning during CRAN incoming feasibility. We had better fix this before submitting `3.0.0/2.4.4/2.3.4`.

**BEFORE**

```
* checking CRAN incoming feasibility ... NOTE
Maintainer: ‘Shivaram Venkataraman <shivaramcs.berkeley.edu>’

Found the following (possibly) invalid URLs:
  URL: https://wiki.apache.org/hadoop/HCFS (moved to https://cwiki.apache.org/confluence/display/hadoop/HCFS)
    From: man/spark.addFile.Rd
    Status: 404
    Message: Not Found
```

**AFTER**

```

* checking CRAN incoming feasibility ... Note_to_CRAN_maintainers

Maintainer: ‘Shivaram Venkataraman <shivaramcs.berkeley.edu>’

```

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Check the warning message during R testing.

```

$ R/install-dev.sh

$ R/run-tests.sh

```

Closes #25483 from dongjoon-hyun/SPARK-28766.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR proposes to increase the tolerance for the exact value comparison in `spark.mlp` test. I don't know the root cause but some tolerance is already expected. I suspect it is not a big deal considering all other tests pass.

The values are fairly close:

JDK 8:

```

-24.28415, 107.8701, 16.86376, 1.103736, 9.244488

```

JDK 11:

```

-24.33892, 108.0316, 16.89082, 1.090723, 9.260533

```
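To make "fairly close" concrete, the sketch below computes the largest absolute gap between the two sets of values listed above and checks it against a tolerance. The class name and the tolerance values are illustrative assumptions only; they are not the tolerance used in the actual test.

```java
public class ToleranceCheck {
    // First five weights reported above for each JDK.
    static final double[] JDK8 = {-24.28415, 107.8701, 16.86376, 1.103736, 9.244488};
    static final double[] JDK11 = {-24.33892, 108.0316, 16.89082, 1.090723, 9.260533};

    // Largest element-wise absolute difference between two equal-length arrays.
    static double maxAbsDiff(double[] a, double[] b) {
        if (a.length != b.length) {
            throw new IllegalArgumentException("length mismatch");
        }
        double max = 0.0;
        for (int i = 0; i < a.length; i++) {
            max = Math.max(max, Math.abs(a[i] - b[i]));
        }
        return max;
    }

    static boolean withinTolerance(double[] a, double[] b, double tol) {
        return maxAbsDiff(a, b) <= tol;
    }

    public static void main(String[] args) {
        System.out.println(maxAbsDiff(JDK8, JDK11));            // about 0.1615
        System.out.println(withinTolerance(JDK8, JDK11, 0.2));  // true
        System.out.println(withinTolerance(JDK8, JDK11, 0.01)); // false
    }
}
```

The largest gap comes from the second weight (107.8701 versus 108.0316), so any absolute tolerance below roughly 0.16 would fail on one of the two JDKs.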

### Why are the changes needed?

To fully support JDK 11. See, for instance, #25443 and #25423 for ongoing efforts.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Manually tested on top of https://github.com/apache/spark/pull/25472 with JDK 11

```bash

./build/mvn -DskipTests -Psparkr -Phadoop-3.2 package

./bin/sparkR

```

```R
absoluteSparkPath <- function(x) {
  sparkHome <- sparkR.conf("spark.home")
  file.path(sparkHome, x)
}
df <- read.df(absoluteSparkPath("data/mllib/sample_multiclass_classification_data.txt"),
              source = "libsvm")
model <- spark.mlp(df, label ~ features, blockSize = 128, layers = c(4, 5, 4, 3),
                   solver = "l-bfgs", maxIter = 100, tol = 0.00001, stepSize = 1, seed = 1)
summary <- summary(model)
head(summary$weights, 5)
```

Closes #25478 from HyukjinKwon/SPARK-28755.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Currently, `checkJavaVersion` only accepts JDK8 because it compares with the number in `SystemRequirements`. This PR changes it to accept higher versions, too.
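The difference between an exact match and a minimum-version check can be sketched as below. This is an illustration in Java rather than the R code of `checkJavaVersion`; the names `majorVersion` and `isSupported` are hypothetical, and the parsing assumes the usual `1.x` naming for Java 8 and earlier.

```java
public class JavaVersionCheck {
    // Extracts the major version: "1.8.0_222" -> 8, "11.0.4" -> 11.
    static int majorVersion(String version) {
        String[] parts = version.split("[._\\-+]");
        int first = Integer.parseInt(parts[0]);
        // Java 8 and earlier report themselves as 1.x, so use the second component.
        return (first == 1 && parts.length > 1) ? Integer.parseInt(parts[1]) : first;
    }

    // Accepts the minimum version and anything newer, instead of an exact match.
    static boolean isSupported(String version, int minimumMajor) {
        return majorVersion(version) >= minimumMajor;
    }

    public static void main(String[] args) {
        System.out.println(isSupported("1.8.0_222", 8)); // true
        System.out.println(isSupported("11.0.4", 8));    // true
        System.out.println(isSupported("1.7.0_80", 8));  // false
    }
}
```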

### Why are the changes needed?

Without this, two test suites are skipped in a JDK11 environment due to this check.

**BEFORE**

```
$ build/mvn -Phadoop-3.2 -Psparkr -DskipTests package
$ R/install-dev.sh
$ R/run-tests.sh
...
basic tests for CRAN: SS

Skipped ------------------------------------------------------------------------
1. create DataFrame from list or data.frame (test_basic.R#21) - error on Java check
2. spark.glm and predict (test_basic.R#57) - error on Java check

DONE ===========================================================================
```

**AFTER**

```

basic tests for CRAN: .............

DONE ===========================================================================

```

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Manually built and tested on JDK11.

Closes #25472 from dongjoon-hyun/SPARK-28756.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

In the PR, I propose to use `uuuu` for years instead of `yyyy` in date/timestamp patterns without the era pattern `G` (https://docs.oracle.com/javase/8/docs/api/java/time/format/DateTimeFormatter.html). **Parsing/formatting of positive years (current era) will be the same.** The difference is in formatting negative years, which belong to the previous era, BC (Before Christ).

I replaced the `yyyy` pattern by `uuuu` everywhere except:

1. Test, Suite & Benchmark. Existing tests must work as is.

2. `SimpleDateFormat` because it doesn't support the `uuuu` pattern.

3. Comments and examples (except comments related to already replaced patterns).

Before the changes, the year `100` of the common era and the year `-99` of the BC era were both shown as `100`. After the changes, negative years are formatted with the `-` sign.

Before:

```Scala
scala> Seq(java.time.LocalDate.of(-99, 1, 1)).toDF().show
+----------+
|     value|
+----------+
|0100-01-01|
+----------+
```

After:

```Scala
scala> Seq(java.time.LocalDate.of(-99, 1, 1)).toDF().show
+-----------+
|      value|
+-----------+
|-0099-01-01|
+-----------+
```
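The same difference can be reproduced with plain `java.time`, independent of Spark. The sketch below only assumes the JDK's `DateTimeFormatter`; the class name `YearPatternExample` and the second sample date are made up for illustration.

```java
import java.time.LocalDate;
import java.time.format.DateTimeFormatter;

public class YearPatternExample {
    static String format(String pattern, LocalDate date) {
        return DateTimeFormatter.ofPattern(pattern).format(date);
    }

    public static void main(String[] args) {
        LocalDate bc = LocalDate.of(-99, 1, 1);
        LocalDate ad = LocalDate.of(2019, 7, 1);

        // 'y' is year-of-era: proleptic year -99 is year 100 of the BC era,
        // and the era itself is lost without the 'G' pattern letter.
        System.out.println(format("yyyy-MM-dd", bc)); // 0100-01-01
        // 'u' is the proleptic year, so the sign is kept.
        System.out.println(format("uuuu-MM-dd", bc)); // -0099-01-01

        // Positive years format identically with both patterns.
        System.out.println(format("yyyy-MM-dd", ad)); // 2019-07-01
        System.out.println(format("uuuu-MM-dd", ad)); // 2019-07-01
    }
}
```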

## How was this patch tested?

By existing test suites, and added tests for negative years to `DateFormatterSuite` and `TimestampFormatterSuite`.

Closes #25230 from MaxGekk/year-pattern-uuuu.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Change the format of the build command in the README to start with a `./` prefix:

    ./build/mvn -DskipTests clean package

This increases stylistic consistency across the README: all the other commands have a `./` prefix. Having a visible `./` prefix also makes it clear to the user that the shell command requires the current working directory to be the repository root.

## How was this patch tested?

README.md was reviewed both in raw markdown and in the GitHub rendered landing page for stylistic consistency.

Closes #25231 from Mister-Meeseeks/master.

Lead-authored-by: Douglas R Colkitt <douglas.colkitt@gmail.com>

Co-authored-by: Mister-Meeseeks <douglas.colkitt@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

The new R API of Arrow has removed `as_tibble` as of 2ef96c8623. Arrow optimization for DataFrame in R doesn't work due to this change.

This can be tested as below, after installing latest Arrow:

```

./bin/sparkR --conf spark.sql.execution.arrow.sparkr.enabled=true

```

```

> collect(createDataFrame(mtcars))

```

Before this PR:

```

> collect(createDataFrame(mtcars))

Error in get("as_tibble", envir = asNamespace("arrow")) :

object 'as_tibble' not found

```

After:

```
> collect(createDataFrame(mtcars))
    mpg cyl  disp  hp drat    wt  qsec vs am gear carb
1  21.0   6 160.0 110 3.90 2.620 16.46  0  1    4    4
2  21.0   6 160.0 110 3.90 2.875 17.02  0  1    4    4
3  22.8   4 108.0  93 3.85 2.320 18.61  1  1    4    1
...
```

## How was this patch tested?

Manual test.

Closes #25012 from viirya/SPARK-28215.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>