## What changes were proposed in this pull request?

Convert `PartitionedFile.filePath` to URI first in binary file data source. Otherwise Spark will throw a FileNotFound exception because we create `Path` with URL encoded string, instead of wrapping it with URI.

## How was this patch tested?

Unit test.

Closes#24855 from mengxr/SPARK-28030.

Authored-by: Xiangrui Meng <meng@databricks.com>

Signed-off-by: Xiangrui Meng <meng@databricks.com>

## What changes were proposed in this pull request?

For Apache Spark 3.0.0 release, this PR aims to update Kafka dependency to 2.2.1 to bring the following improvement and bug fixes like [KAFKA-8134](https://issues.apache.org/jira/browse/KAFKA-8134) (`'linger.ms' must be a long`).

https://issues.apache.org/jira/projects/KAFKA/versions/12345010

## How was this patch tested?

Pass the Jenkins.

Closes#24847 from dongjoon-hyun/SPARK-28013.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

`NestedColumnAliasing` rule covers `GetStructField` only, currently. It means that some nested field extraction expressions aren't pruned. For example, if only accessing a nested field in an array of struct (`GetArrayStructFields`), this column isn't pruned.

This patch extends the rule to cover general nested field cases, including `GetArrayStructFields`.

## How was this patch tested?

Added tests.

Closes#24599 from viirya/nested-pruning-extract-value.

Lead-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Co-authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

Current Spark SQL parser can have pretty confusing error messages when parsing an incorrect SELECT SQL statement. The proposed fix has the following effect.

BEFORE:

```

spark-sql> SELECT * FROM test WHERE x NOT NULL;

Error in query:

mismatched input 'FROM' expecting {<EOF>, 'CLUSTER', 'DISTRIBUTE', 'EXCEPT', 'GROUP', 'HAVING', 'INTERSECT', 'LATERAL', 'LIMIT', 'ORDER', 'MINUS', 'SORT', 'UNION', 'WHERE', 'WINDOW'}(line 1, pos 9)

== SQL ==

SELECT * FROM test WHERE x NOT NULL

---------^^^

```

where in fact the error message should be hinted to be near `NOT NULL`.

AFTER:

```

spark-sql> SELECT * FROM test WHERE x NOT NULL;

Error in query:

mismatched input 'NOT' expecting {<EOF>, 'AND', 'CLUSTER', 'DISTRIBUTE', 'EXCEPT', 'GROUP', 'HAVING', 'INTERSECT', 'LIMIT', 'OR', 'ORDER', 'MINUS', 'SORT', 'UNION', 'WINDOW'}(line 1, pos 27)

== SQL ==

SELECT * FROM test WHERE x NOT NULL

---------------------------^^^

```

In fact, this problem is brought by some problematic Spark SQL grammar. There are two kinds of SELECT statements that are supported by Hive (and thereby supported in SparkSQL):

* `FROM table SELECT blahblah SELECT blahblah`

* `SELECT blah FROM table`

*Reference* [HiveQL single-from stmt grammar](https://github.com/apache/hive/blob/master/ql/src/java/org/apache/hadoop/hive/ql/parse/HiveParser.g)

It is fine when these two SELECT syntaxes are supported separately. However, since we are currently supporting these two kinds of syntaxes in a single ANTLR rule, this can be problematic and therefore leading to confusing parser errors. This is because when a SELECT clause was parsed, it can't tell whether the following FROM clause actually belongs to it or is just the beginning of a new `FROM table SELECT *` statement.

## What changes were proposed in this pull request?

1. Modify ANTLR grammar to fix the above-mentioned problem. This fix is important because the previous problematic grammar does affect a lot of real-world queries. Due to the previous problematic and messy grammar, we refactored the grammar related to `querySpecification`.

2. Modify `AstBuilder` to have separate visitors for `SELECT ... FROM ...` and `FROM ... SELECT ...` statements.

3. Drop the `FROM table` statement, which is supported by accident and is actually parsed in the wrong code path. Both Hive and Presto do not support this syntax.

## How was this patch tested?

Existing UTs and new UTs.

Closes#24809 from yeshengm/parser-refactor.

Authored-by: Yesheng Ma <kimi.ysma@gmail.com>

Signed-off-by: Xingbo Jiang <xingbo.jiang@databricks.com>

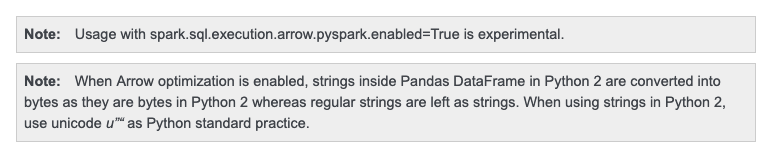

## What changes were proposed in this pull request?

When Arrow optimization is enabled in Python 2.7,

```python

import pandas

pdf = pandas.DataFrame(["test1", "test2"])

df = spark.createDataFrame(pdf)

df.show()

```

I got the following output:

```

+----------------+

| 0|

+----------------+

|[74 65 73 74 31]|

|[74 65 73 74 32]|

+----------------+

```

This looks because Python's `str` and `byte` are same. it does look right:

```python

>>> str == bytes

True

>>> isinstance("a", bytes)

True

```

To cut it short:

1. Python 2 treats `str` as `bytes`.

2. PySpark added some special codes and hacks to recognizes `str` as string types.

3. PyArrow / Pandas followed Python 2 difference

To fix, we have two options:

1. Fix it to match the behaviour to PySpark's

2. Note the differences

but Python 2 is deprecated anyway. I think it's better to just note it and for go option 2.

## How was this patch tested?

Manually tested.

Doc was checked too:

Closes#24838 from HyukjinKwon/SPARK-27995.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

The new Spark ThriftServer SparkGetTablesOperation implemented in https://github.com/apache/spark/pull/22794 does a catalog.getTableMetadata request for every table. This can get very slow for large schemas (~50ms per table with an external Hive metastore).

Hive ThriftServer GetTablesOperation uses HiveMetastoreClient.getTableObjectsByName to get table information in bulk, but we don't expose that through our APIs that go through Hive -> HiveClientImpl (HiveClient) -> HiveExternalCatalog (ExternalCatalog) -> SessionCatalog.

If we added and exposed getTableObjectsByName through our catalog APIs, we could resolve that performance problem in SparkGetTablesOperation.

## How was this patch tested?

Add UT

Closes#24774 from LantaoJin/SPARK-27899.

Authored-by: LantaoJin <jinlantao@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This is a second try of #24824.

Since Apache Spark 2.0.0, SPARK-14867 deprecated `--force` option and made it ignored. This PR cleans up the related code completely at 3.0.0.

**BEFORE (Jenkins)**

```

========================================================================

Building Spark

========================================================================

[info] Building Spark using Maven with these arguments: -Phadoop-2.7 -Pkubernetes -Phive-thriftserver -Pkinesis-asl -Pyarn -Pspark-ganglia-lgpl -Phive -Pmesos clean package -DskipTests

WARNING: '--force' is deprecated and ignored.

...

========================================================================

Running Spark unit tests

========================================================================

[info] Running Spark tests using Maven with these arguments: -Phadoop-2.7 -Phive-thriftserver -Phive -Dtest.exclude.tags=org.apache.spark.tags.ExtendedHiveTest,org.apache.spark.tags.ExtendedYarnTest test --fail-at-end

WARNING: '--force' is deprecated and ignored.

```

**AFTER (Jenkins)**

```

========================================================================

Building Spark

========================================================================

[info] Building Spark using Maven with these arguments: -Phadoop-2.7 -Pkubernetes -Phive-thriftserver -Pkinesis-asl -Pyarn -Pspark-ganglia-lgpl -Phive -Pmesos clean package -DskipTests

...

========================================================================

Running Spark unit tests

========================================================================

[info] Running Spark tests using Maven with these arguments: -Phadoop-2.7 -Pkubernetes -Phive-thriftserver -Pyarn -Pspark-ganglia-lgpl -Phive -Pkinesis-asl -Pmesos -Dtest.exclude.tags=org.apache.spark.tags.ExtendedHiveTest,org.apache.spark.tags.ExtendedYarnTest test --fail-at-end

```

## How was this patch tested?

Manually check the Jenkins logs.

Closes#24833 from dongjoon-hyun/SPARK-FORCE-2.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Handle the case when ParsedStatement subclass has a Map field but not of type Map[String, String].

In ParsedStatement.productIterator, `case mapArg: Map[_, _]` can match any Map type due to type erasure, thus causing `asInstanceOf[Map[String, String]]` to throw ClassCastException.

The following test reproduces the issue:

```

case class TestStatement(p: Map[String, Int]) extends ParsedStatement {

override def output: Seq[Attribute] = Nil

override def children: Seq[LogicalPlan] = Nil

}

TestStatement(Map("abc" -> 1)).toString

```

Changing the code to `case mapArg: Map[String, String]` will not help due to type erasure. As a matter of fact, compiler gives this warning:

```

Warning:(41, 18) non-variable type argument String in type pattern

scala.collection.immutable.Map[String,String] (the underlying of Map[String,String])

is unchecked since it is eliminated by erasure

case mapArg: Map[String, String] =>

```

## How was this patch tested?

Add 2 unit tests.

Closes#24800 from jzhuge/SPARK-27947.

Authored-by: John Zhuge <jzhuge@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

For caseWhen Object canonicalized is not handled

for e.g let's consider below CaseWhen Object

val attrRef = AttributeReference("ACCESS_CHECK", StringType)()

val caseWhenObj1 = CaseWhen(Seq((attrRef, Literal("A"))))

caseWhenObj1.canonicalized **ouput** is as below

CASE WHEN ACCESS_CHECK#0 THEN A END (**Before Fix)**

**After Fix** : CASE WHEN none#0 THEN A END

So when there will be aliasref like below statements, semantic equals will fail. Sematic equals returns true if the canonicalized form of both the expressions are same.

val attrRef = AttributeReference("ACCESS_CHECK", StringType)()

val aliasAttrRef = attrRef.withName("access_check")

val caseWhenObj1 = CaseWhen(Seq((attrRef, Literal("A"))))

val caseWhenObj2 = CaseWhen(Seq((aliasAttrRef, Literal("A"))))

**assert(caseWhenObj2.semanticEquals(caseWhenObj1.semanticEquals) fails**

**caseWhenObj1.canonicalized**

Before Fix:CASE WHEN ACCESS_CHECK#0 THEN A END

After Fix: CASE WHEN none#0 THEN A END

**caseWhenObj2.canonicalized**

Before Fix:CASE WHEN access_check#0 THEN A END

After Fix: CASE WHEN none#0 THEN A END

## How was this patch tested?

Added UT

Closes#24766 from sandeep-katta/caseWhenIssue.

Authored-by: sandeep katta <sandeep.katta2007@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Hadoop `Configuration` has an internal `properties` map which is lazily initialized. Initialization of this field, done in the private `Configuration.getProps()` method, is rather expensive because it ends up parsing XML configuration files. When cloning a `Configuration`, this `properties` field is cloned if it has been initialized.

In some cases it's possible that `sc.hadoopConfiguration` never ends up computing this `properties` field, leading to performance problems when this configuration is cloned in `SessionState.newHadoopConf()` because each cloned `Configuration` needs to re-parse configuration XML files from disk.

To avoid this problem, we can call `Configuration.size()` to trigger a call to `getProps()`, ensuring that this expensive computation is cached and re-used when cloning configurations.

I discovered this problem while performance profiling the Spark ThriftServer while running a SQL fuzzing workload.

## How was this patch tested?

Examined YourKit profiles before and after my change.

Closes#24714 from JoshRosen/fuzzing-perf-improvements.

Authored-by: Josh Rosen <rosenville@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Fixes the service account inconsistency that breaks pull secrets. It gives the option to the user to setup a specific service account for the executors if he has to

(via `spark.kubernetes.authenticate.executor.serviceAccountName`). Defaults to the driver's one.

We are not supporting special authentication credentials for the executors with this PR.

## How was this patch tested?

Tested manually by launching a Spark job exercising the introduced settings.

Added a new integration tests for this fix.

Closes#24748 from skonto/fix_executor_sa.

Authored-by: Stavros Kontopoulos <stavros.kontopoulos@lightbend.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This PR introduces the necessary Maven modules for the new [Spark Graph](https://issues.apache.org/jira/browse/SPARK-25994) feature for Spark 3.0.

* `spark-graph` is a parent module that users depend on to get all graph functionalities (Cypher and Graph Algorithms)

* `spark-graph-api` defines the [Property Graph API](https://docs.google.com/document/d/1Wxzghj0PvpOVu7XD1iA8uonRYhexwn18utdcTxtkxlI) that is being shared between Cypher and Algorithms

* `spark-cypher` contains a Cypher query engine implementation

Both, `spark-graph-api` and `spark-cypher` depend on Spark SQL.

Note, that the Maven module for Graph Algorithms is not part of this PR and will be introduced in https://issues.apache.org/jira/browse/SPARK-27302

A PoC for a running Cypher implementation can be found in this WIP PR https://github.com/apache/spark/pull/24297

## How was this patch tested?

Pass the Jenkins with all profiles and manually build and check the followings.

```

$ ls assembly/target/scala-2.12/jars/spark-cypher*

assembly/target/scala-2.12/jars/spark-cypher_2.12-3.0.0-SNAPSHOT.jar

$ ls assembly/target/scala-2.12/jars/spark-graph* | grep -v graphx

assembly/target/scala-2.12/jars/spark-graph-api_2.12-3.0.0-SNAPSHOT.jar

assembly/target/scala-2.12/jars/spark-graph_2.12-3.0.0-SNAPSHOT.jar

```

Closes#24490 from s1ck/SPARK-27300.

Lead-authored-by: Martin Junghanns <martin.junghanns@neotechnology.com>

Co-authored-by: Max Kießling <max@kopfueber.org>

Co-authored-by: Martin Junghanns <martin.junghanns@neo4j.com>

Co-authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR is the same fix as https://github.com/apache/spark/pull/24816 but in vectorized `dapply` in SparkR.

## How was this patch tested?

Manually tested.

Closes#24818 from HyukjinKwon/SPARK-27971.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR aims to remove the following warnings for `java.nio.Bits.unaligned` at JDK9/10/11/12. Please note that there are more warnings which is beyond of this PR's scope. JDK9+ shows the first warning only if you don't give `--illegal-access=warn`.

**BEFORE (Among 5 warnings, there is `java.nio.Bits.unaligned` warning at the startup)**

```

$ bin/spark-shell --driver-java-options=--illegal-access=warn

WARNING: Illegal reflective access by org.apache.spark.unsafe.Platform (file:/Users/dhyun/APACHE/spark/assembly/target/scala-2.12/jars/spark-unsafe_2.12-3.0.0-SNAPSHOT.jar) to method java.nio.Bits.unaligned()

WARNING: Illegal reflective access by org.apache.spark.unsafe.Platform (file:/Users/dhyun/APACHE/spark/assembly/target/scala-2.12/jars/spark-unsafe_2.12-3.0.0-SNAPSHOT.jar) to constructor java.nio.DirectByteBuffer(long,int)

WARNING: Illegal reflective access by org.apache.spark.unsafe.Platform (file:/Users/dhyun/APACHE/spark/assembly/target/scala-2.12/jars/spark-unsafe_2.12-3.0.0-SNAPSHOT.jar) to field java.nio.DirectByteBuffer.cleaner

WARNING: Illegal reflective access by org.apache.hadoop.security.authentication.util.KerberosUtil (file:/Users/dhyun/APACHE/spark/assembly/target/scala-2.12/jars/hadoop-auth-2.7.4.jar) to method sun.security.krb5.Config.getInstance()

WARNING: Illegal reflective access by org.apache.hadoop.security.authentication.util.KerberosUtil (file:/Users/dhyun/APACHE/spark/assembly/target/scala-2.12/jars/hadoop-auth-2.7.4.jar) to method sun.security.krb5.Config.getDefaultRealm()

19/06/08 11:01:19 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

Spark context Web UI available at http://localhost:4040

Spark context available as 'sc' (master = local[*], app id = local-1560016882712).

Spark session available as 'spark'.

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 3.0.0-SNAPSHOT

/_/

Using Scala version 2.12.8 (OpenJDK 64-Bit Server VM, Java 11.0.3)

```

**AFTER (Among 4 warnings, there is no `java.nio.Bits.unaligned` warning with `hadoop-2.7` profile)**

```

$ bin/spark-shell --driver-java-options=--illegal-access=warn

WARNING: Illegal reflective access by org.apache.spark.unsafe.Platform (file:/Users/dhyun/PRS/PLATFORM/assembly/target/scala-2.12/jars/spark-unsafe_2.12-3.0.0-SNAPSHOT.jar) to constructor java.nio.DirectByteBuffer(long,int)

WARNING: Illegal reflective access by org.apache.spark.unsafe.Platform (file:/Users/dhyun/PRS/PLATFORM/assembly/target/scala-2.12/jars/spark-unsafe_2.12-3.0.0-SNAPSHOT.jar) to field java.nio.DirectByteBuffer.cleaner

WARNING: Illegal reflective access by org.apache.hadoop.security.authentication.util.KerberosUtil (file:/Users/dhyun/PRS/PLATFORM/assembly/target/scala-2.12/jars/hadoop-auth-2.7.4.jar) to method sun.security.krb5.Config.getInstance()

WARNING: Illegal reflective access by org.apache.hadoop.security.authentication.util.KerberosUtil (file:/Users/dhyun/PRS/PLATFORM/assembly/target/scala-2.12/jars/hadoop-auth-2.7.4.jar) to method sun.security.krb5.Config.getDefaultRealm()

19/06/08 11:08:27 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

Spark context Web UI available at http://localhost:4040

Spark context available as 'sc' (master = local[*], app id = local-1560017311171).

Spark session available as 'spark'.

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 3.0.0-SNAPSHOT

/_/

Using Scala version 2.12.8 (OpenJDK 64-Bit Server VM, Java 11.0.3)

```

**AFTER (Among 2 warnings, there is no `java.nio.Bits.unaligned` warning with `hadoop-3.2` profile)**

```

$ bin/spark-shell --driver-java-options=--illegal-access=warn

WARNING: Illegal reflective access by org.apache.spark.unsafe.Platform (file:/Users/dhyun/PRS/PLATFORM/assembly/target/scala-2.12/jars/spark-unsafe_2.12-3.0.0-SNAPSHOT.jar) to constructor java.nio.DirectByteBuffer(long,int)

WARNING: Illegal reflective access by org.apache.spark.unsafe.Platform (file:/Users/dhyun/PRS/PLATFORM/assembly/target/scala-2.12/jars/spark-unsafe_2.12-3.0.0-SNAPSHOT.jar) to field java.nio.DirectByteBuffer.cleaner

19/06/08 10:52:06 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

Spark context Web UI available at http://localhost:4040

Spark context available as 'sc' (master = local[*], app id = local-1560016330287).

Spark session available as 'spark'.

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 3.0.0-SNAPSHOT

/_/

Using Scala version 2.12.8 (OpenJDK 64-Bit Server VM, Java 11.0.3)

...

```

## How was this patch tested?

Manual. Run Spark command like `spark-shell` with `--driver-java-options=--illegal-access=warn` option in JDK9/10/11/12 environment.

Closes#24825 from dongjoon-hyun/SPARK-27981.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

The newly added `Refresh` method in PR #24401 prevented the work of moving DataSourceV2Relation into catalyst. It calls `case table: FileTable => table.fileIndex.refresh()` while `FileTable` belongs to sql/core.

More importantly, Ryan Blue pointed out DataSourceV2Relation is immutable by design, it should not have refresh method.

## How was this patch tested?

Unit test

Closes#24815 from gengliangwang/removeRefreshTable.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Since Apache Spark 2.0.0, SPARK-14867 deprecated `--force` option and made it ignored. This PR cleans up the related code completely at 3.0.0.

**BEFORE (Jenkins)**

```

========================================================================

Building Spark

========================================================================

[info] Building Spark using Maven with these arguments: -Phadoop-2.7 -Pkubernetes -Phive-thriftserver -Pkinesis-asl -Pyarn -Pspark-ganglia-lgpl -Phive -Pmesos clean package -DskipTests

WARNING: '--force' is deprecated and ignored.

...

========================================================================

Running Spark unit tests

========================================================================

[info] Running Spark tests using Maven with these arguments: -Phadoop-2.7 -Phive-thriftserver -Phive -Dtest.exclude.tags=org.apache.spark.tags.ExtendedHiveTest,org.apache.spark.tags.ExtendedYarnTest test --fail-at-end

WARNING: '--force' is deprecated and ignored.

```

**AFTER (Jenkins)**

```

========================================================================

Building Spark

========================================================================

[info] Building Spark using Maven with these arguments: -Phadoop-2.7 -Pkubernetes -Phive-thriftserver -Pkinesis-asl -Pyarn -Pspark-ganglia-lgpl -Phive -Pmesos clean package -DskipTests

...

========================================================================

Running Spark unit tests

========================================================================

[info] Running Spark tests using Maven with these arguments: -Phadoop-2.7 -Pkubernetes -Phive-thriftserver -Pyarn -Pspark-ganglia-lgpl -Phive -Pkinesis-asl -Pmesos -Dtest.exclude.tags=org.apache.spark.tags.ExtendedHiveTest,org.apache.spark.tags.ExtendedYarnTest test --fail-at-end

```

## How was this patch tested?

Manually check the Jenkins logs.

Closes#24824 from dongjoon-hyun/SPARK-27979.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

It seems that some users are using Hive 3.0.0. This pr makes it support Hive 3.0 metastore.

## How was this patch tested?

unit tests

Closes#24688 from wangyum/SPARK-26145.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Flush batch timely for pandas UDF.

This could improve performance when multiple pandas UDF plans are pipelined.

When batch being flushed in time, downstream pandas UDFs will get pipelined as soon as possible, and pipeline will help hide the donwstream UDFs computation time. For example:

When the first UDF start computing on batch-3, the second pipelined UDF can start computing on batch-2, and the third pipelined UDF can start computing on batch-1.

If we do not flush each batch in time, the donwstream UDF's pipeline will lag behind too much, which may increase the total processing time.

I add flush at two places:

* JVM process feed data into python worker. In jvm side, when write one batch, flush it

* VM process read data from python worker output, In python worker side, when write one batch, flush it

If no flush, the default buffer size for them are both 65536. Especially in the ML case, in order to make realtime prediction, we will make batch size very small. The buffer size is too large for the case, which cause downstream pandas UDF pipeline lag behind too much.

### Note

* This is only applied to pandas scalar UDF.

* Do not flush for each batch. The minimum interval between two flush is 0.1 second. This avoid too frequent flushing when batch size is small. It works like:

```

last_flush_time = time.time()

for batch in iterator:

writer.write_batch(batch)

flush_time = time.time()

if self.flush_timely and (flush_time - last_flush_time > 0.1):

stream.flush()

last_flush_time = flush_time

```

## How was this patch tested?

### Benchmark to make sure the flush do not cause performance regression

#### Test code:

```

numRows = ...

batchSize = ...

spark.conf.set('spark.sql.execution.arrow.maxRecordsPerBatch', str(batchSize))

df = spark.range(1, numRows + 1, numPartitions=1).select(col('id').alias('a'))

pandas_udf("int", PandasUDFType.SCALAR)

def fp1(x):

return x + 10

beg_time = time.time()

result = df.select(sum(fp1('a'))).head()

print("result: " + str(result[0]))

print("consume time: " + str(time.time() - beg_time))

```

#### Test Result:

params | Consume time (Before) | Consume time (After)

------------ | ----------------------- | ----------------------

numRows=100000000, batchSize=10000 | 23.43s | 24.64s

numRows=100000000, batchSize=1000 | 36.73s | 34.50s

numRows=10000000, batchSize=100 | 35.67s | 32.64s

numRows=1000000, batchSize=10 | 33.60s | 32.11s

numRows=100000, batchSize=1 | 33.36s | 31.82s

### Benchmark pipelined pandas UDF

#### Test code:

```

spark.conf.set('spark.sql.execution.arrow.maxRecordsPerBatch', '1')

df = spark.range(1, 31, numPartitions=1).select(col('id').alias('a'))

pandas_udf("int", PandasUDFType.SCALAR)

def fp1(x):

print("run fp1")

time.sleep(1)

return x + 100

pandas_udf("int", PandasUDFType.SCALAR)

def fp2(x, y):

print("run fp2")

time.sleep(1)

return x + y

beg_time = time.time()

result = df.select(sum(fp2(fp1('a'), col('a')))).head()

print("result: " + str(result[0]))

print("consume time: " + str(time.time() - beg_time))

```

#### Test Result:

**Before**: consume time: 63.57s

**After**: consume time: 32.43s

**So the PR improve performance by make downstream UDF get pipelined early.**

Please review https://spark.apache.org/contributing.html before opening a pull request.

Closes#24734 from WeichenXu123/improve_pandas_udf_pipeline.

Lead-authored-by: WeichenXu <weichen.xu@databricks.com>

Co-authored-by: Xiangrui Meng <meng@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

When using `from_avro` to deserialize avro data to catalyst StructType format, if `ConvertToLocalRelation` is applied at the time, `from_avro` produces only the last value (overriding previous values).

The cause is `AvroDeserializer` reuses output row for StructType. Normally, it should be fine in Spark SQL. But `ConvertToLocalRelation` just uses `InterpretedProjection` to project local rows. `InterpretedProjection` creates new row for each output thro, it includes the same nested row object from `AvroDeserializer`. By the end, converted local relation has only last value.

I think there're two possible options:

1. Make `AvroDeserializer` output new row for StructType.

2. Use `InterpretedMutableProjection` in `ConvertToLocalRelation` and call `copy()` on output rows.

Option 2 is chose because previously `ConvertToLocalRelation` also creates new rows, this `InterpretedMutableProjection` + `copy()` shoudn't bring too much performance penalty. `ConvertToLocalRelation` should be arguably less critical, compared with `AvroDeserializer`.

## How was this patch tested?

Added test.

Closes#24805 from viirya/SPARK-27798.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Usage: DirectKafkaWordCount <brokers> <topics>

--

<brokers> is a list of one or more Kafka brokers

<groupId> is a consumer group name to consume from topics

<topics> is a list of one or more kafka topics to consume from

## How was this patch tested?

N/A.

Please review https://spark.apache.org/contributing.html before opening a pull request.

Closes#24819 from cnZach/minor_DirectKafkaWordCount_UsageWithGroupId.

Authored-by: Yuexin Zhang <zach.yx.zhang@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Add extractors for v2 catalog transforms.

These extractors are used to match transforms that are equivalent to Spark's internal case classes. This makes it easier to work with v2 transforms.

## How was this patch tested?

Added test suite for the new extractors.

Closes#24812 from rdblue/SPARK-27965-add-transform-extractors.

Authored-by: Ryan Blue <blue@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

In PR https://github.com/apache/spark/pull/24365, we pass in the partitionBy columns as options in `DataFrameWriter`. To make this change less intrusive for a patch release, we added a feature flag `LEGACY_PASS_PARTITION_BY_AS_OPTIONS` with the default to be false.

For 3.0, we should just do the correct behavior for DSV1, i.e., always passing partitionBy as options, and remove this legacy feature flag.

## How was this patch tested?

Existing tests.

Closes#24784 from liwensun/SPARK-27453-default.

Authored-by: liwensun <liwen.sun@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Issued fixed in https://github.com/apache/spark/pull/24734 but that PR might takes longer to merge.

## How was this patch tested?

It should pass existing unit tests.

Closes#24816 from mengxr/SPARK-27968.

Authored-by: Xiangrui Meng <meng@databricks.com>

Signed-off-by: Xiangrui Meng <meng@databricks.com>

## What changes were proposed in this pull request?

Change the resource config spark.{executor/driver}.resource.{resourceName}.count to .amount to allow future usage of containing both a count and a unit. Right now we only support counts - # of gpus for instance, but in the future we may want to support units for things like memory - 25G. I think making the user only have to specify a single config .amount is better then making them specify 2 separate configs of a .count and then a .unit. Change it now since its a user facing config.

Amount also matches how the spark on yarn configs are setup.

## How was this patch tested?

Unit tests and manually verified on yarn and local cluster mode

Closes#24810 from tgravescs/SPARK-27760-amount.

Authored-by: Thomas Graves <tgraves@nvidia.com>

Signed-off-by: Thomas Graves <tgraves@apache.org>

## What changes were proposed in this pull request?

Move methods that implement v2 catalog operations to CatalogV2Util so they can be used in #24768.

## How was this patch tested?

Behavior is validated by existing tests.

Closes#24813 from rdblue/SPARK-27964-add-catalog-v2-util.

Authored-by: Ryan Blue <blue@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

I believe the log message: `Committer $committerClass is not a ParquetOutputCommitter and cannot create job summaries. Set Parquet option ${ParquetOutputFormat.JOB_SUMMARY_LEVEL} to NONE.` is at odds with the `if` statement that logs the warning. Despite the instructions in the warning, users still encounter the warning if `JOB_SUMMARY_LEVEL` is already set to `NONE`.

This pull request introduces a change to skip logging the warning if `JOB_SUMMARY_LEVEL` is set to `NONE`.

## How was this patch tested?

I built to make sure everything still compiled and I ran the existing test suite. I didn't feel it was worth the overhead to add a test to make sure a log message does not get logged, but if reviewers feel differently, I can add one.

Closes#24808 from jmsanders/master.

Authored-by: Jordan Sanders <jmsanders@users.noreply.github.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This moves parsing logic for `ALTER TABLE` into Catalyst and adds parsed logical plans for alter table changes that use multi-part identifiers. This PR is similar to SPARK-27108, PR #24029, that created parsed logical plans for create and CTAS.

* Create parsed logical plans

* Move parsing logic into Catalyst's AstBuilder

* Convert to DataSource plans in DataSourceResolution

* Parse `ALTER TABLE ... SET LOCATION ...` separately from the partition variant

* Parse `ALTER TABLE ... ALTER COLUMN ... [TYPE dataType] [COMMENT comment]` [as discussed on the dev list](http://apache-spark-developers-list.1001551.n3.nabble.com/DISCUSS-Syntax-for-table-DDL-td25197.html#a25270)

* Parse `ALTER TABLE ... RENAME COLUMN ... TO ...`

* Parse `ALTER TABLE ... DROP COLUMNS ...`

## How was this patch tested?

* Added new tests in Catalyst's `DDLParserSuite`

* Moved converted plan tests from SQL `DDLParserSuite` to `PlanResolutionSuite`

* Existing tests for regressions

Closes#24723 from rdblue/SPARK-27857-add-alter-table-statements-in-catalyst.

Authored-by: Ryan Blue <blue@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

Extracting the common purge "behaviour" to the parent StreamExecution.

## How was this patch tested?

No added behaviour so relying on existing tests.

Closes#24781 from jaceklaskowski/StreamExecution-purge.

Authored-by: Jacek Laskowski <jacek@japila.pl>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Currently we are in a strange status that, some data source v2 interfaces(catalog related) are in sql/catalyst, some data source v2 interfaces(Table, ScanBuilder, DataReader, etc.) are in sql/core.

I don't see a reason to keep data source v2 API in 2 modules. If we should pick one module, I think sql/catalyst is the one to go.

Catalyst module already has some user-facing stuff like DataType, Row, etc. And we have to update `Analyzer` and `SessionCatalog` to support the new catalog plugin, which needs to be in the catalyst module.

This PR can solve the problem we have in https://github.com/apache/spark/pull/24246

## How was this patch tested?

existing tests

Closes#24416 from cloud-fan/move.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This is a part of #24774, to reduce the code changes made by that.

## How was this patch tested?

Exist UTs.

Closes#24803 from LantaoJin/SPARK-27899_refactor.

Authored-by: LantaoJin <jinlantao@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

This change refactors the portions of the ExecutorAllocationManager class that

track executor state into a new class, to achieve a few goals:

- make the code easier to understand

- better separate concerns (task backlog vs. executor state)

- less synchronization between event and allocation threads

- less coupling between the allocation code and executor state tracking

The executor tracking code was moved to a new class (ExecutorMonitor) that

encapsulates all the logic of tracking what happens to executors and when

they can be timed out. The logic to actually remove the executors remains

in the EAM, since it still requires information that is not tracked by the

new executor monitor code.

In the executor monitor itself, of interest, specifically, is a change in

how cached blocks are tracked; instead of polling the block manager, the

monitor now uses events to track which executors have cached blocks, and

is able to detect also unpersist events and adjust the time when the executor

should be removed accordingly. (That's the bug mentioned in the PR title.)

Because of the refactoring, a few tests in the old EAM test suite were removed,

since they're now covered by the newly added test suite. The EAM suite was

also changed a little bit to not instantiate a SparkContext every time. This

allowed some cleanup, and the tests also run faster.

Tested with new and updated unit tests, and with multiple TPC-DS workloads

running with dynamic allocation on; also some manual tests for the caching

behavior.

Closes#24704 from vanzin/SPARK-20286.

Authored-by: Marcelo Vanzin <vanzin@cloudera.com>

Signed-off-by: Imran Rashid <irashid@cloudera.com>

## What changes were proposed in this pull request?

Use objects in `ResourceName` to represent resource names.

## How was this patch tested?

Existing tests.

Closes#24799 from jiangxb1987/ResourceName.

Authored-by: Xingbo Jiang <xingbo.jiang@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR adds support to schedule tasks with extra resource requirements (eg. GPUs) on executors with available resources. It also introduce a new method `TaskContext.resources()` so tasks can access available resource addresses allocated to them.

## How was this patch tested?

* Added new end-to-end test cases in `SparkContextSuite`;

* Added new test case in `CoarseGrainedSchedulerBackendSuite`;

* Added new test case in `CoarseGrainedExecutorBackendSuite`;

* Added new test case in `TaskSchedulerImplSuite`;

* Added new test case in `TaskSetManagerSuite`;

* Updated existing tests.

Closes#24374 from jiangxb1987/gpu.

Authored-by: Xingbo Jiang <xingbo.jiang@databricks.com>

Signed-off-by: Xiangrui Meng <meng@databricks.com>

## What changes were proposed in this pull request?

This is a minor documentation change whereby the https://spark.apache.org/docs/latest/sql-data-sources-avro.html mentions "The date type and naming of record fields should match the input Avro data or Catalyst data,"

The term Catalyst data is confusing. It should instead say, Spark's internal data type such as String

Type or IntegerType.

## How was this patch tested?

(Please explain how this patch was tested. E.g. unit tests, integration tests, manual tests)

There are no code changes; only doc changes.

Please review https://spark.apache.org/contributing.html before opening a pull request.

Closes#24787 from dmatrix/br-orc-ds.doc.changes.

Authored-by: Jules Damji <dmatrix@comcast.net>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This updates CTE substitution to avoid needing to run all resolution rules on each substituted expression. Running resolution rules was previously used to avoid infinite recursion. In the updated rule, CTE plans are substituted as sub-queries from right to left. Using this scope-based order, it is not necessary to replace multiple CTEs at the same time using `resolveOperatorsDown`. Instead, `resolveOperatorsUp` is used to replace each CTE individually.

By resolving using `resolveOperatorsUp`, this no longer needs to run all analyzer rules on each substituted expression. Previously, this was done to apply `ResolveRelations`, which would throw an `AnalysisException` for all unresolved relations so that unresolved relations that may cause recursive substitutions were not left in the plan. Because this is no longer needed, `ResolveRelations` no longer needs to throw `AnalysisException` and resolution can be done in multiple rules.

## How was this patch tested?

Existing tests in `SQLQueryTestSuite`, `cte.sql`.

Closes#24763 from rdblue/SPARK-27909-fix-cte-substitution.

Authored-by: Ryan Blue <blue@apache.org>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Similar to https://github.com/apache/spark/pull/24070, we now propagate SparkExceptions that are encountered during the collect in the java process to the python process.

Fixes https://jira.apache.org/jira/browse/SPARK-27805

## How was this patch tested?

Added a new unit test

Closes#24677 from dvogelbacher/dv/betterErrorMsgWhenUsingArrow.

Authored-by: David Vogelbacher <dvogelbacher@palantir.com>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

## What changes were proposed in this pull request?

SPARK-27773 has introduced a new metric (counter) numCaughtExceptions to the Spark Dropwizard monitoring system. This PR adds an entry in the monitoring documentation to document this.

Closes#24790 from LucaCanali/addDocFollowingSPARK27773.

Authored-by: Luca Canali <luca.canali@cern.ch>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

The current `SQLTestUtils` created many `withXXX` utility functions to clean up tables/views/caches created for testing purpose. Java's `try-with-resources` statement does something similar, but it does not mask exception throwing in the try block with any exception caught in the 'close()' statement. Exception caught in the 'close()' statement would add as a suppressed exception instead.

This PR standardizes those 'withXXX' function to use`Utils.tryWithSafeFinally` function, which does something similar to Java's try-with-resources statement. The purpose of this proposal is to help developers to identify what actually breaks their tests.

## How was this patch tested?

Existing testcases.

Closes#24747 from William1104/feature/SPARK-27772-2.

Lead-authored-by: williamwong <william1104@gmail.com>

Co-authored-by: William Wong <william1104@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Added support for `*` and `^` operators, along with expressions within parentheses. New operators just expand to already supported terms, such as;

- y ~ a * b = y ~ a + b + a : b

- y ~ (a+b+c)^3 = y ~ a + b + c + a : b + a : c + a :b : c

## How was this patch tested?

Added new unit tests to RFormulaParserSuite

mengxr yanboliang

Closes#24764 from ozancicek/rformula.

Authored-by: ozan <ozancancicekci@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

I was quite surprised by the following behavior:

`SELECT str_to_map('1:2|3:4', '|')`

vs

`SELECT str_to_map(replace('1:2|3:4', '|', ','))`

The documentation does not make clear at all what's going on here, but a [dive into the source code shows](fa0d4bf699/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/expressions/complexTypeCreator.scala (L461-L466)) that `split` is being used and in turn the interpretation of `split`'s arguments as RegEx is clearly documented.

## What changes were proposed in this pull request?

Documentation clarification

## How was this patch tested?

N/A

Closes#23888 from MichaelChirico/patch-2.

Authored-by: Michael Chirico <michaelchirico4@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In the previous work of csv/json migration, CSVFileFormat/JsonFileFormat is removed in the table provider whitelist of `AlterTableAddColumnsCommand.verifyAlterTableAddColumn`:

https://github.com/apache/spark/pull/24005https://github.com/apache/spark/pull/24058

This is regression. If a table is created with Provider `org.apache.spark.sql.execution.datasources.csv.CSVFileFormat` or `org.apache.spark.sql.execution.datasources.json.JsonFileFormat`, Spark should allow the "alter table add column" operation.

## How was this patch tested?

Unit test

Closes#24776 from gengliangwang/v1Table.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>