## What changes were proposed in this pull request?

The main purpose of `schema_of_json` is to be used in combination with `from_json` (to make up for the lack of schema inference), which takes its schema only as a literal; however, `schema_of_json` currently allows JSON input as non-literal expressions (e.g., a column).

This was mistakenly allowed - we don't have to take usages other than the main purpose into account for now.

This PR is a follow-up that only allows literals for `schema_of_json`'s JSON input. We can allow non-literal expressions later when they are needed or there is a use case for them.

## How was this patch tested?

Unit tests were added.

Closes #22775 from HyukjinKwon/SPARK-25447-followup.

Lead-authored-by: hyukjinkwon <gurwls223@apache.org>

Co-authored-by: Hyukjin Kwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

See the detailed information at https://issues.apache.org/jira/browse/SPARK-25841 on why these APIs should be deprecated and redesigned.

This patch also reverts 8acb51f08b which applies to 2.4.

## How was this patch tested?

Only deprecation and doc changes.

Closes #22841 from rxin/SPARK-25842.

Authored-by: Reynold Xin <rxin@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

There is a race condition when releasing a Python worker. If `ReaderIterator.handleEndOfDataSection` is not running in the task thread when a task is terminated early (such as with `take(N)`), the task completion listener may close the worker while `handleEndOfDataSection` can still put the worker back into the worker pool for reuse.

0e07b483d2 is a patch to reproduce this issue.

I also found that a user reported this on the mailing list: http://mail-archives.apache.org/mod_mbox/spark-user/201610.mbox/%3CCAAUq=H+YLUEpd23nwvq13Ms5hOStkhX3ao4f4zQV6sgO5zM-xAmail.gmail.com%3E

This PR fixes the issue by using `compareAndSet` to make sure we never return a closed worker to the worker pool.
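The idea can be sketched as follows. This is a minimal, self-contained illustration and not the actual Spark code: `Worker`, `WorkerPool` and `WorkerGuard` are hypothetical names, and only `AtomicBoolean.compareAndSet` is a real API. Whichever path flips the flag first wins, so a worker is either pooled or closed, never both.

```scala
import java.util.concurrent.atomic.AtomicBoolean
import scala.collection.mutable

object WorkerReleaseSketch {
  // Hypothetical stand-in for a Python worker handle; not a Spark class.
  final class Worker(val id: Int) {
    @volatile var closed: Boolean = false
    def close(): Unit = { closed = true }
  }

  // Hypothetical pool of idle workers that can be reused by later tasks.
  final class WorkerPool {
    private val idle = mutable.Queue.empty[Worker]
    def release(w: Worker): Unit = synchronized { idle.enqueue(w); () }
    def size: Int = synchronized { idle.size }
  }

  // Guards one worker: only the first caller to flip the flag acts on it.
  final class WorkerGuard(worker: Worker, pool: WorkerPool) {
    private val releasedOrClosed = new AtomicBoolean(false)

    // End-of-data path: returns true if the worker was put back into the pool.
    def releaseForReuse(): Boolean =
      if (releasedOrClosed.compareAndSet(false, true)) { pool.release(worker); true }
      else false

    // Task-completion path: returns true if the worker was closed here.
    def closeOnTaskCompletion(): Boolean =
      if (releasedOrClosed.compareAndSet(false, true)) { worker.close(); true }
      else false
  }
}
```

If the task completion listener runs first, the later `releaseForReuse()` call becomes a no-op, so the closed worker never reaches the pool.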

## How was this patch tested?

Jenkins.

Closes #22816 from zsxwing/fix-socket-closed.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: Takuya UESHIN <ueshin@databricks.com>

## What changes were proposed in this pull request?

This was inspired while implementing #21732. For now, `ScalaReflection` needs to consider how `ExpressionEncoder` uses generated serializers and deserializers, and `ExpressionEncoder` has a weird `flat` flag. After discussion with cloud-fan, it seems better to refactor `ExpressionEncoder`. This should make SPARK-24762 easier to do.

To summarize the proposed changes:

1. `serializerFor` and `deserializerFor` return expressions for serializing/deserializing an input expression for a given type. They are private and should not be called directly.

2. `serializerForType` and `deserializerForType` return an expression for serializing/deserializing an object of type T to/from its Spark SQL representation. They assume the input object/Spark SQL representation is located at ordinal 0 of a row.

In other words, `serializerForType` and `deserializerForType` return expressions for atomically serializing/deserializing a JVM object to/from a Spark SQL value.

A serializer returned by `serializerForType` will serialize an object at `row(0)` to a corresponding Spark SQL representation, e.g. primitive type, array, map, struct.

A deserializer returned by `deserializerForType` will deserialize an input field at `row(0)` to an object with given type.

3. The construction of `ExpressionEncoder` takes a pair of serializer and deserializer for type `T`. It uses them to create the serializer and deserializer for T <-> row serialization. Now `ExpressionEncoder` doesn't need to remember whether the serializer is flat or not. When we need to construct a new `ExpressionEncoder` based on existing ones, we only need to change the input location in the atomic serializer and deserializer.

## How was this patch tested?

Existing tests.

Closes #22749 from viirya/SPARK-24762-refactor.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In this PR, I propose to switch `from_json` to `FailureSafeParser`, to make the function compatible with `PERMISSIVE` mode by default, and to support the `FAILFAST` mode as well. The `DROPMALFORMED` mode is not supported by `from_json`.

## How was this patch tested?

It was tested by the existing `JsonSuite`/`CSVSuite`, `JsonFunctionsSuite` and `JsonExpressionsSuite`, as well as new tests for `from_json` that check the different modes.

Closes #22237 from MaxGekk/from_json-failuresafe.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

This is a follow-up PR for #22708. It considers another case of java beans deserialization: java maps with struct keys/values.

When deserializing values of MapType with struct keys/values in java beans, fields of structs get mixed up. I suggest using struct data types retrieved from resolved input data instead of inferring them from java beans.

## What changes were proposed in this pull request?

Invocations of the "keyArray" and "valueArray" functions are used to extract arrays of keys and values. The struct type of keys or values is also inferred from the java bean structure and ends up with a mixed-up field order.

I created a new UnresolvedInvoke expression as a temporary substitute for the Invoke expression while no actual data is available. It allows providing the resulting data type during analysis based on the resolved input data, not on the java bean (similar to UnresolvedMapObjects).

Key and value arrays are then fed to MapObjects expression which I replaced with UnresolvedMapObjects, just like in case of ArrayType.

Finally I added resolution of UnresolvedInvoke expressions in Analyzer.resolveExpression method as an additional pattern matching case.

## How was this patch tested?

Added a test case.

Built complete project on travis.

viirya kiszk cloud-fan michalsenkyr marmbrus liancheng

Closes #22745 from vofque/SPARK-21402-FOLLOWUP.

Lead-authored-by: Vladimir Kuriatkov <vofque@gmail.com>

Co-authored-by: Vladimir Kuriatkov <Vladimir_Kuriatkov@epam.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

The original test would sometimes fail if the listener bus did not keep up, so just wait till the listener bus is empty. Tested by adding a sleep in the listener, which made the test consistently fail without the fix, but pass consistently after the fix.

Closes #22799 from squito/SPARK-25805.

Authored-by: Imran Rashid <irashid@cloudera.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This takes over the original PR at #22019. The original proposal was to return null for float and double types. Later, a more reasonable proposal was to disallow empty strings. This patch adds logic to throw an exception when an empty string is found for a non-string type.

## How was this patch tested?

Added test.

Closes #22787 from viirya/SPARK-25040.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This reduces the test time for ContinuousStressSuite from 8 mins 13 sec to 43 seconds.

The approach is to reduce the number of triggers and epochs to wait for, and to reduce the expected rows accordingly.

## How was this patch tested?

Existing tests.

Closes #22662 from viirya/SPARK-25627.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

Currently, there are some tests testing function descriptions:

```bash
$ grep -ir "describe function" sql/core/src/test/resources/sql-tests/inputs
sql/core/src/test/resources/sql-tests/inputs/json-functions.sql:describe function to_json;
sql/core/src/test/resources/sql-tests/inputs/json-functions.sql:describe function extended to_json;
sql/core/src/test/resources/sql-tests/inputs/json-functions.sql:describe function from_json;
sql/core/src/test/resources/sql-tests/inputs/json-functions.sql:describe function extended from_json;
```

There is little point in testing them, since we're not going to test the documentation itself.

The `DESCRIBE FUNCTION` functionality itself is already being tested here and there.

See the test failures in https://github.com/apache/spark/pull/18749 (where I added examples to function descriptions).

We had better remove those tests so that people don't add such tests to the SQL tests.

## How was this patch tested?

Manual.

Closes #22776 from HyukjinKwon/SPARK-25779.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

`needsUnsafeRowConversion` is used in 2 places:

1. `ColumnarBatchScan.produceRows`

2. `FileSourceScanExec.doExecute`

When we hit `ColumnarBatchScan.produceRows`, it means whole stage codegen is on but the vectorized reader is off. The vectorized reader can be off for several reasons:

1. the file format doesn't have a vectorized reader (JSON, CSV, etc.)

2. the vectorized reader config is off

3. the schema is not supported

In any case, when the vectorized reader is off, the file format reader will always return unsafe rows, and other `ColumnarBatchScan` implementations also always return unsafe rows, so `ColumnarBatchScan.needsUnsafeRowConversion` is not needed.

When we hit `FileSourceScanExec.doExecute`, it means whole stage codegen is off. In this case, we need `needsUnsafeRowConversion` to convert `ColumnarRow` to `UnsafeRow` if the file format reader returns batches.

This PR removes `ColumnarBatchScan.needsUnsafeRowConversion` and keeps this flag only in `FileSourceScanExec`.

## How was this patch tested?

existing tests

Closes #22750 from cloud-fan/minor.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Refactor `WideSchemaBenchmark` to use main method.

1. use `spark-submit`:

```console

bin/spark-submit --class org.apache.spark.sql.execution.benchmark.WideSchemaBenchmark --jars ./core/target/spark-core_2.11-3.0.0-SNAPSHOT-tests.jar ./sql/core/target/spark-sql_2.11-3.0.0-SNAPSHOT-tests.jar

```

2. Generate benchmark result:

```console

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.WideSchemaBenchmark"

```

## How was this patch tested?

manual tests

Closes #22501 from wangyum/SPARK-25492.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This PR adds `prettyNames` for `from_json`, `to_json`, `from_csv`, and `schema_of_json` so that appropriate names are used.

## How was this patch tested?

Unit tests

Closes #22773 from HyukjinKwon/minor-prettyNames.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

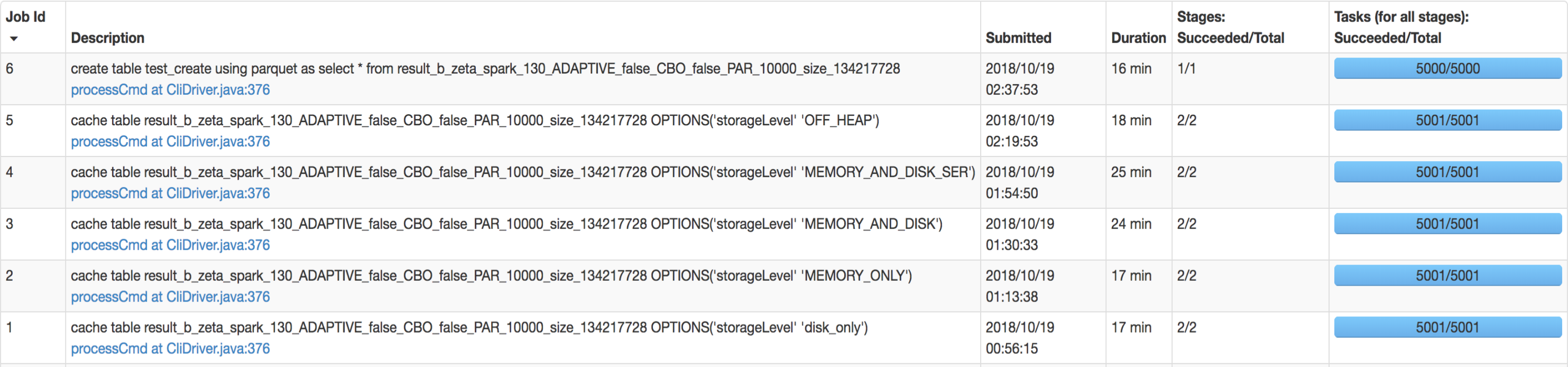

## What changes were proposed in this pull request?

The SQL interface now supports specifying a `StorageLevel` when caching a table. The syntax is:

```sql

CACHE TABLE tableName OPTIONS('storageLevel' 'DISK_ONLY');

```

All supported `StorageLevel` values are listed at:

eefdf9f9dd/core/src/main/scala/org/apache/spark/storage/StorageLevel.scala (L172-L183)

## How was this patch tested?

Unit tests and manual tests.

Manual test configuration:

```

--executor-memory 15G --executor-cores 5 --num-executors 50

```

Data:

Input Size / Records: 1037.7 GB / 11732805788

Result:

Closes #22263 from wangyum/SPARK-25269.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This is a follow-up PR for #22259. The extra field added to `ScalaUDF` by the original PR was declared optional, but it should indeed be required; otherwise callers of `ScalaUDF`'s constructor could ignore this new field and cause the result to be incorrect. This PR makes the new field required and changes its name to `handleNullForInputs`.

#22259 breaks the previous behavior for null-handling of primitive-type input parameters. For example, for `val f = udf({(x: Int, y: Any) => x})`, `f(null, "str")` should return `null` but would return `0` after #22259. In this PR, all UDF methods except `def udf(f: AnyRef, dataType: DataType): UserDefinedFunction` have been restored with the original behavior. The only exception is documented in the Spark SQL migration guide.

In addition, now that we have this extra field indicating if a null-test should be applied on the corresponding input value, we can also make use of this flag to avoid the rule `HandleNullInputsForUDF` being applied infinitely.
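The primitive-input problem can be illustrated outside Spark. The sketch below is only an assumption-laden illustration: `callUnchecked` and `callNullSafe` are hypothetical helpers, not part of `ScalaUDF`. It relies on the fact that casting a `null` to a primitive `Int` in Scala yields `0`, which is why an explicit null test is needed to get `null` back.

```scala
object PrimitiveNullSketch {
  // A function with a primitive first parameter, like the UDF body in the example above.
  val f: (Int, Any) => Int = (x, _) => x

  // Hypothetical: invoke f without a null test. Unboxing null into Int yields 0.
  def callUnchecked(x: Any, y: Any): Any =
    f(x.asInstanceOf[Int], y)

  // Hypothetical: apply the null test first, so a null primitive input
  // short-circuits to a null result instead of being unboxed to 0.
  def callNullSafe(x: Any, y: Any): Any =
    if (x == null) null else f(x.asInstanceOf[Int], y)
}
```

Here `callUnchecked(null, "str")` evaluates to `0`, while `callNullSafe(null, "str")` evaluates to `null`, which mirrors the behavior difference described above.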

## How was this patch tested?

Added UT in UDFSuite

Passed affected existing UTs:

AnalysisSuite

UDFSuite

Closes #22732 from maryannxue/spark-25044-followup.

Lead-authored-by: maryannxue <maryannxue@apache.org>

Co-authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

This allows an implementer of Spark Session Extensions to use a method `injectFunction`, which adds a new function to the default Spark Session catalog.

## What changes were proposed in this pull request?

Adds a new function to `SparkSessionExtensions`:

`def injectFunction(functionDescription: FunctionDescription)`

where `FunctionDescription` is a new type:

`type FunctionDescription = (FunctionIdentifier, FunctionBuilder)`

The functions are loaded in BaseSessionBuilder when the function registry does not have a parent function registry to get loaded from.

## How was this patch tested?

New unit tests are added for the extension in `SparkSessionExtensionSuite`.

Closes #22576 from RussellSpitzer/SPARK-25560.

Authored-by: Russell Spitzer <Russell.Spitzer@gmail.com>

Signed-off-by: Herman van Hovell <hvanhovell@databricks.com>

## What changes were proposed in this pull request?

CSVs with Windows-style CRLF ('\r\n') line endings don't work in multiline mode. They work fine in single-line mode because the line separation is done by Hadoop, which can handle all the different types of line separators. This PR fixes it by enabling Univocity's line separator detection in multiline mode, which will detect '\r\n', '\r', or '\n' automatically, as is done by Hadoop in single-line mode.

## How was this patch tested?

Unit test with a file with crlf line endings.

Closes #22503 from justinuang/fix-clrf-multiline.

Authored-by: Justin Uang <juang@palantir.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

`AddJarCommand` is the only command that returns `0`, which leaves the user confused about what it means. This PR makes it return an empty result.

```sql
spark-sql> add jar /Users/yumwang/spark/sql/hive/src/test/resources/TestUDTF.jar;
ADD JAR /Users/yumwang/spark/sql/hive/src/test/resources/TestUDTF.jar
0
spark-sql>
```

## How was this patch tested?

manual tests

```sql
spark-sql> add jar /Users/yumwang/spark/sql/hive/src/test/resources/TestUDTF.jar;
ADD JAR /Users/yumwang/spark/sql/hive/src/test/resources/TestUDTF.jar
spark-sql>
```

Closes #22747 from wangyum/AddJarCommand.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

Master

## What changes were proposed in this pull request?

Previously PySpark used the private constructor for SparkSession when building that object. This resulted in a SparkSession that did not check the sql.extensions parameter for additional session extensions. To fix this, we instead use the Session.builder() path, as SparkR does; this loads the extensions and allows their use in PySpark.

## How was this patch tested?

An integration test was added which mimics the Scala test for the same feature.


Closes #21990 from RussellSpitzer/SPARK-25003-master.

Authored-by: Russell Spitzer <Russell.Spitzer@gmail.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

When deserializing values of ArrayType with struct elements in java beans, fields of structs get mixed up.

I suggest using struct data types retrieved from resolved input data instead of inferring them from java beans.

## What changes were proposed in this pull request?

The MapObjects expression is used to map array elements to java beans. The struct type of the elements is inferred from the java bean structure and ends up with a mixed-up field order.

I used UnresolvedMapObjects instead of MapObjects, which allows providing the element type for MapObjects during analysis based on the resolved input data, not on the java bean.

## How was this patch tested?

Added a test case.

Built complete project on travis.

michalsenkyr cloud-fan marmbrus liancheng

Closes #22708 from vofque/SPARK-21402.

Lead-authored-by: Vladimir Kuriatkov <vofque@gmail.com>

Co-authored-by: Vladimir Kuriatkov <Vladimir_Kuriatkov@epam.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The SQL execution listener framework was created from scratch (see https://github.com/apache/spark/pull/9078). It didn't leverage what we already have in the Spark listener framework, and one major problem is that the listener runs on the Spark execution thread, which means a bad listener can block Spark's query processing.

This PR re-implements the SQL execution listener framework. Now `ExecutionListenerManager` is just a normal Spark listener, which watches the `SparkListenerSQLExecutionEnd` events and posts events to the user-provided SQL execution listeners.

## How was this patch tested?

Existing tests.

Closes #22674 from cloud-fan/listener.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

`Literal.value` should have a value corresponding to `dataType`. This PR adds code to verify it and fixes the existing tests accordingly.

## How was this patch tested?

Modified the existing tests.

Closes #22724 from maropu/SPARK-25734.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The PR adds a new function `from_csv()`, similar to `from_json()`, to parse columns with CSV strings. I added the following method:

```Scala

def from_csv(e: Column, schema: StructType, options: Map[String, String]): Column

```

and this signature to call it from Python, R and Java:

```Scala

def from_csv(e: Column, schema: String, options: java.util.Map[String, String]): Column

```

## How was this patch tested?

Added new test suites `CsvExpressionsSuite` and `CsvFunctionsSuite`, and SQL tests.

Closes #22379 from MaxGekk/from_csv.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Co-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Hyukjin Kwon <gurwls223@gmail.com>

Co-authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

AFAIK multi-column count is not widely supported by the mainstream databases (PostgreSQL doesn't support it), and the SQL standard doesn't define it clearly.

Since Spark supports it, we should clearly document the current behavior and add tests to verify it.
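As I understand the behavior documented for `count(expr[, expr...])`, a row is counted only when all of the supplied expressions are non-null. The sketch below models that rule with plain Scala collections; it is not Spark code, and `countAllNonNull` is a hypothetical helper with nullable columns represented as `Option`.

```scala
object MultiColumnCountSketch {
  // Each row holds two nullable columns (a, b), modelled as Options.
  type Row = (Option[Int], Option[Int])

  // Models count(a, b): count the rows where every argument is non-null.
  def countAllNonNull(rows: Seq[Row]): Long =
    rows.count { case (a, b) => a.isDefined && b.isDefined }.toLong

  val sample: Seq[Row] = Seq(
    (Some(1), Some(2)), // counted
    (Some(1), None),    // skipped: b is null
    (None, Some(2)),    // skipped: a is null
    (None, None)        // skipped: both are null
  )
}
```

Under this model, `countAllNonNull(sample)` is `1`, whereas a plain row count of `sample` would be `4`.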

## How was this patch tested?

N/A

Closes #22728 from cloud-fan/doc.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Testing only these 4 cases is enough:

be2238fb50/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/parquet/ParquetWriteSupport.scala (L269-L279)

## How was this patch tested?

Manual tests on my local machine.

before:

```

- filter pushdown - decimal (13 seconds, 683 milliseconds)

```

after:

```

- filter pushdown - decimal (9 seconds, 713 milliseconds)

```

Closes #22636 from wangyum/SPARK-25629.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

`LOAD DATA INPATH` didn't work if the defaultFS included a port for HDFS. Handling this just requires a small change to use the correct URI constructor.

## How was this patch tested?

Added a unit test and ran all tests via Jenkins.

Closes #22733 from squito/SPARK-25738.

Authored-by: Imran Rashid <irashid@cloudera.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

This PR is a follow-up of https://github.com/apache/spark/pull/22594 . This alternative can avoid the unneeded computation in the hot code path.

- For row-based scan, we keep the original way.

- For the columnar scan, we just need to update the stats after each batch.

## How was this patch tested?

N/A

Closes #22731 from gatorsmile/udpateStatsFileScanRDD.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The PR addresses [the comment](https://github.com/apache/spark/pull/22715#discussion_r225024084) in the previous one. `outputOrdering` becomes a field of `InMemoryRelation`.

## How was this patch tested?

existing UTs

Closes #22726 from mgaido91/SPARK-25727_followup.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Add `outputOrdering` to `otherCopyArgs` in `InMemoryRelation` so that this field will be copied when we do a tree transformation.

```
val data = Seq(100).toDF("count").cache()
data.queryExecution.optimizedPlan.toJSON
```

The above code can generate the following error:

```
assertion failed: InMemoryRelation fields: output, cacheBuilder, statsOfPlanToCache, outputOrdering, values: List(count#178), CachedRDDBuilder(true,10000,StorageLevel(disk, memory, deserialized, 1 replicas),*(1) Project [value#176 AS count#178]
+- LocalTableScan [value#176]
,None), Statistics(sizeInBytes=12.0 B, hints=none)
java.lang.AssertionError: assertion failed: InMemoryRelation fields: output, cacheBuilder, statsOfPlanToCache, outputOrdering, values: List(count#178), CachedRDDBuilder(true,10000,StorageLevel(disk, memory, deserialized, 1 replicas),*(1) Project [value#176 AS count#178]
+- LocalTableScan [value#176]
,None), Statistics(sizeInBytes=12.0 B, hints=none)
at scala.Predef$.assert(Predef.scala:170)
at org.apache.spark.sql.catalyst.trees.TreeNode.jsonFields(TreeNode.scala:611)
at org.apache.spark.sql.catalyst.trees.TreeNode.org$apache$spark$sql$catalyst$trees$TreeNode$$collectJsonValue$1(TreeNode.scala:599)
at org.apache.spark.sql.catalyst.trees.TreeNode.jsonValue(TreeNode.scala:604)
at org.apache.spark.sql.catalyst.trees.TreeNode.toJSON(TreeNode.scala:590)
```

## How was this patch tested?

Added a test.

Closes #22715 from gatorsmile/copyArgs1.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

[SPARK-22479](https://github.com/apache/spark/pull/19708/files#diff-5c22ac5160d3c9d81225c5dd86265d27R31) adds a test case which sometimes fails because the used password string `123` matches `41230802`. This PR aims to fix the flakiness.

- https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/97343/consoleFull

```scala
SaveIntoDataSourceCommandSuite:
- simpleString is redacted *** FAILED ***
"SaveIntoDataSourceCommand .org.apache.spark.sql.execution.datasources.jdbc.JdbcRelationProvider41230802, Map(password -> *********(redacted), url -> *********(redacted), driver -> mydriver), ErrorIfExists
+- Range (0, 1, step=1, splits=Some(2))
" contained "123" (SaveIntoDataSourceCommandSuite.scala:42)
```

## How was this patch tested?

Pass Jenkins with the updated test case.

Closes #22716 from dongjoon-hyun/SPARK-25726.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Currently `Range` reports metrics at batch granularity. This is acceptable, but it's better if we can make it row granularity without a performance penalty.

Before this PR, the metrics were updated when preparing the batch, which is before we actually consume data. In this PR, the metrics are updated after the data are consumed. There are 2 different cases:

1. The data processing loop has a stop check. The metrics are updated when we need to stop.

2. The data processing loop has no stop check. The metrics are updated after the loop.

## How was this patch tested?

Existing tests and a new benchmark.

Closes #22698 from cloud-fan/range.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

```Scala
// Run in spark-shell, where `spark` and its implicits are already in scope.
import org.apache.spark.sql.SaveMode

val df1 = Seq(("abc", 1), (null, 3)).toDF("col1", "col2")
df1.write.mode(SaveMode.Overwrite).parquet("/tmp/test1")
val df2 = spark.read.parquet("/tmp/test1")
df2.filter("col1 = 'abc' OR (col1 != 'abc' AND col2 == 3)").show()
```

Before the PR, it returns both rows. After the fix, it returns only `Row("abc", 1)`. This fixes a bug in the NULL handling of BooleanSimplification, which was introduced in the Spark 1.6 release.
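Why the `(null, 3)` row must be dropped can be worked out with SQL's three-valued logic. The sketch below models it in plain Scala with `Option[Boolean]` (`None` stands for NULL); it is not the optimizer code, and the rewrite `a OR b` is assumed here only as an example of a simplification that is unsafe when `a` can be NULL.

```scala
object ThreeValuedLogicSketch {
  // SQL boolean: Some(true), Some(false), or None for NULL.
  type SqlBool = Option[Boolean]

  def and(a: SqlBool, b: SqlBool): SqlBool = (a, b) match {
    case (Some(false), _) | (_, Some(false)) => Some(false)
    case (Some(true), Some(true))            => Some(true)
    case _                                   => None
  }

  def or(a: SqlBool, b: SqlBool): SqlBool = (a, b) match {
    case (Some(true), _) | (_, Some(true)) => Some(true)
    case (Some(false), Some(false))        => Some(false)
    case _                                 => None
  }

  def not(a: SqlBool): SqlBool = a.map(!_)

  // a OR (NOT a AND b), evaluated as written.
  def original(a: SqlBool, b: SqlBool): SqlBool = or(a, and(not(a), b))

  // The rewrite a OR b, equivalent only when a is never NULL.
  def simplified(a: SqlBool, b: SqlBool): SqlBool = or(a, b)

  // A filter keeps a row only when the predicate is exactly TRUE.
  def keeps(p: SqlBool): Boolean = p.contains(true)
}
```

For the row `(null, 3)`, `col1 = 'abc'` is NULL and `col2 == 3` is TRUE, so `original(None, Some(true))` is NULL and the row is filtered out, while `simplified(None, Some(true))` is TRUE and the row would wrongly be kept.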

## How was this patch tested?

Added test cases.

Closes #22702 from gatorsmile/fixBooleanSimplify2.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Refactor `JoinBenchmark` to use main method.

1. use `spark-submit`:

```console

bin/spark-submit --class org.apache.spark.sql.execution.benchmark.JoinBenchmark --jars ./core/target/spark-core_2.11-3.0.0-SNAPSHOT-tests.jar ./sql/core/target/spark-sql_2.11-3.0.0-SNAPSHOT-tests.jar

```

2. Generate benchmark result:

```console

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.JoinBenchmark"

```

## How was this patch tested?

manual tests

Closes #22661 from wangyum/SPARK-25664.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Yuming Wang <wgyumg@gmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

The PR addresses the exception raised when accessing characters beyond the end of the delimiter string. In particular, using the backslash `\` as the CSV field delimiter causes the following exception when reading `abc\1`:

```Scala
String index out of range: 1
java.lang.StringIndexOutOfBoundsException: String index out of range: 1
at java.lang.String.charAt(String.java:658)
```

because `str.charAt(1)` tries to access a char beyond the end of `str` in `CSVUtils.toChar`.
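The failure mode and one way to guard against it can be sketched as follows. This is a hypothetical delimiter parser written for illustration, not the actual `CSVUtils.toChar`; the set of supported escapes and the error messages are assumptions. The point is that a lone backslash has no second character, so it must be handled before anything reads index 1.

```scala
object DelimiterSketch {
  // Hypothetical parser that turns a delimiter string into a single char.
  def toChar(str: String): Char = str match {
    case "" =>
      throw new IllegalArgumentException("Delimiter cannot be empty")
    case "\\" =>
      '\\' // a lone backslash: nothing follows it, so never read index 1
    case s if s.length == 1 =>
      s.charAt(0)
    case s if s.length == 2 && s.charAt(0) == '\\' =>
      // Safe: the length check above guarantees index 1 exists.
      s.charAt(1) match {
        case 't'  => '\t'
        case '\\' => '\\'
        case c    => throw new IllegalArgumentException("Unsupported special character: \\" + c)
      }
    case s =>
      throw new IllegalArgumentException("Delimiter cannot be more than one character: " + s)
  }
}
```

With this guard, `DelimiterSketch.toChar("\\")` returns the backslash itself instead of throwing `StringIndexOutOfBoundsException`.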

## How was this patch tested?

Added tests for the empty string and for a string containing the backslash to `CSVUtilsSuite`. In addition, I added an end-to-end test to check how the backslash is handled when reading a CSV string that contains it.

Closes #22654 from MaxGekk/csv-slash-delim.

Authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

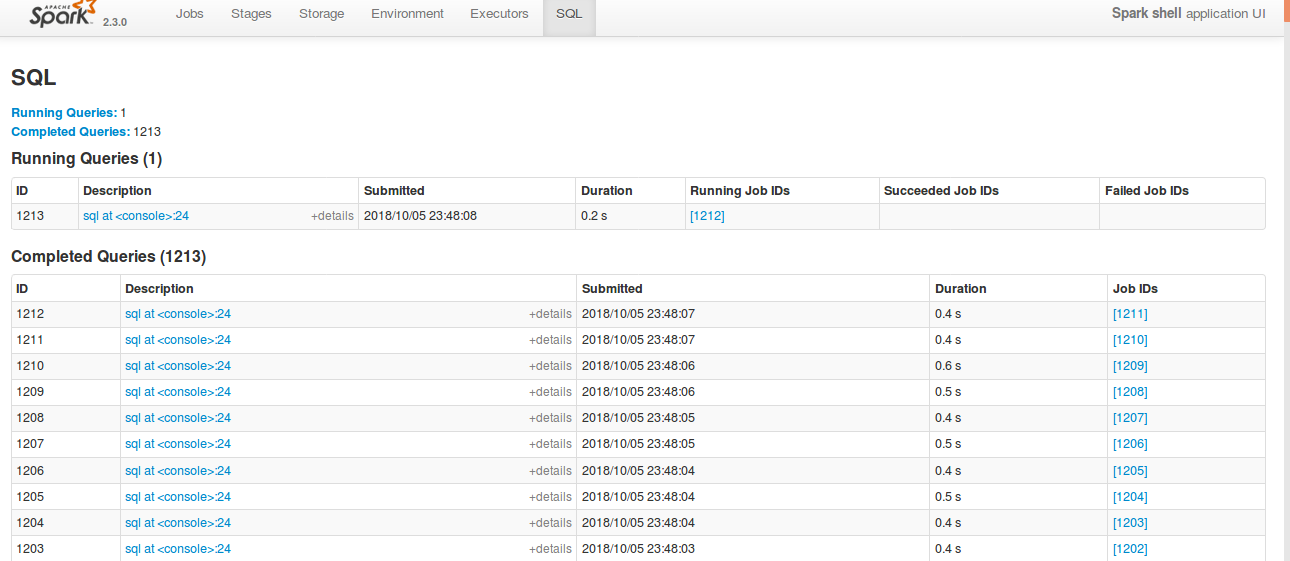

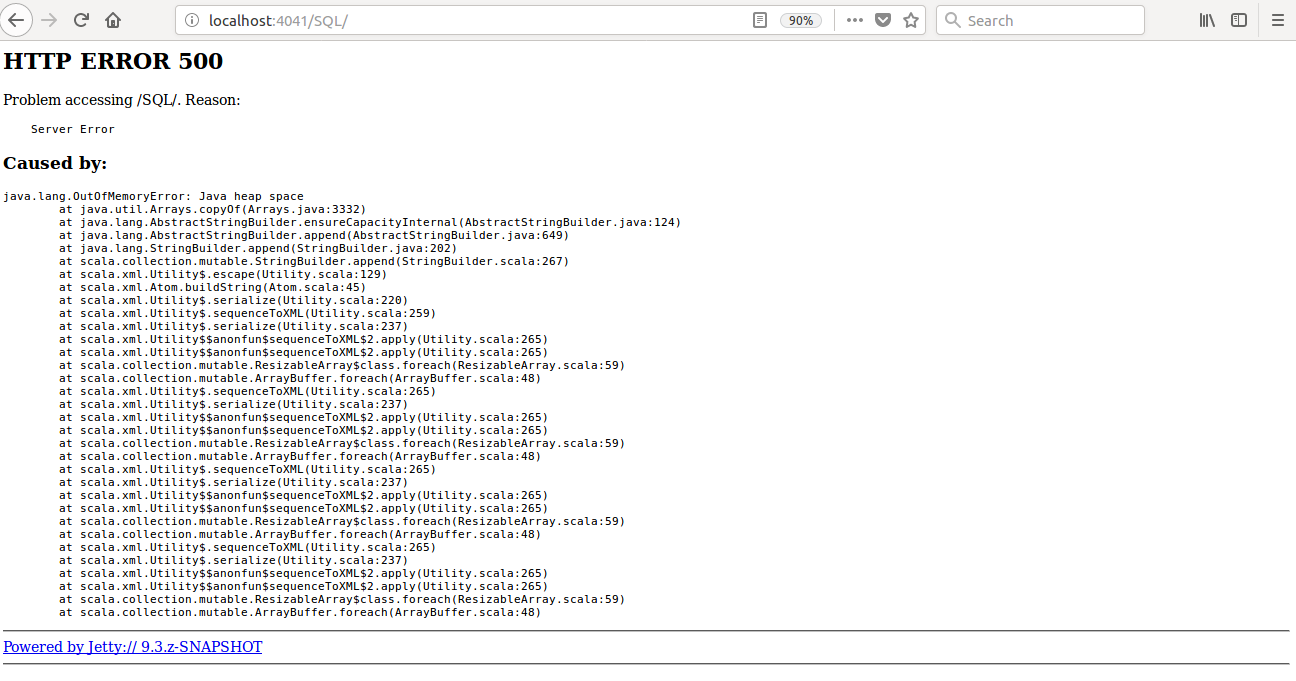

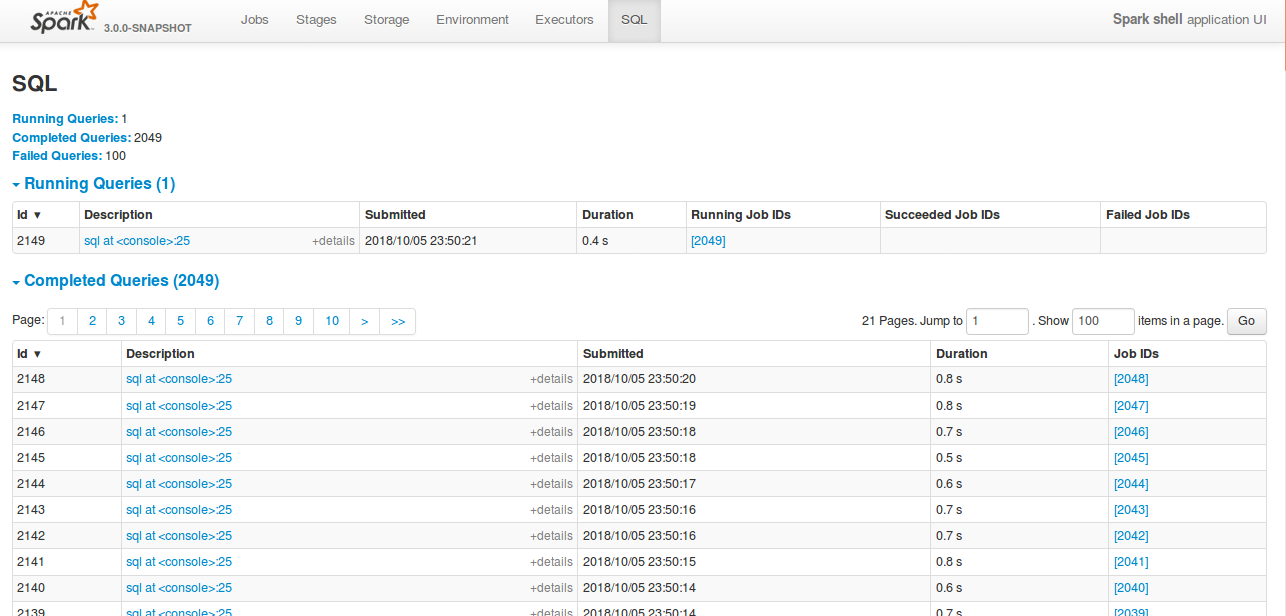

## What changes were proposed in this pull request?

Currently the SQL tab in the web UI doesn't support pagination. This causes the following issues:

1) For a large number of executions (around 40,000), the SQL page throws an OOM exception.

2) For a large number of executions, loading the SQL page takes a long time.

3) It is difficult to analyse the execution table when there is a large number of executions.

[Note: spark.sql.ui.retainedExecutions = 50000]

All the other tabs (Jobs, Stages, etc.) support pagination, so the SQL tab should also support pagination to be consistent with them.

I have followed a flow similar to the pagination code in the Jobs and Stages pages for the SQL page.

Also, this patch doesn't make any behavior change to the SQL tab except for the pagination support.

## How was this patch tested?

bin/spark-shell --conf spark.sql.ui.retainedExecutions=50000

Run 50,000 sql queries.

**Before this PR**

**After this PR**

The page loads faster, and the OOM issue no longer happens.

Closes #22645 from shahidki31/SPARK-25566.

Authored-by: Shahid <shahidki31@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

According to the SQL standard, when a query contains `HAVING`, it indicates an aggregate operator. For more details please refer to https://blog.jooq.org/2014/12/04/do-you-really-understand-sqls-group-by-and-having-clauses/

However, in the Spark SQL parser, we treat HAVING as a normal filter when there is no GROUP BY, which breaks SQL semantics and leads to wrong results. This PR fixes the parser.
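The difference between the two interpretations can be modelled with plain Scala collections. The sketch below is only an illustration, not parser code: it imagines a query of the shape `SELECT 1 FROM t HAVING <pred>` over a ten-row table, with `havingAsFilter` and `havingAsAggregate` as hypothetical names for the two readings.

```scala
object HavingSketch {
  // A hypothetical ten-row input table.
  val rows: Seq[Int] = 0 until 10

  // HAVING treated as a plain filter: the predicate is applied to every
  // input row, so a true predicate yields one output row per input row.
  def havingAsFilter(pred: Boolean): Seq[Int] =
    rows.filter(_ => pred).map(_ => 1)

  // HAVING as a global aggregate: all rows form a single group, and the
  // predicate keeps or drops that one group, so there is at most one row.
  def havingAsAggregate(pred: Boolean): Seq[Int] =
    Seq(1).filter(_ => pred)
}
```

With a predicate that is always true, the filter reading returns ten rows while the aggregate reading returns a single row.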

## How was this patch tested?

New test.

Closes #22696 from cloud-fan/having.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

1. Move `CSVDataSource.makeSafeHeader` to `CSVUtils.makeSafeHeader` (as is).

- Historically, when I first did the refactoring, I intended to put all CSV-specific handling (options, filtering, header extraction, etc.) there.

- See `JsonDataSource`. Now `CSVDataSource` is quite consistent with `JsonDataSource`. Since CSV's code path is quite complicated, we had better keep the two as consistent as we can.

2. Create `CSVHeaderChecker` and put the `enforceSchema` logic into it.

- The header checking and column pruning were added (per https://github.com/apache/spark/pull/20894 and https://github.com/apache/spark/pull/21296), but some of the code, such as https://github.com/apache/spark/pull/22123, is duplicated.

- Also, the header-checking code is scattered here and there, which was quite error-prone. We had better put it in a single place. See (https://github.com/apache/spark/pull/22656).

3. Move `CSVDataSource.checkHeaderColumnNames` to `CSVHeaderChecker.checkHeaderColumnNames` (as is).

- For reasons similar to item 1.

## How was this patch tested?

Existing tests should cover this.

Closes #22676 from HyukjinKwon/refactoring-csv.

Authored-by: hyukjinkwon <gurwls223@apache.org>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

If the record count is incremented by more than 1 at a time, the number of bytes might rarely ever get updated, because the count can skip over the values that are exact multiples of UPDATE_INPUT_METRICS_INTERVAL_RECORDS.

This PR instead checks whether the increment causes the value to cross into a higher multiple of UPDATE_INPUT_METRICS_INTERVAL_RECORDS.
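The two checks can be compared with a small sketch. This is an illustration under stated assumptions, not the patched Spark code: the interval value of 1000 and the helper names `shouldUpdateExact` and `shouldUpdateCrossing` are hypothetical.

```scala
object InputMetricsIntervalSketch {
  // Hypothetical interval; the name mirrors the constant mentioned above.
  val UpdateInputMetricsIntervalRecords: Long = 1000L

  // Exact-multiple check: misses the update whenever the count jumps
  // over a multiple of the interval without landing on it.
  def shouldUpdateExact(after: Long): Boolean =
    after % UpdateInputMetricsIntervalRecords == 0

  // Boundary-crossing check: true whenever the increment moves the count
  // into a higher multiple of the interval than it was in before.
  def shouldUpdateCrossing(before: Long, after: Long): Boolean =
    after / UpdateInputMetricsIntervalRecords > before / UpdateInputMetricsIntervalRecords
}
```

For example, going from 998 to 1001 records in one step never lands on 1000, so the exact-multiple check stays false, while the crossing check fires because 1001 lies in a higher multiple than 998.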

## How was this patch tested?

Existing unit tests.

Closes #22594 from 10110346/inputMetrics.

Authored-by: liuxian <liu.xian3@zte.com.cn>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Remove redundant semicolons in `SortMergeJoinExec`.

## How was this patch tested?

N/A

Closes #22695 from heary-cao/RedundantSemicolons.

Authored-by: caoxuewen <cao.xuewen@zte.com.cn>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

1. Refactor DataSourceReadBenchmark

## How was this patch tested?

Manually tested and regenerated results.

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.DataSourceReadBenchmark"

```

Closes #22664 from peter-toth/SPARK-25662.

Lead-authored-by: Peter Toth <peter.toth@gmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: DB Tsai <d_tsai@apple.com>

## What changes were proposed in this pull request?

Inspired by https://github.com/apache/spark/pull/22574.

We can partially push down top-level conjunctive predicates to ORC.

This PR improves ORC predicate pushdown in both the SQL and Hive modules.
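As a rough illustration of what partial pushdown of top-level conjuncts means, here is a minimal standalone sketch. The filter classes, the `supported` flag, and the `convert` function are all hypothetical and do not correspond to the ORC or Spark APIs. The sketch also assumes the conservative rule that partial pushdown is disabled underneath `Or` and `Not`.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Leaf:
    name: str
    supported: bool  # whether the (hypothetical) data source can evaluate it


@dataclass(frozen=True)
class And:
    left: object
    right: object


@dataclass(frozen=True)
class Or:
    left: object
    right: object


@dataclass(frozen=True)
class Not:
    child: object


def convert(f, can_partial_push_down=True) -> Optional[str]:
    """Return the pushed-down filter as a string, or None if nothing can be pushed."""
    if isinstance(f, Leaf):
        return f.name if f.supported else None
    if isinstance(f, And):
        left = convert(f.left, can_partial_push_down)
        right = convert(f.right, can_partial_push_down)
        if left is not None and right is not None:
            return f"({left} AND {right})"
        if can_partial_push_down:
            # Dropping one conjunct only weakens the filter, so no matching
            # row is lost as long as the full filter is re-applied afterwards.
            return left if left is not None else right
        return None
    if isinstance(f, Or):
        # Assumption of this sketch: no partial pushdown below an Or.
        left = convert(f.left, False)
        right = convert(f.right, False)
        if left is None or right is None:
            return None
        return f"({left} OR {right})"
    if isinstance(f, Not):
        # Dropping a conjunct under a Not would strengthen the filter,
        # which could wrongly discard rows.
        child = convert(f.child, False)
        return None if child is None else f"(NOT {child})"
    raise TypeError(f"unknown filter: {f!r}")
```

For example, with `a` supported and `b` unsupported, `And(a, b)` at the top level is pushed down as just `a`, whereas `Not(And(a, b))` is not pushed down at all.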

## How was this patch tested?

New unit test.

Closes #22684 from gengliangwang/pushOrcFilters.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: DB Tsai <d_tsai@apple.com>

## What changes were proposed in this pull request?

This is a follow-up to https://github.com/apache/spark/pull/22574. It renames the parameter and adds comments.

## How was this patch tested?

N/A

Closes #22679 from gatorsmile/followupSPARK-25559.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: DB Tsai <d_tsai@apple.com>

## What changes were proposed in this pull request?

This PR is inspired by https://github.com/apache/spark/pull/22524, but proposes a safer fix.

The current whole-stage codegen for limit has 2 problems:

1. It's only applied to `InputAdapter`, so many leaf nodes can't stop earlier w.r.t. limit.

2. It needs to override a method, which will break if we have more than one limit in the whole-stage.

The first problem is easy to fix: just figure out which nodes can stop earlier w.r.t. limit, and update them. This PR updates `RangeExec`, `ColumnarBatchScan`, `SortExec`, and `HashAggregateExec`.

The second problem is hard to fix. This PR proposes to propagate the limit counter variable name upstream, so that the upstream leaf/blocking nodes can check the limit counter and quit the loop earlier.

For better performance, the implementation here follows `CodegenSupport.needStopCheck`, so that we codegen the check only if there is a limit in the query. For columnar nodes like range, we check the limit counter per batch instead of per row, to keep the inner loop tight and fast.

Why is this safer?

1. The leaf/blocking nodes don't have to check the limit counter and stop earlier. It's only for performance. (This is the same as before.)

2. The blocking operators can stop propagating the limit counter name, because the counter of a limit after a blocking operator never increases before the blocking operator has consumed all the data from its upstream operators. So the upstream operators don't need to care about a limit after blocking operators. This is also for performance only; it's OK if we forget to do it for some new blocking operators.
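The per-batch check and the "performance only" property can be sketched outside of codegen as follows. This is a plain-Python analogy, not the generated Java code: `LimitCounter`, `range_producer`, and `make_limit_consumer` are hypothetical names introduced for illustration.

```python
class LimitCounter:
    """Shared counter that the limit operator increments (hypothetical)."""

    def __init__(self, limit):
        self.limit = limit
        self.count = 0

    def reached(self):
        return self.count >= self.limit


def make_limit_consumer(counter, out):
    """The limit itself enforces correctness by dropping rows past the limit."""
    def consume(value):
        if counter.count < counter.limit:
            counter.count += 1
            out.append(value)
    return consume


def range_producer(start, end, batch_size, counter, consume):
    """Produce values in batches, checking the limit counter once per batch."""
    produced = 0
    pos = start
    while pos < end and not counter.reached():  # per-batch check
        batch_end = min(pos + batch_size, end)
        for value in range(pos, batch_end):  # tight inner loop, no limit check
            consume(value)
            produced += 1
        pos = batch_end
    return produced
```

With a limit of 5 and a batch size of 4, the producer in this sketch emits 8 values before it notices the counter and stops. The output still contains exactly 5 rows, because the limit consumer alone decides what is kept. If the producer never checked the counter, the result would be the same, only slower.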

## How was this patch tested?

A new test.

Closes #22630 from cloud-fan/limit.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

## What changes were proposed in this pull request?

Currently, the first row of a dataset of CSV strings is compared to the field names of the user-specified or inferred schema regardless of whether a CSV header is present. This causes false-positive error messages. For example, parsing `"1,2"` outputs the error:

```java
java.lang.IllegalArgumentException: CSV header does not conform to the schema.
Header: 1, 2
Schema: _c0, _c1
Expected: _c0 but found: 1
```

In this PR, I propose:

- Checking the CSV header only when it exists

- Filtering the header out of the input dataset only if it exists
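The two points above can be sketched in a few lines of standalone code. This is only an illustration of the intended behavior, not the Spark implementation: `read_csv_lines` and its naive comma splitting are assumptions, and the `header` flag stands in for whether the input is declared to have a header.

```python
def read_csv_lines(lines, field_names, header):
    """Parse CSV strings; check and drop the first row only if a header exists."""
    rows = [line.split(",") for line in lines]
    if header and rows:
        found = [column.strip() for column in rows[0]]
        if found != list(field_names):
            raise ValueError(
                "CSV header does not conform to the schema. "
                f"Header: {', '.join(found)} Schema: {', '.join(field_names)}"
            )
        rows = rows[1:]  # filter the header out of the data
    return rows
```

When `header` is false, the row `"1,2"` is treated as data and is never compared with `_c0, _c1`, so no false-positive error is raised.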

## How was this patch tested?

Added a test to `CSVSuite` which reproduces the issue.

Closes #22656 from MaxGekk/inferred-header-check.

Authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

`InMemoryFileIndex` contains a cache of `LocatedFileStatus` objects. Each `LocatedFileStatus` object can contain several `BlockLocation`s or instances of some subclass of it. The cache is filled by listing files recursively, either on the driver or on the executors, depending on the parallel discovery threshold (`spark.sql.sources.parallelPartitionDiscovery.threshold`). If the listing happens on the executors, the block location objects are converted to simple `BlockLocation` objects to meet serialization requirements. If it happens on the driver, there is no conversion, and depending on the file system a `BlockLocation` object can be a subclass such as `HdfsBlockLocation` and consume more memory. This PR adds the conversion to the latter case and decreases memory consumption.

## How was this patch tested?

Added unit test.

Closes #22603 from peter-toth/SPARK-25062.

Authored-by: Peter Toth <peter.toth@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

This PR fixes the Scala-2.12 build error due to ambiguity in `foreachBatch` test cases.

- https://amplab.cs.berkeley.edu/jenkins/view/Spark%20QA%20Test%20(Dashboard)/job/spark-master-test-maven-hadoop-2.7-ubuntu-scala-2.12/428/console

```scala
[error] /home/jenkins/workspace/spark-master-test-maven-hadoop-2.7-ubuntu-scala-2.12/sql/core/src/test/scala/org/apache/spark/sql/execution/streaming/sources/ForeachBatchSinkSuite.scala:102: ambiguous reference to overloaded definition,
[error] both method foreachBatch in class DataStreamWriter of type (function: org.apache.spark.api.java.function.VoidFunction2[org.apache.spark.sql.Dataset[Int],Long])org.apache.spark.sql.streaming.DataStreamWriter[Int]
[error] and method foreachBatch in class DataStreamWriter of type (function: (org.apache.spark.sql.Dataset[Int], Long) => Unit)org.apache.spark.sql.streaming.DataStreamWriter[Int]
[error] match argument types ((org.apache.spark.sql.Dataset[Int], Any) => Unit)
[error] ds.writeStream.foreachBatch((_, _) => {}).trigger(Trigger.Continuous("1 second")).start()
[error] ^
[error] /home/jenkins/workspace/spark-master-test-maven-hadoop-2.7-ubuntu-scala-2.12/sql/core/src/test/scala/org/apache/spark/sql/execution/streaming/sources/ForeachBatchSinkSuite.scala:106: ambiguous reference to overloaded definition,
[error] both method foreachBatch in class DataStreamWriter of type (function: org.apache.spark.api.java.function.VoidFunction2[org.apache.spark.sql.Dataset[Int],Long])org.apache.spark.sql.streaming.DataStreamWriter[Int]
[error] and method foreachBatch in class DataStreamWriter of type (function: (org.apache.spark.sql.Dataset[Int], Long) => Unit)org.apache.spark.sql.streaming.DataStreamWriter[Int]
[error] match argument types ((org.apache.spark.sql.Dataset[Int], Any) => Unit)
[error] ds.writeStream.foreachBatch((_, _) => {}).partitionBy("value").start()
[error] ^
```

## How was this patch tested?

Manual.

Since this failure occurs in the Scala-2.12 profile and in test cases, Jenkins will not test this. We need to build with Scala-2.12 and run the tests.

Closes #22649 from dongjoon-hyun/SPARK-SCALA212.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

Refactor `MiscBenchmark` to use a main method.

Generate benchmark result:

```sh
SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.MiscBenchmark"
```

## How was this patch tested?

Manual tests.

Closes #22500 from wangyum/SPARK-25488.

Lead-authored-by: Yuming Wang <yumwang@ebay.com>

Co-authored-by: Yuming Wang <wgyumg@gmail.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>