### What changes were proposed in this pull request?

This change is to use repos.spark-packages.org instead of Bintray as the repository service for spark-packages.

### Why are the changes needed?

The change is needed because Bintray will no longer be available from May 1st.

### Does this PR introduce _any_ user-facing change?

This should be transparent for users who use SparkSubmit.

### How was this patch tested?

Tested running spark-shell with --packages manually.

Closes #32346 from bozhang2820/replace-bintray.

Authored-by: Bo Zhang <bo.zhang@databricks.com>

Signed-off-by: hyukjinkwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This is one of the patches for SPIP SPARK-30602 for push-based shuffle.

Summary of changes:

- Introduce `MergeStatus` which tracks the partition level metadata for a merged shuffle partition in the Spark driver

- Unify `MergeStatus` and `MapStatus` under a single trait to allow code reuse inside `MapOutputTracker`

- Extend `MapOutputTracker` to support registering / unregistering `MergeStatus`, calculating preferred locations for a shuffle while taking merged shuffle partitions into consideration, and serving reducer requests for block fetching locations with merged shuffle partitions.

The added APIs in `MapOutputTracker` will be used by `DAGScheduler` in SPARK-32920 and by `ShuffleBlockFetcherIterator` in SPARK-32922.

### Why are the changes needed?

Refer to SPARK-30602

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Added unit tests.

Lead-authored-by: Min Shen <mshen@linkedin.com>

Co-authored-by: Chandni Singh <chsingh@linkedin.com>

Co-authored-by: Venkata Sowrirajan <vsowrirajan@linkedin.com>

Closes #30480 from Victsm/SPARK-32921.

Lead-authored-by: Venkata krishnan Sowrirajan <vsowrirajan@linkedin.com>

Co-authored-by: Min Shen <mshen@linkedin.com>

Co-authored-by: Chandni Singh <singh.chandni@gmail.com>

Co-authored-by: Chandni Singh <chsingh@linkedin.com>

Signed-off-by: Mridul Muralidharan <mridul<at>gmail.com>

### What changes were proposed in this pull request?

Columns such as 'Shuffle Read Size / Records' and 'Output Size / Records' in the `Aggregated Metrics by Executor` table of the stage-detail page should be sorted in numerical order instead of lexicographical order.

### Why are the changes needed?

Bug fix: the sorting style should be consistent across columns.

The correspondence between the table columns and their indexes is shown below (it is defined in stagespage-template.html):

| index | column name                            |
| ----- | -------------------------------------- |
| 0     | Executor ID                            |
| 1     | Logs                                   |
| 2     | Address                                |
| 3     | Task Time                              |
| 4     | Total Tasks                            |
| 5     | Failed Tasks                           |
| 6     | Killed Tasks                           |
| 7     | Succeeded Tasks                        |
| 8     | Excluded                               |
| 9     | Input Size / Records                   |
| 10    | Output Size / Records                  |
| 11    | Shuffle Read Size / Records            |
| 12    | Shuffle Write Size / Records           |
| 13    | Spill (Memory)                         |
| 14    | Spill (Disk)                           |
| 15    | Peak JVM Memory OnHeap / OffHeap       |
| 16    | Peak Execution Memory OnHeap / OffHeap |
| 17    | Peak Storage Memory OnHeap / OffHeap   |
| 18    | Peak Pool Memory Direct / Mapped       |

I constructed some data to simulate the sorting results of columns 9 to 18.

The sorting results of columns 9-12 are wrong. The reason is that the underlying data for columns 9-12 (note that this is not the data displayed on the page) are **all strings such as `94685/131` (bytes/records), while the underlying data for columns 13-18 are all numbers**, so sorting columns 13-18 works well, but the results for columns 9-12 are incorrect because the strings are sorted in lexicographical order.
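The difference between the two orderings can be illustrated with a minimal Scala sketch. The actual fix was made in the page's JavaScript; the sample values and the `bytesOf` helper below are assumptions for illustration only.

```scala
object SizeRecordsSort {
  // Hypothetical raw cell values in the "<bytes>/<records>" form described above.
  val raw: Seq[String] = Seq("94685/131", "1200/7", "530000/42")

  // Parse the leading byte count; assumes well-formed "<bytes>/<records>" input.
  def bytesOf(cell: String): Long = cell.takeWhile(_ != '/').toLong

  // String ordering compares character by character, so "530000/42" sorts before "94685/131".
  val lexicographic: Seq[String] = raw.sorted

  // Ordering by the parsed byte count gives the expected numeric order.
  val numeric: Seq[String] = raw.sortBy(bytesOf)
}
```

Sorting on the parsed number rather than on the raw string is what makes columns 9-12 behave like columns 13-18.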

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Only JS was modified, and the manual test result works well.

**before modified:**

**after modified:**

Closes #32190 from kyoty/aggregated-metrics-by-executor-sorted-incorrectly.

Authored-by: kyoty <echohlne@gmail.com>

Signed-off-by: Kousuke Saruta <sarutak@oss.nttdata.com>

### What changes were proposed in this pull request?

Avoid recomputing the pending speculative tasks in the ExecutorAllocationManager, and remove some unnecessary code.

### Why are the changes needed?

The number of pending speculative tasks is recomputed in the ExecutorAllocationManager when calculating the maximum number of executors required. However, it only needs to be computed once, which improves performance.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Existing tests.

Closes #32306 from weixiuli/SPARK-35200.

Authored-by: weixiuli <weixiuli@jd.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Close SparkContext after the Main method has finished, to allow SparkApplication on K8S to complete.

This is a fixed version of the [merged and reverted PR](https://github.com/apache/spark/pull/32081).

### Why are the changes needed?

If I don't call the method sparkContext.stop() explicitly, then a Spark driver process doesn't terminate even after its Main method has completed. This behaviour is different from Spark on YARN, where stopping the sparkContext manually is not required. It looks like the problem is the use of non-daemon threads, which prevent the driver JVM process from terminating.

So I have inserted code that closes sparkContext automatically.
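As a rough illustration of the idea (not the code added by this PR), stopping the context in a `finally` block guarantees it runs once the user's main method returns or throws. `FakeContext` and `runMain` are hypothetical names used only for this sketch.

```scala
object StopOnExit {
  // Hypothetical stand-in for SparkContext; only stop() matters for this sketch.
  final class FakeContext {
    @volatile var stopped = false
    def stop(): Unit = stopped = true
  }

  // Run the user's main body and always stop the context afterwards,
  // so lingering non-daemon threads owned by the context can shut down.
  def runMain(ctx: FakeContext)(userMain: => Unit): Unit =
    try userMain
    finally ctx.stop()
}
```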

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Manually on the production AWS EKS environment in my company.

Closes #32283 from kotlovs/close-spark-context-on-exit-2.

Authored-by: skotlov <skotlov@joom.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

In YARN, ship the `spark.jars.ivySettings` file to the driver when using `cluster` deploy mode so that `addJar` is able to find it in order to resolve ivy paths.

### Why are the changes needed?

SPARK-33084 introduced support for Ivy paths in `sc.addJar` or Spark SQL `ADD JAR`. If we use a custom ivySettings file using `spark.jars.ivySettings`, it is loaded at b26e7b510b/core/src/main/scala/org/apache/spark/deploy/SparkSubmit.scala (L1280). However, this file is only accessible on the client machine. In YARN cluster mode, this file is not available on the driver and so `addJar` fails to find it.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Added unit tests to verify that the `ivySettings` file is localized by the YARN client and that a YARN cluster mode application is able to find and load the `ivySettings` file.

Closes #31591 from shardulm94/SPARK-34472.

Authored-by: Shardul Mahadik <smahadik@linkedin.com>

Signed-off-by: Thomas Graves <tgraves@apache.org>

### What changes were proposed in this pull request?

When running a Spark job on YARN in client deploy mode, the Spark driver and the Spark Application Master (AM) launch in two separate containers. In various scenarios there is a need to see the AM logs to check resource allocation, decommissioning status, and other information shared between the YARN RM and the Spark AM.

In cluster mode the Spark driver and the Spark AM run in the same container, so the driver's log link is already available in the Spark UI.

This PR adds the Spark AM log link for Spark jobs running in YARN client mode. Instead of searching for the container ID and then finding the logs, we can check them directly in the Spark UI.

This change only shows the AM log links in client mode when the resource manager is YARN.

### Why are the changes needed?

Until now, the only way to check this was to find the container ID of the AM and check the logs using either the YARN utility or the YARN RM Application History server.

This PR adds the Spark AM log link for Spark jobs running in YARN client mode. Instead of searching for the container ID and then finding the logs, we can check them directly in the Spark UI.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Added a unit test and also checked the Spark UI.

**In Yarn Client mode**

Before Change

After the Change - The AM info is there

AM Log

**In Yarn Cluster Mode** - The AM log link will not be there

Closes #31974 from SaurabhChawla100/SPARK-34877.

Authored-by: SaurabhChawla <s.saurabhtim@gmail.com>

Signed-off-by: Thomas Graves <tgraves@apache.org>

### What changes were proposed in this pull request?

- Share a static ImmutableBitSet for `treePatternBits` in all object instances of AttributeReference.

- Share three static ImmutableBitSets for `treePatternBits` in three kinds of Literals.

- Add an ImmutableBitSet as a subclass of BitSet.

### Why are the changes needed?

Reduce the additional memory usage caused by `treePatternBits`.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Existing tests.

Closes #32157 from sigmod/leaf.

Authored-by: Yingyi Bu <yingyi.bu@databricks.com>

Signed-off-by: Gengliang Wang <ltnwgl@gmail.com>

### What changes were proposed in this pull request?

Small UI update to highlight the number of tasks in progress in a stage/job instead of highlighting the whole in-progress stage/job. This was the behavior before Spark 3.1 and the Bootstrap 4 upgrade.

### Why are the changes needed?

To add back functionality lost between 3.0 and 3.1. This provides a great visual cue of how much of a stage/job is currently being run.

### Does this PR introduce _any_ user-facing change?

Small UI change.

Before:

After (and pre Spark 3.1):

### How was this patch tested?

Updated existing UT.

Closes #32214 from Kimahriman/progress-bar-started.

Authored-by: Adam Binford <adamq43@gmail.com>

Signed-off-by: Kousuke Saruta <sarutak@oss.nttdata.com>

### What changes were proposed in this pull request?

To prevent potential NullPointerExceptions, this PR changes the `LiveStage` constructor to take `info` as a constructor parameter and adds a nullcheck in `AppStatusListener.activeStages`.

### Why are the changes needed?

The `AppStatusListener.getOrCreateStage` would create a LiveStage object with the `info` field set to null and right after that set it to a specific StageInfo object. This can lead to a race condition when the `livestages` are read in between those calls. This could then lead to a null pointer exception in, for instance: `AppStatusListener.activeStages`.
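The race can be pictured with a simplified Scala sketch. The classes below are hypothetical stand-ins, not Spark's actual `LiveStage` or `StageInfo`; they only contrast a field assigned after construction with one required by the constructor, plus a defensive null check on the reading side.

```scala
object LiveStageSketch {
  // Hypothetical, heavily simplified stand-in for StageInfo.
  final case class StageInfo(stageId: Int, name: String)

  // Before: `info` starts as null and is assigned after construction,
  // so a concurrent reader may observe null in between.
  final class RacyLiveStage { var info: StageInfo = null }

  // After: `info` is a constructor parameter and is set from the start.
  final class SafeLiveStage(var info: StageInfo)

  // Defensive read: skip stages whose info has not been set yet.
  def activeStageNames(stages: Seq[RacyLiveStage]): Seq[String] =
    stages.flatMap(s => Option(s.info)).map(_.name)
}
```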

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Regular CI/CD tests

Closes #32233 from sander-goos/SPARK-35136-livestage.

Authored-by: Sander Goos <sander.goos@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The auto-generated RDD name in the Storage tab should be truncated to a single line if it is too long.

### Why are the changes needed?

To make the UI friendlier.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Just a simple modification in CSS; the manual test works well, as shown below:

before modified:

after modified:

The title needs just one line:

Closes #32191 from kyoty/storage-rdd-titile-display-improve.

Authored-by: kyoty <echohlne@gmail.com>

Signed-off-by: Kousuke Saruta <sarutak@oss.nttdata.com>

### What changes were proposed in this pull request?

Make the attemptId in the historyServer log easier to read.

### Why are the changes needed?

An Option variable in the Spark historyServer log should be displayed as its actual value instead of Some(XX).
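A minimal Scala sketch of the difference follows. The message text and the `naive` / `readable` helpers are assumptions for illustration, not the history server's actual log statements.

```scala
object AttemptIdLog {
  // Interpolating an Option directly prints its wrapper, e.g. "Some(2)".
  def naive(appId: String, attemptId: Option[String]): String =
    s"Parsing $appId/$attemptId"

  // Unwrapping first prints the actual value, and nothing when it is absent.
  def readable(appId: String, attemptId: Option[String]): String =
    s"Parsing $appId" + attemptId.map("/" + _).getOrElse("")
}
```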

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

manual test

Closes #32189 from kyoty/history-server-print-option-variable.

Authored-by: kyoty <echohlne@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Use hadoop FileSystem instead of FileInputStream.

### Why are the changes needed?

Make `spark.scheduler.allocation.file` support remote files. When using Spark as a server (e.g. SparkThriftServer), it's hard for users to specify a local path for the scheduler pool file.

### Does this PR introduce _any_ user-facing change?

Yes, a minor feature.

### How was this patch tested?

Pass `core/src/test/scala/org/apache/spark/scheduler/PoolSuite.scala` and a manual test.

After adding the config `spark.scheduler.allocation.file=hdfs:///tmp/fairscheduler.xml`, the configured pool is introduced.

Closes #32184 from ulysses-you/SPARK-35083.

Authored-by: ulysses-you <ulyssesyou18@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

When counting the number of started fetch requests, we should exclude the deferred requests.

### Why are the changes needed?

Fix the wrong number in the log.

### Does this PR introduce _any_ user-facing change?

Yes, users see the correct number of started requests in logs.

### How was this patch tested?

Manually tested.

Closes #32180 from Ngone51/count-deferred-request.

Lead-authored-by: yi.wu <yi.wu@databricks.com>

Co-authored-by: wuyi <yi.wu@databricks.com>

Signed-off-by: attilapiros <piros.attila.zsolt@gmail.com>

### What changes were proposed in this pull request?

This PR proposes to replace Hadoop's `Path` with `Utils.resolveURI` to make the way to get URI simple in `SparkContext`.

### Why are the changes needed?

Keep the code simple.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Existing tests.

Closes #32164 from sarutak/followup-SPARK-34225.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

In the current code, if we run Spark SQL with

```
./bin/spark-sql --verbose
```

the flag is not passed through to `SparkSQLCliDriver`, so the `SessionState` never calls `setIsVerbose`.

However, the CLI help shows

```
CLI options:
 -v,--verbose                     Verbose mode (echo executed SQL to the
                                  console)
```

This is inconsistent. This PR fixes the issue.

### Why are the changes needed?

Fix bug

### Does this PR introduce _any_ user-facing change?

When users pass `-v` when running Spark SQL, the executed SQL is echoed to the console.

### How was this patch tested?

Added UT

Closes #32163 from AngersZhuuuu/SPARK-35086.

Authored-by: Angerszhuuuu <angers.zhu@gmail.com>

Signed-off-by: Yuming Wang <yumwang@ebay.com>

### What changes were proposed in this pull request?

Introducing a new test construct:

```
withHttpServer() { baseURL =>
  ...
}
```

Which starts and stops a Jetty server to serve files via HTTP.

Moreover this PR uses this new construct in the test `Run SparkRemoteFileTest using a remote data file`.
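The shape of such a construct (a loan pattern that starts a server, hands its URL to the test body, and always stops it) can be sketched as follows. This is not the PR's implementation: it uses the JDK's built-in `com.sun.net.httpserver.HttpServer` as a stand-in for Jetty, serves a fixed string instead of files, and the `HttpFixture` object and `body` parameter are hypothetical.

```scala
import java.net.{InetSocketAddress, URL}
import com.sun.net.httpserver.{HttpExchange, HttpHandler, HttpServer}

object HttpFixture {
  // Start a local server on an ephemeral port, run the test body with its
  // base URL, and stop the server even if the body throws.
  def withHttpServer[T](body: String)(test: URL => T): T = {
    val server = HttpServer.create(new InetSocketAddress("localhost", 0), 0)
    val handler: HttpHandler = (exchange: HttpExchange) => {
      val bytes = body.getBytes("UTF-8")
      exchange.sendResponseHeaders(200, bytes.length.toLong)
      exchange.getResponseBody.write(bytes)
      exchange.close()
    }
    server.createContext("/", handler)
    server.start()
    try test(new URL(s"http://localhost:${server.getAddress.getPort}/"))
    finally server.stop(0)
  }
}
```

Because the test only ever sees the URL passed into its body, it no longer depends on content hosted outside the checked-out source tree.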

### Why are the changes needed?

Before this PR, GitHub URLs such as "https://raw.githubusercontent.com/apache/spark/master/data/mllib/pagerank_data.txt" were used.

This couples two Spark versions in an unhealthy way: the "master" branch, which is a moving target, is tied to the committed test code, which does not move (it might even be released).

As a result, a test running for an earlier version of Spark expects something (filename, content, path) from a later release, and, what is worse, when the moving version changes, the earlier test breaks.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Existing unit test.

Closes #31935 from attilapiros/SPARK-34789.

Authored-by: “attilapiros” <piros.attila.zsolt@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Remove unused MapOutputTracker in BlockStoreShuffleReader

### Why are the changes needed?

Remove unused MapOutputTracker in BlockStoreShuffleReader

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Not needed.

Closes #32148 from AngersZhuuuu/SPARK-35049.

Authored-by: Angerszhuuuu <angers.zhu@gmail.com>

Signed-off-by: Mridul Muralidharan <mridul<at>gmail.com>

### What changes were proposed in this pull request?

The main change of this PR is to extract a `private` method named `tryOrFetchFailedException` to eliminate duplicate code in `BufferReleasingInputStream`.

The duplicated code follows this pattern:

```
try {
  block
} catch {
  case e: IOException if detectCorruption =>
    IOUtils.closeQuietly(this)
    iterator.throwFetchFailedException(blockId, mapIndex, address, e)
}
```

### Why are the changes needed?

Eliminate duplicate code.
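The extraction described above can be sketched as a by-name helper that wraps each stream operation. This is a self-contained approximation: `FetchFailed` and `GuardedStream` are hypothetical stand-ins, and the real method lives in `BufferReleasingInputStream` and delegates to `iterator.throwFetchFailedException`.

```scala
import java.io.{Closeable, IOException}

object FetchFailureSketch {
  // Hypothetical stand-in for the exception Spark raises on a fetch failure.
  final class FetchFailed(cause: Throwable) extends RuntimeException(cause)

  final class GuardedStream(detectCorruption: Boolean) extends Closeable {
    var closed = false
    override def close(): Unit = closed = true

    // The shared wrapper: run the block, and on IOException (only when
    // corruption detection is enabled) close the stream and rethrow.
    private def tryOrFetchFailed[T](block: => T): T =
      try block
      catch {
        case e: IOException if detectCorruption =>
          close()
          throw new FetchFailed(e)
      }

    // Each operation now delegates to the wrapper instead of repeating try/catch.
    def read(source: () => Int): Int = tryOrFetchFailed(source())
    def skip(source: () => Long): Long = tryOrFetchFailed(source())
  }
}
```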

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Pass the Jenkins or GitHub Action

Closes #32130 from LuciferYang/SPARK-35029.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR contains:

- TreeNode, QueryPlan, AnalysisHelper changes to allow the transform function family to stop earlier without traversing the entire tree;

- Example changes in a few rules to support such pruning, e.g., ReorderJoin and OptimizeIn.

Here is a [design doc](https://docs.google.com/document/d/1SEUhkbo8X-0cYAJFYFDQhxUnKJBz4lLn3u4xR2qfWqk) that elaborates the ideas and benchmark numbers.

### Why are the changes needed?

It's a framework-level change for reducing the query compilation time.

In particular, if we update existing rules and transform call sites as per the examples in this PR, the analysis time and query optimization time can be reduced as described in this [doc](https://docs.google.com/document/d/1SEUhkbo8X-0cYAJFYFDQhxUnKJBz4lLn3u4xR2qfWqk) .

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

It is tested by existing tests.

Closes #32060 from sigmod/bits.

Authored-by: Yingyi Bu <yingyi.bu@databricks.com>

Signed-off-by: Gengliang Wang <ltnwgl@gmail.com>

### What changes were proposed in this pull request?

Line 425 in `MasterSuite` is flagged as an unused expression by IntelliJ IDEA:

bfba7fadd2/core/src/test/scala/org/apache/spark/deploy/master/MasterSuite.scala (L421-L426)

If we merge lines 424 and 425 into one as:

```

System.getProperty("spark.ui.proxyBase") should startWith (s"$reverseProxyUrl/proxy/worker-")

```

this assertion will fail:

```

- master/worker web ui available behind front-end reverseProxy *** FAILED ***

The code passed to eventually never returned normally. Attempted 45 times over 5.091914027 seconds. Last failure message: "http://proxyhost:8080/path/to/spark" did not start with substring "http://proxyhost:8080/path/to/spark/proxy/worker-". (MasterSuite.scala:405)

```

`System.getProperty("spark.ui.proxyBase")` should be `reverseProxyUrl` because `Master#onStart` and `Worker#handleRegisterResponse` have not changed it.

So the main purpose of this pr is to fix the condition of this assertion.

### Why are the changes needed?

Bug fix.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

- Pass the Jenkins or GitHub Action

- Manual test:

1. merge lines 424 and 425 in `MasterSuite` into one to eliminate the unused expression:

```

System.getProperty("spark.ui.proxyBase") should startWith (s"$reverseProxyUrl/proxy/worker-")

```

2. execute `mvn clean test -pl core -Dtest=none -DwildcardSuites=org.apache.spark.deploy.master.MasterSuite`

**Before**

```

- master/worker web ui available behind front-end reverseProxy *** FAILED ***

The code passed to eventually never returned normally. Attempted 45 times over 5.091914027 seconds. Last failure message: "http://proxyhost:8080/path/to/spark" did not start with substring "http://proxyhost:8080/path/to/spark/proxy/worker-". (MasterSuite.scala:405)

Run completed in 1 minute, 14 seconds.

Total number of tests run: 32

Suites: completed 2, aborted 0

Tests: succeeded 31, failed 1, canceled 0, ignored 0, pending 0

*** 1 TEST FAILED ***

```

**After**

```

Run completed in 1 minute, 11 seconds.

Total number of tests run: 32

Suites: completed 2, aborted 0

Tests: succeeded 32, failed 0, canceled 0, ignored 0, pending 0

All tests passed.

```

Closes #32105 from LuciferYang/SPARK-35004.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: Gengliang Wang <ltnwgl@gmail.com>

### What changes were proposed in this pull request?

Close SparkContext after the Main method has finished, to allow SparkApplication on K8S to complete

### Why are the changes needed?

If I don't call the method sparkContext.stop() explicitly, then a Spark driver process doesn't terminate even after its Main method has completed. This behaviour is different from Spark on YARN, where stopping the sparkContext manually is not required. It looks like the problem is the use of non-daemon threads, which prevent the driver JVM process from terminating.

So I have inserted code that closes sparkContext automatically.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Manually on the production AWS EKS environment in my company.

Closes #32081 from kotlovs/close-spark-context-on-exit.

Authored-by: skotlov <skotlov@joom.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR prevents re-registering the BlockManager when an Executor is shutting down. It is achieved by checking `executorShutdown` before calling `env.blockManager.reregister()`.
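A minimal sketch of the guard, assuming the shutdown flag is an atomic boolean. The `Executor` class here is a hypothetical stand-in that merely counts re-registrations rather than calling the real `env.blockManager.reregister()`.

```scala
import java.util.concurrent.atomic.AtomicBoolean

object HeartbeatSketch {
  // Hypothetical, stripped-down executor; only the guard logic is modelled.
  final class Executor {
    val executorShutdown = new AtomicBoolean(false)
    var reregistrations = 0

    // Called when a heartbeat response arrives from the driver.
    def onHeartbeatResponse(reregisterBlockManager: Boolean): Unit =
      if (reregisterBlockManager && !executorShutdown.get()) {
        reregistrations += 1 // stands in for env.blockManager.reregister()
      }
  }
}
```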

### Why are the changes needed?

This change is required because Spark reports executors as active even after they are removed.

I was testing Dynamic Allocation on K8s with about 300 executors. While doing so, when the executors were torn down due to `spark.dynamicAllocation.executorIdleTimeout`, I noticed all the executor pods being removed from K8s, however, under the "Executors" tab in SparkUI, I could see some executors listed as alive. [spark.sparkContext.statusTracker.getExecutorInfos.length](65da9287bc/core/src/main/scala/org/apache/spark/SparkStatusTracker.scala (L105)) also returned a value greater than 1.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Added a new test.

## Logs

Following are the logs of the executor(Id:303) which re-registers `BlockManager`

```

21/04/02 21:33:28 INFO CoarseGrainedExecutorBackend: Got assigned task 1076

21/04/02 21:33:28 INFO Executor: Running task 4.0 in stage 3.0 (TID 1076)

21/04/02 21:33:28 INFO MapOutputTrackerWorker: Updating epoch to 302 and clearing cache

21/04/02 21:33:28 INFO TorrentBroadcast: Started reading broadcast variable 3

21/04/02 21:33:28 INFO TransportClientFactory: Successfully created connection to /100.100.195.227:33703 after 76 ms (62 ms spent in bootstraps)

21/04/02 21:33:28 INFO MemoryStore: Block broadcast_3_piece0 stored as bytes in memory (estimated size 2.4 KB, free 168.0 MB)

21/04/02 21:33:28 INFO TorrentBroadcast: Reading broadcast variable 3 took 168 ms

21/04/02 21:33:28 INFO MemoryStore: Block broadcast_3 stored as values in memory (estimated size 3.9 KB, free 168.0 MB)

21/04/02 21:33:29 INFO MapOutputTrackerWorker: Don't have map outputs for shuffle 1, fetching them

21/04/02 21:33:29 INFO MapOutputTrackerWorker: Doing the fetch; tracker endpoint = NettyRpcEndpointRef(spark://MapOutputTrackerda-lite-test-4-7a57e478947d206d-driver-svc.dex-app-n5ttnbmg.svc:7078)

21/04/02 21:33:29 INFO MapOutputTrackerWorker: Got the output locations

21/04/02 21:33:29 INFO ShuffleBlockFetcherIterator: Getting 2 non-empty blocks including 1 local blocks and 1 remote blocks

21/04/02 21:33:30 INFO TransportClientFactory: Successfully created connection to /100.100.80.103:40971 after 660 ms (528 ms spent in bootstraps)

21/04/02 21:33:30 INFO ShuffleBlockFetcherIterator: Started 1 remote fetches in 1042 ms

21/04/02 21:33:31 INFO Executor: Finished task 4.0 in stage 3.0 (TID 1076). 1276 bytes result sent to driver

.

.

.

21/04/02 21:34:16 INFO CoarseGrainedExecutorBackend: Driver commanded a shutdown

21/04/02 21:34:16 INFO Executor: Told to re-register on heartbeat

21/04/02 21:34:16 INFO BlockManager: BlockManager BlockManagerId(303, 100.100.122.34, 41265, None) re-registering with master

21/04/02 21:34:16 INFO BlockManagerMaster: Registering BlockManager BlockManagerId(303, 100.100.122.34, 41265, None)

21/04/02 21:34:16 INFO BlockManagerMaster: Registered BlockManager BlockManagerId(303, 100.100.122.34, 41265, None)

21/04/02 21:34:16 INFO BlockManager: Reporting 0 blocks to the master.

21/04/02 21:34:16 INFO MemoryStore: MemoryStore cleared

21/04/02 21:34:16 INFO BlockManager: BlockManager stopped

21/04/02 21:34:16 INFO FileDataSink: Closing sink with output file = /tmp/safari-events/.des_analysis/safari-events/hdp_spark_monitoring_random-container-037caf27-6c77-433f-820f-03cd9c7d9b6e-spark-8a492407d60b401bbf4309a14ea02ca2_events.tsv

21/04/02 21:34:16 INFO HonestProfilerBasedThreadSnapshotProvider: Stopping agent

21/04/02 21:34:16 INFO HonestProfilerHandler: Stopping honest profiler agent

21/04/02 21:34:17 INFO ShutdownHookManager: Shutdown hook called

21/04/02 21:34:17 INFO ShutdownHookManager: Deleting directory /var/data/spark-d886588c-2a7e-491d-bbcb-4f58b3e31001/spark-4aa337a0-60c0-45da-9562-8c50eaff3cea

```

Closes #32043 from sumeetgajjar/SPARK-34949.

Authored-by: Sumeet Gajjar <sumeetgajjar93@gmail.com>

Signed-off-by: Mridul Muralidharan <mridul<at>gmail.com>

### What changes were proposed in this pull request?

Synchronise access to the `registerSource` and `removeSource` methods, since the underlying `ArrayBuffer` is not thread safe.

### Why are the changes needed?

Unexpected behaviours are possible due to the lack of thread safety; for example, we got an `ArrayIndexOutOfBoundsException` while adding a new source.
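One way to picture the fix is a registry whose mutating methods share a single lock. This is a hedged sketch only: the `SourceRegistry` object, its `String` element type, the `snapshot` helper, and the choice of lock object are assumptions, not the PR's actual code.

```scala
import scala.collection.mutable.ArrayBuffer

object SourceRegistry {
  // ArrayBuffer is not thread safe, so every access goes through the same lock.
  private val sources = new ArrayBuffer[String]

  def registerSource(name: String): Unit = sources.synchronized {
    sources += name
    ()
  }

  def removeSource(name: String): Unit = sources.synchronized {
    sources -= name
    ()
  }

  // Return an immutable copy so callers never iterate the live buffer.
  def snapshot: Seq[String] = sources.synchronized { sources.toList }
}
```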

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Closes #32024 from BOOTMGR/SPARK-34934.

Lead-authored-by: Harsh Panchal <BOOTMGR@users.noreply.github.com>

Co-authored-by: BOOTMGR <panchal.harsh18@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This patch catches the `IOException` that may be thrown when map statuses cannot be deserialized (e.g., the broadcasted value has been destroyed). Once the `IOException` is caught, a `MetadataFetchFailedException` is thrown to let Spark handle it.

### Why are the changes needed?

One customer encountered an application error. According to the log, it was caused by accessing a non-existent broadcasted value. The broadcasted value is the map statuses. E.g.,

```

[info] Cause: java.io.IOException: org.apache.spark.SparkException: Failed to get broadcast_0_piece0 of broadcast_0

[info] at org.apache.spark.util.Utils$.tryOrIOException(Utils.scala:1410)

[info] at org.apache.spark.broadcast.TorrentBroadcast.readBroadcastBlock(TorrentBroadcast.scala:226)

[info] at org.apache.spark.broadcast.TorrentBroadcast.getValue(TorrentBroadcast.scala:103)

[info] at org.apache.spark.broadcast.Broadcast.value(Broadcast.scala:70)

[info] at org.apache.spark.MapOutputTracker$.$anonfun$deserializeMapStatuses$3(MapOutputTracker.scala:967)

[info] at org.apache.spark.internal.Logging.logInfo(Logging.scala:57)

[info] at org.apache.spark.internal.Logging.logInfo$(Logging.scala:56)

[info] at org.apache.spark.MapOutputTracker$.logInfo(MapOutputTracker.scala:887)

[info] at org.apache.spark.MapOutputTracker$.deserializeMapStatuses(MapOutputTracker.scala:967)

```

There is a race condition. Map statuses are broadcasted and the executors obtain the serialized broadcasted map statuses. If any fetch failure happens afterwards, the Spark scheduler invalidates the cached map statuses and destroys the broadcasted value of the map statuses. Any executor that then tries to deserialize the serialized broadcasted map statuses and access the broadcasted value gets an `IOException`. Currently we don't catch it in `MapOutputTrackerWorker`, so the above exception fails the application.

Normally we should throw a fetch failure exception in such a case, which the Spark scheduler will handle.
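The translation from a low-level `IOException` to a fetch failure that the scheduler can handle might look roughly like this. `MetadataFetchFailed` and `decode` are hypothetical stand-ins for Spark's `MetadataFetchFailedException` and the deserialization call site.

```scala
import java.io.IOException

object MapStatusDecode {
  // Hypothetical stand-in for Spark's MetadataFetchFailedException.
  final class MetadataFetchFailed(val shuffleId: Int, cause: Throwable)
    extends RuntimeException(s"Unable to deserialize map statuses for shuffle $shuffleId", cause)

  // Run the deserialization and convert an IOException (for example, a
  // destroyed broadcast value) into a fetch failure instead of an app failure.
  def decode[T](shuffleId: Int)(deserialize: => T): T =
    try deserialize
    catch {
      case e: IOException => throw new MetadataFetchFailed(shuffleId, e)
    }
}
```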

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Unit test.

Closes #32033 from viirya/fix-broadcast-master.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

https://github.com/apache/spark/pull/32015 added a way to run benchmarks much more easily in the same GitHub Actions build. This PR updates the benchmark results using that workflow.

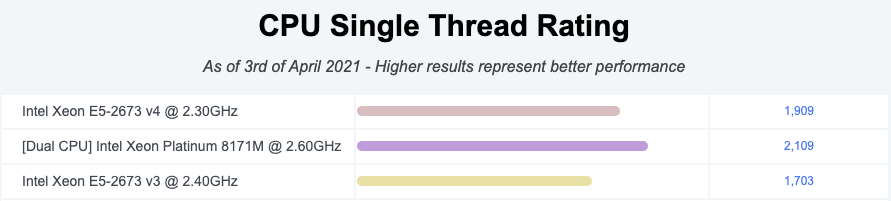

**NOTE** that GitHub Actions appears to use four types of CPU, based on my observations:

- Intel(R) Xeon(R) Platinum 8171M CPU 2.60GHz

- Intel(R) Xeon(R) CPU E5-2673 v4 2.30GHz

- Intel(R) Xeon(R) CPU E5-2673 v3 2.40GHz

- Intel(R) Xeon(R) Platinum 8272CL CPU 2.60GHz

Based on my quick research, they seem to perform roughly similarly.

I couldn't find enough information about Intel(R) Xeon(R) Platinum 8272CL CPU 2.60GHz but the performance seems roughly similar given the numbers.

So it shouldn't be a big deal, especially given that this approach is much easier, encourages contributors to run more benchmarks, and guarantees the same number of cores and the same memory with the same software.

### Why are the changes needed?

To have a baseline of the benchmarks accordingly.

### Does this PR introduce _any_ user-facing change?

No, dev-only.

### How was this patch tested?

It was generated from:

- [Run benchmarks: * (JDK 11)](https://github.com/HyukjinKwon/spark/actions/runs/713575465)

- [Run benchmarks: * (JDK 8)](https://github.com/HyukjinKwon/spark/actions/runs/713154337)

Closes #32044 from HyukjinKwon/SPARK-34950.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Max Gekk <max.gekk@gmail.com>

### What changes were proposed in this pull request?

This PR proposes to add a workflow that allows developers to run benchmarks and download the results files. After this PR, developers can run benchmarks in GitHub Actions in their fork.

### Why are the changes needed?

1. Very easy to use.

2. We can use the (almost) same environment to run the benchmarks. Based on my few experiments and observations, the CPU, cores, and memory are the same.

3. Does not burden ASF's resources at GitHub Actions.

### Does this PR introduce _any_ user-facing change?

No, dev-only.

### How was this patch tested?

Manually tested in https://github.com/HyukjinKwon/spark/pull/31.

Entire benchmarks are being run as below:

- [Run benchmarks: * (JDK 11)](https://github.com/HyukjinKwon/spark/actions/runs/713575465)

- [Run benchmarks: * (JDK 8)](https://github.com/HyukjinKwon/spark/actions/runs/713154337)

### How do developers use it in their fork?

1. **Go to Actions in your fork, and click "Run benchmarks"**

2. **Run the benchmarks with JDK 8 or 11 with benchmark classes to run. Glob pattern is supported just like `testOnly` in SBT**

3. **After finishing the jobs, the benchmark results are available on the top in the underlying workflow:**

4. **After downloading it, unzip and untar at Spark git root directory:**

```bash
cd .../spark
mv ~/Downloads/benchmark-results-8.zip .
unzip benchmark-results-8.zip
tar -xvf benchmark-results-8.tar
```

5. **Check the results:**

```bash
git status
```

```
...
modified: core/benchmarks/MapStatusesSerDeserBenchmark-results.txt
```

Closes #32015 from HyukjinKwon/SPARK-34821-pr.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Allow ExecutorMetricsPoller to keep stage entries in stageTCMP until a heartbeat occurs even if the entries have task count = 0.

### Why are the changes needed?

This is an improvement.

The current implementation of ExecutorMetricsPoller keeps a map, stageTCMP of (stageId, stageAttemptId) to (count of running tasks, executor metric peaks). The entry for the stage is removed on task completion if the task count decreases to 0. In the case of an executor with a single core, this leads to unnecessary removal and insertion of entries for a given stage.
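The proposed behavior can be sketched with a plain mutable map: task completion only decrements the count, and zero-count entries are removed at heartbeat time. This is a simplified, single-threaded approximation with hypothetical names; it omits the metric peaks and the concurrency handling of the real `ExecutorMetricsPoller`.

```scala
import scala.collection.mutable

object StagePollerSketch {
  // Key is (stageId, stageAttemptId); value is the count of running tasks.
  type StageKey = (Int, Int)
  private val taskCounts = mutable.Map.empty[StageKey, Int]

  def onTaskStart(key: StageKey): Unit =
    taskCounts(key) = taskCounts.getOrElse(key, 0) + 1

  // Decrement only; the entry stays in the map even when the count reaches 0.
  def onTaskCompletion(key: StageKey): Unit =
    taskCounts.get(key).foreach(c => taskCounts(key) = math.max(c - 1, 0))

  // Entries with no running tasks are dropped when a heartbeat occurs.
  def onHeartbeat(): Unit = {
    val idle = taskCounts.collect { case (k, 0) => k }.toList
    taskCounts --= idle
  }

  def tracked: Set[StageKey] = taskCounts.keySet.toSet
}
```

On a single-core executor running tasks of the same stage back to back, the entry is then reused between tasks instead of being removed and re-inserted each time.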

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

A new unit test is added.

Closes#31871 from baohe-zhang/SPARK-34779.

Authored-by: Baohe Zhang <baohe.zhang@verizonmedia.com>

Signed-off-by: “attilapiros” <piros.attila.zsolt@gmail.com>

### What changes were proposed in this pull request?

Add more flexible parameters to the stages endpoint `/application/{app-id}/stages`. It can be:

/application/{app-id}/stages?details=[true|false]&status=[ACTIVE|COMPLETE|FAILED|PENDING|SKIPPED]&withSummaries=[true|false]&quantiles=[comma separated quantiles string]&taskStatus=[RUNNING|SUCCESS|FAILED|PENDING]

where

- `details=true` shows the detailed task information within each stage. The default value is `details=false`.

- `status` selects the stages with the specified status. When `status` is not specified, a list of all stages is generated.

- `withSummaries=true` shows both the task summary information and the executor summary information in percentile distribution. The default value is `withSummaries=false`.

- `quantiles` supports user-defined quantiles. The default quantiles are `0.0,0.25,0.5,0.75,1.0`.

- `taskStatus` shows only the tasks with the specified status within their corresponding stages. It takes effect only when `details=true` and is ignored when `details=false`.
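As a rough client-side illustration of how these query parameters combine, the sketch below builds the query string only. The helper name `stages_query` is hypothetical, and the parameter values are examples; the endpoint path itself is left to the caller.

```python
from urllib.parse import urlencode


def stages_query(**params):
    """Build the query string for the stages endpoint (hypothetical helper).

    Booleans are rendered as lowercase true/false; commas are kept
    unescaped so the quantiles list stays readable.
    """
    rendered = {
        name: str(value).lower() if isinstance(value, bool) else value
        for name, value in params.items()
    }
    return urlencode(rendered, safe=",")


query = stages_query(
    details=True,
    status="ACTIVE",
    withSummaries=True,
    quantiles="0.05,0.5,0.95",
    taskStatus="FAILED",
)
print(query)
```

The resulting string is appended after `?` to the stages endpoint shown above.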

### Why are the changes needed?

A more flexible RESTful API.

### Does this PR introduce _any_ user-facing change?

### How was this patch tested?

UT

Closes#31204 from AngersZhuuuu/SPARK-26399-NEW.

Lead-authored-by: Angerszhuuuu <angers.zhu@gmail.com>

Co-authored-by: AngersZhuuuu <angers.zhu@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This PR proposes to add a script that detects and runs all benchmarks.

### Why are the changes needed?

To run the benchmarks easily. This is actually for SPARK-34821.

### Does this PR introduce _any_ user-facing change?

No, dev-only.

### How was this patch tested?

Manually tested with the command below after building Spark:

```bash
SPARK_GENERATE_BENCHMARK_FILES=1 bin/spark-submit --class \
  org.apache.spark.benchmark.Benchmarks --jars \
  "$(find . -name "*3.2.0-SNAPSHOT-tests.jar" | paste -sd ',' -)" \
  ./core/target/scala-2.12/spark-core_2.12-3.2.0-SNAPSHOT-tests.jar
```

This is ongoing work. I will double-check it while working on SPARK-34821 and updating the results.

Closes#32005 from HyukjinKwon/SPARK-34907.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Add a new config, `spark.shuffle.service.name`, which allows for Spark applications to look for a YARN shuffle service which is defined at a name other than the default `spark_shuffle`.

Add a new config, `spark.yarn.shuffle.service.metrics.namespace`, which allows for configuring the namespace used when emitting metrics from the shuffle service into the NodeManager's `metrics2` system.

Add a new mechanism by which to override shuffle service configurations independently of the configurations in the NodeManager. When a resource `spark-shuffle-site.xml` is present on the classpath of the shuffle service, the configs present within it will be used to override the configs coming from `yarn-site.xml` (via the NodeManager).
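The override order described above can be pictured with a small sketch. It is not the shuffle service's actual loading code: the function names are hypothetical and the property values are made up; only the Hadoop-style `<configuration>/<property>` XML layout and the "shuffle-site wins over yarn-site" rule come from the description.

```python
import xml.etree.ElementTree as ET


def parse_hadoop_xml(text):
    """Read a Hadoop-style configuration document into a dict."""
    root = ET.fromstring(text)
    return {
        prop.findtext("name"): prop.findtext("value")
        for prop in root.findall("property")
    }


def effective_shuffle_conf(yarn_site_xml, shuffle_site_xml=None):
    """Start from the NodeManager config, then apply shuffle-site overrides."""
    conf = parse_hadoop_xml(yarn_site_xml)
    if shuffle_site_xml is not None:
        conf.update(parse_hadoop_xml(shuffle_site_xml))
    return conf


# Example documents with made-up values.
YARN_SITE = """<configuration>
  <property><name>spark.shuffle.service.port</name><value>7337</value></property>
</configuration>"""

SHUFFLE_SITE = """<configuration>
  <property><name>spark.shuffle.service.port</name><value>7447</value></property>
  <property><name>spark.yarn.shuffle.service.metrics.namespace</name><value>sparkShuffleNew</value></property>
</configuration>"""

print(effective_shuffle_conf(YARN_SITE, SHUFFLE_SITE))
```

When no `spark-shuffle-site.xml` is supplied, the sketch simply returns the NodeManager-provided values unchanged.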

### Why are the changes needed?

There are two use cases which can benefit from these changes.

One use case is to run multiple instances of the shuffle service side-by-side in the same NodeManager. This can be helpful, for example, when running a YARN cluster with a mixed workload of applications running multiple Spark versions, since a given version of the shuffle service is not always compatible with other versions of Spark (e.g. see SPARK-27780). With this PR, it is possible to run two shuffle services like `spark_shuffle` and `spark_shuffle_3.2.0`, one of which is "legacy" and one of which is for new applications. This is possible because YARN versions since 2.9.0 support the ability to run shuffle services within an isolated classloader (see YARN-4577), meaning multiple Spark versions can coexist.

Besides this, the separation of shuffle service configs into `spark-shuffle-site.xml` can be useful for administrators who want to change and/or deploy Spark shuffle service configurations independently of the configurations for the NodeManager (e.g., perhaps they are owned by two different teams).

### Does this PR introduce _any_ user-facing change?

Yes. There are two new configurations related to the external shuffle service, and a new mechanism which can optionally be used to configure the shuffle service. `docs/running-on-yarn.md` has been updated to provide user instructions; please see this guide for more details.

### How was this patch tested?

In addition to the new unit tests added, I have deployed this to a live YARN cluster and successfully deployed two Spark shuffle services simultaneously, one running a modified version of Spark 2.3.0 (which supports some of the newer shuffle protocols) and one running Spark 3.1.1. Spark applications of both versions are able to communicate with their respective shuffle services without issue.

Closes#31936 from xkrogen/xkrogen-SPARK-34828-shufflecompat-config-from-classpath.

Authored-by: Erik Krogen <xkrogen@apache.org>

Signed-off-by: Thomas Graves <tgraves@apache.org>

### What changes were proposed in this pull request?

Some of the `spark-submit` commands for running benchmarks in the user's guide are wrong, so benchmarks cannot be run successfully with them.

The major change in this PR is to correct these commands. For example, to run a benchmark that inherits from `SqlBasedBenchmark`, we must specify `--jars <spark core test jar>,<spark catalyst test jar>`, because a `SqlBasedBenchmark`-based benchmark extends `BenchmarkBase` (defined in the Spark core test jar) and `SQLHelper` (defined in the Spark catalyst test jar).

Another change in this PR is to remove the `scalatest Assertions` dependency from the benchmarks, because the `scalatest-*.jar` files are not in the distribution package, which makes the dependency troublesome to use.

### Why are the changes needed?

To make sure benchmarks can be run using the `spark-submit` commands described in the guide.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Use the corrected `spark-submit` commands to run benchmarks successfully.

Closes#31995 from LuciferYang/fix-benchmark-guide.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

In ProcfsMetricsGetter.scala, propagate the IOException from addProcfsMetricsFromOneProcess to computeAllMetrics when a child pid's proc stat file is unavailable. As a result, the for-loop in computeAllMetrics() can terminate early and return all-zero procfs metrics.

### Why are the changes needed?

When a child pid's stat file is missing but the stat files of subsequent child pids exist, ProcfsMetricsGetter.computeAllMetrics() returns partial metrics (the sum over a subset of child pids), which can be misleading and is undesired according to the existing code comments in https://github.com/apache/spark/blob/master/core/src/main/scala/org/apache/spark/executor/ProcfsMetricsGetter.scala#L214.

A side effect of this bug is verbose warning logs when many pids' stat files are missing. Terminating early makes the warning logs more concise.

The unit test can also explain the bug well.
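The intended behavior can be sketched in a few lines. This is a simplified illustration, not the Scala code in `ProcfsMetricsGetter`: the metric names and the `read_stat` callback are hypothetical, and Python's `OSError` stands in for the `IOException`.

```python
METRIC_NAMES = ("vmem", "rss")  # hypothetical metric names


def compute_all_metrics(pids, read_stat):
    """Sum per-process metrics, or return all zeros if any stat read fails."""
    totals = dict.fromkeys(METRIC_NAMES, 0)
    for pid in pids:
        try:
            stats = read_stat(pid)
        except OSError:
            # Stop at the first missing stat file instead of reporting
            # a misleading partial sum over the remaining pids.
            return dict.fromkeys(METRIC_NAMES, 0)
        for name in METRIC_NAMES:
            totals[name] += stats[name]
    return totals


def fake_read_stat(pid):
    """Test double: pid 2 has no stat file."""
    if pid == 2:
        raise FileNotFoundError(f"/proc/{pid}/stat")
    return {"vmem": 10, "rss": 5}


print(compute_all_metrics([1, 3], fake_read_stat))
print(compute_all_metrics([1, 2, 3], fake_read_stat))
```

Because the loop returns on the first failure, only one read error is encountered per call, which is also what keeps the warning output short.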

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

A unit test is added.

Closes#31945 from baohe-zhang/SPARK-34845.

Authored-by: Baohe Zhang <baohe.zhang@verizonmedia.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

When we fetch a stage's detailed info together with its task information, all tasks are returned. The content is huge, and often we only want to see the failed or running tasks along with the whole-stage info to judge whether a task has a problem. This PR lets users call

```

/application/[appid]/stages/[stage-id]?details=true&taskStatus=xxx

/application/[appid]/stages/[stage-id]/[stage-attempted-id]?details=true&taskStatus=xxx

```

to filter task details by task status

### Why are the changes needed?

A more flexible RESTful API.

### Does this PR introduce _any_ user-facing change?

User can use

```

/application/[appid]/stages/[stage-id]?details=true&taskStatus=xxx

/application/[appid]/stages/[stage-id]/[stage-attempted-id]?details=true&taskStatus=xxx

```

to filter task details by task status

### How was this patch tested?

Added

Closes#31165 from AngersZhuuuu/SPARK-34092.

Lead-authored-by: Angerszhuuuu <angers.zhu@gmail.com>

Co-authored-by: angerszhu <angers.zhu@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Fix issue of https://github.com/apache/spark/pull/31948#issuecomment-808647121

### Why are the changes needed?

Fix the failing unit test.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Existing unit tests.

Closes#31977 from AngersZhuuuu/SPARK-34848-followup.

Authored-by: Angerszhuuuu <angers.zhu@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

The task duration distribution is also very important for judging whether a stage's tasks are skewed.

### Why are the changes needed?

To add important information to TaskMetricsDistribution.

### Does this PR introduce _any_ user-facing change?

Users can see the duration distribution in TaskMetricsDistribution.

### How was this patch tested?

Existing unit tests.

Closes#31948 from AngersZhuuuu/SPARK-34848.

Authored-by: Angerszhuuuu <angers.zhu@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

For a specific stage, it is useful to show the task metrics in percentile distribution. This information can help users know whether or not there is a skew/bottleneck among tasks in a given stage. We list an example in taskMetricsDistributions.json

Similarly, it is useful to show the executor metrics in percentile distribution for a specific stage. This information can show whether or not there is a skewed load on some executors. We list an example in executorMetricsDistributions.json

We define the `withSummaries` and `quantiles` query parameters in the REST API for a specific stage as:

applications/<application_id>/<application_attempt>/stages/<stage_id>/<stage_attempt>?withSummaries=[true|false]&quantiles=0.05,0.25,0.5,0.75,0.95

1. withSummaries: default is false. Defines whether to show the current stage's taskMetricsDistribution and executorMetricsDistribution.

2. quantiles: default is `0.0,0.25,0.5,0.75,1.0`. Only takes effect when `withSummaries=true`. Defines the quantiles used when calculating the metrics distributions.

When withSummaries=true, both task metrics in percentile distribution and executor metrics in percentile distribution are included in the REST API output. The default value of withSummaries is false, i.e. no metrics percentile distribution will be included in the REST API output.
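To make the idea of a percentile distribution concrete, here is a minimal sketch that parses a `quantiles` string and picks the matching values from a sorted list of per-task metrics. The function names are hypothetical, and the simple index-based selection is an assumption for illustration; it is not claimed to be the exact method Spark uses.

```python
DEFAULT_QUANTILES = "0.0,0.25,0.5,0.75,1.0"


def parse_quantiles(text=DEFAULT_QUANTILES):
    """Turn a comma separated quantiles string into a list of floats."""
    return [float(part) for part in text.split(",")]


def metric_distribution(values, quantiles):
    """Pick one observed value per quantile from the sorted metric values."""
    if not values:
        return []
    ordered = sorted(values)
    last = len(ordered) - 1
    # Clamp the index so that quantile 1.0 maps to the largest value.
    return [ordered[min(int(q * len(ordered)), last)] for q in quantiles]


# Made-up task durations in milliseconds; one task is clearly skewed.
durations = [30, 10, 1000, 20, 40]
print(metric_distribution(durations, parse_quantiles()))
print(metric_distribution(durations, parse_quantiles("0.05,0.95")))
```

A large gap between the upper quantiles and the median, as in this made-up data, is the kind of skew signal the distribution is meant to expose.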

### Why are the changes needed?

For a specific stage, it is useful to show the task metrics in percentile distribution. This information can help users know whether or not there is a skew/bottleneck among tasks in a given stage. We list an example in taskMetricsDistributions.json

### Does this PR introduce _any_ user-facing change?

Users can use the RESTful API below to get the task metrics distribution and executor metrics distribution for an individual stage:

```

applications/<application_id>/<application_attempt>/stages/<stage_id>/<stage_attempt>?withSummaries=[true|false]

```

### How was this patch tested?

Added UT

Closes#31611 from AngersZhuuuu/SPARK-34488.

Authored-by: Angerszhuuuu <angers.zhu@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This patch adds a config `spark.yarn.kerberos.renewal.excludeHadoopFileSystems` which lists the filesystems to be excluded from delegation token renewal at YARN.

### Why are the changes needed?

MapReduce jobs can instruct YARN to skip renewal of tokens obtained from certain hosts by specifying the hosts with configuration mapreduce.job.hdfs-servers.token-renewal.exclude=<host1>,<host2>,..,<hostN>.

Spark, however, seems to lack a similar option, so job submission fails if YARN fails to renew the delegation token for any of the remote HDFS clusters. Delegation token renewal can fail for many reasons, for example when the remote HDFS does not trust the Kerberos identity of YARN. We have a customer facing this issue.

### Does this PR introduce _any_ user-facing change?

No, if the config is not set. Yes, as users can use this config to instruct YARN not to renew delegation token from certain filesystems.

### How was this patch tested?

It is hard to write a unit test for this. We verified that it works with the customer using this fix in their production environment.

Closes#31761 from viirya/SPARK-34295.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Liang-Chi Hsieh <viirya@gmail.com>

### What changes were proposed in this pull request?

1) Modify `GenericAvroSerializer` to support serialization of any `GenericContainer`

2) Register `KryoSerializer`s for `GenericData.{Array, EnumSymbol, Fixed}` using the modified `GenericAvroSerializer`

### Why are the changes needed?

Without this change, Kryo throws NPEs when trying to serialize `GenericData.{Array, EnumSymbol, Fixed}`. More details in SPARK-34477 Jira

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Added unit tests for testing roundtrip serialization and deserialization of `GenericData.{Array, EnumSymbol, Fixed}` using `GenericAvroSerializer` directly and also indirectly through `KryoSerializer`

Closes#31597 from shardulm94/avro-array-serializer.

Authored-by: Shardul Mahadik <smahadik@linkedin.com>

Signed-off-by: Gengliang Wang <ltnwgl@gmail.com>

### What changes were proposed in this pull request?

Move the registration of `ExecutionListenerBus` (with both `ListenerBus` and `ContextCleaner`) into the class itself.

This also includes a minor change that moves `registerSparkListenerForCleanup` to a better place.

### Why are the changes needed?

Code improvement.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Pass existing tests.

Closes#31919 from Ngone51/SPARK-34087-followup.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This patch proposes to disable fetching shuffle blocks in batch when IO encryption is enabled. Adaptive Query Execution fetches contiguous shuffle blocks for the same map task in batch to reduce IO and improve performance. However, we found that batch fetching is incompatible with IO encryption.

### Why are the changes needed?

Before this patch, if we set `spark.io.encryption.enabled` to true and then ran queries whose partitions were coalesced by AQE, we could get the following error message:

```

14:05:52.638 WARN org.apache.spark.scheduler.TaskSetManager: Lost task 1.0 in stage 2.0 (TID 3) (11.240.37.88 executor driver): FetchFailed(BlockManagerId(driver, 11.240.37.88, 63574, None), shuffleId=0, mapIndex=0, mapId=0, reduceId=2, message=

org.apache.spark.shuffle.FetchFailedException: Stream is corrupted

at org.apache.spark.storage.ShuffleBlockFetcherIterator.throwFetchFailedException(ShuffleBlockFetcherIterator.scala:772)

at org.apache.spark.storage.BufferReleasingInputStream.read(ShuffleBlockFetcherIterator.scala:845)

at java.io.BufferedInputStream.fill(BufferedInputStream.java:246)

at java.io.BufferedInputStream.read(BufferedInputStream.java:265)

at java.io.DataInputStream.readInt(DataInputStream.java:387)

at org.apache.spark.sql.execution.UnsafeRowSerializerInstance$$anon$2$$anon$3.readSize(UnsafeRowSerializer.scala:113)

at org.apache.spark.sql.execution.UnsafeRowSerializerInstance$$anon$2$$anon$3.next(UnsafeRowSerializer.scala:129)

at org.apache.spark.sql.execution.UnsafeRowSerializerInstance$$anon$2$$anon$3.next(UnsafeRowSerializer.scala:110)

at scala.collection.Iterator$$anon$11.next(Iterator.scala:494)

at scala.collection.Iterator$$anon$10.next(Iterator.scala:459)

at org.apache.spark.util.CompletionIterator.next(CompletionIterator.scala:29)

at org.apache.spark.InterruptibleIterator.next(InterruptibleIterator.scala:40)

at scala.collection.Iterator$$anon$10.next(Iterator.scala:459)

at org.apache.spark.sql.execution.SparkPlan.$anonfun$getByteArrayRdd$1(SparkPlan.scala:345)

at org.apache.spark.rdd.RDD.$anonfun$mapPartitionsInternal$2(RDD.scala:898)

at org.apache.spark.rdd.RDD.$anonfun$mapPartitionsInternal$2$adapted(RDD.scala:898)

at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:52)

at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:373)

at org.apache.spark.rdd.RDD.iterator(RDD.scala:337)

at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:90)

at org.apache.spark.scheduler.Task.run(Task.scala:131)

at org.apache.spark.executor.Executor$TaskRunner.$anonfun$run$3(Executor.scala:498)

at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1437)

at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:501)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.io.IOException: Stream is corrupted

at net.jpountz.lz4.LZ4BlockInputStream.refill(LZ4BlockInputStream.java:200)

at net.jpountz.lz4.LZ4BlockInputStream.refill(LZ4BlockInputStream.java:226)

at net.jpountz.lz4.LZ4BlockInputStream.read(LZ4BlockInputStream.java:157)

at org.apache.spark.storage.BufferReleasingInputStream.read(ShuffleBlockFetcherIterator.scala:841)

... 25 more

)

```

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

New tests.

Closes#31898 from hezuojiao/fetch_shuffle_in_batch.

Authored-by: hezuojiao <hezuojiao@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Remove the unused variable `secMgr` in SparkSubmit.scala and DriverWrapper.scala.

In JIRA https://issues.apache.org/jira/browse/SPARK-33925, the last usage of SecurityManager in Utils.fetchFile was removed, so we no longer need the variable.

### Why are the changes needed?

For better code readability.

### Does this PR introduce _any_ user-facing change?

No, dev-only.

### How was this patch tested?

Manually compiled. The GitHub Actions and Jenkins builds should test it as well.

Closes#31928 from Peng-Lei/rm_secMgr.

Authored-by: PengLei <18066542445@189.cn>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This PR fixes `HybridRowQueue` to respect the configured memory mode.

Besides, this PR also refactored the constructor of `MemoryConsumer` to accept the memory mode explicitly.

### Why are the changes needed?

`HybridRowQueue` supports both onHeap and offHeap manipulation. But it inherited the wrong `MemoryConsumer` constructor, which hard-coded the memory mode to `onHeap`.

### Does this PR introduce _any_ user-facing change?

No. (Maybe yes in some cases: a job that could not complete before may now complete successfully after the fix, because `HybridRowQueue` is now able to spill under offHeap mode.)

### How was this patch tested?

Updated the existing test to make it test both offHeap and onHeap modes.

Closes#31152 from Ngone51/fix-MemoryConsumer-memorymode.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This reverts commit 86ea520320.

### Why are the changes needed?

The test added in the change was flaky.

Closes#31918 from bozhang2820/revert-spark-34757.

Authored-by: Bo Zhang <bo.zhang@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR fixes an issue where `addFile` and `addJar` encode the path again even when a string that is already in URI form is passed.

For example, the following operation throws an exception even though the file exists.

```

sc.addFile("file:/foo/test%20file.txt")

```

Another case is the `--files` and `--jars` options when we submit an application.

```

bin/spark-shell --files "/foo/test file.txt"

```

The path above is transformed to URI form [here](ecf4811764/core/src/main/scala/org/apache/spark/deploy/SparkSubmitArguments.scala (L400)) and passed to `addFile` so the same issue happens.
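The effect of encoding twice can be reproduced outside Spark. The sketch below uses Python's standard `urllib.parse` purely as an illustration of double percent-encoding; it does not reproduce Spark's own URI handling, and the helper name `to_uri_path` is hypothetical.

```python
from urllib.parse import quote, unquote


def to_uri_path(path):
    """Percent-encode a path once (a space becomes %20)."""
    return quote(path)


raw_path = "/foo/test file.txt"
encoded_once = to_uri_path(raw_path)
# Encoding an already-encoded path escapes the '%' itself.
encoded_twice = to_uri_path(encoded_once)

print(encoded_once)   # /foo/test%20file.txt
print(encoded_twice)  # /foo/test%2520file.txt
# Decoding the double-encoded form no longer yields the real file name.
print(unquote(encoded_twice) == raw_path)  # False
```

The double-encoded path points at a file literally named `test%20file.txt`, which does not exist, hence the exception.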

### Why are the changes needed?

This is a bug.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

New test.

Closes#31718 from sarutak/fix-uri-encode-double.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Kousuke Saruta <sarutak@oss.nttdata.com>

### What changes were proposed in this pull request?

This PR aims to enable `spark.hadoopRDD.ignoreEmptySplits` by default for Apache Spark 3.2.0.

### Why are the changes needed?

Although this is a safe improvement, this hasn't been enabled by default to avoid the explicit behavior change. This PR aims to switch the default explicitly in Apache Spark 3.2.0.

### Does this PR introduce _any_ user-facing change?

Yes, the behavior change is documented.

### How was this patch tested?

Pass the existing CIs.

Closes#31909 from dongjoon-hyun/SPARK-34809.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Just as we redact secrets and tokens, this PR aims to redact access keys.
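As a rough picture of key-based redaction, the sketch below masks the value of any property whose name matches a pattern. The pattern, the replacement text, and the function name are assumptions chosen for illustration; they are modeled on the categories named above (secrets, tokens, access keys) and are not quoted from Spark's configuration.

```python
import re

# Assumed pattern for illustration: property names that look sensitive.
SENSITIVE_KEY = re.compile(r"secret|password|token|access[.]key", re.IGNORECASE)
REDACTED = "*********(redacted)"


def redact(properties):
    """Return a copy of the properties with sensitive values masked."""
    return {
        name: REDACTED if SENSITIVE_KEY.search(name) else value
        for name, value in properties.items()
    }


# Made-up property values.
props = {
    "fs.s3a.access.key": "EXAMPLEKEY",
    "fs.s3a.secret.key": "examplesecret",
    "spark.app.name": "demo",
}
print(redact(props))
```

Matching on the property name rather than the value means unrelated settings such as `spark.app.name` pass through unchanged.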

### Why are the changes needed?

Access keys are also worth hiding.

### Does this PR introduce _any_ user-facing change?

This will hide this information from the Spark UI (`Spark Properties` and `Hadoop Properties`) and from logs.

### How was this patch tested?

Pass the newly updated UT.

Closes#31912 from dongjoon-hyun/SPARK-34811.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Avoid an unnecessary shuffle when possible.

### Why are the changes needed?

To avoid an unnecessary shuffle.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Added test suites and existing test suites.

Closes#31480 from zhengruifeng/repartitionAndSortWithinPartitions_opt_II.

Authored-by: Ruifeng Zheng <ruifengz@foxmail.com>

Signed-off-by: yi.wu <yi.wu@databricks.com>