### What changes were proposed in this pull request?

Remove the duplicate setters in ```BucketedRandomProjectionLSH```.

### Why are the changes needed?

Remove the duplicate ```setInputCol/setOutputCol``` in ```BucketedRandomProjectionLSH``` because these two setters are already defined in the superclass ```LSH```.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Manually checked.

Closes #27397 from huaxingao/spark-29093.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR makes `SparkSession.emptyDataFrame` use an empty local relation instead of an empty RDD. This allows the optimizer to recognize it as an empty relation, which creates the opportunity for more aggressive optimizations.

### Why are the changes needed?

It allows us to optimize empty dataframes better.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Added a test case to `DataFrameSuite`.

Closes #27400 from hvanhovell/SPARK-30671.

Authored-by: herman <herman@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR renames a variable `SINGLETON` to `INSTANCE`.

### Why are the changes needed?

This is a minor change for consistency with the other parts.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Pass the existing tests.

Closes #27409 from dongjoon-hyun/SPARK-30192.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

We propose to add two new interfaces, `SupportsAdmissionControl` and `ReadLimit`. A `ReadLimit` defines how much data should be read in the next micro-batch. `SupportsAdmissionControl` specifies that a source can rate limit its ingest into the system. The source can tell the system what the user specified as a read limit, and the system can enforce this limit within each micro-batch or impose its own limit, for example if the Trigger is `Trigger.Once()`.

We then use this interface in FileStreamSource, KafkaSource, and KafkaMicroBatchStream.
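The division of responsibility described above can be sketched in a few lines of Python. This is a toy model, not the Spark API: `ReadLimit`, `ToySource`, `plan_batch` and every method name here are hypothetical stand-ins chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ReadLimit:
    """Hypothetical stand-in: max_rows=None means 'read everything available'."""
    max_rows: Optional[int] = None


class ToySource:
    """Hypothetical source holding `available` rows and a user-configured limit."""

    def __init__(self, available: int, user_limit: ReadLimit):
        self.available = available
        self.user_limit = user_limit

    def default_read_limit(self) -> ReadLimit:
        # The source reports what the user asked for.
        return self.user_limit

    def latest_offset(self, start: int, limit: ReadLimit) -> int:
        # The engine passes in the limit it decided to enforce.
        if limit.max_rows is None:
            return self.available
        return min(self.available, start + limit.max_rows)


def plan_batch(source: ToySource, start: int, trigger_once: bool) -> int:
    # With a run-once trigger the engine overrides the user's limit
    # so that a single micro-batch covers all available data.
    limit = ReadLimit(None) if trigger_once else source.default_read_limit()
    return source.latest_offset(start, limit)
```

With 1000 rows available and a user limit of 100, a regular trigger plans up to offset 100, while the run-once case plans up to offset 1000.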

### Why are the changes needed?

Sources currently have no information about execution semantics, such as whether the stream is being executed in Trigger.Once() mode. This interface will pass this information into the sources as part of planning. With a trigger like Trigger.Once(), the semantics are to process all the data available to the data source in a single micro-batch. Without this interface, however, these semantics can be broken when data source options such as `maxOffsetsPerTrigger` (in the Kafka source) rate limit the amount of data read for that micro-batch.

### Does this PR introduce any user-facing change?

DataSource developers can extend this interface for their streaming sources to add admission control into their system and correctly support Trigger.Once().

### How was this patch tested?

Existing tests, as this API is mostly internal

Closes #27380 from brkyvz/rateLimit.

Lead-authored-by: Burak Yavuz <brkyvz@gmail.com>

Co-authored-by: Burak Yavuz <burak@databricks.com>

Signed-off-by: Burak Yavuz <brkyvz@gmail.com>

### What changes were proposed in this pull request?

Instead of having several overloads of the `getTable` method in `TableProvider`, it's better to have two explicit methods, `inferSchema` and `inferPartitioning`, along with a single `getTable` method that takes everything: schema, partitioning and properties.

This PR also adds a `supportsExternalMetadata` method to `TableProvider` to indicate whether the source supports external table metadata. If this flag is false:

1. `spark.read.schema...` is disallowed and fails.

2. When we support creating v2 tables in the session catalog, Spark only keeps table properties in the catalog.

### Why are the changes needed?

API improvement.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

existing tests

Closes #26868 from cloud-fan/provider2.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR fixes a couple of minor issues on SPARK-30481:

* SHS runs "compaction" regardless of the result of "merge application listing".

If "merge application listing" fails, the application log most likely has some issue, and "compaction" won't work properly either. We can just skip compaction when "merge application listing" fails.

* When "compaction" throws an exception, we don't handle it.

Swallowing the exception is expected, but for now we don't even log it. It should be logged properly.
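The two fixes amount to a small change in control flow, sketched below in Python. The function and its callables are hypothetical, and modelling a failed "merge application listing" as a raised exception is an assumption made for illustration only.

```python
import logging

logger = logging.getLogger("history-sketch")


def process_event_log(merge_listing, compact):
    """Run compaction only after a successful merge; log, then swallow, failures."""
    try:
        merge_listing()
    except Exception:
        # A failed merge suggests a problematic log, so compaction is skipped.
        logger.exception("merge application listing failed; skipping compaction")
        return "skipped"
    try:
        compact()
    except Exception:
        # The exception is still swallowed, but it is no longer silent.
        logger.exception("compaction failed")
        return "compaction-failed"
    return "compacted"
```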

### Why are the changes needed?

Described in the section above.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing UTs.

Closes #27408 from HeartSaVioR/SPARK-30481-FOLLOWUP-MINOR-FIXES.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Credit to uncleGen for discovering the problem and providing a simple reproducer as a UT. The new UT in this patch is borrowed from #26156, and I'm retaining a commit from #26156 (except the part unnecessary for this patch) to give proper credit.

This patch fixes the issue that the partition ID could be mis-assigned when the query contains UNION and a stream-stream join is placed on the right side. We assume the range of partition IDs to be `(0 ~ number of shuffle partitions - 1)` for stateful operators, but when a stream-stream join is used on the right side of UNION, the range of task partition IDs becomes `(number of partitions in left side, number of partitions in left side + number of shuffle partitions - 1)`, where `number of partitions in left side` can change in some cases (the new UT points out one of them).

The root cause of the bug is that stream-stream join picks the partition ID from TaskContext, which is not the same as the partition ID from the source when union is used. Fortunately, we can pick the right partition ID from the source in StateStoreAwareZipPartitionsRDD, and this patch leverages that partition ID.
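A toy model makes the shifted range concrete. It assumes only what the description states: tasks on the right side of a union are numbered after the left side's partitions. The function names are hypothetical.

```python
def right_side_ids(left_partitions, shuffle_partitions):
    """(task partition ID, source partition ID) pairs for the right side of a union."""
    return [(left_partitions + i, i) for i in range(shuffle_partitions)]


def state_store_ids(left_partitions, shuffle_partitions, use_source_id):
    """Partition IDs a stateful operator would use to locate its state."""
    pairs = right_side_ids(left_partitions, shuffle_partitions)
    return [source_id if use_source_id else task_id for task_id, source_id in pairs]
```

Keyed by the task partition ID, the state location moves whenever the left side changes size (`[2, 3, 4]` with two left partitions, `[5, 6, 7]` with five). Keyed by the source partition ID, it stays at `[0, 1, 2]`.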

### Why are the changes needed?

This patch fixes the broken assumption about the partition ID range of stateful operators, as well as the issue reported in SPARK-29438.

### Does this PR introduce any user-facing change?

Yes, if the user's query uses UNION and a stream-stream join is placed on the right side. Such users may encounter problems reading state from the checkpoint and may need to discard the checkpoint to continue.

### How was this patch tested?

Added UT which fails on current master branch, and passes with this patch.

Closes #26162 from HeartSaVioR/SPARK-29438.

Lead-authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Co-authored-by: uncleGen <hustyugm@gmail.com>

Signed-off-by: Tathagata Das <tathagata.das1565@gmail.com>

### What changes were proposed in this pull request?

- Add `minPartitions` support for Kafka Streaming V1 source.

- Add `minPartitions` support for Kafka batch V1 and V2 source.

- There is a lot of refactoring (moving code to KafkaOffsetReader) to reuse code.

### Why are the changes needed?

Right now, the "minPartitions" option only works in the Kafka streaming source v2. It would be great to support it in the batch and streaming v1 sources as well (v1 is the fallback mode when a user hits a regression in v2).

### Does this PR introduce any user-facing change?

Yep. The `minPartitions` option is now supported in Kafka batch and streaming queries for both data source V1 and V2.
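For readers unfamiliar with the option, the sketch below shows one plausible way to turn a few Kafka offset ranges into roughly `minPartitions` read tasks by splitting large ranges proportionally to their size. It is an illustration under that assumption, not the code in this PR; `split_offset_ranges` and its argument shapes are hypothetical.

```python
def split_offset_ranges(ranges, min_partitions=None):
    """Split {topic_partition: (from_offset, until_offset)} into read tasks.

    Returns a list of (topic_partition, from_offset, until_offset) tuples.
    """
    if min_partitions is None or len(ranges) >= min_partitions:
        # Already enough ranges: one task per topic partition.
        return [(tp, start, end) for tp, (start, end) in ranges.items()]
    total = sum(end - start for start, end in ranges.values())
    pieces = []
    for tp, (start, end) in ranges.items():
        size = end - start
        # Give each range a share of tasks proportional to its size.
        share = round(size / total * min_partitions) if total > 0 else 1
        parts = max(1, min(size, share))
        for i in range(parts):
            lo = start + size * i // parts
            hi = start + size * (i + 1) // parts
            pieces.append((tp, lo, hi))
    return pieces
```

Because of rounding, the number of pieces only approximates the requested minimum in general.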

### How was this patch tested?

New unit tests are added to test "minPartitions".

Closes #27388 from zsxwing/kafka-min-partitions.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: Shixiong Zhu <zsxwing@gmail.com>

### What changes were proposed in this pull request?

This patch makes the test cases in HiveShowCreateTableSuite create Hive tables instead of data source tables.

### Why are the changes needed?

Because Spark SQL now creates a data source table if no provider is specified in the SQL command, some test cases in HiveShowCreateTableSuite create data source tables instead of Hive tables.

This is confusing and does not serve the purpose of this test suite.

### Does this PR introduce any user-facing change?

No, only test case.

### How was this patch tested?

Unit test.

Closes #27393 from viirya/SPARK-30673.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Liang-Chi Hsieh <liangchi@uber.com>

### What changes were proposed in this pull request?

Make ALS/MLP extend ```HasBlockSize```

### Why are the changes needed?

Currently, MLP has its own ```blockSize``` param. We should make MLP extend ```HasBlockSize```, since ```HasBlockSize``` was recently added to ```sharedParams.scala```.

ALS doesn't have a ```blockSize``` param yet. We can make it extend ```HasBlockSize``` so that users can specify the ```blockSize```.

### Does this PR introduce any user-facing change?

Yes

```ALS.setBlockSize``` and ```ALS.getBlockSize```

```ALSModel.setBlockSize``` and ```ALSModel.getBlockSize```

### How was this patch tested?

Manually tested. Also added doctest.

Closes #27389 from huaxingao/spark-30662.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Update `ResolvedNamespace` to accept catalog as `CatalogPlugin` not `SupportsNamespaces`.

This is extracted from https://github.com/apache/spark/pull/27345

### Why are the changes needed?

Not all commands that need to resolve namespaces require a namespace catalog. For example, `SHOW TABLES` is implemented by `TableCatalog.listTables` and has nothing to do with `SupportsNamespaces`.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

existing tests

Closes #27403 from cloud-fan/ns.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Override `Command.stats` to return dummy statistics (Long.Max).

### Why are the changes needed?

Commands are eagerly executed. They will be converted to LocalRelation after the DataFrame is created. That said, the statistics of a command are useless, and we should avoid unnecessary statistics calculation for a command's children.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

new test

Closes #27344 from cloud-fan/command.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR is a follow-up of #25728. #25728 introduces additional arguments to determine sort order. Thus, this function does not sort only in ascending order. However, the description was not updated.

This PR updates the description to follow the latest feature.

### Why are the changes needed?

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing tests since this PR just updates description text.

Closes #27404 from kiszk/SPARK-29020-followup.

Authored-by: Kazuaki Ishizaki <ishizaki@jp.ibm.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

In this PR, I propose to replace the deprecated method `computeCost` of `BisectingKMeansModel` with `summary.trainingCost`.

### Why are the changes needed?

The changes eliminate deprecation warnings:

```
BisectingKMeansSuite.scala:108: method computeCost in class BisectingKMeansModel is deprecated (since 3.0.0): This method is deprecated and will be removed in future versions. Use ClusteringEvaluator instead. You can also get the cost on the training dataset in the summary.
[warn] assert(model.computeCost(dataset) < 0.1)
BisectingKMeansSuite.scala:135: method computeCost in class BisectingKMeansModel is deprecated (since 3.0.0): This method is deprecated and will be removed in future versions. Use ClusteringEvaluator instead. You can also get the cost on the training dataset in the summary.
[warn] assert(model.computeCost(dataset) == summary.trainingCost)
BisectingKMeansSuite.scala:323: method computeCost in class BisectingKMeansModel is deprecated (since 3.0.0): This method is deprecated and will be removed in future versions. Use ClusteringEvaluator instead. You can also get the cost on the training dataset in the summary.
[warn] model.computeCost(dataset)
```

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running `BisectingKMeansSuite` via:

```
./build/sbt "test:testOnly *BisectingKMeansSuite"
```

Closes #27401 from MaxGekk/kmeans-computeCost-warning.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

1, use blocks instead of vectors

2, use Level-2 BLAS for binary, use Level-3 BLAS for multinomial
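The shape of the change can be illustrated with a small pure-Python sketch: rows are stacked into fixed-size blocks, and each block is processed with one matrix-vector product, which is the operation a Level-2 BLAS `gemv` routine performs. The helper names are hypothetical, and plain lists reproduce neither the memory savings nor the speedups reported here; the sketch only shows the structure.

```python
def blockify(vectors, block_size):
    """Stack consecutive row vectors into blocks of at most `block_size` rows."""
    return [vectors[i:i + block_size] for i in range(0, len(vectors), block_size)]


def gemv(block, coefficients):
    """Matrix-vector product of one block with the coefficient vector."""
    return [sum(x * w for x, w in zip(row, coefficients)) for row in block]


def margins(vectors, coefficients, block_size):
    """Compute all margins block by block instead of row by row."""
    out = []
    for block in blockify(vectors, block_size):
        out.extend(gemv(block, coefficients))
    return out
```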

### Why are the changes needed?

1, less RAM to persist training data; (save ~40%)

2, faster than existing impl; (40% ~ 92%)

### Does this PR introduce any user-facing change?

add a new expert param `blockSize`

### How was this patch tested?

updated testsuites

Closes #27374 from zhengruifeng/blockify_lor.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This PR aims to use `python3` instead of `python` in `dev/lint-python`.

### Why are the changes needed?

Currently, `dev/lint-python` fails on Python 2, and Python 2 has been EOL since January 1st, 2020.

```
$ python -V
Python 2.7.17
$ dev/lint-python
starting python compilation test...
Python compilation failed with the following errors:
Compiling ./python/setup.py ...
File "./python/setup.py", line 27
file=sys.stderr)
^
SyntaxError: invalid syntax
```

### Does this PR introduce any user-facing change?

No. This is a dev environment.

### How was this patch tested?

Jenkins is running this with Python 3 already.

The following is a manual test.

```
$ python -V
Python 3.8.0
$ dev/lint-python
starting python compilation test...
python compilation succeeded.
```

Closes #27394 from dongjoon-hyun/SPARK-30674.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR removes any dependencies on pypandoc. It also makes related tweaks to the docs README to clarify the dependency on pandoc (not pypandoc).

### Why are the changes needed?

We are using pypandoc to convert the Spark README from Markdown to ReST for PyPI. PyPI now natively supports Markdown, so we don't need pypandoc anymore. The dependency on pypandoc also sometimes causes issues when installing Python packages that depend on PySpark, as described in #18981.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Manually:

```sh
python -m venv venv
source venv/bin/activate
pip install -U pip
cd python/
python setup.py sdist
pip install dist/pyspark-3.0.0.dev0.tar.gz
pyspark --version
```

I also built the PySpark and R API docs with `jekyll` and reviewed them locally.

It would be good if a maintainer could also test this by creating a PySpark distribution and uploading it to [Test PyPI](https://test.pypi.org) to confirm the README looks as it should.

Closes #27376 from nchammas/SPARK-30665-pypandoc.

Authored-by: Nicholas Chammas <nicholas.chammas@liveramp.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Add supported Hive features.

### Why are the changes needed?

Update the docs.

### Does this PR introduce any user-facing change?

Before change UI info:

After this pr:

### How was this patch tested?

For a PR that changes the Spark docs web UI, we need to show the UI before and after the PR.

We can build and serve the Spark docs locally by following `$SPARK_PROJECT/docs/README.md`.

You should install Python and Ruby in your environment, and also install the plugins as shown below:

```sh
$ sudo gem install jekyll jekyll-redirect-from rouge
# Following is needed only for generating API docs
$ sudo pip install sphinx pypandoc mkdocs
$ sudo Rscript -e 'install.packages(c("knitr", "devtools", "rmarkdown"), repos="https://cloud.r-project.org/")'
$ sudo Rscript -e 'devtools::install_version("roxygen2", version = "5.0.1", repos="https://cloud.r-project.org/")'
$ sudo Rscript -e 'devtools::install_version("testthat", version = "1.0.2", repos="https://cloud.r-project.org/")'
```

Then we call `jekyll serve --watch`. After the build, we see the message below:

```
~/Documents/project/AngersZhu/spark/sql
Moving back into docs dir.
Making directory api/sql
cp -r ../sql/site/. api/sql
Source: /Users/angerszhu/Documents/project/AngersZhu/spark/docs
Destination: /Users/angerszhu/Documents/project/AngersZhu/spark/docs/_site
Incremental build: disabled. Enable with --incremental
Generating...
done in 24.717 seconds.
Auto-regeneration: enabled for '/Users/angerszhu/Documents/project/AngersZhu/spark/docs'
Server address: http://127.0.0.1:4000
Server running... press ctrl-c to stop.
```

Visit http://127.0.0.1:4000 to see your latest changes in the docs website.

Closes #27106 from AngersZhuuuu/SPARK-30435.

Authored-by: angerszhu <angers.zhu@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR adds `numpy` to the list of things that need to be installed in order to build the API docs. It doesn't add a new dependency; it just documents an existing dependency.

### Why are the changes needed?

You cannot build the PySpark API docs without numpy installed. Otherwise you get this series of errors:

```
$ SKIP_SCALADOC=1 SKIP_RDOC=1 SKIP_SQLDOC=1 jekyll serve
Configuration file: .../spark/docs/_config.yml
Moving to python/docs directory and building sphinx.
sphinx-build -b html -d _build/doctrees . _build/html
Running Sphinx v2.3.1
loading pickled environment... done
building [mo]: targets for 0 po files that are out of date
building [html]: targets for 0 source files that are out of date
updating environment: 0 added, 2 changed, 0 removed
reading sources... [100%] pyspark.mllib
WARNING: autodoc: failed to import module 'ml' from module 'pyspark'; the following exception was raised:
No module named 'numpy'
WARNING: autodoc: failed to import module 'ml.param' from module 'pyspark'; the following exception was raised:
No module named 'numpy'
...
```

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Manually, by building the API docs with and without numpy.

Closes #27390 from nchammas/SPARK-30672-numpy-pyspark-docs.

Authored-by: Nicholas Chammas <nicholas.chammas@liveramp.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Fix following typos:

- tranformation -> transformation

- the boolean -> the Boolean

- signle -> single

### Why are the changes needed?

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Scala linter.

Closes #27382 from zero323/functions-typos.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

…

### What changes were proposed in this pull request?

If resource discovery goes bad, for example by not returning enough GPUs, it currently just throws an exception. This is hard for users to see because you have to go find the executor logs, and it's not reported back to the driver. On YARN, if you exit explicitly, the driver logs show the error thrown, which is much more useful. On YARN, an explicit exit with a non-zero code also counts against failed executor launch attempts, so the application will eventually exit. So if it's fundamentally a bad configuration or a bad discovery script, it won't just hang forever. I also tested on K8s and standalone, and the behaviors there don't change: the executor cleanly exits with an error message in the logs. The standalone UI makes it easy to see failed executors.

### Why are the changes needed?

Better user experience.

### Does this PR introduce any user-facing change?

No API changes.

### How was this patch tested?

Ran unit tests and manually tested on YARN, standalone, and K8s.

Closes #27385 from tgravescs/SPARK-30529.

Authored-by: Thomas Graves <tgraves@nvidia.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

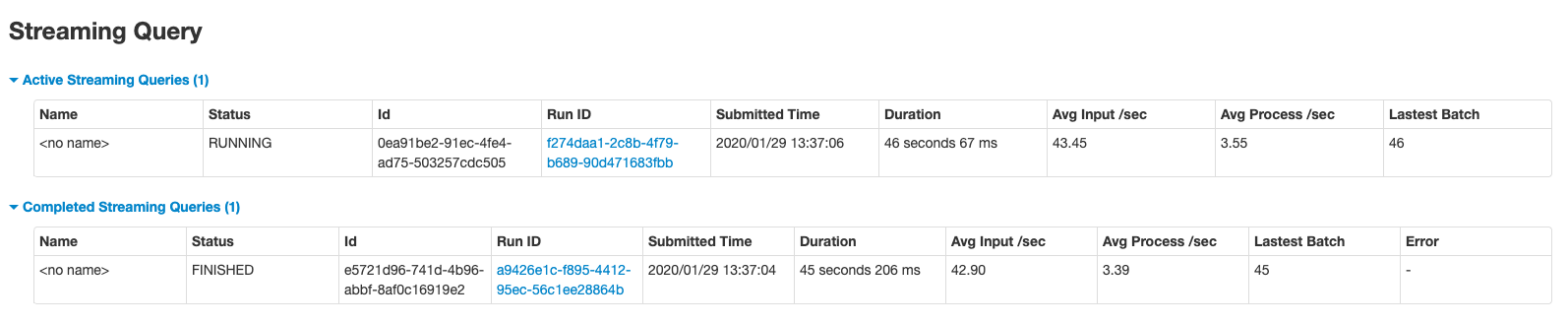

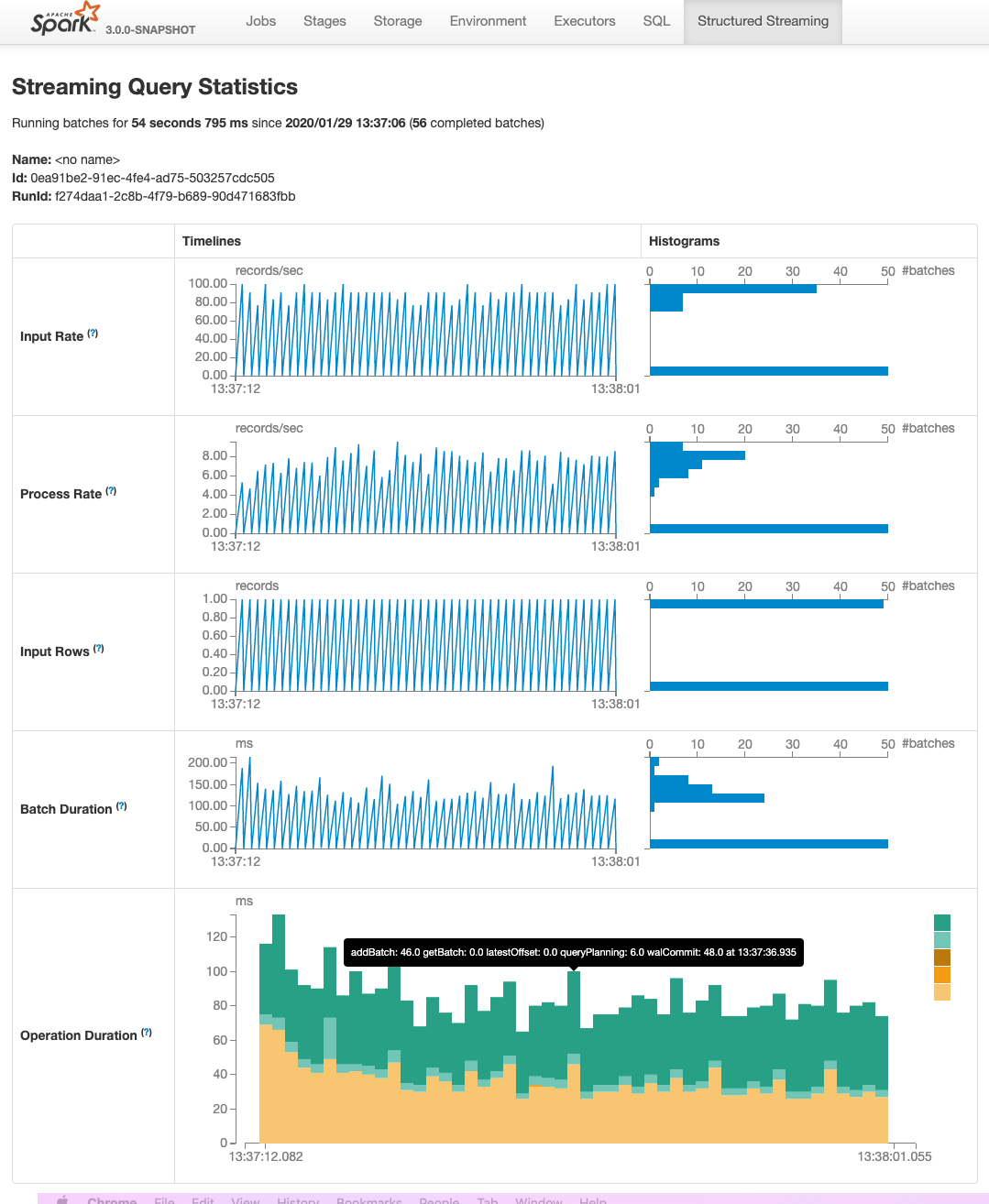

This PR adds two pages to Web UI for Structured Streaming:

- "/streamingquery": Streaming Query Page, providing some aggregate information for running/completed streaming queries.

- "/streamingquery/statistics": Streaming Query Statistics Page, providing detailed information for a streaming query, including `Input Rate`, `Process Rate`, `Input Rows`, `Batch Duration` and `Operation Duration`.

### Why are the changes needed?

It helps users better monitor Structured Streaming queries.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

- newly added and existing UTs

- manual test

Closes #26201 from uncleGen/SPARK-29543.

Lead-authored-by: uncleGen <hustyugm@gmail.com>

Co-authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Co-authored-by: Genmao Yu <hustyugm@gmail.com>

Signed-off-by: Shixiong Zhu <zsxwing@gmail.com>

### What changes were proposed in this pull request?

Adding a dedicated boss event loop group to the Netty pipeline in the External Shuffle Service to avoid the delay in channel registration.

```
EventLoopGroup bossGroup = NettyUtils.createEventLoop(ioMode, 1,
  conf.getModuleName() + "-boss");
EventLoopGroup workerGroup = NettyUtils.createEventLoop(ioMode, conf.serverThreads(),
  conf.getModuleName() + "-server");

bootstrap = new ServerBootstrap()
  .group(bossGroup, workerGroup)
  .channel(NettyUtils.getServerChannelClass(ioMode))
  .option(ChannelOption.ALLOCATOR, allocator)
```

### Why are the changes needed?

We have been seeing a large number of SASL authentication (RPC requests) timing out with the external shuffle service.

```
java.lang.RuntimeException: java.util.concurrent.TimeoutException: Timeout waiting for task.
    at org.spark-project.guava.base.Throwables.propagate(Throwables.java:160)
    at org.apache.spark.network.client.TransportClient.sendRpcSync(TransportClient.java:278)
    at org.apache.spark.network.sasl.SaslClientBootstrap.doBootstrap(SaslClientBootstrap.java:80)
    at org.apache.spark.network.client.TransportClientFactory.createClient(TransportClientFactory.java:228)
    at org.apache.spark.network.client.TransportClientFactory.createUnmanagedClient(TransportClientFactory.java:181)
    at org.apache.spark.network.shuffle.ExternalShuffleClient.registerWithShuffleServer(ExternalShuffleClient.java:141)
    at org.apache.spark.storage.BlockManager$$anonfun$registerWithExternalShuffleServer$1.apply$mcVI$sp(BlockManager.scala:218)
```

The investigation that we have done is described here:

https://github.com/netty/netty/issues/9890

After adding `LoggingHandler` to the Netty pipeline, we saw that the registration of the channel was being delayed because the worker threads were busy with the existing channels.
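The effect of a dedicated boss group can be mimicked with two thread pools in Python. This is only an analogy to the Netty setup, with hypothetical names: a registration queued on the shared worker pool waits behind a busy worker, while the same registration on a dedicated single-thread pool completes immediately.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


def register_channel(name):
    """Stand-in for the work of registering a newly accepted channel."""
    return f"registered {name}"


def demo():
    release = threading.Event()
    workers = ThreadPoolExecutor(max_workers=1, thread_name_prefix="server")
    boss = ThreadPoolExecutor(max_workers=1, thread_name_prefix="boss")
    try:
        # The only worker thread is busy with an existing channel.
        workers.submit(release.wait)
        # A registration queued behind the busy worker cannot start yet.
        shared = workers.submit(register_channel, "conn-shared")
        # A registration on the dedicated boss pool runs right away.
        dedicated = boss.submit(register_channel, "conn-dedicated")
        dedicated_result = dedicated.result(timeout=5)
        shared_done_while_busy = shared.done()
        # Free the worker so the queued registration can finally run.
        release.set()
        return dedicated_result, shared_done_while_busy, shared.result(timeout=5)
    finally:
        release.set()
        workers.shutdown()
        boss.shutdown()
```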

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

We have tested the patch on our clusters and with a stress testing tool. After this change, we didn't see any SASL requests timing out. Existing unit tests pass.

Closes #27240 from otterc/SPARK-30512.

Authored-by: Chandni Singh <chsingh@linkedin.com>

Signed-off-by: Thomas Graves <tgraves@apache.org>

### What changes were proposed in this pull request?

There are scenarios where the Spark History Server is located behind a VPC. When the API call to get the executor summary (`allexecutors`) is made, the response can be delayed. In the meantime, `stage-page-template.html` is loaded, and the executor summary response is not added to it.

As a result, Aggregated Metrics by Executor on the stage page shows up blank.

This scenario is easy to hit when a proxy server is responsible for relaying requests and responses to and from the Spark History Server.

It can be reproduced with Knox or in-house proxy servers that sit in front of the Spark History Server.

An alternative way to test this case: open the Spark UI with the browser's developer tools and add a breakpoint in stagepage.js. This delays the response, and Aggregated Metrics by Executor on the stage page then shows up blank.

To fix this, stagepage.js needs to change: the API call to get the HTML page (`stage-page-template.html`) must be made first, followed by the other API calls that fetch the data to be attached to the stage page (such as executor summary, stageExecutorSummaryInfoKeys, etc.).
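The ordering described above can be sketched with `asyncio` in Python. The real change is in JavaScript; the function names, payloads and delays below are hypothetical and only illustrate the "template first, data second" sequence.

```python
import asyncio


async def fetch(delay, payload):
    """Stand-in for an HTTP request that takes `delay` seconds."""
    await asyncio.sleep(delay)
    return payload


async def render_stage_page():
    # 1. Load the HTML template first and wait until it is available.
    template = await fetch(0.02, "<div id='summary'>{summary}</div>")
    # 2. Only then request the data that is attached to the template.
    summary = await fetch(0.01, "3 executors")
    return template.format(summary=summary)
```

Because the data request is issued only after the template has arrived, the response always has a place to be attached, however slow either call is.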

### Why are the changes needed?

Since the stage page is useful for debugging, this helps in understanding how many tasks ran on a particular executor, along with the shuffle read and write information for that executor.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Manually tested. Testing this in a reproducible way requires a running browser or HTML rendering engine that executes the JavaScript. Open the Spark UI with the browser's developer tools and add a breakpoint in stagepage.js; this delays the response, and Aggregated Metrics by Executor on the stage page then shows up blank.

Before fix

<img width="1529" alt="Screenshot 2020-01-20 at 3 21 55 PM" src="https://user-images.githubusercontent.com/34540906/72716739-bcfd3500-3b98-11ea-8dbe-90a135822f92.png">

After fix

<img width="1540" alt="Screenshot 2020-01-20 at 3 23 12 PM" src="https://user-images.githubusercontent.com/34540906/72716782-d30af580-3b98-11ea-8764-2bde77764604.png">

Closes #27292 from SaurabhChawla100/SPARK-30582.

Authored-by: Saurabh Chawla <saurabhc@qubole.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

- Set up links between related sections.

- Add "Related sections" for each section.

- Change the left-hand side menu to reflect the current status of the doc.

- Other minor cleanups.

### Why are the changes needed?

Currently Spark lacks documentation on the supported SQL constructs, causing confusion among users who sometimes have to look at the code to understand the usage. This PR is aimed at addressing this issue.

### Does this PR introduce any user-facing change?

Yes.

### How was this patch tested?

Tested using jekyll build --serve

Closes #27371 from dilipbiswal/select_finalization.

Authored-by: Dilip Biswal <dkbiswal@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This PR renames `spark.sql.legacy.addDirectory.recursive` to `spark.sql.legacy.addDirectory.recursive.enabled`.

### Why are the changes needed?

For consistent option names.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

N/A

Closes #27372 from maropu/SPARK-30234-FOLLOWUP.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

- Update `testthat` to >= 2.0.0

- Replace `testthat:::run_tests` with `testthat:::test_package_dir`

- Add trivial assertions for tests, without any expectations, to avoid skipping.

- Update related docs.

### Why are the changes needed?

`testthat` version has been frozen by [SPARK-22817](https://issues.apache.org/jira/browse/SPARK-22817) / https://github.com/apache/spark/pull/20003, but 1.0.2 is pretty old, and we shouldn't keep things in this state forever.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

- Existing CI pipeline:

    - Windows build on AppVeyor, R 3.6.2, testthat 2.3.1

    - Linux build on Jenkins, R 3.1.x, testthat 1.0.2

- Additional builds with testthat 2.3.1 using [sparkr-build-sandbox](https://github.com/zero323/sparkr-build-sandbox) on c7ed64af9e697b3619779857dd820832176b3be3

R 3.4.4 (image digest ec9032f8cf98)

```
docker pull zero323/sparkr-build-sandbox:3.4.4
docker run zero323/sparkr-build-sandbox:3.4.4 zero323 --branch SPARK-23435 --commit c7ed64af9e697b3619779857dd820832176b3be3 --public-key https://keybase.io/zero323/pgp_keys.asc
```

3.5.3 (image digest 0b1759ee4d1d)

```
docker pull zero323/sparkr-build-sandbox:3.5.3
docker run zero323/sparkr-build-sandbox:3.5.3 zero323 --branch SPARK-23435 --commit c7ed64af9e697b3619779857dd820832176b3be3 --public-key https://keybase.io/zero323/pgp_keys.asc
```

and 3.6.2 (image digest 6594c8ceb72f)

```
docker pull zero323/sparkr-build-sandbox:3.6.2
docker run zero323/sparkr-build-sandbox:3.6.2 zero323 --branch SPARK-23435 --commit c7ed64af9e697b3619779857dd820832176b3be3 --public-key https://keybase.io/zero323/pgp_keys.asc
```

Corresponding [asciicasts](https://asciinema.org/) are available as [10.5281/zenodo.3629431](https://doi.org/10.5281/zenodo.3629431) (a bit too large to burden asciinema.org, but they can be played locally via `asciinema play`).

----------------------------

Continued from #27328

Closes #27359 from zero323/SPARK-23435.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This patch addresses the remaining functionality of event log compaction: integrating compaction into FsHistoryProvider.

This patch is the next task after SPARK-30479 (#27164). Please refer to the description of PR #27085 for the overall rationale behind this patch.

### Why are the changes needed?

One of the major goals of SPARK-28594 is to prevent event logs from becoming too large, and SPARK-29779 achieves that goal. We had another approach previously, but the old approach required models in both KVStore and live entities to guarantee compatibility, which they were not designed to do.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Added UT.

Closes #27208 from HeartSaVioR/SPARK-30481.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@apache.org>

### What changes were proposed in this pull request?

This is a follow-up to https://github.com/apache/spark/pull/24098 that refactors the code to build the string once, according to the [review comment](https://github.com/apache/spark/pull/24098#discussion_r369845234).

### Why are the changes needed?

Previously, we chose the approach with the minimal change.

In this PR, we choose a more robust approach than the previous post-step string processing.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

The test case is extended with more cases.

Closes #27353 from dongjoon-hyun/SPARK-27166-2.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

1, stack input vectors to blocks (like ALS/MLP);

2, add new param `blockSize`;

3, add a new class `InstanceBlock`

4, standardize the input outside of optimization procedure;

### Why are the changes needed?

1, reduce RAM to persist training dataset; (save ~40% in test)

2, use Level-2 BLAS routines; (12% ~ 28% faster, without native BLAS)

### Does this PR introduce any user-facing change?

a new param `blockSize`

### How was this patch tested?

existing and updated testsuites

Closes #27360 from zhengruifeng/blockify_svc.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

In this PR, I propose to transform the `Like` expression into a `TernaryExpression` and add a third parameter, `escape`. The `like` function will then have feature parity with the `LIKE ... ESCAPE` syntax supported by 187f3c1773.

### Why are the changes needed?

The `like` function can be called with 2 or 3 parameters, and is functionally equivalent to the `LIKE` and `LIKE ... ESCAPE` SQL expressions.

### Does this PR introduce any user-facing change?

Yes. Before this change, `like` with three arguments fails with an exception:

```sql

spark-sql> SELECT like('_Apache Spark_', '__%Spark__', '_');

Error in query: Invalid number of arguments for function like. Expected: 2; Found: 3; line 1 pos 7

```

After:

```sql

spark-sql> SELECT like('_Apache Spark_', '__%Spark__', '_');

true

```
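To see why the three-argument call above returns `true`, here is a minimal Python sketch of `LIKE ... ESCAPE` matching. It is an illustration under assumptions, not Spark's implementation: `like_to_regex` and `like` are hypothetical helpers, and the rule that the escape character may only precede `_`, `%`, or itself is assumed here.

```python
import re


def like_to_regex(pattern: str, escape: str = "\\") -> str:
    """Translate a SQL LIKE pattern into a regular expression."""
    if len(escape) != 1:
        raise ValueError("escape must be a single character")
    parts = []
    chars = iter(pattern)
    for ch in chars:
        if ch == escape:
            # The escape check comes first, so '_' can itself be the escape character.
            nxt = next(chars, None)
            # Assumption: only a wildcard or the escape character may be escaped.
            if nxt is None or nxt not in ("_", "%", escape):
                raise ValueError(f"invalid escape sequence in pattern {pattern!r}")
            parts.append(re.escape(nxt))
        elif ch == "_":
            parts.append(".")   # exactly one character
        elif ch == "%":
            parts.append(".*")  # any run of characters, possibly empty
        else:
            parts.append(re.escape(ch))
    return "".join(parts)


def like(value: str, pattern: str, escape: str = "\\") -> bool:
    """Return True if the whole value matches the LIKE pattern."""
    regex = like_to_regex(pattern, escape)
    return re.fullmatch(regex, value, flags=re.DOTALL) is not None
```

With `_` as the escape character, the pattern `__%Spark__` reads as a literal `_`, then any characters, then `Spark`, then a literal `_`, which is why `_Apache Spark_` matches.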

### How was this patch tested?

- Add new example for the `like` function which is checked by `SQLQuerySuite`

- Run `RegexpExpressionsSuite` and `ExpressionParserSuite`.

Closes#27355 from MaxGekk/like-3-args.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Allow for using longs as seed for xxHash.

### Why are the changes needed?

Codegen fails when passing a seed to xxHash that is > 2^31.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing tests pass. Should more be added?

Closes#27354 from patrickcording/fix_xxhash_seed_bug.

Authored-by: Patrick Cording <patrick.cording@datarobot.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This patch converts CR/LF into LF in 3 source files, since most files use only LF. This patch also adds rules to enforce LF as the EOL for all java, scala, xml, py, and R files.

### Why are the changes needed?

The majority of source code files use LF, and only three files use CR/LF. While an IDE usually lets us ignore the difference, a file can still produce an unnecessary diff if it is modified with an editor that doesn't handle line endings automatically.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

```

grep -IUrl --color "^M" . | grep "\.java\|\.scala\|\.xml\|\.py\|\.R" | grep -v "/target/" | grep -v "/build/" | grep -v "/dist/" | grep -v "dependency-reduced-pom.xml" | grep -v ".pyc"

```

(Please note you'll need to type CTRL+V -> CTRL+M in a bash shell to get `^M`, because it represents the CR character, not a combination of `^` and `M`.)

Before the patch, the result is:

```

./sql/core/src/main/java/org/apache/spark/sql/execution/columnar/ColumnDictionary.java

./sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/optimizer/complexTypesSuite.scala

./sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/optimizer/ComplexTypes.scala

```

After the patch, the command returns nothing.

Git also shows a warning if the EOL of any source file of the given types is changed to CR/LF, like below:

```

warning: CRLF will be replaced by LF in sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/Analyzer.scala.

The file will have its original line endings in your working directory.

```
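For readers who prefer not to type a literal `^M`, the grep check above can be approximated in Python. This is a sketch under assumptions rather than part of the patch: `find_crlf_files` is a hypothetical helper, and it looks for the `\r\n` byte pair specifically, whereas the grep command matches any CR.

```python
import os
from typing import List

SOURCE_SUFFIXES = (".java", ".scala", ".xml", ".py", ".R")
SKIPPED_DIRS = {"target", "build", "dist"}
SKIPPED_FILES = {"dependency-reduced-pom.xml"}


def find_crlf_files(root: str) -> List[str]:
    """Return source files under root that contain at least one CR/LF pair."""
    hits = []
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune build output directories in place so os.walk skips them.
        dirnames[:] = [d for d in dirnames if d not in SKIPPED_DIRS]
        for name in filenames:
            if name in SKIPPED_FILES or not name.endswith(SOURCE_SUFFIXES):
                continue
            path = os.path.join(dirpath, name)
            with open(path, "rb") as handle:
                if b"\r\n" in handle.read():
                    hits.append(path)
    return sorted(hits)
```

Calling `find_crlf_files(".")` from the repository root should list the same kind of files as the grep pipeline, and an empty list corresponds to the "after" state.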

Closes#27365 from HeartSaVioR/MINOR-remove-CRLF-in-source-codes.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Document CLUSTER BY clause of SELECT statement in SQL Reference Guide.
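As a rough illustration of the semantics being documented: CLUSTER BY repartitions rows by the given expressions and then sorts the rows within each partition, which is equivalent to DISTRIBUTE BY followed by SORT BY. The sketch below is plain Python, not Spark code. `distribute_by`, `cluster_by`, and the sample rows are illustrative assumptions, and Python's built-in `hash` stands in for Spark's own hash partitioning.

```python
from collections import defaultdict
from typing import Callable, Dict, Hashable, List, Tuple

Row = Tuple[str, int]  # (name, age), hypothetical sample schema


def distribute_by(rows: List[Row], key: Callable[[Row], Hashable],
                  num_partitions: int) -> Dict[int, List[Row]]:
    """Send rows with the same key to the same partition; no ordering is implied."""
    partitions: Dict[int, List[Row]] = defaultdict(list)
    for row in rows:
        partitions[hash(key(row)) % num_partitions].append(row)
    return dict(partitions)


def cluster_by(rows: List[Row], key: Callable[[Row], Hashable],
               num_partitions: int) -> Dict[int, List[Row]]:
    """DISTRIBUTE BY the key, then SORT BY the same key within each partition."""
    partitions = distribute_by(rows, key, num_partitions)
    return {pid: sorted(part, key=key) for pid, part in partitions.items()}


people = [("Zen", 18), ("Anil", 18), ("Mike", 25), ("John", 16), ("Jack", 16)]
clustered = cluster_by(people, key=lambda row: row[1], num_partitions=2)
```

Rows with equal ages always land in the same partition and appear next to each other there, but no ordering is guaranteed across partitions.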

### Why are the changes needed?

Currently Spark lacks documentation on the supported SQL constructs causing confusion among users who sometimes have to look at the code to understand the usage. This is aimed at addressing this issue.

### Does this PR introduce any user-facing change?

Yes.

**Before:**

There was no documentation for this.

**After:**

<img width="972" alt="Screen Shot 2020-01-20 at 2 59 05 PM" src="https://user-images.githubusercontent.com/14225158/72762704-7528de80-3b95-11ea-9d34-8fa0ab63d4c0.png">

<img width="972" alt="Screen Shot 2020-01-20 at 2 59 19 PM" src="https://user-images.githubusercontent.com/14225158/72762710-78bc6580-3b95-11ea-8279-2848d3b9e619.png">

### How was this patch tested?

Tested using jekyll build --serve

Closes#27297 from dilipbiswal/sql-ref-select-clusterby.

Authored-by: Dilip Biswal <dkbiswal@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Document DISTRIBUTE BY clause of SELECT statement in SQL Reference Guide.

### Why are the changes needed?

Currently Spark lacks documentation on the supported SQL constructs causing confusion among users who sometimes have to look at the code to understand the usage. This is aimed at addressing this issue.

### Does this PR introduce any user-facing change?

Yes.

**Before:**

There was no documentation for this.

**After:**

<img width="972" alt="Screen Shot 2020-01-20 at 3 08 24 PM" src="https://user-images.githubusercontent.com/14225158/72763045-c08fbc80-3b96-11ea-8fb6-023cba5eb96a.png">

<img width="972" alt="Screen Shot 2020-01-20 at 3 08 34 PM" src="https://user-images.githubusercontent.com/14225158/72763047-c38aad00-3b96-11ea-80d8-cd3d2d4257c8.png">

### How was this patch tested?

Tested using jekyll build --serve

Closes#27298 from dilipbiswal/sql-ref-select-distributeby.

Authored-by: Dilip Biswal <dkbiswal@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Prevent unnecessary copies of data during conversion from Arrow to Pandas.

### Why are the changes needed?

During conversion of pyarrow data to Pandas, columns are checked for timestamp types and then modified to correct for the local timezone. If the data contains no timestamp types, unnecessary copies of the data can still be made. This is most prevalent when checking the columns of a pandas DataFrame, where each series is assigned back to the DataFrame regardless of whether it had timestamps. See https://www.mail-archive.com/dev@arrow.apache.org/msg17008.html and ARROW-7596 for discussion.
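The principle is to touch only the columns that actually need a timezone correction. The following is a plain-Python analogy rather than the PySpark code: `localize_timestamps` is a hypothetical helper, a dict of lists stands in for a DataFrame, and a fixed offset stands in for the real timezone conversion.

```python
from datetime import datetime, timedelta
from typing import Dict


def localize_timestamps(columns: Dict[str, list], offset: timedelta) -> Dict[str, list]:
    """Shift timestamp columns by offset; pass every other column through as-is."""
    result = {}
    for name, values in columns.items():
        if values and all(isinstance(v, datetime) for v in values):
            # Only timestamp columns get a new, corrected list.
            result[name] = [v + offset for v in values]
        else:
            # Non-timestamp columns keep the very same object, so nothing is copied.
            result[name] = values
    return result


# Hypothetical sample data with one integer column and one timestamp column.
columns = {"id": [1, 2, 3], "ts": [datetime(2020, 1, 1, 0, 0)]}
converted = localize_timestamps(columns, timedelta(hours=8))
```

Here `converted["id"]` is the original list object, while `converted["ts"]` is a new list, which mirrors the goal of avoiding reassignment for columns without timestamps.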

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing tests

Closes#27358 from BryanCutler/pyspark-pandas-timestamp-copy-fix-SPARK-30640.

Authored-by: Bryan Cutler <cutlerb@gmail.com>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

### What changes were proposed in this pull request?

Add identifier and catalog information in DataSourceV2Relation so it would be possible to do richer checks in checkAnalysis step.

### Why are the changes needed?

In data source v2, table implementations are all customized, so we may not be able to get the resolved identifier from the tables themselves. Therefore we encode the table and catalog information in DSV2Relation so that no external changes are needed to make sure this information is available.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Unit tests in the following suites:

CatalogManagerSuite.scala

CatalogV2UtilSuite.scala

SupportsCatalogOptionsSuite.scala

PlanResolutionSuite.scala

Closes#26957 from yuchenhuo/SPARK-30314.

Authored-by: Yuchen Huo <yuchen.huo@databricks.com>

Signed-off-by: Burak Yavuz <brkyvz@gmail.com>

### What changes were proposed in this pull request?

This PR removes the query index from the golden files of SQLQueryTestSuite.

### Why are the changes needed?

Because the SQLQueryTestSuite golden files carry a query index for each query, removing any query statement [except the last one] generates many unneeded differences. This makes code review harder, and the number of changed lines is misleading.
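The effect is easy to reproduce with a toy golden file. The sketch below is an illustration under assumptions: `render_golden` and `changed_lines` are hypothetical helpers, and the `-- !query` marker layout is simplified rather than copied from the real golden files.

```python
import difflib
from typing import List


def render_golden(queries: List[str], with_index: bool) -> List[str]:
    """Render a simplified golden file, optionally numbering each query marker."""
    lines: List[str] = []
    for i, query in enumerate(queries):
        marker = f"-- !query {i}" if with_index else "-- !query"
        lines += [marker, query, ""]
    return lines


def changed_lines(before: List[str], after: List[str]) -> int:
    """Count lines that a diff would report as removed or added."""
    matcher = difflib.SequenceMatcher(a=before, b=after, autojunk=False)
    changed = 0
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            changed += (i2 - i1) + (j2 - j1)
    return changed


queries = ["SELECT 1", "SELECT 2", "SELECT 3", "SELECT 4"]
remaining = queries[1:]  # drop the first query

indexed = changed_lines(render_golden(queries, True), render_golden(remaining, True))
plain = changed_lines(render_golden(queries, False), render_golden(remaining, False))
```

Without the index, only the three lines of the removed query change. With the index, every later marker is renumbered, so `indexed` is larger than `plain` and grows with the number of queries that follow.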

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

N/A

Closes#27361 from gatorsmile/removeIndexNum.

Authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

This reverts commit 1d20d13149.

Closes#27351 from gatorsmile/revertSPARK25496.

Authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Disabling test for cleaning closure of recursive function.

### Why are the changes needed?

As of 9514b822a7 this test is no longer valid, and recursive calls, even simple ones:

```r
f <- function(x) {
  if(x > 0) {
    f(x - 1)
  } else {
    x
  }
}
```

lead to

```

Error: node stack overflow

```

This issue is silenced when tested with `testthat` 1.x (reason unknown), but causes failures when using `testthat` 2.x (the issue can be reproduced outside the test context).

The problem is known and tracked by [SPARK-30629](https://issues.apache.org/jira/browse/SPARK-30629).

Therefore, keeping this test active doesn't make sense, as it will lead to continuous test failures when `testthat` is updated (https://github.com/apache/spark/pull/27359 / SPARK-23435).

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing tests.

CC falaki

Closes#27363 from zero323/SPARK-29777-FOLLOWUP.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

For better JDK11 support, this PR aims to upgrade **Jersey** and **javassist** to `2.30` and `3.25.0-GA` respectively.

### Why are the changes needed?

**Jersey**: This will bring the following `Jersey` updates.

- https://eclipse-ee4j.github.io/jersey.github.io/release-notes/2.30.html

- https://github.com/eclipse-ee4j/jersey/issues/4245 (Java 11 java.desktop module dependency)

**javassist**: This is a transitive dependency, which moves from 3.20.0-CR2 to 3.25.0-GA.

- `javassist` officially supports JDK11 from [3.24.0-GA release note](https://github.com/jboss-javassist/javassist/blob/master/Readme.html#L308).

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Pass the Jenkins with both JDK8 and JDK11.

Closes#27357 from dongjoon-hyun/SPARK-30639.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This patch proposes to prune unnecessary nested fields from a Generate that has no Project on top of it.

### Why are the changes needed?

In the optimizer, we can prune nested columns from Project(projectList, Generate). However, unnecessary columns could still be read in Generate if there is no Project on top of it. We should prune them too.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Unit test.

Closes#26978 from viirya/SPARK-29721.

Lead-authored-by: Liang-Chi Hsieh <liangchi@uber.com>

Co-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Remove ```numTrees``` in GBT in 3.0.0.

### Why are the changes needed?

Currently, GBT has

```
/**
 * Number of trees in ensemble
 */
@Since("2.0.0")
val getNumTrees: Int = trees.length
```

and

```
/** Number of trees in ensemble */
val numTrees: Int = trees.length
```

I think we should remove one of them. We deprecated it in 2.4.5 via https://github.com/apache/spark/pull/27352.

### Does this PR introduce any user-facing change?

Yes, remove ```numTrees``` in GBT in 3.0.0

### How was this patch tested?

existing tests

Closes#27330 from huaxingao/spark-numTrees.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add the SPARK_APPLICATION_ID environment variable when Spark configures the driver pod.

### Why are the changes needed?

Currently, the driver doesn't have this in its environment, so it's not convenient to retrieve the Spark application ID.

The use case is that we want to look up the Spark application ID, create an application folder, and redirect driver logs to that folder.
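A minimal sketch of that use case, assuming the variable is present in the driver's environment: `application_log_dir` is a hypothetical helper, and the base directory is whatever location the deployment chooses for logs.

```python
import os
from pathlib import Path
from typing import Mapping, Optional


def application_log_dir(base_dir: str,
                        env: Optional[Mapping[str, str]] = None) -> Path:
    """Create and return a per-application folder named after SPARK_APPLICATION_ID."""
    env = os.environ if env is None else env
    app_id = env.get("SPARK_APPLICATION_ID")
    if not app_id:
        raise RuntimeError("SPARK_APPLICATION_ID is not set in the environment")
    log_dir = Path(base_dir) / app_id
    # exist_ok=True keeps the call safe if the driver restarts in the same folder.
    log_dir.mkdir(parents=True, exist_ok=True)
    return log_dir
```

A driver-side wrapper could call this once at startup and point its log appender at the returned path.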

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

Unit tested. I also built a new distribution and container image to kick off a job in Kubernetes, and I do see SPARK_APPLICATION_ID added there.

Closes#27347 from Jeffwan/SPARK-30626.

Authored-by: Jiaxin Shan <seedjeffwan@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Disable all the V2 file sources in Spark 3.0 by default.

### Why are the changes needed?

There are still some missing parts in the file source V2 framework:

1. It doesn't support reporting file scan metrics such as "numOutputRows"/"numFiles"/"fileSize" like `FileSourceScanExec`. This requires another patch in the data source V2 framework. Tracked by [SPARK-30362](https://issues.apache.org/jira/browse/SPARK-30362)

2. It doesn't support partition pruning with subqueries (including dynamic partition pruning) for now. Tracked by [SPARK-30628](https://issues.apache.org/jira/browse/SPARK-30628)

As we are going to code freeze on Jan 31st, this PR proposes to disable all the V2 file sources in Spark 3.0 by default.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing tests.

Closes#27348 from gengliangwang/disableFileSourceV2.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>