## What changes were proposed in this pull request?

This is a followup of the discussion in https://github.com/apache/spark/pull/24675#discussion_r286786053

`QueryPlan#references` is an important property. The `ColumnPruning` rule relies on it.

Some query plan nodes have a `Seq[Attribute]` parameter, which is used as their output attributes. For example, leaf nodes, `Generate`, `MapPartitionsInPandas`, etc. These nodes override `producedAttributes` to make `missingInputs` correct.

However, these nodes also need to override `references` to make column pruning work. This PR proposes to exclude `producedAttributes` from the default implementation of `QueryPlan#references`, so that we don't need to override `references` in all these nodes.

Note that, technically we can remove `producedAttributes` and always ask query plan nodes to override `references`. But I do find the code can be simpler with `producedAttributes` in some places, where there is a base class for some specific query plan nodes.
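The effect of the proposed default can be illustrated with a toy model. This is a minimal sketch in plain Python, not Spark's `QueryPlan`: the `PlanNode` class and its field names are hypothetical and only mirror the idea that attributes a node produces itself should not count as references.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass
class PlanNode:
    """Toy stand-in for a query plan node (hypothetical, not Spark's QueryPlan)."""
    expression_refs: FrozenSet[str]                # attributes mentioned in the node's expressions
    input_attrs: FrozenSet[str]                    # attributes provided by the children
    produced_attrs: FrozenSet[str] = frozenset()   # attributes the node creates itself

    @property
    def references(self) -> FrozenSet[str]:
        # Proposed default: produced attributes are excluded from references.
        return self.expression_refs - self.produced_attrs

    @property
    def missing_input(self) -> FrozenSet[str]:
        # Anything referenced but not provided by the children is missing.
        return self.references - self.input_attrs


# A Generate-like node: reads `arr` from its child and produces `elem`.
generate = PlanNode(
    expression_refs=frozenset({"arr", "elem"}),
    input_attrs=frozenset({"arr", "id"}),
    produced_attrs=frozenset({"elem"}),
)
print(sorted(generate.references))     # ['arr']
print(sorted(generate.missing_input))  # []
```

In this sketch a pruning rule that looks only at `references` would keep `arr` and could drop `id`, without the node having to override `references` itself.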

## How was this patch tested?

existing tests

Closes #25052 from cloud-fan/minor.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR reverts the partial bug fix in `ShuffleBlockFetcherIterator` which was introduced by https://github.com/apache/spark/pull/23638 .

The reasons:

1. It only addresses a potential bug. After fixing `PipelinedRDD` in #23638, the original problem was resolved.

2. The fix is incomplete according to [the discussion](https://github.com/apache/spark/pull/23638#discussion_r251869084)

We should fix the potential bug completely later.

## How was this patch tested?

existing tests

Closes #25049 from cloud-fan/revert.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR adds some tests converted from `pgSQL/case.sql` to test UDFs. Please see the contribution guide of the umbrella ticket, [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

This PR also contains two minor fixes:

1. Change the name of the Scala UDF from `UDF:name(...)` to `name(...)` to be consistent with Python.

2. Fix the Scala UDF in `IntegratedUDFTestUtils.scala` to handle `null` in strings.

<details><summary>Diff comparing to 'pgSQL/case.sql'</summary>

<p>

```diff

diff --git a/sql/core/src/test/resources/sql-tests/results/pgSQL/case.sql.out b/sql/core/src/test/resources/sql-tests/results/udf/pgSQL/udf-case.sql.out

index fa078d16d6d..55bef64338f 100644

--- a/sql/core/src/test/resources/sql-tests/results/pgSQL/case.sql.out

+++ b/sql/core/src/test/resources/sql-tests/results/udf/pgSQL/udf-case.sql.out

@@ -115,7 +115,7 @@ struct<>

-- !query 13

SELECT '3' AS `One`,

CASE

- WHEN 1 < 2 THEN 3

+ WHEN CAST(udf(1 < 2) AS boolean) THEN 3

END AS `Simple WHEN`

-- !query 13 schema

struct<One:string,Simple WHEN:int>

@@ -126,10 +126,10 @@ struct<One:string,Simple WHEN:int>

-- !query 14

SELECT '<NULL>' AS `One`,

CASE

- WHEN 1 > 2 THEN 3

+ WHEN 1 > 2 THEN udf(3)

END AS `Simple default`

-- !query 14 schema

-struct<One:string,Simple default:int>

+struct<One:string,Simple default:string>

-- !query 14 output

<NULL> NULL

@@ -137,17 +137,17 @@ struct<One:string,Simple default:int>

-- !query 15

SELECT '3' AS `One`,

CASE

- WHEN 1 < 2 THEN 3

- ELSE 4

+ WHEN udf(1) < 2 THEN udf(3)

+ ELSE udf(4)

END AS `Simple ELSE`

-- !query 15 schema

-struct<One:string,Simple ELSE:int>

+struct<One:string,Simple ELSE:string>

-- !query 15 output

3 3

-- !query 16

-SELECT '4' AS `One`,

+SELECT udf('4') AS `One`,

CASE

WHEN 1 > 2 THEN 3

ELSE 4

@@ -159,10 +159,10 @@ struct<One:string,ELSE default:int>

-- !query 17

-SELECT '6' AS `One`,

+SELECT udf('6') AS `One`,

CASE

- WHEN 1 > 2 THEN 3

- WHEN 4 < 5 THEN 6

+ WHEN CAST(udf(1 > 2) AS boolean) THEN 3

+ WHEN udf(4) < 5 THEN 6

ELSE 7

END AS `Two WHEN with default`

-- !query 17 schema

@@ -173,7 +173,7 @@ struct<One:string,Two WHEN with default:int>

-- !query 18

SELECT '7' AS `None`,

- CASE WHEN rand() < 0 THEN 1

+ CASE WHEN rand() < udf(0) THEN 1

END AS `NULL on no matches`

-- !query 18 schema

struct<None:string,NULL on no matches:int>

@@ -182,36 +182,36 @@ struct<None:string,NULL on no matches:int>

-- !query 19

-SELECT CASE WHEN 1=0 THEN 1/0 WHEN 1=1 THEN 1 ELSE 2/0 END

+SELECT CASE WHEN CAST(udf(1=0) AS boolean) THEN 1/0 WHEN 1=1 THEN 1 ELSE 2/0 END

-- !query 19 schema

-struct<CASE WHEN (1 = 0) THEN (CAST(1 AS DOUBLE) / CAST(0 AS DOUBLE)) WHEN (1 = 1) THEN CAST(1 AS DOUBLE) ELSE (CAST(2 AS DOUBLE) / CAST(0 AS DOUBLE)) END:double>

+struct<CASE WHEN CAST(udf((1 = 0)) AS BOOLEAN) THEN (CAST(1 AS DOUBLE) / CAST(0 AS DOUBLE)) WHEN (1 = 1) THEN CAST(1 AS DOUBLE) ELSE (CAST(2 AS DOUBLE) / CAST(0 AS DOUBLE)) END:double>

-- !query 19 output

1.0

-- !query 20

-SELECT CASE 1 WHEN 0 THEN 1/0 WHEN 1 THEN 1 ELSE 2/0 END

+SELECT CASE 1 WHEN 0 THEN 1/udf(0) WHEN 1 THEN 1 ELSE 2/0 END

-- !query 20 schema

-struct<CASE WHEN (1 = 0) THEN (CAST(1 AS DOUBLE) / CAST(0 AS DOUBLE)) WHEN (1 = 1) THEN CAST(1 AS DOUBLE) ELSE (CAST(2 AS DOUBLE) / CAST(0 AS DOUBLE)) END:double>

+struct<CASE WHEN (1 = 0) THEN (CAST(1 AS DOUBLE) / CAST(CAST(udf(0) AS DOUBLE) AS DOUBLE)) WHEN (1 = 1) THEN CAST(1 AS DOUBLE) ELSE (CAST(2 AS DOUBLE) / CAST(0 AS DOUBLE)) END:double>

-- !query 20 output

1.0

-- !query 21

-SELECT CASE WHEN i > 100 THEN 1/0 ELSE 0 END FROM case_tbl

+SELECT CASE WHEN i > 100 THEN udf(1/0) ELSE udf(0) END FROM case_tbl

-- !query 21 schema

-struct<CASE WHEN (i > 100) THEN (CAST(1 AS DOUBLE) / CAST(0 AS DOUBLE)) ELSE CAST(0 AS DOUBLE) END:double>

+struct<CASE WHEN (i > 100) THEN udf((cast(1 as double) / cast(0 as double))) ELSE udf(0) END:string>

-- !query 21 output

-0.0

-0.0

-0.0

-0.0

+0

+0

+0

+0

-- !query 22

-SELECT CASE 'a' WHEN 'a' THEN 1 ELSE 2 END

+SELECT CASE 'a' WHEN 'a' THEN udf(1) ELSE udf(2) END

-- !query 22 schema

-struct<CASE WHEN (a = a) THEN 1 ELSE 2 END:int>

+struct<CASE WHEN (a = a) THEN udf(1) ELSE udf(2) END:string>

-- !query 22 output

1

@@ -283,7 +283,7 @@ big

-- !query 27

-SELECT * FROM CASE_TBL WHERE COALESCE(f,i) = 4

+SELECT * FROM CASE_TBL WHERE udf(COALESCE(f,i)) = 4

-- !query 27 schema

struct<i:int,f:double>

-- !query 27 output

@@ -291,7 +291,7 @@ struct<i:int,f:double>

-- !query 28

-SELECT * FROM CASE_TBL WHERE NULLIF(f,i) = 2

+SELECT * FROM CASE_TBL WHERE udf(NULLIF(f,i)) = 2

-- !query 28 schema

struct<i:int,f:double>

-- !query 28 output

@@ -299,10 +299,10 @@ struct<i:int,f:double>

-- !query 29

-SELECT COALESCE(a.f, b.i, b.j)

+SELECT udf(COALESCE(a.f, b.i, b.j))

FROM CASE_TBL a, CASE2_TBL b

-- !query 29 schema

-struct<coalesce(f, CAST(i AS DOUBLE), CAST(j AS DOUBLE)):double>

+struct<udf(coalesce(f, cast(i as double), cast(j as double))):string>

-- !query 29 output

-30.3

-30.3

@@ -332,8 +332,8 @@ struct<coalesce(f, CAST(i AS DOUBLE), CAST(j AS DOUBLE)):double>

-- !query 30

SELECT *

- FROM CASE_TBL a, CASE2_TBL b

- WHERE COALESCE(a.f, b.i, b.j) = 2

+ FROM CASE_TBL a, CASE2_TBL b

+ WHERE udf(COALESCE(a.f, b.i, b.j)) = 2

-- !query 30 schema

struct<i:int,f:double,i:int,j:int>

-- !query 30 output

@@ -342,7 +342,7 @@ struct<i:int,f:double,i:int,j:int>

-- !query 31

-SELECT '' AS Five, NULLIF(a.i,b.i) AS `NULLIF(a.i,b.i)`,

+SELECT udf('') AS Five, NULLIF(a.i,b.i) AS `NULLIF(a.i,b.i)`,

NULLIF(b.i, 4) AS `NULLIF(b.i,4)`

FROM CASE_TBL a, CASE2_TBL b

-- !query 31 schema

@@ -377,7 +377,7 @@ struct<Five:string,NULLIF(a.i,b.i):int,NULLIF(b.i,4):int>

-- !query 32

SELECT '' AS `Two`, *

FROM CASE_TBL a, CASE2_TBL b

- WHERE COALESCE(f,b.i) = 2

+ WHERE CAST(udf(COALESCE(f,b.i) = 2) AS boolean)

-- !query 32 schema

struct<Two:string,i:int,f:double,i:int,j:int>

-- !query 32 output

@@ -388,15 +388,15 @@ struct<Two:string,i:int,f:double,i:int,j:int>

-- !query 33

SELECT CASE

(CASE vol('bar')

- WHEN 'foo' THEN 'it was foo!'

- WHEN vol(null) THEN 'null input'

+ WHEN udf('foo') THEN 'it was foo!'

+ WHEN udf(vol(null)) THEN 'null input'

WHEN 'bar' THEN 'it was bar!' END

)

- WHEN 'it was foo!' THEN 'foo recognized'

- WHEN 'it was bar!' THEN 'bar recognized'

- ELSE 'unrecognized' END

+ WHEN udf('it was foo!') THEN 'foo recognized'

+ WHEN 'it was bar!' THEN udf('bar recognized')

+ ELSE 'unrecognized' END AS col

-- !query 33 schema

-struct<CASE WHEN (CASE WHEN (UDF:vol(bar) = foo) THEN it was foo! WHEN (UDF:vol(bar) = UDF:vol(null)) THEN null input WHEN (UDF:vol(bar) = bar) THEN it was bar! END = it was foo!) THEN foo recognized WHEN (CASE WHEN (UDF:vol(bar) = foo) THEN it was foo! WHEN (UDF:vol(bar) = UDF:vol(null)) THEN null input WHEN (UDF:vol(bar) = bar) THEN it was bar! END = it was bar!) THEN bar recognized ELSE unrecognized END:string>

+struct<col:string>

-- !query 33 output

bar recognized

```

</p>

</details>

https://github.com/apache/spark/pull/25069 contains the same minor fixes as it's required to write the tests.

## How was this patch tested?

Tested as guided in [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

Closes #25070 from HyukjinKwon/SPARK-28273.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The sub-second part of the interval should be padded before parsing. Currently, Spark gives a correct value only when there are 9 digits after the `.`.

```

spark-sql> select interval '0 0:0:0.123456789' day to second;

interval 123 milliseconds 456 microseconds

spark-sql> select interval '0 0:0:0.12345678' day to second;

interval 12 milliseconds 345 microseconds

spark-sql> select interval '0 0:0:0.1234' day to second;

interval 1 microseconds

```
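The padding idea can be sketched outside Spark. The snippet below is a plain-Python illustration under my own assumptions (the helper names `fraction_to_nanos` and `describe` are hypothetical and are not Spark functions): the digits after the `.` are right-padded with zeros to nine places before being read as nanoseconds.

```python
def fraction_to_nanos(fraction: str) -> int:
    """Convert the digits after the '.' of a seconds field to nanoseconds."""
    if not fraction.isdigit() or len(fraction) > 9:
        raise ValueError(f"invalid sub-second part: {fraction!r}")
    # Right-pad with zeros so that "1234" means 0.1234 s, not 1234 ns.
    return int(fraction.ljust(9, "0"))


def describe(fraction: str) -> str:
    """Render the sub-second part as milliseconds and microseconds."""
    nanos = fraction_to_nanos(fraction)
    millis, micros = divmod(nanos // 1_000, 1_000)
    return f"{millis} milliseconds {micros} microseconds"


print(describe("123456789"))  # 123 milliseconds 456 microseconds
print(describe("12345678"))   # 123 milliseconds 456 microseconds
print(describe("1234"))       # 123 milliseconds 400 microseconds
```

Without the padding step, `"1234"` would be read as 1234 nanoseconds, which matches the `1 microseconds` result shown above.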

## How was this patch tested?

Pass the Jenkins with the fixed test cases.

Closes #25079 from dongjoon-hyun/SPARK-28308.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Implement `transform` natively in the ml framework to avoid extra conversions.

There are many TODOs in the current ml module, such as `// TODO: Make the transformer natively in ml framework to avoid extra conversion.` in `ChiSqSelector`.

With this PR, ml algorithms no longer need to convert ml vectors to mllib vectors in `transform`.

Including: LDA/ChiSqSelector/ElementwiseProduct/HashingTF/IDF/Normalizer/PCA/StandardScaler.

## How was this patch tested?

existing testsuites

Closes #24963 from zhengruifeng/to_ml_vector.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

In Dataset drop(col: Column) method, the `equals` comparison method was used instead of `semanticEquals`, which caused the problem of abnormal case-sensitivity behavior. When attributes of LogicalPlan are checked for equality, `semanticEquals` should be used instead.
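The difference between the two comparisons can be shown with a toy model. This is a sketch under assumptions, not Catalyst code: the `Attr` class, its `expr_id` field and the `drop` helper are hypothetical, and the example assumes the resolved column refers to the same attribute but carries a differently cased name.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attr:
    """Toy attribute: a display name plus an identity (hypothetical model)."""
    name: str
    expr_id: int

    def semantic_equals(self, other: "Attr") -> bool:
        # Compare identity only, ignoring cosmetic differences such as name case.
        return self.expr_id == other.expr_id


def drop(output, resolved, use_semantic):
    """Return the output attributes that remain after dropping `resolved`."""
    if use_semantic:
        return [a for a in output if not a.semantic_equals(resolved)]
    return [a for a in output if a != resolved]  # plain equality also compares names


output = [Attr("id", 1), Attr("Name", 2)]
resolved = Attr("name", 2)  # same attribute, different case in the name

print(len(drop(output, resolved, use_semantic=False)))  # 2: nothing was dropped
print(len(drop(output, resolved, use_semantic=True)))   # 1: the column was dropped
```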

A similar PR I referred to: https://github.com/apache/spark/pull/22713 created by mgaido91

## How was this patch tested?

- Added new unit test case in DataFrameSuite

- ./build/sbt "testOnly org.apache.spark.sql.*"

- The python code from ticket reporter at https://issues.apache.org/jira/browse/SPARK-28189

Closes #25055 from Tonix517/SPARK-28189.

Authored-by: Tony Zhang <tony.zhang@uber.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Main changelog entries:

### Version 3.0.13:

- Support for JDK 9/10 in Full Compiler

- The syntax elements that can have modifiers now all have sets of "is...()" methods that check for each modifier. Some also have methods "getAccess()" and/or "getAnnotations()".

- Implement "type annotations" (JLS8 9.7.4)

- Implemented parsing (but not compilation) of "modular compilation units" (JLS11 7.3).

- Replaced all "assert...Uncookable(..., Pattern messageRegex)" and "assert...Uncookable(..., String messageInfix)" method pairs with a single "assert...Uncookable(..., String messageRegex)" method.

- Minor refactoring: Allowed modifiers are now checked in the Parser, not in Java.*. This saves a lot of THROWS clauses.

- Parse Type inference syntax: Type inference for generic instance creation implemented, test cases added.

- Parse MethodReference, ClassInstanceCreationReference and ArrayCreationReference

### Version 3.0.12

- Fixed: Operator "&" not defined on types "java.lang.Long" and "int"

- Major bug in JavaSourceClassLoader: When loading the second and following classes, CUs were compiled again, leading to an inconsistent class hierarchy.

- Fixed: Java 9 added "Override public final CharBuffer CharBuffer.rewind() { ..." -- leads easily to a java.lang.NoSuchMethodError

- Changed all occurrences of the words "Java bytecode" to "JVM bytecode" to make clearer that the generated bytecode is for the JVMS and not suitable for, e.g. DALVIK.

http://janino-compiler.github.io/janino/changelog.html

## How was this patch tested?

Existing test

Closes #25021 from wangyum/SPARK-28221.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

We changed our non-standard syntax for the `trim` function in #24902 from `TRIM(trimStr, str)` to `TRIM(str, trimStr)` to be compatible with other databases. This PR updates the migration guide.

I checked various databases (PostgreSQL, Teradata, Vertica, Oracle, DB2, SQL Server 2019, MySQL, Hive, Presto), and it seems that only PostgreSQL and Presto support this non-standard syntax.

**PostgreSQL**:

```sql

postgres=# select substr(version(), 0, 16), trim('yxTomxx', 'x');

substr | btrim

-----------------+-------

PostgreSQL 11.3 | yxTom

(1 row)

```

**Presto**:

```sql

presto> select trim('yxTomxx', 'x');

_col0

-------

yxTom

(1 row)

```

## How was this patch tested?

manual tests

Closes #24948 from wangyum/SPARK-28093-FOLLOW-UP-DOCS.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR adds some more WITH test cases as a follow-up to https://github.com/apache/spark/pull/24842

## How was this patch tested?

Add new UTs.

Closes #24949 from peter-toth/SPARK-28002-follow-up.

Authored-by: Peter Toth <peter.toth@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

- Currently, `ExpressionEncoder` does not handle BigDecimal overflow. Round-tripping an overflowing java/scala BigDecimal/BigInteger returns null.

- The serializer encodes a java/scala BigDecimal as a SQL Decimal, which still holds the original underlying value.

- When writing out to UnsafeRow, `changePrecision` returns false and the row gets a null value.

24e1e41648/sql/catalyst/src/main/java/org/apache/spark/sql/catalyst/expressions/codegen/UnsafeRowWriter.java (L202-L206)

- In [SPARK-23179](https://github.com/apache/spark/pull/20350), an option to throw exception on decimal overflow was introduced.

- This PR adds the option in `ExpressionEncoder` to throw when detecting overflowing BigDecimal/BigInteger before its corresponding Decimal gets written to Row. This gives a consistent behavior between decimal arithmetic on sql expression (DecimalPrecision), and getting decimal from dataframe (RowEncoder)
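The two behaviors (null versus exception on overflow) can be sketched with Python's `decimal` module. This is an illustration only, not Spark's encoder: `to_sql_decimal` and its `fail_on_overflow` flag are hypothetical names, and the `(38, 18)` precision and scale used in the example are an assumption about the target decimal type.

```python
from decimal import Decimal, ROUND_HALF_UP, localcontext
from typing import Optional


def to_sql_decimal(value: Decimal, precision: int, scale: int,
                   fail_on_overflow: bool) -> Optional[Decimal]:
    """Fit `value` into a decimal(precision, scale); return None or raise on overflow."""
    with localcontext() as ctx:
        ctx.prec = 100  # headroom so rounding the toy inputs never fails
        rounded = value.quantize(Decimal(1).scaleb(-scale), rounding=ROUND_HALF_UP)
        # The integer part may use at most (precision - scale) digits.
        fits = abs(rounded) < Decimal(10) ** (precision - scale)
    if fits:
        return rounded
    if fail_on_overflow:
        raise ArithmeticError(f"{value} cannot be represented as decimal({precision}, {scale})")
    return None  # silent null, as described for the current behavior


too_big = Decimal("1" + "0" * 20)  # 21 integer digits, one more than (38, 18) allows
print(to_sql_decimal(too_big, 38, 18, fail_on_overflow=False))  # None
try:
    to_sql_decimal(too_big, 38, 18, fail_on_overflow=True)
except ArithmeticError as err:
    print("overflow:", err)
```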

Thanks to mgaido91 for the very first PR `SPARK-23179` and follow-up discussion on this change.

Thanks to JoshRosen for working with me on this.

## How was this patch tested?

added unit tests

Closes #25016 from mickjermsurawong-stripe/SPARK-28200.

Authored-by: Mick Jermsurawong <mickjermsurawong@stripe.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

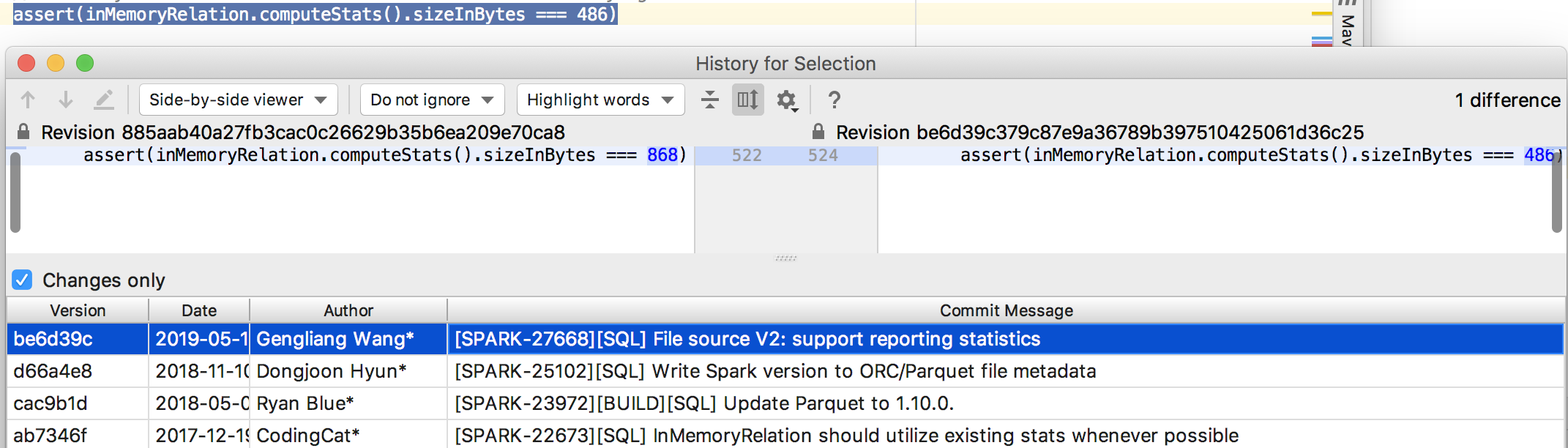

## What changes were proposed in this pull request?

Migrate Avro to File source V2.

## How was this patch tested?

Unit test

Closes #25017 from gengliangwang/avroV2.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR upgrades the Postgres docker image used for integration tests.

## How was this patch tested?

manual tests:

```

./build/mvn install -DskipTests

./build/mvn test -Pdocker-integration-tests -pl :spark-docker-integration-tests_2.12

```

Closes #25050 from wangyum/SPARK-28248.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR proposes to rename `mapPartitionsInPandas` to `mapInPandas` with a separate evaluation type.

Had an offline discussion with rxin, mengxr and cloud-fan

The reason is basically:

1. `SCALAR_ITER` doesn't make sense with `mapPartitionsInPandas`.

2. It cannot share the same Pandas UDF, for instance, at `select` and `mapPartitionsInPandas` unlike `GROUPED_AGG` because iterator's return type is different.

3. `mapPartitionsInPandas` -> `mapInPandas` - see https://github.com/apache/spark/pull/25044#issuecomment-508298552 and https://github.com/apache/spark/pull/25044#issuecomment-508299764

Renaming `SCALAR_ITER` to `MAP_ITER` is abandoned due to reason 2.

For `XXX_ITER`, it might need a different interface in the future if we happen to add other versions of them. But this is orthogonal to `mapPartitionsInPandas`.

## How was this patch tested?

Existing tests should cover.

Closes #25044 from HyukjinKwon/SPARK-28198.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Before this PR, inserting into a non-existing table returned a confusing error message:

```

sql("INSERT INTO test VALUES (1)").show

org.apache.spark.sql.AnalysisException: unresolved operator 'InsertIntoTable 'UnresolvedRelation [test], false, false;;

'InsertIntoTable 'UnresolvedRelation [test], false, false

+- LocalRelation [col1#4]

```

After this PR, the error message becomes:

```

org.apache.spark.sql.AnalysisException: Table not found: test;;

'InsertIntoTable 'UnresolvedRelation [test], false, false

+- LocalRelation [col1#0]

```

## How was this patch tested?

Added a new UT.

Closes #25054 from peter-toth/SPARK-28251.

Authored-by: Peter Toth <peter.toth@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR adds support of `WITH` clause within a subquery so this query becomes valid:

```

SELECT max(c) FROM (

WITH t AS (SELECT 1 AS c)

SELECT * FROM t

)

```

## How was this patch tested?

Added new UTs.

Closes #24831 from peter-toth/SPARK-19799-2.

Authored-by: Peter Toth <peter.toth@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This is to implement a ReduceNumShufflePartitions rule in the new adaptive execution framework introduced in #24706. This rule is used to adjust the post shuffle partitions based on the map output statistics.
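The general idea of adjusting post-shuffle partitions from size statistics can be sketched as a greedy packing of contiguous partitions. This is a simplified illustration and not the rule's actual algorithm: `coalesce_partitions` and `target_size` are hypothetical names, and the sizes are made-up numbers standing in for map output statistics.

```python
from typing import List


def coalesce_partitions(sizes: List[int], target_size: int) -> List[List[int]]:
    """Greedily pack contiguous partition indices until the target size would be exceeded."""
    groups: List[List[int]] = []
    current: List[int] = []
    current_size = 0
    for index, size in enumerate(sizes):
        # Start a new group when adding this partition would overshoot the target.
        if current and current_size + size > target_size:
            groups.append(current)
            current, current_size = [], 0
        current.append(index)
        current_size += size
    if current:
        groups.append(current)
    return groups


sizes = [10, 20, 70, 5, 5, 100, 30]  # hypothetical per-partition sizes
groups = coalesce_partitions(sizes, target_size=100)
print(groups)  # [[0, 1, 2], [3, 4], [5], [6]]
```

Only contiguous partitions are merged in this sketch, so seven small partitions become four larger ones while each group stays at or below the target where possible.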

## How was this patch tested?

Added ReduceNumShufflePartitionsSuite

Closes #24978 from carsonwang/reduceNumShufflePartitions.

Authored-by: Carson Wang <carson.wang@intel.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This patch adds missing UT which tests the changed behavior of original patch #24942.

## How was this patch tested?

Newly added UT.

Closes #24999 from HeartSaVioR/SPARK-28142-FOLLOWUP.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR adds a utility for calculating local directory size to `SQLTestUtils`.

We can avoid these changes after this pr:

## How was this patch tested?

Existing test

Closes #25014 from wangyum/SPARK-28216.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

`requestHeaderSize` was added in https://github.com/apache/spark/pull/23090 and applies to both the Spark UI and the History Server UI. Without a debug log it's hard to find out which configuration value is used on which side.

In this PR I've added a log message which prints out the value.

## How was this patch tested?

Manually checked log files.

Closes #25045 from gaborgsomogyi/SPARK-26118.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR makes the predicate pushdown logic in the Catalyst optimizer more efficient by unifying two existing rules, `PushDownPredicate` and `PushPredicateThroughJoin`. Previously, pushing down a predicate for queries such as `Filter(Join(Join(Join)))` required n steps. This patch essentially reduces this to a single pass.

To make this actually work, we need to unify a few rules such as `CombineFilters`, `PushDownPredicate` and `PushPredicateThroughJoin`. Otherwise, cases such as `Filter(Join(Filter(Join)))` still require several passes to fully push down predicates. This unification is done by composing several partial functions, which keeps the code change minimal and lets us reuse existing UTs.

Results show that this optimization can improve the catalyst optimization time by 16.5%. For queries with more joins, the performance is even better. E.g., for TPC-DS q64, the performance boost is 49.2%.
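Why composing partial rules saves passes can be seen on a toy plan. The sketch below is plain Python, not Catalyst: plans are nested tuples, a rule returns `None` where it does not apply (standing in for a partial function), and `or_else`, `transform_down` and the two rules are hypothetical helpers that use a `Project` node instead of a join to stay short.

```python
def combine_filters(plan):
    # Filter(Filter(child)) -> Filter(child) carrying both conditions.
    if plan[0] == "filter" and plan[2][0] == "filter":
        _, outer, (_, inner, child) = plan
        return ("filter", outer + inner, child)
    return None  # rule does not apply at this node


def push_through_project(plan):
    # Filter(Project(child)) -> Project(Filter(child)).
    if plan[0] == "filter" and plan[2][0] == "project":
        _, cond, (_, child) = plan
        return ("project", ("filter", cond, child))
    return None


def or_else(*rules):
    """Compose partial rules: the first rule that applies wins."""
    def composed(plan):
        for rule in rules:
            rewritten = rule(plan)
            if rewritten is not None:
                return rewritten
        return None
    return composed


def transform_down(plan, rule):
    """One top-down pass: rewrite this node once, then recurse into its child."""
    plan = rule(plan) or plan
    if plan[0] == "scan":
        return plan
    *head, child = plan
    return (*head, transform_down(child, rule))


plan = ("filter", ("a",), ("project", ("filter", ("b",), ("scan", "t"))))
unified = or_else(combine_filters, push_through_project)

# One pass of the composed rule pushes the filter down and merges it.
single_pass = transform_down(plan, unified)
# One pass per separate rule leaves two stacked filters behind.
two_rules = transform_down(transform_down(plan, combine_filters), push_through_project)

print(single_pass)  # ('project', ('filter', ('a', 'b'), ('scan', 't')))
print(two_rules)    # ('project', ('filter', ('a',), ('filter', ('b',), ('scan', 't'))))
```

With separate rules, another `combine_filters` pass would still be needed to merge the two filters, which is the extra iteration the unified rule avoids in this toy setting.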

## How was this patch tested?

Existing UTs + a new UT for the new rule.

Closes #24956 from yeshengm/fixed-point-opt.

Authored-by: Yesheng Ma <kimi.ysma@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This PR adds `PLACING` to `ansiNonReserved` and adds `overlay` and `placing` to `TableIdentifierParserSuite`.

## How was this patch tested?

N/A

Closes #25013 from wangyum/SPARK-28077.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

In Python 2.7 with the latest PyArrow and Pandas, the error message is slightly different from the one in Python 3. This PR simply fixes the test.

```

======================================================================

FAIL: test_createDataFrame_with_incorrect_schema (pyspark.sql.tests.test_arrow.ArrowTests)

----------------------------------------------------------------------

Traceback (most recent call last):

File "/.../spark/python/pyspark/sql/tests/test_arrow.py", line 275, in test_createDataFrame_with_incorrect_schema

self.spark.createDataFrame(pdf, schema=wrong_schema)

AssertionError: "integer.*required.*got.*str" does not match "('Exception thrown when converting pandas.Series (object) to Arrow Array (int32). It can be caused by overflows or other unsafe conversions warned by Arrow. Arrow safe type check can be disabled by using SQL config `spark.sql.execution.pandas.arrowSafeTypeConversion`.', ArrowTypeError('an integer is required',))"

======================================================================

FAIL: test_createDataFrame_with_incorrect_schema (pyspark.sql.tests.test_arrow.EncryptionArrowTests)

----------------------------------------------------------------------

Traceback (most recent call last):

File "/.../spark/python/pyspark/sql/tests/test_arrow.py", line 275, in test_createDataFrame_with_incorrect_schema

self.spark.createDataFrame(pdf, schema=wrong_schema)

AssertionError: "integer.*required.*got.*str" does not match "('Exception thrown when converting pandas.Series (object) to Arrow Array (int32). It can be caused by overflows or other unsafe conversions warned by Arrow. Arrow safe type check can be disabled by using SQL config `spark.sql.execution.pandas.arrowSafeTypeConversion`.', ArrowTypeError('an integer is required',))"

```

## How was this patch tested?

Manually tested.

```

cd python

./run-tests --python-executables=python --modules pyspark-sql

```

Closes #25042 from HyukjinKwon/SPARK-28240.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

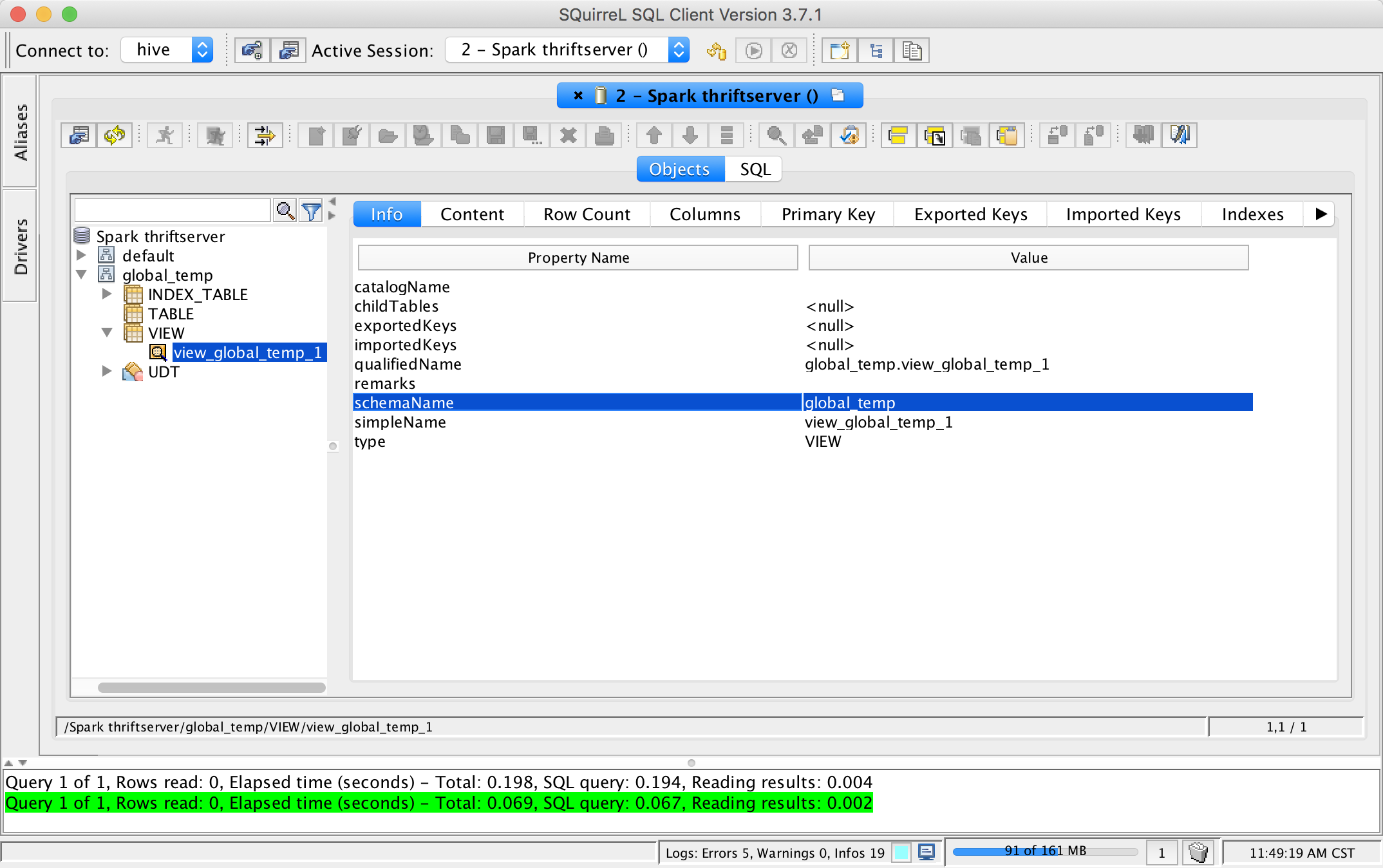

## What changes were proposed in this pull request?

This PR adds support for showing global temporary views and local temporary views in database tools.

TODO: Database tools should support showing temporary views, because their schema is null.

## How was this patch tested?

unit tests and manual tests:

Closes #24972 from wangyum/SPARK-28167.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

link doc & example of Interaction

## How was this patch tested?

existing tests

Closes #25027 from zhengruifeng/py_doc_interaction.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Right now they fail only for inner joins, because we implemented the check when that was the only supported type.

## How was this patch tested?

new unit test

Closes #25023 from jose-torres/changevalidation.

Authored-by: Jose Torres <torres.joseph.f+github@gmail.com>

Signed-off-by: Jose Torres <torres.joseph.f+github@gmail.com>

## What changes were proposed in this pull request?

In some cases, `executeTake` in SparkPlan could decode more rows than necessary.

For example, with the odd/even partitioning below, the total row count across partitions is 100, although the result is limited to 51. `executeTake` in SparkPlan decodes all of them, so 49 rows are decoded unnecessarily.

```scala

spark.sparkContext.parallelize((0 until 100).map(i => (i, 1))).toDF()

.repartitionByRange(2, $"_1" % 2).limit(51).collect()

```

By using an iterator over the Scala collection, we can ensure that at most n rows are decoded.
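The difference between eager and lazy decoding can be sketched in plain Python. This is an analogy rather than the Scala change itself: `CountingDecoder`, `take_eager` and `take_lazy` are hypothetical names, and rows are modeled as 4-byte encoded integers split into an even and an odd partition of 50 rows each.

```python
from itertools import chain, islice


class CountingDecoder:
    """Decodes rows and counts how many times decoding happened."""
    def __init__(self):
        self.calls = 0

    def decode(self, encoded: bytes) -> int:
        self.calls += 1
        return int.from_bytes(encoded, "big")


def take_eager(partitions, n, decoder):
    # Decode every row of every partition, then truncate.
    rows = [decoder.decode(r) for part in partitions for r in part]
    return rows[:n]


def take_lazy(partitions, n, decoder):
    # Iterate over encoded rows and decode only the first n.
    encoded = chain.from_iterable(partitions)
    return [decoder.decode(r) for r in islice(encoded, n)]


partitions = [
    [i.to_bytes(4, "big") for i in range(0, 100, 2)],  # even numbers, 50 rows
    [i.to_bytes(4, "big") for i in range(1, 100, 2)],  # odd numbers, 50 rows
]
eager, lazy = CountingDecoder(), CountingDecoder()
assert take_eager(partitions, 51, eager) == take_lazy(partitions, 51, lazy)
print(eager.calls, lazy.calls)  # 100 51
```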

## How was this patch tested?

Existing unit tests that call limit function of DataFrame.

testOnly *SQLQuerySuite

testOnly *DataFrameSuite

Closes #22347 from Dooyoung-Hwang/refactor_execute_take.

Authored-by: Dooyoung Hwang <dooyoung.hwang@sk.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

According to the documentation `groupIdPrefix` should be available for `streaming and batch`.

It is not the case because the batch part is missing.

In this PR I've added:

* Structured Streaming test for v1 and v2 to cover `groupIdPrefix`

* Batch test for v1 and v2 to cover `groupIdPrefix`

* Added `groupIdPrefix` usage in batch

## How was this patch tested?

Additional + existing unit tests.

Closes #25030 from gaborgsomogyi/SPARK-28232.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This is a small follow-up for SPARK-28054 to fix wrong indent and use `withSQLConf` as suggested by gatorsmile.

## How was this patch tested?

Existing tests.

Closes #24971 from viirya/SPARK-28054-followup.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

When `SPARK_HOME` is set in the environment and contains a specific `spark-defaults.conf`, the `org.apache.spark.util.loadDefaultSparkProperties` method may pollute the system properties. As a result, `SparkConfSuite` may fail when running the `core/test` module.

It's easy to fix by setting `loadDefaults` in `SparkConf` to false.

```

[info] - deprecated configs *** FAILED *** (79 milliseconds)

[info] 7 did not equal 4 (SparkConfSuite.scala:266)

[info] org.scalatest.exceptions.TestFailedException:

[info] at org.scalatest.Assertions.newAssertionFailedException(Assertions.scala:528)

[info] at org.scalatest.Assertions.newAssertionFailedException$(Assertions.scala:527)

[info] at org.scalatest.FunSuite.newAssertionFailedException(FunSuite.scala:1560)

[info] at org.scalatest.Assertions$AssertionsHelper.macroAssert(Assertions.scala:501)

[info] at org.apache.spark.SparkConfSuite.$anonfun$new$26(SparkConfSuite.scala:266)

[info] at org.scalatest.OutcomeOf.outcomeOf(OutcomeOf.scala:85)

[info] at org.scalatest.OutcomeOf.outcomeOf$(OutcomeOf.scala:83)

[info] at org.scalatest.OutcomeOf$.outcomeOf(OutcomeOf.scala:104)

[info] at org.scalatest.Transformer.apply(Transformer.scala:22)

[info] at org.scalatest.Transformer.apply(Transformer.scala:20)

[info] at org.scalatest.FunSuiteLike$$anon$1.apply(FunSuiteLike.scala:186)

[info] at org.apache.spark.SparkFunSuite.withFixture(SparkFunSuite.scala:149)

[info] at org.scalatest.FunSuiteLike.invokeWithFixture$1(FunSuiteLike.scala:184)

[info] at org.scalatest.FunSuiteLike.$anonfun$runTest$1(FunSuiteLike.scala:196)

[info] at org.scalatest.SuperEngine.runTestImpl(Engine.scala:289)

```

Closes #24998 from LiShuMing/SPARK-28202.

Authored-by: ShuMingLi <ming.moriarty@gmail.com>

Signed-off-by: jerryshao <jerryshao@tencent.com>

## What changes were proposed in this pull request?

This PR proposes to add `mapPartitionsInPandas` API to DataFrame by using existing `SCALAR_ITER` as below:

1. Filtering via setting the column

```python

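from pyspark.sql.functions import pandas_udf, PandasUDFType

df = spark.createDataFrame([(1, 21), (2, 30)], ("id", "age"))

# The decorator turns the generator function into an iterator-style Pandas UDF.
@pandas_udf(df.schema, PandasUDFType.SCALAR_ITER)
def filter_func(iterator):
    for pdf in iterator:
        # Keep only the rows whose id is 1; the output may be shorter than the input.
        yield pdf[pdf.id == 1]

df.mapPartitionsInPandas(filter_func).show()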

```

```

+---+---+
| id|age|
+---+---+
|  1| 21|
+---+---+

```

2. `DataFrame.loc`

```python

from pyspark.sql.functions import pandas_udf, PandasUDFType
import pandas as pd

df = spark.createDataFrame([['aa'], ['bb'], ['cc'], ['aa'], ['aa'], ['aa']], ["value"])

@pandas_udf(df.schema, PandasUDFType.SCALAR_ITER)
def filter_func(iterator):
    for pdf in iterator:
        # Select the rows whose value starts with 'a', keeping all columns.
        yield pdf.loc[pdf.value.str.contains('^a'), :]

df.mapPartitionsInPandas(filter_func).show()

```

```

+-----+
|value|
+-----+
|   aa|
|   aa|
|   aa|
|   aa|
+-----+

```

3. `pandas.melt`

```python

from pyspark.sql.functions import pandas_udf, PandasUDFType
import pandas as pd

df = spark.createDataFrame(
    pd.DataFrame({'A': {0: 'a', 1: 'b', 2: 'c'},
                  'B': {0: 1, 1: 3, 2: 5},
                  'C': {0: 2, 1: 4, 2: 6}}))

@pandas_udf("A string, variable string, value long", PandasUDFType.SCALAR_ITER)
def filter_func(iterator):
    for pdf in iterator:
        import pandas as pd
        # Unpivot columns B and C into (variable, value) rows: N rows in, 2N rows out.
        yield pd.melt(pdf, id_vars=['A'], value_vars=['B', 'C'])

df.mapPartitionsInPandas(filter_func).show()

```

```

+---+--------+-----+
|  A|variable|value|
+---+--------+-----+
|  a|       B|    1|
|  a|       C|    2|
|  b|       B|    3|
|  b|       C|    4|
|  c|       B|    5|
|  c|       C|    6|
+---+--------+-----+

```

The current limitation of `SCALAR_ITER` is that it doesn't allow the result to have a different length from the input, which is pretty critical in practice. For instance, we cannot simply filter by using Pandas APIs; we can only map N to N. This PR allows mapping N to M, like flatMap.

This API mimics `mapPartitions` but keeps the API shape of `SCALAR_ITER`, while allowing results of a different length.

### How is this implemented?

This PR mimics both `dapply` with Arrow optimization and Grouped Map Pandas UDF. On the Python execution side, it reuses the existing `SCALAR_ITER` code path.

Therefore, externally, we don't introduce any new type of Pandas UDF, but internally we use another evaluation type code `205` (`SQL_MAP_PANDAS_ITER_UDF`).

This approach is similar to the Pandas window function implementation with Grouped Aggregation Pandas UDFs: internally we have `203` (`SQL_WINDOW_AGG_PANDAS_UDF`), but externally we just share the same `GROUPED_AGG`.

## How was this patch tested?

Manually tested and unittests were added.

Closes #24997 from HyukjinKwon/scalar-udf-iter.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

The Kafka batch data source is using v1 at the moment. In this PR I've migrated it to v2. The majority of the change is moving code.

What this PR contains:

* useV1Sources usage fixed in `DataFrameReader` and `DataFrameWriter`

* `KafkaBatch` added to handle DSv2 batch reading

* `KafkaBatchWrite` added to handle DSv2 batch writing

* `KafkaBatchPartitionReader` extracted to share between batch and microbatch

* `KafkaDataWriter` extracted to share between batch, microbatch and continuous

* Batch related source/sink tests are now executing on v1 and v2 connectors

* A couple of classes are now hidden, some functions were moved, and a couple of minor fixes were made

## How was this patch tested?

Existing + added unit tests.

Closes #24738 from gaborgsomogyi/SPARK-23098.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In the migration PR of Kafka V2: ac16c9a9ef (r298470645)

We found that the useV1SourceList configurations (`spark.sql.sources.read.useV1SourceList` and `spark.sql.sources.write.useV1SourceList`) should apply to all data sources, not only to file source V2.

This PR fixes this in DataFrameWriter/DataFrameReader.
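The intended check can be sketched as follows. This is a simplified illustration, assuming the configuration value is a comma-separated list of source names matched case-insensitively; `use_v1_source` is a hypothetical helper, not the code in `DataFrameReader` or `DataFrameWriter`.

```python
def use_v1_source(provider: str, use_v1_source_list: str) -> bool:
    """Return True if `provider` is named in the comma-separated list.

    Assumption: names are compared case-insensitively after trimming.
    """
    listed = {
        name.strip().lower()
        for name in use_v1_source_list.split(",")
        if name.strip()
    }
    return provider.strip().lower() in listed
```

With this shape, any source (for example `kafka`) can be forced back to v1 by listing it, not only the file sources.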

## How was this patch tested?

Unit test

Closes #25004 from gengliangwang/reviseUseV1List.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The new R API of Arrow removed `as_tibble` as of 2ef96c8623. Arrow optimization for DataFrames in R doesn't work due to this change.

This can be tested as below, after installing the latest Arrow:

```

./bin/sparkR --conf spark.sql.execution.arrow.sparkr.enabled=true

```

```

> collect(createDataFrame(mtcars))

```

Before this PR:

```

> collect(createDataFrame(mtcars))
Error in get("as_tibble", envir = asNamespace("arrow")) :
  object 'as_tibble' not found

```

After:

```

> collect(createDataFrame(mtcars))
    mpg cyl  disp  hp drat    wt  qsec vs am gear carb
1  21.0   6 160.0 110 3.90 2.620 16.46  0  1    4    4
2  21.0   6 160.0 110 3.90 2.875 17.02  0  1    4    4
3  22.8   4 108.0  93 3.85 2.320 18.61  1  1    4    1
...

```

## How was this patch tested?

Manual test.

Closes #25012 from viirya/SPARK-28215.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

SPARK-23179 introduced a flag to control the behavior on decimal overflow: operators return `null` when `spark.sql.decimalOperations.nullOnOverflow` is true (the default and traditional Spark behavior), and throw an `ArithmeticException` when it is false (the behavior of the SQL standard and other DBs).

`MakeDecimal` has so far behaved inconsistently. In codegen mode it returned `null` like the other operators, but in interpreted mode it threw an `IllegalArgumentException`.

This PR aligns `MakeDecimal`'s behavior with that of the other operators as defined in SPARK-23179. Both modes now return `null` or throw an `ArithmeticException` according to the value of `spark.sql.decimalOperations.nullOnOverflow`.
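The two outcomes can be sketched in a few lines of Python. This is an illustration of the flag's semantics only, not Spark's implementation: `make_decimal` and its digit-count overflow check are assumptions, `None` stands in for SQL `null`, and Python's `ArithmeticError` stands in for Java's `ArithmeticException`.

```python
from decimal import Decimal
from typing import Optional


def make_decimal(unscaled: int, precision: int, scale: int,
                 null_on_overflow: bool = True) -> Optional[Decimal]:
    """Build a decimal from an unscaled integer, checking the precision."""
    digits = len(str(abs(unscaled)))
    if digits > precision:
        if null_on_overflow:
            # Flag is true: overflow yields null.
            return None
        # Flag is false: overflow raises an arithmetic error.
        raise ArithmeticError(
            f"Unscaled value {unscaled} exceeds precision {precision}")
    return Decimal(unscaled).scaleb(-scale)
```

The point of the change is that the same branch is taken regardless of whether the expression runs in codegen or interpreted mode.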

Credits for this PR to mickjermsurawong-stripe who pointed out the wrong behavior in #20350.

## How was this patch tested?

improved UTs

Closes #25010 from mgaido91/SPARK-28201.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

The documentation in `linalg.py` is not consistent. This PR makes the documentation uniform.

## How was this patch tested?

NA

Closes #25011 from mgaido91/SPARK-28170.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This is very similar to #23590.

`ByteBuffer.allocate` may throw `OutOfMemoryError` when the response is large and not enough memory is available. However, when this happens, `TransportClient.sendRpcSync` will just hang forever if the timeout is set to unlimited.

This PR catches `Throwable` and uses the error to complete `SettableFuture`.

## How was this patch tested?

I tested in my IDE by setting the value of `size` to -1 to verify the result. Without this patch, the call does not finish until the timeout expires (and may hang forever if the timeout is set to MAX_INT); with it, the expected `IllegalArgumentException` is caught.

```java

@Override
public void onSuccess(ByteBuffer response) {
  try {
    int size = response.remaining();
    ByteBuffer copy = ByteBuffer.allocate(size); // set size to -1 at runtime when debugging
    copy.put(response);
    // flip "copy" to make it readable
    copy.flip();
    result.set(copy);
  } catch (Throwable t) {
    // complete the future with the error so the caller does not hang
    result.setException(t);
  }
}

```

Closes #24964 from LantaoJin/SPARK-28160.

Lead-authored-by: LantaoJin <jinlantao@gmail.com>

Co-authored-by: lajin <lajin@ebay.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This PR adds two APIs to [SessionCatalog](df4cb471c9/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/catalog/SessionCatalog.scala):

```scala

def listTables(db: String, pattern: String, includeLocalTempViews: Boolean): Seq[TableIdentifier]

def listLocalTempViews(pattern: String): Seq[TableIdentifier]

```

These are needed because in some cases `listTables` does not need local temporary views, and in other cases only local temporary views need to be listed.
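The relationship between the two methods can be sketched with an in-memory stand-in. `TinyCatalog` is hypothetical and is not Spark's `SessionCatalog`; it also assumes glob-style patterns via `fnmatch`, which may differ from the pattern syntax Spark accepts.

```python
from fnmatch import fnmatchcase
from typing import Dict, List, Optional, Set, Tuple

# (database, name); the database is None for a local temporary view.
TableId = Tuple[Optional[str], str]


class TinyCatalog:
    """Hypothetical in-memory stand-in, not Spark's SessionCatalog."""

    def __init__(self, tables: Dict[str, Set[str]], temp_views: Set[str]):
        self.tables = tables
        self.temp_views = temp_views

    def list_local_temp_views(self, pattern: str) -> List[TableId]:
        # Only local temporary views, filtered by the pattern.
        return [(None, v) for v in sorted(self.temp_views)
                if fnmatchcase(v, pattern)]

    def list_tables(self, db: str, pattern: str,
                    include_local_temp_views: bool = True) -> List[TableId]:
        # Tables of the database, optionally followed by local temp views.
        found: List[TableId] = [
            (db, t) for t in sorted(self.tables.get(db, set()))
            if fnmatchcase(t, pattern)
        ]
        if include_local_temp_views:
            found += self.list_local_temp_views(pattern)
        return found


catalog = TinyCatalog({"db1": {"t1", "t2", "other"}}, {"tmp_t"})
```

Callers that only want persistent tables pass `include_local_temp_views=False`, and callers that only want views call `list_local_temp_views` directly.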

## How was this patch tested?

unit tests

Closes #24995 from wangyum/SPARK-28196.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

To make the change in #24972 smaller, this PR improves `SparkMetadataOperationSuite` to avoid creating new sessions for getSchemas/getTables/getColumns.

## How was this patch tested?

N/A

Closes #24985 from wangyum/SPARK-28184.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Currently, ORC's `inferSchema` is implemented as randomly choosing one ORC file and reading its schema.

This PR follows the behavior of Parquet: it implements schema merging by reading all ORC files in parallel through a Spark job.

Users can enable schema merging with `spark.read.option("mergeSchema", "true").orc("xxx")` or by setting `spark.sql.orc.mergeSchema` to `true`; the former takes precedence.
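The idea of merging per-file schemas, and of the read option overriding the session setting, can be sketched in plain Python. This is a deliberate simplification under stated assumptions: schemas are flat name-to-type dictionaries, any type mismatch is an error, and `merge_schemas` and `should_merge` are hypothetical helpers, not the code in this PR.

```python
from typing import Dict, Iterable, Optional

Schema = Dict[str, str]  # column name -> type name


def merge_schemas(schemas: Iterable[Schema]) -> Schema:
    """Union the columns of all file schemas, failing on conflicting types."""
    merged: Schema = {}
    for schema in schemas:
        for name, dtype in schema.items():
            if merged.setdefault(name, dtype) != dtype:
                raise ValueError(
                    f"Conflicting types for column {name!r}: "
                    f"{merged[name]} vs {dtype}")
    return merged


def should_merge(read_option: Optional[str], session_conf: str) -> bool:
    """The per-read option, when given, wins over the session setting."""
    chosen = read_option if read_option is not None else session_conf
    return chosen.lower() == "true"
```

Without merging, only one file's schema would be used, so a column such as `b` that appears in only some files could be missed.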

## How was this patch tested?

tested by UT OrcUtilsSuite.scala

Closes #24043 from WangGuangxin/SPARK-11412.

Lead-authored-by: wangguangxin.cn <wangguangxin.cn@gmail.com>

Co-authored-by: wangguangxin.cn <wangguangxin.cn@bytedance.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

SPARK-27534 did not address my own comments at https://github.com/WeichenXu123/spark/pull/8

It's better to push this in since the code is already cleaned up.

## How was this patch tested?

Unittests fixed

Closes #25003 from HyukjinKwon/SPARK-27534.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

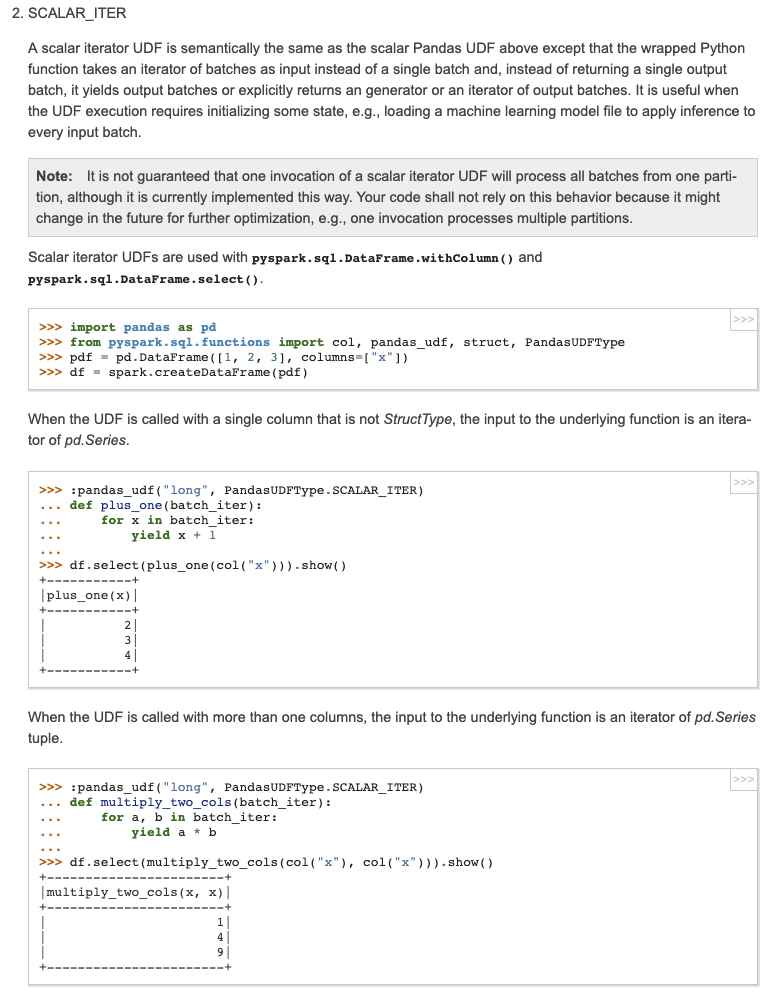

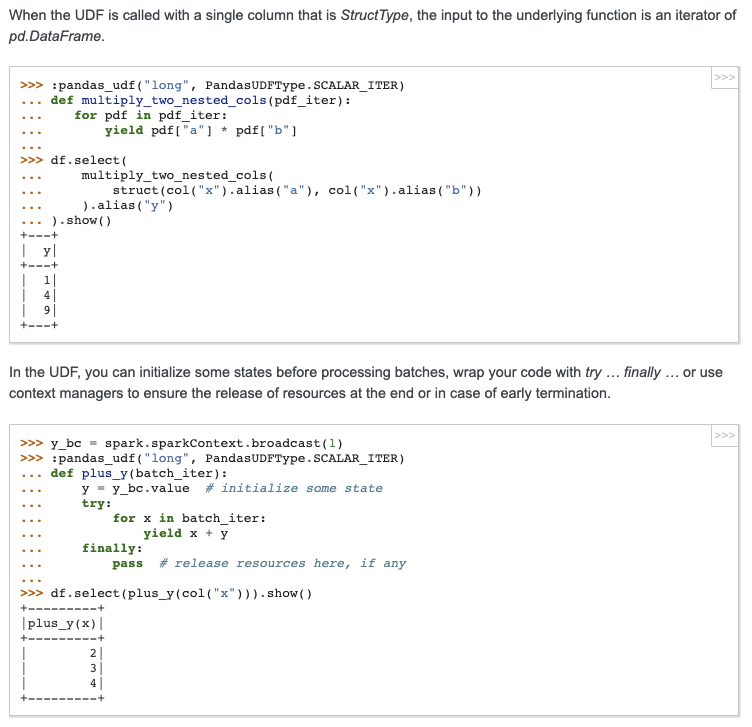

## What changes were proposed in this pull request?

Add docstring/doctest for `SCALAR_ITER` Pandas UDF. I explicitly mentioned that per-partition execution is an implementation detail, not guaranteed. I will submit another PR to add the same to user guide, just to keep this PR minimal.

I didn't add "doctest: +SKIP" in the first commit so it is easy to test locally.

cc: HyukjinKwon gatorsmile icexelloss BryanCutler WeichenXu123

## How was this patch tested?

doctest

Closes #25005 from mengxr/SPARK-28056.2.

Authored-by: Xiangrui Meng <meng@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

This is the first part of [SPARK-27396](https://issues.apache.org/jira/browse/SPARK-27396). This is the minimum set of changes necessary to support a pluggable back end for columnar processing. Follow on JIRAs would cover removing some of the duplication between functionality in this patch and functionality currently covered by things like ColumnarBatchScan.

## How was this patch tested?

I added in a new unit test to cover new code not really covered in other places.

I also did manual testing by implementing two plugins/extensions that take advantage of the new APIs to allow for columnar processing for some simple queries. One version runs on the [CPU](https://gist.github.com/revans2/c3cad77075c4fa5d9d271308ee2f1b1d). The other version runs on a GPU, but because it has unreleased dependencies I will not include a link to it yet.

I would expect to add the CPU version as an example, along with other documentation, in a follow-on JIRA.

This is contributed on behalf of NVIDIA Corporation.

Closes #24795 from revans2/columnar-basic.

Authored-by: Robert (Bobby) Evans <bobby@apache.org>

Signed-off-by: Thomas Graves <tgraves@apache.org>