### What changes were proposed in this pull request?

Fitting an ALS model can fail due to nondeterministic input data. Currently the failure surfaces as an ArrayIndexOutOfBoundsException, which does not explain to end users what went wrong during fitting.

This patch catches this exception and rethrows a more descriptive one when the input data is nondeterministic.

Because we may not know exactly the output deterministic level of RDDs produced by user code, this patch also adds a note about the deterministic level of the training data to the Scala/Python/R ALS documentation.

### Why are the changes needed?

An ArrayIndexOutOfBoundsException was observed while fitting an ALS model. It was caused by a mismatch between the in/out user/item blocks when computing ratings.

If the output of the training RDD is nondeterministic and a fetch failure happens, rerunning part of the training RDD can produce inconsistent user/item blocks.

This patch is needed to notify users when ALS fitting fails on nondeterministic input.

### Does this PR introduce any user-facing change?

Yes. Previously, when fitting an ALS model on nondeterministic input data, users would see an ArrayIndexOutOfBoundsException caused by a mismatch between the in/out user/item blocks if a rerun happened.

After this patch, a SparkException with a clearer message is thrown, wrapping the original ArrayIndexOutOfBoundsException.
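The shape of this fix (catch the low-level index error and rethrow a descriptive exception that wraps it) can be sketched in Python as below. This is a toy model rather than Spark's ALS code: `NondeterministicInputError` stands in for `SparkException`, and `gather_factors` is a hypothetical helper that resolves out-block indices against an in-block built by a different attempt.

```python
class NondeterministicInputError(RuntimeError):
    """Hypothetical stand-in for the SparkException thrown by the patch."""


def gather_factors(in_block_factors, out_block_indices):
    """Look up factor vectors for the local indices recorded in an out-block.

    If the in-block and out-block were built from different attempts over
    nondeterministic input, an index may point past the end of the in-block.
    """
    try:
        return [in_block_factors[i] for i in out_block_indices]
    except IndexError as e:
        # Wrap the low-level error so users see what actually went wrong.
        raise NondeterministicInputError(
            "ALS failed: in/out blocks do not match. This can happen when the "
            "training data is nondeterministic and part of it was recomputed."
        ) from e


# In-block built by the first attempt (3 users); out-block from a rerun that saw 5.
in_block = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6]]
try:
    gather_factors(in_block, [0, 4])
except NondeterministicInputError as err:
    print(type(err.__cause__).__name__)  # IndexError
```

Chaining with `from e` keeps the original error available as `__cause__`, mirroring how the patch keeps the ArrayIndexOutOfBoundsException wrapped inside the new exception.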

### How was this patch tested?

Tested on development cluster.

Closes #25789 from viirya/als-indeterminate-input.

Lead-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Co-authored-by: Liang-Chi Hsieh <liangchi@uber.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

Support multiple columns in Bucketizer on the Python side.
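A minimal pure-Python sketch of multi-column bucketing semantics, assuming the Python side mirrors the Scala multi-column params (`inputCols`, `outputCols`, `splitsArray`, with one splits list per input column). Buckets are half-open `[x, y)` except the last, which also includes its upper bound; the helper names are hypothetical and invalid-value handling (`handleInvalid`) is left out.

```python
import math
from bisect import bisect_right


def bucket_index(value, splits):
    """Return the bucket for `value`; buckets are [s0, s1), ..., [s_{n-1}, s_n]."""
    if value == splits[-1]:
        # The last bucket also includes its upper bound.
        return float(len(splits) - 2)
    if not splits[0] <= value < splits[-1]:
        raise ValueError(f"{value} is outside the splits {splits}")
    return float(bisect_right(splits, value) - 1)


def bucketize_columns(rows, input_cols, output_cols, splits_array):
    """Bucketize several columns at once, one splits list per input column."""
    result = []
    for row in rows:
        out = dict(row)
        for in_col, out_col, splits in zip(input_cols, output_cols, splits_array):
            out[out_col] = bucket_index(row[in_col], splits)
        result.append(out)
    return result


rows = [{"a": -0.5, "b": 3.0}, {"a": 0.0, "b": 12.0}]
print(bucketize_columns(
    rows, ["a", "b"], ["a_bucket", "b_bucket"],
    [[-math.inf, 0.0, math.inf], [0.0, 5.0, 10.0, math.inf]]))
```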

### Why are the changes needed?

Bucketizer should support multiple columns, as it does on the Scala side.

### Does this PR introduce any user-facing change?

Yes, this PR adds a new Python API.

### How was this patch tested?

Added test suites.

Closes #25801 from zhengruifeng/20542_py.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

Following the Scala `OneVsRestParams` implementation, move `setClassifier` from `OneVsRestParams` to `OneVsRest` in PySpark.

### Why are the changes needed?

1. Maintain the parity between scala and python code.

2. ```Classifier``` can only be set in the estimator.

### Does this PR introduce any user-facing change?

Yes.

Previous behavior: ```OneVsRestModel``` has method ```setClassifier```

Current behavior: ```setClassifier``` is removed from ```OneVsRestModel```. ```classifier``` can only be set in ```OneVsRest```.

### How was this patch tested?

Use existing tests

Closes #25715 from huaxingao/spark-28969.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

Reorganize the packages of DS v2 interfaces/classes:

1. `org.apache.spark.sql.connector.catalog`: put `TableCatalog`, `Table` and other related interfaces/classes

2. `org.apache.spark.sql.connector.expression`: put `Expression`, `Transform` and other related interfaces/classes

3. `org.apache.spark.sql.connector.read`: put `ScanBuilder`, `Scan` and other related interfaces/classes

4. `org.apache.spark.sql.connector.write`: put `WriteBuilder`, `BatchWrite` and other related interfaces/classes

### Why are the changes needed?

Data Source V2 has evolved a lot. It's a bit weird that `Expression` is in `org.apache.spark.sql.catalog.v2` while `Table` is in `org.apache.spark.sql.sources.v2`.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

existing tests

Closes #25700 from cloud-fan/package.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

JIRA: https://issues.apache.org/jira/browse/SPARK-29050

- Changed 'a hdfs' to 'an hdfs'

- Changed 'an unique' to 'a unique'

- Changed 'an url' to 'a url'

- Changed 'a error' to 'an error'

Closes #25756 from dengziming/feature_fix_typos.

Authored-by: dengziming <dengziming@growingio.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR proposes to allow `bytes` as an acceptable type for the binary type in `createDataFrame`.

### Why are the changes needed?

`bytes` is the standard type for binary data in Python. This should be respected on the PySpark side.

### Does this PR introduce any user-facing change?

Yes, _when the specified type is binary_, we will allow `bytes` as a binary type. Previously this was allowed in neither Python 2 nor Python 3, as shown below:

```python
spark.createDataFrame([[b"abcd"]], "col binary")
```

In Python 3:

```
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
  File "/.../spark/python/pyspark/sql/session.py", line 787, in createDataFrame
    rdd, schema = self._createFromLocal(map(prepare, data), schema)
  File "/.../spark/python/pyspark/sql/session.py", line 442, in _createFromLocal
    data = list(data)
  File "/.../spark/python/pyspark/sql/session.py", line 769, in prepare
    verify_func(obj)
  File "/.../forked/spark/python/pyspark/sql/types.py", line 1403, in verify
    verify_value(obj)
  File "/.../spark/python/pyspark/sql/types.py", line 1384, in verify_struct
    verifier(v)
  File "/.../spark/python/pyspark/sql/types.py", line 1403, in verify
    verify_value(obj)
  File "/.../spark/python/pyspark/sql/types.py", line 1397, in verify_default
    verify_acceptable_types(obj)
  File "/.../spark/python/pyspark/sql/types.py", line 1282, in verify_acceptable_types
    % (dataType, obj, type(obj))))
TypeError: field col: BinaryType can not accept object b'abcd' in type <class 'bytes'>
```

In Python 2:

```
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
  File "/.../spark/python/pyspark/sql/session.py", line 787, in createDataFrame
    rdd, schema = self._createFromLocal(map(prepare, data), schema)
  File "/.../spark/python/pyspark/sql/session.py", line 442, in _createFromLocal
    data = list(data)
  File "/.../spark/python/pyspark/sql/session.py", line 769, in prepare
    verify_func(obj)
  File "/.../spark/python/pyspark/sql/types.py", line 1403, in verify
    verify_value(obj)
  File "/.../spark/python/pyspark/sql/types.py", line 1384, in verify_struct
    verifier(v)
  File "/.../spark/python/pyspark/sql/types.py", line 1403, in verify
    verify_value(obj)
  File "/.../spark/python/pyspark/sql/types.py", line 1397, in verify_default
    verify_acceptable_types(obj)
  File "/.../spark/python/pyspark/sql/types.py", line 1282, in verify_acceptable_types
    % (dataType, obj, type(obj))))
TypeError: field col: BinaryType can not accept object 'abcd' in type <type 'str'>
```

Since this failed in both versions before, allowing it won't break anything.
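The change boils down to widening the set of Python types accepted for a binary field. Below is a simplified, hypothetical version of the `verify_acceptable_types` check seen in the tracebacks; the table and function are illustrative stand-ins, not PySpark's code. It also explains the Python 2 output: there `bytes` is just an alias of `str`, so `b"abcd"` is reported as `<type 'str'>`.

```python
# Simplified, hypothetical table of accepted Python types per Spark SQL type.
_ACCEPTABLE_TYPES = {
    "binary": (bytearray, bytes),  # `bytes` is now accepted alongside `bytearray`
    "string": (str,),
    "long": (int,),
}


def verify_acceptable_types(field_name, data_type, obj):
    """Raise TypeError if `obj` is not an accepted Python type for `data_type`."""
    if not isinstance(obj, _ACCEPTABLE_TYPES[data_type]):
        raise TypeError(
            "field %s: %s can not accept object %r in type %s"
            % (field_name, data_type, obj, type(obj)))
```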

### How was this patch tested?

Unit tests were added, and the change was also tested manually as below.

```bash
./run-tests --python-executables=python2,python3 --testnames "pyspark.sql.tests.test_serde"
```

Closes #25749 from HyukjinKwon/SPARK-29041.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

- Remove SQLContext.createExternalTable and Catalog.createExternalTable, deprecated in favor of createTable since 2.2.0, plus tests of deprecated methods

- Remove HiveContext, deprecated in 2.0.0, in favor of `SparkSession.builder.enableHiveSupport`

- Remove deprecated KinesisUtils.createStream methods, deprecated in 2.2.0, plus tests of deprecated methods

- Remove deprecated MLlib (not Spark ML) linear method support, mostly utility constructors and 'train' methods, and associated docs. This includes methods in LinearRegression, LogisticRegression, Lasso, RidgeRegression. These have been deprecated since 2.0.0

- Remove deprecated PySpark MLlib linear method support, including LogisticRegressionWithSGD, LinearRegressionWithSGD, LassoWithSGD

- Remove 'runs' argument in KMeans.train() method, which has been a no-op since 2.0.0

- Remove deprecated ChiSqSelector isSorted protected method

- Remove deprecated 'yarn-cluster' and 'yarn-client' master arguments in favor of master 'yarn' with deploy mode 'cluster', etc.

Notes:

- I was not able to remove the deprecated DataFrameReader.json(RDD) in favor of DataFrameReader.json(Dataset); the former was deprecated in 2.2.0, but it is still needed to support PySpark's .json() method, which can't use a Dataset.

- It looks like SQLContext.createExternalTable was not actually deprecated in PySpark, but it almost certainly was meant to be; Catalog.createExternalTable was.

- I afterwards noticed that the toDegrees, toRadians functions were almost fully removed in SPARK-25908, but Felix suggested keeping just the R version as they hadn't been technically deprecated. I'd like to revisit that. Do we really want the inconsistency? I'm not against reverting it again, but that would imply leaving SQLContext.createExternalTable in PySpark too, which seems weird.

- I *kept* LogisticRegressionWithSGD, LinearRegressionWithSGD, LassoWithSGD, RidgeRegressionWithSGD in PySpark, though deprecated, as they are hard to remove (still used by StreamingLogisticRegressionWithSGD?) and they are not fully removed in Scala. Maybe they should not have been deprecated.

### Why are the changes needed?

Deprecated items are easiest to remove in a major release, so we should remove as many as possible for Spark 3. This does not target items deprecated as 'recently' as Spark 2.3, which is still only 18 months old.

### Does this PR introduce any user-facing change?

Yes, in that deprecated items are removed from some public APIs.

### How was this patch tested?

Existing tests.

Closes #25684 from srowen/SPARK-28980.

Lead-authored-by: Sean Owen <sean.owen@databricks.com>

Co-authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

Add `HasNumFeatures` on the Scala side, with `1<<18` as the default value.

### Why are the changes needed?

`HasNumFeatures` already exists on the Python side, so it is reasonable to keep the two sides in sync.

I did not find any other similar places.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing test suites.

Closes #25671 from zhengruifeng/add_HasNumFeatures.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

`DataFrameReader.jdbc()` accepts a partition column of numeric, date or timestamp type, according to the implementation in `JDBCRelation.scala`. Update the scaladoc accordingly, so that it also matches the documentation in `sql-data-sources-jdbc.md`.
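Why numeric, date and timestamp columns all work can be seen from how a partitioned JDBC read splits the column range into `numPartitions` strides, each becoming a WHERE clause. The following is a simplified Python sketch of that idea, not Spark's `JDBCRelation` code: the function name is hypothetical, dates are mapped to day ordinals so the same arithmetic applies, and timestamps are left out for brevity.

```python
import datetime


def partition_predicates(column, lower, upper, num_partitions):
    """Split [lower, upper) into `num_partitions` WHERE clauses.

    `lower`/`upper` may be ints or datetime.date values; dates are mapped to
    day ordinals so the same stride arithmetic applies.
    """
    if num_partitions < 2:
        return [None]  # a single partition reads the whole table unfiltered
    is_date = isinstance(lower, datetime.date)
    to_num = (lambda d: d.toordinal()) if is_date else (lambda x: x)
    to_sql = (lambda n: "'%s'" % datetime.date.fromordinal(n)) if is_date else str
    lo, hi = to_num(lower), to_num(upper)
    stride = hi // num_partitions - lo // num_partitions
    predicates, current = [], lo
    for i in range(num_partitions):
        l_bound = f"{column} >= {to_sql(current)}" if i != 0 else None
        current += stride
        u_bound = f"{column} < {to_sql(current)}" if i != num_partitions - 1 else None
        if u_bound is None:
            predicates.append(l_bound)  # last partition is open-ended upward
        elif l_bound is None:
            # The first partition is open-ended downward and also picks up NULLs.
            predicates.append(f"{u_bound} or {column} is null")
        else:
            predicates.append(f"{l_bound} AND {u_bound}")
    return predicates
```

Because the first and last clauses are open-ended, rows outside `[lower, upper)` are still read; the bounds only decide the stride, not a filter.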

### Why are the changes needed?

The scaladoc is incorrect.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

N/A

Closes #25687 from srowen/SPARK-28977.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

The Experimental and Evolving annotations are both (like Unstable) used to express that an API may change. However, many things in the code have been marked that way since as early as Spark 1.x. Per the dev thread, anything introduced at or before Spark 2.3.0 is pretty much 'stable', in that it would not change without a deprecation cycle. Therefore I'd like to remove most of these annotations, remove the `:: Experimental ::` scaladoc tag too, and do likewise for Python and R.

The changes below can be summarized as:

- Generally, anything introduced at or before Spark 2.3.0 is no longer marked as Evolving or Experimental

- Obviously experimental items like DSv2, Barrier mode, ExperimentalMethods are untouched

- I _did_ unmark a few MLlib classes introduced in 2.4, as I am quite confident they're not going to change (e.g. KolmogorovSmirnovTest, PowerIterationClustering)

It's a big change to review, so I'd suggest scanning the list of _files_ changed to see if any area seems like it should remain partly experimental and examine those.

### Why are the changes needed?

Many of these annotations are incorrect; the APIs are de facto stable. Leaving them also makes legitimate usages of the annotations less meaningful.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing tests.

Closes #25558 from srowen/SPARK-28855.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

This PR proposes to match the test with branch-2.4. See https://github.com/apache/spark/pull/25593#discussion_r318109047

It seems that using `SparkSession.builder` with Spark conf can affect other tests.

### Why are the changes needed?

To match branch-2.4 and to make backporting easier.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Test was fixed.

Closes #25603 from HyukjinKwon/SPARK-28881-followup.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Change the default value of `spark.sql.crossJoin.enabled` to true.

### Why are the changes needed?

For an implicit cross join, we can set up a watchdog to cancel it if it runs for a long time.

When `spark.sql.crossJoin.enabled` is false, because `CheckCartesianProducts` is implemented in the logical plan stage, it may report errors that do not match how the query is actually executed, which can confuse end users:

* It's done in the logical phase, so we may fail queries that could be executed via a broadcast join, which is very fast.

* If we moved the check to the physical phase, a query might succeed at first and then start to fail when the table grows larger (other people insert data into the table). This can be quite confusing.

* The CROSS JOIN syntax doesn't work well if join reordering happens.

* Some non-equi joins generate a plan using a Cartesian product, but `CheckCartesianProducts` does not detect them and raise an error.

So, to address this in a simpler way, we turn off this cross-join error by default.

For reference, here are some cases where the error does not match the actual execution:

Given:

```scala
spark.range(2).createOrReplaceTempView("sm1") // can be broadcast
spark.range(50000000).createOrReplaceTempView("bg1") // cannot be broadcast
spark.range(60000000).createOrReplaceTempView("bg2") // cannot be broadcast
```

1) Some joins could be converted to a broadcast nested loop join, but `CheckCartesianProducts` raises an error, e.g.

```sql
select sm1.id, bg1.id from bg1 join sm1 where sm1.id < bg1.id
```

2) Some joins run as a Cartesian product join, but `CheckCartesianProducts` does NOT raise an error, e.g.

```sql
select bg1.id, bg2.id from bg1 join bg2 where bg1.id < bg2.id
```
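The first query is cheap despite being a non-equi join because the two-row side can be broadcast and compared against each row of the large side. A minimal pure-Python sketch of a broadcast nested loop join, with hypothetical names and small ranges standing in for `sm1` and `bg1`:

```python
def broadcast_nested_loop_join(streamed, broadcast, condition):
    """Join every streamed row against every row of the (small) broadcast side.

    Cost is len(streamed) * len(broadcast) comparisons, which is acceptable
    only when the broadcast side is tiny.
    """
    small = list(broadcast)  # materialized once, like a broadcast variable
    for left in streamed:
        for right in small:
            if condition(left, right):
                yield left, right


sm1 = range(2)  # stands in for spark.range(2)
bg1 = range(5)  # stands in for a much larger table
pairs = list(broadcast_nested_loop_join(bg1, sm1, lambda bg, sm: sm < bg))
```

The comparison count is the product of the two sides' sizes, which is why this plan is only reasonable when one side is tiny; with two large tables, as in the second query, the same work becomes a full Cartesian product.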

### Does this PR introduce any user-facing change?

### How was this patch tested?

Closes #25520 from WeichenXu123/SPARK-28621.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR proposes to add a test case for:

```bash
./bin/pyspark --conf spark.driver.maxResultSize=1m
spark.conf.set("spark.sql.execution.arrow.enabled",True)
```

```python
spark.range(10000000).toPandas()
```

```
Empty DataFrame
Columns: [id]
Index: []
```

which can produce partial results (see https://github.com/apache/spark/pull/25593#issuecomment-525153808). This regression was introduced between Spark 2.3 and Spark 2.4, and was later fixed by accident.

### Why are the changes needed?

To prevent the same regression in the future.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Test was added.

Closes #25594 from HyukjinKwon/SPARK-28881.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Expose the newly added tree-based transformation on the Python side.
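`leafCol` outputs, for each tree, the index of the leaf a row ends up in. A toy Python sketch of that lookup, assuming a hypothetical nested-dict tree where a row goes left when `feature <= threshold`; the leaf ids here are simply labels, and Spark's own leaf numbering may differ.

```python
def leaf_index(tree, features):
    """Follow splits from the root and return the id of the leaf reached."""
    node = tree
    while "leaf" not in node:
        go_left = features[node["feature"]] <= node["threshold"]
        node = node["left"] if go_left else node["right"]
    return node["leaf"]


tree = {
    "feature": 0, "threshold": 0.5,
    "left": {"leaf": 0},
    "right": {
        "feature": 1, "threshold": 2.0,
        "left": {"leaf": 1},
        "right": {"leaf": 2},
    },
}
# An ensemble produces one such index per tree, e.g. a single-leaf second tree.
forest = [tree, {"leaf": 0}]
print([leaf_index(t, [0.9, 3.0]) for t in forest])
```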

### Why are the changes needed?

function parity

### Does this PR introduce any user-facing change?

Yes, this adds `setLeafCol` and `getLeafCol` on the Python side.

### How was this patch tested?

Added tests and ran local tests.

Closes #25566 from zhengruifeng/py_tree_path.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>


### What changes were proposed in this pull request?

The Spark ML writer gets the Hadoop configuration from the session state instead of the SparkContext.


### Why are the changes needed?

To allow multiple sessions in the same SparkContext to have different Hadoop configurations.


### Does this PR introduce any user-facing change?


No

### How was this patch tested?


Tested with `test_default_read_write` in `pyspark.ml.tests.test_persistence.PersistenceTest`.

Closes #25505 from helenyugithub/SPARK-28776.

Authored-by: heleny <heleny@palantir.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

The parameter docstring of the function `format_string` was changed from _col_, _d_ to _format_, _cols_, which is what the actual function declaration states.
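`format_string(format, *cols)` applies a printf-style `format` to the values of `cols`, row by row. The pure-Python analogue below is a hypothetical helper over plain lists, not the PySpark function; note that Spark formats on the JVM (Java-style format strings), so details can differ from Python's `%` operator.

```python
def format_string(fmt, *cols):
    """Format each row's values (one value per column) with a printf-style string."""
    return [fmt % row for row in zip(*cols)]


print(format_string("%d %s", [5, 7], ["hello", "world"]))
```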

### Why are the changes needed?

The parameters stated in the documentation were inaccurate.

### Does this PR introduce any user-facing change?

Yes.

**BEFORE**

**AFTER**

### How was this patch tested?

N/A: documentation only


Closes #25506 from darrentirto/SPARK-28777.

Authored-by: darrentirto <darrentirto@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>


### What changes were proposed in this pull request?


This PR proposes to fix both tests below:

```
======================================================================
FAIL: test_raw_and_probability_prediction (pyspark.ml.tests.test_algorithms.MultilayerPerceptronClassifierTest)
----------------------------------------------------------------------
Traceback (most recent call last):
  File "/Users/dongjoon/APACHE/spark-master/python/pyspark/ml/tests/test_algorithms.py", line 89, in test_raw_and_probability_prediction
    self.assertTrue(np.allclose(result.rawPrediction, expected_rawPrediction, atol=1E-4))
AssertionError: False is not true
```

```
File "/Users/dongjoon/APACHE/spark-master/python/pyspark/mllib/clustering.py", line 386, in __main__.GaussianMixtureModel
Failed example:
    abs(softPredicted[0] - 1.0) < 0.001
Expected:
    True
Got:
    False
**********************************************************************
File "/Users/dongjoon/APACHE/spark-master/python/pyspark/mllib/clustering.py", line 388, in __main__.GaussianMixtureModel
Failed example:
    abs(softPredicted[1] - 0.0) < 0.001
Expected:
    True
Got:
    False
```

so that they pass on JDK 11.

The root cause seems to be that float values are interpreted differently via Py4J. This issue was also found previously in https://github.com/apache/spark/pull/25132.

When floats are transferred from Python to the JVM, the values are sent as-is. Python floats are not "precise" due to their own limitations (see https://docs.python.org/3/tutorial/floatingpoint.html).

For some reason, the floats from Python differ between JDK 8 and JDK 11; their exact values are explicitly not guaranteed anyway.

This seems to be why only some PySpark tests involving floats fail.

So, this PR fixes them by increasing the tolerance in the identified PySpark test cases.
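Why a looser tolerance is the appropriate fix can be seen in plain Python. The `all_close` helper is a hypothetical illustration that compares with an absolute tolerance only, in the spirit of the `np.allclose(..., atol=1E-4)` call in the failing test (NumPy additionally applies a relative tolerance by default).

```python
import math

# Binary floating point cannot represent 0.1, 0.2 or 0.3 exactly.
print(0.1 + 0.2 == 0.3)  # False
print(repr(0.1 + 0.2))   # 0.30000000000000004


def all_close(actual, expected, atol):
    """Element-wise |a - e| <= atol, similar in spirit to np.allclose(..., atol=atol)."""
    return len(actual) == len(expected) and all(
        math.isclose(a, e, rel_tol=0.0, abs_tol=atol)
        for a, e in zip(actual, expected))
```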

### Why are the changes needed?


To fully support JDK 11. See, for instance, https://github.com/apache/spark/pull/25443 and https://github.com/apache/spark/pull/25423 for ongoing efforts.

### Does this PR introduce any user-facing change?


No.

### How was this patch tested?


Manually tested as described in JIRAs:

```
$ build/sbt -Phadoop-3.2 test:package
$ python/run-tests --testnames 'pyspark.ml.tests.test_algorithms' --python-executables python
```

```
$ build/sbt -Phadoop-3.2 test:package
$ python/run-tests --testnames 'pyspark.mllib.clustering' --python-executables python
```

Closes #25475 from HyukjinKwon/SPARK-28735.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Leave `shared.py` untouched. Move Python `DecisionTreeParams` to `regression.py`.

## How was this patch tested?

Use existing tests

Closes #25406 from huaxingao/spark-28243.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

A GROUPED_AGG pandas Python UDF does not work without a GROUP BY clause, e.g. `select udf(id) from table`.

This is inconsistent with aggregate functions such as sum and count, and with Dataset APIs such as `df.agg(udf(df['id']))`.

When we parse a UDF (or an aggregate function) like that from SQL syntax, it appears as a function in a Project. The `GlobalAggregates` rule in the analyzer turns such a Project into an Aggregate by looking for aggregate expressions. This rule should also look for GROUPED_AGG pandas Python UDFs.
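A toy Python model of the analyzer change: a Project whose expressions contain an aggregate, now including a GROUPED_AGG pandas UDF, is rewritten into a global Aggregate. The `Expr` class, the tuple plan representation and the function names are hypothetical; Spark's actual rule lives in the Scala analyzer and works on real logical plans.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Expr:
    name: str
    is_aggregate: bool = False        # e.g. sum(), count()
    is_grouped_agg_udf: bool = False  # a GROUPED_AGG pandas UDF
    children: List["Expr"] = field(default_factory=list)


def contains_aggregate(expr):
    """True if `expr` or any child is an aggregate, including grouped-agg UDFs."""
    if expr.is_aggregate or expr.is_grouped_agg_udf:
        return True
    return any(contains_aggregate(c) for c in expr.children)


def global_aggregates(plan):
    """Rewrite a ("Project", exprs) plan containing aggregates into an Aggregate."""
    kind, exprs = plan
    if kind == "Project" and any(contains_aggregate(e) for e in exprs):
        return ("Aggregate", exprs)
    return plan
```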

## How was this patch tested?

Added tests.

Closes #25352 from viirya/SPARK-28422.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

The mapping of Spark schema to Avro schema is many-to-many. (See https://spark.apache.org/docs/latest/sql-data-sources-avro.html#supported-types-for-spark-sql---avro-conversion)

The default schema mapping might not be exactly what users want. For example, by default, a "string" column is always written as "string" Avro type, but users might want to output the column as "enum" Avro type.

With PR https://github.com/apache/spark/pull/21847, Spark supports user-specified schema in the batch writer.

For the function `to_avro`, we should support user-specified output schema as well.
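As an illustration of a user-specified output schema, the JSON below declares an Avro enum for a string column; the column name and symbols are hypothetical. In PySpark the JSON string would be passed to `to_avro` as its schema argument (assumed here to be `jsonFormatSchema`, e.g. `to_avro(df.suit, suit_schema)`); check the API docs of your Spark version. Since an Avro enum only accepts its declared symbols, the helper checks a column's values first.

```python
import json

# Hypothetical example: write a string "suit" column as an Avro enum.
suit_schema = json.dumps({
    "type": "enum",
    "name": "Suit",
    "symbols": ["SPADES", "HEARTS", "DIAMONDS", "CLUBS"],
})


def fits_enum(values, schema_json):
    """Avro enums only accept their declared symbols; check a column's values."""
    symbols = set(json.loads(schema_json)["symbols"])
    return all(v in symbols for v in values)
```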

## How was this patch tested?

Unit test.

Closes #25419 from gengliangwang/to_avro.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

In this PR, we implement a complete process of GPU-aware resource scheduling in Standalone. The whole process looks like this: a Worker sets up isolated resources when it starts up and registers with the Master along with its resources. The Master then picks usable Workers according to the driver's/executor's resource requirements and launches the driver/executor on them. The Worker launches the driver/executor after preparing a resources file, which is created under the driver's/executor's working directory with the specified resource addresses (given by the Master). When a driver/executor finishes, its resources can be recycled to the Worker. Finally, if a Worker stops, it should always release its resources first.

For the case where Workers and Drivers in **client** mode run on the same host, we introduce a config option named `spark.resources.coordinate.enable` (default true) to indicate whether Spark should coordinate resources for the user. If `spark.resources.coordinate.enable=false`, the user is responsible for configuring different resources for Workers and Drivers when using a resourcesFile or discovery script. If true, Spark helps the user assign different resources to Workers and Drivers.

The solution for Spark to coordinate resources among Workers and Drivers is:

Generally, use a shared file named *____allocated_resources____.json* to sync allocated resource info among Workers and Drivers on the same host.

After a Worker or Driver has found all resources using the configured resourcesFile and/or discovery script during launching, it should filter out available resources by excluding resources already allocated in *____allocated_resources____.json* and acquire resources from the available ones according to its own requirements. After that, it should write its allocated resources along with its process id (pid) into *____allocated_resources____.json*. The pid (proposed by tgravescs) is used here to check whether the allocated resources are still valid in case a Worker or Driver crashes and doesn't release its resources properly. When a Worker or Driver finishes normally, it always cleans up its own allocated resources in *____allocated_resources____.json*.

Note that we always acquire a file lock before any access to the file *____allocated_resources____.json* and release the lock afterwards.
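The coordination protocol above can be sketched in Python as follows. This is an illustration under assumptions, not Spark's Scala implementation: it is Unix-only (`fcntl.flock`), the file names and function names are hypothetical, and allocations are stored as a JSON map from pid to resource addresses. Entries whose pid no longer refers to a live process are discarded, which is how resources leaked by a crashed Worker or Driver get reclaimed.

```python
import fcntl
import json
import os
import tempfile
from contextlib import contextmanager


@contextmanager
def file_lock(lock_path):
    """Hold an exclusive advisory lock for the duration of the block (Unix only)."""
    with open(lock_path, "w") as f:
        fcntl.flock(f, fcntl.LOCK_EX)
        try:
            yield
        finally:
            fcntl.flock(f, fcntl.LOCK_UN)


def pid_alive(pid):
    try:
        os.kill(pid, 0)  # signal 0 only checks that the process exists
    except ProcessLookupError:
        return False
    except PermissionError:
        return True  # exists, but owned by another user
    return True


def _load(alloc_path):
    if not os.path.exists(alloc_path):
        return {}
    with open(alloc_path) as f:
        return json.load(f)


def acquire(alloc_path, lock_path, discovered, amount, pid=None):
    """Claim `amount` addresses from `discovered` not held by a live process."""
    pid = os.getpid() if pid is None else pid
    with file_lock(lock_path):
        # Drop entries of crashed processes that never released their resources.
        allocated = {p: a for p, a in _load(alloc_path).items() if pid_alive(int(p))}
        taken = {addr for addrs in allocated.values() for addr in addrs}
        free = [a for a in discovered if a not in taken]
        if len(free) < amount:
            raise RuntimeError("not enough free resources")
        allocated[str(pid)] = free[:amount]
        with open(alloc_path, "w") as f:
            json.dump(allocated, f)
        return free[:amount]


def release(alloc_path, lock_path, pid=None):
    """Remove this process's entry, as a normally exiting Worker/Driver would."""
    pid = os.getpid() if pid is None else pid
    with file_lock(lock_path):
        allocated = _load(alloc_path)
        allocated.pop(str(pid), None)
        with open(alloc_path, "w") as f:
            json.dump(allocated, f)


workdir = tempfile.mkdtemp()
alloc_file = os.path.join(workdir, "allocated_resources.json")
lock_file = os.path.join(workdir, "allocated_resources.lock")
mine = acquire(alloc_file, lock_file, ["0", "1", "2", "3"], amount=2)
```

Keeping the lock on a separate file means the JSON file can be rewritten freely while the lock is held.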

Furthermore, we append resource info to `WorkerSchedulerStateResponse` to work around the Master change behaviour in HA mode.

## How was this patch tested?

Added unit tests in WorkerSuite, MasterSuite, SparkContextSuite.

Manually tested with client/cluster mode (e.g. multiple workers) in a single-node Standalone cluster.

Closes #25047 from Ngone51/SPARK-27371.

Authored-by: wuyi <ngone_5451@163.com>

Signed-off-by: Thomas Graves <tgraves@apache.org>

## What changes were proposed in this pull request?

Currently, batch DataFrames always change the schema to nullable automatically (see this line: 325bc8e9c6/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/DataSource.scala (L399)). However, the streaming file source is missing this.

This PR updates the streaming file source schema to force it to be nullable. I also added a flag `spark.sql.streaming.fileSource.schema.forceNullable` to disable this change, since some users may rely on the old behavior.
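A toy Python model of what forcing nullability means: every field, including fields of nested structs, is marked nullable unless the flag opts out. The `Field`/`Struct` classes are hypothetical stand-ins for Spark's `StructField`/`StructType`, and array/map element nullability is omitted.

```python
from dataclasses import dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class Field:
    name: str
    data_type: object  # a type name, or a Struct for nested fields
    nullable: bool


@dataclass(frozen=True)
class Struct:
    fields: Tuple[Field, ...]


def as_nullable(schema):
    """Return a copy of `schema` with every field, including nested ones, nullable."""
    return Struct(tuple(
        replace(f,
                nullable=True,
                data_type=as_nullable(f.data_type)
                if isinstance(f.data_type, Struct) else f.data_type)
        for f in schema.fields))


def resolve_schema(user_schema, force_nullable=True):
    """`force_nullable` mirrors the role of the new config flag in this sketch."""
    return as_nullable(user_schema) if force_nullable else user_schema
```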

## How was this patch tested?

The new unit test.

Closes #25382 from zsxwing/SPARK-28651.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Currently, PythonBroadcast may delete its data file while a Python worker still needs it. This happens because PythonBroadcast overrides the `finalize()` method to delete its data file. So, when GC happens and there are no references to the broadcast variable, it may trigger `finalize()` to delete the data file. This also means that data under a Python broadcast variable could not be deleted when `unpersist()`/`destroy()` was called, but relied on GC.

In this PR, we removed the `finalize()` method, and map the PythonBroadcast data file to a BroadcastBlock (which has the same broadcast id as the broadcast variable that wraps this PythonBroadcast) when PythonBroadcast is deserialized. As a result, the data file can be deleted just like other pieces of the broadcast variable when `unpersist()`/`destroy()` is called, and no longer relies on GC.
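The difference between GC-driven and explicit cleanup can be sketched with a toy registry in Python. `ToyBlockManager` and `ToyPythonBroadcast` are hypothetical: the patch registers the data file under the broadcast id during deserialization, whereas this sketch does it in the constructor for brevity. The point is that `destroy()` deletes the file deterministically instead of waiting for a finalizer.

```python
import os
import tempfile


class ToyBlockManager:
    """Hypothetical registry mapping broadcast ids to on-disk files."""

    def __init__(self):
        self._files = {}

    def register(self, broadcast_id, path):
        self._files.setdefault(broadcast_id, []).append(path)

    def remove_broadcast(self, broadcast_id):
        # Explicit, deterministic cleanup instead of waiting for GC/finalizers.
        for path in self._files.pop(broadcast_id, []):
            if os.path.exists(path):
                os.remove(path)


class ToyPythonBroadcast:
    def __init__(self, block_manager, broadcast_id, payload):
        fd, self.path = tempfile.mkstemp(prefix=f"broadcast_{broadcast_id}_")
        with os.fdopen(fd, "wb") as f:
            f.write(payload)
        # Tie the data file to the broadcast id so destroy() can delete it.
        block_manager.register(broadcast_id, self.path)
        self._bm, self._id = block_manager, broadcast_id

    def destroy(self):
        self._bm.remove_broadcast(self._id)
```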

## How was this patch tested?

Added a Python test, and tested manually (verified creation/deletion of the broadcast block).

Closes #25262 from Ngone51/SPARK-28486.

Authored-by: wuyi <ngone_5451@163.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR brings back https://github.com/apache/spark/pull/25315 after removing the `Popen.wait` usage that works only in Python 3. I misread the last test results and thought they had passed.

Fix the flaky test DaemonTests.do_termination_test, which fails on Python 3.7, by adding a sleep after the test connects to the daemon.

## How was this patch tested?

Ran the test:

```
python/run-tests --python-executables=python3.7 --testname "pyspark.tests.test_daemon DaemonTests"
```

**Before**

Fails on test `test_termination_sigterm`, and the daemon process does not exit.

**After**

Test passed

Closes#25343 from HyukjinKwon/SPARK-28582.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Update HashingTF to use the new implementation of MurmurHash3.

Make HashingTF use the old MurmurHash3 when a model saved by a version before 3.0 is loaded.

## How was this patch tested?

Changed the existing unit tests. Also added a unit test to make sure HashingTF uses the old MurmurHash3 when a model saved by a version before 3.0 is loaded.

Closes#25303 from huaxingao/spark-23469.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Fix the flaky test `DaemonTests.do_termination_test`, which fails on Python 3.7. I added a sleep after the test connects to the daemon.

## How was this patch tested?

Ran the test:

```

python/run-tests --python-executables=python3.7 --testname "pyspark.tests.test_daemon DaemonTests"

```

**Before**

Fails on test `test_termination_sigterm`, and the daemon process does not exit.

**After**

Test passed

Closes#25315 from WeichenXu123/fix_py37_daemon.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR proposes to use `AtomicReference` so that parent and child threads can access the same file block holder.

Python UDF expressions are turned into a plan, which launches a separate thread to consume the input iterator. In that child thread, the iterator calls `InputFileBlockHolder.set` before the parent does, and the parent thread is later unable to read the value.

1. In the child thread, if `InputFileBlockHolder.set` happens to be called before the parent's thread local is initialized (which happens when `ThreadLocal.get()` is first called), the child thread appears to call its own `initialValue` to initialize it.

2. After that, the parent calls its own `initialValue` to initialize it at the first call of `ThreadLocal.get()`.

3. The two threads now hold different references, so updates made in the child are not reflected in the parent.

This PR fixes it by initializing the parent's thread local with an `AtomicReference` for the file status, so that it can be used in each task and updates made by child threads are reflected.

I also tried to explain this a bit more at https://github.com/apache/spark/pull/24958#discussion_r297203041.
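The difference between lazily creating a holder per thread and sharing one pre-initialized mutable holder can be sketched in plain Python. This is only an analogy, not Spark's `InputFileBlockHolder` code: the `Ref` class is a hypothetical stand-in for Java's `AtomicReference`, a dict stands in for the thread local, thread inheritance is simulated by handing the holder to the child, and the file path is made up.

```python
import threading


class Ref:
    """Hypothetical stand-in for java.util.concurrent.atomic.AtomicReference."""

    def __init__(self, value=None):
        self._lock = threading.Lock()
        self._value = value

    def set(self, value):
        with self._lock:
            self._value = value

    def get(self):
        with self._lock:
            return self._value


def run(parent_initializes_first):
    # Maps a thread role to the holder that thread sees (a stand-in for the thread local).
    holders = {}
    inherited = None
    if parent_initializes_first:
        # Fixed behavior: the parent creates the holder up front and the child inherits it.
        holders["parent"] = Ref("")
        inherited = holders["parent"]

    def child():
        # Without an inherited holder, the child lazily creates its own.
        holder = inherited if inherited is not None else Ref("")
        holders["child"] = holder
        holder.set("file:/tmp/part-00000")  # hypothetical input file

    t = threading.Thread(target=child)
    t.start()
    t.join()
    # Buggy behavior: the parent only initializes now and gets a separate holder.
    parent_holder = holders.setdefault("parent", Ref(""))
    return parent_holder.get()
```

With `run(True)` the parent reads the child's update; with `run(False)` it sees only its own empty initial value, mirroring step 3 above.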

## How was this patch tested?

Tested manually, and a unit test was added.

Closes#24958 from HyukjinKwon/SPARK-28153.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Remove the deprecated `ImageSchema.readImages`.

Move some useful methods from class `ImageSchema` into class `ImageFileFormat`.

In PySpark, rename the `ImageSchema` class to `ImageUtils`, and keep some useful Python methods in it.

## How was this patch tested?

UT.

Closes#25245 from WeichenXu123/remove_image_schema.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

The PySpark worker daemon reads the PIDs of workers to kill from stdin (see 1bb60ab839/python/pyspark/daemon.py, L127).

However, the worker process is forked from the worker daemon process, and we didn't close stdin in the child after the fork. This means the child and the user program can read stdin as well, which blocks the daemon from receiving the PIDs to kill. This can cause issues because the task reaper might detect that the task was not terminated and eventually kill the JVM.

This PR fixes this by redirecting the standard input of the forked child to `/dev/null`.
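A minimal POSIX-only sketch of the idea, assuming a plain `os.fork()` worker: after the fork, the child points file descriptor 0 at `/dev/null`, so any read from stdin hits EOF immediately instead of competing with the daemon. `fork_worker` is a hypothetical helper for illustration, not the code in `daemon.py`.

```python
import os


def redirect_stdin_to_devnull():
    """Make file descriptor 0 refer to /dev/null so reads return EOF instead of blocking."""
    devnull_fd = os.open(os.devnull, os.O_RDONLY)
    os.dup2(devnull_fd, 0)
    os.close(devnull_fd)


def fork_worker():
    """Hypothetical helper: fork a child that reads stdin and reports how many bytes it got."""
    read_end, write_end = os.pipe()
    pid = os.fork()
    if pid == 0:
        try:
            # Child: detach from the parent's stdin before running any user code.
            os.close(read_end)
            redirect_stdin_to_devnull()
            data = os.read(0, 1024)  # returns b"" right away
            os.write(write_end, str(len(data)).encode())
        finally:
            os._exit(0)
    # Parent: its own stdin is untouched and stays available for reading worker PIDs.
    os.close(write_end)
    report = os.read(read_end, 1024)
    os.close(read_end)
    os.waitpid(pid, 0)
    return int(report)
```

The child reads zero bytes, which is the same reason `cat` prints nothing in the manual test below.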

## How was this patch tested?

Manually test.

In `pyspark`, run:

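```python
import subprocess

def task(_):
    # `cat` reads the worker's stdin; before the fix it blocks waiting for input.
    subprocess.check_output(["cat"])
    return []  # mapPartitions expects an iterable back

sc.parallelize(range(1), 1).mapPartitions(task).count()
```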

Before:

The job gets stuck. Pressing Ctrl+C exits the job, but the Python worker process does not exit.

After:

The job finishes correctly. `cat` prints nothing (because the dummy stdin is `/dev/null`).

The Python worker process exits normally.

Closes#25138 from WeichenXu123/SPARK-26175.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Implement `RobustScaler`

Since the transformation is quite similar to `StandardScaler`, I refactored the transform function so that it can be reused by both scalers.
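The shared-transform idea can be sketched in plain Python: both scalers reduce to computing a per-feature shift and scale, then applying one common `(x - shift) / scale` step. This is an illustrative sketch, not Spark's implementation. It uses exact quartiles from the standard library (Python 3.8+), the function names are hypothetical, and mapping a zero scale to `0.0` is an assumption.

```python
import statistics


def shared_transform(rows, shift, scale):
    """Apply (x - shift) / scale per feature; a zero scale yields 0.0 (assumption)."""
    return [
        [(x - s) / c if c != 0 else 0.0 for x, s, c in zip(row, shift, scale)]
        for row in rows
    ]


def standard_params(rows, with_mean=True):
    """StandardScaler-style statistics: mean and sample standard deviation."""
    cols = list(zip(*rows))
    shift = [statistics.mean(c) if with_mean else 0.0 for c in cols]
    scale = [statistics.stdev(c) for c in cols]
    return shift, scale


def robust_params(rows, with_centering=True):
    """RobustScaler-style statistics: median and interquartile range (Q3 - Q1)."""
    cols = list(zip(*rows))
    shift = [statistics.median(c) if with_centering else 0.0 for c in cols]
    scale = []
    for c in cols:
        q1, _, q3 = statistics.quantiles(c, n=4, method="inclusive")
        scale.append(q3 - q1)
    return shift, scale
```

Because the median and IQR ignore the magnitude of extreme values, replacing `5.0` with an outlier like `100.0` in `[1.0, 2.0, 3.0, 4.0, 5.0]` leaves the robust shift and scale unchanged, while it would move the mean and standard deviation.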

## How was this patch tested?

existing and added tests

Closes#25160 from zhengruifeng/robust_scaler.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

In the PR, I propose to use `uuuu` for years instead of `yyyy` in date/timestamp patterns without the era pattern `G` (https://docs.oracle.com/javase/8/docs/api/java/time/format/DateTimeFormatter.html). **Parsing/formatting of positive years (current era) will be the same.** The difference is in formatting negative years, which belong to the previous era, BC (Before Christ).

I replaced the `yyyy` pattern by `uuuu` everywhere except:

1. Test, Suite & Benchmark. Existing tests must work as is.

2. `SimpleDateFormat` because it doesn't support the `uuuu` pattern.

3. Comments and examples (except comments related to already replaced patterns).

Before the changes, year `100` of the common era and year `-99` (100 BC) were both shown as `100`. After the changes, negative years are formatted with a `-` sign.

Before:

```Scala
scala> Seq(java.time.LocalDate.of(-99, 1, 1)).toDF().show
+----------+
|     value|
+----------+
|0100-01-01|
+----------+
```

After:

```Scala
scala> Seq(java.time.LocalDate.of(-99, 1, 1)).toDF().show
+-----------+
|      value|
+-----------+
|-0099-01-01|
+-----------+
```
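The arithmetic behind the two outputs can be sketched as follows, assuming proleptic ISO years (year `0` is 1 BC, so `-99` is 100 BC). `format_year` is a hypothetical helper that only models four-digit years; `java.time` has further rules for years outside that range that are not reproduced here.

```python
def format_year(proleptic_year, pattern):
    """Format a proleptic ISO year the way the two patterns treat it (4-digit years only)."""
    if pattern == "uuuu":
        # Proleptic year: negative values keep their sign.
        sign = "-" if proleptic_year < 0 else ""
        return f"{sign}{abs(proleptic_year):04d}"
    if pattern == "yyyy":
        # Year of era: years <= 0 are BC years counted as 1 - year, so the sign is lost.
        year_of_era = proleptic_year if proleptic_year > 0 else 1 - proleptic_year
        return f"{year_of_era:04d}"
    raise ValueError(f"unsupported pattern: {pattern}")
```

Without the era letter `G`, `yyyy` gives `0100` for both year `100` and year `-99`, which is the ambiguity shown in the "Before" output.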

## How was this patch tested?

By existing test suites, and added tests for negative years to `DateFormatterSuite` and `TimestampFormatterSuite`.

Closes#25230 from MaxGekk/year-pattern-uuuu.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Add indexOf method for ml.feature.HashingTF.
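Conceptually, `indexOf` maps a term to the feature index it is counted in: hash the term, then take a non-negative modulus by the number of features. The sketch below is hypothetical and substitutes `zlib.crc32` for MurmurHash3 only to stay dependency-free, so its indices will not match Spark's.

```python
import zlib


def to_signed_int32(value):
    """Reinterpret the low 32 bits of `value` as a Java-style signed int."""
    value &= 0xFFFFFFFF
    return value - (1 << 32) if value >= (1 << 31) else value


def non_negative_mod(x, mod):
    """Java-style `x % mod` (sign follows x), shifted into [0, mod)."""
    raw_mod = abs(x) % mod * (-1 if x < 0 else 1)
    return raw_mod + (mod if raw_mod < 0 else 0)


def index_of(term, num_features):
    """Hypothetical indexOf: hash the term, then map the hash into [0, num_features)."""
    # zlib.crc32 is a swapped-in hash for illustration only; HashingTF uses MurmurHash3.
    term_hash = to_signed_int32(zlib.crc32(term.encode("utf-8")))
    return non_negative_mod(term_hash, num_features)
```

In Python, `x % mod` is already non-negative for a positive `mod`; the explicit version mirrors JVM semantics, where the remainder takes the sign of the (possibly negative) hash.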

## How was this patch tested?

Add Unit test.

Closes#25250 from huaxingao/spark-21481.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Replace duplicated code with the function `_memory_limit`.

## How was this patch tested?

Existing UTs

Closes#25273 from WangGuangxin/python_memory_limit.

Authored-by: wangguangxin.cn <wangguangxin.cn@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Remove the deprecated `def context(self, sqlContext)` from `pyspark/ml/util.py`.

## How was this patch tested?

Tested with the existing ML PySpark test suites.

Closes#25246 from huaxingao/spark-28507.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Remove the deprecated `setFeatureSubsetStrategy` and `setSubsamplingRate` from the Python `TreeEnsembleParams`.

## How was this patch tested?

Use existing tests.

Closes#25046 from huaxingao/spark-28243.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This adds a simple check for the `count` argument:

- If it is a `Column`, we apply `_to_java_column` before invoking the JVM counterpart.

- Otherwise, we proceed as before.
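The dispatch can be sketched without a JVM. `Column`, `_to_java_column`, and `prepare_count` below are hypothetical stand-ins (the real PySpark helpers go through Py4J), and the text does not name the function being fixed.

```python
class Column:
    """Hypothetical stand-in for pyspark.sql.Column wrapping a JVM column handle."""

    def __init__(self, jc):
        self._jc = jc


def _to_java_column(col):
    # Stand-in for PySpark's helper: unwrap the JVM-side column handle.
    return col._jc


def prepare_count(count):
    """Convert `count` before passing it to the JVM counterpart."""
    if isinstance(count, Column):
        return _to_java_column(count)
    # Anything else (e.g. a plain int) is passed through unchanged, as before.
    return count
```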

## How was this patch tested?

Manual testing.

Closes#25193 from zero323/SPARK-28278.

Authored-by: zero323 <mszymkiewicz@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

In the following Python code,

```python
df.write.mode("overwrite").insertInto("table")
```

`insertInto` ignores `mode("overwrite")` and appends by default.

## How was this patch tested?

Add Unit test.

Closes#25175 from huaxingao/spark-28411.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In the PR, I propose to convert option values to strings by using `to_str()` for the following functions: `from_csv()`, `to_csv()`, `from_json()`, `to_json()`, `schema_of_csv()` and `schema_of_json()`. This makes the handling of function options consistent with option handling in `DataFrameReader`/`DataFrameWriter`.

For example:

```Python
df.select(from_csv(df.value, "s string", {'ignoreLeadingWhiteSpace': True}))
```
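A sketch of the conversion rule, modeled on how reader/writer options are stringified: booleans become lowercase strings, so the JVM side sees `true`/`false` rather than Python's `True`/`False`. Treat the exact rules, in particular passing `None` through unchanged, as an assumption; `convert_options` is a hypothetical helper.

```python
def to_str(value):
    """Convert an option value to the string form expected on the JVM side."""
    if isinstance(value, bool):
        # str(True) would be "True"; the JVM options expect "true".
        return str(value).lower()
    if value is None:
        return None
    return str(value)


def convert_options(options):
    """Hypothetical helper: stringify every value in an options dict."""
    return {key: to_str(value) for key, value in (options or {}).items()}
```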

## How was this patch tested?

Added an example for `from_csv()` which was tested by:

```Shell

./python/run-tests --testnames pyspark.sql.functions

```

Closes#25182 from MaxGekk/options_to_str.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

It fixes a flaky test:

```

ERROR [0.164s]: test_query_execution_listener_on_collect (pyspark.sql.tests.test_dataframe.QueryExecutionListenerTests)

----------------------------------------------------------------------

Traceback (most recent call last):

File "/home/jenkins/python/pyspark/sql/tests/test_dataframe.py", line 758, in test_query_execution_listener_on_collect

"The callback from the query execution listener should be called after 'collect'")

AssertionError: The callback from the query execution listener should be called after 'collect'

```

It seems the test can fail because the event is somehow delayed and the check runs before it arrives.
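One common way to make such a check robust is to poll for the condition with a timeout instead of asserting once. This is a generic sketch, not necessarily the change made in this PR; `wait_until` is a hypothetical helper.

```python
import time


def wait_until(predicate, timeout=10.0, interval=0.05):
    """Poll `predicate` until it returns True or `timeout` seconds elapse."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if predicate():
            return True
        time.sleep(interval)
    # One last check in case the event arrived during the final sleep.
    return predicate()
```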

## How was this patch tested?

Manually.

Closes#25177 from HyukjinKwon/SPARK-28418.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This upgrades to a newer version of Pyrolite. Most updates [1] in the newer version are for .NET. For Java, it includes a bug fix to Unpickler regarding cleaning up the Unpickler memo, and support for protocol 5.

After upgrading, we can remove the SPARK-27629 fix for the Unpickler bug.

[1] https://github.com/irmen/Pyrolite/compare/pyrolite-4.23...master

## How was this patch tested?

Manually tested locally on Python 3.6 with the existing tests.

Closes#25143 from viirya/upgrade-pyrolite.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

The `_prepare_for_python_RDD` method currently broadcasts a pickled command if its length is greater than the hardcoded value `1 << 20` (1M). This change makes the threshold a Spark conf instead.
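The decision itself is a size comparison. A sketch, assuming a plain dict for the configuration and a made-up key `example.broadcast.threshold`, since the text does not name the new conf:

```python
import pickle

DEFAULT_THRESHOLD = 1 << 20  # the previously hardcoded value: 1,048,576 bytes


def should_broadcast(command, conf):
    """Return True if the pickled command is larger than the configured threshold."""
    pickled_command = pickle.dumps(command)
    # "example.broadcast.threshold" is a hypothetical key used only for this sketch.
    threshold = int(conf.get("example.broadcast.threshold", DEFAULT_THRESHOLD))
    return len(pickled_command) > threshold
```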

## How was this patch tested?

Unit tests, manual tests.

Closes#25123 from jessecai/SPARK-28355.

Authored-by: Jesse Cai <jesse.cai@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Add Python API support and JavaSparkContext support for `resources()`. The JavaSparkContext support is needed so that the result translates properly into Python through Py4J.

## How was this patch tested?

Unit tests added, and manually tested in local cluster mode and on YARN.

Closes#25087 from tgravescs/SPARK-28234-python.

Authored-by: Thomas Graves <tgraves@nvidia.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

There is a bug in `ExtractPythonUDFs` that produces wrong result attributes. It causes a failure when `PythonUDF`s are used across multiple child plans, e.g., in a join. An example is using `PythonUDF`s in a join condition:

```python
>>> left = spark.createDataFrame([Row(a=1, a1=1, a2=1), Row(a=2, a1=2, a2=2)])
>>> right = spark.createDataFrame([Row(b=1, b1=1, b2=1), Row(b=1, b1=3, b2=1)])
>>> f = udf(lambda a: a, IntegerType())
>>> df = left.join(right, [f("a") == f("b"), left.a1 == right.b1])
>>> df.collect()
19/07/10 12:20:49 ERROR Executor: Exception in task 5.0 in stage 0.0 (TID 5)
java.lang.ArrayIndexOutOfBoundsException: 1
	at org.apache.spark.sql.catalyst.expressions.GenericInternalRow.genericGet(rows.scala:201)
	at org.apache.spark.sql.catalyst.expressions.BaseGenericInternalRow.getAs(rows.scala:35)
	at org.apache.spark.sql.catalyst.expressions.BaseGenericInternalRow.isNullAt(rows.scala:36)
	at org.apache.spark.sql.catalyst.expressions.BaseGenericInternalRow.isNullAt$(rows.scala:36)
	at org.apache.spark.sql.catalyst.expressions.GenericInternalRow.isNullAt(rows.scala:195)
	at org.apache.spark.sql.catalyst.expressions.JoinedRow.isNullAt(JoinedRow.scala:70)
	...
```

## How was this patch tested?

Added test.

Closes#25091 from viirya/SPARK-28323.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

## What changes were proposed in this pull request?

This PR allows `RowMatrix` and `IndexedRowMatrix` to be created from `DataFrame`s. In both cases, the input `DataFrame` schema must contain only the information required for the matrix object: a vector column for `RowMatrix`, and a long column and a vector column for `IndexedRowMatrix`.

## How was this patch tested?

Unit tests that verify:

- `RowMatrix` and `IndexedRowMatrix` can be created from `DataFrame`s

- If the schema does not match expectations, we throw an `IllegalArgumentException`

Closes#24953 from henrydavidge/row-matrix-df.

Authored-by: Henry D <henrydavidge@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This PR adds some tests converted from `pgSQL/case.sql` to test UDFs. Please see the contribution guide of this umbrella ticket, [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

This PR also contains two minor fixes:

1. Change the name of the Scala UDF from `UDF:name(...)` to `name(...)` to be consistent with Python.

2. Fix the Scala UDF in `IntegratedUDFTestUtils.scala` to handle `null` in strings.

<details><summary>Diff comparing to 'pgSQL/case.sql'</summary>

<p>

```diff

diff --git a/sql/core/src/test/resources/sql-tests/results/pgSQL/case.sql.out b/sql/core/src/test/resources/sql-tests/results/udf/pgSQL/udf-case.sql.out

index fa078d16d6d..55bef64338f 100644

--- a/sql/core/src/test/resources/sql-tests/results/pgSQL/case.sql.out

+++ b/sql/core/src/test/resources/sql-tests/results/udf/pgSQL/udf-case.sql.out

@@ -115,7 +115,7 @@ struct<>

-- !query 13

SELECT '3' AS `One`,

CASE

- WHEN 1 < 2 THEN 3

+ WHEN CAST(udf(1 < 2) AS boolean) THEN 3

END AS `Simple WHEN`

-- !query 13 schema

struct<One:string,Simple WHEN:int>

@@ -126,10 +126,10 @@ struct<One:string,Simple WHEN:int>

-- !query 14

SELECT '<NULL>' AS `One`,

CASE

- WHEN 1 > 2 THEN 3

+ WHEN 1 > 2 THEN udf(3)

END AS `Simple default`

-- !query 14 schema

-struct<One:string,Simple default:int>

+struct<One:string,Simple default:string>

-- !query 14 output

<NULL> NULL

@@ -137,17 +137,17 @@ struct<One:string,Simple default:int>

-- !query 15

SELECT '3' AS `One`,

CASE

- WHEN 1 < 2 THEN 3

- ELSE 4

+ WHEN udf(1) < 2 THEN udf(3)

+ ELSE udf(4)

END AS `Simple ELSE`

-- !query 15 schema

-struct<One:string,Simple ELSE:int>

+struct<One:string,Simple ELSE:string>

-- !query 15 output

3 3

-- !query 16

-SELECT '4' AS `One`,

+SELECT udf('4') AS `One`,

CASE

WHEN 1 > 2 THEN 3

ELSE 4

@@ -159,10 +159,10 @@ struct<One:string,ELSE default:int>

-- !query 17

-SELECT '6' AS `One`,

+SELECT udf('6') AS `One`,

CASE

- WHEN 1 > 2 THEN 3

- WHEN 4 < 5 THEN 6

+ WHEN CAST(udf(1 > 2) AS boolean) THEN 3

+ WHEN udf(4) < 5 THEN 6

ELSE 7

END AS `Two WHEN with default`

-- !query 17 schema

@@ -173,7 +173,7 @@ struct<One:string,Two WHEN with default:int>

-- !query 18

SELECT '7' AS `None`,

- CASE WHEN rand() < 0 THEN 1

+ CASE WHEN rand() < udf(0) THEN 1

END AS `NULL on no matches`

-- !query 18 schema

struct<None:string,NULL on no matches:int>

@@ -182,36 +182,36 @@ struct<None:string,NULL on no matches:int>

-- !query 19

-SELECT CASE WHEN 1=0 THEN 1/0 WHEN 1=1 THEN 1 ELSE 2/0 END

+SELECT CASE WHEN CAST(udf(1=0) AS boolean) THEN 1/0 WHEN 1=1 THEN 1 ELSE 2/0 END

-- !query 19 schema

-struct<CASE WHEN (1 = 0) THEN (CAST(1 AS DOUBLE) / CAST(0 AS DOUBLE)) WHEN (1 = 1) THEN CAST(1 AS DOUBLE) ELSE (CAST(2 AS DOUBLE) / CAST(0 AS DOUBLE)) END:double>

+struct<CASE WHEN CAST(udf((1 = 0)) AS BOOLEAN) THEN (CAST(1 AS DOUBLE) / CAST(0 AS DOUBLE)) WHEN (1 = 1) THEN CAST(1 AS DOUBLE) ELSE (CAST(2 AS DOUBLE) / CAST(0 AS DOUBLE)) END:double>

-- !query 19 output

1.0

-- !query 20

-SELECT CASE 1 WHEN 0 THEN 1/0 WHEN 1 THEN 1 ELSE 2/0 END

+SELECT CASE 1 WHEN 0 THEN 1/udf(0) WHEN 1 THEN 1 ELSE 2/0 END

-- !query 20 schema

-struct<CASE WHEN (1 = 0) THEN (CAST(1 AS DOUBLE) / CAST(0 AS DOUBLE)) WHEN (1 = 1) THEN CAST(1 AS DOUBLE) ELSE (CAST(2 AS DOUBLE) / CAST(0 AS DOUBLE)) END:double>

+struct<CASE WHEN (1 = 0) THEN (CAST(1 AS DOUBLE) / CAST(CAST(udf(0) AS DOUBLE) AS DOUBLE)) WHEN (1 = 1) THEN CAST(1 AS DOUBLE) ELSE (CAST(2 AS DOUBLE) / CAST(0 AS DOUBLE)) END:double>

-- !query 20 output

1.0

-- !query 21

-SELECT CASE WHEN i > 100 THEN 1/0 ELSE 0 END FROM case_tbl

+SELECT CASE WHEN i > 100 THEN udf(1/0) ELSE udf(0) END FROM case_tbl

-- !query 21 schema

-struct<CASE WHEN (i > 100) THEN (CAST(1 AS DOUBLE) / CAST(0 AS DOUBLE)) ELSE CAST(0 AS DOUBLE) END:double>

+struct<CASE WHEN (i > 100) THEN udf((cast(1 as double) / cast(0 as double))) ELSE udf(0) END:string>

-- !query 21 output

-0.0

-0.0

-0.0

-0.0

+0

+0

+0

+0

-- !query 22

-SELECT CASE 'a' WHEN 'a' THEN 1 ELSE 2 END

+SELECT CASE 'a' WHEN 'a' THEN udf(1) ELSE udf(2) END

-- !query 22 schema

-struct<CASE WHEN (a = a) THEN 1 ELSE 2 END:int>

+struct<CASE WHEN (a = a) THEN udf(1) ELSE udf(2) END:string>

-- !query 22 output

1

@@ -283,7 +283,7 @@ big

-- !query 27

-SELECT * FROM CASE_TBL WHERE COALESCE(f,i) = 4

+SELECT * FROM CASE_TBL WHERE udf(COALESCE(f,i)) = 4

-- !query 27 schema

struct<i:int,f:double>

-- !query 27 output

@@ -291,7 +291,7 @@ struct<i:int,f:double>

-- !query 28

-SELECT * FROM CASE_TBL WHERE NULLIF(f,i) = 2

+SELECT * FROM CASE_TBL WHERE udf(NULLIF(f,i)) = 2

-- !query 28 schema

struct<i:int,f:double>

-- !query 28 output

@@ -299,10 +299,10 @@ struct<i:int,f:double>

-- !query 29

-SELECT COALESCE(a.f, b.i, b.j)

+SELECT udf(COALESCE(a.f, b.i, b.j))

FROM CASE_TBL a, CASE2_TBL b

-- !query 29 schema

-struct<coalesce(f, CAST(i AS DOUBLE), CAST(j AS DOUBLE)):double>

+struct<udf(coalesce(f, cast(i as double), cast(j as double))):string>

-- !query 29 output

-30.3

-30.3

@@ -332,8 +332,8 @@ struct<coalesce(f, CAST(i AS DOUBLE), CAST(j AS DOUBLE)):double>

-- !query 30

SELECT *

- FROM CASE_TBL a, CASE2_TBL b

- WHERE COALESCE(a.f, b.i, b.j) = 2

+ FROM CASE_TBL a, CASE2_TBL b

+ WHERE udf(COALESCE(a.f, b.i, b.j)) = 2

-- !query 30 schema

struct<i:int,f:double,i:int,j:int>

-- !query 30 output

@@ -342,7 +342,7 @@ struct<i:int,f:double,i:int,j:int>

-- !query 31

-SELECT '' AS Five, NULLIF(a.i,b.i) AS `NULLIF(a.i,b.i)`,

+SELECT udf('') AS Five, NULLIF(a.i,b.i) AS `NULLIF(a.i,b.i)`,

NULLIF(b.i, 4) AS `NULLIF(b.i,4)`

FROM CASE_TBL a, CASE2_TBL b

-- !query 31 schema

@@ -377,7 +377,7 @@ struct<Five:string,NULLIF(a.i,b.i):int,NULLIF(b.i,4):int>

-- !query 32

SELECT '' AS `Two`, *

FROM CASE_TBL a, CASE2_TBL b

- WHERE COALESCE(f,b.i) = 2

+ WHERE CAST(udf(COALESCE(f,b.i) = 2) AS boolean)

-- !query 32 schema

struct<Two:string,i:int,f:double,i:int,j:int>

-- !query 32 output

@@ -388,15 +388,15 @@ struct<Two:string,i:int,f:double,i:int,j:int>

-- !query 33

SELECT CASE

(CASE vol('bar')

- WHEN 'foo' THEN 'it was foo!'

- WHEN vol(null) THEN 'null input'

+ WHEN udf('foo') THEN 'it was foo!'

+ WHEN udf(vol(null)) THEN 'null input'

WHEN 'bar' THEN 'it was bar!' END

)

- WHEN 'it was foo!' THEN 'foo recognized'

- WHEN 'it was bar!' THEN 'bar recognized'

- ELSE 'unrecognized' END

+ WHEN udf('it was foo!') THEN 'foo recognized'

+ WHEN 'it was bar!' THEN udf('bar recognized')

+ ELSE 'unrecognized' END AS col

-- !query 33 schema

-struct<CASE WHEN (CASE WHEN (UDF:vol(bar) = foo) THEN it was foo! WHEN (UDF:vol(bar) = UDF:vol(null)) THEN null input WHEN (UDF:vol(bar) = bar) THEN it was bar! END = it was foo!) THEN foo recognized WHEN (CASE WHEN (UDF:vol(bar) = foo) THEN it was foo! WHEN (UDF:vol(bar) = UDF:vol(null)) THEN null input WHEN (UDF:vol(bar) = bar) THEN it was bar! END = it was bar!) THEN bar recognized ELSE unrecognized END:string>

+struct<col:string>

-- !query 33 output

bar recognized

```

</p>

</details>

https://github.com/apache/spark/pull/25069 contains the same minor fixes, since they are required to write the tests.

## How was this patch tested?

Tested as guided in [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

Closes#25070 from HyukjinKwon/SPARK-28273.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR proposes to rename `mapPartitionsInPandas` to `mapInPandas` with a separate evaluation type.

I had an offline discussion with rxin, mengxr, and cloud-fan.

The reasons are basically:

1. `SCALAR_ITER` doesn't make sense with `mapPartitionsInPandas`.

2. Unlike `GROUPED_AGG`, the same Pandas UDF cannot be shared between, for instance, `select` and `mapPartitionsInPandas`, because the iterator's return type is different.

3. `mapPartitionsInPandas` -> `mapInPandas`: see https://github.com/apache/spark/pull/25044#issuecomment-508298552 and https://github.com/apache/spark/pull/25044#issuecomment-508299764

Renaming `SCALAR_ITER` to `MAP_ITER` was abandoned because of reason 2.

`XXX_ITER` types might need a different interface in the future if we happen to add other versions of them, but that topic is orthogonal to `mapPartitionsInPandas`.

## How was this patch tested?

Existing tests should cover this.

Closes#25044 from HyukjinKwon/SPARK-28198.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In Python 2.7 with the latest PyArrow and Pandas, the error message is slightly different from the one in Python 3. This PR simply fixes the test.

```

======================================================================

FAIL: test_createDataFrame_with_incorrect_schema (pyspark.sql.tests.test_arrow.ArrowTests)

----------------------------------------------------------------------

Traceback (most recent call last):

File "/.../spark/python/pyspark/sql/tests/test_arrow.py", line 275, in test_createDataFrame_with_incorrect_schema

self.spark.createDataFrame(pdf, schema=wrong_schema)

AssertionError: "integer.*required.*got.*str" does not match "('Exception thrown when converting pandas.Series (object) to Arrow Array (int32). It can be caused by overflows or other unsafe conversions warned by Arrow. Arrow safe type check can be disabled by using SQL config `spark.sql.execution.pandas.arrowSafeTypeConversion`.', ArrowTypeError('an integer is required',))"

======================================================================

FAIL: test_createDataFrame_with_incorrect_schema (pyspark.sql.tests.test_arrow.EncryptionArrowTests)

----------------------------------------------------------------------

Traceback (most recent call last):

File "/.../spark/python/pyspark/sql/tests/test_arrow.py", line 275, in test_createDataFrame_with_incorrect_schema

self.spark.createDataFrame(pdf, schema=wrong_schema)

AssertionError: "integer.*required.*got.*str" does not match "('Exception thrown when converting pandas.Series (object) to Arrow Array (int32). It can be caused by overflows or other unsafe conversions warned by Arrow. Arrow safe type check can be disabled by using SQL config `spark.sql.execution.pandas.arrowSafeTypeConversion`.', ArrowTypeError('an integer is required',))"

```

## How was this patch tested?

Manually tested.

```

cd python

./run-tests --python-executables=python --modules pyspark-sql

```

Closes#25042 from HyukjinKwon/SPARK-28240.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR proposes to add a `mapPartitionsInPandas` API to DataFrame by using the existing `SCALAR_ITER`, as below:

1. Filtering rows by a column condition

```python
from pyspark.sql.functions import pandas_udf, PandasUDFType

df = spark.createDataFrame([(1, 21), (2, 30)], ("id", "age"))

@pandas_udf(df.schema, PandasUDFType.SCALAR_ITER)
def filter_func(iterator):
    for pdf in iterator:
        yield pdf[pdf.id == 1]

df.mapPartitionsInPandas(filter_func).show()
```

```
+---+---+
| id|age|
+---+---+
|  1| 21|
+---+---+
```

2. `DataFrame.loc`

```python
from pyspark.sql.functions import pandas_udf, PandasUDFType
import pandas as pd

df = spark.createDataFrame([['aa'], ['bb'], ['cc'], ['aa'], ['aa'], ['aa']], ["value"])

@pandas_udf(df.schema, PandasUDFType.SCALAR_ITER)
def filter_func(iterator):
    for pdf in iterator:
        yield pdf.loc[pdf.value.str.contains('^a'), :]

df.mapPartitionsInPandas(filter_func).show()
```

```
+-----+
|value|
+-----+
|   aa|
|   aa|
|   aa|
|   aa|
+-----+
```

3. `pandas.melt`

```python
from pyspark.sql.functions import pandas_udf, PandasUDFType
import pandas as pd

df = spark.createDataFrame(
    pd.DataFrame({'A': {0: 'a', 1: 'b', 2: 'c'},
                  'B': {0: 1, 1: 3, 2: 5},
                  'C': {0: 2, 1: 4, 2: 6}}))

@pandas_udf("A string, variable string, value long", PandasUDFType.SCALAR_ITER)
def filter_func(iterator):
    for pdf in iterator:
        import pandas as pd
        yield pd.melt(pdf, id_vars=['A'], value_vars=['B', 'C'])

df.mapPartitionsInPandas(filter_func).show()
```

```
+---+--------+-----+
|  A|variable|value|
+---+--------+-----+
|  a|       B|    1|
|  a|       C|    2|
|  b|       B|    3|
|  b|       C|    4|
|  c|       B|    5|
|  c|       C|    6|
+---+--------+-----+
```

The current limitation of `SCALAR_ITER` is that it doesn't allow results of a different length, which is critical in practice: for instance, we cannot simply filter by using Pandas APIs, because we can only map N rows to N rows. This PR allows mapping N to M, like flatMap.

This API mimics `mapPartitions` but keeps the API shape of `SCALAR_ITER`, while allowing results of different lengths.
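The difference between the two contracts can be sketched with plain lists of dicts standing in for pandas DataFrame batches. `run_scalar_iter` and `run_map_iter` are hypothetical helpers, not PySpark internals.

```python
def run_scalar_iter(func, batches):
    """SCALAR_ITER-style contract: each output batch must match its input batch's length (N to N)."""
    results = list(func(iter(batches)))
    for batch, result in zip(batches, results):
        if len(batch) != len(result):
            raise ValueError(f"expected {len(batch)} rows, got {len(result)}")
    return [row for result in results for row in result]


def run_map_iter(func, batches):
    """mapPartitionsInPandas-style contract: output batches may have any length (N to M)."""
    return [row for result in func(iter(batches)) for row in result]


def keep_id_1(iterator):
    # Filtering rows makes output batches shorter than input batches.
    for batch in iterator:
        yield [row for row in batch if row["id"] == 1]
```

The same filter is rejected under the N-to-N contract but works under the N-to-M one, which is why filtering needs the new API.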

### How is this implemented?

This PR mimics both `dapply` with Arrow optimization and the Grouped Map Pandas UDF. On the Python execution side, it reuses the existing `SCALAR_ITER` code path.

Therefore, externally we don't introduce any new type of Pandas UDF, but internally we use another evaluation type code, `205` (`SQL_MAP_PANDAS_ITER_UDF`).

This approach is similar to the implementation of window functions with Grouped Aggregate Pandas UDFs: internally we have `203` (`SQL_WINDOW_AGG_PANDAS_UDF`), but externally it shares the same `GROUPED_AGG` type.

## How was this patch tested?

Manually tested and unittests were added.

Closes#24997 from HyukjinKwon/scalar-udf-iter.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

The documentation in `linalg.py` is not consistent. This PR makes the documentation uniform.

## How was this patch tested?

N/A

Closes#25011 from mgaido91/SPARK-28170.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

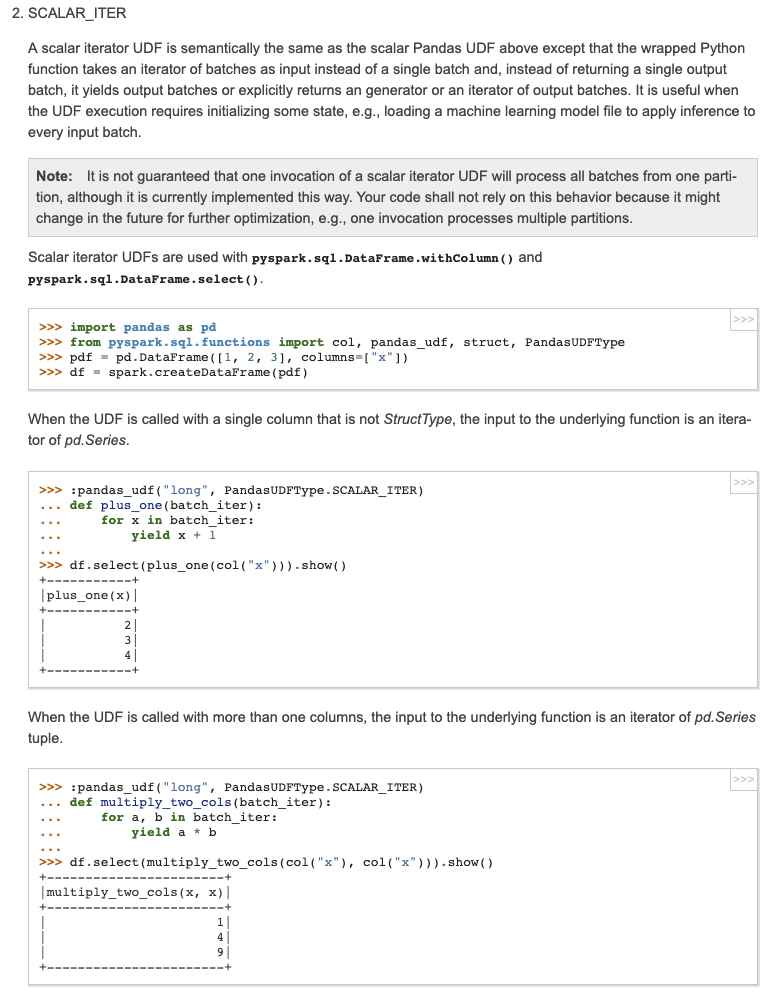

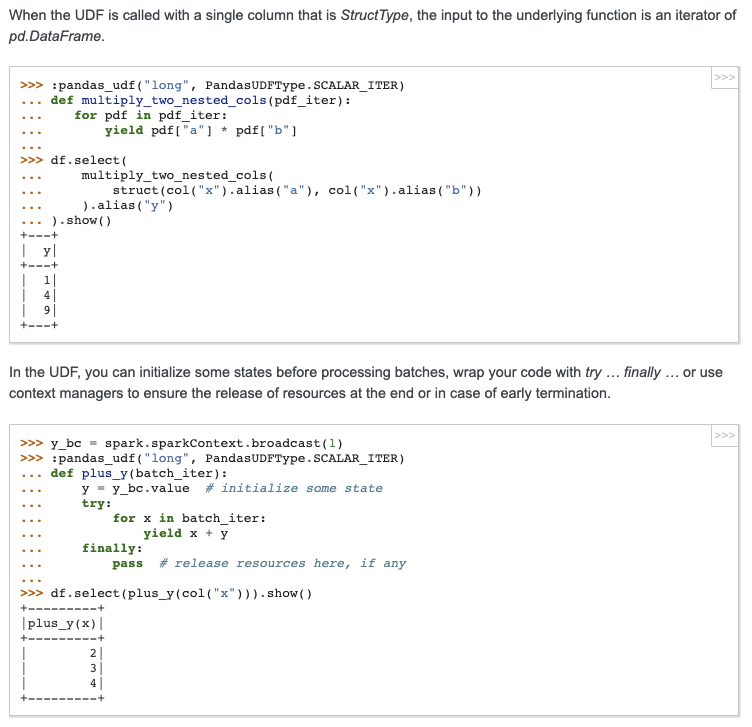

## What changes were proposed in this pull request?

Add a docstring/doctest for the `SCALAR_ITER` Pandas UDF. I explicitly mention that per-partition execution is an implementation detail and is not guaranteed. I will submit another PR to add the same to the user guide, to keep this PR minimal.

I didn't add `doctest: +SKIP` in the first commit so that it is easy to test locally.

cc: HyukjinKwon gatorsmile icexelloss BryanCutler WeichenXu123

## How was this patch tested?

doctest

Closes#25005 from mengxr/SPARK-28056.2.

Authored-by: Xiangrui Meng <meng@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

Closes the generator when Python UDFs stop early.

### Manual verification on the pandas iterator UDF and mapPartitions

```python
from pyspark.sql import SparkSession
from pyspark.sql.functions import pandas_udf, PandasUDFType
from pyspark.sql.functions import col, udf
from pyspark.taskcontext import TaskContext
import time
import os

spark.conf.set('spark.sql.execution.arrow.maxRecordsPerBatch', '1')
spark.conf.set('spark.sql.pandas.udf.buffer.size', '4')

@pandas_udf("int", PandasUDFType.SCALAR_ITER)
def fi1(it):
    try:
        for batch in it:
            yield batch + 100
            time.sleep(1.0)
    except BaseException as be:
        print("Debug: exception raised: " + str(type(be)))
        raise be
    finally:
        open("/tmp/000001.tmp", "a").close()

df1 = spark.range(10).select(col('id').alias('a')).repartition(1)

# will see log Debug: exception raised: <class 'GeneratorExit'>
# and file "/tmp/000001.tmp" generated.
df1.select(col('a'), fi1('a')).limit(2).collect()

def mapper(it):
    try:
        for batch in it:
            yield batch
    except BaseException as be:
        print("Debug: exception raised: " + str(type(be)))
        raise be
    finally:
        open("/tmp/000002.tmp", "a").close()

df2 = spark.range(10000000).repartition(1)

# will see log Debug: exception raised: <class 'GeneratorExit'>
# and file "/tmp/000002.tmp" generated.
df2.rdd.mapPartitions(mapper).take(2)
```
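The mechanism this verification relies on can be reproduced in plain Python: calling `close()` on a suspended generator raises `GeneratorExit` at its paused `yield`, so the `except`/`finally` cleanup runs even though the consumer stopped early. `take` is a hypothetical consumer that closes the generator after reading `n` items.

```python
def gen_with_cleanup(events):
    """Yield values and record which cleanup paths run when the consumer stops early."""
    try:
        for i in range(10):
            yield i
    except GeneratorExit:
        events.append("GeneratorExit")
        raise
    finally:
        events.append("cleanup")


def take(generator, n):
    """Hypothetical consumer: read up to n items, then close the generator explicitly."""
    out = []
    for item in generator:
        out.append(item)
        if len(out) == n:
            break
    generator.close()  # raises GeneratorExit inside the generator at its paused yield
    return out
```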

## How was this patch tested?

Unit test added.

Closes#24986 from WeichenXu123/pandas_iter_udf_limit.

Authored-by: WeichenXu <weichen.xu@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Currently with `toLocalIterator()` and `toPandas()` with Arrow enabled, if the Spark job being run in the background serving thread errors, it will be caught and sent to Python through the PySpark serializer.

This is not ideal because it only catches a SparkException, it won't handle an error that occurs in the serializer, and each method has to have its own special handling to propagate the error.

This PR instead returns the Python Server object along with the serving port and authentication info, so that the Python caller can join the serving thread. During the call to join, the serving thread's Future is completed either successfully or with an exception. In the latter case, the exception is propagated to Python through the Py4J call.
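The join-and-propagate pattern can be sketched with `concurrent.futures`, standing in for the JVM serving thread and Py4J; `serve` and `ServerHandle` are hypothetical names.

```python
from concurrent.futures import ThreadPoolExecutor


def serve(fail):
    """Stand-in for the background serving thread that runs the Spark job."""
    if fail:
        raise RuntimeError("job failed while serving results")
    return "served"


class ServerHandle:
    """Hypothetical handle returned to the caller alongside the port and auth info."""

    def __init__(self, future):
        self._future = future

    def join(self):
        # Blocks until serving finishes and re-raises any exception from the serving thread.
        return self._future.result()
```

Because the error surfaces at `join()`, no per-method error marker has to travel through the serialized data stream.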

## How was this patch tested?

Existing tests

Closes#24834 from BryanCutler/pyspark-propagate-server-error-SPARK-27992.

Authored-by: Bryan Cutler <cutlerb@gmail.com>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

## What changes were proposed in this pull request?

Add the missing `RankingEvaluator`.
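As an illustration of the kind of metric a ranking evaluator computes, here is precision at k over (predicted ranking, relevant items) pairs. It follows the usual definition and is a hypothetical helper; Spark's handling of edge cases (such as empty label sets) may differ.

```python
def precision_at_k(predictions_and_labels, k):
    """Mean over queries of |top-k predictions that are relevant| / k."""
    if k <= 0:
        raise ValueError("k must be positive")
    total = 0.0
    for predicted, relevant in predictions_and_labels:
        relevant_set = set(relevant)
        hits = sum(1 for item in predicted[:k] if item in relevant_set)
        total += hits / k  # divides by k even if fewer than k items were predicted
    return total / len(predictions_and_labels)
```

For example, with `k = 2`, the pairs `([1, 2, 3], [1, 3])` and `([4, 5], [6])` score `0.5` and `0.0`, for a mean of `0.25`.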

## How was this patch tested?

added testsuites

Closes#24869 from zhengruifeng/ranking_eval.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This patch removes `fillna(0)` when creating ArrowBatch from a pandas Series.

With `fillna(0)`, the original code would turn a timestamp type into the object type, which pyarrow later complains about:

```
>>> s = pd.Series([pd.NaT, pd.Timestamp('2015-01-01')])
>>> s.dtypes
dtype('<M8[ns]')
>>> s.fillna(0)
0                      0
1    2015-01-01 00:00:00
dtype: object
```

## How was this patch tested?

Added `test_timestamp_nat`

Closes#24844 from icexelloss/SPARK-28003-arrow-nat.

Authored-by: Li Jin <ice.xelloss@gmail.com>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

## What changes were proposed in this pull request?

Currently, the pretty skipped-test messages added in f7435bec6a do not seem to work when `xmlrunner` is installed.

This PR fixes two things:

1. When `xmlrunner` is installed, it seems `xmlrunner` does not respect the `verbosity` level in unittest (the default is level 1).

As a result, the output looks like this:

```
Running tests...
----------------------------------------------------------------------
SSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSSS
----------------------------------------------------------------------
```

So it is not caught by our message detection mechanism.

2. If we manually set the `verbosity` level for `xmlrunner`, it prints messages like the following:

```
test_mixed_udf (pyspark.sql.tests.test_pandas_udf_scalar.ScalarPandasUDFTests) ... SKIP (0.000s)
test_mixed_udf_and_sql (pyspark.sql.tests.test_pandas_udf_scalar.ScalarPandasUDFTests) ... SKIP (0.000s)
...
```

This differs from the output on our Jenkins machine:

```
test_createDataFrame_column_name_encoding (pyspark.sql.tests.test_arrow.ArrowTests) ... skipped 'Pandas >= 0.23.2 must be installed; however, it was not found.'
test_createDataFrame_does_not_modify_input (pyspark.sql.tests.test_arrow.ArrowTests) ... skipped 'Pandas >= 0.23.2 must be installed; however, it was not found.'
...
```

Note that the trailing `SKIP` differs from the `skipped '...'` format seen on Jenkins. This PR fixes the regular expression to catch the `SKIP` case as well.
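A pattern that accepts both formats might look like the sketch below. The regular expression and the `count_skipped` helper are hypothetical illustrations, not the expression actually used in the PR; they assume each skipped test is reported on its own line as `name (module.Class) ... <status>`.

```python
import re

# Hypothetical pattern: unittest's "skipped '<reason>'" or xmlrunner's "SKIP (<secs>s)".
SKIPPED_LINE = re.compile(
    r"^(?P<test>\S+ \([\w.]+\)) \.\.\. "
    r"(?:skipped '(?P<reason>.*)'|SKIP \(\d+\.\d+s\))$"
)


def count_skipped(output):
    """Count lines in a test log that report a skipped test in either format."""
    return sum(1 for line in output.splitlines() if SKIPPED_LINE.match(line))
```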

## How was this patch tested?

Manually tested.

**Before:**

```
Starting test(python2.7): pyspark....
Finished test(python2.7): pyspark.... (0s)
...
Tests passed in 562 seconds
========================================================================
...
```

**After:**

```
Starting test(python2.7): pyspark....
Finished test(python2.7): pyspark.... (48s) ... 93 tests were skipped
...
Tests passed in 560 seconds
Skipped tests pyspark.... with python2.7:
pyspark...(...) ... SKIP (0.000s)
...
========================================================================
...
```

Closes#24927 from HyukjinKwon/SPARK-28130.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

When running `FlatMapGroupsInPandasExec` or `AggregateInPandasExec`, the shuffle uses the default number of partitions, 200, set by `spark.sql.shuffle.partitions`. If the data is small (e.g. in testing), many of the partitions will be empty, but they are processed exactly like non-empty ones.

This PR checks that the `mapPartitionsInternal` iterator is non-empty before calling `ArrowPythonRunner` to start computation on the iterator.
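The "peek before starting the runner" idea can be sketched in Python. The actual change is in Scala; here `start_python_runner` is a hypothetical stand-in for launching `ArrowPythonRunner`, and `runner_starts` just counts how many times it is launched.

```python
from itertools import chain

_EMPTY = object()  # sentinel distinguishing "no first element" from any real value
runner_starts = 0


def start_python_runner(rows):
    """Hypothetical stand-in for the expensive per-partition Python runner."""
    global runner_starts
    runner_starts += 1
    return (r * 2 for r in rows)


def compute_partition(rows):
    it = iter(rows)
    first = next(it, _EMPTY)
    if first is _EMPTY:
        return iter(())  # empty partition: never start the runner
    # Put the peeked element back in front of the rest of the iterator.
    return start_python_runner(chain([first], it))


partitions = [[1, 2], [], [], [3]]
results = [list(compute_partition(p)) for p in partitions]
```

With mostly empty partitions, only the non-empty ones pay the runner start-up cost, which is consistent with the speedup in the benchmark below.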

## How was this patch tested?

Existing tests. Also ran the following benchmark, a simple example in which most partitions are empty:

```python
from pyspark.sql.functions import pandas_udf, PandasUDFType
from pyspark.sql.types import *

# Assumes an active SparkSession named `spark` (e.g. the pyspark shell).
df = spark.createDataFrame(
    [(1, 1.0), (1, 2.0), (2, 3.0), (2, 5.0), (2, 10.0)],
    ("id", "v"))

@pandas_udf("id long, v double", PandasUDFType.GROUPED_MAP)
def normalize(pdf):
    v = pdf.v
    return pdf.assign(v=(v - v.mean()) / v.std())

df.groupby("id").apply(normalize).count()
```

**Before**

```
In [4]: %timeit df.groupby("id").apply(normalize).count()
1.58 s ± 62.8 ms per loop (mean ± std. dev. of 7 runs, 1 loop each)
In [5]: %timeit df.groupby("id").apply(normalize).count()
1.52 s ± 29.5 ms per loop (mean ± std. dev. of 7 runs, 1 loop each)
In [6]: %timeit df.groupby("id").apply(normalize).count()
1.52 s ± 37.8 ms per loop (mean ± std. dev. of 7 runs, 1 loop each)
```

**After this change**

```
In [2]: %timeit df.groupby("id").apply(normalize).count()
646 ms ± 89.9 ms per loop (mean ± std. dev. of 7 runs, 1 loop each)
In [3]: %timeit df.groupby("id").apply(normalize).count()
408 ms ± 84.6 ms per loop (mean ± std. dev. of 7 runs, 1 loop each)
In [4]: %timeit df.groupby("id").apply(normalize).count()
381 ms ± 29.9 ms per loop (mean ± std. dev. of 7 runs, 1 loop each)
```

Closes#24926 from BryanCutler/pyspark-pandas_udf-map-agg-skip-empty-parts-SPARK-28128.

Authored-by: Bryan Cutler <cutlerb@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Expose more metrics in the evaluator: `weightedTruePositiveRate`, `weightedFalsePositiveRate`, `weightedFMeasure`, `truePositiveRateByLabel`, `falsePositiveRateByLabel`, `precisionByLabel`, `recallByLabel`, and `fMeasureByLabel`.
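To make the "by label" and "weighted" variants concrete, the sketch below computes one-vs-rest metrics per label and then weights each label by its frequency among the true labels. It is a plain-Python illustration assuming F-measure with beta = 1, not Spark's implementation; `multiclass_metrics` is a hypothetical name.

```python
from collections import Counter


def multiclass_metrics(pairs):
    """pairs: list of (prediction, label). Returns per-label and weighted metrics."""
    total = len(pairs)
    label_count = Counter(label for _, label in pairs)
    per_label = {}
    for c in label_count:
        tp = sum(1 for p, l in pairs if p == c and l == c)
        fp = sum(1 for p, l in pairs if p == c and l != c)
        fn = label_count[c] - tp
        tn = total - tp - fp - fn
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / label_count[c]  # true positive rate for label c
        fpr = fp / (fp + tn) if fp + tn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        per_label[c] = {"precision": precision, "recall": recall, "fpr": fpr, "f1": f1}

    def weighted(name):
        # Weight each label's metric by how often that label occurs in the data.
        return sum(per_label[c][name] * n / total for c, n in label_count.items())

    return per_label, {
        "weightedTruePositiveRate": weighted("recall"),
        "weightedFalsePositiveRate": weighted("fpr"),
        "weightedFMeasure": weighted("f1"),
    }
```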

## How was this patch tested?

Existing test cases, plus newly added ones.

Closes#24868 from zhengruifeng/multi_class_support_bylabel.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

When using `DROPMALFORMED` mode, corrupted records aren't dropped if the malformed columns aren't read. This behavior is caused by column pruning in the CSV parser. The current documentation of `DROPMALFORMED` doesn't mention the effect of column pruning, so users may be confused when `DROPMALFORMED` mode doesn't work as expected.

Column pruning also affects the other modes. This doc improvement adds a note to the documentation of `mode` explaining this.
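The interaction can be illustrated with a toy reader. This is a hypothetical sketch, not Spark's CSV parser: it converts only the requested columns and drops a record when one of those conversions fails, so a malformed value in an unread column goes unnoticed.

```python
import csv
import io


def read_dropmalformed(text, schema, columns):
    """Toy CSV reader (hypothetical): converts only the requested `columns` and,
    like DROPMALFORMED, drops a record when one of those conversions fails."""
    position = {name: i for i, (name, _) in enumerate(schema)}
    converter = dict(schema)
    rows = []
    for record in csv.reader(io.StringIO(text)):
        try:
            rows.append(tuple(converter[c](record[position[c]]) for c in columns))
        except ValueError:
            continue  # malformed in a column that was actually read: dropped
    return rows


data = "1,a\nx,b\n3,c\n"
schema = [("id", int), ("name", str)]
all_columns = read_dropmalformed(data, schema, ["id", "name"])
name_only = read_dropmalformed(data, schema, ["name"])
```

Reading both columns drops the `x,b` record, but reading only `name` keeps it, because the bad `id` value is never parsed.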

## How was this patch tested?

N/A. This is just doc change.

Closes#24894 from viirya/SPARK-28058.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This increases the minimum supported version of Pandas to 0.23.2. Using a lower version will raise an error `Pandas >= 0.23.2 must be installed; however, your version was 0.XX`. Also, a workaround for using pyarrow with Pandas 0.19.2 was removed.
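A version gate of this kind can be sketched as follows. `check_pandas_version` and `_version_key` are hypothetical helpers (not PySpark's functions); the sketch takes the installed version string as an argument so it runs without pandas, and it only compares the first three numeric fields.

```python
import re

MINIMUM_PANDAS_VERSION = "0.23.2"


def _version_key(version):
    """Turn '0.23.2' or '0.24.0rc1' into a comparable tuple of three ints."""
    key = []
    for part in version.split(".")[:3]:
        m = re.match(r"\d+", part)
        key.append(int(m.group()) if m else 0)
    while len(key) < 3:
        key.append(0)
    return tuple(key)


def check_pandas_version(installed):
    """Raise ImportError if `installed` is older than the minimum supported version."""
    if _version_key(installed) < _version_key(MINIMUM_PANDAS_VERSION):
        raise ImportError(
            "Pandas >= %s must be installed; however, your version was %s."
            % (MINIMUM_PANDAS_VERSION, installed))
```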

## How was this patch tested?

Existing Tests

Closes#24867 from BryanCutler/pyspark-increase-min-pandas-SPARK-28041.

Authored-by: Bryan Cutler <cutlerb@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Allow a Pandas UDF to take an iterator of `pd.Series` or an iterator of tuples of `pd.Series`.

Note that the UDF input argument is always a single iterator:

* If the UDF takes only one column as input, each element of the iterator is a `pd.Series` (a batch of that column's values).

* If the UDF takes multiple columns as input, each element of the iterator is a tuple of `pd.Series`, one per input column, in the same order as the inputs. For example:

```python
@pandas_udf("int", PandasUDFType.SCALAR_ITER)
def the_udf(iterator):
    for col1_batch, col2_batch in iterator:
        yield col1_batch + col2_batch

df.select(the_udf("col1", "col2"))
```

The UDF above adds col1 and col2.
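The calling convention can be simulated without Spark. In the sketch below, `run_scalar_iter` is a hypothetical driver (assuming pandas is installed) that slices the input columns into batches and hands the UDF one iterator, yielding a `pd.Series` for a single column or a tuple of `pd.Series` for several; it is not how PySpark's worker is implemented.

```python
import pandas as pd


def run_scalar_iter(udf, columns, batch_size):
    """Hypothetical driver: feed `udf` ONE iterator of column batches."""
    n = len(columns[0])

    def batches():
        for start in range(0, n, batch_size):
            chunk = [c.iloc[start:start + batch_size].reset_index(drop=True)
                     for c in columns]
            # One input column -> a pd.Series; several -> a tuple of pd.Series.
            yield chunk[0] if len(chunk) == 1 else tuple(chunk)

    return pd.concat(list(udf(batches())), ignore_index=True)


def add_cols(iterator):
    for col1_batch, col2_batch in iterator:
        yield col1_batch + col2_batch


def plus_100(iterator):
    for batch in iterator:
        yield batch + 100
```

Because the UDF receives the whole iterator, any setup before the `for` loop runs once per call rather than once per batch.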

I haven't added unit tests yet, but manual tests show it works, so it is ready for a first-pass review.

We can test several typical cases:

```python
from pyspark.sql import SparkSession
from pyspark.sql.functions import pandas_udf, PandasUDFType
from pyspark.sql.functions import udf
from pyspark.taskcontext import TaskContext

spark = SparkSession.builder.getOrCreate()
df = spark.createDataFrame([(1, 20), (3, 40)], ["a", "b"])

@pandas_udf("int", PandasUDFType.SCALAR_ITER)
def fi1(it):
    pid = TaskContext.get().partitionId()
    print("DBG: fi1: do init stuff, partitionId=" + str(pid))
    for batch in it:
        yield batch + 100
    print("DBG: fi1: do close stuff, partitionId=" + str(pid))

@pandas_udf("int", PandasUDFType.SCALAR_ITER)
def fi2(it):
    pid = TaskContext.get().partitionId()
    print("DBG: fi2: do init stuff, partitionId=" + str(pid))
    for batch in it:
        yield batch + 10000
    print("DBG: fi2: do close stuff, partitionId=" + str(pid))

@pandas_udf("int", PandasUDFType.SCALAR_ITER)
def fi3(it):
    pid = TaskContext.get().partitionId()
    print("DBG: fi3: do init stuff, partitionId=" + str(pid))
    for x, y in it:
        yield x + y * 10 + 100000
    print("DBG: fi3: do close stuff, partitionId=" + str(pid))

@pandas_udf("int", PandasUDFType.SCALAR)
def fp1(x):
    return x + 1000

@udf("int")
def fu1(x):
    return x + 10

# test select "pandas iter udf/pandas udf/sql udf" expressions at the same time.
# Note this case the `fi1("a"), fi2("b"), fi3("a", "b")` will generate only one plan,
# and `fu1("a")`, `fp1("a")` will generate another two separate plans.
df.select(fi1("a"), fi2("b"), fi3("a", "b"), fu1("a"), fp1("a")).show()

# test chain two pandas iter udf together
# Note this case `fi2(fi1("a"))` will generate only one plan
# Also note the init stuff/close stuff call order will be like:
# (debug output following)
# DBG: fi2: do init stuff, partitionId=0
# DBG: fi1: do init stuff, partitionId=0
# DBG: fi1: do close stuff, partitionId=0
# DBG: fi2: do close stuff, partitionId=0
df.select(fi2(fi1("a"))).show()

# test more complex chain
# Note this case `fi1("a"), fi2("a")` will generate one plan,
# and `fi3(fi1_output, fi2_output)` will generate another plan
df.select(fi3(fi1("a"), fi2("a"))).show()
```

## How was this patch tested?

To be added.


Closes#24643 from WeichenXu123/pandas_udf_iter.

Lead-authored-by: WeichenXu <weichen.xu@databricks.com>

Co-authored-by: Xiangrui Meng <meng@databricks.com>