### What changes were proposed in this pull request?

This PR cleans up several failures -- most of them silent -- in `dev/lint-python`. I don't understand how we haven't been bitten by these yet. Perhaps we've been lucky?

Fixes include:

* Fix how we compare versions. All the version checks currently in `master` silently fail with:

```
File "<string>", line 2
print(LooseVersion("""2.3.1""") >= LooseVersion("""2.4.0"""))
^
IndentationError: unexpected indent
```

Another problem is that `distutils.version` is undocumented and unsupported.

* Fix some basic bugs. For example, we have an incorrect reference to `$PYDOCSTYLEBUILD`, which doesn't exist and was causing the doc style test to silently fail with:

```

./dev/lint-python: line 193: --version: command not found

```

* Stop suppressing error output! It's hiding problems and serves no purpose here.
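
The version check from the first bullet can be written without `distutils.version`. The following is only a minimal sketch, assuming purely numeric dotted versions such as `2.3.1`; the helper names `parse_version` and `version_at_least` are hypothetical and are not taken from `dev/lint-python`:

```python
def parse_version(version):
    """Turn a dotted numeric version such as "2.3.1" into a tuple of ints."""
    return tuple(int(part) for part in version.strip().split("."))


def version_at_least(found, minimum):
    """Return True when `found` is the same as or newer than `minimum`."""
    a, b = parse_version(found), parse_version(minimum)
    # Pad the shorter tuple with zeros so that "2.4" and "2.4.0" compare equal.
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


print(version_at_least("2.3.1", "2.4.0"))   # False
print(version_at_least("2.10.0", "2.4.0"))  # True
```

Comparing integer tuples avoids the string-comparison trap where `"2.10.0"` would sort before `"2.4.0"`.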

### Why are the changes needed?

`lint-python` is part of our CI build and is currently doing any combination of the following: silently failing; incorrectly skipping tests; incorrectly downloading libraries when a suitable library is already available.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Lots of manual testing with `set -x` enabled.

Closes #27910 from nchammas/SPARK-31153-lint-python.

Authored-by: Nicholas Chammas <nicholas.chammas@liveramp.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

The current migration guide of SQL is too long for most readers to find the needed info. This PR groups the items in the migration guide of Spark SQL by the corresponding components.

Note: this PR does not change the contents of the migration guides. The attached figure is a screenshot taken after the change.

### Why are the changes needed?

The current migration guide of SQL is too long for most readers to find the needed info.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

N/A

Closes #27909 from gatorsmile/migrationGuideReorg.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This PR manually reverts the changes in #25292 and then wraps `java.lang.Error` in `QueryExecutionException` so that it can be passed to `QueryExecutionListener.onFailure`, which only accepts `Exception`.

The bug fix PR for 2.4 is #27904. It needs a separate PR because the affected code has changed significantly.

### Why are the changes needed?

Avoid API changes and fix a bug.

### Does this PR introduce any user-facing change?

Yes. This reverts an API change made in 3.0, so the `QueryExecutionListener` APIs will be the same as in 2.4.

### How was this patch tested?

The newly added test.

Closes #27907 from zsxwing/SPARK-31144.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Spark's Web UI is using an older version of Bootstrap (v. 2.3.2) for the portal pages. Bootstrap 2.x was moved to EOL in Aug 2013 and Bootstrap 3.x was moved to EOL in July 2019 (https://github.com/twbs/release). Older versions of Bootstrap are also getting flagged in security scans for various CVEs:

* https://snyk.io/vuln/SNYK-JS-BOOTSTRAP-72889

* https://snyk.io/vuln/SNYK-JS-BOOTSTRAP-173700

* https://snyk.io/vuln/npm:bootstrap:20180529

* https://snyk.io/vuln/npm:bootstrap:20160627

I haven't validated each CVE, but it would be nice to resolve any potential issues and get on a supported release.

The bad news is that there have been quite a few changes between Bootstrap 2 and Bootstrap 4. I've tried updating the library, refactoring/tweaking the CSS and JS to maintain a similar appearance and functionality, and testing the UI for functionality and appearance. This is a fairly large change so I'm sure additional testing and fixes will be needed.

### How was this patch tested?

This has been manually tested, but there is a ton of functionality and there are many pages and detail pages so it is very possible bugs introduced from the upgrade were missed. Additional testing and feedback is welcomed. If it appears a whole page was missed let me know and I'll take a pass at addressing that page/section.

Closes #27370 from clarkead/bootstrap4-core-upgrade.

Authored-by: Dale Clarke <a.dale.clarke@gmail.com>

Signed-off-by: Gengliang Wang <gengliang.wang@databricks.com>

### What changes were proposed in this pull request?

`StreamingQueryStatisticsPage` shows a message "No visualization information available because there is no batches" instead of showing empty timelines and histograms for empty streaming queries.

[Before this change applied]

[After this change applied]

### Why are the changes needed?

Empty charts are ugly and a little bit confusing.

It's better to clearly say "No visualization information available".

Also, this change fixes a JS error shown in the capture above.

This error occurs because `drawTimeline` in `streaming-page.js` is called even though `formattedDate` will be `undefined` for empty streaming queries.

### Does this PR introduce any user-facing change?

Yes. Screen captures are shown above.

### How was this patch tested?

Manually tested by creating an empty streaming query as follows.

```

val df = spark.readStream.format("socket").options(Map("host"->"<non-existing hostname>", "port"->"...")).load

df.writeStream.format("console").start

```

This streaming query will fail because of `non-existing hostname` and has no batches.

Closes #27755 from sarutak/fix-for-empty-batches.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Gengliang Wang <gengliang.wang@databricks.com>

### What changes were proposed in this pull request?

There is a minor issue in https://github.com/apache/spark/pull/26201

On the streaming statistics page, the following error appears

```

streaming-page.js:211 Uncaught TypeError: Cannot read property 'top' of undefined

at SVGCircleElement.<anonymous> (streaming-page.js:211)

at SVGCircleElement.__onclick (d3.min.js:1)

```

in the console after clicking the timeline graph.

This PR is to fix it.

### Why are the changes needed?

Fix a JavaScript execution error.

### Does this PR introduce any user-facing change?

No, the error shows up in the console.

### How was this patch tested?

Manual test.

Closes #27883 from gengliangwang/fixSelector.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Gengliang Wang <gengliang.wang@databricks.com>

### What changes were proposed in this pull request?

Since we reverted the original change in https://github.com/apache/spark/pull/27540, this PR removes the corresponding migration guide entry added in https://github.com/apache/spark/pull/24948

### Why are the changes needed?

N/A

### Does this PR introduce any user-facing change?

N/A

### How was this patch tested?

N/A

Closes #27896 from gatorsmile/SPARK-28093Followup.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

When loading DataFrames from JDBC datasource with Kerberos authentication, remote executors (yarn-client/cluster etc. modes) fail to establish a connection due to lack of Kerberos ticket or ability to generate it.

This is a real issue when trying to ingest data from kerberized data sources (SQL Server, Oracle) in enterprise environment where exposing simple authentication access is not an option due to IT policy issues.

In this PR I've added Postgres support (other supported databases will come in later PRs).

What this PR contains:

* Added `keytab` and `principal` JDBC options

* Added `ConnectionProvider` trait and its implementations:

  * `BasicConnectionProvider` => unsecure connection

  * `PostgresConnectionProvider` => postgres secure connection

* Added `ConnectionProvider` tests

* Added `PostgresKrbIntegrationSuite` docker integration test

* Created `SecurityUtils` to concentrate re-usable security related functionalities

* Documentation

### Why are the changes needed?

Missing JDBC kerberos support.

### Does this PR introduce any user-facing change?

Yes, 2 additional JDBC options added:

* keytab

* principal

If both are provided, Spark performs Kerberos authentication.

### How was this patch tested?

To demonstrate the functionality with a standalone application I've created this repository: https://github.com/gaborgsomogyi/docker-kerberos

* Additional + existing unit tests

* Additional docker integration test

* Test on cluster manually

* `SKIP_API=1 jekyll build`

Closes #27637 from gaborgsomogyi/SPARK-30874.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@apache.org>

### What changes were proposed in this pull request?

This reverts commit 47d6e80a2e.

### Why are the changes needed?

There is no standard requiring that `div` must return the type of the operand, and always returning long type looks fine. This is kind of a cosmetic change and we should avoid it if it breaks existing queries. This is similar to reverting TRIM function parameter order change.

### Does this PR introduce any user-facing change?

Yes, change the behavior of `div` back to be the same as 2.4.

### How was this patch tested?

N/A

Closes #27835 from cloud-fan/revert2.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR aims to recover `IntervalBenchmark` and `DateTimeBenchmark`, which broke when intervals were banned as output.

### Why are the changes needed?

This PR recovers the benchmark suite.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Manually, re-run the benchmark.

Closes #27885 from yaooqinn/SPARK-31111-2.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

spark.sql.legacy.timeParser.enabled should be removed from SQLConf and the migration guide

spark.sql.legacy.timeParsePolicy is the right one

### Why are the changes needed?

fix doc

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

Pass the jenkins

Closes #27889 from yaooqinn/SPARK-31131.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This PR (SPARK-31130) aims to pin the `Commons IO` version to `2.4` in the SBT build, as the Maven build already does.

### Why are the changes needed?

[HADOOP-15261](https://issues.apache.org/jira/browse/HADOOP-15261) upgraded `commons-io` from 2.4 to 2.5 in Apache Hadoop 3.1.

In `Maven`, Apache Spark always uses `Commons IO 2.4` based on `pom.xml`.

```

$ git grep commons-io.version

pom.xml: <commons-io.version>2.4</commons-io.version>

pom.xml: <version>${commons-io.version}</version>

```

However, `SBT` chooses `2.5`.

**branch-3.0**

```

$ build/sbt -Phadoop-3.2 "core/dependencyTree" | grep commons-io:commons-io | head -n1

[info] | | +-commons-io:commons-io:2.5

```

**branch-2.4**

```

$ build/sbt -Phadoop-3.1 "core/dependencyTree" | grep commons-io:commons-io | head -n1

[info] | | +-commons-io:commons-io:2.5

```

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Pass the Jenkins with `[test-hadoop3.2]` (the default PR Builder is `SBT`) and manually do the following locally.

```

build/sbt -Phadoop-3.2 "core/dependencyTree" | grep commons-io:commons-io | head -n1

```

Closes #27886 from dongjoon-hyun/SPARK-31130.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

AQE has a perf regression when using the default settings: if we coalesce the shuffle partitions into one or few partitions, we may leave many CPU cores idle and the perf is worse than with AQE off (which leverages all CPU cores).

Technically, this is not a bad thing. If there are many queries running at the same time, it's better to coalesce shuffle partitions into fewer partitions. However, the default settings of AQE should avoid perf regressions as far as possible.

This PR changes the default value of minPartitionNum when coalescing shuffle partitions, to be `SparkContext.defaultParallelism`, so that AQE can leverage all the CPU cores.
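
The effect of a minimum partition count on coalescing can be illustrated with a simplified simulation. This is only a sketch under stated assumptions, not Spark's implementation: the function `coalesce_partitions`, its parameters, and the partition sizes are all hypothetical.

```python
import math


def coalesce_partitions(sizes, advisory_target, min_partition_num):
    """Greedily merge adjacent shuffle partitions.

    Returns the start index of every coalesced partition.
    Assumes min_partition_num >= 1.
    """
    total = sum(sizes)
    # Cap the target size so that roughly `min_partition_num` partitions remain.
    capped_target = max(1, math.ceil(total / min_partition_num))
    target = min(advisory_target, capped_target)

    starts = [0] if sizes else []
    current = 0
    for i, size in enumerate(sizes):
        # Start a new coalesced partition once adding this one would overshoot.
        if i > 0 and current + size > target:
            starts.append(i)
            current = 0
        current += size
    return starts


sizes = [10] * 8  # eight small shuffle partitions (hypothetical sizes in MB)
print(len(coalesce_partitions(sizes, advisory_target=64, min_partition_num=1)))  # 2
print(len(coalesce_partitions(sizes, advisory_target=64, min_partition_num=4)))  # 4
```

With a minimum of 1, the eight partitions collapse into two tasks; with a minimum of 4 (standing in for the default parallelism), four tasks remain, so more cores stay busy.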

### Why are the changes needed?

avoid AQE perf regression

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

existing tests

Closes #27879 from cloud-fan/aqe.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add an example of a typed Scala UDF to the error message.

### Why are the changes needed?

Help users learn how to migrate to typed Scala UDFs.

### Does this PR introduce any user-facing change?

No, it's a new error message in Spark 3.0.

### How was this patch tested?

Pass Jenkins.

Closes #27884 from Ngone51/spark_31010_followup.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

As is common practice, and as the Spark docs suggest, users often copy their `hive-site.xml` to Spark directly from Hive projects. Sometimes the config file is not clean enough for Spark and may cause side effects.

For example, `hive.session.history.enabled` creates a log for Hive jobs that is useless for Spark and is not deleted on JVM exit.

This PR:

1) disables `hive.session.history.enabled` explicitly to avoid creating the `hive_job_log` file, e.g.

```

Hive history file=/var/folders/01/h81cs4sn3dq2dd_k4j6fhrmc0000gn/T//kentyao/hive_job_log_79c63b29-95a4-4935-a9eb-2d89844dfe4f_493861201.txt

```

2) sets `hive.execution.engine` to `spark` explicitly in case the config is `tez`, which causes unnecessary problems like this:

```

Exception in thread "main" java.lang.NoClassDefFoundError: org/apache/tez/dag/api/SessionNotRunning

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:529)

```

### Why are the changes needed?

Reduce internal complexity and the Hive-related cognitive load on users running Spark.

### Does this PR introduce any user-facing change?

Yes, the `hive_job_log` file will not be created even if enabled, and Spark will not try to initialize Tez-related components.

### How was this patch tested?

Added a UT and verified manually.

Closes #27827 from yaooqinn/SPARK-31066.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This patch logs a better message (at least one relevant to decommissioning) when registering the signal handler for SIGPWR fails. SIGPWR is non-POSIX and not all Unix-like OSes support it; macOS is an easy example.

### Why are the changes needed?

Spark already logs a message when it fails to register the handler for SIGPWR, but the error message is too general and gives no information about the impact. End users should be notified that failing to register the handler for SIGPWR effectively "disables" the decommission feature.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Manually tested by running a standalone master/worker on macOS 10.14.6 with `spark.worker.decommission.enabled= true` and submitting an example application to run executors.

(NOTE: the message may differ slightly, as it can be updated during the review phase.)

For worker log:

```

20/03/06 17:19:13 INFO Worker: Registering SIGPWR handler to trigger decommissioning.

20/03/06 17:19:13 INFO SignalUtils: Registering signal handler for PWR

20/03/06 17:19:13 WARN SignalUtils: Failed to register SIGPWR - disabling worker decommission.

java.lang.IllegalArgumentException: Unknown signal: PWR

at java.base/jdk.internal.misc.Signal.<init>(Signal.java:148)

at jdk.unsupported/sun.misc.Signal.<init>(Signal.java:139)

at org.apache.spark.util.SignalUtils$.$anonfun$registerSignal$1(SignalUtils.scala:95)

at scala.collection.mutable.HashMap.getOrElseUpdate(HashMap.scala:86)

at org.apache.spark.util.SignalUtils$.registerSignal(SignalUtils.scala:93)

at org.apache.spark.util.SignalUtils$.register(SignalUtils.scala:81)

at org.apache.spark.deploy.worker.Worker.<init>(Worker.scala:73)

at org.apache.spark.deploy.worker.Worker$.startRpcEnvAndEndpoint(Worker.scala:887)

at org.apache.spark.deploy.worker.Worker$.main(Worker.scala:855)

at org.apache.spark.deploy.worker.Worker.main(Worker.scala)

```

For executor:

```

20/03/06 17:21:52 INFO CoarseGrainedExecutorBackend: Registering PWR handler.

20/03/06 17:21:52 INFO SignalUtils: Registering signal handler for PWR

20/03/06 17:21:52 WARN SignalUtils: Failed to register SIGPWR - disabling decommission feature.

java.lang.IllegalArgumentException: Unknown signal: PWR

at java.base/jdk.internal.misc.Signal.<init>(Signal.java:148)

at jdk.unsupported/sun.misc.Signal.<init>(Signal.java:139)

at org.apache.spark.util.SignalUtils$.$anonfun$registerSignal$1(SignalUtils.scala:95)

at scala.collection.mutable.HashMap.getOrElseUpdate(HashMap.scala:86)

at org.apache.spark.util.SignalUtils$.registerSignal(SignalUtils.scala:93)

at org.apache.spark.util.SignalUtils$.register(SignalUtils.scala:81)

at org.apache.spark.executor.CoarseGrainedExecutorBackend.onStart(CoarseGrainedExecutorBackend.scala:86)

at org.apache.spark.rpc.netty.Inbox.$anonfun$process$1(Inbox.scala:120)

at org.apache.spark.rpc.netty.Inbox.safelyCall(Inbox.scala:203)

at org.apache.spark.rpc.netty.Inbox.process(Inbox.scala:100)

at org.apache.spark.rpc.netty.MessageLoop.org$apache$spark$rpc$netty$MessageLoop$$receiveLoop(MessageLoop.scala:75)

at org.apache.spark.rpc.netty.MessageLoop$$anon$1.run(MessageLoop.scala:41)

at java.base/java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:515)

at java.base/java.util.concurrent.FutureTask.run(FutureTask.java:264)

at java.base/java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1128)

at java.base/java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:628)

at java.base/java.lang.Thread.run(Thread.java:834)

```

Closes #27832 from HeartSaVioR/SPARK-31011.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

1. Compute the summary and update the distributions in one pass.

2. Remove the logic that checks `shouldDistributeGaussians`.

### Why are the changes needed?

In the current implementation, GMM needs to trigger two jobs per iteration:

1. one to compute the summary;

2. if `shouldDistributeGaussians = ((k - 1.0) / k) * numFeatures > 25.0`, another to update the distributions.

`shouldDistributeGaussians` is almost always true in practice, since numFeatures is likely to be greater than 25.

We can implement the above computation with a single job by following the logic in `KMeans`: use `reduceByKey` to compute the statistics for each center.
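
To see how quickly the threshold quoted above is crossed, the expression can be evaluated for a few values. This is a small illustrative sketch; the function name `should_distribute_gaussians` and the sample `(k, numFeatures)` pairs are chosen here for illustration only.

```python
def should_distribute_gaussians(k, num_features):
    """Evaluate the threshold quoted above: ((k - 1.0) / k) * numFeatures > 25.0."""
    return ((k - 1.0) / k) * num_features > 25.0


for k, num_features in [(2, 50), (2, 51), (10, 28), (10, 100)]:
    print(k, num_features, should_distribute_gaussians(k, num_features))
```

For `k = 2` the condition flips between 50 and 51 features, and for larger `k` it flips even earlier, which is consistent with the observation that the second job is triggered in most practical cases.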

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

existing testsuites

Closes #27784 from zhengruifeng/gmm_avoid_distri_gaussian.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

This PR (SPARK-31126) aims to upgrade the Kafka library to bring in client-side bug fixes such as KAFKA-8933.

### Why are the changes needed?

The following is the full release note.

- https://downloads.apache.org/kafka/2.4.1/RELEASE_NOTES.html

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Pass the Jenkins with the existing test.

Closes #27881 from dongjoon-hyun/SPARK-KAFKA-2.4.1.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

1. Add version information to the configuration of `Status`.

2. Update the docs of `Status`.

3. Add supplementary documentation for https://github.com/apache/spark/pull/27847

The information I collected is shown below.

Item name | Since version | JIRA ID | Commit ID | Note
-- | -- | -- | -- | --
spark.appStateStore.asyncTracking.enable | 2.3.0 | SPARK-20653 | 772e4648d95bda3353723337723543c741ea8476#diff-9ab674b7af7b2097f7d28cb6f5fd1e8c |
spark.ui.liveUpdate.period | 2.3.0 | SPARK-20644 | c7f38e5adb88d43ef60662c5d6ff4e7a95bff580#diff-9ab674b7af7b2097f7d28cb6f5fd1e8c |
spark.ui.liveUpdate.minFlushPeriod | 2.4.2 | SPARK-27394 | a8a2ba11ac10051423e58920062b50f328b06421#diff-9ab674b7af7b2097f7d28cb6f5fd1e8c |
spark.ui.retainedJobs | 1.2.0 | SPARK-2321 | 9530316887612dca060a128fca34dd5a6ab2a9a9#diff-1f32bcb61f51133bd0959a4177a066a5 |
spark.ui.retainedStages | 0.9.0 | None | 112c0a1776bbc866a1026a9579c6f72f293414c4#diff-1f32bcb61f51133bd0959a4177a066a5 | 0.9.0-incubating-SNAPSHOT
spark.ui.retainedTasks | 2.0.1 | SPARK-15083 | 55db26245d69bb02b7d7d5f25029b1a1cd571644#diff-6bdad48cfc34314e89599655442ff210 |
spark.ui.retainedDeadExecutors | 2.0.0 | SPARK-7729 | 9f4263392e492b5bc0acecec2712438ff9a257b7#diff-a0ba36f9b1f9829bf3c4689b05ab6cf2 |
spark.ui.dagGraph.retainedRootRDDs | 2.1.0 | SPARK-17171 | cc87280fcd065b01667ca7a59a1a32c7ab757355#diff-3f492c527ea26679d4307041b28455b8 |
spark.metrics.appStatusSource.enabled | 3.0.0 | SPARK-30060 | 60f20e5ea2000ab8f4a593b5e4217fd5637c5e22#diff-9f796ae06b0272c1f0a012652a5b68d0 |

### Why are the changes needed?

Supplemental configuration version information.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing UT

Closes #27848 from beliefer/add-version-to-status-config.

Lead-authored-by: beliefer <beliefer@163.com>

Co-authored-by: Jiaan Geng <beliefer@163.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

A few improvements to the sql ref SELECT doc:

1. correct the syntax of SELECT query

2. correct the default of null sort order

3. correct the GROUP BY syntax

4. several minor fixes

### Why are the changes needed?

refine document

### Does this PR introduce any user-facing change?

N/A

### How was this patch tested?

N/A

Closes #27866 from cloud-fan/doc.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Replace a sleep with waiting for the first collect to happen to try and make the K8s test code more reliable.

### Why are the changes needed?

Currently the Decommissioning test appears to be flaky in Jenkins.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Ran K8s test suite in a loop on minikube on my desktop for 10 iterations without this test failing on any of the runs.

Closes #27858 from holdenk/SPARK-31062-Improve-Spark-Decommissioning-K8s-test-teliability.

Authored-by: Holden Karau <hkarau@apple.com>

Signed-off-by: Holden Karau <hkarau@apple.com>

### What changes were proposed in this pull request?

`ChiSqSelector` depends on `mllib.ChiSqSelectorModel` for the selection logic. This PR removes that dependency.

### Why are the changes needed?

This is an intermediate PR that removes the `ChiSqSelector` dependency on `mllib.ChiSqSelectorModel`. The next subtask will extract the common code between `ChiSqSelector` and `FValueSelector` into an abstract `Selector`.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

New and existing tests

Closes #27841 from huaxingao/chisq.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

This PR reverts https://github.com/apache/spark/pull/26051 and https://github.com/apache/spark/pull/26066

### Why are the changes needed?

There is no standard requiring that `size(null)` must return null, and returning -1 looks reasonable as well. This is kind of a cosmetic change and we should avoid it if it breaks existing queries. This is similar to reverting TRIM function parameter order change.

### Does this PR introduce any user-facing change?

Yes, change the behavior of `size(null)` back to be the same as 2.4.

### How was this patch tested?

N/A

Closes #27834 from cloud-fan/revert.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

`DateTimeUtilsSuite.daysToMicros and microsToDays` takes 30 seconds, which is too long for a UT.

This PR changes the test to check random data, to reduce testing time. Now this test takes 1 second.

### Why are the changes needed?

make test faster

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

N/A

Closes #27873 from cloud-fan/test.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR makes `dev/make-distribution.sh` a bit easier to use by not hiding errors thrown by Maven.

As a supporting change, this PR also suppresses progress bar output from curl and wget that may be output from within `build/mvn`.

### Why are the changes needed?

It's surprising for command-line options to be position-dependent. The errors that get thrown when you pass the correct option (like `--pip`) but in the wrong order are confusing.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

I ran a few invocations of `make-distribution.sh` to confirm that, when passed incorrect options, I can actually see the errors thrown directly by Maven.

Closes #27800 from nchammas/SPARK-31041-make-distribution.

Authored-by: Nicholas Chammas <nicholas.chammas@liveramp.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

In the PR, I propose to change conversion of java.sql.Timestamp/Date values to/from internal values of Catalyst's TimestampType/DateType before cutover day `1582-10-15` of Gregorian calendar. I propose to construct local date-time from microseconds/days since the epoch. Take each date-time component `year`, `month`, `day`, `hour`, `minute`, `second` and `second fraction`, and construct java.sql.Timestamp/Date using the extracted components.

### Why are the changes needed?

This rebases the underlying time/date offset so that collected java.sql.Timestamp/Date values have the same local date-time components as the original values in the Gregorian calendar.

Here is the example which demonstrates the issue:

```scala

scala> sql("select date '1100-10-10'").collect()

res1: Array[org.apache.spark.sql.Row] = Array([1100-10-03])

```
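
The 7-day shift in this example is consistent with the gap between the proleptic Gregorian calendar and the Julian calendar in the year 1100. The sketch below is an illustration only, not Spark code: it assumes the old behavior is equivalent to reading a proleptic Gregorian day count as a Julian-calendar date, and the helper name `gregorian_to_julian` is hypothetical.

```python
from datetime import date


def gregorian_to_julian(d):
    """Return the Julian-calendar (year, month, day) for a proleptic Gregorian date."""
    # Python's `date` is proleptic Gregorian; shift its ordinal to a Julian Day Number.
    jdn = d.toordinal() + 1721425
    # Standard integer conversion from a Julian Day Number to a Julian calendar date.
    f = jdn + 1401
    e = 4 * f + 3
    g = (e % 1461) // 4
    h = 5 * g + 2
    day = (h % 153) // 5 + 1
    month = (h // 153 + 2) % 12 + 1
    year = e // 1461 - 4716 + (14 - month) // 12
    return year, month, day


print(gregorian_to_julian(date(1100, 10, 10)))  # (1100, 10, 3)
print(gregorian_to_julian(date(1582, 10, 15)))  # (1582, 10, 5)
```

The second call shows the cutover day itself: Gregorian `1582-10-15` is the day after Julian `1582-10-04`.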

### Does this PR introduce any user-facing change?

Yes, after the changes:

```scala

scala> sql("select date '1100-10-10'").collect()

res0: Array[org.apache.spark.sql.Row] = Array([1100-10-10])

```

### How was this patch tested?

By running `DateTimeUtilsSuite`, `DateFunctionsSuite` and `DateExpressionsSuite`.

Closes #27807 from MaxGekk/rebase-timestamp-before-1582.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

fix the error caused by interval output in ExtractBenchmark

### Why are the changes needed?

fix a bug in the test

```

[info] Running case: cast to interval

[error] Exception in thread "main" org.apache.spark.sql.AnalysisException: Cannot use interval type in the table schema.;;

[error] OverwriteByExpression RelationV2[] noop-table, true, true

[error] +- Project [(subtractdates(cast(cast(id#0L as timestamp) as date), -719162) + subtracttimestamps(cast(id#0L as timestamp), -30610249419876544)) AS ((CAST(CAST(id AS TIMESTAMP) AS DATE) - DATE '0001-01-01') + (CAST(id AS TIMESTAMP) - TIMESTAMP '1000-01-01 01:02:03.123456'))#2]

[error] +- Range (1262304000, 1272304000, step=1, splits=Some(1))

[error]

[error] at org.apache.spark.sql.catalyst.util.TypeUtils$.failWithIntervalType(TypeUtils.scala:106)

[error] at org.apache.spark.sql.catalyst.analysis.CheckAnalysis.$anonfun$checkAnalysis$25(CheckAnalysis.scala:389)

[error] at org.a

```

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

re-run benchmark

Closes #27867 from yaooqinn/SPARK-31111.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

When encoding Java Beans to Spark DataFrame, respect `javax.annotation.Nonnull` and produce non-null fields.

### Why are the changes needed?

When encoding Java Beans to Spark DataFrame, non-primitive types are encoded as nullable fields. Although it works for most cases, it can be an issue in a few situations, e.g. the one described in the JIRA ticket when saving a DataFrame to Avro format with a non-null field.

We should allow Spark users more flexibility when creating Spark DataFrame from Java Beans. Currently, Spark users cannot create DataFrame with non-nullable fields in the schema from beans with non-nullable properties.

Although it is possible to project top-level columns with SQL expressions like `AssertNotNull` to make it non-null, for nested fields it is more tricky to do it similarly.

### Does this PR introduce any user-facing change?

Yes. After this change, Spark users can use `javax.annotation.Nonnull` to annotate non-null fields in Java Beans when encoding beans to Spark DataFrame.

### How was this patch tested?

Added unit test.

Closes #27851 from viirya/SPARK-31071.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

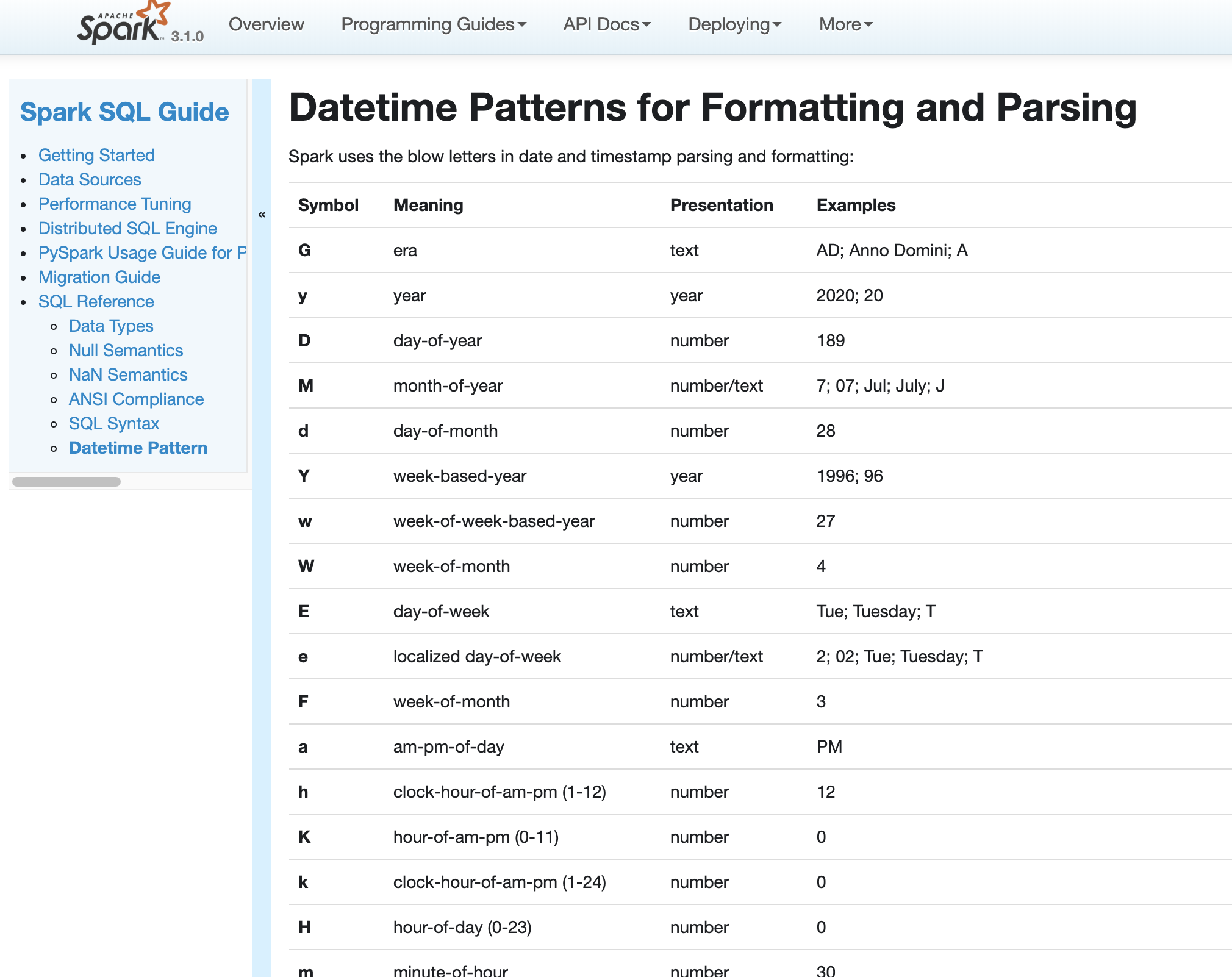

### What changes were proposed in this pull request?

In Spark version 2.4 and earlier, datetime parsing, formatting and conversion are performed by using the hybrid calendar (Julian + Gregorian).

Since the Proleptic Gregorian calendar is the de facto calendar worldwide, as well as the one chosen in the ANSI SQL standard, Spark 3.0 switches to it by using Java 8 API classes (the java.time packages that are based on ISO chronology). The switch was completed in SPARK-26651.

After the switch, however, some patterns are not compatible between Java 8 and Java 7, so Spark needs its own definition of the patterns rather than depending on the Java API.

In this PR, we achieve this by documenting the patterns and shadowing the incompatible letters. See more details in [SPARK-31030](https://issues.apache.org/jira/browse/SPARK-31030)

### Why are the changes needed?

For backward compatibility.

### Does this PR introduce any user-facing change?

No.

After we define our own datetime parsing and formatting patterns, the behavior is the same as in old Spark versions.

### How was this patch tested?

Existing and newly added UT.

Locally document test:

Closes #27830 from xuanyuanking/SPARK-31030.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?


There are two problems when splitting skewed partitions:

1. It's possible that we can't split the skewed partition, in which case we shouldn't create a skew join.

2. When splitting, it's possible that we create a partition for a very small amount of data.

This PR fixes them:

1. Don't create `PartialReducerPartitionSpec` if we can't split.

2. Merge small partitions into the previous partition.
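The two fixes can be illustrated with a minimal, standalone Python sketch. It is not the Spark implementation: the function names, the greedy packing strategy and the `small_fraction` threshold are all assumptions made for illustration.

```python
from typing import List, Optional


def pack_into_chunks(sizes: List[int], target_size: int,
                     small_fraction: float = 0.2) -> List[List[int]]:
    """Greedily pack map output sizes into chunks of roughly target_size.

    A chunk smaller than small_fraction * target_size (a hypothetical
    threshold) is merged into the previous chunk instead of standing alone.
    """
    chunks: List[List[int]] = []

    def close(chunk: List[int]) -> None:
        # Merge a tiny chunk into the previous one when there is one.
        if chunks and sum(chunk) < small_fraction * target_size:
            chunks[-1].extend(chunk)
        else:
            chunks.append(chunk)

    current: List[int] = []
    for size in sizes:
        if current and sum(current) + size > target_size:
            close(current)
            current = []
        current.append(size)
    if current:
        close(current)
    return chunks


def plan_split(sizes: List[int], target_size: int) -> Optional[List[List[int]]]:
    """Return the chunks, or None when the partition cannot be split."""
    chunks = pack_into_chunks(sizes, target_size)
    # With a single chunk there is nothing to split, so no skew handling.
    return chunks if len(chunks) > 1 else None


split = plan_split([50, 50, 50, 50, 10], target_size=100)
unsplittable = plan_split([30], target_size=100)
```

Here the trailing 10-byte remainder is folded into the second chunk rather than becoming its own tiny partition, and the single-block input yields `None` so that no skew join is planned for it.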

### Why are the changes needed?


Make skew join split skewed partitions more evenly.

### Does this PR introduce any user-facing change?


No.

### How was this patch tested?


Updated the existing test.

Closes #27833 from cloud-fan/aqe.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

Replace legacy `ReduceNumShufflePartitions` with `CoalesceShufflePartitions` in comment.

### Why are the changes needed?

The rule `ReduceNumShufflePartitions` has been renamed to `CoalesceShufflePartitions`, so we should update the related comment as well.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

N/A.

Closes #27865 from Ngone51/spark_31037_followup.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Currently, we parse intervals either from multi-unit strings or from date-time/year-month pattern strings. The former handles all whitespace; the latter does not, not even plain spaces. This PR makes the latter tolerate whitespace as well.

### Why are the changes needed?

Behavior consistency.

### Does this PR introduce any user-facing change?

Yes. An interval in date-time/year-month format such as

```

select interval '\n-\t10\t 12:34:46.789\t' day to second

-- !query 126 schema

struct<INTERVAL '-10 days -12 hours -34 minutes -46.789 seconds':interval>

-- !query 126 output

-10 days -12 hours -34 minutes -46.789 seconds

```

is valid now.
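As a rough illustration of whitespace-tolerant parsing for the day-to-second form, here is a small Python sketch. It is not Spark's parser: the regular expression and the function name are assumptions, and only the shape of the input is taken from the example above.

```python
import re
from decimal import Decimal

# Hypothetical pattern: optional sign, days, then hours:minutes:seconds,
# with arbitrary whitespace around and between the parts.
_DAY_TO_SECOND = re.compile(
    r"\s*([+-])?\s*(\d+)\s+(\d+):(\d+):(\d+(?:\.\d+)?)\s*"
)


def parse_day_to_second(text: str):
    """Return (days, hours, minutes, seconds), each carrying the sign."""
    match = _DAY_TO_SECOND.fullmatch(text)
    if match is None:
        raise ValueError(f"not a day-to-second interval: {text!r}")
    sign = -1 if match.group(1) == "-" else 1
    days = sign * int(match.group(2))
    hours = sign * int(match.group(3))
    minutes = sign * int(match.group(4))
    seconds = sign * Decimal(match.group(5))
    return days, hours, minutes, seconds


parsed = parse_day_to_second("\n-\t10\t 12:34:46.789\t")
```

The sign is applied to every field, which matches the all-negative output shown in the query result above.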

### How was this patch tested?

Added UTs.

Closes #26815 from yaooqinn/SPARK-30189.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

`RuleExecutor` already supports metering for analyzer/optimizer rules. By providing this information in `PlanChangeLogger`, users can get more information when debugging rule changes.

This PR enhances `PlanChangeLogger` to display `RuleExecutor` metrics. This could be done simply by calling the existing APIs `resetMetrics` and `dumpTimeSpent`, but that might conflict with a user who is also collecting the total metrics of a SQL job. Thus I introduced `QueryExecutionMetrics`, a snapshot of `QueryExecutionMetering`, to better support this feature.

Information added to `PlanChangeLogger`:

```

=== Metrics of Executed Rules ===

Total number of runs: 554

Total time: 0.107756568 seconds

Total number of effective runs: 11

Total time of effective runs: 0.047615486 seconds

```
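The snapshot idea can be sketched in a few lines of Python. This is only an analogy for why a snapshot avoids the conflict with resetting shared counters; the class and method names are hypothetical and do not mirror the Scala API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricsSnapshot:
    """Immutable copy of the cumulative counters at one point in time."""
    num_runs: int
    total_time_ns: int

    def __sub__(self, earlier: "MetricsSnapshot") -> "MetricsSnapshot":
        # The difference of two snapshots covers only the work in between.
        return MetricsSnapshot(
            self.num_runs - earlier.num_runs,
            self.total_time_ns - earlier.total_time_ns,
        )


class Metering:
    """Cumulative counters shared by everyone who reads metrics."""

    def __init__(self) -> None:
        self._num_runs = 0
        self._total_time_ns = 0

    def record_run(self, time_ns: int) -> None:
        self._num_runs += 1
        self._total_time_ns += time_ns

    def snapshot(self) -> MetricsSnapshot:
        return MetricsSnapshot(self._num_runs, self._total_time_ns)


metering = Metering()
metering.record_run(500)           # work done before the query of interest
before = metering.snapshot()
metering.record_run(200)
metering.record_run(300)
delta = metering.snapshot() - before   # metrics for this query only
totals = metering.snapshot()           # job-wide totals stay untouched
```

Because nothing is reset, a second consumer that tracks job-wide totals still sees all three runs.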

### Why are the changes needed?

Provide a better user experience when debugging plan changes.

### Does this PR introduce any user-facing change?

It only adds more debugging info to `planChangeLog`, whose default log level is TRACE.

### How was this patch tested?

Updated existing tests to verify the new logs.

Closes #27846 from Eric5553/ExplainRuleExecMetrics.

Authored-by: Eric Wu <492960551@qq.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

I found a lot of scattered configs in `Streaming`. I think we should gather these configs in one place.

### Why are the changes needed?

To gather the scattered configs in one place.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing UTs.

Closes #27744 from beliefer/arrange-scattered-streaming-config.

Authored-by: beliefer <beliefer@163.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR proposes two things:

1. Convert `null` to `string` type during schema inference of `schema_of_json`, as the JSON datasource does. This is a bug fix as well, because `null` is not a properly DDL-formatted string and the SQL parser cannot recognise it as a type string. We should match the JSON datasource and return a string type so that `schema_of_json` returns a properly DDL-formatted string.

2. Let `schema_of_json` respect `dropFieldIfAllNull` option during schema inference.
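The intended inference behavior can be approximated with a small Python sketch over parsed JSON. It is an illustration only, not Spark's inference code: the function names are hypothetical, arrays are left out, and the exact type names are assumptions.

```python
import json
from typing import Any, Optional


def infer_type(value: Any, drop_field_if_all_null: bool) -> Optional[str]:
    """Return a DDL-like type string, or None for null / all-null values."""
    if value is None:
        return None
    if isinstance(value, bool):      # check bool before int: bool is an int
        return "boolean"
    if isinstance(value, int):
        return "bigint"
    if isinstance(value, float):
        return "double"
    if isinstance(value, str):
        return "string"
    if isinstance(value, dict):
        fields = []
        for name in sorted(value):
            field_type = infer_type(value[name], drop_field_if_all_null)
            if field_type is None:
                if drop_field_if_all_null:
                    continue             # drop fields that are entirely null
                field_type = "string"    # otherwise null falls back to string
            fields.append(f"{name}:{field_type}")
        if not fields and drop_field_if_all_null:
            return None                  # a struct with no fields left is dropped too
        return "struct<" + ",".join(fields) + ">"
    raise NotImplementedError("arrays are omitted from this sketch")


def schema_of_json_sketch(text: str, drop_field_if_all_null: bool = False) -> str:
    inferred = infer_type(json.loads(text), drop_field_if_all_null)
    return inferred if inferred is not None else "string"


doc = '{"id": "a", "drop": {"drop": null}}'
kept = schema_of_json_sketch(doc)
dropped = schema_of_json_sketch(doc, drop_field_if_all_null=True)
```

The key point is that the result never contains a `null` type name, so it can always be fed back to a DDL parser.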

### Why are the changes needed?

To let `schema_of_json` return a properly DDL-formatted string and respect the `dropFieldIfAllNull` option.

### Does this PR introduce any user-facing change?

Yes, it does.

```scala

import collection.JavaConverters._

import org.apache.spark.sql.functions._

spark.range(1).select(schema_of_json(lit("""{"id": ""}"""))).show()

spark.range(1).select(schema_of_json(lit("""{"id": "a", "drop": {"drop": null}}"""), Map("dropFieldIfAllNull" -> "true").asJava)).show(false)

```

**Before:**

```

struct<id:null>

struct<drop:struct<drop:null>,id:string>

```

**After:**

```

struct<id:string>

struct<id:string>

```

### How was this patch tested?

Manually tested, and unit tests were added.

Closes #27854 from HyukjinKwon/SPARK-31065.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This PR changes the type of `CustomShuffleReaderExec`'s `partitionSpecs` from `Array` to `Seq`, since `Array` compares references, not values, for equality, which could lead to plan reuse problems.

### Why are the changes needed?

Unlike `Seq`, `Array` compares references, not values, for equality, which could lead to plan reuse problems.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Passes existing UTs.

Closes #27857 from maryannxue/aqe-customreader-fix.

Authored-by: maryannxue <maryannxue@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This is a follow-up to https://github.com/apache/spark/pull/27650, which allowed a `None` provider for create table. Here we do the same thing for ReplaceTable.

### Why are the changes needed?

Currently the ASTBuilder doesn't seem to allow `replace` without a `USING` clause, but this change would allow `DataFrameWriterV2` to use the statements instead of the commands directly.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing tests.

Closes #27838 from yuchenhuo/SPARK-30902.

Authored-by: Yuchen Huo <yuchen.huo@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

With SPARK-27651 we now support host-local reads for shuffle, but only when the external shuffle service is enabled. Update the config docs to state that.

### Why are the changes needed?

To clarify the dependency.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

n/a

Closes #27812 from tgravescs/SPARK-27651-follow.

Authored-by: Thomas Graves <tgraves@nvidia.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Adding a note to document `Row.asDict` behavior when there are duplicate fields.

### Why are the changes needed?

When a row contains duplicate fields, `asDict` and `__getitem__` behave differently. We should document this so that users know the difference explicitly.
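A pure-Python analogue shows how two lookups over the same duplicated field names can disagree. It does not use PySpark; the assumption here is that name lookup resolves to the first matching field while building a dict keeps the last one, which may not match every version of `Row`.

```python
# Field names and values of a hypothetical row with a duplicated field "a".
fields = ["a", "b", "a"]
values = (1, 2, 3)


def get_item(name: str) -> int:
    """Look a value up by name; list.index returns the first match."""
    return values[fields.index(name)]


def as_dict() -> dict:
    """Build a dict; later duplicates overwrite earlier ones."""
    return dict(zip(fields, values))


by_item = get_item("a")
by_dict = as_dict()["a"]
```

With these inputs the two access paths return different values for `"a"`, which is the kind of difference the note is meant to warn about.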

### Does this PR introduce any user-facing change?

No. Documentation change only.

### How was this patch tested?

Existing tests.

Closes #27853 from viirya/SPARK-30941.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Audit the new ML Scala APIs introduced in 3.0 and fix the issues found.

### Why are the changes needed?

### Does this PR introduce any user-facing change?

Yes. Some doc changes.

### How was this patch tested?

Existing tests.

Closes #27818 from huaxingao/spark-30929.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

In `getMapLocation`, change the condition from `...endMapIndex < statuses.length` to `...endMapIndex <= statuses.length`.

### Why are the changes needed?

`endMapIndex` is exclusive, so `statuses.length` itself must be accepted as a valid value when comparing. Otherwise, we can't get the location for the last mapIndex.
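The off-by-one can be reproduced with a small Python sketch of a half-open range check. The function names and the list of host names are hypothetical stand-ins, not the Spark code.

```python
from typing import List


def locations_buggy(statuses: List[str], start: int, end: int) -> List[str]:
    """Old check: rejects end == len(statuses), so the last index is unreachable."""
    if 0 <= start < end < len(statuses):
        return statuses[start:end]
    return []


def locations_fixed(statuses: List[str], start: int, end: int) -> List[str]:
    """New check: end is exclusive, so it may equal len(statuses)."""
    if 0 <= start < end <= len(statuses):
        return statuses[start:end]
    return []


hosts = ["host-a", "host-b", "host-c"]
old = locations_buggy(hosts, 0, 3)
new = locations_fixed(hosts, 0, 3)
```

Requesting the full range `[0, 3)` returns nothing under the old condition and all three hosts under the new one.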

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Updated the existing test.

Closes #27850 from Ngone51/fix_getmaploction.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

I've applied the following changes to StagePage.

1. Added `Shuffle Write Time` to the task metrics summary.

2. Added a checkbox for `Shuffle Write Time` as an additional metric.

3. Renamed the `Write Time` column in the task table to `Shuffle Write Time` and made it an additional column.

### Why are the changes needed?

The task metrics summary doesn't show `Shuffle Write Time` even though it shows `Shuffle Read Blocked Time`.

`Shuffle Read Blocked Time` is treated as an additional metric, so I also made `Shuffle Write Time` an additional metric.

### Does this PR introduce any user-facing change?

Yes. After this change, the task metrics summary can show `Shuffle Write Time`, and its visibility is controlled by a checkbox.

The `Write Time` column is already shown in the task table, but the title is ambiguous, so I've renamed it to `Shuffle Write Time`.

After this change, this column is also an additional column, like `Shuffle Read Blocked Time`.

### How was this patch tested?

I've tested manually using the following code and confirmed the UI.

`sc.parallelize(1 to 1000).map(x => (x,x)).reduceByKey(_+_).collect`

Closes #27837 from sarutak/write-time.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>