### What changes were proposed in this pull request?

This PR tests `ThriftServerQueryTestSuite` in asynchronous mode.

### Why are the changes needed?

The default value of `spark.sql.hive.thriftServer.async` is `true`.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

```

build/sbt "hive-thriftserver/test-only *.ThriftServerQueryTestSuite" -Phive-thriftserver

build/mvn -Dtest=none -DwildcardSuites=org.apache.spark.sql.hive.thriftserver.ThriftServerQueryTestSuite test -Phive-thriftserver

```

Closes #26172 from wangyum/SPARK-29516.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

This PR refines `ThriftServerQueryTestSuite.blackList` to reuse the blacklist of `SQLQueryTestSuite` instead of duplicating every test case from `SQLQueryTestSuite.blackList`.

### Why are the changes needed?

To reduce code duplication.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

N/A

Closes #26188 from fuwhu/SPARK-TBD.

Authored-by: fuwhu <bestwwg@163.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

When adaptive execution is enabled, Spark users connected through JDBC always get the generic adaptive execution error, whatever the underlying root cause is. This is very confusing, and we have to check the driver log to find out what actually went wrong.

```shell

0: jdbc:hive2://localhost:10000> SELECT * FROM testData join testData2 ON key = v;

SELECT * FROM testData join testData2 ON key = v;

Error: Error running query: org.apache.spark.SparkException: Adaptive execution failed due to stage materialization failures. (state=,code=0)

0: jdbc:hive2://localhost:10000>

```

For example, when a job queried from JDBC fails because of a missing HDFS block, the user still gets the error message `Adaptive execution failed due to stage materialization failures`.

The easiest way to reproduce this is to change the code of `AdaptiveSparkPlanExec` so that it reports an exception when it handles `StageSuccess`.

```scala

case class AdaptiveSparkPlanExec(

events.drainTo(rem)

(Seq(nextMsg) ++ rem.asScala).foreach {

case StageSuccess(stage, res) =>

// stage.resultOption = Some(res)

val ex = new SparkException("Wrapper Exception",

new IllegalArgumentException("Root cause is IllegalArgumentException for Test"))

errors.append(

new SparkException(s"Failed to materialize query stage: ${stage.treeString}", ex))

case StageFailure(stage, ex) =>

errors.append(

new SparkException(s"Failed to materialize query stage: ${stage.treeString}", ex))

```
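One way to picture the intended behavior is to walk the `getCause` chain of the failure and report the innermost exception. The following is a minimal sketch using only the JDK; `RootCause` and `rootCause` are hypothetical names, and the patch itself may unwrap the error differently.

```scala
object RootCause {
  // Follow getCause until the innermost exception is reached.
  // Stops if an exception reports itself as its own cause, to avoid looping.
  @scala.annotation.tailrec
  def rootCause(t: Throwable): Throwable = {
    val cause = t.getCause
    if (cause == null || (cause eq t)) t else rootCause(cause)
  }
}
```

Applied to a chain shaped like the one in the reproduction above (an outer failure wrapping "Wrapper Exception" wrapping an `IllegalArgumentException`), `rootCause` returns the `IllegalArgumentException`.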

### Why are the changes needed?

To make the error message more user-friendly and more useful for queries issued through JDBC.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Manually test query:

```shell

0: jdbc:hive2://localhost:10000> CREATE TEMPORARY VIEW testData (key, value) AS SELECT explode(array(1, 2, 3, 4)), cast(substring(rand(), 3, 4) as string);

CREATE TEMPORARY VIEW testData (key, value) AS SELECT explode(array(1, 2, 3, 4)), cast(substring(rand(), 3, 4) as string);

+---------+--+

| Result |

+---------+--+

+---------+--+

No rows selected (0.225 seconds)

0: jdbc:hive2://localhost:10000> CREATE TEMPORARY VIEW testData2 (k, v) AS SELECT explode(array(1, 1, 2, 2)), cast(substring(rand(), 3, 4) as int);

CREATE TEMPORARY VIEW testData2 (k, v) AS SELECT explode(array(1, 1, 2, 2)), cast(substring(rand(), 3, 4) as int);

+---------+--+

| Result |

+---------+--+

+---------+--+

No rows selected (0.043 seconds)

```

Before:

```shell

0: jdbc:hive2://localhost:10000> SELECT * FROM testData join testData2 ON key = v;

SELECT * FROM testData join testData2 ON key = v;

Error: Error running query: org.apache.spark.SparkException: Adaptive execution failed due to stage materialization failures. (state=,code=0)

0: jdbc:hive2://localhost:10000>

```

After:

```shell

0: jdbc:hive2://localhost:10000> SELECT * FROM testData join testData2 ON key = v;

SELECT * FROM testData join testData2 ON key = v;

Error: Error running query: java.lang.IllegalArgumentException: Root cause is IllegalArgumentException for Test (state=,code=0)

0: jdbc:hive2://localhost:10000>

```

Closes #25960 from LantaoJin/SPARK-29283.

Authored-by: lajin <lajin@ebay.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

Support FETCH_PRIOR fetching in Thriftserver, and report the correct fetch start offset in TFetchResultsResp.results.startRowOffset.

The semantics of FETCH_PRIOR are as follows. Assume the previous fetch returned a block of rows from offsets [10, 20):

* calling FETCH_PRIOR(maxRows=5) will scroll back and return rows [5, 10)

* calling FETCH_PRIOR(maxRows=10) again, will scroll back, but can't go earlier than 0. It will nevertheless return 10 rows, returning rows [0, 10) (overlapping with the previous fetch)

* calling FETCH_PRIOR(maxRows=4) again will again return rows starting from offset 0 - [0, 4)

* calling FETCH_NEXT(maxRows=6) after that will move the cursor forward and return rows [4, 10)
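The scrolling rules above can be modeled with a small cursor that remembers the bounds of the last returned block. This is only an illustrative sketch: `FetchCursor`, `fetchNext` and `fetchPrior` are hypothetical names, not the Thriftserver implementation, and offsets are half-open ranges `[start, end)`.

```scala
// Hypothetical model of a result-set cursor over `totalRows` rows.
final class FetchCursor(totalRows: Long) {
  // Bounds of the block returned by the most recent fetch.
  private var lastStart = 0L
  private var lastEnd = 0L

  // FETCH_NEXT: continue right after the previous block.
  def fetchNext(maxRows: Long): (Long, Long) = {
    lastStart = lastEnd
    lastEnd = math.min(lastStart + maxRows, totalRows)
    (lastStart, lastEnd)
  }

  // FETCH_PRIOR: scroll back by maxRows from the start of the previous block,
  // clamping at offset 0, then return up to maxRows rows from there.
  def fetchPrior(maxRows: Long): (Long, Long) = {
    lastStart = math.max(0L, lastStart - maxRows)
    lastEnd = math.min(lastStart + maxRows, totalRows)
    (lastStart, lastEnd)
  }
}
```

Starting from a previous block of [10, 20), the calls `fetchPrior(5)`, `fetchPrior(10)`, `fetchPrior(4)` and `fetchNext(6)` walk through the same ranges as the list above.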

##### Client/server backwards/forwards compatibility:

Old driver with new server:

* Drivers that don't support FETCH_PRIOR will not attempt to use it

* Field TFetchResultsResp.results.startRowOffset was not set before, so old drivers don't depend on it.

New driver with old server:

* Using an older thriftserver with FETCH_PRIOR will make the thriftserver return unsupported operation error. The driver can then recognize that it's an old server.

* An older thriftserver will return TFetchResultsResp.results.startRowOffset=0. If the client driver receives 0, it knows that it cannot rely on it as the correct offset. If the client driver intentionally wants to fetch from 0, it can use FETCH_FIRST.

### Why are the changes needed?

It's intended to be used to recover after connection errors. If a client lost connection during fetching (e.g. of rows [10, 20)), and wants to reconnect and continue, it could not know whether the request got lost before reaching the server, or on the response back. When it issued another FETCH_NEXT(10) request after reconnecting, because TFetchResultsResp.results.startRowOffset was not set, it could not know if the server will return rows [10,20) (because the previous request didn't reach it) or rows [20, 30) (because it returned data from the previous request but the connection got broken on the way back). Now, with TFetchResultsResp.results.startRowOffset the client can know after reconnecting which rows it is getting, and use FETCH_PRIOR to scroll back if a fetch block was lost in transmission.

The driver should always use FETCH_PRIOR after a broken connection.

* If the Thriftserver returns an unsupported operation error, the driver knows that it's an old server that doesn't support it. The driver then must fail the query, because such a server also does not return the correct startRowOffset, so the driver cannot reliably guarantee that it has not lost any rows on the fetch cursor.

* If the driver gets a response to FETCH_PRIOR, it should also have a correctly set startRowOffset, which the driver can use to position itself back where it left off before the connection broke.

* If FETCH_NEXT was used after a broken connection on the first fetch, and returned with a startRowOffset=0, then the client driver can't know if it's 0 because it's the older server version, or if it's genuinely 0. It is better to call FETCH_PRIOR, as scrolling back may be required anyway after a broken connection.

This way it is implemented in a backwards/forwards compatible way, and doesn't require bumping the protocol version. FETCH_ABSOLUTE might have been better, but that would require a bigger protocol change, as there is currently no field to specify the requested absolute offset.

### Does this PR introduce any user-facing change?

ODBC/JDBC drivers connecting to Thriftserver may now implement using the FETCH_PRIOR fetch order to scroll back in query results, and check TFetchResultsResp.results.startRowOffset if their cursor position is consistent after connection errors.

### How was this patch tested?

Added tests to HiveThriftServer2Suites

Closes #26014 from juliuszsompolski/SPARK-29349.

Authored-by: Juliusz Sompolski <julek@databricks.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

When inserting a value into a column with a different data type, Spark performs type coercion. Currently, we support 3 policies for the store assignment rules: ANSI, legacy and strict, which can be set via the option "spark.sql.storeAssignmentPolicy":

1. ANSI: Spark performs the type coercion as per ANSI SQL. In practice, the behavior is mostly the same as PostgreSQL. It disallows certain unreasonable type conversions such as converting `string` to `int` and `double` to `boolean`. It will throw a runtime exception if the value is out of range (overflow).

2. Legacy: Spark allows the type coercion as long as it is a valid `Cast`, which is very loose. E.g., converting either `string` to `int` or `double` to `boolean` is allowed. It is the current behavior in Spark 2.x for compatibility with Hive. When inserting an out-of-range value into an integral field, the low-order bits of the value are inserted (the same as Java/Scala numeric type casting). For example, if 257 is inserted into a field of Byte type, the result is 1.

3. Strict: Spark doesn't allow any possible precision loss or data truncation in store assignment, e.g., converting either `double` to `int` or `decimal` to `double` is not allowed. The rules are originally for Dataset encoder. As far as I know, no mainstream DBMS is using this policy by default.
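The difference between the legacy and ANSI handling of out-of-range values can be illustrated with plain Scala numeric casts. This is a sketch only: `StoreAssignmentSketch` and its methods are hypothetical, and the exception type used here is an assumption rather than what Spark actually throws.

```scala
object StoreAssignmentSketch {
  // Legacy-style narrowing: keep only the low-order 8 bits, like a JVM cast.
  def legacyToByte(value: Int): Byte = value.toByte

  // ANSI-style narrowing: reject values outside the Byte range at runtime.
  // ArithmeticException is a stand-in chosen for this sketch.
  def ansiToByte(value: Int): Byte = {
    if (value < Byte.MinValue || value > Byte.MaxValue) {
      throw new ArithmeticException(s"$value is out of range for Byte")
    }
    value.toByte
  }
}
```

With these definitions, `legacyToByte(257)` yields 1 as described for the legacy policy, while `ansiToByte(257)` fails instead of silently truncating.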

Currently, the V1 data source uses "Legacy" policy by default, while V2 uses "Strict". This proposal is to use "ANSI" policy by default for both V1 and V2 in Spark 3.0.

### Why are the changes needed?

Following the ANSI SQL standard is the most reasonable among the 3 policies.

### Does this PR introduce any user-facing change?

Yes.

The default store assignment policy is ANSI for both V1 and V2 data sources.

### How was this patch tested?

Unit test

Closes #26107 from gengliangwang/ansiPolicyAsDefault.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR adds 2 changes regarding exception handling in `SQLQueryTestSuite` and `ThriftServerQueryTestSuite`

- fixes an expected output sorting issue in `ThriftServerQueryTestSuite`: if there is an exception, the output does not need to be sorted

- introduces common exception handling in those 2 suites with a new `handleExceptions` method

### Why are the changes needed?

Currently `ThriftServerQueryTestSuite` passes on master, but it fails on one of my PRs (https://github.com/apache/spark/pull/23531) with this error (https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/111651/testReport/org.apache.spark.sql.hive.thriftserver/ThriftServerQueryTestSuite/sql_3/):

```

org.scalatest.exceptions.TestFailedException: Expected "

[Recursion level limit 100 reached but query has not exhausted, try increasing spark.sql.cte.recursion.level.limit

org.apache.spark.SparkException]

", but got "

[org.apache.spark.SparkException

Recursion level limit 100 reached but query has not exhausted, try increasing spark.sql.cte.recursion.level.limit]

" Result did not match for query #4 WITH RECURSIVE r(level) AS ( VALUES (0) UNION ALL SELECT level + 1 FROM r ) SELECT * FROM r

```

The unexpected reversed order of expected output (error message comes first, then the exception class) is due to this line: https://github.com/apache/spark/pull/26028/files#diff-b3ea3021602a88056e52bf83d8782de8L146. It should not sort the expected output if there was an error during execution.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing UTs.

Closes #26028 from peter-toth/SPARK-29359-better-exception-handling.

Authored-by: Peter Toth <peter.toth@gmail.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

This PR addresses the issue described in [SPARK-29022](https://issues.apache.org/jira/browse/SPARK-29022): the Spark SQL CLI can't use a class from a jar added by SQL `ADD JAR` as a serde class.

Creating a table whose `serde` class is contained in a jar added by SQL `ADD JAR` succeeds, because `HiveClientImpl.createTable` is called inside the `withHiveState` method, which adds `clientLoader.classLoader` to `HiveClientImpl.state.getConf.classLoader`.

Jars added by SQL `ADD JAR` are added to:

1. `sparkSession.sharedState.jarClassLoader`

2. `HiveClientLoader.clientLoader.classLoader`

In the current spark-sql mode, `HiveClientImpl.state` uses the `CliSessionState` created when `SparkSQLCliDriver` is initialized. When we select data from the table, `HiveTableScanExec#addColumnMetadataToConf()` checks the serde class of the table desc:

```

val deserializer = tableDesc.getDeserializerClass.getConstructor().newInstance()

deserializer.initialize(hiveConf, tableDesc.getProperties)

```

In `Spark SQL CLI` mode, `getDeserializer` uses the class loader of the `CliSessionState`'s hiveConf.

However, when we call `ADD JAR` in Spark, the jar is not added to the class loader of the `CliSessionState` conf, so a `ClassNotFound` error occurs.

This PR therefore resets the class loader of the `CliSessionState` conf to `sharedState.jarClassLoader` once `sharedState.jarClassLoader` has added the jars passed by `HIVEAUXJARS`.

After that, when `ADD JAR` is used to add a jar, the jar path is also added to the class loader of the `CliSessionState` conf.

### Why are the changes needed?

Fix bug

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

ADD UT

Closes #25729 from AngersZhuuuu/SPARK-29015.

Authored-by: angerszhu <angers.zhu@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

This PR aims to remove `scalatest` deprecation warnings with the following changes.

- `org.scalatest.mockito.MockitoSugar` -> `org.scalatestplus.mockito.MockitoSugar`

- `org.scalatest.selenium.WebBrowser` -> `org.scalatestplus.selenium.WebBrowser`

- `org.scalatest.prop.Checkers` -> `org.scalatestplus.scalacheck.Checkers`

- `org.scalatest.prop.GeneratorDrivenPropertyChecks` -> `org.scalatestplus.scalacheck.ScalaCheckDrivenPropertyChecks`

### Why are the changes needed?

According to the Jenkins logs, there are 118 warnings about this.

```

grep "is deprecated" ~/consoleText | grep scalatest | wc -l

118

```

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

After Jenkins passes, we need to check the Jenkins log.

Closes #25982 from dongjoon-hyun/SPARK-29307.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Scala 2.13 emits a deprecation warning for procedure-like declarations:

```scala
def foo() {
  ...
}
```

This is equivalent to the following, so should be changed to avoid a warning:

```scala
def foo(): Unit = {
  ...
}
```

### Why are the changes needed?

It will avoid about a thousand compiler warnings when we start to support Scala 2.13. I wanted to make the change in 3.0 as there are less likely to be back-ports from 3.0 to 2.4 than 3.1 to 3.0, for example, minimizing that downside to touching so many files.

Unfortunately, that makes this quite a big change.

### Does this PR introduce any user-facing change?

No behavior change at all.

### How was this patch tested?

Existing tests.

Closes #25968 from srowen/SPARK-29291.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Some of the columns of the JDBC/ODBC server tab in the Web UI are hard to understand.

We have documented them in SPARK-28373, but I think it is better to also have tooltips in the SQL statistics table that explain the columns.

The columns with new tooltips are finish time, close time, execution time and duration.

The improvements in UIUtils can be used to add tooltips to other tables in the Web UI.

### Why are the changes needed?

The tooltips make the Web UI easier to understand.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Added unit tests and tested manually.

Closes #25723 from planga82/feature/SPARK-29019_tooltipjdbcServer.

Lead-authored-by: Unknown <soypab@gmail.com>

Co-authored-by: Pablo <soypab@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

This PR moves the Hive test jars (`hive-contrib-*.jar` and `hive-hcatalog-core-*.jar`) from Maven dependencies to local files.

### Why are the changes needed?

`--jars` can't be tested since `hive-contrib-*.jar` and `hive-hcatalog-core-*.jar` are already in classpath.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

manual test

Closes #25690 from wangyum/SPARK-27831-revert.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

After https://github.com/apache/spark/pull/25158 and https://github.com/apache/spark/pull/25458, SQL features of PostgreSQL are introduced into Spark. AFAIK, both features are implementation-defined behaviors, which are not specified in ANSI SQL.

In such a case, this proposal is to add a configuration `spark.sql.dialect` for choosing a database dialect.

After this PR, Spark supports two database dialects, `Spark` and `PostgreSQL`. With `PostgreSQL` dialect, Spark will:

1. perform integral division with the / operator if both sides are integral types;

2. accept "true", "yes", "1", "false", "no", "0", and unique prefixes as input and trim input for the boolean data type.
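The two `PostgreSQL` dialect behaviors can be sketched in plain Scala as follows. This is a hedged illustration, not Spark's implementation: `PostgresDialectSketch`, `parseBoolean` and `divide` are hypothetical names, and the prefix matching is limited to the words listed above.

```scala
object PostgresDialectSketch {
  // Words from the description above, paired with the value they denote.
  private val words = Seq("true" -> true, "yes" -> true, "false" -> false, "no" -> false)

  // Parse a boolean literal: trim the input, then accept "1"/"0" or any
  // non-empty prefix that matches exactly one of the known words.
  def parseBoolean(input: String): Option[Boolean] = {
    val s = input.trim
    if (s == "1") Some(true)
    else if (s == "0") Some(false)
    else if (s.isEmpty) None
    else {
      val matches = words.filter { case (word, _) => word.startsWith(s) }
      if (matches.size == 1) Some(matches.head._2) else None
    }
  }

  // Integral division: with two Int operands, Scala's `/` truncates toward zero.
  def divide(a: Int, b: Int): Int = a / b
}
```

For example, `parseBoolean(" ye ")` resolves to `Some(true)` after trimming, an unrecognized input such as `"x"` gives `None`, and `divide(7, 2)` is 3.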

### Why are the changes needed?

Unify the external database dialect with one configuration, instead of small flags.

### Does this PR introduce any user-facing change?

A new configuration `spark.sql.dialect` for choosing a database dialect.

### How was this patch tested?

Existing tests.

Closes #25697 from gengliangwang/dialect.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Rename the package pgSQL to postgreSQL

### Why are the changes needed?

To address the comment in https://github.com/apache/spark/pull/25697#discussion_r328431070 . The official full name seems more reasonable.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing unit tests.

Closes #25936 from gengliangwang/renamePGSQL.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

This PR enables `ThriftServerQueryTestSuite` and fixes the previously flaky test by:

1. Starting the thriftserver in `beforeAll()`.

2. Disabling `spark.sql.hive.thriftServer.async`.

### Why are the changes needed?

Improve test coverage.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

```shell

build/sbt "hive-thriftserver/test-only *.ThriftServerQueryTestSuite " -Phive-thriftserver

build/mvn -Dtest=none -DwildcardSuites=org.apache.spark.sql.hive.thriftserver.ThriftServerQueryTestSuite test -Phive-thriftserver

```

Closes #25868 from wangyum/SPARK-28527-enable.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

This was discussed in https://github.com/apache/spark/pull/25611.

If `cancel()` and `close()` are called very quickly after the query is started, they may both call `cleanup()` before any Spark jobs are started. In that case `sqlContext.sparkContext.cancelJobGroup(statementId)` does nothing.

The execute thread can then still start the jobs and only afterwards get interrupted and exit. Once it has exited, no one cancels these jobs, so they keep running even though the execution has ended.

So when `execute()` is interrupted by `cancel()` and reaches the catch block, we should call `cancelJobGroup` again to make sure the jobs are canceled.
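The pattern can be sketched without any Spark dependency. In this hypothetical example, `runJobs` stands in for the code that starts the Spark jobs and `cancelJobGroup` for the call to `sparkContext.cancelJobGroup(statementId)`. Rethrowing the interruption is an assumption of the sketch, not something the description states.

```scala
object CancelOnInterruptSketch {
  // Run the statement and make sure its jobs are cancelled if the executing
  // thread is interrupted after the jobs were already started.
  def execute(runJobs: () => Unit, cancelJobGroup: () => Unit): Unit = {
    try {
      runJobs()
    } catch {
      case e: InterruptedException =>
        // An earlier cancel may have run before any job existed, so cancel
        // again here to stop jobs that were started in the meantime.
        cancelJobGroup()
        throw e
    }
  }
}
```

The second cancellation is cheap when nothing is running, which is why repeating it in the catch block is a reasonable safeguard against the race.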

### Why are the changes needed?

### Does this PR introduce any user-facing change?

NO

### How was this patch tested?

MT

Closes #25743 from AngersZhuuuu/SPARK-29036.

Authored-by: angerszhu <angers.zhu@gmail.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

This PR adds support for sorting the `Execution Time` and `Duration` columns in `ThriftServerSessionPage`.

### Why are the changes needed?

Previously, these columns were not sorted correctly.

### Does this PR introduce any user-facing change?

Yes.

### How was this patch tested?

Manually do the following and test sorting on those columns in the Spark Thrift Server Session Page.

```

$ sbin/start-thriftserver.sh

$ bin/beeline -u jdbc:hive2://localhost:10000

0: jdbc:hive2://localhost:10000> create table t(a int);

+---------+--+

| Result |

+---------+--+

+---------+--+

No rows selected (0.521 seconds)

0: jdbc:hive2://localhost:10000> select * from t;

+----+--+

| a |

+----+--+

+----+--+

No rows selected (0.772 seconds)

0: jdbc:hive2://localhost:10000> show databases;

+---------------+--+

| databaseName |

+---------------+--+

| default |

+---------------+--+

1 row selected (0.249 seconds)

```

**Sorted by `Execution Time` column**:

**Sorted by `Duration` column**:

Closes #25892 from wangyum/SPARK-28599.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Currently, there are new configurations for compatibility with ANSI SQL:

* `spark.sql.parser.ansi.enabled`

* `spark.sql.decimalOperations.nullOnOverflow`

* `spark.sql.failOnIntegralTypeOverflow`

This PR adds a new configuration `spark.sql.ansi.enabled` and removes the 3 options above. When the configuration is true, Spark tries to conform to the ANSI SQL specification. It is disabled by default.

### Why are the changes needed?

Make it simple and straightforward.

### Does this PR introduce any user-facing change?

The new features for ANSI compatibility will be set via one configuration `spark.sql.ansi.enabled`.

### How was this patch tested?

Existing unit tests.

Closes #25693 from gengliangwang/ansiEnabled.

Lead-authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Co-authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

ThriftServerSessionPage displays timestamp 0 (1970/01/01) instead of nothing if query finish time and close time are not set.

Change it to display nothing, like ThriftServerPage.

### Why are the changes needed?

Obvious bug.

### Does this PR introduce any user-facing change?

Finish time and Close time will be displayed correctly on ThriftServerSessionPage in JDBC/ODBC Spark UI.

### How was this patch tested?

Manual test.

Closes #25762 from juliuszsompolski/SPARK-29056.

Authored-by: Juliusz Sompolski <julek@databricks.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

Currently, the type info returned by Spark Thrift Server includes:

1. INTERVAL_YEAR_MONTH

2. INTERVAL_DAY_TIME

3. UNION

4. USER_DEFINED

Spark doesn't support INTERVAL_YEAR_MONTH, INTERVAL_DAY_TIME, or UNION, and won't return the USER_DEFINED type.

This PR overrides `GetTypeInfoOperation` with `SparkGetTypeInfoOperation` to exclude the types we don't need.

In hive-1.2.1 the Type class is `org.apache.hive.service.cli.Type`.

In hive-2.3.x the Type class is `org.apache.hadoop.hive.serde2.thrift.Type`.

`ThriftserverShimUtils` is used to handle this version difference and to exclude the types we don't need.

### Why are the changes needed?

We should return Spark's own type info.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Manual test and added unit tests.

Closes #25694 from AngersZhuuuu/SPARK-28982.

Lead-authored-by: angerszhu <angers.zhu@gmail.com>

Co-authored-by: AngersZhuuuu <angers.zhu@gmail.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

The `--hiveconf`/`--hivevar` parameters are intended only as initial values, so setting them once in `SparkSQLSessionManager#openSession` is sufficient. Setting them again in every `SparkExecuteStatementOperation` overwrites any later change, so the variables can never be modified.

### Why are the changes needed?

It is wrong to set the `--hivevar`/`--hiveconf` variables in every `SparkExecuteStatementOperation`, because that prevents variable updates.
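The intended behavior can be modeled as a session whose variables are seeded once when it opens and are then free to change. This is a minimal sketch with hypothetical names (`SessionSketch`, `set`, `get`); it does not mirror the actual Thriftserver classes.

```scala
import scala.collection.mutable

// Hypothetical model of a session: the initial variables are applied once at
// construction time, and later statements read and update the same map.
final class SessionSketch(initialVars: Map[String, String]) {
  private val vars = mutable.Map(initialVars.toSeq: _*)

  // Models a `set key=value` statement.
  def set(key: String, value: String): Unit = vars(key) = value

  // Models a `set key` lookup.
  def get(key: String): Option[String] = vars.get(key)
}
```

Because the initial values are never re-applied, a session opened with `b=bvalue` keeps `bvalue_MOD_VALUE` after `set("b", "bvalue_MOD_VALUE")`, which matches the result this change aims for.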

### Does this PR introduce any user-facing change?

```

cat <<EOF > test.sql

select '\${a}', '\${b}';

set b=bvalue_MOD_VALUE;

set b;

EOF

beeline -u jdbc:hive2://localhost:10000 --hiveconf a=avalue --hivevar b=bvalue -f test.sql

```

current result:

```

+-----------------+-----------------+--+

| avalue | bvalue |

+-----------------+-----------------+--+

| avalue | bvalue |

+-----------------+-----------------+--+

+-----------------+-----------------+--+

| key | value |

+-----------------+-----------------+--+

| b | bvalue |

+-----------------+-----------------+--+

1 row selected (0.022 seconds)

```

after modification:

```

+-----------------+-----------------+--+

| avalue | bvalue |

+-----------------+-----------------+--+

| avalue | bvalue |

+-----------------+-----------------+--+

+-----------------+-----------------+--+

| key | value |

+-----------------+-----------------+--+

| b | bvalue_MOD_VALUE|

+-----------------+-----------------+--+

1 row selected (0.022 seconds)

```

### How was this patch tested?

modified the existing unit test

Closes #25722 from cxzl25/fix_SPARK-26598.

Authored-by: sychen <sychen@ctrip.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

## What changes were proposed in this pull request?

`bin/spark-shell` supports querying interval values:

```scala

scala> spark.sql("SELECT interval 3 months 1 hours AS i").show(false)

+-------------------------+

|i |

+-------------------------+

|interval 3 months 1 hours|

+-------------------------+

```

But a server started with `sbin/start-thriftserver.sh` does not support querying interval values:

```sql

0: jdbc:hive2://localhost:10000/default> SELECT interval 3 months 1 hours AS i;

Error: java.lang.IllegalArgumentException: Unrecognized type name: interval (state=,code=0)

```

This PR maps `CalendarIntervalType` to `StringType` in `TableSchema` so that Thriftserver supports querying interval values, because we do not support the `INTERVAL_YEAR_MONTH` and `INTERVAL_DAY_TIME` types:

02c33694c8/sql/hive-thriftserver/v1.2.1/src/main/java/org/apache/hive/service/cli/Type.java (L73-L78)

[SPARK-27791](https://issues.apache.org/jira/browse/SPARK-27791): Support SQL year-month INTERVAL type

[SPARK-27793](https://issues.apache.org/jira/browse/SPARK-27793): Support SQL day-time INTERVAL type

## How was this patch tested?

unit tests

Closes #25277 from wangyum/Thriftserver-support-interval-type.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

This PR ignores Thrift server `ThriftServerQueryTestSuite`.

### Why are the changes needed?

This ThriftServerQueryTestSuite test case led to frequent Jenkins build failure.

### Does this PR introduce any user-facing change?

Yes.

### How was this patch tested?

N/A

Closes #25592 from wangyum/SPARK-28527-f1.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR implements `SparkGetCatalogsOperation` for Thrift Server metadata completeness.

### Why are the changes needed?

Thrift Server metadata completeness.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Unit test

Closes #25555 from wangyum/SPARK-28852.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

When the server processes row data, a `ColumnValue` of type `BigDecimal` has to be converted to the `HiveDecimal` data type so that queries from a Hive ODBC client are processed successfully. With the current logic for decimal columns, the Spark server uses `BigDecimal` while the ODBC client uses `HiveDecimal`. If the data types do not match, the client fails to parse the value.

Because this handling was missing, a query executed from a Hive ODBC client did not return results to the user even though the decimal column contained data.

## How was this patch tested?

Tested manually; the impact was assessed using the existing test cases.

Before fix

After Fix

Closes #23899 from sujith71955/master_decimalissue.

Authored-by: s71955 <sujithchacko.2010@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR ports [HIVE-10646](https://issues.apache.org/jira/browse/HIVE-10646) to fix the issue that the Hive 0.12 JDBC client cannot handle `NULL_TYPE`:

```sql

Connected to: Hive (version 3.0.0-SNAPSHOT)

Driver: Hive (version 0.12.0)

Transaction isolation: TRANSACTION_REPEATABLE_READ

Beeline version 0.12.0 by Apache Hive

0: jdbc:hive2://localhost:10000> select null;

org.apache.thrift.transport.TTransportException

at org.apache.thrift.transport.TIOStreamTransport.read(TIOStreamTransport.java:132)

at org.apache.thrift.transport.TTransport.readAll(TTransport.java:84)

at org.apache.thrift.transport.TSaslTransport.readLength(TSaslTransport.java:346)

at org.apache.thrift.transport.TSaslTransport.readFrame(TSaslTransport.java:423)

at org.apache.thrift.transport.TSaslTransport.read(TSaslTransport.java:405)

```

Server log:

```

19/08/07 09:34:07 ERROR TThreadPoolServer: Error occurred during processing of message.

java.lang.NullPointerException

at org.apache.hive.service.cli.thrift.TRow$TRowStandardScheme.write(TRow.java:388)

at org.apache.hive.service.cli.thrift.TRow$TRowStandardScheme.write(TRow.java:338)

at org.apache.hive.service.cli.thrift.TRow.write(TRow.java:288)

at org.apache.hive.service.cli.thrift.TRowSet$TRowSetStandardScheme.write(TRowSet.java:605)

at org.apache.hive.service.cli.thrift.TRowSet$TRowSetStandardScheme.write(TRowSet.java:525)

at org.apache.hive.service.cli.thrift.TRowSet.write(TRowSet.java:455)

at org.apache.hive.service.cli.thrift.TFetchResultsResp$TFetchResultsRespStandardScheme.write(TFetchResultsResp.java:550)

at org.apache.hive.service.cli.thrift.TFetchResultsResp$TFetchResultsRespStandardScheme.write(TFetchResultsResp.java:486)

at org.apache.hive.service.cli.thrift.TFetchResultsResp.write(TFetchResultsResp.java:412)

at org.apache.hive.service.cli.thrift.TCLIService$FetchResults_result$FetchResults_resultStandardScheme.write(TCLIService.java:13192)

at org.apache.hive.service.cli.thrift.TCLIService$FetchResults_result$FetchResults_resultStandardScheme.write(TCLIService.java:13156)

at org.apache.hive.service.cli.thrift.TCLIService$FetchResults_result.write(TCLIService.java:13107)

at org.apache.thrift.ProcessFunction.process(ProcessFunction.java:58)

at org.apache.thrift.TBaseProcessor.process(TBaseProcessor.java:39)

at org.apache.hive.service.auth.TSetIpAddressProcessor.process(TSetIpAddressProcessor.java:53)

at org.apache.thrift.server.TThreadPoolServer$WorkerProcess.run(TThreadPoolServer.java:310)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:819)

```

## How was this patch tested?

unit tests

Closes #25378 from wangyum/SPARK-28644.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR fixes an issue where the Hive 0.12 JDBC client cannot handle the binary type:

```sql

Connected to: Hive (version 3.0.0-SNAPSHOT)

Driver: Hive (version 0.12.0)

Transaction isolation: TRANSACTION_REPEATABLE_READ

Beeline version 0.12.0 by Apache Hive

0: jdbc:hive2://localhost:10000> SELECT cast('ABC' as binary);

Error: java.lang.ClassCastException: [B incompatible with java.lang.String (state=,code=0)

```

Server log:

```

19/08/07 10:10:04 WARN ThriftCLIService: Error fetching results:

java.lang.RuntimeException: java.lang.ClassCastException: [B incompatible with java.lang.String

at org.apache.hive.service.cli.session.HiveSessionProxy.invoke(HiveSessionProxy.java:83)

at org.apache.hive.service.cli.session.HiveSessionProxy.access$000(HiveSessionProxy.java:36)

at org.apache.hive.service.cli.session.HiveSessionProxy$1.run(HiveSessionProxy.java:63)

at java.security.AccessController.doPrivileged(AccessController.java:770)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1746)

at org.apache.hive.service.cli.session.HiveSessionProxy.invoke(HiveSessionProxy.java:59)

at com.sun.proxy.$Proxy26.fetchResults(Unknown Source)

at org.apache.hive.service.cli.CLIService.fetchResults(CLIService.java:455)

at org.apache.hive.service.cli.thrift.ThriftCLIService.FetchResults(ThriftCLIService.java:621)

at org.apache.hive.service.cli.thrift.TCLIService$Processor$FetchResults.getResult(TCLIService.java:1553)

at org.apache.hive.service.cli.thrift.TCLIService$Processor$FetchResults.getResult(TCLIService.java:1538)

at org.apache.thrift.ProcessFunction.process(ProcessFunction.java:38)

at org.apache.thrift.TBaseProcessor.process(TBaseProcessor.java:39)

at org.apache.hive.service.auth.TSetIpAddressProcessor.process(TSetIpAddressProcessor.java:53)

at org.apache.thrift.server.TThreadPoolServer$WorkerProcess.run(TThreadPoolServer.java:310)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:819)

Caused by: java.lang.ClassCastException: [B incompatible with java.lang.String

at org.apache.hive.service.cli.ColumnValue.toTColumnValue(ColumnValue.java:198)

at org.apache.hive.service.cli.RowBasedSet.addRow(RowBasedSet.java:60)

at org.apache.hive.service.cli.RowBasedSet.addRow(RowBasedSet.java:32)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation.$anonfun$getNextRowSet$1(SparkExecuteStatementOperation.scala:151)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation$$Lambda$1923.000000009113BFE0.apply(Unknown Source)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation.withSchedulerPool(SparkExecuteStatementOperation.scala:299)

at org.apache.spark.sql.hive.thriftserver.SparkExecuteStatementOperation.getNextRowSet(SparkExecuteStatementOperation.scala:113)

at org.apache.hive.service.cli.operation.OperationManager.getOperationNextRowSet(OperationManager.java:220)

at org.apache.hive.service.cli.session.HiveSessionImpl.fetchResults(HiveSessionImpl.java:785)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hive.service.cli.session.HiveSessionProxy.invoke(HiveSessionProxy.java:78)

... 18 more

```

## How was this patch tested?

unit tests

Closes #25379 from wangyum/SPARK-28474.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This patch keeps usage consistent wherever the UTF-8 charset is needed by using `StandardCharsets.UTF_8` instead of the string literal "UTF-8". Where a `String` is required, `StandardCharsets.UTF_8.name()` is used.

This change also brings the benefit of getting rid of `UnsupportedEncodingException`, as we're providing `Charset` instead of `String` whenever possible.

This also changes some private Catalyst helper methods to operate on encodings as `Charset` objects rather than strings.
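As a minimal illustration of the difference (not code from this patch; the class and method names below are hypothetical), passing a charset name as a `String` forces callers to handle the checked `UnsupportedEncodingException`, while passing a `Charset` does not:

```java
import java.io.UnsupportedEncodingException;
import java.nio.charset.StandardCharsets;

// Hypothetical helper class, used only to contrast the two styles.
final class CharsetExample {
    // String-based lookup: the compiler requires handling a checked exception,
    // even though UTF-8 is guaranteed to exist on every JVM.
    static byte[] encodeByName(String s) {
        try {
            return s.getBytes("UTF-8");
        } catch (UnsupportedEncodingException e) {
            throw new IllegalStateException("UTF-8 should always be available", e);
        }
    }

    // Charset-based call: no checked exception, no boilerplate.
    static byte[] encodeByCharset(String s) {
        return s.getBytes(StandardCharsets.UTF_8);
    }

    // When an API only accepts a charset name, derive it from the constant.
    static String charsetName() {
        return StandardCharsets.UTF_8.name();
    }

    public static void main(String[] args) {
        System.out.println(encodeByCharset("h\u00e9llo").length);
        System.out.println(charsetName());
    }
}
```

Both methods produce the same bytes; only the error-handling burden differs.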

## How was this patch tested?

Existing unit tests.

Closes #25335 from HeartSaVioR/SPARK-28601.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR implements Spark's own `GetFunctionsOperation`, which mitigates the differences between Spark SQL and Hive UDFs. However, our implementation differs from Hive's in the following ways:

- Our implementation always returns results. Hive only returns results when [(null == catalogName || "".equals(catalogName)) && (null == schemaName || "".equals(schemaName))](https://github.com/apache/hive/blob/rel/release-3.1.1/service/src/java/org/apache/hive/service/cli/operation/GetFunctionsOperation.java#L101-L119).

- Our implementation fills the `REMARKS` field with the function usage; Hive returns an empty string.

- Our implementation does not support `FUNCTION_TYPE`, but Hive does.
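The first difference can be sketched as two predicates. This is only an illustration of the condition quoted above, not the actual Spark or Hive code; the class and method names are hypothetical:

```java
// Hypothetical sketch contrasting when each implementation returns rows.
final class GetFunctionsFilterSketch {
    static boolean isNullOrEmpty(String s) {
        return s == null || s.isEmpty();
    }

    // Mirrors the Hive condition quoted above: results are produced only when
    // both the catalog name and the schema name are null or empty.
    static boolean hiveReturnsResults(String catalogName, String schemaName) {
        return isNullOrEmpty(catalogName) && isNullOrEmpty(schemaName);
    }

    // Per the description above, the Spark implementation does not apply
    // this guard and always returns results.
    static boolean sparkReturnsResults(String catalogName, String schemaName) {
        return true;
    }
}
```

Under this sketch, a client that passes a schema name such as `default` gets no rows from the Hive-style guard but does get rows from the Spark-style one.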

## How was this patch tested?

unit tests

Closes #25252 from wangyum/SPARK-28510.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

How to reproduce this issue:

```shell

build/sbt clean package -Phive -Phive-thriftserver -Phadoop-3.2

export SPARK_PREPEND_CLASSES=true

sbin/start-thriftserver.sh

[rootspark-3267648 spark]# bin/beeline -u jdbc:hive2://localhost:10000/default -e "select cast(1 as decimal(38, 18));"

Connecting to jdbc:hive2://localhost:10000/default

Connected to: Spark SQL (version 3.0.0-SNAPSHOT)

Driver: Hive JDBC (version 2.3.5)

Transaction isolation: TRANSACTION_REPEATABLE_READ

Error: java.lang.ClassCastException: java.math.BigDecimal incompatible with org.apache.hadoop.hive.common.type.HiveDecimal (state=,code=0)

Closing: 0: jdbc:hive2://localhost:10000/default

```

This PR fixes this issue.

## How was this patch tested?

unit tests

Closes #25217 from wangyum/SPARK-28463.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

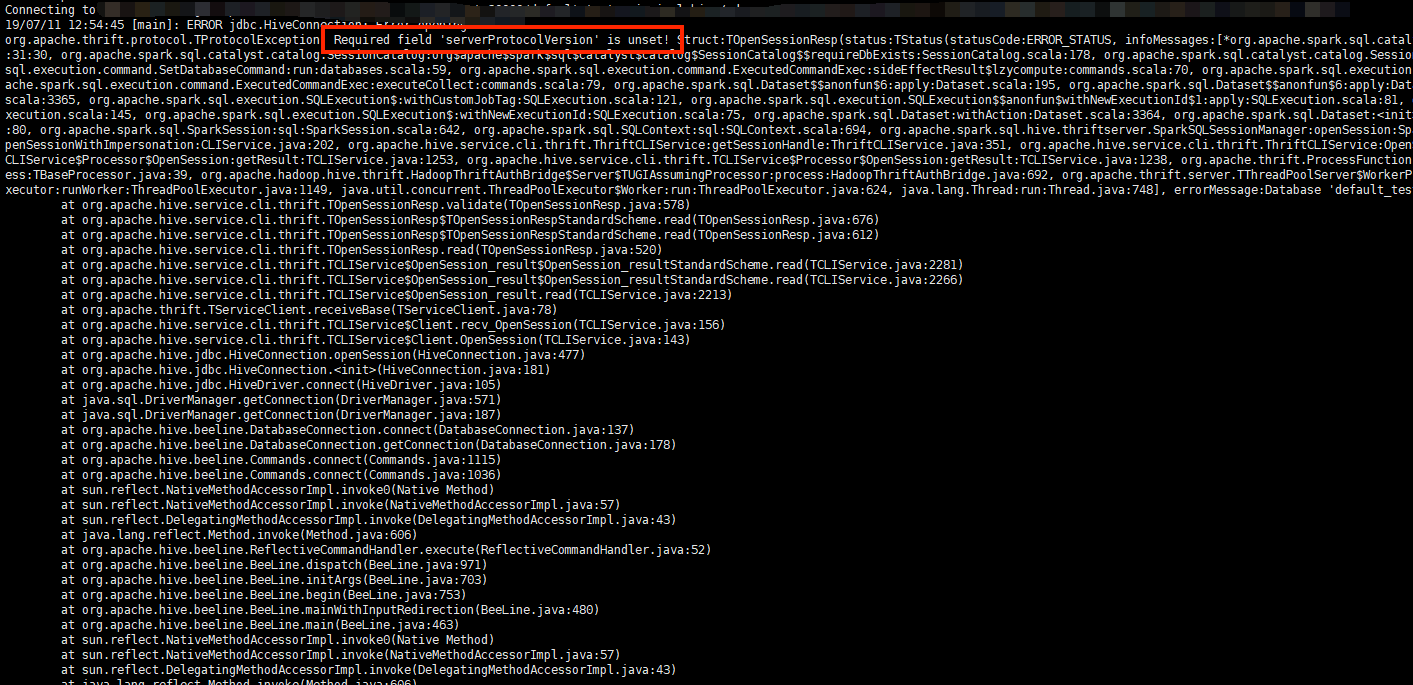

## What changes were proposed in this pull request?

The Thrift server is backward compatible. For example, if a PROTOCOL_VERSION_V7 client connects to a PROTOCOL_VERSION_V8 server, then during OpenSession the server sets the protocol version of its response to the minimum of the client and server versions:

`TProtocolVersion protocol = getMinVersion(CLIService.SERVER_VERSION,`

` req.getClient_protocol());`

The result is then set on the OpenSession response.

However, if OpenSession fails, the step that resets the response's protocol version is not executed.

The response therefore carries the server's original protocol version.

As a result, the client gets an error as below:

Because a wrong database name was given, OpenSession failed and the correct protocol version was never set on the response.
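The negotiation described above can be sketched as follows. This is a simplified model under stated assumptions, not the patch itself: `Version`, `Response`, and `openSession` are hypothetical stand-ins for Hive's `TProtocolVersion`, the OpenSession response, and the server handler. The sketch shows one way to make sure a failed OpenSession still reports the negotiated version, by setting it before any step that can fail:

```java
// Hypothetical, simplified model of protocol-version negotiation.
final class ProtocolNegotiation {
    // Stand-in for TProtocolVersion; only two versions are modeled here.
    enum Version {
        V7(7), V8(8);

        final int value;

        Version(int value) {
            this.value = value;
        }
    }

    // Returns the lowest of the given versions (at least one is required).
    static Version minVersion(Version... versions) {
        Version min = versions[0];
        for (Version v : versions) {
            if (v.value < min.value) {
                min = v;
            }
        }
        return min;
    }

    // Stand-in for the OpenSession response.
    static final class Response {
        Version serverProtocolVersion;
        String error; // null on success
    }

    static Response openSession(Version server, Version client, boolean databaseExists) {
        Response resp = new Response();
        // Set the negotiated version first, so it is present even on failure.
        resp.serverProtocolVersion = minVersion(server, client);
        if (!databaseExists) {
            resp.error = "Database not found";
        }
        return resp;
    }
}
```

With this ordering, a V7 client that names a missing database receives a V7 response carrying the database error, rather than a V8 response it cannot interpret.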

## How was this patch tested?

I did not know how to write a unit test for this, so I built a jar with this PR and retried the scenario above. It now returns a reasonable "database not found" error:

Closes #25083 from AngersZhuuuu/SPARK-28311.

Authored-by: 朱夷 <zhuyi01@corp.netease.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Some configs are referenced through hardcoded strings. This PR replaces them with config entries.
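To illustrate the general idea only (this is not Spark's `ConfigEntry` API; the `Entry` class and the key `example.timeout.seconds` are hypothetical), a typed entry keeps the key, default value, and parsing logic in one place instead of repeating a string literal at each call site:

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Function;

// Hypothetical sketch of a typed config entry.
final class ConfigEntrySketch {
    static final class Entry<T> {
        final String key;
        final T defaultValue;
        final Function<String, T> parser;

        Entry(String key, T defaultValue, Function<String, T> parser) {
            this.key = key;
            this.defaultValue = defaultValue;
            this.parser = parser;
        }

        // Reads the value from a plain key/value map, falling back to the default.
        T readFrom(Map<String, String> conf) {
            String raw = conf.get(key);
            return raw == null ? defaultValue : parser.apply(raw);
        }
    }

    // Declared once; call sites refer to the entry rather than to the raw key.
    static final Entry<Integer> TIMEOUT_SECONDS =
        new Entry<>("example.timeout.seconds", 30, Integer::parseInt);

    public static void main(String[] args) {
        Map<String, String> conf = new HashMap<>();
        System.out.println(TIMEOUT_SECONDS.readFrom(conf));
        conf.put(TIMEOUT_SECONDS.key, "45");
        System.out.println(TIMEOUT_SECONDS.readFrom(conf));
    }
}
```

A misspelled key then becomes a compile error on the entry name instead of a silent fallback to the default.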

## How was this patch tested?

Existing UT

Closes #25059 from WangGuangxin/ConfigEntry.

Authored-by: wangguangxin.cn <wangguangxin.cn@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

`SslContextFactory` is deprecated as of Jetty 9.4, and we are using `9.4.18.v20190429`. This PR aims to replace it with `SslContextFactory.Server`.

- https://www.eclipse.org/jetty/javadoc/9.4.19.v20190610/org/eclipse/jetty/util/ssl/SslContextFactory.html

- https://www.eclipse.org/jetty/javadoc/9.3.24.v20180605/org/eclipse/jetty/util/ssl/SslContextFactory.html

```

[WARNING] /Users/dhyun/APACHE/spark/core/src/main/scala/org/apache/spark/SSLOptions.scala:71:

constructor SslContextFactory in class SslContextFactory is deprecated:

see corresponding Javadoc for more information.

[WARNING] val sslContextFactory = new SslContextFactory()

[WARNING] ^

```

## How was this patch tested?

Pass the Jenkins with the existing tests.

Closes #25067 from dongjoon-hyun/SPARK-28290.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

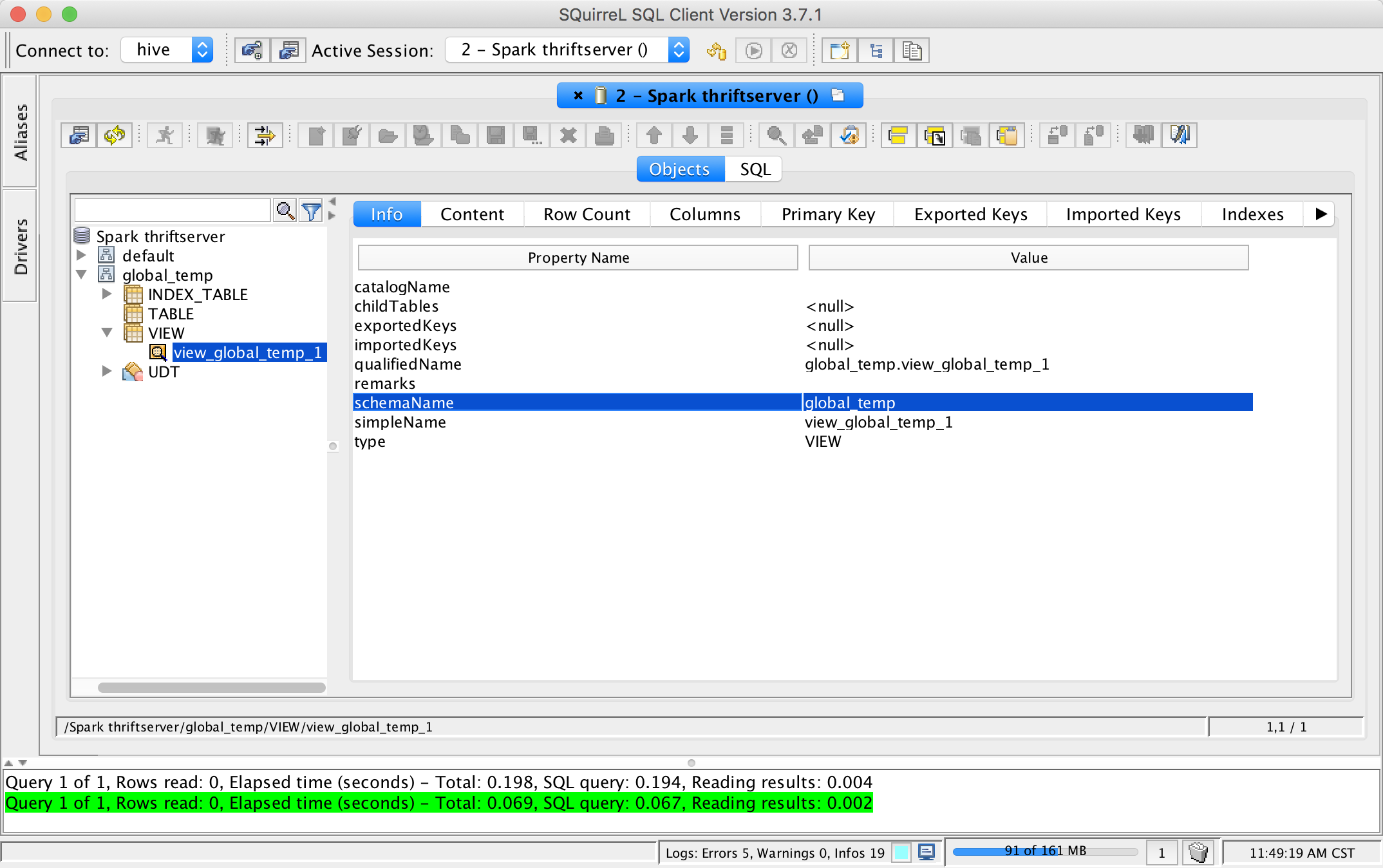

## What changes were proposed in this pull request?

This PR adds support for showing global temporary views and local temporary views in database tools.

TODO: Database tools should support showing temporary views because their schema is null.

## How was this patch tested?

unit tests and manual tests:

Closes #24972 from wangyum/SPARK-28167.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request?

To make the #24972 change smaller, this PR improves `SparkMetadataOperationSuite` to avoid creating new sessions when calling getSchemas/getTables/getColumns.

## How was this patch tested?

N/A

Closes #24985 from wangyum/SPARK-28184.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>