## What changes were proposed in this pull request?

This PR adds tests converted from `except-all.sql` to test UDFs. Please see the contribution guide in the umbrella ticket, [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

<details><summary>Diff comparing to 'except-all.sql'</summary>

<p>

```diff

diff --git a/sql/core/src/test/resources/sql-tests/results/except-all.sql.out b/sql/core/src/test/resources/sql-tests/results/udf/udf-except-all.sql.out

index 01091a2f75..b7bfad0e53 100644

--- a/sql/core/src/test/resources/sql-tests/results/except-all.sql.out

+++ b/sql/core/src/test/resources/sql-tests/results/udf/udf-except-all.sql.out

-49,11 +49,11 struct<>

-- !query 4

-SELECT * FROM tab1

+SELECT udf(c1) FROM tab1

EXCEPT ALL

-SELECT * FROM tab2

+SELECT udf(c1) FROM tab2

-- !query 4 schema

-struct<c1:int>

+struct<CAST(udf(cast(c1 as string)) AS INT):int>

-- !query 4 output

0

2

-62,11 +62,11 NULL

-- !query 5

-SELECT * FROM tab1

+SELECT udf(c1) FROM tab1

MINUS ALL

-SELECT * FROM tab2

+SELECT udf(c1) FROM tab2

-- !query 5 schema

-struct<c1:int>

+struct<CAST(udf(cast(c1 as string)) AS INT):int>

-- !query 5 output

0

2

-75,11 +75,11 NULL

-- !query 6

-SELECT * FROM tab1

+SELECT udf(c1) FROM tab1

EXCEPT ALL

-SELECT * FROM tab2 WHERE c1 IS NOT NULL

+SELECT udf(c1) FROM tab2 WHERE udf(c1) IS NOT NULL

-- !query 6 schema

-struct<c1:int>

+struct<CAST(udf(cast(c1 as string)) AS INT):int>

-- !query 6 output

0

2

-89,21 +89,21 NULL

-- !query 7

-SELECT * FROM tab1 WHERE c1 > 5

+SELECT udf(c1) FROM tab1 WHERE udf(c1) > 5

EXCEPT ALL

-SELECT * FROM tab2

+SELECT udf(c1) FROM tab2

-- !query 7 schema

-struct<c1:int>

+struct<CAST(udf(cast(c1 as string)) AS INT):int>

-- !query 7 output

-- !query 8

-SELECT * FROM tab1

+SELECT udf(c1) FROM tab1

EXCEPT ALL

-SELECT * FROM tab2 WHERE c1 > 6

+SELECT udf(c1) FROM tab2 WHERE udf(c1 > udf(6))

-- !query 8 schema

-struct<c1:int>

+struct<CAST(udf(cast(c1 as string)) AS INT):int>

-- !query 8 output

0

1

-117,11 +117,11 NULL

-- !query 9

-SELECT * FROM tab1

+SELECT udf(c1) FROM tab1

EXCEPT ALL

-SELECT CAST(1 AS BIGINT)

+SELECT CAST(udf(1) AS BIGINT)

-- !query 9 schema

-struct<c1:bigint>

+struct<CAST(udf(cast(c1 as string)) AS INT):bigint>

-- !query 9 output

0

2

-134,7 +134,7 NULL

-- !query 10

-SELECT * FROM tab1

+SELECT udf(c1) FROM tab1

EXCEPT ALL

SELECT array(1)

-- !query 10 schema

-145,62 +145,62 ExceptAll can only be performed on tables with the compatible column types. arra

-- !query 11

-SELECT * FROM tab3

+SELECT udf(k), v FROM tab3

EXCEPT ALL

-SELECT * FROM tab4

+SELECT k, udf(v) FROM tab4

-- !query 11 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,v:int>

-- !query 11 output

1 2

1 3

-- !query 12

-SELECT * FROM tab4

+SELECT k, udf(v) FROM tab4

EXCEPT ALL

-SELECT * FROM tab3

+SELECT udf(k), v FROM tab3

-- !query 12 schema

-struct<k:int,v:int>

+struct<k:int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 12 output

2 2

2 20

-- !query 13

-SELECT * FROM tab4

+SELECT udf(k), udf(v) FROM tab4

EXCEPT ALL

-SELECT * FROM tab3

+SELECT udf(k), udf(v) FROM tab3

INTERSECT DISTINCT

-SELECT * FROM tab4

+SELECT udf(k), udf(v) FROM tab4

-- !query 13 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 13 output

2 2

2 20

-- !query 14

-SELECT * FROM tab4

+SELECT udf(k), v FROM tab4

EXCEPT ALL

-SELECT * FROM tab3

+SELECT k, udf(v) FROM tab3

EXCEPT DISTINCT

-SELECT * FROM tab4

+SELECT udf(k), udf(v) FROM tab4

-- !query 14 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,v:int>

-- !query 14 output

-- !query 15

-SELECT * FROM tab3

+SELECT k, udf(v) FROM tab3

EXCEPT ALL

-SELECT * FROM tab4

+SELECT udf(k), udf(v) FROM tab4

UNION ALL

-SELECT * FROM tab3

+SELECT udf(k), v FROM tab3

EXCEPT DISTINCT

-SELECT * FROM tab4

+SELECT k, udf(v) FROM tab4

-- !query 15 schema

-struct<k:int,v:int>

+struct<k:int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 15 output

1 3

-217,83 +217,83 ExceptAll can only be performed on tables with the same number of columns, but t

-- !query 17

-SELECT * FROM tab3

+SELECT udf(k), udf(v) FROM tab3

EXCEPT ALL

-SELECT * FROM tab4

+SELECT udf(k), udf(v) FROM tab4

UNION

-SELECT * FROM tab3

+SELECT udf(k), udf(v) FROM tab3

EXCEPT DISTINCT

-SELECT * FROM tab4

+SELECT udf(k), udf(v) FROM tab4

-- !query 17 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 17 output

1 3

-- !query 18

-SELECT * FROM tab3

+SELECT udf(k), udf(v) FROM tab3

MINUS ALL

-SELECT * FROM tab4

+SELECT k, udf(v) FROM tab4

UNION

-SELECT * FROM tab3

+SELECT udf(k), udf(v) FROM tab3

MINUS DISTINCT

-SELECT * FROM tab4

+SELECT k, udf(v) FROM tab4

-- !query 18 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 18 output

1 3

-- !query 19

-SELECT * FROM tab3

+SELECT k, udf(v) FROM tab3

EXCEPT ALL

-SELECT * FROM tab4

+SELECT udf(k), v FROM tab4

EXCEPT DISTINCT

-SELECT * FROM tab3

+SELECT k, udf(v) FROM tab3

EXCEPT DISTINCT

-SELECT * FROM tab4

+SELECT udf(k), v FROM tab4

-- !query 19 schema

-struct<k:int,v:int>

+struct<k:int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 19 output

-- !query 20

SELECT *

-FROM (SELECT tab3.k,

- tab4.v

+FROM (SELECT tab3.k,

+ udf(tab4.v)

FROM tab3

JOIN tab4

- ON tab3.k = tab4.k)

+ ON udf(tab3.k) = tab4.k)

EXCEPT ALL

SELECT *

-FROM (SELECT tab3.k,

- tab4.v

+FROM (SELECT udf(tab3.k),

+ tab4.v

FROM tab3

JOIN tab4

- ON tab3.k = tab4.k)

+ ON tab3.k = udf(tab4.k))

-- !query 20 schema

-struct<k:int,v:int>

+struct<k:int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 20 output

-- !query 21

SELECT *

-FROM (SELECT tab3.k,

- tab4.v

+FROM (SELECT udf(udf(tab3.k)),

+ udf(tab4.v)

FROM tab3

JOIN tab4

- ON tab3.k = tab4.k)

+ ON udf(udf(tab3.k)) = udf(tab4.k))

EXCEPT ALL

SELECT *

-FROM (SELECT tab4.v AS k,

- tab3.k AS v

+FROM (SELECT udf(tab4.v) AS k,

+ udf(udf(tab3.k)) AS v

FROM tab3

JOIN tab4

- ON tab3.k = tab4.k)

+ ON udf(tab3.k) = udf(tab4.k))

-- !query 21 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(cast(udf(cast(k as string)) as int) as string)) AS INT):int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 21 output

1 2

1 2

-305,11 +305,11 struct<k:int,v:int>

-- !query 22

-SELECT v FROM tab3 GROUP BY v

+SELECT udf(v) FROM tab3 GROUP BY v

EXCEPT ALL

-SELECT k FROM tab4 GROUP BY k

+SELECT udf(k) FROM tab4 GROUP BY k

-- !query 22 schema

-struct<v:int>

+struct<CAST(udf(cast(v as string)) AS INT):int>

-- !query 22 output

3

```

</p>

</details>

## How was this patch tested?

Tested as guided in [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

Closes #25090 from imback82/except-all.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR adds tests converted from `intersect-all.sql` to test UDFs. Please see the contribution guide in the umbrella ticket, [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

<details><summary>Diff comparing to 'intersect-all.sql'</summary>

<p>

```diff

diff --git a/sql/core/src/test/resources/sql-tests/results/intersect-all.sql.out b/sql/core/src/test/resources/sql-tests/results/udf/udf-intersect-all.sql.out

index 63dd56ce46..0cb82be2da 100644

--- a/sql/core/src/test/resources/sql-tests/results/intersect-all.sql.out

+++ b/sql/core/src/test/resources/sql-tests/results/udf/udf-intersect-all.sql.out

-34,11 +34,11 struct<>

-- !query 2

-SELECT * FROM tab1

+SELECT udf(k), v FROM tab1

INTERSECT ALL

-SELECT * FROM tab2

+SELECT k, udf(v) FROM tab2

-- !query 2 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,v:int>

-- !query 2 output

1 2

1 2

-48,11 +48,11 NULL NULL

-- !query 3

-SELECT * FROM tab1

+SELECT k, udf(v) FROM tab1

INTERSECT ALL

-SELECT * FROM tab1 WHERE k = 1

+SELECT udf(k), v FROM tab1 WHERE udf(k) = 1

-- !query 3 schema

-struct<k:int,v:int>

+struct<k:int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 3 output

1 2

1 2

-61,39 +61,39 struct<k:int,v:int>

-- !query 4

-SELECT * FROM tab1 WHERE k > 2

+SELECT udf(k), udf(v) FROM tab1 WHERE k > udf(2)

INTERSECT ALL

-SELECT * FROM tab2

+SELECT udf(k), udf(v) FROM tab2

-- !query 4 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 4 output

-- !query 5

-SELECT * FROM tab1

+SELECT udf(k), v FROM tab1

INTERSECT ALL

-SELECT * FROM tab2 WHERE k > 3

+SELECT udf(k), v FROM tab2 WHERE udf(udf(k)) > 3

-- !query 5 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,v:int>

-- !query 5 output

-- !query 6

-SELECT * FROM tab1

+SELECT udf(k), v FROM tab1

INTERSECT ALL

-SELECT CAST(1 AS BIGINT), CAST(2 AS BIGINT)

+SELECT CAST(udf(1) AS BIGINT), CAST(udf(2) AS BIGINT)

-- !query 6 schema

-struct<k:bigint,v:bigint>

+struct<CAST(udf(cast(k as string)) AS INT):bigint,v:bigint>

-- !query 6 output

1 2

-- !query 7

-SELECT * FROM tab1

+SELECT k, udf(v) FROM tab1

INTERSECT ALL

-SELECT array(1), 2

+SELECT array(1), udf(2)

-- !query 7 schema

struct<>

-- !query 7 output

-102,9 +102,9 IntersectAll can only be performed on tables with the compatible column types. a

-- !query 8

-SELECT k FROM tab1

+SELECT udf(k) FROM tab1

INTERSECT ALL

-SELECT k, v FROM tab2

+SELECT udf(k), udf(v) FROM tab2

-- !query 8 schema

struct<>

-- !query 8 output

-113,13 +113,13 IntersectAll can only be performed on tables with the same number of columns, bu

-- !query 9

-SELECT * FROM tab2

+SELECT udf(k), v FROM tab2

INTERSECT ALL

-SELECT * FROM tab1

+SELECT k, udf(v) FROM tab1

INTERSECT ALL

-SELECT * FROM tab2

+SELECT udf(k), udf(v) FROM tab2

-- !query 9 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,v:int>

-- !query 9 output

1 2

1 2

-129,15 +129,15 NULL NULL

-- !query 10

-SELECT * FROM tab1

+SELECT udf(k), v FROM tab1

EXCEPT

-SELECT * FROM tab2

+SELECT k, udf(v) FROM tab2

UNION ALL

-SELECT * FROM tab1

+SELECT k, udf(udf(v)) FROM tab1

INTERSECT ALL

-SELECT * FROM tab2

+SELECT udf(k), v FROM tab2

-- !query 10 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,v:int>

-- !query 10 output

1 2

1 2

-148,15 +148,15 NULL NULL

-- !query 11

-SELECT * FROM tab1

+SELECT udf(k), udf(v) FROM tab1

EXCEPT

-SELECT * FROM tab2

+SELECT udf(k), v FROM tab2

EXCEPT

-SELECT * FROM tab1

+SELECT k, udf(v) FROM tab1

INTERSECT ALL

-SELECT * FROM tab2

+SELECT udf(k), udf(udf(v)) FROM tab2

-- !query 11 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 11 output

1 3

-165,38 +165,38 struct<k:int,v:int>

(

(

(

- SELECT * FROM tab1

+ SELECT udf(k), v FROM tab1

EXCEPT

- SELECT * FROM tab2

+ SELECT k, udf(v) FROM tab2

)

EXCEPT

- SELECT * FROM tab1

+ SELECT udf(k), udf(v) FROM tab1

)

INTERSECT ALL

- SELECT * FROM tab2

+ SELECT udf(k), udf(v) FROM tab2

)

-- !query 12 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,v:int>

-- !query 12 output

-- !query 13

SELECT *

-FROM (SELECT tab1.k,

- tab2.v

+FROM (SELECT udf(tab1.k),

+ udf(tab2.v)

FROM tab1

JOIN tab2

- ON tab1.k = tab2.k)

+ ON udf(udf(tab1.k)) = tab2.k)

INTERSECT ALL

SELECT *

-FROM (SELECT tab1.k,

- tab2.v

+FROM (SELECT udf(tab1.k),

+ udf(tab2.v)

FROM tab1

JOIN tab2

- ON tab1.k = tab2.k)

+ ON udf(tab1.k) = udf(udf(tab2.k)))

-- !query 13 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 13 output

1 2

1 2

-211,30 +211,30 struct<k:int,v:int>

-- !query 14

SELECT *

-FROM (SELECT tab1.k,

- tab2.v

+FROM (SELECT udf(tab1.k),

+ udf(tab2.v)

FROM tab1

JOIN tab2

- ON tab1.k = tab2.k)

+ ON udf(tab1.k) = udf(tab2.k))

INTERSECT ALL

SELECT *

-FROM (SELECT tab2.v AS k,

- tab1.k AS v

+FROM (SELECT udf(tab2.v) AS k,

+ udf(tab1.k) AS v

FROM tab1

JOIN tab2

- ON tab1.k = tab2.k)

+ ON tab1.k = udf(tab2.k))

-- !query 14 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 14 output

-- !query 15

-SELECT v FROM tab1 GROUP BY v

+SELECT udf(v) FROM tab1 GROUP BY v

INTERSECT ALL

-SELECT k FROM tab2 GROUP BY k

+SELECT udf(udf(k)) FROM tab2 GROUP BY k

-- !query 15 schema

-struct<v:int>

+struct<CAST(udf(cast(v as string)) AS INT):int>

-- !query 15 output

2

3

-250,15 +250,15 spark.sql.legacy.setopsPrecedence.enabled true

-- !query 17

-SELECT * FROM tab1

+SELECT udf(k), v FROM tab1

EXCEPT

-SELECT * FROM tab2

+SELECT k, udf(v) FROM tab2

UNION ALL

-SELECT * FROM tab1

+SELECT udf(k), udf(v) FROM tab1

INTERSECT ALL

-SELECT * FROM tab2

+SELECT udf(udf(k)), udf(v) FROM tab2

-- !query 17 schema

-struct<k:int,v:int>

+struct<CAST(udf(cast(k as string)) AS INT):int,v:int>

-- !query 17 output

1 2

1 2

-268,15 +268,15 NULL NULL

-- !query 18

-SELECT * FROM tab1

+SELECT k, udf(v) FROM tab1

EXCEPT

-SELECT * FROM tab2

+SELECT udf(k), v FROM tab2

UNION ALL

-SELECT * FROM tab1

+SELECT udf(k), udf(v) FROM tab1

INTERSECT

-SELECT * FROM tab2

+SELECT udf(k), udf(udf(v)) FROM tab2

-- !query 18 schema

-struct<k:int,v:int>

+struct<k:int,CAST(udf(cast(v as string)) AS INT):int>

-- !query 18 output

1 2

2 3

```

</p>

</details>

## How was this patch tested?

Tested as guided in [SPARK-27921](https://issues.apache.org/jira/browse/SPARK-27921).

Closes #25119 from imback82/intersect-all-sql.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR adds tests converted from `cross-join.sql` to test UDFs.

<details><summary>Diff comparing to 'cross-join.sql'</summary>

<p>

```diff

diff --git a/sql/core/src/test/resources/sql-tests/results/cross-join.sql.out b/sql/core/src/test/resources/sql-tests/results/udf/udf-cross-join.sql.out

index 3833c42bdf..11c1e01d54 100644

--- a/sql/core/src/test/resources/sql-tests/results/cross-join.sql.out

+++ b/sql/core/src/test/resources/sql-tests/results/udf/udf-cross-join.sql.out

-43,7 +43,7 two 2 two 22

-- !query 3

-SELECT * FROM nt1 cross join nt2 where nt1.k = nt2.k

+SELECT * FROM nt1 cross join nt2 where udf(nt1.k) = udf(nt2.k)

-- !query 3 schema

struct<k:string,v1:int,k:string,v2:int>

-- !query 3 output

-53,7 +53,7 two 2 two 22

-- !query 4

-SELECT * FROM nt1 cross join nt2 on (nt1.k = nt2.k)

+SELECT * FROM nt1 cross join nt2 on (udf(nt1.k) = udf(nt2.k))

-- !query 4 schema

struct<k:string,v1:int,k:string,v2:int>

-- !query 4 output

-63,7 +63,7 two 2 two 22

-- !query 5

-SELECT * FROM nt1 cross join nt2 where nt1.v1 = 1 and nt2.v2 = 22

+SELECT * FROM nt1 cross join nt2 where udf(nt1.v1) = "1" and udf(nt2.v2) = "22"

-- !query 5 schema

struct<k:string,v1:int,k:string,v2:int>

-- !query 5 output

-71,12 +71,12 one 1 two 22

-- !query 6

-SELECT a.key, b.key FROM

-(SELECT k key FROM nt1 WHERE v1 < 2) a

+SELECT udf(a.key), udf(b.key) FROM

+(SELECT udf(k) key FROM nt1 WHERE v1 < 2) a

CROSS JOIN

-(SELECT k key FROM nt2 WHERE v2 = 22) b

+(SELECT udf(k) key FROM nt2 WHERE v2 = 22) b

-- !query 6 schema

-struct<key:string,key:string>

+struct<udf(key):string,udf(key):string>

-- !query 6 output

one two

-114,23 +114,29 struct<>

-- !query 11

-select * from ((A join B on (a = b)) cross join C) join D on (a = d)

+select * from ((A join B on (udf(a) = udf(b))) cross join C) join D on (udf(a) = udf(d))

-- !query 11 schema

-struct<a:string,va:int,b:string,vb:int,c:string,vc:int,d:string,vd:int>

+struct<>

-- !query 11 output

-one 1 one 1 one 1 one 1

-one 1 one 1 three 3 one 1

-one 1 one 1 two 2 one 1

-three 3 three 3 one 1 three 3

-three 3 three 3 three 3 three 3

-three 3 three 3 two 2 three 3

-two 2 two 2 one 1 two 2

-two 2 two 2 three 3 two 2

-two 2 two 2 two 2 two 2

+org.apache.spark.sql.AnalysisException

+Detected implicit cartesian product for INNER join between logical plans

+Filter (udf(a#x) = udf(b#x))

++- Join Inner

+ :- Project [k#x AS a#x, v1#x AS va#x]

+ : +- LocalRelation [k#x, v1#x]

+ +- Project [k#x AS b#x, v1#x AS vb#x]

+ +- LocalRelation [k#x, v1#x]

+and

+Project [k#x AS d#x, v1#x AS vd#x]

++- LocalRelation [k#x, v1#x]

+Join condition is missing or trivial.

+Either: use the CROSS JOIN syntax to allow cartesian products between these

+relations, or: enable implicit cartesian products by setting the configuration

+variable spark.sql.crossJoin.enabled=true;

-- !query 12

-SELECT * FROM nt1 CROSS JOIN nt2 ON (nt1.k > nt2.k)

+SELECT * FROM nt1 CROSS JOIN nt2 ON (udf(nt1.k) > udf(nt2.k))

-- !query 12 schema

struct<k:string,v1:int,k:string,v2:int>

-- !query 12 output

```

</p>

</details>

## How was this patch tested?

Added test.

Closes #25168 from viirya/SPARK-28276.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In the following Python code:

```

df.write.mode("overwrite").insertInto("table")

```

`insertInto` ignores `mode("overwrite")` and appends by default.
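
The behaviour described above can be modelled with a toy writer. This is only a sketch: `ToyWriter`, `insert_into_buggy`, and `insert_into_fixed` are hypothetical names, and the assumption that the problem comes from an `overwrite=False` default overriding the previously set mode is an illustration, not a quote of PySpark's code.

```python
from typing import List, Optional, Tuple


class ToyWriter:
    """Toy stand-in for a DataFrame writer; NOT PySpark's real class."""

    def __init__(self) -> None:
        self._mode = "error"
        # Records (table, effective mode) for every insert call.
        self.calls: List[Tuple[str, str]] = []

    def mode(self, save_mode: str) -> "ToyWriter":
        self._mode = save_mode
        return self

    def insert_into_buggy(self, table: str, overwrite: bool = False) -> None:
        # Assumed bug: the default False always resets the mode to "append",
        # so an earlier mode("overwrite") call is silently discarded.
        self.mode("overwrite" if overwrite else "append")
        self.calls.append((table, self._mode))

    def insert_into_fixed(self, table: str, overwrite: Optional[bool] = None) -> None:
        # Only touch the mode when the caller passed overwrite explicitly.
        if overwrite is not None:
            self.mode("overwrite" if overwrite else "append")
        self.calls.append((table, self._mode))


buggy = ToyWriter()
buggy.mode("overwrite").insert_into_buggy("table")

fixed = ToyWriter()
fixed.mode("overwrite").insert_into_fixed("table")

print(buggy.calls)  # [('table', 'append')]
print(fixed.calls)  # [('table', 'overwrite')]
```

Using `None` as the default lets the method distinguish "caller said nothing" from "caller explicitly asked to append".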

## How was this patch tested?

Added a unit test.

Closes #25175 from huaxingao/spark-28411.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

In this PR, I propose to convert option values to strings by using `to_str()` in the following functions: `from_csv()`, `to_csv()`, `from_json()`, `to_json()`, `schema_of_csv()` and `schema_of_json()`. This makes the handling of function options consistent with option handling in `DataFrameReader`/`DataFrameWriter`.

For example:

```Python

df.select(from_csv(df.value, "s string", {'ignoreLeadingWhiteSpace': True}))

```
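
As a rough illustration of what such a conversion does to an options dictionary, here is a minimal sketch. `to_str_sketch` and `normalize_options` are hypothetical helpers written for this example; the assumption that booleans are rendered as lowercase strings and `None` is passed through unchanged reflects how reader/writer options are commonly normalized, and is not copied from the PR.

```python
from typing import Any, Dict, Optional


def to_str_sketch(value: Any) -> Optional[str]:
    """Hypothetical stand-in for to_str(): stringify an option value."""
    if isinstance(value, bool):
        # Assumed convention: True/False become "true"/"false".
        return str(value).lower()
    if value is None:
        # Keep None as-is instead of producing the string "None".
        return None
    return str(value)


def normalize_options(options: Dict[str, Any]) -> Dict[str, Optional[str]]:
    """Apply the conversion to every value in an options dict."""
    return {key: to_str_sketch(val) for key, val in options.items()}


normalized = normalize_options(
    {"ignoreLeadingWhiteSpace": True, "sep": ",", "maxColumns": 10}
)
print(normalized)
# {'ignoreLeadingWhiteSpace': 'true', 'sep': ',', 'maxColumns': '10'}
```

With a conversion like this, a caller can pass `True` rather than the string `"true"` and still end up with string-only option values.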

## How was this patch tested?

Added an example for `from_csv()` which was tested by:

```Shell

./python/run-tests --testnames pyspark.sql.functions

```

Closes #25182 from MaxGekk/options_to_str.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

PostgreSQL doesn't have `TINYINT`, which would map directly to a byte type, but `SMALLINT` is sufficient for uni-directional translation.

A side-effect of this fix is that `AggregatedDialect` is now usable with multiple dialects targeting `jdbc:postgresql`, as `PostgresDialect.getJDBCType` no longer throws (for which reason backporting this fix would be lovely):

1217996f15/sql/core/src/main/scala/org/apache/spark/sql/jdbc/AggregatedDialect.scala (L42)

`dialects.flatMap` currently throws on the first attempt to get a JDBC type, preventing subsequent dialects in the chain from providing an alternative.
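
The interaction can be sketched with plain functions standing in for dialects. Everything here is hypothetical (`postgres_before`, `postgres_after`, `custom_dialect`, `aggregated_jdbc_type`, the type name `"byte"`, and the error message); it only models the idea that an eager "ask every dialect, take the first answer" lookup fails as soon as one dialect raises.

```python
from typing import Callable, List, Optional

# A dialect is modelled as a function: data type name -> optional JDBC type.
Dialect = Callable[[str], Optional[str]]


def postgres_before(data_type: str) -> Optional[str]:
    """Hypothetical pre-fix behaviour: raise for the byte type."""
    if data_type == "byte":
        raise ValueError("unsupported type: byte")  # illustrative message
    return None


def postgres_after(data_type: str) -> Optional[str]:
    """Hypothetical post-fix behaviour: map byte to SMALLINT."""
    return "SMALLINT" if data_type == "byte" else None


def custom_dialect(data_type: str) -> Optional[str]:
    """A second dialect in the chain that could provide an alternative."""
    return "SMALLINT" if data_type == "byte" else None


def aggregated_jdbc_type(dialects: List[Dialect], data_type: str) -> Optional[str]:
    # Eagerly ask every dialect, then keep the first non-empty answer.
    # An exception from any dialect aborts the whole lookup.
    answers = [dialect(data_type) for dialect in dialects]
    found = [answer for answer in answers if answer is not None]
    return found[0] if found else None


try:
    aggregated_jdbc_type([postgres_before, custom_dialect], "byte")
    before_outcome = "ok"
except ValueError:
    before_outcome = "raised"

after_outcome = aggregated_jdbc_type([postgres_after, custom_dialect], "byte")

print(before_outcome)  # raised
print(after_outcome)   # SMALLINT
```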

## How was this patch tested?

Unit tests.

Closes #24845 from mojodna/postgres-byte-type-mapping.

Authored-by: Seth Fitzsimmons <seth@mojodna.net>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

The UDFs currently available in `IntegratedUDFTestUtils` are not exactly no-ops: they convert the input column to strings and output strings.

This causes some issues when we convert and port the tests in SPARK-27921. Integrated UDF test cases share one output file, so every UDF variant should produce the same output. However,

1. Special values are converted into strings differently:

| Scala       | Python |
| ----------- | ------ |
| `null`      | `None` |
| `Infinity`  | `inf`  |
| `-Infinity` | `-inf` |
| `NaN`       | `nan`  |

2. Due to floating-point limitations in Python (see https://docs.python.org/3/tutorial/floatingpoint.html), if a float is passed into Python and sent back to the JVM, the value is potentially not exactly the same. See https://github.com/apache/spark/pull/25128 and https://github.com/apache/spark/pull/25110
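
Both points can be reproduced in plain Python. The first part shows how Python stringifies the special values from the table. The second part is one plausible illustration of the float issue, under the assumption that a 32-bit float is widened to Python's 64-bit `float` on the way in; the linked PRs are the authoritative description of what actually happened.

```python
import struct

# 1. How Python's str() renders the special values.
special_values = [None, float("inf"), float("-inf"), float("nan")]
python_strings = [str(value) for value in special_values]
print(python_strings)  # ['None', 'inf', '-inf', 'nan']

# 2. Round-trip 0.1 through a 32-bit float representation.
#    Python floats are 64-bit, so the widened value keeps the
#    32-bit rounding error and no longer prints as 0.1.
as_float32 = struct.unpack("f", struct.pack("f", 0.1))[0]
print(as_float32 == 0.1)  # False
print(as_float32)
```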

To work around this, this PR changes the current UDF to be wrapped by casts: the input column is cast to string, the UDF returns the strings as they are, and the output column is cast back to the input column's type.

Roughly:

**Before:**

```

JVM (col1) -> (cast to string within Python) Python (string) -> (string) JVM

```

**After:**

```

JVM (cast col1 to string) -> (string) Python (string) -> (cast back to col1's type) JVM

```
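
The "after" pipeline can be sketched in plain Python. This is only a model under stated assumptions: `wrap_with_casts`, `jvm_to_string`, and `jvm_from_string` are hypothetical helpers that imitate the JVM-side casts for a double column, and `identity_udf` stands in for the string-in, string-out UDF.

```python
from typing import Callable, Optional


def identity_udf(value: Optional[str]) -> Optional[str]:
    """The UDF itself: takes a string and returns it unchanged."""
    return value


def jvm_to_string(value: Optional[float]) -> Optional[str]:
    """Hypothetical model of 'cast col1 to string' for a double column."""
    if value is None:
        return None
    if value != value:  # NaN is the only value not equal to itself
        return "NaN"
    if value == float("inf"):
        return "Infinity"
    if value == float("-inf"):
        return "-Infinity"
    return repr(value)


def jvm_from_string(text: Optional[str]) -> Optional[float]:
    """Hypothetical model of 'cast back to col1's type'."""
    return None if text is None else float(text)


def wrap_with_casts(
    udf: Callable[[Optional[str]], Optional[str]],
) -> Callable[[Optional[float]], Optional[float]]:
    """Cast to string, run the UDF, cast back to the original type."""
    def wrapped(value: Optional[float]) -> Optional[float]:
        return jvm_from_string(udf(jvm_to_string(value)))
    return wrapped


no_op = wrap_with_casts(identity_udf)
print(no_op(1.5))           # 1.5
print(no_op(None))          # None
print(no_op(float("inf")))  # inf
```

Because the UDF only ever sees and returns strings, language-specific formatting of `null`, `Infinity`, or `NaN` never enters the picture; the casts on either side decide the representation.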

In this way, the UDF is virtually a no-op, although there might be some subtleties due to the round trip through the string cast. I believe this is good enough.

Python native functions and Scala native functions will take strings and output them as they are, so there will be no potential test failures due to conversion differences between Python and Scala.

After this fix, for instance, `udf-aggregates_part1.sql` produces exactly the same output as `aggregates_part1.sql`:

<details><summary>Diff comparing to 'pgSQL/aggregates_part1.sql'</summary>

<p>

```diff

diff --git a/sql/core/src/test/resources/sql-tests/results/pgSQL/aggregates_part1.sql.out b/sql/core/src/test/resources/sql-tests/results/udf/pgSQL/udf-aggregates_part1.sql.out

index 51ca1d55869..801735781c7 100644

--- a/sql/core/src/test/resources/sql-tests/results/pgSQL/aggregates_part1.sql.out

+++ b/sql/core/src/test/resources/sql-tests/results/udf/pgSQL/udf-aggregates_part1.sql.out

-3,7 +3,7

-- !query 0

-SELECT avg(four) AS avg_1 FROM onek

+SELECT avg(udf(four)) AS avg_1 FROM onek

-- !query 0 schema

struct<avg_1:double>

-- !query 0 output

-11,7 +11,7 struct<avg_1:double>

-- !query 1

-SELECT avg(a) AS avg_32 FROM aggtest WHERE a < 100

+SELECT udf(avg(a)) AS avg_32 FROM aggtest WHERE a < 100

-- !query 1 schema

struct<avg_32:double>

-- !query 1 output

-19,7 +19,7 struct<avg_32:double>

-- !query 2

-select CAST(avg(b) AS Decimal(10,3)) AS avg_107_943 FROM aggtest

+select CAST(avg(udf(b)) AS Decimal(10,3)) AS avg_107_943 FROM aggtest

-- !query 2 schema

struct<avg_107_943:decimal(10,3)>

-- !query 2 output

-27,7 +27,7 struct<avg_107_943:decimal(10,3)>

-- !query 3

-SELECT sum(four) AS sum_1500 FROM onek

+SELECT sum(udf(four)) AS sum_1500 FROM onek

-- !query 3 schema

struct<sum_1500:bigint>

-- !query 3 output

-35,7 +35,7 struct<sum_1500:bigint>

-- !query 4

-SELECT sum(a) AS sum_198 FROM aggtest

+SELECT udf(sum(a)) AS sum_198 FROM aggtest

-- !query 4 schema

struct<sum_198:bigint>

-- !query 4 output

-43,7 +43,7 struct<sum_198:bigint>

-- !query 5

-SELECT sum(b) AS avg_431_773 FROM aggtest

+SELECT udf(udf(sum(b))) AS avg_431_773 FROM aggtest

-- !query 5 schema

struct<avg_431_773:double>

-- !query 5 output

-51,7 +51,7 struct<avg_431_773:double>

-- !query 6

-SELECT max(four) AS max_3 FROM onek

+SELECT udf(max(four)) AS max_3 FROM onek

-- !query 6 schema

struct<max_3:int>

-- !query 6 output

-59,7 +59,7 struct<max_3:int>

-- !query 7

-SELECT max(a) AS max_100 FROM aggtest

+SELECT max(udf(a)) AS max_100 FROM aggtest

-- !query 7 schema

struct<max_100:int>

-- !query 7 output

-67,7 +67,7 struct<max_100:int>

-- !query 8

-SELECT max(aggtest.b) AS max_324_78 FROM aggtest

+SELECT udf(udf(max(aggtest.b))) AS max_324_78 FROM aggtest

-- !query 8 schema

struct<max_324_78:float>

-- !query 8 output

-75,237 +75,238 struct<max_324_78:float>

-- !query 9

-SELECT stddev_pop(b) FROM aggtest

+SELECT stddev_pop(udf(b)) FROM aggtest

-- !query 9 schema

-struct<stddev_pop(CAST(b AS DOUBLE)):double>

+struct<stddev_pop(CAST(CAST(udf(cast(b as string)) AS FLOAT) AS DOUBLE)):double>

-- !query 9 output

131.10703231895047

-- !query 10

-SELECT stddev_samp(b) FROM aggtest

+SELECT udf(stddev_samp(b)) FROM aggtest

-- !query 10 schema

-struct<stddev_samp(CAST(b AS DOUBLE)):double>

+struct<CAST(udf(cast(stddev_samp(cast(b as double)) as string)) AS DOUBLE):double>

-- !query 10 output

151.38936080399804

-- !query 11

-SELECT var_pop(b) FROM aggtest

+SELECT var_pop(udf(b)) FROM aggtest

-- !query 11 schema

-struct<var_pop(CAST(b AS DOUBLE)):double>

+struct<var_pop(CAST(CAST(udf(cast(b as string)) AS FLOAT) AS DOUBLE)):double>

-- !query 11 output

17189.053923482323

-- !query 12

-SELECT var_samp(b) FROM aggtest

+SELECT udf(var_samp(b)) FROM aggtest

-- !query 12 schema

-struct<var_samp(CAST(b AS DOUBLE)):double>

+struct<CAST(udf(cast(var_samp(cast(b as double)) as string)) AS DOUBLE):double>

-- !query 12 output

22918.738564643096

-- !query 13

-SELECT stddev_pop(CAST(b AS Decimal(38,0))) FROM aggtest

+SELECT udf(stddev_pop(CAST(b AS Decimal(38,0)))) FROM aggtest

-- !query 13 schema

-struct<stddev_pop(CAST(CAST(b AS DECIMAL(38,0)) AS DOUBLE)):double>

+struct<CAST(udf(cast(stddev_pop(cast(cast(b as decimal(38,0)) as double)) as string)) AS DOUBLE):double>

-- !query 13 output

131.18117242958306

-- !query 14

-SELECT stddev_samp(CAST(b AS Decimal(38,0))) FROM aggtest

+SELECT stddev_samp(CAST(udf(b) AS Decimal(38,0))) FROM aggtest

-- !query 14 schema

-struct<stddev_samp(CAST(CAST(b AS DECIMAL(38,0)) AS DOUBLE)):double>

+struct<stddev_samp(CAST(CAST(CAST(udf(cast(b as string)) AS FLOAT) AS DECIMAL(38,0)) AS DOUBLE)):double>

-- !query 14 output

151.47497042966097

-- !query 15

-SELECT var_pop(CAST(b AS Decimal(38,0))) FROM aggtest

+SELECT udf(var_pop(CAST(b AS Decimal(38,0)))) FROM aggtest

-- !query 15 schema

-struct<var_pop(CAST(CAST(b AS DECIMAL(38,0)) AS DOUBLE)):double>

+struct<CAST(udf(cast(var_pop(cast(cast(b as decimal(38,0)) as double)) as string)) AS DOUBLE):double>

-- !query 15 output

17208.5

-- !query 16

-SELECT var_samp(CAST(b AS Decimal(38,0))) FROM aggtest

+SELECT var_samp(udf(CAST(b AS Decimal(38,0)))) FROM aggtest

-- !query 16 schema

-struct<var_samp(CAST(CAST(b AS DECIMAL(38,0)) AS DOUBLE)):double>

+struct<var_samp(CAST(CAST(udf(cast(cast(b as decimal(38,0)) as string)) AS DECIMAL(38,0)) AS DOUBLE)):double>

-- !query 16 output

22944.666666666668

-- !query 17

-SELECT var_pop(1.0), var_samp(2.0)

+SELECT udf(var_pop(1.0)), var_samp(udf(2.0))

-- !query 17 schema

-struct<var_pop(CAST(1.0 AS DOUBLE)):double,var_samp(CAST(2.0 AS DOUBLE)):double>

+struct<CAST(udf(cast(var_pop(cast(1.0 as double)) as string)) AS DOUBLE):double,var_samp(CAST(CAST(udf(cast(2.0 as string)) AS DECIMAL(2,1)) AS DOUBLE)):double>

-- !query 17 output

0.0 NaN

-- !query 18

-SELECT stddev_pop(CAST(3.0 AS Decimal(38,0))), stddev_samp(CAST(4.0 AS Decimal(38,0)))

+SELECT stddev_pop(udf(CAST(3.0 AS Decimal(38,0)))), stddev_samp(CAST(udf(4.0) AS Decimal(38,0)))

-- !query 18 schema

-struct<stddev_pop(CAST(CAST(3.0 AS DECIMAL(38,0)) AS DOUBLE)):double,stddev_samp(CAST(CAST(4.0 AS DECIMAL(38,0)) AS DOUBLE)):double>

+struct<stddev_pop(CAST(CAST(udf(cast(cast(3.0 as decimal(38,0)) as string)) AS DECIMAL(38,0)) AS DOUBLE)):double,stddev_samp(CAST(CAST(CAST(udf(cast(4.0 as string)) AS DECIMAL(2,1)) AS DECIMAL(38,0)) AS DOUBLE)):double>

-- !query 18 output

0.0 NaN

-- !query 19

-select sum(CAST(null AS int)) from range(1,4)

+select sum(udf(CAST(null AS int))) from range(1,4)

-- !query 19 schema

-struct<sum(CAST(NULL AS INT)):bigint>

+struct<sum(CAST(udf(cast(cast(null as int) as string)) AS INT)):bigint>

-- !query 19 output

NULL

-- !query 20

-select sum(CAST(null AS long)) from range(1,4)

+select sum(udf(CAST(null AS long))) from range(1,4)

-- !query 20 schema

-struct<sum(CAST(NULL AS BIGINT)):bigint>

+struct<sum(CAST(udf(cast(cast(null as bigint) as string)) AS BIGINT)):bigint>

-- !query 20 output

NULL

-- !query 21

-select sum(CAST(null AS Decimal(38,0))) from range(1,4)

+select sum(udf(CAST(null AS Decimal(38,0)))) from range(1,4)

-- !query 21 schema

-struct<sum(CAST(NULL AS DECIMAL(38,0))):decimal(38,0)>

+struct<sum(CAST(udf(cast(cast(null as decimal(38,0)) as string)) AS DECIMAL(38,0))):decimal(38,0)>

-- !query 21 output

NULL

-- !query 22

-select sum(CAST(null AS DOUBLE)) from range(1,4)

+select sum(udf(CAST(null AS DOUBLE))) from range(1,4)

-- !query 22 schema

-struct<sum(CAST(NULL AS DOUBLE)):double>

+struct<sum(CAST(udf(cast(cast(null as double) as string)) AS DOUBLE)):double>

-- !query 22 output

NULL

-- !query 23

-select avg(CAST(null AS int)) from range(1,4)

+select avg(udf(CAST(null AS int))) from range(1,4)

-- !query 23 schema

-struct<avg(CAST(NULL AS INT)):double>

+struct<avg(CAST(udf(cast(cast(null as int) as string)) AS INT)):double>

-- !query 23 output

NULL

-- !query 24

-select avg(CAST(null AS long)) from range(1,4)

+select avg(udf(CAST(null AS long))) from range(1,4)

-- !query 24 schema

-struct<avg(CAST(NULL AS BIGINT)):double>

+struct<avg(CAST(udf(cast(cast(null as bigint) as string)) AS BIGINT)):double>

-- !query 24 output

NULL

-- !query 25

-select avg(CAST(null AS Decimal(38,0))) from range(1,4)

+select avg(udf(CAST(null AS Decimal(38,0)))) from range(1,4)

-- !query 25 schema

-struct<avg(CAST(NULL AS DECIMAL(38,0))):decimal(38,4)>

+struct<avg(CAST(udf(cast(cast(null as decimal(38,0)) as string)) AS DECIMAL(38,0))):decimal(38,4)>

-- !query 25 output

NULL

-- !query 26

-select avg(CAST(null AS DOUBLE)) from range(1,4)

+select avg(udf(CAST(null AS DOUBLE))) from range(1,4)

-- !query 26 schema

-struct<avg(CAST(NULL AS DOUBLE)):double>

+struct<avg(CAST(udf(cast(cast(null as double) as string)) AS DOUBLE)):double>

-- !query 26 output

NULL

-- !query 27

-select sum(CAST('NaN' AS DOUBLE)) from range(1,4)

+select sum(CAST(udf('NaN') AS DOUBLE)) from range(1,4)

-- !query 27 schema

-struct<sum(CAST(NaN AS DOUBLE)):double>

+struct<sum(CAST(CAST(udf(cast(NaN as string)) AS STRING) AS DOUBLE)):double>

-- !query 27 output

NaN

-- !query 28

-select avg(CAST('NaN' AS DOUBLE)) from range(1,4)

+select avg(CAST(udf('NaN') AS DOUBLE)) from range(1,4)

-- !query 28 schema

-struct<avg(CAST(NaN AS DOUBLE)):double>

+struct<avg(CAST(CAST(udf(cast(NaN as string)) AS STRING) AS DOUBLE)):double>

-- !query 28 output

NaN

-- !query 30

-SELECT avg(CAST(x AS DOUBLE)), var_pop(CAST(x AS DOUBLE))

+SELECT avg(CAST(udf(x) AS DOUBLE)), var_pop(CAST(udf(x) AS DOUBLE))

FROM (VALUES ('Infinity'), ('1')) v(x)

-- !query 30 schema

-struct<avg(CAST(x AS DOUBLE)):double,var_pop(CAST(x AS DOUBLE)):double>

+struct<avg(CAST(CAST(udf(cast(x as string)) AS STRING) AS DOUBLE)):double,var_pop(CAST(CAST(udf(cast(x as string)) AS STRING) AS DOUBLE)):double>

-- !query 30 output

Infinity NaN

-- !query 31

-SELECT avg(CAST(x AS DOUBLE)), var_pop(CAST(x AS DOUBLE))

+SELECT avg(CAST(udf(x) AS DOUBLE)), var_pop(CAST(udf(x) AS DOUBLE))

FROM (VALUES ('Infinity'), ('Infinity')) v(x)

-- !query 31 schema

-struct<avg(CAST(x AS DOUBLE)):double,var_pop(CAST(x AS DOUBLE)):double>

+struct<avg(CAST(CAST(udf(cast(x as string)) AS STRING) AS DOUBLE)):double,var_pop(CAST(CAST(udf(cast(x as string)) AS STRING) AS DOUBLE)):double>

-- !query 31 output

Infinity NaN

-- !query 32

-SELECT avg(CAST(x AS DOUBLE)), var_pop(CAST(x AS DOUBLE))

+SELECT avg(CAST(udf(x) AS DOUBLE)), var_pop(CAST(udf(x) AS DOUBLE))

FROM (VALUES ('-Infinity'), ('Infinity')) v(x)

-- !query 32 schema

-struct<avg(CAST(x AS DOUBLE)):double,var_pop(CAST(x AS DOUBLE)):double>

+struct<avg(CAST(CAST(udf(cast(x as string)) AS STRING) AS DOUBLE)):double,var_pop(CAST(CAST(udf(cast(x as string)) AS STRING) AS DOUBLE)):double>

-- !query 32 output

NaN NaN

-- !query 33

-SELECT avg(CAST(x AS DOUBLE)), var_pop(CAST(x AS DOUBLE))

+SELECT avg(udf(CAST(x AS DOUBLE))), udf(var_pop(CAST(x AS DOUBLE)))

FROM (VALUES (100000003), (100000004), (100000006), (100000007)) v(x)

-- !query 33 schema

-struct<avg(CAST(x AS DOUBLE)):double,var_pop(CAST(x AS DOUBLE)):double>

+struct<avg(CAST(udf(cast(cast(x as double) as string)) AS DOUBLE)):double,CAST(udf(cast(var_pop(cast(x as double)) as string)) AS DOUBLE):double>

-- !query 33 output

1.00000005E8 2.5

-- !query 34

-SELECT avg(CAST(x AS DOUBLE)), var_pop(CAST(x AS DOUBLE))

+SELECT avg(udf(CAST(x AS DOUBLE))), udf(var_pop(CAST(x AS DOUBLE)))

FROM (VALUES (7000000000005), (7000000000007)) v(x)

-- !query 34 schema

-struct<avg(CAST(x AS DOUBLE)):double,var_pop(CAST(x AS DOUBLE)):double>

+struct<avg(CAST(udf(cast(cast(x as double) as string)) AS DOUBLE)):double,CAST(udf(cast(var_pop(cast(x as double)) as string)) AS DOUBLE):double>

-- !query 34 output

7.000000000006E12 1.0

-- !query 35

-SELECT covar_pop(b, a), covar_samp(b, a) FROM aggtest

+SELECT udf(covar_pop(b, udf(a))), covar_samp(udf(b), a) FROM aggtest

-- !query 35 schema

-struct<covar_pop(CAST(b AS DOUBLE), CAST(a AS DOUBLE)):double,covar_samp(CAST(b AS DOUBLE), CAST(a AS DOUBLE)):double>

+struct<CAST(udf(cast(covar_pop(cast(b as double), cast(cast(udf(cast(a as string)) as int) as double)) as string)) AS DOUBLE):double,covar_samp(CAST(CAST(udf(cast(b as string)) AS FLOAT) AS DOUBLE), CAST(a AS DOUBLE)):double>

-- !query 35 output

653.6289553875104 871.5052738500139

-- !query 36

-SELECT corr(b, a) FROM aggtest

+SELECT corr(b, udf(a)) FROM aggtest

-- !query 36 schema

-struct<corr(CAST(b AS DOUBLE), CAST(a AS DOUBLE)):double>

+struct<corr(CAST(b AS DOUBLE), CAST(CAST(udf(cast(a as string)) AS INT) AS DOUBLE)):double>

-- !query 36 output

0.1396345165178734

-- !query 37

-SELECT count(four) AS cnt_1000 FROM onek

+SELECT count(udf(four)) AS cnt_1000 FROM onek

-- !query 37 schema

struct<cnt_1000:bigint>

-- !query 37 output

-313,7 +314,7 struct<cnt_1000:bigint>

-- !query 38

-SELECT count(DISTINCT four) AS cnt_4 FROM onek

+SELECT udf(count(DISTINCT four)) AS cnt_4 FROM onek

-- !query 38 schema

struct<cnt_4:bigint>

-- !query 38 output

-321,10 +322,10 struct<cnt_4:bigint>

-- !query 39

-select ten, count(*), sum(four) from onek

+select ten, udf(count(*)), sum(udf(four)) from onek

group by ten order by ten

-- !query 39 schema

-struct<ten:int,count(1):bigint,sum(four):bigint>

+struct<ten:int,CAST(udf(cast(count(1) as string)) AS BIGINT):bigint,sum(CAST(udf(cast(four as string)) AS INT)):bigint>

-- !query 39 output

0 100 100

1 100 200

-339,10 +340,10 struct<ten:int,count(1):bigint,sum(four):bigint>

-- !query 40

-select ten, count(four), sum(DISTINCT four) from onek

+select ten, count(udf(four)), udf(sum(DISTINCT four)) from onek

group by ten order by ten

-- !query 40 schema

-struct<ten:int,count(four):bigint,sum(DISTINCT four):bigint>

+struct<ten:int,count(CAST(udf(cast(four as string)) AS INT)):bigint,CAST(udf(cast(sum(distinct cast(four as bigint)) as string)) AS BIGINT):bigint>

-- !query 40 output

0 100 2

1 100 4

-357,11 +358,11 struct<ten:int,count(four):bigint,sum(DISTINCT four):bigint>

-- !query 41

-select ten, sum(distinct four) from onek a

+select ten, udf(sum(distinct four)) from onek a

group by ten

-having exists (select 1 from onek b where sum(distinct a.four) = b.four)

+having exists (select 1 from onek b where udf(sum(distinct a.four)) = b.four)

-- !query 41 schema

-struct<ten:int,sum(DISTINCT four):bigint>

+struct<ten:int,CAST(udf(cast(sum(distinct cast(four as bigint)) as string)) AS BIGINT):bigint>

-- !query 41 output

0 2

2 2

-374,23 +375,23 struct<ten:int,sum(DISTINCT four):bigint>

select ten, sum(distinct four) from onek a

group by ten

having exists (select 1 from onek b

- where sum(distinct a.four + b.four) = b.four)

+ where sum(distinct a.four + b.four) = udf(b.four))

-- !query 42 schema

struct<>

-- !query 42 output

org.apache.spark.sql.AnalysisException

Aggregate/Window/Generate expressions are not valid in where clause of the query.

-Expression in where clause: [(sum(DISTINCT CAST((outer() + b.`four`) AS BIGINT)) = CAST(b.`four` AS BIGINT))]

+Expression in where clause: [(sum(DISTINCT CAST((outer() + b.`four`) AS BIGINT)) = CAST(CAST(udf(cast(four as string)) AS INT) AS BIGINT))]

Invalid expressions: [sum(DISTINCT CAST((outer() + b.`four`) AS BIGINT))];

-- !query 43

select

- (select max((select i.unique2 from tenk1 i where i.unique1 = o.unique1)))

+ (select udf(max((select i.unique2 from tenk1 i where i.unique1 = o.unique1))))

from tenk1 o

-- !query 43 schema

struct<>

-- !query 43 output

org.apache.spark.sql.AnalysisException

-cannot resolve '`o.unique1`' given input columns: [i.even, i.fivethous, i.four, i.hundred, i.odd, i.string4, i.stringu1, i.stringu2, i.ten, i.tenthous, i.thousand, i.twenty, i.two, i.twothousand, i.unique1, i.unique2]; line 2 pos 63

+cannot resolve '`o.unique1`' given input columns: [i.even, i.fivethous, i.four, i.hundred, i.odd, i.string4, i.stringu1, i.stringu2, i.ten, i.tenthous, i.thousand, i.twenty, i.two, i.twothousand, i.unique1, i.unique2]; line 2 pos 67

```

</p>

</details>

## How was this patch tested?

Manually tested.

Closes #25130 from HyukjinKwon/SPARK-28359.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

It fixes a flaky test:

```

ERROR [0.164s]: test_query_execution_listener_on_collect (pyspark.sql.tests.test_dataframe.QueryExecutionListenerTests)

----------------------------------------------------------------------

Traceback (most recent call last):

File "/home/jenkins/python/pyspark/sql/tests/test_dataframe.py", line 758, in test_query_execution_listener_on_collect

"The callback from the query execution listener should be called after 'collect'")

AssertionError: The callback from the query execution listener should be called after 'collect'

```

It seems the test can fail because the listener event is sometimes delivered late, after the assertion has already checked for it.
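As a generic illustration of this kind of race (not the change made in this PR), a check that may run before an asynchronous callback has fired can be made robust by polling with a timeout. The helper name `wait_until` and the simulated callback are hypothetical.

```python
import threading
import time


def wait_until(predicate, timeout=10.0, interval=0.05):
    """Poll `predicate` until it returns True or `timeout` seconds elapse."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if predicate():
            return True
        time.sleep(interval)
    # One last check so a result that arrives right at the deadline still counts.
    return predicate()


# Simulate a listener callback that fires slightly after the action returns.
called = threading.Event()
threading.Timer(0.2, called.set).start()

# Asserting on `called.is_set()` immediately would be racy; polling is not.
callback_seen = wait_until(called.is_set, timeout=5.0)
```

The trade-off is that a genuinely missing callback now takes the full timeout to be reported.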

## How was this patch tested?

Manually.

Closes #25177 from HyukjinKwon/SPARK-28418.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

This change adds a new option that enables dynamic allocation without the need for a shuffle service. This mode works by tracking which stages generate shuffle files, and keeping executors that generate data for those shuffles alive while the jobs that use them are active.

A separate timeout is also added for shuffle data, so that executors that hold shuffle data can use a separate timeout before being removed for being idle. This allows the shuffle data to be kept around in case it is needed by some new job, or allows users to be more aggressive in timing out executors that don't have shuffle data in active use.

The code also hooks up to the context cleaner so that shuffles that are garbage collected are detected, and the respective executors are not held unnecessarily.

Testing done with added unit tests, and also with TPC-DS workloads on YARN without a shuffle service.

Closes #24817 from vanzin/SPARK-27963.

Authored-by: Marcelo Vanzin <vanzin@cloudera.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Add 4 additional `agg` methods to `KeyValueGroupedDataset`.

## How was this patch tested?

New test in DatasetSuite for typed aggregation

Closes #24993 from nooberfsh/sqlagg.

Authored-by: nooberfsh <nooberfsh@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

We are adding generic resource support to Spark, where configs carry a suffix for the amount of the resource so that other configs can be supported.

Spark on YARN already added configs to request resources via `spark.yarn.{executor/driver/am}.resource=<some amount>`, where `<some amount>` is the value and unit together. We should change those configs to have a `.amount` suffix to match the Spark configs and to allow future configs to be added more easily. YARN itself already supports tags and attributes, so if we want users to be able to pass those from Spark at some point, having a suffix makes sense. It would allow for a `spark.yarn.{executor/driver/am}.resource.{resource}.tag=` type config.

## How was this patch tested?

Tested via unit tests and manually on a YARN 3.x cluster with GPU resources configured.

Closes #24989 from tgravescs/SPARK-27959-yarn-resourceconfigs.

Authored-by: Thomas Graves <tgraves@nvidia.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

This PR enables `spark.sql.function.preferIntegralDivision` for PostgreSQL testing.

## How was this patch tested?

N/A

Closes #25170 from wangyum/SPARK-28343-2.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

In regression/clustering/ovr/als, if an output column name is empty, ignore it. If all names are empty, log a warning message and do nothing.

## How was this patch tested?

existing tests

Closes #24793 from zhengruifeng/aft_iso_check_empty_outputCol.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

PR builder failed with the following error:

```

[error] /home/jenkins/workspace/SparkPullRequestBuilder/sql/core/src/test/scala/org/apache/spark/sql/execution/PlannerSuite.scala:714: wrong number of arguments for pattern org.apache.spark.sql.execution.exchange.ShuffleExchangeExec(outputPartitioning: org.apache.spark.sql.catalyst.plans.physical.Partitioning,child: org.apache.spark.sql.execution.SparkPlan,canChangeNumPartitions: Boolean)

[error] ShuffleExchangeExec(HashPartitioning(leftPartitioningExpressions, _), _), _),

[error] ^

[error] /home/jenkins/workspace/SparkPullRequestBuilder/sql/core/src/test/scala/org/apache/spark/sql/execution/PlannerSuite.scala:716: wrong number of arguments for pattern org.apache.spark.sql.execution.exchange.ShuffleExchangeExec(outputPartitioning: org.apache.spark.sql.catalyst.plans.physical.Partitioning,child: org.apache.spark.sql.execution.SparkPlan,canChangeNumPartitions: Boolean)

[error] ShuffleExchangeExec(HashPartitioning(rightPartitioningExpressions, _), _), _)) =>

[error] ^

```

## How was this patch tested?

Existing unit test.

Closes #25171 from gaborgsomogyi/SPARK-27485.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: herman <herman@databricks.com>

## What changes were proposed in this pull request?

Adaptive execution reduces the number of post-shuffle partitions at runtime, even for shuffles caused by `repartition`. However, users likely want the number of partitions they asked for when they call `repartition`, even under adaptive execution. This PR adds an internal config to control this; by default, adaptive execution will not change the number of post-shuffle partitions for `repartition`.

## How was this patch tested?

New tests added.

Closes #25121 from carsonwang/AE_repartition.

Authored-by: Carson Wang <carson.wang@intel.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

When reordering joins, `EnsureRequirements` only checks if all the join keys are present in the partitioning expression seq. This is problematic when the join keys and partitioning expressions both contain duplicates but not the same number of duplicates for each expression, e.g. `Seq(a, a, b)` vs `Seq(a, b, b)`. This fails with an index lookup failure in the `reorder` function.

This PR fixes this by removing the equality checking logic from the `reorderJoinKeys` function, and by doing the multiset equality check in the `reorder` function while building the reordered key sequences.
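The idea of checking multiset equality while building the reordered sequences can be sketched as follows. This is a simplified Python model under stated assumptions, not the Scala code in `EnsureRequirements`: expressions are plain strings, and the function signature is hypothetical.

```python
from collections import defaultdict, deque


def reorder(left_keys, right_keys, current_order, expected_order):
    """Reorder join key pairs so that `current_order` lines up with `expected_order`.

    Returns (left, right) in the new order, or None when the two orderings
    are not equal as multisets.
    """
    if len(current_order) != len(expected_order):
        return None
    # Group key pairs by the expression they are currently ordered on,
    # keeping duplicates so each occurrence is consumed exactly once.
    pending = defaultdict(deque)
    for expr, pair in zip(current_order, zip(left_keys, right_keys)):
        pending[expr].append(pair)
    left, right = [], []
    for expr in expected_order:
        if not pending[expr]:
            # `expr` occurs more often in expected_order than in current_order.
            return None
        l, r = pending[expr].popleft()
        left.append(l)
        right.append(r)
    return left, right


current = ["a", "a", "b"]
left = ["l1", "l2", "l3"]
right = ["r1", "r2", "r3"]

# Same set of expressions, different duplicate counts: rejected.
mismatch = reorder(left, right, current, ["a", "b", "b"])
# Same multiset in a different order: reordered.
ok = reorder(left, right, current, ["b", "a", "a"])
```

A plain containment check would accept the first case, because every expression in `["a", "b", "b"]` also appears in `["a", "a", "b"]`; consuming one occurrence per match is what detects the mismatch.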

## How was this patch tested?

Added a unit test to the `PlannerSuite` and added an integration test to `JoinSuite`

Closes #25167 from hvanhovell/SPARK-27485.

Authored-by: herman <herman@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

#25010 broke the integration test suite by changing the user-facing exception, as in the following change. This PR fixes the integration test suite.

```scala

- require(

- decimalVal.precision <= precision,

- s"Decimal precision ${decimalVal.precision} exceeds max precision $precision")

+ if (decimalVal.precision > precision) {

+ throw new ArithmeticException(

+ s"Decimal precision ${decimalVal.precision} exceeds max precision $precision")

+ }

```

## How was this patch tested?

Manual test.

```

$ build/mvn install -DskipTests

$ build/mvn -Pdocker-integration-tests -pl :spark-docker-integration-tests_2.12 test

```

Closes #25165 from dongjoon-hyun/SPARK-28201.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

ISSUE : https://issues.apache.org/jira/browse/SPARK-28106

Running `ADD JAR` in SQL involves three steps:

- add jar to HiveClient's classloader

- HiveClientImpl.runHiveSQL("ADD JAR" + PATH)

- SessionStateBuilder.addJar

The second step seems to have no impact on the overall process: even if it fails, execution can continue.

The first step adds the jar path to HiveClient's ClassLoader, so the jar can then be used in HiveClientImpl.

The third step adds the jar path to SparkContext. For a local file path, it calls the RpcServer's FileServer to add the file to the Env, so passing a wrong path causes an error. For an HDFS or VIEWFS path, however, it does not check the path and just adds it to the jar path map.

Then, when the next TaskSetManager sends out a task, this path is carried in the TaskDescription. The executor calls updateDependencies, which checks all jar and file paths in the TaskDescription, and this is where the error happens.

## How was this patch tested?

Existing unit tests

Environment test

Closes #24909 from AngersZhuuuu/SPARK-28106.

Lead-authored-by: Angers <angers.zhu@gamil.com>

Co-authored-by: 朱夷 <zhuyi01@corp.netease.com>

Signed-off-by: jerryshao <jerryshao@tencent.com>

## What changes were proposed in this pull request?

A `Filter` predicate using `PythonUDF` currently can't be pushed down into a join condition, although it should be possible. For `PythonUDF`s that can't be evaluated in a join condition, `PullOutPythonUDFInJoinCondition` will pull them out later.

For example:

```scala

val pythonTestUDF = TestPythonUDF(name = "udf")

val left = Seq((1, 2), (2, 3)).toDF("a", "b")

val right = Seq((1, 2), (3, 4)).toDF("c", "d")

val df = left.crossJoin(right).where(pythonTestUDF($"a") === pythonTestUDF($"c"))

```

Query plan before the PR:

```

== Physical Plan ==

*(3) Project [a#2121, b#2122, c#2132, d#2133]

+- *(3) Filter (pythonUDF0#2142 = pythonUDF1#2143)

+- BatchEvalPython [udf(a#2121), udf(c#2132)], [pythonUDF0#2142, pythonUDF1#2143]

+- BroadcastNestedLoopJoin BuildRight, Cross

:- *(1) Project [_1#2116 AS a#2121, _2#2117 AS b#2122]

: +- LocalTableScan [_1#2116, _2#2117]

+- BroadcastExchange IdentityBroadcastMode

+- *(2) Project [_1#2127 AS c#2132, _2#2128 AS d#2133]

+- LocalTableScan [_1#2127, _2#2128]

```

Query plan after the PR:

```

== Physical Plan ==

*(3) Project [a#2121, b#2122, c#2132, d#2133]

+- *(3) BroadcastHashJoin [pythonUDF0#2142], [pythonUDF0#2143], Cross, BuildRight

:- BatchEvalPython [udf(a#2121)], [pythonUDF0#2142]

: +- *(1) Project [_1#2116 AS a#2121, _2#2117 AS b#2122]

: +- LocalTableScan [_1#2116, _2#2117]

+- BroadcastExchange HashedRelationBroadcastMode(List(input[2, string, true]))

+- BatchEvalPython [udf(c#2132)], [pythonUDF0#2143]

+- *(2) Project [_1#2127 AS c#2132, _2#2128 AS d#2133]

+- LocalTableScan [_1#2127, _2#2128]

```

After this PR, the join can use `BroadcastHashJoin`, instead of `BroadcastNestedLoopJoin`.

## How was this patch tested?

Added tests.

Closes #25106 from viirya/pythonudf-join-condition.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Existing random generators in tests produce wide ranges of values that can be out of supported ranges for:

- `DateType`, the valid range is `[0001-01-01, 9999-12-31]`

- `TimestampType` supports values in `[0001-01-01T00:00:00.000000Z, 9999-12-31T23:59:59.999999Z]`

- `CalendarIntervalType` should define intervals for the ranges above.

Dates and timestamps produced by random literal generators are usually out of the valid ranges for those types, so tests mostly check invalid values or values caused by arithmetic overflow.

In the PR, I propose to restrict tested pseudo-random values to the valid ranges of `DateType`, `TimestampType` and `CalendarIntervalType`. This allows tests to check valid values and avoids wasting time on a priori invalid inputs.
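The idea of bounding a generator to the valid range can be illustrated with a small Python sketch. It is an analogy, not the Scala generator changed by this PR: Python's `date.min` and `date.max` happen to match the `[0001-01-01, 9999-12-31]` range quoted above, and the function name `random_valid_date` is hypothetical.

```python
import random
from datetime import date

# Day ordinals of the smallest and largest supported dates.
MIN_DAY = date.min.toordinal()  # 0001-01-01
MAX_DAY = date.max.toordinal()  # 9999-12-31


def random_valid_date(rng):
    """Draw a date uniformly from the valid range, never outside it."""
    return date.fromordinal(rng.randint(MIN_DAY, MAX_DAY))


rng = random.Random(42)  # fixed seed so a failing case can be reproduced
samples = [random_valid_date(rng) for _ in range(1000)]
```

Drawing the day number inside the bounds, rather than drawing an arbitrary integer and discarding invalid results, means every generated input exercises the code under test.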

## How was this patch tested?

The changes were checked by `DateExpressionsSuite` and a modified `DateTimeUtils.dateAddMonths`:

```Scala

def dateAddMonths(days: SQLDate, months: Int): SQLDate = {

localDateToDays(LocalDate.ofEpochDay(days).plusMonths(months))

}

```

Closes #25166 from MaxGekk/datetime-lit-random-gen.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR aims to correct mappings in `MsSqlServerDialect`. `ShortType` is mapped to `SMALLINT` and `FloatType` is mapped to `REAL`, per the [JDBC mapping](https://docs.microsoft.com/en-us/sql/connect/jdbc/using-basic-data-types?view=sql-server-2017).

`ShortType` and `FloatType` are not correctly mapped to the right JDBC types when using the JDBC connector. This results in tables and Spark DataFrames being created with unintended types. The issue was observed when validating against SQL Server.

Refer to the [JDBC mapping](https://docs.microsoft.com/en-us/sql/connect/jdbc/using-basic-data-types?view=sql-server-2017) for guidance on mappings between SQL Server, JDBC and Java. Note that the Java `Short` type should be mapped to JDBC `SMALLINT` and Java `Float` should be mapped to JDBC `REAL`.

Some example issues that can happen because of wrong mappings:

- Writing from a DataFrame with a `ShortType` column results in a SQL table with column type INTEGER as opposed to SMALLINT, and thus a larger table than expected.

- Reading results in a DataFrame with type INTEGER as opposed to `ShortType`.

- `ShortType` has a problem in both the write and read paths.

- `FloatType` only has an issue in the read path. In the write path, the Spark data type `FloatType` is correctly mapped to the JDBC equivalent data type `REAL`. But in the read path, when JDBC data types need to be converted to Catalyst data types (`getCatalystType`), `REAL` incorrectly gets mapped to `DoubleType` rather than `FloatType`.

Refer to #28151, which contained this fix as one part of a larger PR. Following the discussion on PR #28151, it was decided to file separate PRs for each of the fixes.

## How was this patch tested?

UnitTest added in JDBCSuite.scala and these were tested.

Integration test updated and passed in MsSqlServerDialect.scala

E2E test done with SQLServer

Closes #25146 from shivsood/float_short_type_fix.

Authored-by: shivsood <shivsood@microsoft.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

`System.currentTimeMillis` is read twice in a loop in `RateStreamContinuousPartitionReader`. If the test machine is slow enough and spends a long time between the `while` condition check and the `Thread.sleep` call, the timeout value becomes negative and `Thread.sleep` throws `IllegalArgumentException`.

In this PR I've fixed this issue.
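The race can be avoided by reading the clock once per iteration and using that same value for both the check and the sleep duration. The following Python sketch shows the pattern under that assumption; it is not the Scala code in `RateStreamContinuousPartitionReader`, and `wait_until_ms` with its injectable `clock` and `sleep` parameters is hypothetical.

```python
import time


def current_time_ms():
    return int(time.time() * 1000)


def wait_until_ms(next_read_time_ms, clock=current_time_ms, sleep=time.sleep):
    """Block until `next_read_time_ms`, never sleeping for a negative duration."""
    # Read the clock once and reuse the value for the check and the sleep,
    # so a slow machine cannot turn a positive check into a negative timeout.
    remaining_ms = next_read_time_ms - clock()
    while remaining_ms > 0:
        sleep(remaining_ms / 1000.0)
        remaining_ms = next_read_time_ms - clock()


# A deadline that is already in the past returns immediately.
wait_until_ms(current_time_ms() - 10)
```

Passing the clock and sleep functions as parameters is only there to make the behavior easy to test without real waiting.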

## How was this patch tested?

Existing unit tests.

Closes #25162 from gaborgsomogyi/SPARK-28404.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Idempotence of the `NormalizeFloatingNumbers` rule was broken due to the implementation of `ExtractEquiJoinKeys`. There is no reason not to remove `EqualNullSafe` join keys from an equi-join's `otherPredicates`.

## How was this patch tested?

A new UT.

Closes #25126 from yeshengm/spark-28306.

Authored-by: Yesheng Ma <kimi.ysma@gmail.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

When fetching delegation tokens for a proxy user, don't try to log in, since it will fail.

Closes #25141 from vanzin/SPARK-28150.2.

Authored-by: Marcelo Vanzin <vanzin@cloudera.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

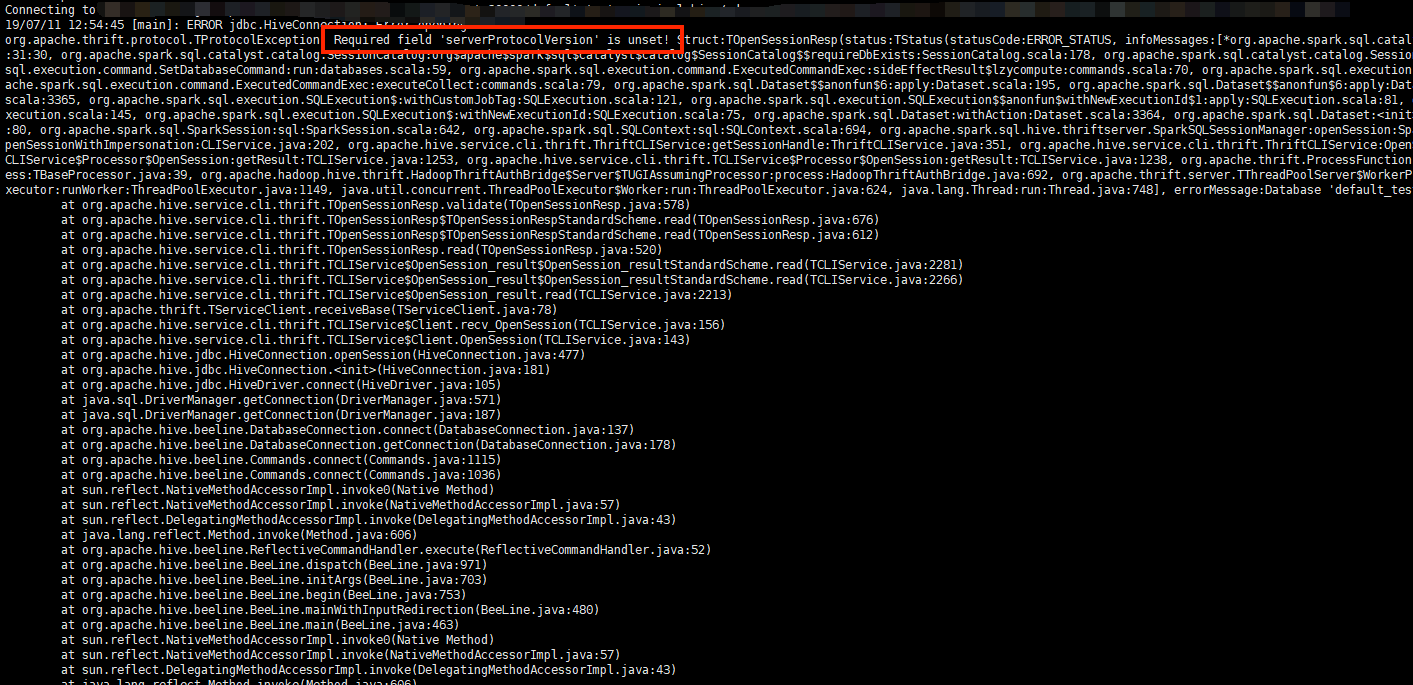

## What changes were proposed in this pull request?

The Thrift server is backward compatible. For example, if a PROTOCOL_VERSION_V7 client connects to a PROTOCOL_VERSION_V8 server, then on OpenSession the server sets its response's protocol version to the minimum of the client and server versions:

`TProtocolVersion protocol = getMinVersion(CLIService.SERVER_VERSION,`

` req.getClient_protocol());`

The server then sets that version on the OpenSession response.

But if OpenSession fails, the step that resets the response's protocol_version is not executed.

The response then carries the server's original protocol version.

Finally, the client gets an error.

Because a wrong database was specified, OpenSession failed and the correct protocol version was not reset.
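One way to avoid this class of problem is to set the negotiated version on the response before attempting anything that can fail, so that error responses carry it too. The Python sketch below models only that ordering. It is not the Hive or Spark Thrift server code: `open_session`, `OpenSessionResponse`, and the use of plain integers for protocol versions are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

SERVER_VERSION = 8  # stands in for PROTOCOL_VERSION_V8


@dataclass
class OpenSessionResponse:
    protocol_version: int
    error: Optional[str] = None


def open_session(client_version, database, known_databases):
    # Negotiate first: every response, including a failure, then carries
    # the minimum of the client and server versions.
    negotiated = min(SERVER_VERSION, client_version)
    response = OpenSessionResponse(protocol_version=negotiated)
    if database not in known_databases:
        response.error = "Database '%s' not found" % database
    return response


# A V7 client asking for a missing database still sees version 7.
failed = open_session(7, "missing_db", {"default"})
```

With this ordering, the client can decode the error message instead of failing on an unexpected protocol version.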

## How was this patch tested?

I don't know how to write a unit test for this, so I built a jar with this PR and retried the failing case above; it now returns a reasonable "DB not found" error.

Closes #25083 from AngersZhuuuu/SPARK-28311.

Authored-by: 朱夷 <zhuyi01@corp.netease.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

In the PR, I propose to use the `plusMonths()` method of `LocalDate` to add months to a date. This method adds the specified amount to the months field of `LocalDate` in three steps:

1. Add the input months to the month-of-year field

2. Check if the resulting date would be invalid

3. Adjust the day-of-month to the last valid day if necessary

The difference between the current behavior and the proposed one is in handling the last day of the month in the original date. For example, adding 1 month to `2019-02-28` will produce `2019-03-28`, compared to the current implementation where the result is `2019-03-31`.

The proposed behavior is implemented in MySQL and PostgreSQL.
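The three steps described above can be mirrored in a few lines of Python. This is a sketch of the semantics only, not a wrapper around `java.time.LocalDate`; the function name `add_months` is hypothetical.

```python
import calendar
from datetime import date


def add_months(d, months):
    """Add `months` to `d`, clamping the day to the last valid day of the month."""
    # Step 1: add the months to the month-of-year field (zero-based month count).
    total = d.year * 12 + (d.month - 1) + months
    year, month_index = divmod(total, 12)
    month = month_index + 1
    # Steps 2 and 3: if the day does not exist in the target month,
    # fall back to that month's last valid day.
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


kept_day = add_months(date(2019, 2, 28), 1)     # day-of-month is preserved
clamped_day = add_months(date(2019, 1, 31), 1)  # 31 does not exist in February
```

Note that the last day of a month is not treated specially: only a day that would be invalid in the target month is adjusted.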

## How was this patch tested?

By existing test suites `DateExpressionsSuite`, `DateFunctionsSuite` and `DateTimeUtilsSuite`.

Closes #25153 from MaxGekk/add-months.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR adds some traits so that we can deduplicate initialization stuff for each type of test case. For instance, see [SPARK-28343](https://issues.apache.org/jira/browse/SPARK-28343).

It's a little bit overkill, but I think it will make adding test cases easier and cause less confusion.

This PR adds both:

```

private trait PgSQLTest

private trait UDFTest

```

These indicate and share the logic related to each combination of test types.

## How was this patch tested?

Manually tested.

Closes #25155 from HyukjinKwon/SPARK-28392.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Add support for the inverse hyperbolic functions `asinh`, `acosh` and `atanh` in Spark SQL.

Feature parity: https://www.postgresql.org/docs/12/functions-math.html#FUNCTIONS-MATH-HYP-TABLE

The following are the differences from PostgreSQL.

```

spark-sql> SELECT acosh(0); (PostgreSQL returns `ERROR: input is out of range`)

NaN

spark-sql> SELECT atanh(2); (PostgreSQL returns `ERROR: input is out of range`)

NaN

```

Teradata behaves similarly to PostgreSQL for out-of-range float inputs: it outputs **Invalid Input: numeric value within range only.**

The newly added asinh/acosh/atanh handle special inputs (NaN, +-Infinity) in the same way as the existing cos/sin/tan/acos/asin/atan in Spark. For functions whose input range is not (-∞, ∞):

- Out-of-range float values: Spark returns NaN, and PostgreSQL reports that the input is out of range.

- NaN: Spark returns NaN, and PostgreSQL also returns NaN.

- Infinity: Spark returns NaN, and PostgreSQL reports that the input is out of range.
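The two conventions (raise an error versus return NaN) can be contrasted in Python, whose `math` module raises on out-of-domain input much like PostgreSQL does. The wrapper below is a hypothetical illustration of the NaN-returning convention only; it is not how Spark implements these functions, and results at boundary values such as `atanh(1)` may differ from Spark's.

```python
import math


def nan_on_domain_error(fn):
    """Wrap `fn` so out-of-domain input yields NaN instead of raising."""
    def wrapped(x):
        try:
            return fn(x)
        except ValueError:  # math domain error
            return float("nan")
    return wrapped


acosh = nan_on_domain_error(math.acosh)
atanh = nan_on_domain_error(math.atanh)

out_of_range_acosh = acosh(0)  # the domain of acosh is [1, +inf)
out_of_range_atanh = atanh(2)  # the domain of atanh is (-1, 1)
```

Returning NaN keeps a query running over mixed data, at the cost of silently propagating invalid values; raising surfaces the bad input immediately.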

## How was this patch tested?

```

spark.sql("select asinh(xx)")

spark.sql("select acosh(xx)")

spark.sql("select atanh(xx)")

./build/sbt "testOnly org.apache.spark.sql.MathFunctionsSuite"

./build/sbt "testOnly org.apache.spark.sql.catalyst.expressions.MathExpressionsSuite"

```

Closes #25041 from Tonix517/SPARK-28133.

Authored-by: Tony Zhang <tony.zhang@uber.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This upgrades to a newer version of Pyrolite. Most updates [1] in the newer version are for dotnet. For Java, it includes a bug fix to Unpickler regarding cleaning up the Unpickler memo, and support for protocol 5.

After upgrading, we can remove the fix at SPARK-27629 for the bug in Unpickler.

[1] https://github.com/irmen/Pyrolite/compare/pyrolite-4.23...master

## How was this patch tested?

Manually ran the existing tests locally on Python 3.6.

Closes #25143 from viirya/upgrade-pyrolite.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This patch proposes moving all Trigger implementations to `Triggers.scala`, to avoid exposing these implementations to end users and let end users deal only with the `Trigger.xxx` static methods. This fits the intention of the deprecation of `ProcessingTime`, and we agreed to move the others without deprecation, as this patch will be shipped in a major version (Spark 3.0.0).

## How was this patch tested?

UTs modified to work with newly introduced class.

Closes #24996 from HeartSaVioR/SPARK-28199.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

This PR enables `spark.sql.crossJoin.enabled` and `spark.sql.parser.ansi.enabled` for PostgreSQL tests.

## How was this patch tested?

manual tests:

Run `test.sql` in [pgSQL](https://github.com/apache/spark/tree/master/sql/core/src/test/resources/sql-tests/inputs/pgSQL) directory and in [inputs](https://github.com/apache/spark/tree/master/sql/core/src/test/resources/sql-tests/inputs) directory:

```sql

cat <<EOF > test.sql

create or replace temporary view t1 as

select * from (values(1), (2)) as v (val);

create or replace temporary view t2 as

select * from (values(2), (1)) as v (val);

select t1.*, t2.* from t1 join t2;

EOF

```

Closes #25109 from wangyum/SPARK-28343.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR aims to upgrade Mockito from **2.23.4** to **2.28.2** in order to bring in the latest bug fixes and to be up-to-date for JDK9+ support before Apache Spark 3.0.0. Mockito 3.0 was released 4 days ago, but we had better wait and see how stable it is.

**RELEASE NOTE**

https://github.com/mockito/mockito/blob/release/2.x/doc/release-notes/official.md

**NOTABLE FIXES**

- Configure the MethodVisitor for Java 11+ compatibility (2.27.5)

- When mock is called multiple times, and verify fails, the error message reports only the first invocation (2.27.4)

- Memory leak in mockito-inline calling method on mock with at least a mock as parameter (2.25.0)

- Cross-references and a single spy cause memory leak (2.25.0)

- Nested spies cause memory leaks (2.25.0)

- [Java 9 support] ClassCastExceptions with JDK9 javac (2.24.9, 2.24.3)

- Return null instead of causing a CCE (2.24.9, 2.24.3)

- Issue with mocking type in "java.util.*", Java 12 (2.24.2)

Mainly, Maven (Hadoop-2.7/Hadoop-3.2) and SBT(Hadoop-2.7) Jenkins test passed.

## How was this patch tested?

Pass the Jenkins with the existing UTs.

Closes #25139 from dongjoon-hyun/SPARK-28370.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

Parquet may call the filter with a null value to check whether nulls are accepted. While it seems Spark avoids that path in Parquet with 1.10, in 1.11 that causes Spark unit tests to fail.

Tested with Parquet 1.11 (and new unit test).

Closes #25140 from vanzin/SPARK-28371.

Authored-by: Marcelo Vanzin <vanzin@cloudera.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This patch fixes the flaky test "query without test harness" in ContinuousSuite by giving the query more time to commit the epoch that writes the output rows.

The following timeline shows the issue (obtained by injecting some debug logs):

```

reader creation time 1562225320210

epoch 1 launched 1562225320593 (+380ms from reader creation time)

epoch 13 launched 1562225321702 (+1.5s from reader creation time)

partition reader creation time 1562225321715 (+1.5s from reader creation time)

next read time for first next call 1562225321210 (+1s from reader creation time)

first next called in partition reader 1562225321746 (immediately after creation of partition reader)

wait finished in next called in partition reader 1562225321746 (no wait)

second next called in partition reader 1562225321747 (immediately after first next())

epoch 0 commit started 1562225321861

writing rows (0, 1) (belong to epoch 13) 1562225321866 (+100ms after first next())

wait start in waitForRateSourceTriggers(2) 1562225322059

next read time for second next call 1562225322210 (+1s from previous "next read time")

wait finished in next called in partition reader 1562225322211 (+450ms wait)

writing rows (2, 3) (belong to epoch 13) 1562225322211 (immediately after next())

epoch 14 launched 1562225322246

desired wait time in waitForRateSourceTriggers(2) 1562225322510 (+2.3s from reader creation time)

epoch 12 committed 1562225323034

```

These rows were written within the desired wait time, but epoch 13 couldn't be committed within it. Interestingly, epoch 12 was lucky enough to be committed in the gap between the end of the wait in `waitForRateSourceTriggers` and `query.stop()`. But even if the rows had been written in epoch 12, that would have been pure luck; the epoch should be committed within the desired wait time.

This patch modifies the Rate continuous stream to track the highest committed value, so that the test can wait until the desired value is reported to the stream as committed.

This patch also modifies the Rate continuous stream to track the timestamp at which the stream gets its first committed offset, and lets `waitForRateSourceTriggers` use that timestamp. This still relies on waiting for a specific period, but it is a safer approach than the current one, based on the observation above. With this change, the patch saves a couple of seconds of test time.

## How was this patch tested?

10 sequential test runs succeeded locally.

Closes #25048 from HeartSaVioR/SPARK-28247.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

The `_prepare_for_python_RDD` method currently broadcasts a pickled command if its length is greater than the hardcoded value `1 << 20` (1M). This change sets this value as a Spark conf instead.
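The decision being made configurable can be sketched as a size check on the pickled command. This is a simplified standalone model, not the PySpark code: `should_broadcast` and `DEFAULT_THRESHOLD` are hypothetical names, and the sketch does not name the Spark conf key.

```python
import pickle

DEFAULT_THRESHOLD = 1 << 20  # the previously hardcoded limit, in bytes


def should_broadcast(command, threshold=DEFAULT_THRESHOLD):
    """Return True when the pickled command is larger than `threshold` bytes."""
    pickled = pickle.dumps(command)
    return len(pickled) > threshold


small_command = "lambda x: x + 1"
large_command = b"x" * (2 << 20)  # roughly 2 MiB once pickled

small_is_broadcast = should_broadcast(small_command)
large_is_broadcast = should_broadcast(large_command)
# A lower threshold, as a user-supplied setting might provide, changes the outcome.
small_with_low_threshold = should_broadcast(small_command, threshold=8)
```

Exposing the threshold lets deployments with many large closures trade per-task serialization cost against broadcast overhead.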

## How was this patch tested?

Unit tests, manual tests.

Closes #25123 from jessecai/SPARK-28355.

Authored-by: Jesse Cai <jesse.cai@databricks.com>

Signed-off-by: gatorsmile <gatorsmile@gmail.com>

A code gen test in WholeStageCodeGenSuite was flaky because it used the codegen metrics class to test if the generated code for equivalent plans was identical under a particular flag. This patch switches the test to compare the generated code directly.

N/A

Closes #25131 from gatorsmile/WholeStageCodegenSuite.

Authored-by: gatorsmile <gatorsmile@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Fix typo in SPARK-28159:

`transfromWithMean` -> `transformWithMean`

## How was this patch tested?

existing test

Closes #25129 from zhengruifeng/to_ml_vec_cleanup.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

This PR adds support for handling a `WITH` clause nested within another `WITH` clause. Before this PR, these queries returned `1`; after this PR, they return `2`, as PostgreSQL does:

```sql
WITH
  t AS (SELECT 1),
  t2 AS (
    WITH t AS (SELECT 2)
    SELECT * FROM t
  )
SELECT * FROM t2
```

```sql
WITH t AS (SELECT 1)
SELECT (
  WITH t AS (SELECT 2)
  SELECT * FROM t
)
```

As this is an incompatible change, the PR introduces the `spark.sql.legacy.cte.substitution.enabled` flag as an option to restore old behaviour.
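The difference between the two behaviours can be pictured with a toy name-resolution model. This is a sketch under assumptions, not Spark's analyzer: `resolve_cte` and its `legacy` parameter are hypothetical, and the legacy mode is modelled simply as "the outer definition wins", which matches the results stated above (`1` before, `2` after).

```python
def resolve_cte(name, scopes, legacy=False):
    """Look up a CTE name in a list of scopes ordered from outermost to innermost.

    Each scope is a dict mapping a CTE name to its definition.
    - legacy=False: the innermost definition shadows outer ones.
    - legacy=True:  the outermost definition is used (toy model of the old result).
    """
    ordered = scopes if legacy else list(reversed(scopes))
    for scope in ordered:
        if name in scope:
            return scope[name]
    raise KeyError(name)


# Scopes for the queries above: the outer WITH defines t, and so does the inner one.
outer = {"t": "SELECT 1"}
inner = {"t": "SELECT 2"}
```

In this model, `resolve_cte("t", [outer, inner])` picks the inner definition, while passing `legacy=True` picks the outer one.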

## How was this patch tested?

Added new UTs.

Closes #25029 from peter-toth/SPARK-28228.

Authored-by: Peter Toth <peter.toth@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

The `dev/merge_spark_pr.py` script always fails for some users because their JIRA `name` and `key` differ.

- https://issues.apache.org/jira/rest/api/2/user?username=yumwang

JIRA Client expects `name`, but we are using `key`. This PR fixes it.

```python
# This is JIRA client code `/usr/local/lib/python2.7/site-packages/jira/client.py`
def assign_issue(self, issue, assignee):
    """Assign an issue to a user. None will set it to unassigned. -1 will set it to Automatic.

    :param issue: the issue ID or key to assign
    :param assignee: the user to assign the issue to

    :type issue: int or str
    :type assignee: str

    :rtype: bool
    """
    url = self._options['server'] + \
        '/rest/api/latest/issue/' + str(issue) + '/assignee'
    payload = {'name': assignee}
    r = self._session.put(
        url, data=json.dumps(payload))
    raise_on_error(r)
    return True
```
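Since the client sends `{'name': assignee}`, the value passed in should come from the user's `name` field rather than `key`. The sketch below illustrates this; `assignee_payload` is a hypothetical helper, and the sample record is an assumption inferred from the manual test in this description, not real API output.

```python
import json


def assignee_payload(user: dict) -> str:
    """Build the request body for the assignee endpoint from a JIRA user record.

    The client code above puts the assignee under 'name', so `user["name"]`
    is used here, not `user["key"]`.
    """
    return json.dumps({"name": user["name"]})


# Assumed example record: a user whose `name` and `key` differ.
user = {"name": "yumwang", "key": "q79969786"}
```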

## How was this patch tested?

Manual with the committer ID/password.

```python
import jira.client

asf_jira = jira.client.JIRA({'server': 'https://issues.apache.org/jira'}, basic_auth=('yourid', 'passwd'))
asf_jira.assign_issue("SPARK-28354", "q79969786")  # This will raise exception.
asf_jira.assign_issue("SPARK-28354", "yumwang")  # This works.
```

Closes #25120 from dongjoon-hyun/SPARK-28354.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

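`SizeBasedRollingPolicy.shouldRollover` returns false when the size is equal to `rolloverSizeBytes`, so a file can reach exactly `rolloverSizeBytes` before rolling over, and the test's strict less-than assertion fails in that case.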

```scala
/** Should rollover if the next set of bytes is going to exceed the size limit */
def shouldRollover(bytesToBeWritten: Long): Boolean = {
  logDebug(s"$bytesToBeWritten + $bytesWrittenSinceRollover > $rolloverSizeBytes")
  bytesToBeWritten + bytesWrittenSinceRollover > rolloverSizeBytes
}
```

- https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/107553/testReport/org.apache.spark.util/FileAppenderSuite/rolling_file_appender___size_based_rolling__compressed_/

```
org.scalatest.exceptions.TestFailedException: 1000 was not less than 1000
```
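The boundary case behind this failure can be reproduced with a small Python transcription of the predicate. This is a sketch for illustration only: the function takes the counters as explicit arguments (an assumption made to keep it self-contained), whereas the Scala method reads them from the policy's state.

```python
def should_rollover(bytes_to_be_written: int,
                    bytes_written_since_rollover: int,
                    rollover_size_bytes: int) -> bool:
    """Roll over only if the next write would strictly exceed the size limit."""
    return bytes_to_be_written + bytes_written_since_rollover > rollover_size_bytes
```

With a limit of 1000, writing 100 bytes after 900 does not trigger a rollover, so a file of exactly 1000 bytes is possible and a size check needs to allow equality.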

## How was this patch tested?

Pass the Jenkins with the updated test.

Closes #25125 from dongjoon-hyun/SPARK-28357.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Some configs were hardcoded; this PR replaces them with config entries.

## How was this patch tested?

Existing UT

Closes #25059 from WangGuangxin/ConfigEntry.

Authored-by: wangguangxin.cn <wangguangxin.cn@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

A 0-argument Java UDF calls the function eagerly, even before it is wrapped as an expression.

As a result, the function always returns the same value, and it is called on the driver side.

This seems like a mistake.
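The effect of calling the function too early can be shown with a language-neutral sketch in Python. All names here (`make_counter_udf`, `wrap_eager`, `wrap_lazy`) are hypothetical and only model the described behaviour; they are not Spark APIs.

```python
import itertools


def make_counter_udf():
    """A 0-argument function that returns a new value on every call."""
    counter = itertools.count(1)
    return lambda: next(counter)


def wrap_eager(func):
    """Models the bug: the function is called once, at wrap time."""
    value = func()
    return lambda: value  # every later evaluation reuses that single result


def wrap_lazy(func):
    """Models the fix: the function is called each time the wrapper is evaluated."""
    return lambda: func()
```

Evaluating the eager wrapper three times yields the same value three times, while the lazy wrapper yields a fresh value on each evaluation.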

## How was this patch tested?

A unit test was added.

Closes #25108 from HyukjinKwon/SPARK-28321.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>