### What changes were proposed in this pull request?

`HiveResult` performs some conversions for commands to be compatible with Hive output, e.g.:

```

// If it is a describe command for a Hive table, we want to have the output format be similar with Hive.

case ExecutedCommandExec(_: DescribeCommandBase) =>

...

// SHOW TABLES in Hive only output table names, while ours output database, table name, isTemp.

case command @ ExecutedCommandExec(s: ShowTablesCommand) if !s.isExtended =>

```

This conversion is needed for DataSource V2 commands as well, so this PR adds it for the v2 commands `SHOW TABLES` and `DESCRIBE TABLE`.

### Why are the changes needed?

This is a bug where conversion is not applied to v2 commands.

### Does this PR introduce any user-facing change?

Yes, the outputs of the v2 commands `SHOW TABLES` and `DESCRIBE TABLE` are now compatible with Hive output.

For example, with a table created as:

```

CREATE TABLE testcat.ns.tbl (id bigint COMMENT 'col1') USING foo

```

The output of `SHOW TABLES` has changed from

```

ns table

```

to

```

table

```

And the output of `DESCRIBE TABLE` has changed from

```

id bigint col1

# Partitioning

Not partitioned

```

to

```

id bigint col1

# Partitioning

Not partitioned

```

### How was this patch tested?

Added unit tests.

Closes #28004 from imback82/hive_result.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In Spark CLI, we create a Hive `CliSessionState`, and it does not load `hive-site.xml`. So the configurations in `hive-site.xml` do not take effect as they do in other Spark-Hive integration apps.

Also, the warehouse directory is not picked correctly. If the `default` database does not exist, the `CliSessionState` creates one the first time it talks to the metastore. The `Location` of the default DB will be neither the value of `spark.sql.warehouse.dir` nor the user-specified value of `hive.metastore.warehouse.dir`, but the default value of `hive.metastore.warehouse.dir`, which is always `/user/hive/warehouse`.

This PR fixes the `CliSuite` failure with the hive-1.2 profile in https://github.com/apache/spark/pull/27933.

In https://github.com/apache/spark/pull/27933, we fixed the issue in JIRA by deciding the warehouse dir using all properties from the Spark conf and the Hadoop conf, but properties from `--hiveconf` were not included; they are applied to the `CliSessionState` instance after it is initialized. When the key of this command-line option is `hive.metastore.warehouse.dir`, the actual warehouse dir is overridden. Because the logic in Hive for creating the non-existent default database changed, that test passed with `Hive 2.3.6` but failed with `1.2`. So in this PR, Hadoop/Hive configurations are ordered by:

`spark.hive.xxx > spark.hadoop.xxx > --hiveconf xxx > hive-site.xml` through `SharedState.loadHiveConfFile` before the session state starts.

### Why are the changes needed?

Bugfix for Spark SQL CLI to pick the right confs.

### Does this PR introduce any user-facing change?

Yes.

1. A non-existent default database will be created in the location specified by the user via `spark.sql.warehouse.dir` or `hive.metastore.warehouse.dir`, or in the default value of `spark.sql.warehouse.dir` if neither is specified.

2. Configurations from `hive-site.xml` will not override command-line options or properties defined with the `spark.hadoop.` or `spark.hive.` prefix in the Spark conf.

### How was this patch tested?

Added a CLI unit test.

Closes #27969 from yaooqinn/SPARK-31170-2.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

When the `toPandas` API is called on a DataFrame with duplicate column names, produced by operators such as join, we see an error like:

```

ValueError: The truth value of a Series is ambiguous. Use a.empty, a.bool(), a.item(), a.any() or a.all().

```

This patch fixes the error in `toPandas` API.
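
The underlying issue is that a name-based lookup stops being unambiguous once two columns share a name. The following is a minimal sketch of that idea in plain Python, not the actual patch: the helper `columns_by_position` and the sample data are hypothetical, and no Spark or pandas API is used.

```python
from collections import Counter

def columns_by_position(names, rows):
    """Return one (name, values) pair per column, addressed by index."""
    return [(name, [row[i] for row in rows]) for i, name in enumerate(names)]

# Hypothetical result of a join that keeps both `id` columns.
names = ["id", "value", "id"]
rows = [(1, "a", 1), (2, "b", 2)]

# A name-keyed mapping silently collapses the two `id` columns into one.
by_name = dict(zip(names, zip(*rows)))

# Positional access keeps every column, duplicates included.
by_position = columns_by_position(names, rows)
duplicated = [name for name, count in Counter(names).items() if count > 1]
```

Addressing columns by position is one way to avoid the ambiguity; whether the patch does exactly this is not stated here.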

### Why are the changes needed?

To make `toPandas` work on dataframe with duplicate column names.

### Does this PR introduce any user-facing change?

Yes. Previously, calling the `toPandas` API on a DataFrame with duplicate column names failed. After this patch, it produces the correct result.

### How was this patch tested?

Unit test.

Closes #28025 from viirya/SPARK-31186.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR is related to https://github.com/apache/spark/pull/27481.

If test case A uses `--IMPORT` to import test case B, and B contains bracketed comments, the golden files cannot display the bracketed comments correctly.

The content of `nested-comments.sql` is shown below:

```

-- This test case just used to test imported bracketed comments.

-- the first case of bracketed comment

--QUERY-DELIMITER-START

/* This is the first example of bracketed comment.

SELECT 'ommented out content' AS first;

*/

SELECT 'selected content' AS first;

--QUERY-DELIMITER-END

```

The test case `comments.sql` imports `nested-comments.sql` as follows:

`--IMPORT nested-comments.sql`

Before this PR, the output will be:

```

-- !query

/* This is the first example of bracketed comment.

SELECT 'ommented out content' AS first

-- !query schema

struct<>

-- !query output

org.apache.spark.sql.catalyst.parser.ParseException

mismatched input '/' expecting {'(', 'ADD', 'ALTER', 'ANALYZE', 'CACHE', 'CLEAR', 'COMMENT', 'COMMIT', 'CREATE', 'DELETE', 'DESC', 'DESCRIBE', 'DFS', 'DROP', 'EXPLAIN', 'EXPORT', 'FROM', 'GRANT', 'IMPORT', 'INSERT', 'LIST', 'LOAD', 'LOCK', 'MAP', 'MERGE', 'MSCK', 'REDUCE', 'REFRESH', 'REPLACE', 'RESET', 'REVOKE', 'ROLLBACK', 'SELECT', 'SET', 'SHOW', 'START', 'TABLE', 'TRUNCATE', 'UNCACHE', 'UNLOCK', 'UPDATE', 'USE', 'VALUES', 'WITH'}(line 1, pos 0)

== SQL ==

/* This is the first example of bracketed comment.

^^^

SELECT 'ommented out content' AS first

-- !query

*/

SELECT 'selected content' AS first

-- !query schema

struct<>

-- !query output

org.apache.spark.sql.catalyst.parser.ParseException

extraneous input '*/' expecting {'(', 'ADD', 'ALTER', 'ANALYZE', 'CACHE', 'CLEAR', 'COMMENT', 'COMMIT', 'CREATE', 'DELETE', 'DESC', 'DESCRIBE', 'DFS', 'DROP', 'EXPLAIN', 'EXPORT', 'FROM', 'GRANT', 'IMPORT', 'INSERT', 'LIST', 'LOAD', 'LOCK', 'MAP', 'MERGE', 'MSCK', 'REDUCE', 'REFRESH', 'REPLACE', 'RESET', 'REVOKE', 'ROLLBACK', 'SELECT', 'SET', 'SHOW', 'START', 'TABLE', 'TRUNCATE', 'UNCACHE', 'UNLOCK', 'UPDATE', 'USE', 'VALUES', 'WITH'}(line 1, pos 0)

== SQL ==

*/

^^^

SELECT 'selected content' AS first

```

After this PR, the output will be:

```

-- !query

/* This is the first example of bracketed comment.

SELECT 'ommented out content' AS first;

*/

SELECT 'selected content' AS first

-- !query schema

struct<first:string>

-- !query output

selected content

```
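
The difference between the two outputs above comes down to how the imported lines are split into queries. Here is a simplified sketch of that splitting in plain Python, assuming that everything between `--QUERY-DELIMITER-START` and `--QUERY-DELIMITER-END` is one query and that other code is split on `;`. The function names are hypothetical and this is not the test suite's actual code.

```python
def split_on_semicolons(lines):
    """Split plain SQL lines into statements at every semicolon."""
    text = "\n".join(lines)
    return [part.strip() for part in text.split(";") if part.strip()]

def split_queries(lines):
    """Split a test file into queries, keeping delimited blocks whole."""
    queries, outside, inside = [], [], []
    in_block = False
    for line in lines:
        marker = line.strip()
        if marker.startswith("--QUERY-DELIMITER-START"):
            queries += split_on_semicolons(outside)
            outside, in_block = [], True
        elif marker.startswith("--QUERY-DELIMITER-END"):
            block = "\n".join(inside).strip()
            queries.append(block[:-1] if block.endswith(";") else block)
            inside, in_block = [], False
        elif in_block:
            inside.append(line)
        elif not marker.startswith("--"):
            outside.append(line)
    return queries + split_on_semicolons(outside)

nested_comments = [
    "-- the first case of bracketed comment",
    "--QUERY-DELIMITER-START",
    "/* This is the first example of bracketed comment.",
    "SELECT 'ommented out content' AS first;",
    "*/",
    "SELECT 'selected content' AS first;",
    "--QUERY-DELIMITER-END",
]
queries = split_queries(nested_comments)
# Splitting on every semicolon cuts the bracketed comment in two.
naive = split_on_semicolons([l for l in nested_comments if not l.startswith("--")])
```

With the delimiters honored, the bracketed comment and the statement after it stay together as a single query, which matches the "after" golden file.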

### Why are the changes needed?

Golden files can't display the bracketed comments in imported test cases.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

New UT.

Closes #28018 from beliefer/fix-bug-tests-imported-bracketed-comments.

Authored-by: beliefer <beliefer@163.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This PR (SPARK-31238) aims to do the following:

1. Modified ORC Vectorized Reader, in particular, OrcColumnVector v1.2 and v2.3. After the changes, it uses `DateTimeUtils.rebaseJulianToGregorianDays()` added by https://github.com/apache/spark/pull/27915. The method rebases days from the hybrid calendar (Julian + Gregorian) to the Proleptic Gregorian calendar. It builds a local date in the original calendar, extracts the date fields `year`, `month` and `day` from the local date, and builds another local date in the target calendar. After that, it calculates days from the epoch `1970-01-01` for the resulting local date.

2. Introduced rebasing dates while saving ORC files, in particular, I modified `OrcShimUtils.getDateWritable` v1.2 and v2.3, and returned `DaysWritable` instead of Hive's `DateWritable`. The `DaysWritable` class was added by the PR https://github.com/apache/spark/pull/27890 (and fixed by https://github.com/apache/spark/pull/27962). I moved `DaysWritable` from `sql/hive` to `sql/core` to re-use it in ORC datasource.
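
The rebasing steps in item 1 can be sketched in plain Python. This is an illustration under stated assumptions, not the code of `DateTimeUtils`: the function names are Python stand-ins, the Julian date fields are derived with a standard Julian Day Number conversion, and `datetime.date` plays the role of the Proleptic Gregorian calendar.

```python
from datetime import date

EPOCH_ORDINAL = date(1970, 1, 1).toordinal()
EPOCH_JDN = 2440588  # Julian Day Number of 1970-01-01 (Proleptic Gregorian)
# First day of the Gregorian part of the hybrid calendar, in days since the epoch.
CUTOVER_DAYS = date(1582, 10, 15).toordinal() - EPOCH_ORDINAL

def julian_fields(days):
    """Convert days since the epoch to (year, month, day) in the Julian calendar."""
    f = days + EPOCH_JDN + 1401
    e = 4 * f + 3
    g = (e % 1461) // 4
    h = 5 * g + 2
    day = (h % 153) // 5 + 1
    month = (h // 153 + 2) % 12 + 1
    year = e // 1461 - 4716 + (14 - month) // 12
    return year, month, day

def rebase_julian_to_gregorian_days(days):
    """Re-express a hybrid-calendar day count as a Proleptic Gregorian one."""
    if days >= CUTOVER_DAYS:
        return days  # both calendars agree from 1582-10-15 onwards
    year, month, day = julian_fields(days)
    # Raises ValueError for Julian-only leap days (e.g. 1500-02-29) and years < 1.
    return date(year, month, day).toordinal() - EPOCH_ORDINAL

stored = -281230  # day count of Julian 1200-01-01
rebased = rebase_julian_to_gregorian_days(stored)
```

Read without rebasing, `stored` is the Proleptic Gregorian date `1200-01-08`; after rebasing, the day count maps back to `1200-01-01`.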

### Why are the changes needed?

For backward compatibility with Spark 2.4 and earlier versions. The changes allow users to read dates/timestamps saved by previous versions and get the same results.

### Does this PR introduce any user-facing change?

Yes. Before the changes, loading the date `1200-01-01` saved by Spark 2.4.5 returns the following:

```scala

scala> spark.read.orc("/Users/maxim/tmp/before_1582/2_4_5_date_orc").show(false)

+----------+

|dt |

+----------+

|1200-01-08|

+----------+

```

After the changes:

```scala

scala> spark.read.orc("/Users/maxim/tmp/before_1582/2_4_5_date_orc").show(false)

+----------+

|dt |

+----------+

|1200-01-01|

+----------+

```

### How was this patch tested?

- By running `OrcSourceSuite` and `HiveOrcSourceSuite`.

- Add new test `SPARK-31238: compatibility with Spark 2.4 in reading dates` to `OrcSuite` which reads an ORC file saved by Spark 2.4.5 via the commands:

```shell

$ export TZ="America/Los_Angeles"

```

```scala

scala> sql("select cast('1200-01-01' as date) dt").write.mode("overwrite").orc("/Users/maxim/tmp/before_1582/2_4_5_date_orc")

scala> spark.read.orc("/Users/maxim/tmp/before_1582/2_4_5_date_orc").show(false)

+----------+

|dt |

+----------+

|1200-01-01|

+----------+

```

- Add round trip test `SPARK-31238: rebasing dates in write`. The test `SPARK-31238: compatibility with Spark 2.4 in reading dates` confirms rebasing in read. So, we can check rebasing in write.

Closes #28016 from MaxGekk/rebase-date-orc.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Fix incorrect log of `curRequestSize`.

### Why are the changes needed?

In batch mode, `curRequestSize` can be the total size of several block groups. And each group should have its own request size instead of using the total size.
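
A rough sketch of the intended logging, in plain Python. The grouping rule (at most `max_blocks_per_group` blocks per group), the function names and the log text are all assumptions made for illustration; only the idea of reporting each group's own size comes from the description above.

```python
def split_into_groups(blocks, max_blocks_per_group):
    """Split (block_id, size) pairs into consecutive groups of bounded length."""
    return [blocks[i:i + max_blocks_per_group]
            for i in range(0, len(blocks), max_blocks_per_group)]

def describe_requests(blocks, max_blocks_per_group):
    """Build one log line per group, reporting that group's own size."""
    lines = []
    for group in split_into_groups(blocks, max_blocks_per_group):
        group_size = sum(size for _, size in group)
        lines.append(f"request of {group_size} bytes for {len(group)} block(s)")
    return lines

blocks = [("b0", 100), ("b1", 200), ("b2", 50)]
total_size = sum(size for _, size in blocks)  # what the old log reported per group
log_lines = describe_requests(blocks, max_blocks_per_group=2)
```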

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

It only affects logging.

Closes #28028 from Ngone51/fix_curRequestSize.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

These are the core scheduler changes to support stage-level scheduling.

The main changes here include modification to the DAGScheduler to look at the ResourceProfiles associated with an RDD and have those applied inside the scheduler.

Currently, if multiple RDDs in a stage have conflicting ResourceProfiles, we throw an error. Logic to allow this will happen in SPARK-29153. I added the interfaces to RDD to add and get the ResourceProfile so that I could add unit tests for the scheduler. These are marked as private for now until we finish the feature and will be exposed in SPARK-29150. If you think this is confusing I can remove those and remove the tests and add them back later.

I modified the task scheduler to make sure to only schedule on executors that exactly match the resource profile. It then checks those executors to make sure the current resources meet the task's needs before assigning it. Here I also changed the way we do the custom resource assignment.

Other changes here include having the cpus per task passed around so that we can properly account for them. Previously we just used the one global config, but now it can change based on the ResourceProfile.

I removed the exceptions that require the cores to be the limiting resource. With this change, all the places I found that used executor cores / task cpus as slots have been updated to use the ResourceProfile logic and look at which resource is limiting.
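
To illustrate what "looking at which resource is limiting" means, here is a small sketch in plain Python. It assumes whole-number resource amounts and uses a hypothetical helper `limiting_resource`; it is not the ResourceProfile API.

```python
def limiting_resource(executor_resources, task_requirements):
    """Return (resource name, task slots) for the resource that runs out first."""
    slots = {
        name: executor_resources.get(name, 0) // amount
        for name, amount in task_requirements.items()
    }
    name = min(slots, key=slots.get)
    return name, slots[name]

# 8 cores but only 2 GPUs: the GPU count caps the executor at 2 concurrent tasks.
gpu_bound = limiting_resource({"cores": 8, "gpu": 2}, {"cores": 1, "gpu": 1})
# 4 cores and 2 cores per task: cores cap the executor at 2 concurrent tasks.
core_bound = limiting_resource({"cores": 4, "gpu": 8}, {"cores": 2, "gpu": 1})
```

Using executor cores / task cpus alone would have reported 8 slots in the first case, which is why cores can no longer be assumed to be the limiting resource.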

### Why are the changes needed?

Stage-level scheduling feature.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

unit tests and lots of manual testing

Closes #27773 from tgravescs/SPARK-29154.

Lead-authored-by: Thomas Graves <tgraves@nvidia.com>

Co-authored-by: Thomas Graves <tgraves@apache.org>

Signed-off-by: Thomas Graves <tgraves@apache.org>

### What changes were proposed in this pull request?

Skew join handling comes with an overhead: we need to read some data repeatedly. We should treat a partition as skewed only if it is large enough for that to be beneficial.

Currently the size threshold is the advisory partition size, which is 64 MB by default. This is not large enough for the skewed partition size threshold.

This PR adds a new config for the threshold and sets its default value to 256 MB.
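
The effect of the larger threshold can be sketched as follows. This is plain Python with hypothetical names; the median-based `factor` check is an assumption added to make the example complete and is not described in this PR.

```python
from statistics import median

MB = 1024 * 1024

def skewed_partitions(sizes, threshold, factor):
    """Return indexes of partitions that exceed both the absolute threshold
    and `factor` times the median partition size (assumed rule)."""
    typical = median(sizes)
    return [i for i, size in enumerate(sizes)
            if size > threshold and size > factor * typical]

sizes = [10 * MB, 20 * MB, 100 * MB, 300 * MB, 30 * MB]
with_old_threshold = skewed_partitions(sizes, threshold=64 * MB, factor=3)
with_new_threshold = skewed_partitions(sizes, threshold=256 * MB, factor=3)
```

With a 64 MB threshold the 100 MB partition is also handled as skewed; with 256 MB only the 300 MB partition is.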

### Why are the changes needed?

Avoid skew join handling that may introduce a perf regression.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

existing tests

Closes #27967 from cloud-fan/aqe.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Make `mergeSparkConf` in `WithTestConf` respect `spark.sql.legacy.sessionInitWithConfigDefaults`.

### Why are the changes needed?

Without the fix, a conf specified by `withSQLConf` can be reverted to its original value in a cloned SparkSession. For example, the test below fails without the fix:

```

withSQLConf(SQLConf.CODEGEN_FALLBACK.key -> "true") {

val cloned = spark.cloneSession()

SparkSession.setActiveSession(cloned)

assert(SQLConf.get.getConf(SQLConf.CODEGEN_FALLBACK) === true)

}

```

So we should fix it just as #24540 did before.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Added tests.

Closes #28014 from Ngone51/sparksession_clone.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to define `timestampFormatter`, `dateFormatter` and `zoneId` as methods of the `HiveResult` object. This should guarantee that the formatters pick up the current session time zone in `toHiveString()`.

### Why are the changes needed?

Currently, date/timestamp formatters in `HiveResult.toHiveString` are initialized once on instantiation of the `HiveResult` object, and pick up the session time zone at that moment. If the session's time zone is changed later, the formatters still use the previous one.
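
The difference between a value captured once and a value looked up on every call can be sketched in plain Python. The classes below are hypothetical stand-ins, not Spark classes, and the session time zone is modelled as a fixed UTC offset in hours.

```python
from datetime import datetime, timedelta, timezone

class Session:
    """Hypothetical stand-in for a session with a mutable time zone offset."""
    def __init__(self, offset_hours):
        self.offset_hours = offset_hours

class CachedFormatter:
    def __init__(self, session):
        # Captured once: later changes to the session are not seen.
        self._zone = timezone(timedelta(hours=session.offset_hours))

    def format(self, ts):
        return ts.astimezone(self._zone).strftime("%Y-%m-%d %H:%M:%S")

class PerCallFormatter:
    def __init__(self, session):
        self._session = session

    def _zone(self):
        # Looked up on every call, so it follows the session setting.
        return timezone(timedelta(hours=self._session.offset_hours))

    def format(self, ts):
        return ts.astimezone(self._zone()).strftime("%Y-%m-%d %H:%M:%S")

session = Session(offset_hours=0)
cached, per_call = CachedFormatter(session), PerCallFormatter(session)
session.offset_hours = -8  # the session time zone changes afterwards
ts = datetime(2020, 3, 25, 12, 0, 0, tzinfo=timezone.utc)
stale = cached.format(ts)
current = per_call.format(ts)
```

Defining the formatters as methods corresponds to the per-call variant.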

### Does this PR introduce any user-facing change?

Yes

### How was this patch tested?

By existing test suites, in particular, by `HiveResultSuite`

Closes #28024 from MaxGekk/hive-result-datetime-formatters.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR makes type coercion in complex types keep a non-nullable null type non-nullable instead of coercing it to a nullable type.

Non-nullable null-type fields in a struct, elements in an array and entries in a map can mean an empty struct, array and map. Since they are empty, there is no need to force nullability when we find common types.

This PR also reverts and supersedes d7b97a1d0d.
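
For arrays, the rule can be sketched in plain Python as below. The `ArrayType` tuple and `common_array_type` function are simplified, hypothetical models of the idea, not Catalyst's classes; the `force_nullable_for_null` flag mimics the previous behavior.

```python
from typing import NamedTuple, Optional

class ArrayType(NamedTuple):
    element: str        # element type name, e.g. "null" or "int"
    contains_null: bool

def common_array_type(a, b, force_nullable_for_null=False) -> Optional[ArrayType]:
    """Find a common array type; a "null" element type yields to the other side."""
    if a.element == "null":
        element = b.element
    elif b.element == "null" or a.element == b.element:
        element = a.element
    else:
        return None  # wider numeric promotion is out of scope for this sketch
    contains_null = a.contains_null or b.contains_null
    if force_nullable_for_null and "null" in (a.element, b.element):
        contains_null = True
    return ArrayType(element, contains_null)

empty = ArrayType("null", contains_null=False)   # models array()
ints = ArrayType("int", contains_null=False)     # models array(1)
before = common_array_type(empty, ints, force_nullable_for_null=True)
after = common_array_type(empty, ints)
```

An empty array has no elements to be null, so the result can keep `contains_null = False`.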

### Why are the changes needed?

To make type coercion coherent and consistent. Currently, we correctly keep the nullability even between non-nullable fields:

```scala

import org.apache.spark.sql.types._

import org.apache.spark.sql.functions._

spark.range(1).select(array(lit(1)).cast(ArrayType(IntegerType, false))).printSchema()

spark.range(1).select(array(lit(1)).cast(ArrayType(DoubleType, false))).printSchema()

```

```scala

spark.range(1).selectExpr("concat(array(1), array(1)) as arr").printSchema()

```

### Does this PR introduce any user-facing change?

Yes.

```scala

import org.apache.spark.sql.types._

import org.apache.spark.sql.functions._

spark.range(1).select(array().cast(ArrayType(IntegerType, false))).printSchema()

```

```scala

spark.range(1).selectExpr("concat(array(), array(1)) as arr").printSchema()

```

**Before:**

```

org.apache.spark.sql.AnalysisException: cannot resolve 'array()' due to data type mismatch: cannot cast array<null> to array<int>;;

'Project [cast(array() as array<int>) AS array()#68]

+- Range (0, 1, step=1, splits=Some(12))

at org.apache.spark.sql.catalyst.analysis.package$AnalysisErrorAt.failAnalysis(package.scala:42)

at org.apache.spark.sql.catalyst.analysis.CheckAnalysis$$anonfun$$nestedInanonfun$checkAnalysis$1$2.applyOrElse(CheckAnalysis.scala:149)

at org.apache.spark.sql.catalyst.analysis.CheckAnalysis$$anonfun$$nestedInanonfun$checkAnalysis$1$2.applyOrElse(CheckAnalysis.scala:140)

at org.apache.spark.sql.catalyst.trees.TreeNode.$anonfun$transformUp$2(TreeNode.scala:333)

at org.apache.spark.sql.catalyst.trees.CurrentOrigin$.withOrigin(TreeNode.scala:72)

at org.apache.spark.sql.catalyst.trees.TreeNode.transformUp(TreeNode.scala:333)

at org.apache.spark.sql.catalyst.trees.TreeNode.$anonfun$transformUp$1(TreeNode.scala:330)

at org.apache.spark.sql.catalyst.trees.TreeNode.$anonfun$mapChildren$1(TreeNode.scala:399)

at org.apache.spark.sql.catalyst.trees.TreeNode.mapProductIterator(TreeNode.scala:237)

```

```

root

|-- arr: array (nullable = false)

| |-- element: integer (containsNull = true)

```

**After:**

```

root

|-- array(): array (nullable = false)

| |-- element: integer (containsNull = false)

```

```

root

|-- arr: array (nullable = false)

| |-- element: integer (containsNull = false)

```

### How was this patch tested?

Unit tests were added and manually tested.

Closes #27991 from HyukjinKwon/SPARK-31227.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Currently, `ResetCommand` clears all configurations, including SQL configs, static SQL configs and SparkContext-level configs.

For example:

```sql

spark-sql> set xyz=abc;

xyz abc

spark-sql> set;

spark.app.id local-1585055396930

spark.app.name SparkSQL::10.242.189.214

spark.driver.host 10.242.189.214

spark.driver.port 65094

spark.executor.id driver

spark.jars

spark.master local[*]

spark.sql.catalogImplementation hive

spark.sql.hive.version 1.2.1

spark.submit.deployMode client

xyz abc

spark-sql> reset;

spark-sql> set;

spark-sql> set spark.sql.hive.version;

spark.sql.hive.version 1.2.1

spark-sql> set spark.app.id;

spark.app.id <undefined>

```

In this PR, we restore the Spark confs to `RuntimeConfig` after it is cleared.
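
A minimal sketch of "clear, then restore" in plain Python. The `RuntimeConf` class is a hypothetical model, not Spark's `RuntimeConfig`.

```python
class RuntimeConf:
    """Hypothetical runtime conf seeded from the application-level Spark conf."""

    def __init__(self, spark_conf):
        self._spark_conf = dict(spark_conf)
        self._settings = dict(spark_conf)

    def set(self, key, value):
        self._settings[key] = value

    def get(self, key):
        return self._settings.get(key, "<undefined>")

    def reset(self):
        # Drop everything set in the session, then restore the Spark confs.
        self._settings.clear()
        self._settings.update(self._spark_conf)

conf = RuntimeConf({"spark.app.id": "local-1585055396930"})
conf.set("xyz", "abc")
conf.reset()
```

After `reset()`, `xyz` is gone while `spark.app.id` keeps its value instead of becoming `<undefined>`.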

### Why are the changes needed?

The `RESET` command goes too far: it also clears configs that are static.

### Does this PR introduce any user-facing change?

Yes, `ResetCommand` no longer changes static configs.

### How was this patch tested?

add ut

Closes #28003 from yaooqinn/SPARK-31234.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

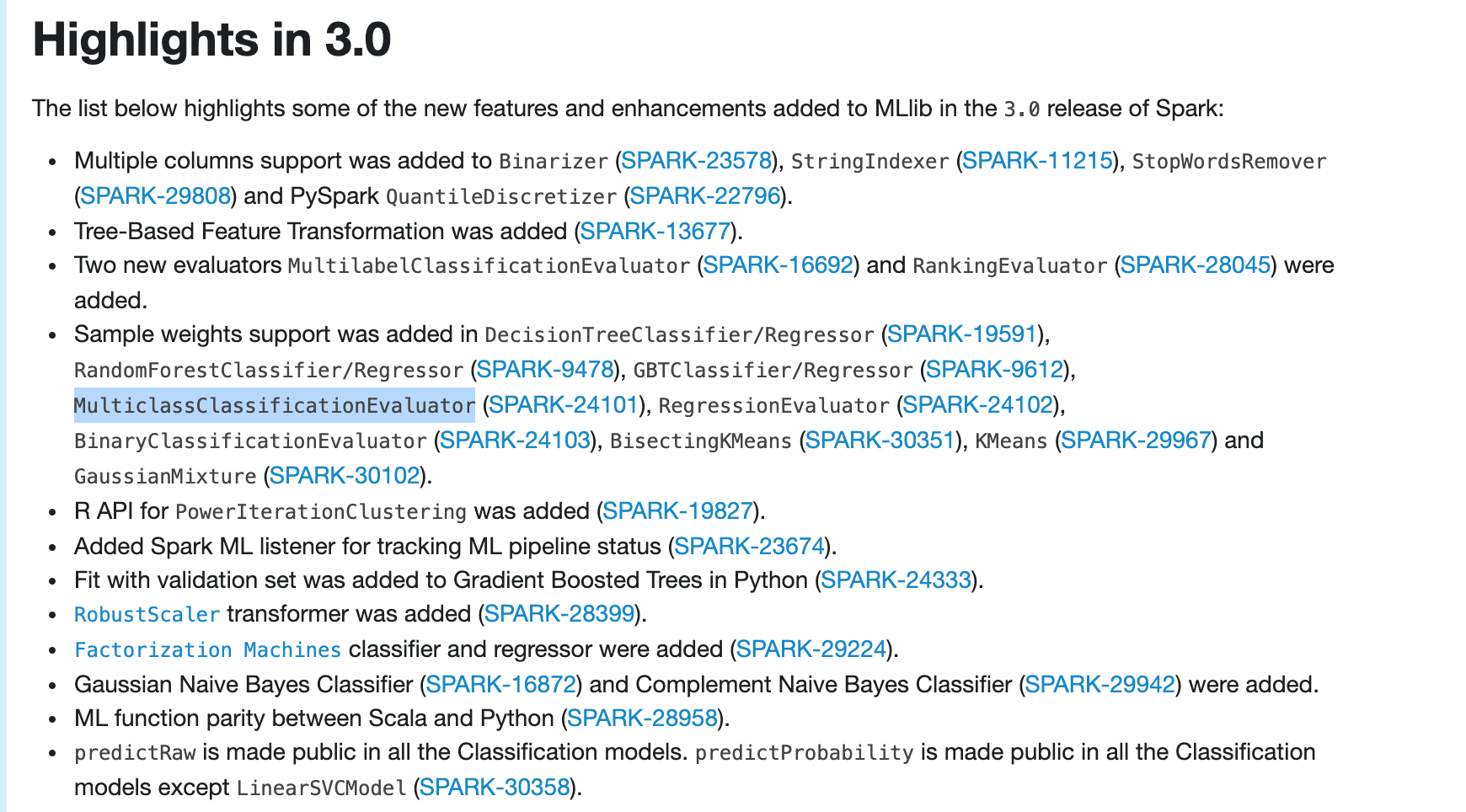

### What changes were proposed in this pull request?

Update ml-guide to include `MulticlassClassificationEvaluator` weight support in highlights.

### Why are the changes needed?

`MulticlassClassificationEvaluator` weight support is very important, so it should be included in the highlights.

### Does this PR introduce any user-facing change?

Yes

after:

### How was this patch tested?

manually build and check

Closes #28031 from huaxingao/highlights-followup.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

https://issues.apache.org/jira/browse/SPARK-31223

Set the seed in `np.random` when generating test data.

### Why are the changes needed?

So that the same set of test data can be regenerated later.
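
A tiny illustration of why seeding matters. To stay dependency-free it uses the standard library `random` module rather than `np.random`; `generate_test_data` is a hypothetical helper, and the same idea applies to `np.random.seed`.

```python
import random

def generate_test_data(n, seed):
    """Generate n pseudo-random floats from a generator with a fixed seed."""
    rng = random.Random(seed)
    return [rng.random() for _ in range(n)]

first = generate_test_data(5, seed=42)
second = generate_test_data(5, seed=42)  # same seed, same data
other = generate_test_data(5, seed=43)   # different seed, different data
```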

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing tests.

Closes #27994 from huaxingao/spark-31223.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

In the PR, I propose to add a few `ZoneId` constant values to the `DateTimeTestUtils` object, and reuse the constants in tests. The proposed constants are:

- PST = -08:00

- UTC = +00:00

- CEST = +02:00

- CET = +01:00

- JST = +09:00

- MIT = -09:30

- LA = America/Los_Angeles

### Why are the changes needed?

All proposed constant values (except `LA`) are initialized by zone offsets according to their definitions. This allows us to avoid:

- Using 3-letter time zones, which have already been deprecated in the JDK, see _Three-letter time zone IDs_ in https://docs.oracle.com/javase/8/docs/api/java/util/TimeZone.html

- Incorrect mapping of 3-letter time zones to zone offsets, see SPARK-31237. For example, `PST` is mapped to `America/Los_Angeles` instead of the `-08:00` zone offset.

Also this should improve stability and maintainability of test suites.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running affected test suites.

Closes #28001 from MaxGekk/replace-pst.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Fix the regression caused by #22173.

The original PR changed the handling of `ChunkFetchRequest` from async to sync, which caused the shuffle benchmark regression. This PR fixes the regression by restoring the async mode and reusing the config `spark.shuffle.server.chunkFetchHandlerThreadsPercent`.

When the user sets the config, `ChunkFetchRequest` is processed in a separate event loop group; otherwise, the code path is exactly the same as before.

### Why are the changes needed?

Fix the shuffle performance regression described in https://github.com/apache/spark/pull/22173#issuecomment-572459561

### Does this PR introduce any user-facing change?

Yes, this PR disables the separate event loop for FetchRequest by default.

### How was this patch tested?

Existing UT.

Closes #27665 from xuanyuanking/SPARK-24355-follow.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The link for `partition discovery` is malformed because, for releases, the full URL contains `/docs/<version>/`.

### Why are the changes needed?

fix doc

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

`SKIP_SCALADOC=1 SKIP_RDOC=1 SKIP_SQLDOC=1 jekyll serve` locally verified

Closes #28017 from yaooqinn/doc.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This PR is a followup of apache/spark#27995. Rather than pinning the setuptools version, it sets an upper bound so that the Python 3.5 tests on branch-2.4 can pass too.

## Why are the changes needed?

To make the CI build stable

## Does this PR introduce any user-facing change?

No, dev-only change.

## How was this patch tested?

Jenkins will test.

Closes #28005 from HyukjinKwon/investigate-pip-packaging-followup.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Add the avro dependency in `SparkBuild`.

### Why are the changes needed?

Fix the sbt unidoc failure, as seen in https://github.com/apache/spark/pull/28017#issuecomment-603828597

```scala

[warn] Multiple main classes detected. Run 'show discoveredMainClasses' to see the list

[warn] Multiple main classes detected. Run 'show discoveredMainClasses' to see the list

[info] Main Scala API documentation to /home/jenkins/workspace/SparkPullRequestBuilder6/target/scala-2.12/unidoc...

[info] Main Java API documentation to /home/jenkins/workspace/SparkPullRequestBuilder6/target/javaunidoc...

[error] /home/jenkins/workspace/SparkPullRequestBuilder6/core/src/main/scala/org/apache/spark/serializer/GenericAvroSerializer.scala:123: value createDatumWriter is not a member of org.apache.avro.generic.GenericData

[error] writerCache.getOrElseUpdate(schema, GenericData.get.createDatumWriter(schema))

[error] ^

[info] No documentation generated with unsuccessful compiler run

[error] one error found

```

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

Passed Jenkins, and verified manually with `sbt dependencyTree`:

```scala

kentyaohulk ~/spark dep build/sbt dependencyTree | grep avro | grep -v Resolving

[info] +-org.apache.avro:avro-mapred:1.8.2

[info] | +-org.apache.avro:avro-ipc:1.8.2

[info] | | +-org.apache.avro:avro:1.8.2

[info] +-org.apache.avro:avro:1.8.2

[info] | | +-org.apache.avro:avro:1.8.2

[info] org.apache.spark:spark-avro_2.12:3.1.0-SNAPSHOT [S]

[info] | | | +-org.apache.avro:avro-mapred:1.8.2

[info] | | | | +-org.apache.avro:avro-ipc:1.8.2

[info] | | | | | +-org.apache.avro:avro:1.8.2

[info] | | | +-org.apache.avro:avro:1.8.2

[info] | | | | | +-org.apache.avro:avro:1.8.2

```

Closes #28020 from yaooqinn/dep.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR (SPARK-31244) replaces `Ceph` with `Minio` in K8S `DepsTestSuite`.

### Why are the changes needed?

Currently, `DepsTestSuite` uses `ceph` for S3 storage. However, the version in use and all newer releases are broken on new `minikube` releases. We should use a smaller and more robust alternative.

```

$ minikube version

minikube version: v1.8.2

$ minikube -p minikube docker-env | source

$ docker run -it --rm -e NETWORK_AUTO_DETECT=4 -e RGW_FRONTEND_PORT=8000 -e SREE_PORT=5001 -e CEPH_DEMO_UID=nano -e CEPH_DAEMON=demo ceph/daemon:v4.0.3-stable-4.0-nautilus-centos-7-x86_64 /bin/sh

2020-03-25 04:26:21 /opt/ceph-container/bin/entrypoint.sh: ERROR- it looks like we have not been able to discover the network settings

$ docker run -it --rm -e NETWORK_AUTO_DETECT=4 -e RGW_FRONTEND_PORT=8000 -e SREE_PORT=5001 -e CEPH_DEMO_UID=nano -e CEPH_DAEMON=demo ceph/daemon:v4.0.11-stable-4.0-nautilus-centos-7 /bin/sh

2020-03-25 04:20:30 /opt/ceph-container/bin/entrypoint.sh: ERROR- it looks like we have not been able to discover the network settings

```

Also, the image size is unnecessarily big (almost `1GB`) and growing while `minio` is `55.8MB` with the same features.

```

$ docker images | grep ceph

ceph/daemon v4.0.3-stable-4.0-nautilus-centos-7-x86_64 a6a05ccdf924 6 months ago 852MB

ceph/daemon v4.0.11-stable-4.0-nautilus-centos-7 87f695550d8e 12 hours ago 901MB

$ docker images | grep minio

minio/minio latest 95c226551ea6 5 days ago 55.8MB

```

### Does this PR introduce any user-facing change?

No. (This is a test case change)

### How was this patch tested?

Pass the existing Jenkins K8s integration test job and test with the latest minikube.

```

$ minikube version

minikube version: v1.8.2

$ kubectl version --short

Client Version: v1.17.4

Server Version: v1.17.4

$ NO_MANUAL=1 ./dev/make-distribution.sh --r --pip --tgz -Pkubernetes

$ resource-managers/kubernetes/integration-tests/dev/dev-run-integration-tests.sh --spark-tgz $PWD/spark-*.tgz

...

KubernetesSuite:

- Run SparkPi with no resources

- Run SparkPi with a very long application name.

- Use SparkLauncher.NO_RESOURCE

- Run SparkPi with a master URL without a scheme.

- Run SparkPi with an argument.

- Run SparkPi with custom labels, annotations, and environment variables.

- All pods have the same service account by default

- Run extraJVMOptions check on driver

- Run SparkRemoteFileTest using a remote data file

- Run SparkPi with env and mount secrets.

- Run PySpark on simple pi.py example

- Run PySpark with Python2 to test a pyfiles example

- Run PySpark with Python3 to test a pyfiles example

- Run PySpark with memory customization

- Run in client mode.

- Start pod creation from template

- PVs with local storage *** FAILED *** // This is irrelevant to this PR.

- Launcher client dependencies // This is the fixed test case by this PR.

- Test basic decommissioning

- Run SparkR on simple dataframe.R example

Run completed in 12 minutes, 4 seconds.

...

```

The following is the working snapshot of `DepsTestSuite` test.

```

$ kubectl get all -ncf9438dd8a65436686b1196a6b73000f

NAME READY STATUS RESTARTS AGE

pod/minio-0 1/1 Running 0 70s

pod/spark-test-app-8494bddca3754390b9e59a2ef47584eb 1/1 Running 0 55s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/minio-s3 NodePort 10.109.54.180 <none> 9000:30678/TCP 70s

service/spark-test-app-fd916b711061c7b8-driver-svc ClusterIP None <none> 7078/TCP,7079/TCP,4040/TCP 55s

NAME READY AGE

statefulset.apps/minio 1/1 70s

```

Closes #28015 from dongjoon-hyun/SPARK-31244.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Spark introduced CHAR type for hive compatibility but it only works for hive tables. CHAR type is never documented and is treated as STRING type for non-Hive tables.

However, this leads to confusing behaviors:

**Apache Spark 3.0.0-preview2**

```

spark-sql> CREATE TABLE t(a CHAR(3));

spark-sql> INSERT INTO TABLE t SELECT 'a ';

spark-sql> SELECT a, length(a) FROM t;

a 2

```

**Apache Spark 2.4.5**

```

spark-sql> CREATE TABLE t(a CHAR(3));

spark-sql> INSERT INTO TABLE t SELECT 'a ';

spark-sql> SELECT a, length(a) FROM t;

a 3

```

According to the SQL standard, `CHAR(3)` should guarantee that all values are of length 3. Since `CHAR(3)` is treated as STRING, Spark doesn't guarantee this.
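
What the fixed-length guarantee implies can be sketched in plain Python. `to_char` is a hypothetical helper that pads with spaces to the declared length; it is not how Spark or Hive implement the type.

```python
def to_char(value, length):
    """Pad `value` with trailing spaces to exactly `length` characters."""
    trimmed = value.rstrip(" ")
    if len(trimmed) > length:
        raise ValueError(f"value too long for CHAR({length}): {value!r}")
    return trimmed.ljust(length)

as_string = "a "             # stored as a plain string: length stays 2
as_char = to_char("a ", 3)   # CHAR(3) semantics: always length 3
```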

This PR forbids CHAR type in non-Hive tables as it's not supported correctly.

### Why are the changes needed?

avoid confusing/wrong behavior

### Does this PR introduce any user-facing change?

yes, now users can't create/alter non-Hive tables with CHAR type.

### How was this patch tested?

new tests

Closes #27902 from cloud-fan/char.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This pr intends to add unit tests for the other join hints (`MERGEJOIN`, `SHUFFLE_HASH`, and `SHUFFLE_REPLICATE_NL`). This is a followup PR of #27935.

### Why are the changes needed?

For better test coverage.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Added unit tests.

Closes #28013 from maropu/SPARK-25121-FOLLOWUP.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

In the PR, I propose to update the doc for `spark.sql.session.timeZone`, and restrict the format of the config's values to two forms:

1. Geographical regions, such as `America/Los_Angeles`.

2. Fixed offsets - a fully resolved offset from UTC. For example, `-08:00`.

### Why are the changes needed?

Other formats such as three-letter time zone IDs are ambiguous, and depend on the locale. For example, `CST` could be U.S. `Central Standard Time` or `China Standard Time`. Such formats have already been deprecated in the JDK, see [Three-letter time zone IDs](https://docs.oracle.com/javase/8/docs/api/java/util/TimeZone.html).
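
The two accepted forms can be approximated with a purely syntactic check. This is a sketch in plain Python with hypothetical names: it does not consult a time zone database, and it rejects slash-less IDs such as `UTC` that a real parser may accept.

```python
import re

# Region IDs of the form Area/Location, e.g. America/Los_Angeles.
REGION = re.compile(r"[A-Za-z][A-Za-z0-9_+\-]*(?:/[A-Za-z0-9_+\-]+)+")
# Fully resolved offsets from UTC, e.g. -08:00 (hours limited to 00..18).
OFFSET = re.compile(r"[+-](?:0\d|1[0-8]):[0-5]\d")

def looks_like_accepted_zone(value):
    """Return True if `value` has the shape of a region ID or a fixed offset."""
    return bool(REGION.fullmatch(value) or OFFSET.fullmatch(value))

checks = {
    zone: looks_like_accepted_zone(zone)
    for zone in ["America/Los_Angeles", "-08:00", "CST", "PST"]
}
```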

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running `./dev/scalastyle`, and manual testing.

Closes #27999 from MaxGekk/doc-session-time-zone.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Assert the number of blocks to fetch equals the number of local blocks + the number of hostLocal blocks + the number of remote blocks in ShuffleBlockFetcherIterator. Also refactor the code a bit to make it easier to follow.

### Why are the changes needed?

When the numbers don't match, something has gone wrong and we should fail fast.

### Does this PR introduce any user-facing change?

No. This is basically code refactoring.

### How was this patch tested?

Tested with existing test suites.

Closes #27972 from jiangxb1987/BlockFetcher.

Authored-by: Xingbo Jiang <xingbo.jiang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The test case has been flaky because the execution time sometimes exceeds the await duration. Increase the await duration to avoid flakiness.

### How was this patch tested?

Tested locally and it didn't fail anymore.

Closes #28007 from jiangxb1987/DecomTest.

Authored-by: Xingbo Jiang <xingbo.jiang@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This is another solution for `SPARK-31081` and #27849 .

I added a checkbox that toggles the display of stageId/taskId on the SQL DAG page.

Mainly, I implemented the toggleable text in boxes with the HTML label feature provided by `dagre-d3`.

The additional metrics are enclosed by `<span>` and control the appearance of the text.

The exception is the additional metrics in clusters.

We can use an HTML label for a cluster, but the layout breaks, so I chose a normal text label for clusters.

Because of that, this solution contains slightly tricky code in `spark-sql-viz.js` to manipulate the metric text and generate DOM elements.

### Why are the changes needed?

#26843 made the metrics harder to read, and users may not be interested in the extra info (stageId/StageAttemptId/taskId) when they are not debugging.

#27849 controls the appearance with a new configuration property, but providing a checkbox is more flexible.

### Does this PR introduce any user-facing change?

Yes.

[Additional metrics shown]

[Additional metrics hidden]

### How was this patch tested?

Tested manually with a simple DataFrame operation.

* The appearance of additional metrics in the boxes is controlled by the newly added checkbox.

* No error found with JS-debugger.

* Checked/not-checked state is preserved after reloading.

Closes #27927 from sarutak/SPARK-31081.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Gengliang Wang <gengliang.wang@databricks.com>

### What changes were proposed in this pull request?

Refactor `streaming-page.js` by making on-click timeline action customizable.

### Why are the changes needed?

In the current implementation, `streaming-page.js` is used by both the Streaming page and the Structured Streaming page, but the implementation of the on-click timeline action depends strongly on the Streaming page.

The Structured Streaming page doesn't define the on-click action for now, but it's better to remove the dependency for the future.

Originally, I made this change to fix `SPARK-31128`, but #27883 resolved it.

So now this is just a refactoring.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Tested manually with the following code and confirmed that there are no regressions and no errors in the debug console in Firefox.

For Structured Streaming:

```

spark.readStream.format("socket").options(Map("host"->"localhost", "port"->"8765")).load.writeStream.format("console").start

```

Then I visited the Structured Streaming page, and there were no errors in the debug console when I clicked a point in the timeline.

For Spark Streaming:

```

import org.apache.spark.streaming._

val ssc = new StreamingContext(sc, Seconds(1))

val dstream = ssc.socketTextStream("localhost", 8765)

dstream.foreachRDD(rdd => rdd.foreach(println))

ssc.start

```

Then I visited the Streaming page and confirmed that scrolling down and highlighting work well, and that there were no errors in the debug console when I clicked a point in the timeline.

Closes #27921 from sarutak/followup-SPARK-29543-fix-oncick.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Support case class parameters for typed Scala UDFs, e.g.

```

case class TestData(key: Int, value: String)

val f = (d: TestData) => d.key * d.value.toInt

val myUdf = udf(f)

val df = Seq(("data", TestData(50, "2"))).toDF("col1", "col2")

checkAnswer(df.select(myUdf(Column("col2"))), Row(100) :: Nil)

```

### Why are the changes needed?

Currently, a Spark UDF can only work on data types like java.lang.String, o.a.s.sql.Row, Seq[_], etc. This is inconvenient if a user wants to apply an operation to one column and the column is of struct type: the data must be accessed through a Row object instead of a domain object, as in Dataset operations. It would be great if UDFs could work on types that are supported by Dataset, e.g. case classes.

Here is the benchmark result of using a case class compared to a Row:

```scala

// case class: 58ms 65ms 59ms 64ms 61ms

// row: 59ms 64ms 73ms 84ms 69ms

val f1 = (d: TestData) => s"${d.key}, ${d.value}"

val f2 = (r: Row) => s"${r.getInt(0)}, ${r.getString(1)}"

val udf1 = udf(f1)

// set spark.sql.legacy.allowUntypedScalaUDF=true

val udf2 = udf(f2, StringType)

val df = spark.range(100000).selectExpr("cast (id as int) as id")

.select(struct('id, lit("str")).as("col"))

df.cache().collect()

// warmup to exclude some extra influence

df.select(udf1('col)).write.mode(SaveMode.Overwrite).format("noop").save()

df.select(udf2('col)).write.mode(SaveMode.Overwrite).format("noop").save()

var start = System.currentTimeMillis()

df.select(udf1('col)).write.mode(SaveMode.Overwrite).format("noop").save()

println(System.currentTimeMillis() - start)

start = System.currentTimeMillis()

df.select(udf2('col)).write.mode(SaveMode.Overwrite).format("noop").save()

println(System.currentTimeMillis() - start)

```

### Does this PR introduce any user-facing change?

Yes. Users can now use typed Scala UDFs with a case class as the input parameter.

### How was this patch tested?

Added unit tests.

Closes #27937 from Ngone51/udf_caseclass_support.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to apply rebasing for all dates/timestamps in conversion functions `fromJavaDate()`, `toJavaDate()`, `toJavaTimestamp()` and `fromJavaTimestamp()`. The rebasing is performed via building a local date-time in an original calendar, extracting date-time fields from the result, and creating new local date-time in the target calendar.
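
The three rebasing steps can be sketched with plain JDK classes. This is a simplified illustration and not the code in `DateTimeUtils`: the function name is hypothetical, it assumes AD dates in UTC, it ignores time-of-day, and it does not handle days that exist only in the Julian calendar.

```scala
import java.time.LocalDate
import java.util.{Calendar, GregorianCalendar, TimeZone}

// Sketch only: rebases a day count from the hybrid Julian + Gregorian calendar
// (java.util.GregorianCalendar) to the Proleptic Gregorian calendar (java.time).
def rebaseHybridToProlepticDays(hybridDays: Int): Int = {
  // 1. Build the local date in the original (hybrid) calendar.
  val cal = new GregorianCalendar(TimeZone.getTimeZone("UTC"))
  cal.setTimeInMillis(hybridDays * 86400000L)
  // 2. Extract the date fields, then 3. recreate the date in the target calendar.
  val date = LocalDate.of(
    cal.get(Calendar.YEAR),
    cal.get(Calendar.MONTH) + 1, // Calendar months are zero-based
    cal.get(Calendar.DAY_OF_MONTH))
  date.toEpochDay.toInt
}
```

The year/month/day label is preserved while the underlying day count changes: the day just before the cutover is labelled `1582-10-04` in the hybrid calendar, and the rebased value is the day count of `1582-10-04` in the Proleptic Gregorian calendar, which is 10 days earlier.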

### Why are the changes needed?

The changes are needed for compatibility with previous Spark versions (2.4.5 and earlier), not only for dates before the Gregorian cutover date `1582-10-15` but also for dates after it. For instance, the Gregorian calendar implementation from Java 7, `java.util.GregorianCalendar`, is not as accurate in resolving time zone offsets as the Gregorian calendar introduced in Java 8.

### Does this PR introduce any user-facing change?

Yes, this PR can introduce behavior changes for dates after `1582-10-15`, in particular conversions of zone ids to zone offsets will be much more accurate.

### How was this patch tested?

By existing test suites `DateTimeUtilsSuite`, `DateFunctionsSuite`, `DateExpressionsSuite`, `CollectionExpressionsSuite`, `HiveOrcHadoopFsRelationSuite`, `ParquetIOSuite`.

Closes #27980 from MaxGekk/reuse-rebase-funcs-in-java-funcs.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

For a bit of background,

The PIP packaging test started to fail (see [these logs](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/120218/testReport/)) as of the setuptools 46.1.0 release. In https://github.com/pypa/setuptools/issues/1424, they decided not to keep the modes in `package_data`.

In PySpark pip installation, we keep the executable scripts in `package_data` fc4e56a54c/python/setup.py (L199-L200), and expose their symbolic links as executable scripts.

So, the symbolic links (or copied scripts) execute the scripts copied from `package_data`, which don't have the executable permission in their mode:

```

/tmp/tmp.UmkEGNFdKF/3.6/bin/spark-submit: line 27: /tmp/tmp.UmkEGNFdKF/3.6/lib/python3.6/site-packages/pyspark/bin/spark-class: Permission denied

/tmp/tmp.UmkEGNFdKF/3.6/bin/spark-submit: line 27: exec: /tmp/tmp.UmkEGNFdKF/3.6/lib/python3.6/site-packages/pyspark/bin/spark-class: cannot execute: Permission denied

```

The current issue is being tracked at https://github.com/pypa/setuptools/issues/2041

</br>

For what this PR proposes:

It sets the upper bound in PR builder for now to unblock other PRs. _This PR does not solve the issue yet. I will make a fix after monitoring https://github.com/pypa/setuptools/issues/2041_

### Why are the changes needed?

It currently affects users who use the latest setuptools. So, _users seem unable to use PySpark with the latest setuptools._ See also https://github.com/pypa/setuptools/issues/2041#issuecomment-602566667

### Does this PR introduce any user-facing change?

It makes CI pass for now. No user-facing change yet.

### How was this patch tested?

Jenkins will test.

Closes #27995 from HyukjinKwon/investigate-pip-packaging.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR (SPARK-31229) is a followup of https://github.com/apache/spark/pull/27926 (SPARK-31166). It adds unit tests for `TypeCoercion.findTypeForComplex` and `Cast.canCast` covering struct, map, and array with respect to null types.

### Why are the changes needed?

To make it easy to detect which scope is broken in the future.

### Does this PR introduce any user-facing change?

No, it's test-only.

### How was this patch tested?

Unittests were added.

Closes #27990 from HyukjinKwon/SPARK-31166-followup.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

https://github.com/apache/spark/pull/26412 introduced a behavior change that `date_add`/`date_sub` functions can't accept string and double values in the second parameter. This is reasonable as it's error-prone to cast string/double to int at runtime.

However, using string literals as function arguments is very common in SQL databases. To avoid breaking valid use cases where the string literal is indeed an integer, this PR proposes to add ansi_cast for string literals in the date_add/date_sub functions. If the string value is not a valid integer, we fail at query compilation time because of constant folding.
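
The intended behavior can be approximated outside Spark with a strict string-to-int conversion. The sketch below is an analogy only: `strictToInt` and `dateAdd` are hypothetical names, and the error is raised at call time here rather than during constant folding.

```scala
import java.time.LocalDate

// Hypothetical strict cast: accept the literal only if it is a valid integer.
def strictToInt(literal: String): Int =
  try literal.toInt
  catch {
    case _: NumberFormatException =>
      throw new IllegalArgumentException(s"'$literal' is not a valid integer")
  }

// Hypothetical date_add analogue that takes the number of days as a string literal.
def dateAdd(start: String, days: String): LocalDate =
  LocalDate.parse(start).plusDays(strictToInt(days).toLong)
```

With this sketch, `dateAdd("2011-11-11", "1")` succeeds, while `dateAdd("2011-11-11", "1.5")` fails instead of silently truncating the value.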

### Why are the changes needed?

avoid breaking changes

### Does this PR introduce any user-facing change?

Yes, now 3.0 can run `date_add('2011-11-11', '1')` like 2.4

### How was this patch tested?

new tests.

Closes #27965 from cloud-fan/string.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to refactor reading of timestamps of the `TIMESTAMP_MILLIS` logical type from Parquet files in `VectorizedColumnReader`, and move checking of the `rebaseDateTime` flag out of the internal loop.
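
The refactoring pattern is to test the flag once and then run a specialized loop. The sketch below shows the general idea with hypothetical names; the real reader works on Parquet column vectors rather than arrays, and the `rebase` function is passed in here only to keep the example self-contained.

```scala
// Sketch: convert milliseconds to microseconds, optionally rebasing each value.
def readMillisAsMicros(
    millis: Array[Long],
    rebaseDateTime: Boolean,
    rebase: Long => Long): Array[Long] = {
  val out = new Array[Long](millis.length)
  var i = 0
  // The flag is checked once, outside the per-value loops.
  if (rebaseDateTime) {
    while (i < millis.length) {
      out(i) = rebase(millis(i) * 1000L)
      i += 1
    }
  } else {
    while (i < millis.length) {
      out(i) = millis(i) * 1000L
      i += 1
    }
  }
  out
}
```

The loop body is duplicated, but neither loop pays for a branch on the flag per value.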

### Why are the changes needed?

To avoid any additional overhead of checking the SQL config `spark.sql.legacy.parquet.rebaseDateTime.enabled` introduced by the PR https://github.com/apache/spark/pull/27915.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running the test suite `ParquetIOSuite`.

Closes #27973 from MaxGekk/rebase-parquet-datetime-followup.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

`INSERT OVERWRITE DIRECTORY` can only use a file format (a class that implements `org.apache.spark.sql.execution.datasources.FileFormat`). This PR fixes it and makes other minor improvements.

### Why are the changes needed?

### Does this PR introduce any user-facing change?

### How was this patch tested?

Closes #27891 from cloud-fan/doc.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Improve `ScalaReflection` so that it skips erasure only for user-defined `AnyVal` types and still erases other types, e.g. `Any`. This brings two benefits:

1. Give a better encoder error message for some unsupported types, e.g. `Any`

2. Won't miss the walked path for the `AnyVal` type

### Why are the changes needed?

First, PR #15284 added encoder (serializerFor/deserializerFor) support for value classes, which extend `AnyVal`, by not erasing types. But this also introduced a problem: when a user tries to encode an unsupported type, e.g. `Any`, it fails with `java.lang.ClassNotFoundException: scala.Any` because `scala.Any` is not erased to `java.lang.Object`.

Also, the current `getClassNameFromType()` always erases types, which can lose the walked path for user-defined `AnyVal` types.

### Does this PR introduce any user-facing change?

Yes. For the test below:

```

case class Bar(i: Any)

case class Foo(i: Bar) extends AnyVal

test() {

implicitly[ExpressionEncoder[Foo]]

}

```

Before:

```

java.lang.ClassNotFoundException: scala.Any

at java.net.URLClassLoader.findClass(URLClassLoader.java:382)

at java.lang.ClassLoader.loadClass(ClassLoader.java:418)

at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:355)

...

```

After:

```

java.lang.UnsupportedOperationException: No Encoder found for Any

- field (class: "java.lang.Object", name: "i")

- field (class: "org.apache.spark.sql.catalyst.encoders.Bar", name: "i")

- root class: "org.apache.spark.sql.catalyst.encoders.Foo"

at org.apache.spark.sql.catalyst.ScalaReflection$.$anonfun$serializerFor$1(ScalaReflection.scala:561)

```

### How was this patch tested?

Added a unit test and tested manually.

Closes #27959 from Ngone51/impr_anyval.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to fix the rebasing of years that are leap years in the Julian calendar but not in the Proleptic Gregorian calendar. In the Julian calendar, every fourth year is a leap year, with a leap day added to February. In the Proleptic Gregorian calendar, every year that is exactly divisible by four is a leap year, except for years that are exactly divisible by 100; these centurial years are leap years only if they are exactly divisible by 400. As a result, the date **1000-02-29** exists in the Julian calendar but not in the Proleptic Gregorian calendar.
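
The two leap-year rules can be written down directly. The function names below are made up for this example, and the sketch assumes positive (AD) years.

```scala
// Julian calendar: every fourth year is a leap year.
def isJulianLeapYear(year: Int): Boolean = year % 4 == 0

// Proleptic Gregorian calendar: divisible by 4, except centurial years,
// which are leap years only when divisible by 400.
def isGregorianLeapYear(year: Int): Boolean =
  (year % 4 == 0 && year % 100 != 0) || year % 400 == 0

// Years that have February 29 in the Julian calendar only.
val julianOnlyLeapYears = (900 to 1600 by 100).filter { y =>
  isJulianLeapYear(y) && !isGregorianLeapYear(y)
}
```

The year 1000 falls into the Julian-only group, while 1200 and 1600 are leap years in both calendars.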

I modified `rebaseJulianToGregorianMicros()` and `rebaseJulianToGregorianDays()` in `DateTimeUtils` by passing 1 as the day of the month while forming `LocalDate` or `LocalDateTime`, and adding the number of days using the `plusDays()` method. For example, **1000-02-29** doesn't exist in the Proleptic Gregorian calendar, and `LocalDate.of(1000, 2, 29)` throws an exception. To avoid the issue, I build the `LocalDate.of(1000, 2, 1)` date and add 28 days. The `plusDays(28)` method produces the next valid date after `1000-02-28`, which is **1000-03-01**.
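
The behavior of the two `java.time` calls can be checked in isolation. This snippet only exercises the JDK API mentioned above and is not the code from `DateTimeUtils`; the variable names are illustrative.

```scala
import java.time.LocalDate
import scala.util.Try

// Constructing the date directly fails: 1000 is not a leap year in java.time.
val direct = Try(LocalDate.of(1000, 2, 29))

// Starting from the first day of the month and adding (dayOfMonth - 1) days
// rolls over to the next valid date instead of throwing.
val dayOfMonth = 29
val shifted = LocalDate.of(1000, 2, 1).plusDays(dayOfMonth - 1)
```

Here `direct` is a `Failure` wrapping a `DateTimeException`, and `shifted` is `1000-03-01`.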

### Why are the changes needed?

Before the changes, the `java.time.DateTimeException` exception is raised while loading the date `1000-02-29` from parquet files saved by Spark 2.4.5:

```scala

scala> spark.conf.set("spark.sql.legacy.parquet.rebaseDateTime.enabled", true)

scala> spark.read.parquet("/Users/maxim/tmp/before_1582/2_4_5_date_leap").show

20/03/21 03:03:59 ERROR Executor: Exception in task 0.0 in stage 3.0 (TID 3)

java.time.DateTimeException: Invalid date 'February 29' as '1000' is not a leap year

```

The parquet files were saved via the commands:

```shell

$ export TZ="America/Los_Angeles"

```

```scala

scala> spark.conf.set("spark.sql.session.timeZone", "America/Los_Angeles")

scala> val df = Seq(java.sql.Date.valueOf("1000-02-29")).toDF("dateS").select($"dateS".as("date"))

df: org.apache.spark.sql.DataFrame = [date: date]

scala> df.write.mode("overwrite").parquet("/Users/maxim/tmp/before_1582/2_4_5_date_leap")

scala> spark.read.parquet("/Users/maxim/tmp/before_1582/2_4_5_date_leap").show

+----------+

| date|

+----------+

|1000-02-29|

+----------+

```

### Does this PR introduce any user-facing change?

Yes, after the fix:

```scala

scala> spark.conf.set("spark.sql.session.timeZone", "America/Los_Angeles")

scala> spark.conf.set("spark.sql.legacy.parquet.rebaseDateTime.enabled", true)

scala> spark.read.parquet("/Users/maxim/tmp/before_1582/2_4_5_date_leap").show

+----------+

| date|

+----------+

|1000-03-01|

+----------+

```

### How was this patch tested?

Added tests to `DateTimeUtilsSuite`.

Closes #27974 from MaxGekk/julian-date-29-feb.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The cached RDD for the plan "select 1" stays in memory until the session closes. This cached data cannot be used since the view temp1 has been replaced by another plan. It's a memory leak.

We can reproduce it with the commands below:

```

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 3.0.0-SNAPSHOT

/_/

Using Scala version 2.12.10 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_201)

Type in expressions to have them evaluated.

Type :help for more information.

scala> spark.sql("create or replace temporary view temp1 as select 1")

scala> spark.sql("cache table temp1")

scala> spark.sql("create or replace temporary view temp1 as select 1, 2")

scala> spark.sql("cache table temp1")

scala> assert(spark.sharedState.cacheManager.lookupCachedData(sql("select 1, 2")).isDefined)

scala> assert(spark.sharedState.cacheManager.lookupCachedData(sql("select 1")).isDefined)

```

### Why are the changes needed?

Fix the memory leak, especially for long-running mode.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Added a unit test.

Closes #27185 from LantaoJin/SPARK-30494.

Authored-by: LantaoJin <jinlantao@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

1, remove the unused var `numFeatures`;

2, remove the computation of `numSamples` and `numClasses`, since they can be inferred directly from `counts: OpenHashMap[Double, Long]`
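
To see why the extra job is unnecessary, note that a label-to-count map already determines both values. The sketch uses `scala.collection.mutable.HashMap` as a stand-in for Spark's internal `OpenHashMap`, and the sample counts are invented for illustration.

```scala
import scala.collection.mutable

// Stand-in for `counts: OpenHashMap[Double, Long]`: label -> number of samples.
val counts = mutable.HashMap[Double, Long](0.0 -> 3L, 1.0 -> 2L, 2.0 -> 5L)

// Total number of samples is the sum of the per-label counts.
val numSamples: Long = counts.values.sum

// Number of classes is the number of distinct labels.
val numClasses: Int = counts.size
```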

### Why are the changes needed?

Remove an unnecessary job that computes `numSamples` and `numClasses`.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

existing testsuites

Closes #27979 from zhengruifeng/anova_followup.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

Use our own docs for data pattern instructions to replace java doc.

### Why are the changes needed?

fix doc

### Does this PR introduce any user-facing change?

yes. doc changed

### How was this patch tested?

pass jenkins

Closes #27975 from yaooqinn/SPARK-31189-2.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Implement a feature selector that removes all low-variance features. Features with a variance lower than the threshold will be removed. The default is to keep all features with non-zero variance, i.e. remove the features that have the same value in all samples.
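
A minimal sketch of the selection rule on plain Scala collections follows. It is not the selector added by this PR: the function names are hypothetical, the use of the sample (unbiased) variance is an assumption, and a feature is kept only when its variance is strictly greater than the threshold so that the default of 0 drops constant features.

```scala
// Sample (unbiased) variance of one feature column; the estimator is an assumption.
def variance(xs: Seq[Double]): Double = {
  val n = xs.length
  if (n < 2) 0.0
  else {
    val mean = xs.sum / n
    xs.map(x => (x - mean) * (x - mean)).sum / (n - 1)
  }
}

// Returns the indices of the features whose variance exceeds the threshold.
def selectFeatures(rows: Seq[Array[Double]], threshold: Double = 0.0): Seq[Int] = {
  val numFeatures = rows.headOption.map(_.length).getOrElse(0)
  (0 until numFeatures).filter(j => variance(rows.map(_(j))) > threshold)
}

// Feature 0 is constant, feature 1 varies a lot, feature 2 varies a little.
val rows = Seq(
  Array(1.0, 0.0, 5.0),
  Array(1.0, 1.0, 5.5),
  Array(1.0, 2.0, 5.0))
```

With the default threshold only the constant feature 0 is removed; raising the threshold to 0.5 also removes feature 2.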

### Why are the changes needed?

VarianceThreshold is a simple baseline approach to feature selection. It removes all features whose variance doesn’t meet some threshold. The idea is when a feature doesn’t vary much within itself, it generally has very little predictive power.

scikit-learn has implemented this selector:

https://scikit-learn.org/stable/modules/feature_selection.html#variance-threshold

### Does this PR introduce any user-facing change?

Yes.

### How was this patch tested?

Add new test suite.

Closes #27954 from huaxingao/variance-threshold.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: zhengruifeng <ruifengz@foxmail.com>

### What changes were proposed in this pull request?

This PR (SPARK-31101) proposes to upgrade Janino to 3.0.16, which was released recently.

* Merged pull request janino-compiler/janino#114 "Grow the code for relocatables, and do fixup, and relocate".

Please see the commit log.

- https://github.com/janino-compiler/janino/commits/3.0.16

You can see the changelog at http://janino-compiler.github.io/janino/changelog.html, though the release note for Janino 3.0.16 is actually incorrect.

### Why are the changes needed?

We received reports of user queries failing because Janino throws an error while compiling the generated code. The issue is janino-compiler/janino#113. It contains the generated code, the symptom (error), and an analysis of the bug, so please refer to it for more details.

Janino 3.0.16 contains the PR janino-compiler/janino#114, which enables Janino to compile such queries properly.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing UTs.

Closes #27932 from HeartSaVioR/SPARK-31101-janino-3.0.16.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

`KafkaDelegationTokenSuite` has been ignored because it showed flaky behaviour. In this PR I've changed how the test is executed and turned it on again. This PR contains the following:

* The test runs in separate JVM in order to avoid modified security context

* The body of the test runs in `testRetry`, which retries if it fails

* Additional logs to analyse possible failures

* Enhanced clean-up code
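
The retry idea from the list above can be illustrated with a generic helper. This is a hypothetical sketch, not the implementation of Spark's `testRetry`; the name `retry` and its signature are assumptions made for the example.

```scala
import scala.util.control.NonFatal

// Hypothetical helper: run `body`, retrying on non-fatal failures
// until it succeeds or the attempts are exhausted.
def retry[T](attempts: Int)(body: => T): T = {
  require(attempts > 0, "attempts must be positive")
  try body
  catch {
    // The last failure propagates once no attempts are left.
    case NonFatal(_) if attempts > 1 => retry(attempts - 1)(body)
  }
}
```

A body that fails twice and then succeeds completes under `retry(3)`, while the same body under `retry(2)` surfaces the second failure.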

### Why are the changes needed?

`KafkaDelegationTokenSuite` is ignored.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Executed the test in a loop 1k+ times in Jenkins (locally it is much harder to reproduce).

Closes #27877 from gaborgsomogyi/SPARK-30541.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This is a follow-up for SPARK-30715. It keeps the Kubernetes client version in sync between integration-tests and kubernetes/core.

### Why are the changes needed?

More than once, the Kubernetes client version has gone out of sync between integration tests and kubernetes/core. This PR brings them back in sync and adds a comment to avoid the need for this kind of follow-up in the future.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Manually.

Closes #27948 from ScrapCodes/follow-up-spark-30715.

Authored-by: Prashant Sharma <prashsh1@in.ibm.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Hive 2.3+ supports the `getTablesByType` API, which provides an efficient way to get Hive tables of a specific type. We have the following mappings when using `HiveExternalCatalog`:

```

CatalogTableType.EXTERNAL => HiveTableType.EXTERNAL_TABLE

CatalogTableType.MANAGED => HiveTableType.MANAGED_TABLE

CatalogTableType.VIEW => HiveTableType.VIRTUAL_VIEW

```

Without this API, we need to achieve the goal with `getTables` + `getTablesByName` + a filter on type.

This PR adds `getTablesByType` to `HiveShim`. For Hive versions that don't support this API, `UnsupportedOperationException` will be thrown, and the upper-level logic should catch the exception and fall back to the filter solution mentioned above.
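
The catch-and-fall-back pattern might look like the sketch below. The `MetastoreClient` trait and `listTablesByType` are hypothetical and much simpler than the real `HiveShim` and client interfaces; only the control flow is the point.

```scala
// Hypothetical minimal client interface; not the real HiveShim/HiveClient API.
trait MetastoreClient {
  def getTables(db: String): Seq[String]
  def getTableType(db: String, table: String): String
  // Throws UnsupportedOperationException on versions without the API.
  def getTablesByType(db: String, tableType: String): Seq[String]
}

def listTablesByType(
    client: MetastoreClient,
    db: String,
    tableType: String): Seq[String] =
  try {
    // Fast path: let the metastore filter by type.
    client.getTablesByType(db, tableType)
  } catch {
    case _: UnsupportedOperationException =>
      // Fallback: list all tables, then filter by type on the caller's side.
      client.getTables(db).filter(t => client.getTableType(db, t) == tableType)
  }
```

Callers get the same result on every supported version; only the number of metastore round trips differs.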

Since the JDK11-related fix in `Hive` is not released yet, manual tests against Hive 2.3.7-SNAPSHOT were done by following the instructions in SPARK-29245.

### Why are the changes needed?

This API will provide better usability and performance if we want to get a list of Hive tables with a specific type. For example, `HiveTableType.VIRTUAL_VIEW` corresponds to `CatalogTableType.VIEW`.

### Does this PR introduce any user-facing change?

No, this is a support function.

### How was this patch tested?

Added tests in VersionsSuite and manually ran the JDK11 test with the following settings:

- Hive 2.3.6 Metastore on JDK8

- Hive 2.3.7-SNAPSHOT library built from source of the Hive 2.3 branch

- Spark built with Hive 2.3.7-SNAPSHOT on jdk-11.0.6

Closes #27952 from Eric5553/GetTableByType.

Authored-by: Eric Wu <492960551@qq.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

Switch part of the BLAS level-1 routines (axpy, dot, scal(double, denseVector)) from the Java implementation to NativeBLAS when the vector size is greater than 256.

### Why are the changes needed?

In the current ML BLAS.scala, all level-1 routines are fixed to use the Java implementation. But NativeBLAS (Intel MKL, OpenBLAS) can bring up to an 11X performance improvement, based on a performance test that calls these methods directly. We should provide a way for users to take advantage of NativeBLAS for level-1 routines. Here we do it by switching these methods from f2jBLAS to NativeBLAS.

### Does this PR introduce any user-facing change?

Yes, the level-1 methods axpy, dot, and scal will switch to NativeBLAS when the vector has more than nativeL1Threshold (fixed value 256) elements, and will fall back to f2jBLAS if native BLAS is not properly configured on the system.
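
The dispatch rule can be sketched as follows. Everything except the threshold value of 256 is a stand-in: `L1Blas`, `JvmBlas`, and the `Option`-based fallback are hypothetical and only model the described behavior, not the code in BLAS.scala.

```scala
// Hypothetical backend interface; the real code delegates to BLAS libraries.
trait L1Blas {
  def ddot(n: Int, x: Array[Double], y: Array[Double]): Double
}

// Pure-JVM stand-in for the f2j implementation.
object JvmBlas extends L1Blas {
  def ddot(n: Int, x: Array[Double], y: Array[Double]): Double = {
    var sum = 0.0
    var i = 0
    while (i < n) {
      sum += x(i) * y(i)
      i += 1
    }
    sum
  }
}

val nativeL1Threshold = 256

// `nativeBlas` is None when no native library is configured.
def dot(x: Array[Double], y: Array[Double], nativeBlas: Option[L1Blas]): Double = {
  require(x.length == y.length, "vectors must have the same size")
  val backend =
    if (x.length > nativeL1Threshold) nativeBlas.getOrElse(JvmBlas) else JvmBlas
  backend.ddot(x.length, x, y)
}
```

Small vectors stay on the JVM implementation, presumably because the overhead of a native call outweighs the gain there; larger vectors use the native backend when one is available.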

### How was this patch tested?

Performance tests that call the level-1 routines directly.

Closes #27546 from yma11/SPARK-30773.

Lead-authored-by: yan ma <yan.ma@intel.com>

Co-authored-by: Ma Yan <yan.ma@intel.com>

Signed-off-by: Sean Owen <srowen@gmail.com>