### What changes were proposed in this pull request?

This PR group exception messages in `/core/src/main/scala/org/apache/spark/sql/execution/datasources`.

### Why are the changes needed?

It will largely help with standardization of error messages and its maintenance.

### Does this PR introduce _any_ user-facing change?

No. Error messages remain unchanged.

### How was this patch tested?

No new tests - pass all original tests to make sure it doesn't break any existing behavior.

Closes#31757 from beliefer/SPARK-33602.

Authored-by: gengjiaan <gengjiaan@360.cn>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR simplifies `Analyzer.resolveLiteralFunction` to always create the `Alias`. The caller side will remove the `Alias` if it's not necessary.

### Why are the changes needed?

code simplification.

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

existing tests

Closes#31844 from cloud-fan/minor.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This is a follow-up of https://github.com/apache/spark/pull/31808 and simplifies its fix to one line (excluding comments).

### Why are the changes needed?

code simplification

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

N/A

Closes#31843 from cloud-fan/simplify.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This PR proposes to follow Univocity's input buffer.

### Why are the changes needed?

- Firstly, it's best to trust their judgement on the default values. Also 128 is too low.

- Default values arguably have more test coverage in Univocity.

- It will also fix https://github.com/uniVocity/univocity-parsers/issues/449

- ^ is a regression compared to Spark 2.4

### Does this PR introduce _any_ user-facing change?

No. In addition, It fixes a regression.

### How was this patch tested?

Manually tested, and added a unit test.

Closes#31858 from HyukjinKwon/SPARK-34768.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR updates `InMemoryCatalog.tableExists` to return false if database doesn't exist, instead of failing. The new behavior is consistent with `HiveExternalCatalog` which is used in production, so this bug mostly only affects tests.

### Why are the changes needed?

bug fix

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

a new test

Closes#31860 from cloud-fan/catalog.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

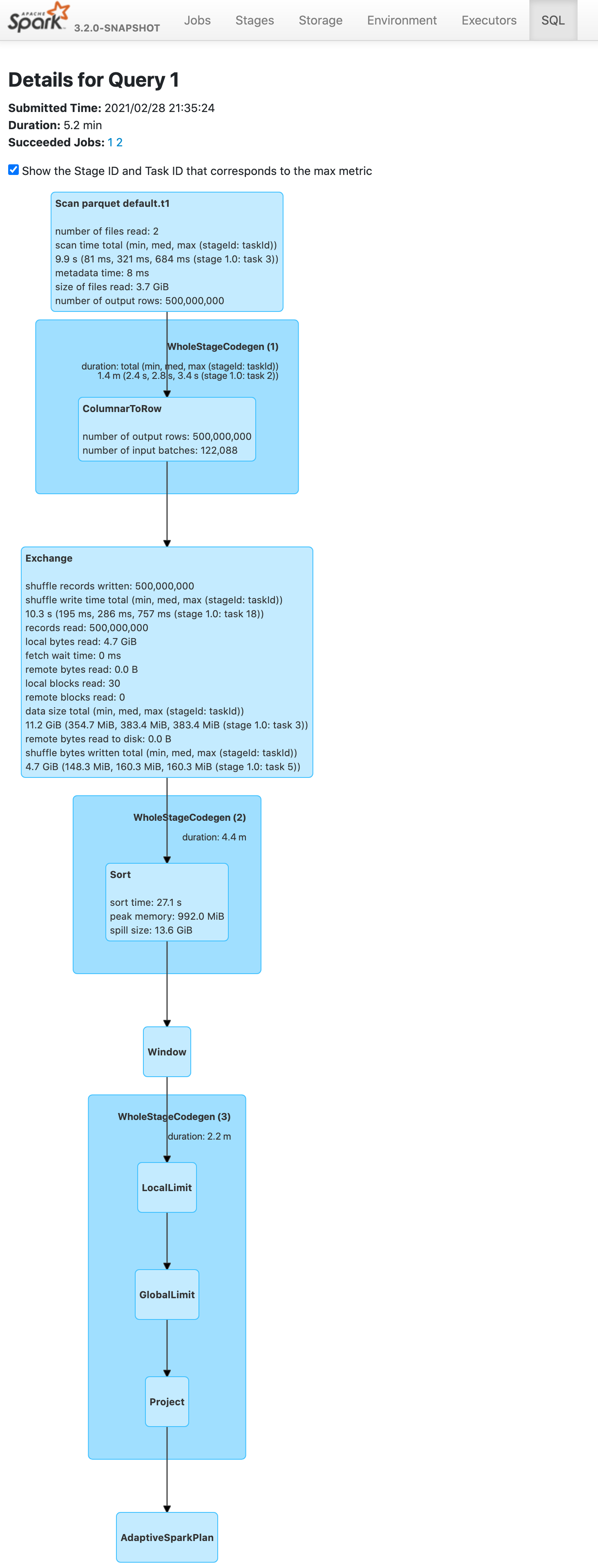

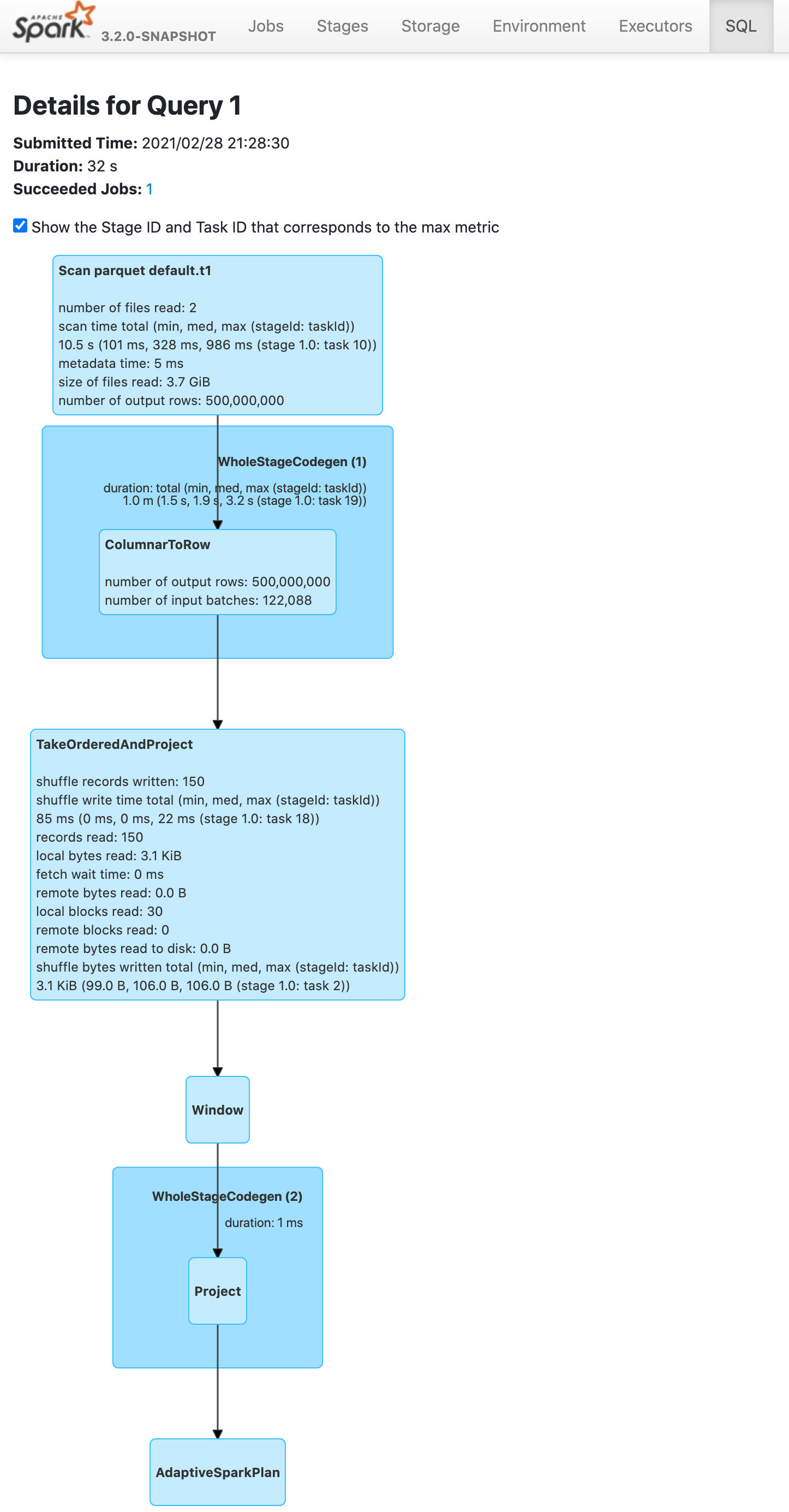

Push down limit through `Window` when the partitionSpec of all window functions is empty and the same order is used. This is a real case from production:

This pr support 2 cases:

1. All window functions have same orderSpec:

```sql

SELECT *, ROW_NUMBER() OVER(ORDER BY a) AS rn, RANK() OVER(ORDER BY a) AS rk FROM t1 LIMIT 5;

== Optimized Logical Plan ==

Window [row_number() windowspecdefinition(a#9L ASC NULLS FIRST, specifiedwindowframe(RowFrame, unboundedpreceding$(), currentrow$())) AS rn#4, rank(a#9L) windowspecdefinition(a#9L ASC NULLS FIRST, specifiedwindowframe(RowFrame, unboundedpreceding$(), currentrow$())) AS rk#5], [a#9L ASC NULLS FIRST]

+- GlobalLimit 5

+- LocalLimit 5

+- Sort [a#9L ASC NULLS FIRST], true

+- Relation default.t1[A#9L,B#10L,C#11L] parquet

```

2. There is a window function with a different orderSpec:

```sql

SELECT a, ROW_NUMBER() OVER(ORDER BY a) AS rn, RANK() OVER(ORDER BY b DESC) AS rk FROM t1 LIMIT 5;

== Optimized Logical Plan ==

Project [a#9L, rn#4, rk#5]

+- Window [rank(b#10L) windowspecdefinition(b#10L DESC NULLS LAST, specifiedwindowframe(RowFrame, unboundedpreceding$(), currentrow$())) AS rk#5], [b#10L DESC NULLS LAST]

+- GlobalLimit 5

+- LocalLimit 5

+- Sort [b#10L DESC NULLS LAST], true

+- Window [row_number() windowspecdefinition(a#9L ASC NULLS FIRST, specifiedwindowframe(RowFrame, unboundedpreceding$(), currentrow$())) AS rn#4], [a#9L ASC NULLS FIRST]

+- Project [a#9L, b#10L]

+- Relation default.t1[A#9L,B#10L,C#11L] parquet

```

### Why are the changes needed?

Improve query performance.

```scala

spark.range(500000000L).selectExpr("id AS a", "id AS b").write.saveAsTable("t1")

spark.sql("SELECT *, ROW_NUMBER() OVER(ORDER BY a) AS rowId FROM t1 LIMIT 5").show

```

Before this pr | After this pr

-- | --

|

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Unit test.

Closes#31691 from wangyum/SPARK-34575.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

For the following cases, ABS should throw exceptions since the results are out of the range of the result data types in ANSI mode.

```

SELECT abs(${Int.MinValue});

SELECT abs(${Long.MinValue});

```

### Why are the changes needed?

Better ANSI compliance

### Does this PR introduce _any_ user-facing change?

Yes, Abs throws an exception if input is out of range in ANSI mode

### How was this patch tested?

Unit test

Closes#31836 from gengliangwang/ansiAbs.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

For DDL commands like DROP VIEW, they don't really need to resolve the view (parse and analyze the view SQL text), they just need to get the view metadata.

This PR fixes the rule `ResolveTempViews` to only resolve the temp view for `UnresolvedRelation`. This also fixes a bug for DROP VIEW, as previously it tried to resolve the view and failed to drop invalid views.

### Why are the changes needed?

bug fix

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

new test

Closes#31853 from cloud-fan/view-resolve.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR removes one unused variable in `NewInstance.constructor`.

### Why are the changes needed?

This looks like a variable for debugging at the initial commit of SPARK-23584 .

- 1b08c4393c (diff-2a36e31684505fd22e2d12a864ce89fd350656d716a3f2d7789d2cdbe38e15fbR461)

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Pass the CIs.

Closes#31838 from dongjoon-hyun/minor-object.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Support `timestamp +/- year-month interval`. In the PR, I propose to introduce new binary expression `TimestampAddYMInterval` similarly to `DateAddYMInterval`. It invokes new method `timestampAddMonths` from `DateTimeUtils` by passing a timestamp as an offset in microseconds since the epoch, amount of months from the giveb year-month interval, and the time zone ID in which the operation is performed. The `timestampAddMonths()` method converts the input microseconds to a local timestamp, adds months to it, and converts the results back to an instant in microseconds at the given time zone.

### Why are the changes needed?

To conform the ANSI SQL standard which requires to support such operation over timestamps and intervals:

<img width="811" alt="Screenshot 2021-03-12 at 11 36 14" src="https://user-images.githubusercontent.com/1580697/111081674-865d4900-8515-11eb-86c8-3538ecaf4804.png">

### Does this PR introduce _any_ user-facing change?

Should not since new intervals have not been released yet.

### How was this patch tested?

By running new tests:

```

$ build/sbt "test:testOnly *DateTimeUtilsSuite"

$ build/sbt "test:testOnly *DateExpressionsSuite"

$ build/sbt "test:testOnly *ColumnExpressionSuite"

```

Closes#31832 from MaxGekk/timestamp-add-year-month-interval.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Max Gekk <max.gekk@gmail.com>

### What changes were proposed in this pull request?

This PR aims to make `ExpressionEncoderSuite` to use `deepEquals` instead of `equals` when `input` is `array of array`.

This comparison code itself was added by SPARK-11727 at Apache Spark 1.6.0.

### Why are the changes needed?

Currently, the interpreted mode fails for `array of array` because the following line is used.

```

Arrays.equals(b1.asInstanceOf[Array[AnyRef]], b2.asInstanceOf[Array[AnyRef]])

```

### Does this PR introduce _any_ user-facing change?

No. This is a test-only PR.

### How was this patch tested?

Pass the existing CIs.

Closes#31837 from dongjoon-hyun/SPARK-34743.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

In `Analyzer.resolveExpression`, we have a parameter to decide if we should remove unnecessary `Alias` or not. This is over complicated and we can always remove unnecessary `Alias`.

This PR simplifies this part and removes the parameter.

### Why are the changes needed?

code cleanup

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

existing tests

Closes#31758 from cloud-fan/resolve.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to cast the input float to double in the `SecondsToTimestamp` expression in the same way as in the `Cast` expression.

### Why are the changes needed?

To have the same results from `CAST(<float> AS TIMESTAMP)` and from `TIMESTAMP_SECONDS`:

```sql

spark-sql> SELECT CAST(16777215.0f AS TIMESTAMP);

1970-07-14 07:20:15

spark-sql> SELECT TIMESTAMP_SECONDS(16777215.0f);

1970-07-14 07:20:14.951424

```

### Does this PR introduce _any_ user-facing change?

Yes. After the changes:

```sql

spark-sql> SELECT TIMESTAMP_SECONDS(16777215.0f);

1970-07-14 07:20:15

```

### How was this patch tested?

By running new test:

```

$ build/sbt "test:testOnly *DateExpressionsSuite"

```

Closes#31831 from MaxGekk/adjust-SecondsToTimestamp.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

In non-ANSI mode, casting float to timestamp has different implementation for codegen on and off.

Codegen on:

1. Multiply float input by MICROS_PER_SECOND

2. Cast resulting float value to long

Codegen off:

1. CAST float input to double input

2. Multiply double input by MICROS_PER_SECOND

3. Cast resulting double value to long

In the PR, I propose to align to non-codegen code, and cast input float to double in codegen.

### Why are the changes needed?

This fixes the issue which is demonstrated by the code:

```sql

spark-sql> CREATE TEMP VIEW v1 AS SELECT 16777215.0f AS f;

spark-sql> SELECT * FROM v1;

1.6777215E7

spark-sql> SELECT CAST(f AS TIMESTAMP) FROM v1;

1970-07-14 07:20:15

spark-sql> CACHE TABLE v1;

spark-sql> SELECT * FROM v1;

1.6777215E7

spark-sql> SELECT CAST(f AS TIMESTAMP) FROM v1;

1970-07-14 07:20:14.951424

```

The result from the cached view **1970-07-14 07:20:14.951424** is different from un-cached view **1970-07-14 07:20:15**.

### Does this PR introduce _any_ user-facing change?

Yes. After the changes, the example above outputs the same timestamp for the cached view:

```sql

spark-sql> CACHE TABLE v1;

spark-sql> SELECT * FROM v1;

1.6777215E7

spark-sql> SELECT CAST(f AS TIMESTAMP) FROM v1;

1970-07-14 07:20:15

```

### How was this patch tested?

By running new test:

```

$ build/sbt "test:testOnly *CastSuite"

```

Closes#31819 from MaxGekk/fix-float-to-timestamp.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This patch proposes to fix incorrect parameter type for subexpression elimination under whole-stage.

### Why are the changes needed?

If the parameter is a byte array, the subexpression elimination under wholestage codegen will use incorrect parameter type and cause compile error. Although Spark can automatically fallback to interpreted mode, we should fix it.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Manually test with customer application. Unit test.

Closes#31814 from viirya/SPARK-34723.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Liang-Chi Hsieh <viirya@gmail.com>

### What changes were proposed in this pull request?

Support `date +/- year-month interval`. In the PR, I propose to re-use existing code from the `AddMonths` expression, and extract it to the common base class `AddMonthsBase`. That base class is used in new expression `DateAddYMInterval` and in the existing one `AddMonths` (the `add_months` function).

### Why are the changes needed?

To conform the ANSI SQL standard which requires to support such operation over dates and intervals:

<img width="811" alt="Screenshot 2021-03-12 at 11 36 14" src="https://user-images.githubusercontent.com/1580697/110914390-5f412480-8327-11eb-9f8b-e92e73c0b9cd.png">

### Does this PR introduce _any_ user-facing change?

Should not since new intervals have not been released yet.

### How was this patch tested?

By running new tests:

```

$ build/sbt "test:testOnly *ColumnExpressionSuite"

$ build/sbt "test:testOnly *DateExpressionsSuite"

```

Closes#31812 from MaxGekk/date-add-year-month-interval.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This bug was introduced by SPARK-23583 at Apache Spark 2.4.0.

This PR aims to use `getMethod` instead of `getDeclaredMethod`.

```scala

- obj.getClass.getDeclaredMethod(functionName, argClasses: _*)

+ obj.getClass.getMethod(functionName, argClasses: _*)

```

### Why are the changes needed?

`getDeclaredMethod` does not search the super class's method. To invoke `GenericArrayData.toIntArray`, we need to use `getMethod` because it's declared at the super class `ArrayData`.

```

[info] - encode/decode for array of int: [I74655d03 (interpreted path) *** FAILED *** (14 milliseconds)

[info] Exception thrown while decoding

[info] Converted: [0,1000000020,3,0,ffffff850000001f,4]

[info] Schema: value#680

[info] root

[info] -- value: array (nullable = true)

[info] |-- element: integer (containsNull = false)

[info]

[info]

[info] Encoder:

[info] class[value[0]: array<int>] (ExpressionEncoderSuite.scala:578)

[info] org.scalatest.exceptions.TestFailedException:

[info] at org.scalatest.Assertions.newAssertionFailedException(Assertions.scala:472)

[info] at org.scalatest.Assertions.newAssertionFailedException$(Assertions.scala:471)

[info] at org.scalatest.funsuite.AnyFunSuite.newAssertionFailedException(AnyFunSuite.scala:1563)

[info] at org.scalatest.Assertions.fail(Assertions.scala:949)

[info] at org.scalatest.Assertions.fail$(Assertions.scala:945)

[info] at org.scalatest.funsuite.AnyFunSuite.fail(AnyFunSuite.scala:1563)

[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.$anonfun$encodeDecodeTest$1(ExpressionEncoderSuite.scala:578)

[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.verifyNotLeakingReflectionObjects(ExpressionEncoderSuite.scala:656)

[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.$anonfun$testAndVerifyNotLeakingReflectionObjects$2(ExpressionEncoderSuite.scala:669)

[info] at org.apache.spark.sql.catalyst.plans.CodegenInterpretedPlanTest.$anonfun$test$4(PlanTest.scala:50)

[info] at org.apache.spark.sql.catalyst.plans.SQLHelper.withSQLConf(SQLHelper.scala:54)

[info] at org.apache.spark.sql.catalyst.plans.SQLHelper.withSQLConf$(SQLHelper.scala:38)

[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.withSQLConf(ExpressionEncoderSuite.scala:118)

[info] at org.apache.spark.sql.catalyst.plans.CodegenInterpretedPlanTest.$anonfun$test$3(PlanTest.scala:50)

...

[info] Cause: java.lang.RuntimeException: Error while decoding: java.lang.NoSuchMethodException: org.apache.spark.sql.catalyst.util.GenericArrayData.toIntArray()

[info] mapobjects(lambdavariable(MapObject, IntegerType, false, -1), assertnotnull(lambdavariable(MapObject, IntegerType, false, -1)), input[0, array<int>, true], None).toIntArray

[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoder$Deserializer.apply(ExpressionEncoder.scala:186)

[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.$anonfun$encodeDecodeTest$1(ExpressionEncoderSuite.scala:576)

[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.verifyNotLeakingReflectionObjects(ExpressionEncoderSuite.scala:656)

[info] at org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite.$anonfun$testAndVerifyNotLeakingReflectionObjects$2(ExpressionEncoderSuite.scala:669)

```

### Does this PR introduce _any_ user-facing change?

This causes a runtime exception when we use the interpreted mode.

### How was this patch tested?

Pass the modified unit test case.

Closes#31816 from dongjoon-hyun/SPARK-34724.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR fixes an issue that `sql` method in the following classes which take qualified names don't quote the qualified names properly.

* UnresolvedAttribute

* AttributeReference

* Alias

One instance caused by this issue is reported in SPARK-34626.

```

UnresolvedAttribute("a" :: "b" :: Nil).sql

`a.b` // expected: `a`.`b`

```

And other instances are like as follows.

```

UnresolvedAttribute("a`b"::"c.d"::Nil).sql

a`b.`c.d` // expected: `a``b`.`c.d`

AttributeReference("a.b", IntegerType)(qualifier = "c.d"::Nil).sql

c.d.`a.b` // expected: `c.d`.`a.b`

Alias(AttributeReference("a", IntegerType)(), "b.c")(qualifier = "d.e"::Nil).sql

`a` AS d.e.`b.c` // expected: `a` AS `d.e`.`b.c`

```

### Why are the changes needed?

This is a bug.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

New test.

Closes#31754 from sarutak/fix-qualified-names.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to override the `typeName()` method in `YearMonthIntervalType` and `DayTimeIntervalType`, and assign them names according to the ANSI SQL standard:

<img width="836" alt="Screenshot 2021-03-11 at 17 29 04" src="https://user-images.githubusercontent.com/1580697/110802854-a54aa980-828f-11eb-956d-dd4fbf14aa72.png">

but keep the type name as singular according existing naming convention for other types.

### Why are the changes needed?

To improve Spark SQL user experience, and have readable types in error messages.

### Does this PR introduce _any_ user-facing change?

Should not since the types has not been released yet.

### How was this patch tested?

By running the modified tests:

```

$ build/sbt "test:testOnly *ExpressionTypeCheckingSuite"

$ build/sbt "sql/testOnly *SQLQueryTestSuite -- -z windowFrameCoercion.sql"

$ build/sbt "sql/testOnly *SQLQueryTestSuite -- -z literals.sql"

```

Closes#31810 from MaxGekk/interval-types-name.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This is a bug caused by https://issues.apache.org/jira/browse/SPARK-31670 . We remove the `Alias` when resolving column references in grouping expressions, which breaks `ResolveCreateNamedStruct`

### Why are the changes needed?

bug fix

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

new tests

Closes#31808 from cloud-fan/bug.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

In the PR, I propose to especially handle the amount of seconds `-9223372036855` in `IntervalUtils. durationToMicros()`. Starting from the amount (any durations with the second field < `-9223372036855`), input durations cannot fit to `Long` in the conversion to microseconds. For example, the amount of microseconds = `Long.MinValue = -9223372036854775808` can be represented in two forms:

1. seconds = -9223372036854, nanoAdjustment = -775808, or

2. seconds = -9223372036855, nanoAdjustment = +224192

And the method `Duration.ofSeconds()` produces the last form but such form causes overflow while converting `-9223372036855` seconds to microseconds.

In the PR, I propose to convert the second form to the first one if the second field of input duration is equal to `-9223372036855`.

### Why are the changes needed?

The changes fix the issue demonstrated by the code:

```scala

scala> durationToMicros(microsToDuration(Long.MinValue))

java.lang.ArithmeticException: long overflow

at java.lang.Math.multiplyExact(Math.java:892)

at org.apache.spark.sql.catalyst.util.IntervalUtils$.durationToMicros(IntervalUtils.scala:782)

... 49 elided

```

The `durationToMicros()` method cannot handle valid output of `microsToDuration()`.

### Does this PR introduce _any_ user-facing change?

Should not since new interval types has not been released yet.

### How was this patch tested?

By running new UT from `IntervalUtilsSuite`.

Closes#31799 from MaxGekk/fix-min-duration.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add `Not(In)` and `Not(InSet)` pattern when convert filter to metastore.

### Why are the changes needed?

`NOT IN` is a useful condition to prune partition, it would be better to support it.

Technically, we can convert `c not in(x,y)` to `c != x and c != y`, then push it to metastore.

Avoid metastore overflow and respect the config `spark.sql.hive.metastorePartitionPruningInSetThreshold`, `Not(InSet)` won't push to metastore if it's value exceeds the threshold.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Add test.

Closes#31646 from ulysses-you/SPARK-34538.

Authored-by: ulysses-you <ulyssesyou18@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Extend the `Add`, `Subtract` and `UnaryMinus` expression to support `DayTimeIntervalType` and `YearMonthIntervalType` added by #31614.

Note: the expressions can throw the `overflow` exception independently from the SQL config `spark.sql.ansi.enabled`. In this way, the modified expressions always behave in the ANSI mode for the intervals.

### Why are the changes needed?

To conform to the ANSI SQL standard which defines `-/+` over intervals:

<img width="822" alt="Screenshot 2021-03-09 at 21 59 22" src="https://user-images.githubusercontent.com/1580697/110523128-bd50ea80-8122-11eb-9982-782da0088d27.png">

### Does this PR introduce _any_ user-facing change?

Should not since new types have not been released yet.

### How was this patch tested?

By running new tests in the test suites:

```

$ build/sbt "test:testOnly *ArithmeticExpressionSuite"

$ build/sbt "test:testOnly *ColumnExpressionSuite"

```

Closes#31789 from MaxGekk/add-subtruct-intervals.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

SPARK-23596 added `CodegenInterpretedPlanTest` at Apache Spark 2.4.0 in a wrong way because `withSQLConf` depends on the execution time `SQLConf.get` instead of `test` function declaration time. So, the following code executes the test twice without controlling the `CodegenObjectFactoryMode`. This PR aims to fix it correct and introduce a new function `testFallback`.

```scala

trait CodegenInterpretedPlanTest extends PlanTest {

override protected def test(

testName: String,

testTags: Tag*)(testFun: => Any)(implicit pos: source.Position): Unit = {

val codegenMode = CodegenObjectFactoryMode.CODEGEN_ONLY.toString

val interpretedMode = CodegenObjectFactoryMode.NO_CODEGEN.toString

withSQLConf(SQLConf.CODEGEN_FACTORY_MODE.key -> codegenMode) {

super.test(testName + " (codegen path)", testTags: _*)(testFun)(pos)

}

withSQLConf(SQLConf.CODEGEN_FACTORY_MODE.key -> interpretedMode) {

super.test(testName + " (interpreted path)", testTags: _*)(testFun)(pos)

}

}

}

```

### Why are the changes needed?

1. We need to use like the following.

```scala

super.test(testName + " (codegen path)", testTags: _*)(

withSQLConf(SQLConf.CODEGEN_FACTORY_MODE.key -> codegenMode) { testFun })(pos)

super.test(testName + " (interpreted path)", testTags: _*)(

withSQLConf(SQLConf.CODEGEN_FACTORY_MODE.key -> interpretedMode) { testFun })(pos)

```

2. After we fix this behavior with the above code, several test cases including SPARK-34596 and SPARK-34607 fail because they didn't work at both `CODEGEN` and `INTERPRETED` mode. Those test cases only work at `FALLBACK` mode. So, inevitably, we need to introduce `testFallback`.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Pass the CIs.

Closes#31766 from dongjoon-hyun/SPARK-34596-SPARK-34607.

Lead-authored-by: Dongjoon Hyun <dhyun@apple.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR adds a default null ordering to public `SortDirection` to match the Catalyst behavior.

### Why are the changes needed?

The SQL standard does not define the default null ordering for a sort direction. That's why it is up to a query engine to assign one. We need to standardize this in our public connector expressions to avoid ambiguity. That's why I propose to match the behavior in our Catalyst expressions.

### Does this PR introduce _any_ user-facing change?

Yes, it affects unreleased connector expression API.

### How was this patch tested?

Existing tests.

Closes#31580 from aokolnychyi/spark-34457.

Authored-by: Anton Okolnychyi <aokolnychyi@apple.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR proposes the following:

* `AlterViewAs.query` is currently analyzed in the physical operator `AlterViewAsCommand`, but it should be analyzed during the analysis phase.

* When `spark.sql.legacy.storeAnalyzedPlanForView` is set to true, store `TermporaryViewRelation` which wraps the analyzed plan, similar to #31273.

* Try to uncache the view you are altering.

### Why are the changes needed?

Analyzing a plan should be done in the analysis phase if possible.

Not uncaching the view (existing behavior) seems like a bug since the cache may not be used again.

### Does this PR introduce _any_ user-facing change?

Yes, now the view can be uncached if it's already cached.

### How was this patch tested?

Added new tests around uncaching.

The existing tests such as `SQLViewSuite` should cover the analysis changes.

Closes#31652 from imback82/alter_view_child.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR intends to remove unnecessary `SQLConf.withExistingConf` in `CastSuite`; since we've remove `ParVector ` in #31775, we no longer need to copy SQL configs into each thread env.

### Why are the changes needed?

Clean up the code.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Run the existing tests.

Closes#31785 from maropu/UpdateCastSuite.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add `DayTimeIntervalType` and `YearMonthIntervalType` to `DataTypeTestUtils.ordered`/`atomicTypes`, and implement values generation of those types in `LiteralGenerator`/`RandomDataGenerator`. In this way, the types will be tested automatically in:

1. ArithmeticExpressionSuite:

- "function least"

- "function greatest"

2. PredicateSuite

- "BinaryComparison consistency check"

- "AND, OR, EqualTo, EqualNullSafe consistency check"

3. ConditionalExpressionSuite

- "if"

4. RandomDataGeneratorSuite

- "Basic types"

5. CastSuite

- "null cast"

- "up-cast"

- "SPARK-27671: cast from nested null type in struct"

6. OrderingSuite

- "GenerateOrdering with DayTimeIntervalType"

- "GenerateOrdering with YearMonthIntervalType"

7. PredicateSuite

- "IN with different types"

8. UnsafeRowSuite

- "calling get(ordinal, datatype) on null columns"

9. SortSuite

- "sorting on YearMonthIntervalType ..."

- "sorting on DayTimeIntervalType ..."

### Why are the changes needed?

To improve test coverage.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

By running the affected test suites.

Closes#31782 from MaxGekk/test-interval-as-atomic.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR updates UnresolvedTableValuedFunction's name to be a FunctionIdentifier instead of a string.

### Why are the changes needed?

To make UnresolvedTableValuedFunction consistent with UnresolvedFunction that uses FunctionIdentifier as the function name.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Unit test.

Closes#31749 from allisonwang-db/spark-34627.

Authored-by: allisonwang-db <66282705+allisonwang-db@users.noreply.github.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

RewritePredicateSubquery Optimizer Rule must not update Filters without subqueries.

Following is one such example.

```

=== Applying Rule org.apache.spark.sql.catalyst.optimizer.RewritePredicateSubquery ===

Project [a#0] Project [a#0]

!+- Filter (((a#0 > 1) OR (b#1 > 2)) AND ((c#2 > 1) AND (d#3 > 2))) +- Filter ((((a#0 > 1) OR (b#1 > 2)) AND (c#2 > 1)) AND (d#3 > 2))

+- LocalRelation <empty>, [a#0, b#1, c#2, d#3] +- LocalRelation <empty>, [a#0, b#1, c#2, d#3]

```

### Why are the changes needed?

minor change.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Existing UTs pass.

Closes#31712 from Swinky/rewritePredicateFix.

Authored-by: Swinky <mannswinky@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to extend Spark SQL API to accept [`java.time.Period`](https://docs.oracle.com/javase/8/docs/api/java/time/Period.html) as an external type of recently added new Catalyst type - `YearMonthIntervalType` (see #31614). The Java class `java.time.Period` has similar semantic to ANSI SQL year-month interval type, and it is the most suitable to be an external type for `YearMonthIntervalType`. In more details:

1. Added `PeriodConverter` which converts `java.time.Period` instances to/from internal representation of the Catalyst type `YearMonthIntervalType` (to `Int` type). The `PeriodConverter` object uses new methods of `IntervalUtils`:

- `periodToMonths()` converts the input period to the total length in months. If this period is too large to fit `Int`, the method throws the exception `ArithmeticException`. **Note:** _the input period has "days" precision, the method just ignores the days unit._

- `monthToPeriod()` obtains a `java.time.Period` representing a number of months.

2. Support new type `YearMonthIntervalType` in `RowEncoder` via the methods `createDeserializerForPeriod()` and `createSerializerForJavaPeriod()`.

3. Extended the Literal API to construct literals from `java.time.Period` instances.

### Why are the changes needed?

1. To allow users parallelization of `java.time.Period` collections, and construct year-month interval columns. Also to collect such columns back to the driver side.

2. This will allow to write tests in other sub-tasks of SPARK-27790.

### Does this PR introduce _any_ user-facing change?

The PR extends existing functionality. So, users can parallelize instances of the `java.time.Duration` class and collect them back:

```scala

scala> val ds = Seq(java.time.Period.ofYears(10).withMonths(2)).toDS

ds: org.apache.spark.sql.Dataset[java.time.Period] = [value: yearmonthinterval]

scala> ds.collect

res0: Array[java.time.Period] = Array(P10Y2M)

```

### How was this patch tested?

- Added a few tests to `CatalystTypeConvertersSuite` to check conversion from/to `java.time.Period`.

- Checking row encoding by new tests in `RowEncoderSuite`.

- Making literals of `YearMonthIntervalType` are tested in `LiteralExpressionSuite`.

- Check collecting by `DatasetSuite` and `JavaDatasetSuite`.

- New tests in `IntervalUtilsSuites` to check conversions `java.time.Period` <-> months.

Closes#31765 from MaxGekk/java-time-period.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

When we do self join with transform in a CTE, spark will throw AnalysisException.

A simple way to reproduce is

```

create temporary view t as select * from values 0, 1, 2 as t(a);

WITH temp AS (

SELECT TRANSFORM(a) USING 'cat' AS (b string) FROM t

)

SELECT t1.b FROM temp t1 JOIN temp t2 ON t1.b = t2.b

```

before this patch, it throws

```

org.apache.spark.sql.AnalysisException: cannot resolve '`t1.b`' given input columns: [t1.b]; line 6 pos 41;

'Project ['t1.b]

+- 'Join Inner, ('t1.b = 't2.b)

:- SubqueryAlias t1

: +- SubqueryAlias temp

: +- ScriptTransformation [a#1], cat, [b#2], ScriptInputOutputSchema(List(),List(),Some(org.apache.hadoop.hive.serde2.DelimitedJSONSerDe),Some(org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe),List((field.delim, )),List((field.delim, )),Some(org.apache.hadoop.hive.ql.exec.TextRecordReader),Some(org.apache.hadoop.hive.ql.exec.TextRecordWriter),false)

: +- SubqueryAlias t

: +- Project [a#1]

: +- SubqueryAlias t

: +- LocalRelation [a#1]

+- SubqueryAlias t2

+- SubqueryAlias temp

+- ScriptTransformation [a#1], cat, [b#2], ScriptInputOutputSchema(List(),List(),Some(org.apache.hadoop.hive.serde2.DelimitedJSONSerDe),Some(org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe),List((field.delim, )),List((field.delim, )),Some(org.apache.hadoop.hive.ql.exec.TextRecordReader),Some(org.apache.hadoop.hive.ql.exec.TextRecordWriter),false)

+- SubqueryAlias t

+- Project [a#1]

+- SubqueryAlias t

+- LocalRelation [a#1]

```

### Does this PR introduce _any_ user-facing change?

NO

### How was this patch tested?

Add a UT

Closes#31752 from WangGuangxin/selfjoin-with-transform.

Authored-by: wangguangxin.cn <wangguangxin.cn@bytedance.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This pr remove `GlobalLimit` operator if its child max rows not larger than limit number. For example:

```

val testRelation = LocalRelation.fromExternalRows(Seq("a".attr.int, "b".attr.int, "c".attr.int), 1.to(10).map(_ => Row(1, 2, 3)) )

val query = GlobalLimit(100, testRelation)

```

We can remove this `GlobalLimit`.

### Why are the changes needed?

Further optimize the query.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Unit test.

Closes#31750 from wangyum/SPARK-34628.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Unify output of ShowCreateTableAsSerdeCommand and ShowCreateTableCommand

### Why are the changes needed?

Unify output of ShowCreateTableAsSerdeCommand and ShowCreateTableCommand

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Closes#31737 from AngersZhuuuu/SPARK-34621.

Authored-by: Angerszhuuuu <angers.zhu@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR intends to refactor the logic to resolve `__grouping_id` in the `Analyzer`; it moves the logic from `ResolveFunctions` to `ResolveReferences` (`resolveLiteralFunction`).

The original author of this PR is sqlwindspeaker (#30781).

Closes#30781.

### Why are the changes needed?

Code refactoring.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added tests in `AnalysisSuite`.

Closes#31751 from maropu/SPARK-22748.

Authored-by: suqilong <suqilong@qiyi.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

It's a bit confusing to see `resolveExpressionBottomUp` and `resolveExpressionTopDown`, which provide similar functionalities but with different tree traverse order. It turns out that the real difference between these 2 methods is: which attributes should the columns be resolved to? `resolveExpressionTopDown` resolves columns using output attributes of the plan children, `resolveExpressionBottomUp` resolves columns using output attributes of the plan itself.

This PR unifies `resolveExpressionBottomUp` and `resolveExpressionTopDown` and put the common logic in a new method, and let `resolveExpressionBottomUp` and `resolveExpressionTopDown` just call the new method. This PR also renames `resolveExpressionBottomUp` and `resolveExpressionTopDown` to make the difference clear.

### Why are the changes needed?

code cleanup

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

existing tests

Closes#31728 from cloud-fan/resolve.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

We have equality in `SqlBase.g4` for `RLIKE: 'RLIKE' | 'REGEXP';`

We seemed to miss adding` REGEXP` as a SQL function just like` RLIKE`

### Why are the changes needed?

symmetry and beauty

This is also a builtin function in Hive, we can reduce the migration pain for those users

### Does this PR introduce _any_ user-facing change?

yes new regexp function as an alias as rlike

### How was this patch tested?

new tests

Closes#31488 from yaooqinn/SPARK-34376.

Authored-by: Kent Yao <yao@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This PR intends to fix a bug of `objects.NewInstance` if a user runs Spark on jdk8u and a given `cls` in `NewInstance` is a deeply-nested inner class, e.g.,.

```

object OuterLevelWithVeryVeryVeryLongClassName1 {

object OuterLevelWithVeryVeryVeryLongClassName2 {

object OuterLevelWithVeryVeryVeryLongClassName3 {

object OuterLevelWithVeryVeryVeryLongClassName4 {

object OuterLevelWithVeryVeryVeryLongClassName5 {

object OuterLevelWithVeryVeryVeryLongClassName6 {

object OuterLevelWithVeryVeryVeryLongClassName7 {

object OuterLevelWithVeryVeryVeryLongClassName8 {

object OuterLevelWithVeryVeryVeryLongClassName9 {

object OuterLevelWithVeryVeryVeryLongClassName10 {

object OuterLevelWithVeryVeryVeryLongClassName11 {

object OuterLevelWithVeryVeryVeryLongClassName12 {

object OuterLevelWithVeryVeryVeryLongClassName13 {

object OuterLevelWithVeryVeryVeryLongClassName14 {

object OuterLevelWithVeryVeryVeryLongClassName15 {

object OuterLevelWithVeryVeryVeryLongClassName16 {

object OuterLevelWithVeryVeryVeryLongClassName17 {

object OuterLevelWithVeryVeryVeryLongClassName18 {

object OuterLevelWithVeryVeryVeryLongClassName19 {

object OuterLevelWithVeryVeryVeryLongClassName20 {

case class MalformedNameExample2(x: Int)

}}}}}}}}}}}}}}}}}}}}

```

The root cause that Kris (rednaxelafx) investigated is as follows (Kudos to Kris);

The reason why the test case above is so convoluted is in the way Scala generates the class name for nested classes. In general, Scala generates a class name for a nested class by inserting the dollar-sign ( `$` ) in between each level of class nesting. The problem is that this format can concatenate into a very long string that goes beyond certain limits, so Scala will change the class name format beyond certain length threshold.

For the example above, we can see that the first two levels of class nesting have class names that look like this:

```

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassName1$

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassName1$OuterLevelWithVeryVeryVeryLongClassName2$

```

If we leave out the fact that Scala uses a dollar-sign ( `$` ) suffix for the class name of the companion object, `OuterLevelWithVeryVeryVeryLongClassName1`'s full name is a prefix (substring) of `OuterLevelWithVeryVeryVeryLongClassName2`.

But if we keep going deeper into the levels of nesting, you'll find names that look like:

```

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassNam$$$$2a1321b953c615695d7442b2adb1$$$$ryVeryLongClassName8$OuterLevelWithVeryVeryVeryLongClassName9$OuterLevelWithVeryVeryVeryLongClassName10$

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassNam$$$$2a1321b953c615695d7442b2adb1$$$$ryVeryLongClassName8$OuterLevelWithVeryVeryVeryLongClassName9$OuterLevelWithVeryVeryVeryLongClassName10$OuterLevelWithVeryVeryVeryLongClassName11$

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassNam$$$$85f068777e7ecf112afcbe997d461b$$$$VeryLongClassName11$OuterLevelWithVeryVeryVeryLongClassName12$

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassNam$$$$85f068777e7ecf112afcbe997d461b$$$$VeryLongClassName11$OuterLevelWithVeryVeryVeryLongClassName12$OuterLevelWithVeryVeryVeryLongClassName13$

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassNam$$$$85f068777e7ecf112afcbe997d461b$$$$VeryLongClassName11$OuterLevelWithVeryVeryVeryLongClassName12$OuterLevelWithVeryVeryVeryLongClassName13$OuterLevelWithVeryVeryVeryLongClassName14$

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassNam$$$$5f7ad51804cb1be53938ea804699fa$$$$VeryLongClassName14$OuterLevelWithVeryVeryVeryLongClassName15$

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassNam$$$$5f7ad51804cb1be53938ea804699fa$$$$VeryLongClassName14$OuterLevelWithVeryVeryVeryLongClassName15$OuterLevelWithVeryVeryVeryLongClassName16$

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassNam$$$$5f7ad51804cb1be53938ea804699fa$$$$VeryLongClassName14$OuterLevelWithVeryVeryVeryLongClassName15$OuterLevelWithVeryVeryVeryLongClassName16$OuterLevelWithVeryVeryVeryLongClassName17$

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassNam$$$$69b54f16b1965a31e88968df1a58d8$$$$VeryLongClassName17$OuterLevelWithVeryVeryVeryLongClassName18$

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassNam$$$$69b54f16b1965a31e88968df1a58d8$$$$VeryLongClassName17$OuterLevelWithVeryVeryVeryLongClassName18$OuterLevelWithVeryVeryVeryLongClassName19$

org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite$OuterLevelWithVeryVeryVeryLongClassNam$$$$69b54f16b1965a31e88968df1a58d8$$$$VeryLongClassName17$OuterLevelWithVeryVeryVeryLongClassName18$OuterLevelWithVeryVeryVeryLongClassName19$OuterLevelWithVeryVeryVeryLongClassName20$

```

with a hash code in the middle and various levels of nesting omitted.

The `java.lang.Class.isMemberClass` method is implemented in JDK8u as:

http://hg.openjdk.java.net/jdk8u/jdk8u/jdk/file/tip/src/share/classes/java/lang/Class.java#l1425

```

/**

* Returns {code true} if and only if the underlying class

* is a member class.

*

* return {code true} if and only if this class is a member class.

* since 1.5

*/

public boolean isMemberClass() {

return getSimpleBinaryName() != null && !isLocalOrAnonymousClass();

}

/**

* Returns the "simple binary name" of the underlying class, i.e.,

* the binary name without the leading enclosing class name.

* Returns {code null} if the underlying class is a top level

* class.

*/

private String getSimpleBinaryName() {

Class<?> enclosingClass = getEnclosingClass();

if (enclosingClass == null) // top level class

return null;

// Otherwise, strip the enclosing class' name

try {

return getName().substring(enclosingClass.getName().length());

} catch (IndexOutOfBoundsException ex) {

throw new InternalError("Malformed class name", ex);

}

}

```

and the problematic code is `getName().substring(enclosingClass.getName().length())` -- if a class's enclosing class's full name is *longer* than the nested class's full name, this logic would end up going out of bounds.

The bug has been fixed in JDK9 by https://bugs.java.com/bugdatabase/view_bug.do?bug_id=8057919 , but still exists in the latest JDK8u release. So from the Spark side we'd need to do something to avoid hitting this problem.

### Why are the changes needed?

Bugfix on jdk8u.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added tests.

Closes#31733 from maropu/SPARK-34607.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

In the PR, I propose to extend Spark SQL API to accept [`java.time.Duration`](https://docs.oracle.com/javase/8/docs/api/java/time/Duration.html) as an external type of recently added new Catalyst type - `DayTimeIntervalType` (see #31614). The Java class `java.time.Duration` has similar semantic to ANSI SQL day-time interval type, and it is the most suitable to be an external type for `DayTimeIntervalType`. In more details:

1. Added `DurationConverter` which converts `java.time.Duration` instances to/from internal representation of the Catalyst type `DayTimeIntervalType` (to `Long` type). The `DurationConverter` object uses new methods of `IntervalUtils`:

- `durationToMicros()` converts the input duration to the total length in microseconds. If this duration is too large to fit `Long`, the method throws the exception `ArithmeticException`. **Note:** _the input duration has nanosecond precision, the method casts the nanos part to microseconds by dividing by 1000._

- `microsToDuration()` obtains a `java.time.Duration` representing a number of microseconds.

2. Support new type `DayTimeIntervalType` in `RowEncoder` via the methods `createDeserializerForDuration()` and `createSerializerForJavaDuration()`.

3. Extended the Literal API to construct literals from `java.time.Duration` instances.

### Why are the changes needed?

1. To allow users parallelization of `java.time.Duration` collections, and construct day-time interval columns. Also to collect such columns back to the driver side.

2. This will allow to write tests in other sub-tasks of SPARK-27790.

### Does this PR introduce _any_ user-facing change?

The PR extends existing functionality. So, users can parallelize instances of the `java.time.Duration` class and collect them back:

```Scala

scala> val ds = Seq(java.time.Duration.ofDays(10)).toDS

ds: org.apache.spark.sql.Dataset[java.time.Duration] = [value: daytimeinterval]

scala> ds.collect

res0: Array[java.time.Duration] = Array(PT240H)

```

### How was this patch tested?

- Added a few tests to `CatalystTypeConvertersSuite` to check conversion from/to `java.time.Duration`.

- Checking row encoding by new tests in `RowEncoderSuite`.

- Making literals of `DayTimeIntervalType` are tested in `LiteralExpressionSuite`

- Check collecting by `DatasetSuite` and `JavaDatasetSuite`.

Closes#31729 from MaxGekk/java-time-duration.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In ANSI mode, casting String to Boolean should throw an exception on parse error, instead of returning null

### Why are the changes needed?

For better ANSI compliance

### Does this PR introduce _any_ user-facing change?

Yes, in ANSI mode there will be an exception on parse failure of casting String value to Boolean type.

### How was this patch tested?

Unit tests.

Closes#31734 from gengliangwang/ansiCastToBoolean.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Gengliang Wang <gengliang.wang@databricks.com>

### What changes were proposed in this pull request?

`ResolveInsertInto.staticDeleteExpression` should use `UnresolvedAttribute.quoted` to create the delete expression so that we will treat the entire `attr.name` as a column name.

### Why are the changes needed?

When users use `dot` in a partition column name, queries like ```INSERT OVERWRITE $t1 PARTITION (`a.b` = 'a') (`c.d`) VALUES('b')``` is not working.

### Does this PR introduce _any_ user-facing change?

Without this test, the above query will throw

```

[info] org.apache.spark.sql.AnalysisException: cannot resolve '`a.b`' given input columns: [a.b, c.d];

[info] 'OverwriteByExpression RelationV2[a.b#17, c.d#18] default.tbl, ('a.b <=> cast(a as string)), false

[info] +- Project [a.b#19, ansi_cast(col1#16 as string) AS c.d#20]

[info] +- Project [cast(a as string) AS a.b#19, col1#16]

[info] +- LocalRelation [col1#16]

```

With the fix, the query will run correctly.

### How was this patch tested?

The new added test.

Closes#31713 from zsxwing/SPARK-34599.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to generate "stable" output attributes per the logical node of the DESCRIBE NAMESPACE command.

### Why are the changes needed?

This fixes the issue demonstrated by the example:

```

sql(s"CREATE NAMESPACE ns")

val description = sql(s"DESCRIBE NAMESPACE ns")

description.drop("name")

```

```

[info] org.apache.spark.sql.AnalysisException: Resolved attribute(s) name#74 missing from name#25,value#26 in operator !Project [name#74]. Attribute(s) with the same name appear in the operation: name. Please check if the right attribute(s) are used.;

[info] !Project [name#74]

[info] +- LocalRelation [name#25, value#26]

```

### Does this PR introduce _any_ user-facing change?

After this change user `drop()/add()` works well.

### How was this patch tested?

Added UT

Closes#31705 from AngersZhuuuu/SPARK-34577.

Authored-by: Angerszhuuuu <angers.zhu@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This is a followup of https://github.com/apache/spark/pull/27597 and simply apply the fix in the v2 table insertion code path.

### Why are the changes needed?

bug fix

### Does this PR introduce _any_ user-facing change?

yes, now v2 table insertion with static partitions also follow StoreAssignmentPolicy.

### How was this patch tested?

moved the test from https://github.com/apache/spark/pull/27597 to the general test suite `SQLInsertTestSuite`, which covers DS v2, file source, and hive tables.

Closes#31726 from cloud-fan/insert.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Use a non-recursive implementation for the function buildBalancedPredicate

### Why are the changes needed?

For better performance.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Existing unit tests.

Also, a quick benchmark:

```

test("buildBalancedPredicate") {

val expressions = (1 to 1000).map(_ => Literal(true))

val start = System.currentTimeMillis()

buildBalancedPredicate(expressions, And)

println(System.currentTimeMillis() - start)

}

```

Before: 47ms

After: 4ms

Closes#31724 from gengliangwang/nonrecursive.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Gengliang Wang <gengliang.wang@databricks.com>

### What changes were proposed in this pull request?

Add metadataOutput as a fallback to resolution.

Builds off https://github.com/apache/spark/pull/31654.

### Why are the changes needed?

The metadata columns could not be resolved via `df.col("metadataColName")` from the DataFrame API.

### Does this PR introduce _any_ user-facing change?

Yes, the metadata columns can now be resolved as described above.

### How was this patch tested?

Scala unit test.

Closes#31668 from karenfeng/spark-34555.

Authored-by: Karen Feng <karen.feng@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Hive support type constructed value as partition spec value, spark should support too.

### Why are the changes needed?

Support TypeConstructed partition spec value keep same with hive

### Does this PR introduce _any_ user-facing change?

Yes, user can use TypeConstruct value as partition spec value such as

```

CREATE TABLE t1(name STRING) PARTITIONED BY (part DATE)

INSERT INTO t1 PARTITION(part = date'2019-01-02') VALUES('a')

CREATE TABLE t2(name STRING) PARTITIONED BY (part TIMESTAMP)

INSERT INTO t2 PARTITION(part = timestamp'2019-01-02 11:11:11') VALUES('a')

CREATE TABLE t4(name STRING) PARTITIONED BY (part BINARY)

INSERT INTO t4 PARTITION(part = X'537061726B2053514C') VALUES('a')

```

### How was this patch tested?

Added UT

Closes#30421 from AngersZhuuuu/SPARK-33474.

Lead-authored-by: angerszhu <angers.zhu@gmail.com>

Co-authored-by: Angerszhuuuu <angers.zhu@gmail.com>

Co-authored-by: AngersZhuuuu <angers.zhu@gmail.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

spark.sql.adaptive.coalescePartitions.initialPartitionNum 200 -> (none)

spark.sql.adaptive.skewJoin.skewedPartitionFactor is 10 -> 5

### Why are the changes needed?

the wrong doc misguide people

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

passing doc

Closes#31717 from yaooqinn/minordoc0.

Authored-by: Kent Yao <yao@apache.org>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Enhance boolean simplification rule by handling following scenarios:

(((a && b) && a && (a && c))) => a && b && c)

(((a || b) || a || (a || c))) => a || b || c

### Why are the changes needed?

Minor improvement

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Added UTs

Closes#31318 from Swinky/booleansimplification.

Authored-by: Swinky <mannswinky@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to generate "stable" output attributes per the logical node of the DESCRIBE COLUMN command.

### Why are the changes needed?

This fixes the issue demonstrated by the example:

```

val tbl = "testcat.ns1.ns2.tbl"

sql(s"CREATE TABLE $tbl (c0 INT) USING _")

val description = sql(s"DESCRIBE TABLE $tbl c0")

description.drop("info_name")

```

```

[info] org.apache.spark.sql.AnalysisException: Resolved attribute(s) info_name#74 missing from info_name#25,info_value#26 in operator !Project [info_name#74]. Attribute(s) with the same name appear in the operation: info_name. Please check if the right attribute(s) are used.;

[info] !Project [info_name#74]

[info] +- LocalRelation [info_name#25, info_value#26]

```

### Does this PR introduce _any_ user-facing change?

After this change user `drop()/add()` works well.

### How was this patch tested?

Added UT

Closes#31696 from AngersZhuuuu/SPARK-34576.

Authored-by: Angerszhuuuu <angers.zhu@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to extend Catalyst's type system by two new types that conform to the SQL standard (see SQL:2016, section 4.6.3):

- `DayTimeIntervalType` represents the day-time interval type,

- `YearMonthIntervalType` for SQL year-month interval type.

This PR only adds the two new DataType implementations, and there will be more PRs as sub-tasks of SPARK-27790 to completely support the new ANSI interval types.

### Why are the changes needed?

Spark as it is today supports an INTERVAL datatype. However this type is of very limited use. Existing interval values cannot be compared with any other interval values, or persisted to storage. Spark users request to either implement new or expand existing built-in functions which produce some sort of measures for elapsed time, such as `DATEDIFF()`. Rather than work around the edges to fill the potholes of the existing INTERVAL data type, I would like to propose to deliver a proper ANSI compliant INTERVAL type that can be introduced with minimal incompatibility, is comparable and thus sortable, and can be persisted in tables.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

1. By checking coding style via:

```

$ ./dev/scalastyle

$ ./dev/lint-java

```

2. Run the test for the default sizes:

```

$ build/sbt "test:testOnly *DataTypeSuite"

```

Closes#31614 from MaxGekk/day-time-interval-type.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Use `Utils.getSimpleName` to avoid hitting `Malformed class name` error in `NewInstance.doGenCode`.

### Why are the changes needed?

On older JDK versions (e.g. JDK8u), nested Scala classes may trigger `java.lang.Class.getSimpleName` to throw an `java.lang.InternalError: Malformed class name` error.

In this particular case, creating an `ExpressionEncoder` on such a nested Scala class would create a `NewInstance` expression under the hood, which will trigger the problem during codegen.

Similar to https://github.com/apache/spark/pull/29050, we should use Spark's `Utils.getSimpleName` utility function in place of `Class.getSimpleName` to avoid hitting the issue.

There are two other occurrences of `java.lang.Class.getSimpleName` in the same file, but they're safe because they're only guaranteed to be only used on Java classes, which don't have this problem, e.g.:

```scala

// Make a copy of the data if it's unsafe-backed

def makeCopyIfInstanceOf(clazz: Class[_ <: Any], value: String) =

s"$value instanceof ${clazz.getSimpleName}? ${value}.copy() : $value"

val genFunctionValue: String = lambdaFunction.dataType match {

case StructType(_) => makeCopyIfInstanceOf(classOf[UnsafeRow], genFunction.value)

case ArrayType(_, _) => makeCopyIfInstanceOf(classOf[UnsafeArrayData], genFunction.value)

case MapType(_, _, _) => makeCopyIfInstanceOf(classOf[UnsafeMapData], genFunction.value)

case _ => genFunction.value

}

```

The Unsafe-* family of types are all Java types, so they're okay.

### Does this PR introduce _any_ user-facing change?

Fixes a bug that throws an error when using `ExpressionEncoder` on some nested Scala types, otherwise no changes.

### How was this patch tested?

Added a test case to `org.apache.spark.sql.catalyst.encoders.ExpressionEncoderSuite`. It'll fail on JDK8u before the fix, and pass after the fix.

Closes#31709 from rednaxelafx/spark-34596-master.

Authored-by: Kris Mok <kris.mok@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Call `toSeq` to fix Scala 2.13 build error.

### Why are the changes needed?

It is needed to fix 2.13 build error.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Existing tests.

Closes#31716 from viirya/SPARK-34548-followup.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This patch proposes to remove unnecessary children from Union under Distince and Deduplicate

### Why are the changes needed?

If there are any duplicate child of `Union` under `Distinct` and `Deduplicate`, it can be removed to simplify query plan.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Unit test

Closes#31656 from viirya/SPARK-34548.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Liang-Chi Hsieh <viirya@gmail.com>

### What changes were proposed in this pull request?

Today, child expressions may be resolved based on "real" or metadata output attributes. We should prefer the real attribute during resolution if one exists.

### Why are the changes needed?

Today, attempting to resolve an expression when there is a "real" output attribute and a metadata attribute with the same name results in resolution failure. This is likely unexpected, as the user may not know about the metadata attribute.

### Does this PR introduce _any_ user-facing change?

Yes. Previously, the user would see an error message when resolving a column with the same name as a "real" output attribute and a metadata attribute as below:

```

org.apache.spark.sql.AnalysisException: Reference 'index' is ambiguous, could be: testcat.ns1.ns2.tableTwo.index, testcat.ns1.ns2.tableOne.index.; line 1 pos 71

at org.apache.spark.sql.catalyst.expressions.package$AttributeSeq.resolve(package.scala:363)

at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.resolveChildren(LogicalPlan.scala:107)

```

Now, resolution succeeds and provides the "real" output attribute.

### How was this patch tested?

Added a unit test.

Closes#31654 from karenfeng/fallback-resolve-metadata.

Authored-by: Karen Feng <karen.feng@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### Why is this change being proposed?

This patch adds support for a new "product" aggregation function in `sql.functions` which multiplies-together all values in an aggregation group.

This is likely to be useful in statistical applications which involve combining probabilities, or financial applications that involve combining cumulative interest rates, but is also a versatile mathematical operation of similar status to `sum` or `stddev`. Other users [have noted](https://stackoverflow.com/questions/52991640/cumulative-product-in-spark) the absence of such a function in current releases of Spark.

This function is both much more concise than an expression of the form `exp(sum(log(...)))`, and avoids awkward edge-cases associated with some values being zero or negative, as well as being less computationally costly.

### Does this PR introduce _any_ user-facing change?

No - only adds new function.

### How was this patch tested?

Built-in tests have been added for the new `catalyst.expressions.aggregate.Product` class and its invocation via the (scala) `sql.functions.product` function. The latter, and the PySpark wrapper have also been manually tested in spark-shell and pyspark sessions. The SparkR wrapper is currently untested, and may need separate validation (I'm not an "R" user myself).

An illustration of the new functionality, within PySpark is as follows:

```

import pyspark.sql.functions as pf, pyspark.sql.window as pw

df = sqlContext.range(1, 17).toDF("x")

win = pw.Window.partitionBy(pf.lit(1)).orderBy(pf.col("x"))

df.withColumn("factorial", pf.product("x").over(win)).show(20, False)

+---+---------------+

|x |factorial |

+---+---------------+

|1 |1.0 |

|2 |2.0 |

|3 |6.0 |

|4 |24.0 |

|5 |120.0 |

|6 |720.0 |

|7 |5040.0 |

|8 |40320.0 |

|9 |362880.0 |

|10 |3628800.0 |

|11 |3.99168E7 |

|12 |4.790016E8 |

|13 |6.2270208E9 |

|14 |8.71782912E10 |

|15 |1.307674368E12 |

|16 |2.0922789888E13|

+---+---------------+

```

Closes#30745 from rwpenney/feature/agg-product.

Lead-authored-by: Richard Penney <rwp@rwpenney.uk>

Co-authored-by: Richard Penney <rwpenney@users.noreply.github.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

In the `SQLConf` object, the `sqlConfEntries` map is globally synchronized (it is a Java `Collections.synchronizedMap`): any operation, including a get, will need to acquire the lock.

An example of this is calling the `DatatType.sameType` method. This will trigger a check on `SQLConf.get.caseSensitiveAnalysis`. So every time we compare two datatypes with sameType, we hit a lock.

To avoid having multiple tasks locking on this, a better approach would be to use a map that does not lock on read (like a `ConcurrentHashMap`). This map implementation does not lock on read, and on write it only locks the map partially. The only lock that happens is on write on the same map key.

### Why are the changes needed?

Multiple tasks performing any operation that directly or indirectly trigger a query to the `SQLConf.sqlConfEntries` map, will require acquiring a global lock on that map. Something as easy as calling `DataType.sameType(...)` would be locking on the global `sqlConfEntries` lock of the `Collections.synchronizedMap`.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

No functionality change. Existing unit tests run normally.

Closes#31689 from gabrielenizzoli/SPARK-34573.

Authored-by: Gabriele Nizzoli <1545350+gabrielenizzoli@users.noreply.github.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

In the PR, I propose to generate unique attributes in the logical nodes of the `SHOW TABLES` command.

Also, this PR fixes similar issues in other logical nodes:

- ShowTableExtended

- ShowViews

- ShowTableProperties

- ShowFunctions

- ShowColumns

- ShowPartitions

- ShowNamespaces

### Why are the changes needed?

This fixes the issue which is demonstrated by the example below:

```scala

scala> val show1 = sql("SHOW TABLES IN ns1")

show1: org.apache.spark.sql.DataFrame = [namespace: string, tableName: string ... 1 more field]

scala> val show2 = sql("SHOW TABLES IN ns2")

show2: org.apache.spark.sql.DataFrame = [namespace: string, tableName: string ... 1 more field]

scala> show1.show

+---------+---------+-----------+

|namespace|tableName|isTemporary|

+---------+---------+-----------+

| ns1| tbl1| false|

+---------+---------+-----------+

scala> show2.show

+---------+---------+-----------+

|namespace|tableName|isTemporary|

+---------+---------+-----------+

| ns2| tbl2| false|

+---------+---------+-----------+

scala> show1.join(show2).where(show1("tableName") =!= show2("tableName")).show

org.apache.spark.sql.AnalysisException: Column tableName#17 are ambiguous. It's probably because you joined several Datasets together, and some of these Datasets are the same. This column points to one of the Datasets but Spark is unable to figure out which one. Please alias the Datasets with different names via `Dataset.as` before joining them, and specify the column using qualified name, e.g. `df.as("a").join(df.as("b"), $"a.id" > $"b.id")`. You can also set spark.sql.analyzer.failAmbiguousSelfJoin to false to disable this check.

at org.apache.spark.sql.execution.analysis.DetectAmbiguousSelfJoin$.apply(DetectAmbiguousSelfJoin.scala:157)

```

### Does this PR introduce _any_ user-facing change?

Yes. After the changes, the example above works as expected:

```scala

scala> show1.join(show2).where(show1("tableName") =!= show2("tableName")).show

+---------+---------+-----------+---------+---------+-----------+

|namespace|tableName|isTemporary|namespace|tableName|isTemporary|

+---------+---------+-----------+---------+---------+-----------+

| ns1| tbl1| false| ns2| tbl2| false|

+---------+---------+-----------+---------+---------+-----------+

```

### How was this patch tested?

By running the new test:

```

$ build/sbt -Phive-2.3 -Phive-thriftserver "test:testOnly *ShowTablesSuite"

```

Closes#31675 from MaxGekk/fix-output-attrs.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to generate "stable" output attributes per the logical node of the `DESCRIBE TABLE` command.

### Why are the changes needed?

This fixes the issue demonstrated by the example:

```scala

val tbl = "testcat.ns1.ns2.tbl"

sql(s"CREATE TABLE $tbl (c0 INT) USING _")

val description = sql(s"DESCRIBE TABLE $tbl")

description.drop("comment")

```

The `drop()` method fails with the error:

```

org.apache.spark.sql.AnalysisException: Resolved attribute(s) col_name#102,data_type#103 missing from col_name#29,data_type#30,comment#31 in operator !Project [col_name#102, data_type#103]. Attribute(s) with the same name appear in the operation: col_name,data_type. Please check if the right attribute(s) are used.;

!Project [col_name#102, data_type#103]

+- LocalRelation [col_name#29, data_type#30, comment#31]

at org.apache.spark.sql.catalyst.analysis.CheckAnalysis.failAnalysis(CheckAnalysis.scala:51)

at org.apache.spark.sql.catalyst.analysis.CheckAnalysis.failAnalysis$(CheckAnalysis.scala:50)

```

### Does this PR introduce _any_ user-facing change?

Yes. After the changes, `drop()`/`add()` works as expected:

```scala

description.drop("comment").show()

+---------------+---------+

| col_name|data_type|

+---------------+---------+

| c0| int|

| | |

| # Partitioning| |

|Not partitioned| |

+---------------+---------+

```

### How was this patch tested?

1. Run new test:

```

$ build/sbt -Phive-2.3 -Phive-thriftserver "test:testOnly *DataSourceV2SQLSuite"

```

2. Run existing test suite:

```

$ build/sbt -Phive-2.3 -Phive-thriftserver "test:testOnly *CatalogedDDLSuite"

```

Closes#31676 from MaxGekk/describe-table-drop-column.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR makes partition spec parsing respect case sensitive conf.

### Why are the changes needed?

When parsing the partition spec, Spark will call `org.apache.spark.sql.catalyst.parser.ParserUtils.checkDuplicateKeys` to check if there are duplicate partition column names in the list. But this method is always case sensitive and doesn't detect duplicate partition column names when using different cases.

### Does this PR introduce _any_ user-facing change?

Yep. This prevents users from writing incorrect queries such as `INSERT OVERWRITE t PARTITION (c='2', C='3') VALUES (1)` when they don't enable case sensitive conf.

### How was this patch tested?

The new added test will fail without this change.

Closes#31669 from zsxwing/SPARK-34556.

Authored-by: Shixiong Zhu <zsxwing@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Avro add zstandard codec since AVRO-2195. This pr add zstandard codec to Avro compression codec list.

### Why are the changes needed?

To make Avro support zstandard codec.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Unit test.

Closes#31673 from wangyum/SPARK-34479.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

To support +8:00 in Spark3 when execute sql

`select to_utc_timestamp("2020-02-07 16:00:00", "GMT+8:00")`

### Why are the changes needed?

+8:00 this format is supported in PostgreSQL,hive, presto, but not supported in Spark3

https://issues.apache.org/jira/browse/SPARK-34392

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

unit test

Closes#31624 from Karl-WangSK/zone.

Lead-authored-by: ShiKai Wang <wskqing@gmail.com>

Co-authored-by: Karl-WangSK <shikai.wang@linkflowtech.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Add more aggregate expressions to `EliminateDistinct` rule.

### Why are the changes needed?

Distinct aggregation can add a significant overhead. It's better to remove distinct whenever possible.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

UT

Closes#30999 from tanelk/SPARK-33971_eliminate_distinct.

Authored-by: tanel.kiis@gmail.com <tanel.kiis@gmail.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

Implement `ColumnarMap.copy()` by using the `copy()` method of `ColumnarArray`.

### Why are the changes needed?

To eliminate `java.lang.UnsupportedOperationException` while using `ColumnarMap`.

### Does this PR introduce _any_ user-facing change?

Yes

### How was this patch tested?

By running new tests in `ColumnarBatchSuite`.