### What changes were proposed in this pull request?

This PR removes floating-point `Sum`/`Average`/`CentralMomentAgg` from the order-insensitive aggregates in `EliminateSorts`.

This PR follows gatorsmile's suggestion: https://github.com/apache/spark/pull/26011#discussion_r344583899
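
Floating-point addition is not associative, so the order in which rows reach a floating-point sum can change the result. The following is a minimal standalone Java sketch of that effect, not Spark code; the class name `FloatSumOrder` is made up for illustration.

```java
public class FloatSumOrder {
    // Sum the values left to right, the way a sequential aggregate would.
    public static double sum(double[] values) {
        double total = 0.0;
        for (double v : values) {
            total += v;
        }
        return total;
    }

    public static void main(String[] args) {
        // The same three values in two different orders.
        double[] largeFirst = {1e100, 1.0, -1e100};
        double[] cancelFirst = {1e100, -1e100, 1.0};

        // 1.0 is absorbed by 1e100 before the large values cancel.
        System.out.println(sum(largeFirst));   // prints 0.0
        // The large values cancel first, so 1.0 survives.
        System.out.println(sum(cancelFirst));  // prints 1.0
    }
}
```

Because the two orders give different sums, a sort below such an aggregate cannot be treated as irrelevant to the result.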

### Why are the changes needed?

Bug fix.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Added tests in `SubquerySuite`.

Closes #26534 from maropu/SPARK-29343-FOLLOWUP.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add `DescribeNamespaceStatement`, `DescribeNamespace` and `DescribeNamespaceExec` to make `DESC DATABASE` look up the catalog like v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same catalog/namespace resolution behavior, to avoid confusing end-users.

### Does this PR introduce any user-facing change?

Yes. This adds `DESC NAMESPACE`, which behaves the same as `DESC DATABASE` and `DESC SCHEMA`.

### How was this patch tested?

New unit test

Closes #26513 from fuwhu/SPARK-29834.

Authored-by: fuwhu <bestwwg@163.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add ShowTableStatement and make SHOW TABLE EXTENDED go through the same catalog/table resolution framework of v2 commands.

The catalog does not currently provide the following methods, which are needed to implement a v2 command:

- catalog.getPartition

- catalog.getTempViewOrPermanentTableMetadata

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. e.g.

```sql
USE my_catalog
DESC t -- success and describe the table t from my_catalog
SHOW TABLE EXTENDED LIKE 't' -- report table not found as there is no table t in the session catalog
```

### Does this PR introduce any user-facing change?

Yes. When running `SHOW TABLE EXTENDED`, Spark fails the command if the current catalog is set to a v2 catalog, or the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26540 from planga82/feature/SPARK-29481_ShowTableExtended.

Authored-by: Pablo Langa <soypab@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

The current string-to-interval cast logic does not support inputs such as `cast('.111 second' as interval)`: parsing fails in the SIGN state and returns null, although the expected result is 00:00:00.111.

```sql
-- !query 63
select interval '.111 seconds'
-- !query 63 schema
struct<0.111 seconds:interval>
-- !query 63 output
0.111 seconds

-- !query 64
select cast('.111 seconds' as interval)
-- !query 64 schema
struct<CAST(.111 seconds AS INTERVAL):interval>
-- !query 64 output
NULL
```

### Why are the changes needed?

bug fix.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

Added unit tests.

Closes #26514 from yaooqinn/SPARK-29888.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR addresses issues where conflicting attributes in `Expand` are not correctly handled.

### Why are the changes needed?

```scala

val numsDF = Seq(1, 2, 3, 4, 5, 6).toDF("nums")

val cubeDF = numsDF.cube("nums").agg(max(lit(0)).as("agcol"))

cubeDF.join(cubeDF, "nums").show

```

fails with the following exception:

```
org.apache.spark.sql.AnalysisException:
Failure when resolving conflicting references in Join:
'Join Inner
:- Aggregate [nums#38, spark_grouping_id#36], [nums#38, max(0) AS agcol#35]
:  +- Expand [List(nums#3, nums#37, 0), List(nums#3, null, 1)], [nums#3, nums#38, spark_grouping_id#36]
:     +- Project [nums#3, nums#3 AS nums#37]
:        +- Project [value#1 AS nums#3]
:           +- LocalRelation [value#1]
+- Aggregate [nums#38, spark_grouping_id#36], [nums#38, max(0) AS agcol#58]
   +- Expand [List(nums#3, nums#37, 0), List(nums#3, null, 1)], [nums#3, nums#38, spark_grouping_id#36]
                                                                         ^^^^^^^
      +- Project [nums#3, nums#3 AS nums#37]
         +- Project [value#1 AS nums#3]
            +- LocalRelation [value#1]

Conflicting attributes: nums#38
```

As you can see from the above plan, `nums#38`, the output of `Expand` on the right side of `Join`, should have been rewritten to produce a new attribute. Since the conflict is not resolved in `Expand`, the failure happens upstream at `Aggregate`. This PR handles conflicting attributes in `Expand`.

### Does this PR introduce any user-facing change?

Yes, the previous example now shows the following output:

```
+----+-----+-----+
|nums|agcol|agcol|
+----+-----+-----+
|   1|    0|    0|
|   6|    0|    0|
|   4|    0|    0|
|   2|    0|    0|
|   5|    0|    0|
|   3|    0|    0|
+----+-----+-----+
```

### How was this patch tested?

Added new unit test.

Closes #26441 from imback82/spark-29682.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Make SparkSQL's `cast to boolean` behavior consistent with PostgreSQL when `spark.sql.dialect` is configured as PostgreSQL.

### Why are the changes needed?

By default, SparkSQL and PostgreSQL differ considerably in their cast behavior between types. We should make SparkSQL's cast behavior consistent with PostgreSQL when `spark.sql.dialect` is configured as PostgreSQL.

### Does this PR introduce any user-facing change?

Yes. If a user switches to the PostgreSQL dialect, they will now

* get an exception if they input an invalid string, e.g. "erut", whereas they previously got `null`;

* get an exception if they input `TimestampType`, `DateType`, `LongType`, `ShortType`, `ByteType`, `DecimalType`, `DoubleType`, `FloatType` values, whereas they previously got a `true` or `false` result.

Here is the evidence for those unsupported types from PostgreSQL:

timestamp:

```

postgres=# select cast(cast('2019-11-11' as timestamp) as boolean);

ERROR: cannot cast type timestamp without time zone to boolean

```

date:

```

postgres=# select cast(cast('2019-11-11' as date) as boolean);

ERROR: cannot cast type date to boolean

```

bigint:

```

postgres=# select cast(cast('20191111' as bigint) as boolean);

ERROR: cannot cast type bigint to boolean

```

smallint:

```

postgres=# select cast(cast(2019 as smallint) as boolean);

ERROR: cannot cast type smallint to boolean

```

bytea:

```

postgres=# select cast(cast('2019' as bytea) as boolean);

ERROR: cannot cast type bytea to boolean

```

decimal:

```

postgres=# select cast(cast('2019' as decimal) as boolean);

ERROR: cannot cast type numeric to boolean

```

float:

```

postgres=# select cast(cast('2019' as float) as boolean);

ERROR: cannot cast type double precision to boolean

```
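
The change for invalid strings such as "erut" can be sketched in plain Java. This is only an illustration under stated assumptions: the class `StrictBooleanCast`, its method names, and the sets of accepted literals are hypothetical and are not taken from Spark's or PostgreSQL's source.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Locale;
import java.util.Set;

public class StrictBooleanCast {
    // Assumed literal sets, for illustration only.
    private static final Set<String> TRUE_STRINGS =
        new HashSet<>(Arrays.asList("t", "true", "y", "yes", "1"));
    private static final Set<String> FALSE_STRINGS =
        new HashSet<>(Arrays.asList("f", "false", "n", "no", "0"));

    // Lenient behavior: an unrecognized string becomes null.
    public static Boolean lenientCast(String s) {
        String v = s.trim().toLowerCase(Locale.ROOT);
        if (TRUE_STRINGS.contains(v)) return Boolean.TRUE;
        if (FALSE_STRINGS.contains(v)) return Boolean.FALSE;
        return null;
    }

    // Strict behavior: an unrecognized string raises an error instead.
    public static boolean strictCast(String s) {
        Boolean result = lenientCast(s);
        if (result == null) {
            throw new IllegalArgumentException("invalid input syntax for type boolean: " + s);
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(lenientCast("erut")); // prints null
        System.out.println(strictCast("true"));  // prints true
        try {
            strictCast("erut");
        } catch (IllegalArgumentException e) {
            System.out.println(e.getMessage());
        }
    }
}
```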

### How was this patch tested?

Added and tested manually.

Closes #26463 from Ngone51/dev-postgre-cast2bool.

Authored-by: wuyi <ngone_5451@163.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR adds a SQL Conf: `spark.sql.streaming.stopActiveRunOnRestart`. When this conf is `true` (by default it is), an already running stream will be stopped, if a new copy gets launched on the same checkpoint location.

### Why are the changes needed?

In multi-tenant environments where you have multiple SparkSessions, you can accidentally start multiple copies of the same stream (i.e. streams using the same checkpoint location). This will cause all new instantiations of the new stream to fail. However, sometimes you may want to turn off the old stream, as the old stream may have turned into a zombie (you no longer have access to the query handle or SparkSession).

It would be nice to have a SQL flag that allows the stopping of the old stream for such zombie cases.
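
The intended behavior can be sketched with a small registry keyed by checkpoint location. This is a simplified illustration, not the actual `StreamingQueryManager` code; `ActiveRunRegistry`, `Run`, and `start` are hypothetical names, and the boolean constructor argument stands in for `spark.sql.streaming.stopActiveRunOnRestart`.

```java
import java.util.HashMap;
import java.util.Map;

public class ActiveRunRegistry {
    /** Minimal stand-in for a running streaming query (hypothetical type). */
    public static final class Run {
        public final String name;
        private boolean active = true;

        Run(String name) { this.name = name; }

        public boolean isActive() { return active; }

        void stop() { active = false; }
    }

    private final Map<String, Run> activeByCheckpoint = new HashMap<>();
    private final boolean stopActiveRunOnRestart;

    public ActiveRunRegistry(boolean stopActiveRunOnRestart) {
        this.stopActiveRunOnRestart = stopActiveRunOnRestart;
    }

    // Start a run on a checkpoint; an existing active run on the same
    // checkpoint is either stopped (flag on) or causes a failure (flag off).
    public synchronized Run start(String checkpointLocation, String name) {
        Run existing = activeByCheckpoint.get(checkpointLocation);
        if (existing != null && existing.isActive()) {
            if (!stopActiveRunOnRestart) {
                throw new IllegalStateException(
                    "Checkpoint " + checkpointLocation + " is already used by " + existing.name);
            }
            existing.stop();
        }
        Run run = new Run(name);
        activeByCheckpoint.put(checkpointLocation, run);
        return run;
    }

    public static void main(String[] args) {
        ActiveRunRegistry registry = new ActiveRunRegistry(true);
        Run old = registry.start("/tmp/checkpoint", "old");
        Run fresh = registry.start("/tmp/checkpoint", "new");
        System.out.println(old.isActive());   // prints false
        System.out.println(fresh.isActive()); // prints true
    }
}
```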

### Does this PR introduce any user-facing change?

Yes. Now by default, if you launch a new copy of an already running stream on a multi-tenant cluster, the existing stream will be stopped.

### How was this patch tested?

Unit tests in StreamingQueryManagerSuite

Closes #26225 from brkyvz/stopStream.

Lead-authored-by: Burak Yavuz <brkyvz@gmail.com>

Co-authored-by: Burak Yavuz <burak@databricks.com>

Signed-off-by: Burak Yavuz <brkyvz@gmail.com>

### What changes were proposed in this pull request?

Rename `EveryAgg`/`AnyAgg` to `BoolAnd`/`BoolOr`.

### Why are the changes needed?

Under ANSI mode, `every`, `any` and `some` are reserved keywords and can't be used as function names. `EveryAgg`/`AnyAgg` have several aliases, and I think it's better not to pick reserved keywords as the primary name.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

existing tests

Closes #26486 from cloud-fan/naming.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Rename the config to address the comment: https://github.com/apache/spark/pull/24594#discussion_r285431212

Improve the config description and provide a default value to simplify the code.

### Why are the changes needed?

make the config more understandable.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

existing tests

Closes #26395 from cloud-fan/config.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The current parse and analyze flow for DELETE is: (1) the SQL string is first parsed to `DeleteFromStatement`; (2) the `DeleteFromStatement` is then converted to `DeleteFromTable`. However, the SQL string can be parsed to `DeleteFromTable` directly, so `DeleteFromStatement` is redundant.

The same applies to UPDATE.

This PR removes the unnecessary `DeleteFromStatement` and `UpdateTableStatement`.

### Why are the changes needed?

This makes the code for DELETE and UPDATE cleaner and keeps it aligned with MERGE INTO.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing tests and new tests.

Closes #26464 from xianyinxin/SPARK-29835.

Authored-by: xy_xin <xianyin.xxy@alibaba-inc.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Currently, `SupportsNamespaces.dropNamespace` drops a namespace only if it is empty. Thus, to implement a cascading drop, one needs to iterate over all objects (tables, views, etc.) within the namespace (including its sub-namespaces recursively) and drop them one by one. This can hurt performance when there is a large number of objects.

Instead, this PR proposes to change the default behavior of dropping a namespace to cascading such that implementing cascading/non-cascading drop is simpler without performance penalties.

### Why are the changes needed?

The new behavior makes implementing cascading/non-cascading drop simple without performance penalties.

### Does this PR introduce any user-facing change?

Yes. The default behavior of `SupportsNamespaces.dropNamespace` is now cascading.

### How was this patch tested?

Added new unit tests.

Closes #26476 from imback82/drop_ns_cascade.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add 3 interval functions, `justify_days`, `justify_hours` and `justify_interval`, to support justifying interval values.

### Why are the changes needed?

For feature parity with PostgreSQL, add three interval functions to justify interval values.

Function | Return Type | Description | Example | Result
-- | -- | -- | -- | --
justify_days(interval) | interval | Adjust interval so 30-day time periods are represented as months | justify_days(interval '35 days') | 1 mon 5 days
justify_hours(interval) | interval | Adjust interval so 24-hour time periods are represented as days | justify_hours(interval '27 hours') | 1 day 03:00:00
justify_interval(interval) | interval | Adjust interval using justify_days and justify_hours, with additional sign adjustments | justify_interval(interval '1 mon -1 hour') | 29 days 23:00:00
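
The arithmetic behind the three examples can be sketched in plain Java. This is an illustration rather than the code added by this PR: the class `JustifySketch` is hypothetical, an interval is modeled as `{months, days, microseconds}`, a month is assumed to be 30 days, and overflow is ignored for brevity.

```java
public class JustifySketch {
    public static final long MICROS_PER_HOUR = 3_600_000_000L;
    public static final long MICROS_PER_DAY = 24L * MICROS_PER_HOUR;
    public static final long DAYS_PER_MONTH = 30L;

    // Move whole 30-day periods from days into months.
    public static long[] justifyDays(long months, long days, long micros) {
        return new long[] {months + days / DAYS_PER_MONTH, days % DAYS_PER_MONTH, micros};
    }

    // Move whole 24-hour periods from microseconds into days.
    public static long[] justifyHours(long months, long days, long micros) {
        return new long[] {months, days + micros / MICROS_PER_DAY, micros % MICROS_PER_DAY};
    }

    // Flatten everything to microseconds, then split again so that all
    // three fields end up with the same sign.
    public static long[] justifyInterval(long months, long days, long micros) {
        long totalMicros = (months * DAYS_PER_MONTH + days) * MICROS_PER_DAY + micros;
        long totalDays = totalMicros / MICROS_PER_DAY;
        return new long[] {
            totalDays / DAYS_PER_MONTH, totalDays % DAYS_PER_MONTH, totalMicros % MICROS_PER_DAY
        };
    }

    public static void main(String[] args) {
        long[] a = justifyDays(0, 35, 0);                     // 1 mon 5 days
        long[] b = justifyHours(0, 0, 27 * MICROS_PER_HOUR);  // 1 day 03:00:00
        long[] c = justifyInterval(1, 0, -MICROS_PER_HOUR);   // 29 days 23:00:00
        System.out.println(a[0] + " mon " + a[1] + " days");
        System.out.println(b[1] + " day " + b[2] / MICROS_PER_HOUR + " hours");
        System.out.println(c[1] + " days " + c[2] / MICROS_PER_HOUR + " hours");
    }
}
```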

### Does this PR introduce any user-facing change?

yes. new interval functions are added

### How was this patch tested?

Added unit tests.

Closes #26465 from yaooqinn/SPARK-29390.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

When creating v2 expressions, we have public Java APIs, as well as internal Scala APIs. All of these APIs take a string column name and parse it to `NamedReference`.

This is convenient for end-users, but not for internal development. For example, the query plan already contains the parsed partition/bucket column names, and it's tricky if we need to quote the names before creating v2 expressions.

This PR proposes to change the internal Scala APIs to take `NamedReference` directly, with a new method to create `NamedReference` with the exact name parts. The public Java APIs are not changed.

### Why are the changes needed?

fix a bug, and make it easier to create v2 expressions correctly in the future.

### Does this PR introduce any user-facing change?

yes, now v2 CREATE TABLE works as expected.

### How was this patch tested?

a new test

Closes #26425 from cloud-fan/extract.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Ryan Blue <blue@apache.org>

### What changes were proposed in this pull request?

```sql

-- !query 83

select -integer '7'

-- !query 83 schema

struct<7:int>

-- !query 83 output

7

-- !query 86

select -date '1999-01-01'

-- !query 86 schema

struct<DATE '1999-01-01':date>

-- !query 86 output

1999-01-01

-- !query 87

select -timestamp '1999-01-01'

-- !query 87 schema

struct<TIMESTAMP('1999-01-01 00:00:00'):timestamp>

-- !query 87 output

1999-01-01 00:00:00

```

The integer result should be -7. The date and timestamp results are confusing; these queries should throw exceptions instead.

### Why are the changes needed?

bug fix

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Added UTs.

Closes #26479 from yaooqinn/SPARK-29855.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Add ShowTablePropertiesStatement and make SHOW TBLPROPERTIES go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. e.g.

USE my_catalog

DESC t // success and describe the table t from my_catalog

SHOW TBLPROPERTIES t // report table not found as there is no table t in the session catalog

### Does this PR introduce any user-facing change?

Yes. When running `SHOW TBLPROPERTIES`, Spark fails the command if the current catalog is set to a v2 catalog, or the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26176 from planga82/feature/SPARK-29519_SHOW_TBLPROPERTIES_datasourceV2.

Authored-by: Pablo Langa <soypab@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This patch fixes the edge case of streaming left/right outer join described below:

Suppose the query is

`select * from A join B on A.id = B.id AND (A.ts <= B.ts AND B.ts <= A.ts + interval 5 seconds)`

and there are two rows, L1 (from A) and R1 (from B), such that L1.id = R1.id and L1.ts = R1.ts.

(This arises naturally from a self-join.)

Then Spark processes L1 and R1 as below:

- row L1 and row R1 are joined at batch 1

- row R1 is evicted at batch 2 due to join and watermark condition, whereas row L1 is not evicted

- row L1 is evicted at batch 3 due to join and watermark condition

When determining which outer rows to join with null, Spark relies on an assumption documented in a code comment:

```

Checking whether the current row matches a key in the right side state, and that key

has any value which satisfies the filter function when joined. If it doesn't,

we know we can join with null, since there was never (including this batch) a match

within the watermark period. If it does, there must have been a match at some point, so

we know we can't join with null.

```

However, as the edge case above shows, this assumption is not correct. Since we know of no assumption that allows this optimization without edge cases, we have to track whether each row has been matched before, and join it with a null row only when it has never been matched.

To track this, the patch adds a new state to the streaming join state manager that marks whether each row has been matched. We use this information when evicting rows that are candidates to be joined with null rows.

This approach introduces a new state format that is not compatible with the old one. Queries with the old state format will keep running, but they will still have the issue; to benefit from this patch, they must discard the checkpoint and rerun.

### Why are the changes needed?

This patch fixes a correctness issue.

### Does this PR introduce any user-facing change?

No from a compatibility viewpoint. However, we encourage end users to discard the old checkpoint and rerun the query if they run a stream-stream outer join query with an old checkpoint, which arguably makes the answer "yes".

### How was this patch tested?

Added UT which fails on current Spark and passes with this patch. Also passed existing streaming join UTs.

Closes #26108 from HeartSaVioR/SPARK-26154-shorten-alternative.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

### What changes were proposed in this pull request?

Enable nested schema pruning and nested pruning on expressions by default. We have been using these features in production at Apple for a couple of months with great success. For some jobs, we reduced the data read by more than 8x and ran 21x faster in wall-clock time.

### Why are the changes needed?

Better performance.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing tests.

Closes #26443 from dbtsai/enableNestedSchemaPrunning.

Authored-by: DB Tsai <d_tsai@apple.com>

Signed-off-by: DB Tsai <d_tsai@apple.com>

### What changes were proposed in this pull request?

With the latest string to literal optimization https://github.com/apache/spark/pull/26256, some interval strings cannot be cast when there are spaces between the sign and the unit value. After the `PARSE_SIGN` state, the parser goes directly to `PARSE_UNIT_VALUE`, which treats a space character as the end of the value. So when whitespace comes before the actual unit value, parsing fails. We should add a new state such as `TRIM_VALUE` to skip these spaces.

To reproduce on revisions after https://github.com/apache/spark/pull/26256 was merged:

```sql

select cast(v as interval) from values ('+ 1 second') t(v);

select cast(v as interval) from values ('- 1 second') t(v);

```
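
The idea of an extra whitespace-skipping state can be sketched with a toy state machine in plain Java. This is not the parser in `IntervalUtils`: the class `SignedIntervalSketch`, its state names, and the restriction to a single integer value followed by one unit are simplifying assumptions made for illustration.

```java
public class SignedIntervalSketch {
    private enum State { SIGN, TRIM_BEFORE_VALUE, VALUE, UNIT }

    /** Returns {signedValue, unit}, or null if the input is malformed. */
    public static String[] parse(String input) {
        String s = input.trim();
        State state = State.SIGN;
        boolean negative = false;
        boolean sawDigit = false;
        long value = 0;
        StringBuilder unit = new StringBuilder();
        int i = 0;
        while (i < s.length()) {
            char c = s.charAt(i);
            switch (state) {
                case SIGN:
                    // Consume an optional sign, then always move on.
                    if (c == '-') { negative = true; i++; }
                    else if (c == '+') { i++; }
                    state = State.TRIM_BEFORE_VALUE;
                    break;
                case TRIM_BEFORE_VALUE:
                    // The extra state: skip spaces between the sign and the digits.
                    if (c == ' ') { i++; } else { state = State.VALUE; }
                    break;
                case VALUE:
                    if (c >= '0' && c <= '9') {
                        value = value * 10 + (c - '0');
                        sawDigit = true;
                        i++;
                    } else if (c == ' ' && sawDigit) {
                        state = State.UNIT;
                        i++;
                    } else {
                        return null;
                    }
                    break;
                case UNIT:
                    if (c == ' ' && unit.length() == 0) { i++; }       // extra spaces before the unit
                    else if (Character.isLetter(c)) { unit.append(c); i++; }
                    else { return null; }
                    break;
            }
        }
        if (!sawDigit || unit.length() == 0) return null;
        return new String[] {Long.toString(negative ? -value : value), unit.toString()};
    }

    public static void main(String[] args) {
        String[] plus = parse("+ 1 second");
        String[] minus = parse("- 1 second");
        System.out.println(plus[0] + " " + plus[1]);   // prints 1 second
        System.out.println(minus[0] + " " + minus[1]); // prints -1 second
    }
}
```

Without the `TRIM_BEFORE_VALUE` step, the space after the sign would reach the value state before any digit and the input would be rejected.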

### Why are the changes needed?

bug fix

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

1. Unit tests

2. New benchmark test

Closes #26449 from yaooqinn/SPARK-29605.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Hive supports the `STORED AS` syntax for specifying a new file format:

```sql

CREATE TABLE tbl(a int) STORED AS TEXTFILE;

CREATE TABLE tbl2 LIKE tbl STORED AS PARQUET;

```

We add a similar syntax for Spark. We split this into two features:

1. specify a different table provider in CREATE TABLE LIKE

2. Hive compatibility

In this PR, we address the first one:

- [ ] Using `USING provider` to specify a different table provider in CREATE TABLE LIKE.

- [ ] Using `STORED AS file_format` in CREATE TABLE LIKE to address Hive compatibility.

### Why are the changes needed?

The `CREATE TABLE tb1 LIKE tb2` command creates an empty table tb1 based on the definition of table tb2. The most common use case is to create tb1 with the same schema as tb2. An inconvenience is that this command also copies the FileFormat from tb2 and cannot change the input/output format or serde. The ability to change the file format is useful in scenarios such as upgrading a table from a low-performance file format to a high-performance one (parquet, orc).

### Does this PR introduce any user-facing change?

Add a new syntax based on current CTL:

```sql

CREATE TABLE tbl2 LIKE tbl [USING parquet];

```

### How was this patch tested?

Modified some existing UTs.

Closes #26097 from LantaoJin/SPARK-29421.

Authored-by: lajin <lajin@ebay.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose new expression `MakeInterval` and register it as the function `make_interval`. The function accepts the following parameters:

- `years` - the number of years in the interval, positive or negative. The parameter is multiplied by 12, and added to interval's `months`.

- `months` - the number of months in the interval, positive or negative.

- `weeks` - the number of weeks in the interval, positive or negative. The parameter is multiplied by 7, and added to interval's `days`.

- `days` - the number of days in the interval, positive or negative.

- `hours`, `mins` - the number of hours and minutes. The parameters can be negative or positive. They are converted to microseconds and added to interval's `microseconds`.

- `seconds` - the number of seconds with the fractional part in microseconds precision. It is converted to microseconds, and added to total interval's `microseconds` as `hours` and `minutes`.

For example:

```sql

spark-sql> select make_interval(2019, 11, 1, 1, 12, 30, 01.001001);

2019 years 11 months 8 days 12 hours 30 minutes 1.001001 seconds

```
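
The way the parameters fold into the interval's `months`, `days` and `microseconds` can be sketched in plain Java. This is an illustration only, not the `MakeInterval` expression itself: the class `MakeIntervalSketch` and its method are hypothetical, the argument order is inferred from the example above, and the result is returned as `{months, days, microseconds}`.

```java
import java.math.BigDecimal;

public class MakeIntervalSketch {
    static final long MICROS_PER_SECOND = 1_000_000L;
    static final long MICROS_PER_MINUTE = 60L * MICROS_PER_SECOND;
    static final long MICROS_PER_HOUR = 60L * MICROS_PER_MINUTE;

    /** Returns {months, days, microseconds}; throws ArithmeticException on overflow. */
    public static long[] makeInterval(int years, int months, int weeks, int days,
                                      int hours, int mins, BigDecimal secs) {
        // years are multiplied by 12 and added to months
        long totalMonths = Math.addExact(Math.multiplyExact((long) years, 12L), (long) months);
        // weeks are multiplied by 7 and added to days
        long totalDays = Math.addExact(Math.multiplyExact((long) weeks, 7L), (long) days);
        // seconds keep microsecond precision; anything finer is truncated
        long micros = secs.movePointRight(6).longValue();
        micros = Math.addExact(micros, Math.multiplyExact((long) hours, MICROS_PER_HOUR));
        micros = Math.addExact(micros, Math.multiplyExact((long) mins, MICROS_PER_MINUTE));
        return new long[] {totalMonths, totalDays, micros};
    }

    public static void main(String[] args) {
        long[] r = makeInterval(2019, 11, 1, 1, 12, 30, new BigDecimal("1.001001"));
        System.out.println(r[0]); // prints 24239 (2019 years 11 months)
        System.out.println(r[1]); // prints 8
        System.out.println(r[2]); // prints 45001001001 (12 h 30 min 1.001001 s)
    }
}
```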

### Why are the changes needed?

- To improve user experience with Spark SQL, and allow users to make `INTERVAL` columns from other columns containing `years`, `months` ... `seconds`. Currently, users can make an `INTERVAL` column from other columns only by constructing a `STRING` column and casting it to `INTERVAL`. Have a look at the `IntervalBenchmark` as an example.

- To maintain feature parity with PostgreSQL which provides such function:

```sql

# SELECT make_interval(2019, 11);

make_interval

--------------------

2019 years 11 mons

```

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

- By new tests for the `MakeInterval` expression to `IntervalExpressionsSuite`

- By tests in `interval.sql`

Closes #26446 from MaxGekk/make_interval.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

- `SqlBase.g4` is modified to support a negative sign `-` in the interval type constructor from a string and in interval literals

- The interval is negated in `AstBuilder` if a sign is present.

- Interval related SQL statements are moved from `inputs/datetime.sql` to new file `inputs/interval.sql`

For example:

```sql

spark-sql> select -interval '-1 month 1 day -1 second';

1 months -1 days 1 seconds

spark-sql> select -interval -1 month 1 day -1 second;

1 months -1 days 1 seconds

```
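
Negation applies to each field of the interval independently, which is why `-1 month 1 day -1 second` becomes `1 months -1 days 1 seconds`. A minimal plain-Java sketch, assuming an interval is modeled as `{months, days, microseconds}` (the class `NegateIntervalSketch` is hypothetical, not the `AstBuilder` code):

```java
public class NegateIntervalSketch {
    /** Negates each field; throws ArithmeticException if a field cannot be negated. */
    public static long[] negate(int months, int days, long micros) {
        return new long[] {
            Math.negateExact(months), Math.negateExact(days), Math.negateExact(micros)
        };
    }

    public static void main(String[] args) {
        // interval '-1 month 1 day -1 second'
        long[] r = negate(-1, 1, -1_000_000L);
        System.out.println(r[0] + " months " + r[1] + " days " + r[2] + " microseconds");
        // prints 1 months -1 days 1000000 microseconds
    }
}
```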

### Why are the changes needed?

For feature parity with PostgreSQL which supports that:

```sql

# select -interval '-1 month 1 day -1 second';

?column?

-------------------------

1 mon -1 days +00:00:01

(1 row)

```

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

- Added tests to `ExpressionParserSuite`

- by `interval.sql`

Closes #26438 from MaxGekk/negative-interval.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

In the PR, I propose an enumeration for interval units with the values `YEAR`, `MONTH`, `WEEK`, `DAY`, `HOUR`, `MINUTE`, `SECOND`, `MILLISECOND`, `MICROSECOND` and `NANOSECOND`.

### Why are the changes needed?

- This should prevent typos in interval unit names

- Stronger type checking of unit parameters.
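
The benefit can be sketched with a plain Java enum. This is an illustration of the general technique, not the enumeration added by this PR; the class `IntervalUnitSketch` and the helper `parseUnit` are hypothetical.

```java
import java.util.Locale;

public class IntervalUnitSketch {
    public enum IntervalUnit {
        YEAR, MONTH, WEEK, DAY, HOUR, MINUTE, SECOND, MILLISECOND, MICROSECOND, NANOSECOND
    }

    /** Parses a unit name case-insensitively; unknown names fail fast. */
    public static IntervalUnit parseUnit(String name) {
        // Enum.valueOf throws IllegalArgumentException for names that are not constants.
        return IntervalUnit.valueOf(name.trim().toUpperCase(Locale.ROOT));
    }

    public static void main(String[] args) {
        System.out.println(parseUnit("hour")); // prints HOUR
        try {
            parseUnit("huor"); // a typo is rejected instead of silently accepted
        } catch (IllegalArgumentException e) {
            System.out.println("unknown unit");
        }
    }
}
```

Code that takes an `IntervalUnit` parameter instead of a `String` cannot be called with a misspelled unit at all, since the mistake becomes a compile error.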

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By existing test suites `ExpressionParserSuite` and `IntervalUtilsSuite`

Closes #26455 from MaxGekk/interval-unit-enum.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add AlterViewAsStatement and make ALTER VIEW ... QUERY go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. e.g.

```
USE my_catalog
DESC v // success and describe the view v from my_catalog
ALTER VIEW v SELECT 1 // report view not found as there is no view v in the session catalog
```

### Does this PR introduce any user-facing change?

Yes. When running ALTER VIEW ... QUERY, Spark fails the command if the current catalog is set to a v2 catalog, or the view name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26453 from huaxingao/spark-29730.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Remove the duplicate code

See the build failure: https://amplab.cs.berkeley.edu/jenkins/view/Spark%20QA%20Compile/job/spark-master-compile-maven-hadoop-3.2/986/

### Why are the changes needed?

Fix the compilation

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

The existing tests

Closes #26445 from gatorsmile/hotfixPraser.

Authored-by: Xiao Li <gatorsmile@gmail.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

This PR supports MERGE INTO in the parser and adds the corresponding logical plan. The SQL syntax looks like:

```

MERGE INTO [ds_catalog.][multi_part_namespaces.]target_table [AS target_alias]

USING [ds_catalog.][multi_part_namespaces.]source_table | subquery [AS source_alias]

ON <merge_condition>

[ WHEN MATCHED [ AND <condition> ] THEN <matched_action> ]

[ WHEN MATCHED [ AND <condition> ] THEN <matched_action> ]

[ WHEN NOT MATCHED [ AND <condition> ] THEN <not_matched_action> ]

```

where

```

<matched_action> =

DELETE |

UPDATE SET * |

UPDATE SET column1 = value1 [, column2 = value2 ...]

<not_matched_action> =

INSERT * |

INSERT (column1 [, column2 ...]) VALUES (value1 [, value2 ...])

```

### Why are the changes needed?

This is initial work toward introducing `MERGE INTO` support for the builtin datasource, and design work for `MERGE INTO` support in DSV2.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

New test cases.

Closes #26167 from xianyinxin/SPARK-28893.

Authored-by: xy_xin <xianyin.xxy@alibaba-inc.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Replace qualifiedName with multipartIdentifier in parser rules of DDL commands.

### Why are the changes needed?

Some DDL rules use `qualifiedName` for identifiers. We should use `multipartIdentifier` instead, because it can catch invalid identifiers such as `test-table` and `test-col`.

### Does this PR introduce any user-facing change?

Yes. Invalid identifiers such as `test-table` are now caught.

### How was this patch tested?

Unit tests.

Closes #26419 from viirya/SPARK-29680-followup2.

Lead-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Co-authored-by: Liang-Chi Hsieh <liangchi@uber.com>

Signed-off-by: Liang-Chi Hsieh <liangchi@uber.com>

### What changes were proposed in this pull request?

The interval type now supports `>`, `>=`, `<`, `<=`, `=`, `<=>`, `order by`, `min`, `max`, etc.

### Why are the changes needed?

Part of SPARK-27764 Feature Parity between PostgreSQL and Spark

### Does this PR introduce any user-facing change?

Yes, intervals can now be compared.

### How was this patch tested?

Added unit tests.

Closes #26337 from yaooqinn/SPARK-29679.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The `avg` aggregate now supports interval type values.

### Why are the changes needed?

Part of SPARK-27764 Feature Parity between PostgreSQL and Spark

### Does this PR introduce any user-facing change?

Yes, `avg` can now be computed on intervals.

### How was this patch tested?

Added unit tests.

Closes #26347 from yaooqinn/SPARK-29688.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

`org.apache.spark.sql.functions` does not have an `if` function, so conditions are expressed using the `when-otherwise` function. However, `If` (which is available in SQL) has more efficient codegen. This PR rewrites `when-otherwise` conditions to `If` where possible (`when-otherwise` with a single branch).

### Why are the changes needed?

It is an optimization enhancement. Here is a simple performance comparison (tested in local mode (with 4 cores)):

```

val df = spark.range(10000000000L).withColumn("x", rand)

val resultA = df.withColumn("r", when($"x" < 0.5, lit(1)).otherwise(lit(0))).agg(sum($"r"))

val resultB = df.withColumn("r", expr("if(x < 0.5, 1, 0)")).agg(sum($"r"))

resultA.collect() // takes 56s to finish

resultB.collect() // takes 30s to finish

```

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

New test is added.

Closes #26294 from davidvrba/spark-28477_rewriteCaseWhenToIf.

Authored-by: davidvrba <vrba.dave@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Move the `add`/`subtract`/`negate` methods from `CalendarInterval` to `IntervalUtils`.

### Why are the changes needed?

Suggested in https://github.com/apache/spark/pull/26410#discussion_r343125468

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

Added UTs and moved some existing ones.

Closes #26423 from yaooqinn/SPARK-29787.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

```java

public static final int YEARS_PER_DECADE = 10;

public static final int YEARS_PER_CENTURY = 100;

public static final int YEARS_PER_MILLENNIUM = 1000;

public static final byte MONTHS_PER_QUARTER = 3;

public static final int MONTHS_PER_YEAR = 12;

public static final byte DAYS_PER_WEEK = 7;

public static final long DAYS_PER_MONTH = 30L;

public static final long HOURS_PER_DAY = 24L;

public static final long MINUTES_PER_HOUR = 60L;

public static final long SECONDS_PER_MINUTE = 60L;

public static final long SECONDS_PER_HOUR = MINUTES_PER_HOUR * SECONDS_PER_MINUTE;

public static final long SECONDS_PER_DAY = HOURS_PER_DAY * SECONDS_PER_HOUR;

public static final long MILLIS_PER_SECOND = 1000L;

public static final long MILLIS_PER_MINUTE = SECONDS_PER_MINUTE * MILLIS_PER_SECOND;

public static final long MILLIS_PER_HOUR = MINUTES_PER_HOUR * MILLIS_PER_MINUTE;

public static final long MILLIS_PER_DAY = HOURS_PER_DAY * MILLIS_PER_HOUR;

public static final long MICROS_PER_MILLIS = 1000L;

public static final long MICROS_PER_SECOND = MILLIS_PER_SECOND * MICROS_PER_MILLIS;

public static final long MICROS_PER_MINUTE = SECONDS_PER_MINUTE * MICROS_PER_SECOND;

public static final long MICROS_PER_HOUR = MINUTES_PER_HOUR * MICROS_PER_MINUTE;

public static final long MICROS_PER_DAY = HOURS_PER_DAY * MICROS_PER_HOUR;

public static final long MICROS_PER_MONTH = DAYS_PER_MONTH * MICROS_PER_DAY;

/* 365.25 days per year assumes leap year every four years */

public static final long MICROS_PER_YEAR = (36525L * MICROS_PER_DAY) / 100;

public static final long NANOS_PER_MICROS = 1000L;

public static final long NANOS_PER_MILLIS = MICROS_PER_MILLIS * NANOS_PER_MICROS;

public static final long NANOS_PER_SECOND = MILLIS_PER_SECOND * NANOS_PER_MILLIS;

```

The constants above are defined across `IntervalUtils`, `DateTimeUtils`, and `CalendarInterval`; some of them are redundant, and some are cross-referenced.

### Why are the changes needed?

To simplify the code, improve consistency, and reduce risk.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Modified unit tests.

Closes #26399 from yaooqinn/SPARK-29757.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Remove the leading "interval" in `CalendarInterval.toString`.

### Why are the changes needed?

Although an "interval" prefix is allowed when casting a string to an interval, it's not recommended.

This is also consistent with pgsql:

```

cloud0fan=# select interval '1' day;

interval

----------

1 day

(1 row)

```

### Does this PR introduce any user-facing change?

Yes. When displaying a DataFrame with an interval-type column, the result is different.

### How was this patch tested?

Updated tests.

Closes #26401 from cloud-fan/interval.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose a new function `stringToInterval()` in `IntervalUtils` for converting `UTF8String` to `CalendarInterval`. The function is used in casting a `STRING` column to an `INTERVAL` column.

### Why are the changes needed?

The proposed implementation is ~10 times faster. For example, parsing 9 interval units on JDK 8:

Before:

```

9 units w/ interval 14004 14125 116 0.1 14003.6 0.0X

9 units w/o interval 13785 14056 290 0.1 13784.9 0.0X

```

After:

```

9 units w/ interval 1343 1344 1 0.7 1343.0 0.3X

9 units w/o interval 1345 1349 8 0.7 1344.6 0.3X

```

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

- By new tests for `stringToInterval` in `IntervalUtilsSuite`

- By existing tests

Closes #26256 from MaxGekk/string-to-interval.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Handle the inconsistent behavior of division by zero between literals and columns, and fix the null literal issue as well.

### Why are the changes needed?

Bug fix.

### 1. Handle inconsistent division by zero between literals and columns

```sql

-- !query 24

select

k,

v,

cast(k as interval) / v,

cast(k as interval) * v

from VALUES

('1 seconds', 1),

('2 seconds', 0),

('3 seconds', null),

(null, null),

(null, 0) t(k, v)

-- !query 24 schema

struct<k:string,v:int,divide_interval(CAST(k AS INTERVAL), CAST(v AS DOUBLE)):interval,multiply_interval(CAST(k AS INTERVAL), CAST(v AS DOUBLE)):interval>

-- !query 24 output

1 seconds 1 interval 1 seconds interval 1 seconds

2 seconds 0 interval 0 microseconds interval 0 microseconds

3 seconds NULL NULL NULL

NULL 0 NULL NULL

NULL NULL NULL NULL

```

```sql

-- !query 21

select interval '1 year 2 month' / 0

-- !query 21 schema

struct<divide_interval(interval 1 years 2 months, CAST(0 AS DOUBLE)):interval>

-- !query 21 output

NULL

```

In the first case (a column value), `interval '2 seconds' / 0` produces `interval 0 microseconds`.

In the second case (a literal), the result is `null`.

### 2. Null literal issues

```sql

-- !query 20

select interval '1 year 2 month' / null

-- !query 20 schema

struct<>

-- !query 20 output

org.apache.spark.sql.AnalysisException

cannot resolve '(interval 1 years 2 months / NULL)' due to data type mismatch: differing types in '(interval 1 years 2 months / NULL)' (interval and null).; line 1 pos 7

-- !query 22

select interval '4 months 2 weeks 6 days' * null

-- !query 22 schema

struct<>

-- !query 22 output

org.apache.spark.sql.AnalysisException

cannot resolve '(interval 4 months 20 days * NULL)' due to data type mismatch: differing types in '(interval 4 months 20 days * NULL)' (interval and null).; line 1 pos 7

-- !query 23

select null * interval '4 months 2 weeks 6 days'

-- !query 23 schema

struct<>

-- !query 23 output

org.apache.spark.sql.AnalysisException

cannot resolve '(NULL * interval 4 months 20 days)' due to data type mismatch: differing types in '(NULL * interval 4 months 20 days)' (null and interval).; line 1 pos 7

```

Dividing or multiplying by a null literal raises an error, whereas a null column value is handled fine, as in the first case.

### Does this PR introduce any user-facing change?

No. Arguably yes, but this is just a follow-up.

### How was this patch tested?

Added unit tests.

cc cloud-fan MaxGekk maropu

Closes #26410 from yaooqinn/SPARK-29387.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

During array creation, if `CreateArray` does not get any children from which to set the array's data type, it creates an array of null type.

### Why are the changes needed?

When an empty array is created, it should be declared as `array<null>`.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Tested manually

Closes #26324 from amanomer/29462.

Authored-by: Aman Omer <amanomer1996@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This patch removes v1 ALTER TABLE CHANGE COLUMN syntax.

### Why are the changes needed?

Since in v2 we have ALTER TABLE CHANGE COLUMN and ALTER TABLE RENAME COLUMN, this old syntax is not necessary now and can be confusing.

The v2 ALTER TABLE CHANGE COLUMN should fall back to v1 AlterTableChangeColumnCommand (#26354).

### Does this PR introduce any user-facing change?

Yes, the old v1 ALTER TABLE CHANGE COLUMN syntax is removed.

### How was this patch tested?

Unit tests.

Closes #26338 from viirya/SPARK-29680.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Added new expressions `MultiplyInterval` and `DivideInterval` to multiply/divide an interval by a numeric. Updated `TypeCoercion.DateTimeOperations` to turn the `Multiply`/`Divide` expressions of `CalendarIntervalType` and `NumericType` to `MultiplyInterval`/`DivideInterval`.

To support new operations, added new methods `multiply()` and `divide()` to `CalendarInterval`.
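
As a rough illustration (not the actual `CalendarInterval` code), the sketch below models an interval as months plus microseconds and scales both fields by a double, spilling fractional months into microseconds. The class name `IntervalArithmeticSketch`, the 30-days-per-month conversion, the rounding choice, and throwing on division by zero are all assumptions made for this example.

```java
// Simplified stand-in for an interval with months and microseconds.
// This is NOT Spark's CalendarInterval; names and rounding are assumptions.
final class IntervalArithmeticSketch {
    static final long MICROS_PER_DAY = 24L * 60 * 60 * 1_000_000L;
    // Assumes 30 days per month when converting fractional months.
    static final long MICROS_PER_MONTH = 30L * MICROS_PER_DAY;

    final int months;
    final long microseconds;

    IntervalArithmeticSketch(int months, long microseconds) {
        this.months = months;
        this.microseconds = microseconds;
    }

    // Keeps whole months and moves the fractional month part into microseconds.
    static IntervalArithmeticSketch fromDoubles(double months, double micros) {
        int wholeMonths = Math.toIntExact((long) months); // truncates toward zero
        double spilledMicros = (months - wholeMonths) * MICROS_PER_MONTH;
        return new IntervalArithmeticSketch(wholeMonths, Math.round(micros + spilledMicros));
    }

    IntervalArithmeticSketch multiply(double num) {
        return fromDoubles(num * months, num * microseconds);
    }

    IntervalArithmeticSketch divide(double num) {
        if (num == 0) {
            // Assumption: this sketch rejects division by zero outright.
            throw new ArithmeticException("divide by zero");
        }
        return fromDoubles(months / num, microseconds / num);
    }

    public static void main(String[] args) {
        // 1 hour 30 minutes = 5,400,000,000 microseconds
        IntervalArithmeticSketch i = new IntervalArithmeticSketch(0, 5_400_000_000L);
        // 8100000000 microseconds, i.e. 2 hours 15 minutes
        System.out.println(i.multiply(1.5).microseconds);
    }
}
```

With these assumptions, `interval 1 hour / 1.5` comes out as 2,400,000,000 microseconds (40 minutes), which matches the PostgreSQL output quoted in this description.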

### Why are the changes needed?

- To maintain feature parity with PostgreSQL which supports multiplication and division of intervals by doubles:

```sql

# select interval '1 hour' / double precision '1.5';

?column?

----------

00:40:00

```

- To conform to the SQL standard, which defines those operations: `numeric * interval`, `interval * numeric` and `interval / numeric`. See [4.5.3 Operations involving datetimes and intervals](http://www.contrib.andrew.cmu.edu/~shadow/sql/sql1992.txt).

- Improve Spark SQL UX and allow users to adjust interval columns. For example:

```sql

spark-sql> select (timestamp'now' - timestamp'yesterday') * 1.3;

interval 2 days 10 hours 39 minutes 38 seconds 568 milliseconds 900 microseconds

```

### Does this PR introduce any user-facing change?

Yes. Previously, the following query failed with an error:

```sql

spark-sql> select interval 1 hour 30 minutes * 1.5;

Error in query: cannot resolve '(interval 1 hours 30 minutes * 1.5BD)' due to data type mismatch: differing types in '(interval 1 hours 30 minutes * 1.5BD)' (interval and decimal(2,1)).; line 1 pos 7;

```

After:

```sql

spark-sql> select interval 1 hour 30 minutes * 1.5;

interval 2 hours 15 minutes

```

### How was this patch tested?

- Added tests for the `multiply()` and `divide()` methods to `CalendarIntervalSuite.java`

- New test suite `IntervalExpressionsSuite`

- Added tests for `Multiply` -> `MultiplyInterval` and `Divide` -> `DivideInterval` in `TypeCoercionSuite`

- Updated `datetime.sql`

Closes #26132 from MaxGekk/interval-mul-div.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add AlterTableSerDePropertiesStatement and make ALTER TABLE ... SET SERDE/SERDEPROPERTIES go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. e.g.

```

USE my_catalog

DESC t // success and describe the table t from my_catalog

ALTER TABLE t SET SERDE 'org.apache.class' // report table not found as there is no table t in the session catalog

```

### Does this PR introduce any user-facing change?

Yes. When running ALTER TABLE ... SET SERDE/SERDEPROPERTIES, Spark fails the command if the current catalog is set to a v2 catalog, or the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26374 from huaxingao/spark_29695.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR introduces a new SQL command: `SHOW CURRENT NAMESPACE`.

### Why are the changes needed?

Datasource V2 supports multiple catalogs/namespaces and having `SHOW CURRENT NAMESPACE` to retrieve the current catalog/namespace info would be useful.

### Does this PR introduce any user-facing change?

Yes, the user can perform the following:

```

scala> spark.sql("SHOW CURRENT NAMESPACE").show

+-------------+---------+

| catalog|namespace|

+-------------+---------+

|spark_catalog| default|

+-------------+---------+

scala> spark.sql("USE testcat.ns1.ns2").show

scala> spark.sql("SHOW CURRENT NAMESPACE").show

+-------+---------+

|catalog|namespace|

+-------+---------+

|testcat| ns1.ns2|

+-------+---------+

```

### How was this patch tested?

Added unit tests.

Closes #26379 from imback82/show_current_catalog.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Make `sum` support interval values.

### Why are the changes needed?

Part of SPARK-27764 Feature Parity between PostgreSQL and Spark

### Does this PR introduce any user-facing change?

Yes, `sum` can now evaluate intervals.

### How was this patch tested?

Added a unit test.

Closes #26325 from yaooqinn/SPARK-29663.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add AlterTableAddPartitionStatement and make ALTER TABLE ... ADD PARTITION go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. e.g.

```

USE my_catalog

DESC t // success and describe the table t from my_catalog

ALTER TABLE t ADD PARTITION (id=1) // report table not found as there is no table t in the session catalog

```

### Does this PR introduce any user-facing change?

Yes. When running ALTER TABLE ... ADD PARTITION, Spark fails the command if the current catalog is set to a v2 catalog, or the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26369 from imback82/spark-29678.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

- Retry the tests for special date-time values on failure. The tests can potentially fail when reference values are taken before midnight and the test code resolves special values after midnight. The retry guarantees that the tests run within the same day.

- Simplify getting the current timestamp via `Instant.now()`. This should avoid any issues with converting the current local datetime to an instant. For example, the same local time can map to 2 instants when clocks are turned back 1 hour on a daylight saving date.

- Extract common code to SQLHelper

- Set the tested zoneId to the session time zone in `DateTimeUtilsSuite`.
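
The ambiguity described in the second bullet can be reproduced with plain `java.time`. The sketch below counts the valid UTC offsets for a local date-time in a zone. The helper name `offsetCount`, the `America/Los_Angeles` zone, and the 2019 dates are assumptions chosen for illustration and do not come from the test code in this PR.

```java
import java.time.LocalDateTime;
import java.time.ZoneId;

// Illustrative only: shows why converting a local date-time to an instant can be ambiguous.
final class AmbiguousLocalTimeSketch {
    // Returns how many UTC offsets are valid for the given local date-time in the zone:
    // 1 normally, 2 in an overlap (clocks turned back), 0 in a gap (clocks turned forward).
    static int offsetCount(String zoneId, int year, int month, int day, int hour, int minute) {
        LocalDateTime local = LocalDateTime.of(year, month, day, hour, minute);
        return ZoneId.of(zoneId).getRules().getValidOffsets(local).size();
    }

    public static void main(String[] args) {
        // 01:30 local time occurs twice on 2019-11-03 in Los Angeles.
        System.out.println(offsetCount("America/Los_Angeles", 2019, 11, 3, 1, 30));
        // Noon on the same day is unambiguous.
        System.out.println(offsetCount("America/Los_Angeles", 2019, 11, 3, 12, 0));
    }
}
```

Taking the timestamp directly with `Instant.now()` sidesteps this, since no local date-time has to be resolved to an offset.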

### Why are the changes needed?

To make the tests more stable.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By existing test suites `Date`/`TimestampFormatterSuite` and `DateTimeUtilsSuite`.

Closes #26380 from MaxGekk/retry-on-fail.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to change `CalendarInterval.toString`:

- to skip the `week` unit

- to render `milliseconds` and `microseconds` as the fractional part of the `seconds` unit.
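
A minimal sketch of the second bullet, assuming the sub-minute part of an interval is held as a microsecond count: milliseconds and microseconds are folded into a decimal fraction of seconds rather than printed as separate units. The helper `formatSeconds` and its exact output format are hypothetical; the real `toString` output may differ.

```java
import java.math.BigDecimal;

// Illustrative formatting only; not the actual CalendarInterval.toString implementation.
final class IntervalSecondsFormatSketch {
    // Renders a microsecond count as seconds with a fractional part,
    // dropping trailing zeros (e.g. 10500000 -> "10.5 seconds").
    static String formatSeconds(long micros) {
        BigDecimal seconds = BigDecimal.valueOf(micros, 6); // scale 6: micros -> seconds
        return seconds.stripTrailingZeros().toPlainString() + " seconds";
    }

    public static void main(String[] args) {
        System.out.println(formatSeconds(10_123_456L)); // 10.123456 seconds
        System.out.println(formatSeconds(10_000_000L)); // 10 seconds
    }
}
```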

### Why are the changes needed?

To improve readability.

### Does this PR introduce any user-facing change?

Yes

### How was this patch tested?

- By `CalendarIntervalSuite` and `IntervalUtilsSuite`

- `literals.sql`, `datetime.sql` and `interval.sql`

Closes #26367 from MaxGekk/interval-to-string-format.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This somewhat complements https://github.com/apache/spark/pull/21853.

A `Sort` without a `Limit` operator in a `Join` subquery is useless. The same holds for `GroupBy` when the aggregate function is order-insensitive, such as `count` or `sum`.

This PR removes this kind of `Sort` operator in the SQL optimizer.

### Why are the changes needed?

For example, `select count(1) from (select a from test1 order by a)` is equivalent to `select count(1) from (select a from test1)`.

`select * from (select a from test1 order by a) t1 join (select b from test2) t2 on t1.a = t2.b` is equivalent to `select * from (select a from test1) t1 join (select b from test2) t2 on t1.a = t2.b`.

Removing useless `Sort` operators can improve performance.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Added a new unit test suite, `RemoveSortInSubquerySuite.scala`.

Closes #26011 from WangGuangxin/remove_sorts.

Authored-by: wangguangxin.cn <wangguangxin.cn@bytedance.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Support the use of non-reserved keywords in higher-order functions.

### Why are the changes needed?

The keywords are non-reserved.

### Does this PR introduce any user-facing change?

Yes, all non-reserved keywords can now be used correctly in higher-order functions.

### How was this patch tested?

Added unit tests.

Closes #26366 from yaooqinn/SPARK-29722.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

The `assertEquals` method of JUnit Assert requires the first parameter to be the expected value. In this PR, I propose to change the order of parameters when the expected value is passed as the second parameter.

### Why are the changes needed?

The wrong order of assert parameters is confusing when the assert fails and the parameters have a special string representation. For example:

```java

assertEquals(input1.add(input2), new CalendarInterval(5, 5, 367200000000L));

```

```

java.lang.AssertionError:

Expected :interval 5 months 5 days 101 hours

Actual :interval 5 months 5 days 102 hours

```

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By existing tests.

Closes #26377 from MaxGekk/fix-order-in-assert-equals.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Rearrange the parser rules to make it clear that multiple unit TO unit statements like `SELECT INTERVAL '1-1' YEAR TO MONTH '2-2' YEAR TO MONTH` are not allowed.

### Why are the changes needed?

It was definitely an accident that we supported such a weird syntax in the past. It's not supported by any other DBs and I can't think of any use case for it. Also, no test covers this syntax in the current codebase.

### Does this PR introduce any user-facing change?

Yes, and a migration guide item is added.

### How was this patch tested?

New tests.

Closes #26285 from cloud-fan/syntax.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add AlterTableDropPartitionStatement and make ALTER TABLE/VIEW ... DROP PARTITION go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. e.g.

```

USE my_catalog

DESC t // success and describe the table t from my_catalog

ALTER TABLE t DROP PARTITION (id=1) // report table not found as there is no table t in the session catalog

```

### Does this PR introduce any user-facing change?

Yes. When running ALTER TABLE/VIEW ... DROP PARTITION, Spark fails the command if the current catalog is set to a v2 catalog, or the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26303 from huaxingao/spark-29643.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Currently, `CalendarInterval` has 2 fields: months and microseconds. This PR changes it to 3 fields: months, days and microseconds. This is because one logical day interval may have a different number of microseconds (daylight saving).

### Why are the changes needed?

One logical day interval may have a different number of microseconds (daylight saving). For example, in the PST time zone, there are 25 hours from 2019-11-2 12:00:00 to 2019-11-3 12:00:00.
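
The 25-hour day can be checked with `java.time`, as a small sketch independent of Spark. The helper `hoursInOneLogicalDay` and the use of the `America/Los_Angeles` zone ID to stand in for "PST" are assumptions made for this example.

```java
import java.time.Duration;
import java.time.LocalDateTime;
import java.time.ZoneId;
import java.time.ZonedDateTime;

// Illustrative only: measures the physical length of "one logical day" starting at noon.
final class DaylightSavingDaySketch {
    static long hoursInOneLogicalDay(String zoneId, int year, int month, int day) {
        ZonedDateTime start = LocalDateTime.of(year, month, day, 12, 0).atZone(ZoneId.of(zoneId));
        // plusDays works on the local timeline, so the wall-clock time stays at 12:00.
        ZonedDateTime end = start.plusDays(1);
        return Duration.between(start, end).toHours();
    }

    public static void main(String[] args) {
        // Daylight saving time ended on 2019-11-03 in Los Angeles.
        System.out.println(hoursInOneLogicalDay("America/Los_Angeles", 2019, 11, 2)); // 25
        System.out.println(hoursInOneLogicalDay("America/Los_Angeles", 2019, 11, 4)); // 24
    }
}
```

Storing days as their own field keeps "1 day" meaning one calendar day, however many microseconds that day actually spans.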

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing unit tests and newly added test cases.

Closes #26134 from LinhongLiu/calendarinterval.

Authored-by: Liu,Linhong <liulinhong@baidu.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add AlterTableRenamePartitionStatement and make ALTER TABLE ... RENAME TO PARTITION go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. e.g.

```

USE my_catalog

DESC t // success and describe the table t from my_catalog

ALTER TABLE t PARTITION (id=1) RENAME TO PARTITION (id=2) // report table not found as there is no table t in the session catalog

```

### Does this PR introduce any user-facing change?

Yes. When running ALTER TABLE ... RENAME TO PARTITION, Spark fails the command if the current catalog is set to a v2 catalog, or the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26350 from huaxingao/spark_29676.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Liang-Chi Hsieh <liangchi@uber.com>

Bring back https://github.com/apache/spark/pull/25955

### What changes were proposed in this pull request?

This adds a new rule, `V2ScanRelationPushDown`, to push filters and projections into a new `DataSourceV2ScanRelation` in the optimizer. That scan is then used when converting to a physical scan node. The new relation correctly reports stats based on the scan.

To run scan pushdown before rules where stats are used, this adds a new optimizer override, `earlyScanPushDownRules` and a batch for early pushdown in the optimizer, before cost-based join reordering. The other early pushdown rule, `PruneFileSourcePartitions`, is moved into the early pushdown rule set.

This also moves pushdown helper methods from `DataSourceV2Strategy` into a util class.

### Why are the changes needed?

This is needed for DSv2 sources to supply stats for cost-based rules in the optimizer.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

This updates the implementation of stats from `DataSourceV2Relation` so tests will fail if stats are accessed before early pushdown for v2 relations.

Closes #26341 from cloud-fan/back.

Lead-authored-by: Wenchen Fan <wenchen@databricks.com>

Co-authored-by: Ryan Blue <blue@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

- Added `getDuration()` to calculate the interval duration in the specified time units, assuming the provided number of days per month

- Added `isNegative()`, which returns `true` if the interval duration is less than 0

- Fixed checking of negative intervals by using `isNegative()` in structured streaming classes

- Fixed checking of `year-month` intervals

### Why are the changes needed?

This fixes incorrect checking of negative intervals. An interval is negative when its duration is negative, not merely when its months **or** microseconds field is negative. This also fixes checking of `year-month` interval support, because the `month` field could be negative.
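
The difference between the two checks can be sketched as follows. This is a simplified model with raw `months` and `microseconds` arguments; the method signatures, the microsecond result unit, and the `naiveIsNegative` helper are assumptions for illustration and do not mirror the actual `IntervalUtils` API.

```java
// Illustrative only: duration-based negativity check versus a per-field check.
final class IntervalDurationSketch {
    static final long MICROS_PER_DAY = 86_400_000_000L;

    // Total duration in microseconds, assuming a fixed number of days per month.
    static long getDurationMicros(int months, long microseconds, int daysPerMonth) {
        long monthMicros = Math.multiplyExact((long) months * daysPerMonth, MICROS_PER_DAY);
        return Math.addExact(monthMicros, microseconds);
    }

    // An interval is negative only if its whole duration is negative.
    static boolean isNegative(int months, long microseconds, int daysPerMonth) {
        return getDurationMicros(months, microseconds, daysPerMonth) < 0;
    }

    // The kind of per-field check this description calls incorrect.
    static boolean naiveIsNegative(int months, long microseconds) {
        return months < 0 || microseconds < 0;
    }

    public static void main(String[] args) {
        // "1 month -1 day": a negative field, but a positive overall duration.
        System.out.println(isNegative(1, -MICROS_PER_DAY, 31));  // false
        System.out.println(naiveIsNegative(1, -MICROS_PER_DAY)); // true
    }
}
```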

### Does this PR introduce any user-facing change?

It should not.

### How was this patch tested?

- Added tests for the `getDuration()` and `isNegative()` methods to `IntervalUtilsSuite`

- By existing SS tests

Closes #26177 from MaxGekk/interval-is-positive.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Update `AlterTableSetLocationStatement` to store `partitionSpec`, and make `ALTER TABLE a.b.c PARTITION(...) SET LOCATION 'loc'` fail with an "unsupported" message if `partitionSpec` is set.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. e.g.

```

USE my_catalog

DESC t // success and describe the table t from my_catalog

ALTER TABLE t PARTITION(...) SET LOCATION 'loc' // report set location with partition spec is not supported.

```

### Does this PR introduce any user-facing change?

Yes. When running ALTER TABLE (set partition location), Spark fails the command if the current catalog is set to a v2 catalog, or the table name specifies a v2 catalog.

### How was this patch tested?

New unit tests.

Closes #26304 from imback82/alter_table_partition_loc.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add ShowColumnsStatement and make SHOW COLUMNS go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. e.g.

USE my_catalog

DESC t // success and describe the table t from my_catalog

SHOW COLUMNS FROM t // report table not found as there is no table t in the session catalog

### Does this PR introduce any user-facing change?

Yes. When running SHOW COLUMNS, Spark fails the command if the current catalog is set to a v2 catalog, or the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26182 from planga82/feature/SPARK-29523_SHOW_COLUMNS_datasourceV2.

Authored-by: Unknown <soypab@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

In the PR, I propose to extract parsing of the seconds interval unit into the private method `parseNanos` in `IntervalUtils`, and to modify the code to correctly parse the fractional part of the seconds unit in the following cases:

- When the fractional part has fewer than 9 digits

- When the seconds unit is negative

### Why are the changes needed?

The changes are needed to fix the issues:

```sql

spark-sql> select interval '10.123456 seconds';

interval 10 seconds 123 microseconds

```

The correct result must be `interval 10 seconds 123 milliseconds 456 microseconds`

```sql

spark-sql> select interval '-10.123456789 seconds';

interval -9 seconds -876 milliseconds -544 microseconds

```

However, the whole interval should be negated, so the result must be `interval -10 seconds -123 milliseconds -456 microseconds`, taking into account the truncation to microseconds.
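
Both cases can be sketched in a few lines. The idea assumed here is to right-pad the fractional digits to 9 (nanoseconds), truncate to microseconds, and apply the sign to the whole value. The helper `parseSecondsToMicros` is hypothetical and is not the `parseNanos` method from this PR.

```java
// Illustrative only: parses a seconds string such as "10.123456" into microseconds.
final class FractionalSecondsSketch {
    static final long MICROS_PER_SECOND = 1_000_000L;

    static long parseSecondsToMicros(String text) {
        boolean negative = text.startsWith("-");
        String unsigned = negative ? text.substring(1) : text;
        int dot = unsigned.indexOf('.');
        String wholePart = dot < 0 ? unsigned : unsigned.substring(0, dot);
        String fraction = dot < 0 ? "" : unsigned.substring(dot + 1);
        if (fraction.length() > 9) {
            throw new IllegalArgumentException("at most 9 fractional digits: " + text);
        }
        // Right-pad to 9 digits so that "123456" means 123456000 ns, not 123456 ns.
        StringBuilder padded = new StringBuilder(fraction);
        while (padded.length() < 9) {
            padded.append('0');
        }
        long nanos = Long.parseLong(padded.toString());
        long whole = wholePart.isEmpty() ? 0L : Long.parseLong(wholePart);
        // Truncate nanoseconds to microseconds, then negate the whole value if needed.
        long micros = Math.addExact(Math.multiplyExact(whole, MICROS_PER_SECOND), nanos / 1000L);
        return negative ? -micros : micros;
    }

    public static void main(String[] args) {
        System.out.println(parseSecondsToMicros("10.123456"));     // 10123456
        System.out.println(parseSecondsToMicros("-10.123456789")); // -10123456
    }
}
```

Without the padding step, `123456` would be read as 123456 nanoseconds, which truncates to the 123 microseconds shown in the first buggy output.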

### Does this PR introduce any user-facing change?

Yes. After changes:

```sql

spark-sql> select interval '10.123456 seconds';

interval 10 seconds 123 milliseconds 456 microseconds

spark-sql> select interval '-10.123456789 seconds';

interval -10 seconds -123 milliseconds -456 microseconds

```

### How was this patch tested?

By existing and new tests in `ExpressionParserSuite`.

Closes #26313 from MaxGekk/fix-interval-nanos-parsing.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This adds a new rule, `V2ScanRelationPushDown`, to push filters and projections into a new `DataSourceV2ScanRelation` in the optimizer. That scan is then used when converting to a physical scan node. The new relation correctly reports stats based on the scan.

To run scan pushdown before rules where stats are used, this adds a new optimizer override, `earlyScanPushDownRules` and a batch for early pushdown in the optimizer, before cost-based join reordering. The other early pushdown rule, `PruneFileSourcePartitions`, is moved into the early pushdown rule set.

This also moves pushdown helper methods from `DataSourceV2Strategy` into a util class.

### Why are the changes needed?

This is needed for DSv2 sources to supply stats for cost-based rules in the optimizer.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

This updates the implementation of stats from `DataSourceV2Relation` so tests will fail if stats are accessed before early pushdown for v2 relations.

Closes #25955 from rdblue/move-v2-pushdown.

Authored-by: Ryan Blue <blue@apache.org>

Signed-off-by: Ryan Blue <blue@apache.org>

### What changes were proposed in this pull request?

To push the built jars to maven release repository, we need to remove the 'SNAPSHOT' tag from the version name.

Made the following changes in this PR:

* Update all the `3.0.0-SNAPSHOT` version name to `3.0.0-preview`

* Update the sparkR version number check logic to allow jvm version like `3.0.0-preview`

**Please note those changes were generated by the release script in the past, but this time since we manually add tags on master branch, we need to manually apply those changes too.**

We shall revert the changes after the 3.0.0-preview release has passed.

### Why are the changes needed?

To make the Maven release repository accept the built jars.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

N/A

### What changes were proposed in this pull request?

Define `MICROS_PER_MONTH` as `DAYS_PER_MONTH * MICROS_PER_DAY`.

### Why are the changes needed?

Bug fix.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Added a unit test.

Closes #26321 from yaooqinn/SPARK-29653.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

```

postgres=# select date '2001-09-28' + integer '7';

?column?

------------

2001-10-05

(1 row)

postgres=# select integer '7';

int4

------

7

(1 row)

```

Add support for typed integer literal expressions, as in PostgreSQL.

### Why are the changes needed?

SPARK-27764 Feature Parity between PostgreSQL and Spark

### Does this PR introduce any user-facing change?

Yes, typed integer literals are now supported in SQL.

### How was this patch tested?

Added unit tests.

Closes #26291 from yaooqinn/SPARK-29629.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Currently, `RepartitionByExpression` is available through the Dataset method `Dataset.repartition()`, but Spark SQL has no equivalent functionality.

In Hive, we can use `distribute by`, so it's worth adding a hint to support this.

Similar JIRA: [SPARK-24940](https://issues.apache.org/jira/browse/SPARK-24940)

## Why are the changes needed?

Make repartition hints consistent with the repartition API.

## Does this PR introduce any user-facing change?

This PR intends to support the queries below:

```

// SQL cases

- sql("SELECT /*+ REPARTITION(c) */ * FROM t")

- sql("SELECT /*+ REPARTITION(1, c) */ * FROM t")

- sql("SELECT /*+ REPARTITION_BY_RANGE(c) */ * FROM t")

- sql("SELECT /*+ REPARTITION_BY_RANGE(1, c) */ * FROM t")

```

## How was this patch tested?

Unit tests.

Closes #25464 from ulysses-you/SPARK-28746.

Lead-authored-by: ulysses <youxiduo@weidian.com>

Co-authored-by: ulysses <646303253@qq.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

In the PR, I propose to move all static methods from the `CalendarInterval` class to the `IntervalUtils` object. All those methods are rewritten from Java to Scala.

### Why are the changes needed?

- For consistency with other helper methods. Such methods were placed in the helper object `IntervalUtils`, see https://github.com/apache/spark/pull/26190

- Taking into account that `CalendarInterval` will be fully exposed to users in the future (see https://github.com/apache/spark/pull/25022), it would be nice to clean it up by moving service methods to an internal object.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

- By tests moved from `CalendarIntervalSuite` to `IntervalUtilsSuite`

- By existing test suites

Closes #26261 from MaxGekk/refactoring-calendar-interval.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add AlterTableRecoverPartitionsStatement and make ALTER TABLE ... RECOVER PARTITIONS go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. e.g.

```

USE my_catalog

DESC t // success and describe the table t from my_catalog

ALTER TABLE t RECOVER PARTITIONS // report table not found as there is no table t in the session catalog

```

### Does this PR introduce any user-facing change?

Yes. When running ALTER TABLE ... RECOVER PARTITIONS, Spark fails the command if the current catalog is set to a v2 catalog, or the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26269 from huaxingao/spark-29612.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

To push the built jars to maven release repository, we need to remove the 'SNAPSHOT' tag from the version name.

Made the following changes in this PR:

* Update all the `3.0.0-SNAPSHOT` version name to `3.0.0-preview`

* Update the PySpark version from `3.0.0.dev0` to `3.0.0`

**Please note those changes were generated by the release script in the past, but this time since we manually add tags on master branch, we need to manually apply those changes too.**

We shall revert the changes after the 3.0.0-preview release has passed.

### Why are the changes needed?

To make the Maven release repository accept the built jars.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

N/A

Closes #26243 from jiangxb1987/3.0.0-preview-prepare.

Lead-authored-by: Xingbo Jiang <xingbo.jiang@databricks.com>

Co-authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Xingbo Jiang <xingbo.jiang@databricks.com>

### What changes were proposed in this pull request?

This PR adds `DROP NAMESPACE` support for V2 catalogs.

### Why are the changes needed?

Currently, you cannot drop namespaces for v2 catalogs.

### Does this PR introduce any user-facing change?

Yes. Users can now drop a namespace in a V2 catalog, for example the namespace `ns` inside the `mycatalog` V2 catalog:

```SQL
CREATE NAMESPACE mycatalog.ns
DROP NAMESPACE mycatalog.ns
SHOW NAMESPACES IN mycatalog -- shows no namespaces
```

### How was this patch tested?

Added unit tests.

Closes #26262 from imback82/drop_namespace.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add LoadDataStatement and make LOAD DATA INTO TABLE go through the same catalog/table resolution framework as v2 commands.

### Why are the changes needed?

It's important that all commands have the same table resolution behavior, to avoid confusing end users. For example:

```
USE my_catalog
DESC t -- succeeds and describes the table t from my_catalog
LOAD DATA INPATH 'filepath' INTO TABLE t -- reports table not found, as there is no table t in the session catalog
```

### Does this PR introduce any user-facing change?

Yes. When running LOAD DATA INTO TABLE, Spark fails the command if the current catalog is set to a v2 catalog, or if the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26178 from viirya/SPARK-29521.

Lead-authored-by: Liang-Chi Hsieh <liangchi@uber.com>

Co-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR fixes incorrect code that checks the parameter lengths of split methods in `subexpressionEliminationForWholeStageCodegen`.

### Why are the changes needed?

Bug fix.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing tests.

Closes #26267 from maropu/SPARK-29008-FOLLOWUP.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

The `DateTimeUtilsSuite` and `TimestampFormatterSuite` assume a constant time difference between `timestamp'yesterday'`, `timestamp'today'` and `timestamp'tomorrow'`, which is wrong on days when daylight saving time switches: such a day can be 23 or 25 hours long. In this PR, I propose to use the Java 8 time API to calculate instances of the `yesterday` and `tomorrow` timestamps.
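The pitfall can be reproduced outside Spark. The sketch below is a Python analogue rather than the Java 8 time API code used in the test suites; the time zone `America/Los_Angeles`, the sample dates, and all function names are assumptions chosen for illustration. It requires Python 3.9+ with a system time zone database available. It contrasts adding a fixed 24 hours of elapsed time with calendar arithmetic on the local date.

```python
from datetime import datetime, timedelta
from zoneinfo import ZoneInfo  # Python 3.9+; needs the system tz database

TZ = ZoneInfo("America/Los_Angeles")  # assumed zone, for illustration only


def local_midnight(year, month, day):
    """Local midnight at the start of the given date."""
    return datetime(year, month, day, tzinfo=TZ)


def next_midnight(midnight):
    """Next local midnight, computed by calendar arithmetic on the date."""
    d = midnight.date() + timedelta(days=1)
    return datetime(d.year, d.month, d.day, tzinfo=TZ)


def plus_24_hours(midnight):
    """The fragile approach: add a fixed 24 hours of elapsed time."""
    return datetime.fromtimestamp(midnight.timestamp() + 24 * 3600, tz=TZ)


def day_length_hours(midnight):
    # Compare POSIX timestamps so that a change of UTC offset is counted.
    return (next_midnight(midnight).timestamp() - midnight.timestamp()) / 3600


for date in [(2019, 3, 10), (2019, 6, 1), (2019, 11, 3)]:
    start = local_midnight(*date)
    print(start.date(), day_length_hours(start), plus_24_hours(start).time())
```

In this zone, 2019-03-10 is 23 hours long and 2019-11-03 is 25 hours long, so on those dates `plus_24_hours` does not land on local midnight, while `next_midnight` does.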

### Why are the changes needed?

The changes fix test failures and make the tests tolerant of daylight saving time transitions.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By existing test suites `DateTimeUtilsSuite` and `TimestampFormatterSuite`.

Closes #26273 from MaxGekk/midnight-tolerant.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

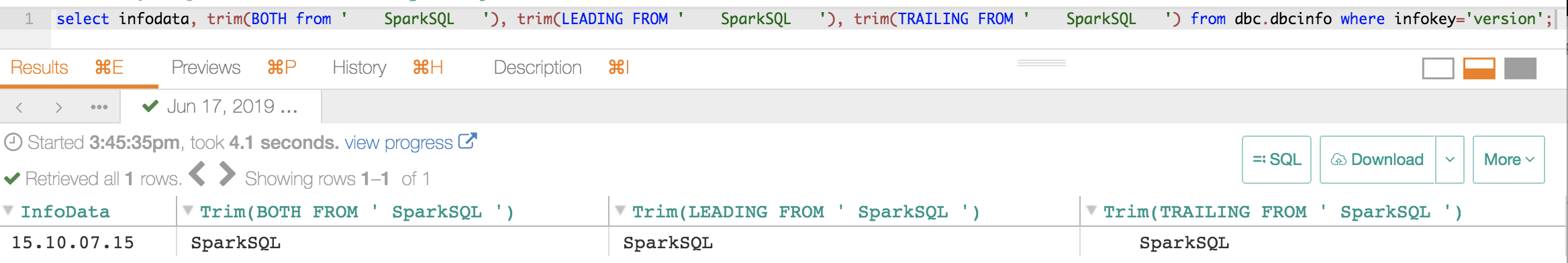

### What changes were proposed in this pull request?

```
hive> select version();
OK
3.1.1 rf4e0529634b6231a0072295da48af466cf2f10b7
Time taken: 2.113 seconds, Fetched: 1 row(s)
```

### Why are the changes needed?

This follows Hive's behavior, and I guess it is useful for debugging, development, and so on.

### Does this PR introduce any user-facing change?

Yes. It adds a miscellaneous function.

### How was this patch tested?

Added unit tests.

Closes #26209 from yaooqinn/SPARK-29554.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This is a follow-up of https://github.com/apache/spark/pull/24557 to fix `since` version.

### Why are the changes needed?

This was found during the 3.0.0-preview preparation.

The version is exposed in our SQL documentation, as in the following, so we should fix it.

- https://spark.apache.org/docs/latest/api/sql/#array_min

### Does this PR introduce any user-facing change?

Yes. The version is exposed by the `DESC FUNCTION EXTENDED` SQL command and in the SQL docs, but this is new in 3.0.0.

### How was this patch tested?

Manual.

```
spark-sql> DESC FUNCTION EXTENDED min_by;
Function: min_by
Class: org.apache.spark.sql.catalyst.expressions.aggregate.MinBy
Usage: min_by(x, y) - Returns the value of `x` associated with the minimum value of `y`.
Extended Usage:
Examples:
> SELECT min_by(x, y) FROM VALUES (('a', 10)), (('b', 50)), (('c', 20)) AS tab(x, y);
a

Since: 3.0.0
```

Closes #26264 from dongjoon-hyun/SPARK-27653.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add ShowCreateTableStatement and make SHOW CREATE TABLE go through the same catalog/table resolution framework as v2 commands.

### Why are the changes needed?

It's important that all commands have the same table resolution behavior, to avoid confusing end users. For example:

```
USE my_catalog
DESC t -- succeeds and describes the table t from my_catalog
SHOW CREATE TABLE t -- reports table not found, as there is no table t in the session catalog
```

### Does this PR introduce any user-facing change?

Yes. When running SHOW CREATE TABLE, Spark fails the command if the current catalog is set to a v2 catalog, or if the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26184 from viirya/SPARK-29527.

Lead-authored-by: Liang-Chi Hsieh <liangchi@uber.com>

Co-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add UncacheTableStatement and make UNCACHE TABLE go through the same catalog/table resolution framework as v2 commands.

### Why are the changes needed?

It's important that all commands have the same table resolution behavior, to avoid confusing end users. For example:

```
USE my_catalog
DESC t -- succeeds and describes the table t from my_catalog
UNCACHE TABLE t -- reports table not found, as there is no table t in the session catalog
```

### Does this PR introduce any user-facing change?

Yes. When running UNCACHE TABLE, Spark fails the command if the current catalog is set to a v2 catalog, or if the table name specifies a v2 catalog.

### How was this patch tested?

New unit tests

Closes #26237 from imback82/uncache_table.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add a description for `ignoreNullFields`, which was committed in #26098, to DataFrameWriter and readwriter.py.

Enable users to use `ignoreNullFields` in PySpark.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Ran unit tests.

Closes #26227 from stczwd/json-generator-doc.

Authored-by: stczwd <qcsd2011@163.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Only use antlr4 to parse the interval string, and remove the duplicated parsing logic from `CalendarInterval`.

### Why are the changes needed?

Simplify the code and fix inconsistent behaviors.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Pass the Jenkins with the updated test cases.

Closes #26190 from cloud-fan/parser.

Lead-authored-by: Wenchen Fan <wenchen@databricks.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Recent timezone definition changes in very new JDK 8 (and beyond) releases cause test failures. The below was observed on JDK 1.8.0_232. As before, the easy fix is to allow for these inconsequential variations in test results due to differing definition of timezones.

### Why are the changes needed?

Keeps tests passing on the latest JDK releases.

### Does this PR introduce any user-facing change?

None

### How was this patch tested?

Existing tests

Closes #26236 from srowen/SPARK-29578.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

Support using IN/EXISTS with a subquery in a JOIN condition in Spark SQL.

### Why are the changes needed?

To let SQL queries use IN/EXISTS with a subquery in a JOIN condition.

### Does this PR introduce any user-facing change?

Yes. This PR enables users to use a subquery in a `JOIN`'s ON condition. For example, given three tables:

```
CREATE TABLE A(id String);
CREATE TABLE B(id String);
CREATE TABLE C(id String);
```

we can run a query like:

```

SELECT A.id from A JOIN B ON A.id = B.id and A.id IN (select C.id from C)

```
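The intended result of such a query can be sketched in plain Python. This models only the semantics for non-null ids, not how Spark plans or executes the query; the function name `join_with_in_subquery` and the sample data are hypothetical.

```python
def join_with_in_subquery(a_ids, b_ids, c_ids):
    """Ids of A rows joined to B on equal id, restricted to ids present in C."""
    c_set = set(c_ids)  # the uncorrelated subquery result, computed once
    return [
        a_id
        for a_id in a_ids
        for b_id in b_ids
        if a_id == b_id and a_id in c_set  # both parts of the ON condition
    ]


a = ["1", "2", "3"]
b = ["2", "3", "4"]
c = ["3", "5"]
print(join_with_in_subquery(a, b, c))
```

With this sample data, only id `"3"` satisfies both `A.id = B.id` and `A.id IN (select C.id from C)`.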

### How was this patch tested?

Added unit tests.

Closes #25854 from AngersZhuuuu/SPARK-29145.

Lead-authored-by: angerszhu <angers.zhu@gmail.com>

Co-authored-by: AngersZhuuuu <angers.zhu@gmail.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>